Abstract

Background

Skeletal malocclusion encompasses a range of symptoms, including uneven tooth arrangement, abnormal relationships between the upper and lower arches, and facial deformity.1 It is categorized into three classes: Class I, Class II, and Class III. Class I represents a relatively normal maxillomandibular relationship, whereas Class II is characterized by a protruding upper jaw and Class III by a comparatively long chin.2 Skeletal malocclusion tends to become more pronounced as adolescence progresses, potentially harming oral health as well as physical and mental well-being. Early intervention can therefore substantially reduce its severity by harnessing the growth potential of the skull, resulting in a more harmonious bite relationship and facial proportions.3 In some cases, severe malocclusion can be corrected to a normal class through intervention,4,5 potentially avoiding the need for orthognathic surgery in adulthood.6,7 The concept of early facial management has also gained prominence, with proactive self-management of facial well-being becoming mainstream.

However, adolescent health management is often overlooked, particularly for conditions such as malocclusion that do not present apparent symptoms. Moreover, few government health examinations are available for young people, and the number of specialized orthodontists qualified to screen for malocclusion is very low, at only 1 per 100,000 people in China. The prevalence of malocclusion in China is 45.5%.8 However, for various reasons, only a small percentage of people have undergone orthodontic treatment.9 Many individuals may not realize the importance of seeking clinical advice because of a lack of awareness. As a potential remedy, a family remote-assisted medicine mode for screening skeletal malocclusion offers an opportunity to address the issue.

In the context of family remote-assisted medicine, obtaining lateral cephalograms in a household environment is impractical because they require x-ray examinations and measurements. Photographs are a preferable choice for their convenience and classification potential. As orthodontics evolves, there is an increasing emphasis on studying soft tissue variations across different malocclusions.10–12 External facial features are consistent with the underlying craniofacial skeleton, making them valuable in identifying skeletal malocclusion.13 With smartphones now ubiquitous, acquiring photographs has become more straightforward, opening the door to wider applications. Classification based on lateral photographs is therefore promising.

With the rapid development of AI, more and more successful applications have emerged in the medical field. Deep learning, a crucial component of AI based on deep neural networks, excels at discerning input information, particularly for images. 14 Impressive successes have been achieved in diagnosis and prognosis prediction, even surpassing human capabilities. In dentistry, deep learning has been effectively applied in various areas, including endodontics, periodontics, prosthodontics, and oral maxillofacial surgery.15–18

In the field of orthodontics, a seminal study demonstrated the feasibility of automated malocclusion diagnosis through the direct use of lateral cephalograms, thus obviating the need for complex measurements. 19 This research laid the theoretical groundwork for further exploration into the automated detection of skeletal malocclusion. Building on this foundation, we introduce the concept of self-health management, enabling individuals to undertake self-screening for skeletal malocclusion with photographs at the preclinical stage. With AI assistance, lateral photos could serve as an initial assessment tool for malocclusion. This innovative approach aims to introduce a unique preventive strategy at the household level, potentially engaging a broader patient population to pursue further clinical evaluation of malocclusion. Additionally, it could offer a valuable point of reference for preliminary diagnoses in clinical environments, proving particularly beneficial for general practitioners.

In summary, this study explored the feasibility of using deep learning with lateral photos for self-health management of skeletal malocclusion. By emphasizing early detection in the preclinical environment, our approach may raise public awareness of malocclusion in the future.

Methods

Subject and procedure

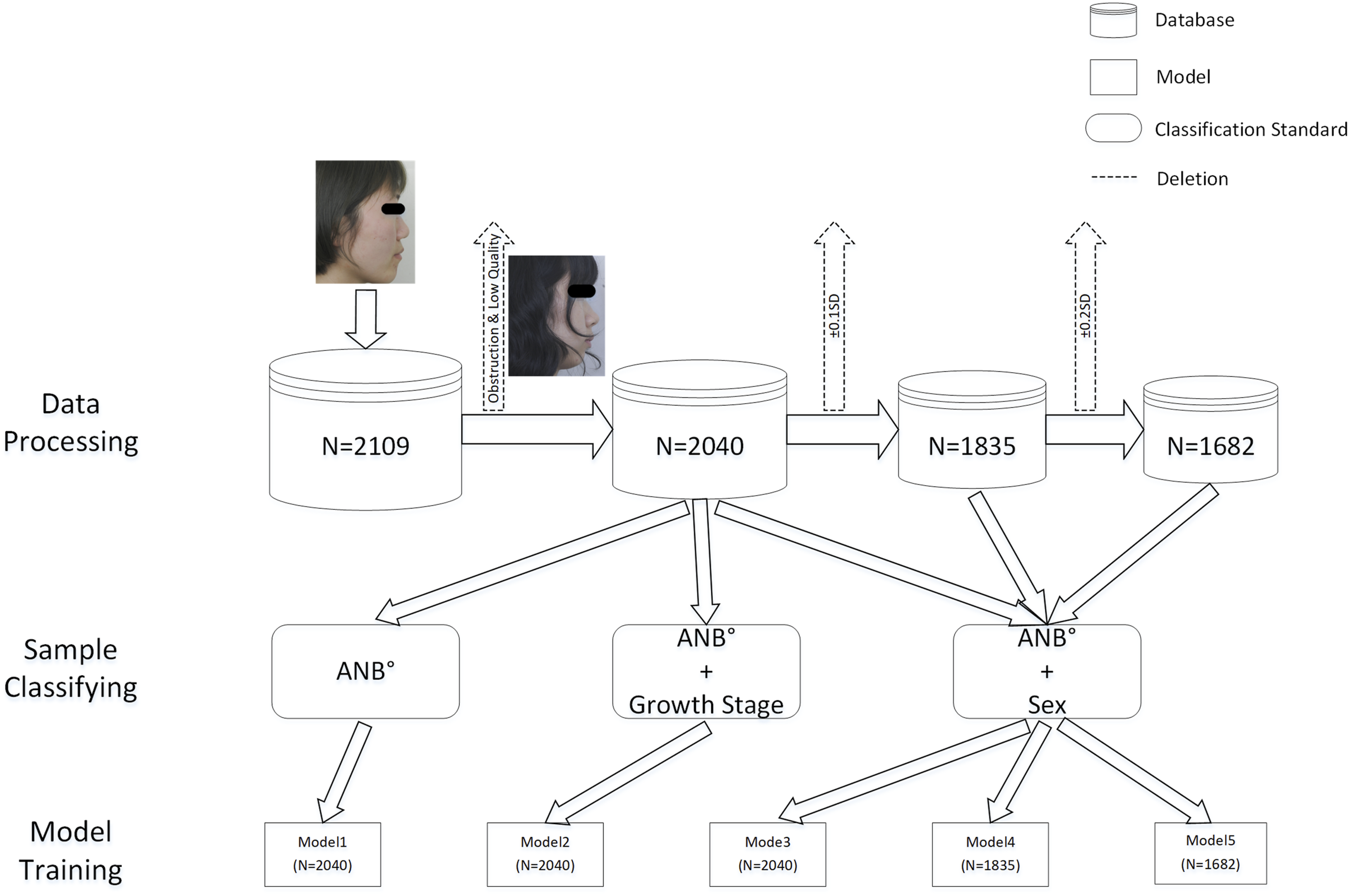

This was an observational study aimed at demonstrating the feasibility of employing deep learning with lateral photographs for early self-health management and screening of skeletal malocclusion. A total of 2109 newly diagnosed patients (536 males, 1573 females) with a mean age of 19.8 years (age range: 12 to 65 years) were included in the study. Lateral cephalograms and photos of the patients were collected for analysis. The patients were categorized into three groups: class I (mean ANB = 2.34°, ranging from 0° to 4°), class II (mean ANB = 5.98°, ranging from 4.1° to 13.4°), and class III (mean ANB = −2.28°, ranging from −13.4° to −0.1°). The inclusion criteria for the study were as follows: 1) females over 12 years old or males over 14 years old; 2) no previous history of orthodontic or orthognathic treatment; 3) the absence of any facial trauma, maxillofacial tumor, or facial deformity resulting from genetic diseases. The exclusion criteria were as follows: 1) patients without obvious malocclusion; 2) lateral cephalograms with blurring or ghosting; 3) lateral photos that obscured the nasion or were out of focus. After excluding 69 poor-quality photos, 2040 images remained in the lateral photo database (Figure 1). All patients signed an informed consent form before orthodontic treatment, permitting the use of their photos for academic publication or case presentation. Ethical approval for this study was granted by the Ethics Committee.

Figure 1. Technical procedure for data processing and model generation. In this process, 69 images were deleted because the soft-tissue nasion point was covered or because of shooting problems.

Classification criteria

In this study, the sagittal relationship was assessed using the ANB angle. Following Steiner’s research,20 an angle range of 2 ± 2° was considered the standard. Skeletal malocclusion was classified as follows: Class I was defined as 0° ≤ ANB ≤ 4°, Class II as ANB > 4°, and Class III as ANB < 0°. Sex-related differences in growth patterns were recognized during the developmental phase, which prompted the establishment of development stage standards with a two-year interval between the sexes.21 In this study, the threshold for the final growth spurt stage was defined as 12 years for females and 14 years for males. Additionally, the threshold for the developmental completion stage was set at 16 years for females and 18 years for males.22
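The cutoffs above translate directly into a lookup. The following is a minimal sketch under stated assumptions, not the authors' code: the function names `classify_anb` and `development_stage` and the stage labels are hypothetical, and treating each age threshold as inclusive is an assumption.

```python
# Hypothetical helper names; only the numeric cutoffs come from the text.

def classify_anb(anb_deg: float) -> str:
    """Return the skeletal class for an ANB angle given in degrees."""
    if anb_deg < 0.0:
        return "III"   # Class III: ANB < 0 degrees
    if anb_deg > 4.0:
        return "II"    # Class II: ANB > 4 degrees
    return "I"         # Class I: 0 <= ANB <= 4 degrees


# Age thresholds in years, as stated in the text.
SPURT_AGE = {"female": 12, "male": 14}
COMPLETION_AGE = {"female": 16, "male": 18}


def development_stage(sex: str, age_years: float) -> str:
    """Map sex and age to a development stage (labels are illustrative)."""
    if age_years >= COMPLETION_AGE[sex]:
        return "completed"
    if age_years >= SPURT_AGE[sex]:
        return "final_growth_spurt"
    return "before_final_growth_spurt"


if __name__ == "__main__":
    print(classify_anb(5.98), development_stage("male", 15))
```

Keeping the boundary values 0° and 4° inside Class I mirrors the closed interval in the definition above.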

Image augmentation and assignment

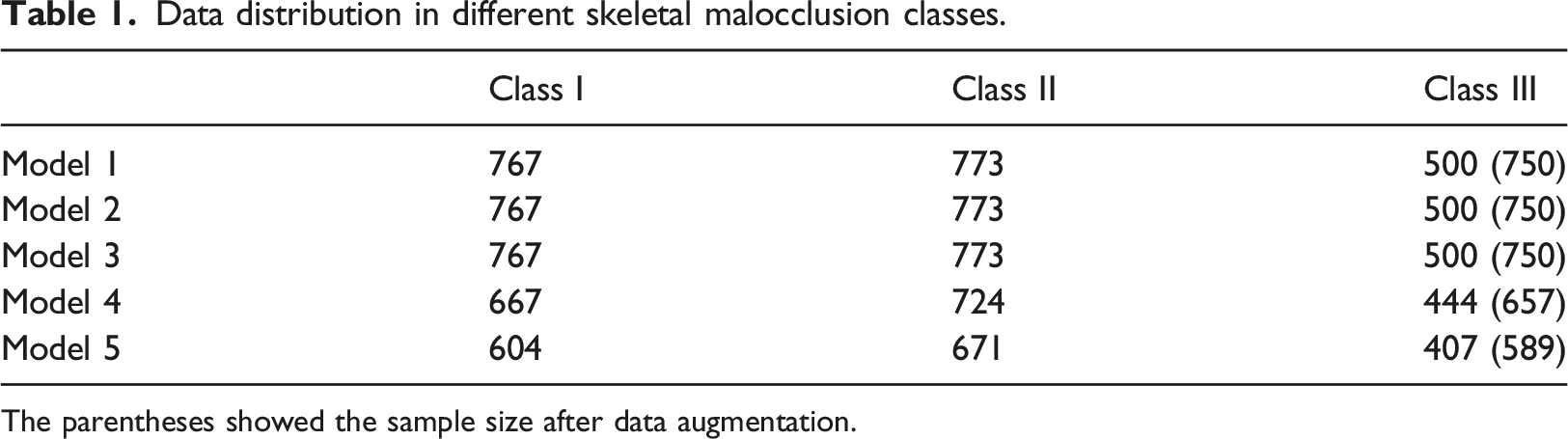

Images of Class III were appropriately augmented due to their low incidence rate, as shown in the Supplementary Data. The images were divided into training, validation, and test sets based on random numbers generated by a computer program. The distribution followed a 7:2:1 ratio, with 70% of the images allocated to the training set, 20% to the validation set, and 10% to the test set.
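A random 7:2:1 assignment of this kind can be sketched as follows. This is an illustration only: the function name `split_dataset`, the fixed seed, and the use of integer arithmetic for the set sizes are assumptions, and the text does not specify whether the split was stratified by class or performed before or after augmentation.

```python
import random


def split_dataset(items, seed=0):
    """Shuffle items and split them 7:2:1 into train, validation, and test."""
    rng = random.Random(seed)      # fixed seed so the split is reproducible
    shuffled = list(items)
    rng.shuffle(shuffled)
    n = len(shuffled)
    n_train = n * 7 // 10          # integer arithmetic avoids float rounding
    n_val = n * 2 // 10
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]   # remainder goes to the test set
    return train, val, test


if __name__ == "__main__":
    # Hypothetical image identifiers, used only for illustration.
    train, val, test = split_dataset(range(1000))
    print(len(train), len(val), len(test))
```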

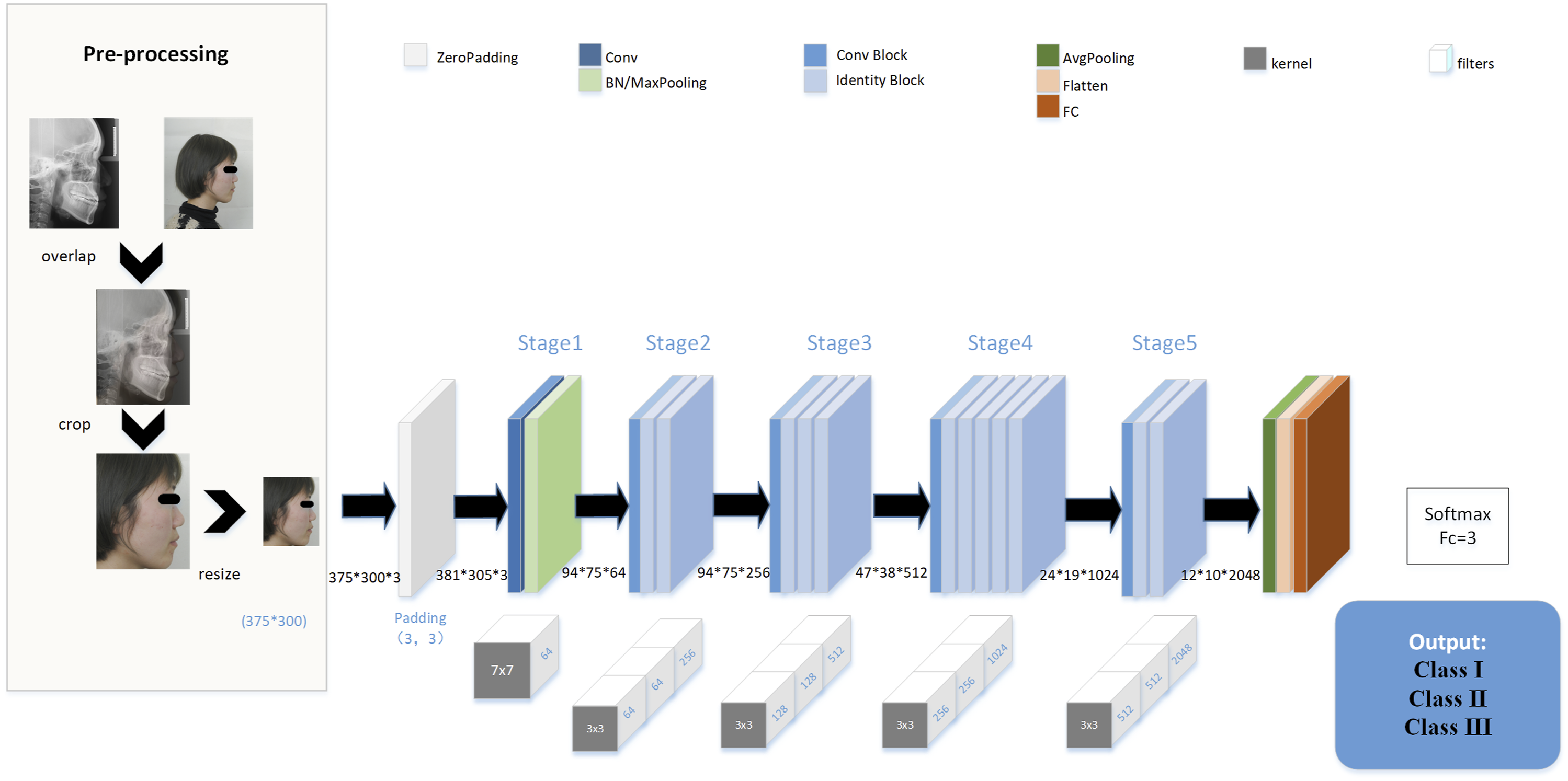

Architecture of models

Our study employed the ResNet50 convolutional neural network as the foundational framework (Figure 2). To accommodate a second input, custom layers were introduced after Stage5. Further information on these custom layers can be found in the Supplementary Data (Supplementary Figure 1). Pre-trained weights from the ImageNet dataset were loaded into the initial ResNet50 model, and transfer learning was applied using the classified images to create Model1. To examine the influence of development, both images and development information were input into the modified ResNet50 model, yielding Model2. Similarly, Model3 was generated by training with sex information and the skeletal malocclusion images. To compensate for the loss of accuracy in patients with ambiguous features near the classification cutoffs, lateral photos with ANB within ±0.1 standard deviations (SD) of a cutoff, [−0.2°, 0.2°] ∪ [3.8°, 4.2°], were excluded. The remaining images, along with sex information, were used as input for the modified model, resulting in Model4. Finally, lateral photos with ANB within ±0.2 SD of a cutoff, [−0.4°, 0.4°] ∪ [3.6°, 4.4°], were removed to create Model5 (Figure 1). The data distribution is shown in Table 1.

Figure 2. An overview of data preprocessing and the architecture of the ResNet50 model. Conv, convolutional; BN, batch normalization; FC, fully connected.

Table 1. Data distribution in different skeletal malocclusion classes. The parentheses show the sample size after data augmentation.
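The near-cutoff exclusion used for Model4 and Model5 amounts to dropping samples whose ANB lies within a fixed margin of either class boundary. The sketch below is illustrative rather than the authors' implementation: the names `near_cutoff` and `filter_ambiguous`, the `(sample_id, anb)` tuple layout, and the small tolerance `EPS` (added so that boundary values such as 4.2° are treated as inside the closed interval despite floating-point rounding) are all assumptions.

```python
CUTOFFS = (0.0, 4.0)   # ANB class boundaries in degrees, from the text
EPS = 1e-9             # assumed tolerance for floating-point comparisons


def near_cutoff(anb_deg: float, margin_deg: float) -> bool:
    """True if the ANB angle lies within margin_deg of any class cutoff."""
    return any(abs(anb_deg - c) <= margin_deg + EPS for c in CUTOFFS)


def filter_ambiguous(samples, margin_deg: float):
    """Keep only (sample_id, anb) pairs that are not near a cutoff."""
    return [(sid, anb) for sid, anb in samples
            if not near_cutoff(anb, margin_deg)]


if __name__ == "__main__":
    # Hypothetical samples: (identifier, ANB in degrees).
    samples = [("a", -0.1), ("b", 2.0), ("c", 3.9), ("d", 4.5)]
    print(filter_ambiguous(samples, 0.2))   # margin used for Model4
    print(filter_ambiguous(samples, 0.4))   # margin used for Model5
```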

Evaluation and analysis

The performance of each model was evaluated using sensitivity (SN), specificity (SP), accuracy (AC), the confusion matrix, the receiver operating characteristic (ROC) curve, and the area under the curve (AUC). To validate the reliability of the proposed model, lateral cephalograms were also included for analysis. The test-set photographs used for the best-performing model were given to an orthodontic specialist for classification, and the outcomes were reviewed once more to ensure accuracy and consistency. All images were analyzed using the Grad-CAM technique.23 The last (49th) activation layer was selected to extract the gradient, and class activation maps were generated with an intensity factor of 0.5. After excluding the incorrectly predicted images, heat maps of each skeletal malocclusion class were collected. More details are given in the Supplementary Data.
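For a three-class problem, SN, SP, and AC are commonly computed per class in a one-vs-rest fashion from the confusion matrix. The sketch below uses those standard definitions; whether they match the calculation in the Supplementary Data exactly is an assumption, and the function name `one_vs_rest_metrics` and the example matrix are hypothetical.

```python
def one_vs_rest_metrics(cm, k):
    """Compute SN, SP, and AC for class index k.

    cm[i][j] is the number of samples with true class i predicted as class j.
    """
    total = sum(sum(row) for row in cm)
    tp = cm[k][k]
    fn = sum(cm[k]) - tp                    # true class k, predicted otherwise
    fp = sum(row[k] for row in cm) - tp     # other classes predicted as k
    tn = total - tp - fn - fp
    return {
        "SN": tp / (tp + fn),
        "SP": tn / (tn + fp),
        "AC": (tp + tn) / total,
    }


if __name__ == "__main__":
    # Made-up counts for illustration; rows are true classes I, II, III.
    cm = [[8, 1, 1],
          [2, 7, 1],
          [0, 1, 9]]
    for k, name in enumerate(["I", "II", "III"]):
        print(name, one_vs_rest_metrics(cm, k))
```

A mean AC across classes, as reported later in the text, would then be the average of the three per-class `AC` values under this definition.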

Data acquisition and standardization & running environment

Methods were elaborated in the Supplementary Data.

Statistical analysis

The detailed calculation methods for SN, SP, and AC are provided in the Supplementary Data. The 95% confidence intervals for these metrics, as well as for the AUC value, were calculated using the Clopper-Pearson method and the Hanley-McNeil method, respectively. The chi-square test was used to compare the performance of the AI with that of the expert.
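Both interval methods named above can be written with the standard library alone. The following is a sketch under stated assumptions: the Clopper-Pearson bounds are found by bisection on the binomial tail probabilities rather than through a beta-distribution quantile, the Hanley-McNeil standard error uses the published approximation with Q1 = A/(2 − A) and Q2 = 2A²/(1 + A), and all function names and example counts are hypothetical.

```python
import math


def binom_cdf(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(math.comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k + 1))


def _bisect_increasing(func, iters=100):
    """Find the root in [0, 1] of a function that increases with p."""
    lo, hi = 0.0, 1.0
    for _ in range(iters):
        mid = (lo + hi) / 2
        if func(mid) < 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def clopper_pearson(k, n, alpha=0.05):
    """Exact two-sided CI for a proportion with k successes in n trials."""
    lower = 0.0 if k == 0 else _bisect_increasing(
        lambda p: (1 - binom_cdf(k - 1, n, p)) - alpha / 2)
    upper = 1.0 if k == n else _bisect_increasing(
        lambda p: alpha / 2 - binom_cdf(k, n, p))
    return lower, upper


def hanley_mcneil_se(auc, n_pos, n_neg):
    """Standard error of an AUC (Hanley-McNeil approximation)."""
    q1 = auc / (2 - auc)
    q2 = 2 * auc**2 / (1 + auc)
    var = (auc * (1 - auc)
           + (n_pos - 1) * (q1 - auc**2)
           + (n_neg - 1) * (q2 - auc**2)) / (n_pos * n_neg)
    return math.sqrt(var)


def hanley_mcneil_ci(auc, n_pos, n_neg, z=1.959964):
    """Normal-approximation 95% CI for an AUC, clipped to [0, 1]."""
    se = hanley_mcneil_se(auc, n_pos, n_neg)
    return max(0.0, auc - z * se), min(1.0, auc + z * se)


if __name__ == "__main__":
    # Illustrative counts only; they are not taken from the study's tables.
    print(clopper_pearson(150, 185))
    print(hanley_mcneil_ci(0.90, 60, 125))
```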

Results

Model performance in the lateral photo database

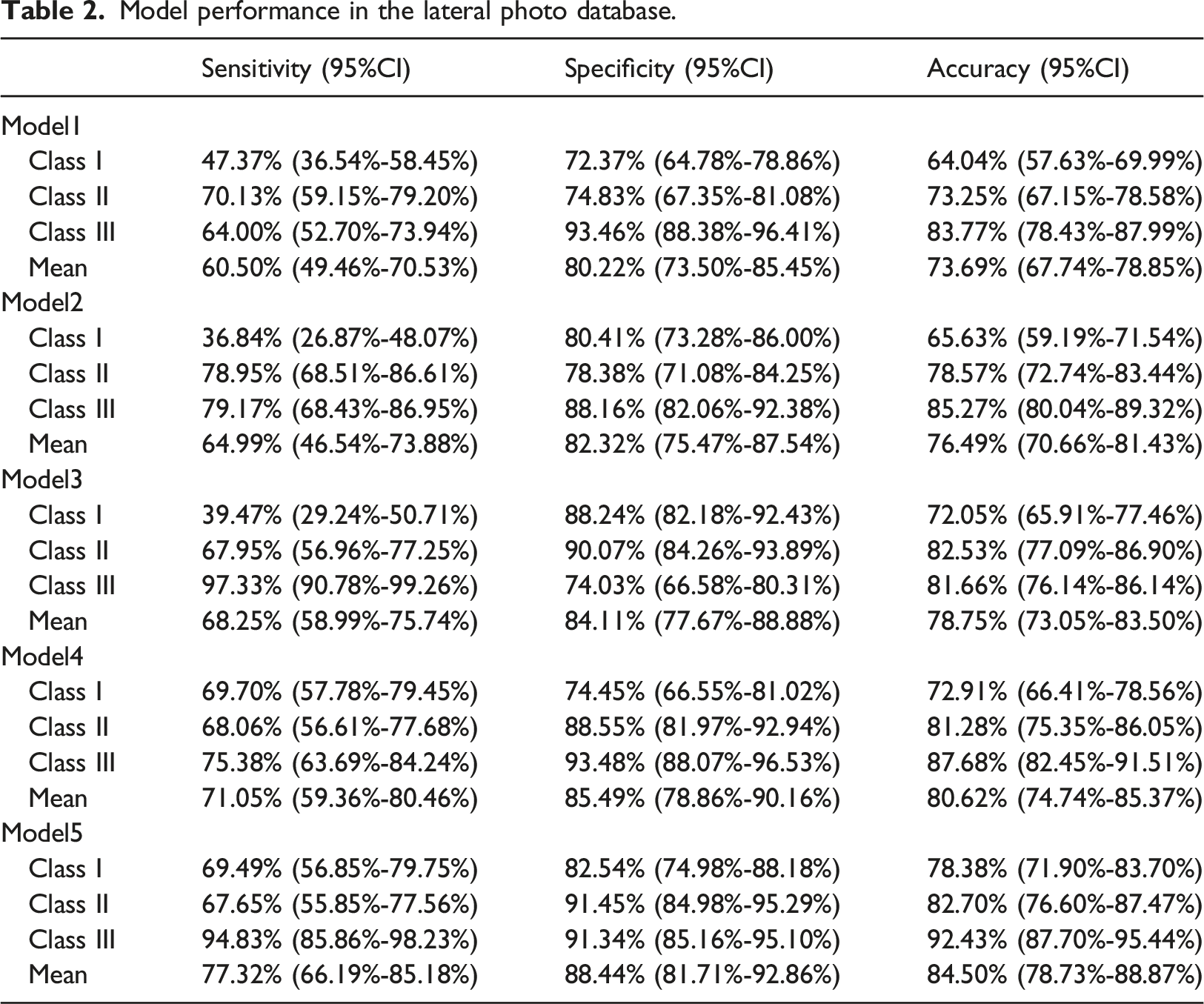

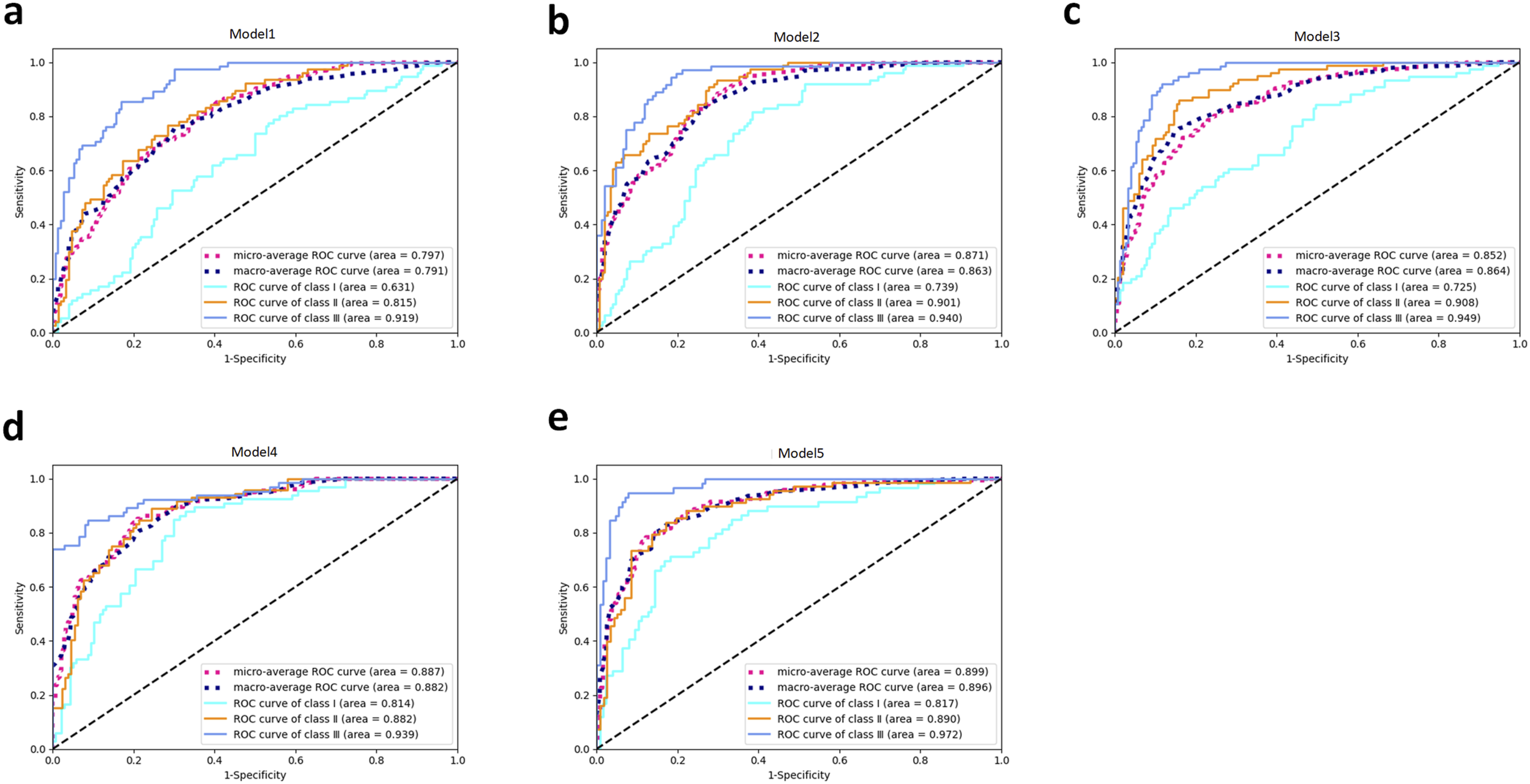

ROC curves and AUC values for each class were determined (Figure 3). Model1 demonstrated relatively unsatisfactory performance, particularly for class Ⅰ, where the AUC value was only 0.631. However, after moderate data preprocessing, Model5 showed significant improvement, achieving commendable performance across all classes, with all AUC values exceeding 0.8 and an average AUC value of about 0.9 (Supplementary Table 1). Across the ROC curves of all models, performance was best for class Ⅲ, followed by class Ⅱ, and finally class Ⅰ.

Figure 3. Receiver operating characteristic (ROC) curves for various models. Initial ResNet50 model (a), Development model (b), Sex model (c), Sex model without ±0.1 SD samples (d), and Sex model without ±0.2 SD samples (e).

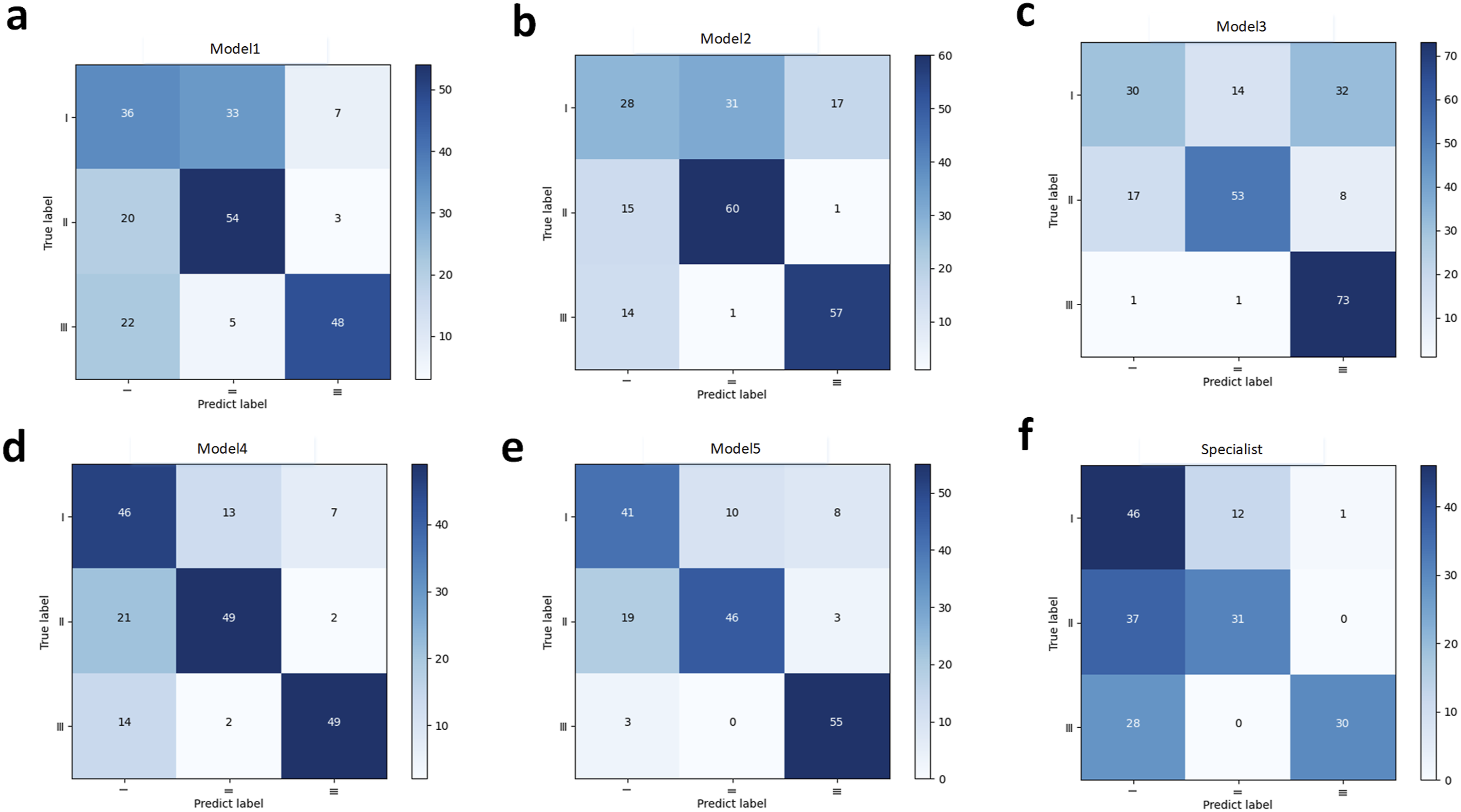

Confusion matrices of all models and the evaluation of Model5 by the orthodontic specialist are depicted in Figure 4. Satisfactory discrimination between class Ⅱ and class Ⅲ was found in all models. After data processing, there was a noticeable improvement in performance compared to the initial Model1. Furthermore, the orthodontic specialist had a tendency to diagnose malocclusion as class Ⅰ, leading to frequent incorrect predictions.

Figure 4. Confusion matrices for different models and conditions. Initial ResNet50 model (a), Development model (b), Sex model (c), Sex model without ±0.1 SD samples (d), Sex model without ±0.2 SD samples (e), and Specialist’s prediction (f).

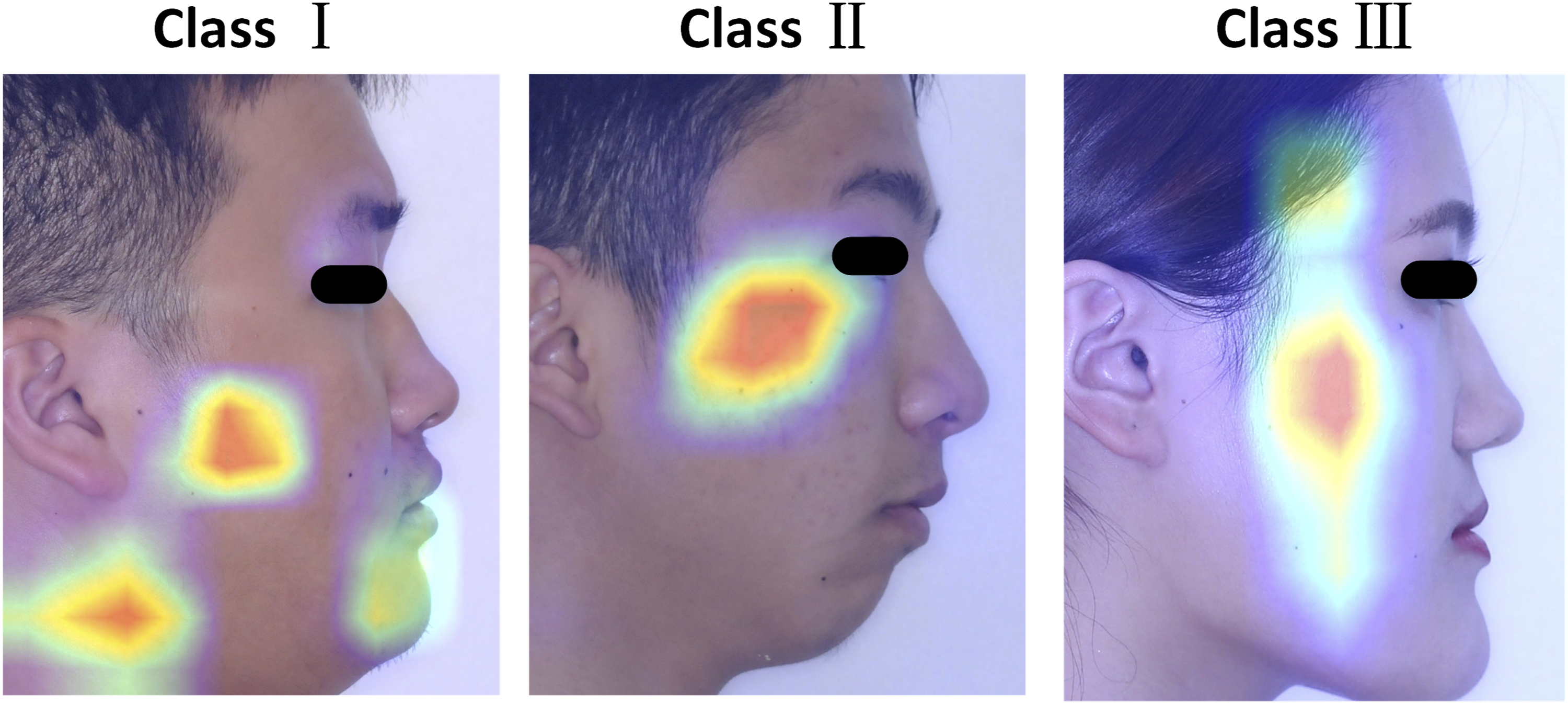

Heat maps were generated using the Grad-CAM technique (Figure 5). In class I, the heat map characteristics were atypical and varied across different photos. However, in all 46 images of class II, the heat areas consistently converged in the middle third of the face, specifically around the zygomatic region. For class Ⅲ, the heat patterns appeared exclusively on the posterior face.

Figure 5. Examples of heat maps generated by the last activation layer.

Discussion

Studies on skeletal malocclusion classification with AI have been popular in orthodontics, with a primary focus on lateral cephalograms.24 A recent breakthrough in this area was the proposal of a one-step diagnosis approach, which showed great potential and served as a valuable guide for further investigation.19 Lateral photos, which are easier to obtain, are also recommended for evaluation purposes. In this study, the predictive system achieved its highest accuracy of 84.50% when using lateral photos. Although the initial model performed poorly, the classification task was accomplished well after subgroup labeling and data processing. These results demonstrate the feasibility of preliminary screening for skeletal malocclusion without relying on radiography.

Skeletal malocclusion, especially in severe class II and class III cases, poses a significant challenge for orthodontists, as mitigating its effects on facial appearance can be highly complex. 25 Consequently, orthodontists generally concur that early intervention is vital for achieving a favorable prognosis in treating skeletal malocclusion. However, many young individuals may remain unaware of their condition, leading to missed opportunities for optimal treatments, such as expansion arch therapy and protraction orthodontic therapy. Therefore, there is an urgent need for a readily accessible screening method for skeletal malocclusion.

Thanks to the widespread use of smartphones and rapid advancements in internet communication technology, the acquisition and sharing of photographic data have become effortless and unencumbered. The integration of photos with AI has led to the emergence of numerous smartphone apps, offering predictions on aspects such as facial aging or genetic traits, often for entertainment purposes. However, these innovations also present potential for practical applications in clinical environments. The COVID-19 pandemic has notably advanced the telemedicine mode, 26 giving rise to various remote smart applications, such as monitoring for diabetes and wound diagnosis.27,28 By integrating an interactive API and building a database within a private cloud system, 29 it becomes feasible to create a smartphone application for detecting malocclusion. Indeed, in the early stages of diagnosis, absolute precision may not be as crucial, making the use of photos for remote smart screening with AI a promising prospect. In the future, a mobile application will be developed with the capability of capturing lateral photos using feature point recognition and action instructions. Subsequently, the captured data will be transmitted to the deep learning model on the host side for classification and assessment. Finally, classification results, along with corresponding explanations and recommendations, will be delivered by the mobile application. In this manner, self-monitoring of malocclusion can be facilitated.

To demonstrate the capability of the established structures, lateral cephalograms were tested first (Supplementary Table 2). The lateral cephalogram system performed better than other semi-automatic methods: our proposed model achieved an AC of 88.54%, compared with 74.5% to 80.72% in past research.30–32 However, a higher AC of 95.7% was reported in the earlier one-step classification study,19 surpassing ours. We consider the main cause to be the different classification criteria in that study (a range of 2.166 ± 3.167° for class Ⅰ), which were based on an individual normalized distribution and resulted in more initial class Ⅰ data. Furthermore, that study used a more radical data processing approach, removing images within ±0.2 SD (±0.63°) and ±0.3 SD (±0.95°) of the cutoffs, whereas a more conservative approach was employed in this study (±0.2°/±0.4°). Its better performance is therefore reasonable, and the different network structure is another potential factor that requires further study. Overall, these results indicate that our proposed model for classifying skeletal malocclusion is feasible.
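The margins quoted in this comparison follow from multiplying each SD fraction by the SD of the class Ⅰ range. As a worked check, assuming that the SD for this study is the 2° of the 2 ± 2° standard (an inference from the stated margins, not a value given explicitly in the text):

```latex
\begin{align*}
\text{Previous study (SD} = 3.167^\circ\text{):}\quad
  & 0.2 \times 3.167^\circ = 0.6334^\circ \approx 0.63^\circ, \\
  & 0.3 \times 3.167^\circ = 0.9501^\circ \approx 0.95^\circ; \\
\text{This study (assumed SD} = 2^\circ\text{):}\quad
  & 0.1 \times 2^\circ = 0.2^\circ, \\
  & 0.2 \times 2^\circ = 0.4^\circ.
\end{align*}
```

In absolute terms, the widest exclusion band in this study (±0.4°) is narrower than the narrowest band in the previous study (±0.63°).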

We aimed to determine whether real photos could be effective, so lateral photos were introduced in this study. The traditional ResNet50 model yielded mediocre results, particularly for class Ⅰ, with a mean AC of 73.69%. However, after incorporating sex or development information, the performance improved noticeably. Although the sensitivity for class Ⅰ decreased slightly, the ROC/AUC results indicated a perceptible improvement for class Ⅰ. This suggests that both sex and development information play a significant role in the classification of skeletal malocclusion. To simplify the process, we chose only sex information for subsequent research. Ultimately, the best model, Model5, showed a significant increase in the sensitivity for class Ⅰ and a mean AC of 84.50%. To maintain consistency with lateral cephalograms and reduce variables, we employed sophisticated superimposed shear. However, when we trained the model using the initial lateral photos to streamline the process, we achieved a similar result. The direct use of lateral photos is therefore encouraged in future research. Comparing the performance of the best lateral cephalogram model (88.54% AC) with our proposed lateral photo model (84.50% AC), we concluded that a simple lateral photo would suffice to identify severe malocclusion. This could serve as a preclinical self-monitoring measure, leading to a higher visiting rate for malocclusion in the future.

To simplify labeling, ANB was treated as the sole criterion in this study. For a more precise classification, Wits values should be taken into account in further studies. Moreover, more complex models that take both sex and development information as input are anticipated. It would also be worth modifying the network structure based on more recent networks such as SeNet and MobileNet.33,34 Because more females were willing to have malocclusion checked and treated, the female-to-male ratio was about 3:1 (1573:536); a more balanced distribution is desirable in subsequent studies. We believe this attempt can help raise awareness of severe malocclusion at an early stage, with an acceptable accuracy of 84.5% on lateral photos, and further improvement can be expected. As previously mentioned, the accuracy for lateral cephalograms in this experiment is lower than that of the previous study (88.54% vs. 95.7%), which we attribute to the relatively narrow classification range and different criteria. With adjusted classification criteria and a network based on more recently proposed deep learning models, we expect the performance of the model on photos to improve further.

Because lateral photos lack visible landmarks for classification, the Grad-CAM technique was employed to identify characteristic regions. However, the heat maps were not typical in class Ⅰ, and more data would likely be required to achieve convergence for this intermediate class with its narrow range. In class Ⅱ, the hottest area was the zygoma, suggesting that the middle third of the face, and zygomatic morphology in particular, could be distinctive. In class Ⅲ, the longitudinal heat area was conjectured to be related to the craniofacial proportion or the shape of the posterior face. It appears that the posterior face exhibited more skeletal characteristics. With the advancement of facial scans, analyzing the length, proportion, volume, and protrusion of the posterior face in different classes in three dimensions may become possible.35 The use of Grad-CAM represents a preliminary attempt. Beyond this approach, we hope that the unique facial features of the different classes can be comprehensively identified and studied.

The primary limitation of this study was the absence of specific diagnostic criteria for lateral photos, making it challenging to compare the performance of AI with that of specialists. However, experienced doctors can form an initial impression to suggest possible treatments before taking an X-ray. Therefore, the orthodontist was asked to classify the images based on clinical experience. A notable difference was observed between AI and the specialist’s classifications (84.50% versus 71.89% in 185 samples, χ2 = 25.89, p < 0.001). It was revealed that the images were primarily classified based on the relationship between the lips and the E-line, guided by experience. 36 However, due to the influence of soft tissue compensation, many images were mistakenly categorized into class Ⅰ, leading to a significant disparity between the two methods. This indicates that relying solely on the evaluation of anterior soft tissues may not be reliable for judging skeletal malocclusion.

To improve performance, we set the initial sample size larger than in previous studies, as more data generally enhances deep learning outcomes. Moreover, overfitting is prone to occur with image data augmentation on small datasets, especially those with fewer than 1000 samples. 37 In this study, we included 2109 matched lateral photographs and cephalograms. We believe that with a more developed database, AI will achieve better success in self-screening for skeletal malocclusion at the preclinical stage.

Conclusion

This study highlighted the potential of AI in offering a one-step preliminary classification of skeletal malocclusion using lateral photos. Our model suggests an innovative approach to self-diagnosing skeletal malocclusion through remote-assisted family medicine. With advancements in communication techniques, the prospects of self-health management are promising. However, more studies comparing this system or summarizing characteristics are needed. In this way, widespread application for most adolescents will be achieved sooner or later.

Supplemental Material

Supplemental Material - The concept of AI-assisted self-monitoring for skeletal malocclusion

Supplemental Material for The concept of AI-assisted self-monitoring for skeletal malocclusion by Hexian Zhang, Chao Liu, Pingzhu Yang, Sen Yang, Qing Yu, and Rui Liu in Health Informatics Journal

Footnotes

Acknowledgements

We extend our gratitude to Qing Yu and Chao Liu from Nanjing Stomatological Hospital for their invaluable assistance during the project. Additionally, we are grateful to Rui Liu and Pingzhu Yang from Daping Hospital for their insightful suggestions on methodological enhancements.

Author’s contribution

All authors participated in the conceptualization, drafting, and critical review of the paper. Everyone has consented to the submission and potential publication of this manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Clinical Innovation Project of Army Medical University (No. 2019XLC2014).

Ethical statement

Disclaimer

The views expressed in this article are solely those of the author and do not reflect the official position of any affiliated institution or funder.

Data availability statement

The datasets generated and analyzed during the current study are available from the corresponding author on reasonable request.

Supplemental Material

Supplemental material for this article is available online.

References
