Abstract

Physician categorizations of electronic health record (EHR) data (e.g., depression) into sensitive data categories (e.g., Mental Health), and physicians' perspectives on the adequacy of these categories for classifying medical record data, were assessed. Twenty physicians (10 psychiatrists, each paired with one of 10 non-psychiatrist physicians) classified 1000 data items from patient EHRs into data categories via a survey. Cluster-adjusted chi-square tests and mixed models were used for analysis. Ten items per physician pair (100 items in total) were selected for discussion during 20 follow-up interviews. Interviews were thematically analyzed. Survey item categorization yielded 500 (50.0%) agreements, 175 (17.5%) disagreements, and 325 (32.5%) partial agreements. Categorization disagreements were associated with physician specialty and implied patient history. Non-psychiatrists selected significantly (p = .016) more data categories than psychiatrists when classifying data items. Endorsement of the Mental Health and Substance Use categories was significantly associated (p = .001) for both provider types. In the thematic analysis, Encounter Diagnosis (100%), Problems (95%), Health Concerns (90%), and Medications (85%) were the themes discussed most often when deciding the sensitivity of medical information. Most (90.0%) interview participants suggested adding data categories. Study findings may guide the evolution of digital patient-controlled granular data sharing technology and processes.

Keywords

Introduction

Sensitive health data sharing is integral to high-quality, comprehensive patient care. 1 Health data from categories such as domestic violence and mental health are typically considered “sensitive” because disclosure carries a high risk of social stigma and even physical harm. 2 What is considered sensitive information can affect medical record sharing and release.1,3,4 Factors such as comprehension, patients' own experiences, stigma, and the perceived applicability of the information affect patients' perceptions of data sensitivity.5,6 Because there is no universal agreement on what constitutes sensitive data, and because sensitivity is subjective, a wide variety of individual preferences for sensitive health data access and sharing has been considered in recent years. 5

An important strategic goal of the Office of the National Coordinator for Health Information Technology (ONC) is to “build trust and participation in health information technology (IT) and electronic health information exchange by incorporating effective privacy and security into every phase of health IT development, adoption, and use”. 7 The ONC recommended giving patients more granular control over the use and disclosure of their health data, for instance, sharing all health records with all providers except records related to a history of depression.

The National Committee on Vital and Health Statistics (NCVHS), a statutory advisory body that advises the Secretary of Health and Human Services on health data, identified the need for technology that can assist with the management of sensitive health information. 8 The NCVHS proposed a data taxonomy that includes domestic violence, genetic information, mental health, reproductive health, and substance abuse as sensitive data categories. 5 While other sensitive data taxonomies have been used,6,9–12 the NCVHS data taxonomy remains the most frequently examined in published studies.13–18

Based on the ONC and NCVHS recommendations, Karway et al. pilot tested a consent-based granular data sharing technology with 200 English- and Spanish-speaking patients with behavioral health conditions. 2 Most (83%) participants agreed that the NCVHS taxonomy adequately captured their data privacy needs. However, to the best of our knowledge, the NCVHS taxonomy has not been validated by health providers to assess its adequacy for categorizing the sensitive medical record data documented within an individual’s electronic health or medical record.

There is limited research on provider views of patient-driven data sharing and data sensitivity. Studies have found that providers believe patient limits on data access may adversely impact patient care.11,17,19,20 Ivanova et al. interviewed 28 behavioral health providers and professionals to assess their views on patient control of medical record sharing and found that providers’ opinions may be impacted by the medical and mental health problems of their patients.19,20 The study found that behavioral health professionals caring for individuals with a serious mental illness displayed lower levels of data sharing concern and emphasized patient perspectives (63.6%), compared to those caring for individuals with general mental health conditions.

Objectives

Our goals were to assess: 1) how physicians categorize medical record data using provided sensitive data categories, and 2) how physicians perceive the adequacy of sensitive data categories for classifying medical record data. Our findings are intended to inform key stakeholders, including ONC and NCVHS, on policies that support granular patient-driven consent processes and technology development.

Methods

Patient Electronic Health Data Access

On July 18, 2017, the Arizona State University Institutional Review Board approved study #00006227, which asked patients for written consent to give researchers access to their electronic health record (EHR) data.

Thirty-six adult patients (≥ 21 years) receiving care from two integrated community-based health clinics (physical and behavioral health) consented to give access to their de-identified EHR data for research. Study participants were recruited through flyers.

Physician Recruitment

On August 13, 2022, the Arizona State University Institutional Review Board approved study #00004359, which asked physicians for written consent to participate in an electronic survey followed by a remote interview.

Inclusion criteria were age ≥ 18 years, English proficiency, and an MD, DO, or MBBS degree. Participants who completed the survey and interview were invited to contribute to manuscript revisions and to approve the final version.

Survey

An online survey was designed to capture physicians' categorization of health data based on perceived data sensitivity (see Supplementary Materials). A total of 1000 data items (38 allergies, 388 labs, 266 medications, 234 diagnoses, and 74 procedures/services) were extracted from the 36 EHRs. Data items could not be traced back to the source EHR. Of the 1000 data items, 170 were duplicates (present in more than one patient EHR). The selected data items were divided into 10 sets of 100 items each.
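The paper reports only the counts involved in building the survey sets, not the allocation procedure. As a minimal sketch, assuming items were shuffled and then cut into equal consecutive chunks, the split could look like this in Python; `build_item_pool` and `split_into_sets` are hypothetical helpers, and the placeholder tuples stand in for real EHR entries.

```python
import random
from collections import Counter

# Item counts by data type as reported in the Methods (they sum to 1000).
ITEM_COUNTS = {
    "allergy": 38,
    "lab": 388,
    "medication": 266,
    "diagnosis": 234,
    "procedure/service": 74,
}


def build_item_pool(counts):
    """Hypothetical helper: placeholder items (data_type, index) instead of real EHR entries."""
    return [(dtype, i) for dtype, n in counts.items() for i in range(n)]


def split_into_sets(items, n_sets, seed=0):
    """Hypothetical helper: shuffle, then divide into n_sets equally sized survey sets.

    Shuffle-then-chunk is an assumption; the study does not describe its allocation.
    """
    if len(items) % n_sets != 0:
        raise ValueError("items must divide evenly into sets")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed for a reproducible split
    size = len(shuffled) // n_sets
    return [shuffled[k * size:(k + 1) * size] for k in range(n_sets)]


pool = build_item_pool(ITEM_COUNTS)
survey_sets = split_into_sets(pool, n_sets=10)
print(len(pool), [len(s) for s in survey_sets])  # 1000 [100, 100, 100, 100, 100, 100, 100, 100, 100, 100]
print(Counter(dtype for dtype, _ in survey_sets[0]))  # data-type mix of the first set
```

A random split like this does not guarantee the same data-type mix in every set; stratifying by data type would be an alternative design choice.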

After consent and five demographic questions, the instructions directed participants to sort 100 data items (e.g., depression diagnosis) into the five sensitive data categories defined by NCVHS (Domestic Violence, Genetic Information, Mental Health, Reproductive Health, and Substance Use) or into an Other category for items considered non-sensitive.2,6,21 Of note, the NCVHS Substance Abuse category was renamed Substance Use, as recommended by Soni et al. and Karway et al.2,6 On-demand education about the data categories was provided via info button links.22–26 The survey concluded with three opportunities to give feedback on the process and the survey.

Participants were recruited through personal digital and telephone communication supported by a study flyer. The aim was to recruit 10 psychiatrists and 10 non-psychiatrists. Each psychiatrist was randomly paired with one non-psychiatrist physician, for 10 pairs in total, and both members of a pair received the same 100 data items to categorize (Figure 1). In summary, the study design comprised creating a survey based on EHR data, forming 10 psychiatrist/non-psychiatrist pairs, asking participants to classify EHR data using the data categories, comparing data categorizations within pairs, and eliciting the rationale for data categorizations through interviews.
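The pairing and set assignment can be sketched as follows, assuming a simple shuffle of the non-psychiatrist list (the exact randomization method is not reported). The participant IDs, set labels, and the functions `pair_physicians` and `assign_sets` are hypothetical placeholders.

```python
import random


def pair_physicians(psychiatrists, non_psychiatrists, seed=0):
    """Randomly pair each psychiatrist with one non-psychiatrist (assumed shuffle-based)."""
    if len(psychiatrists) != len(non_psychiatrists):
        raise ValueError("groups must be the same size")
    partners = list(non_psychiatrists)
    random.Random(seed).shuffle(partners)
    return list(zip(psychiatrists, partners))


def assign_sets(pairs, survey_sets):
    """Give both members of pair k the same survey set k."""
    if len(pairs) != len(survey_sets):
        raise ValueError("need exactly one survey set per pair")
    assignment = {}
    for (psych, non_psych), items in zip(pairs, survey_sets):
        assignment[psych] = items
        assignment[non_psych] = items
    return assignment


psychs = [f"P{i}" for i in range(1, 11)]   # hypothetical psychiatrist IDs
others = [f"N{i}" for i in range(1, 11)]   # hypothetical non-psychiatrist IDs
pairs = pair_physicians(psychs, others)
sets = [f"set{k}" for k in range(1, 11)]   # stand-ins for the 100-item sets
assignment = assign_sets(pairs, sets)
for psych, non_psych in pairs:
    print(psych, non_psych, assignment[psych])
```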

As a result of the surveys, each of the 1000 data items was categorized by one psychiatrist and one non-psychiatrist physician. Following the methodology of Soni et al., 6 each item's pair of categorizations was classified as an agreement, partial agreement, or disagreement. Agreement occurred when both participants assigned exactly the same category or set of categories to an item; for instance, both participants categorized the depression diagnosis as Mental Health. Partial agreement occurred when the two participants' category sets differed but shared at least one category; for instance, for the medication Vicodin, one participant selected Substance Use while the other selected both Substance Use and Other. Disagreement occurred when the two participants assigned no category in common; for instance, the lab test corresponding to elevated liver enzymes was categorized as Substance Use by one participant and as Other by the second. Results were analyzed with descriptive statistics.
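Because each participant could assign several categories to an item, the three outcomes reduce to set comparisons. A minimal Python sketch, assuming each response is stored as a set of category names (`classify_pair` is a hypothetical helper, not the authors' code), applied to the three worked examples above:

```python
from collections import Counter


def classify_pair(cats_a, cats_b):
    """Compare two participants' category sets for a single data item."""
    if cats_a == cats_b:
        return "agreement"            # identical category sets
    if cats_a & cats_b:
        return "partial agreement"    # different sets sharing at least one category
    return "disagreement"             # no category in common


# The three worked examples from the text.
examples = [
    ({"Mental Health"}, {"Mental Health"}),             # depression diagnosis
    ({"Substance Use"}, {"Substance Use", "Other"}),    # Vicodin
    ({"Substance Use"}, {"Other"}),                     # elevated liver enzymes
]
tally = Counter(classify_pair(a, b) for a, b in examples)
print(tally)
```

Running the same function over all 1000 item pairs and tallying the labels yields the kind of agreement counts reported in the Results.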

Between-provider type (psychiatrist vs. non-psychiatrist) differences in the number of data categories endorsed for each health record item were examined using a mixed effects Poisson regression model with a fixed effect for provider type and a random intercept term to account for non-independence within provider pairs. Next, a cluster-adjusted chi-square test was used to examine the overall association between endorsement of the Mental Health and Substance Use categories, separately within each provider type subsample, accounting for potential non-independence (clustering) of responses within provider pairs. 27 Finally, a mixed effects multivariate logistic regression model was used to examine between-provider type differences in the likelihood of endorsing Mental Health vs. Substance Use via a fixed effect for the Provider Type x Data Category interaction. These models included random intercept terms for provider pair (to account for clustering at the provider pair level) and provider (i.e., participant; to account for within-participant clustering in the repeated measurements).
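The cluster-adjusted chi-square test (reference 27) and the mixed models are not reproduced here. As a simpler illustration of the data they start from, the sketch below builds the 2 x 2 table of Mental Health by Substance Use endorsement for one provider type and computes the ordinary (unadjusted) Pearson chi-square statistic. Because it treats every response as independent, this naive statistic ignores the within-pair and within-participant clustering that the authors' adjusted test and random intercepts account for. The function names and the toy responses are hypothetical.

```python
MH, SU = "Mental Health", "Substance Use"


def mh_su_table(responses):
    """2 x 2 counts: rows = MH endorsed (yes, no); columns = SU endorsed (yes, no)."""
    table = [[0, 0], [0, 0]]
    for cats in responses:
        row = 0 if MH in cats else 1
        col = 0 if SU in cats else 1
        table[row][col] += 1
    return table


def pearson_chi_square(table):
    """Unadjusted Pearson chi-square statistic; assumes no zero row or column totals."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, r_tot in enumerate(row_totals):
        for j, c_tot in enumerate(col_totals):
            expected = r_tot * c_tot / n  # expected count under independence
            stat += (table[i][j] - expected) ** 2 / expected
    return stat


# Toy responses for one provider type (each item's set of endorsed categories).
responses = [{MH}, {MH, SU}, {SU}, {"Other"}, {MH, SU}, {"Other"}]
table = mh_su_table(responses)
print(table)                                # [[2, 1], [1, 2]]
print(round(pearson_chi_square(table), 4))  # 0.6667
```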

Interview

A semi-structured interview script (see Supplementary Materials) was created to explore the rationale behind a subset of data item categorizations. All survey participants were invited to a follow-up interview scheduled after the survey. The interviews were conducted via teleconference and video recorded. Each interview began with verbal consent and consisted of four open-ended questions covering the participant’s clinical training and experience, an in-depth review of 10 data items the participant had categorized in the survey, a discussion of the NCVHS categories, and three study feedback questions.

In total, 100 data items were discussed during the 20 one-on-one interviews. Both members of each survey pair were assigned the same 10 data items for discussion, randomly selected from among the pair’s disagreements and partial agreements. Participants could change their data categorizations during the interview, and all responses were recorded.
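The random draw of interview items can be sketched in Python, assuming each pair's outcomes are stored as a mapping from item ID to outcome label (the item IDs, the outcome pattern, and `select_interview_items` are hypothetical):

```python
import random

ELIGIBLE = {"disagreement", "partial agreement"}


def select_interview_items(pair_outcomes, k=10, seed=0):
    """Randomly draw k items whose pair outcome was a disagreement or partial agreement.

    pair_outcomes maps item ID -> outcome label for one physician pair.
    """
    eligible = sorted(item for item, outcome in pair_outcomes.items()
                      if outcome in ELIGIBLE)
    if len(eligible) < k:
        raise ValueError(f"only {len(eligible)} eligible items, need {k}")
    return random.Random(seed).sample(eligible, k)  # sampling without replacement


# Hypothetical outcomes for one pair's 100 items.
labels = ["agreement", "partial agreement", "disagreement"]
outcomes = {f"item{i:03d}": labels[i % 3] for i in range(100)}
chosen = select_interview_items(outcomes)
print(chosen)
```

Both members of the pair would then be interviewed about the same `chosen` list.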

Interviews were led by a researcher (IB), and the audio recordings were transcribed by a third party. Transcripts were checked for accuracy by a researcher who had not conducted the interviews (AP). Two researchers (IB, KS) separately read the interview transcripts, quantified demographics and other metrics (such as the number of answer changes and added/removed categories), and selected relevant quotes. Inconsistencies were resolved by consensus with a third researcher (AG).
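Counting added and removed categories amounts to set differences between a participant's survey answer and interview answer for each reviewed item. A minimal sketch, assuming both answers are stored as sets per item; `category_changes` and all example answers below are hypothetical, not study data:

```python
from statistics import mean


def category_changes(before, after):
    """Count categories added and removed across one participant's reviewed items.

    before/after map item -> set of categories (survey answer vs. interview answer).
    """
    added = sum(len(after[item] - before[item]) for item in before)
    removed = sum(len(before[item] - after[item]) for item in before)
    return added, removed


# Hypothetical survey vs. interview answers for one participant.
before = {
    "ABO and Rh group panel": {"Other"},
    "Vicodin": {"Substance Use"},
    "Decreased libido": {"Reproductive Health", "Other"},
}
after = {
    "ABO and Rh group panel": {"Other", "Genetic Information", "Reproductive Health"},
    "Vicodin": {"Substance Use"},
    "Decreased libido": {"Reproductive Health", "Mental Health"},
}
print(category_changes(before, after))  # (3, 1)

# Group means are then taken over participants' (added, removed) totals.
per_participant = [category_changes(before, after), (1, 0)]  # second entry hypothetical
print(mean(a for a, _ in per_participant), mean(r for _, r in per_participant))
```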

The interview transcripts were analyzed following the six phases of Braun and Clarke's thematic analysis guidelines, using the MAXQDA software.28,29 The classes and definitions from version 2 of the United States Core Data for Interoperability (USCDI) taxonomy were used as themes. 30 Meaningful segments of conversation were the units of coding. Two researchers (VP, RS) independently coded the transcripts with potential themes. Inconsistencies were resolved by consensus; when consensus could not be reached, a third researcher (AG) resolved the disagreement.
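The authors coded in MAXQDA; the sketch below does not reproduce any MAXQDA feature. It only illustrates, in plain Python, how segments needing consensus discussion could be flagged, assuming each coder's work is exported as a mapping from segment ID to a set of theme labels. `coding_disagreements` and the example codes are hypothetical.

```python
def coding_disagreements(coder_a, coder_b):
    """Segments whose theme sets differ between two independent coders.

    A segment missing from one coder's export is treated as coded with no themes.
    """
    segments = sorted(set(coder_a) | set(coder_b))
    return {
        seg: (coder_a.get(seg, set()), coder_b.get(seg, set()))
        for seg in segments
        if coder_a.get(seg, set()) != coder_b.get(seg, set())
    }


coder_a = {"seg1": {"Encounter Diagnosis"}, "seg2": {"Problems", "Health Concerns"},
           "seg3": {"Medications"}}
coder_b = {"seg1": {"Encounter Diagnosis"}, "seg2": {"Problems"},
           "seg4": {"Medications"}}
for seg, (a, b) in coding_disagreements(coder_a, coder_b).items():
    print(seg, sorted(a), sorted(b))  # candidates for consensus or third-coder review
```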

Results

Demographics

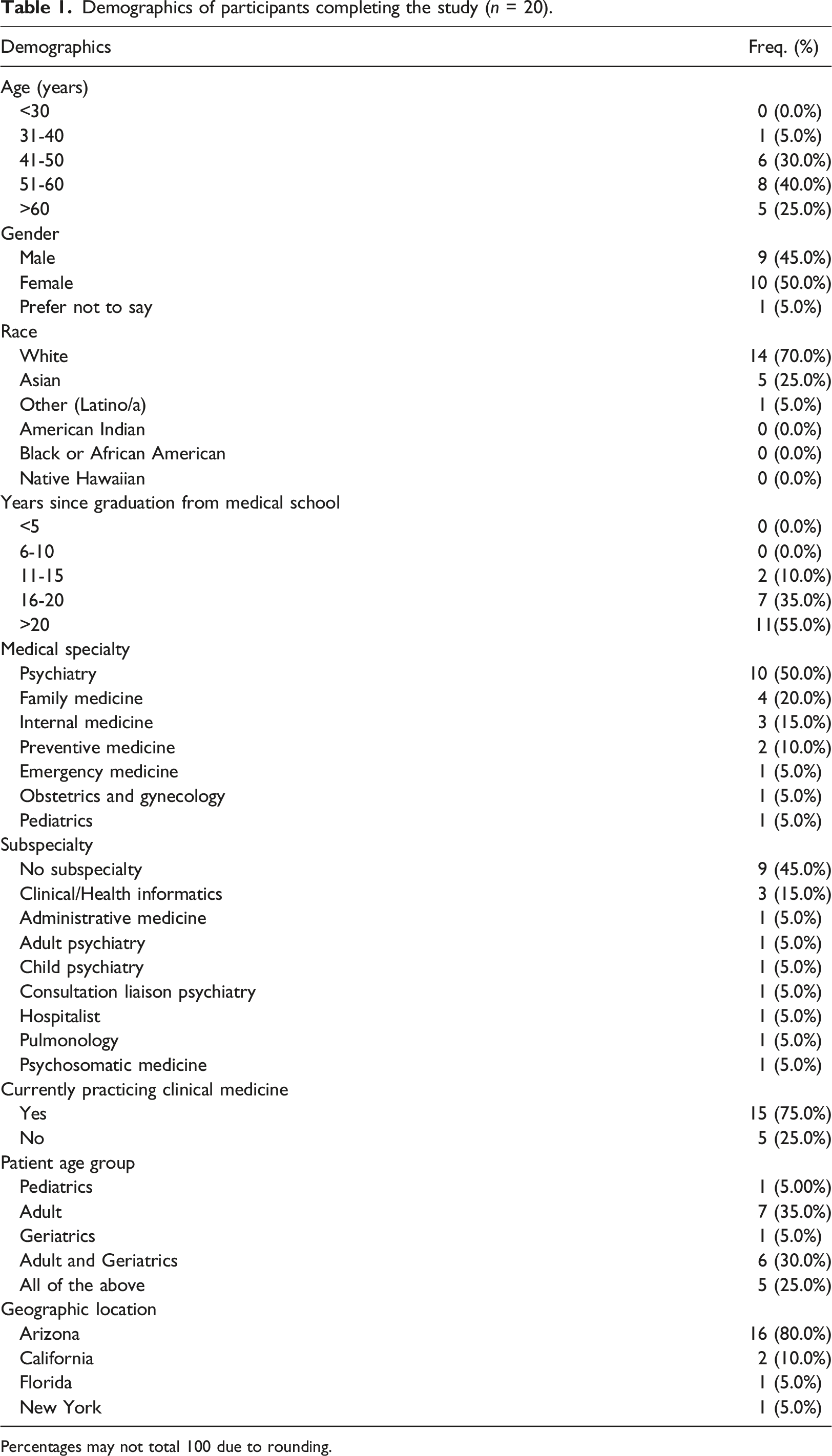

Demographics of participants completing the study (n = 20).

Percentages may not total 100 due to rounding.

Medical Record Categorizations

The 1000 health data items categorized by participants yielded 500 (50.0%) agreements, 175 (17.5%) disagreements, and 325 (32.5%) partial agreements. As shown in Figure 2(a), Allergies had the highest proportion of disagreements (31.6% of 38 items), and Medications had the highest proportion of agreements (62.4% of 266 items).

(a) Survey disagreements, partial agreements, and agreements by data type; (b) Survey categorizations based on participant type and data categories.

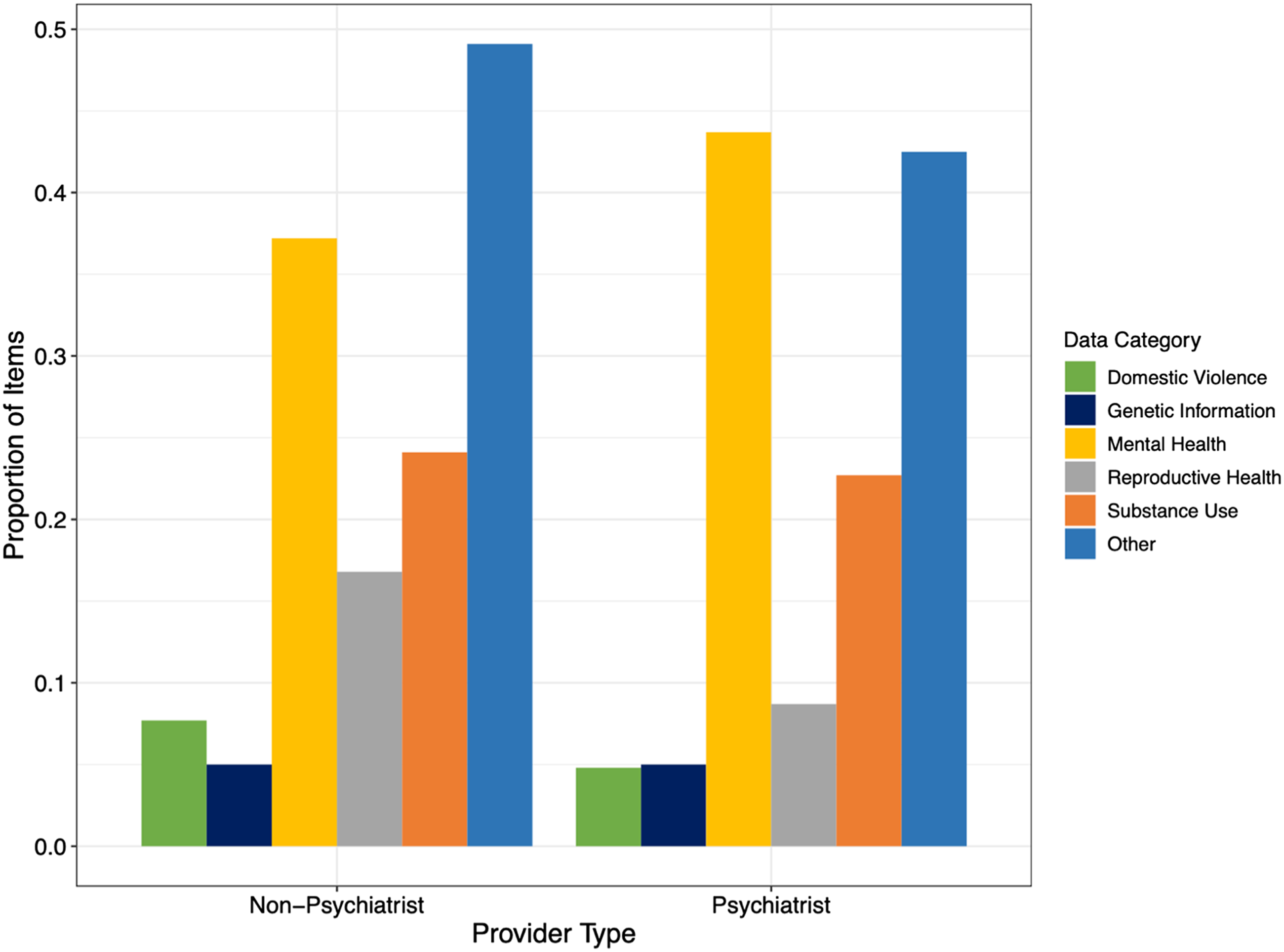

As shown in Figure 2(b), both psychiatrists and non-psychiatrist physicians used the Other category most often (407 and 459 times, respectively). Psychiatrists assigned Mental Health more often than non-psychiatrists (406 vs. 366 times). The least used categories were Domestic Violence (82 times for psychiatrists and 111 times for non-psychiatrists) and Genetic Information (59 times for psychiatrists and 68 times for non-psychiatrists). One participant commented: “In particular, Genetic [Information], Mental Health, Reproductive Health, Substance Use for me are pretty broad but inclusive, but Domestic Violence is not.”

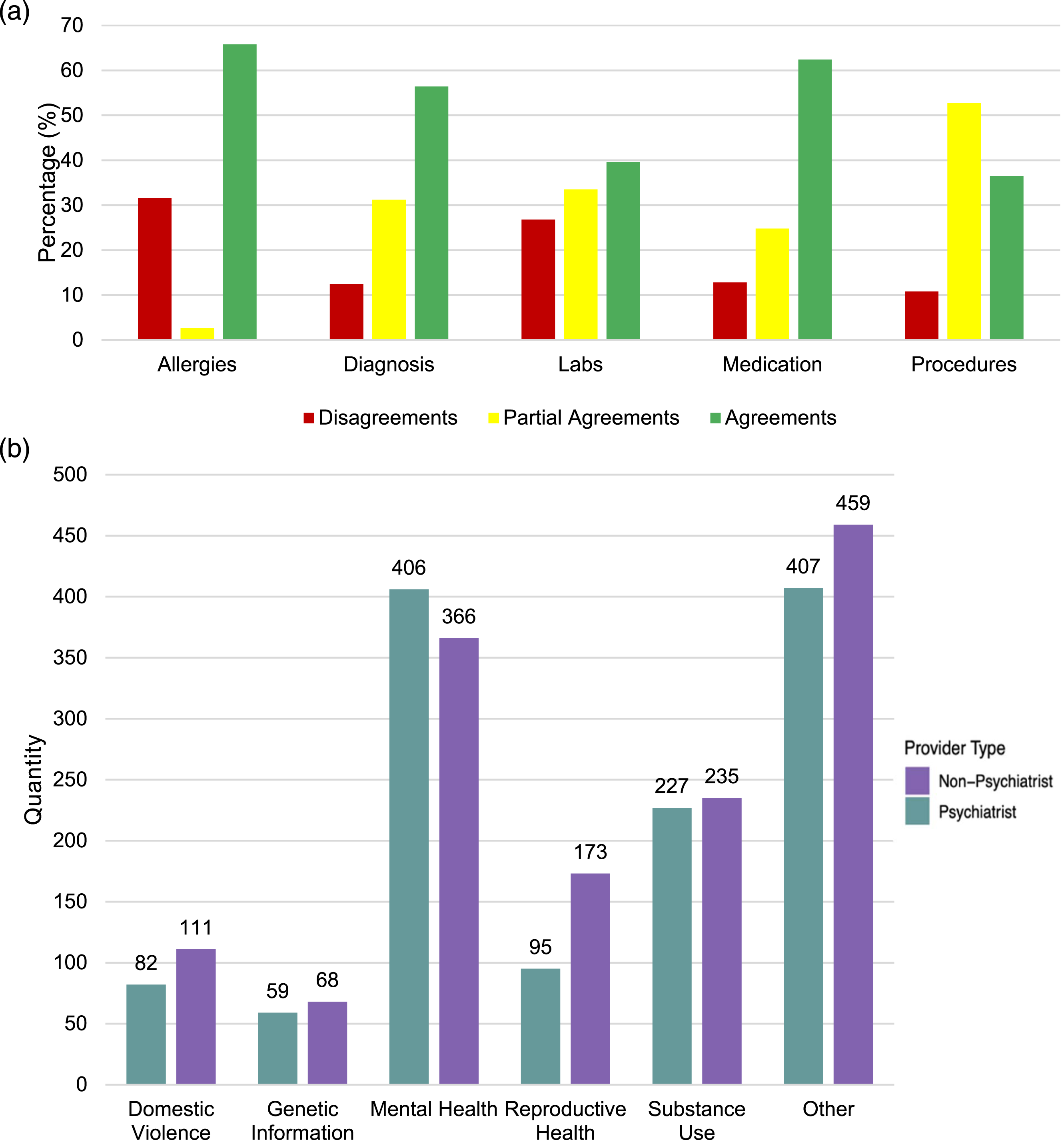

Non-psychiatrists selected significantly (p = .016) more data categories per item (model-predicted M = 1.38, SE = 0.08) than did psychiatrists (M = 1.25, SE = 0.08). As shown in Figure 3, the groups were very similar in the proportion of items classified under two or three data categories, but non-psychiatrists were less likely than psychiatrists to classify items under a single data category and more likely to endorse four or five data categories.

Number of data categories endorsed per item by provider type.
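The means reported above are model-predicted values from the mixed effects Poisson model, which is not reproduced here. The descriptive tabulation behind a plot like Figure 3 can be sketched as follows, assuming each response is a set of endorsed categories; `categories_per_item` and the toy responses are hypothetical.

```python
from collections import Counter
from statistics import mean


def categories_per_item(responses):
    """Share of items endorsed with 1, 2, 3, ... categories (descriptive, not model-based)."""
    counts = Counter(len(cats) for cats in responses)
    total = len(responses)
    return {k: counts[k] / total for k in sorted(counts)}


# Hypothetical responses for one provider type.
responses = [
    {"Other"},
    {"Mental Health"},
    {"Mental Health", "Substance Use"},
    {"Other"},
    {"Mental Health", "Substance Use", "Other"},
]
print(categories_per_item(responses))   # {1: 0.6, 2: 0.2, 3: 0.2}
print(mean(len(c) for c in responses))  # 1.6 (raw mean, not a model prediction)
```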

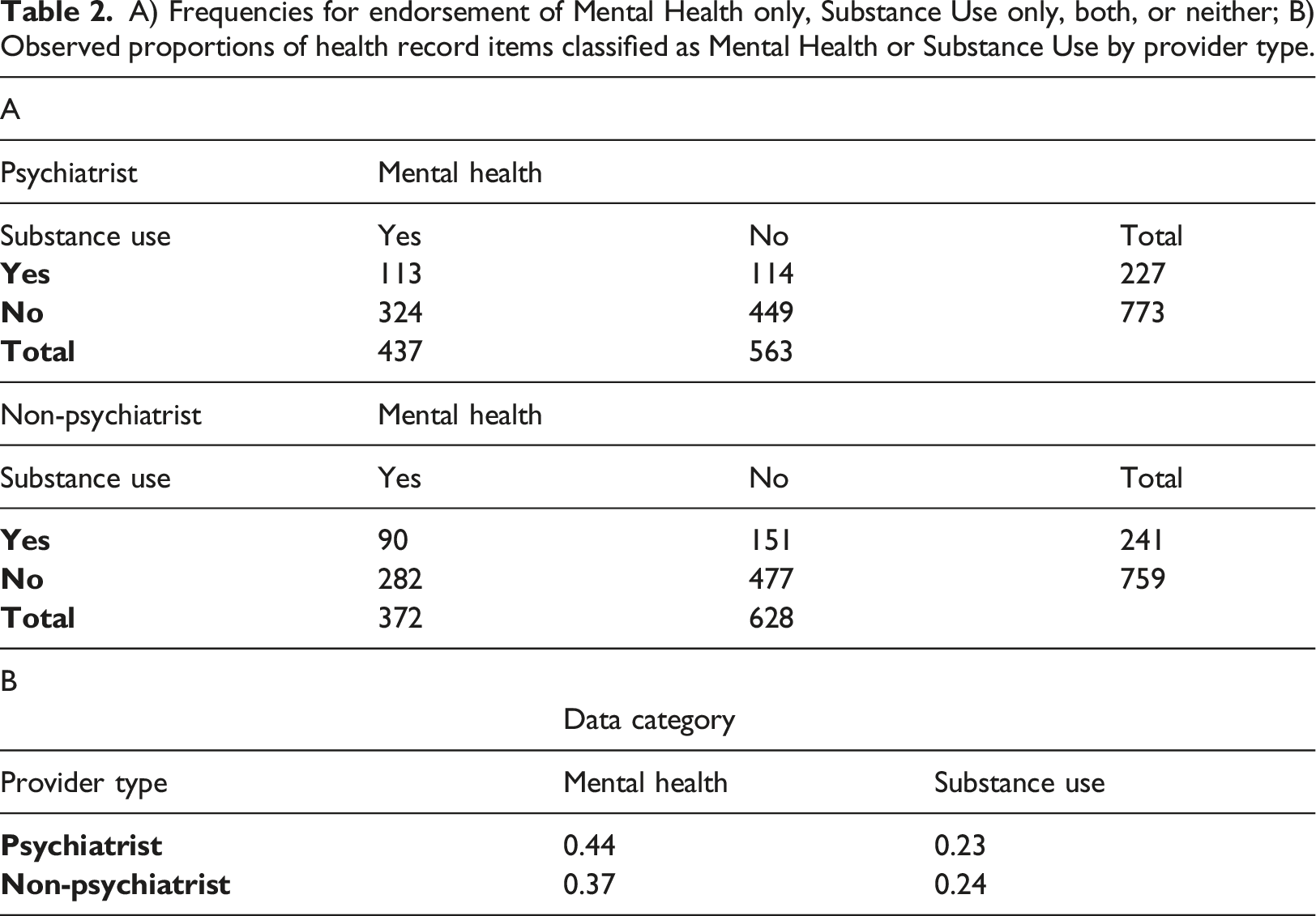

A) Frequencies for endorsement of Mental Health only, Substance Use only, both, or neither; B) Observed proportions of health record items classified as Mental Health or Substance Use by provider type.

Mixed models addressing between-provider type (psychiatrist vs. non-psychiatrist) differences in the likelihood of endorsing Mental Health vs. Substance Use showed that both groups were more likely to endorse Mental Health than Substance Use (model-predicted probabilities = 0.40 and 0.22, respectively, p < .001) (Table 2(b)). However, this difference was qualified by a significant Provider Type x Data Category interaction (OR = 0.72, 95% CI [0.54, 0.95], p = .022): the Mental Health vs. Substance Use difference was more pronounced among psychiatrists (model-predicted probabilities = 0.44 and 0.22, respectively) than among non-psychiatrists (0.36 vs. 0.23, respectively) (Figure 4).

Proportion of items classified as belonging to each data category, by provider type. Because each item could be classified as belonging to multiple data categories, proportions within provider types do not sum to 1.0.

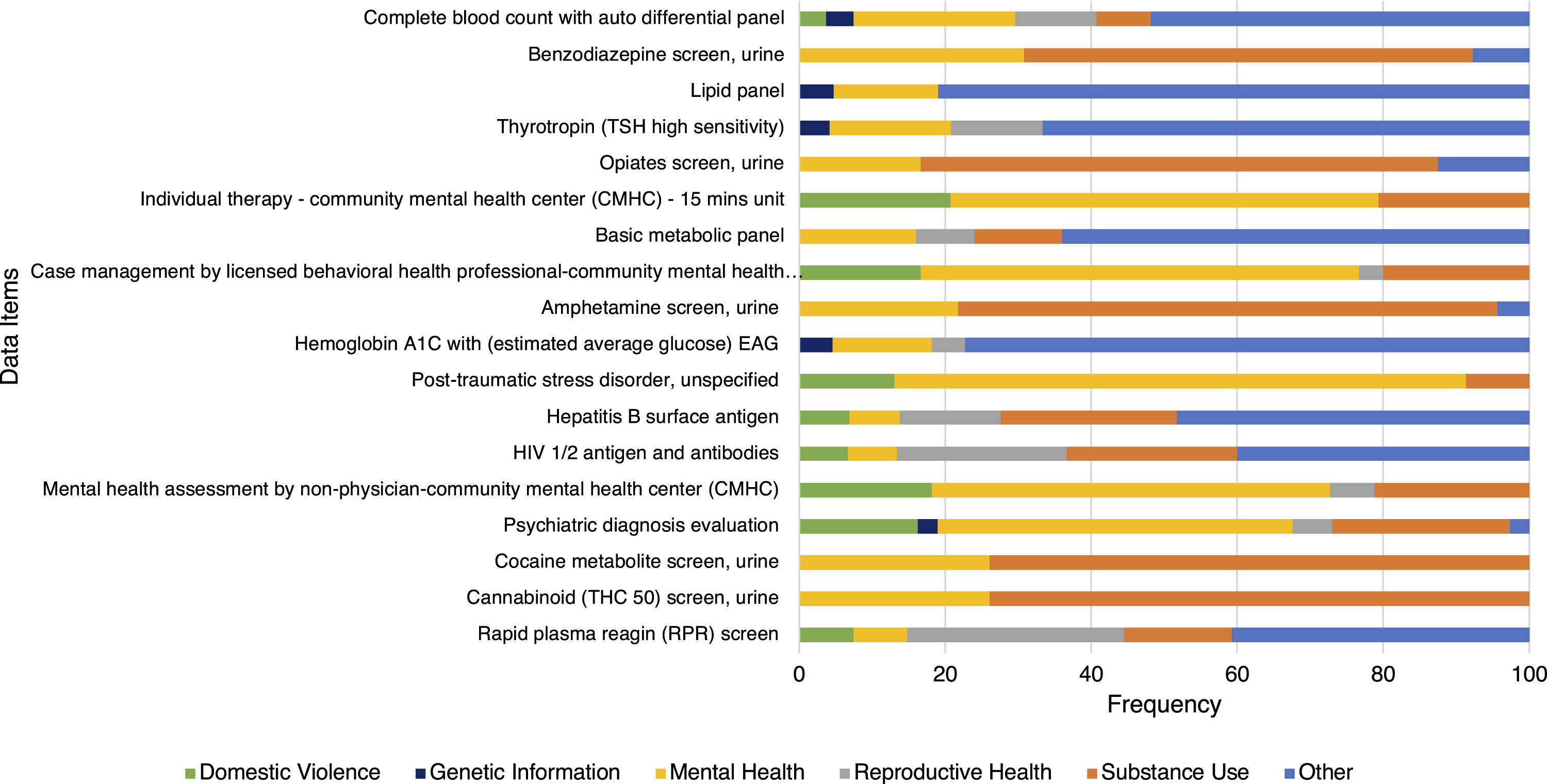

In the survey, participants categorized 18 common (duplicated) items (Figure 5). Overall, 5 (27.8%) of these items were most frequently categorized as Mental Health or Substance Use, and 8 (44.4%) were predominantly categorized as Other. Within the 18 duplicated items, participants often agreed on a data category (65.2%, SD: 0.15). For instance, Opiates screen, urine, was most commonly (70.9%) classified as Substance Use information.

Survey categorizations of eighteen common data items based on data categories.

During the post-survey interview, participants could change their survey responses; on average, they added 2.7 new categories and removed 0.5 categories. For example: “Well, those [from ABO and Rh group panel] are blood types, so I didn't think it fit nicely into any of those categories. I suppose it would be partially covered by Genetic Information on a second look. And it may come into play in Reproductive Health […]”. Psychiatrists modified their answers more frequently, adding on average 3.8 (SD: 3.94) categories and removing on average 0.8 (SD: 0.92) categories. Non-psychiatrists added on average 1.7 (SD: 1.42) categories and removed on average 0.3 (SD: 0.46) categories. After incorporating these changes, participants had 502 (50.2%) agreements, 161 (16.1%) disagreements, and 337 (33.7%) partial agreements (see Supplementary Materials).
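The percentages above follow directly from the reported counts. The arithmetic, and the net shift after the interview revisions, can be checked with a short Python snippet that uses only the counts stated in the text (`to_percentages` is a hypothetical helper):

```python
def to_percentages(tally):
    """Convert outcome counts to percentages of the total, rounded to one decimal."""
    total = sum(tally.values())
    return {k: round(100 * v / total, 1) for k, v in tally.items()}


# Counts reported before and after the interview revisions.
survey_counts = {"agreement": 500, "partial agreement": 325, "disagreement": 175}
revised_counts = {"agreement": 502, "partial agreement": 337, "disagreement": 161}

print(to_percentages(survey_counts))   # {'agreement': 50.0, 'partial agreement': 32.5, 'disagreement': 17.5}
print(to_percentages(revised_counts))  # {'agreement': 50.2, 'partial agreement': 33.7, 'disagreement': 16.1}
print({k: revised_counts[k] - survey_counts[k] for k in survey_counts})
# {'agreement': 2, 'partial agreement': 12, 'disagreement': -14}
```

Both tallies still cover all 1000 items; the revisions mainly moved items from disagreement to partial agreement.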

Categorizations were often based on participants’ experience with their own patients: “Obviously, we use benzodiazepine screens in our field of psychiatry […] And so, where my mind was with this is that if there’s something routinely that we're prescribing to an individual, we're trying to screen and find [it]. When you do a urine drug screening for patients, you're looking for the chemicals that we expect to be there to be there, and for things we don't expect to be there.” Participants also made assumptions about other factors, including a patient’s medical history and social determinants of health: “Decreased libido […] can happen when you have domestic violence in the home, it can happen when there is bad news that you find out about your child diagnosed suddenly with multiple sclerosis […] I mean, it’s so multifactorial – let’s say they’re very obese, they’ve been diagnosed with diabetes, they’ve had a situation at home with three people in their home died of COVID. There could be so many situations that would cause the decreased libido […]”

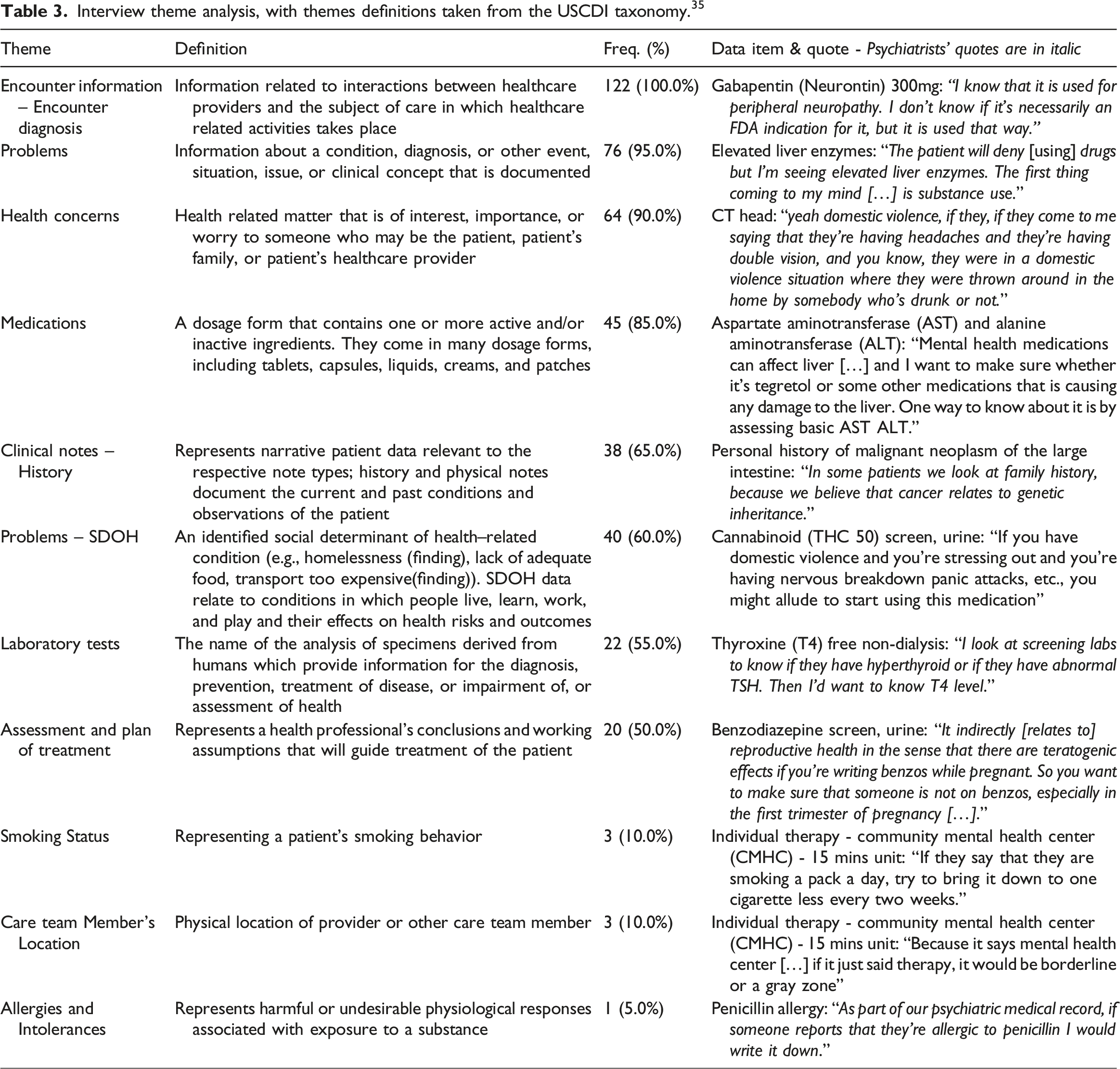

Interview theme analysis, with theme definitions taken from the USCDI taxonomy. 35

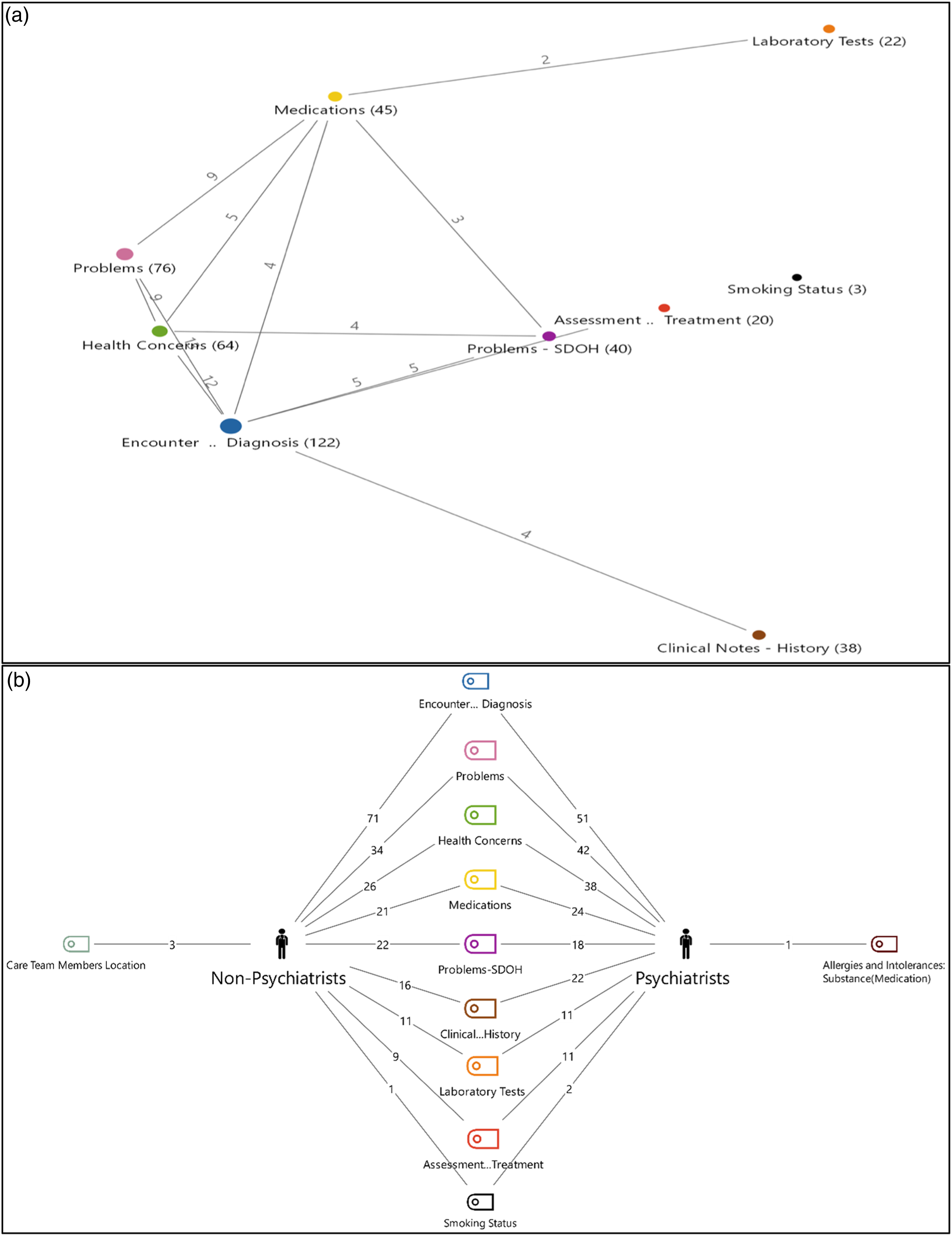

Some themes were frequently discussed together (Figure 6(a)), such as Encounter Diagnosis and Problems (n = 13), Encounter Diagnosis and Health Concerns (n = 12), Problems and Health Concerns (n = 9), and Problems and Medications (n = 9). Psychiatrists and non-psychiatrist physicians made assumptions about patient medical history in similar ways: in both groups, the most frequent themes were Encounter Diagnosis (51 vs. 71, respectively), Problems (42 vs. 34, respectively), and Health Concerns (38 vs. 26, respectively) (Figure 6(b)).

(a) Pairwise theme intersection; (b) Theme frequency differences between psychiatrist and non-psychiatrist physician groups.
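Pairwise co-occurrence counts like those in Figure 6(a) can be computed by enumerating every unordered pair of themes coded within the same unit. The paper does not state which unit was used for co-occurrence; the sketch below assumes one unit per discussed data item and uses hypothetical coded units and a hypothetical `pairwise_theme_counts` helper.

```python
from collections import Counter
from itertools import combinations


def pairwise_theme_counts(coded_units):
    """Count each unordered theme pair coded together within the same unit."""
    pairs = Counter()
    for themes in coded_units:
        # sorting makes ("A", "B") and ("B", "A") the same key
        for a, b in combinations(sorted(set(themes)), 2):
            pairs[(a, b)] += 1
    return pairs


units = [  # hypothetical coded units (one per discussed data item)
    {"Encounter Diagnosis", "Problems"},
    {"Encounter Diagnosis", "Problems", "Health Concerns"},
    {"Problems", "Medications"},
]
for pair, n in pairwise_theme_counts(units).most_common():
    print(pair, n)
```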

Perceptions on Data Sensitivity Categories

During the survey, participants had on-demand access to educational material explaining the five sensitive categories: Domestic Violence, Genetic Information, Mental Health, Reproductive Health, and Substance Use. Fifteen participants (75%) accessed the educational material. Substance Use (n = 12) was accessed most, followed by Reproductive Health (n = 11), Genetic Information (n = 11), Mental Health (n = 10), and Domestic Violence (n = 6). Psychiatrists most often accessed Genetic Information, Reproductive Health, and Substance Use, while non-psychiatrist physicians accessed the Substance Use educational resource most often. Two-thirds (66.7%) of the 15 participants who accessed the educational material found it valuable for their categorization of sensitive medical records, whereas a minority of these 15 participants (20.0% psychiatrists and 13.3% non-psychiatrists) did not find it helpful.

As part of the survey, participants were asked to assess the adequacy of the data categories for categorizing sensitive records. The majority of psychiatrists and non-psychiatrists (70.0%) felt that the data categories were not sufficient to categorize sensitive health data and that more data categories were needed. No participant recommended fewer sensitive data categories.

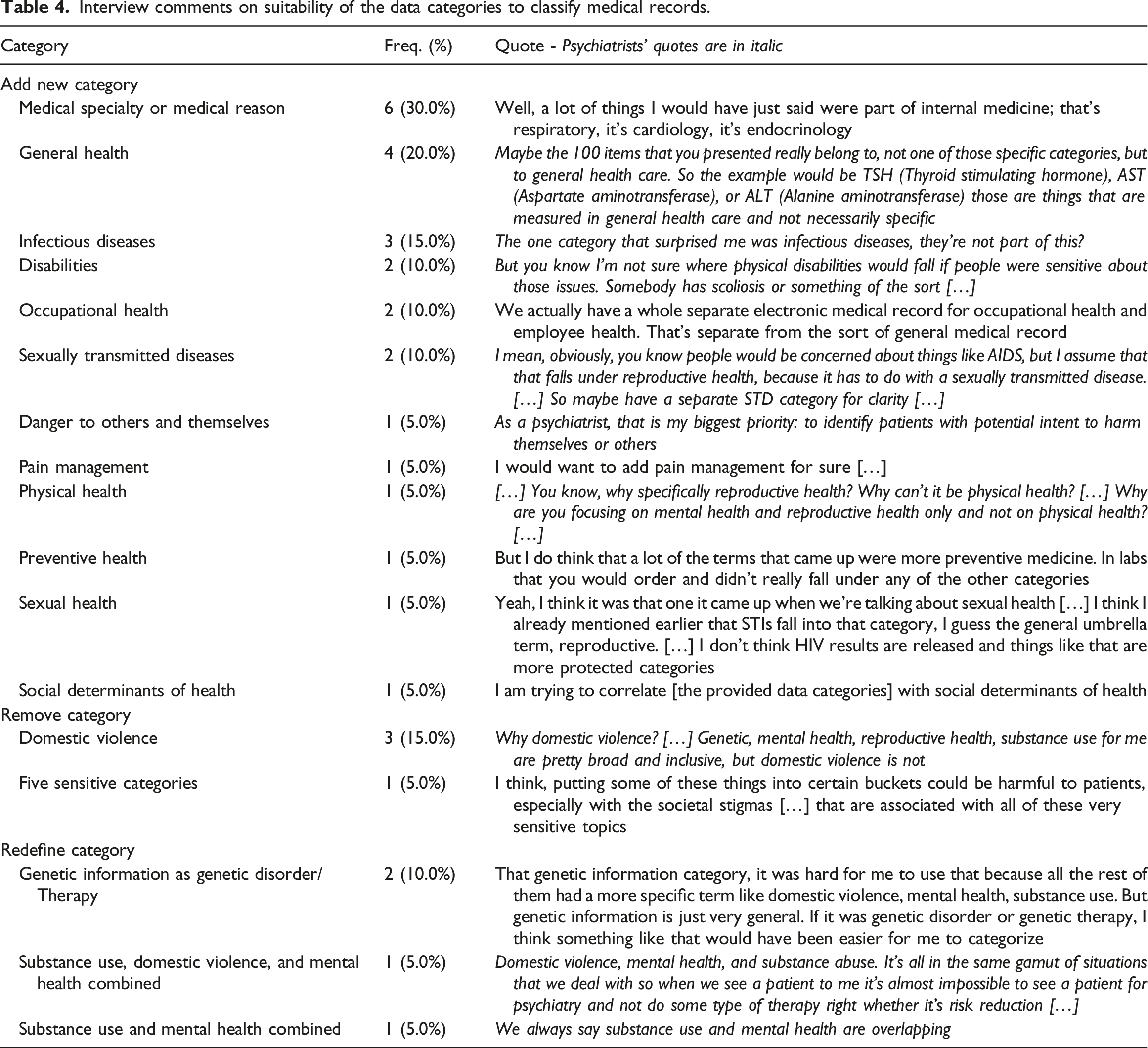

Interview comments on suitability of the data categories to classify medical records.

Discussion

Our study used patient medical record items to gain insight into provider views on granular information sensitivity and categorization. Our first objective was to understand how physicians categorize medical record data elements using data categories. Comparing psychiatrists’ and non-psychiatrists’ categorizations revealed both disagreements and partial agreements. Similar to the Grando et al. study, which compared medical record data categorizations by health providers and by data segmentation technology, data items representing medical procedures were a main source of partial agreements. 31

Endorsement of the Mental Health and Substance Use categories was significantly associated for both provider types. Few participants indicated that Mental Health could include Substance Use. While both groups were more likely to endorse Mental Health than Substance Use, the size of this difference depended significantly on provider group (a significant Provider Type x Data Category interaction). Psychiatrists distinguished the Mental Health category from Substance Use more than non-psychiatrists did, possibly because their education and training give them a better understanding of the nuances of these categories. It remains an open question how using the original NCVHS Substance Abuse category, rather than Substance Use, would affect provider data categorizations.

During the survey, non-psychiatrists selected significantly more data categories per item and were more likely to endorse multiple data categories than psychiatrists. During the interviews, psychiatrists increased their endorsement of multiple data categories by broadening contexts and adding categories. Physicians’ sensitive medical record categorizations appear to be influenced by their clinical experience, as reflected in the contextual assumptions and scenarios voiced during interviews and captured through thematic analysis.

Despite differences in individual survey categorizations, participants most often agreed on one of the data categories used. Future work could examine whether consensus on data categorization is achievable when a larger number of providers is engaged.

Our study found that physicians disagreed or partially agreed when categorizing sensitive medical records based on their clinical specialty and/or implied patient history context (such as potential diagnoses that could have led to a prescription). This is consistent with previous studies that found that patients may disagree (33.8%) with providers when categorizing data items due to contextualization of health information based on health history and experience, fear of stigma, and perceptions of information applicability.6,9 Future studies will focus on recruiting a larger group of stakeholders, including health providers and patients, to assess categorization selection when the source EHRs are available for context.

Our second goal was to assess physicians’ perceptions of the adequacy of the data categories for classifying medical record data. Most interview participants thought more categories were required to adequately capture the range of sensitive and non-sensitive information. Some requested broader, cross-cutting data categories (e.g., General Health), while others recommended more granular, specific data categories (e.g., Sexually Transmitted Diseases as a subclass of Sexual and Reproductive Health). In contrast, Karway et al., who asked 200 patients with behavioral health conditions to make granular data choices in data sharing scenarios based on data types from the NCVHS taxonomy, found that most (83%) patients felt the NCVHS taxonomy captured their data privacy needs. 2

Our study had limitations. Study participants were offered co-authorship of this paper, which could have affected their responses. To mitigate this, only limited details on the study design and goals were provided during the study (see Supplementary Materials). Also, participants may have interpreted the instructions and the meaning of the data categories differently, which could affect agreements, partial agreements, and disagreements. Study participants had no access to the patient EHRs from which the data items were extracted, so categorizations were made without that clinical context. Finally, our sample size is relatively small.

Our findings may guide future research on granular data sharing and its impact on consent-based data sharing processes, technology, education, health policy, and legal compliance.

Participants suggested that improved instructions were needed to understand the purpose of the data categorizations. The feedback received from study participants reflects a need for further education on the NCVHS taxonomy, including clear definitions for each category to be used when categorizing medical record data and supporting granular medical record sharing.

We found that providers fully agreed on sensitive data categorizations only about half of the time, particularly for Substance Use information. The federal laws and policies that govern the sharing of sensitive health information, 12 including 42 CFR Part 2 (the federal regulation that governs the sharing of substance use information 32), have been identified as a source of legal confusion, inhibited care coordination, and varying interpretations of what constitutes Substance Use information.33,34 Understanding the implications of our findings for health policy and legal compliance will be the focus of future work.

In terms of technology, participants’ categorizations differ from the way SAMHSA’s electronic consent tool, Consent2Share, categorizes medical record items. 31 Consent2Share supports granular segmentation of medical record information and relies only on value set definitions of sensitive data categories to categorize medical record items (e.g., cannabinoid (THC 50) screen, urine, is listed in the Substance Use value set and is therefore categorized as substance use information), disregarding relevant medical information in the patient’s EHR that may affect how data sensitivity is interpreted. Since medical, behavioral, and social context may be essential for delineating sensitive data, it would be beneficial to replace binary tools like Consent2Share with context-driven AI consent engines. 35

Conclusion

This is the first study to assess provider perceptions of the adequacy and use of data categories for sensitive information categorization using data elements extracted from patient EHRs.

Our study found frequent differences in providers’ sensitive medical record categorizations, and participants suggested modifications to the NCVHS taxonomy. Insights into psychiatrists’ and non-psychiatrists’ categorizations of medical record item sensitivity are valuable contributions toward realizing the vision of patient-controlled granular data sharing.

Supplemental Material

Supplemental Material - Physicians differ in their perceptions of sensitive medical records: Survey and interview study

Supplemental Material for Physicians differ in their perceptions of sensitive medical records: Survey and interview study by Ipsha Banerjee, Kazi Syed, Aishwarya Potturu, Venkata SVS Pragada, Rishika S Sharma, Anita Murcko, Darwyn Chern, Michael Todd, Padma Aking, Ali Al-Yaqoobi, Patricia Bayless, Winona Belmonte, Teresa Cuadra, Trudy Dockins, Christina Eldredge, Robert El-Kareh, Gregory Gale, Ed Gentile, Edward Kalpas, Meghan Morris, Laurel Mueller, Dorothy Piekut, Mindy K. Ross, John Sarris, Gagandeep Singh, Shalini Tharani, Mark Wallace and Maria Adela Grando in Health Informatics Journal

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported by the National Institute of Mental Health through the grant My Data Choices, evaluation of effective consent strategies for patients with behavioral health conditions (R01 MH108992 and GR03089).

Ethical Approval

On July 18, 2017, the Arizona State University Institutional Review Board approved study #00006227, which asked patients for written consent to give researchers access to their electronic health record (EHR) data. On August 13, 2022, the Arizona State University Institutional Review Board approved study #00004359, which asked physicians for written consent to participate in an electronic survey followed by a remote interview.

Supplemental Material

Supplemental material for this article is available online.

References
