Abstract

The current study reduced the time lag between performing a diagnostic assessment and identifying a critical finding in CT and MRI exams by improving radiographers’ abilities to identify those critical findings. Radiographers’ diagnostic assessments in CT and MRI exams were used to develop a mobile training application with the aim of improving radiographers’ awareness of critical findings. The current research used data analytics to examine radiographers’ interpretation of imaging studies from a privately owned medical group in Israel. During the project, the radiographers’ ability to identify critical findings improved. Implementation of the mobile training program yielded positive results: the knowledge gap was reduced and the time to identify critical cases decreased. Specifically, this study showed that radiographers can be trained in ways that enhance their involvement with radiologists to provide high quality services and improve treatment. Ultimately, this gives patients higher quality of care and safer treatment.

Introduction

Healthcare researchers and practitioners suggest improved patient care can be derived from access to large volumes of medical data coupled with improved data analytics.1,2 The effort to increase quality of care can be further improved with medical test classification and management. For instance, one potentially beneficial use of big data applications is to reduce errors in diagnostic assessments by healthcare providers. Researchers have noted the existence of errors in radiological diagnostic assessments and offer suggestions for improvements.3–7 The current study developed a mobile training application with the potential to reduce lag time between performing tests and identifying critical test results by improving radiographers’ diagnostic assessments of CT and MRI scans. The current research used data analytics to identify gaps in radiographers’ ability to recognize critical findings in imaging studies from a privately owned medical group in Israel, and then assessed the effect of an intervention on the radiographers’ ability to identify critical test results. Hence the purpose of this study was to map differences between radiographer and radiologist classification regarding severity level of imaging tests, and to reduce these differences by improving radiographers’ abilities to rapidly identify critical findings in the test.

The study was driven by three research hypotheses:

H1: There is a difference between classifications done by radiographers and radiologists in CT scans.

H2: There is a difference between classifications done by radiographers and radiologists in MRI scans.

H3: Intervention through integrated training technology reduces differences between classifications done by radiographers and radiologists in imaging tests.

Changes in the diagnostic procedure in radiology

Radiological tests such as CT and MRI scans are fundamental to medical diagnosis. The tests are performed by radiographers (technicians) and later read by radiologists (physicians). In the past, radiologists in hospitals were involved in imaging tests prior to an interpretation phase. Radiologists would review the referrals and authorize them prior to performing the scans, and occasionally be present alongside the radiographer during screening. 4,5 In addition, reading results often took place in direct contact with the clinical radiographer in a relatively short time span. This collaboration enabled the radiographer to learn more about performing tests and appraising the results. 6

Generally, radiologists currently encounter CT and MRI scan images for the first time when they are tasked with making a clinical diagnosis. Unlike radiographers, radiologists usually make the interpretation without physically seeing the patient. Likewise, they exert no influence on the work procedures done beforehand. Radiographers, who act as hospital representatives, read the referrals, and are required to use their discretion regarding the test methods and processes, especially when the referrals come from physicians without sufficient expertise in clinical imaging. The radiographers are not responsible for identifying abnormal findings in the tests before they transfer the relevant information to the radiologists. 7 However, they do have the right to initiate an expedited processing request for any concerning readings. The current study posits that radiographers can help identify critical cases sooner without being held responsible for the diagnosis. This could be especially helpful in ensuring critical cases receive priority attention or in situations where additional unexpected findings are revealed and require adjustments or immediate actions.

Early detection of critical ailments certainly will improve patient care. Although radiographers are trained in subjects such as human anatomy, physiology and pathology,8–11 and in using safety protocols for imaging test procedures, 12 their evaluation of findings remains an informal activity. They are not trained to provide documentation for an evaluation. In many such cases, radiographers would have been able to detect and ensure early interventions for deadly diseases such as cancer. 13 Another limitation of the radiographer’s role is a short patient interaction time. On average, a radiographer spends about five to ten minutes per patient. Short interactions can lead to inadequate review of test results. 14 Therefore, researchers have noted that training radiographers to identify critical conditions in test results can help with early identification of pathological findings prior to the radiologist’s access to test results.15–17

For many severe medical conditions, time factors play an especially critical role. This is particularly true in situations where waiting for a radiologist’s reading is not feasible and when an immediate life-saving identification may be necessary. The patient’s primary benefit is an efficient early identification of the condition, which can enhance early-stage treatment. For instance, recent research showed that immediate identification of a pulmonary embolism by a radiographer shortened the time span between the radiographic test and meeting with the physician from 1.5 days to only 26 min.18 As such, training programs to improve the diagnostic assessments of severe medical conditions by radiographers can lead to early life-saving interventions.19–21

An additional benefit of radiographers’ diagnostic accuracy is financial savings. Based on 1000 simulated patients, radiographers’ reports reduced the diagnosis costs by £8500. 15 After adding treatment-related costs, faster reporting by radiographers was still more cost effective than radiologists' reports and resulted in 1.4 quality-adjusted life years (QALYs) per 1000 patients who had undergone a chest X-ray examination. A probability analysis suggests that radiographers' reports are more cost effective than radiologists’ reporting. The cost of diagnosis and treatment by radiographers was lower, at £2,137,983, than the cost of diagnosis and treatment by physicians, at £2,142,299 (a difference of £4,316). The conclusion was that radiographers' reports were a cost effective alternative in lung cancer: they provided quick diagnosis to patients and, thanks to early diagnoses, saved the costs of expensive treatments at more advanced stages of the disease. 15

In other examinations, such as hysterosalpingographies, the radiographer’s involvement can help the physician conduct and read the examinations in real time. This can complement the physician’s work, and thus shorten waiting times and reduce patient anxieties and uncertainties. 22 After appropriate training programs, radiographers can utilize Diagnostic Reference Level (DRL) values for chest, brain, and other X-rays, 23 or they can help interpret mammograms and ultrasound images. 4 Radiographers' interpretations of CT results can, for example, be precise, especially after receiving appropriate training.24,25 Roe et al. 26 provided additional support for the claim that a radiographer’s involvement could be important and suggested the need for appropriate training. In that study, a system designed to check the precision of a radiographer’s notes regarding the test, vis-à-vis a physician’s notes, found a 98.5% precision rate in the radiographer’s notes. These notes from radiographers could help reduce medical errors and support physicians’ readings. 26

The preceding literature review emphasizes the value that radiographers can bring to patients by identifying crucial situations in real time. Despite such needs, the general foundation education or training for radiographers did not address the interpretation of radiography scans. In addition, it is important to note that the case for further training radiographers through IT-based tools neither intends to reduce physicians’ expertise or skills nor attempts to replace physicians, but rather aims to enhance rapid and accurate diagnostic interpretation in critical moments. In the digital age, training radiographers on the job using digital means can be an effective method. Research indicates that learning environments supported by digital imaging or simulations can be very effective.27,28 Furthermore, research indicates that using mobile technology for imaging tests enables radiographers to conduct tests with high precision (87.69%). For example, the ANIMATI DICOM Viewer enables accurate visual imaging. 29 Therefore, the current study seeks to close the gap by suggesting a safe way to improve radiographers’ diagnoses, thereby improving timely and accurate treatment and ultimately leading to high quality care and better patient outcomes.

Method

Background and preliminary actions

The study was conducted at the Assuta Medical Centers, a network of healthcare centers in Israel that includes eight hospitals and outpatient centers. The network serves an average of 92,000 patients each month. Two medical imaging tests are included in this study: computed tomography (CT) and magnetic resonance imaging (MRI). In a typical month, Assuta performs 17,000 and 7000 such tests, respectively. The study used a database, which exhibits Big Data characteristics in terms of data volume and variety, to identify the characteristics of the tests in which differences between radiographer and radiologist classification existed.

The current study comprised five stages. The first three stages concern data collection and analysis and are described below. The fourth and fifth stages concern the radiographers’ training and are described later in Mobile training development. In the first stage, radiographers (N = 118) were asked to perform an early evaluation of test results before these tests were passed to the radiologists (N = 98) for interpretation. The radiographers were asked to classify the tests into three types of findings: (1) “Normal range,” (2) “There is a presence of finding” (non-critical), and (3) “There is a critical finding.” The classification was performed at the end of the test and documented in the patient’s clinical record. For documentation, a new dedicated field was built in the electronic medical record (EMR).

At the end of the interpretation process, the radiologists classified the test using an assessment tool that rated the severity of the findings in five categories: (1) “Normal test”; (2) “Test has a finding, but no urgency to report to the referring physician”; (3) “Test contains a critical finding and must be reported to the referring physician within a week”; (4) “Test contains a critical finding and must be reported to the attending physician or patient within 24 h”; and (5) “Test contains a critical finding and immediate treatment is necessary.” In the usual procedure, in cases where the radiographer suspects or identifies a critical finding, s/he should contact the radiologist on duty immediately, describe the case thoroughly, and prioritize the order of tests based on criticality. The radiographer must also keep the patient at the testing facility until the radiologist answers.

In the second stage, at an organized conference for radiographers, we presented a new process of classifying findings in tests and the rationale for the program. As part of the training program, clinical cases were presented. Also, in the conference, we conducted a real-time interactive simulation game, where images of pathological CT scans were presented, and participants were asked to answer questions related to findings and classification. The game was a preview of a training program based on a mobile app being developed. The conference was attended by over 100 radiographers throughout the Assuta network, on two separate dates.

In the third stage, we collected and analyzed data to understand the relationship between radiographers’ and radiologists’ classifications. The following subsections, from Data collection through Data Validation and Anonymization, describe this stage.

Data collection

The dataset, derived from a large data repository, included 252,957 CT scans and 108,590 MRI scans over a period ranging from July 2018 to January 2020, from all Assuta centers. Information about the scans was extracted from the RIS® system (electronic medical imaging files), and from Tafnit®, an administrative system. 30 The data was retrieved and compiled into a SQL database.

Data cleaning

From the initial set, we performed a veracity check. In the CT scans, we removed cardiology scans because radiologists were present with the radiographers while the scans were conducted; therefore, there was no potential early detection of findings by radiographers. We also excluded virtual colonoscopies due to data uniformity (all scans resulted in no findings), and hip comparisons and angular measurements in infants, which are for measurement purposes only (N = 5711). We omitted records that had no radiologist classification (N = 21), records missing the radiographer’s name (N = 114), and experimental scans performed by computer unit personnel (N = 326). Similarly, in MRI scans, we removed scans that were performed specifically for other studies, scans missing the finding’s severity (N = 664), and scans missing the radiologist’s classification (N = 815). After cleaning the data, the study retained 246,785 CT scans and 107,111 MRI scans.
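The exclusion counts reported above can be checked with simple arithmetic. The following Python sketch subtracts each reported exclusion from the initial totals given under Data collection; the dictionary keys are illustrative labels chosen here, not field names from the study database.

```python
# Record counts as reported in the text. Key names are illustrative labels,
# not field names from the study database.
INITIAL = {"CT": 252_957, "MRI": 108_590}

EXCLUSIONS = {
    "CT": {
        "excluded_scan_types": 5_711,  # N reported alongside the excluded scan types
        "no_radiologist_classification": 21,
        "no_radiographer_name": 114,
        "experimental_scans": 326,
    },
    "MRI": {
        "other_studies_or_no_severity": 664,
        "no_radiologist_classification": 815,
    },
}


def retained(modality: str) -> int:
    """Return the number of scans left after subtracting every exclusion."""
    return INITIAL[modality] - sum(EXCLUSIONS[modality].values())


if __name__ == "__main__":
    for modality in INITIAL:
        print(modality, retained(modality))
```

Both results agree with the retained totals stated in the text (246,785 CT scans and 107,111 MRI scans).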

Data Validation and Anonymization

Data went through a final validation process using two complementary methods. In the first method, we selected a random number of scans. These data were verified against the existing information in the patients’ clinical and administrative files by manually comparing the data. The second method involved comparing quantities of various tests against existing operating reports. The comparison of information retrieved by two different parties indicated that the number of retrieved records was correct.

Once validated, the information underwent anonymization (hiding identifying information about patients) and was analyzed using Python 31 and SPSS software. 32 The analyzed information was subsequently transferred to Excel, and from there to InDesign for the purpose of simulating the data. 33

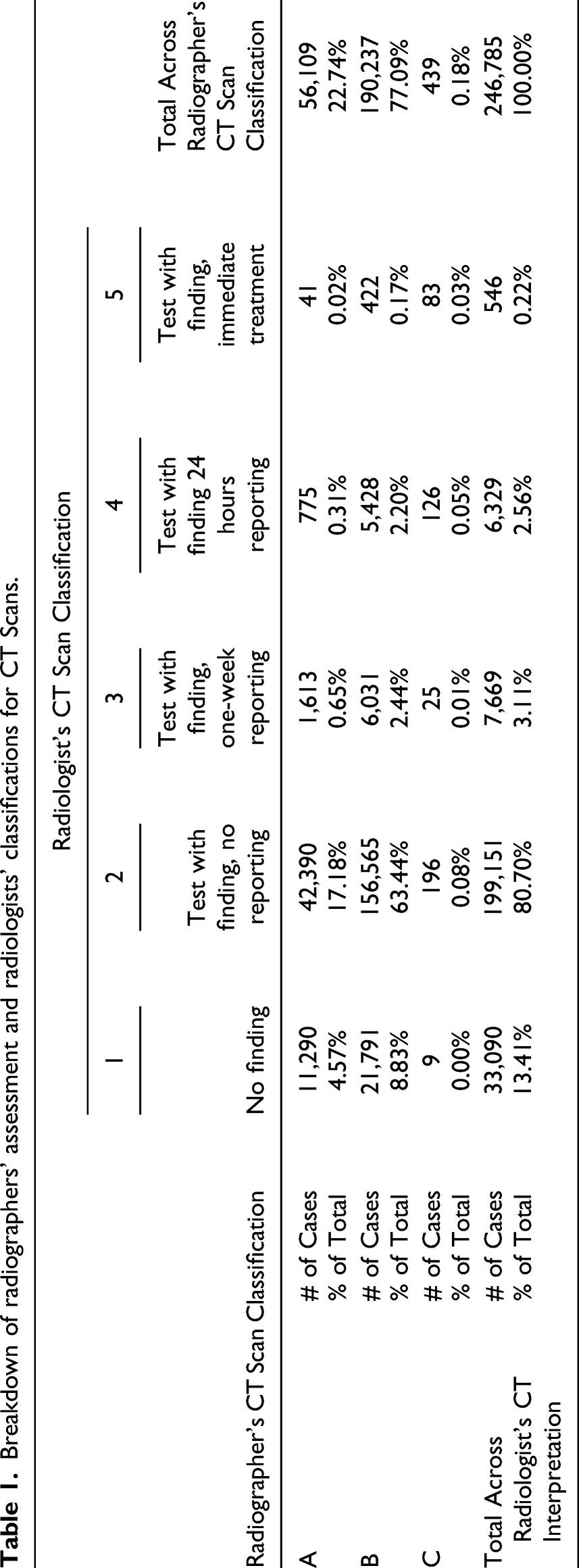

Breakdown of radiographers’ assessment and radiologists’ classifications for CT Scans.

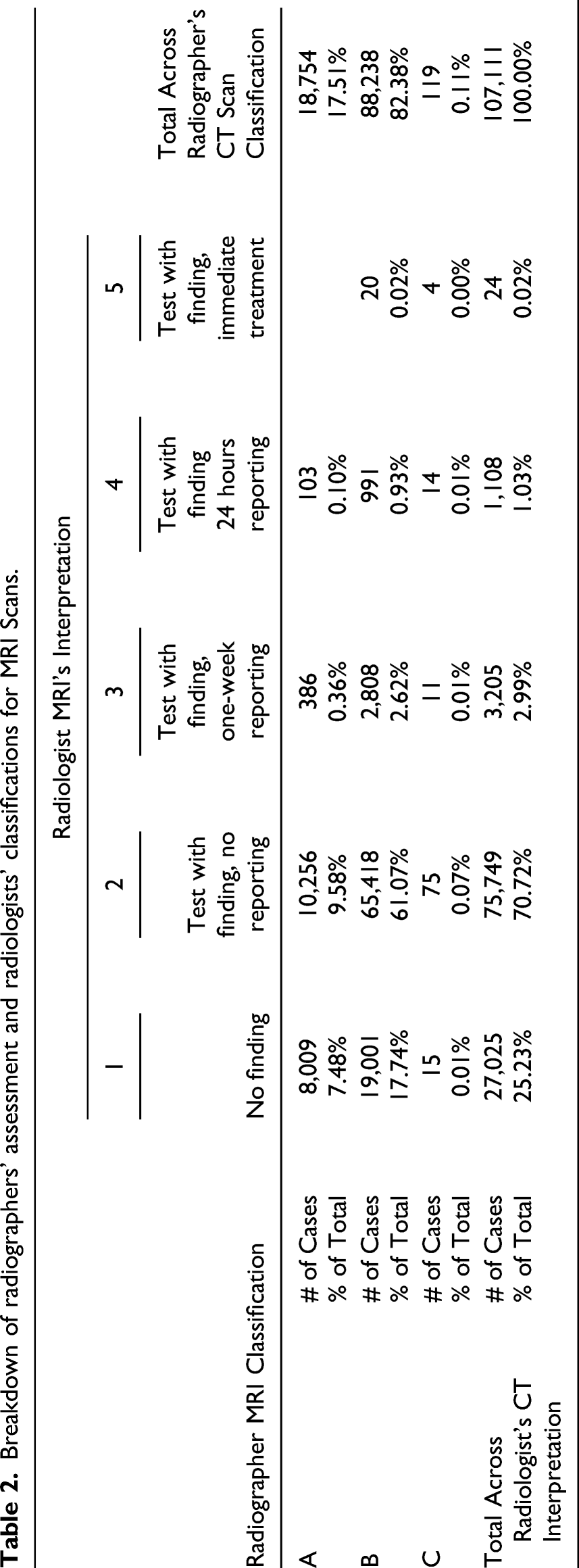

Breakdown of radiographers’ assessment and radiologists’ classifications for MRI Scans.

Each test type used a scale created in the EMR with different purposes. These were mapped into a common scale for the current study. Chi-square tests validated the significant differences between the two groups after conversion (p < 0.01). Data regarding the tests include unique patient number, unique exam number, location and time of the exam, type of tests (Body, Body and Neurological, Musculoskeletal, and General), the primary radiographer and his/her classification, and the radiologist and his/her classification. As for the radiologists and radiographers, profile data collected includes gender, age, years of experience out of medical school, years of professional experience in hospitals, and specialties. It is important to note that the current study analyzes only the characteristics of the radiographers since the training implementation will focus on their actions.
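The text does not spell out how the radiologists' five categories were collapsed onto the radiographers' three grades. As a purely illustrative sketch, the Python below assumes that categories 3 to 5, all of which are worded as a "critical finding", map to grade 3. This mapping and the function names are assumptions made for illustration, not the authors' documented conversion.

```python
# ASSUMPTION: the paper does not state the conversion table. Here radiologist
# categories 3, 4 and 5 (all worded "critical finding") are collapsed to grade 3.
RADIOLOGIST_TO_COMMON = {1: 1, 2: 2, 3: 3, 4: 3, 5: 3}

COMMON_LABELS = {1: "No Finding", 2: "Presence of Finding", 3: "Critical Finding"}


def to_common_scale(radiologist_category: int) -> int:
    """Map a five-level radiologist category onto the three-level common scale."""
    if radiologist_category not in RADIOLOGIST_TO_COMMON:
        raise ValueError(f"unknown radiologist category: {radiologist_category}")
    return RADIOLOGIST_TO_COMMON[radiologist_category]


def is_missed_critical(radiographer_grade: int, radiologist_category: int) -> bool:
    """True when the radiographer marked grade 1 but the radiologist found a critical case."""
    return radiographer_grade == 1 and to_common_scale(radiologist_category) == 3


if __name__ == "__main__":
    # Hypothetical pairs of (radiographer grade, radiologist category).
    for pair in [(1, 1), (2, 4), (1, 5)]:
        print(pair, COMMON_LABELS[to_common_scale(pair[1])], is_missed_critical(*pair))
```

Under a different conversion (for example, treating only categories 4 and 5 as critical), only the dictionary would need to change.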

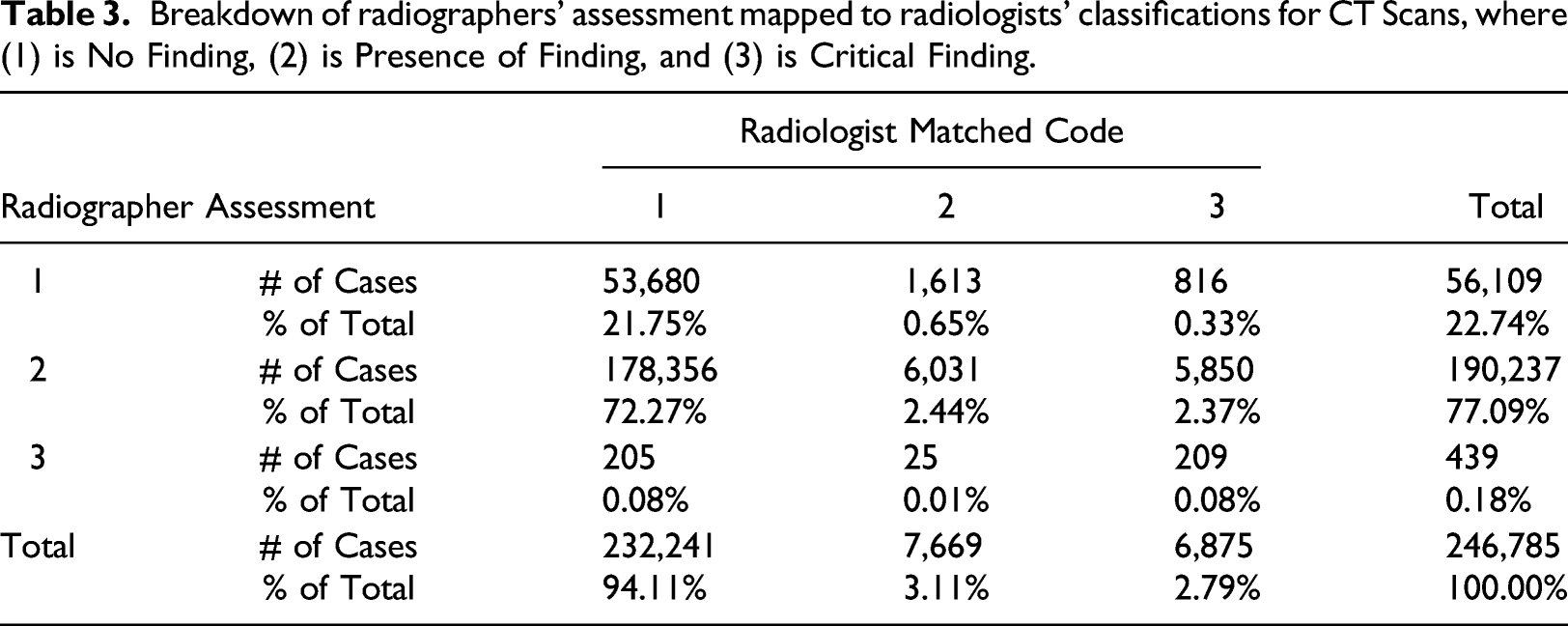

Breakdown of radiographers’ assessment mapped to radiologists’ classifications for CT Scans, where (1) is No Finding, (2) is Presence of Finding, and (3) is Critical Finding.

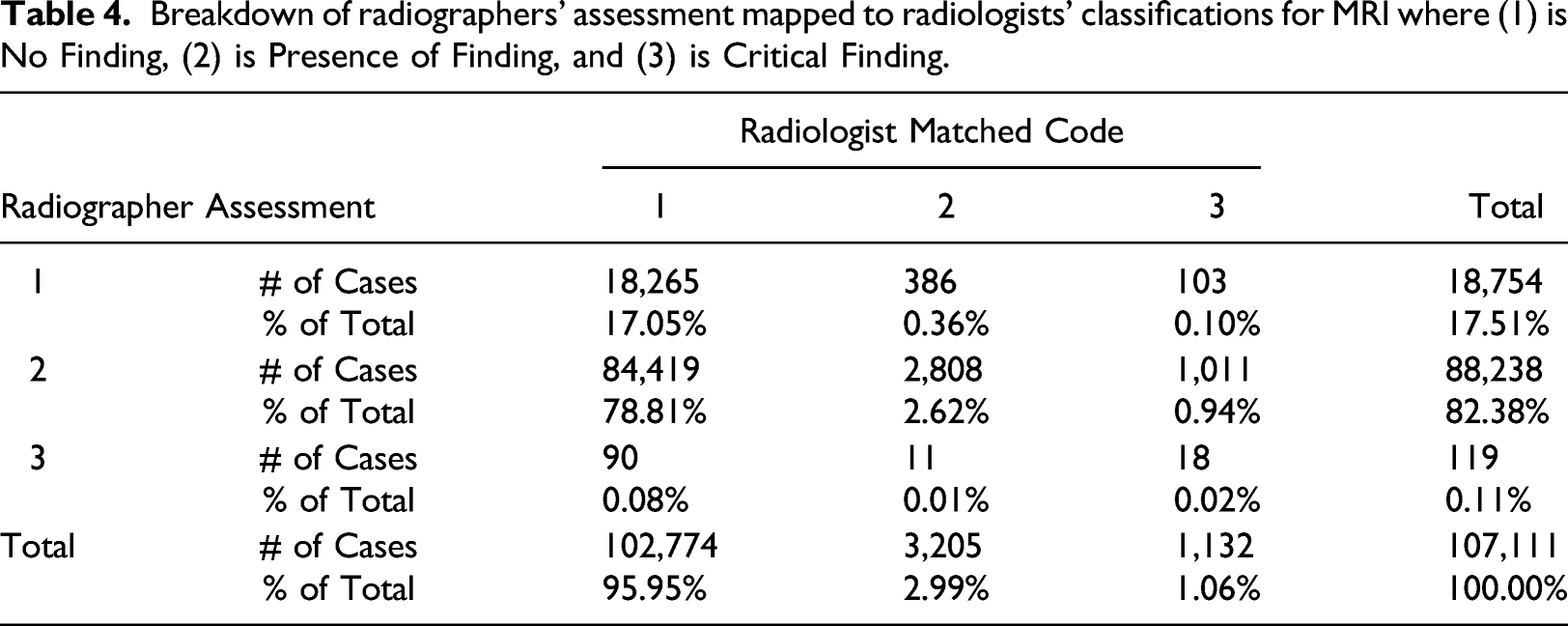

Breakdown of radiographers’ assessment mapped to radiologists’ classifications for MRI where (1) is No Finding, (2) is Presence of Finding, and (3) is Critical Finding.

Tables 3 and 4 depict the total accuracy of the respective tests and provide a visualization of the radiographers’ classifications based on the radiologists' findings. For example, in the CT table, we see the radiologist interpreted 6875 cases as grade 3 (2.79% of the total cases). Of these, the radiographer classified 816 as grade 1, 5850 as grade 2, and 209 as grade 3, for a total of 6875 cases. The values comparing the two groups shown in Tables 3 and 4 were statistically significant based on chi-square tests (p < 0.01). Given that MRI scans are more difficult to interpret than CT scans, a slight increase in accuracy was anticipated since a higher level of training had been administered. All misclassifications are candidates for improvement. However, to make the training program as effective as possible, it should target the most pressing need.

The tables indicate where training can have the most impact: where radiographers render a score of “1–No Finding” while the radiologist rated the test result with a score of “3–Critical Finding,” as highlighted in red in Table 3 and Table 4. In the case of CT scans, 816 such cases were identified, making up 0.33% of the total scans. Similarly, for MRI scans, this misclassification was recorded in 103 cases, making up 0.10% of the cases. These cases, classified as life threatening by the radiologist and as normal by the radiographer, were of primary interest. Even though the number of cases was low, correcting these mismatches is potentially lifesaving because it allows prompt responses by radiologists. Therefore, the training program focused on improving the rate of early identification of such cases.
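The percentages quoted for the critical-finding cases follow directly from the reported counts. The short Python sketch below recomputes them; the variable names are chosen here for illustration, and the only inputs are figures stated in the text.

```python
# Counts reported in the text (cleaned dataset totals and the CT grade-3 row).
CT_TOTAL = 246_785
MRI_TOTAL = 107_111
CT_CRITICAL_BY_RADIOGRAPHER_GRADE = {1: 816, 2: 5_850, 3: 209}
MRI_MISSED_CRITICAL = 103


def percent(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to two decimals."""
    return round(100.0 * part / whole, 2)


# All CT scans the radiologist graded as critical, and the subset graded 1 by the radiographer.
ct_critical_total = sum(CT_CRITICAL_BY_RADIOGRAPHER_GRADE.values())
ct_missed = CT_CRITICAL_BY_RADIOGRAPHER_GRADE[1]

if __name__ == "__main__":
    print("CT critical cases:", ct_critical_total)
    print("CT critical share of all scans (%):", percent(ct_critical_total, CT_TOTAL))
    print("CT missed-critical share of all scans (%):", percent(ct_missed, CT_TOTAL))
    print("MRI missed-critical share of all scans (%):", percent(MRI_MISSED_CRITICAL, MRI_TOTAL))
    print("CT missed share of critical cases (%):", percent(ct_missed, ct_critical_total))
```

The three radiographer grades sum to 6875 critical CT cases, which corresponds to the 2.79% share reported for grade 3.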

Mobile training development

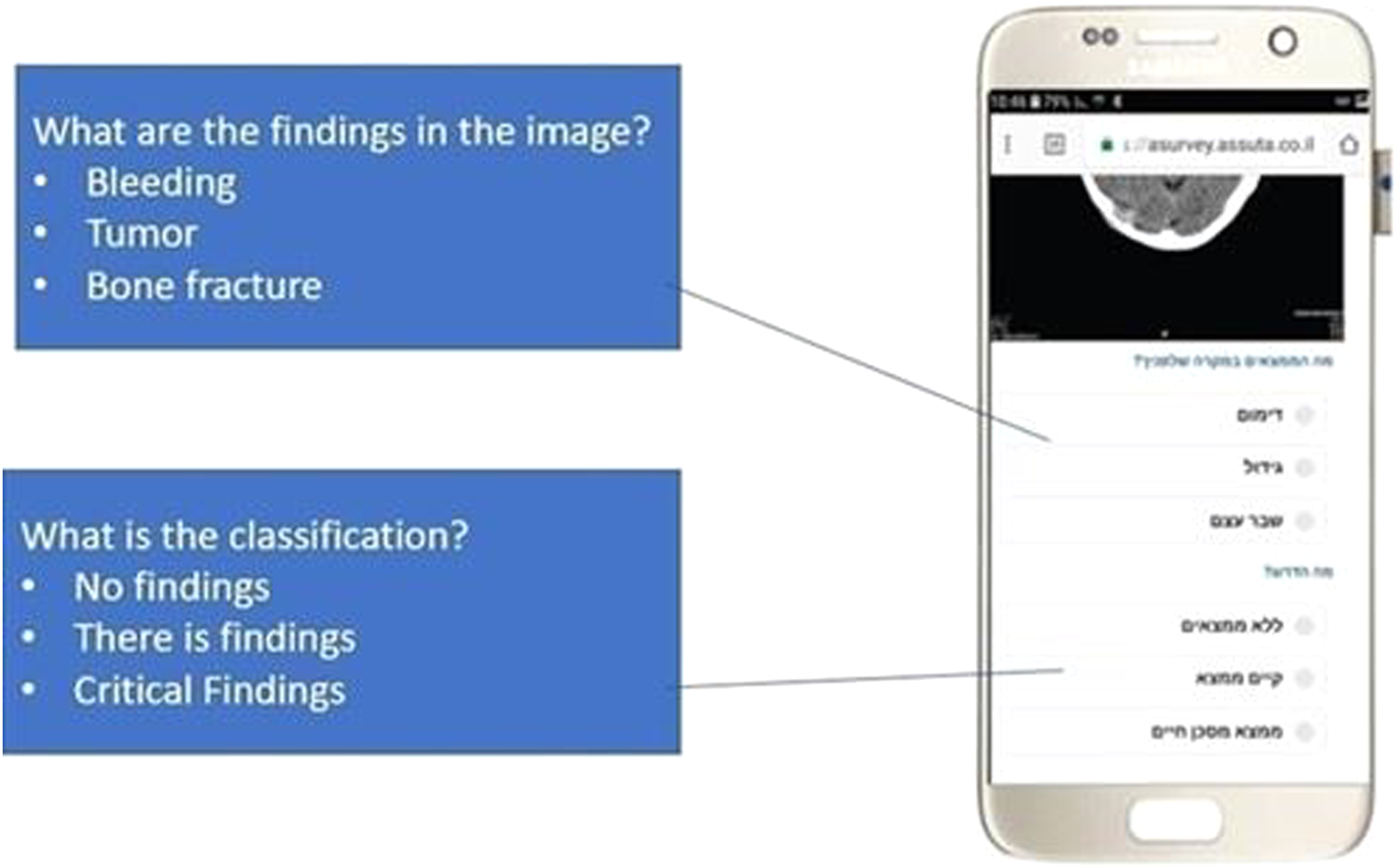

In the fourth stage of the project, a study group was established in which radiographers were presented with anonymous cases for discussion. The mobile training app consisted of two parts: pathology identification and rating of the finding severity. In other words, the participant was asked to diagnose the clinical situation that was presented to him in the mobile app, and then classify the severity of the finding. For example, the radiographer taking the survey had to see a tumor and classify whether to recommend urgent treatment. In this scenario, the radiographer would receive an image on the mobile device with a tumor situation represented. The training would prompt him to first identify the situation (in this example, a tumor) and then secondly require its classification in terms of severity. The severity could be A—no finding; B—there is a finding; or C—there is a life-threatening finding. If the finding is “C," the training would recommend that in real life, the radiographer should call the immediately radiologist and request that the patient stays at the hospital.

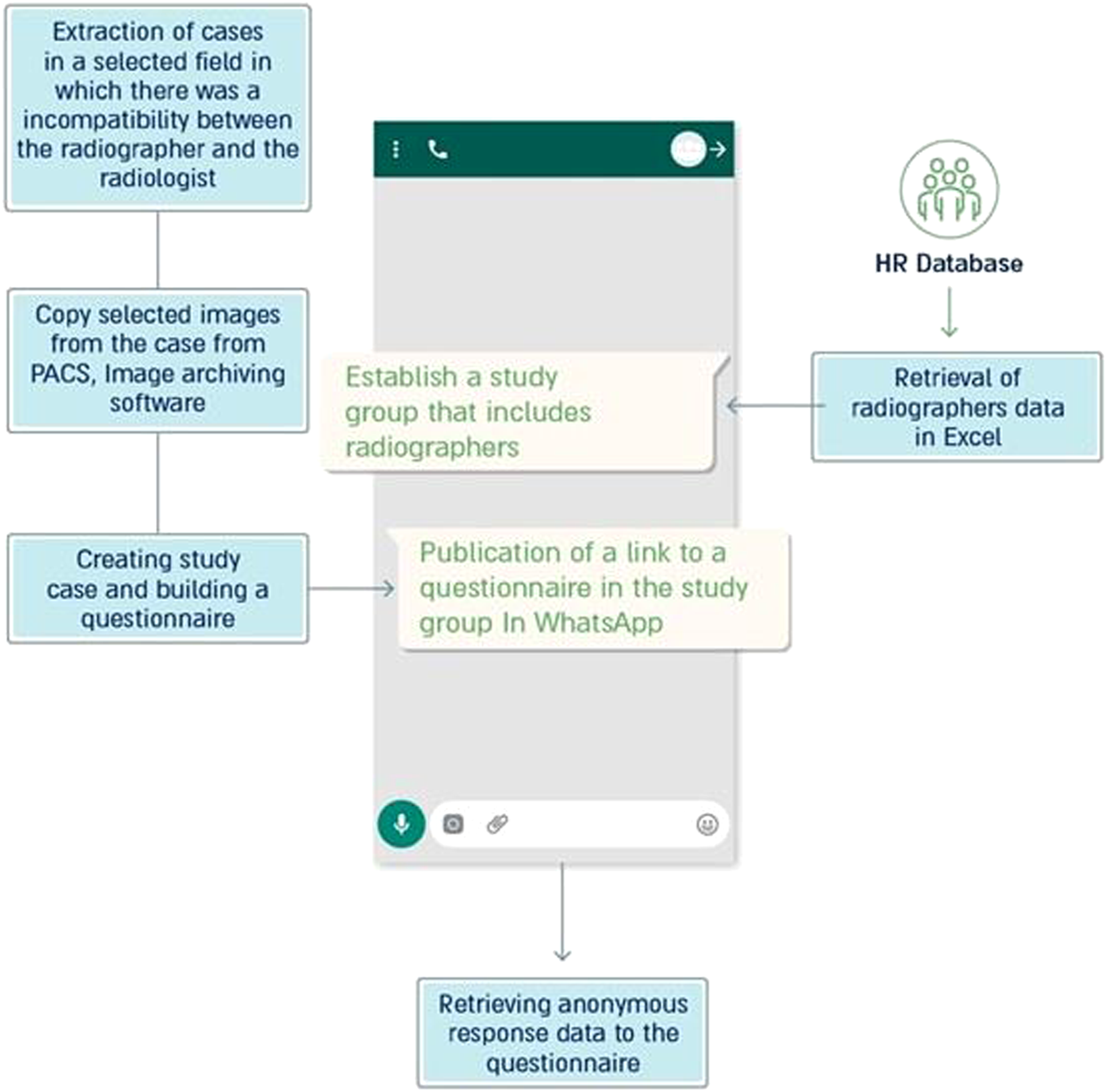

A sample case, which included images and the appropriate responses for participants as well as other pertinent information, was developed and presented via a hyperlink distributed to a dedicated WhatsApp group of radiologists. Figure 1 displays the technical description of the mobile training program. Technical description of building a mobile training program using big data analysis.

The group was established for the purpose of training and was built based on information produced in a dedicated report developed by Assuta human resources. The group included 118 radiographers and the head of the imaging department. After a few days, the case answer was provided to the group. Figure 2 provides a sample screenshot from the mobile app. Experiential mobile learning group for radiographers in the Assuta network.

After the proof of concept was established, the fifth stage of the project began. Here, the number of training cases was expanded. Ten cases were selected based on the informational analysis of the imaging tests and in collaboration with the Head of the Imaging and Radiology Division in Assuta. These cases were mismatches between classifications of radiographers and radiologists in critical situations. Two were general cases and eight were from the neurological field. In these cases, a representative image of the test was extracted from the Picture Archiving and Communication System (PACS), and two questions were formulated for the radiographer: (1) What is the clinical condition in the image? (e.g., bleeding or tumor); and (2) What is the severity of the findings? (e.g., “No findings,” “There are findings,” or “There are critical findings”). After characterizing the cases, any remaining identifying details of the patient were removed, and an application was built in the Nemala software environment that included presenting the case and questions to the radiographer. 34

The mobile training intervention through integrated technology was tested to determine whether it reduced the differences between the classifications of radiographers and radiologists in imaging tests. The radiographers were asked to classify an EMR presented to them. This classification was compared with that of the radiologist. The radiographer was then trained to identify the differences and congruences with the radiologist’s classification of the EMR.

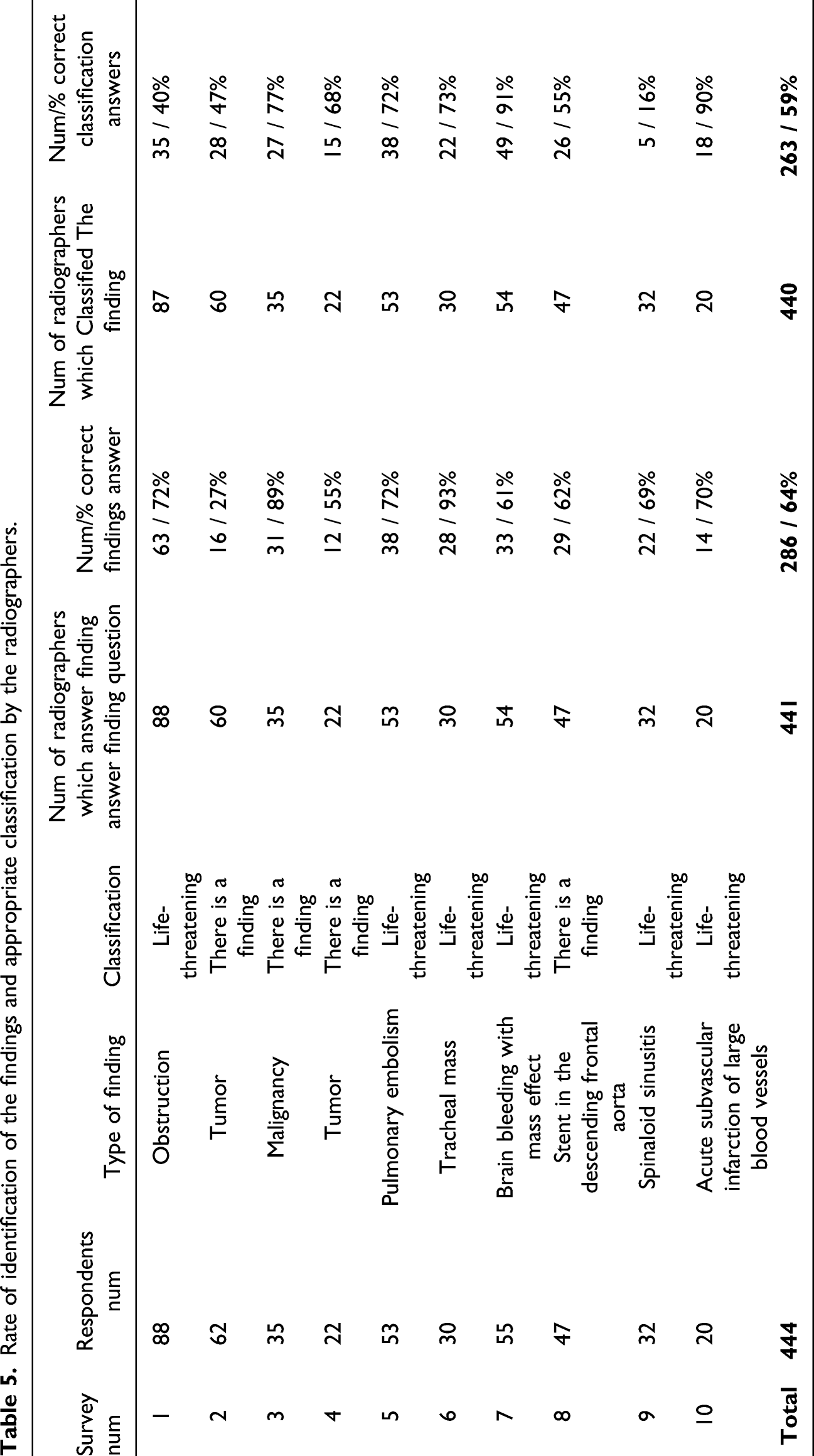

Rate of identification of the findings and appropriate classification by the radiographers.

Results

As described in the methods section of this article, significant differences in the rates of detection of critical CT and MRI findings existed between the groups of radiographers and radiologists. Therefore, H1 and H2 were accepted. This information was used to develop the mobile training application and to focus it on the most impactful types of cases.

H3 was evaluated based on the administration of the ten mobile training surveys. The response rate for the surveys was approximately 37%. On average, 64% of respondents identified the findings correctly and 59% classified the severity of the findings correctly.

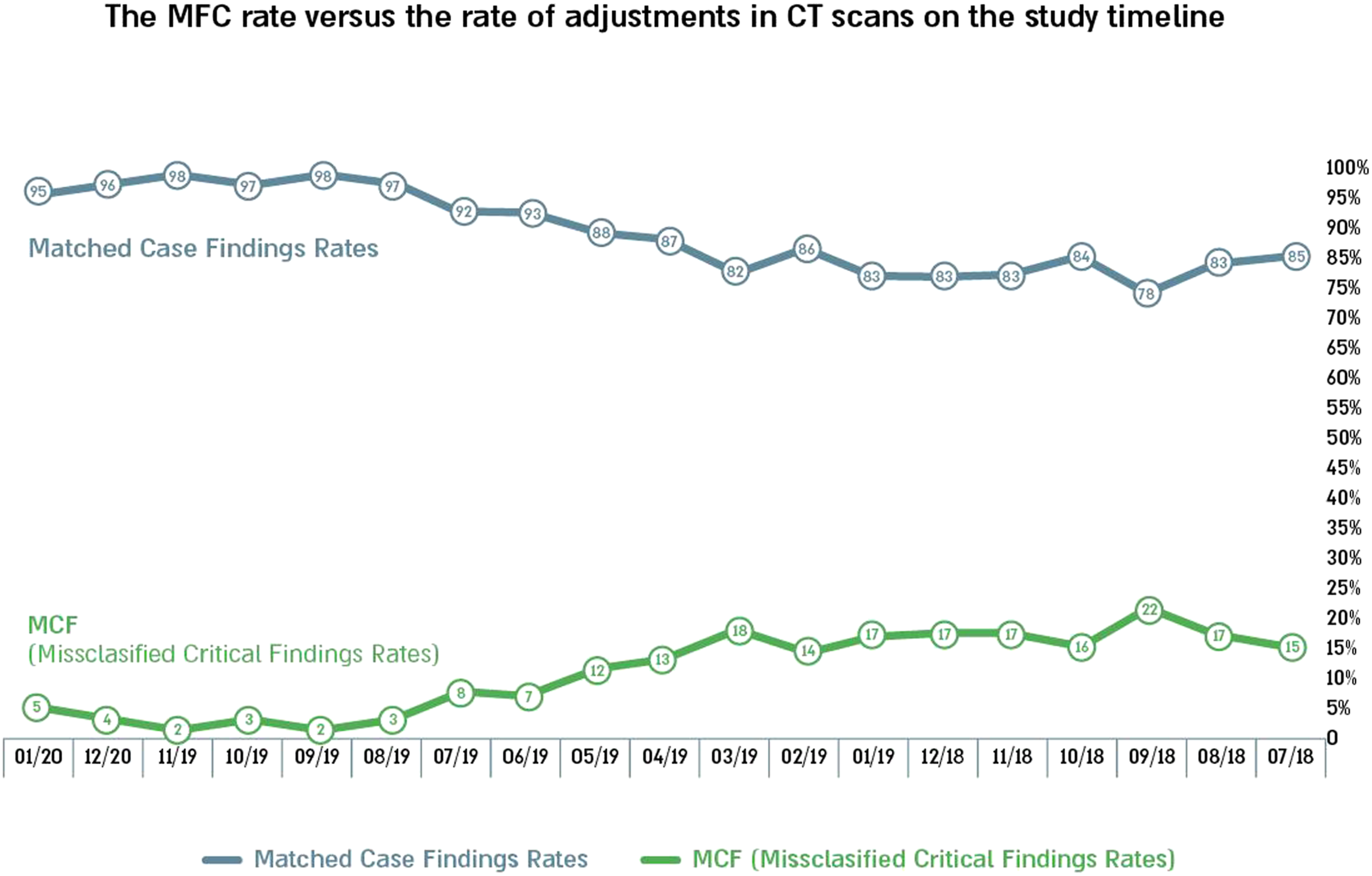

Data was collected to determine if misclassified critical findings (MCF) results showed a steady decrease in the misclassified critical findings (MCF) throughout the study period. This was found to be the case with MCFs dropping from 15.3% to 4.5% by the end of the study period. Also, the rate of cases in which radiologists identified critical findings and acted with the radiologist to perform rapid interpretation increased from 84.7% at the beginning of the study to 95.5% at the end.

Differences in the rate of detection were compared using a chi-square test. The test compared critical findings between the first half year of the study and the half year of the study. Significant differences were found between the groups (χ2 = 204.9, p = 0.000). Therefore, H3 was accepted. Figure 3 provides the results timeline. Rate of misclassified critical findings versus rate of adjustments in CT scans on the study timeline.
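For readers who want to see the shape of such a comparison, the following is a minimal Python sketch of a Pearson chi-square test on a 2×2 table (period by outcome), without continuity correction. The per-period counts are not reported in the text, so the numbers in the usage example are hypothetical placeholders that only mimic the reported 15.3% and 4.5% rates; they will not reproduce the published statistic. The function name is likewise an illustrative choice.

```python
import math


def chi_square_2x2(a: int, b: int, c: int, d: int) -> tuple[float, float]:
    """Pearson chi-square (no continuity correction) for the table [[a, b], [c, d]].

    Returns (statistic, p_value) with 1 degree of freedom.
    """
    n = a + b + c + d
    denom = (a + b) * (c + d) * (a + c) * (b + d)
    if denom == 0:
        raise ValueError("every row and column must have a non-zero total")
    stat = n * (a * d - b * c) ** 2 / denom
    # For 1 degree of freedom, the upper-tail probability is erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value


if __name__ == "__main__":
    # HYPOTHETICAL counts: rows are early vs. late period,
    # columns are misclassified vs. correctly flagged critical cases.
    early_missed, early_flagged = 153, 847
    late_missed, late_flagged = 45, 955
    stat, p = chi_square_2x2(early_missed, early_flagged, late_missed, late_flagged)
    print(f"chi2 = {stat:.1f}, p = {p:.3g}")
```

Because statistical software prints very small p-values as 0.000 after rounding, such results are conventionally reported as p < 0.001.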

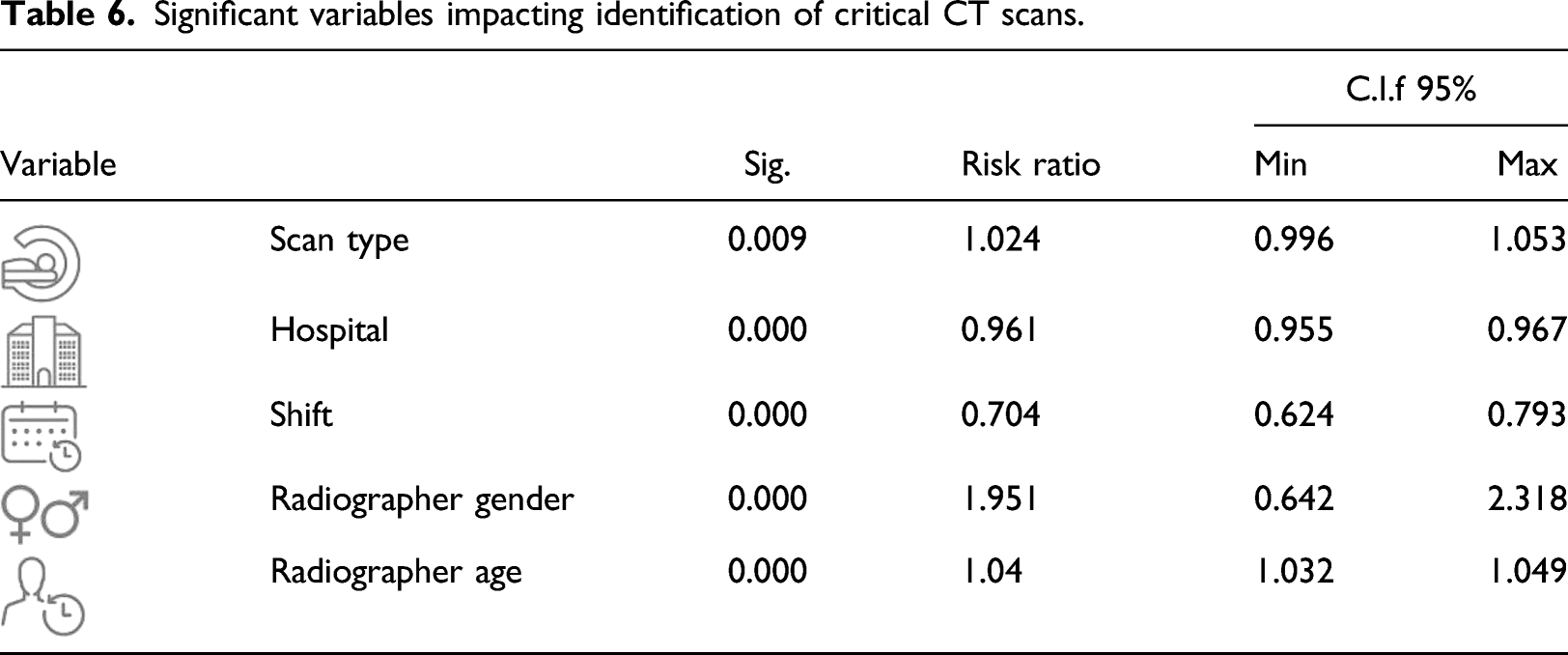

Significant variables impacting identification of critical CT scans.

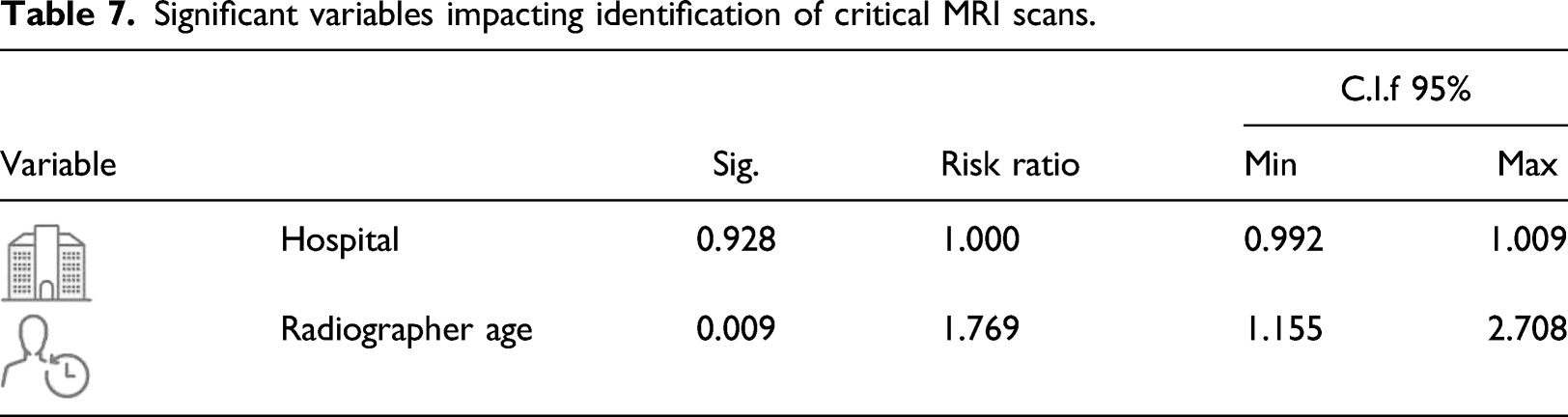

Significant variables impacting identification of critical MRI scans.

Discussion

Today, a radiologist’s encounter with an imaging scan occurs at the time of interpretation and has no effect on the preceding work processes. Prior to the official clinical interpretation, the radiographer administers and reads the initial test but is required to exercise discretion as to their opinion on test findings. The radiographer’s assessment activity is informal and undocumented. Sometimes, critical treatment could begin faster, and perhaps before the patient’s release, had findings been identified earlier. The current study was motivated by this possibility: using the radiographer as an early warning mechanism to increase the speed with which critical issues are identified. Accordingly, this study found that there are differences between radiographer and radiologist classification in reading CT and MRI scans and that there is a need to reduce the differences and improve radiographers’ awareness of critical findings via a training app. The study is important because it identified the most significant areas of potential instruction for radiographers based on data analysis and then provided opportunities for focused training to improve identification of tests with critical findings.

The study found a gap between radiographers’ and radiologists’ interpretations of CT and MRI scans. For each scan type, the overwhelming majority of cases are as follows: 77.09% of the CT scans were marked by the radiographer as having findings while the radiologists classified 94.11% of the tests as having no findings. A similar picture was obtained for MRI scans: 82.38% and 95.95%, respectively. The findings showed that the discrepancies in the radiographers’ reports represent a tendency to report the safest option. In other words, in a state of uncertainty, radiographers prefer to mark “existing findings” rather than make a critical mistake and mark one of the two extreme options (“no findings” or “critical findings”).

As discrepancies were found between the reports, the study focused mainly on cases where radiographers classified “no findings” while radiologists interpreted the scan as a case with a critical finding that should be reported to the attending physician or patient within 24 h. This is the most significant factor when it comes to the quality of patient care, and therefore the study chose to focus on this section of the tests.

To do this, the study pulled out the tests in which the radiologist interpretation included a critical finding. The distribution of radiographer classifications was then examined. In 12% of CT scans with critical findings (816 out of 6875) and 11% of MRI scans with critical findings (103 out of 896 tests), radiographers classified the tests as normal. This is a significant and important revelation that points to the most important gap between radiographers and radiologists.

Between hospitals, the discrepancies were concentrated mainly in two out of six hospitals (Hospital 1 with 49% and Hospital 3 with 25% of all discrepancies). We continue to explore the differences found here. Timewise, the rate of discrepancies was highest in the morning shift (morning shift 15%, evening shift 10%, night shift 7% of all tests with critical findings). Radiographers on evening and night shifts appear to be more available to detect critical findings. A possible explanation pertains to the flow of patients in a particular part of the day. Mornings are much busier, with movement of people around and inside the examination room (incoming and outgoing secretaries, families, etc.) in a way that may cause distraction and a decrease in the level of alertness. In the evening and at night, there are fewer administrative staff in the test area and the patients tend to arrive as a single party.

With respect to radiographer characteristics, the rate of misclassification was slightly higher among women (women 13%, men 12%). This is a small difference for which we have yet to find an explanation. The rate of misclassification among radiographers in the age group over 31.6 years was twice as high (18%) as in the age group below 31.6 years (9%). The highest rate of discrepancies was among radiographers with over 5 years of professional experience (more than 5 years: 21%; up to 3 years: 8%; between 3 and 5 years: 7%). These findings point to the need for special attention in future training for older radiographers with over 5 years of professional experience.

For MRI scans, a multivariate analysis of the independent variables found that the hospital and the age of the radiographer, which again is highly correlated with seniority, significantly affected the dependent variable, namely the occurrence of a mismatch in the scans. In hospital number 5, the rate of misclassification was 17% of the total tests with critical findings in the hospital, and in hospital number 1 it was 11%. For the age of the radiographer, in the age group above 35.6 years the rate of misclassification was 11%, compared with 7% in the age group below 35.6 years. These findings reinforce the need to initiate a training program that considers the hospital and the age of the radiographer.

Another finding relates to the fact that the CT field in neurology is the clinical field with the most tests. This was found to be the area with the highest rate of discrepancy between the radiographer classification and the radiologist classification. This is the most important and central finding, and it is the basis on which a mobile-based training program presenting critical cases in the field of neurology was built. The training of radiographers was unique because it was based on a big data analysis that could focus attention on knowledge gaps in a central area where the phenomenon of misclassification is most severe. Previous studies have dealt with clinical situations15–18 and have focused on specific areas of training ranked according to specific clinical importance, not necessarily on analyzing data that identify knowledge gaps of the radiographers in the overall process.

Overall, the study determined that a mobile training intervention can help reduce discrepancies in critical case identification. The literature shows that training a radiographer on the job with the help of digital means can be an effective way to achieve the training goal.27,28 Evidence supporting the use of mobile technology to raise the quality of medical care is beginning to accumulate. 29 The use of mobile technology for training the radiographers in this study adds to this evidence.

At the time this study was published, the app had been active for 17 months. The response rate to the training program in the mobile app was 37%. The training was performed using CT scans but was given to both CT and MRI radiographers. The differences in response rate may have been influenced by test type. The MCF rate was examined in CT scans during the study timeline. The MCF rate gradually decreased from 15.3% in July 2018 to 4.5% at the end of the study period, January 2020. Also, the proportion of cases in which the radiographer identified critical findings and acted with the radiologist to perform rapid interpretation increased from 84.7% at the beginning of the study to 95.5% at the end. The differences were found to be significant in a chi-square test (p < 0.001).

The study showed that radiographers learned to detect critical cases on CT and report them faster to a radiologist. Moreover, from informal conversations with radiologists, it appears that the radiographers have become more involved and more knowledgeable about test results, that the number of referrals regarding patients has increased significantly, and that radiographers have contacted the radiologists much more often than before. This finding is consistent with related work in the literature showing that interpretations of CT results performed by radiographers can be accurate, especially after receiving appropriate training.24,25

Many studies show the need to validate the artificial intelligence recommendation (whether there is a finding). Validation is performed by radiologists and contributes to the ongoing learning processes of artificial intelligence.35–37 During this study, a new process of testing the classification was implemented by the radiographers and a training system for detecting abnormal conditions in the test was implemented. In this way, the professional composition of the factors that help validate artificial intelligence systems can be expanded.

Radiographers can also help deepen the feedback process between artificial intelligence and the human factor: a radiographer who recognizes test findings can help validate the algorithms, and the algorithm can help a radiographer who does not recognize them to deepen his or her knowledge. All of these advance the human-machine interface in radiology towards an era in which feedback is an integral part of the changing work processes. Recently, as part of a commercial collaboration, Assuta tested a system from a commercial company that aims to help the radiologist identify abnormal findings in tests in differentiated clinical situations. The radiographer classification is another tool for assessing the system’s effectiveness.

Conclusion

Advances in medical practice and technology have resulted in greater demand for both CT and MRI scans. Correspondingly, the number of tests with critical findings that need to be identified quickly has increased. The current study presented a series of data analysis steps to identify areas that can be improved with training. The training method proposed in this study shortens the response time in critical cases, leverages radiographers’ knowledge, and assists in the implementation of AI-based tools in radiology. The mobile training app exposed radiographers to new situations and provided insight into critical case identification to encourage quick intervention. The feedback loop between radiographer and radiologist can be shortened in critical situations, ultimately saving lives where time is imperative.

It is important to remember that the study intends neither to suggest that radiographers become radiologists nor to ask them to take on higher levels of responsibility for interpreting tests. However, their involvement is important for providing high quality service and for improving medical care and patient satisfaction.

Likewise, it is important to continue investigating the findings of the mobile training and its effects on the accuracy of the radiographer’s assessment. In the future it is also important to investigate the influence of the variance in readings done by various radiologists and strive to achieve a gold standard in this area.

Limitations of the study

It is possible that the gaps discovered between radiographers’ classifications and radiologists’ readings stem from clinical optimizations; the radiologists may tend to classify a test as having findings only when the findings are significant, whereas the radiographers may classify every finding. It would be appropriate to build an operational model of the ideal radiologist that would make it possible to assess and compare different radiologists and different types of findings. In addition, different radiologists exhibit variance in classifying a scan, but in view of this study’s random distribution and the fact that there is no affinity between the radiologists and the radiographers, we would expect to see a correlation between them. In any case, the analysis of the variance among the radiologists is a well-known issue and remains outside the scope of this study. Another limitation concerns contact time. Previous literature suggests that short contact with the patients, five to ten minutes on average per patient, may lead to an insufficient review of the test results.14 This research did not account for contact duration because the data records were incomplete. However, it appears that despite the short time documented in the literature, appropriate training can have a significant effect on identifying the findings in the test.

Future extensions

Artificial intelligence is becoming more present in radiology, and its purpose is to accelerate the interpretation process or improve the accuracy of radiologists. The main strengths of artificial intelligence (automation, accuracy, and objectivity) are expressed in learning and evolving algorithms, which are embedded in the radiologist’s work environment. Because it is supposed to integrate naturally into the work environment and to keep developing, it must include processes for receiving feedback from radiologists for continuous learning and improvement of the application.35,36 Feedback includes independent verification on data the algorithm has not seen before and in-depth testing of false and negative results.37

Documentation of the test findings by the radiographers, and the processes of training the radiographers to identify the findings as presented in this work, have the power to contribute to the learning and feedback processes of artificial intelligence algorithms and to give validity to the radiologists’ classification of the test.

Footnotes

Author contributions

All authors have made a substantial, direct, intellectual contribution to this study.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical approval

The authors assert that all procedures contributing to this work comply with the ethical standards of the relevant national and institutional committees on human experimentation and with the Helsinki Declaration of 1975, as revised in 2013.