Abstract

No guidelines exist for the conduct and reporting of manuscripts with systematic searches of app stores for, and then appraisal of, mobile health apps (‘health app-focused reviews’). We undertook a scoping review including a systematic literature search for health app-focused reviews describing systematic app store searches and app appraisal, for apps designed for patients or clinicians. We created a data extraction template which adapted data elements from the PRISMA guidelines for systematic literature reviews to data elements operationalised for health app-focused reviews. We extracted the data from included health app-focused reviews to describe: (1) which elements of the adapted ‘usual’ methods of systematic review are used; (2) methods of app appraisal; and (3) reporting of clinical efficacy and recommendations for app use. From 2798 records, the 26 included health app-focused reviews showed incomplete or unclear reporting of review protocol registration; use of reporting guidelines; processes of screening apps; data extraction; and appraisal tools. Reporting of clinical efficacy of apps or recommendations for app use was infrequent. The reporting of methods in health app-focused reviews is variable and could be improved by developing a consensus reporting standard for health app-focused reviews.

Background and significance

Smartphone use is ubiquitous, with user numbers expected to reach 5 billion in 2020. 1 Given the rise in smartphone ownership and internet access, mobile health apps are widely used as a self-management tool for people living with long-term health conditions.2–4 Furthermore, clinicians are increasingly using mobile apps in clinical practice for patient education and to facilitate self-management,5,6 and to support clinical care such as clinical decision making, diagnosis, disease activity monitoring, and prescribing.

The interest in mobile health apps is evident from the increase over the last 5 years in the publication rate of manuscripts describing systematic searches of app stores for, and appraisal of, health apps (which we have called ‘health app-focused reviews’).2,7 Many of these health app-focused reviews have ‘systematic’ in the title or include typical features of a systematic literature review in the reporting of methods. The consistent finding from these health app-focused reviews is that most existing self-management apps from app stores are developed with minimal end-user input, lack evidence-based content, are designed for commercial use with minimal or no clinician involvement, and are not tested for clinical efficacy.2,4 These results have led to the creation of guidelines by government agencies for mobile app developers 8 and critical appraisal checklists for clinicians recommending mobile apps in clinical practice. 9

These health app-focused reviews, which may describe app features, functionality, efficacy and effectiveness, are critical for several reasons. First, they inform clinicians and health systems about apps that can be recommended for patient use in a patient-empowered health system. Second, they inform researchers and developers about the gaps for high-quality health apps to direct future research or development. Most importantly, they provide a high-quality trusted resource for informed patients to choose the highest quality app to support optimising health.

While these health app-focused reviews can inform evidence-based practice, there is no existing methodological framework to guide such reviews. Adequate conduct and reporting are essential for maximising transparency of search and appraisal methods and to foster confidence in the reliability of health app-focused review findings. 10 A clearly conducted and reported health app-focused review will provide sufficient details about the app store search, app identification, app data extraction and evaluation of apps so that a reader can be confident that all apps relevant to the health condition have been identified and appraised. For example, a clear statement of the country and date on which an app store was searched, and of the language of apps included, is essential for a reader to know if the apps retrieved are likely to be relevant to them. There are standardised reporting guidelines tailored for systematic reviews and meta-analyses of randomised controlled trials (Preferred Reporting Items for Systematic Reviews and Meta-Analyses; PRISMA 11 ) and qualitative studies (Enhancing transparency in reporting the synthesis of qualitative research; ENTREQ 12 ). The PRISMA reporting guidelines may provide a framework for the potential items in reporting guidelines for health app-focused reviews, but it is not clear if all items are relevant or how to operationalise the items that are relevant. A logical next step is to develop a reporting guideline for health app-focused reviews.

As a first step to develop reporting guidelines, it is appropriate to review existing health app-focused reviews. 10 This provides a basis for developing a reporting standard for publishing health app-focused reviews for people managing aspects of their health and for clinicians. The main purpose of this scoping review is to provide a narrative synthesis on reporting of systematic search of app stores and app appraisal methods used in health app-focused reviews, designed to support people to self-manage aspects of diagnosed health conditions or for clinicians for use in clinical care.

Objectives

The key aims of our review were to answer the following questions:

What parts of the ‘usual’ methods of systematic review are used and adapted in health app-focused reviews (e.g. how are app search and selection methods used and adapted)?

In particular, how are methods of critical appraisal adapted? Usually it is the methods of individual studies that are appraised, but in app reviews it is the content of the apps; how is this done (including application of guidance on assessing the quality of apps, such as the Mobile App Rating Scale (MARS) 13 )?

What recommendations are made based on health app-focused reviews, and how is the ‘strength’ of those recommendations estimated (e.g. is there any equivalent to GRADE 14 )?

Materials and methods

A scoping review methodology was adopted based on the Arksey and O’Malley framework stages15,16 as the overarching aim was to explore the current state of reporting of methods of health app-focused reviews. An a priori review protocol was developed (available on request) and registered with PROSPERO International Registry of Prospective Systematic reviews (CRD42018102786). 17 The review was reported based on Preferred Reporting Items for Systematic Reviews and Meta-Analyses – Extension for Scoping reviews (PRISMA-ScR) 18 with checklist provided in Supplemental Appendix 1.

Primary search

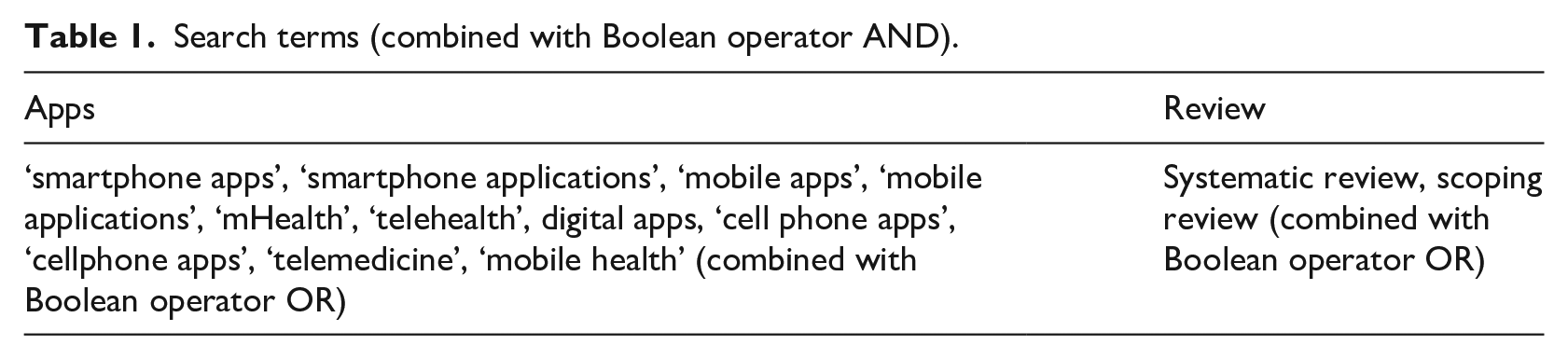

Literature for the scoping review was identified by a systematic keyword search (Table 1) in the following databases: Medline (via Ovid), AMED, PsycINFO, Cochrane Library (via Ovid), PubMed, CINAHL (via EBSCO) and Scopus. The search was undertaken with reference librarian support, limited to 1 January 2012 to 1 August 2018, with the date of last search 9 August 2018. The search was limited to this period to allow for some maturity of the process of app review literature, since mobile apps first became widespread after the launch of the iPhone in 2007. Combining ‘app’ terms AND ‘review’ terms in a preliminary search suggested sufficient articles would be found from this period. All references were imported into Endnote (Version X9, Clarivate Analytics) then uploaded to Covidence 19 for screening.

Table 1. Search terms (combined with Boolean operator AND).
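The combination of ‘app’ terms AND ‘review’ terms described above can be sketched as follows. This is a minimal illustration only: the terms shown are hypothetical placeholders rather than the terms actually used in Table 1, the helper `build_query` is an illustrative name and not a database API, and joining synonyms within a group with OR is an assumption (the text states only that the groups were combined with AND).

```python
def build_query(term_groups):
    """Join synonyms within each group with OR, then join the groups with AND."""
    clauses = []
    for group in term_groups:
        # Quote multi-word phrases so they are searched as a single phrase.
        terms = [f'"{t}"' if " " in t else t for t in group]
        clauses.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(clauses)


# Hypothetical placeholder terms; the real terms are those listed in Table 1.
app_terms = ["app", "mobile application", "smartphone"]
review_terms = ["review", "systematic review"]

query = build_query([app_terms, review_terms])
print(query)
# (app OR "mobile application" OR smartphone) AND (review OR "systematic review")
```

Each database has its own query syntax, so a string like this would normally need adapting per platform.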

Secondary search

The same search terms were used to search the journal database of the Journal of Medical Internet Research (JMIR), which frequently publishes health app-focused reviews and whose journals are not all indexed in MEDLINE. The review registries PROSPERO and The Joanna Briggs Institute (JBI) were both manually searched. Identified records were exported into Endnote and uploaded to Covidence.

Inclusion and exclusion criteria

To identify health app-focused reviews that were most likely to be systematic in nature the following criteria were applied:

(1) The article was ‘systematic’, defined by the presence of the term ‘systematic review’ in the title OR an a priori systematic search procedure (along with inclusion and exclusion criteria) described in the methods section of the article OR a search process described based on the PRISMA standard flow diagram for inclusion and exclusion of apps. (2) The article had a systematic search for health apps in at least one app store described in the text of the article. (3) There was a specific health condition named as the focus of the article. (4) The focus of the article was apps for either people with any acute or chronic health condition where a specific disease diagnosis has been made (e.g. inflammatory bowel disease, diabetes, epilepsy or gout) or for clinicians where the app may support direct clinical care (which may include clinical decision making, diagnosis, disease activity measures, patient education and prescribing, and potentially other aspects, as identified by the review).

The exclusion criteria for articles were: (1) not in English language, (2) focus on apps for general wellbeing or general health, or for supporting aspects of mental or psychological health, and (3) focus on apps for supporting general nutrition outside a named health condition.
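The logic of the inclusion and exclusion criteria above can be expressed as a simple predicate. The following is a sketch only: the `Article` class, its field names and `is_eligible` are hypothetical labels introduced for illustration, and in the review itself these judgements were made by human reviewers rather than by code.

```python
from dataclasses import dataclass


@dataclass
class Article:
    # Hypothetical field names standing in for the criteria in the text.
    systematic_in_title: bool
    a_priori_search_described: bool
    prisma_flow_for_apps: bool
    app_store_search_described: bool
    named_health_condition: bool
    for_patients_or_clinical_care: bool
    in_english: bool
    general_wellbeing_or_mental_health: bool
    general_nutrition_only: bool


def is_eligible(a: Article) -> bool:
    # Inclusion criterion 1 is satisfied by any one of three alternatives (OR).
    systematic = (
        a.systematic_in_title
        or a.a_priori_search_described
        or a.prisma_flow_for_apps
    )
    # Inclusion criteria 1 to 4 must all hold (AND).
    included = (
        systematic
        and a.app_store_search_described
        and a.named_health_condition
        and a.for_patients_or_clinical_care
    )
    # Any single exclusion criterion rules the article out.
    excluded = (
        not a.in_english
        or a.general_wellbeing_or_mental_health
        or a.general_nutrition_only
    )
    return included and not excluded


example = Article(
    systematic_in_title=False,
    a_priori_search_described=True,
    prisma_flow_for_apps=False,
    app_store_search_described=True,
    named_health_condition=True,
    for_patients_or_clinical_care=True,
    in_english=True,
    general_wellbeing_or_mental_health=False,
    general_nutrition_only=False,
)
print(is_eligible(example))  # True
```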

Article screening

After automatic removal of duplicates in Covidence, one author (BS) manually removed remaining duplicates. Two authors (BS, RG) independently reviewed all titles and abstracts against eligibility criteria. The full text of all eligible articles then underwent independent review by two authors (BS, RG) to confirm if inclusion criteria were met. At each stage, any disagreements were resolved by consensus discussion between those two authors and if decision could not be reached, articles were discussed with a third author (JHS or HD). When an included article had an author who was a member of the research team, that author was excluded from making decisions about or data extraction from that article.

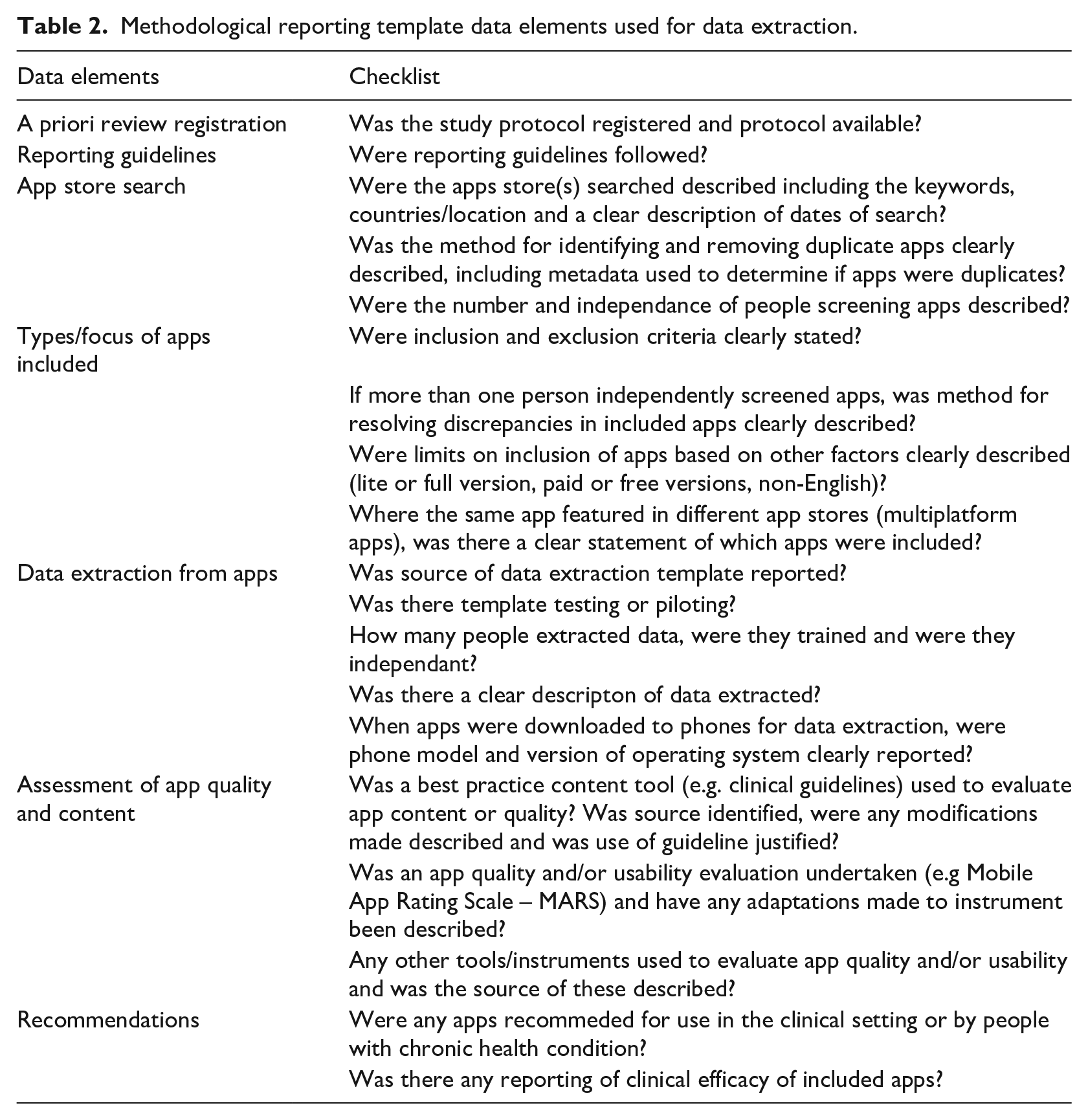

Development of data extraction template

All authors contributed to development of the data extraction template (available on request). A draft template was created in Word (v16.24, Microsoft) based on standard data elements or sections in PRISMA guidelines. The data extraction template was refined by iterative discussions, and then piloted by extracting data from four included articles. For each article, two authors independently extracted the data. Further discussions with all authors (videoconference and email) led to iterative updating of the data extraction template. When disagreement occurred, decision rules were developed for data extraction. The final data extraction template data elements in a health app-focused review are presented in Table 2.

Table 2. Methodological reporting template data elements used for data extraction.

Data extraction and verification

One author extracted data for every included article (BS) and transferred these to an Excel spreadsheet. The other authors (RG, HD, JHS) each independently extracted data from one third of the included articles. RG entered these data into the Excel spreadsheet. All discrepancies were discussed between the authors (BS and RG for all, with HD or JHS) and agreed by consensus.
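The verification step above amounts to comparing two sets of ratings and listing the disagreements for discussion. A minimal sketch follows, assuming ratings are stored per article and data element. The identifiers, element names and the helper `find_discrepancies` are hypothetical, and the authors worked in an Excel spreadsheet rather than in code.

```python
def find_discrepancies(primary, secondary):
    """Return ratings that differ between two extractors.

    Both arguments map (article_id, data_element) to a rating. Only keys
    present in both are compared, because the second extractor covers
    just a subset of the articles.
    """
    diffs = {}
    for key, rating in secondary.items():
        if key in primary and primary[key] != rating:
            diffs[key] = (primary[key], rating)
    return diffs


# Hypothetical ratings for illustration only.
primary = {
    ("A1", "country_reported"): "no",
    ("A1", "date_reported"): "unclear",
    ("A2", "country_reported"): "yes",
}
secondary = {
    ("A1", "country_reported"): "no",
    ("A1", "date_reported"): "yes",
}

discrepancies = find_discrepancies(primary, secondary)
for (article, element), (first, second) in discrepancies.items():
    print(f"{article} / {element}: {first} vs {second} -> resolve by consensus")
```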

Data analysis and synthesis

Reporting of each data element was categorised as ‘yes’ or ‘no’, with some items having categories of ‘unclear’ or ‘not applicable’. Data were reported as frequencies.
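The frequency reporting described above can be sketched as a short tally. This is an illustration under stated assumptions: the ratings are made up, `summarise` is a hypothetical helper, and dropping ‘not applicable’ ratings from the denominator is an assumption about how denominators smaller than 26 could arise, not a rule stated in the text.

```python
from collections import Counter


def summarise(ratings):
    """Return {category: (count, denominator, rounded percentage)}."""
    counts = Counter(ratings)
    # Assumption: 'not applicable' ratings do not count towards the denominator.
    denominator = len(ratings) - counts["not applicable"]
    summary = {}
    for category in ("yes", "no", "unclear"):
        n = counts[category]
        pct = round(100 * n / denominator) if denominator else 0
        summary[category] = (n, denominator, pct)
    return summary


# Made-up ratings for one data element across 26 articles.
ratings = ["yes"] * 12 + ["no"] * 4 + ["unclear"] * 10
for category, (n, total, pct) in summarise(ratings).items():
    print(f"{category}: {pct}%, {n}/{total}")
```

Reporting both the percentage and the fraction, as in the Results, keeps the denominator visible when it differs between data elements.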

Results

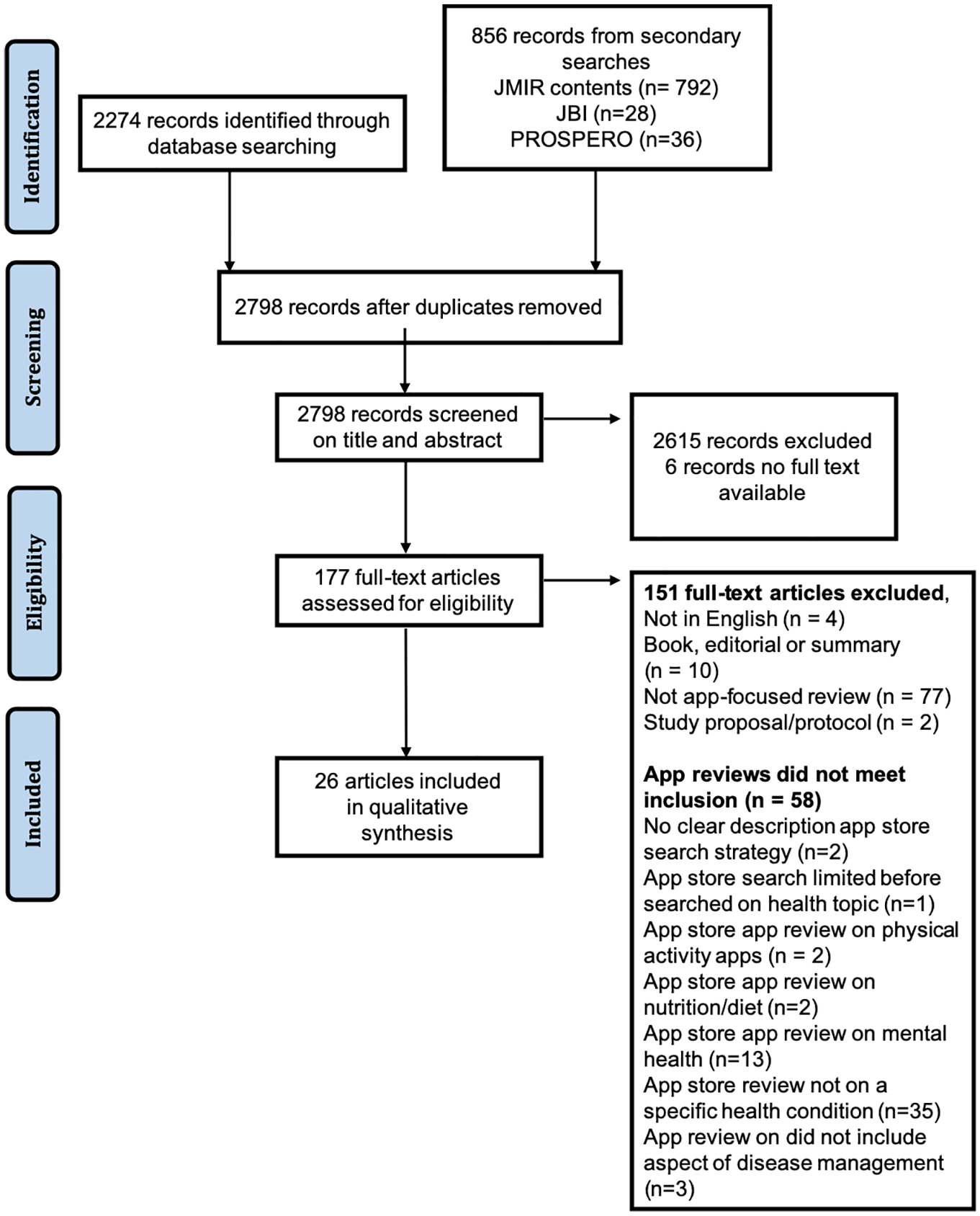

Database search and screening

The flow of references is shown in Figure 1, including reasons for exclusion at full text review. Database searching identified 2274 records and secondary searching 856, of which 332 were duplicate records. Of the remaining 2798 records, 2615 were excluded after title and abstract screening. Of the remaining 183, full text was not obtainable for 6, leaving 177 eligible full text articles. After full text review, 26 articles were included for final synthesis. The majority of excluded articles (n = 77) were excluded because they were not app reviews. Of the 58 excluded articles that were app reviews, the main reason for exclusion was that they were not specific to a health condition (n = 35) (Figure 1).

Figure 1. PRISMA flow diagram for article selection.
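The record counts reported in this section can be checked with simple arithmetic. The sketch below uses only numbers given in the text; the variable names are illustrative, and the final figure of 151 articles excluded at full text review is derived here rather than stated in the text.

```python
database_records = 2274
secondary_records = 856
duplicates = 332

# Records left for title and abstract screening after duplicate removal.
screened = database_records + secondary_records - duplicates

excluded_title_abstract = 2615
remaining = screened - excluded_title_abstract

full_text_unavailable = 6
full_text_reviewed = remaining - full_text_unavailable

included = 26
# Derived value: articles excluded at full text review.
excluded_full_text = full_text_reviewed - included

print(screened, remaining, full_text_reviewed, excluded_full_text)
# 2798 183 177 151
```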

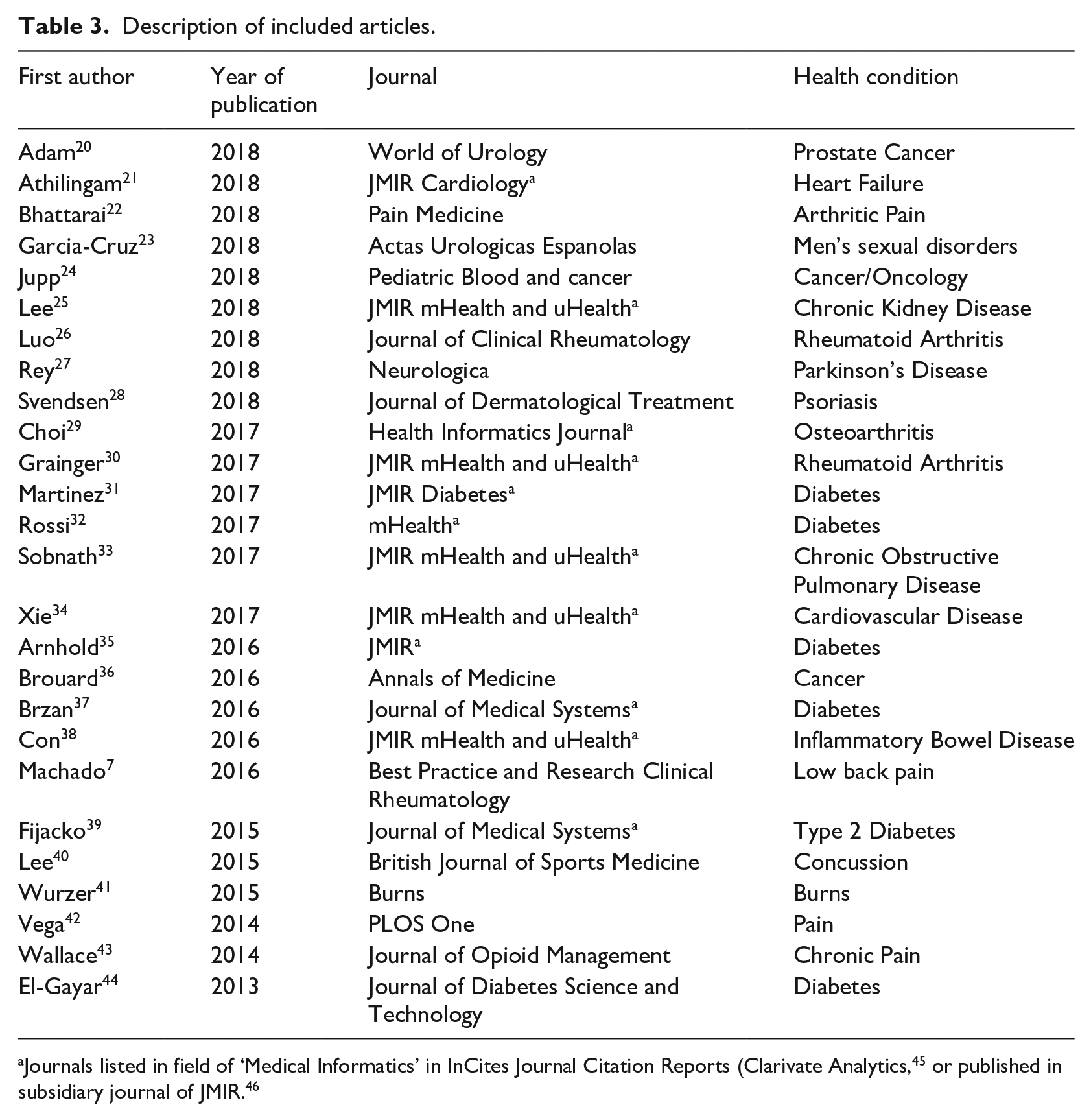

Overview of included articles

Over half (58%, 15/26) of included articles were published in the last 2 years of the search period (2017/18) (Table 3). Fourteen out of 26 articles (54%) had ‘systematic review’ in the title. Almost half of the included articles (46%, 12/26) were published in journals in the field of health informatics. Health app-focused reviews addressing a wide range of acute and chronic health conditions were included.

Table 3. Description of included articles.

A priori review registration and reporting guideline

Of the 26 included articles, one reported a priori protocol registration 7 and four stated reporting was based on the PRISMA guideline for systematic reviews.7,30,37,39

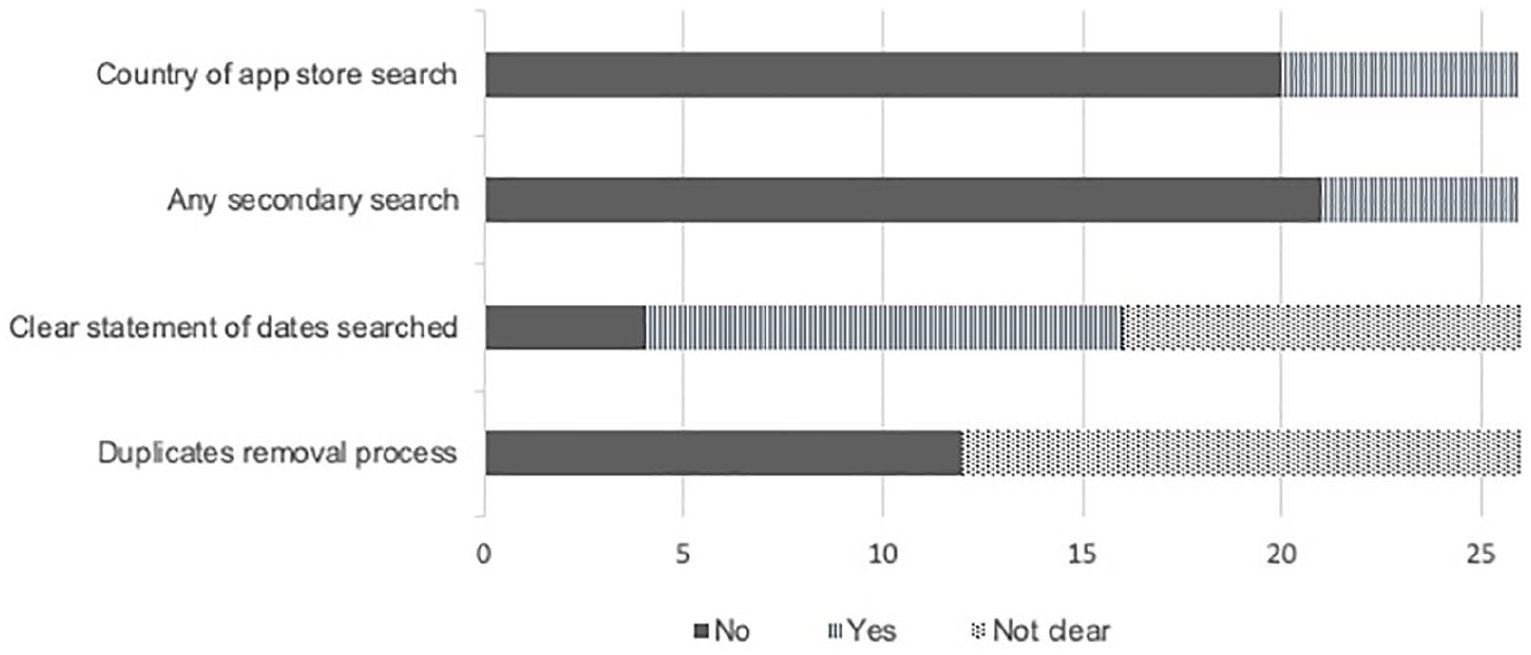

Reporting of search process

The majority (85%, 22/26) searched for apps from two or more app stores. The two most commonly searched app stores were Google Play (Android) and the Apple App Store (iOS). Other key aspects of reporting of the search process are presented in Figure 2. Most did not report the country of app store search or whether any secondary search (e.g. using the website fnd.io) was conducted. App stores were searched with 1 to 9 keywords, with 17 of the included articles using 2 or more keywords relevant to the topic of interest. However, the process for removing duplicates (i.e. the same app found in more than one place) was not clearly reported in half of the included articles. While some articles clearly reported the exact date of searching app stores, others did not report the date (15%, 4/26) or the date was unclear (38%, 10/26) (e.g. stating only the month of the app store search).

Figure 2. Reporting of search process (n=26).

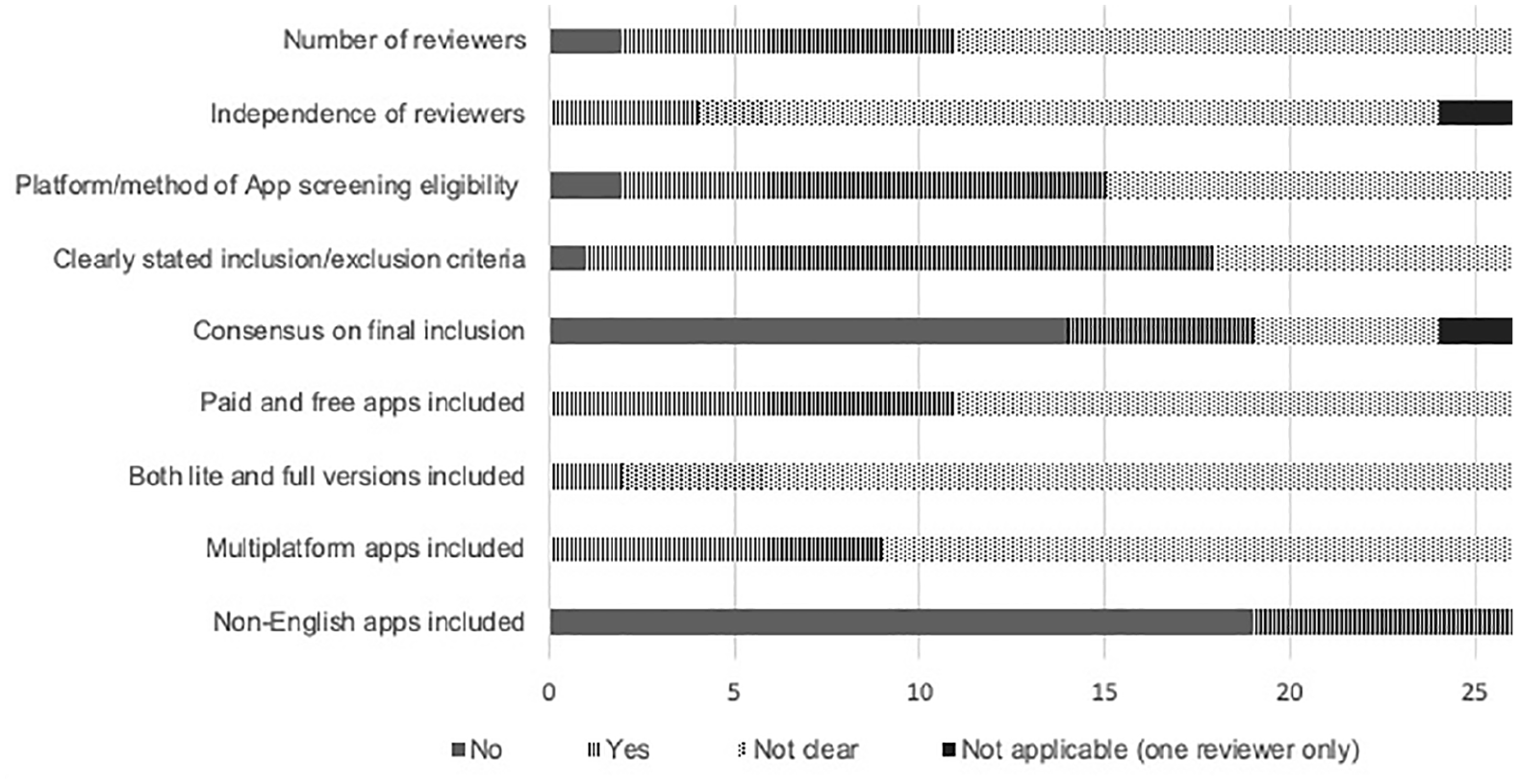

Screening criteria for app selection

The number of reviewers involved in conducting app screening and selection was poorly reported in over half of articles (58%, 15/26) and not reported in two articles (Figure 3). It was unclear if screening was done independently in the majority of included articles (83%, 20/24). Of the 13 articles that clearly reported the method used for screening apps for eligibility in the app stores, three articles22,38,39 used a two-step process involving screening of app store description and then downloading the apps for further screening. The other 10 studies screened apps mainly based on app store description. In articles with two reviewers assessing apps for eligibility, the process of reaching consensus to include apps was not reported in most (74%, 14/19). The reporting of using both lite and full versions of apps and the process of including multiplatform apps was usually unclear (Figure 3). Most of the included articles (73%, 19/26) excluded non-English apps.

Figure 3. Reporting of screening criteria for app selection (n=26).

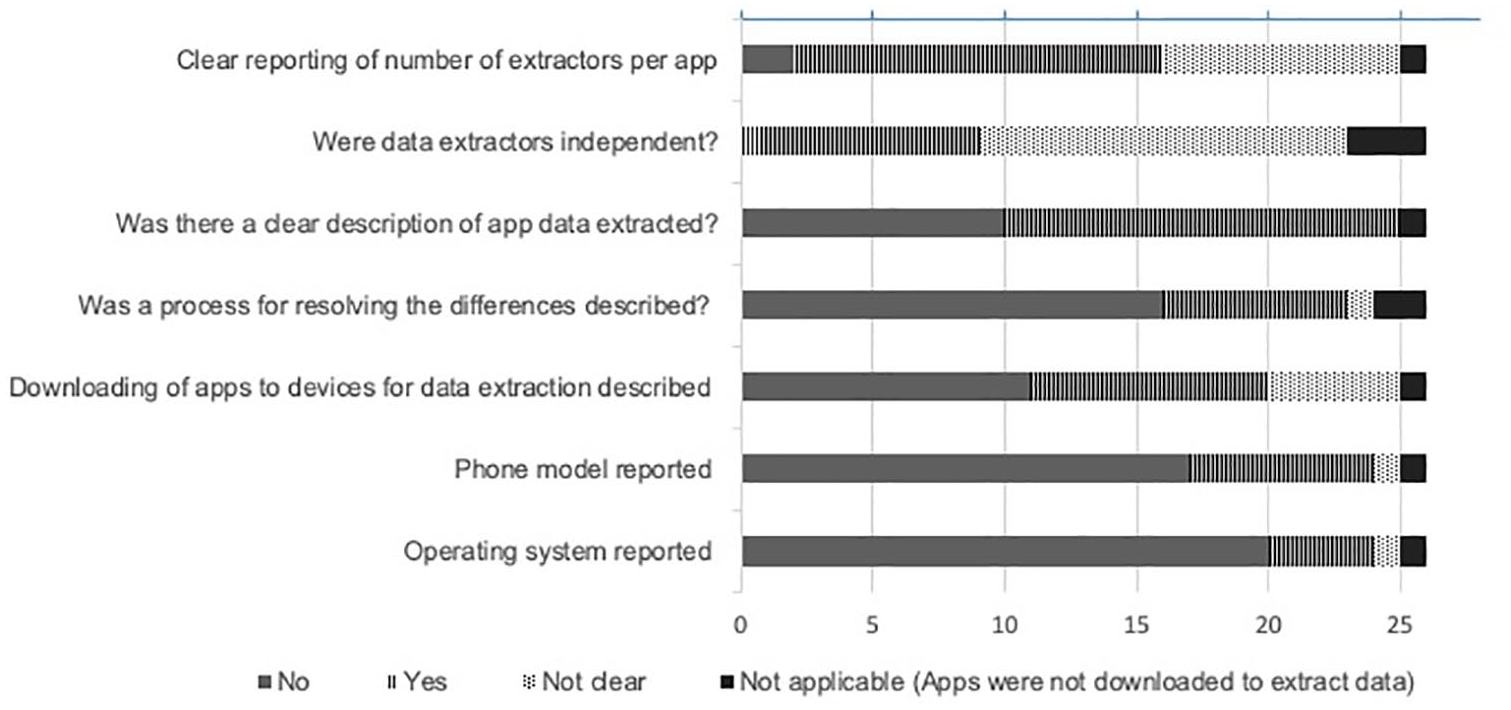

Process of data extraction

While over half of the included articles (61%, 14/23) reported the number of data extractors per app, it was unclear if the data extractors were independent (Figure 4). Furthermore, most articles (67%, 16/24) did not report the process of resolving differences between data extractors. The devices used (i.e. phone model) for downloading the apps and the operating systems were also not reported in most of the included articles (Figure 4).

Figure 4. Reporting of data extraction (n=26).

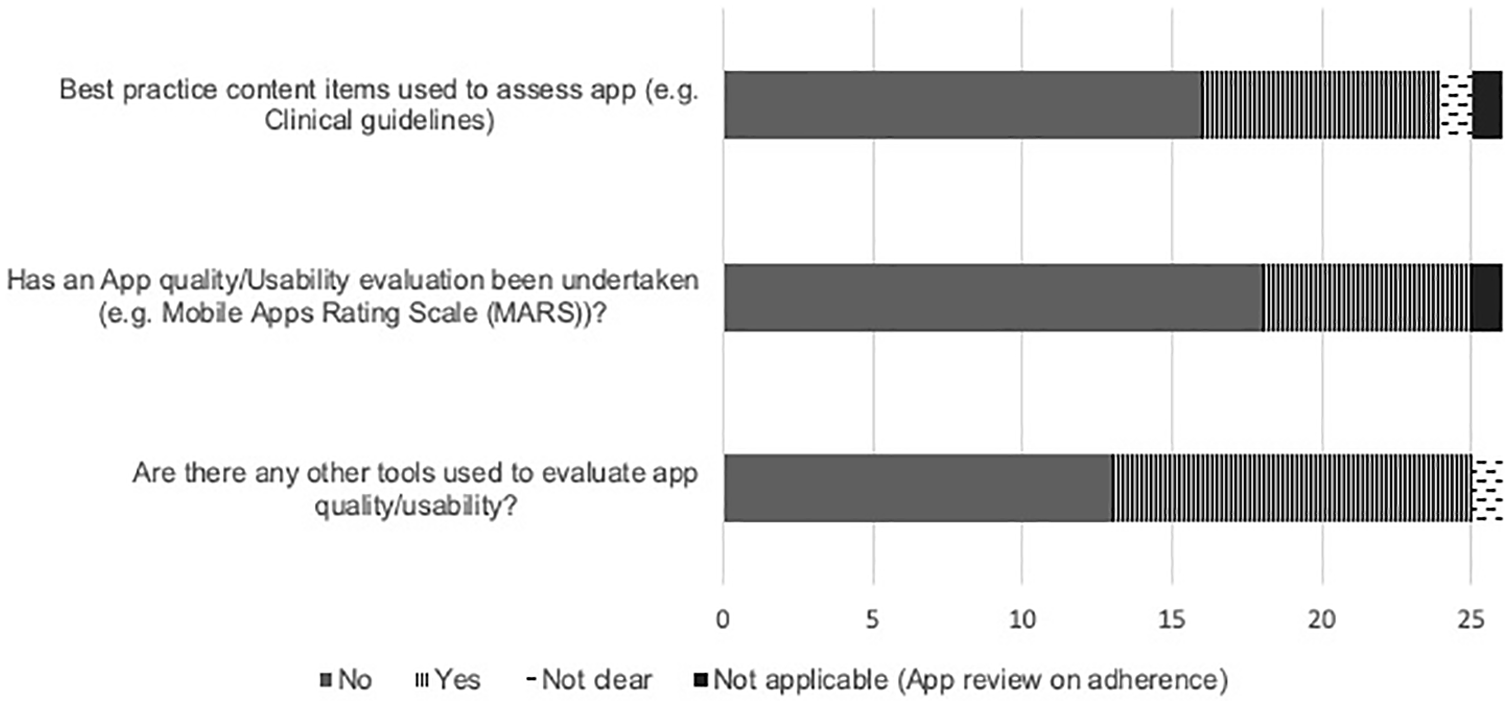

Appraisal of app inclusion of best practice content, and quality or usability

A third of the included articles (32%, 8/25) evaluated the quality of the app contents against best practice content items (e.g. clinical guidelines) relevant to the health condition (Figure 5). Of the eight articles7,22,30,34,37–40 that evaluated the content quality, four22,34,38,40 clearly reported the adaptations made to the guidelines. Seven articles7,20–22,24,30,35 evaluated the quality and/or usability of the included apps using tools such as the Mobile App Rating Scale (MARS). 13 About half of the included articles (46%, 12/26) used customised tools to evaluate content quality or usability, of which only five articles24,26,32–34 clearly reported the source of those critical appraisal tools.

Figure 5. Reporting of content evaluation against recommendations/best practice (n=26).

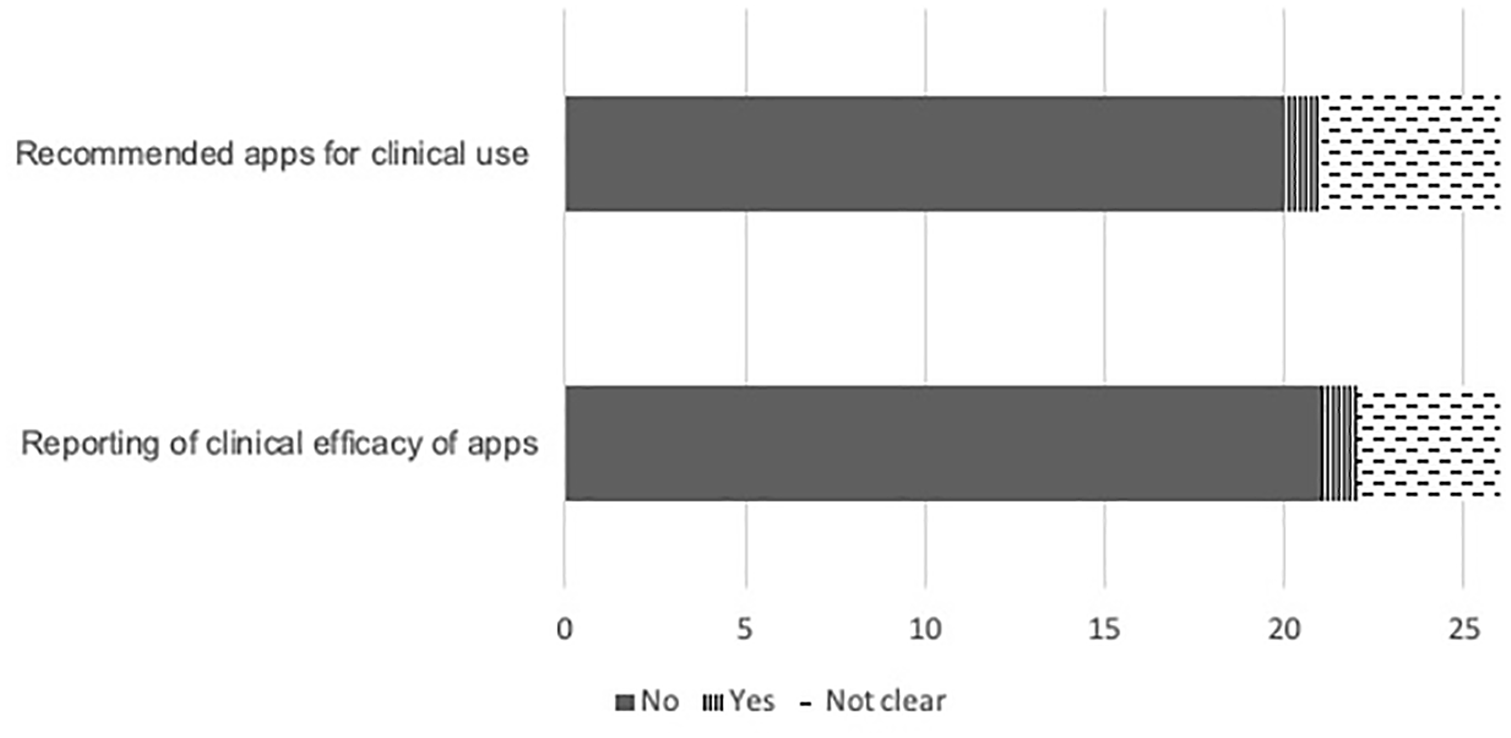

Clinical recommendation and reporting of clinical efficacy of apps

Most articles (77%, 20/26) did not recommend apps for clinical use or report the clinical efficacy of the evaluated apps (Figure 6). One article 38 recommended apps for clinical use and reported the clinical efficacy of apps. There were no examples of rating the strength of recommendations (as GRADE does).

Figure 6. Reporting of clinical recommendations and efficacy of apps (n=26).

Discussion

In the 26 included health app-focused reviews, many of the typical procedures of a systematic literature review as operationalised for app store search and app appraisal were not reported, or not clearly reported. Absence, lack of clarity or incompleteness of reporting was observed in: a priori review protocol registration or following a reporting guideline; the processes of screening apps in the app stores; data extraction details from the app store description or which type of device the app was downloaded to for data extraction; and appraisal tools used for assessing the clinical content, app quality or app usability. Further, the majority of articles did not explicitly report clinical efficacy of apps or recommendations for use in a clinical setting.

As health apps are rapidly increasing in number and developed by multiple stakeholders, it is important that health apps are curated and appraised. 47 Health app-focused reviews conducted with methodological rigour and published in the peer-reviewed literature should be the mechanism for identifying best in class apps for a health condition. Our study would suggest that this potential is not being met.

Few articles described robust screening processes operationalised for app store searches that could serve as an example for other reviewers. One example was a two-step screening process based on the app store description and subsequently downloading the apps to devices for detailed screening. Using this two-step approach increased the rigour of screening against selection criteria and confirmed that apps were available for download. Screening on the app store description alone increases the chance of including apps where the version is no longer usable in the current operating system or is only available with a login. Handling of multiplatform apps during screening was poorly reported, and even when the number of multiplatform apps was reported, it was usually not clear which app version(s) were assessed. This is important as the usability evaluation of an app can vary depending on the operating system. 13 Addressing these methodological shortcomings will require researchers to develop robust protocols and describe the methods of app screening in detail.

Only a small proportion of health app-focused reviews reported the source of, and any adaptation made to, guidelines, frameworks or instruments used to evaluate app quality or usability and clinical quality of app content. Bespoke tools adapted from clinical guidelines were often used for assessing clinical quality of app content.7,22,30 Thus, it seems unlikely any ‘standard’ clinical content quality instrument could be developed, and therefore explicit reporting of the criteria and source is necessary to increase confidence in recommending health apps. In a systematic review of 23 studies evaluating the quality of mHealth apps, Nouri et al noted high variability in quality assessment tools or criteria and identified seven classes of quality criteria, with 38 subclasses. 48 The authors concluded this likely reflects different domains for evaluating quality depending on the use-case for the apps but also emphasised the requirement for careful and non-overlapping definition of quality criteria. Health app quality can now be assessed using the validated MARS, 13 published in 2015 and cited almost 550 times. Only a third of the articles published since 2016 assessed app quality with the MARS, although use in a higher proportion of health app-focused reviews is likely as methodology matures and author rigour in app store search and app appraisal increases. There may be other domains for inclusion in health app-focused reviews. A recent scoping review found multiple safety concerns in health apps and recommended mandatory reporting of health app safety. 49 Another recent study operationalised Health on the Net (HON) Foundation principles, designed for evaluating health web pages, and used these to evaluate popular medical apps. 50 Future health app-focused reviews that aim to evaluate app content quality, usability or clinical quality should utilise the most appropriate, rigorous and contemporaneous evaluation criteria.

Some lack of rigour is partly attributable to lack of reporting guidelines for the conduct and reporting of health app-focused reviews. Another contributor is the fast-changing environment in which health app-focused reviews are conducted. For example, only one study 7 reported a priori review registration but it seems one review registration database (i.e. PROSPERO) no longer accepts health app-focused review protocols (personal communication to authors CC 6th January 2018 and DD 18th January 2018). However, a fast-changing environment is a further reason for explicit reporting as any health app-focused review will need to be reliably repeated.

Another example of the unstable environment is searching app stores; unlike electronic journal databases, app stores are not designed for systematic searching and exporting of data. 51 There are challenges in removing duplicate apps from app store searches (Android and iOS), and a keyword search in the Google Play store can return a seemingly endless list of results. To manage this issue, authors have to decide how to limit searches. One included study screened the first five pages of results and deemed this sufficient as no relevant apps were retrieved beyond the first five pages. 22 An app review excluded from our final sample reported the endless Google Play app list as a limitation. 52 Further, app store searches can provide inconsistent results within a day and thus it is impossible to replicate the search strategy reliably. This is a key methodological challenge to be addressed for rigour in conduct and reporting of health app-focused reviews.

While this scoping review was planned, conducted, and reported according to relevant guidelines, 18 there are limitations to this work. Included health app-focused reviews attested to being systematic, aiming to identify all relevant health apps available in a structured and repeatable manner. Our definition of ‘systematic’ (as defined in our inclusion criteria) may not have been sufficiently rigorous to select only systematic reviews. If authors used any of three key elements – the article had ‘systematic review’ in the title OR an a priori systematic search procedure was described in the methods section of the article OR the search process was described based on PRISMA standard flow diagram – then these articles met the inclusion criterion whether or not the authors were intentionally systematic. In contrast, we were very rigorous about defining and recording decision rules for our methodology to ensure consistent decision making about inclusion/exclusion, data extraction, and rating a data element as yes, no, unclear or uncertain. It is possible that the decision rules were unreasonably rigorous and therefore identified excessive numbers of data elements that were ‘not clear’. It is also possible that authors conducted health app-focused reviews in a more systematic manner but reported without precision or detail, contributing to the ‘no’ and ‘unclear’ ratings. Thus, the health app-focused reviews may be conducted more robustly than it appears from the sample in our research. While we registered a study protocol a priori, there was one departure from our study protocol. During the record identification stage, the secondary search of the Journal of Medical Internet Research (JMIR) databases retrieved a large number of potentially eligible articles for screening. Therefore secondary searches of other journals were not undertaken as planned due to efficiency requirements. 
This protocol deviation may have led to failure to identify potentially eligible high-quality health app-focused reviews. If these had been included, there may have been some increase in the number of data elements rated ‘yes’. A further caution is that including only health app-focused reviews for named/diagnosed health conditions excluded health app-focused reviews of other areas of health or wellness (e.g. diet, exercise, mental health apps) where conduct and reporting may be more rigorous.

Our operationalisation of PRISMA guidelines (Table 2) to health app-focused reviews may not have considered all important aspects of conduct and reporting. For example, we did not extract data on type of device (computer or mobile device) on which app store screening was undertaken. Any future development of health app-focused review methods could use our data extraction template, modified from the PRISMA literature search and synthesis guideline as a basis for conduct and reporting. Future researchers should also consider and extend our proposed methods for their current app environment to be as rigorous in conduct and reporting as possible.

People with health conditions and clinicians need ways to find apps to support and manage health conditions or augment clinical care, and to have confidence these apps are fit for purpose. Some authors have provided useful summaries of how to find apps, 53 recommending use of online vendors (app stores), online repositories (app repositories, online communities, news stories), and peer-reviewed literature. A recently published framework for finding apps 54 recommends the peer-reviewed literature as the first source to identify both high-quality evaluations of single apps and apps identified in app store searches. While criteria for reporting evaluations of mobile health interventions in clinical research are already agreed55,56 to support the likelihood of finding a high-quality evaluation of a single app, our work suggests this is currently less likely for health app-focused reviews.

Variability in quality was also observed in a previous overview of the quality of health app-focused reviews. BinDhim et al identified health app-focused reviews that discussed quality or quality-related aspects of health apps for consumers published between 2008 and 2013. 57 The 10 identified articles were scored on 8 bespoke criteria with a mean score of 5 out of 8 and range 2 to 7. Similar to our study, 80% of articles did not include country of search, and 40% did not clearly explain if apps were evaluated based only on app store description or on app download. A more recent study evaluated 91 health app-focused review articles published between 2010 and 2016 based on 53 items that included the eight criteria from BinDhim et al and additional items based on scope of study, appraisal methods and on Cochrane review methodology. 51 Overall, the assessment of reporting of appropriate methodological steps had remarkable similarities to our findings. In line with the findings of these previous studies,49,57 our study confirms that the quality of reporting of health app-focused reviews, that is, of app store searches and app appraisal, could be improved.

The goal of high-quality health app-focused reviews in peer-reviewed literature can only be fully realized in co-operation with major app stores/vendors and regulators, as infrastructure and regulatory factors are outside the control of those searching for apps. Firstly, app stores need stable search environments, not driven by algorithms that promote apps in a non-transparent manner. Secondly, app stores should require standardized app description fields for medical or health apps that explicitly report the process and results of content, usability and efficacy evaluation. 54 A coordinated approach will be needed to achieve this. These efforts would considerably advance the realization of the potential of mHealth to improve health outcomes.

Conclusion

Of the 26 health app-focused reviews we identified as having features of a systematic approach, most did not report or incompletely reported many of the typical procedures of a systematic review as operationalized for health app-focused reviews. To improve the quality of the health app-focused review literature, key actions include: (1) Identify an appropriate repository for registration of health app-focused reviews, or develop such a registry. (2) Develop a consensus on best practice methods for the conduct of health app-focused reviews. (3) Agree and achieve transparency in reporting by authors, probably via consensus reporting standards.

Supplemental Material

Appendix_1_PRISMA-ScR_Fillable_Checklist_FINALANON – Supplemental material for Issues in reporting of systematic review methods in health app-focused reviews: A scoping review

Supplemental material, Appendix_1_PRISMA-ScR_Fillable_Checklist_FINALANON for Issues in reporting of systematic review methods in health app-focused reviews: A scoping review by Rebecca Grainger, Hemakumar Devan, Bahram Sangelaji and Jean Hay-Smith in Health Informatics Journal

Footnotes

Author Contributions

RG conceived and designed the study and acquired, analyzed and interpreted the data; and drafted the work and revised it critically for intellectual content; and approved the final version; and agreed to be accountable for all aspects of the work. HD made substantial contributions to the conception and design of the study and acquired, analyzed and interpreted the data; and drafted the work and revised it critically for intellectual content; and approved the final version; and agreed to be accountable for all aspects of the work. BS made substantial contributions to design of the study and acquired, analyzed and interpreted the data; and revised the work critically for intellectual content; and approved the final version; and agreed to be accountable for all aspects of the work. JHS made substantial contributions to the conception and design of the study and acquired, analyzed and interpreted the data; and revised the work critically for intellectual content; and approved the final version; and agreed to be accountable for all aspects of the work.

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: One author (RG) has a published article included in the data set, but was not involved in data extraction from this article. The authors have no other known conflicts of interest.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
