Abstract

This study reviews the quality of evidence reported in the mobile health intervention literature in the context of developing countries. A systematic search of prominent databases was conducted to find studies related to mobile health applications published between 2013 and 2018. After methodological screening, a total of 31 studies were included for data extraction and synthesis. The mobile health Evidence Reporting and Assessment checklist developed by the World Health Organization was then used to evaluate the rigor and completeness of evidence reporting. We report four main findings. First, there is a very low level of familiarity with the mobile health Evidence Reporting and Assessment checklist among researchers and mobile health intervention designers from developing countries. Second, most studies do not adequately meet the essential criteria of evidence reporting specified in the checklist. Third, design science–based methods and theory-based frameworks are rarely applied in developing mobile health interventions. Fourth, most mobile health interventions are not ready for interoperability or for integration into existing health information systems. Based on these findings, we recommend robust and inclusive study plans so that mobile health intervention studies conducted in the context of developing countries deliver well-evidenced reports.

Introduction

It is predicted that the total market size of mobile health, also known as mHealth, will be almost 60 billion dollars by 2020. 1 More than 100,000 mHealth applications (apps) are available in the Google Play and Apple App Stores, 2 either for free or at minimal cost to users. Mobile technologies (smartphones, smartwatches, etc.) are now integrated with a plethora of sensors and sophisticated functions. The increasing adoption of these smart devices in developing countries provides opportunities for mHealth applications to deliver versatile and useful healthcare services where clinical resources are in short supply. As the demand for quality healthcare increases, a global shortage of healthcare resources, including nurses and physicians, persists, particularly in underdeveloped countries. To meet this demand, healthcare providers are increasingly relying on technology such as mHealth platforms as a feasible substitute for in-person interactions. Because healthcare systems in developing countries are considered immature and are often dysfunctional, mHealth technology can become a disruptive intervention that improves the health of ordinary citizens and delivers quality care to hard-to-reach populations. In efforts to accelerate progress toward the sustainable development goals, especially in developing countries, the World Health Organization (WHO) 3 has identified public use of mHealth technology as an important step in providing cost-effective, convenient, and transparent access to healthcare.

mHealth technology was designed to improve the quality of care and to minimize costs to patients. To achieve these goals, mHealth technology supports several important applications, including education and awareness building, clinical and non-clinical decision support systems (DSSs), epidemic outbreak tracking, training of healthcare workers, remote monitoring, and many others. 4 Despite the availability of thousands of mHealth apps and the broad scope and promise of this technology for managing health, the adoption of mHealth in developing countries is relatively slow. To date, few studies have sought to understand this low adoption. Reviews of the existing literature suggest that several barriers contribute to it: inadequate health literacy; lack of effectiveness, safety, privacy, and awareness; and poor integration with the traditional healthcare system. 2 Given these known barriers to mHealth technology adoption, researchers have called for more research to understand this issue from a different perspective.

In the early days of mobile phone adoption, the domain of mHealth was mostly limited to conceptual and proof-of-concept stages. At the time, mass adoption, scalability, and effectiveness of the technology were not thoroughly considered by researchers and practitioners. Consequently, the lack of evidence of mHealth effectiveness led to the deferral of many mHealth projects. 5 According to the World Bank, there is very limited evidence that mHealth interventions lead to better public health outcomes. 6 Given this lack of evidence, researchers call for broader and more adaptive evaluation plans to identify evidence of positive outcomes, scalability, and sustainability for mHealth intervention projects. 7 The evaluation of evidence reporting for mHealth technology remains an unexplored area of research and has therefore become of great interest to Information Systems (IS) researchers in recent years. 8

The success of any new technology relies on its successful integration, diffusion, and continued use by intended users. Evaluating success or failure is an essential part of technology implementation 9 and has to be continuous, comprehensive, and systematic to establish what results have been achieved against the target. Thus, the development and use of standard criteria for evaluating mHealth interventions is necessary for evidence reporting from mHealth studies. Evidence reporting through the mHealth evaluation process helps in mHealth policy formation as well as in mHealth intervention selection, promotion, and program expansion. 10 Evidence of mHealth efficiency and impact is generally reported in journal articles, white papers, reports, exhibits, and news articles. However, these publications are often biased, incomplete, and not comparable with one another; lack study quality; and show discrepancies in research findings. Many of these problems are due to the absence of standard and rigorous evaluation methodologies for reporting evidence that mHealth interventions improve healthcare services. For these reasons, in 2016, the WHO collaborated with mHealth researchers to formulate an evaluation guideline, known as the mHealth Evidence Reporting and Assessment (mERA) checklist, for evidence reporting on mHealth interventions. 5

The mERA checklist enables researchers to follow a reliable, high-quality methodology for scrutinizing, reporting, and evaluating evidence of mHealth interventions. Researchers in the field consider such methodology-specific evaluation guidance for evidence reporting necessary for the effective integration of mHealth technology. Hence, many mHealth studies have recommended rigorous methodologies and proper guidelines for mHealth intervention evaluation and evidence reporting. 5

Objective

Informed and motivated by the significance of evidence reporting, this study aims to review the quality of evidence reporting in mHealth intervention studies in developing countries. We used the mERA checklist as the tool for evaluating the quality of evidence reporting in the selected studies. To the best of our knowledge, no study to date has focused on this topic in the context of developing countries.

There are different types of evaluation guidance for reporting evidence of technology-based healthcare interventions. Examples include the Grading of Recommendations Assessment, Development, and Evaluation (GRADE), 11 the Consolidated Standards of Reporting Trials (CONSORT), 12 the Collaborative Adaptive Interactive Technology (CAIT) framework, 13 the Mobile Application Rating Scale (MARS), 14 the Trial of Intervention Principles (TIP) framework, 15 the mHealth Assessment and Planning for Scale (MAPS) Toolkit, 16 and the WHO’s mHealth Evidence Reporting and Assessment (mERA) checklist. 10 In this study, we used mERA because it is the most recent and concise of these and is specific to mHealth interventions. In addition, researchers use mERA because it improves the completeness of reporting of mHealth interventions.10,17,18

Methodology

We conducted this study in four steps: database search, relevance appraisal, data extraction, and evaluation of evidence reporting. As this is a systematic review of existing literature, all steps are qualitative.

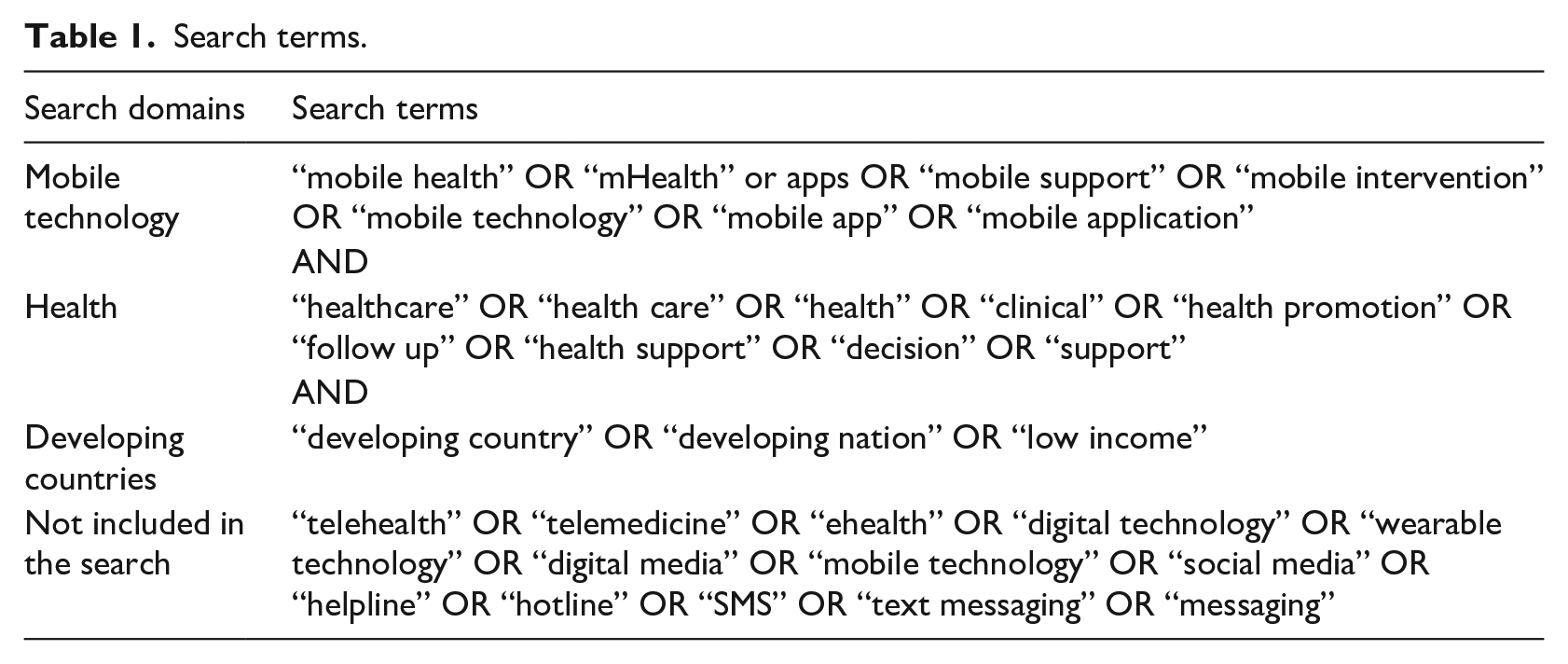

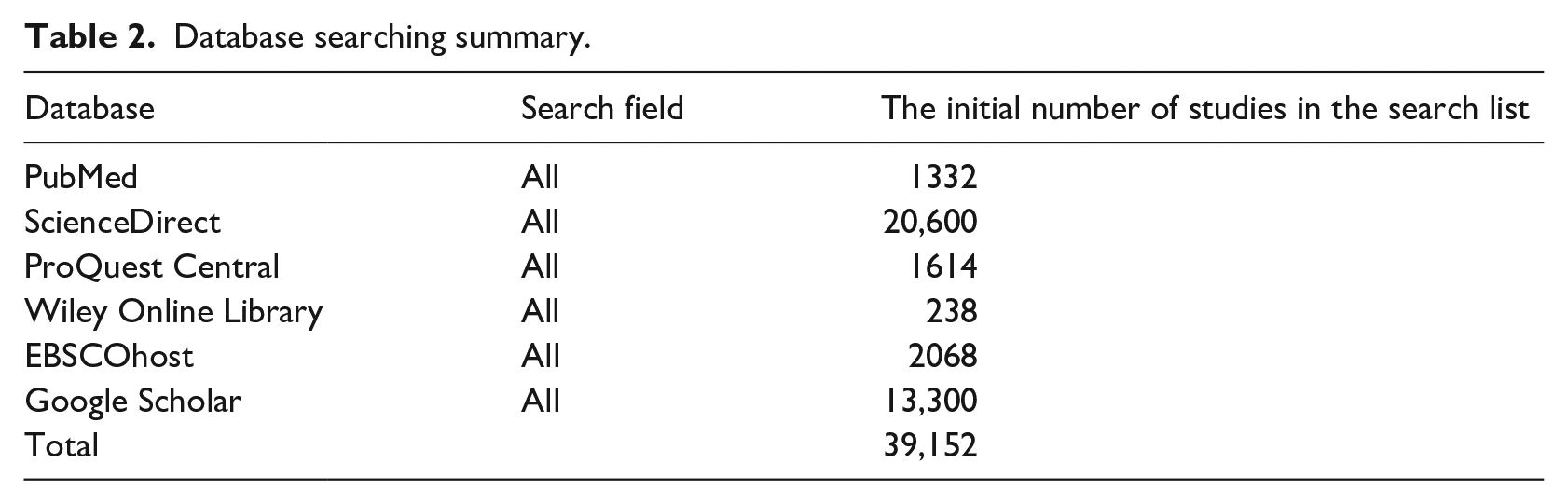

Database search

In this step, we systematically searched six prominent online journal databases for relevant mHealth studies set in the context of developing countries. The selected studies were published within the 5-year timeframe from 2013 to 2018. We followed the search strategies of systematic reviews and ran the searches on the selected databases to retrieve related studies. 19 To do so, we first identified three search domains related to mHealth research: “mobile technology,” “health,” and “developing countries” (Table 1). We then specified multiple combinations of search terms belonging to these domains, also shown in Table 1. These terms were used to perform a thorough search of the six online journal databases, which are listed in Table 2. For an article to be included, at least one search term from each of the three search domains was required. In addition to the database search, we performed a manual search based on the references cited in various studies. The initial search query returned a total of 39,152 articles.
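The inclusion rule described above (at least one term from each of the three search domains) can be sketched in a few lines of Python. This is only an illustration: the search terms listed here are hypothetical placeholders rather than the terms from Table 1, and the function names `build_query` and `matches` are our own, not part of any database interface.

```python
# Hypothetical search terms: placeholders for illustration only,
# not the actual terms listed in the study's Table 1.
SEARCH_DOMAINS = {
    "mobile technology": ["mHealth", "mobile health", "smartphone"],
    "health": ["healthcare", "health intervention"],
    "developing countries": ["developing country", "low-income country"],
}


def build_query(domains):
    """OR the terms within each domain, then AND the domains together."""
    groups = []
    for terms in domains.values():
        quoted = " OR ".join(f'"{term}"' for term in terms)
        groups.append(f"({quoted})")
    return " AND ".join(groups)


def matches(text, domains):
    """Return True when the text contains at least one term from every domain."""
    lowered = text.lower()
    return all(
        any(term.lower() in lowered for term in terms)
        for terms in domains.values()
    )


if __name__ == "__main__":
    print(build_query(SEARCH_DOMAINS))
    print(matches("An mHealth healthcare pilot in a developing country", SEARCH_DOMAINS))
```

Grouping terms with OR inside a domain and AND across domains mirrors the requirement that every retrieved article touch all three domains; the exact query syntax differs between databases and would need adapting.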

Table 1. Search terms.

Table 2. Database searching summary.

Relevance appraisal

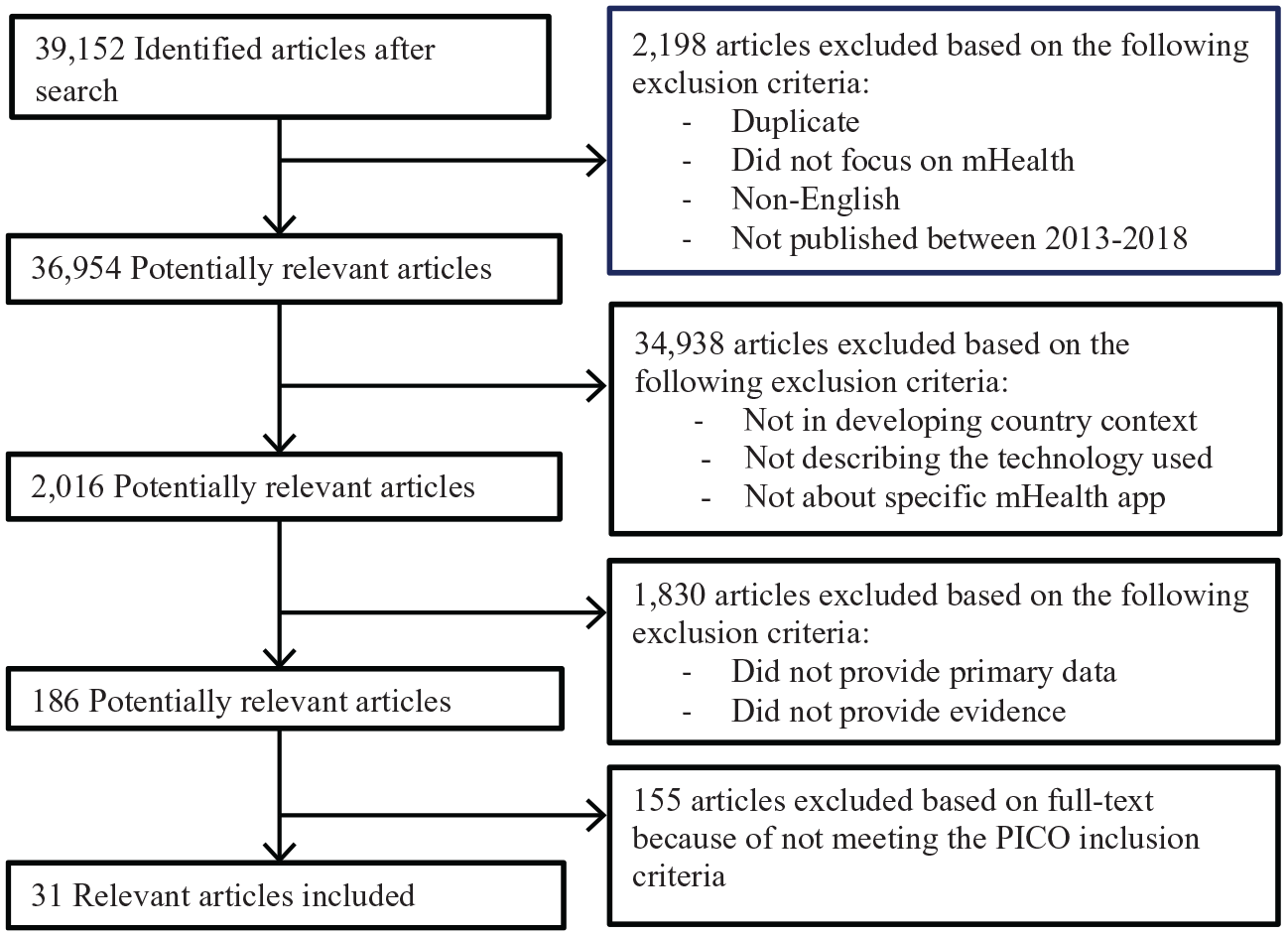

For relevance appraisal, the authors followed the PRISMA (“Preferred Reporting Items for Systematic Reviews and Meta-Analyses”) 2009 flow diagram, as recommended by L’Engle et al. 20 Figure 1 shows the results of following this flow diagram. PRISMA helped two authors select the most relevant studies based on various exclusion and inclusion criteria, details of which are also provided in Figure 1. For exclusion, the authors initially screened the title and abstract of every study against the exclusion criteria. For inclusion, the full texts of the studies were screened carefully against the inclusion criteria. The inclusion criteria were developed using the PICO framework, which is used in evidence-based medicine to frame and answer healthcare-related questions. PICO stands for Problem, Intervention, Comparison, and Outcome, 21 and uses questions and answers to facilitate a literature search. Under the PICO framework, both authors had to agree on the exclusion or inclusion of any study found in the initial database search. The numbers of studies identified, excluded, and included through the PRISMA flow diagram and PICO framework are shown in detail in Figure 1.

Figure 1. Results of PRISMA flow diagram.

Data extraction

Once irrelevant studies were excluded, we focused on the much smaller set of studies considered relevant for this research. In this step, our goal was to extract data from these studies. During the data extraction process, one reviewer extracted and coded the data, while a second reviewer rechecked the accuracy of the first reviewer’s extraction. Data extraction and coding underpin the data synthesis and involved recording the name of each mHealth intervention, the targeted population, context/country, study objective, methods, and results. Furthermore, the selected articles were categorized according to their characteristics.

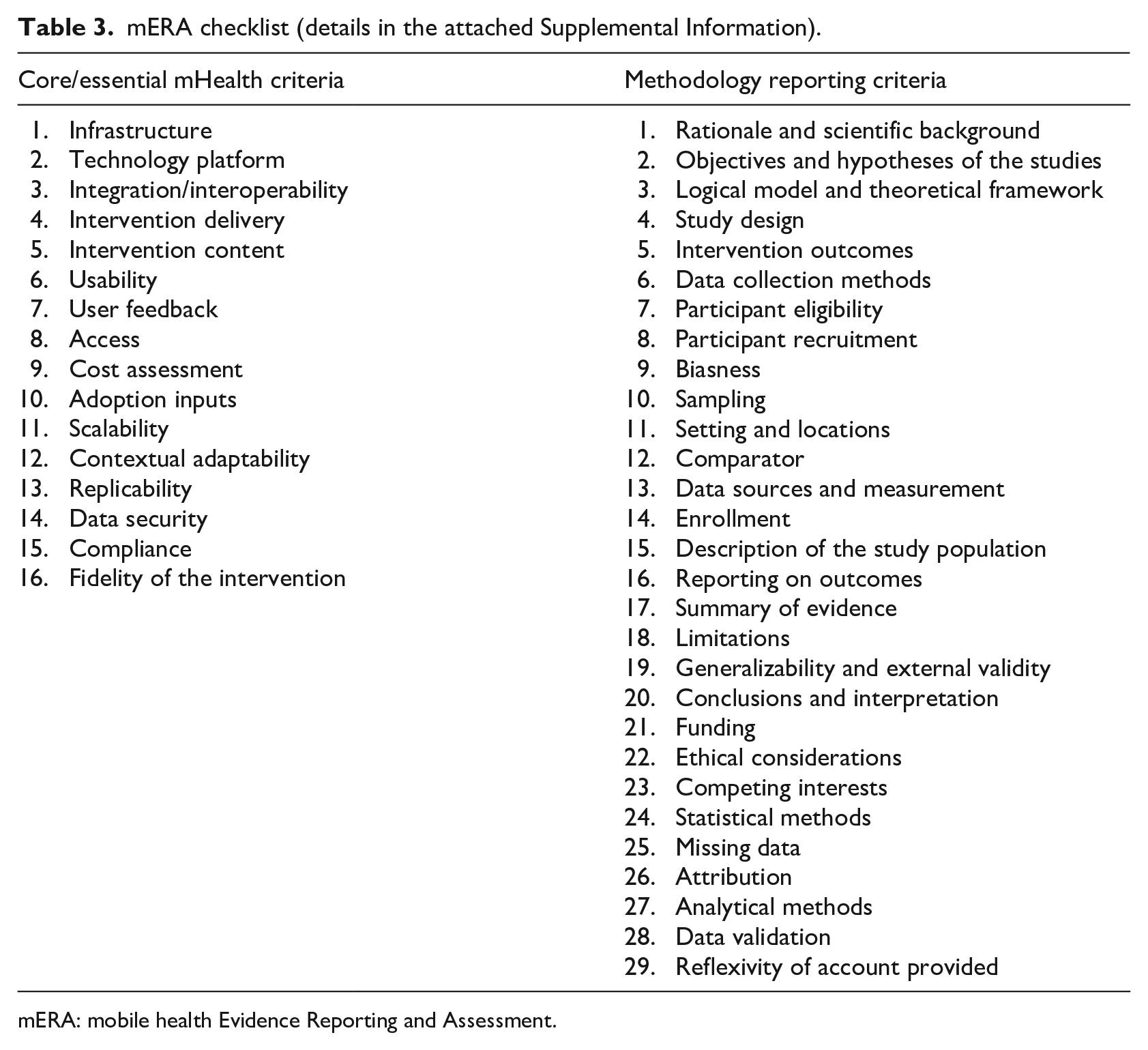

Evaluation of evidence reporting

For evaluating the quality of evidence reporting of each study, we used the mERA checklist following the guidelines by Iribarren et al. 8 In this step, we graded the evidence related to mHealth intervention in each study based on the criteria specified in the mERA checklist. 10 The mERA checklist has two parts, where the first part is known as the core checklist that has 16 essential criteria to evaluate the completeness of reporting. The second part of the mERA checklist is a checklist with 29 methodology criteria to evaluate the evidence reporting and to synthesize study findings (Supplemental File). While the core checklist focuses on the content, context, and technical aspect of the studies, the second part, on the other hand, focuses on the study designs and methodologies.10,20 Table 3 shows two parts of the mERA checklist as discussed.

Table 3. mERA checklist (details in the attached Supplemental Information).

mERA: mobile health Evidence Reporting and Assessment.

Before applying the grading technique, close attention was paid to the practical application of the mERA checklist and its reliability. Inter-rater reliability, which measures the degree of agreement between raters, matters for qualitative studies in which more than one rater or grader grades the data. As our grading procedure involved multiple graders, it was necessary to test their inter-rater reliability. To do so, we performed a pre-evaluation on three randomly selected studies, following the recommendation put forward by Agarwal et al. 10 In the grading process, the graders assigned a value of either 1 or 0 to each evaluation criterion for each study, where 1 means that the specific mERA criterion is met and 0 means that it is not met. To assess inter-rater reliability, we used Cohen’s kappa coefficient (K). 22 It is important to note that, when grading healthcare studies, a K value as low as 0.41 can be acceptable. 23 The pre-evaluation results show that the inter-rater reliabilities were 0.87, 0.87, and 0.92 for the three randomly selected studies, which are much higher than the recommended acceptable threshold. These results confirm the reliability of the mERA evaluation technique that we followed for the actual evaluation. In the next section, we discuss the results of the main evaluation of the selected studies.
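For readers unfamiliar with the statistic, Cohen’s kappa for two graders is K = (p_o − p_e) / (1 − p_e), where p_o is the observed proportion of agreement and p_e is the agreement expected by chance. The following minimal Python sketch computes it for binary (1/0) grades. The grade vectors are invented for illustration and are not the grades assigned in this study; the function name `cohens_kappa` is our own.

```python
def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who each assign 0 or 1 to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must grade the same non-empty set of items.")
    n = len(rater_a)

    # Observed agreement: share of items on which the raters give the same grade.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: both say 1 by chance, plus both say 0 by chance.
    p_a1 = sum(rater_a) / n
    p_b1 = sum(rater_b) / n
    expected = p_a1 * p_b1 + (1 - p_a1) * (1 - p_b1)

    if expected == 1.0:
        # Both raters used one identical grade throughout; kappa is undefined.
        raise ValueError("Kappa is undefined when chance agreement equals 1.")
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Invented grades for ten criteria (1 = met, 0 = not met).
    grader_1 = [1, 1, 1, 0, 0, 1, 0, 1, 1, 0]
    grader_2 = [1, 1, 0, 0, 0, 1, 0, 1, 1, 1]
    print(round(cohens_kappa(grader_1, grader_2), 2))
```

In this invented example the graders agree on 8 of 10 criteria (p_o = 0.80) and chance agreement is p_e = 0.52, so K is about 0.58, which would clear the 0.41 threshold mentioned above but fall well short of the values reported for the pre-evaluation.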

Results

Categories of studies

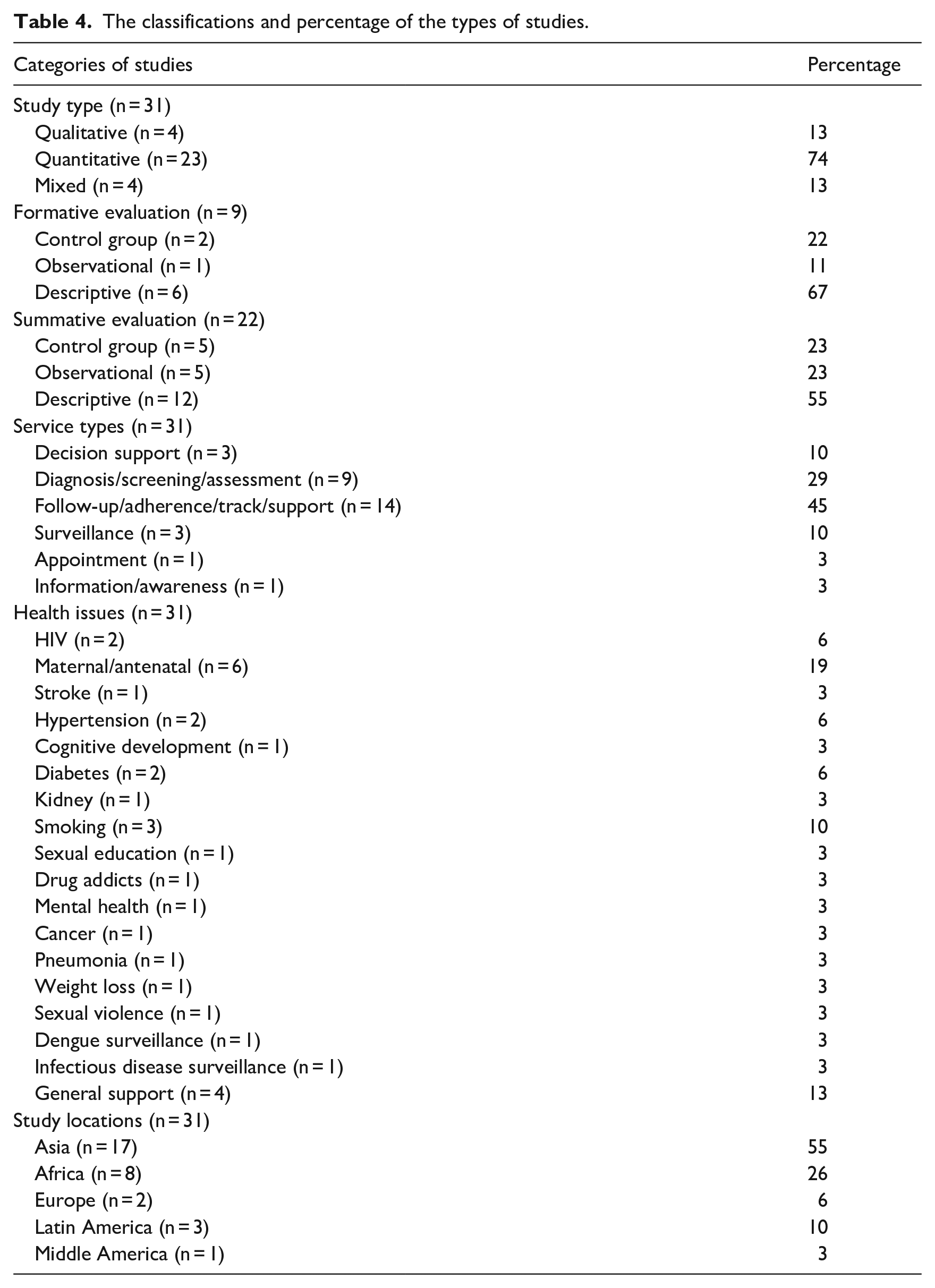

The full-text screening and PICO evaluation during the PRISMA process returned a total of 31 studies for final evaluation consideration. We now classify these studies based on several categories—study types (qualitative study, quantitative study, and mixed study), formative versus summative, service types, health issues, and study location. These classifications were made by carefully reading the 31 selected articles’ abstracts, objectives, and methodologies. Table 4 shows the classifications of the selected studies, their respective frequency (n), and distribution percentages for each of the categories.

Table 4. The classifications and percentage of the types of studies.

For the first classification category, 13 percent of the studies are classified as qualitative, 74 percent as quantitative, and 13 percent as mixed studies. Based on the methodologies used for evidence reporting, the selected studies are then categorized as formative or summative. The formative evidence reporting methodology involves the evaluation of evidence during the application design and prototype testing phases of an mHealth intervention, whereas the summative evidence reporting methodology involves the evaluation of evidence during the launch and pilot phases. 24 A total of nine studies (29%) are categorized as formative, while 22 studies (71%) fit the criteria of summative studies. In addition, 11 percent of the formative and 23 percent of the summative evaluations are observational studies, whereas 67 percent of the formative and 55 percent of the summative evaluations are descriptive. These classifications help identify the phases of mHealth intervention in which evidence was most often reported.

When categorized by service type, most of the studies belong to the follow-up (45%) and disease diagnosis (29%) categories. Service types such as decision support and surveillance account for about 10 percent. In addition, of the 31 studies, maternal/antenatal care and smoking cessation support were the most common focus areas (19% and 10%, respectively). Finally, of the five different locations, Asian and African countries are the most studied contexts, with 55 and 26 percent, respectively.

Study summaries and synthesis

To synthesize the data, we extracted specific information summarizing all 31 studies by reading their study objectives, methodologies, and results. Supplemental Appendix 1 shows the targeted population, study context, study objectives, methodology, and results of each study. The findings show considerable demand for tailored services in the area of DSSs, such as smoking cessation programs and hypertension management, where mHealth proves useful. Moreover, the feasibility test of mHealth programs for people with depressive disorders shows that mHealth is useful in saving time and expense. Ginsburg et al. 25 studied the usability and acceptability of an mHealth program for pneumonia and reported a positive outcome of the mHealth intervention. Similarly, for chronic kidney disease, mHealth programs were found to be satisfactory to users. mHealth is also widely used in the dissemination of information for sexual education. 26

When summarizing the types of services provided through mHealth interventions, we also found that studies of antenatal services mostly focused on behavioral change in seeking antenatal care and on users’ satisfaction with those services as evidence of usefulness. Another study, by Benski et al., 27 focused on how to provide antenatal care services according to WHO guidelines using an mHealth intervention. Gunawardena et al. 28 focused on diabetes and pre-diabetes management using an mHealth intervention called Smart Glucose Manager, whereas Islam et al. 29 studied the impact of mHealth on improving the lifestyle of diabetic patients. Similarly, two other studies30,31 focused on HIV prevention and management, which is a significant area of mHealth study. mHealth programs reported in these studies also focused on the provision of healthcare to elderly people, 32 hazard reporting at work sites, 33 sexual violence reporting, and setting up appointments. Evidence of effective use of mHealth is also reported for weight loss and diet control programs.

mHealth is also used effectively for ecological momentary assessment (EMA) of tobacco and drug use, although EMA was not found to be effective as a self-report survey tool in China. Francis-Lyon et al. 34 found that mHealth is a useful intervention for conducting child cognitive assessment in a poverty-stricken context such as Kenya. García et al. 35 conducted a quasi-experiment on the early detection of stroke symptoms by developing a prototype app, which was reported to be very useful. mHealth is also being used for surveillance of the frequency of particular diseases related to socio-cultural and economic contexts, as well as of infectious diseases such as dengue.

Regarding the methodologies used for evidence reporting, the findings show that the randomized controlled trial (RCT) was highly preferred for identifying the impact of mHealth on HIV awareness and management, as in the studies by Guo et al. 31 and Bull et al. 30 The RCT was also applied for evidence reporting on managing hypertension, 36 drug addiction, 37 and diabetes 28 through mHealth interventions. We also noticed the effective application of theories in designing mHealth services. For example, Ilozumba et al. 38 used the Theory of Change to determine contextual factors that affect the outcomes of an mHealth intervention for pregnant women. Similarly, Bernardes-Souza et al. 39 used the theory of planned behavior to examine the feasibility and users’ perceptions of facial-aging apps that can discourage secondary school students from smoking.

mERA checklist findings and scores

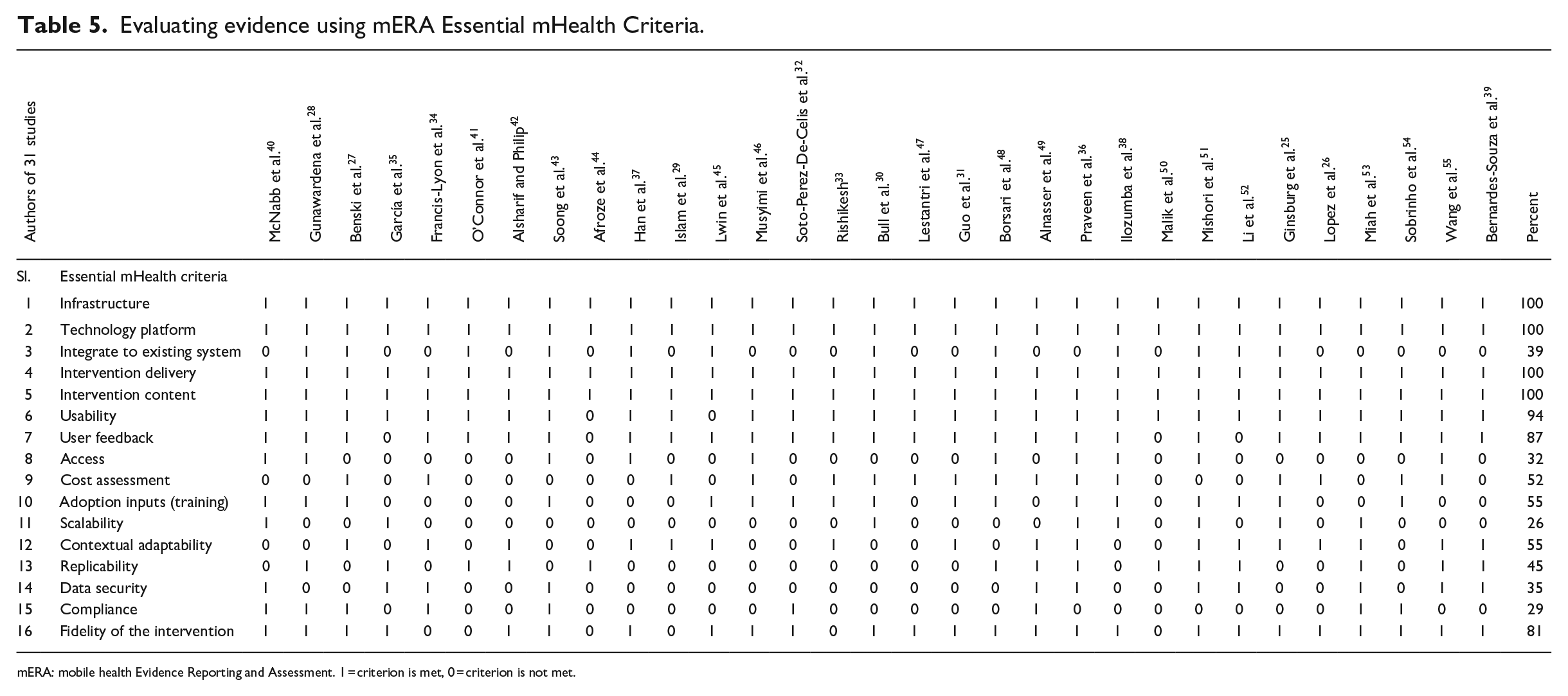

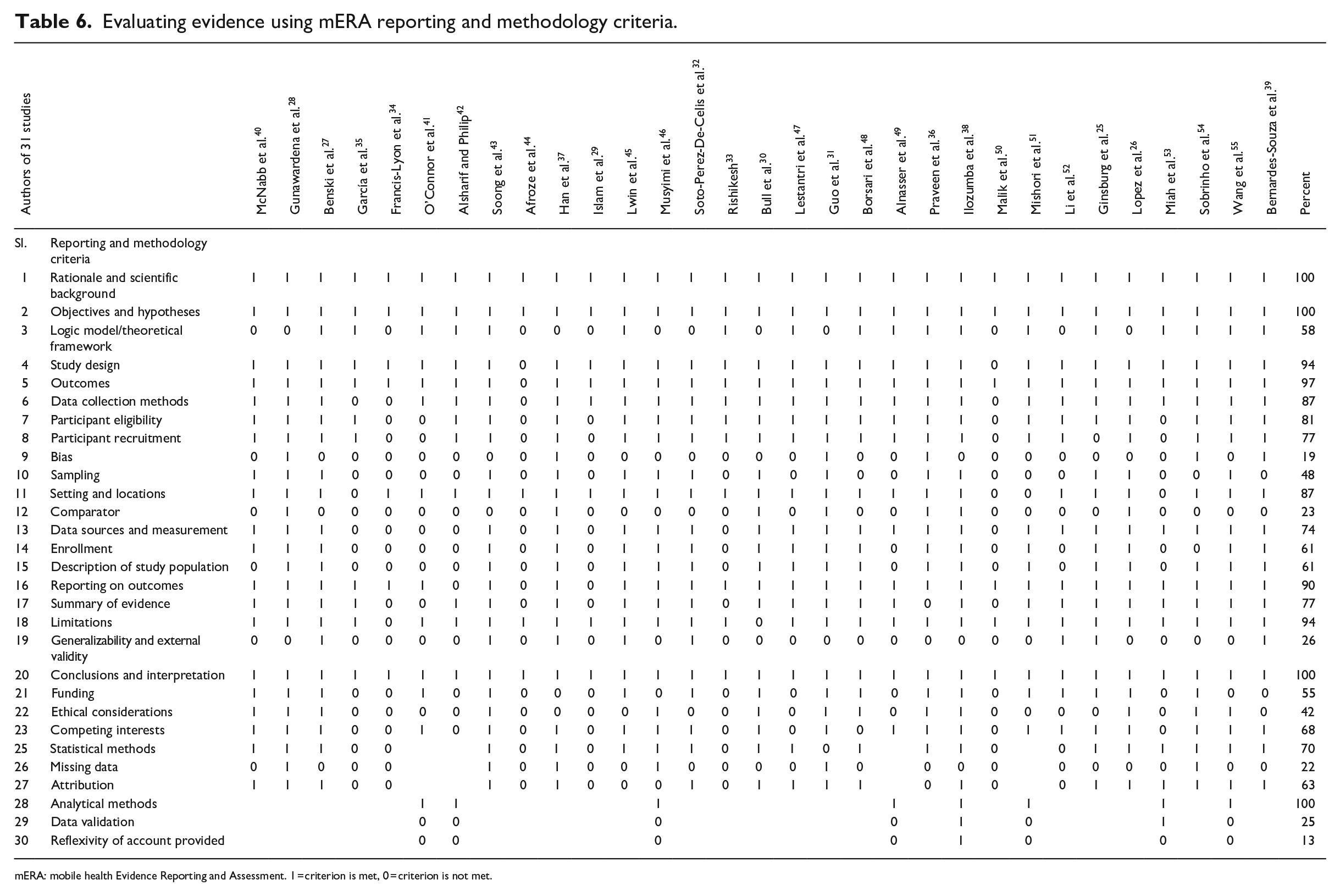

The mERA checklist was developed to systematically identify inadequacy, bias, and usefulness in evidence reporting in mHealth studies. The checklist evaluates the rigor and completeness of reporting and was developed specifically with a focus on low- to middle-income country contexts. Its criteria can be applied to a wide range of study designs and can be used to aggregate and synthesize studies based on relevant content. The checklist contains a total of 45 mERA evaluation criteria, of which 16 are essential mHealth criteria and 29 are reporting and methodology criteria, as shown in Table 3. As described in the methodology section, we graded every mERA evaluation criterion with a value of either 1 or 0. The grading, along with the relevant findings derived from the 31 studies, is presented in Tables 5 and 6. In each table, a value of 1 means that the specific evaluation criterion is met in the corresponding study, and 0 means that it is not met. Findings for the essential mHealth criteria and the methodology criteria are discussed in the next sections.
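The 1/0 grading described above can be turned into a per-study score with a short Python sketch. The grade vectors below are invented, not taken from Tables 5 and 6. Marking a criterion as `None` when it does not apply to a study design (for example, a qualitative-only item in a purely quantitative study) and leaving it out of the denominator is our assumption for illustration, as is the function name `mera_score`.

```python
ESSENTIAL_COUNT = 16     # essential mHealth criteria
METHODOLOGY_COUNT = 29   # reporting and methodology criteria


def mera_score(essential, methodology):
    """Sum 1/0 grades and report the share of applicable criteria met.

    Each grade is 1 (met), 0 (not met), or None (treated here as not
    applicable and left out of the denominator; an assumption).
    """
    if len(essential) != ESSENTIAL_COUNT or len(methodology) != METHODOLOGY_COUNT:
        raise ValueError("Expected 16 essential and 29 methodology grades.")

    grades = list(essential) + list(methodology)
    applicable = [g for g in grades if g is not None]
    if not applicable:
        raise ValueError("At least one criterion must be applicable.")

    met = sum(applicable)
    return {
        "score": met,
        "applicable": len(applicable),
        "percent": 100 * met / len(applicable),
    }


if __name__ == "__main__":
    # Invented grades for one hypothetical quantitative study.
    essential = [1] * 12 + [0] * 4
    methodology = [1] * 20 + [0] * 6 + [None] * 3
    result = mera_score(essential, methodology)
    print(result["score"], result["applicable"], round(result["percent"], 1))
```

For this hypothetical study, 32 of 42 applicable criteria are met, or roughly 76 percent.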

Table 5. Evaluating evidence using mERA essential mHealth criteria.

mERA: mobile health Evidence Reporting and Assessment. 1 = criterion is met, 0 = criterion is not met.

Table 6. Evaluating evidence using mERA reporting and methodology criteria.

mERA: mobile health Evidence Reporting and Assessment. 1 = criterion is met, 0 = criterion is not met.

Findings on the essential mHealth criteria

Table 5 shows the findings for the 16 essential mHealth criteria of the mERA checklist. All 31 studies included infrastructure-related discussion, which is the first item in the checklist. This criterion provides information about the minimum infrastructure required for each mHealth intervention and thus facilitates understanding of the viability, generalizability, and replicability of the interventions. The second item, technology platform, was likewise covered by all 31 studies; without it, the constraints that might inhibit the implementation and replicability of an mHealth program cannot be identified. Item 3, integration/interoperability, however, was covered by only 12 of 31 studies (39%). This criterion is used to discuss how the proposed or tested mHealth program fits and integrates with the relevant health information systems (HISs). The next two items, intervention delivery and intervention content, were again covered by all studies under consideration. The fourth item, intervention delivery, describes the channels and mode of service delivery, whereas the fifth item, intervention content, covers the characteristics, functionalities, and customizations of the content delivered, including whether that content followed relevant guidelines. The analysis shows that 29 studies (94%) covered the usability criterion, 87 percent of the studies considered users’ feedback, and only 32 percent identified any barrier or facilitator to accessing mHealth technology. These observations are significant: although the majority of the studies discussed usability as an important factor and considered users’ feedback regarding mHealth technology, very few went a step further to identify the facilitators of or barriers to technology access.

The results of our analysis show that 52 and 55 percent of the studies, respectively, considered the cost aspect and the pre-adoption requirements (e.g. training) of mHealth adoption. While only 26 percent of the studies considered the scalability of mHealth interventions, 55 percent described the contextual adaptability criterion. A total of 14 of the 31 studies (45%) provided instructions or a flowchart, or shared code or algorithms, for replicability. Another interesting finding is that only 35 percent of the studies discussed the data security of the mHealth application and only 29 percent discussed any compliance requirements for it. These findings suggest that, although security and compliance are important aspects of technology adoption and use, they did not get adequate attention from mHealth researchers. Finally, among the essential criteria, 25 studies (81%) discussed fidelity (item 16) when describing the outcomes of the mHealth intervention.

Findings on the methodology criteria

In this section, we discuss our findings for the 29 reporting and methodological criteria shown in Table 6. The analysis shows that all the studies covered items 1 and 2, which is expected because both items (objectives and scientific background) are essential parts of scientific studies. However, item 3 is covered by 58 percent of the studies, as not all studies are theoretically grounded. We consider this coverage still very good, as more than half of the studies used a logic model to show how the mHealth intervention can influence users’ behavior in seeking healthcare support. The next three items in the checklist are closely related, and the findings are similar as well. The item for study design is covered by 94 percent of the studies, the item for study outcome measures against the study objectives by 97 percent, and the item for the data collection method by 87 percent. The next two items focus on study participants: 80 percent of the studies described the eligibility criteria of the study respondents, and 77 percent mentioned how the study participants were recruited. Study bias (item 9), where the authors discuss and address the influence of the research on the results, was covered by only 19 percent of the studies. Although fewer than half of the studies (15 studies, 48%) mentioned what sampling techniques were used, most of the studies (27 of 31) described the study areas and settings. Also, only seven studies (23%) mentioned testing comparison groups.

It was found that 74 percent of the studies (23 studies) mentioned the data sources (item 13; e.g. survey) and measurement (e.g. Likert-type scale). Moreover, only 19 studies (61%) mentioned how the participants of studies were screened and enrolled based on eligibility (item 14). Also, regarding item 15 (description of the study population), it is observed that only 19 studies (61%) describe the study population. In addition, most of the studies (90%) presented the study findings or outcomes (item 16) with enough details. Only 24 studies or 77 percent of the studies summarized evidence (item 17) found in the respective studies, and 94 percent of the studies addressed the research limitation (item 18). It is interesting to find only 26 percent of the studies discussed generalizability and external validity, which are very important aspects of empirical research. For describing the conclusions and interpretation, we found all the studies (100%) complied with this item and finished their study with adequate research synthesis and conclusion. About 55 percent of the studies mentioned funding sources (if funded) and 13 of the 31 studies (42%) mentioned ethical considerations which are a very important requirement regarding health-related research. In addition, 68 percent of the studies (21 studies) discussed competing interests, which is item 23 on the checklist.

Of the 31 studies, 4 were qualitative and 4 were mixed-method, which leaves 27 studies with a quantitative component and 8 with a qualitative component. While evaluating evidence reporting, we found that 19 of the 27 studies (70%) mentioned what types of statistical methods were applied and only 6 studies (22%) addressed the issue of missing data. Similarly, only 17 studies (63%) interpreted the attributions from the evidence found (item 26). On the qualitative side, all eight studies (100%) mentioned the analytical method(s) used in the qualitative part, and two of the eight studies (25%) applied data validation techniques (e.g. triangulation). Finally, one of the eight studies (13%) addressed the issue of bias that could arise from different factors, such as the relationship between researchers’ roles and respondents, or the words and phrases used in the questionnaires. Table 6 provides a summary of evidence for the mERA checklist.
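The percentages in this paragraph use different denominators depending on which studies a criterion applies to, which the short Python sketch below makes explicit. The counts are those stated in the text; the count of 23 purely quantitative studies is derived as 31 minus the 4 qualitative and 4 mixed-method studies. Rounding halves up is our assumption about how the reported whole-number percentages were obtained, and the helper name `percent` is our own.

```python
from decimal import Decimal, ROUND_HALF_UP

QUALITATIVE, MIXED, TOTAL = 4, 4, 31
QUANTITATIVE = TOTAL - QUALITATIVE - MIXED      # 23, derived

quantitative_part = QUANTITATIVE + MIXED        # studies with a quantitative component
qualitative_part = QUALITATIVE + MIXED          # studies with a qualitative component


def percent(met, applicable):
    """Whole-number percentage, rounding halves up (an assumed convention)."""
    if applicable <= 0:
        raise ValueError("The denominator must be positive.")
    value = Decimal(met) * 100 / Decimal(applicable)
    return int(value.quantize(Decimal("1"), rounding=ROUND_HALF_UP))


# (studies meeting the criterion, studies the criterion applies to)
reported = {
    "statistical methods": (19, quantitative_part),
    "missing data": (6, quantitative_part),
    "attribution of evidence": (17, quantitative_part),
    "qualitative analytical methods": (8, qualitative_part),
    "data validation": (2, qualitative_part),
    "qualitative bias": (1, qualitative_part),
}

if __name__ == "__main__":
    for criterion, (met, applicable) in reported.items():
        print(f"{criterion}: {met}/{applicable} = {percent(met, applicable)}%")
```

Note that 1 of 8 is exactly 12.5 percent, so the reported 13 percent depends on rounding halves up; Python’s built-in `round` would give 12 because it rounds halves to the nearest even number.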

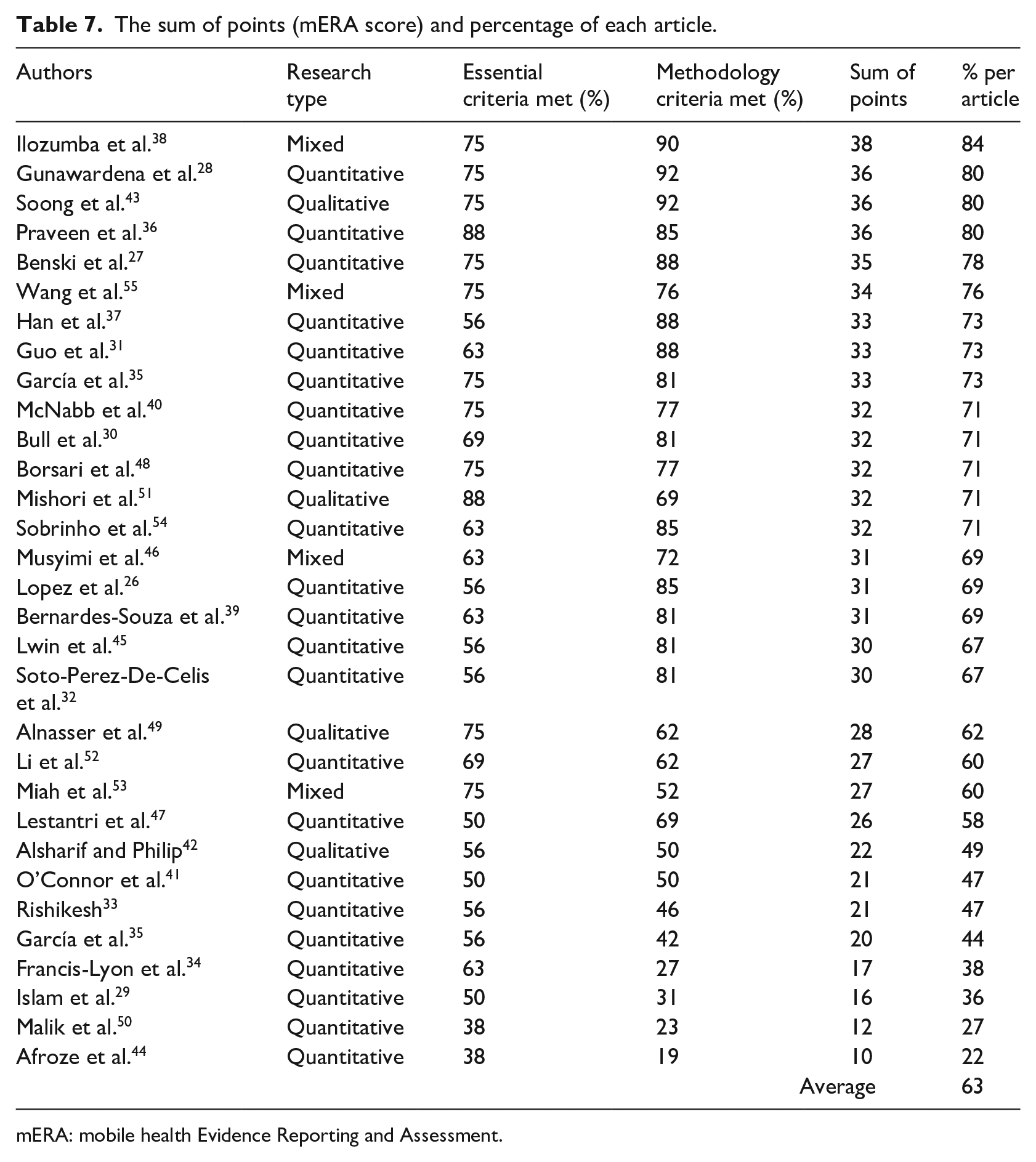

Discussion: summary of evidence reporting

Following the findings above, which were extracted and synthesized from the 31 studies, Table 7 presents the mERA score and the percentage of all mERA checklist criteria met by each study. Despite the robustness and diversity of the research designs and methods applied in these studies, a lack of comprehensiveness and completeness in evidence reporting is common. Our analyses reveal that, on average, the studies met 63 percent of the mERA criteria. This implies that a large share of the selected studies still do not report evidence of mHealth interventions as recommended by the WHO. This finding of low-quality evidence reporting is consistent with the findings reported by Marcolino et al. 56 A similar finding by Wu et al. 57 also confirms that evidence reporting in mHealth intervention studies remains very limited, especially in resource-limited settings.

Table 7. The sum of points (mERA score) and percentage of each article.

mERA: mobile health Evidence Reporting and Assessment.

The highest and lowest percentages of mERA criteria met by the studies were 84 and 22 percent, respectively. The studies that used RCTs met high percentages of the methodology criteria. For example, the percentages of methodology criteria met by the studies by Gunawardena et al., 28 Han et al., 37 Bull et al., 30 Guo et al., 31 and Praveen et al. 36 were 92, 88, 81, 88, and 85 percent, respectively. However, the percentages of essential criteria met by these studies were 75, 56, 69, 63, and 88 percent, respectively. Therefore, it can be inferred that the percentages for the essential criteria and the methodology criteria are not commensurate with each other. The higher percentage of reporting on the methodology criteria than on the essential criteria indicates high methodological quality in intervention impact assessment but a low level of comprehensiveness regarding the essential functionalities and requirements of mHealth interventions.

The higher percentage of reporting on the methodology criteria can also imply that, although comprehensive methodologies were applied to report evidence of mHealth interventions, the chance of widespread adoption of these interventions remains very low because the essential criteria are less often met. For instance, Table 5 shows low percentages for accessibility (32%), replicability (45%), scalability (26%), data security (privacy; 35%), compliance (29%), and integration and interoperability with existing systems (39%), all of which contribute to a low mHealth adoption rate. Issues such as low accessibility and scalability were also reported in the study by Bockting et al. 58 In addition, low evidence reporting on cost consideration (52%) and adoption input (promotion and training required; 55%) indicates significant barriers in low-resource settings.

From the perspective of methodology criteria, the low percentages of criteria met for missing data (22%) in quantitative studies and data validation (25%) in qualitative studies may indicate a high risk of bias in the evidence reporting for mHealth interventions. The low percentages for bias (19%) and comparator reporting (23%) likewise indicate poor quality of evidence reporting on mHealth programs. Furthermore, more than 50 percent of the studies did not use any logic model or theoretical framework, even though such models and frameworks can lead to higher adoption of mHealth interventions vis-à-vis contextual requirements and demands.

Conclusion

The mERA checklist gives researchers a valuable tool for evaluating studies of a wide range and quality on mHealth interventions in the low-resource developing world context, where the scope for positive impact of mHealth projects is very high. First released in 2016, the mERA checklist remains the most useful tool for comprehensive and transparent evidence reporting on mHealth interventions. The mERA checklist does not evaluate the quality of an mHealth study itself but the evidence reported in that study. To our knowledge, this is the first study focusing on the quality of evidence reporting in studies of mHealth interventions in the context of the developing world.

As noted in the discussion above, evidence reporting in mHealth research from low-resource developing countries falls significantly short of meeting the essential criteria, which indicates that mHealth interventions in developing countries still have a long way to go before they deliver optimal benefits. In addition, most of the selected mHealth studies did not follow any design science–based method or theory-based framework for developing mHealth interventions. Thus, robust and more inclusive development plans and studies are required to deliver highly evidence-based recommendations for the improvement and widespread adoption of mHealth interventions in developing countries.

The high proportion of studies from developing countries without peer review is concerning, as these studies mostly fail, often severely, to meet the evidence reporting criteria. The low percentages of essential criteria met in developing countries are not surprising, as the mERA checklist is still not very familiar to mHealth researchers there. Hence, we acknowledge the need for wider dissemination and increased application of the mERA checklist to eventually improve the usability and adaptability of mHealth technologies. Extensive application of mERA can also indirectly increase the quality of mHealth evidence reporting. Finally, the findings reveal that interoperability and integration with existing health information systems (HIS) are significantly low in the developing world. To increase and ensure interoperability, a coordinated effort by industry stakeholders, health policymakers, funding institutes, and mHealth developers is a prerequisite.

A possible limitation of this study is that altering the search strategy applied for the developing country context could have allowed more peer-reviewed studies to be included. It is also important to acknowledge the possibility that some eligible studies were unintentionally excluded. Another limitation is that this study searched for and used only articles in the context of developing countries; hence, it offers no scope for comparing studies from developing countries with those from developed countries. We believe that this is a major direction for future studies. Furthermore, the findings related to some mERA criteria cannot be generalized because of the wide range of socio-economic and contextual differences that exist in the developing world. Thus, studies based on more specific and homogeneous contexts are needed to obtain consistent findings on the quality of evidence reporting in mHealth intervention studies.

Supplemental Material

Appendix – Supplemental material for Mobile health interventions in developing countries: A systematic review

Supplemental material, Appendix for Mobile health interventions in developing countries: A systematic review by Md Rakibul Hoque, Mohammed Sajedur Rahman, Nymatul Jannat Nipa and Md Rashadul Hasan in Health Informatics Journal

Supplemental Material

Supplementary_File – Supplemental material for Mobile health interventions in developing countries: A systematic review

Supplemental material, Supplementary_File for Mobile health interventions in developing countries: A systematic review by Md Rakibul Hoque, Mohammed Sajedur Rahman, Nymatul Jannat Nipa and Md Rashadul Hasan in Health Informatics Journal

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
