Abstract

Pilot implementation is a method for avoiding unintended consequences of healthcare information systems. This study investigates how learning from pilot implementations is situated, messy, and therefore difficult. We analyze two pilot implementations by means of observation and interviews. In the first pilot implementation, the involved porters saw their improved overview of pending patient transports as an opportunity for more self-organization, but this opportunity hinged on the unclear prospects of extending the system with functionality for the porters to reply to transport requests. In the second pilot implementation, the involved paramedics had to print the data they had entered into the system because it had not yet been integrated with the electronic patient record. This extra work prolonged every dispatch and influenced the paramedics’ experience of the entire system. We discuss how pilot implementations, in spite of their realism, leave room for uncertainty about the implications of the new system.

Introduction

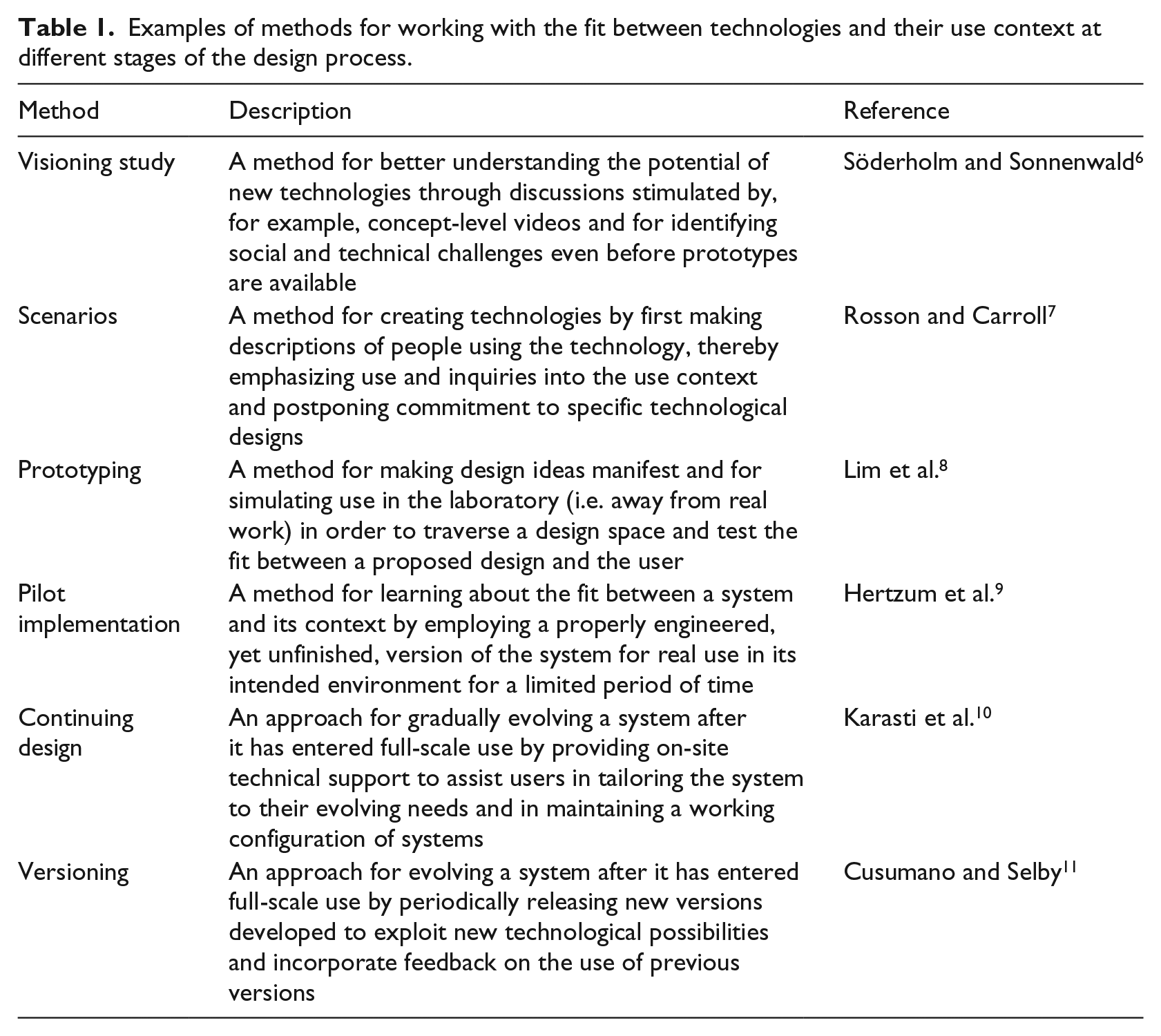

Healthcare information systems are inherently sociotechnical and their success is determined by the mutual adaptation of technology and organization.1–3 Many unintended consequences of healthcare information systems, including under-use and workarounds, flow from discrepancies between the technology and the organizational setting.4,5 To avoid such discrepancies, a rich variety of design methods exists for assessing, ensuring, and otherwise working with the fit between new healthcare technologies and the needs of healthcare organizations and users. Table 1 gives examples of these methods, which span from the first to the last stages of the design process. We focus on one method, pilot implementations, and show that in spite of their realism it is difficult to learn from them because they are situated and “messy.”

Table 1. Examples of methods for working with the fit between technologies and their use context at different stages of the design process.

Hertzum et al. 9 define pilot implementation as “a field test of a properly engineered, yet unfinished system, in its intended environment, using real data, and aiming—through real-use experience—to explore the value of the system, improve or assess its design, and reduce implementation risk” (p. 314). This definition makes pilot implementation a meeting ground between the system and its environment and, thereby, an opportunity for learning about the mutual adaptations needed to make the system a success. A subset of these adaptations can be anticipated through analysis ahead of use or discovered through in-the-lab use of nonfunctional prototypes. The rest must be learned through practical experience with the system in real use.12–14 Pilot implementations supply such practical experience, but their limited scope and the unfinishedness of the pilot system necessitate preparations to enable real use and safeguard against errors. 9

We will analyze pilot implementations from the point of view that they are situated and messy activities. They are situated because the particulars of the pilot site influence the pilot implementation, irrespective of whether these particulars are representative of the conditions under which the finished system will subsequently be used. As a result, it may be difficult to distinguish the aspects of a pilot implementation that reflect what it will be like to use the fully implemented system from the aspects that are specific to the pilot implementation and, thus, say little about later use. This difficulty makes pilot implementations messy in the sense that they may yield ambiguous or misleading messages about the value of the system and about how to improve its design or reduce implementation risk.

To illustrate the difficulties in learning from pilot implementations, we analyze two pilot implementations in healthcare. The first is a pilot implementation of a system for coordinating patient transports internal to hospitals, the other of an electronic ambulance record. Both pilot implementations were conducted in Region Zealand, one of the five healthcare regions in Denmark. The starting point for our analysis is that pilot implementations are themselves sociotechnical processes. 2 That is, just as pilot implementations contribute to design, they also need designing. It is through the designing of the pilot implementation that the particulars of the pilot site are taken into account and the learning becomes messy.

Background

As a preamble to the analysis of the two pilot implementations, we elaborate the notions of pilot implementation and messiness.

Pilot implementation

Pilot implementation belongs in the later stages of the design process (see Table 1): after prototypes have been tested in the laboratory but before the system is ready for full-scale implementation in the field. The defining characteristic of pilot implementation is that the system is sufficiently functional and robust to enable testing in its intended environment but is not yet finalized.9,15 That is, the results of a pilot implementation can affect the finalization of the technology as well as feed into its organizational implementation. A pilot implementation consists of five activities: 9

Planning and design, that is, defining the pilot implementation. This activity involves determining where and when the pilot implementation will take place, what facilities the pilot system will include, and how lessons learned during the pilot implementation will be collected.

Technical configuration, which consists of configuring the pilot system for the pilot site. This activity involves migrating data to the pilot system and developing interfaces, or setting up simulations of interfaces, to other systems at the pilot site.

Organizational adaptation, which means that the pilot site revises its work procedures to benefit from the pilot system. This activity also involves providing users with training in the system and the revised procedures and devising safeguards against user errors and system breakdowns.

Use, during which real work is performed with the pilot system. This activity involves striking a balance between incorporating the system in the normal procedures at the pilot site and maintaining a focus on the system as an object under evaluation.

Learning, which involves collecting information about the introduction and use of the pilot system. Learning about the fit between the system and the pilot site may be derived from all four of the other pilot-implementation activities, not just from the period of use.

It has been argued that failures are more valuable opportunities for learning than successes. 16 Seen in this light, pilot implementations provide opportunities for learning in settings that have been devised to constrain the consequences of failure. It is, however, not apparent how a sustained focus on learning is ensured. Hertzum et al. 9 point out that because the pilot system is used for real work, the learning objective may become secondary to concerns about getting the daily work done. In addition, Winthereik 17 shows that the view of pilot implementations as learning processes may not be shared across the groups of actors involved in pilot implementations. Another challenge in conducting pilot implementations relates to defining their scope. A narrow scope saves resources and constrains the consequences of failure. A broader scope means more use experiences to learn from, in terms of quantity as well as diversity. Finally, it is also challenging to decide on the duration of a pilot implementation. A short pilot implementation consumes fewer resources and proceeds quickly to the full-scale implementation that awaits the completion of the pilot implementation. 18 Conversely, a long pilot implementation is more likely to be unaffected by the start-up problems that are common with new systems. These challenges are nontrivial and inherently sociotechnical.

Messiness

The introduction of information systems in healthcare organizations involves both organizational and technological change.1,19,20 However, only some of the imaginable changes can be realized with any one information system, and these changes affect only part of the clinicians’ work. In spite of the changes, a lot remains the same. It is the system in combination with the local contingencies surrounding its use that determine what changes and what remains the same. Orlikowski12,21 describes the process as improvisational to emphasize that change is not always planned, inevitable, and discontinuous. Rather, it is often realized through the ongoing variations that emerge in everyday activity and are opportunistically incorporated in work practices, or left unexploited. The improvisational, emergent, and opportunistic character of the process makes change situated and messy as opposed to context-independent and orderly.

There are two main drivers of messiness. First, the introduction of a healthcare information system is a sudden and often substantial change whereas the work that is performed with the system may not evolve as quickly. 22 For a period of time, the system will present possibilities that have yet to be incorporated in work practices and the work practices will include activities that have yet to be aligned with the system. The misalignments may gradually disappear or they may persist as unused system facilities, workarounds, and the like. At any one time, it is uncertain whether a misalignment will persist or subsequently disappear. Second, the meaning of a system is determined by the meanings attributed to it by relevant actors; it does not reside in the system itself. This interpretive flexibility 23 means that different meanings may simultaneously be attributed to the same system by different actors. A system may, for example, be perceived as overly bureaucratic by some actors, while others embrace it because they see it as enforcing best practice. The result of such differences may be different use practices, and this may in turn lead to confusion, uncertainty, and misunderstandings—a messy situation.

Pilot implementations embrace the situated view of change by assigning key importance to subjecting the system to the real conditions of the pilot site. At the same time, the premise of pilot implementations is that agreement can be reached about what is learned and that the resulting learning is valid beyond the pilot site. This premise tends toward a view of change as more orderly and context-independent. As an example, Winthereik 17 analyzed the pilot implementation of an electronic maternity care record. The organization that steered the project approached the pilot implementation as a controlled experiment “where the setup could and should not be adjusted, but kept stable” (p. 56). 17 That is, the organization believed the maternity care record had an essence independent of local circumstances and considered it important to keep the pilot implementation stable in order not to distort the clinicians’ experience of the maternity care record. The nurses who used the maternity care record experienced the pilot implementation as an externally imposed, inevitable change in their work: “there is not much one can (or should) do about this” (p. 54). 17 While the nurses had to adjust their work practices to take part in the pilot implementation, they felt peripheral to its learning objective. To them the pilot implementation was largely a ritual. In contrast, the clinicians who had been involved in designing the maternity care record saw the pilot implementation as an opportunity “to learn from clinical practice, and to let what they learned inform the development process” (p. 58). 17 From their point of view, the maternity care record was a malleable object that could and should be changed if it did not fit the clinicians’ ways of working.

The example of the maternity care record begins to illustrate how different actors in pilot implementations may perceive the system as well as the situation differently. This messiness evolves over time because the system evolves in use and because the situation is affected by the uncertainty, confusion, and disparity that arise from the messiness. In the following, we will analyze how such messiness makes it difficult to learn from pilot implementations.

Method

We report from two pilot implementations in Region Zealand, Denmark. Our involvement in the pilot implementations was approved by the healthcare region. We obtained informed consent from the involved nurses, porters, paramedics, and other clinicians prior to our observations and interviews.

The first pilot implementation (see Torkilsheyggi and Hertzum 24 for further information) concerned a system for patient transport coordination (PTC) internal to a medium-sized hospital. Our role in this pilot implementation was twofold. First, we facilitated the activities through which the porters and nurses participated in the technical configuration and organizational adaptation. Second, we were responsible for eliciting, collecting, and documenting the learning that resulted from the pilot implementation. We fulfilled the first role through three workshops for specifying the PTC system and the associated work practices. For practical reasons, the first two workshops were attended by porters only and the third workshop by nurses only. Thus, the porters and nurses developed their pre-use perceptions of the PTC system in isolation from each other. On the basis of input from the workshops, the pilot system was configured by the vendor and a local configurator. The second role was our main involvement in the pilot implementation. To fulfill this role, we conducted 23 h of observation during the start-up of the pilot implementation to become acquainted with the work of the porters and nurses and 40 h of observation during the 3-week period of use to learn about their use of the PTC system. During the observations we had informal conversations with porters and nurses about their experiences with the system. In addition, we had more in-depth discussions with porters and nurses in five interviews at the end of the 3-week period and in a group interview after the pilot implementation. The interviews were informed by the interviewees’ experiences with the system and by our observations of their use of it.

The second pilot implementation (see Hansen and Pedersen 25 for further information) concerned an electronic ambulance record (EAR) for use throughout the healthcare region. Our study of this pilot implementation also consisted of observation and interviews. We observed the paramedics at work for 173 h, which included 67 dispatches where we drove with the ambulance to the scene of the emergency and from there to the hospital. The observations also included observation of the paramedics’ work in-between dispatches and of two workshops. The first workshop gathered paramedics, emergency-department physicians, and pre-hospital managers to discuss the effects pursued in the pilot implementation. Through the discussions, the participants were exposed to each other’s expectations of and requirements for the EAR system. The second workshop aimed at proposing improvements to the design of the EAR interface. This workshop was convened and driven by the paramedics in response to their frustrations with using the EAR system. During the observations, we talked informally with the participants, mainly paramedics, about their work, their expectations toward the EAR system (during technical configuration and organizational adaptation), and their experiences with it (during the period of use). In addition to the informal conversations, we conducted 41 interviews with paramedics, ambulance dispatch managers, pre-hospital top management, and others. The interviews focused on the interviewees’ experience of the EAR system and of the activities of the pilot implementation.

For both pilot implementations, the observations were documented in real time in field notes. The interviews were audio-recorded and subsequently transcribed, except three interviews in the first pilot implementation, which were documented in detailed notes. The workshops and group interview in the first pilot implementation were audio-recorded, and detailed minutes were written on the basis of the recordings. We analyzed the empirical data by reading them multiple times while making annotations of incidents and themes. In this open coding, the annotations initially consisted of snippets taken directly from the data. 26 Through our discussions, the annotations were grouped and we then reread the data about each group. In this process, some groups were combined or split up, others dropped, and still others written into memos. The memos served to elaborate the annotations and, especially, to link annotations together in themes. While the themes evolved gradually—as we learned about our data—the writing of the memos was throughout directed by our research focus on the situatedness and messiness of pilot implementation. We inferred situated and messy characteristics from observation notes about what participants did during the pilot implementations and from interview statements about their thoughts on the pilot implementations.

We studied the pilot implementations from planning and design to use and learning. While this gave us indispensable insights into the pilot implementations, we cannot rule out that our interactions with the participants affected their thoughts about the pilot implementations. However, the participants interacted much more with each other—during the workshops and their everyday work. Our analysis of the pilot implementations was based on groups of annotations and, thereby, internally validated by data from multiple observations and participants.

Two pilot implementations

The following analysis of the two pilot implementations proceeds from their planning and design, through their technical configuration and organizational adaptation, to the period of use. The learning derived from the pilot implementations is emphasized during the descriptions and summarized at the end.

Coordinating patient transports

Patient transports are an inevitable part of hospital procedures. Most of these transports are internal to the hospital and involve bringing patients to diagnostic tests, scheduled operations, other medical procedures, and then back to their in-patient department. Timely patient transports presuppose efficient coordination between the nurses who order the transports and the porters who perform them. To support this coordination, the studied hospital decided to extend its electronic whiteboards. The whiteboards had recently been mounted at central locations in all wards of the hospital to provide at-a-glance access to an infrastructure for interdepartmental communication and coordination. The extension of the whiteboard to support patient transports involved a pilot implementation because the porters needed to be able to access the information while they were on the move and, thus, benefitted little from the stationary, wall-mounted whiteboards. The aim of the pilot implementation of the PTC system was twofold: (a) to evaluate a system with which nurses ordered patient transports on the whiteboard and porters received notification of these transports via text messages on their phones and (b) to get initial experiences with mobile extensions of the whiteboard. In total, the pilot implementation lasted from March to November 2013.

In March 2013, the planning and design of the pilot implementation started with considerations about its scope. During dayshifts the porters worked in teams responsible for a specific department, and they proposed the emergency department (ED) as the site for the pilot implementation. An important reason for this choice was the constant flow of patients from the ED to other departments. To limit the number of nurses who had to be trained in ordering patient transports via the whiteboard, the scope was further limited to one of the three wards in the ED, although this meant that the porter team had to respond to two workflows because the nurses in the two other wards of the ED would still be phoning the porters to order a transport. The technical configurations and organizational adaptations necessary for the pilot implementation were made during September and October. To make the ordering of transports easy for the nurses, they were provided with a template that had predefined dropdown menus for the type of transport ordered and for any equipment, such as oxygen, required to perform the transport. A key characteristic of the PTC system was that the porters could not reply to the notifications they received from the nurses. The porters emphasized the importance of being able to reply, for example, to inform about delays and to request additional information. It was, however, decided—and the porters accepted—that reply functionality would not be part of the pilot implementation. The reasons for this decision were mainly technical difficulties in finding a way to present the replies so that they would be noticed and reacted upon but also some skepticism from management about whether reply functionality was truly needed or would merely be nice to have.

All ED nurses working dayshifts during the 3 weeks of pilot use, in November 2013, were included in the pilot implementation. These nurses had received a user manual explaining the new way of ordering patient transports, but it immediately became apparent that most of the nurses had not read the manual and therefore did not feel prepared to use the PTC system. As a consequence, demonstrations of how to order patient transports via the whiteboard were improvised at the nurses’ morning meeting for a couple of days. The porters valued receiving information about the required equipment before showing up to collect patients. Previously, they often had to leave the ED again to fetch necessary equipment. With the template for ordering patient transports, the equipment was specified more consistently.

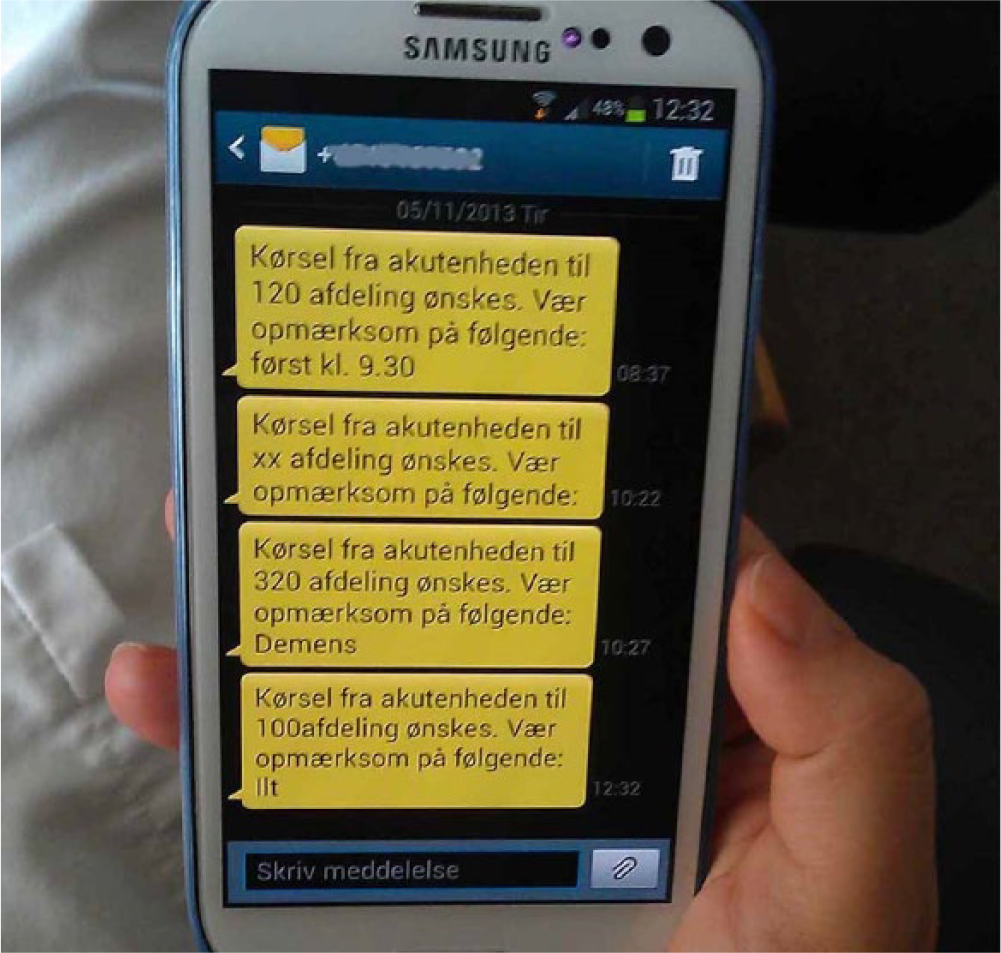

While the PTC system enabled the porters to prepare for the individual transports, it reduced their opportunities to communicate with the nurses about scheduling issues because the porters could not reply to the notifications and because the system was presented as a replacement of coordinating patient transports over the phone. Phone calls were considered a major source of interruptions in the clinical work and avoiding phone calls to the nurses was, therefore, integral to the rationale for coordinating the patient transports via the PTC system. The porters stated that whereas it previously could be a challenge for them to keep track of the incoming phone calls for several transports at a time, they could now receive several text messages in a row without worrying about the information getting lost (Figure 1). The nurses started utilizing the porters’ improved overview of pending transports by increasingly ordering transports in advance, rather than waiting until the patient was ready for the transport. The porters saw the advance orders as an opportunity for increased self-organization of their work. At the same time, they experienced that the absence of reply functionality prevented them from exploiting this opportunity.

Figure 1. Porter phone with four messages about transports.

At the end of the pilot implementation, the porters stated that they wanted functionality for responding to orders before they were prepared to go forward with the PTC system. This requirement was supported by the nurses, who found that they did not receive sufficient feedback from the system and, on multiple occasions, phoned the porters to make sure that they were aware of a pending transport. Independent of the pilot implementation the hospital considered reorganizing the porter service. The current dayshift organization of the porters into separate teams serving different departments would be replaced by a central dispatcher coordinating all patient transports at the hospital, an organization already in use during evening and night shifts. If this reorganization was carried through, the nurses would coordinate transports with the dispatcher rather than directly with the porters. The porters maintained that they would also need reply functionality in communicating with a dispatcher, though the pilot implementation provided no data about this issue because it was restricted to dayshifts. While the considerations about introducing a dispatcher during dayshifts were not part of the pilot implementation, they were part of the context in which the pilot implementation was conducted, the system experienced, and the results interpreted. For example, the porters’ wish for more self-organization was interpreted in the light of the possibility of a reduction in their self-organization if a central dispatcher was introduced.

Electronic ambulance record

Paramedics’ observations at the scene of an emergency are pertinent to their work and to the work of the ED clinicians who assume responsibility for the patient upon arrival at the hospital. In addition, information about the paramedics’ treatment of the patient en route to the hospital is important documentation of their work. To support paramedics in documenting their observations and treatment, the studied healthcare region pilot implemented an electronic ambulance record. The aim of the pilot implementation was threefold: (a) to evaluate the match between the regional pre-hospital services, especially the paramedics’ work, and the EAR system; (b) to enable the extraction of data about the paramedics’ work in order to show that a regional decision to remove physicians from the ambulances did not have adverse consequences for patients; and (c) to provide input to a nationwide tender for an EAR system to be used in all five Danish healthcare regions. In total, the pilot implementation lasted from January 2011 to August 2012.

The first phase of the pilot implementation, January to September 2011, was spent planning and designing the pilot implementation, technically configuring the EAR system, and organizationally adapting the pre-hospital service to the use of the EAR system. During this phase it was, for example, decided to pilot implement the EAR system in ambulances across the region and from both of the ambulance operators contracted by the healthcare region. It was also decided to exclude integration with the electronic patient record in the hospitals from the scope of the pilot implementation. The EAR system was configured so that it contained a superset of the information in the paper-based ambulance record it replaced. The technical configuration also involved some mundane but time-consuming hardware issues, such as replacing the brackets for mounting the EAR computer in the ambulances because the brackets initially delivered were recalled by the manufacturer. In terms of organizational adaptations, a workshop was held to involve representatives of paramedics and ED clinicians in a discussion of the effects pursued with the EAR system. Also, the paramedics received basic training in the use of the system. The paramedics, nevertheless, remained uncertain about the capabilities of the EAR system as well as of the progress of the activities preceding the period of use.

The period of use started in September 2011 and involved 17 ambulances, distributed across 13 ambulance stations and both ambulance operators. It was mandatory for the paramedics to use the EAR system for documenting all acute dispatches with these ambulances. To emphasize the primacy of the patients, the paramedics were instructed that should situations arise in which the use of the EAR system conflicted with concerns for patient health, the paramedics could revert to the paper-based record. Right from the start of the pilot use of the EAR system, the paramedics experienced multiple technical and procedural issues. For example, data entry was spread across more than 20 screens, thereby degrading the paramedics’ overview of what information they had already entered and what information they still needed to enter. In addition, the absence of integration with the electronic patient record in the hospitals meant that the EAR records had to be printed upon arrival at the EDs (Figure 2). The printing turned out to be exceedingly time consuming and the resulting printouts to be several times longer than the old paper-based records, thereby delaying and degrading the handover of the patients from the paramedics to the ED clinicians. Although the paramedics immediately flagged these issues as detrimental to their work, many of the issues were not resolved until months later or not at all. It was not fully transparent why the issues were not resolved, but the reasons included that the supplier of the EAR system lacked resources, that the supplier was not immediately informed about all the problems flagged by the paramedics, and that though the problems were obvious the solutions were often not. Consequently, the paramedics gradually lost faith in the EAR system, and their use of it declined. In an effort to improve the user interface of the EAR system and reduce the number of screens, five paramedics and a health personnel manager met for a workshop during which they reorganized the interface and proposed a version that was simpler and better aligned with the paramedics’ work. After a month, the period of use was officially put on hold, pending a resolution of the critical issues. Two ambulances started using the pilot system again in March 2012 to assess whether to resume the pilot implementation. While the pilot implementation was not resumed, the system remained in use for a subset of the dispatches with these two ambulances until the pilot implementation was officially discontinued in August 2012.

Figure 2. Paramedic printing EAR record at the ED.

The motivation for the pilot implementation of the EAR system made it a politically textured process. While the consequences of removing physicians from the ambulances may be an unusually sensitive element in a pilot implementation, it was not unusual that multiple interests influenced the pilot implementation because it was set in the field and concerned people’s real work. The process became further politically textured because the pilot implementation included two ambulance operators that were competing for contracts with the healthcare region and because the employees of the ambulance operators (i.e. the paramedics) were increasingly not using the EAR system although its use was mandated by the healthcare region. Such circumstances are more likely to foster caution and concealment than open dialog. In addition, the substantial impact of the printing problems on the pilot implementation exemplifies the difficulties involved in defining its scope. Another issue that hampered the pilot implementation was the tension between the paramedics’ daily frustrations with the EAR system and the month-long periods required to make revisions of the system. This difference in timeframes meant that the paramedics faced the same problems again and again, even after they had reported them.

Discussion

It was difficult to learn from the pilot implementations of the PTC and EAR systems. In the following, we discuss this difficulty, the complexity it adds to methods for grappling with the future, and the limitations of this study.

Messy learning

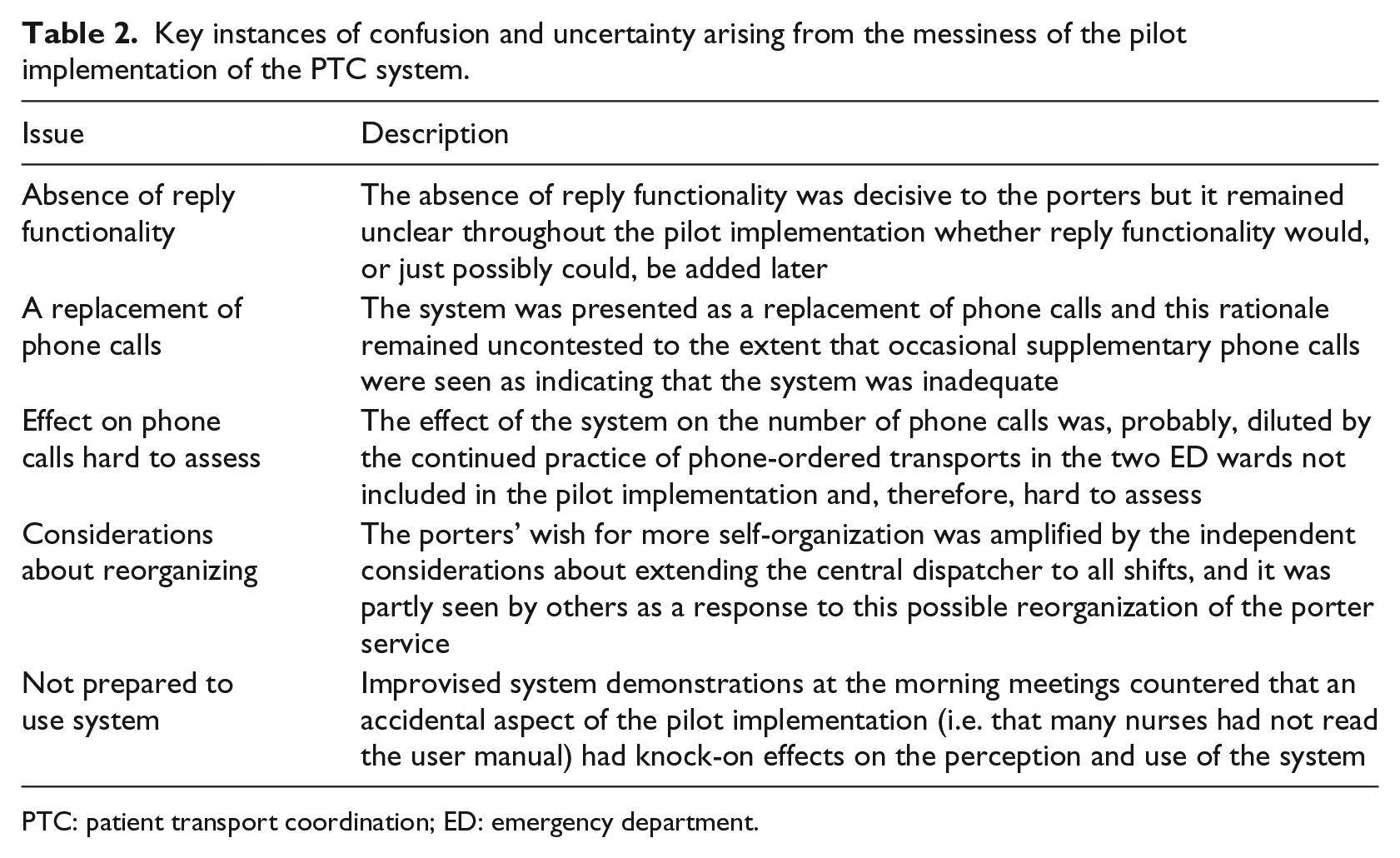

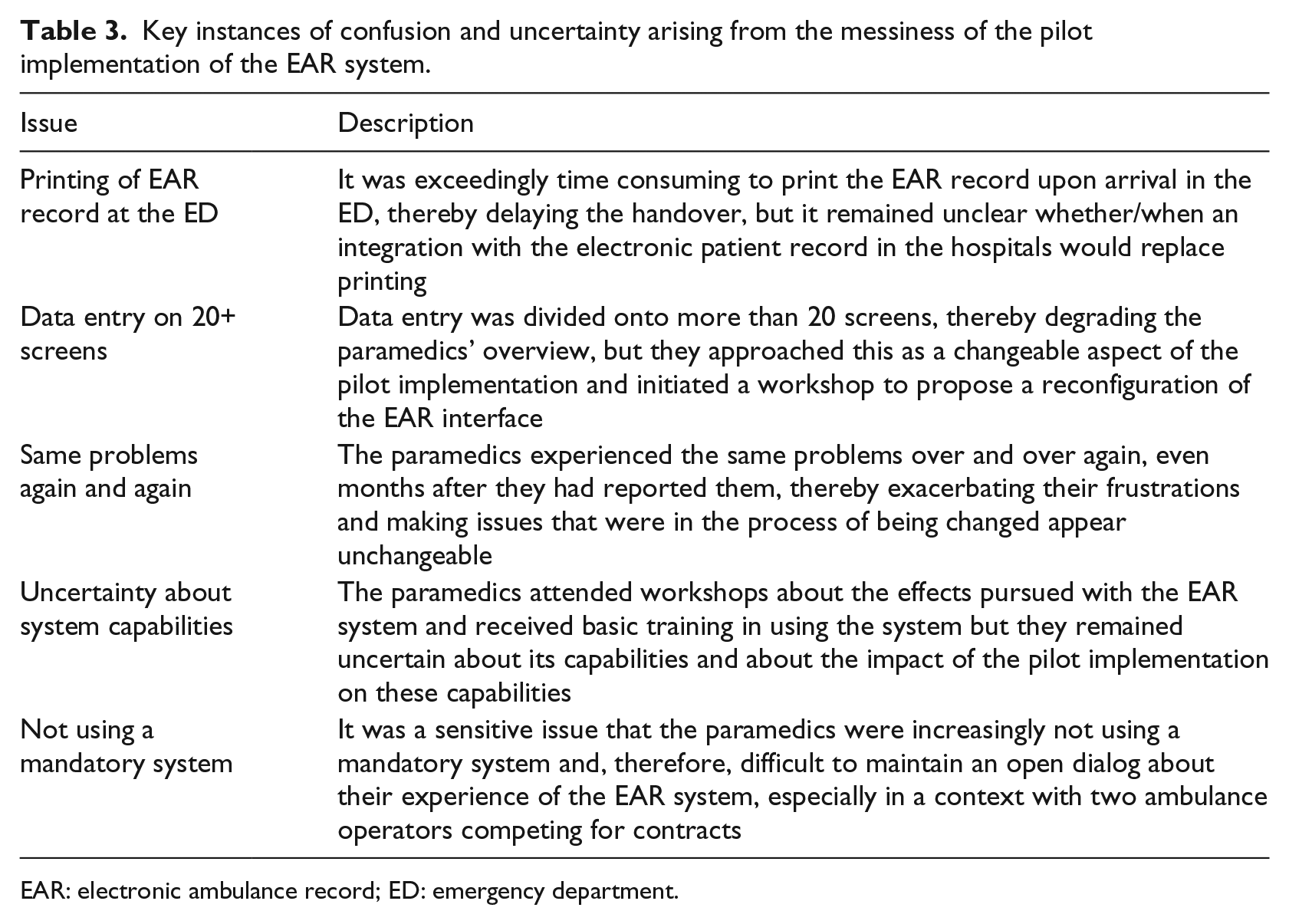

The main finding of this study is that in spite of their realism, the two pilot implementations left room for confusion and uncertainty about the implications of the new systems. Tables 2 and 3 give key instances of such confusion and uncertainty. For example, it remained an uncontested assumption in the pilot implementation of the PTC system that the system should replace, not supplement, phone calls. Consequently, occasional supplementary phone calls were seen as indicating that the system was inadequate. If the focus on replacing phone calls had been contested, then the nurses and porters could, possibly, have arrived at a practice in which the system and phone calls supplemented each other in achieving the best coordination of patient transports. As another example, the paramedics approached the user interface of the EAR system as changeable and took the initiative to hold a workshop proposing a simpler interface. While the interface was configurable, it turned out that the process of reconfiguring it took months and only solved part of the problems. That is, the initial version of the interface was, in practice, fixed to a much larger extent than the paramedics had assumed. We want to raise three issues in relation to the messy character of the learning in pilot implementations:

Key instances of confusion and uncertainty arising from the messiness of the pilot implementation of the PTC system.

PTC: patient transport coordination; ED: emergency department.

Key instances of confusion and uncertainty arising from the messiness of the pilot implementation of the EAR system.

EAR: electronic ambulance record; ED: emergency department.

First, the learning that can be derived from a pilot implementation is not a final or static statement about the fit between the system and the organization. By testing in the field, pilot implementations gain a realism that sets them apart from testing prototypes in the laboratory,9 but the realism does not end discussions of what using the system will be like. Rather, the realism entails that the system becomes salient to the users because it starts to affect their work and require them to change their practices. Wagner and Piccoli 27 argue that it is at this point that most users start reacting to a system and become motivated to influence its design. For example, the porters learned that with the new system they more consistently received information about the equipment necessary for the transports, but they also worked around the system by occasionally phoning the nurses, and they strove to have reply functionality added to the system, though it remained unclear whether it would be added. The learning derived from the pilot implementations was the current state of an evolving process, which contained planned change, workarounds, uncertainty, emerging opportunities, unsuccessful efforts, and other reactions by the involved actors to the technology and the modified contextual conditions. Such reactions, shaped by the particulars of the local context, are not likely to provide unequivocal insights about the wider implementation of a system.

Second, different stakeholder groups experience pilot implementations differently because each group has its own set of tasks and responsibilities in relation to the system. For example, nurses order patient transports and remain largely unaware of other departments’ competing needs for transports, while porters perform transports and are continuously organizing their work so as to meet the needs of multiple departments.28 As a consequence, different groups experience different uncertainties, possibilities, and frustrations in relation to what a system provides and does not provide. The nurses in the pilot implementation of the PTC system realized that the system enabled them to order transports in advance. This new work practice emerged during use as a welcome but unplanned effect of the PTC system and showed that the nurses’ old practice of not ordering transports until the patients were ready had been an unrecognized bottleneck in the coordination of patient transports. In contrast, the porters’ experience of the PTC system was dominated by the absence of reply functionality and by the lack of clarity about whether this absence was temporary or permanent. The study by Winthereik 17 gives further examples of how the stakeholders in a pilot implementation may get quite different learning experiences from it.

Third, the messy learning from pilot implementations may in part reflect uncertainty about their essence and accidental aspects. According to Aristotle, the essence of an object is the part that is retained during any change through which the object remains identifiably the same object; in contrast, the accidental aspects of an object are not bound to its essence but can change independently of it.29 To the extent that this distinction can be applied to pilot implementations, the essence would be the aspects that are inherent in the system and its use. These aspects accurately reflect the system and what it will be like to use it once it is fully implemented. In contrast, the accidental aspects of a pilot implementation are brought about by the pilot-implementation activities, such as the safeguards necessary to subject an unfinished system to real use. These aspects are not inherent in the system and its use, and they may or may not reflect what it will be like to use the system once it is fully implemented. Several of the difficulties experienced in learning from the two studied pilot implementations appear to involve expectations of a clear division between essence and accidental aspects combined with difficulties in telling them apart in practice. If essence is mistaken for accidental aspects, or vice versa, confusion and faulty conclusions will ensue.

Grappling with the future

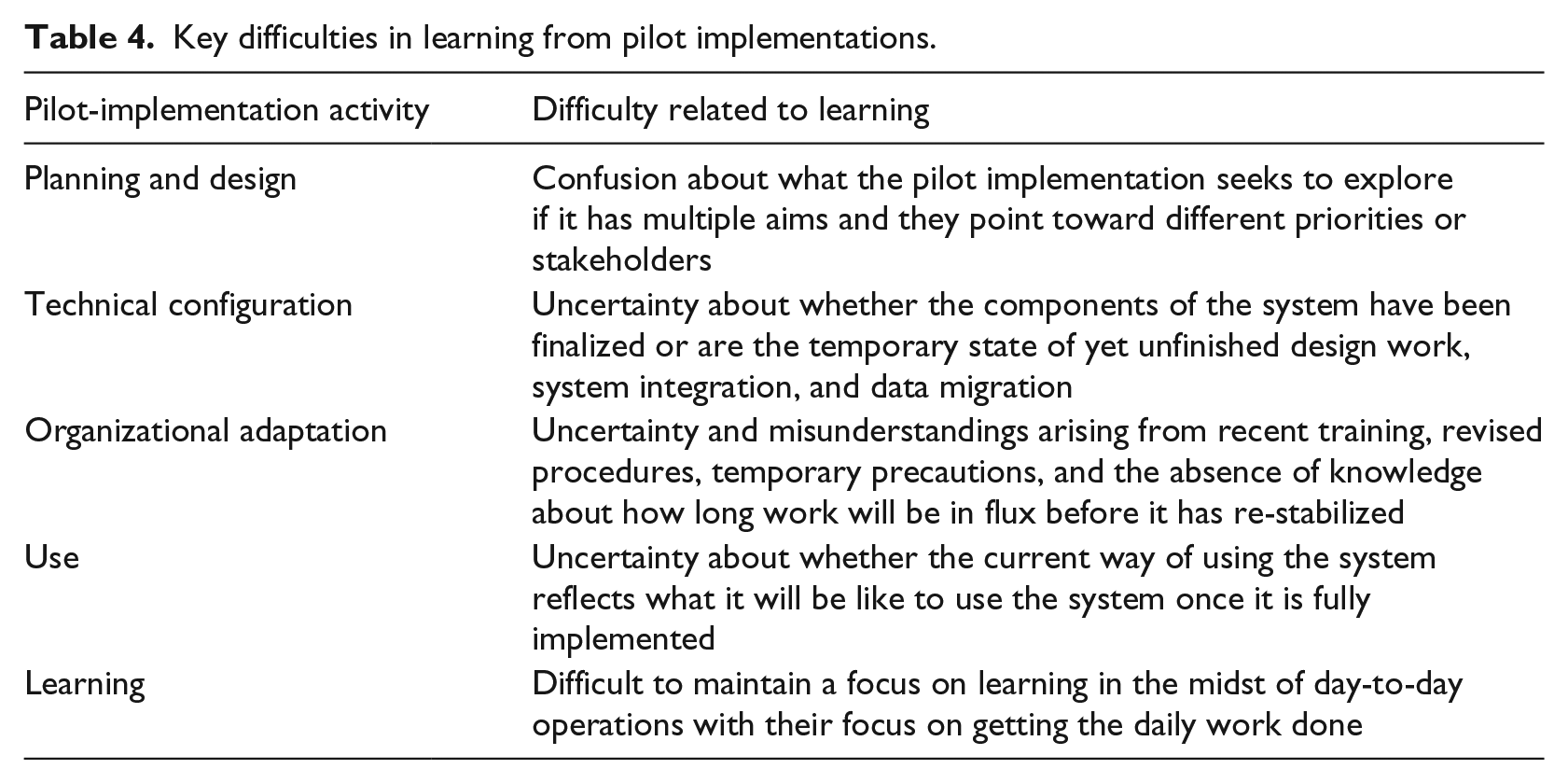

Table 4 summarizes how learning from pilot implementations becomes a situated, messy, and therefore complex process. The complexity is not attributable to a single pilot-implementation activity but rather involves all five of them. For example, the threefold aim of the pilot implementation of the EAR system was a planning and design issue that created uncertainty about what the pilot implementation sought to explore because the different aims pointed at different stakeholders in a politically textured process. Likewise, the possible reorganization of the porter service, which was independent of the pilot implementation of the PTC system, influenced the organizational adaptations in the pilot implementation as well as the way in which these adaptations were interpreted. To reduce the messiness of pilot implementations, practitioners should first acknowledge it. Second, they should carefully plan and communicate the temporary measures necessary to bridge between the activities supported by the pilot system and those external to it. It may be tempting to assign secondary importance to these measures during planning because they are merely temporary; the printing of the EAR records upon arrival at the EDs exemplifies the consequences of an inadequate temporary measure. Third, communication to counter emergent confusion and uncertainty should continue throughout the pilot implementation. Refraining from such communication, to let the pilot implementation run its course, will most likely make it increasingly messy and thereby reduce the learning that can be derived from it.

Key difficulties in learning from pilot implementations.

While we have investigated the messy character of learning in pilot implementations, it is worth noting that the use of other design methods is also a situated sociotechnical activity.2,30 Thus, visioning studies, scenarios, and other alternatives to pilot implementation also add complexity, just as they contribute to the mutual adaptation of system and organization.

Given the complications involved in learning from pilot implementations, one may ask: What is the use of pilot implementations? We want to point at three uses of pilot implementations: clarifying, kick-starting, and aborting. First, a pilot implementation can be used to clarify what using the forthcoming system will be like and to align expectations with possibilities. Using a pilot implementation for clarification does not mean that the implications of the system will be left uncontested; the meeting between expectations and possibilities may gradually transform the work practice. However, a focus on clarification aims at easing and smoothing the transition to the new system by avoiding uncertainty and confusion and, thereby, making it more readily appreciable what working with the new system will be like.

Second, a pilot implementation can be used to kick-start a process of transforming the system and organization. The transformations accompanying new systems are often slow, or they congeal before the full potential of the system has been realized.31 A pilot implementation may provide inspiration for the kinds of transformation that can be pursued, constitute a forum for negotiating what transformations to pursue, exemplify how they can be pursued, and identify changes in the system necessary to make attractive transformations possible.

Third, a pilot implementation may lead to the abortion, or postponement, of full-scale implementation if there is a severe mismatch between system and organization. The pilot implementations of the PTC and EAR systems are examples. Assessing whether to proceed with full-scale implementation is an important use of pilot implementations because a decision to abort is easier to make after a pilot implementation than after a full-scale implementation and because a pilot implementation shields the organization at large from a system not (yet) fit for use.

In principle, the primary aim of a pilot implementation is to learn about the fit between the system and its use context, while the primary aim of full-scale implementation is efficient quality treatment of the patients. In practice, the learning objective of a pilot implementation may be contested or simply difficult to maintain in the midst of real ambulance dispatches that affect the health of real patients. Clarity about the distinction between pilot implementation and full-scale implementation is, however, important because it sets expectations and success criteria. For example, Bossen 32 hesitates to call the pilot he studied successful because it did not run smoothly but, at the same time, he lists several important learnings about the system functionality, technical challenges, and organizational issues. Increased clarity about the objective of pilot implementations might have made it easier to assess that pilot implementation. Aarts et al. 14 studied a full-scale implementation and discuss how emergent change and the mutual shaping of technology and organization blurred whether it was a success or a failure. It appears that part of the blur could be explained as uncertainty about the extent to which a successful full-scale implementation may, inadvertently, contain elements of pilot implementation. The study also shows that uncertainty and discussion about the consequences of a system continue into full-scale implementation.

Limitations

Three limitations should be remembered in interpreting the results of this study. First, the data are from pilot implementations in one healthcare region of one country. While the two pilot implementations differ in many respects and show that the results of the study are not peculiar to a single pilot implementation, we acknowledge that both pilot implementations are about transporting patients and, at least partially, about resource optimization. The results may also be influenced by local circumstances, such as the particulars of the Danish healthcare sector. Second, both pilot implementations revealed severe problems in the tested systems. We acknowledge that pilot implementations of more finalized systems will likely be less messy, but they will likely also yield less learning about problems that can still be addressed. This dissonance appears to be a reminder of Buxton’s 33 law that it is always too early to evaluate until suddenly it is too late. Third, in a healthcare context, porters and paramedics are more peripheral and less powerful user groups than, for example, physicians. The presence of additional aims, beyond that of supporting the porters and paramedics, is more likely for peripheral user groups, and it probably increased the messiness of the pilot implementations. More work is needed to examine the transferability of our findings to other circumstances and user groups.

Conclusion

Learning from pilot implementations is messy because they are situated and, thereby, influenced by local contingencies that may or may not reflect what the fully implemented system will be like. In spite of their realism, the two studied pilot implementations left room for confusion and uncertainty about the implications of the new systems. For example, the month-long process for making revisions of the EAR system meant that the paramedics experienced the same issues repeatedly and became increasingly uncertain whether the system could and would be changed to fit their expressed needs. Such confusion and uncertainty temper the contribution of pilot implementations to the mutual adaptation of system and organization because the peculiarities of a pilot implementation may overshadow the aspects that proceed into ordinary use, because the resulting learning may not be valid beyond the pilot implementation, and because the messiness of the learning may preclude directed action. A corollary of this conclusion is that the messy learning in a pilot implementation derives as much from the pilot-implementation activities that lead up to the period of pilot use as from the period of pilot use itself.

Footnotes

Acknowledgements

The pilot implementation of the PTC system was part of the Clinical Communication project, which was a research and development collaboration between Region Zealand, Imatis, Roskilde University, and University of Copenhagen. The first and third author participated in this project, but the empirical work in the pilot implementation was done by the third author. The pilot implementation of the EAR system was part of the Clinical Overview project, which was a research and development collaboration between the Region of Southern Denmark and Roskilde University. The empirical work in this pilot implementation was done by the second author in collaboration with Magnus Hansen. The work reported in this paper was co-funded by Region Zealand and the Region of Southern Denmark. We want to thank the nurses, porters, paramedics, and their managements for their participation in the study.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.