Abstract

Objective

To examine the cross-level sociotechnical linkages between societal risk perception of medical artificial intelligence, institutional adoption patterns, and clinical safety outcomes. Specifically, this study aims to explore how social pressure shapes hospital technology strategies and to rigorously assess the association between AI usage intensity and diagnostic errors using an acute imaging-dependent condition as a specific tracer.

Methods

A cross-level analytical framework was constructed based on the Technology Acceptance Model and Institutional Theory. We integrated three heterogeneous data streams from the Federal District of Brazil: a stratified probability survey of residents (N = 4764), longitudinal hospital operational panels (1728 hospital-month observations), and a validating index of social media sentiment. A “Catchment Area Ecological Linkage” protocol was employed to merge micro-level psychometric data with meso-level organizational metrics. Structural Equation Modeling was employed to test the direct, mediating, and moderating effects among variables, with robustness and endogeneity checks conducted via time-lag analysis and double-validation. Moderators included public trust and hospital geographical remoteness.

Results

Structural equation modeling revealed a significant negative association between aggregated public risk perception and hospital AI application frequency (β = −0.34, p < 0.001), consistent with the theory of “algorithmic aversion” at the institutional level. Within the specific context of the tracer condition, higher AI usage intensity was positively associated with misdiagnosis rates (β = 0.28, p < 0.001), suggesting a pattern of “automation bias” in time-sensitive acute triage. The inhibitory effect of risk perception on AI adoption was attenuated by high public trust and geographical remoteness.

Conclusion

Public risk perception functions as an institutional constraint that throttles technology deployment. While social pressure limits adoption, the uncritical reliance on AI in high-stakes acute settings may compromise diagnostic vigilance. This study highlights the necessity of using precise tracer conditions to evaluate digital health safety and suggests that governance must balance social legitimacy with rigorous clinical oversight.

Keywords

Introduction

Healthcare algorithms have been rapidly deployed as predictive scores and diagnostic tools across various clinical scenarios, ranging from chronic disease management to acute pandemic surveillance.1–6 While engineering metrics suggest that algorithms increasingly outperform human experts in specific tasks, the integration of these tools into routine clinical workflow remains fraught with sociotechnical friction. Medical algorithms trained on relatively homogeneous datasets that lack patient diversity are prone to bias, and their adoption has limited the generalizability of results.7,8 Problems such as data imbalance, lack of expertise, and regulatory gaps lead to diagnostic biases,9,10 which may exacerbate global healthcare inequalities.11–21 Consequently, AI algorithms bring distinct uncertainties to clinical practice: cognitive, operational, and ethical.22

The narrative of technological efficiency frequently collides with a countervailing force: social risk perception. 23 Public anxiety regarding algorithmic “black boxes,” data privacy, and the accountability of non-human agents acts not merely as a psychological backdrop, 24 but as a tangible institutional constraint that shapes how healthcare organizations procure, deploy, and govern emerging technologies.

This tension is particularly acute in high-stakes diagnostic environments. Theoretically, AI-assisted interpretation offers a safeguard against human fatigue and cognitive error. In practice, however, the adoption of these tools is an organizational behavior deeply embedded in a social context. Drawing upon Institutional Theory and the technology acceptance model (TAM), we posit that hospitals operate as socially responsive entities seeking legitimacy. When public risk perception is high, hospital administrators may subconsciously or explicitly align their operational strategies with community sentiment, potentially throttling the frequency of AI application to mitigate reputational liability. This phenomenon, often described as “algorithmic aversion” at the individual level, likely manifests as “defensive non-adoption” at the organizational level.

However, the existing literature exhibits a notable research gap: the majority of studies either evaluate AI performance in sterile laboratory settings, detached from the messy reality of clinical adoption, or measure user acceptance through hypothetical surveys unrelated to patient outcomes. There is a paucity of empirical evidence that bridges the divide between societal sentiment (macro-level), institutional adoption behavior (meso-level), and objective patient safety outcomes (micro-level). Furthermore, identifying the causal impact of risk perception on medical errors poses considerable methodological challenges, primarily attributable to data silos and ambiguous measurement criteria.

To address these gaps, this study introduces the TAM into the interdisciplinary context of medical sociology and health geography. We construct a cross-level analytical framework using the Federal District of Brazil as a study area. Our identification strategy relies on the linkage of three unique datasets: (1) stratified resident surveys measuring risk perception; (2) 4.3 million geotagged social media data points from Twitter (X) and Reddit serving as a dynamic risk index; and (3) operational panel data from multiple hospitals.

To rigorously measure the “Misdiagnosis Rate”, a variable often plagued by ambiguity, we employ a “Tracer Condition” approach. 25 We select Testicular Torsion and related acute scrotal emergencies as the specific clinical tracer. This allows us to circumvent the ambiguity of administrative data and precisely identify false negatives (omission errors) and false positives, providing a robust proxy for diagnostic safety in AI-assisted radiology.

This study refines the core issue into three research questions:

By linking stratified survey data, hospital operational panels, and social media sentiment indices, this study moves beyond simple causal assertions. Instead, we offer an exploratory analysis of how the “social climate” of risk creates an institutional environment that may paradoxically influence clinical safety. We hypothesize that while high risk perception constrains AI adoption (Algorithmic Aversion), the uncritical high-frequency use of AI in low-resource settings may conversely lead to vigilance decrements (automation bias). This study aims to provide a nuanced, data-driven perspective on the delicate balance between social trust and clinical automation.

Methods

Theoretical framework in high-stakes contexts

TAM traditionally holds that adoption is driven by Perceived Usefulness (PU) and Perceived Ease of Use (PEU).26 However, healthcare is a high-stakes setting characterized by information asymmetry and irreversibility. Applying TAM in healthcare thus demands a broader sociotechnical perspective.27

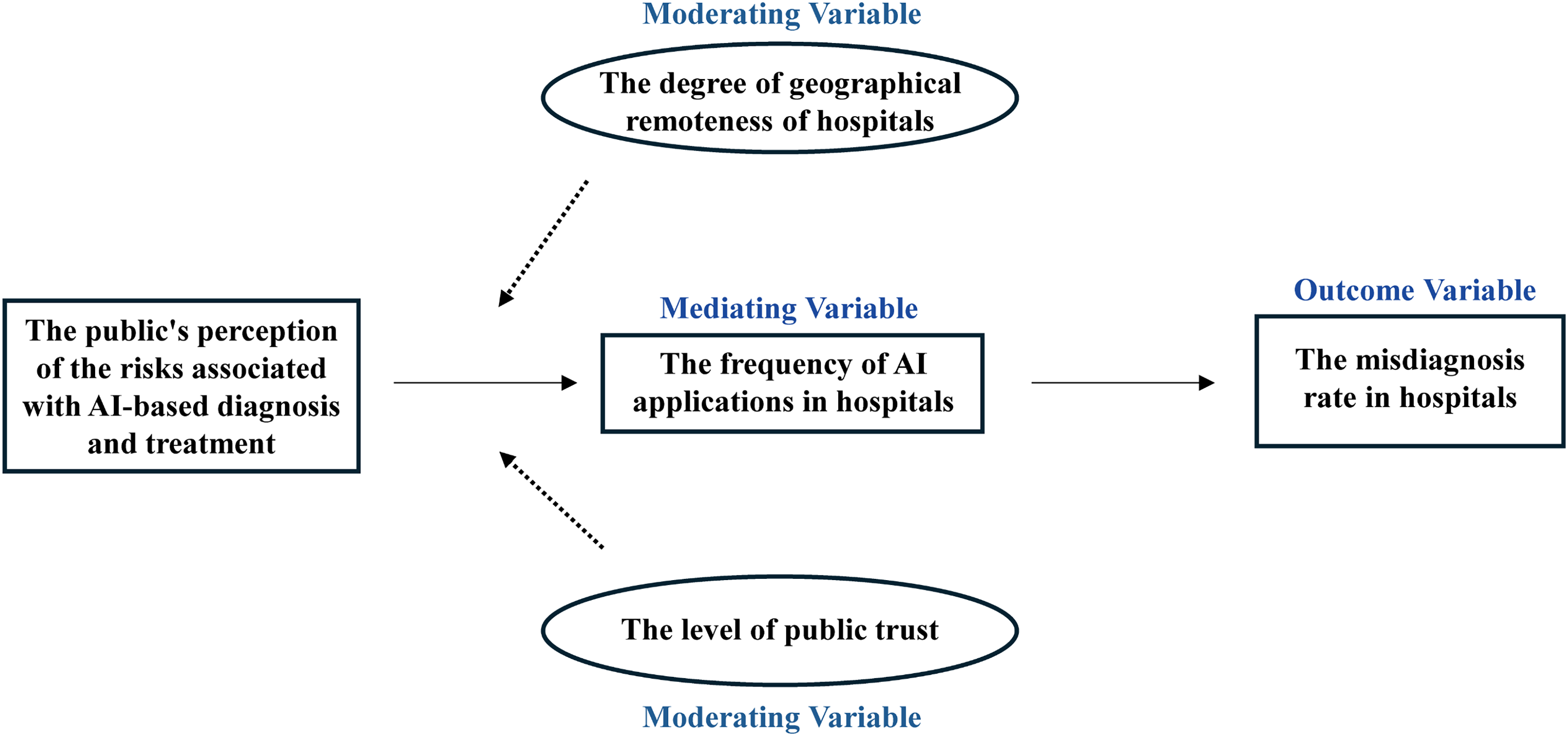

In the context of AI-assisted diagnosis, we argue that Risk Perception acts as a critical antecedent that negatively frames PU (Figure 1). Users, and by extension hospital administrators, tend to lose confidence in algorithms faster than in humans after seeing them err. 28 Therefore, “acceptance” in this study is not merely an individual physician's preference but an organizational response to the social cognitive environment.

Figure 1. Core theoretical model of this study.

Public risk perception and AI application frequency

Public risk perception constitutes a form of external institutional pressure. According to Institutional Theory combined with TAM, hospitals seek legitimacy by aligning their operational practices with social expectations. When the public harbors high levels of concern regarding AI safety, fearing data breaches, algorithmic bias, or “black box” errors, this aggregate social anxiety lowers the Socially Perceived Usefulness of the technology.29 To maintain patient trust and avoid potential litigation or reputational damage, hospital administrators and department heads are likely to restrict the deployment intensity of AI tools or impose stricter manual review thresholds, effectively reducing the recorded frequency of AI-assisted reporting. Conversely, in a low-risk-perception environment, hospitals are emboldened to leverage AI for efficiency without facing public backlash.

AI application frequency and hospital misdiagnosis rate

The relationship between AI adoption and diagnostic accuracy is often assumed to be linear and positive. However, we propose that in the specific context of acute, imaging-dependent conditions, this relationship is more complex. We draw on the theory of “Automation Bias”,30 which suggests that high-frequency use of decision support systems can lead to human complacency. In high-throughput settings, if AI application frequency is high but the algorithm lacks specificity for rare or subtle acute presentations, for example incomplete torsion, clinicians may over-rely on the AI's “negative” or “low probability” tags, bypassing rigorous manual verification. This omission error increases the rate of false negatives. Therefore, regarding our specific tracer condition, we hypothesize that indiscriminate or high-frequency reliance on current-generation AI, when coupled with insufficient human-in-the-loop vigilance, may paradoxically be associated with higher rates of misdiagnosis, specifically an increase in missed time-sensitive diagnoses.

Contextual moderators: trust and space

Trust acts as a mechanism of complexity reduction in social interactions.31 Acceptance of a medical AI application is directly related to trust in the AI application.32 Within the TAM framework, Public Trust in medical institutions or technology developers serves as a buffer against risk perception. If the public holds high trust in the healthcare system's competence and benevolence, they are more likely to interpret potential AI risks as “manageable” rather than “catastrophic”.32 Consequently, even if risk perception rises, high trust ensures that the Perceived Usefulness of AI remains relatively stable, weakening the motivation for hospitals to reduce AI usage. In low-trust environments, however, even minor risk concerns can trigger significant resistance, making the inhibitory effect of risk perception on application frequency more pronounced.

Health geography theories suggest that “place” dictates the necessity of technology. In remote or underserved areas, the shortage of specialist physicians and diagnostic resources creates a state of resource dependence.33 In these regions, the utility of AI is not just efficiency but accessibility: it may be the only available diagnostic support. Therefore, the necessity of using AI outweighs the luxury of worrying about its risks. Hospitals in remote areas are less sensitive to public risk perception because they have fewer alternatives. In contrast, in urban centers with abundant medical resources, hospitals have the flexibility to revert to human-only diagnosis when public risk perception is high, making the negative correlation stronger.

Data integration and catchment area linkage

The Federal District of Brazil was selected as the study area (the hospital operation data are available at https://scielo.figshare.com/articles/dataset/Presentation_delay_misdiagnosis_inter-hospital_transfer_times_and_surgical_outcomes_in_testicular_torsion_analysis_of_statewide_case_series_from_central_Brazil/14286491). To bridge the micro-macro divide without violating the ecological structure of the data, we constructed a balanced panel dataset covering 12 major hospitals over a 144-month period (12 × 144 = 1728 hospital-month observations). We employed a “Catchment Area Ecological Linkage” protocol to harmonize three distinct data streams: stratified resident surveys, hospital operational panels, and a social media sentinel index. The primary unit of analysis was the hospital-month. Catchment areas were delineated based on patient origin records, defined as the administrative regions contributing to the upper 60th percentile of a hospital's monthly patient inflow. Individual survey responses were aggregated to these catchment areas using post-stratification weights derived from IBGE census data (adjusting for age, gender, and income) to ensure the representativeness of the risk perception metrics. To validate the temporal dynamics of the survey data, we triangulated these findings with a Social Media Risk Perception Index (RPI), computed via a BERT-based sentiment analysis of 4.3 million geotagged posts from Twitter and Reddit within the same spatiotemporal coordinates.
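The aggregation step described above can be illustrated with a minimal sketch. It assumes each survey response carries a catchment identifier, a risk score, and a post-stratification weight; the tuple layout, the function names, and the toy values are hypothetical and are not taken from the study's data or code.

```python
from collections import defaultdict


def panel_size(n_hospitals, n_months):
    """Number of hospital-month cells in a balanced panel."""
    return n_hospitals * n_months


def weighted_catchment_means(responses):
    """Aggregate individual risk scores to catchment-level weighted means.

    `responses` is an iterable of (catchment_id, risk_score, weight) tuples.
    The weights stand in for post-stratification weights (assumed layout).
    """
    weighted_sum = defaultdict(float)
    weight_total = defaultdict(float)
    for catchment_id, score, weight in responses:
        weighted_sum[catchment_id] += weight * score
        weight_total[catchment_id] += weight
    return {c: weighted_sum[c] / weight_total[c] for c in weighted_sum}


# Hypothetical respondents: (catchment, 7-point risk score, weight)
toy_responses = [
    ("A", 6.0, 1.5),
    ("A", 4.0, 0.5),
    ("B", 3.0, 1.0),
    ("B", 5.0, 1.0),
]
catchment_means = weighted_catchment_means(toy_responses)
n_cells = panel_size(12, 144)  # 12 hospitals over 144 months
```

In the toy data, the heavier weight on the first respondent pulls catchment A's mean toward 6, which is the intended effect of reweighting an unrepresentative sample toward census proportions.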

Participants and data handling

The psychometric data were derived from a stratified probability survey targeting residents across the Federal District. Data integrity was prioritized through rigorous cleaning protocols. From an initial recruitment of 4890 respondents, cases exhibiting logical inconsistencies or straight-lining response patterns were excluded. Missing data were handled using listwise deletion, as the rate of missingness was negligible (< 2.6%) and Little's MCAR test did not reject the hypothesis that data were missing completely at random. The final analytical sample consisted of 4764 valid responses. A post-hoc power analysis indicated that this sample size provided sufficient statistical power (> 0.90) to detect small effect sizes (f² = 0.02) in the subsequent structural equation models, addressing concerns regarding sample adequacy.

Operationalization of the tracer condition

A persistent methodological challenge in assessing “misdiagnosis” involves the variability of administrative reporting standards and the subjectivity of clinical judgment for systemic conditions. To circumvent these limitations and ensure measurement validity, this study adopted a “Tracer Condition” approach,25 selecting Testicular Torsion (TT) and concomitant acute scrotal emergencies as the exclusive clinical tracer. This condition was chosen not arbitrarily, but for its high methodological validity: it is an acute, time-sensitive emergency strictly dependent on imaging such as Doppler Ultrasound, the precise domain where AI diagnostic support is most active. Unlike diagnoses such as pneumonia or hypertension, TT offers a binary outcome: a twisted spermatic cord confirmed intraoperatively serves as definitive proof of pathology. Consequently, a “false negative” was rigorously defined as a patient discharged with a non-urgent diagnosis who subsequently required orchiectomy or detorsion within 48 h, while a “false positive” was defined as a negative surgical exploration following a positive imaging diagnosis. This reliance on surgical pathology eliminates the noise of administrative reporting bias.

The calculation of AI application frequency and misdiagnosis rate can be illustrated with the patients with acute scrotal pain treated at Hospital Alpha in March 2023. First, the research scenario: Hospital Alpha admitted a total of 10 patients with acute scrotal pain as the main clinical manifestation during this period. Second, calculation of AI application frequency: among the ultrasound diagnostic reports of these 10 patients, 4 contained the AI probability score tag (AI_Probability_Score: High). Based on this, the AI application frequency = (Number of reports with AI tags / Total number of reports) × 100% = 40%. Third, determination of misdiagnosis: Patient X was initially diagnosed with “orchitis” and discharged. He returned 6 h later due to worsening symptoms, and “testicular torsion” was confirmed by surgery. This case meets the criteria for a false negative diagnosis and is counted as 1 misdiagnosed case. Based on this, the misdiagnosis rate = (Number of misdiagnosed cases / Total number of cases) × 100% = 10%.
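The Hospital Alpha example can be reproduced with a short sketch. The record layout (the dictionary keys) and the function names are assumptions made for illustration, as is the choice to leave Patient X's report untagged, since the text does not say whether it carried an AI tag. Only the 48 h window and the two ratio definitions come from the text.

```python
def is_false_negative(discharged_non_urgent, hours_to_surgery, window_hours=48):
    """True if a patient discharged as non-urgent needed surgery within the window."""
    return (
        discharged_non_urgent
        and hours_to_surgery is not None
        and hours_to_surgery <= window_hours
    )


def ai_application_frequency(cases):
    """Percentage of reports that carry an AI-generated tag."""
    tagged = sum(1 for c in cases if c["ai_tag"])
    return 100.0 * tagged / len(cases)


def misdiagnosis_rate(cases):
    """Percentage of cases that are false negatives or false positives."""
    missed = sum(
        1
        for c in cases
        if is_false_negative(c["discharged_non_urgent"], c["hours_to_surgery"])
        or c["false_positive"]
    )
    return 100.0 * missed / len(cases)


# Ten hypothetical records for one hospital-month; the first four carry an AI tag.
cases = [
    {
        "ai_tag": i < 4,
        "discharged_non_urgent": False,
        "hours_to_surgery": None,
        "false_positive": False,
    }
    for i in range(10)
]
# Patient X: discharged with "orchitis", torsion confirmed at surgery 6 h later.
cases[9].update(discharged_non_urgent=True, hours_to_surgery=6)

frequency = ai_application_frequency(cases)
rate = misdiagnosis_rate(cases)
```

Both quantities share the same denominator, the number of tracer cases in the hospital-month, so they can be computed in one pass over the same records.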

In this study, the independent variable is public risk perception, which is measured through a stratified resident survey using a 7-point Likert scale (1 represents “safe/beneficial” and 7 represents “high risk/uncertainty”). This scale covers four dimensions, including consequences of diagnostic errors, data security, clarity of liability, and anxiety about algorithmic “black box” (specific scale items are shown in Appendix Table A1).

Measures and instrumentation

The independent variable, public risk perception, was measured using a validated 7-point Likert scale assessing dimensions of privacy concern, liability uncertainty, and algorithmic aversion (specific scale items are shown in Appendix Table A1). The mediating variable, AI application frequency, quantified the intensity of technology deployment rather than mere availability. This was operationalized as the percentage of radiology reports within a specific catchment month that contained verified AI-generated metadata tags (e.g., DICOM_SR_PROB, CAD_MARKER) in the Radiology Information System. To validate the granularity and accuracy of these automated extraction methods, a double-blind clinical audit was performed. Two independent senior urologists manually reviewed a random 5% subset of the electronic health records. The inter-rater reliability was high (Kappa = 0.88), and the concordance between the algorithmic extraction of misdiagnosis events and expert review reached 92.4%, indicating that the administrative data accurately reflected clinical reality. Control variables included hospital maturity (a composite index of bed count and accreditation), daily patient volume, and seasonal fixed effects to account for organizational heterogeneity.
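For readers unfamiliar with the agreement statistic used in the audit, the following is a minimal sketch of Cohen's kappa for two raters. The ratings are hypothetical and the function is a generic textbook implementation, not the study's audit code.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: agreement between two raters corrected for chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of records.")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions per label.
    labels = set(freq_a) | set(freq_b)
    expected = sum(freq_a[label] * freq_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical audit of ten records (1 = misdiagnosis event, 0 = none).
urologist_1 = [1, 1, 0, 0, 1, 0, 1, 0, 0, 0]
urologist_2 = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]
kappa = cohens_kappa(urologist_1, urologist_2)
```

Raw percentage agreement in this toy audit is 90%, but kappa is lower because some agreement is expected by chance when one label dominates.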

Statistical analysis

Analytic procedures were conducted using Stata 17.0 and Mplus 8.4. Confirmatory factor analysis (CFA) was first employed to verify the construct validity and reliability of the latent variables. For hypothesis testing, structural equation modeling (SEM) with maximum likelihood estimation was selected over traditional hierarchical linear modeling. The choice of SEM was predicated on its ability to simultaneously estimate measurement errors in latent constructs and assess complex mediation pathways. To account for the nested structure of the data, where patients are clustered within hospital catchment areas, cluster-robust standard errors were applied in all models. Finally, to mitigate potential endogeneity and probe reverse causality (i.e., the possibility that poor hospital performance drives public risk perception), a Time-Lag Analysis was conducted using a Granger causality framework to check whether the directionality of the observed associations was supported by temporal precedence.

Ethical approval

This study employs a cross-level observational design to investigate the sociotechnical linkages between societal risk perception, institutional technology adoption, and clinical safety outcomes within the Federal District of Brazil. The reporting of this study strictly adheres to the STROBE (Strengthening the Reporting of Observational Studies in Epidemiology) guidelines, with a completed checklist provided in the Supplementary Material. Given the heterogeneous nature of the data sources, the research protocol necessitated a bifurcated ethical clearance strategy. The survey component involving human subjects received approval from the Institutional Review Board of SJT University (Ref: SJT-No.374). Conversely, the retrospective analysis of hospital administrative records utilized strictly de-identified, aggregated data; consequently, a waiver of informed consent was granted by the Federal District Health Department Ethics Board (Protocol #PSA-Sta-691).

Results

Data characteristics and preliminary validations

The strict application of the “Catchment Area Ecological Linkage” protocol yielded a final balanced panel comprising 1728 hospital-month observations. The matching efficiency was high, with 92.4% of valid survey respondents successfully mapped to specific hospital catchment zones. Post-stratification weighting ensured that the linked survey sample achieved demographic parity with the Federal District census data (χ² test, p > 0.10), effectively mitigating selection bias inherent in digital sampling. Regarding the tracer condition, a total of 505 confirmed cases of acute scrotal emergencies (Testicular Torsion and differentials) were identified across the observation period. This provides a clinically granular dataset sufficient for robust statistical inference. Table 1 presents the descriptive statistics and the bivariate correlation matrix. A preliminary inspection reveals a significant inverse correlation between Public Risk Perception and AI Application Frequency (r = −0.41, p < 0.01), offering initial, albeit unadjusted, support for the theoretical premise of algorithmic aversion. Conversely, AI Application Frequency exhibits a positive correlation with the Misdiagnosis Rate of the tracer condition (r = 0.28, p < 0.01), signaling potential automation-induced errors in this specific acute setting.

Table 1. Descriptive statistics and correlation matrix of study variables.

Note: N = 1728 hospital-month observations. Values in bold parentheses on the diagonal represent the square root of the Average Variance Extracted (AVE) for latent constructs to demonstrate discriminant validity. Misdiagnosis Rate refers specifically to the tracer condition (Testicular Torsion). * p < 0.05, ** p < 0.01, *** p < 0.001.

Psychometric properties of latent constructs

Prior to structural estimation, a CFA was conducted to assess the measurement model (Table 2). The model exhibited excellent fit indices (χ²/df = 2.05, CFI = 0.96, TLI = 0.95, RMSEA = 0.042, SRMR = 0.038), confirming the dimensionality of the constructs. All standardized factor loadings exceeded the 0.70 threshold (p < 0.001), indicating strong convergent validity. The Composite Reliability (CR) values for Public Risk Perception (0.88) and Public Trust (0.86) surpassed the recommended cutoff of 0.70. Furthermore, discriminant validity was established using the Heterotrait-Monotrait ratio, which remained consistently below 0.85, confirming that risk perception and trust function as distinct, albeit related, sociotechnical constructs.
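As a reminder of how these reliability and validity indices relate to the factor loadings, the sketch below applies the standard formulas for Average Variance Extracted and Composite Reliability to standardized loadings. The loadings and the inter-construct correlation are hypothetical, since the item-level values are not reproduced in this text, and the function names are illustrative.

```python
import math


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings)
    error_variance = sum(1.0 - l * l for l in loadings)
    return total ** 2 / (total ** 2 + error_variance)


def sqrt_ave_exceeds_correlations(ave, correlations):
    """Fornell-Larcker style check: sqrt(AVE) above every inter-construct correlation."""
    root = math.sqrt(ave)
    return all(root > abs(r) for r in correlations)


# Hypothetical four-item construct with equal standardized loadings.
toy_loadings = [0.8, 0.8, 0.8, 0.8]
ave = average_variance_extracted(toy_loadings)
cr = composite_reliability(toy_loadings)
discriminant_ok = sqrt_ave_exceeds_correlations(ave, [0.5])
```

With loadings of 0.8 the construct clears the conventional cutoffs of 0.50 for AVE and 0.70 for CR, which shows why loadings above 0.70 are usually treated as evidence of convergent validity.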

Table 2. Assessment of the measurement model (confirmatory factor analysis).

Note: Model fit indices: χ²/df = 2.05, CFI = 0.96, TLI = 0.95, RMSEA = 0.042, SRMR = 0.038. All factor loadings are significant at p < 0.001. CR = Composite Reliability; AVE = Average Variance Extracted. * p < 0.05, ** p < 0.01, *** p < 0.001.


Structural model and hypothesis testing

We employed SEM to test the hypothesized relationships, controlling for hospital maturity, patient volume, and seasonal effects. The structural model demonstrated a robust fit (χ²/df = 2.14, CFI = 0.94, RMSEA = 0.048). The path coefficients, presented in Table 3, illuminate the proposed mechanisms.

Table 3. Structural equation model results: path coefficients and mediation.

Note: S.E. = Standard Error (Cluster-Robust). CI = 95% Confidence Interval based on 5000 bootstrap resamples. The model controls for seasonal fixed effects. * p < 0.05, ** p < 0.01, *** p < 0.001.

Regarding the institutional response to social pressure (

In terms of the clinical safety outcome for the tracer condition (

Mediation analysis using bias-corrected bootstrapping (5000 resamples) confirmed a significant indirect effect (β = −0.095, 95% CI [−0.14, −0.05]). This points to a paradoxical sociotechnical cascade: high public risk perception suppresses AI adoption, which, in the specific context of acute scrotal emergencies, is indirectly associated with a reduction in automation-induced diagnostic errors.
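The reported indirect effect is consistent with the product of the two path coefficients given in the text. The short sketch below shows that arithmetic and the usual check that a confidence interval excludes zero. It does not reproduce the bias-corrected bootstrap itself, and the function names are illustrative.

```python
def indirect_effect(a_path, b_path):
    """Product-of-coefficients estimate of a mediated (indirect) effect."""
    return a_path * b_path


def interval_excludes_zero(lower, upper):
    """True if a confidence interval lies entirely on one side of zero."""
    return lower > 0 or upper < 0


a = -0.34  # risk perception -> AI application frequency (reported)
b = 0.28   # AI application frequency -> misdiagnosis rate (reported)
effect = indirect_effect(a, b)
significant = interval_excludes_zero(-0.14, -0.05)  # reported 95% CI
```

The negative sign follows from multiplying a negative first-stage path by a positive second-stage path: higher risk perception is associated with less AI use, which in turn is associated with fewer tracer-condition errors.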

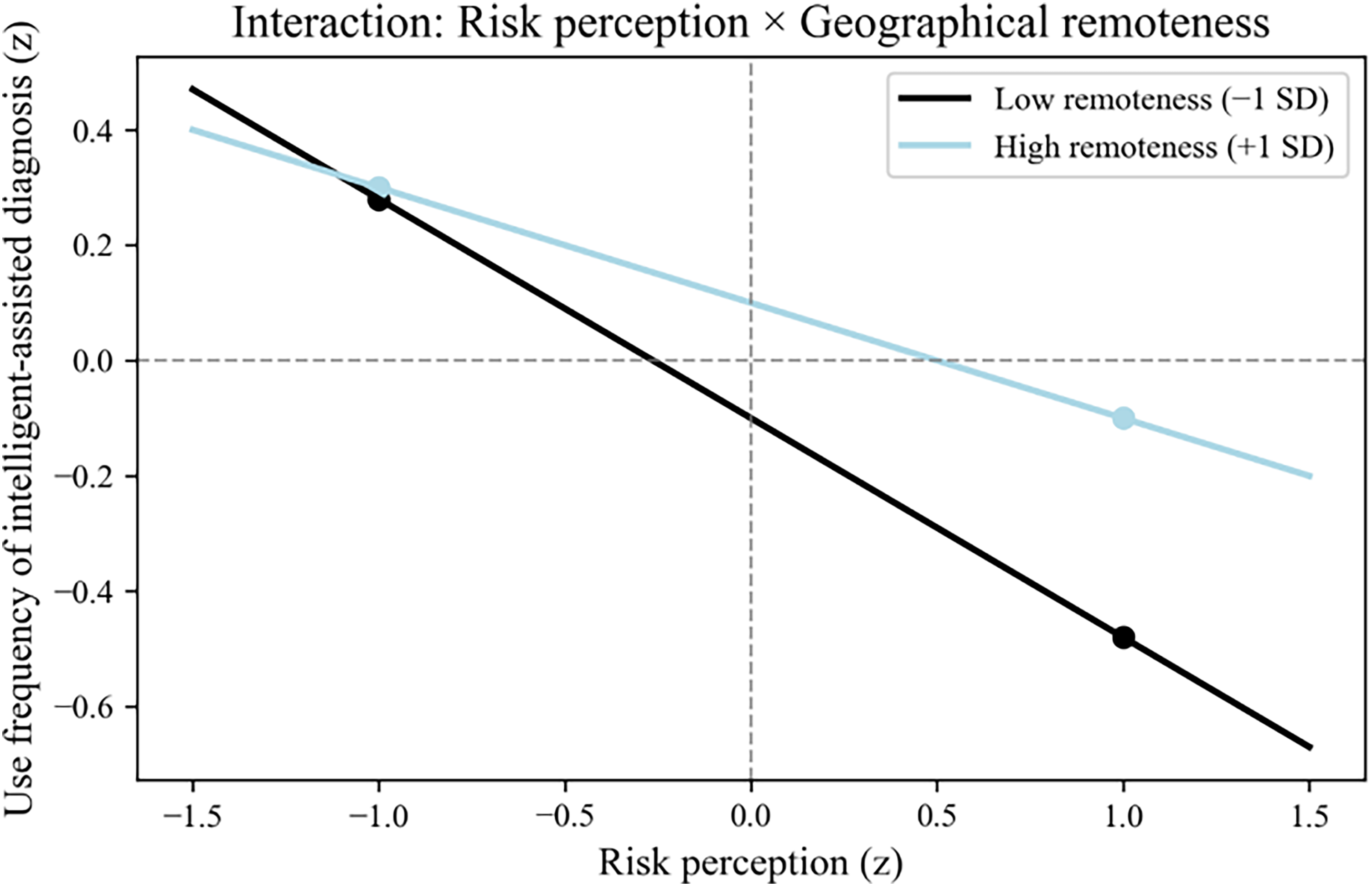

Contextual moderation analysis

To examine the boundary conditions of these associations, we tested the moderating roles of Public Trust (

Slope graph of the moderating effect (public trust).

Slope graph of the moderating effect (geographical distance).

Table 4. Hierarchical regression analysis for moderating effects.

Note: Predictor variables were mean-centered prior to forming interaction terms to minimize multicollinearity. * p < 0.05, ** p < 0.01, *** p < 0.001.

The interaction term Risk Perception × Public Trust yielded a significant positive coefficient (β = 0.11, p = 0.012). Slope analysis indicates that under conditions of high public trust (+1 SD), the inhibitory effect of risk perception on AI adoption is attenuated (Slope β = −0.21) compared to low trust environments (Slope β = −0.45). This suggests that institutional trust acts as a buffer, allowing hospitals to maintain technological innovation despite prevailing risk concerns. Similarly, the interaction of Risk Perception × Geographical Remoteness was positive and significant (β = 0.09, p = 0.021). This finding aligns with resource dependence theory: in remote areas where specialist scarcity is acute, hospitals appear less sensitive to social pressure regarding AI risks, driven instead by the imperative of diagnostic accessibility.
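The slope analysis follows the usual simple-slope logic for a linear interaction: the conditional effect of risk perception equals the main-effect coefficient plus the interaction coefficient times the moderator value. The sketch below assumes a standardized moderator evaluated at ±1 SD and backs out the coefficients implied by the two reported trust slopes. These implied values are an illustration of the relation, not coefficients reported by the study, and small differences from the reported interaction term may reflect rounding or differences in model specification.

```python
def simple_slope(b_main, b_interaction, moderator_value):
    """Conditional slope of the predictor at a given moderator value."""
    return b_main + b_interaction * moderator_value


def implied_coefficients(slope_high, slope_low):
    """Main and interaction coefficients implied by slopes at +1 SD and -1 SD."""
    b_main = (slope_high + slope_low) / 2.0
    b_interaction = (slope_high - slope_low) / 2.0
    return b_main, b_interaction


# Reported slopes under high (+1 SD) and low (-1 SD) public trust.
b_main, b_interaction = implied_coefficients(-0.21, -0.45)
slope_at_high_trust = simple_slope(b_main, b_interaction, 1.0)
slope_at_low_trust = simple_slope(b_main, b_interaction, -1.0)
```

A positive interaction coefficient combined with a negative main effect is what produces the flatter slope under high trust, which is the buffering pattern described above.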

Figure 4 presents the standardized path diagram, displaying the path coefficients and R² values on the arrows and constructs so that the results can be interpreted at a glance.

Figure 4. Statistical results of the structural equation model.

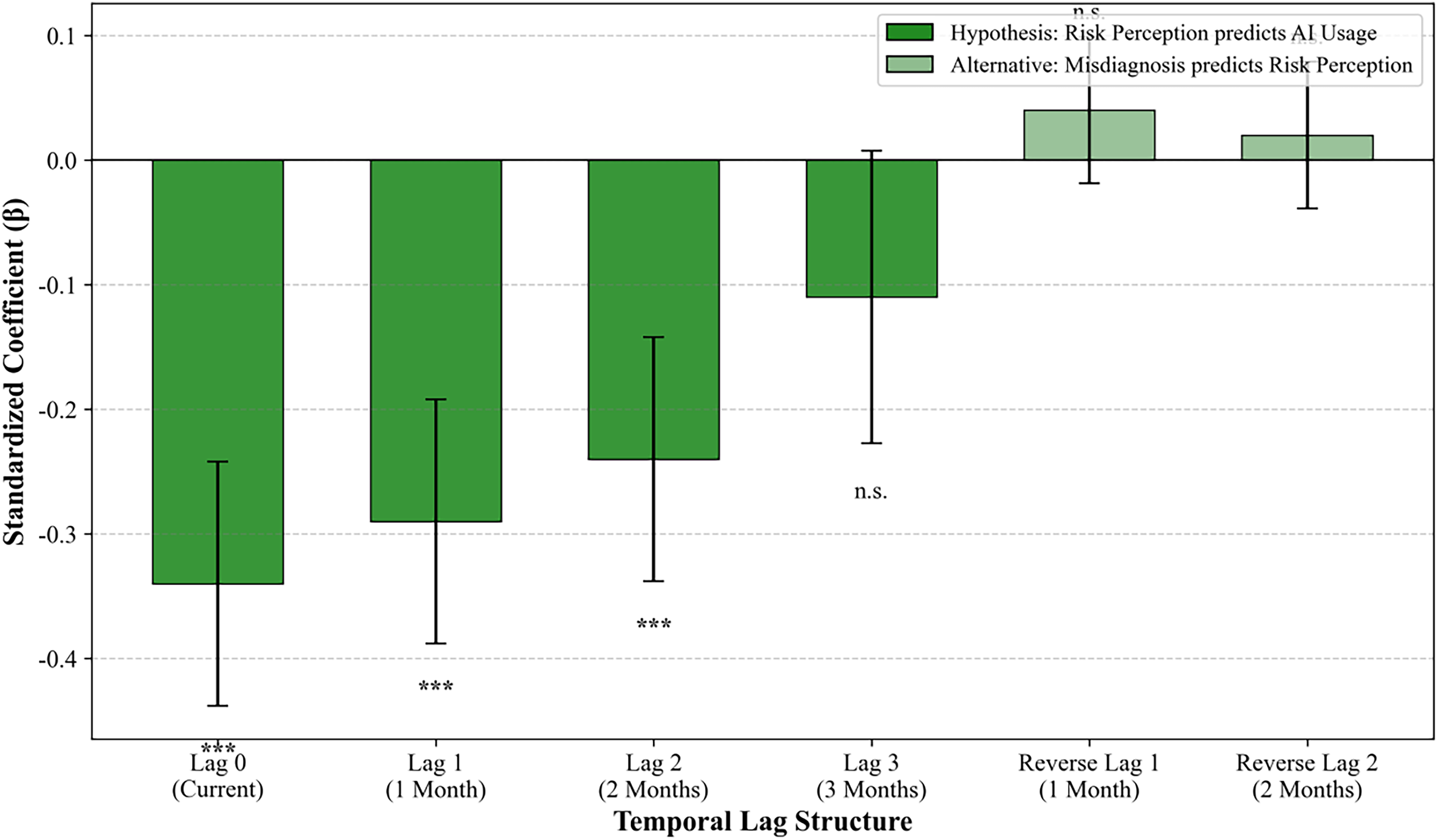

Robustness checks and endogeneity analysis

To address the potential for reverse causality, specifically the concern that high misdiagnosis rates or poor hospital performance might drive public risk perception rather than the inverse, we conducted a Time-Lag Analysis (Table 5; Figure 5). Risk perception at month t significantly predicted AI application frequency at month t + 1 (β = −0.29) and month t + 2 (β = −0.24). Crucially, the Misdiagnosis Rate at month t did not significantly predict risk perception at month t + 1 (β = 0.04, p > 0.10). This temporal precedence supports the directional validity of our model, from public perception to hospital behavior. Additionally, we re-estimated the model including a binary control for “Teaching Hospital Status” to address potential confounders related to case-mix and academic supervision; the core coefficients remained stable, suggesting the observed associations are not artifacts of unmeasured organizational characteristics (Table 5).

Table 5. Robustness check: time-lag analysis (Granger causality framework).

Note: Standard errors in parentheses are clustered at the hospital level. The non-significance of lagged misdiagnosis rates in Model B supports the directional validity of the theoretical model (i.e., risk perception drives adoption behavior, not vice versa). * p < 0.05, ** p < 0.01, *** p < 0.001.

Figure 5. Lagged correlation coefficients and reverse causality test.
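The logic of the lagged test can be sketched for a single hospital's monthly series. This is a deliberately simplified bivariate lagged regression: it omits the outcome's own lags, the control variables, and the hospital-level clustering that a full Granger-style panel specification would include. The series values and function names are hypothetical.

```python
def ols_slope(x, y):
    """Slope of the least-squares line of y on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


def lagged_slope(predictor, outcome, lag=1):
    """Slope of outcome at month t + lag regressed on predictor at month t."""
    if lag < 1 or lag >= len(predictor):
        raise ValueError("lag must be at least 1 and shorter than the series")
    return ols_slope(predictor[:-lag], outcome[lag:])


# Hypothetical monthly risk perception scores for one catchment area.
risk = [3.0, 4.0, 5.0, 4.5, 3.5, 4.0, 5.5, 6.0]
# Toy adoption series built so that adoption at t + 1 depends on risk at t.
adoption = [30.0] + [50.0 - 5.0 * r for r in risk[:-1]]

forward = lagged_slope(risk, adoption, lag=1)   # risk(t) -> adoption(t + 1)
reverse = lagged_slope(adoption, risk, lag=1)   # adoption(t) -> risk(t + 1)
```

Comparing the forward and reverse lagged slopes mirrors the reasoning in the text: a clear forward association alongside a weak reverse one is what is read as temporal precedence.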

To ensure the misdiagnosis rate was not an artifact of coding errors, we analyzed the subset of records subject to double-blind clinical review. The error rate in the audited sample was highly correlated with the automated calculation (r = 0.91), and the inter-rater reliability was high (kappa = 0.88). Sensitivity analysis excluding ambiguous cases yielded consistent structural path coefficients.

Discussion

This study set out to interrogate the sociotechnical chain of custody: how public sentiment regarding artificial intelligence translates into institutional adoption behaviors, and ultimately, how these organizational decisions associate with patient safety outcomes in high-stakes environments. By integrating stratified survey data, social media sentiment indices, and operational records from the Federal District of Brazil, we constructed a cross-level analytical framework rooted in the Technology Acceptance Model but expanded through the lens of Institutional Theory. The findings suggest a complex reality where the “social climate” of risk perception acts as a structural constraint on hospital technology deployment, while the uncritical adoption of AI in specific acute contexts paradoxically correlates with increased diagnostic vulnerability.

Algorithmic aversion as an institutional constraint

Traditional applications of the TAM often conceptualize “perceived usefulness” as a function of technical performance or individual user efficiency. Our findings extend this by suggesting that perceived usefulness is, to a significant degree, socially constructed. The robust negative association between public risk perception and AI application frequency (β = −0.34) suggests that hospital administrators do not operate in a vacuum. Instead, they appear to act as socially responsive agents who modulate technology deployment to align with the prevailing “risk climate” of their catchment area. This is consistent with the theory of Algorithmic Aversion at the institutional level:28 even if an AI tool is technically proficient, the perceived social cost of a potential AI-induced error, amplified by community anxiety regarding “black box” accountability, appears to drive hospitals toward a posture of “defensive non-adoption.” In this sense, risk perception functions not merely as an opinion but as a tangible institutional pressure that compromises the legitimacy of technological integration.

The automation bias trap in acute care

Perhaps the most counterintuitive, yet clinically critical, finding is the positive association between AI application frequency and the misdiagnosis rate for our tracer condition (β = 0.28). We interpret this through the lens of Automation Bias.34 Testicular Torsion requires immediate surgical intervention, and its diagnosis via Doppler Ultrasound is subtle. The data suggest that in environments characterized by high-frequency AI usage, clinicians may subconsciously rely on the algorithm's “negative” probability score as a definitive rule-out, thereby bypassing the more time-consuming manual verification or physical examination. This phenomenon, known in cognitive ergonomics as an “omission error,” underscores a critical boundary condition for digital health: high-frequency adoption does not automatically translate into quality improvement. In acute triage settings, if human-in-the-loop vigilance degrades due to over-reliance on automated flags, the efficiency gains of AI may be negated by a concomitant rise in false negatives. This finding supports the selection of Testicular Torsion as a tracer condition; its binary surgical outcome allowed us to capture subtle safety signals that might otherwise be lost in the noise of general administrative reporting.

Contextual moderators: trust and resource dependence

Our moderator analysis reveals how social and spatial contexts reshape the adoption curve. We found that high Public Trust weakens the inhibitory effect of risk perception. This suggests that trust acts as a form of social capital that buys hospitals leeway to innovate. In high-trust environments, the public appears more willing to grant hospitals the “license to operate” innovative technologies, viewing potential risks as manageable rather than catastrophic. Conversely, the observation that Geographical Remoteness dampens sensitivity to risk perception provides empirical support for Resource Dependence Theory.33 In the remote regions of the Federal District, where specialist radiologists are scarce, the utility of AI is not measured by marginal efficiency but by fundamental accessibility. For these hospitals, the imperative to provide diagnostic services outweighs the luxury of social caution. This highlights a dual trajectory in digital health: urban hospitals' adoption is constrained by sociopolitical legitimacy, while rural adoption is driven by functional necessity. This provides insights for policy innovation: in regions with scarce medical resources, priority can be given to deploying AI-assisted diagnosis and treatment tools, implementing structured AI training programs,35 and even addressing physician shortages through tele-reviews and inter-hospital collaboration. Simultaneously, dedicated AI application support funds should be established for hospitals in remote areas to subsidize their maintenance and review costs, preventing a surge in misdiagnosis rates caused by “over-reliance due to resource scarcity.” In other words, a balance should be struck between encouraging adoption and controlling risk.

Methodological implications and the ecological linkage

Methodologically, this study responds to the critique of “ecological fallacy” often leveled at cross-level research. We argue that linking aggregate community sentiment to hospital-level outcomes is not a fallacy but a reflection of the institutional environment. Hospitals are open systems permeable to the pressures of their communities; thus, the aggregated risk perception is a valid proxy for the institutional pressure faced by administration. Furthermore, the use of the “Tracer Condition” approach offers a replicable template for future research. By anchoring our safety metrics to a condition with an indisputable surgical endpoint, we circumvented the variability of administrative coding that plagues general misdiagnosis studies. The high concordance between our survey-based risk metrics and the NLP-derived Social Media Risk Index further validates the use of digital footprint data as a real-time sensor for health policy analysis.
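The linkage logic can be illustrated with a minimal sketch, assuming each survey respondent and each hospital-month record carries a catchment identifier. The field names (`catchment`, `risk_score`, `ai_freq`) and the toy values are hypothetical and are not taken from the study data; the sketch shows only the aggregate-then-merge step, not the full protocol.

```python
from collections import defaultdict
from statistics import mean


def aggregate_risk_by_catchment(survey_rows):
    """Average individual risk-perception scores within each catchment area."""
    scores = defaultdict(list)
    for row in survey_rows:
        scores[row["catchment"]].append(row["risk_score"])
    return {area: mean(values) for area, values in scores.items()}


def link_to_hospital_panel(panel_rows, catchment_risk):
    """Attach the catchment-level mean to each hospital-month record."""
    linked = []
    for row in panel_rows:
        risk = catchment_risk.get(row["catchment"])
        if risk is None:
            # No survey coverage for this catchment: drop rather than impute.
            continue
        linked.append({**row, "agg_risk": risk})
    return linked


# Hypothetical toy data (not study data).
survey = [
    {"catchment": "A", "risk_score": 4.0},
    {"catchment": "A", "risk_score": 2.0},
    {"catchment": "B", "risk_score": 5.0},
]
panel = [
    {"hospital": "H1", "month": "2023-01", "catchment": "A", "ai_freq": 120},
    {"hospital": "H2", "month": "2023-01", "catchment": "B", "ai_freq": 40},
    {"hospital": "H3", "month": "2023-01", "catchment": "C", "ai_freq": 75},
]

linked = link_to_hospital_panel(panel, aggregate_risk_by_catchment(survey))
for record in linked:
    print(record["hospital"], record["agg_risk"])
```

Dropping hospitals whose catchment has no survey respondents is one possible design choice; a real analysis would need to justify how such gaps are handled.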

Practical implications

The positive link between AI frequency and misdiagnosis in acute cases serves as a warning. Implementing AI must be accompanied by vigilance training. Accordingly, clinical protocols should explicitly require that AI-generated negative results for acute symptomatic patients undergo manual validation, both to avoid automation bias and to address the multiple uncertainties AI brings. 35 Medical facilities could be required to demonstrate the availability of AI supervision protocols, formulate graded disposal guidelines for AI risk scores, or establish an ethics committee before obtaining operational licenses. 35–38 Additionally, physicians can adopt a human-AI collaborative diagnosis strategy, in which clinical experts openly explain AI recommendations after they are generated, to enhance patients’ sense of control over the system. The perception of AI among frontline clinical staff is a key link between public risk perception and hospital technical decisions. 38 Consistent with the logic of our study that “risk perception is a product of interaction between social psychology and clinical norms,” this suggests that hospitals need to attach importance to the communication role of healthcare providers when formulating AI application strategies. Improving providers’ AI-related communication capabilities through standardized training can not only reduce public anxiety about “black-box algorithms” but also build more reasonable risk expectations in bedside interactions, thereby alleviating the excessive constraint of risk perception on AI adoption. These measures not only reduce the risk of public opinion crises but also promote the standardized application of AI-enabled healthcare at the policy level. It could also be mandated that AI developers ensure their products meet legally specified safety standards, minimizing diagnostic errors in AI system design, 37 because the technical robustness and clarity of AI applications are a prerequisite for the trust and acceptance that stakeholders show toward this technology. 32

Furthermore, as indicated by our time-lag analysis, risk perception exerts an impact on hospital behavior with a 1–2 month lag. Based on this, health authorities can leverage social media monitoring to detect surges in algorithmic anxiety and implement transparency campaigns to intervene before hospitals adopt defensive responses.
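As a rough illustration of how such a lead-lag signal might be screened in monitoring data, the sketch below correlates a monthly risk index with AI usage shifted by a given number of months. It is a descriptive cross-correlation under stated assumptions, not the time-lag analysis used in this study; the function names and the toy series are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def lagged_correlation(risk, ai_freq, lag):
    """Correlate risk in month t with AI frequency in month t + lag."""
    if lag < 0 or lag >= len(risk) - 1:
        raise ValueError("lag must leave at least two paired observations")
    if lag == 0:
        return pearson(risk, ai_freq)
    return pearson(risk[:-lag], ai_freq[lag:])


# Hypothetical toy series: AI usage mirrors the previous month's risk index.
risk_index = [1, 3, 2, 5, 4, 6, 3, 2]
ai_usage = [7, 9, 7, 8, 5, 6, 4, 7]

correlations = {lag: lagged_correlation(risk_index, ai_usage, lag) for lag in range(4)}
strongest_lag = max(correlations, key=lambda lag: abs(correlations[lag]))
print(strongest_lag, round(correlations[strongest_lag], 3))
```

In practice, a screening step of this kind would only flag candidate lags; seasonality, autocorrelation, and confounding would still need to be addressed before drawing any inference.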

Limitations and future research

First, while the time-lag analysis supports the directionality of our model, the observational nature of the data precludes definitive causal claims. Unmeasured confounders, including case-mix variations, unrecorded hospital safety initiatives, or regional differences in clinical training, may account for part of the observed associations.

Second, our findings regarding “Automation Bias” are specific to the tracer condition of Testicular Torsion, an acute, binary outcome scenario. These dynamics may differ significantly for chronic conditions like diabetic retinopathy, where AI serves as a long-term risk stratifier rather than an immediate triage gatekeeper. Future research should test this model across different disease archetypes.

Third, the “Social Media Risk Index” inherently over-represents younger, urban populations. Although we triangulated this with a representative survey, the digital discourse reflects “vocal” risk perception rather than “universal” risk perception. However, in the context of policy pressure, it is often the vocal minority that drives institutional change.

Finally, while our model links macro-level sentiment to meso-level adoption, we acknowledge the presence of unmeasured intermediate administrative variables, such as specific IT budget allocations and internal procurement protocols, which may co-vary with social pressure. Future research utilizing qualitative administrative interviews is required to map these internal decision-making pathways.

Conclusion

This study illuminates the hidden sociotechnical currents that guide medical AI adoption. The empirical results yield three critical insights. First, public risk perception acts as a powerful institutional constraint, effectively throttling technology deployment. Second, we reveal a “Safety Paradox” of digital health: where social pressure is low or necessity is high, the uncritical integration of AI in acute settings may compromise diagnostic vigilance. Third, achieving safe AI integration requires addressing both the social fear of the algorithm and the clinical over-reliance on it.

Supplemental Material

Supplemental material, sj-pdf-1-dhj-10.1177_20552076261425375 for Public risk perception, institutional AI adoption, and diagnostic safety: An exploratory cross-level analysis using a tracer condition approach by Zhichao Li, Mengxue Lyu and Jilin Huang in DIGITAL HEALTH

Footnotes

Ethical approval

This study was conducted in strict accordance with the Declaration of Helsinki. The survey component involving human participants was reviewed and approved by the Institutional Review Board of SJT-No.374. Informed consent was obtained digitally from all survey respondents prior to data collection. The hospital data component utilized de-identified, retrospective administrative records, for which a waiver of consent was granted by the Ethics Committee of the Federal District Health Department, provided that data anonymity was maintained.

Author contribution

Zhichao Li: Conceptualization, Funding acquisition, Methodology, Project administration, Supervision, Writing - original draft, Writing - review & editing

Mengxue Lyu: Writing - original draft, Writing - review & editing

Jilin Huang: Conceptualization, Data curation, Formal analysis, Funding acquisition, Validation, Visualization, Writing - original draft, Writing - review & editing

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the Major Project of the National Social Science Fund of China, “A Study on Urban Composite Risks and Their Governance Based on Large-Scale Survey Data” (Grant No. 23&ZD144).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Disclosure of AI tool use

We declare that artificial intelligence tools were only utilized for spelling correction and formatting checks during the manuscript preparation process, and no AI tools were employed in content creation, data analysis, or any other core research components.

Supplemental material

Supplemental material for this article is available online.
