Abstract

Smartphones with their rising popularity and versatile software ‘apps’ have great potential for revolutionising healthcare services. However, this was soon overshadowed by concerns highlighted by many studies over quality. These were subject and/or discipline specific and mostly evaluated compliance with a limited number of information portrayal standards originally devised for health websites. Hence, this study aimed to take a broader approach by evaluating the most popular apps categorised as medical in the United Kingdom for compliance with all of those standards systematically using the Health On the Net (HON) Foundation principles.

The study evaluated the top 50 free and paid apps in the ‘medical’ category of both the iTunes and Google Play stores for evidence of compliance with an app-adapted version of the HON Foundation code of conduct. The sample included 64 apps, of which 34/64 (53%) were on Google Play and 36/64 (56%) were free. None of the apps complied with all eight principles. Compliance with seven principles was achieved by only one app (1.6%), and most of the rest were compliant with three, two, or one (14.7%, 27%, and 38%, respectively).

In conclusion, this study demonstrated that the most popular apps in the medical category available in the United Kingdom do not meet the standards for presenting health information to the public, and this is consistent with earlier studies. Improving the situation would require raising public awareness, providing tools that assist in quality evaluation, encouraging developers to use robust development processes, and facilitating collaboration and engagement among the stakeholders.

Introduction

Healthcare services are facing monumental challenges, ranging from increasing costs, budget cuts, a growing ageing population, and an increasing burden of chronic illness to issues with health service inequality and pressures to improve the quality of care. 1 The situation has even reached apparent crisis proportions, as revealed in the Francis report, 2 where, among the various recommendations made, ‘information’ was identified as key to improving services. This was followed by guidance from the Academy of Royal Colleges, outlining how information technology (IT) could be harnessed by healthcare services to improve access, efficiency, and quality. 3 The concept of using IT solutions in healthcare is not new, though, and such solutions have often faced various implementation obstacles, such as customisation issues, the lack of advocacy by healthcare professionals, and accessibility problems. 4

In this context, smartphones with their rising popularity 5 and versatile portable software known as ‘apps’ have great potential for overcoming these hurdles 6 and could be a game changer. Nevertheless, the hype was soon overshadowed by negative reports of apps providing the wrong information, 7 making false claims, 8 content plagiarism accusations, 9 and potential for security and privacy breaches. 10

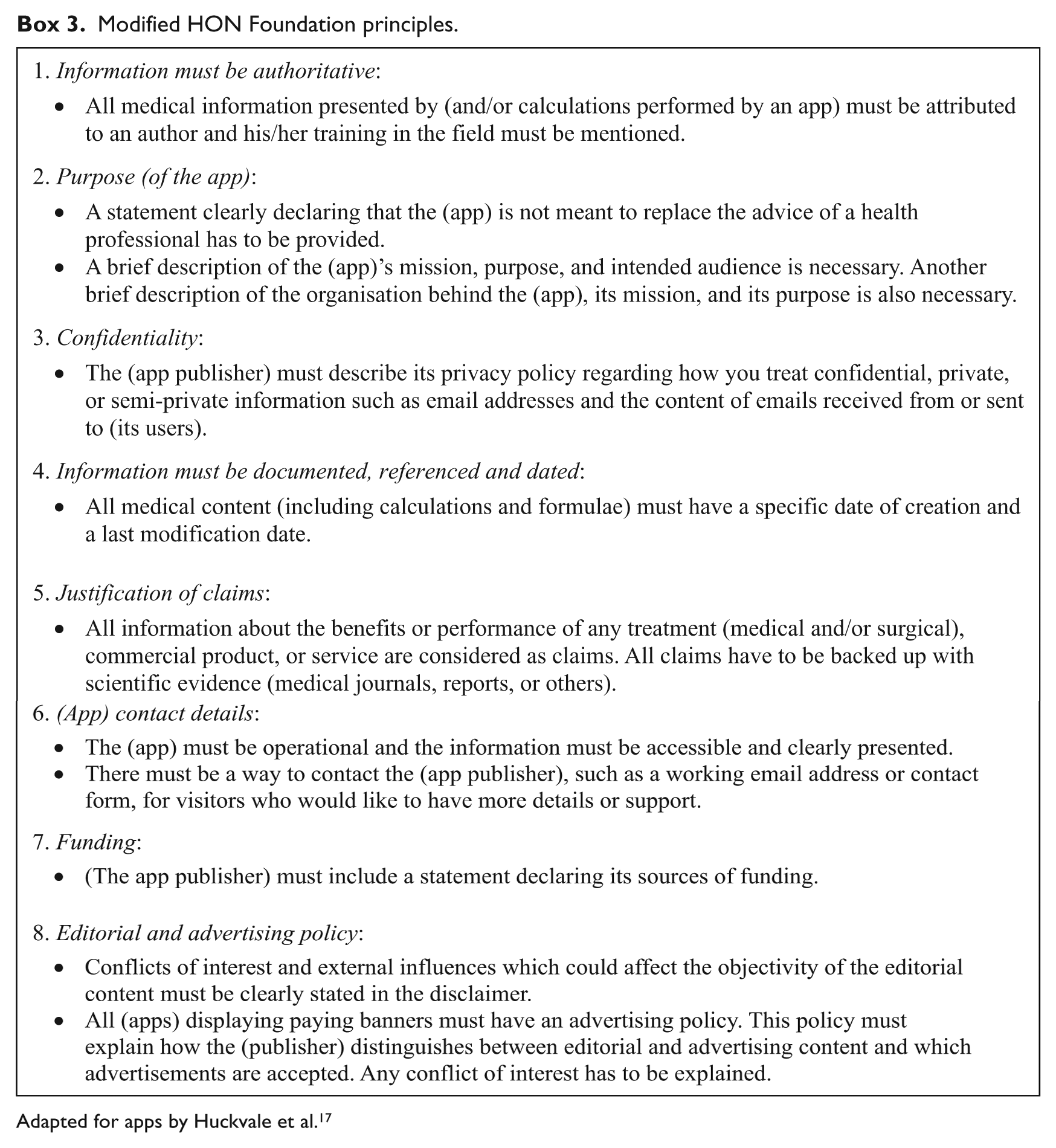

Moreover, studies that evaluated various aspects of medical apps directed towards healthcare professionals and the public found them to be of variable quality and mostly poor.8,11–22 The majority of these evaluated compliance with a limited number of information portrayal standards originally devised for health websites, namely, authorship,14–16,18–21 consistency with professional guidelines,11,19 source attribution,15,23 purpose, 23 content currency,19,24 security, and privacy. 12 The only study that systematically evaluated all of those principles was that by Huckvale et al. 17 on ‘asthma’ apps, which utilised the Health On the Net (HON) Foundation principles. The HON Foundation is an international, not-for-profit health website accreditation initiative based in Geneva. 25 Its principles have objective standards that are easy to use and widely applicable. 26 The value of evaluating adherence to information portrayal standards lies in the fact that they are based on widely accepted ethical principles, they help reduce misunderstanding by clarifying the context, and they empower users to validate and select suitable information for themselves. 27

This study, hence, aimed to take a broader approach by evaluating the most popular apps categorised as medical in the United Kingdom for compliance with all of those standards systematically by using the HON Foundation principles.

Previous studies on the quality of medical apps

Studies that evaluated the quality of medical apps were subject and/or discipline specific. Topics covered were smoking cessation, 11 colorectal surgery, 18 dermatology,8,16 cardiothoracic surgery, 14 asthma, 17 opioid conversion, 15 alcohol consumption, 22 infectious disease, 24 microbiology, 21 medication self-management, 13 pain self-management,19,20 insulin dose calculation, 23 and medical app security. 12

Quality aspects evaluated were authorship,14–21 consistency with professional guidelines,11,17,19 source attribution,15,17,23 purpose,17,23 functionality testing,8,15,19,22,23 content currency,17,19,24 download popularity,11,19,23 user feedback,13,19,22 confidentiality,12,17 and compliance with principles of the HON Foundation, which encompasses authorship, attribution, purpose, content justifiability, confidentiality, and currency. 17

Healthcare professional input in app creation ranged from 14 per cent of all apps surveyed 20 to 48 per cent. 15 Huckvale et al. 23 showed that 59 per cent of apps in their study had a clinical disclaimer. The frequency of citing references in apps, on the other hand, ranged from 25 per cent of all apps surveyed in the study by Huckvale et al. 17 to 52 per cent in that by Haffey et al. 15 Moreover, compliance with HON Foundation principles was found to be poor, with 55 per cent of apps having their developer contact details available, 18 per cent disclosing their funding source, and 17 per cent having a confidentiality policy. 17 Furthermore, Albrecht et al. 12 highlighted serious security and privacy issues in their study, where half of the apps lacked encryption or anonymity, data transfer was performed without user acknowledgement, and the content of privacy statements was ambiguous.

Methodology and study design

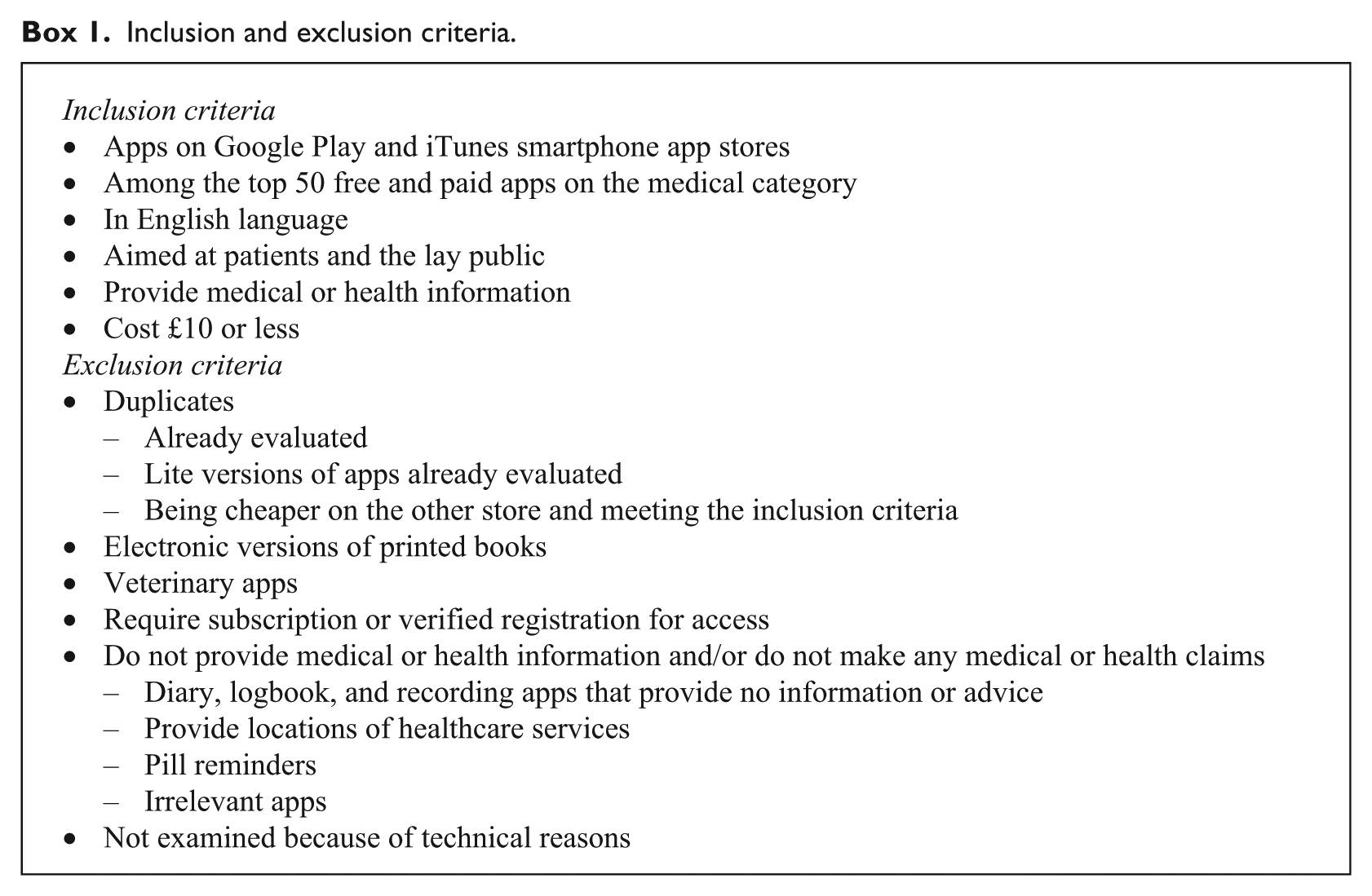

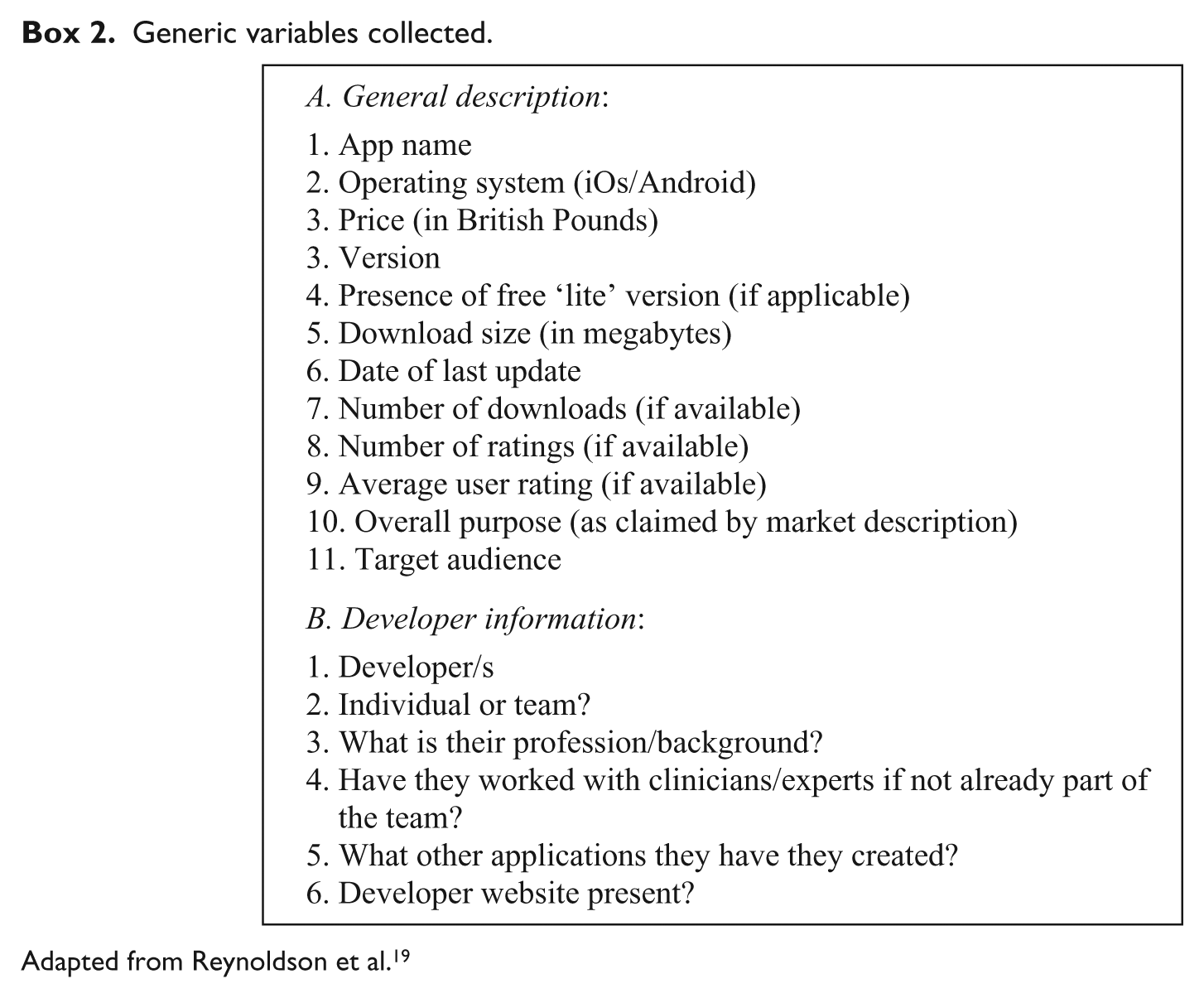

This was an observational cross-sectional study that entailed three stages. The first was capturing the top 50 free and paid smartphone apps in the ‘medical’ category of the Apple iTunes (Apple Inc, Cupertino, CA) and Google Play (Google, Mountain View, CA) UK stores on a personal computer using the print screen function, and subsequently selecting the sample after applying the inclusion and exclusion criteria (Box 1). The second stage entailed obtaining characteristic descriptive information from the promotional page of the selected apps (Box 2). The third stage involved evaluating the app promotional page, the developer website if available, and the app itself after installation for evidence of compliance with an app-adapted version of the HON Foundation principles used previously by Huckvale et al. 17 (Box 3).

Box 1. Inclusion and exclusion criteria.

Box 2. Generic variables collected.

Adapted from Reynoldson et al. 19

Box 3. Modified HON Foundation principles.

Adapted for apps by Huckvale et al. 17

Methods

Sampling method

The apps of interest were obtained from the Apple iTunes and Google Play UK stores, as these are the two dominant smartphone platforms worldwide. 28 The app stores accessed were those of the United Kingdom, as this was the geographical location of the study. Sampling was purposeful, as the aim was to curate apps with particular characteristics. 29 The sample included free and paid apps to ensure fair representation of both types. The ‘medical’ category of both stores was chosen on the assumption that it would contain apps that are taken more seriously. It is worth noting, though, that the ‘medical’ category also contains non-relevant apps, 30 owing to the loose regulation of categorisation within app stores, as developers often list their apps in multiple categories to reach wider audiences.10,24,30,31

Apps were selected from among the top 50 to ensure that those sampled were the ones most in demand. To be among the top 50 apps in the US iTunes store, for example, a free app would require at least 23,000 downloads a day, while a paid app would require 950. 32 Additionally, since most app users today are becoming less willing to pay, 33 an arbitrary price cap of £10 was applied. Furthermore, the target audience for the sampled apps was patients and the lay public, on the assumption that these two groups are more vulnerable to false information than healthcare professionals.

Funding and ethical approval

This study was self-funded, and purchased apps were subsequently uninstalled and refunded after evaluation. Apps are commercially available and therefore constitute data available in the public domain, hence no ethical approval was required.

Quality assessment standard

For each selected app, the store promotional page, the developer website if available, and the app itself were checked for evidence of content compliance with an app-adapted version of the HON Foundation principles used by Huckvale et al. 17 (Box 3).

It is worth noting that the final decision on principle compliance is ultimately made by HON Foundation experts prior to accreditation, 34 and there is currently no information on the validity and reliability of any HON assessment tools used for websites35,36 or apps. However, the Foundation encourages developers and users to be aware of its standards and has even designated an online form to assist with compliance assessment, 37 thus one could reasonably argue that evaluating compliance with the HON Foundation standards does have face validity.

Compliance was checked by the author, who is a health informatics master’s degree student at Swansea University. An app was deemed non-compliant with a given HON Foundation principle if the promotional page, developer website, and the app content itself did not fulfil all the applicable criteria. Moreover, in case of doubt or a lack of clarity on whether an app was compliant, the app was designated as non-compliant.
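The conservative decision rule described above can be illustrated with a short sketch. This is not the instrument used in the study; the function name, the use of `None` to represent a doubtful judgement, and the handling of an empty criteria list are all assumptions made for the purpose of the example.

```python
from typing import Optional, Sequence


def is_compliant(criteria: Sequence[Optional[bool]]) -> bool:
    """Return True only if every applicable criterion is clearly met.

    Each entry is the assessor's judgement for one applicable criterion
    of a single principle: True (met), False (not met), or None
    (doubtful or unclear). Encoding doubt as None is an assumption of
    this sketch, not something taken from the study protocol.
    """
    if not criteria:
        # Assumption: with nothing to assess, err towards non-compliance.
        return False
    # Any False or None judgement makes the app non-compliant.
    return all(judgement is True for judgement in criteria)
```

Under this rule a single unclear criterion is enough to mark the principle as not complied with, which is why the approach sets a high bar for compliance.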

Data collection and analysis

Free access to the app stores was obtained using a personal computer with the Microsoft Windows 7 operating system (Microsoft, Inc., Redmond, WA) via the Apple iTunes portal software (version 12.1.2) and the Google Play website viewed in a Google Chrome browser (version 43.0.2357.81 m). Screenshots of the app store sections containing the required sample were captured on 27 May 2015. The eligibility criteria were applied, and the generic information of the selected apps was gathered from the promotional page on the store. Compliance was recorded in a Microsoft Excel 2013 spreadsheet in dichotomous form (yes/no) for each of the eight principles, akin to the study by Huckvale et al. 17
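As an illustration of how such dichotomous records can be summarised, the following sketch tallies, for a set of yes/no records, the percentage of apps complying with each principle and the number of principles met by each app. The principle labels follow those used in the Results; the app names, the record layout, and the helper functions are hypothetical and do not reproduce the study spreadsheet.

```python
from collections import Counter

# Principle labels as they are named in this article.
PRINCIPLES = (
    "authoritative",
    "purpose",
    "confidentiality",
    "attribution/currency",
    "justifiability",
    "transparency",
    "financial disclosure",
    "advertising and editorial policy",
)


def record(*met):
    """Build one app's yes/no record (hypothetical helper)."""
    return {p: ("yes" if p in met else "no") for p in PRINCIPLES}


def summarise(records):
    """Return (percentage compliant per principle, Counter of principles met per app)."""
    n = len(records)
    per_principle = {
        p: 100 * sum(r[p] == "yes" for r in records.values()) / n
        for p in PRINCIPLES
    }
    met_counts = Counter(
        sum(r[p] == "yes" for p in PRINCIPLES) for r in records.values()
    )
    return per_principle, met_counts


# Hypothetical two-app example, not study data.
example = {
    "app_a": record("transparency"),
    "app_b": record("transparency", "purpose"),
}
per_principle, met_counts = summarise(example)
```

Here `per_principle["purpose"]` is the share of apps meeting the ‘purpose’ principle, and `met_counts[2]` is the number of apps that met exactly two principles.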

The apps were downloaded and installed on two personally owned devices. The iPhone apps from Apple iTunes were installed on an Apple iPad 2 tablet computer running iOS version 7.1, whereas the Google Play smartphone apps were tested on a Samsung Galaxy Note 3 device (Samsung Electronics Co., Suwon, Korea) running Google Android Lollipop version 5.0.

Statistics

Summary statistics were calculated using Microsoft Excel 2013 with the Analysis ToolPak add-in. Fisher’s exact and Spearman’s rank tests were performed using IBM SPSS Statistics 22 (IBM, Inc., Armonk, NY).
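For readers unfamiliar with Spearman’s rank correlation, the sketch below shows what the coefficient measures: the Pearson correlation of the ranked values, with tied values sharing their average rank. It is a plain standard-library illustration and not the SPSS procedure used in the study; the function names are chosen for the example only.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1..n, giving tied values their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def spearman(x, y):
    """Spearman's rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))
```

Handling ties matters in this setting because a dichotomous (yes/no) compliance variable consists almost entirely of tied values.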

Results

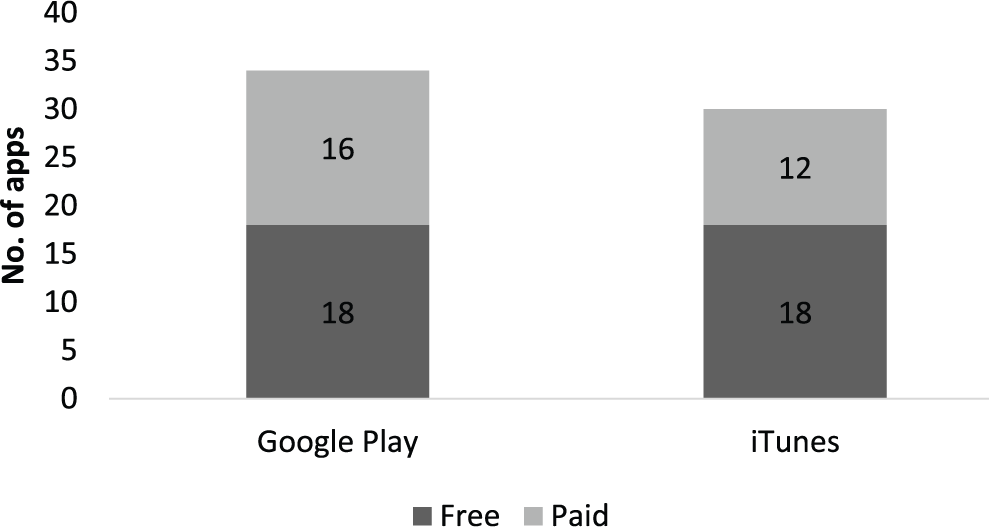

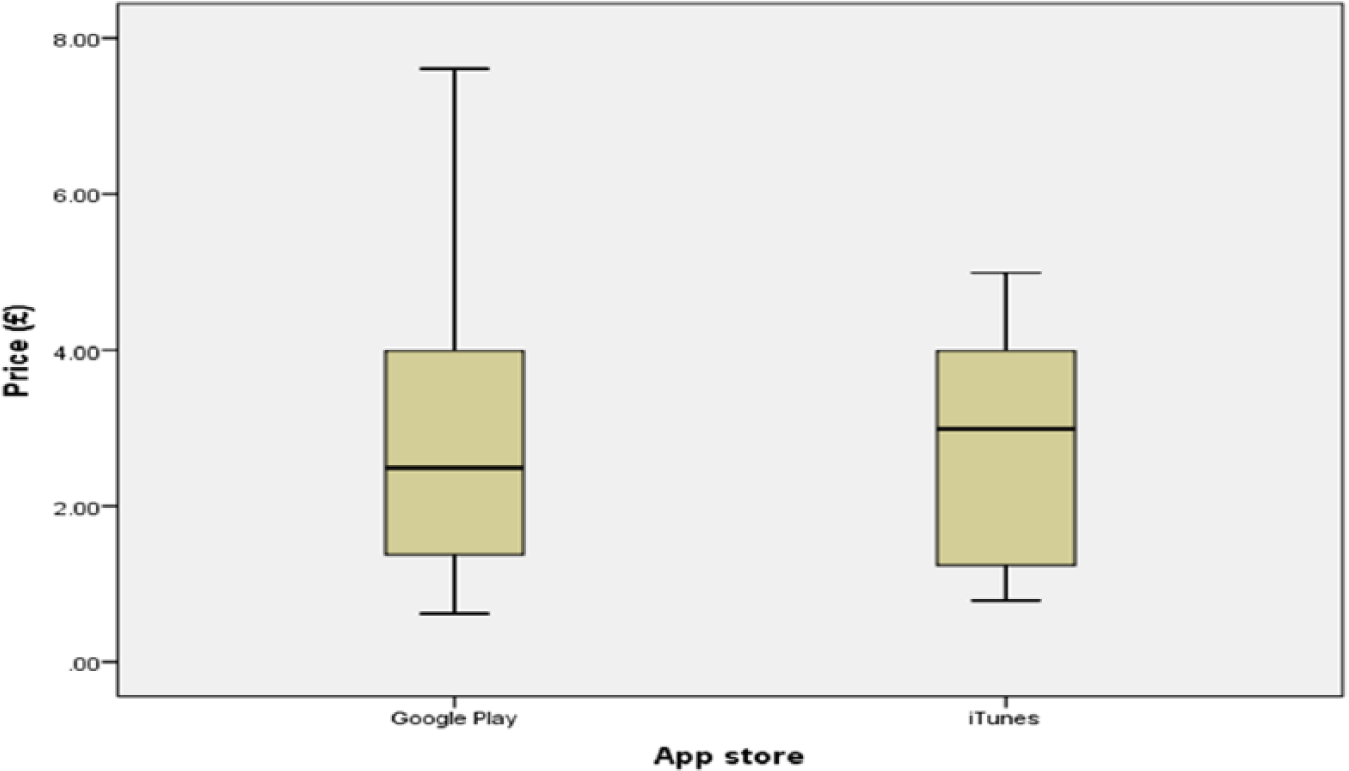

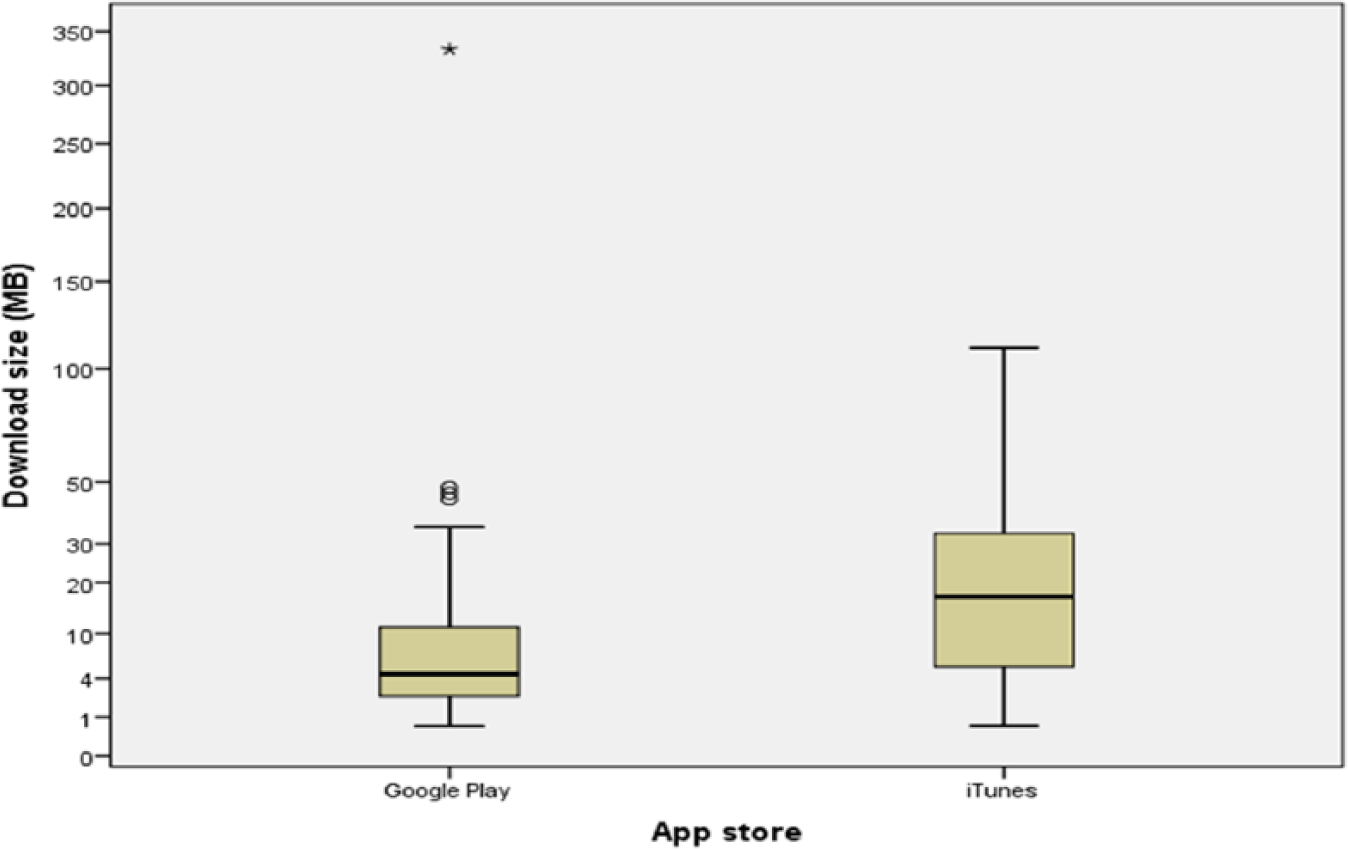

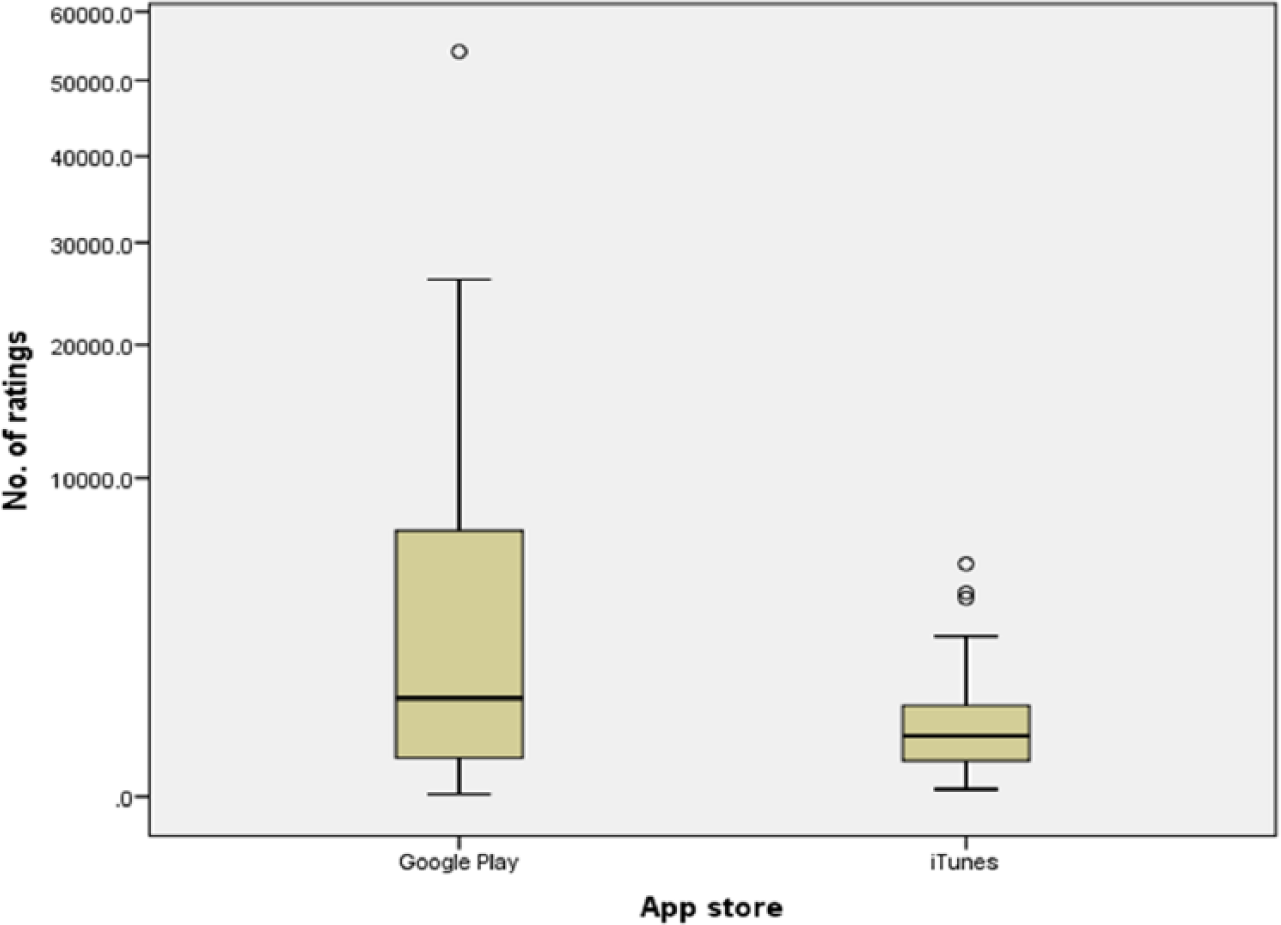

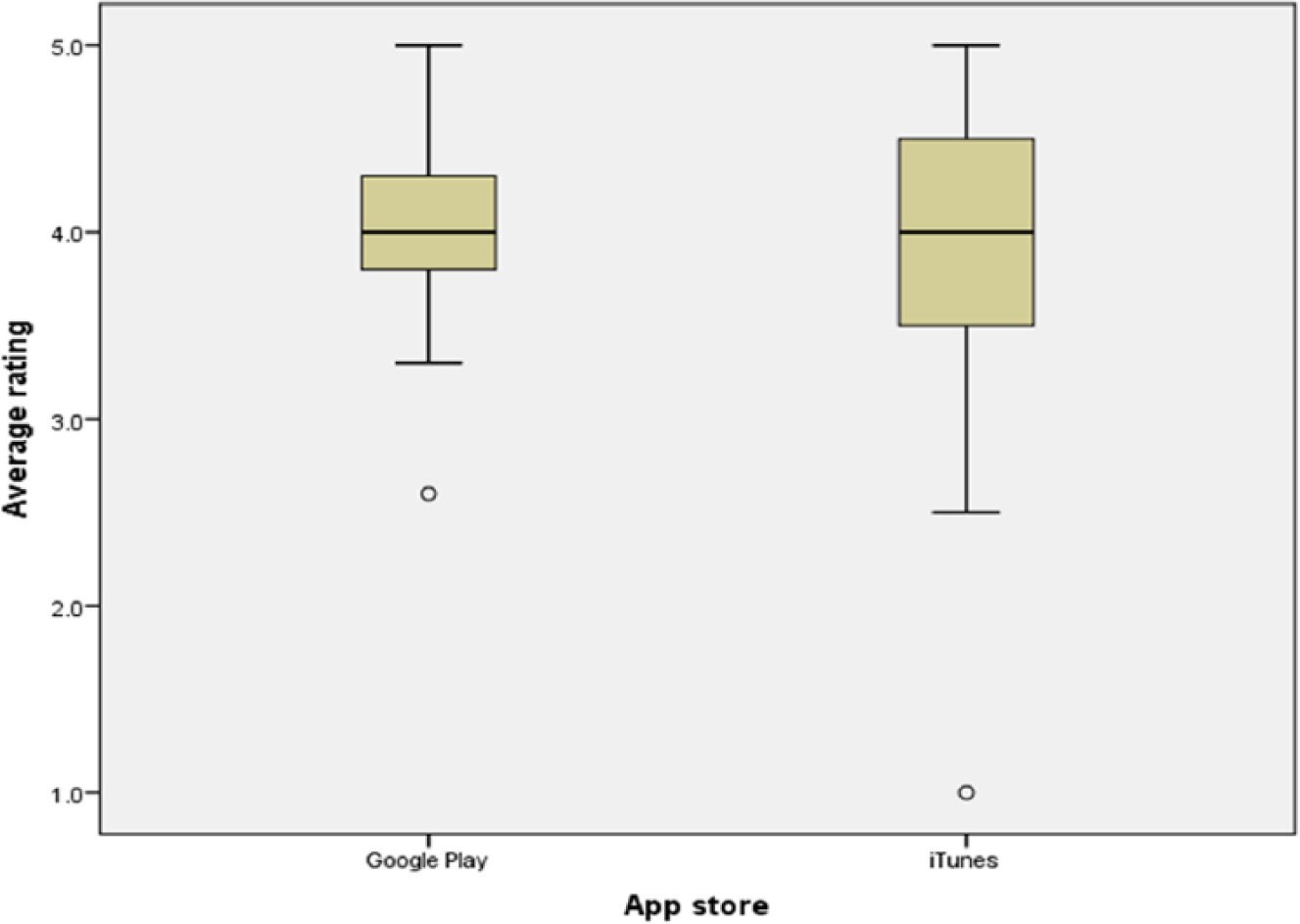

The study sample comprised 64 apps, of which 34/64 (53%) were from Google Play and 36/64 (56%) were free (Figure 1). The median price was £2.74 (range, £0.62–£7.61; Figure 2), and the median download size was 7.15 MB (range, 0.594–333 MB; Figure 3). Last update dates ranged from November 2008 to June 2015. The number of ratings received ranged from 2 to 54,093, and the median individual app rating was 4.1/5 (range, 1–5; Figures 4 and 5).

Figure 1. App sample (n = 64).

Figure 2. App prices.

Figure 3. Download sizes.

Figure 4. Number of ratings.

Figure 5. Average rating.

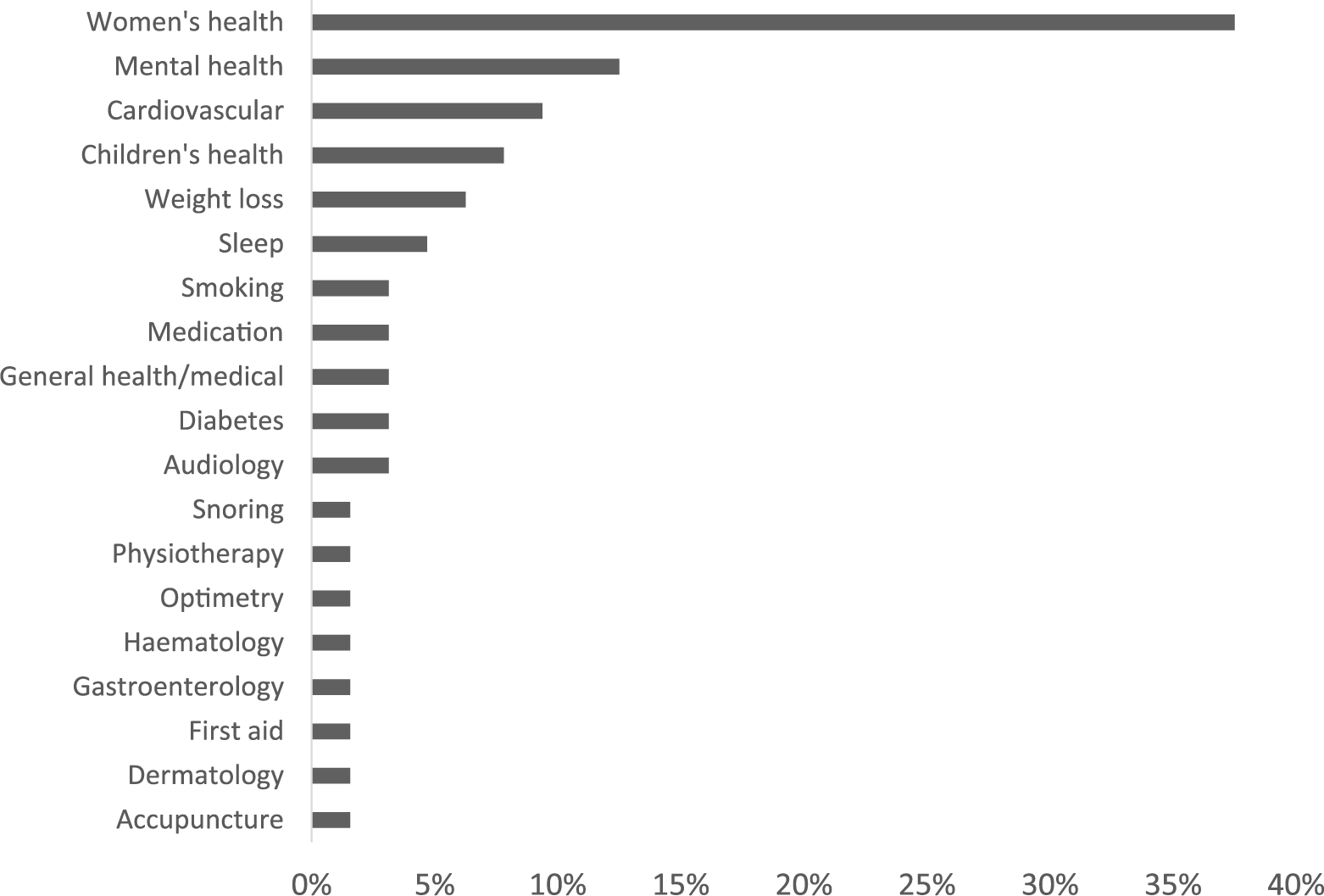

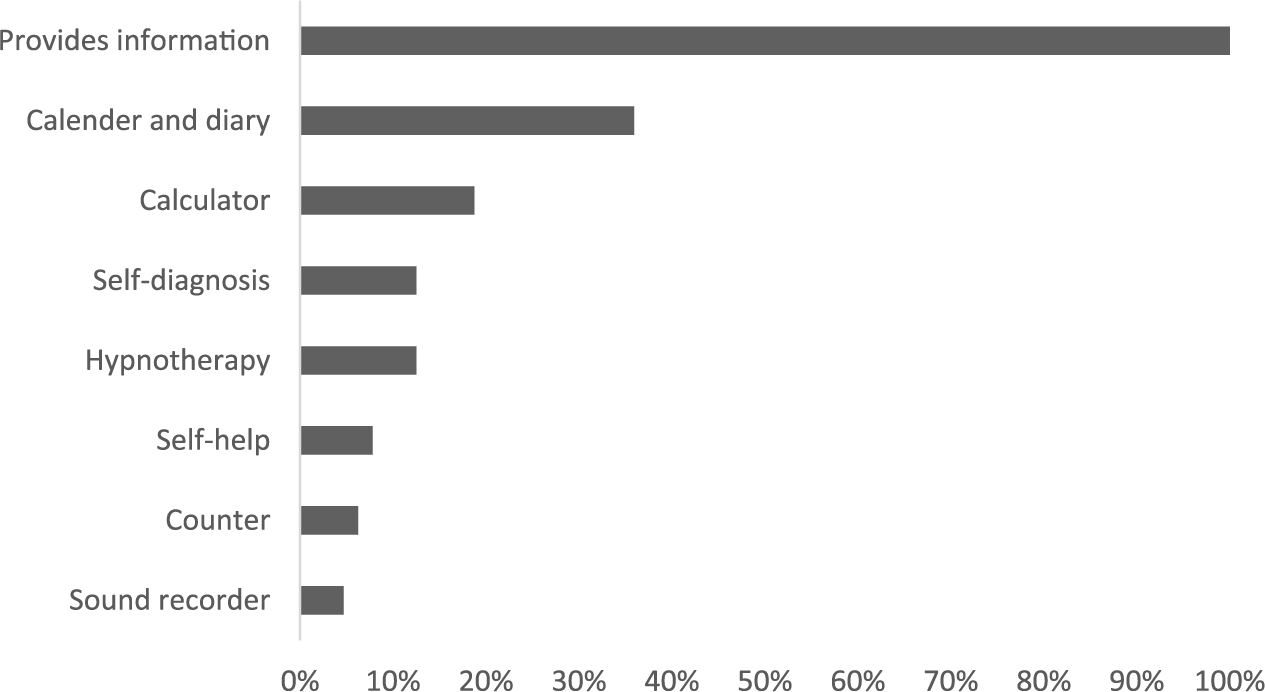

The most common app themes were women’s health (24/64 (38%)) and mental health (8/64 (13%); Figure 6). The most common app functions were providing medical or health information to the public (a study inclusion criterion) (64/64 (100%)), calendars and diaries (23/64 (36%)), calculators (12/64 (19%)), self-diagnosis (8/64 (13%)), and hypnotherapy (8/64 (13%); Figure 7). Most apps were developed by a team (38/64 (59%)), 2/64 (3%) were developed by an individual, and 24/64 (38%) had no developer information.

Figure 6. App themes.

Figure 7. App functions.

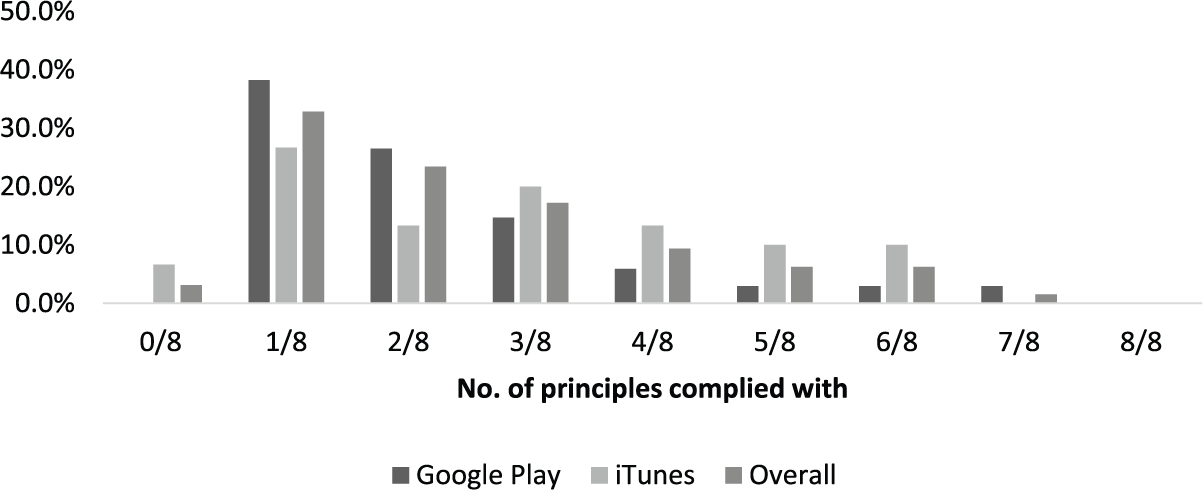

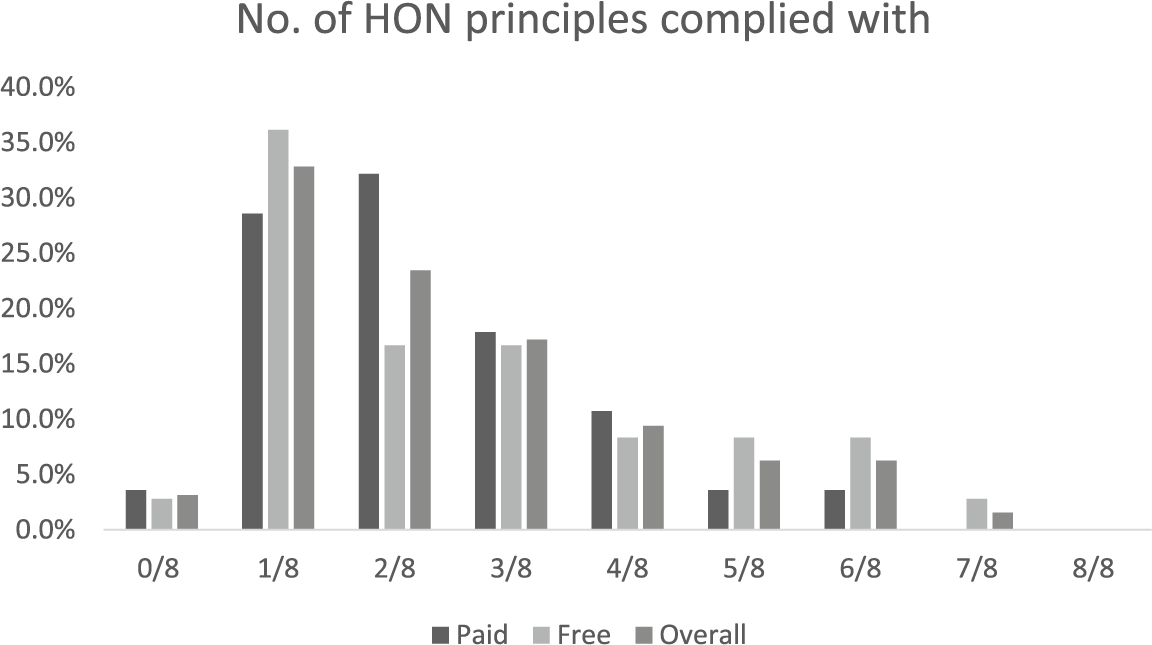

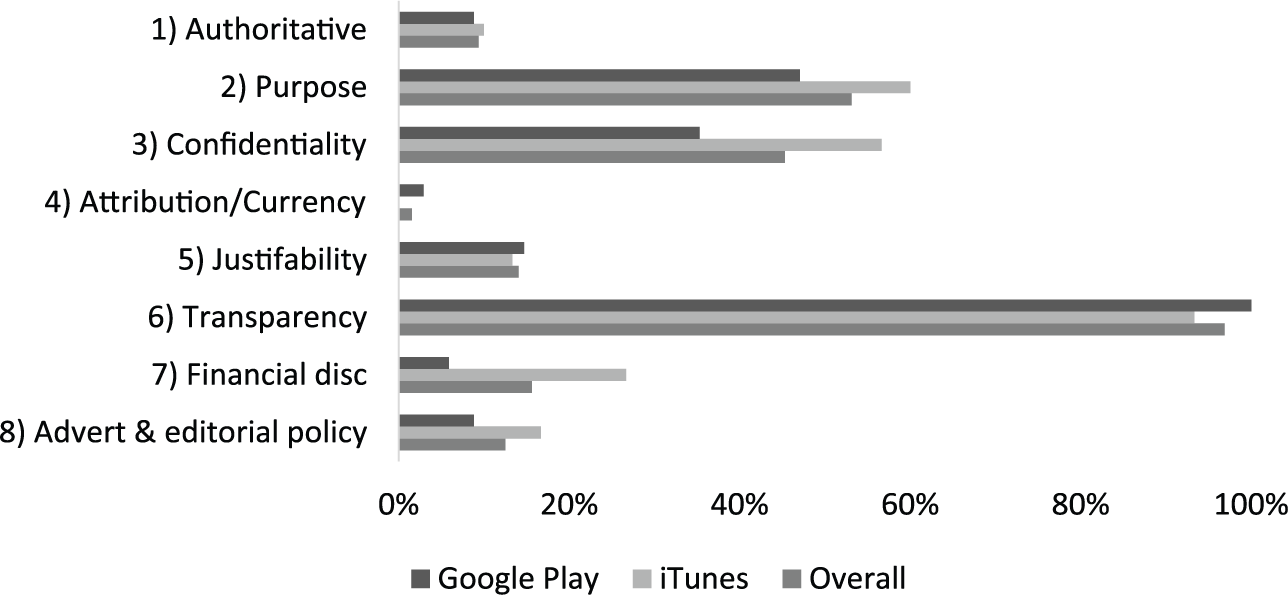

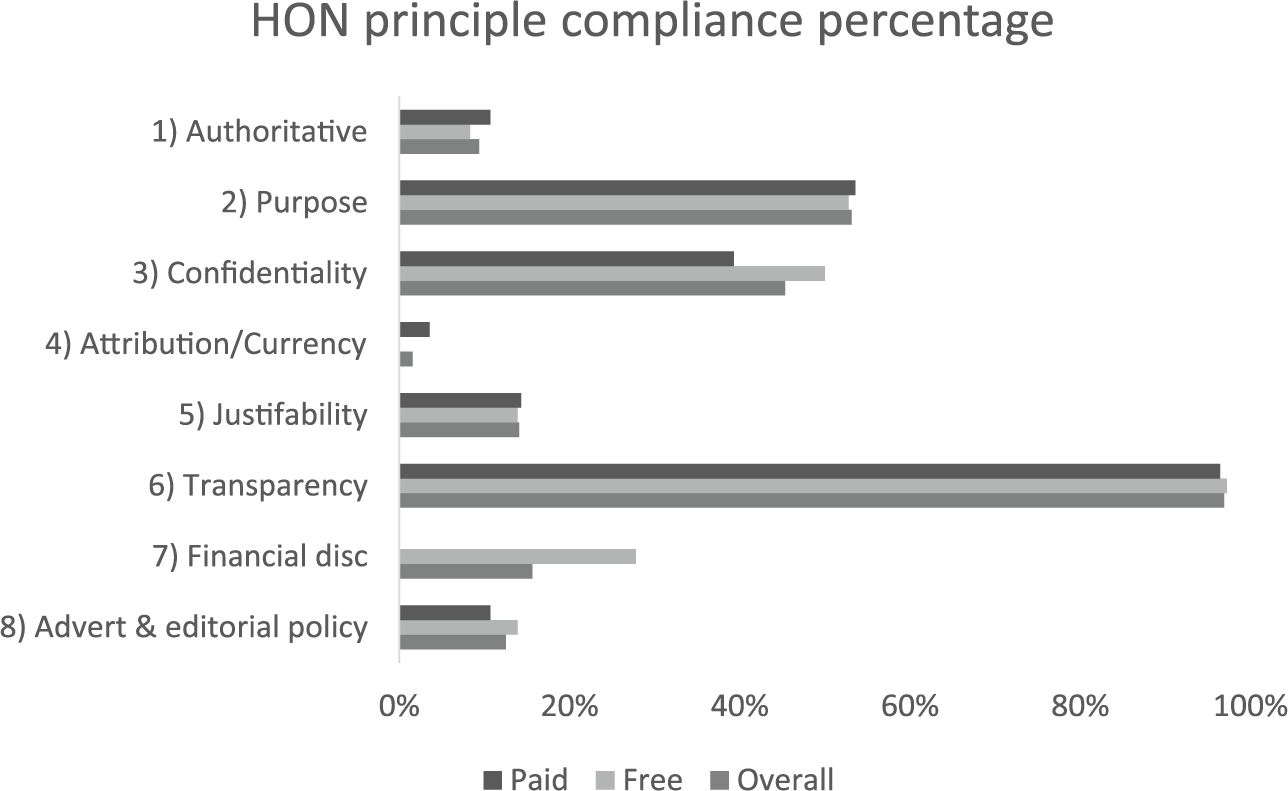

None of the apps within the sample fully complied with all eight HON Foundation principles. Compliance with seven principles was achieved by only one app (1.6%), titled ‘Drugs.com Medication Guide’. This was an app rendering a HON Foundation certified website, and the only principle it failed to comply with was ‘attribution/currency’, as the date on which the information was first created was not clear. The rest of the apps were mostly compliant with one, two, or three principles (38%, 27%, and 14.7%, respectively; Figures 8 and 9). The principles most often complied with were ‘transparency’, ‘purpose’, and ‘confidentiality’ (97%, 53%, and 45%, respectively), while the least often complied with were ‘attribution/currency’, ‘authoritative’, and ‘advertising and editorial policy’ (2%, 9%, and 12%, respectively; Figures 10 and 11).

Figure 8. Number of HON Foundation principles complied with (by store).

Figure 9. Number of HON Foundation principles complied with (by cost).

Figure 10. HON Foundation principle compliance percentage (by store).

Figure 11. HON Foundation principle compliance percentage (by cost).

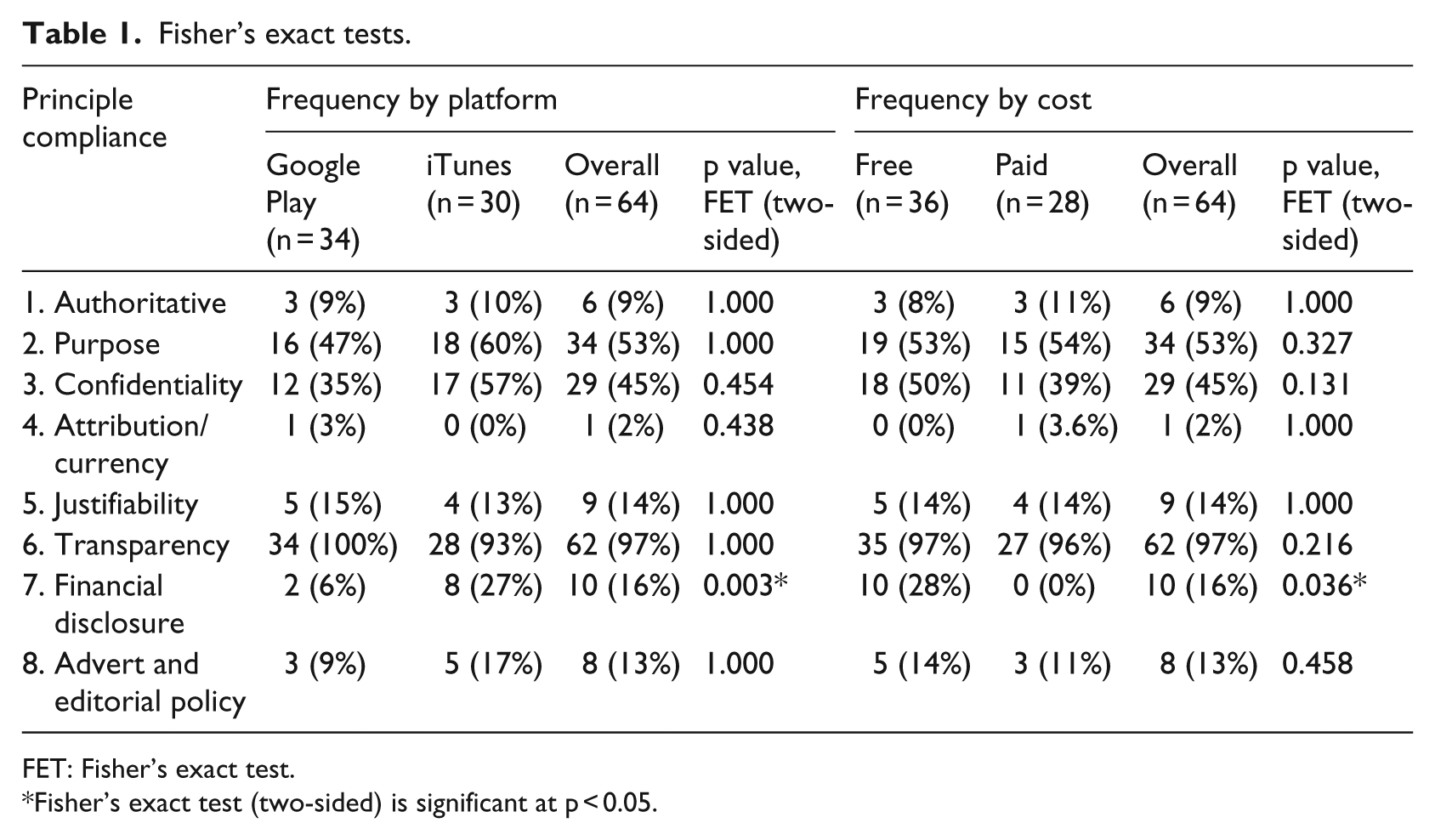

Apps on iTunes had better compliance with the ‘authoritative’ (10% vs 8.8%), ‘purpose’ (60% vs 47.1%), ‘confidentiality’ (56.7% vs 35.3%), ‘financial disclosure’ (26.7% vs 5.9%), and ‘advertising and editorial policy’ (16.7% vs 8.8%) principles. Google Play apps, on the other hand, had better compliance with the ‘attribution/currency’ (2.9% vs 0%), ‘justifiability’ (14.7% vs 13.3%), and ‘transparency’ (100% vs 93.3%) principles. There was no statistically significant difference in compliance between the two app stores or between free and paid apps, with the exception of compliance with the ‘financial disclosure’ principle, where the results were significant at p < 0.05 (Table 1).

Table 1. Fisher’s exact tests.

FET: Fisher’s exact test.

Fisher’s exact test (two-sided) is significant at p < 0.05.
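To make the comparison concrete, the following sketch implements a two-sided Fisher’s exact test for a 2 × 2 table using only the Python standard library. It is an illustration rather than the SPSS analysis performed in the study. The example counts are an assumption: they are reconstructed from the reported ‘financial disclosure’ percentages by store (26.7% of the 30 iTunes apps and 5.9% of the 34 Google Play apps, that is, 8 and 2 apps), and the text does not state whether this is the specific comparison reported in Table 1.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test p-value for the table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of all tables with the same
    margins that are no more likely than the observed table.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    n = row1 + row2

    def weight(k):
        # Unnormalised probability of k in the top-left cell (exact integers).
        return comb(col1, k) * comb(n - col1, row1 - k)

    lowest = max(0, col1 - row2)
    highest = min(col1, row1)
    observed = weight(a)
    extreme = sum(
        weight(k) for k in range(lowest, highest + 1) if weight(k) <= observed
    )
    return extreme / comb(n, row1)


# Assumed counts reconstructed from the reported percentages:
# rows are iTunes and Google Play, columns are compliant and non-compliant.
p_value = fisher_exact_two_sided(8, 22, 2, 32)
```

Working with exact integer weights avoids floating-point comparisons when deciding which tables are at least as extreme as the observed one.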

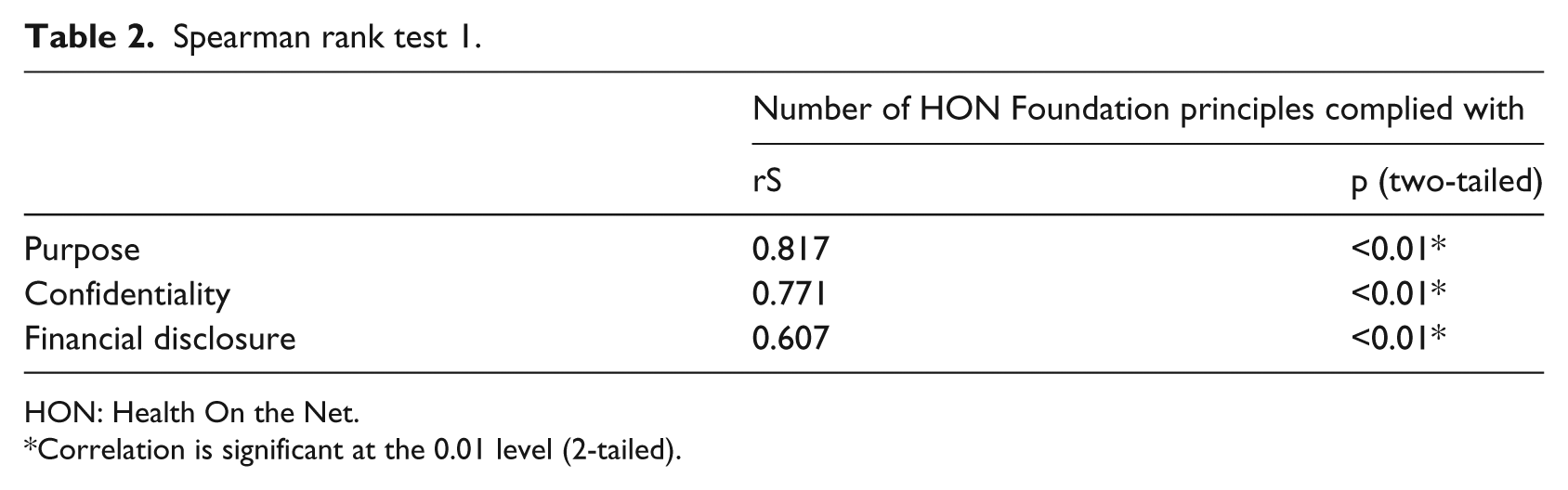

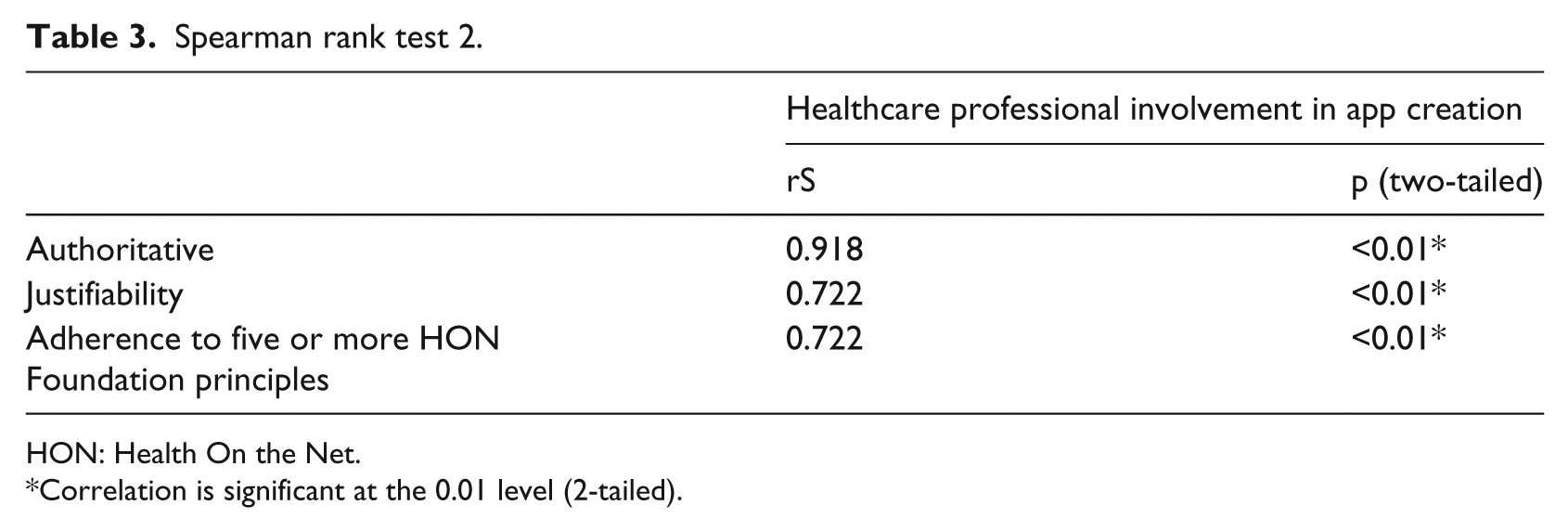

The study also showed that compliance with the ‘purpose’, ‘confidentiality’, or ‘financial disclosure’ principles correlated strongly with compliance with a higher number of HON Foundation principles (rS 0.817, 0.771, and 0.607, respectively; p < 0.01) (Table 2). Additionally, healthcare professional involvement in app creation was found to correlate strongly with ‘authoritative’ and ‘justifiability’ principle compliance (rS 0.918 and 0.722, respectively; p < 0.01), as well as with adherence to five or more HON Foundation principles (rS 0.722; p < 0.01) (Table 3). However, the generalisability of these associations and correlations is questionable given the small sample size.

Table 2. Spearman rank test 1.

HON: Health On the Net.

Correlation is significant at the 0.01 level (2-tailed).

Table 3. Spearman rank test 2.

HON: Health On the Net.

Correlation is significant at the 0.01 level (2-tailed).

Discussion

Compared to the best results in the literature, this study had better figures in regard to overall app compliance with transparency and confidentiality principles (97% vs 55% 17 and 45% vs 17%, 17 respectively) and worse figures in regard to ‘purpose’, ‘financial disclosure’, ‘authoritative’, and ‘attribution/currency’ compliance (53% vs 59%, 23 16% vs 18%, 17 9% vs 48%, 15 and 2% vs 52%, 15 respectively).

The better compliance with the ‘transparency’ principle is unexplained, as there is no evidence to suggest that app stores currently require developers to provide contact information for users. On the other hand, better compliance with ‘confidentiality’ might have been influenced by Apple’s new mandatory privacy policy for apps involved in the transmission of data containing personal information, 38 though this is currently only a recommendation by Google. 39 It is worth mentioning that the HON Foundation mandates a privacy policy even when no personal data are being transmitted. 40

The overall poor compliance with the HON Foundation principles revealed in this study is not surprising, as it is comparable to earlier studies on apps evaluating partial and full app compliance. It also indicates that the quality issues with medical apps targeting patients and the lay public are both ongoing and widespread. More needs to be done to reassure users.

The regulation of medical apps was initially shrouded in uncertainty over whether they should be regarded as medical devices, and the position of regulatory agencies in Europe and the United States is still developing. However, some consensus has emerged on considering certain apps that meet specified criteria as medical devices, though there are still differences among the various agencies, and the situation still leaves a wide range of apps outside their remit, such as electronic versions of reference materials, educational tools, and apps that patients use to obtain information. 41

Some have argued that the responsibility for overseeing the quality of these apps should lie with the big app vendors such as Apple and Google. 42 It is worth noting that apps submitted to both stores are subjected to various degrees of scrutiny prior to release, though their content is unlikely to be thoroughly evaluated. 43 However, there is little incentive for these big companies to undertake such a role, especially as medical apps are not a major source of revenue, unlike other categories such as Games, 10 though the situation might change in view of the optimistic growth forecasts for the mobile health market. 44 Conversely, while the involvement of Apple and Google in medical app regulation might be desirable, it would also raise questions about their conflict of interest. 42

Various initiatives have since emerged to assist users with identifying high-quality medical apps, such as peer-review, 45 certification,46,47 professional review, 48 and user feedback. 49 The scene, though, is still evolving, with new proposals emerging, such as the utilisation of an app synopsis that could be incorporated into a search engine 50 and self-certification based on standards of health information portrayal akin to the HON Foundation scheme, 51 while other initiatives, such as Haptique, have been discontinued. 52 Moreover, recent guidance by the Royal College of Physicians of London 53 recommended against using apps in healthcare unless they have been registered as a medical device and advised exercising professional judgement when using apps in any clinical setting. The college also declared that while it has no intention of recommending apps to doctors, it is collaborating with other organisations and agencies on developing quality criteria.

Not surprisingly, the current issues with medical app quality seem to mirror earlier problems and debates on health websites,27,54–61 which are still ongoing.26,62–64 These are relevant within the realm of medical apps as both are media for providing information, and their use could involve the collection and transmission of personal data. Furthermore, Internet accessibility is enhanced by smartphones, and websites could be rendered in a compressed format on smartphones known as ‘web apps’. Hence, valuable lessons could be learnt.

Views on health website content quality evaluation and regulation are diverse. Some regarded it as an impossible task and held that the responsibility should be left with the users, akin to other information media outlets.62,65 Delamothe, 66 on the other hand, argued that no omniscient non-biased observer exists that would be able to evaluate the contents of websites through the eyes of medical professionals, patients, and lay people at the same time. Others proposed and established initiatives and tools to assist users in identifying high-quality websites, such as codes of conduct, self-applied quality labels, user guidance systems, filtering, third-party accreditation for a fee, 60 and curation of useful sites in lists. 55 These took a downstream regulatory approach given the decentralised nature of the Internet 57 and were not without their limitations.

Codes of conduct alone, without enforcement mechanisms, for example, were found not to be effective. 67 User guidance tools, on the other hand, could be burdensome as the evaluation process can be time consuming and subjective, 59 while filtering and curation lists require costly continuous updates.55,60 Moreover, third-party accreditation schemes impose huge costs on participating website providers, 60 while self-applied quality labels, such as the HON Foundation scheme, certify only health information presentation, thus placing some burden on users by requiring them to be aware of the principles while evaluating the content 68 and on website developers in terms of compliance. 60

Given the above, Fahy et al. 26 suggested that perhaps the best approach would be to utilise multiple methods. This is, interestingly, consistent in a way with the conclusion of landmark market research in the 1980s on consumer preferences, which showed that there was no such thing as a single perfect set of product characteristics, but rather a variety of characteristic sets that would appeal to consumers with different preferences. 69

Recommendations and implications for further research

It would be reasonable to extrapolate that within the realm of medical apps, various quality initiatives and approaches might be needed in order to assist users with different degrees of willingness and ability to accept the evaluative burden. This would also help to offset the limitations of each method and allow the various views held by app stakeholders to be considered. Therefore, a self-certification scheme similar to that of the HON Foundation, as suggested by Lewis, 51 would be a useful addition to other approaches in use today. This could also be utilised as the first level of a multi-tier app certification system, after which an app could undergo further comprehensive evaluation 50 and risk assessment. 70 However, further research is needed to determine the reliability and validity of the HON Foundation compliance tools within the realm of apps.

Additionally, raising users’ awareness of the wide prevalence of poor quality among medical apps, explaining the potential risks associated, emphasising the importance of evaluating the content critically, and guiding users towards tools that could assist them in the process would help raise consumer expectations and could pressure developers into raising standards. Developers of medical apps are currently strongly advised to adopt robust quality development processes,10,41 such as the ‘Clarify, Design, and Evaluate’ approach devised by Imperial College London. 71

Furthermore, bringing together and engaging the stakeholders under a collaborative non-profit organisation, such as HANDI 72 in the United Kingdom, would greatly facilitate the app development process by disseminating best practices and providing a medium for networking and discussion.

Limitations

This study was restricted to the Apple iTunes and Google Play stores, as these were the platforms with the biggest share of the market and met the objective of evaluating the most popular apps. Only the UK stores were evaluated, as this was the geographical location of the study, though inferences could still be extrapolated to other developed countries. The sampling process was purposeful; this was intentional, as the aim was to obtain apps relevant to the scope of the study, and sampling was performed according to predetermined, explicit inclusion and exclusion criteria. The study sample was small owing to the lack of funding and time constraints, and this limited the ability to generalise the significance of group comparisons. Further research with a larger sample would provide useful insights.

In addition, compliance with the HON Foundation principles was evaluated by a single evaluator, who is the study author. Although the Foundation does encourage developers and users to evaluate compliance using its explicit list of principles and criteria, compliance is ultimately formally assessed by experts prior to certification. This is compounded by the lack of information on the validity and reliability of any available HON assessment tools. Further research is needed to establish this for apps and take it beyond mere face validity.

Moreover, compliance evaluation by a single assessor increases the potential for bias. In an attempt to minimise this, a systematic evaluative methodology was adopted and was performed according to an explicit, strict study protocol. Accordingly, an app was only deemed compliant with a given principle when it unequivocally fulfilled all the applicable criteria. It should be acknowledged, though, that this set the bar high and is likely to have contributed towards lowering the app compliance results in this study.

Conclusion

This study demonstrated that the most popular apps in the medical category do not meet the standards for presenting health information to the public, and this is consistent with earlier reports and studies on medical apps.

Experience from websites suggests that multiple user guidance initiatives are needed to suit people with different needs and preferences. Thus, having a scheme akin to that of the HON Foundation would be a useful addition to the existing initiatives that aim to guide users towards high-quality apps. This could also be utilised as a first-level stage within a multi-tier certification system.

Moreover, improving the current situation would require raising public awareness, providing tools that assist in quality evaluation, encouraging developers to use robust development processes, and facilitating collaboration and engagement among stakeholders.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.