Abstract

The aim of this study was to analyse electronic health record–related patient safety incidents in the patient safety incident reporting database of fully digital hospitals in Finland. We compare the Finnish data with similar international data and discuss their content in relation to the literature. We analysed the types of electronic health record–related patient safety incidents that occurred at 23 hospitals during a 2-year period and used taxonomy mapping to enable comparisons. This study represents a rare examination of patient safety risks in a fully digital environment. The proportion of electronic health record–related incidents was markedly higher in our study than in previous studies with similar data. Human–computer interaction problems were the most frequently reported. The results show that errors can arise from the complex interaction between clinicians and computers.

Keywords

Background

Electronic health records (EHRs) are promoted for their capacity to reduce clinicians’ workloads, costs and errors.1–4 Health information technology (HIT) is also expected to improve the co-ordination of care, thereby allowing for improved follow-up.5 However, new technology may also pose novel risks to patient safety by disrupting established working practices and by creating new risks related to HIT design, implementation and use.6–10 Yet current evidence concerning HIT safety is relatively limited,11,12 and the few studies on the subject suggest that HIT contributes to less than 1 per cent of total errors in healthcare systems.2,13

A voluntary patient safety incident reporting system is a core method for receiving and processing patient safety-related information and for building a more accurate understanding of patient safety risks. The incident reporting data presented in this study provide a sample of hazards well suited to identifying risks.14 Characteristic profiles may be identified when large numbers of incidents are collected and analysed.15 Magrabi et al.16 searched and analysed computer-related patient safety incidents in a state-wide Australian Advanced Incident Management System (AIMS) database. Only 0.2 per cent of all reports in the AIMS database were HIT related, and machine-related problems were more common than human–computer interaction issues. The framework described by Magrabi et al.16 has since been used in studies of incident reporting data in the United Kingdom,17,18 and their results stress the significance of machine-related errors. Conflicting evidence about HIT safety indicates that HIT-related errors have complex sociotechnical origins.19–22

More information regarding EHR-related patient safety concerns is needed. Complementary patient safety data sources are useful for identifying hazards and provide a more comprehensive view of the risks in a particular system.23,24 Adverse events occurring in one institution are known to recur in other institutions, often with the same causes and contributing factors.25 Identifying the nature of patient safety incidents allows improvement initiatives to be developed and prioritised.26 Lessons learned from incident reporting data can also help prevent the same incidents from occurring in other organisations internationally. Currently, however, these systems do not include specific interventions to reduce risk, a gap that requires attention.14,26

Methods

Objective

The first aim of this study was to analyse EHR-related patient safety incidents in a patient safety incident reporting database in a fully paperless, digital hospital environment and, consequently, to contribute evidence about HIT safety. Our second aim was to compare these data to a similar international database in public hospitals and discuss the data content. In particular, we aimed to answer the following research questions:

What is the proportion of EHR-related patient safety incidents in a patient safety incident reporting database in a fully paperless, digital hospital environment? What are the most common types of computer-related patient safety incidents in such a database, and have actions been taken to prevent these incidents from recurring? Finally, what are the main differences between the two databases?

Setting

According to the Finnish Act on Health Care of 2011,27 all healthcare organisations must maintain a patient safety incident reporting system as part of their patient safety system. HaiPro, the Finnish patient safety incident reporting model and instrument, was developed mainly in 2006, and reporting through it is anonymous.28

The incident reports in HaiPro consist of structured and free-text fields describing the background details of the incident (e.g. incident unit, time of the incident, reporter’s profession, incident, contributing factors, consequences for the patient and the organisation, quantification of harm on a 5-point scale/risk matrix and corrective measures). Events are classified into 13 incident types using HaiPro’s national classification. The most frequently used incident categories are ‘Medication and Transfusions’, ‘Information Flow’ and ‘Information Management’, as well as ‘Laboratory’, ‘Imaging’ and ‘Other Patient Treatment Procedures’. All HaiPro main categories include more detailed subcategories. An incident report can contain multiple event descriptions.

The hospital district of Helsinki and Uusimaa, with over 21,000 employees and some 500,000 patients annually, is the largest hospital district in Finland and includes tertiary university hospital functions. By 2011, its reporting system covered all clinical units in its 23 hospitals. The hospital district devotes resources to reporting and analysing events. It offers ongoing classroom training as well as an e-learning programme to train staff to report incidents and to train the persons responsible for handling the reports. Every unit has two medical and nursing managers who classify the incident reports according to uniform national guidelines, which include classification rules. Duplicate reports must be deleted from the database. The quality managers, the hospital district’s chief patient safety officer and a group of the hospital’s HaiPro classification development experts ensure consistency and compliance with the classification principles. The hospital’s patient safety committee monitors the incident data regularly. Managers are obliged to share reports with staff, and the person reporting an event receives feedback on the investigation through the system.

Since 2007, the entire hospital district has used a fully paperless, comprehensive EHR system, and all hospitals in this study used the same EHR system. HaiPro is not an integrated component of the EHR system but a separate, user-friendly, web-based interface.

Data collection

Our analysis included all safety incidents reported through the HaiPro system between December 2011 and November 2013. We searched the HaiPro incident reporting system and identified incidents according to the current HaiPro classification of incidents. The search conditions were ‘reports by the category “Information Flow” or “Information Management”’, ‘reports by the subcategory “Patient Information Management (Documentation)”’ and ‘reports by the category “Devices and Use of Devices”’. In addition, free-text searches used the keywords ‘EHR’, ‘HIT’, ‘computer’, ‘documentation’, ‘incorrect information’, ‘referral’, ‘missing test result’, ‘identification’, ‘contact details’, ‘EHR downtime’, ‘hardware devices’, ‘device dysfunction’, ‘screen’, ‘mouse’, ‘output’, ‘print’, ‘printout’, ‘interface’ and the most common EHR proprietary names, in order to capture relevant reports that had been classified into categories other than those used in this study.
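The free-text step can be illustrated with a minimal keyword matcher. This is a sketch under stated assumptions, not the search function HaiPro actually provides: it assumes report texts are available as plain strings, uses the English keywords listed above (the proprietary EHR names are omitted), and applies whole-word, case-insensitive matching. The names `SEARCH_TERMS`, `build_pattern` and `matching_terms`, and the example report texts, are hypothetical.

```python
import re

# Hypothetical term list: the English keywords listed above (proprietary EHR names omitted).
SEARCH_TERMS = [
    "EHR", "HIT", "computer", "documentation", "incorrect information",
    "referral", "missing test result", "identification", "contact details",
    "EHR downtime", "hardware devices", "device dysfunction", "screen",
    "mouse", "output", "print", "printout", "interface",
]


def build_pattern(terms):
    """Compile one case-insensitive, whole-word regex covering all search terms."""
    # Longer terms come first so that "printout" wins over "print" and
    # "EHR downtime" wins over "EHR" when both start at the same position.
    ordered = sorted(terms, key=len, reverse=True)
    alternation = "|".join(re.escape(term) for term in ordered)
    # \b prevents matches inside other words (e.g. "hit" inside "white").
    return re.compile(rf"\b(?:{alternation})\b", re.IGNORECASE)


def matching_terms(report_text, pattern):
    """Return the distinct search terms found in one free-text report, lower-cased."""
    return sorted({match.group(0).lower() for match in pattern.finditer(report_text)})


PATTERN = build_pattern(SEARCH_TERMS)

# Hypothetical report texts, not taken from the HaiPro data.
reports = [
    "Printout of the referral was missing after EHR downtime.",
    "The patient's white blood cell count was not checked.",
]
flagged = [text for text in reports if matching_terms(text, PATTERN)]
```

Because matching is case-insensitive, an acronym such as ‘HIT’ also matches the ordinary word ‘hit’, so keyword matches are best treated as candidates for manual review rather than as final classifications.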

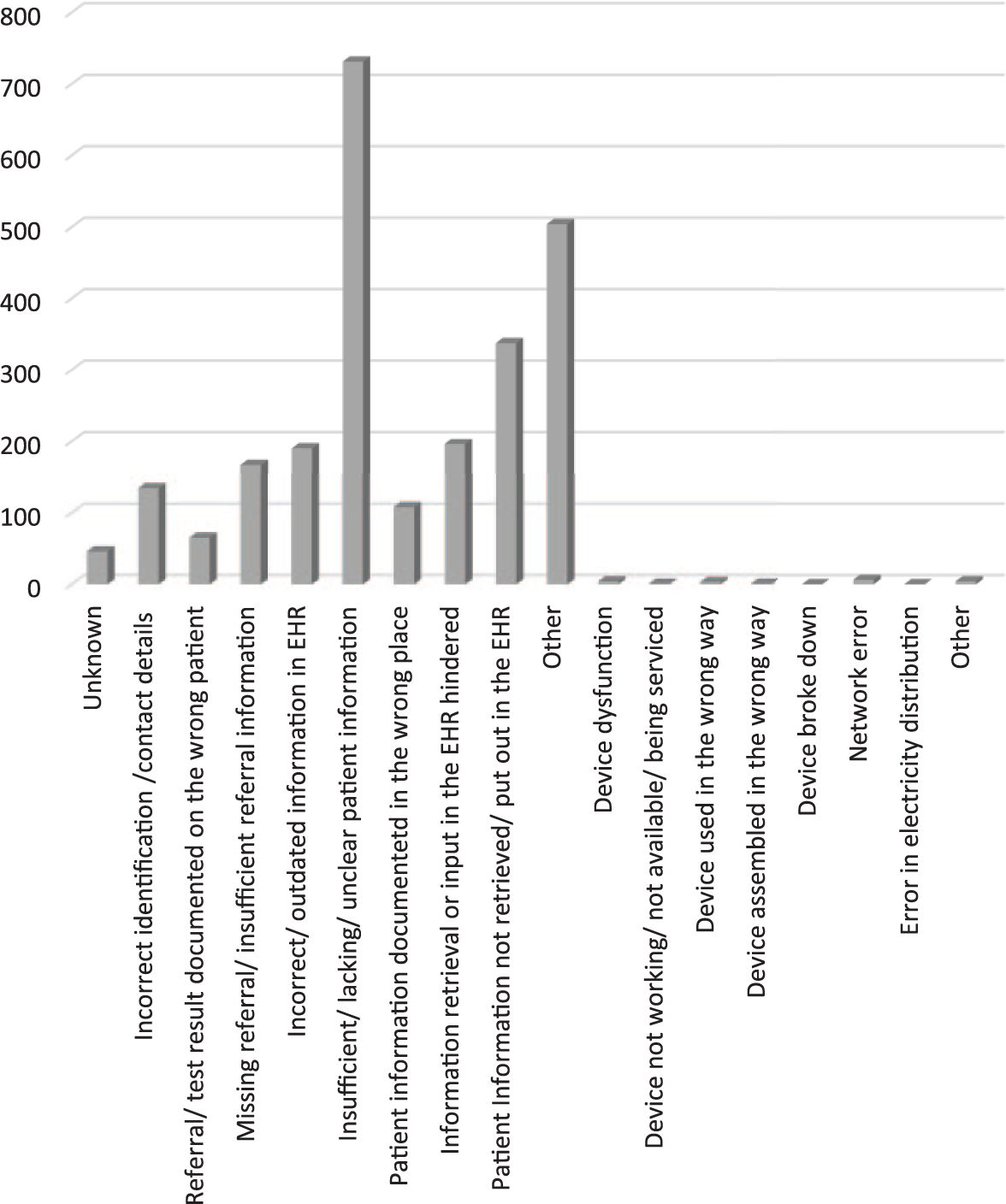

The structured-data query yielded 2379 incident reports, out of a total of 23,023 reports in the entire database. Of HaiPro’s 13 event types, the analysis included the ‘Information Flow’ and ‘Information Management’ subcategory ‘Patient Information Management (Documentation)’, with its detailed subcategories, as well as the category ‘Devices and Use of Devices’. The keyword-based free-text search and the analysis of this sample indicated that appropriate classifications had been used: the retrieved reports fell into the subcategory ‘Patient Information’, ‘Device and Use of Devices’ or ‘Unknown’. The frequencies of HaiPro incident reports, according to the HaiPro classification, appear in Figure 1.
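As a worked check on the counts above, the share of the whole database returned by the structured query is the ratio of the two figures. The helper name `percent` is illustrative only.

```python
def percent(part, whole, ndigits=2):
    """Return part as a percentage of whole, rounded to ndigits decimal places."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return round(100 * part / whole, ndigits)


STRUCTURED_QUERY_REPORTS = 2379  # reports returned by the structured-data query
ALL_DATABASE_REPORTS = 23023     # all reports in the HaiPro database

share = percent(STRUCTURED_QUERY_REPORTS, ALL_DATABASE_REPORTS)
print(f"{share}% of all reports")
```

By this arithmetic, roughly one report in ten (about 10.3 per cent) fell into the searched categories, compared with the 0.2 per cent HIT-related share reported for the AIMS database.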

Figure 1. Frequencies of HaiPro incident reports.

Taxonomy mapping

Mapping, or the process of linking terms that share the same meaning, is a research method for testing the reliability and validity of standardised taxonomies.29–33 We performed taxonomy mapping between the HaiPro classification and the HIT-specific taxonomy developed by Magrabi et al.16 because Magrabi’s taxonomy is more widely used and was developed specifically to classify HIT-related incidents.16,34,35 This mapping enables comparisons with international research results.

We first categorised problems as principally involving either human factors or technical issues, and then assigned them to one or more subclasses. Problems involving human factors relate to human interaction with information technology.

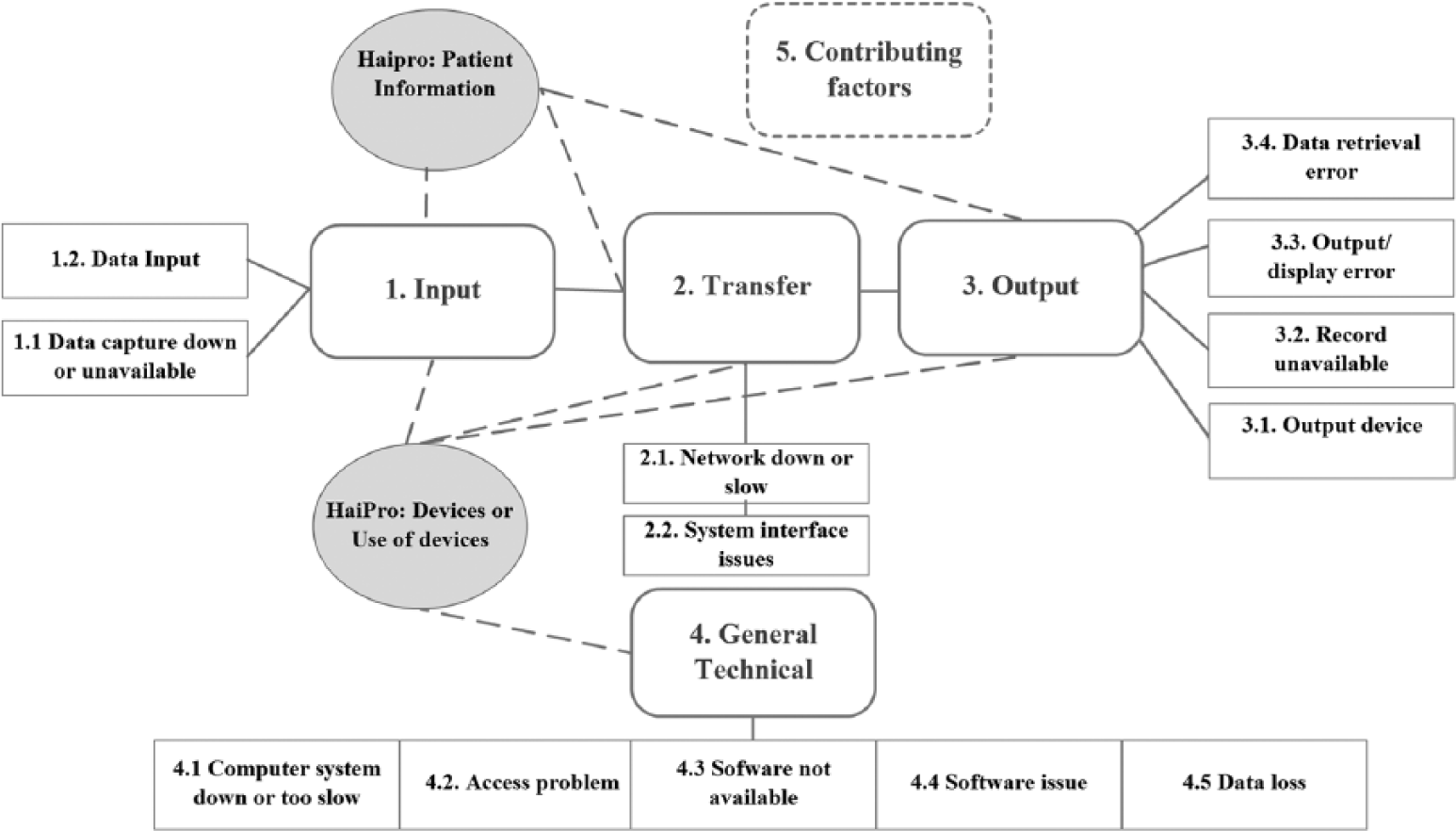

We cross-mapped the HaiPro classification subcategory ‘Patient Information Management (Documentation)’ as well as the category for incidents related to a ‘Device or Use of Devices’ with Magrabi’s taxonomy because these categories include HIT-related content. The main categories of the two classifications appear in Figure 2.

Figure 2. Classification of problems reported in computer-related patient safety incidents, modified from Magrabi et al.16 and HaiPro.

To measure intercoder reliability, two researchers performed the taxonomy mapping independently in September 2014 on the basis of available definitions and examples according to HaiPro national guidance and the literature.16,28,34–36 The researchers placed appropriate HaiPro classifications into the categories created by Magrabi et al. and performed an inter-rater reliability analysis to ensure consistency between the researchers. One of the researchers is a chief patient safety officer and the other is a senior medical officer; both are experienced in informatics.

After the first coding, the researchers discussed the rules and coherence of the mapping without sharing or comparing any results. They then recoded and compared the chosen categories before compiling the data. Selecting the same category counted as a match; choosing a different category or failing to recognise the category at all counted as a non-match. In one case, one researcher understood the definition of a category differently from the other. In another, a researcher interpreted the content of a class according to his previous understanding rather than the research context. In both cases, discussion made the correct match obvious, and no complex disagreements arose.

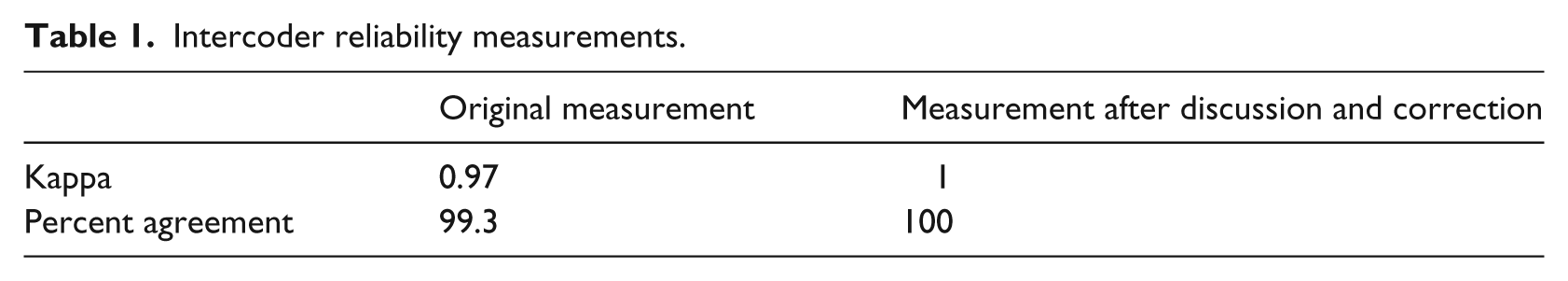

The researchers used percent agreement and Cohen’s kappa coefficient to perform the intercoder reliability measurements. Small corrections and a brief discussion yielded 100 per cent (i.e. perfect) agreement (Table 1).37–39
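Percent agreement and Cohen’s kappa can both be computed from the two coders’ category assignments. The sketch below is a minimal, assumed implementation of the standard formula kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the agreement expected by chance from each coder’s marginal proportions. It is not the tool the researchers used, and the category labels and codings in the example are hypothetical.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Share of items to which both coders assigned the same category (p_o)."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must label the same, non-empty set of items")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: agreement corrected for chance, (p_o - p_e) / (1 - p_e)."""
    p_o = percent_agreement(coder_a, coder_b)
    n = len(coder_a)
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: for each category, multiply the two coders' marginal proportions.
    p_e = sum((freq_a[c] / n) * (freq_b[c] / n) for c in set(freq_a) | set(freq_b))
    if p_e == 1.0:
        # Both coders used one identical category for every item; kappa is undefined,
        # so report perfect agreement.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical codings of six HaiPro classes into Magrabi-style categories.
first_a = ["Input", "Input", "Output", "Transfer", "Input", "General technical"]
first_b = ["Input", "Output", "Output", "Transfer", "Input", "General technical"]
second_a = list(first_a)
second_b = list(first_a)  # after discussion, both coders agree on every item

print(percent_agreement(first_a, first_b), cohens_kappa(first_a, first_b))
print(percent_agreement(second_a, second_b), cohens_kappa(second_a, second_b))
```

In the hypothetical first round, one disagreement out of six gives p_o = 5/6 and a kappa of 20/26 (about 0.77); identical codings give a kappa of 1.0, which corresponds to the perfect agreement reported after the second round.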

Table 1. Intercoder reliability measurements.

The analysis indicated that most of the HaiPro Documentation classes were related to Magrabi’s ‘Input’ category, whereas the HaiPro ‘Device or Use of Devices’ category was related to all of Magrabi’s main categories. When the mapping showed that one HaiPro class was equivalent to several of Magrabi’s categories, all of the corresponding Magrabi categories were recorded and the primary one was identified.16 Some HaiPro reports (18.5%) had not been classified by the responsible persons at the organisations, or their class was unknown. Cases with too little descriptive information for detailed classification were placed in the HaiPro main category without an exact class (e.g. ‘Missing Referral’ or ‘Wrong/Outdated Information’). Consequently, the tested framework could not classify these incidents exactly.
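The rule of recording a primary Magrabi category plus any secondary ones can be made concrete as a lookup table, with classes that cannot be mapped counted separately. The entries below are a partial, hypothetical encoding assembled from correspondences stated in this article (for instance, ‘Insufficient, Lacking or Unclear Patient Information’ as primarily an input problem that is also equivalent to an output problem). The primary category chosen for ‘Device Dysfunction’ is an assumption, and `CROSS_MAP`, `map_class`, `tally_primary` and the label ‘Unclassified’ are illustrative names.

```python
from collections import Counter

# Partial, hypothetical encoding of the HaiPro -> Magrabi cross-map.
# Each HaiPro class maps to (primary Magrabi category, [secondary categories]).
CROSS_MAP = {
    "Missing Referral": ("Information input", []),
    "Wrong/Outdated Information": ("Information input", []),
    "Insufficient, Lacking or Unclear Patient Information": (
        "Information input", ["Information output"]),
    "Patient Information Not Retrieved or Put Out in the EHR": ("Information output", []),
    "Information Retrieval or Input in the EHR Hindered": ("Information transfer", []),
    # Assumed primary category; the text links this class to both transfer and output.
    "Device Dysfunction": ("Information transfer", ["Information output"]),
    "Other (Devices or Use of Devices)": ("General technical", []),
}


def map_class(haipro_class):
    """Return (primary, secondaries) for a HaiPro class, or None if it cannot be mapped."""
    return CROSS_MAP.get(haipro_class)


def tally_primary(report_classes):
    """Count reports per primary Magrabi category; unmappable classes count as 'Unmapped'."""
    counts = Counter()
    for haipro_class in report_classes:
        mapped = map_class(haipro_class)
        counts[mapped[0] if mapped else "Unmapped"] += 1
    return counts


# Hypothetical sample; "Unclassified" stands for a report the unit never classified.
sample = ["Device Dysfunction", "Wrong/Outdated Information", "Missing Referral", "Unclassified"]
print(dict(tally_primary(sample)))
```

Counting unmapped reports explicitly, rather than dropping them, keeps the denominator visible, which matters when, as here, 18.5 per cent of reports could not be assigned an exact class.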

HaiPro features a separate category ‘Circumstances and Contributing Factors’, which represents an important part of the data on incident reporting. These HaiPro category classes are not interpreted unambiguously as a direct cause of an incident, as is the case in Magrabi’s framework. In HaiPro, ‘Circumstances and Contributing Factors’ may play an important role in the origin of an incident, but the class is still incomparable to Magrabi’s Contributing Factor. Thus, although the researchers in this study decided not to cross-map contributing factors in order to avoid research validity issues, they did identify similarities.

Results

Machine- and human-related problems

In the Finnish HaiPro system, only 8.45 per cent (n = 211) of the reports involved machine-related problems. The category ‘Devices or Use of Devices’ contained only 12 (0.5%) machine-related reports, whereas the category ‘Patient Information Management (Documentation)’ included 199 (8.4%). A total of 73 per cent (n = 1755) of the reports involved problems related to human–computer interaction and had originally been classified in the HaiPro category ‘Patient Information Management (Documentation)’. Only three reports (0.13%) in the category ‘Devices or Use of Devices’ were human-related; these corresponded to Magrabi’s categories ‘Data Input’ and ‘Data Retrieval Error’. The remaining HaiPro cases were either not classified or had an unknown class, so the framework could not be used to classify them.

Both machine- and human-related problems in the HaiPro system led to rework (e.g. additional tests or treatments) in 49.5 per cent of cases.

Risk assessment

Of the 2379 HaiPro reports, 2119 (89.1%) contained a completed risk assessment. A majority (89.2%) of the incidents involved low-risk cases, and only a minority (0.8%) involved high-risk incidents.

Information input problems

Information input problems, clearly the largest category in the HaiPro data, accounted for 59.5 per cent (n = 1415) of the incidents, and incorrect identification or contact details accounted for 5.8 per cent (n = 139) of the computer-related incidents. In total, 2.8 per cent (n = 67) of the incidents involved a referral or test result being assigned to the wrong patient, and 7.1 per cent (n = 170) involved a missing referral or insufficient or wrong referral information. In 30.7 per cent (n = 731) of the incidents, insufficient, lacking or unclear patient information triggered incident reporting. In 4.6 per cent (n = 109) of incidents, patient information had been documented in the wrong place in the EHR. Wrong or outdated patient information accounted for 8.2 per cent (n = 196) of the reports. Three reports (0.13%) in the HaiPro ‘Devices or Use of Devices’ category were related to data input.
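The subcategory counts in this paragraph can be checked against the category total with simple arithmetic. The sketch below uses illustrative variable names and assumes, as the figures imply, that every percentage shares the denominator of 2379 reports and that the three ‘Devices or Use of Devices’ reports are included in the total of 1415.

```python
TOTAL_REPORTS = 2379  # reports returned by the structured-data query

# Counts as given in the paragraph above; labels are shortened paraphrases.
input_problems = {
    "Incorrect identification or contact details": 139,
    "Referral or test result assigned to the wrong patient": 67,
    "Missing, insufficient or wrong referral information": 170,
    "Insufficient, lacking or unclear patient information": 731,
    "Information documented in the wrong place in the EHR": 109,
    "Wrong or outdated patient information": 196,
    "Devices or Use of Devices: data input": 3,
}

category_total = sum(input_problems.values())
for label, count in input_problems.items():
    print(f"{label}: {100 * count / TOTAL_REPORTS:.1f}%")
print(f"Information input problems: {category_total} "
      f"({100 * category_total / TOTAL_REPORTS:.1f}%)")
```

The counts add up to 1415, and the shares, rounded to one decimal place, agree with the percentages reported above (e.g. 731/2379 is about 30.7 per cent).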

Information transfer problems

In the HaiPro data, 8.8 per cent (n = 210) of the reports involved information transfer problems. Of these, 199 (8.4%) were classified in the category ‘Information Retrieval or Input in the EHR Hindered’, the category’s only machine-related condition. Six reports (0.25%) in the HaiPro ‘Devices or Use of Devices’ category involved network errors, and a further five reports (0.2%) in the same category were classified as information transfer problems. The HaiPro categories used for this problem were ‘Device Dysfunction’ and ‘Device not Working, Unavailable, being Serviced (in Repair)’.
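The information transfer figures in this paragraph can be checked additively. This minimal sketch assumes that the 199 documentation reports, the six network-error reports and the five device reports are disjoint groups that together make up the 210 transfer reports; the variable names are illustrative.

```python
TOTAL_REPORTS = 2379  # reports returned by the structured-data query

# Assumed disjoint groups of information transfer reports.
transfer_problems = {
    "Information Retrieval or Input in the EHR Hindered": 199,
    "Devices or Use of Devices: network errors": 6,
    "Devices or Use of Devices: Device Dysfunction / Device not Working": 5,
}

transfer_total = sum(transfer_problems.values())
transfer_share = round(100 * transfer_total / TOTAL_REPORTS, 1)
print(transfer_total, transfer_share)
```

Under that assumption, the three groups sum to 210 reports, or 8.8 per cent of the 2379 reports, matching the category total.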

Information output problems

In HaiPro, 14.4 per cent (n = 342) of the reports involved human–computer interaction problems coded in the category ‘Patient Information Not Retrieved or Put Out in the EHR’. Moreover, the previously mentioned HaiPro category ‘Insufficient, Lacking or Unclear Patient Information’ is equivalent to this category and accounted for 30.7 per cent (n = 731) of the incidents, although these incidents are primarily regarded as information input problems.

Machine-related problems partly overlapped with Magrabi’s category ‘Information Transfer Problems’. The HaiPro categories used for this problem were ‘Device Dysfunction’ and ‘Device not Working, Unavailable, being Serviced (in Repair)’, which accounted for the same five reports (0.2%) as did the category ‘Information Transfer Problems’.

General technical

In HaiPro, the class ‘Other’ in the ‘Devices or Use of Devices’ category was equivalent to Magrabi’s ‘General Technical’ category, which accounted for four reports (0.17%). In addition, the HaiPro category ‘Unknown’ was mapped as ‘General Technical’, despite having no cases.

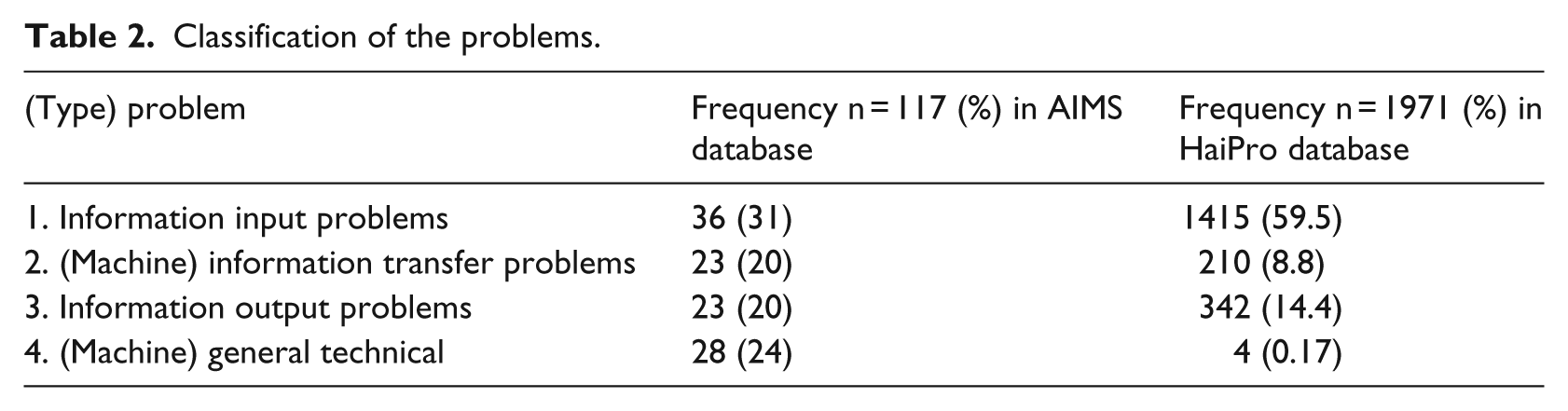

The classification of problems in the HaiPro and AIMS databases appears in Table 2.

Table 2. Classification of the problems.

Contributing factors

The HaiPro category ‘Circumstances and Contributing Factors’ fails to indicate the specific reason for an incident even if these factors play a major role in the origin of the incident. The researchers decided not to perform a full cross-mapping procedure with regard to contributing factors in order to avoid problems with research validity. We analysed HaiPro’s contributing factors and recognised similarities. Of all HaiPro incidents, 69.3 per cent identified the contributing factor. One of the most common contributing factors in this dataset is communication in general. Reports show that the available information is used only partially and that both oral and written communication contribute to the event.

Some of the HaiPro subcategories were equivalent to Magrabi’s categories. Magrabi’s ‘Staffing and Training’ category corresponded to the HaiPro category ‘Education and Training’, which includes the categories ‘Knowledge and Skills’, ‘Competence and Qualification’ and ‘Availability and Sufficiency of Education and Guidance’. These categories accounted for 8 per cent of the HaiPro incidents.

The HaiPro category ‘Procedures’ included methods, instructions and the availability or use of written material, whereas ‘Clarity of the Task’ is equivalent to Magrabi’s ‘Staffing and Training’ and ‘Interruption and Multitasking’. The HaiPro category ‘Circumstances, Tools and Resources’, which includes the category ‘Problems in the EHR or Other HIT Systems and Problems Using Them’, was considered to be linked to all Magrabi’s categories. Of the HaiPro incident reports, 8.7 per cent identified this as the contributing factor.

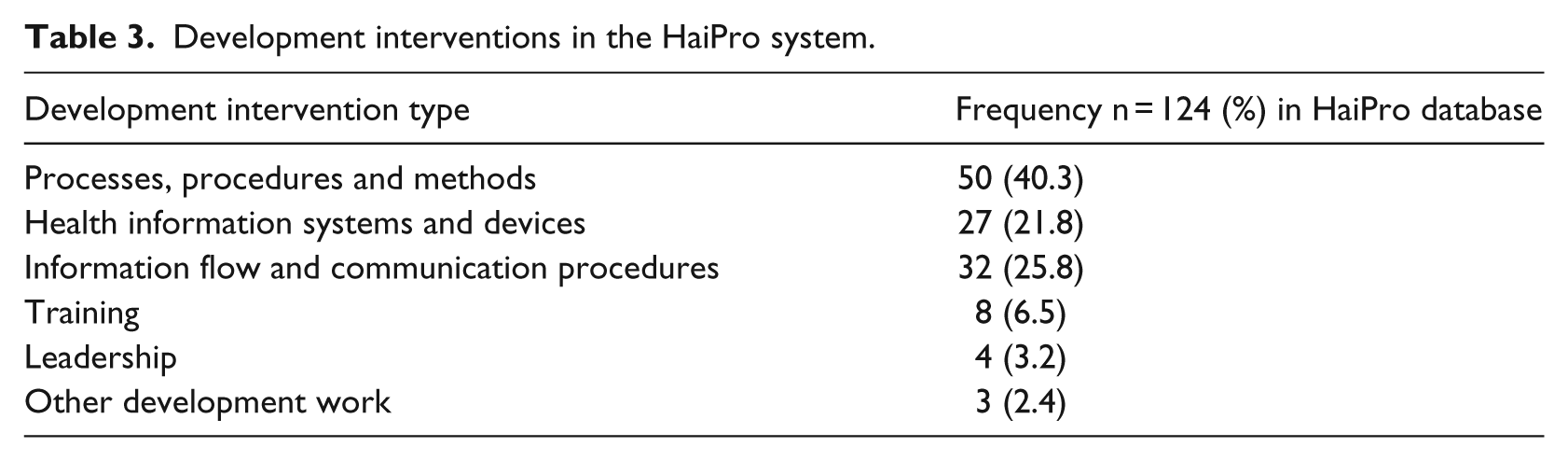

Learning from incidents

HaiPro contains information on measures to prevent incidents from recurring. These measures are suggested by a team comprising the responsible physician and the head nurse of the unit; 8 per cent of the cases led to no action. The most common way to prevent recurrence (82.6%) was to inform staff of the incident and share the data with other parties. In 4.9 per cent of the incidents, the case was referred to the hospital’s leaders because of its seriousness or recurrence, or because support was needed to manage the incident. A concrete development intervention took place in 4.3 per cent of the incidents. One such intervention related to EHR downtime, which had caused serious problems in a surgery department: the administrators decided to develop structured communication procedures with the information technology (IT) department and to make paper copies of patients’ records available depending on the likelihood of EHR downtime. The types of development interventions appear in Table 3.

Table 3. Development interventions in the HaiPro system.

Discussion

The data with respect to the literature

Our study shows that computer-related safety incidents are far more common than previous studies suggest.2,13,16 We discuss our data primarily in relation to the findings of Magrabi et al.,16 which are based on similar data. In Magrabi’s study, a search of 42,616 patient safety incidents over a 2-year period yielded only 123 computer-related incidents. The Australian data describe 99 computer-related patient safety incidents, which had been analysed by examining free-text descriptions.16 Our research data comprise over 20,000 reports classified by trained physicians and head nurses, so our finding rests on a large, structured dataset of quality reports.

Several factors may account for the higher number of computer-related incidents in the Finnish data compared with Magrabi et al.’s16 data. First, EHR coverage in Finland is 100 per cent, and Finnish hospitals are fully digital; previous research shows that new technology increases the number of technology-related errors.6–10,40 Second, hospital districts in Finland devote institutional resources to incident reporting procedures, give feedback and share reports. Consequently, staff are also encouraged to report HIT incidents, because managers consider them as important as clinical bedside events. Our results show that HIT-related problems pose a noteworthy safety risk in fully digital hospitals.

The risk profiles of the two databases clearly differed. In the AIMS data, 69 per cent of the cases received a medium-risk score,16 whereas in HaiPro the corresponding figure was only 10 per cent. This disparity may partly stem from the wide coverage of HaiPro incident reporting, which particularly stresses the importance of reporting both minor incidents and near misses. The Finnish Act on Health Care and the National Patient Safety Program27,28 have emphasised the importance of anticipating potential problems.41

A key finding of our study is that human–computer interaction problems were reported far more often, and machine-related problems more rarely, than in Magrabi’s studies.16,18 Only 8.5 per cent of the incidents were machine-related problems, whereas 73 per cent were problems of human–computer interaction. Of all Australian AIMS reports, 55 per cent (n = 64) included machine-related problems and 45 per cent (n = 53) problems of human–computer interaction.16 In Magrabi’s more recent study,18 the majority of IT events consisted of notifications about hazardous circumstances related to technical problems.

In the AIMS database, ‘Information Input Problems’ was the largest category, accounting for 31 per cent (n = 36) of the incidents. Most of these incidents, such as incorrect entry of a patient’s name, diagnosis or discharge hospital, or typographical errors, were associated with ‘Incorrect Human Data Entry’ (17%, n = 20). ‘Information Input Problems’ was also the largest category in the Finnish data, accounting for 60 per cent of incidents. ‘Problems in the Transfer of Information’ accounted for 20 per cent of all AIMS incidents and for 9 per cent of Finnish incidents. ‘Information Output Problems’ did not differ markedly between the databases.

Our results support a growing body of research showing that adverse events result from the complex interaction between health information systems (HIS) and clinicians.22,42–44 The Finnish data clearly show that the interaction of technology with non-technological factors requires more in-depth research from different perspectives. Users’ interactions with EHRs are linked to complex processes that should be better understood. According to a recent study, problems involving human factors were four times more likely to result in harm to patients than technical problems, further stressing the importance of sociotechnical aspects.18 Moreover, research confirms the importance of a sociotechnical perspective in system design.45

Incident reporting systems provide a mechanism for identifying safety risks. Although the data suggest that interventions can reduce risks, these systems have not led to the expected improvements or to risk-reducing interventions.14,46 This raises questions about the appropriate implementation and use of such systems. Measuring the successful use of an incident reporting system is challenging, but it can be done by counting the number of system changes made as a result of the reporting system.14 In the Finnish data, only 8 per cent of the IT-related incidents were left without measures, which can be considered reasonable progress towards optimal use of an incident reporting system, even if still below the target level. Learning from previous incidents must be the future focus of incident reporting systems and research. Furthermore, studies show that technology-based solutions alone will only partially mitigate concerns; interventions for EHR-related safety improvement must concentrate on how end-users actually use EHRs.19

The use of standard classifications with clear category descriptions makes data more valid and enables their use across countries. At present, single organisations are the main users of this valuable data source. Mapping an on-site reporting taxonomy onto international standards is feasible,47,48 and Magrabi et al.’s16 taxonomy is recommended as a starting point for international incident reporting classifications of machine-related incidents. Research shows that pre-defined reporting categories are well suited to voluntary reporting needs and could also provide solutions for international quality reports.47

Limitations

In this Finnish dataset, structured responsibilities, multiple levels of data-quality surveillance and an ongoing training programme all support the appropriate use of classifications. However, without content analysis of the incident reports, one cannot be fully certain that the classifications were used correctly. Nevertheless, the risk of invalid data is presumably relatively low.

Voluntary incident reporting is an important tool for recognising patient safety issues in healthcare settings. However, these systems have their limitations;49,50 reports do not provide exact frequencies of incidents. Consequently, our data provide a descriptive analysis of typical EHR-related safety problem types in fully digital, paperless hospitals rather than exact error rates. Large collections of incidents may serve to identify characteristic profiles, thereby enabling the aggregation and analysis of incidents.15

The cross-mapping procedure clearly showed that the strength of Magrabi’s classification is its ability to identify technical problems. Its human–computer interaction perspective is weaker than it could be, given the complexity of healthcare organisations. The sociotechnical perspective, which covers multiple dimensions of HIT use, could be incorporated into this classification.9,20,44,51

Conclusion

The Finnish safety data analysed in this study show that human–computer interaction was associated with most HIT-related incidents. Detecting these safety concerns is challenging because they result from complex interactions among heterogeneous triggering factors. Consequently, healthcare information systems require an infrastructure for proactive risk assessment, specifically for EHR-related patient safety concerns. Developing techniques to support user awareness of EHR-related risks and their monitoring and management is therefore necessary.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work benefited from Finnish governmental research funding (study grant TYH2014224).