Abstract

Background and Aims:

Trauma registry data are used for analyzing and improving patient care, comparison of different units, and for research and administrative purposes. Data should therefore be reliable. The aim of this study was to audit the quality of the Helsinki Trauma Registry internally. We describe how to conduct a validation of a regional or national trauma registry and how to report the results in a readily comprehensible form.

Materials and Methods:

The Helsinki Trauma Registry database for the year 2013 was re-evaluated. We assessed data quality in three different parts of the data input process: the process of including patients in the trauma registry (case completeness); the process of calculating Abbreviated Injury Scale (AIS) codes; and the process of entering the patient variables in the trauma registry (data completeness, accuracy, and correctness). We calculated the case completeness results using raw agreement percentage and Cohen’s κ value. Percentages and descriptive methods were used for the remaining calculations.

Results:

In total, 862 patients were evaluated; 853 were rated the same in the audit process resulting in a raw agreement percentage of 99%. Nine cases were missing from the registry, yielding a case completeness of 97.1% for the Helsinki Trauma Registry. For AIS code data, we analyzed 107 patients with severe thorax injury with 941 AIS codes. Completeness of codes was 99.0% (932/941), accuracy was 90.0% (841/932), and correctness was 97.5% (909/932). The data completeness of patient variables was 93.4% (3899/4174). Data completeness was 100% for 16 of 32 categories. Data accuracy was 94.6% (3690/3899) and data correctness was 97.2% (3789/3899).

Conclusion:

The case completeness, data completeness, data accuracy, and data correctness of the Helsinki Trauma Registry are excellent. We recommend that these should be the variables included in a trauma registry validation process, and that the quality of trauma registry data should be systematically and regularly reviewed and reported.

Introduction

Trauma registries contain specific data on injured patients in a detailed form that is usually not available in traditional hospital administrative data (1). The main reason for having a specific trauma registry is to provide information that can be used for improving patient care (2). Trauma registry data can be used for patient and outcome evaluation within a hospital and for comparisons between hospitals, regions, and different countries. It is also common to use trauma registry data for research and to test different effects of a new treatment method. The hospital administration can use the data in determining resource allocations, among other purposes. Therefore, it is very important that the data are reliable, namely, the data should be as complete and accurate as possible.

Nowadays, trauma registry data are in electronic form, often web-based, and can be local, national, or even international (3). Many trauma registries include at least the variables defined in the Utstein template (4). The Utstein template specifies at least 35 precisely defined core data variables that are collected and entered in the registry in a uniform manner to make different registries more comparable.

Data gathering and assessment processes should be accurate and easily repeatable to consistently produce good quality data. All interpretations could be misleading if data quality is not evaluated. Data of good quality can be achieved only by monitoring and evaluating the entire data-gathering process on a regular basis. Only a few studies have evaluated the data quality of trauma registries, and these studies propose a standardized and reproducible method to evaluate the data quality of different trauma registries (5–8). However, there is so far no standardized method for evaluating or auditing trauma registries (9).

The aim of this study was to internally audit the quality of the Helsinki Trauma Registry (HTR). This was performed by analyzing case and data completeness (whether all necessary cases and their data are collected) and data accuracy and correctness (whether the values within each case are correct or within a tolerable range).

A further aim was to identify possible system problems in patient enrollment, data gathering, and data entry that affect the quality of registry data.

We describe our method of evaluating the quality of the HTR and report the results in a readily comprehensible manner. Based on our experience, we propose a model of what information should be included when reporting the quality and validity of a regional or national trauma registry.

Materials and Methods

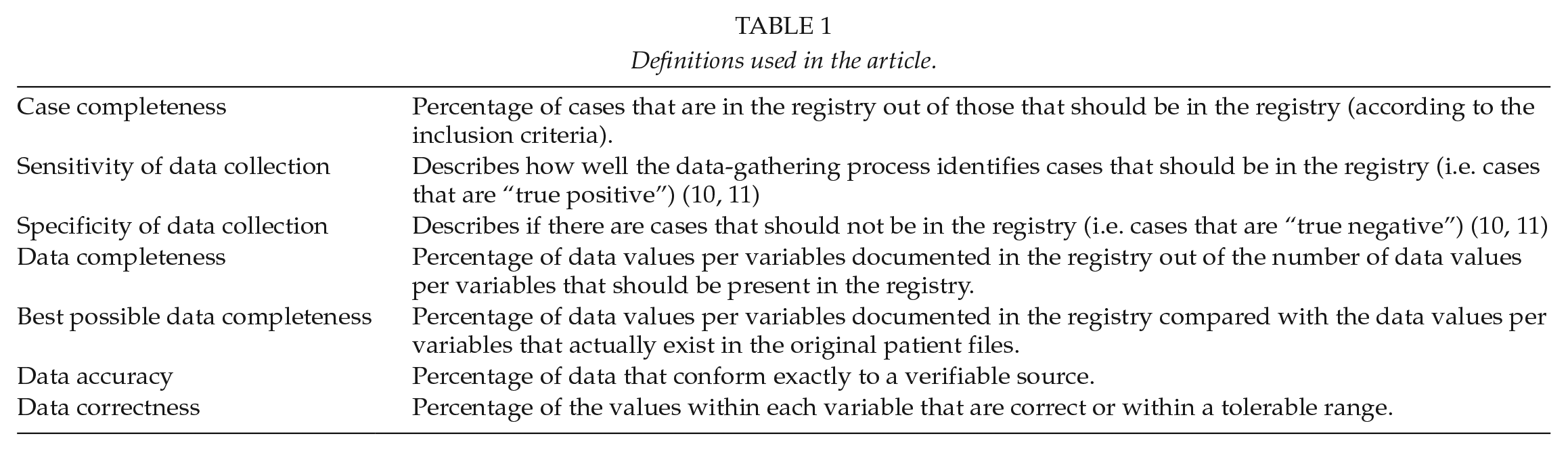

Table 1 describes the definitions used in the text.

Table 1. Definitions used in the article.

Helsinki Trauma Registry (HTR)

The HTR is the trauma registry of the Helsinki University Hospital Trauma Unit. The Helsinki University Hospital is a tertiary trauma center with a catchment area of approximately 1.8 million. The HTR was established in 2006 and includes all New Injury Severity Score (NISS) > 15 trauma patients admitted to the resuscitation bay within 24 h of the injury. Five trained registry coders review and enter the patients into the registry within 3 months of admission using specialized software.

The retrospectively collected data include demographics, injury details, pre-hospital and hospital treatment, and outcome.

Trauma registry data from the year 2013 were re-evaluated. We chose this year because the inclusion process and criteria have not changed since 2013, and the registry coders would most likely not remember the cases they had enrolled 5 years earlier.

We examined data quality in three different parts of the data input process:

Process of including patients in the trauma registry (case completeness)

Four registry coders repeated the process of including patients in the trauma registry in the same way as it was performed originally. Specifically, a registry coder checked the emergency department’s resuscitation bay patient log, from which the basic information (such as cause of the accident, first diagnoses, and treatment plan) of each patient treated in the resuscitation bay can be retrieved. The coders then calculated the NISS for these patients. Patients with NISS > 15 are included in the HTR.
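The inclusion rule can be sketched in a few lines. This is a minimal illustration, not the registry software: it assumes the standard NISS definition (the sum of the squares of the three highest AIS severity scores, regardless of body region). The function names `niss` and `eligible_for_htr` and the example severities are hypothetical, and setting the score to 75 for any AIS 6 injury is a commonly used convention that is assumed here rather than taken from the text.

```python
def niss(severities):
    """New Injury Severity Score from a list of AIS severities (1-6)."""
    if any(s < 1 or s > 6 for s in severities):
        raise ValueError("AIS severity must be between 1 and 6")
    # Assumed convention: any AIS 6 injury gives the maximum score of 75.
    if 6 in severities:
        return 75
    top_three = sorted(severities, reverse=True)[:3]
    return sum(s * s for s in top_three)


def eligible_for_htr(severities, threshold=15):
    """Inclusion criterion stated in the text: NISS > 15."""
    return niss(severities) > threshold


# Hypothetical patient with AIS severities 3, 3, 2 and 1: NISS = 9 + 9 + 4 = 22
print(niss([3, 3, 2, 1]), eligible_for_htr([3, 3, 2, 1]))
```

Because the score depends only on the three most severe injuries, a single missed or over-graded code can move a borderline patient across the inclusion threshold.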

The obtained list of patients was compared with the original HTR list. If there was a discrepancy (either patient missing from the original trauma registry list or patient missing from the new list), these cases were examined together with a trauma surgeon to reach a consensus on whether the patient should be included.

We report all missing patients and the most probable reasons in Supplemental Material 2.

Process of calculating AIS codes (data completeness and correctness)

One trained registry coder recalculated the AIS codes for each injury in all patients. A similar process has been described previously (10). The registry coder and a trauma surgeon evaluated all injuries for which the original and new coding differed.

To make this part of the work feasible, we chose the subgroup of patients with severe chest injury (AIS thorax ≥ 3) for AIS recoding.

We report the data completeness, accuracy, and correctness and the differences between the existing original AIS codes and the newly calculated AIS codes. We categorized the results as follows: AIS codes were correct in the original files (accuracy); AIS codes differed but did not affect AIS severity (correctness); AIS codes differed and affected AIS severity either by over-grading or under-grading severity; AIS codes that were missing; and AIS codes that should not exist (completely wrong diagnosis or extra code that was already included in some other diagnosis).
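The categories above can be expressed as a small comparison routine. The following is a sketch under stated assumptions, not the procedure used in the audit: the function names are hypothetical, codes are assumed to be strings in which the last digit is the severity, and the example codes are made-up placeholders rather than real AIS dictionary entries.

```python
def ais_severity(code):
    """Severity is taken as the last digit of the AIS code string."""
    return int(code.strip()[-1])


def classify(original, recoded):
    """Compare one original AIS code with the audit recode.

    None means that no code exists on that side.
    """
    if original is None and recoded is None:
        raise ValueError("at least one code is required")
    if original is None:
        return "missing"            # injury coded in the audit but not originally
    if recoded is None:
        return "should not exist"   # original code has no counterpart in the audit
    if original == recoded:
        return "accurate"           # identical codes
    diff = ais_severity(original) - ais_severity(recoded)
    if diff == 0:
        return "correct"            # codes differ, severity unchanged
    return "over-graded" if diff > 0 else "under-graded"


# Placeholder code pairs (original, recoded) for illustration only
pairs = [("123456.3", "123456.3"), ("123456.3", "123457.3"),
         ("123456.4", "123456.3"), (None, "123456.2")]
print([classify(o, r) for o, r in pairs])
```

In this sketch, "accurate" codes count toward both accuracy and correctness, while "correct" codes count toward correctness only.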

Process of entering the basic patient parameters into the trauma registry (data completeness, accuracy, and correctness)

One trained registry coder re-entered the 32 trauma registry parameters according to the Utstein template (4). These manually entered parameters were then compared with the original parameters from the trauma registry. The registry coder and a trauma surgeon reviewed all differing values between the original and the new dataset. A consensus was reached to decide which value was correct.

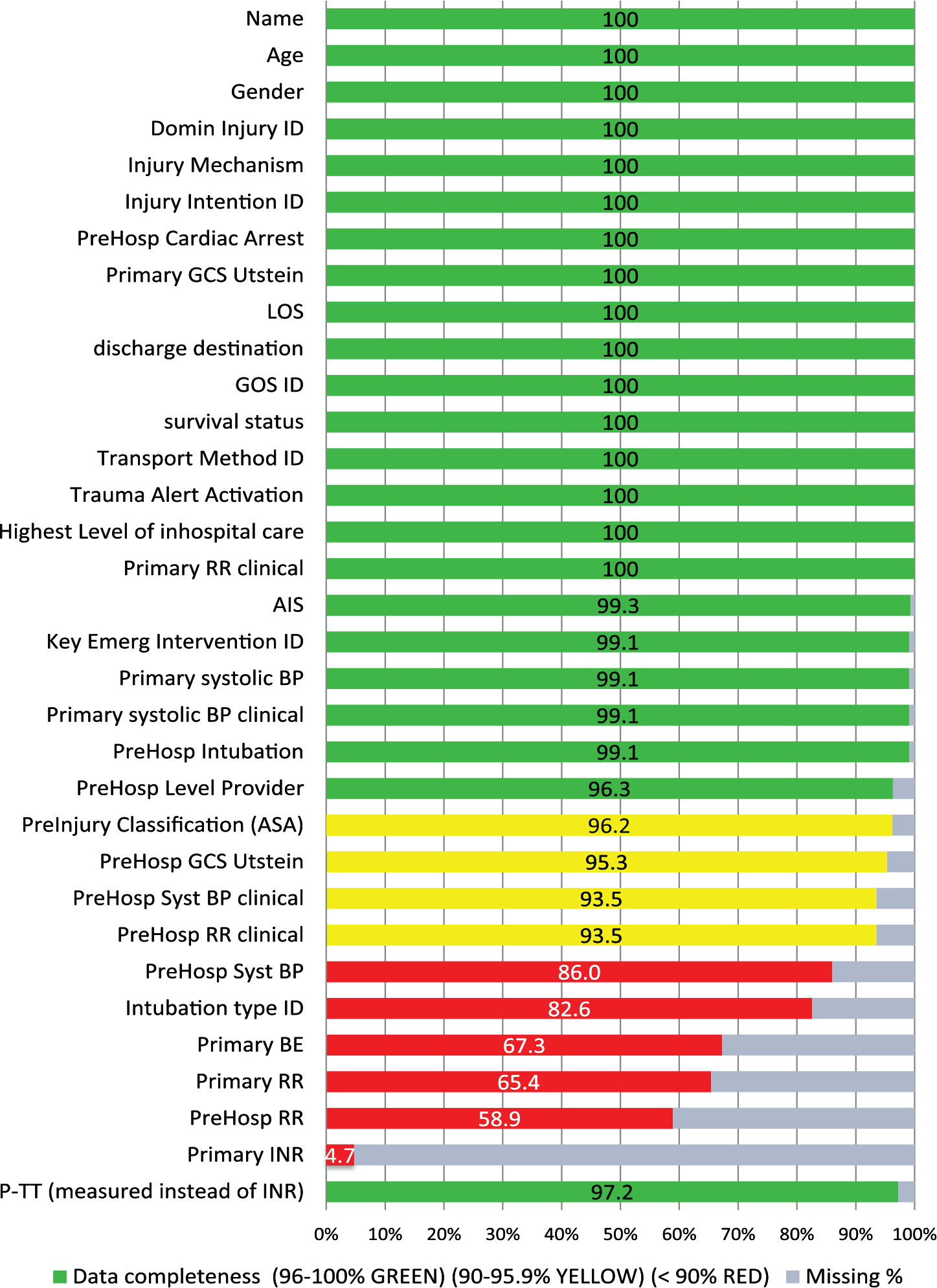

We present data completeness and the percentage of missing information as a simple bar chart, using a traffic-light model with the same color coding that the TraumaRegister DGU® uses in its annual report (11): green (96%–100% completeness), yellow (90%–95.9% completeness), and red (<90% completeness).
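The traffic-light thresholds translate directly into a classification function. This is a minimal sketch; the function name `traffic_light` is hypothetical, and the example inputs are completeness percentages quoted elsewhere in this article (P-TT 97.2%, overall 93.4%, primary INR 4.7%).

```python
def traffic_light(completeness_pct):
    """Map a completeness percentage to the color bands described in the text."""
    if not 0 <= completeness_pct <= 100:
        raise ValueError("completeness must be between 0 and 100")
    if completeness_pct >= 96:
        return "green"   # 96%-100%
    if completeness_pct >= 90:
        return "yellow"  # 90%-95.9%
    return "red"         # below 90%


for value in (97.2, 93.4, 4.7):
    print(value, traffic_light(value))
```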

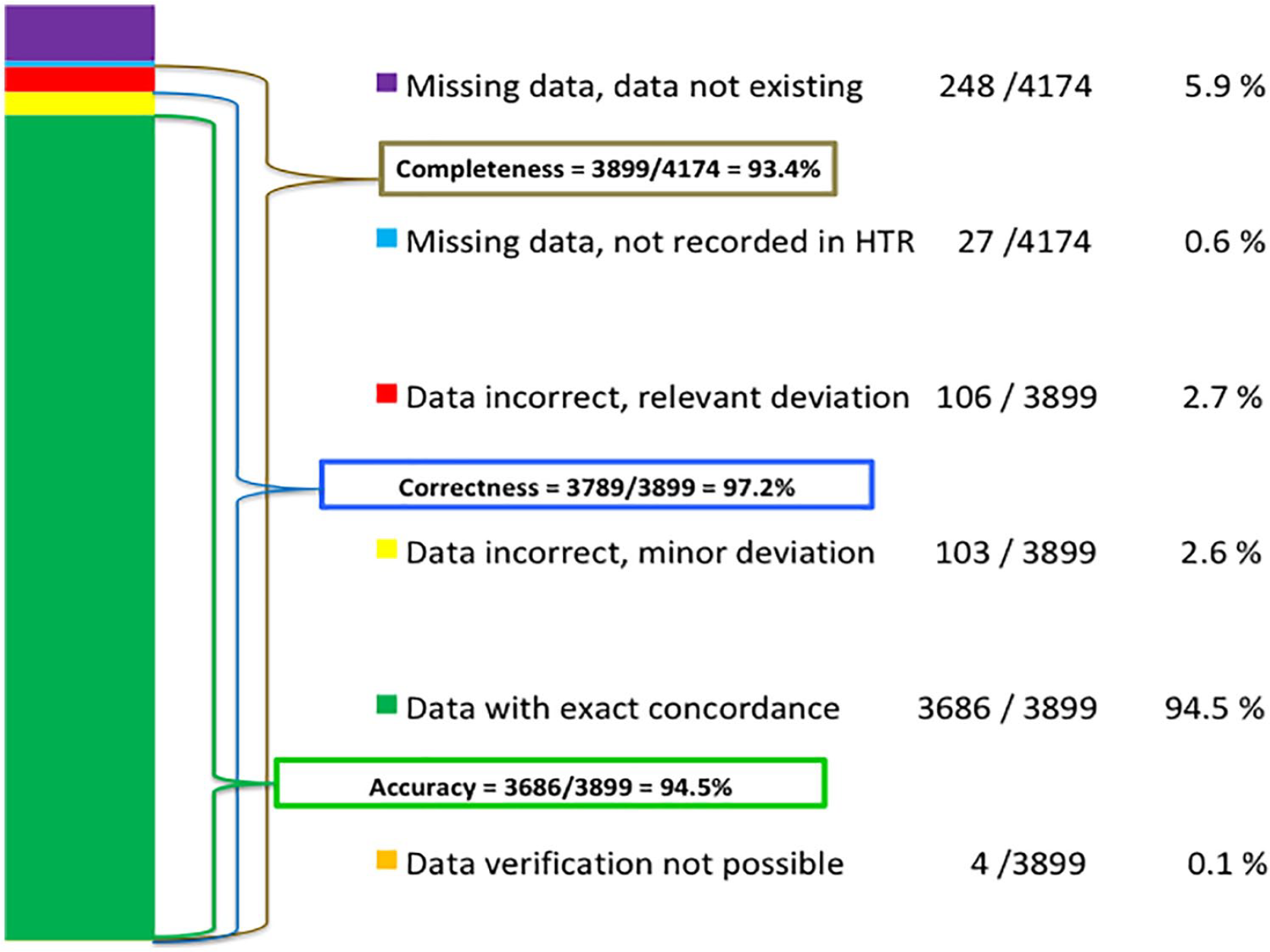

We also present a novel six-category way to show the results of completeness, accuracy, and correctness in a single graph: (1) data verification not possible, (2) data with exact concordance, (3) data with minor deviation, (4) data with relevant deviation, (5) data missing but should have been in the registry, and (6) data not available (because the data did not exist). Minor and relevant deviations are defined in Supplemental Material 1.

We also report the best possible completeness of the data.

Statistics

We calculated case completeness results using raw agreement percentage and Cohen’s κ value. We used percentage and descriptive methods for remaining calculations.

Ethics

The review board of the Helsinki University Hospital approved this study.

Results

Process of Including Patients in the Trauma Registry (Case Completeness)

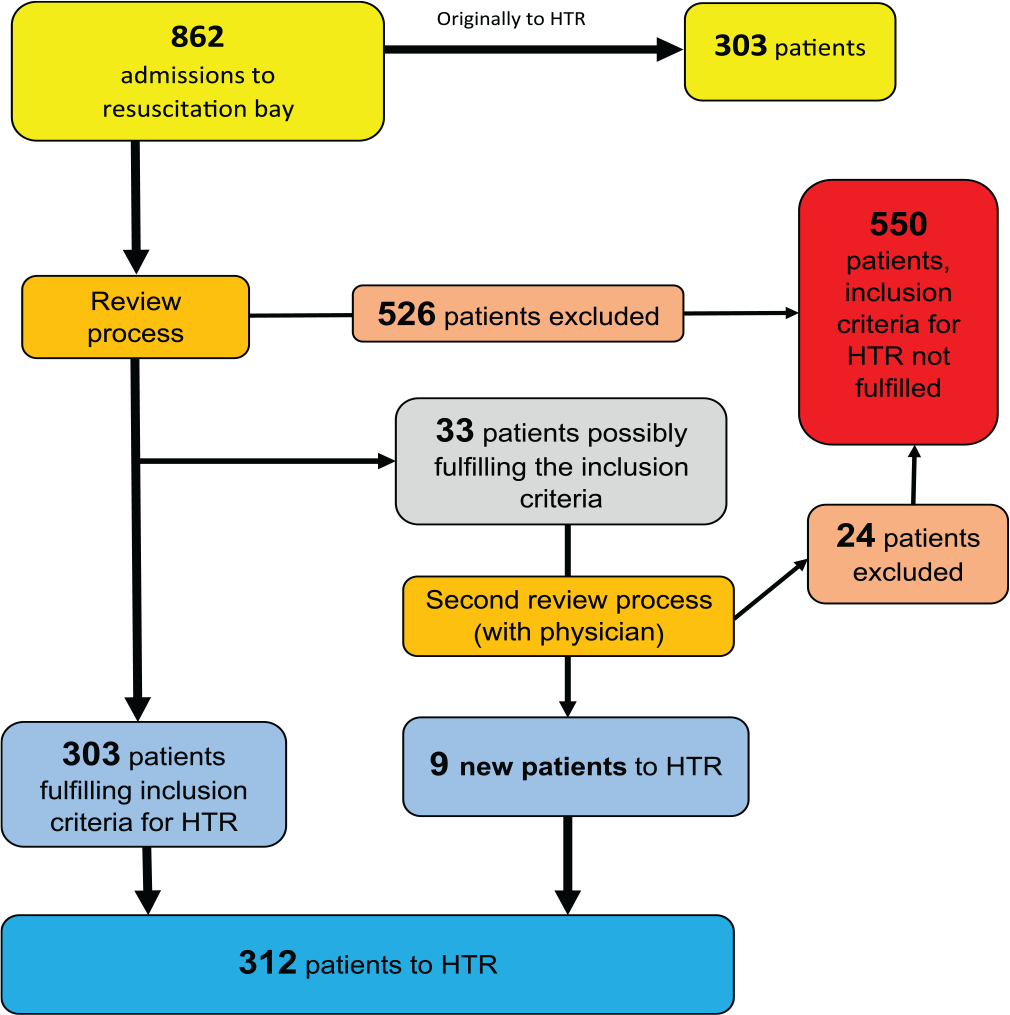

According to our resuscitation bay statistics, 862 patients were admitted to the resuscitation bay and were eligible for the trauma registry inclusion or exclusion analysis. Of these 862 patients, 853 were rated identically (to be included or not to be included in the registry), which yielded a raw agreement percentage of 99.0% and a Cohen’s κ value of 0.98. The nine cases that were rated differently were patients who should have been included in the registry. Since 303 patients were originally included in the registry and 9 patients had been missed, 97.1% (303/312) of the patients who should have been in the registry were present in the registry; this is the case completeness result. We did not identify any patients who were in the registry but should not have been. This yielded a sensitivity of 97.1% and a specificity of 100% for the data-gathering process.
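The figures in this paragraph can be reproduced from the underlying 2×2 table. The sketch below is illustrative: the function name `agreement_stats` is hypothetical, the audit result is treated as the reference standard for sensitivity and specificity, and the count of 550 patients excluded by both ratings is derived from the text (853 identical ratings minus 303 included patients).

```python
def agreement_stats(both_in, audit_only, registry_only, both_out):
    """Agreement between the original registry and the audit for a 2x2 table."""
    n = both_in + audit_only + registry_only + both_out
    registry_in = both_in + registry_only
    audit_in = both_in + audit_only

    # Observed agreement and agreement expected by chance (Cohen's kappa)
    p_observed = (both_in + both_out) / n
    p_expected = (registry_in * audit_in
                  + (n - registry_in) * (n - audit_in)) / n**2
    kappa = (p_observed - p_expected) / (1 - p_expected)

    return {
        "raw_agreement": p_observed,
        "kappa": kappa,
        # Audit result is used as the reference standard (assumption)
        "sensitivity": both_in / audit_in,
        "specificity": both_out / (both_out + registry_only),
    }


stats = agreement_stats(both_in=303, audit_only=9, registry_only=0, both_out=550)
for name, value in stats.items():
    print(f"{name}: {value:.3f}")
```

With these counts, sensitivity and case completeness are the same quantity (303/312), which is why both are reported as 97.1%.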

We show the HTR case completeness flow chart in Fig. 1.

Fig. 1. Case completeness (97.1%) flow chart.

In Supplemental Material 2, we report the injury mechanisms and diagnoses of the patients originally missing from the trauma registry and provide the most probable reasons for their absence.

Recalculating AIS Codes (Data Completeness, Accuracy, and Correctness)

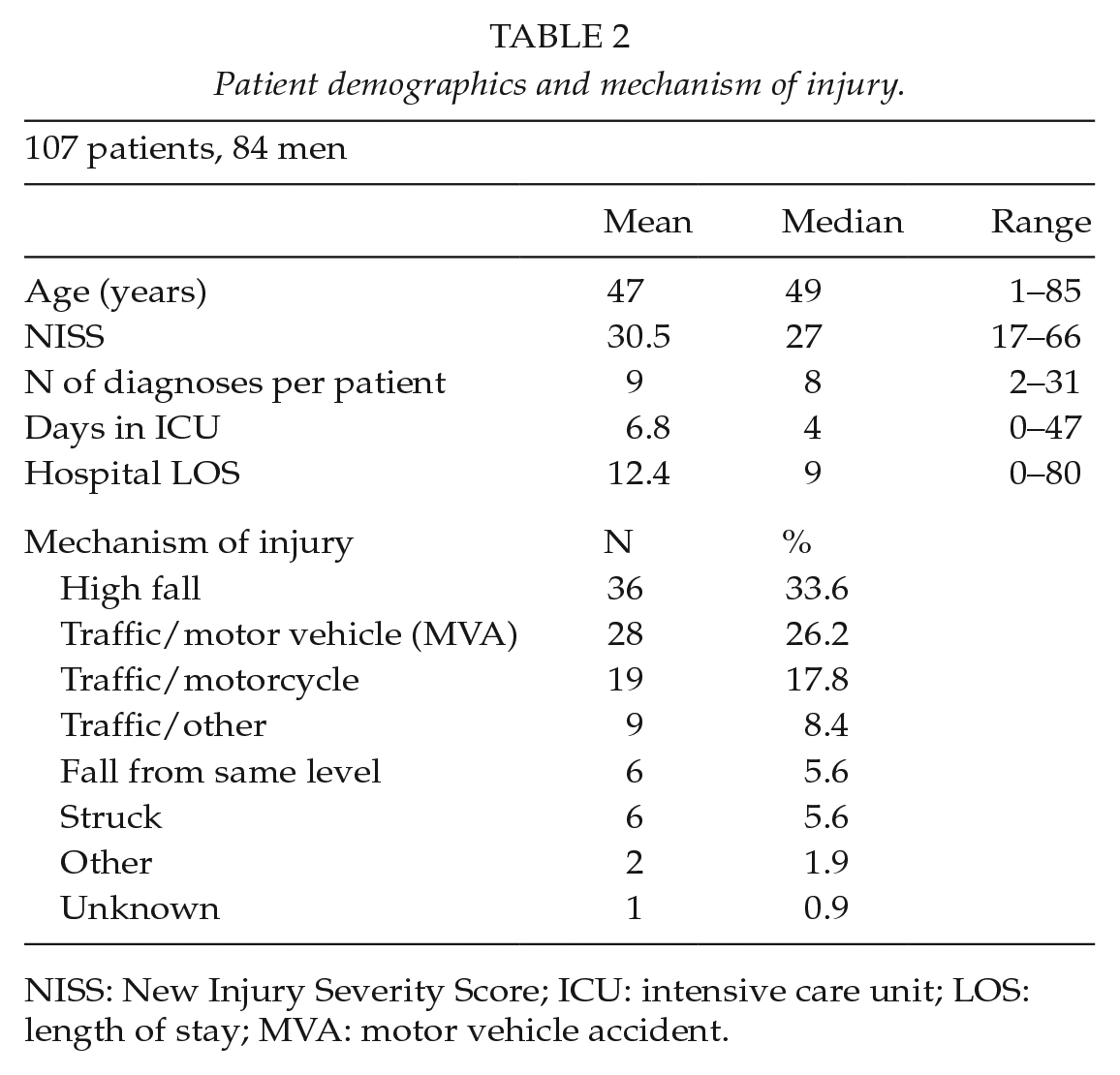

We recalculated the AIS codes of 107 patients with severe thorax injury (AIS thorax ≥ 3). The basic patient demographics and injury mechanisms of these patients are shown in Table 2.

Table 2. Patient demographics and mechanism of injury.

NISS: New Injury Severity Score; ICU: intensive care unit; LOS: length of stay; MVA: motor vehicle accident.

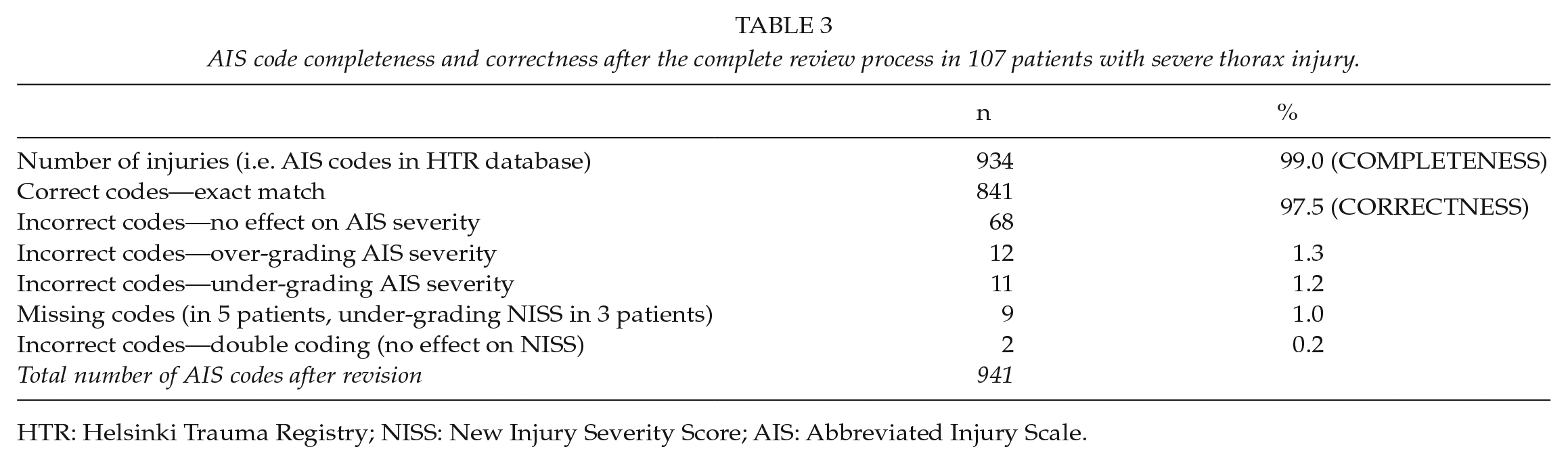

There were 941 AIS codes from these 107 patients. Completeness of the codes was 99.0% (932/941), accuracy 90.0% (841/932), and correctness 97.5% (909/932). The results of data completeness and correctness of AIS codes are shown in Table 3. In 23 diagnoses, the AIS codes differed in severity (i.e. the last number of the seven-digit AIS code was incorrect) and the NISS changed in 12 registry patients. In five patients, the NISS was lower in the original data and in seven patients the score was higher.

Table 3. AIS code completeness and correctness after the complete review process in 107 patients with severe thorax injury.

HTR: Helsinki Trauma Registry; NISS: New Injury Severity Score; AIS: Abbreviated Injury Scale.

Entering the Basic Patient Parameters in the Trauma Registry (Data Completeness, Accuracy, and Correctness)

We assessed 32 Utstein variables from the HTR database. The overall data completeness was 93.4% (3899 values out of 4174 possible). Data completeness was 100% in 16 of the 32 variables, 96.0%–99.9% in seven variables, 90%–95.9% in three variables, and <90% in six variables (Fig. 2). In most cases, we measure P-TT (thromboplastin time) instead of INR (international normalized ratio). Therefore, we also show the completeness result for P-TT in Fig. 2.

Fig. 2. Data completeness of Utstein variables.

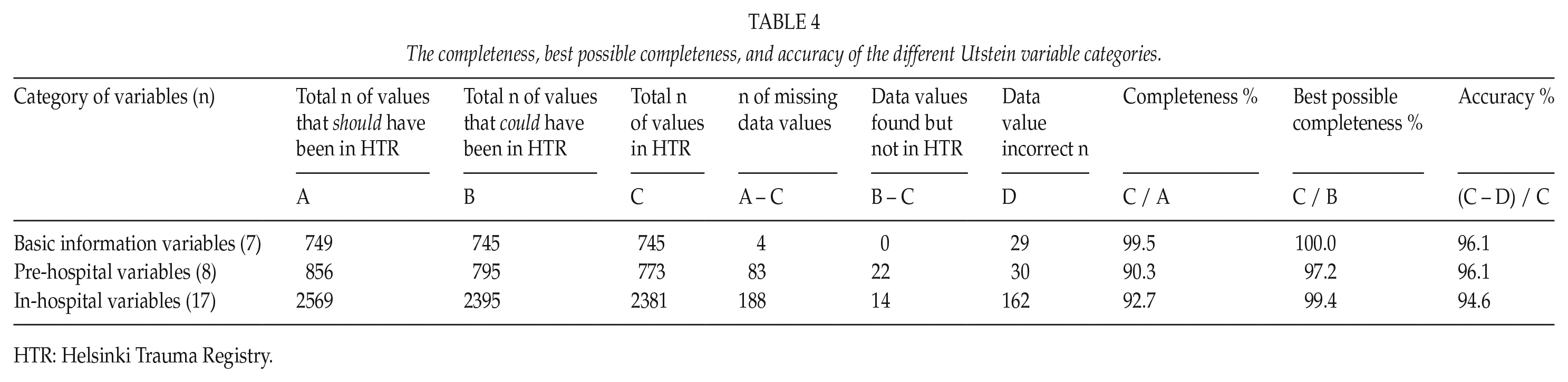

The data accuracy was 94.6% (3899 recorded values, 209 with deviation, and 4 that could not be verified). Nineteen of the 32 evaluated variables had accuracy >96%, nine variables had 90%–95.9% accuracy, and four variables had <90% accuracy. Data correctness was 97.2% (3899 recorded variables, 106 with relevant deviation, and 4 that could not be verified). We also show the data completeness, accuracy, and correctness together in Fig. 3.
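As a worked check, the three headline percentages for the Utstein variables follow from four counts. This is a minimal sketch: the helper `pct` is hypothetical, and the numerators 3690 and 3789 are the ones given in the Abstract.

```python
def pct(numerator, denominator):
    """Percentage rounded to one decimal place."""
    return round(100 * numerator / denominator, 1)


possible = 4174          # values that could have been recorded
recorded = 3899          # values present in the registry
exact = 3690             # recorded values matching the patient charts exactly
within_tolerance = 3789  # exact matches plus minor deviations

print("completeness:", pct(recorded, possible))         # 93.4
print("accuracy:", pct(exact, recorded))                # 94.6
print("correctness:", pct(within_tolerance, recorded))  # 97.2
```

Note that completeness uses all possible values as its denominator, whereas accuracy and correctness are calculated only from the values that were recorded.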

Fig. 3. Data completeness, accuracy, and correctness of Utstein variables.

Table 4 presents the completeness, best possible completeness, and accuracy of the different Utstein variables when categorized as basic information variables, pre-hospital variables, and in-hospital variables.

Table 4. The completeness, best possible completeness, and accuracy of the different Utstein variable categories.

HTR: Helsinki Trauma Registry.

A complete table with accuracy and correctness and definitions for the tolerance of minor deviations is found in Supplemental Material 1.

The overall process of the patient treatment chain, HTR data gathering, and validation are summarized in Supplemental Material 3.

Discussion

In this study, we describe a method for performing an internal audit of a trauma registry to determine whether data extracted from the registry are reliable. We found that the case completeness, data completeness, data accuracy, and data correctness of the HTR are excellent.

Process of Including Patients in the Trauma Registry (Case Completeness)

One challenge with a trauma registry (as well as in any medical registry) is to ensure that all patients who should be included are actually included. The inclusion process should therefore be easily repeatable and automated if possible. Some registries include all patients treated in a specified location (such as hospital emergency room (ER) or intensive care unit) and some include all patients with certain diagnoses (such as arthroplasty registries or hip-fracture registries); this kind of inclusion can be performed automatically from administrative data. Although electronic data gathering might be the best method regarding case completeness (12), it is not always possible for trauma registries for severe trauma, as injury severity must often be determined before inclusion criteria are fulfilled, and this requires human effort. Because HTR was originally planned only for severe trauma, we cannot include all patients treated in the hospital ER in the HTR due to variation of the injuries treated in the ER. Therefore, we have a manual enrolling system and registry coders manually verify the inclusion criteria of every single patient eligible for the registry. This can lead to problems in efficiently enrolling patients in the registry if the inclusion criteria are too complex (6, 13).

The case completeness of the HTR was 97.1% in our audit. There are few reports of case completeness of a trauma registry for severe trauma. Ali reported a 60.5% case completeness of the Navarre Trauma Registry in Spain (14). To our knowledge, this is the only study that reported case completeness of a trauma registry for severe trauma.

Out of the nine patients missing from our registry, six patients had traumatic brain injury (TBI). In our regular educational meetings, we have concentrated more on TBI cases and coders also perform more crosschecks on patients with TBIs. We believe that this will lead to a more precise evaluation of those patients with TBI.

There was only one death among the patients who were absent from the registry and this would not have affected the overall standardized mortality, which is often compared between registries (15–17).

Recalculating the AIS Codes (Data Completeness and Correctness)

An audit of a registry requires a comprehensive sample from that registry. There are several options for choosing the sample. These include choosing all consecutive patients from a sufficiently long time period (such as 1 year), using a computer-based sample extractor (18), or choosing a certain patient group that is representative of the registry, such as patients with severe chest injury in our case. We know from previous work that patients with severe chest injuries have on average 5.5 injuries around the body (19) and often also bilateral injuries. This is sometimes a challenge for AIS coding, as each injury needs a separate AIS code. For example, a bilateral femoral shaft fracture yields two AIS codes, each of which can separately affect the NISS.

AIS coding can be complex and errors occur. AIS coding courses are therefore essential for coding diagnoses correctly (20–22). We also support “the rule of AIS coding,” in which all trauma registry coders should take specific courses and become certified AIS coders before starting to code injuries.

Because of possible calculation errors, we also recalculated the NISS for all patients whose AIS codes differed after recoding. After recalculation, the new score was higher in five of the 107 patients and lower in seven. Of those with a higher score, three were due to a missing code. In those with a lower new NISS, the score was still at least 16 after recalculation; thus, no patient had to be excluded from the registry because of the recalculation. The basic principle of AIS coding is “if in doubt, code down,” which was not followed in seven patients in our internal audit. This has been discussed with the coders to avoid over-grading.

Entering Basic Patient Parameters in the Trauma Registry (Data Completeness, Accuracy and Correctness)

In previous studies, trauma registry data completeness and accuracy have been excellent (23), quite poor (24), or variable depending on the variables evaluated (10). In the HTR, data completeness was good (90%–95.9%) or excellent (96%–100%) in 27 out of 32 variables. The poorest data completeness was in primary INR (4.7%). This is because in our institution P-TT is measured more regularly than INR. For P-TT, data completeness was 97.2% (104/107); two values were completely missing and one value was not recorded in the HTR. If the P-TT results were used in place of the INR results, the overall data completeness would have been 95.8% instead of 93.4%.

There were five pre-hospital variables among the nine poorest variables in the data completeness category (Fig. 2). Accordingly, we should consider if it is important to collect these values and if the Utstein criteria should be re-evaluated as well. Key variables such as age, gender, injury mechanism, primary Glasgow Coma Scale (GCS), length of stay, AIS points, and primary systolic blood pressure were documented in more than 99% of cases.

In most cases, the reason for missing values in the HTR data was that the values were not documented in the original patient files. This is why the “raw” data completeness does not describe the documentation process of the registry. However, for internal audit purposes we believe that “best possible completeness” (Table 1) is a good way to describe how well the values that exist in the patient files are documented into the registry. This describes the work of registry coders instead of all possible reasons why some values might be missing from the registry, which is described by the “raw” data completeness.
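The difference between the two measures can be illustrated with the P-TT figures quoted earlier (107 patients, 104 values recorded, two values not measured at all, one value available but not recorded). The exact definition of “best possible completeness” is given in Table 1, which is not reproduced in the text, so the formula below is an assumed reading: values that never existed in the patient files are removed from the denominator. The function names are hypothetical.

```python
def raw_completeness(recorded, eligible):
    """Share of all eligible values that are present in the registry."""
    return 100 * recorded / eligible


def best_possible_completeness(recorded, eligible, absent_from_charts):
    """Share of the values that exist in the patient files and are in the registry.

    Assumed formula: values that were never documented in the charts
    cannot be entered by a coder and are excluded from the denominator.
    """
    return 100 * recorded / (eligible - absent_from_charts)


# P-TT example from the text: 104 recorded, 2 never measured, 1 missed by the coder
print(round(raw_completeness(104, 107), 1))
print(round(best_possible_completeness(104, 107, 2), 1))
```

Under this reading, the gap between the two numbers is attributable to documentation in the patient files rather than to the work of the registry coders.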

Data accuracy was calculated based on the variables that had data. We defined accuracy as trauma registry data that precisely matched the data in patient charts. The overall accuracy of the HTR was 94.6%.

Correctness is defined here as the proportion of values that either precisely match the original patient file values or are within a tolerable range. This range was predefined (Supplemental Material 1). Data correctness describes better than pure accuracy how reliable the data extracted from the registry are for decision-making. For example, if a blood pressure value is 120/90 mmHg in the patient file and is documented as 125/90 mmHg in the registry, this difference is not clinically relevant and thus the value can be classified as correct (but not accurate) without weakening the reliability of the registry. The overall correctness of the HTR was 97.2%. Therefore, in our opinion, the reliability of the registry data is excellent.

Some reasons for missing or incorrect data

For missing values, it may be that the value was not documented at all, the registry coder did not find the value, or the value was originally handwritten and was too unclear to be interpreted correctly. Incorrect values may be due to typographical errors, or the registry coder may have selected the incorrect value from patient charts, calculated the value incorrectly (AIS, Glasgow Outcome Scale [GOS], GCS), or misinterpreted the patient charts. The reason for incorrect data is thus often human error. These mistakes could be avoided if the values could be gathered automatically from structured electronic patient charts. In the future, all trauma registries should strive for this to make their data more reliable. However, if the original data entered on the patient charts were incorrect, this would also make the trauma registry unreliable.

Furthermore, if the data entry platform is very complex it can make the work of the registry coder more challenging and prone to error. In planning a new trauma registry, this should be addressed to avoid difficulties in the data entry process itself. Possible ways to avoid mistakes include alarms (if the coded number is impossible, such as in typographical errors) or value limits (thus preventing entry of values that could not exist for that variable). Dropdown menus may make the work of coders easier.
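A value-limit check of the kind mentioned above could look like the following sketch. It is not a description of the HTR platform: the variable names, the `check_value` function, and the limits for blood pressure and age are hypothetical; only the GCS range of 3 to 15 is a fixed property of the scale.

```python
# Hypothetical limits for illustration; only the GCS range (3-15) is fixed by the scale.
LIMITS = {
    "gcs": (3, 15),
    "systolic_bp_mmhg": (0, 300),
    "age_years": (0, 120),
}


def check_value(variable, value):
    """Return None if the value is within limits, otherwise a warning message."""
    low, high = LIMITS[variable]
    if low <= value <= high:
        return None
    return f"{variable}={value} is outside the allowed range {low}-{high}"


print(check_value("gcs", 14))                  # within limits
print(check_value("systolic_bp_mmhg", 1250))   # likely typographical error
```

Whether an out-of-range value should be blocked outright or only trigger an alarm is a design choice for the registry platform.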

The strength of this audit study was the number of patients (862) for the case completeness and the fact that case completeness was reported. Another strength is the large number of data fields (3899) for data completeness and accuracy. We also reported the main results as percentages, which are easily understandable by those not familiar with more complex statistics.

One weakness of this study is that a single coder performed most of the AIS code recalculations. Although this may have introduced human error, we carefully verified all discrepancies detected when comparing the new codes with the originals, and consensus was reached together with the trauma surgeon.

Lessons learned

In this audit of the HTR, we understood that it is vitally important to regularly perform internal quality checks.

Even though our data completeness was excellent, we were able to identify, for example, TBI as an injury group that is prone to errors.

The key value is the AIS code; difficult patient cases should be discussed among registry coders to ensure that the coding is as uniform as possible and adheres to the guidelines.

We also noticed that a large part of the missing data was due to poor documentation of pre-hospital values of individual patients. We must therefore carefully consider which data are necessary for the registry. There should be a need for each data variable: every data point that is collected should be important either for one of our predictive models or for analyzing patient treatment. Therefore, the Utstein criteria should also be re-evaluated in the near future.

We have also improved the platform for data entry according to the needs of the registry coders.

Due to various reasons, data completeness does not always describe the reliability of the registry. In an audit process, “best possible data completeness” should be used instead.

Conclusions

In the present study, we validated the HTR database and found the HTR to be excellent with respect to case completeness, data completeness, data accuracy, and data correctness. Therefore, the data can reliably be used for research, analyses of patient treatment, and administrative purposes.

The completeness and accuracy of trauma registry data should be systematically and regularly reviewed and reported. Case completeness, data completeness, data accuracy, and data correctness are the variables that should be included in a trauma registry validation process.

The method used here should be easily repeatable and we suggest that trauma registries use this or similar methods to regularly verify the quality of their registry.

Supplemental Material

Supplemental material, Supplement_1._Utstein_results_accuracy_and_correctness for How to validate data quality in a Trauma Registry? The Helsinki Trauma Registry internal audit by M. Heinänen, T. Brinck, R. Lefering, L. Handolin and T. Söderlund in Scandinavian Journal of Surgery

Supplemental material, Supplement_2._Patients_missing_from_the_trauma_registry for How to validate data quality in a Trauma Registry? The Helsinki Trauma Registry internal audit by M. Heinänen, T. Brinck, R. Lefering, L. Handolin and T. Söderlund in Scandinavian Journal of Surgery

Supplemental material, Supplement_3._HTR_inclusion_process_and_validation_process_graph for How to validate data quality in a Trauma Registry? The Helsinki Trauma Registry internal audit by M. Heinänen, T. Brinck, R. Lefering, L. Handolin and T. Söderlund in Scandinavian Journal of Surgery

Supplemental material, Supplement_4._Recommendations for How to validate data quality in a Trauma Registry? The Helsinki Trauma Registry internal audit by M. Heinänen, T. Brinck, R. Lefering, L. Handolin and T. Söderlund in Scandinavian Journal of Surgery

Footnotes

Acknowledgements

We thank our registry coders Satu Partanen, Pirkko Tonder, Markku Kytönen, Iiu Laitinen, and Marja-Terttu Bergman for their excellent work in accurately recording data for the HTR. We thank Kirsi Willa for managing the databank of the HTR and Derek Ho for language revision of this article.

Author Contributions

Study design: All authors

Data collection: M.H., R.L.

Data interpretation: All authors

Writing the manuscript: M.H.

Critical revision of the manuscript: All authors

Study supervision: T.S.

Declaration of Conflicting Interest

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
