Abstract

Aims and objectives:

This study investigates the collocational competence of heritage language (HL) speakers and evaluates the feasibility of using automatic assessment to distinguish language proficiency at the collocational level. We hypothesize that higher collocational scores in learner texts indicate more advanced collocational proficiency.

Methodology:

The study utilizes t-score metrics to assess the significance of bigrams. The protocol involves calculating the strength of each collocation in a large L1 reference corpus, extracting bigrams from HL data, and comparing them with the L1 data. If a match is found, the HL bigram is assigned the corresponding L1 collocation t-score.

Data and analysis:

Research data comprise 118 essays (32,225 tokens) from the National Post-Secondary Russian Essay Contest, USA. The study also utilizes the L1 reference corpus Araneum Russicum and three validation datasets: (a) L1 collocations with the highest t-scores from Araneum Russicum, (b) randomly generated bigrams with low t-scores, and (c) L1 collocations from literary fiction.

Findings:

The proposed method is evaluated against three validation datasets and shown to differentiate them adequately. However, the main findings indicate that t-scores do not effectively differentiate proficiency levels among HL learners. The similarity in t-score distributions between HL learner texts and L1 literary texts highlights the challenges of using collocations as a standalone marker of proficiency.

Originality:

This study proposes and validates a new method for automatically assessing collocational proficiency. It emphasizes the challenges of using collocations as a definitive marker of HL proficiency but also highlights the benefits of this analysis for L1 language learning and research.

Significance/implications:

Automatic collocational analysis shows potential for language proficiency assessment and for developing educational programs to enhance students’ collocational knowledge. By advancing our understanding of collocational patterns and their implications for language use, this study contributes to the development of more effective language assessment tools, fostering greater proficiency and fluency among language learners.

Introduction

Formulaic language, an umbrella term that describes various constructs such as collocations, colligations, multiword units, formulaic sequences, lexical bundles, and others, has been a topic of a wide range of research on language acquisition and second language (L2) pedagogy, especially in the past two decades (Durrant & Mathews-Aydınlı, 2011; Granger, 2021; Wray, 2002). Formulaic language has been explored in relation to L2 writing quality (Jarvis et al., 2003; Lee et al., 2021), first language (L1) background of the learners (Appel & Murray, 2020; Paquot, 2013), and learner proficiency (Granger & Bestgen, 2014; Paquot, 2019). Recognizing the role of formulaic language in evaluating general language proficiency, the field of language assessment has begun to incorporate different measures of formulaic language into various assessment protocols (Erman et al., 2016; Howarth, 1998).

With the growing availability of language corpora and computational tools that can successfully measure different aspects of formulaicity, a greater number of works have explored the potential of automated evaluation of formulaic language as a measure of language proficiency. The majority of these studies, however, focus on L2 English, with a smaller number of notable exceptions engaging with other L2s, such as French (Vandeweerd, 2019; Vandeweerd et al., 2021), Spanish (Vincze et al., 2016), Turkish (Treffers-Daller et al., 2016), or Italian (Siyanova-Chanturia, 2015). This study aims to contribute to this ongoing research agenda by exploring the feasibility of using collocations, that is, non-stochastic co-occurrences of lexical items, as a metric of formulaic language in automatic assessment of language proficiency in learner Russian.

In addition, we expand the research agenda to include a special group of learners, namely, heritage learners of a language. Heritage language (HL) is a language acquired from birth or a very early age at home and/or in the community but in the context of another societally dominant language, which significantly limits the speaker’s experience with the language and creates a unique language developmental trajectory (Montrul, 2015). As young adults, some of these HL speakers, wishing to (re)learn and improve their HL in a formal setting, find their way to the L2 classroom (Montrul, 2010). Comparing the linguistic knowledge and language development of L2 and HL learners is a worthy pursuit, from both theoretical and pedagogical perspectives. While the field of HL studies has devoted much effort to investigating the differences and similarities of phonological, morphological, and syntactic features of the two groups of learners, their phraseological abilities (collocational abilities, in our case) remain relatively new terrain.

In our current study, we attempt to address this gap in research by drawing upon a learner corpus of Russian that comprises texts authored by Russian HL learners with English as a dominant language at various proficiency levels of Russian. The learner collocations are evaluated against the reference datasets, as well as in relation to each proficiency level assigned to the learner texts, to address the general question of whether HL learner proficiency can be successfully indexed by collocational measures.

Two approaches to collocations

The study of collocations has been approached through various theoretical lenses, largely falling into two primary approaches: the significance-oriented (or phraseological) approach and the statistically oriented approach. The former, rooted in the tradition of phraseology within lexicology and lexicography, emphasizes the semantic and pragmatic aspects of collocations (Mel’čuk, 2023). According to this approach, collocations are viewed as constrained linguistic signs, known as phrasemes, where the selection of one element depends on others within the combination. For example, phrases like English heavy rain or Russian silʹnyj doždʹ (literally “strong rain”) are considered collocations because of their semantic values. This approach highlights the semantic and formal constraints inherent in collocational usage, offering insights into the linguistic and pragmatic aspects of phraseology.

In contrast, the statistically oriented approach, predominantly developed within corpus linguistics, focuses on the frequency-based identification of collocations without preset criteria for formulaicity (Biber, 2009; Brezina et al., 2015; Evert, 2008). Within this paradigm, collocations are understood as statistically significant co-occurrences; for instance, expressions like in the context of or I don’t think he are considered collocations based on their frequency in corpora, regardless of their grammatical structure or semantic composition.

The difference between the two approaches can be illustrated by two expressions, fast food and fast train. The former undergoes a clear semantic shift, generally meaning “processed food,” whereas the latter preserves its original meaning while disallowing such alternatives as *quick train. Despite the apparent differences, these instances converge on recognizing usage frequencies as crucial for identifying fixed expressions. Moreover, through frequent usage, the second expression could develop a shift in meaning as well. We believe that both approaches share common ground in acknowledging the psycholinguistic validity of collocations and their role in idiomatic language production and comprehension.

In our study, we adopt a statistically based, corpus-driven approach to collocational analysis, leveraging the advantages it offers in terms of methodological simplicity and suitability for automated language assessment algorithms. By employing this approach, we aim to contribute to the advancement of language proficiency assessment methods and pave the way for future research in collocational analysis and its applications in linguistic research and language education.

Collocations in language learning

Serving as “important building blocks in discourse” (Biber, 2009, p. 284), collocations play an essential role in learner language; as such, they have garnered significant attention in language acquisition research. Previous studies have investigated various aspects of collocational knowledge of L2 speakers, including the influence of L1 on L2 formulaic expressions (Appel & Murray, 2020; Nesselhauf, 2003; Paquot, 2013), the impact of instruction and exposure on collocational competence (cf. Erman & Lewis, 2022; Paquot, 2019; Szudarski, 2012; Treffers-Daller et al., 2016), and the correlations between collocational knowledge and writing quality (Jarvis et al., 2003; Lee et al., 2021) as well as general proficiency levels (Erman et al., 2016; Granger & Bestgen, 2014; Paquot, 2019; Vandeweerd et al., 2021). Such correlations between formulaic language use and proficiency levels are not always straightforward; for example, Siyanova-Chanturia and Spina (2020) examined the development of phrasal vocabulary in L2 learners across different proficiency levels, revealing that higher proficiency and greater exposure to the L2 do not necessarily result in more idiomatic or target-like output. Their findings suggest that learners may increasingly rely on low-frequency combinations that are less associated or mutually attracted, which complicates the relationship between proficiency and formulaic language use.

It is important to note that the relationship between collocational proficiency, other learner skills, and textual variables is complex and nuanced, defying straightforward assumptions of direct correspondence. For example, Crossley and Salsbury’s (2011) study of the development of collocational skills in the oral production of L2 speakers of English showed mixed results. Specifically, the researchers utilized a frequency-based measure to track the use of bigrams (i.e., combinations comprising two words) in the speech of the learners over the course of a year. By comparing the overlap between the normalized counts of bigrams in the L2 speaker texts against the L1 reference corpus, the researchers found that half of the study participants showed stable growth in the number of native-like collocations, while other learners exhibited either a non-linear progression toward more standard-like use of bigrams or no growth in frequencies of native-like bigrams at all. Adding a collocational association measure to complement a frequency-based collocational measure, Bestgen and Granger (2014) analyzed growth in collocational knowledge in written speech of L2 English learners. This study found a more stable pattern of development over time with a longitudinal decrease in the use of collocations made up of high-frequency words that are less typical of native writers. The researchers also found a positive correlation between the mean scores on the association measure of the bigrams used by L2 writers and the overall quality of the essays, and a negative correlation between the proportion of bigrams that were absent in the reference corpus and the quality of the texts.

Using a more fine-grained approach to operationalizing collocations and a stricter operationalization of proficiency, Paquot (2019) investigated the feasibility of using measures of phraseological complexity to describe texts by French learners of English at different proficiency levels (B2, C1, and C2 as determined by the Common European Framework of Reference for Languages (CEFR) scale). Focusing on three-word collocations with grammatical relations (adjectival modifiers, adverbial modifiers, and verb + direct object), the author measured the phraseological diversity of the units. The study found that phraseological complexity measures, as opposed to lexical ones, discriminated between the proficiency levels, as strength of association scores were found to “increase systematically from B2 to C1 and C2” (Paquot, 2019, p. 131). Moreover, the “verb + direct object” structures showed significant differences between the two advanced levels, which may indicate that this type is “difficult to master even at more advanced proficiency levels” (p. 138). Similar conclusions were drawn from a study that involved a different L2, French. In a partial replication of Paquot’s (2019) study, Vandeweerd et al. (2021) investigated how phraseological complexity of L2 French written texts compares across proficiency levels. The study found a significant increase in the average score that specifically measures the strength of association of phraseological units, including direct objects and the frequency-based score of adjectival modifiers across proficiency levels. This study provided a cross-linguistic validation for earlier studies and further highlighted the importance of using phraseological measures as indices that successfully track growth in proficiency of L2 learners of various L2s.

The studies above, inter alia, highlight that tracking changes in frequency and association strength of formulaic expressions adds to our ability to describe proficiency levels with much nuance and detail, significantly contributing to the better established lexical and grammatical measures. While findings reveal variability in learners’ development of collocational patterns, overall, results show a positive correlation between frequency and/or collocational association strength of collocations and proficiency, especially when research design accounts for the types of collocations studied.

With regard to the Russian language, research on collocational abilities of language learners remains meager. In one recent study, Eremina (2020) utilized the tagged parts of the Russian Learner Corpus (Rakhilina et al., 2016), using the error tag “Idiom” that marks infelicitous multiword expressions. The extracted infelicitous expressions were categorized into two main types, structural and semantic, and the subtypes were then analyzed further, with hypotheses offered on the nature of each error. Although the study does not venture to implement any statistical procedures, it lays the foundation for subsequent potential statistical analyses of various types of phraseological expressions in the language of L2 learners of Russian. Another study focused specifically on HL learners (Kopotev et al., 2024). This study analyzed collocations in three corpora of narratives, representing the oral language of Russian HL speakers from three different dominant-language backgrounds, namely, German, Finnish, and American English. All bigrams extracted from the heritage corpora were compared against the bigrams of the Russian National Corpus (Plungian, 2008); the unattested bigrams were then manually categorized based on a taxonomy that specified the nature of non-standard collocations (dominant language transfer, semantic extension, amalgam, etc.). The authors concluded that, compared with L1 speakers, the HL speakers employed fewer probabilistic strategies and that their active knowledge of and access to ready-to-use multiword units are restricted.

A computational approach to collocation assessment was taken up in Kopotev et al. (2023), which explored written L2 Russian. In this study, the data collected in an immersive intensive Russian language program in the United States were first subjected to proficiency rating based on the ACTFL Proficiency scale; based on these ratings, four general proficiency groups were created. The researchers extracted all bigrams from the learner texts and ranked the L2 collocations against the collocational strength of collocations extracted from a reference L1 corpus, with the assumption that a greater number of bigrams in an L2 text and higher collocational scores associated with them indicate a more proficient learner. The study’s results suggested that these measures helped distinguish between proficiency levels as their values varied significantly across the four proficiency levels. In addition, the association values of the learner bigrams developed linearly upward, indicating a fairly uniform development from one proficiency level to another.

With only a handful of studies examining the Russian learner language from the perspective of collocational patterns, many questions remain. In the current study, we aim to contribute to this line of inquiry by applying computational methods similar to those used in Kopotev et al. (2023) and expanding their research questions to include the HL learner group.

Heritage data analysis

Data

The learner data are drawn from a corpus of essays collected in 2012 through the annual National Post-Secondary Russian Essay Contest organized by the American Council of Teachers of Russian. Hundreds of students, representing 30 to 40 US universities and colleges, voluntarily participate in the contest each year. The participants represent both the L2 “traditional” learners and HL learners of Russian; the information on the language learning history is collected by the Contest organizers and is carefully marked on the essays (in fact, learners from the two language learning backgrounds, L2 and HL, compete in separate categories). While we acknowledge that HL learners are known to be an extremely heterogeneous group when it comes to their language learning history and the resulting proficiency, our goal is not to track their developmental trajectory diachronically; instead, we aim to assess their current abilities at the synchronic level vis-à-vis their objectively measured proficiency level. All essay writers, regardless of their proficiency level or year of study, are given the same writing prompt; in 2012, when the data were collected, the prompt read “What is a friend?” The participants were not instructed to adhere to any specific writing genre, but the nature of the prompt led contestants to produce short texts with elements of narration and description. All participants wrote their essays by hand at approximately the same time (between late January and early February 2012) at their universities under the supervision of a proctor. Participants were given 60 minutes and were not allowed to use dictionaries, computers, or any other resources. After the award cycle was completed, the anonymized essays were transferred to researchers for processing and analysis (for more information on the corpus, see Kopotev et al., 2023). Two examples of the essays can be found in Appendix 1.

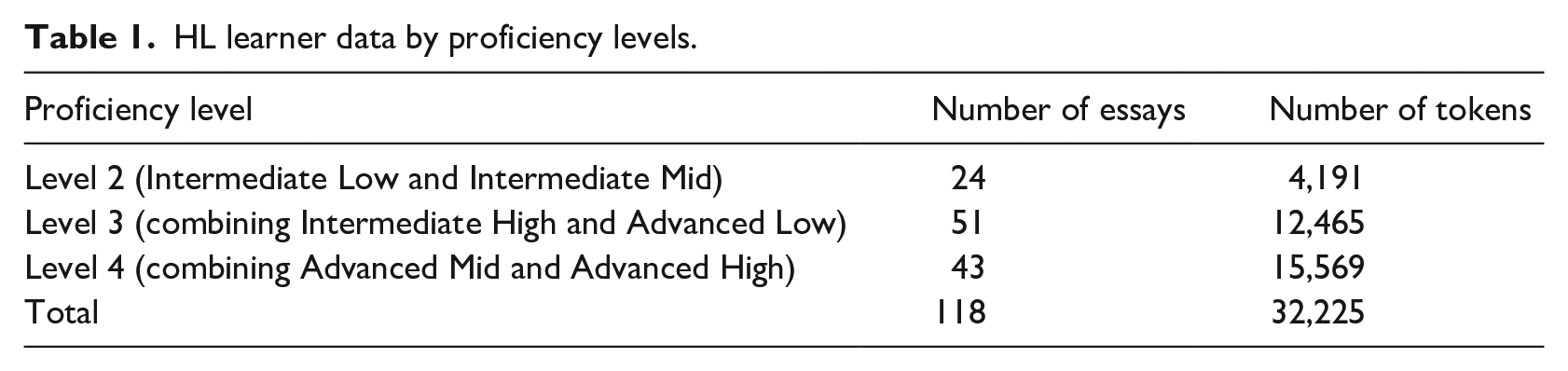

While the essay corpus contains both L2 and HL data, for the present study, we compiled a subcorpus consisting of 118 texts created only by HL learners, as indicated by a special code that distinguishes heritage and non-heritage learners, who compete in two different categories. The essays were first presented as handwritten copies to experienced certified Oral Proficiency Interview (OPI)/Writing Proficiency Test (WPT) raters, who assigned each essay a writing proficiency level following the ACTFL Proficiency Guidelines, a standard in US language education. We believe that proficiency rating serves as an effective proxy in accounting for varying experiences with the HL among the group of HL learners. The resulting breakdown by proficiency levels based on the ACTFL scale is presented in Table 1.

Table 1. HL learner data by proficiency levels.

Subsequently, the handwritten essays were typed up by a research assistant (RA) with experience in teaching Russian as an L2 and cross-checked by a second RA with similar expertise. Next, all the essays were standardized for spelling to ensure the data were lemmatized and properly parsed. All digital files were saved in UTF-8 format, and standardized files were annotated using the UDPipe tagger (Straka & Straková, 2020). UDPipe is a trainable natural language processing tool that provides tokenization, lemmatization, full morphological annotation, and syntactic dependency parsing. In this research, we utilized the Universal Dependencies 2.5 model for Russian, trained on the SynTagRus corpus (Apresjan et al., 2006); the tokenization accuracy provided by the model is 99.6%, lemmatization accuracy 96.5%, part-of-speech tagging 97.8%, morphological features 93.2%, and syntax 85% (UDPipe 1 Models, 2025). Subsequent computational analyses were conducted using customized Python scripts.
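To illustrate how annotated output of this kind can feed a bigram analysis, the following is a minimal sketch that reads CoNLL-U text (the tab-separated format UDPipe produces) and collects adjacent word pairs. It is not the authors' script: the function name `conllu_bigrams`, the `use_lemma` switch, and the decision to let punctuation and sentence boundaries break a bigram are all assumptions made for illustration, since the text does not specify these details.

```python
from typing import List, Tuple


def conllu_bigrams(conllu: str, use_lemma: bool = False) -> List[Tuple[str, str]]:
    """Collect adjacent word pairs from CoNLL-U text.

    Assumptions (not stated in the paper): bigrams do not cross
    sentence boundaries or punctuation, and tokens are lowercased.
    """
    bigrams: List[Tuple[str, str]] = []
    run: List[str] = []  # current uninterrupted stretch of words

    def flush() -> None:
        bigrams.extend(zip(run, run[1:]))
        run.clear()

    for line in conllu.splitlines():
        if line.startswith("#"):
            continue                      # sentence-level comment
        if not line.strip():
            flush()                       # blank line = sentence boundary
            continue
        cols = line.split("\t")
        if len(cols) != 10 or not cols[0].isdigit():
            continue                      # multiword range (1-2) or empty node (1.1)
        if cols[3] == "PUNCT":
            flush()                       # punctuation breaks the stretch
            continue
        # Column 2 is FORM, column 3 is LEMMA (0-based indices 1 and 2).
        run.append((cols[2] if use_lemma else cols[1]).lower())
    flush()
    return bigrams


sample = (
    "# text = Друзья помогают.\n"
    "1\tДрузья\tдруг\tNOUN\t_\t_\t2\tnsubj\t_\t_\n"
    "2\tпомогают\tпомогать\tVERB\t_\t_\t0\troot\t_\t_\n"
    "3\t.\t.\tPUNCT\t_\t_\t2\tpunct\t_\t_\n"
)
print(conllu_bigrams(sample))
print(conllu_bigrams(sample, use_lemma=True))
```

Whether word forms or lemmas are the better unit is a design choice that depends on how the reference bigrams are stored, so the sketch leaves it as a parameter.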

In addition to the HL learner data, our dataset also includes one L1 reference corpus and three contrastive datasets created for the study. The reference corpus is the Araneum Russicum, a freely available large reference corpus of the Russian language (Benko, 2014). The three additional datasets comprise (a) collocations extracted from texts of literary fiction; (b) a list of collocations from the Araneum, which have the highest t-score values; and (c) a list of bigrams randomly generated from words pulled from Araneum that naturally have very low t-score values. We explain the methodology behind these datasets in the next section.

Enhanced methodology for HL collocation validation

Approaches to automatic extraction of collocations from text corpora have rapidly developed in the past decades, utilizing a range of tools and methodologies to enhance linguistic analysis. These approaches include the widely used Mutual Information (MI) score, Dice coefficient, t-score, and log-likelihood ratios, each with its unique advantages and drawbacks (Brezina et al., 2015; Gablasova et al., 2017). For example, MI score, which measures the extent to which the observed frequency of the co-occurrence of the items in the collocation differs from a statistical expectation, might overestimate less frequent collocations, while the t-score, which measures confidence with which the association is claimed, tends to highlight high-frequency pairings more effectively (for a discussion of various measures with regard to the Russian language, see Pivovarova et al., 2018). In the current study, we opted to use the t-score metric to assess the significance of each collocation in our data based on the findings in previous research (Kopotev et al., 2023) that showed that t-score may effectively assess proficiency levels in Russian L2 data. This assumption was tested in this study and the findings are discussed in detail in the “Discussion” section.

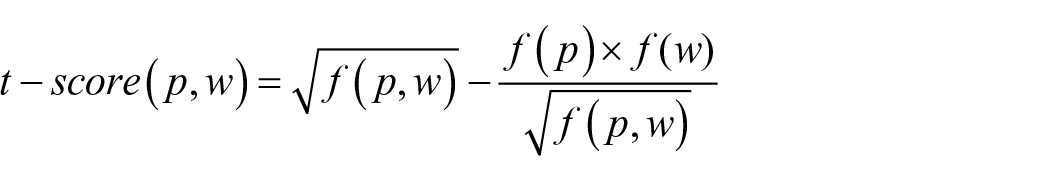

The t-score is a measure derived from the well-known Student’s t test and is widely acknowledged for its ability to calculate the relevance of two words’ co-occurrence based on their individual and joint frequencies in the corpus. It ranks collocations by frequency but also adjusts the score to discount the impact of excessively frequent words, thus filtering out common but less informative word pairs. The t-score formula is given by

$$
t = \frac{f(p,w) - \dfrac{f(p)\,f(w)}{N}}{\sqrt{f(p,w)}}
$$

where p and w are the components of a bigram, f(p) and f(w) are their frequencies in the corpus, f(p,w) is the frequency of their co-occurrence in the corpus, and N is the total number of tokens in the corpus.
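As a worked illustration of these quantities, the sketch below computes the t-score alongside the MI score mentioned earlier. It assumes the formulation common in corpus linguistics, t = (O − E)/√O and MI = log2(O/E), with observed frequency O = f(p,w) and expected frequency E = f(p)·f(w)/N, where N is the corpus size in tokens. The function names and the frequencies in the example are hypothetical and are not taken from the study's data.

```python
import math


def expected_frequency(f_p: int, f_w: int, n: int) -> float:
    """Co-occurrence frequency expected if p and w were independent."""
    return f_p * f_w / n


def t_score(f_pw: int, f_p: int, f_w: int, n: int) -> float:
    """t = (O - E) / sqrt(O); returns 0.0 for an unattested bigram."""
    if f_pw == 0:
        return 0.0
    return (f_pw - expected_frequency(f_p, f_w, n)) / math.sqrt(f_pw)


def mi_score(f_pw: int, f_p: int, f_w: int, n: int) -> float:
    """MI = log2(O / E); only defined for attested bigrams."""
    if f_pw == 0:
        raise ValueError("MI is undefined for a bigram that never occurs")
    return math.log2(f_pw / expected_frequency(f_p, f_w, n))


N = 1_000_000  # hypothetical corpus size
frequent = dict(f_pw=400, f_p=2000, f_w=5000)  # common pair of common words
rare = dict(f_pw=4, f_p=5, f_w=8)              # rare pair of rare words

for name, freqs in (("frequent", frequent), ("rare", rare)):
    print(name,
          round(t_score(n=N, **freqs), 3),
          round(mi_score(n=N, **freqs), 3))
```

With these invented counts, the rare pair receives the higher MI score while the frequent pair receives the higher t-score, which is the contrast between the two measures described above.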

This measure, like any other, cannot be calculated based exclusively on the learner data due to the limited size of the data and potential inaccuracies introduced during transcription and processing. To improve our analysis, we propose the following protocol. First, we extract bigrams from the L1 reference corpus, the Araneum Russicum corpus (Benko, 2014), a freely available POS-annotated reference corpus comprising 1.2 billion running words. Every bigram extracted from this reference corpus is ascribed a t-score. We then extract bigrams from the “HL data” (sdelat’ vremja “make time,” dobryj i “good and,” vopros družby “question of friendship,” etc.); these bigrams are compared with the L1 reference and, if a match is found, the HL bigram is ascribed the t-score of the corresponding L1 bigram. If an HL bigram is not attested in the L1 data, the t-score value assigned is, naturally, zero.
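The protocol can be sketched in a few lines of Python. This is a toy illustration under stated assumptions rather than the study's implementation: the reference corpus is a short in-memory token list instead of Araneum Russicum, the t-score uses the (O − E)/√O formulation, and the names `reference_t_scores` and `score_learner_bigrams` are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple

Bigram = Tuple[str, str]


def reference_t_scores(tokens: List[str]) -> Dict[Bigram, float]:
    """Ascribe a t-score to every bigram attested in the reference tokens."""
    n = len(tokens)
    unigrams = Counter(tokens)
    bigrams = Counter(zip(tokens, tokens[1:]))
    return {
        (p, w): (f_pw - unigrams[p] * unigrams[w] / n) / math.sqrt(f_pw)
        for (p, w), f_pw in bigrams.items()
    }


def score_learner_bigrams(
    learner_tokens: List[str], ref_scores: Dict[Bigram, float]
) -> List[Tuple[Bigram, float]]:
    """Look up each learner bigram; unattested bigrams receive 0.0."""
    return [
        (bigram, ref_scores.get(bigram, 0.0))
        for bigram in zip(learner_tokens, learner_tokens[1:])
    ]


# Toy data standing in for the L1 reference corpus and one learner text.
reference = "a b a b c".split()
learner = "a b d".split()

scores = reference_t_scores(reference)
for bigram, t in score_learner_bigrams(learner, scores):
    print(bigram, round(t, 4))
```

Keeping the reference scores in a dictionary keyed by the bigram makes the match-or-zero step a single lookup per learner bigram.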

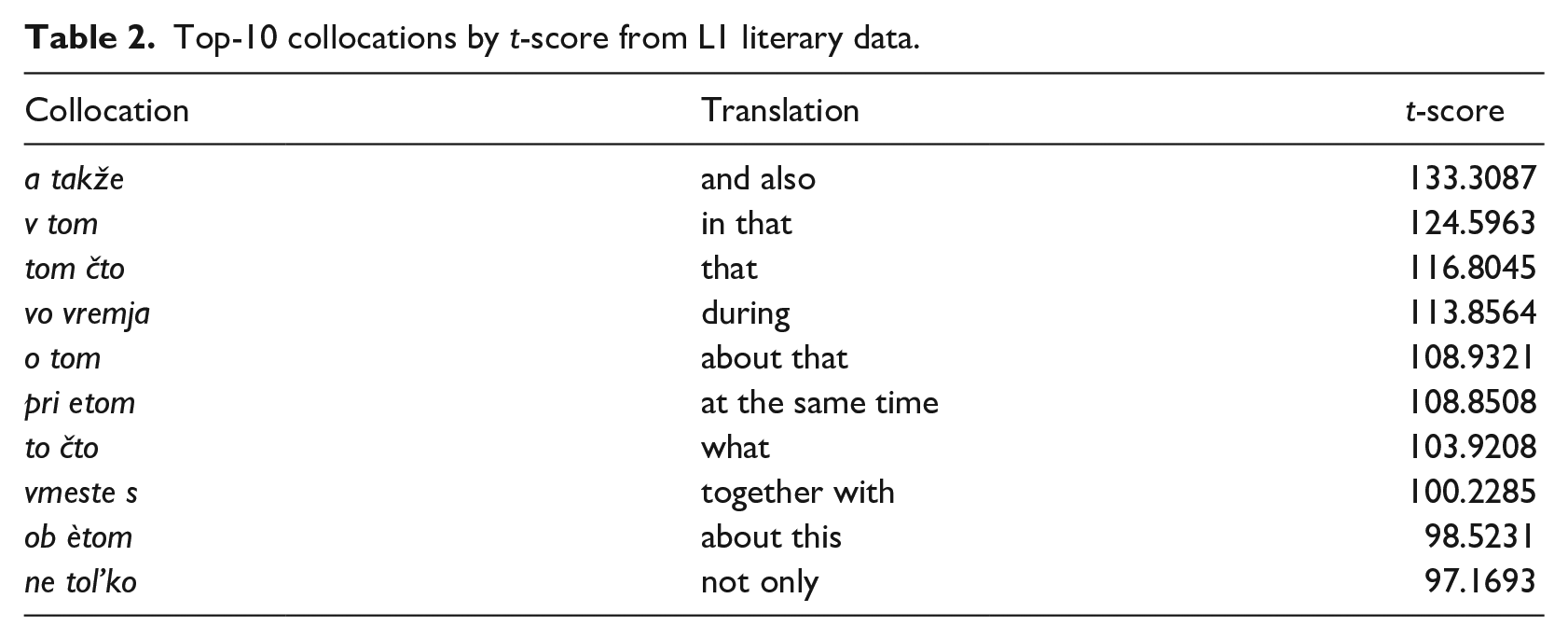

T-scores are also assigned to bigrams of the three validation datasets used in this study. The first dataset contains bigrams culled from 12 L1 texts, which represent literary fiction created not by well-known writers lauded for their original style and remarkable use of language, but rather by second-tier authors whose language and style were deemed rather derivative and average. By focusing on texts with little literary acclaim, we hoped to avoid the risk of introducing a large number of idiosyncratic formulaic expressions typical of one or another fiction author from the beginning of the 21st century (see an example in Appendix 2). We believe this dataset helps us to establish a baseline in the collocational preferences of average L1 users. We refer to this dataset as “L1 literary data” in this paper. The second contrastive dataset, referred to as “L1 top collocations,” includes 3651 collocations from the Araneum Russicum that have the highest t-score values (see examples in Table 2).

Table 2. Top-10 collocations by t-score from L1 literary data.

Finally, we created a dataset with randomly generated bigrams using legitimate Russian words from the Araneum corpus; these are 24,191 random concatenations of any two words, with no preset semantic constraints. We refer to this dataset as “Random bigrams” (gvardii konečno “of guards of course,” syna malejšix “of son of the smallest,” každyj ne “each not,” etc.). The two latter datasets are aimed at establishing the extremes that the t-score may theoretically have.
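One simple way to build such a contrastive dataset is sketched below. The sampling scheme is an assumption: the text does not say whether words were drawn uniformly, by frequency, or without replacement, so this sketch draws both members of each pair uniformly and independently from a vocabulary list. The function name and the small transliterated vocabulary are illustrative only.

```python
import random
from typing import List, Sequence, Tuple


def random_bigrams(
    vocabulary: Sequence[str], k: int, seed: int = 0
) -> List[Tuple[str, str]]:
    """Draw k word pairs uniformly at random, with replacement.

    A fixed seed keeps the generated dataset reproducible.
    """
    rng = random.Random(seed)
    return [(rng.choice(vocabulary), rng.choice(vocabulary)) for _ in range(k)]


# Hypothetical mini-vocabulary; the study drew words from Araneum Russicum.
vocab = ["gvardii", "konečno", "syna", "malejšix", "každyj", "ne"]
for pair in random_bigrams(vocab, k=3):
    print(pair)
```

Pairs produced this way are mostly unattested or chance-level in a reference corpus, which is why they serve as a lower bound for the t-score.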

As such, the final data for analysis consisted of all bigrams from the HL dataset (for each of the three proficiency groups), enriched with the data culled from the L1 dataset, such as word frequency, bigram frequency, and L1 t-score value, as well as three contrastive datasets: L1 literary data, L1 top collocations, and Random bigrams. Through the analysis, we compared variations across the three different proficiency levels in the HL data, with the assumption that all levels are located well above the randomly generated sequences and well below the top collocations; we also hypothesized that learners with higher language proficiency produce more standard collocations, similar to those found in the L1 literary data.

Results

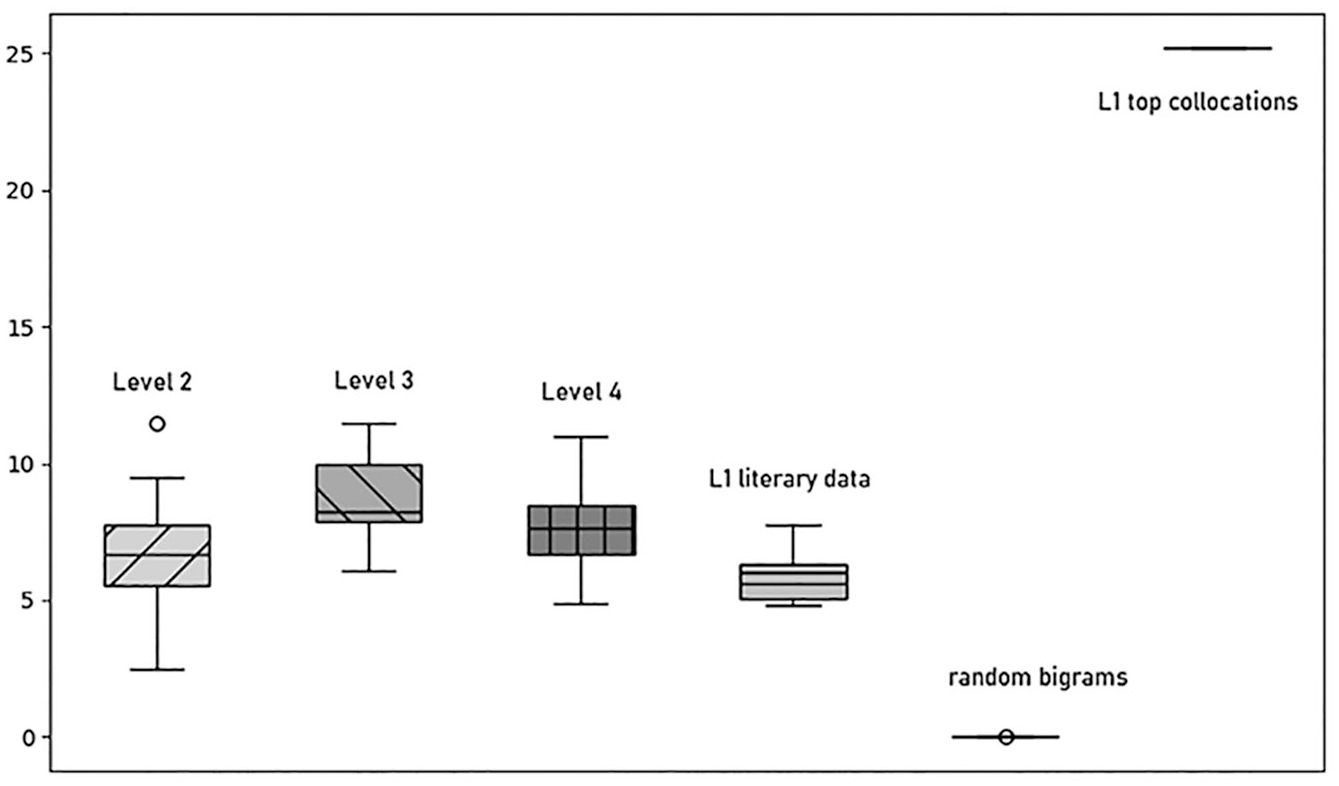

In this subsection, we present a comparative analysis of collocations across different language proficiency levels in the HL data and the three contrastive datasets. The results of the t-score test are represented in the box plot (Figure 1) that visually compares the distribution of t-scores across the data. The diagram includes the following elements: patterns of the boxes show different datasets; each box shows the first (25th percentile) and third (75th percentile) quartiles as well as the median of the t-score distribution (horizontal line in the boxes); whiskers represent the minimum and maximum t-score values excluding outliers; finally, outliers, whose values deviate significantly from the main distribution, are presented as empty points.

Figure 1. Distribution of t-scores across the datasets.
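The box-plot elements described above can be computed directly from a list of scores. The sketch below is illustrative only: it assumes the widespread convention that points lying more than 1.5 interquartile ranges beyond the quartiles count as outliers, which the text does not state, and it uses invented values rather than the study's t-scores. The function name `boxplot_summary` is hypothetical.

```python
import statistics
from typing import Dict, List, Union

Number = Union[int, float]


def boxplot_summary(values: List[Number], whisker: float = 1.5) -> Dict[str, object]:
    """Quartiles, whisker ends, and outliers under the 1.5 * IQR rule."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low_fence, high_fence = q1 - whisker * iqr, q3 + whisker * iqr
    inside = [v for v in values if low_fence <= v <= high_fence]
    return {
        "q1": q1,
        "median": median,
        "q3": q3,
        "whisker_low": min(inside),    # smallest non-outlier value
        "whisker_high": max(inside),   # largest non-outlier value
        "outliers": [v for v in values if v < low_fence or v > high_fence],
    }


# Invented scores with one extreme value.
print(boxplot_summary([1, 2, 3, 4, 5, 6, 7, 8, 9, 100]))
```

Different plotting tools use slightly different quartile interpolation, so the exact box edges may differ a little from those a given plotting library would draw.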

To demonstrate the overall plausibility of our approach, we first analyzed collocations within two contrastive datasets: randomly generated bigrams (box fused into a thick line in the lower right corner) and top collocations (box fused into a thick line in the upper right corner). As the plot makes clear, the t-score distribution of randomly generated bigrams is much narrower than those of the HL learner texts and the L1 literary texts and lies close to 0 (with the mean at 0.025). This notable disparity in t-score distribution underscores the distinctiveness of randomly generated bigrams from the coherent texts in terms of collocational patterns. Conversely, the top collocations exhibit a predictably high uniformity: their distribution is densely concentrated around the median (25.207), with no outliers in this dataset. This stark contrast in t-score distribution between two maximally contrastive datasets highlights the potential of this metric as a discriminative tool for distinguishing between cohesive texts and random word sequences.

With regard to the feasibility of using t-score values to distinguish proficiency levels, the cumulative t-score of texts produced by HL learners demonstrates minimal variance across different proficiency levels: in all three groups, the majority of texts consistently fall within the 5.525–9.965 range, indicating no significant differences between the three proficiency levels, particularly between Proficiency Levels 3 and 4. However, Level 2 displays the greatest in-group variability in t-score ranges, spanning from 2.4771 to 7.745 (excluding the outlier), with the mean at 6.604. This suggests that the texts from this lower proficiency group (Level 2) differ considerably from those of the higher proficiency groups, Levels 3 and 4, from a collocational perspective. Proficiency Group Level 3, which includes Intermediate High and Advanced Low learners, shows a range of t-scores from 6.0529 to 11.5013, with the mean at 8.809. Level 4 has a variation of 6.1141 and demonstrates a slightly higher density in using collocations. Although this might suggest better collocational abilities to the naked eye, the differences are not statistically significant and thus do not conclusively indicate improvement in the use of collocations.

Now, we turn our attention to collocations in L1 literary texts. This comparison is crucial as it allows us to understand whether the patterns observed in the HL data are unique to these groups or if they reflect broader tendencies in language use. By examining L1 literary texts, we aim to determine whether the cumulative t-score can effectively distinguish between the standard L1 texts and the HL texts. As shown in Figure 1, the cumulative t-score of texts representing L1 literary language exhibits a similar pattern to that of HL learner data, spanning from 4.814 to 7.764, with the mean at 5.92, which is not considerably different from the HL data. This observation suggests that the overall t-score alone does not provide sufficient discriminatory power to differentiate between L1 writers and HL writers based solely on collocational patterns.

This inconsistent t-score distribution suggests that collocational patterns may not effectively distinguish between different levels of language proficiency among HL learners, nor are they statistically different from L1 literary texts. This result contrasts with our previous findings, where the same metric effectively indexed proficiency levels in L2 Russian data (Kopotev et al., 2023).

Discussion

Investigating the feasibility of using t-scores to differentiate proficiency levels of HL writers, we found that the cumulative t-score of the HL learner texts varies only slightly across the three proficiency groups of HL learners, all within the range from approximately 2.5 to 11.5. Even where variation exists, the differences in t-score values are minimal and non-unidirectional, suggesting a similarity in collocational usage regardless of language proficiency level. Based on these results, we conclude that differences in t-scores cannot effectively differentiate the proficiency levels of HL learners in our data.

In addition, the comparison of the cumulative t-scores of the HL texts for all three proficiency groups and the L1 literary texts shows that all texts/levels fall within a narrow range, suggesting that the difference is also minimal. Despite the inherent richness of collocational usage in literary texts, the similarity in t-score distribution between literary fiction and student HL speaker texts underscores the complexity of using collocations as a definitive marker for distinguishing between language proficiency levels in this context.

These findings were unexpected for several reasons. First, previous research (Treffers-Daller et al., 2016) demonstrated that HL learners do use non-standard collocations and, while recognizing the vast majority of standard collocations, perform worse than L1 speakers on acceptability judgments (Kessler & Perevozchikova, 2024). Second, Dąbrowska (2019) showed that a collocation test reveals the largest differences between native speakers and L2 learners. On this basis, we hypothesized that HL learners would differ from L1 writers in their collocational usage. We also hypothesized that collocational measures would help index different proficiency levels, in the same way that syntactic and lexical measures have been shown to differentiate proficiency levels (cf. Kisselev et al., 2020, 2021). Finally, the same method helped index proficiency levels in L2 Russian: in a methodologically similar study, Kopotev et al. (2023) showed that collocational association measures (t-score and Dice score) increased linearly across proficiency levels in L2 Russian language data.

Heritage speakers are typically exposed to colloquial spoken language, in which the types of multiword expressions are expected to differ significantly from those found in written literary texts. Despite the indisputable differences in language proficiency between the authors of the HL texts and those of the L1 literary texts, the observed similarity in collocational strength points to challenges, specific to heritage data, in using collocations as a standalone measure for assessing HL proficiency.

These results may be attributed to several factors, including the nature of language acquisition among HL learners, as well as the methodologies used to determine proficiency levels among HL learners. If the classification of learners into proficiency groups is based on measures that do not strongly correlate with collocational competence, this might partly explain the absence of significant differences in t-scores. Further research might explore alternative measures of proficiency that account more explicitly for lexical and collocational richness.

In addition, it is important to consider the characteristics of the t-score measure itself. While t-score is widely used in collocational analysis, it primarily captures statistical associations based on frequency and co-occurrence, without taking into account semantic or syntactic appropriateness. It is possible that alternative association measures, such as mutual information (MI) or more context-sensitive approaches, could reveal greater distinctions in collocational usage between proficiency groups. Future studies could explore a combination of multiple collocational metrics to provide a more nuanced picture of HL learners’ proficiency levels.
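The contrast between the t-score and MI can be illustrated with a minimal sketch. It assumes the standard textbook definitions, with the expected co-occurrence frequency E = f(x)·f(y)/N, t = (O − E)/√O, and MI = log2(O/E); the corpus size and all frequency counts below are invented for illustration and are not taken from Araneum Russicum or from the learner data.

```python
import math


def expected(f_x, f_y, n):
    """Expected co-occurrence frequency if the two words were independent."""
    return f_x * f_y / n


def t_score(f_xy, f_x, f_y, n):
    """t-score: (observed - expected) / sqrt(observed)."""
    return (f_xy - expected(f_x, f_y, n)) / math.sqrt(f_xy)


def mutual_information(f_xy, f_x, f_y, n):
    """Mutual information: log2(observed / expected)."""
    return math.log2(f_xy / expected(f_x, f_y, n))


N = 1_000_000  # hypothetical corpus size in tokens

# Hypothetical counts: a frequent pair of common words and a rare pair
# of words that almost always occur together.
frequent = dict(f_xy=400, f_x=5000, f_y=4000, n=N)
rare = dict(f_xy=5, f_x=6, f_y=5, n=N)

for label, counts in (("frequent", frequent), ("rare", rare)):
    print(label,
          round(t_score(**counts), 2),
          round(mutual_information(**counts), 2))
```

With these invented counts, the frequent pair receives the higher t-score, whereas the rare pair receives the higher MI value, which is one reason why the two measures may rank the same learner bigrams differently.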

Next, we discuss the discernible differences in t-score distributions that we found between coherent texts and randomly generated word sequences. This contrast underscores the effectiveness of the t-score in distinguishing between meaningful collocational relationships and random word associations. We believe that measures of strength of association in multi-word expressions, such as the t-score used in our study, may be applicable to automatic language proficiency assessment, although with certain caveats. Beyond proficiency assessment, we also recognize the potential utility of automatic collocational analysis in other research contexts where distinguishing between coherent and incoherent texts is relevant.
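The matching step behind this contrast can be sketched as follows. The sketch rests on several assumptions that are not specified in this section: the reference table is a toy dictionary with invented t-scores, the text-level score is taken to be the mean over all bigrams, and unmatched bigrams are assigned a score of zero. The names `REFERENCE_T`, `bigrams`, and `text_score` are hypothetical, and the cumulative t-score used in the study may be computed differently.

```python
from statistics import mean

# Hypothetical reference table: bigram -> t-score in an L1 corpus.
# The values are invented for illustration only.
REFERENCE_T = {
    ("make", "a"): 9.2,
    ("a", "decision"): 7.5,
    ("decision", "about"): 5.8,
    ("about", "the"): 12.4,
    ("the", "future"): 8.9,
}


def bigrams(tokens):
    """Return the adjacent word pairs of a tokenized text."""
    return list(zip(tokens, tokens[1:]))


def text_score(tokens, reference, unmatched=0.0):
    """Mean reference t-score over a text's bigrams.

    Assumption: bigrams absent from the reference table receive the
    `unmatched` value; the study itself may simply skip them.
    """
    scores = [reference.get(bg, unmatched) for bg in bigrams(tokens)]
    return mean(scores) if scores else 0.0


coherent = "make a decision about the future".split()
scrambled = "future a about make the decision".split()  # same words, reordered

print(text_score(coherent, REFERENCE_T))
print(text_score(scrambled, REFERENCE_T))
```

In this toy setting, every bigram of the coherent sequence is found in the reference table, while none of the bigrams of the reordered sequence is, so the coherent text receives the higher score.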

In educational settings, automatic collocational analysis can inform language teachers and curriculum developers about the effectiveness of collocation-based instruction and its impact on language proficiency development. By identifying patterns of collocational usage in student writing, educators can tailor instructional strategies to target specific collocational competencies and enhance learners’ lexical and collocational knowledge.

In linguistic research, automatic collocational analysis can contribute to studies investigating the cognitive processes involved in language production and comprehension. By comparing collocational patterns in coherent and randomly generated texts, researchers can gain insights into the cognitive mechanisms underlying language use and the role of collocations in linguistic creativity and fluency.

Moreover, the incorporation of collocational analysis into language learning curricula offers opportunities for authentic language practice and application. Through activities such as collocation-based exercises, writing tasks, and language games, students can actively engage with collocational patterns in meaningful contexts, thereby consolidating their understanding and retention of lexical combinations. Overall, by incorporating measures of associative strength into language learning programs, educators can empower students to become more proficient and confident language users, equipped with the skills to effectively navigate and manipulate collocational patterns in their written and spoken discourse.

Conclusion and further research

The analysis of collocations across proficiency levels and different datasets offered in this study highlights the complexities inherent in using collocational patterns as a standalone measure for language proficiency assessment. While measures of associative strength, such as the t-score, offer valuable insights into collocational usage, the study of collocations in learner language will benefit from a more nuanced approach that incorporates different automatic measures.

Applying these metrics can yield distinct outcomes, attributable to the specific focus of each measure. As demonstrated by Pivovarova et al. (2018) when applied to Russian data, the t-score primarily enhances the visibility of the most frequently occurring collocations, such as English do homework. In contrast, the Dice coefficient favors infrequent but strongly associated word pairs that are more idiomatically interconnected, such as English lo and behold. This observation aligns with the distinction made in the “Introduction” section between statistically oriented and phraseological approaches to studying collocations. Although we posit that the two approaches substantially overlap, students in class tend to learn phraseological units, whereas the metric employed in this study predominantly captures statistical collocations. The variation that arises from applying different metrics naturally motivates further investigation. For instance, incorporating syntactic and semantic information into collocational analysis may enhance its precision and effectiveness in capturing nuances of language proficiency levels.
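The different focus of the two measures can be shown with a short sketch, assuming the standard definitions of the t-score, (O − E)/√O with E = f(x)·f(y)/N, and of the Dice coefficient, 2·f(xy)/(f(x) + f(y)). The two labeled pairs and all counts are hypothetical; they do not represent actual corpus frequencies of the English examples mentioned above.

```python
import math


def t_score(f_xy, f_x, f_y, n):
    """t-score: (observed - expected) / sqrt(observed)."""
    expected = f_x * f_y / n
    return (f_xy - expected) / math.sqrt(f_xy)


def dice(f_xy, f_x, f_y):
    """Dice coefficient: 2 * f(xy) / (f(x) + f(y))."""
    return 2 * f_xy / (f_x + f_y)


N = 1_000_000  # hypothetical corpus size in tokens

# label -> (f_xy, f_x, f_y); all counts are invented for illustration.
PAIRS = {
    "frequent_pair": (300, 20_000, 500),  # common words, often adjacent
    "idiomatic_pair": (8, 9, 10),         # rare words, almost always together
}

# Rank the same pairs by each measure, strongest first.
by_t = sorted(PAIRS, key=lambda k: t_score(*PAIRS[k], N), reverse=True)
by_dice = sorted(PAIRS, key=lambda k: dice(*PAIRS[k]), reverse=True)

print("ranked by t-score:", by_t)
print("ranked by Dice:   ", by_dice)
```

Under these invented counts, the two rankings are reversed: the frequent pair leads by t-score, and the rare but tightly bound pair leads by Dice, which is consistent with the tendency described above.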

In conclusion, while our study highlights the challenges associated with using collocations as a definitive marker of language proficiency, it also underscores the potential benefits of collocational analysis for language learning and research. By advancing our understanding of collocational patterns and their implications for language use, we can contribute to the development of more effective language teaching methods and assessment tools, ultimately fostering greater proficiency and fluency among language learners.

Appendix 1

Two sample essays from different proficiency levels, Intermediate Mid (lower level) and Advanced High (higher level). Grammar and spelling errors are underlined; non-standard bigrams are in square brackets. Please note that collocational analysis was conducted on standardized texts with all spelling errors corrected.

Appendix 2

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethics approval and informed consent statements

Ethical approval to use the data was obtained from the institutions overseeing the ACTR Contest (Brigham Young University) and the compilation of the corpus (The Pennsylvania State University).