Abstract

Teacher-education programmes increasingly turn to AI to personalise learning, yet many designs still inherit assumptions from learning-styles (LS) models. This review synthesises 63 empirical studies from Scopus and Web of Science using a PRISMA-guided process to clarify what LS research in teacher education shows and how these insights should redirect AI-enabled personalisation. We find widespread declared support for LS (77.4% of studies) alongside limited comparative testing against alternative frameworks (51.6%), and substantial heterogeneity of instruments and methods that undermines comparability. Notably, the intersection of “personalised learning” and LS is comparatively small, suggesting a weak empirical basis for LS-driven personalisation. Concerns about LS as a neuromyth, and calls to bridge neuroscience and educational practice, recur across the corpus; the literature also points to conceptual-change strategies that can reduce erroneous beliefs among pre- and in-service teachers. Taken together, the evidence does not support style-matched instruction. We therefore argue for AI-enabled personalisation anchored in observable performance, strategy use, and self-regulation, with transparent evidence pipelines and attention to workload and equity. This contribution reframes personalisation for teacher preparation beyond fixed style labels and outlines a realistic route for school improvement in an AI-intensive educational landscape.

Keywords

Introduction

The tools used for teaching and learning have undergone significant evolution throughout history. From early oral traditions and handwritten manuscripts to printed books, each new medium has altered how knowledge is represented, stored, and circulated. The invention of the printing press in the 1440s, for example, radically expanded access to written material and reshaped the social organisation of knowledge (Rey-García et al., 2024). In the contemporary period, advances in electronics and computing have again transformed how people communicate, work, and learn, giving rise to dense digital ecosystems in which educational experiences are mediated by platforms, learning management systems, and data-rich applications (Chituc, 2021).

In today’s educational landscape, traditional resources such as books, classroom work guides, and slide presentations coexist with emerging technologies including artificial intelligence (AI), the Internet of Things (IoT), robotics, and 3D printing (El Moutchou & Touate, 2024; Rincon-Flores et al., 2024). In policy and industry discourse, these tools are often grouped under the umbrella of the Fourth Industrial Revolution and presented as drivers of flexibility, innovation, and personalised learning. Rather than claiming that they automatically “redefine” educational paradigms, it is more accurate to say that their convergence exerts significant pressure on institutions and educators to reconsider how teaching is organised, how learning is monitored, and what counts as valuable educational work.

These pressures are unevenly distributed. Critical scholarship has shown that digitalisation and AI in education are deeply entangled with long-standing inequalities, power relations, and labour conditions, rather than simply solving them (Selwyn, 2022, 2024). Scientometric reviews of digital inequity in education highlight stark differences in access to high-quality digital resources and AI-enhanced tools within and across systems, with marginalised communities often experiencing more surveillance and experimentation and less meaningful support (Meng et al., 2024). At the same time, systematic reviews by Creagh et al. (2025), Fernández-Batanero et al. (2021), and Yang et al. (2025) document how the rapid proliferation of technologies contributes to teachers’ stress, technostress, and “time poverty,” as expectations around innovation, data use, and accountability intensify. For teacher-education programmes, debates about AI therefore unfold in a landscape already marked by inequalities and workload pressures that shape what any technology can realistically achieve.

The Fourth Industrial Revolution and Education

Within this broader context, AI has come to symbolise the Fourth Industrial Revolution in education. According to the Latin American Institute for Teacher Professional Development (2024), AI tools are now expected to support adaptive learning, automated feedback, virtual assistants and chatbots, and large-scale analysis of educational data. These capabilities are frequently invoked as solutions to chronic challenges in schooling, such as large class sizes, heterogeneous cohorts, and limited time for individualised feedback. However, they also raise persistent concerns about inequitable access, opaque decision-making, and the security and governance of the vast amounts of data required to train and operate AI systems (Hoole & Jahankhani, 2021).

In this setting, the idea of AI-based learning pathways has gained particular prominence. The notion that learners might follow differentiated trajectories, supported by continuous data and algorithmic recommendations, resonates strongly with long-standing aspirations to personalise learning. Technically, the combination of abundant data, increasingly affordable processing power, and advanced machine-learning techniques offers new possibilities for modelling learner performance over time and adjusting tasks accordingly (Guettala et al., 2024; Tyagi et al., 2024). Yet technical potential alone is insufficient. Realising any educational benefit from AI-enabled personalisation requires explicit assumptions about what kinds of learner differences matter, how these differences should be interpreted, and how teachers remain central in designing, enacting, and revising learning pathways.

This is where teacher-education programmes face a paradoxical situation. On the one hand, they are urged to become “AI-ready,” incorporating AI tools and analytics to personalise coursework, practica, and professional development. On the other hand, many of the frameworks historically used to justify individualised instruction in teacher education—most notably learning styles (LS)—have been challenged as conceptually weak, empirically unsupported, and closely tied to neuromyths (Coffield et al., 2004; S. Dekker et al., 2012; Lethaby & Harries, 2016; Papadatou-Pastou et al., 2021). If AI-enabled systems in teacher education are designed around LS taxonomies or similar typologies, they risk encoding neuromyths into algorithmic infrastructures, legitimising questionable practices and intensifying workloads through the generation and processing of data that do not meaningfully inform teaching.

Against this backdrop, there is a timely need to understand what the LS literature in teacher education actually contains and what it can—and cannot—offer to evidence-informed personalisation in an AI-intensive landscape. Empirically, it remains unclear which teacher populations, contexts, instruments, and outcomes have been studied; to what extent LS constructs interact with neuromyths and related misconceptions; and whether existing interventions report conceptual-change strategies that could help teachers move beyond LS-based assumptions. Conceptually, the field lacks a synthesis that uses this evidence to articulate requirements for AI-enabled personalisation that are anchored in observable behaviours, strategy use, and developmental trajectories rather than in fixed cognitive “types,” and that remain aligned with school-improvement goals such as time, workload, equity, and feedback quality. To address this gap, the present scoping review pursues three aims: first, to map the empirical and structural landscape of LS research in teacher education; second, to examine how LS constructs intersect with neuromyths and the conceptual-change strategies reported to address them; and third, to derive evidence-informed requirements for AI-enabled personalisation in teacher preparation that avoid LS-based assumptions while advancing school-improvement goals. The following literature review develops the conceptual background for this analysis by examining, in turn, personalised learning in teacher education, the role of LS, the redefinition of LS as a neuromyth, and recent debates on AI-enabled personalisation.

Literature Review

Building on the tensions around AI, personalisation, and the contested role of LS and neuromyths outlined in the introduction, this section reviews the bodies of literature that underpin our analysis. We first examine how personalised learning has been conceptualised and enacted in teacher-education programmes. We then analyse the prominence of LS models in these efforts and their subsequent reframing as a neuromyth. Finally, we review work on AI-enabled personalisation and learning analytics in teacher education. Together, these strands delineate the conceptual and empirical landscape that motivates our scoping review and inform the three aims set out above.

Personalised Learning in Teacher Education

Within this broader landscape, personalised learning has emerged as a guiding ideal in policy and institutional discourse. In general terms, personalised learning refers to efforts to adapt goals, content, pacing, and support to individual learners’ characteristics, needs, and trajectories, often combining digital technologies with flexible pedagogies. In teacher education, this ideal is frequently invoked to justify redesigns of curricula, assessment, and mentoring structures so that pre-service and in-service teachers can progress along differentiated pathways and receive more targeted feedback on their practice, especially in programmes that attract diverse student populations and career-changers (Baltezarević & Baltezarević, 2024; K. C. Li & Wong, 2023).

However, as Pantoja Ospina et al. (2013) remind us, not every initiative labelled as “personalised” rests on a clear theory of learning or on robust evidence about its effects on teachers’ professional development. Empirical accounts of personalised learning in teacher education are uneven in both scope and quality. Many initiatives are described in broad terms—highlighting flexibility, student choice, or modularity—without systematically examining how personalisation is operationalised or what impact it has on teacher learning and subsequent classroom practice (Pantoja Ospina et al., 2013). In some cases, personalisation is reduced to the provision of optional resources or to the use of digital platforms that log learners’ activity, without a clear pedagogical rationale for how these data inform instruction or feedback. As Hamse et al. (2021) note, there is a risk that the rhetoric of personalisation becomes detached from robust instructional designs, particularly when systems rely on simplified learner classifications rather than on rich evidence of performance, strategy use, and growth.

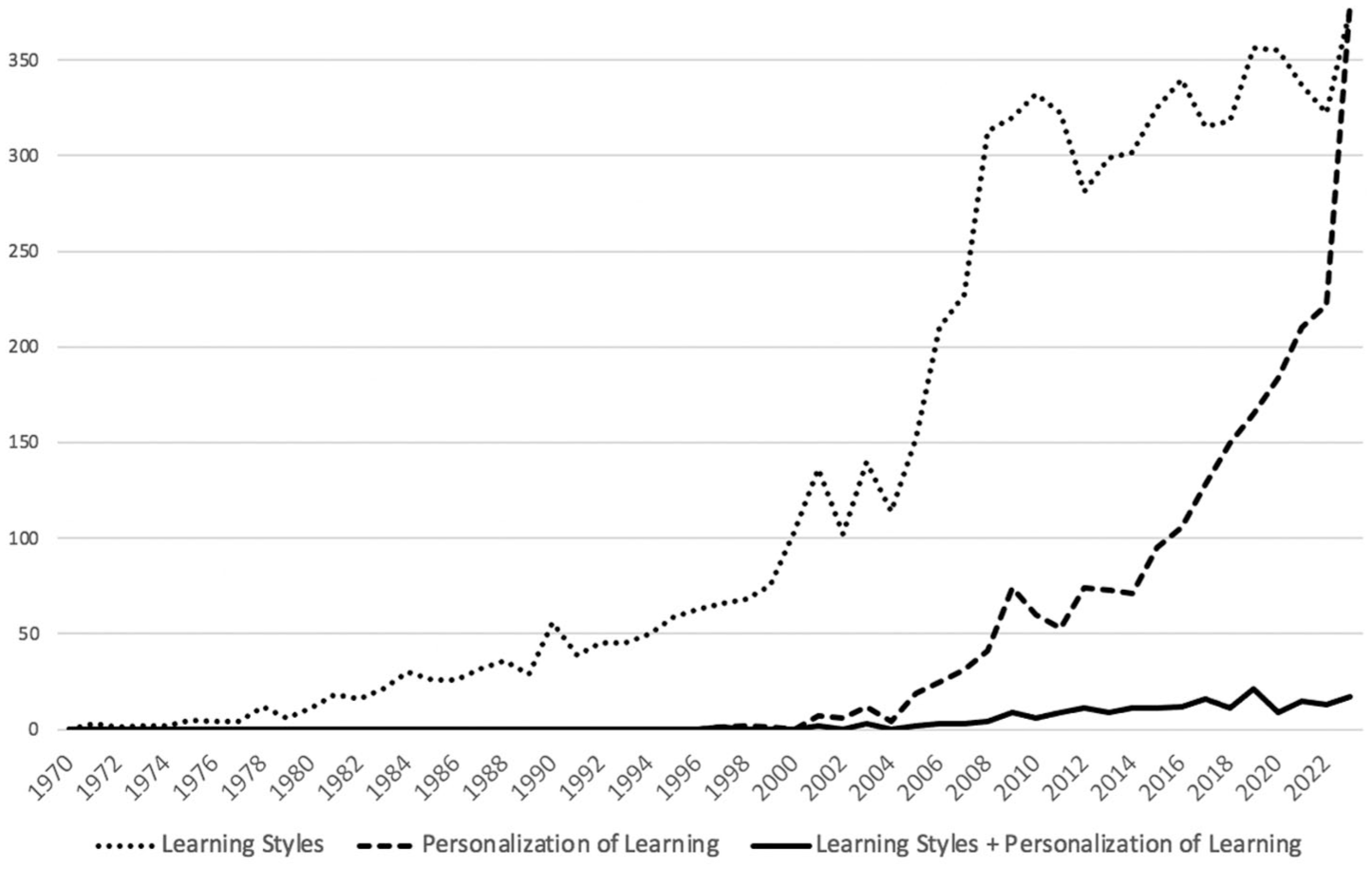

Against this backdrop, it is instructive to examine how the research fields of personalised learning and learning styles have evolved in relation to one another. Figure 1 summarises the number of publications on learning styles and personalisation of learning identified in Scopus over time. The trends show a long-standing, higher-volume trajectory for learning-styles research alongside a smaller, more recent, though growing, body of work explicitly labelled as personalised learning. This historical asymmetry helps explain why LS logic still feels like a “ready-made” template for personalisation claims and signals a key risk for AI-enabled designs: if the evidential baseline is not re-anchored in observable performance, strategy use, and self-regulation, AI may modernise the interface of personalisation while preserving legacy LS assumptions in the backend.

Figure 1. Published studies on learning styles and personalisation of learning (Scopus).

Learning Styles in Teacher Education

Learning styles have played a central role in many attempts to personalise instruction in teacher education. A wide array of LS models and instruments—such as Kolb’s experiential learning styles, Honey and Mumford’s typology, the VARK model, and others—has been used to categorise learners according to allegedly stable preferences for processing information or engaging in learning activities. Within teacher-education programmes, these instruments are often presented as tools for self-knowledge: student teachers complete a questionnaire, receive a profile (e.g. “visual,” “reflector,” “converger”), and are encouraged either to adapt their own study strategies or to design lessons that accommodate their future pupils’ styles (Hamse et al., 2021; Pantoja Ospina et al., 2013). In principle, such practices are framed as ways of respecting learner diversity and fostering inclusive pedagogy.

However, critical reviews have repeatedly questioned the conceptual coherence, psychometric quality, and instructional implications of LS models. Coffield et al. (2004) identified at least 71 different LS schemes in the literature and concluded that many of the most widely used instruments lack reliability and validity, particularly when employed to make high-stakes instructional decisions. Subsequent experimental and quasi-experimental studies have failed to provide robust support for the so-called “matching hypothesis”—the claim that instruction is more effective when matched to learners’ styles—showing instead that well-designed, varied instruction can benefit all students, regardless of their alleged style (Pashler et al., 2008; Romanelli et al., 2009). Furthermore, as Romanelli et al. (2009) argue, the intuitive appeal of LS-based differentiation has not been matched by convincing empirical evidence.

For teacher-education programmes, this situation is particularly problematic. On the one hand, LS-based activities are frequently used to raise awareness of individual differences and to legitimise differentiated instruction as a professional norm. On the other hand, they may inadvertently reinforce essentialist views of learners—presenting styles as fixed traits rather than as context-dependent strategies—and distract attention from more powerful levers for differentiation, such as formative assessment, scaffolding, and high-quality feedback (Coffield et al., 2004; Pashler et al., 2008). Understanding how LS are embedded in teacher education, and the extent to which LS-based interventions are actually evaluated, is therefore a prerequisite for assessing what this body of research can realistically offer to evidence-informed personalisation and to AI-enabled designs.

Learning Styles as a Neuromyth in Teacher Education

Considering these conceptual and empirical weaknesses, learning styles have increasingly been reframed as a neuromyth: a widely held but scientifically inaccurate belief about how the brain works, used to justify educational practices (S. Dekker et al., 2012; Papadatou-Pastou et al., 2021). From this perspective, the problem is not only that LS models lack robust evidence, but also that they shape teachers’ interpretations of learner diversity in ways that can misguide instruction. If teachers believe that students have fixed sensory or cognitive styles that must be matched, they may invest time and resources in categorising learners rather than in adapting tasks, supports, and feedback based on observed performance and evolving needs (Fallace, 2023).

Research on neuromyths in education has consistently found that LS-related statements (e.g. “Individuals learn better when they receive information in their preferred learning style”) are among the most frequently endorsed misconceptions by both pre-service and in-service teachers (Rousseau, 2021). S. Dekker et al. (2012), for instance, reported high levels of agreement with LS neuromyth items among teachers in the Netherlands and the United Kingdom, while Papadatou-Pastou et al. (2021) showed that LS beliefs are resilient even among educators with substantial experience and advanced qualifications. These findings suggest that LS neuromyths are not merely residual misunderstandings but entrenched elements of teacher-education cultures.

Importantly, LS neuromyths do not exist in isolation. They coexist with other misconceptions about the brain and learning—such as exaggerated claims about left-brain/right-brain dominance or about the effects of “brain-based” programmes—that can also be found in teacher-education contexts (Ávila Toscano et al., 2022; McMahon et al., 2019; Nóbrega et al., 2024). Ávila Toscano et al. (2022), for example, document how pre-service teachers often endorse multiple neuromyths simultaneously, while McMahon et al. (2019) and Nóbrega et al. (2024) show that professional-development activities can either challenge or reinforce these beliefs depending on how neuroscience is presented. This broader neuromyth ecology highlights the need to situate LS within a wider set of beliefs and institutional practices, and to consider how teacher-education programmes can foster conceptual change towards more accurate, evidence-informed understandings of learning and cognition.

AI-Enabled Personalisation and Teacher Preparation

While debates around learning styles and neuromyths have matured, a new wave of technologies—particularly AI-driven systems and learning analytics—has renewed interest in personalisation and, in some cases, revived LS-like assumptions under different labels. Many contemporary platforms promise to generate learner profiles, recommend resources, and adapt trajectories based on large datasets, often using language reminiscent of LS typologies (e.g. clustering learners into “profiles” or “paths” that suggest stable preferences). In teacher education, such tools are being introduced to support differentiated coursework, micro-credentialing, and practice-based feedback, with the expectation that they will both enhance learning and streamline programme management (Baltezarević & Baltezarević, 2024; K. C. Li & Wong, 2023). However, as Hamse et al. (2021) caution, the design of these systems does not automatically avoid the conceptual pitfalls already identified in LS-based approaches.

Without critical scrutiny, AI-enabled personalisation risks reproducing or even amplifying the same problematic assumptions that underpinned LS-based practices. If algorithms infer fixed learner traits from limited data, or if they prioritise easily quantifiable indicators over richer evidence of professional growth, they can constrain teachers’ opportunities to experiment, take pedagogical risks, or receive nuanced feedback (Selwyn, 2022, 2024). Furthermore, if teacher-education programmes adopt AI systems that implicitly encode LS-like categories, they may unwittingly legitimise neuromyths precisely at a moment when these beliefs should be challenged (Papadatou-Pastou et al., 2021). According to Selwyn (2022), any adoption of AI in education must be understood within relationships of power, inequality, and teaching work that are already strained by technological intensification.

For these reasons, any attempt to harness AI for personalisation in teacher preparation must be informed by a careful reading of the existing LS literature and of the neuromyth debates. Rather than treating AI as a neutral solution, it is necessary to ask what kinds of learner differences are being modelled, on what evidence these models rest, and how they intersect with school-improvement priorities such as time, workload, equity, and feedback quality (Coffield et al., 2004; Pashler et al., 2008; Selwyn, 2024). The present review therefore examines the empirical and structural landscape of LS research in teacher education and uses this synthesis to articulate requirements for AI-enabled personalisation that avoid LS-based assumptions and instead build on robust, actionable evidence of teacher learning. In the next section, we detail the methodological approach adopted—drawing on PRISMA-guided procedures and the PPCDO framework—to address these questions systematically within a corpus of empirical studies on learning styles in teacher-education contexts.

Method

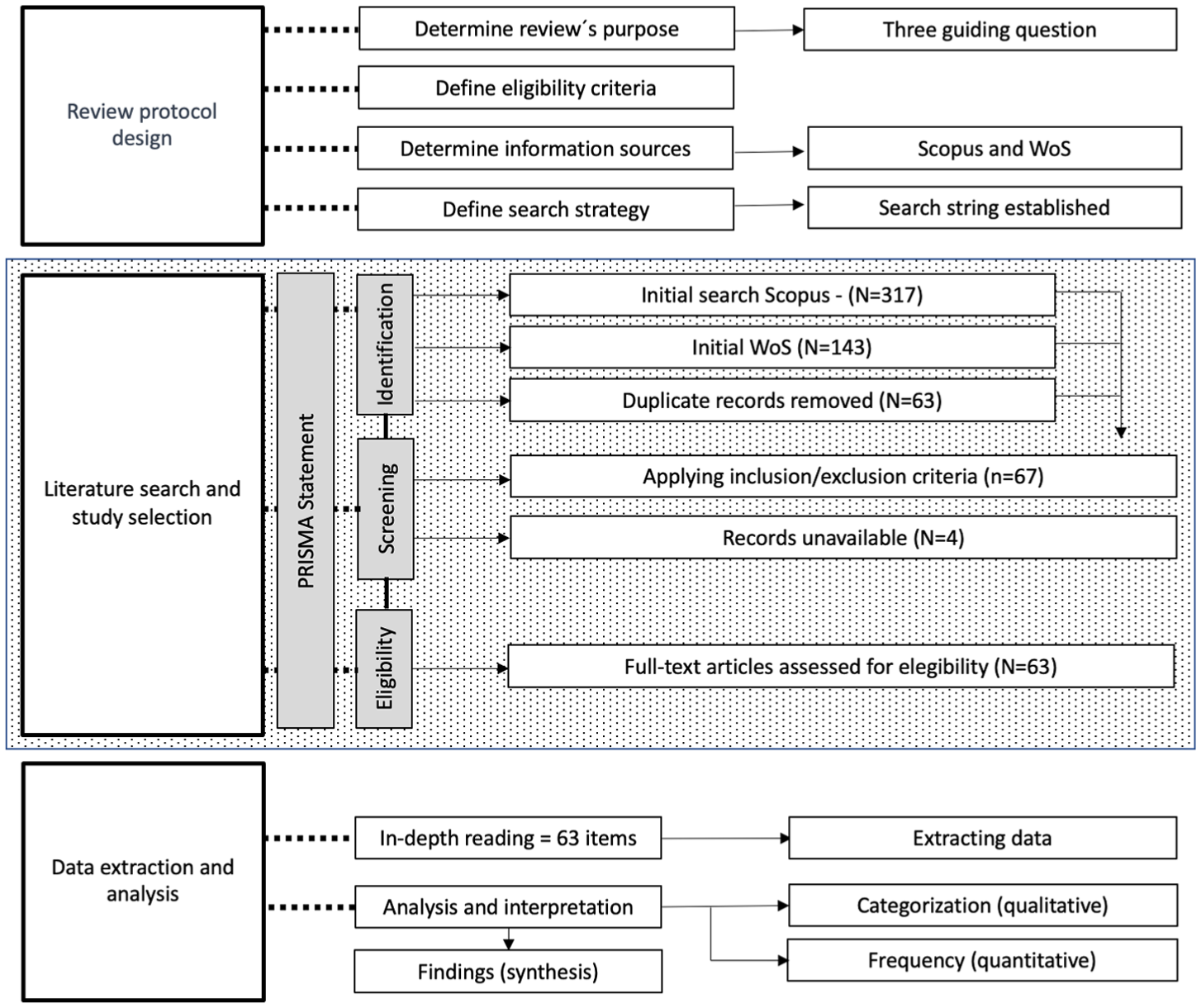

The review followed the recommendations of Page et al. (2021) for conducting literature reviews and integrated some of the main processes of the PRISMA statement (Preferred Reporting Items for Systematic Reviews and Meta-Analyses). Figure 2 shows how the method was executed: it reports the PRISMA-guided selection flow and indicates how the resulting corpus was examined through RQ-aligned analytic streams, which strengthens the transparency of the scoping-review logic adopted in this study.

Figure 2. Review process.

Review Protocol Design

Determining the Purpose of the Review

Teacher-education programmes are increasingly exploring AI-enabled personalisation to improve teaching and learning; however, many designs still inherit assumptions from learning-styles (LS) models that lack consistent empirical support. The purpose of this review is therefore twofold: first, to synthesise what empirical research in teacher education actually shows about LS claims, the strength of their evidence, and the persistence of related beliefs; and second, to translate those findings into actionable requirements for AI-enabled personalisation in teacher preparation that align with school-improvement priorities (time, workload, equity, and feedback quality). In short, the review reframes “personalisation with AI” beyond fixed style labels and towards evidence anchored in observable learner behaviours and strategy use.

To meet this purpose, the review addresses three research questions that mirror the structure of the results:

These questions keep the review tightly coupled to teacher-education contexts while making the AI contribution explicit throughout the article: the synthesis not only adjudicates LS claims but also specifies how programmes and developers should design, implement, and evaluate AI supports without reproducing LS-based assumptions.

This study adopts a scoping-review design to map the empirical and structural landscape of learning-styles research in teacher education and to examine how these constructs intersect with persistent neuromyth-related beliefs. A scoping approach is particularly appropriate given the heterogeneity of learning-styles models, instruments, contexts, and research designs reported in this field, as well as the review’s intent to document thematic and collaboration structures rather than to estimate a single pooled effect size. In other words, the aim is to clarify what has been studied, how it has been studied, and how coherent and cumulative this evidence is within teacher-education contexts.

In addition, the review extends beyond mapping to derive evidence-informed implications for AI-enabled personalisation in teacher preparation. Because this contribution requires synthesising conceptual patterns, methodological diversity, and structural fragmentation—and relating them to risks of legacy inheritance in AI systems—the broader exploratory logic of a scoping review offers a more fitting methodological foundation than a narrowly evaluative effectiveness review. This design therefore supports the three research questions by enabling a systematic description of the field (RQ1), an examination of LS–neuromyth intersections and conceptual-change strategies (RQ2), and the derivation of responsible design requirements for AI-enabled personalisation aligned with school-improvement priorities (RQ3).

Eligibility Criteria

Consistent with this focus, we determined study inclusion using the PPCDO framework (Population, Phenomenon, Context, Design, and Outcomes), which has recently been adopted in AI- and teacher-education reviews to align eligibility decisions with downstream synthesis and training implications (Fajardo-Ramos et al., 2025). The criteria foreground teacher-education settings and treat learning styles (and closely related constructs) as the focal phenomenon while accommodating studies on LS/neuromyth beliefs and conceptual-change interventions. Building on this operationalisation, we piloted the criteria on a sample set to calibrate screening decisions, documented reasons for exclusion, and resolved disagreements by consensus. AI-focussed papers were included only when LS constructs were operationalised in the analysis, ensuring that the final corpus supports mixed-methods appraisal and the downstream translation of findings into requirements for AI-enabled personalisation in teacher education.

Population: Including pre-service teachers, in-service teachers and teacher educators (e.g. university-based teacher educators, school-based mentors, supervisors) when they were enrolled in, or directly involved with, a teacher-education programme or a structured professional-development activity. Studies were eligible only when the focal participants could be clearly identified as part of an initial teacher education (ITE) programme, an induction scheme, or a continuing professional development (CPD) initiative. We excluded studies focussing exclusively on K–12 pupils, school leaders or higher-education students from other disciplines, as well as studies referring generically to “teachers” when it was not possible to determine whether the population was situated within a teacher-education or professional-development context.

Phenomenon of interest: Including studies that examine learning styles (LS) or closely related constructs (e.g. cognitive styles) as they inform instruction, personalisation, or teacher training, including (a) empirical tests of LS claims and interaction effects, (b) measurement of LS/neuromyth beliefs among teachers/teacher educators, and (c) conceptual-change interventions aimed at reducing LS/neuromyth endorsement. We accepted common LS instruments and labels (e.g. Kolb/LSI–CHAEA, VARK, Dunn & Dunn, Felder–Silverman, Honey & Mumford) and studies using “learning preferences” when these were explicitly operationalised through a recognised LS framework.

Excluding papers on “preferences” or “profiles” without an LS/cognitive-style operationalisation; cognitive-style research with no educational/training link; papers whose central phenomenon is AI but without an LS component (these informed context but were not eligible for inclusion in the synthesis).

Context: Including initial teacher education (pre-service) and continuing professional development (in-service), delivered in universities, schools, or mixed partnership settings; any subject/discipline; any country. Excluding purely clinical/psychometric lab settings with no teacher-education context; general curriculum design outside teacher training.

Design: Including empirical primary studies—quantitative, qualitative, or mixed-methods (e.g. experiments/quasi-experiments, surveys, case studies, intervention studies, instrument-use/validation embedded in training). Excluding literature reviews, theoretical/position essays, editorials, book chapters, theses/dissertations, and non-peer-reviewed reports. When multiple publications drew on the same sample, we retained the most complete or latest report.

Outcomes: Including (i) evidence for/against LS-based instructional claims and interaction effects; (ii) teacher/teacher-educator learning outcomes (achievement, strategy use, self-regulation), motivation/engagement, feedback preferences when linked to LS; (iii) beliefs about LS/neuromyths and measured changes following conceptual-change interventions; and (iv) variables enabling field mapping (e.g. instrument use, design choices) for the bibliometric/structural analysis. Direction of findings was not an inclusion criterion. Excluding outcomes unrelated to instruction/training (e.g. personality profiling per se), or measures that mention LS only tangentially without analysis.

This PPCDO specification ensures that the corpus (a) centres teacher-education populations, (b) treats LS as the focal phenomenon while accommodating related beliefs and interventions, (c) captures authentic training contexts, (d) permits mixed-methods appraisal, and (e) targets outcomes that directly feed the translation to AI-enabled personalisation requirements articulated in RQ3.
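To make the screening logic concrete, the PPCDO criteria can be sketched as a small record with one judgement per dimension. This is an illustrative sketch only: the class name `ScreeningRecord`, the field names, and the wording of the exclusion reasons are assumptions introduced here, since the review reports that standardised reasons were logged but does not publish a code list.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical exclusion reasons, one per PPCDO dimension.
# The wording is an assumption for illustration, not the review's own list.
EXCLUSION_REASONS = {
    "population": "not a teacher-education population",
    "phenomenon": "no LS/cognitive-style operationalisation",
    "context": "no teacher-education context",
    "design": "not an empirical primary study",
    "outcomes": "LS mentioned only tangentially",
}


@dataclass
class ScreeningRecord:
    """One reviewer judgement per PPCDO dimension (True = criterion met)."""

    record_id: str
    population: bool
    phenomenon: bool
    context: bool
    design: bool
    outcomes: bool

    def first_failed_criterion(self) -> Optional[str]:
        """Return the first unmet PPCDO dimension, or None if all are met."""
        for name in EXCLUSION_REASONS:  # dict order follows P-P-C-D-O
            if not getattr(self, name):
                return name
        return None

    def decision(self) -> str:
        """Return 'include' or 'exclude: <reason>' for the log."""
        failed = self.first_failed_criterion()
        if failed is None:
            return "include"
        return f"exclude: {EXCLUSION_REASONS[failed]}"
```

Logging only the first unmet criterion keeps one reason per excluded record, which is one common convention for flow diagrams; recording every unmet criterion would be an equally defensible choice.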

Taken together, these population criteria mean that our corpus includes diverse groups along the continuum of teacher learning (pre-service teachers, in-service teachers and teacher educators), but all of them are interpreted through a teacher-education lens rather than as generic classroom practitioners. We regard this diversity as a strength, insofar as it captures different stages and settings of teacher preparation and professional development, while also acknowledging it as a limitation that we discuss later when reflecting on the heterogeneity of contexts and the transferability of the findings.

Information Sources

We queried Scopus and the Web of Science Core Collection (WoS) as our bibliographic sources. Using both indices offered a pragmatic balance between coverage and metadata quality, which was necessary for a mixed-methods synthesis coupled with bibliometric mapping. Scopus provides broad coverage of journals in teacher education, educational psychology, learning sciences, and technology-enhanced learning, with rich affiliation, DOI, and reference metadata that support transparent screening and export (Chaparro-Martínez et al., 2016). WoS, with its selective Core Collection, adds stable, high-impact venues and long citation tails that strengthen co-citation and collaboration analyses and reduce the risk of over-representing single publishers or regional outlets.

These databases are complementary rather than redundant. Scopus’s breadth (including many education and applied journals) helps minimise omission bias in teacher-education contexts, while WoS’s curated set improves signal-to-noise for structural analyses and facilitates reproducible citation networks. Relying on both reduces single-source bias and increases confidence that our corpus represents the empirical landscape of learning-styles research in teacher education.

Searches were run in parallel and adapted to each platform’s field tags and filters (e.g. TITLE-ABS-KEY in Scopus; TS = in WoS), applying consistent PPCDO-aligned constraints for document type, subject areas, and languages. After de-duplication, we conducted backward/forward citation chasing from all included records and targeted hand-searches of sentinel teacher-education and TEL journals to check for missed items.

We acknowledge that not consulting additional databases (e.g. ERIC, PsycINFO) may leave residual gaps. However, given our review goals (empirical synthesis plus bibliometric/structural analysis), the combination of Scopus and WoS offered consistent metadata schemas, robust citation exports, and manageable de-duplication, thereby enhancing the replicability and auditability of the workflow while keeping selection variability under control. The reliability of these databases for literature reviews has been examined by AlRyalat et al. (2019) and Pranckutė (2021).

Search Strategy

The first step in this phase was to identify keywords related to learning styles (LS) and teacher training for use in the databases. These keywords were standardised against the ERIC thesaurus so that terms with similar meanings were included, yielding broader and more comparable results. The resulting search string was: ( TITLE-ABS-KEY ( “Learning styles” OR “Learning style theory” OR “Models of learning” OR “Learning profiles” OR “Cognitive styles” ) AND TITLE-ABS-KEY ( “Teacher training” OR “Teacher education” OR “Competency-Based Teacher Education” OR “Extended Teacher Education Programmes” OR “Inservice Teacher Education” OR “Preservice Teacher Education” OR “Preservice Teacher Education” OR “Teacher Education Programmes” OR “Professional development for teachers” OR “Paedagogical training” OR “Educational training” ) ) AND ( LIMIT-TO ( SUBJAREA, “SOCI” ) ).
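For replication, the search string can be assembled from the two keyword lists rather than typed by hand. The sketch below is a minimal illustration under stated assumptions: the function names are hypothetical, straight quotation marks replace the typographic ones shown in the text, and the WoS variant is an assumed adaptation using the TS= field tag, because the exact WoS string is not reported.

```python
# Keyword lists as reported in the search string, including the term
# "Preservice Teacher Education", which appears twice in the reported string.
LS_TERMS = [
    "Learning styles",
    "Learning style theory",
    "Models of learning",
    "Learning profiles",
    "Cognitive styles",
]
TEACHER_ED_TERMS = [
    "Teacher training",
    "Teacher education",
    "Competency-Based Teacher Education",
    "Extended Teacher Education Programmes",
    "Inservice Teacher Education",
    "Preservice Teacher Education",
    "Preservice Teacher Education",
    "Teacher Education Programmes",
    "Professional development for teachers",
    "Paedagogical training",
    "Educational training",
]


def or_block(terms):
    """Quote each term and join with OR, dropping repeats but keeping order."""
    unique_terms = list(dict.fromkeys(terms))
    return " OR ".join(f'"{term}"' for term in unique_terms)


def scopus_query(ls_terms, te_terms, subject_area="SOCI"):
    """Build a Scopus advanced-search string in the reported layout."""
    return (
        f"( TITLE-ABS-KEY ( {or_block(ls_terms)} ) "
        f"AND TITLE-ABS-KEY ( {or_block(te_terms)} ) ) "
        f'AND ( LIMIT-TO ( SUBJAREA, "{subject_area}" ) )'
    )


def wos_query(ls_terms, te_terms):
    """Assumed WoS adaptation using the topic tag; not the reported string."""
    return f"TS=({or_block(ls_terms)}) AND TS=({or_block(te_terms)})"


if __name__ == "__main__":
    print(scopus_query(LS_TERMS, TEACHER_ED_TERMS))
    print(wos_query(LS_TERMS, TEACHER_ED_TERMS))
```

Dropping the repeated term does not change the result set, since a duplicated operand in an OR clause is redundant; it only shortens the generated string.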

Literature Search and Study Selection

Identification

Applying this search string in Scopus generated an initial set of documents (n = 317), which was filtered by the area of knowledge (Social Sciences), reducing this set of documents to 249 items. Similarly, an initial set of 143 documents was generated in WoS. Duplicates were then identified and removed (n = 63).

Screening

With the corpus unified, titles and abstracts were screened against the Population, Phenomenon, Context, Design, and Outcomes criteria. We prioritised clear links to teacher education/training and an operationalisation of learning styles or closely related cognitive-style constructs. Exclusion decisions were logged using standardised reasons (e.g. not teacher-education, no LS operationalisation, not empirical). This step produced 67 documents for full-text retrieval, 4 of which could not be obtained, leaving 63 for eligibility assessment.

Eligibility

Full texts were examined against all PPCDO criteria. Studies were retained when learning styles/cognitive styles were central to the analysis in authentic teacher-education contexts (pre-service or in-service) and when designs provided empirical evidence (quantitative, qualitative, or mixed methods). After full-text assessment, 63 studies met eligibility criteria and were included in the synthesis; reasons for exclusion were recorded for transparency.
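The record flow described in the three stages above can be checked with simple arithmetic. The sketch below is a minimal illustration, assuming that the 63 duplicates were removed from the merged set of filtered Scopus records and WoS records; the class and property names are hypothetical, and the derived figures are consequences of the reported counts rather than values stated in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PrismaFlow:
    scopus_after_filter: int  # Scopus records after the Social Sciences filter
    wos: int                  # records retrieved from WoS
    duplicates: int           # duplicates removed after merging both sets
    full_text_sought: int     # records sent for full-text retrieval
    not_retrieved: int        # full texts that could not be obtained
    included: int             # studies included in the synthesis

    @property
    def screened(self) -> int:
        # Assumption: duplicates are removed from the merged Scopus + WoS set.
        return self.scopus_after_filter + self.wos - self.duplicates

    @property
    def excluded_at_screening(self) -> int:
        return self.screened - self.full_text_sought

    @property
    def assessed(self) -> int:
        return self.full_text_sought - self.not_retrieved

    @property
    def excluded_at_eligibility(self) -> int:
        return self.assessed - self.included


# Counts reported in the Identification, Screening, and Eligibility stages.
flow = PrismaFlow(
    scopus_after_filter=249,
    wos=143,
    duplicates=63,
    full_text_sought=67,
    not_retrieved=4,
    included=63,
)

for label in ("screened", "excluded_at_screening", "assessed", "excluded_at_eligibility"):
    print(f"{label}: {getattr(flow, label)}")
```

Under the stated assumption, the sketch gives 329 records screened on title and abstract, 262 excluded at screening, 63 full texts assessed, and none excluded at the eligibility stage.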

Data Extraction

We conducted an in-depth reading of all included studies and populated a documentation matrix capturing: bibliographic details; setting and participants (pre-service/in-service/teacher educators); LS model or instrument (e.g. Kolb/LSI–CHAEA, VARK, Dunn & Dunn, Felder–Silverman, Honey & Mumford); study design and measures; outcomes related to instructional effects, beliefs (neuromyth endorsement), and any conceptual-change interventions; and variables necessary for the structural/bibliometric analyses (author, venue, references, keywords). This matrix underpinned both the qualitative synthesis and the quantitative/bibliometric procedures.

Data Analysis

The data obtained and recorded in the documentation matrix were subjected to two complementary analysis processes: qualitative and quantitative. In the qualitative analysis, the data were carefully examined to identify patterns and common themes. This involved grouping the information into meaningful and relevant categories for the research, allowing for a deeper understanding of the data. Techniques such as open, axial, and selective coding were used to organise and synthesise the findings. This process helped highlight emerging relationships and trends that were not evident at first glance.

The quantitative analysis, in turn, focussed on measuring the frequency of occurrence of the categorised data. Excel and Bibliometrix were used to calculate the occurrence of each category, quantifying the prevalence of particular characteristics within the data set. This provided a numerical perspective that complemented the qualitative findings, and the two approaches together offered a more complete and balanced view of the phenomenon studied.
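The frequency counts described above can be sketched in a few lines of Python. The rows below are hypothetical stand-ins for entries in the documentation matrix, not the review's actual data, and `frequency_table` is an illustrative helper rather than the procedure implemented in Excel or Bibliometrix.

```python
from collections import Counter

# Hypothetical rows of a documentation matrix (illustrative values only).
matrix = [
    {"study": "S1", "instrument": "Kolb LSI", "design": "descriptive"},
    {"study": "S2", "instrument": "Kolb LSI", "design": "experimental"},
    {"study": "S3", "instrument": "CHAEA", "design": "descriptive"},
    {"study": "S4", "instrument": "Kolb LSI", "design": "descriptive"},
    {"study": "S5", "instrument": "VARK", "design": "descriptive"},
]


def frequency_table(rows, field):
    """Return (category, count, percentage) tuples, most frequent first."""
    counts = Counter(row[field] for row in rows)
    total = sum(counts.values())  # denominator: rows that report this field
    return [
        (category, n, round(100 * n / total, 1))
        for category, n in counts.most_common()
    ]


for category, n, pct in frequency_table(matrix, "instrument"):
    print(f"{category}: n = {n} ({pct}%)")
```

Computing the denominator explicitly, and reporting it alongside each percentage, keeps the resulting figures auditable when categories are counted over different subsets of the corpus.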

To address RQ1, we conducted descriptive and bibliometric analyses to map the distribution of LS research in teacher education across contexts, participant profiles, methodologies, instruments, and collaboration structures. To address RQ2, we examined the extent to which LS constructs intersect with neuromyth-related beliefs and identified the conceptual-change strategies reported in empirical interventions. To address RQ3, we synthesised these patterns to derive evidence-informed requirements for AI-enabled personalisation in teacher preparation, with explicit attention to avoiding LS-based assumptions and to aligning design implications with school-improvement priorities such as workload, equity, and feedback quality.

Results

This review was based on an exhaustive analysis of 63 published studies addressing learning styles in the educational context, particularly in teacher training. It identified a wide range of approaches, methodologies, and results that provide a clear picture of the characteristics of research on learning styles, as well as the degree of scientific evidence supporting these studies.

Scientific Validity and Consistency in Studies on Learning Styles

Of the 63 studies reviewed, 77.4% (n = 48) grant some form of validity to learning styles, either implicitly or through the application of specific instruments designed to identify or measure their effectiveness in the educational process (Andheska et al., 2020; Cam et al., 2022; Duman & Çelik, 2012; Durnali, 2022; Evans & Waring, 2006; Saqr et al., 2022). However, not all studies in this group provide strong support for LS theory. A more detailed analysis of these 48 studies reveals that 14.6% (n = 7) ultimately question the validity of learning styles, arguing that they could be classified as neuromyths because the instruments used lack the robustness needed to establish a significant correlation between the identification of styles and learning outcomes (Azmi et al., 2018; Donche & Van Petegem, 2009; Vallès-Català & Palau, 2023).

For example, in the study conducted by Golightly (2019), no significant differences were found in the learning style preferences of future geography teachers across different educational levels, leading the authors to conclude that learning styles are not a determining factor in the learning process. Similarly, the study by Duman and Çelik (2012) reported no notable differences in academic performance among students, regardless of the identified learning style. These results reinforce the growing perception that learning styles are a questionable concept, which, while accepted in some educational circles, lacks solid empirical evidence.

Research suggests that, despite the high number of studies mentioning or using learning styles as a theoretical basis, there is a lack of consensus on their effectiveness in real educational contexts. Many of the studies reviewed do not apply the four experimental criteria suggested by Pashler et al. (2008), which propose a rigorous methodology to validate LS. These criteria include the random assignment of instructional methods based on students’ learning styles, the use of standardised tests for all groups, and the demonstration of a significant interaction between the learning style and the teaching method used. None of the reviewed studies fully applied these criteria, casting doubt on the methodological robustness of the reported results.
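The interaction criterion can be made concrete with a small worked example. The sketch below uses hypothetical cell means for a two-style by two-method design and checks whether the best-performing method differs across styles (a crossover pattern), which is the pattern that style-matched instruction would need to show. The data, style labels, and function names are assumptions for illustration; a real study would also require an inferential test of the interaction term, which this sketch does not perform.

```python
# Hypothetical mean test scores; keys are (learner style, instructional method).
cell_means = {
    ("visual", "visual_method"): 72.0,
    ("visual", "verbal_method"): 70.0,
    ("verbal", "visual_method"): 71.0,
    ("verbal", "verbal_method"): 69.0,
}


def best_method(means, style):
    """Return the method with the highest mean score for one style group."""
    scores = {method: m for (s, method), m in means.items() if s == style}
    return max(scores, key=scores.get)


def shows_crossover(means):
    """True only if every style group has a different best-performing method."""
    styles = sorted({style for style, _ in means})
    best = [best_method(means, style) for style in styles]
    return len(set(best)) == len(styles)


def interaction_contrast(means, style_a, style_b, method_a, method_b):
    """Difference between the two styles in the method_a minus method_b effect."""
    effect_a = means[(style_a, method_a)] - means[(style_a, method_b)]
    effect_b = means[(style_b, method_a)] - means[(style_b, method_b)]
    return effect_a - effect_b


contrast = interaction_contrast(
    cell_means, "visual", "verbal", "visual_method", "verbal_method"
)
print("crossover pattern:", shows_crossover(cell_means))
print("interaction contrast:", contrast)
```

In this hypothetical data set one method is better for both groups and the contrast is zero, so the pattern would indicate a main effect of method rather than support for matching instruction to style.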

Studies Reinforcing the Neuromyth Theory

The lack of rigour in scientific validation is one of the most concerning findings of this review. Of the 63 studies, 51.6% (n = 32) did not compare their results with alternative theoretical or methodological frameworks, limiting their ability to objectively validate the effectiveness of learning styles. These studies show a reliance on standardised instruments that have not always proven reliable or valid in various educational contexts. Despite the large number of studies adopting learning styles as a theoretical framework, there is a tendency not to subject their results to rigorous tests that would allow for the identification of their real effectiveness in improving the educational process.

In this regard, the study by Evans and Waring (2006) illustrates some of the weaknesses in learning styles research: while cognitive styles affected students’ feedback preferences, there was no significant interaction between these styles and other cultural factors that could influence the teaching-learning process. This type of study highlights the importance of questioning the general applicability of LS and demanding more robust validation before their classification as neuromyths can be discarded.

Conceptual Change Strategies: Towards Eliminating Neuromyths

Given the growing recognition of learning styles as neuromyths, some studies have begun exploring conceptual change strategies aimed at challenging and correcting these misconceptions in the field of education (Eitel et al., 2021; Grospietsch & Mayer, 2018; Hattan et al., 2024; Martin, 2010; McMahon et al., 2019; Rogers & Cheung, 2020). In this sense, 9 of the 14 studies that support the idea of learning styles as a neuromyth have implemented interventions aimed at modifying teachers’ and future teachers’ beliefs.

For example, the study by Hattan et al. (2024) implemented a brief intervention that temporarily changed the beliefs of teacher candidates about LS. However, the authors noted that to achieve sustained conceptual change, longer and more detailed interventions that include explicit instruction on how to engage with relational reasoning during the learning process are likely needed. Similarly, Dersch et al. (2022) found that personalised refutation texts were more effective in initiating conceptual change than standard expository texts.

These interventions have proven to be useful tools for counteracting erroneous beliefs about learning styles, especially when applied in contexts where neuromyths are strongly present in teacher training. However, the studies also suggest that these strategies need to be systematically integrated into initial teacher training programmes to prevent beliefs in learning styles from continuing to perpetuate over the years.

Diversity in Instruments and Methodological Approaches

One of the most relevant aspects identified in this review is the diversity of instruments and methodological approaches used in research on learning styles. Across the 63 studies reviewed, 24 different instruments were employed to measure LS. The most commonly used (17.7%; n = 11) was Kolb’s Learning Style Inventory, followed by the Honey-Alonso Learning Styles Questionnaire (CHAEA; 14.6%; n = 7). This variety of instruments presents a significant challenge for the comparability of results and their validity.

In their study, Coffield et al. (2004) already pointed out this lack of consistency in the selection of instruments as one of the main weaknesses in learning styles research. This review confirms that the diversity of approaches and the lack of robust methodological standards continue to be an obstacle to the scientific validation of LS. This diversity not only hinders the replicability of studies but also calls into question the validity of the instruments used, many of which have not been sufficiently evaluated in terms of reliability and consistency.

Methodological and Bibliometric Evidence

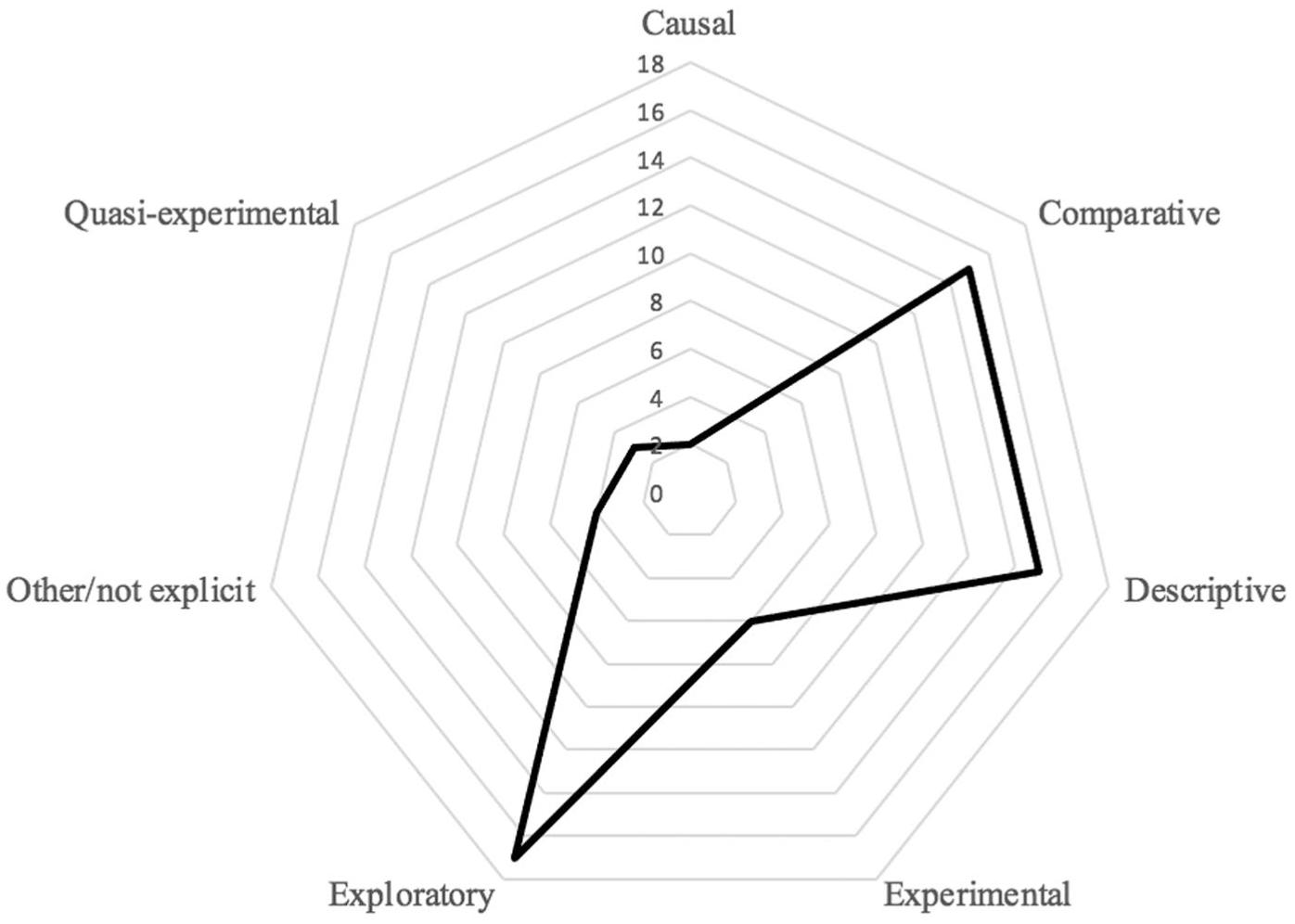

At the methodological level, as shown in Figure 3, most of the reviewed studies follow an exploratory or descriptive approach, merely reporting the presence or absence of learning styles without conducting experimental evaluations that would allow for validating their effectiveness.

Methodological designs in studies on learning styles and teacher training.

In this regard, only 9.7% (n = 6) of the studies adopted a rigorous experimental approach, which is concerning given that the empirical validation of learning styles requires controlled studies that can identify any significant interaction between learning styles and the applied pedagogical methods.

Additionally, regarding the temporal design of the reviewed studies, only 38.7% adopted a longitudinal design that could track the effects or correspondence of learning styles in teacher training processes. Thus, the vast majority of studies focussed on reporting the state of LS in teachers without making curricular or didactic adjustments to improve these teachers’ learning, and without verifying whether determining learning styles has any utility in the teaching-learning process or whether their application has been effective in teacher training.

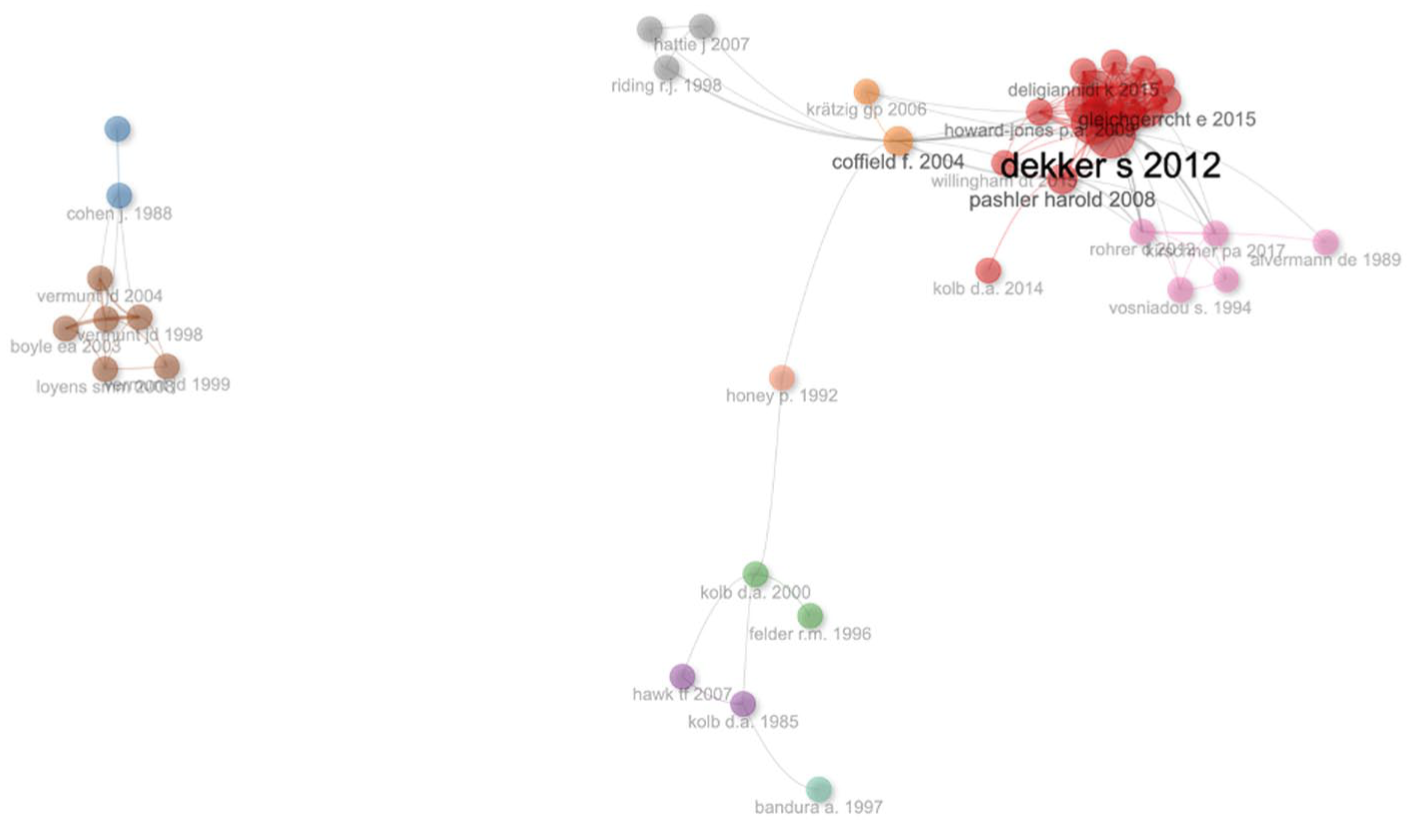

On the other hand, the bibliometric results obtained through the analysis of co-citation networks and collaborations among authors suggest a clear fragmentation in the research field on learning styles. Studies supporting LS tend not to interact with those that question them or consider them neuromyths. This lack of dialogue between different approaches contributes to the fragmentation of the field and the difficulty in reaching definitive conclusions about the validity of learning styles.

Given the above, the results of this review highlight the urgent need to adopt more rigorous and consistent approaches in research on LS. While there is a considerable body of literature supporting the existence of learning styles, a more critical review of these studies reveals that many lack the necessary scientific robustness to support their conclusions. The growing evidence that learning styles are a neuromyth reinforces the need to redirect research efforts towards approaches that not only validate or refute LS but also explore their practical implications and their relationship with other more widely accepted concepts in pedagogy and neuroscience.

Interaction Between Learning Styles and Educational Outcomes

One of the most debated areas in research on learning styles is the relationship between identifying a particular style and the educational outcomes obtained by students. While some studies have argued that adapting teaching to learning styles can improve academic results, the literature review indicates that the evidence in this regard is contradictory and, in many cases, limited.

For example, K. M. Li’s (2015) study suggests that active students were more inclined to use collaborative tools such as wikis than reflective students. This finding could be interpreted as partial support for the theory of learning styles, as behavioural differences were observed among students with different styles. However, the lack of rigorous experimental validation in this type of study prevents definitive conclusions from being drawn about the effectiveness of adapting teaching to LS. Moreover, these results do not necessarily translate into improvements in academic performance, further questioning the practical relevance of learning styles.

In contrast, studies like that of Andheska et al. (2020), which analysed the relationship between cognitive styles and motivation for writing, demonstrated that students with an independent cognitive style were more successful in writing research proposals compared to those with a dependent style. Although these findings might seem to support the theory of cognitive styles, the generalisation of these results to other educational contexts is uncertain, as no consistent correlation was found between learning styles and other aspects of academic performance.

Therefore, the reviewed studies offer a diverse picture regarding the educational outcomes associated with learning styles, with findings that, although interesting, do not establish a solid foundation to justify the use of LS as an efficient pedagogical tool. The inconsistency in results, coupled with the lack of replicability of studies, suggests that learning styles are not a determining factor in students’ educational success and that other elements, such as self-regulation or personalised learning strategies, may have a greater impact on educational outcomes.

Thematic Evolution and Recent Trends

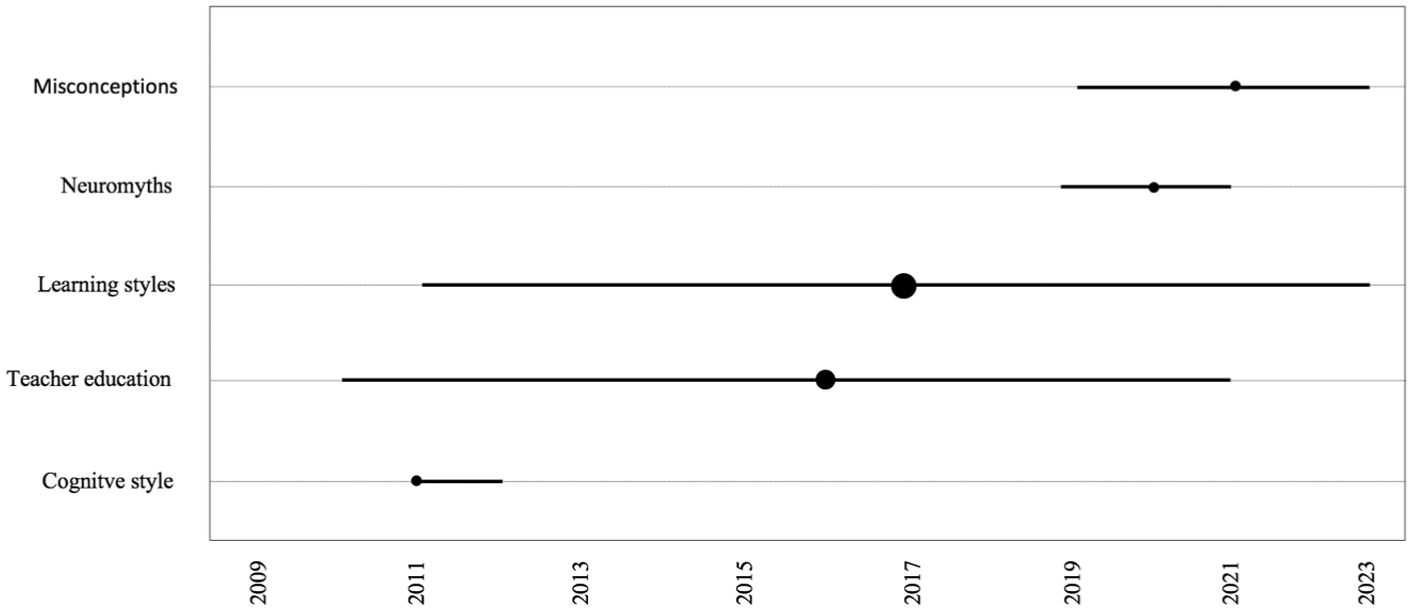

In terms of thematic evolution, the literature review shows that interest in learning styles has fluctuated over time, as shown in Figure 4.

Relevance and years of appearance of research topics (Bibliometrix).

Historically, learning styles have been a central theme in educational research, with a predominant focus on models such as Kolb’s and the Dunn Learning Styles Inventory. However, starting in the 2000s, a more critical approach towards LS began to emerge, especially following the publication of studies that questioned their validity, such as Coffield et al.’s (2004) influential work, which conducted a comprehensive analysis of the main learning styles models and concluded that many lacked methodological robustness.

Since 2017, there has been growing interest in the topic of neuromyths in education, leading to increased attention to the criticism of learning styles. More recent studies, such as those by Grospietsch and Mayer (2019), have pointed out that neuromyths, including LS, are resistant to formal education, and that a deeper, more sustained approach is needed to change these misconceptions among educators. This thematic evolution reflects a significant shift in research on learning styles, moving from widespread acceptance to a more sceptical and critical perspective.

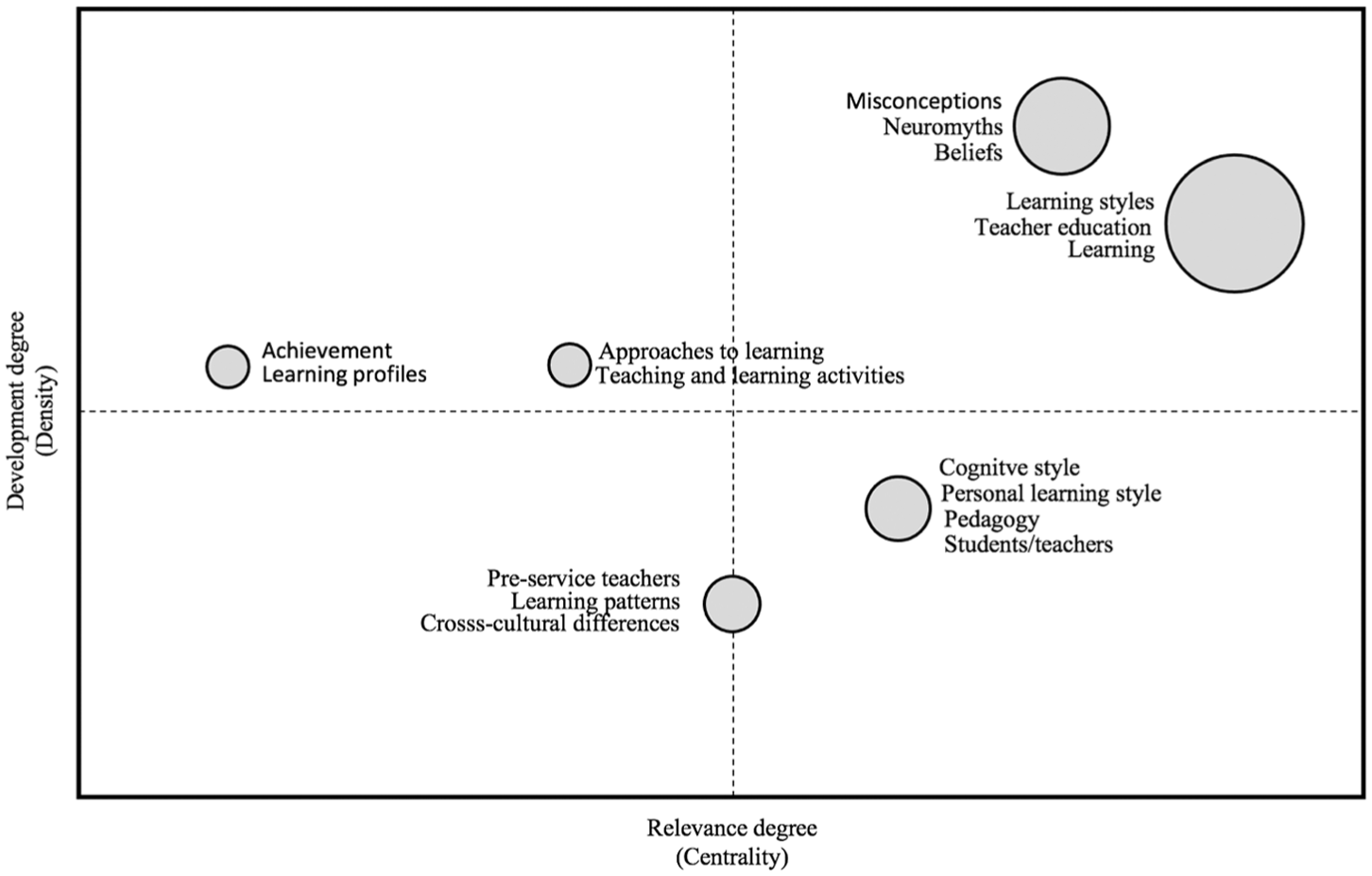

Complementing this, Figure 5 shows the thematic structure, based on the keywords provided by the authors in the 63 reviewed documents. It is worth noting that all the terms have low proximity values, indicating that the network is quite broad and dispersed, with the terms connected through longer paths.

Thematic field of learning styles (Bibliometrix).

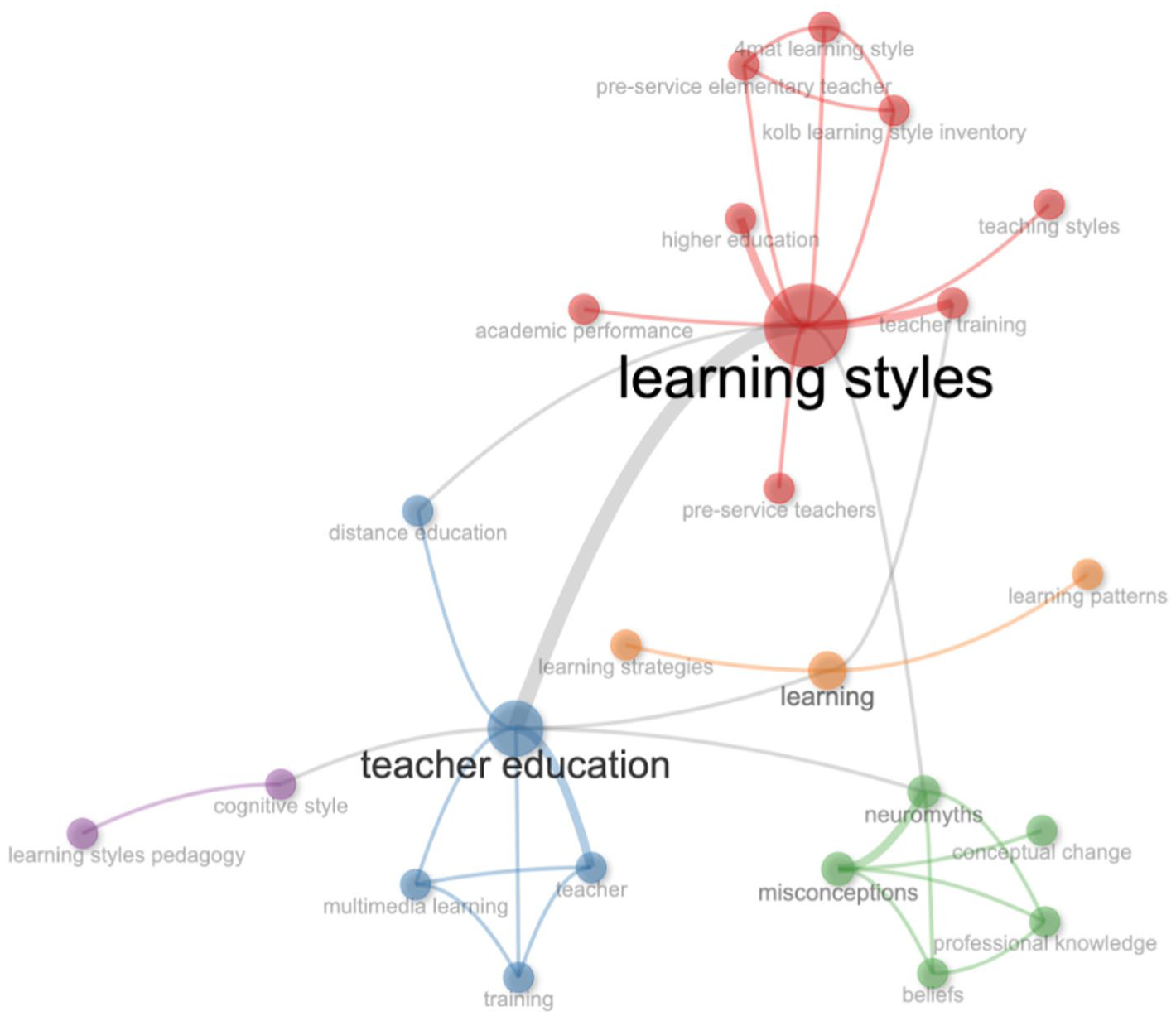

The thematic network presented in Figure 6 shows, in detail, the breadth and dispersion of the network, as well as the lack of connection between the central themes: learning styles, teacher training, and neuroscience and neuromyths.

Thematic network of learning styles and teacher training (Bibliometrix).

The keyword network depicts a broad and dispersed thematic structure with limited integration between learning styles, teacher education, and neuroscience/neuromyth-related strands, suggesting expansion without convergence into a cohesive and cumulative explanatory core.

Taken together, the thematic dispersion shown in Figure 6 suggests that learning-styles research in teacher education has developed as a loosely connected set of lines of inquiry rather than as an integrative programme of evidence. This pattern raises concerns about construct validity, as LS-related claims may be built on partial conceptual anchors that are insufficiently connected to the broader literature on teacher learning and neuroscience-informed misconceptions. The lack of a tightly integrated thematic core also limits evidence accumulation, making it difficult to compare findings across contexts and instruments or to derive stable conclusions that could justify instructional matching practices at scale. In an AI-intensive landscape, this becomes a design risk: algorithmic personalisation systems may modernise the rhetoric of “profiles” and “paths” while inheriting legacy LS assumptions that remain weakly validated and conceptually fragmented.

On the other hand, it is important to note that although the concept of neuromyth has gained ground in educational research, the transition towards a conceptual change regarding learning styles remains slow. The literature suggests that the popularity of LS in educational discourse persists partly due to the simplicity and intuitive appeal of the theory. Moreover, many teacher training programmes continue to include learning styles in their curricula, perpetuating their use despite growing evidence against them.

Collaboration and Research Networks

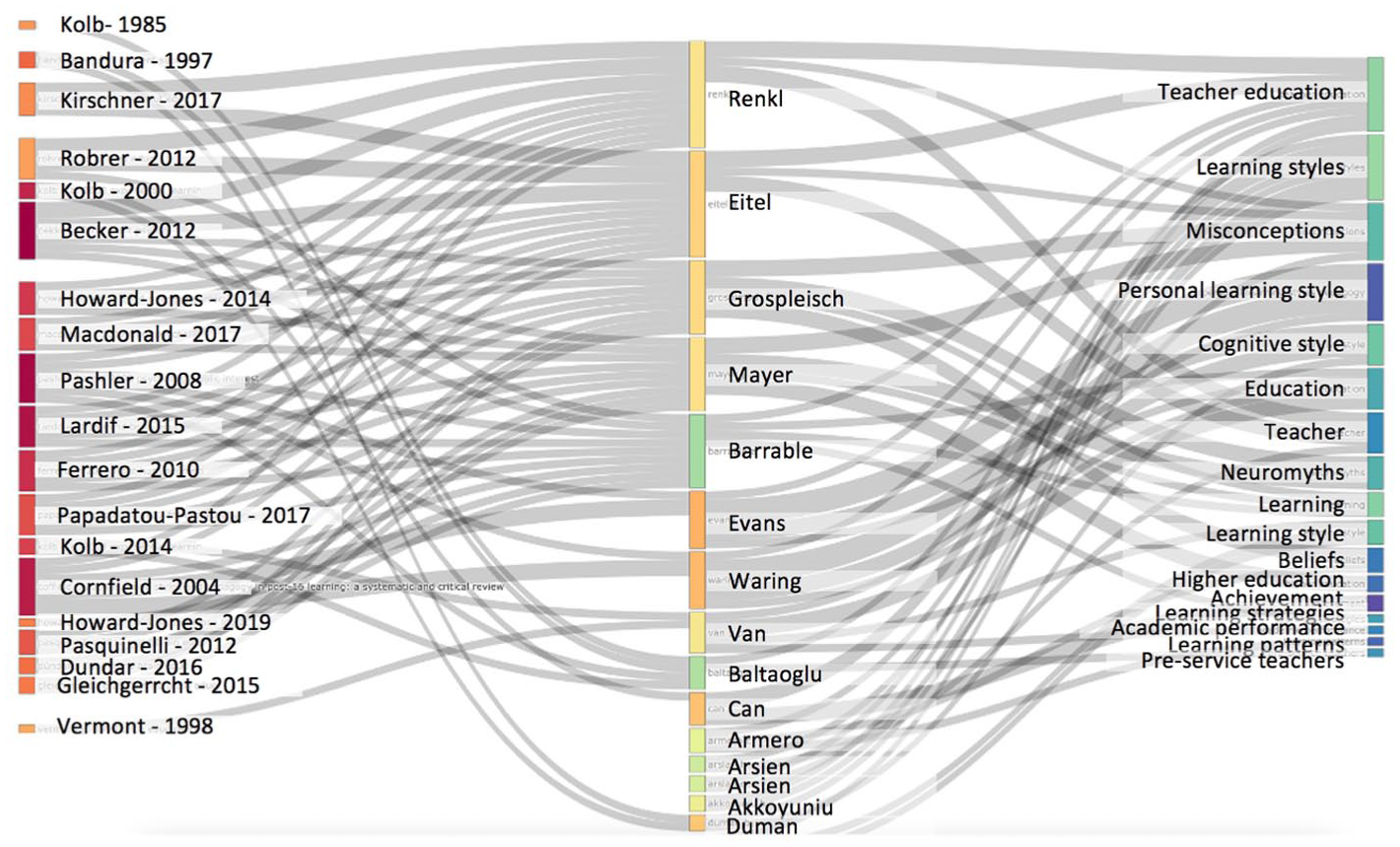

Another relevant aspect is the collaboration structure among researchers addressing learning styles. Bibliometric analysis indicates that the research network on learning styles is fragmented, with little interaction between authors supporting LS and those criticising them. In terms of collaboration, studies questioning LS tend to be more connected with research in neuroscience and cognitive psychology, while studies supporting them are usually linked to research focussed on traditional education. In this regard, Figure 7 shows how most authors supporting learning styles do not interact with or reference the group of authors considering them neuromyths, and vice versa.

Relationship between authors and keywords (Bibliometrix).

The author–keyword map indicates a fragmented knowledge structure in which LS-supporting strands and neuromyth-critical strands show limited cross-referencing, reinforcing parallel micro-traditions rather than an integrative programme of inquiry. Figure 7 provides further structural evidence that the field is characterised by parallel knowledge streams with limited cross-dialogue. From a validity standpoint, this segmentation suggests that some pro-LS conclusions may circulate within relatively closed citation and author-topic circuits, potentially reducing exposure to null findings or critical counter-evidence. Such a structure weakens the field’s capacity for cumulative refinement, as the conditions needed to test, recalibrate, or discard LS-based assumptions are less likely to emerge in siloed communities. For AI-enabled personalisation in teacher education, this fragmentation signals a warning: if algorithms or design teams privilege one cluster of studies over another, AI systems may inadvertently operationalise static style-like categories under new labels, giving a false impression of evidential consensus.

Figure 8, in turn, shows the relationships between authors. Documents with high intermediation (betweenness), such as Pashler et al. (2008) and Coffield et al. (2004), are crucial for the structure of the network, acting as bridges between different clusters. These authors belong to the group that considers learning styles to be neuromyths, as does Dekker, who appears in this review as the author of an instrument to determine beliefs in neuromyths.

Author groupings (Bibliometrix).

The co-citation structure highlights the overall dispersion of the field while identifying a small set of bridging authors (e.g. Coffield, Pashler, and Dekker-related work) that provide limited connective tissue across otherwise separated clusters. The co-citation pattern in Figure 8 indicates that the field’s limited cohesion relies disproportionately on a small set of critical and synthesising contributions. This is significant for construct and interpretive validity, as bridging authors frequently foreground evidence that challenges the instructional implications of LS, suggesting that the field’s most robust connective threads may be corrective rather than confirmatory. In terms of evidence accumulation, the siloed structure implies that learning-styles research in teacher education has not yet stabilised into a shared evidential architecture capable of supporting strong instructional prescriptions. For AI-enabled personalisation, this means that responsible design should treat LS-based learner profiling as a high-risk inheritance: without explicit safeguards, AI systems may repackage contested typologies into automated recommendations, thereby amplifying a weak evidential base through scale and perceived objectivity.

In the case of the authors supporting the research on learning styles, Kolb shows different levels of intermediation depending on the version of his work, suggesting that his influence in the network depends on the version of the document.

The documents in this figure tend to have low closeness, indicating that the network is quite dispersed. This isolation of approaches reinforces the perception that the debate on learning styles takes place in separate silos, which makes it difficult to advance towards a more integrated understanding of the subject.
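For readers less familiar with the network measures used here, the following minimal Python sketch computes betweenness (intermediation) and closeness on a toy network in which two tightly knit clusters are joined by a single bridging node. The graph and node labels are hypothetical and are not derived from the review's data. The betweenness routine follows Brandes' algorithm for unweighted, undirected graphs, and the sketch illustrates the measures rather than the procedure Bibliometrix applies.

```python
from collections import deque

# Hypothetical toy network: two clusters linked only through "bridge".
graph = {
    "pro_1": ["pro_2", "pro_3", "bridge"],
    "pro_2": ["pro_1", "pro_3"],
    "pro_3": ["pro_1", "pro_2"],
    "bridge": ["pro_1", "crit_1"],
    "crit_1": ["bridge", "crit_2", "crit_3"],
    "crit_2": ["crit_1", "crit_3"],
    "crit_3": ["crit_1", "crit_2"],
}


def betweenness(graph):
    """Unnormalised betweenness centrality (Brandes, unweighted, undirected)."""
    scores = dict.fromkeys(graph, 0.0)
    for source in graph:
        order = []                                # nodes in order of discovery
        preds = {v: [] for v in graph}            # predecessors on shortest paths
        sigma = dict.fromkeys(graph, 0)           # number of shortest paths
        dist = dict.fromkeys(graph, -1)
        sigma[source], dist[source] = 1, 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in graph[v]:
                if dist[w] < 0:                   # first time w is reached
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:        # v lies on a shortest path to w
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(graph, 0.0)
        for w in reversed(order):                 # accumulate dependencies
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != source:
                scores[w] += delta[w]
    # Each unordered pair is counted from both endpoints in an undirected graph.
    return {v: s / 2 for v, s in scores.items()}


def closeness(graph):
    """Closeness centrality; assumes the graph is connected."""
    scores = {}
    for source in graph:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            v = queue.popleft()
            for w in graph[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
        total = sum(dist.values())
        scores[source] = (len(dist) - 1) / total if total else 0.0
    return scores


bc = betweenness(graph)
cc = closeness(graph)
for node in graph:
    print(f"{node}: betweenness = {bc[node]:.1f}, closeness = {cc[node]:.2f}")
```

In this toy network the bridging node has the highest betweenness because every shortest path between the two clusters passes through it, while peripheral nodes have low closeness because they are several steps away from the opposite cluster.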

Overall, these structural patterns suggest that learning-styles research in teacher education has expanded without consolidating into a cumulative evidential core. This fragmentation limits the field’s capacity to justify LS-based instructional matching and raises a clear risk that AI-enabled personalisation may inherit legacy typologies under new algorithmic labels unless design is explicitly anchored in observable performance, strategy use, and developmental trajectories.

Discussion

Although AI-enabled personalisation is frequently presented as a scalable solution for teacher education, the international evidence of adoption, impact, and equity remains uneven and contested. Our findings therefore do not assume the inevitability or uniform uptake of AI; rather, they specify what the learning-styles and neuromyth literature suggests AI systems should avoid and what forms of evidence are more defensible for guiding responsible personalisation in teacher preparation. The results of this scoping review, taken together, depict a field that is simultaneously extensive and fragile. Our synthesis indicates three convergent messages. First, learning-styles research in teacher education remains methodologically and conceptually heterogeneous, with limited evidence supporting the instructional matching hypothesis. Second, LS beliefs continue to intersect with persistent neuromyths, suggesting that teacher-education programmes require stronger conceptual-change approaches. Third, in an AI-intensive landscape, these findings imply that responsible personalisation should be designed to avoid static style-based profiling and instead prioritise evidence grounded in teachers’ evolving performance, self-regulation, and practice-based feedback needs.

A particularly relevant finding in this respect is the fragmentation revealed by the thematic evolution and network analyses. As illustrated in the thematic map and trends over time (Section 4.7) and in the co-authorship and co-citation networks (Section 4.8), LS-related research in teacher education is dispersed across small clusters rather than organised around shared programmes of inquiry, with limited integration between different theoretical and methodological perspectives. This lack of consolidation weakens the cumulative development of the field and complicates efforts to translate LS findings into coherent guidance for teacher-education practice and policy. Instead of converging towards a progressively refined, empirically grounded account of how learning styles might inform differentiated teacher preparation, the field remains conceptually and methodologically fragmented.

In this context of fragmentation, the identification of authors with high betweenness centrality, such as H. D. Dekker and Kim (2022) and Pashler et al. (2008), highlights the importance of certain seminal works in maintaining a minimum of cohesion in such a divided field. However, the general dispersion of the network suggests that these intermediation efforts, although valuable, are not sufficient to unify the diverse perspectives and approaches that coexist in the study of LS. This situation poses a significant challenge to the coherent and systematic advancement of knowledge in this area.

Moreover, the observed variability in the influence of authors such as Kolb reflects the changing dynamics of research on learning styles. The fluctuation in the relevance of certain theories and authors over time underscores the importance of maintaining a continuous and critical review of the theoretical and empirical foundations in this field. This dynamic approach is crucial to ensure that future research is based on the most up-to-date and rigorous knowledge available.

In line with this need for updating and rigour, the reviewed literature explicitly calls for building stronger bridges between neuroscience and education. This interdisciplinary approach is presented as a promising way to advance, with solid scientific bases, in the validation of instruments used to detect LS, as well as in establishing robust criteria for their application in the classroom. In this sense, works such as those by Nóbrega et al. (2024), Ávila Toscano et al. (2022), and McMahon et al. (2019) offer valuable perspectives on how to approach the conceptual change necessary to overcome so-called neuromyths in education.

The dispersion observed in the thematic network suggests that research in this area lacks the necessary cohesion to foster significant advances in the understanding and application of LS. This lack of conceptual and methodological integration could be hindering the development of more effective and evidence-based approaches to teacher training. These results are consistent with previous studies that emphasise the lack of scientific evidence on this topic that takes into account the new possibilities offered by AI (Bajaj & Sharma, 2018; Whitman, 2023).

An interesting aspect to analyse is the recent increase in the relevance of concepts such as “Neuromyths” and “Misconceptions,” which indicates that the critical perspective towards LS is gaining ground in academic discourse. This shift reflects a growing awareness of possible misunderstandings and errors in the application of these styles in the educational context. However, the late adoption of these critical concepts suggests that research in teacher training has been slow to recognise and address criticisms from neuroscience, which may have contributed to the perpetuation of questionable approaches for an extended period.

It is important to note that, despite this critical shift, terms related to neuroscience do not yet completely dominate the thematic network of LS research. This situation could indicate persistent resistance or slow incorporation of these critical perspectives into teacher training programmes. The more decisive integration of neuroscientific findings into teacher education presents itself as a crucial challenge for the future of research in this field.

From a methodological point of view, research on learning styles faces significant difficulty due to the wide variety of available models and instruments, as well as the numerous adaptations made to them. This methodological heterogeneity complicates the comparison of results between studies and hinders the accumulation of coherent and generalisable evidence. In this context, Kolb’s Inventory emerges as the most widely used instrument in LS research in teacher training, suggesting a preference for established models, but also potentially indicating a lack of innovation in developing instruments that address contemporary criticisms of LS.

Another concerning aspect is the prevalence of studies that limit themselves to reporting on the state of LS, without conducting curricular or didactic interventions that would allow evaluation of their real effectiveness in educational practice. This predominantly exploratory or descriptive approach, although valuable in certain respects, limits the ability of research to demonstrate the practical utility of LS in improving teaching and learning processes. These descriptive studies therefore need to be complemented with research examining the real applicability and effectiveness of LS.

The concentration of studies on pre-service teachers, along with scarce research on in-service teachers, points to a significant gap in the literature. This disparity could be limiting our understanding of how LS impact real educational practice and how conceptual change can be effectively implemented in the classroom. Addressing this gap presents itself as a priority for future research in the field.

Considering these findings, the need to develop more robust and effective research in teacher training based on LS and conceptual change becomes evident. This requires the implementation of more rigorous methodological designs, including longitudinal designs that allow for detailed analysis of each individual over time. Likewise, it is crucial to conduct a careful review of the instruments used, verifying their validity and reliability, and evaluating whether these indicators have improved with recent research.

The resistance to conceptual change observed concerning neuromyths underscores the importance of developing more effective strategies to modify erroneous beliefs in the educational field. The coexistence of false beliefs and scientific knowledge, even after formal educational processes, poses a significant challenge that requires innovative and persistent approaches to overcome.

Implications of This Review About the Emergence of AI in Education

Taken together, our findings suggest two complementary sets of implications for AI-enabled personalisation in teacher education. On the one hand, AI systems should avoid inheriting learning-styles typologies, matching assumptions, or static profiling logics that could automate contested constructs at scale. On the other hand, AI-enabled personalisation is more defensible when grounded in observable performance, strategy use, self-regulation, and situated feedback needs, particularly when design goals prioritise equity, workload reduction, and improvements in feedback quality.

Considering these findings, the growing integration of artificial intelligence and learning analytics into teacher education needs to be interpreted with caution. The empirical and structural patterns identified suggest that learning styles and related constructs are a weak foundation on which to base algorithmic personalisation, yet many AI proposals still rely—implicitly or explicitly—on notions of stable learner types or profiles. Rather than treating AI as an external, future-oriented add-on, we understand it as a sociotechnical extension of the same logics that have historically shaped LS-based practices in teacher education. This perspective raises a critical question: how can AI be mobilised to support conceptual change away from neuromyths and towards evidence-informed personalisation, instead of hard-coding contested LS categories into new digital infrastructures?

Firstly, AI tools could provide a solution to the problem of validity and reliability of instruments used to detect LS. Through the analysis of large volumes of data (Big Data), as suggested by the study of Saqr et al. (2022), AI could identify more precise and dynamic learning patterns than those proposed by traditional LS models. For example, analysing students’ interactions with online learning platforms, including click patterns, time spent on different types of content, and preferences in information presentation, could offer a more complete and nuanced picture of individual learning tendencies.

Furthermore, the literature suggests that AI could facilitate large-scale personalisation of learning experiences (Al-Zahrani & Alasmari, 2024; Tafazoli, 2024), overcoming the limitations of LS-based approaches. In this scenario, intelligent tutoring systems and adaptive platforms could continuously adjust content and teaching methods in real time based on student performance and preferences, without relying on predefined categories of learning styles (Goram & Veiel, 2020; Wu & Leonard, 2023).
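As a purely illustrative sketch of what adjustment based on performance rather than predefined style categories could look like, the following Python fragment raises or lowers task difficulty from a rolling window of observed outcomes. It is not drawn from any of the reviewed systems: the names `LearnerState` and `update`, the five-attempt window, and the 0.8 and 0.4 accuracy thresholds are all hypothetical assumptions chosen for readability.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class LearnerState:
    """Holds recent observable performance only; no style label is stored."""
    level: int = 1  # current task difficulty (hypothetical 1-5 scale)
    recent: deque = field(default_factory=lambda: deque(maxlen=5))


def update(state, correct, min_level=1, max_level=5):
    """Record one attempt and return the difficulty level for the next task.

    The window size and the 0.8 / 0.4 thresholds are illustrative
    assumptions, not values taken from the reviewed literature.
    """
    state.recent.append(bool(correct))
    # Only adapt once the rolling window is full.
    if len(state.recent) == state.recent.maxlen:
        accuracy = sum(state.recent) / len(state.recent)
        if accuracy >= 0.8 and state.level < max_level:
            state.level += 1
            state.recent.clear()  # start a fresh window at the new level
        elif accuracy <= 0.4 and state.level > min_level:
            state.level -= 1
            state.recent.clear()
    return state.level


# Usage: five correct answers in a row move the learner up one level.
state = LearnerState()
for outcome in [True, True, True, True, True]:
    next_level = update(state, outcome)
```

The design point is that the only inputs are observed outcomes, so the adaptation remains revisable as new evidence arrives and never assigns the learner to a fixed type.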

Regarding conceptual change, AI offers innovative tools to address the persistence of neuromyths in education. The development of personalised refutation texts, mentioned in the review, could be significantly enhanced through the use of AI algorithms. As mentioned by Dersch et al. (2022), these systems could generate content adapted to the prior beliefs and knowledge of each teacher, using multimedia formats (such as podcasts or interactive videos) to maximise the effectiveness of the conceptual change process.

Likewise, the integration of virtual assistants and chatbots into teacher training programmes could provide continuous and personalised support in the conceptual change process (Rabe et al., 2023; Zhang et al., 2025). These systems could offer immediate feedback, respond to specific questions, and provide contextualised examples that help teachers overcome misconceptions about learning and cognition.

According to Ako-Nai et al. (2022), AI’s ability to analyse and synthesise large amounts of information could also accelerate the integration of neuroscientific findings into educational practice. In this sense, AI systems could stay up to date with the latest research and translate this knowledge into practical recommendations for teachers, thus helping to build the bridge between neuroscience and education that the reviewed literature identifies as crucial.

However, it is important to recognise that the implementation of AI in this context also poses ethical and practical challenges, which are extensively addressed in the published literature on this matter (Ebrahimnejad et al., 2023; Pawar et al., 2023).

As a final reflection, our review suggests that the most constructive role for AI in teacher education is not to rescue learning styles, but to help move beyond them. If AI-enabled personalisation is to contribute to school improvement, it should be designed to work with rich evidence of teacher learning, to reduce unnecessary workload rather than intensify it, and to support equitable access to high-quality feedback and developmental opportunities. This requires abandoning LS-based profiling as a design anchor and instead using the empirical insights from the LS and neuromyth literature to specify negative design requirements—what AI systems in teacher education should not do—as well as positive ones grounded in robust indicators of professional growth and responsive pedagogy.

These requirements are intended to guide responsible AI-enabled personalisation in teacher education where such technologies are being considered or adopted, particularly in ways that do not intensify inequities or teacher workload.

Footnotes

Acknowledgements

We thank Universidad de La Sabana, Campus Universitario Puente del Común, KM 7 Autopista Norte de Bogotá, Chía, Cundinamarca, Colombia, for the support received in the preparation of this article.

Ethical Considerations

Due to the nature of the article, the approval of a research ethics committee was not required.

Consent to Participate

Due to the nature of the article, no consent to participate was required.

Consent for Publication

Due to the nature of the article, no consent to publish was required.

Author Contributions

All the authors participated equally both in the preparation of the article and in its writing, editing and final presentation.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Universidad de La Sabana, Project “Educación 4.0: Enseñar y Aprender con Inteligencia Artificial” – EDUPHD-20-2022.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.