Abstract

The rapid advancement of artificial intelligence (AI) in education necessitates a shared understanding of its intended purpose and societal implications. This paper underscores the significance of societal perspectives in AI and education, often overshadowed by technological aspects. At the same time, policy guidelines for the integration of AI technology within educational systems are playing a pivotal role in shaping the future of education. What we as a society imagine AI and education to be will, in some shape or form, lead the development of suggested fixes. The aim is to aid the understanding of why and how visions of learning and education are framed in relation to developments in Educational Technology (EdTech) and their introduction in education. The paper thereby contributes to the ongoing discussion on the integration of AI in education and its potential societal impacts.

Keywords

Introduction

The current artificial intelligence (AI) summer is in bloom, driving rapid development of sector-specific AI, education being no exception to this trend. Consequently, the Educational Technology (EdTech) industry is experiencing a surge in development, with new technology being introduced in classrooms. This fast development of AI in education (AIEd), in turn, has sparked an increase in policy guideline documents for safe implementation (Adams et al., 2021). These guidelines aim to support decision makers in both the private and public sectors in creating ethical AI policies and establishing legislation that supports various actors: policymakers, teachers, and students, among others. Through this support the guidelines become a significant part of “soft governing” (Mertanen et al., 2022). As such, these policy guidelines are not a part of traditional lawmaking as they “skip(s) over the parliamentary decision-making traditionally attached to politics” (ibid. p. 732). Emphasizing this perspective, Player-Koro et al. (2018) highlight that “education authorities (as well as schools themselves) are losing the ability to regulate (or even keep abreast of) these essentially ‘privatized government services’” (p. 683). This indicates an increasing challenge faced by school systems to orient “competing visions of the future” (Rahm and Rahm-Skågeby, 2023) presented by policymakers and EdTech developers. Furthermore, in a policy study on national AI strategy documents, Bareis and Katzenbach (2022) state that: “As governments endow these imaginary pathways with massive resources and investments, they contribute to coproducing the installment of these futures and, thus, yield a performative lock-in function” (p. 856).

Therefore, it is imperative that the visions of the future embedded in policy guidelines are unveiled and analyzed, in order to understand the global development of AIEd. The imaginaries in the guidelines signal how education should be carried out in the future, as technological solutions are presented throughout them. As such, the policies suggested by these organizations can change education in various ways, depending on the desired future framed in them. The aim of this paper is to aid the understanding of why and how visions of learning and education are framed in relation to developments in AIEd and the introduction of new EdTech in education. The research questions guiding that aim are: 1. Which problems (problematizations) are expressed in the policy guidelines? 2. How is the future of education reflected in the policy guidelines?

This will be discussed using Rahm and Rahm-Skågeby’s (2023) heuristic analysis lens, a joint reading of the concepts problematizations and imaginaries, explained further in the theoretical background.

First, there is a brief overview of important work related to EdTech and AIEd, followed by the theoretical background that holds the core aspects in this analysis: sociotechnical imaginaries and problematizations. Then, the method and selection of documents for this paper are introduced. The results are then discussed using the heuristic lens for analysis, followed by a conclusion, providing valuable insights into the state of the sociotechnical imaginaries held by and conveyed in policy guidelines.

Previous research—Education in the age of Educational Technology and artificial intelligence in education

The educational sector faces constant evolution and EdTech and AIEd both represent exciting changes and frightening uncertainty. In relation to the policy field, it is important to understand the practical implications of EdTech and AIEd, as these movements intersect and influence each other. Hence, this brief overview provides insight into the practical aspects of the field.

In a historical overview of technologies introduced to education, Cuban (1986) describes the relationship between teachers and technology. The findings presented are seconded, in more recent research, by Selwyn (2011a), who describes four different “phases” (movies, radio, television, and computers) in the evolution of the EdTech classroom, stating that the phases can be seen as a “solution in search for a problem” (p. 58). However, Cuban’s claim that teachers are resistant toward change brought on by technology has been contested in more recent research. Erb and Geiss (2023) describe teachers as playing a key role in shaping the evolution of EdTech, and not as passive bystanders.

Another aspect of the history of the field is a pattern that Selwyn (2011b) describes as a cycle of “hype, hope and disappointment” (p. 715) where technology is introduced into classrooms in hope that it will revolutionize education. However, as Watters (2014) highlights, “developing new technologies is easy; changing human behaviors, changing institutions, challenging tradition, and power are hard” (p. 7). These cycles of hype, hope, and disappointment could be one explanation for the nature of the field today, where polarized opinions on AIEd can be found. For example, Peters et al. (2023) present responses to the launch of OpenAI’s ChatGPT and the positive voices that were raised in papers as a result of this, noting that “Rarely has there been such an enthusiastic response perhaps comparable to that of the internet itself (…)” (p. 5). As such, the hype, hope, and disappointment cycle can be discerned. The scholars invited to collectively write with Peters et al. (2023) add perspectives important for future discussions. One is that “educational researchers have a key role to play going forward to remind producers and consumers of AI technologies that they must view these technologies with skepticism as well as hope” (p. 8).

This polarization was not sparked only by the introduction of generative AI. Rather, it can be seen in the field over time. Benefits that have been highlighted include non-human tutors (Roll and Wylie, 2016), personalization (Luckin et al., 2022b; VanLehn, 2011), and time savings for teachers. Luckin et al. (2022a) also suggest a framework on AI readiness for teachers, encouraging educators to utilize AI where its strengths lie. Other scholars, like Selwyn (2011a, 2011b), discuss the added labor for teachers in EdTech classrooms. A similar finding is presented by Sperling et al. (2022), who show that even though the AI software used in the study did not always work correctly, teachers and students enabled and adapted to it, with the algorithm's unexpected results adding more labor. Moreover, ethical issues regarding data-mining in educational settings are arising in light of this development. Williamson (2017, 2021) discusses governance created through big data and the thin lines between education and commercial interests in a digitalized school system.

Thus, the field of research, as briefly presented above, is one with polarized opinion, where hype meets disappointment in light of new advancements.

Theoretical background - heuristic lens highlighting imaginaries and problematization

Education policy occupies a central position in shaping the contours of educational systems. It encompasses a multifaceted landscape with legislative frameworks, administrative directives, and institutional practices that delineate the objectives, methods, and resources allocated to education. Within this sphere, the application of policy analysis methodologies provides a rigorous lens through which to dissect, interpret, and evaluate the intricate interplay of sociotechnical-political forces, pedagogical imperatives, and organizational dynamics that underpin policies. In the attempt to unfold underlying assumptions in the selected policies in this paper, the analysis combines two theoretical concepts: sociotechnical imaginaries and problematizations.

Sociotechnical imaginaries

Imaginaries have been studied by an array of scholars, such as Castoriadis (1987) and Taylor (2004), among others, and can be understood as a way of comprehending how society and the individuals in it imagine their existence. The concept of the imaginary has since been developed to include a sociotechnical dimension, as expressed by Jasanoff and Kim (2009, 2015). According to them, new technology is never created in a vacuum. The world around us affects what technologies are imagined as good or bad—and also how to use them. Jasanoff and Kim (2015) introduce the term “sociotechnical imaginary” by explaining it as: “Collectively held, institutionally stabilized, and publicly performed visions of desirable futures, animated by shared understandings of forms of social life and social order attainable through, and supportive of advances in science and technology” (p. 4).

As such, technology is created by shared understandings of what it should be used for and how—what desired futures society imagines. These imaginaries in an AI and education context are important because the social aspect of AI is overlooked in favor of questions of technology and ethics (Sartori and Bocca, 2022). The social aspects of the AI imaginaries are of importance because they will inevitably affect how these technologies are welcomed and adopted by society (ibid.).

Using sociotechnical imaginaries as a theoretical focal point, one must remember that there are not always uniform imaginaries in play—several different imaginaries are often present at the same time but viewed differently by different stakeholders or actors. Rahm and Rahm-Skågeby (2023) call these differences in imaginaries “competing visions of the future” (p. 3). This is a term that could be used to describe the contrasting conceptualizations of societal developments and outcomes on AIEd—or applied to any phenomenon in society.

Sociotechnical imaginaries are broad visions of the future and can be difficult to specify, especially in a field where utopian and dystopian views on the same technology often co-exist, as I will highlight. The imaginaries will be deconstructed and understood through examining the problematizations put forward in the array of policy guidelines in this paper.

Problematizations

Problematization is a concept deriving from Bacchi’s (2009) research and a continuation of Foucault’s ideas of policy responses from “difficulties.” Bacchi’s (2009) “What’s the problem represented to be” approach stands as a common method for policy analysis and aims to uncover underlying assumptions in policies which are worded as solutions to certain “problems.” However, as Bacchi (2009) emphasizes, it is important to understand that policy is not the response to a problem, but rather a “creation (or production) of (policy) problems” (p. 1). As such, it aims to consider “deep-seated cultural values—a kind of social unconscious—that underpin a problem representation” (p. 5). What that entails is to critically analyze policy to find the societal assumptions policy is built upon. For Foucault, a policy response stems from a “difficulty” but for Bacchi “problems take shape (or even emerge) during the creation of policies, not before them” (Rahm and Rahm-Skågeby, 2023: p. 6).

Bacchi (2012) suggests that “all policy proposals rely on problematizations which can be opened up and studied” (p. 5) in order to understand what a suggestion really derives from. Adding to this, using sociotechnical imaginaries (explained above) will give a broader picture of not only what historically lies behind a problematization, but what imagined future might propel suggested fixes a certain way. This paper uses problematizations as a way to understand and deconstruct imaginaries created in policy guidelines. The “heuristic lens” that Rahm and Rahm-Skågeby (2023) suggest is a joint reading of problematizations and imaginaries. The lens entails understanding imaginaries and problematizations as overlapping and using the latter to “deconstruct” imaginaries (p. 1154). They suggest that “ (…) once a sociotechnical imaginary is operationalized in society (e.g., in a technology or policy), it is done so by relying on problem-solution formation” (ibid). Therefore, only analyzing the problems represented within these policies will tell part of the truth, but will not highlight what imagined futures are attained through the narratives.

The pinpointed problems in policy guidelines often have a corresponding “fix”—in the case of AI a technological fix (Rahm and Rahm-Skågeby, 2023). Identifying problematizations thus entails understanding what “fixes” are suggested in policy guidelines, or what is stated as a fact, as it entails assumptions made by policy actors. The authors stress that “problematizations can be described as a way of governing by establishing a certain proposal” (p. 1152). Problematizations are thus not always framed as a problem, but can be stated as a way of fixing, for instance, education. These fixes derive from what we imagine AI to be capable of in society, and specifically in this paper, in the school system. Rahm (2019) presents the modern practice of policy problematization as “conceptions of technology entangled with ideas about which knowledge citizens need, now and in the future” (p. 99). We should therefore ask what problem policymakers are aiming to fix by the application of AIEd and why. In this sense, problematizations are intertwined with visions of the future—sociotechnical imaginaries, as the problems created in policy guidelines can be “fixed” by the introduction of various AIEd features.

Method

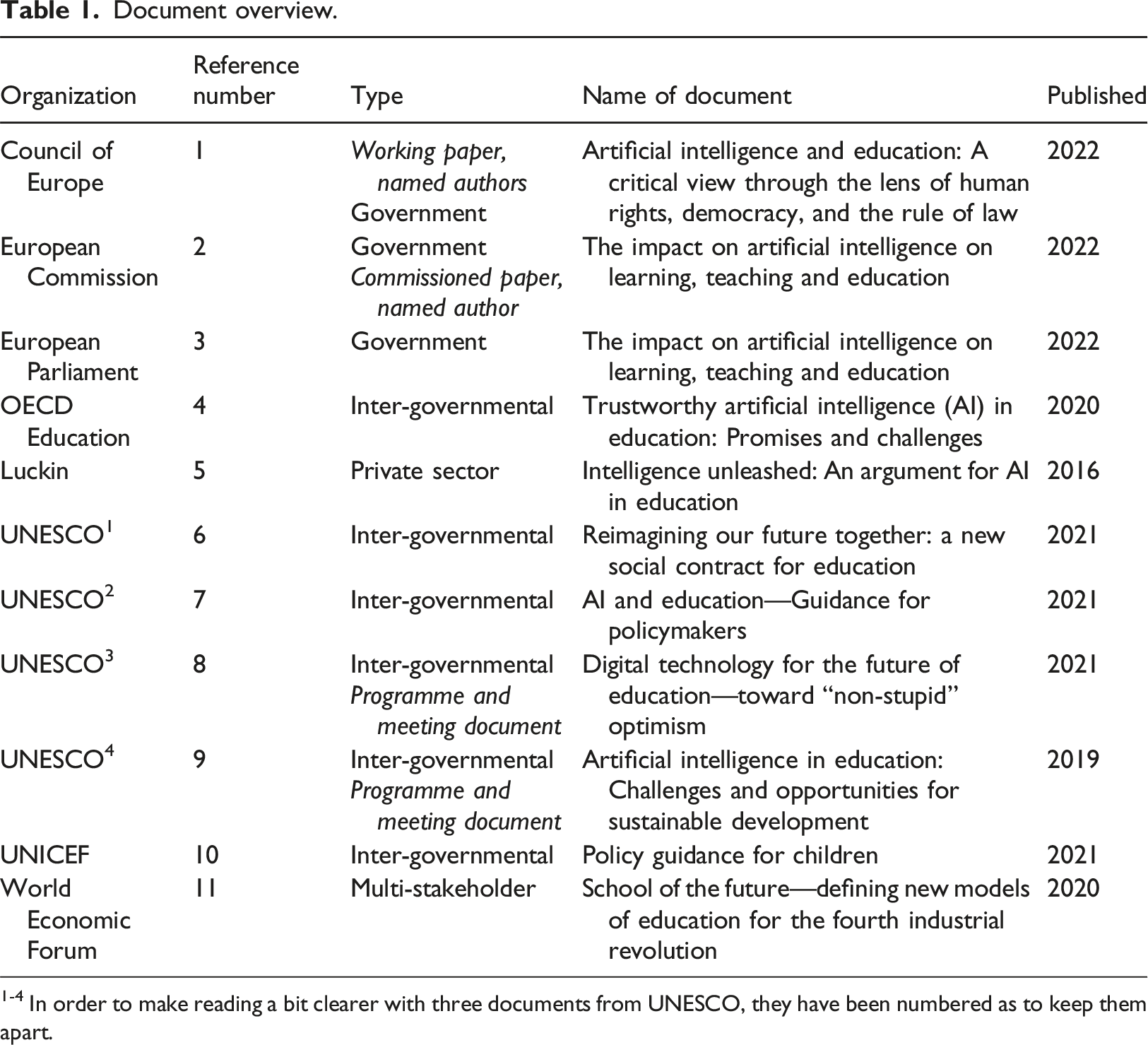

The selection process for this paper considered policy guidelines and policy documents published between 2016 and 2023 on a European and international level. The documents represent guidelines for policymakers from organizations such as UNESCO, OECD, UNICEF, and the World Economic Forum as well as the European Commission and the EU Council of Ministers. The selection is based on actors considered important in the domain area. AI will change not only school practices but society as a whole, which is why many human rights-oriented organizations are active participants in the creation of policy guideline documents. In a study of policy actors in the education system, Lingard et al. (2015) state that the OECD and UNESCO, among others, can be considered to have a significant role for school and changes at policy level, which means that they are represented in more than one document in this analysis. Also represented in more than one document in the analysis is the European Union, since an AI law has been suggested and is in trialogue between the European Commission, the Council of the European Union, and the European Parliament. This is a unique point in time, where policy guidelines are being adopted for legislation.

Important to note is that some of the papers in the selection are “working papers” or “meeting documents,” which often have named authors (which the official policy guidelines do not always have). They are, however, commissioned by the organizations and published, which means that they still bear weight in the policy landscape. I have chosen to highlight which of the documents below are working, or meeting, documents for clarity and transparency.

Document overview.

To make reading clearer, the three documents from UNESCO have been numbered to keep them apart.

The initial steps involved a reading of all chosen documents, using a jam board to categorize the content of the policy guidelines under headlines “possibilities” and “challenges,” since these represent problem-solution constructions highlighted by Rahm and Rahm-Skågeby (2023). This was the first step to sort content that could later be categorized as belonging to different problematizations and imaginaries. Quotations from the papers were used to identify possibilities and challenges, as a first step. As an example, UNESCO1 ((6) 2021) states that AI could “help in making sure teachers can focus on the key tasks” (p. 18), which was identified as expressing a possibility. But it also states that “AI bias might undermine basic human rights” (p. 20), which was labeled a challenge—a problematization. For each document, a thorough reading was made, labeling the content as expressing either possibilities or challenges. This was to gain an understanding of the stances taken by the different organizations—Are the challenges or possibilities the most highlighted? What different problematizations are presented in the documents and how are they connected to imaginaries on education and AI?

Following this, multiple new readings of the documents were carried out to identify what they said about AI, and how it might function in an educational setting. Then, by adding Rahm and Rahm-Skågeby’s (2023) heuristic lens as a final reading, it was possible to identify problematizations and to uncover which imaginaries are presented in the documents. The sorting made pinpointing problematizations efficient, as they had been highlighted in early readings. The findings are presented in the Results section.

Results

The results are presented in three subsections, where imaginaries that have been detected are analyzed through their problematizations as it is important to take the overlap in the analytical concepts seriously, as highlighted by Rahm and Rahm-Skågeby (2023). To utilize the problematizations as a way of deconstructing imaginaries, I present the problematizations and imaginaries together, answering the questions: 1. Which problems (problematizations) are expressed in the policy guidelines? 2. How is the future of education reflected in the policy guidelines?

These findings are then discussed in the subsequent section, providing a deeper insight into the overlap in imaginaries and problematizations.

The dawn of a new world: Artificial intelligence’s inevitable disruptiveness

The narratives that make up policy guidelines often circle around AI’s disruptiveness, inevitability, and unique possibility to efficiently change the future of education and work, as well as society as a whole. Although the excerpts only represent some of the documents in this analysis, the rhetoric can be found in the majority (but not all) of them. “While most innovations in the past decade related to an increased use of computers and the internet in the classroom, the next wave will be based on AI, or on combinations of AI and other technologies” ((4) OECD Education Working Papers, 2020, p. 6). “Artificial intelligence (AI) systems are fundamentally changing the world and affecting present and future generations of children” ((10) UNICEF, 2021, p. 7).

These grand visions of the future are often a way to set the stage at the opening of the guidelines—a world where technology is increasingly important for all sectors of life, and where we are all a part of the 4th industrial revolution. Students are presented as the future workforce of the digital age, who need education for their future careers in order to live in the new AI society (cf. Høydal and Haldar, 2021). This neo-liberal framing of the future lends itself to questions on what education will look like in the future, should this imaginary be leading in the development. This inevitability also narrows the discussion around AI and EdTech for the future, as there are no alternative visions presented.

The World Economic Forum (11) states that “Children must be prepared to become both productive contributors of future economies and be responsible and active citizens in future societies. Realizing this vision requires children to be equipped with four key skill sets (…)” (p. 7). The skill sets are based on what The World Economic Forum has identified as important in a society where AI is highly developed and a natural part of life. The skills recognized by The World Economic Forum are “(1) global citizenship; (2) innovation and creativity; (3) technology; and (4) interpersonal skills” (p. 7). Not only do children of the future, according to the World Economic Forum, need a new skill set—schools also need to shift their “learning experience” (p. 11) toward “personalized and self-paced learning,” “accessible and inclusive learning,” and “problem-based and collaborative learning” as well as “lifelong and student-driven learning.” The World Economic Forum (11) represents the most prominent of the advocates of the new skills, stating that: “To productively contribute to a future economy, children must develop the skills necessary to generate new ideas and turn those concepts into viable and adoptable solutions, products, and systems” (p. (8) and the OECD Education Working Papers, 2020 (4)).

When considering why the school system is used as a way of establishing the 4th industrial revolution, it is important to understand what stands to be gained from technology entering classrooms across the globe. The neo-liberal framing mentioned earlier invokes questions on what economic incentives are at play in forming this narrative. If the gains of harnessing this “inevitable” technology were purely didactical or pedagogical, the emphasis would most likely not be put on global citizenship and innovation. However, for economic growth and for fostering a new generation of corporate workers in a new wave of digital revolution, these skills are important. Hence, citizens of the future will need to work with AI, as the disruptive and inevitable future is painted in a majority of the documents.

Artificial intelligence will reshape education

As AI is a disruptive force, as described in the previous section, it stands to change education at its core as well, apart from affecting society at large. These changes are connected with the inevitable changes brought on by AI but are also sector specific. As seen in the excerpts below, the reshaping of education through technological advancement is described. “Artificial Intelligence (AI) has the potential to address some of the biggest challenges in education today, innovate teaching and learning practices, and ultimately accelerate the progress toward SDG 4” ((7) UNESCO, 2021, p. 3). “Drawing on the power of both human and AI, we will lessen achievement gaps, address teacher retention and development, and equip parents to better support their children’s (and their own) learning” ((5) Luckin et al., 2016, p. 11).

Even though these changes are mentioned by a majority of the documents, the results are conflicted—the same vision of the future is present, but not all organizations and documents adhere to this future. The focus on the reshaping of education in some documents takes the form of a problem-solution construction, while others raise fundamental questions about how and why education will develop in the future.

A common problem highlighted in the documents concerns students’ need for more personalized learning, as well as the future of teachers’ work. UNESCO2 ((7), 2021, p. 15) states that: “The extensive use of ITS raises other problems as well. For example, they tend to reduce human contact among students and teachers. Also, in a typical ITS classroom, the teacher often spends a great deal of time at their desk in order to monitor the dashboard of student interactions.” It is followed by a suggested techno fix, namely smart glasses that show student progress when the teacher looks at students in the class. So, even though UNESCO raises concerns here, there are also solutions presented by way of technology.

The comments on the future of teachers’ work are many throughout the guidelines. In some of the guidelines this is emphasized as a positive outlook on the new teaching profession, urging for more AI-literacy for teachers and in schools. For instance, the World Economic Forum ((11) 2020, p. 8) guidelines state that “Fostering innovation and creativity will require a shift toward more interactive methods of instructions where teachers serve as facilitators and coaches rather than lecturers.” As such, the suggested “solution” (or in this specific case more of a statement) shifts the current work of teachers to something new altogether. Furthermore, Luckin et al. ((5) 2016, p. 11) devote a section to teachers and AIEd in their guidelines, stating that “we look toward a future when extraordinary AIEd tools will support teachers in meeting the needs of all learners.” This implies an assumption that teachers of today do not meet the needs of all learners. The solution to that problem is presented through AIEd as a technological fix. In contrast, UNESCO3 ((8) 2021, p. 8) states that teachers’ workload in classrooms as brought on by digitalization is “often a source of longer working hours, role expansion, increased non-teaching, and administrative duties.” This does not correspond to many of the other guidelines, which state that teachers will face a reduced workload as a result of working with AI. This is also mirrored in the Council of Europe guidelines ((1), 2022, p. 4): “(…) this is based on the assumption that the role of teachers is rather mechanical and purely instructional with summative assessment playing a central role, reflecting deep beliefs about the functions of education and the social institutions around it.” Here, the technological fix is questioned, and lifted to a more general discussion on education and learning.

According to Biesta (2019), “learning” is a word that has been taking over from “teaching,” which is visible in these guidelines. The “learnification,” as Biesta calls it, of the school system shifts not only the language that we use to discuss the school system but also the meaning of what education is. This is, perhaps obviously, not something focused on in the guidelines on AI in education—because the learnification of education has already become an educational imaginary taking form, being propelled further by technology. When focus lies in what these technological advancements can do for students (whether it is personalization, direct assessment, or any other forms of suggested technology “fixes”), the core of education is not discussed or taken into consideration. There are, however, exceptions represented in the guideline documents. In the guideline by the European Commission ((2) 2018), the future of education is discussed through a lens of what the world might look like in the future—that is, very much an imaginative scope. The discussion entails questions on what education will be for if societal changes are brought on by AI. It asks: “If we imagine education in a world where work is not a central factor of life or where jobs, as we know them, do not exist, what would be the role of education?” (European Commission, 2018: p. 33). As such, discussions on a more imaginary level are sometimes very clearly carried out through the documents.

Another exception is the commissioned meeting document from UNESCO2 ((7) 2021), where Keri Facer and Neil Selwyn state that: “This continued willingness to turn to technology as a ready way of overcoming educational problems is understandable; after all, the alternative, a recognition of the profound educational, economic, and social challenges both within and between countries, a recognition of the importance of investment in high quality teaching and schools, a grappling with the issues of epistemic inequalities and historical injustices, seems daunting compared with the promises of EdTech entrepreneurs” (p. 10).

Here, the authors dive deeper into questions of a more structural nature, not catering to the idea that AI technology is the only possible way forward, as many of the other documents indeed do. Interestingly though, UNESCO1 ((6) 2021) provides another view, with headlines such as “How can education prepare humans to live and work with AI?” under which they state that AI for K-12 should be included in the curricula in order to “address the growing skill gap and fill the AI jobs being created worldwide.” As such, the same organization provides multifaceted views and competing visions of the future across its published documents.

Luckin et al. ((5) 2016) do not touch on this subject at length, but rather sell technological fixes through the guidelines—an inspirational AIEd guide. It might not be too surprising, seeing as Pearson also sells tech solutions. The World Economic Forum ((11) 2020) does not raise these issues either, but instead focuses on the previous imaginaries mentioned, with headlines such as “shifting learning experience”—both “fixing” the future of teachers’ work and students’ learning through technological fixes. The imagined future of education linked with AIEd is conflicted—where some policy guidelines give examples, solutions, and new ways of teaching, while others ask fundamental questions about how and what education really is and should be. These competing visions of the future are important, as the future is shaped by society and our views on what is most beneficial.

Artificial intelligence is an integral component of an emerging surveillance society

Surveillance and bias are mentioned in a majority of the documents, and therefore make up the last theme of the results. One example comes from UNICEF’s ((10) 2021, p. 23) guidelines for AI policymakers: “Ultimately, when children grow up under constant profiling and surveillance, and their agency and autonomy are constrained by AI systems, their well-being and potential to fully develop will be limited.” The word choices point to a somewhat dystopian problematization, with words such as “profiling” and “constrained.” UNESCO2 ((7) 2021, p. 20) also highlights this, stating that: “There is also the additional concern that AI data and expertise are being accumulated by a small number of international technology and military superpowers.” These are huge risks and challenges, and UNESCO provides a checklist of questions for policymakers but does not warn them to slow down the process of using AIEd.

This is not least visible in the proposed AI Act by the European Commission, currently in trialogue with the Parliament and Council ((3) European Parliament, 2023). The opening paragraph states that: “AI technologies are expected to bring a wide array of economic and societal benefits” (which entails that they might “fix” the state of the economy), but also that “AI systems may jeopardize fundamental rights such as the right to non-discrimination, freedom of expression, human dignity, personal data protection, and privacy” (p. 2). This risk-based approach to AI applications in several categories would be the first of its kind if adopted. The risks of the AI systems belong to one of four categories: minimal, low, high, or unacceptable risk. Among the high-risk ranked areas in the AI Act are education, law enforcement, biometric identification, migration, infrastructure, administration, essential private and public services, as well as employment. The “unacceptable” risks stated in the AI Act are connected to the same imaginary, namely, manipulation of children, social scoring, and facial recognition (EU AI Act, 2023).

The somewhat dystopian imaginary of surveillance and personal data-gathering can be used to contrast or highlight difficulties expressed by the organizations. On the one hand, AIEd can “provide personalized learning experiences to address each user’s unique needs” ((10) UNICEF, p. 21) but at the same time, “AI systems need data, and in many cases, the data involved is private: for example, location information, medical records, and biometric data. As such, AI challenges traditional notions of consent, purpose, and use limitation, as well as transparency and accountability—the pillars on which international data protection rests” ((10) UNICEF, 2021, p. 23). UNICEF illustrates a future where citizens are under control and are “constrained” by AI systems, even impacting the development of children. This phrasing strongly suggests the big brother imaginary of the future. However, it is worth noting that even though UNICEF highlights major risks for children, they also suggest that specific AI skills be taught in school so that students can be successful in the workplace (see previous section on students in the workplace). UNESCO2 ((7), 2021, p. 19) also provides examples of AI technology already in use in education, for example, “AWE, both formative and summative, is currently being used in many educational contexts through programs such as WriteToLearn, e-Rater, and Turnit.” The World Economic Forum does the same, listing examples of AI technology in use under each sub-category of skills for the future.

The themes identified as most prominent in the guidelines are thus those concerning AI’s disruptiveness, the future of education (seen both for students and for the future of teachers’ work), and the big brother imaginary of surveillance. The conflicts that are visible will be further discussed in the following section, applying problematizations and imaginaries as a way of discussing which visions of the future are expressed in the guidelines.

Discussion

As shown in the result section, three major sociotechnical imaginaries were identified across the policy guidelines: AI’s inevitable disruptiveness, AI reshaping education, and the surveillance society. The problematizations create and build the sociotechnical imaginaries through problem-solution constructions, or through problematizations that are presented as factual. Conversely, the imaginaries create policy responses in the form of problematizations; both hold true in the creation of policy. Rahm and Rahm-Skågeby (2023) suggest that problematizations and sociotechnical imaginaries gain “analytical traction” off each other, as they can both be studied to understand how AI is foreseen to impact education. The discussion will be carried out for each of the imaginaries presented, in the order given above. It will also address practical implications for education in relation to the previous research presented.

The sociotechnical imaginary that the development and adoption of AIEd is inevitable and a necessary step forward needs to be understood as a neo-liberal vision of the future, with the economy at its very core (cf. Høydal and Haldar, 2021). That is, the idea that education has to cater directly to the future labor market might need questioning. This undertone echoes through all three of the sociotechnical imaginaries presented in the results. The problematizations in this imaginary are not always phrased as issues, but rather as something inevitable, a fact. For one, the OECD Education Working Paper ((4) 2020) presents a problematization already in the abstract, stating that: “education faces two challenges: reaping the benefits of AI to improve education processes, both in the classroom and at the system level; and preparing students for new skillsets for increasingly automated economies and societies” (p. 3). This phrasing highlights a “challenge” (problem), where AI is framed as an inevitable force that will change society whether we want it to or not. This needs to be considered through the history of EdTech, discussed both by Watters (2014) and Selwyn (2011a). As Selwyn (2011a) put it, technology tends to be “introduced into education as a ‘solution in search for a problem’” (p. 58). The practical implication of adhering to this imaginary without questioning the motives behind it is that teachers and students have to adopt technology that is introduced as a solution to a problem that might not exist.

Furthermore, the problematization that children (students) will have to acquire new skills in order to be part of a new society, the 4th industrial revolution, signals this disruptive imaginary. The underlying assumption behind these problematizations is that the school system is, first and foremost, a base for economic growth. This is a discussion on how society sees education, one considered most prominently in two of the policy guidelines (see UNESCO4, 2019, and the Council of Europe). In a majority of these policy guidelines, the “inevitable” shift toward a school that needs to change in order to keep up with AI development is evident. Discussing what education is and should be is important if we wish to add more competing visions of the future than the neo-liberal one presented. Biesta’s (2019) learnification could be one way of doing so.

The sociotechnical imaginaries at play on the future of education are very much the same across documents, even if the problem representations are expressed as utopian or dystopian. Facer and Selwyn of the UNESCO2 ((6) 2021) programme meeting document argue strongly against being allured by the technological fix, and that teachers should be given more autonomy. This is also seen in the Council of Europe ((1) 2022) report, where the techno fix of teachers’ work is described as a “naïve approach to teaching and learning” (p. 34). In a way, the absence of reflection on these questions in some of the documents also speaks volumes about this imaginary. In contrast, UNESCO2 ((7), 2021, p. 15), initially hesitant, states that: “The extensive use of ITS raises other problems as well. For example, they tend to reduce human contact among students and teachers. Also, in a typical ITS classroom, the teacher often spends a great deal of time at their desk in order to monitor the dashboard of student interactions.” However, this problematization is followed by a suggested techno fix, namely, smart glasses that show student progress when the teacher looks at students in the classroom. So, even though UNESCO initially raises concerns here, it also presents solutions by way of technology and is thus, in a sense, allured by technology. This can be seen as an example of what Selwyn (2011a) calls a solution in search of a problem. It could also be interpreted as a way of facilitating technical solutions, similar to the findings in Sperling et al.’s (2022) study, where actors enabled issues with the AI engine in different ways. This pattern needs attention and further examination. The imaginary at play here is built on promises of technological fixes: problems with solutions attainable through technology. In contrast, as seen in UNESCO4 and the Council of Europe, some scholars contest this view. Depending on which documents are used as guidance for policymakers, their visions of the future will be impacted in different ways. These sociotechnical imaginaries will thus shape different realities.

Teachers’ work is visible in problematizations in the policy guidelines, as well as directly linked to the sociotechnical imaginary on the future of education. Common problematizations in the documents concern students’ need for more personalized learning as well as the future of teachers’ work, which together build an imaginary on the future of education. On the policy guideline level, teacher voices and experiences are omitted in favor of experts in different domain areas in the AIEd sector, not always education. This builds an imaginary of teachers’ futures as facilitators of technology, where time should be optimized and learning characterized by one-to-one teaching in some form. Luckin ((5) 2016) even states that “one-to-one tutoring has long been thought to be the most effective approach to teaching and learning.” There is no consensus on this in education research, but it is presented as fact and also comes with a technological solution so that all children can have this tutoring: AI.

As highlighted by Peters et al. (2023) and Erb and Geiss (2023), educators have a key role in the development unfolding in the EdTech and AIEd arena. Competing visions of the future are not only seen in policy guidelines, as presented in this paper, but also between educational researchers. Regarding the future of teachers’ work, Luckin et al. (2022a) want to prepare teachers with AI readiness, while others, such as Selwyn (2011a, 2011b), are critical of the current hype in AI. What implications this will have for teachers’ work is not yet clear. However, as shown in this paper, policy guidelines for AI in education cater to the inevitable entrance of AI into the school system, meaning that teachers have to prepare to develop AI readiness or be left behind in the 4th Industrial Revolution. This imaginary is nevertheless contested in some of the guidelines, which hold that teachers should not be seen as tech stewards in digitized classrooms. Thus, the competing visions of the future come into play. As some of the policy guidelines want to put forward personalization and AIEd as a way for teachers to save time, a sociotechnical imaginary of teachers as tech stewards is built. Regardless of which sociotechnical imaginary will lead in influencing the future of education, teachers have an active part to play in shaping the actual teaching practices (cf. Erb and Geiss, 2023).

Bias and surveillance form a sociotechnical imaginary also seen through all of these guidelines, an undertone on which all of them rest. The ethical considerations and discussions carried out by the organizations are mostly focused on data-gathering and the risks that come with it. Williamson (2017, 2021) underlines data-mining in schools as a way for private actors to also use that data for commercial interests. Learning platforms are already a big part of our digital educational infrastructure, and the policy, laws, and regulations surrounding the use of data thus need further attention. The narrative and the problematizations that build this reality very much echo a dystopian vision of the future, although some of the guidelines barely touch upon this, focusing instead on biases embedded in code, or do not address it at all. Hence, the documents are dystopian or utopian by nature, either stressing the risks that can be brought on by algorithmic bias or not even mentioning them, as a way of turning a blind eye. Some guidelines try to strike a balance within this black-or-white imagery of the future but often lend themselves to either of the two. This utopian and dystopian framing needs to be understood as a way of signaling desired futures to the intended audiences; the guidelines then present their solutions in line with that dystopian or utopian imaginary.

Rahm and Rahm-Skågeby (2023) suggest that problematizations can be key in deconstructing imaginaries of the future. It is also suggested that sociomateriality should be given a bigger part as an actant in these readings. I suggest that policy guidelines do not in themselves cultivate these thoughts, and a sociomaterial reading has therefore not been included in this paper. However, it is, in light of their suggestion, interesting to see that the guidelines themselves sometimes give examples of existing AI solutions, opening up for a sociomaterial view. The existing software thus makes its way into guidelines for implementation, making it an actant in the decisions being taken. It is a Catch-22-esque thought that policy guidelines for safe development and implementation of AIEd use existing AI software that was created outside of these policies. This will, no doubt, have implications for teachers and students across the globe and needs more attention in AIEd and EdTech policy research and discussions.

Conclusion

The school of the future is created by imaginaries shaping the futures that are desirable. These desired futures are created around us, not least by the soft governing of policy and policy guidelines. Their content thus plays an important part in the imaginaries which we strive for, legislate according to, and develop tech to attain. It is important, though, not to forget that this cycle of “hype, hope, and disappointment” (Selwyn, 2022) exists and might be in play. As the competing visions of the future trickle into the school system and affect teaching by aiming to automate teacher tasks, or by adding more labor to an already strained workforce, educators need to play an important part in this governance process.

This analysis has shown that three major sociotechnical imaginaries are present in the policy guidelines used as a basis: AI’s inevitable disruptiveness, AI reshaping education, and the surveillance society. These sociotechnical imaginaries are created by the use of problematizations, often in a problem-solution narrative that sees AI as the means for transforming the future of education. The question is, however, whether the guidelines do indeed solve problems, or whether they are, as Selwyn (2011a) suggests, presenting a “solution in search for a problem” (p. 58). Teachers might soon face a need to develop AI readiness, as suggested by Luckin et al. (2022a), and change their pedagogy as dictated by software and algorithms.

The analysis has also shown that the sociotechnical imaginaries in play can signal utopian or dystopian views, creating competing visions of the future. This variety of competing visions of the future could influence policymakers and legislative processes in different directions, depending on what guidelines are used in the process.

The results of this analysis can be compared to that of Høydal and Haldar (2021), who conclude that “the sociotechnical imaginary of the digital future presented in the strategy document is linked to the concept of a capitalist state competing in an international market” (p. 474). Even though the contexts of these studies are not the same, similar tendencies can be seen in both. This means that an international perspective and the Norwegian perspective show the same links to neo-liberal visions of the future of AIEd. More work on the significance of discussions on the role of education in the 4th industrial revolution is needed, as well as voices from those most affected by these sociotechnical visions of a school where tech can solve any problem—teachers.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Correction (February 2024):

Article updated online to add reference details for Selwyn (2011a) and Selwyn (2011b).