Abstract

With the rise of machine learning-based decision support systems, the responsibility for potential errors is regularly questioned. Scholarly work regarding this issue has criticized a “responsibility gap,” that is, unattributable responsibility for the effects of machine learning-supported decisions. One proposition to close the responsibility gap is to include scientists, who lay the basis for the functioning of machine learning models, in distributed responsibility models, assigning them a share of responsibility for potential errors. However, to date, responsibility models that include scientists have not gained a foothold in regulation. To provide an empirical basis for the discussion around novel responsibility models, this study provides a social scientific analysis of an interdisciplinary machine-learning consortium that works on machine learning-based decision support systems for healthcare – an area where errors have particularly fundamental consequences for individuals. We investigate researchers’ speculations about their responsibility for the downstream effects of their ML research results after translation to clinical practice. We find that researchers point to tensions in the scientific sector as well as to agential, local, and temporal shifts of their research outputs during translation to clinical practice as a major source of what we call “suspension of responsibility.” Our insights contribute to debates about novel responsibility models that are fair to patients, doctors, and scientists and can inform similar debates beyond healthcare.

Introduction

Artificial intelligence exerts a wide-ranging influence on various sectors, with many potential benefits and challenges. One particularly promising area is machine learning (ML)-based medical decision support systems. Innovations in that area promise to lighten the load of healthcare professionals by supporting them in decision-making, ultimately enabling them to allocate more time and focus to delivering patient-centered care (Topol and Verghese, 2019). However, shifting parts of decision-making from doctors to ML-based decision support leads to a host of societal and ethical issues (McLennan et al., 2020). A central concern in this context is the so-called responsibility gap (Gunkel, 2020; Matthias, 2004), a phenomenon in which, under specific circumstances, neither users nor creators of a certain technology can be held responsible for all the effects of the technology’s use.

One situation in medical decision-making where responsibility gaps have been observed is when doctors are expected to use ML-based decision support tools, but are – due to the opacity of the tools – unable to validate the applicability of the generated outputs for their individual patients. This issue has prompted a discussion about when and under what circumstances physicians should retain ultimate legal responsibility for ML-supported medical decisions (Cestonaro et al., 2023). On a broader scale, this has led to debates about who else, if anyone, should share or take on this responsibility (Bitterman et al., 2020; Sand et al., 2022; Verdicchio and Perin, 2022). In response, scholars have proposed distributing responsibility among multiple actors via shared responsibility models (Floridi, 2016). Yet, constructing effective shared responsibility models that meaningfully include actors at the upstream stages of ML tool development has proven difficult.

This article – situated at the intersection of biomedical AI ethics and Science and Technology Studies (STS) – takes this difficulty as its starting point, focusing on the challenges of integrating upstream actors of ML tool development into shared responsibility models. Specifically, we focus on AI researchers, those upstream actors whose scientific contributions underpin ML tools. In line with established responsibility-oriented research policy frameworks, such as the European framework “Responsible Research and Innovation” (RRI) (Von Schomberg, 2013), and based on literature on responsibility gaps for machine learning-supported medical decisions, we adopt the position that a meaningful integration of researchers into shared responsibility models is aspirational. At the same time, we recognize that systemic conditions – such as institutional structures, academic incentive systems, or disciplinary norms – can contribute to perceptions that researchers either should not or cannot take responsibility for downstream effects of their research results. To establish meaningful shared responsibility models and to enable researchers to embrace their role as co-responsible actors, these conditions warrant critical scrutiny and structural transformation.

To ground calls for structural transformation in the lived experiences of those affected by shared responsibility models, this study focuses on the views of ML researchers: how they perceive their systemic conditions, how these perceptions inform their assumptions about responsibility – both within the boundaries of their projects and in relation to the downstream socio-technical implications of their work, for example, when their research results were translated to clinical decision support tools – and how these dynamics may obstruct the implementation of shared responsibility models. With our work, we illuminate how responsibility for downstream effects of research outputs “suspends” and identify specific barriers for including researchers in shared responsibility models.

Responsibility for machine learning supported medical decisions – and its gaps

Our analysis is grounded in a conceptualization of moral responsibility derived from the (biomedical) ethics literature: “the causal responsibility of moral agents for harm in the world and the blameworthiness that we ascribe to them for having caused such harm” (Smiley, 2022: 1). Such responsibility can be carried alone, rooted within the collective actions of a group of stakeholders, or distributed among multiple stakeholders. This multitude of possibilities to assign responsibility makes it necessary to carefully assess the requirements for assigning moral responsibility. Well-assigned moral responsibility, in turn, is a prerequisite for a fair examination of who, in fact, should practically, that is, legally, be held responsible.

In examining how responsibility for ML-based decision support in clinical practice is currently distributed, it becomes evident that there is a need to explore how this responsibility might be more effectively shared in the future. According to moral theory, the user of any given tool is assigned responsibility for the consequences of using the tool. Conversely, no one involved in the development of the tool is responsible for the consequences of its use. However, when the tool is not functioning according to its specifications, those who created the tool can be held responsible for any unwanted consequences (Fischer and Ravizza, 1998). For example, if a radiologist misses relevant visual features on an X-ray image due to malpractice and consequently misdiagnoses their patient, the radiologist is responsible for the patient’s potential harm. If the radiologist’s fault is demonstrable, they can also be practically held responsible, that is, liable, and be asked for reparations (Biswas et al., 2023). As trained specialists, physicians are ethically and legally expected to be able to validate and process available medical information to the benefit of their patients. In other words, if adverse events occur based on treatment decisions, and the patient did not explicitly assume responsibility for the specific decision, doctors are considered ultimately responsible for these medical outcomes (American Medical Association, 2023; Beauchamp and Childress, 2013). If, on the other hand, a tool is not functioning according to its specifications, responsibility must lie with one or multiple other actors involved in the research, development, or implementation of the tool.

In the research, development, and deployment lifecycle of ML-based decision-support tools in healthcare, numerous actors are involved – each theoretically positioned to assume responsibility for the effects these tools may have on patients. These actors span different stages and sectors, including data generation, acquisition, model development, evaluation, certification, distribution, implementation, and use. Often, these stages involve different individuals or institutions – such as academic researchers, industry partners, regulatory bodies, healthcare institutions, and clinical users – each driven by distinct and sometimes conflicting interests related to science, commercial viability, and patient care.

In principle, the specifications and documentation produced at each stage when one actor “hands over” the ML tool to the next could serve as a basis for assigning responsibility for failures or harms that arise downstream. Problematically, however, in the context of ML technologies, the boundaries of what “functioning according to its specifications” means blur. This happens for two main reasons: (1) an ML model’s performance depends on the dataset used for training, impairing the reliability of the model for groups beyond the scope of the training dataset, and (2) users’, that is, doctors’, control over actions facilitated by ML-based decision-support tools is limited. Even if healthcare professionals genuinely screen and understand all available information about how the ML-based decision-support tool derives its outputs, an inherent lack of transparency impairs their ability to judge the stochastic model’s reliability and their control over subsequent effects when using the tool (Berber and Srećković, 2023; Holm, 2011; Nissenbaum, 1996). 1 This blurriness complicates the attribution of responsibility to specific actors involved in the development and use of ML-based decision support tools. Scholars have referred to this issue as a “responsibility gap,” that is, the dilemma that – under existing responsibility models – neither the creators nor the users of these ML models can be reasonably held responsible for subsequent effects (Gunkel, 2020; Matthias, 2004).

The concept of the “responsibility gap” has been widely explored with embodied AI systems, such as robotics, particularly in the context of incidents like unintended deaths in robot warfare (Sparrow, 2007), and accidents involving autonomous vehicles (Coeckelbergh, 2016; de Jong, 2020; Nyholm, 2018). More recently, discussions have expanded to include harms caused by non-embodied AI systems, such as discriminatory outcomes in credit scoring algorithms (De Conca, 2022). Various phenomena akin to the “responsibility gap” have been introduced and examined throughout this discourse. For example, “liability gaps” emphasize the challenge of assigning legal accountability when harm occurs within these gaps (Bertolini, 2013; Johnson, 2015), and “retribution gaps” center on the issue that neither AI nor their makers are eligible for retributive blame (Danaher, 2016). On the other hand, some scholars think that it is, in all or at least most instances, possible to attribute retributive blame to some actor in the network of an autonomous system. For example, some argue that novel situations involving AI are comparable to those without AI, asserting that there are already instances in which individuals are held responsible for effects beyond their control – citing instances of, for example, factory owners being held responsible for accidents when their employees fail to comply with safety regulations (Santoro et al., 2008). Others argued that, on the grounds of professional responsibility, computer scientists should generally be held responsible for the behavior of AI systems they developed (Nagenborg et al., 2017).

Despite these extensive debates, current frameworks remain ill-equipped to address the diffuse and collective nature of responsibility in complex socio-technical systems – leaving responsibility gaps largely unresolved and shared responsibility models underdeveloped. With this study, we aim to advance these debates by focusing on the inclusion of academic ML scientists in shared responsibility models. As upstream actors, they play a central role in shaping the epistemic foundations of ML systems by continuously advancing the state of the art. Yet, their integration into responsibility models remains ambiguous.

Challenges and opportunities in attributing responsibility for ML tools’ effects to ML scientists

We support the assertion that attributing specific responsibilities for the effects of autonomous systems to individual upstream actors, such as researchers, is often conceptually problematic and practically unfeasible, given the distributed and collaborative nature of AI development. However, accepting this complexity without addressing it would, in extremis, lead to undesirable scenarios: either halting the development of autonomous systems altogether – thereby preventing society from reaping its potential benefits – or leaving those harmed by these systems without recourse, fostering resentment and a search for scapegoats. Since both scenarios are unrealistic and socially unacceptable, understanding the opportunities and barriers to including ML scientists in shared responsibility models is pertinent.

Drawing from the STS literature, we can identify several reasons for including ML researchers in shared responsibility models for the practical effects of ML. For example, Glerup and Horst found that it “is not possible for scientists to avoid ‘a relationship’ with society” (Glerup and Horst, 2014: 31), and Felt et al. have argued that scientists – in many instances – are aware of potentially harmful implications that the knowledge they produce can have (Felt et al., 2018). At the same time, modern science is criticized for lacking robust ways to govern itself responsibly (Braun et al., 2010; Jasanoff, 2011). In response to this criticism, contemporary codes for good scientific practice and frameworks, such as RRI (Von Schomberg, 2013), have become a normative standard within European science policy. RRI, for example, emphasizes the social responsibility of scientists and the imperative to anticipate and reflect on the broader societal implications of their work. These emerging sensibilities underscore the importance of linking scientific outcomes to their effects – and, by extension, to the researchers who helped enable them. This orientation aligns with ongoing calls for shared responsibility models that move toward a collective carrying of responsibility for the downstream effects of research.

In practice, however, a main challenge for shared responsibility models is that it is unclear what exactly scientists should assume responsibility for. At the time their results are published, scientists have only a limited ability to foresee the potential ethical or social implications their research results might have when taken up and developed further by others. For this reason, scientists interviewed by Felt et al. have insisted on “shifting ethics downstream” (Felt et al., 2009: 365), that is, on applying ethics to the ready-made applications their science will have been turned into. Especially in basic research settings, questions regarding the responsibilities for the ultimate effects or implications of their research results seemed unanswerable to them (Felt, 2009; Felt et al., 2009). However, if there is agreement on the importance of meaningfully integrating researchers into shared responsibility models for the downstream effects of their scientific contributions, it is essential to begin delineating the specific responsibilities researchers should bear.

In addition to the practical issues, several factors in the academic sector influence scientists’ assumptions about responsibility. For example, some scientific projects are based on and carried out for non-content-focused reasons related to pressures to win grants, secure academic jobs, and yield publications (Waaijer et al., 2018). Driving factors of such projects are what publishes well or might yield more citations rather than scientific interests. Related phenomena, for example, publishing as many papers as possible from the same data, are met with push-back by scholars criticizing the practice as “salami science” (Christoffersen et al., 2009). These observations indicate that individual researchers’ scientific curiosity tends to be increasingly vulnerable to external factors and that fewer epistemic arguments contribute to allocating scientific resources to projects.

Being consequential for science as a whole, the building blocks of this trend limit researchers’ headspace and room to maneuver and engage in meaningful discussions about their work’s ethical and social implications. The result is tensions between science and social responsibility for scientific results. Essential to the successful negotiation of these tensions is the space scientists navigate. Their working conditions set the infrastructural, political, and emotional boundaries of their agenda-setting and profoundly influence researchers’ ability to deal with questions of responsibility (Müller and de Rijcke, 2017). These systemic settings must be transformed to allow researchers access to their roles as co-responsible actors. The basis of this, in turn, should be an understanding of the researchers’ perspectives on responsibility-related questions. Therefore, this study draws on empirical data collected in an interdisciplinary research consortium. Over the course of three years, biomedical engineers, dermatologists, radiologists, and a clinical data science group collaborated closely on ML-based decision support tools for radiology and dermatology, offering a valuable lens into how responsibility is understood and negotiated in a real-world setting of interdisciplinary medical AI research.

Methods

The data for this social science study was collected in a German ML healthcare consortium working on medical ML. We collected data during active participation in the consortium as embedded ethicists and social scientists (Fiske et al., 2020; McLennan et al., 2022). Ethical approval for this study was obtained from the ethics commission of the Technical University of Munich (approval ID: 288/21 S-EB). We (1) conducted participant observations in consortium meetings, which were mostly held virtually due to the COVID-19 pandemic, (2) collected the consortium’s core governance documents, and (3) conducted “peer-to-peer” interviews (Müller and Kenney, 2014).

Peer-to-peer interviews are semi-structured interviews, conducted in an intimate and collegial one-on-one atmosphere, which provides crucial room for reflection, and with the interviewer and the interviewee both being academics (peers). The semi-structured interview guides contained the following three thematic blocks relevant to this study: (1) the team members’ scientific goals; (2) their roles within the consortium; (3) the implications of their work that they are mindful of, and the responsibilities they assume. The interviews were conducted by TW and yielded valuable insights into participants’ perceptions of responsibility and how these views developed and are influenced by systemic conditions.

For the interviews, all active staff members of the consortium were approached via email and asked to participate. All but one, 12 in total, agreed to participate. No reasons for declining participation were reported. Written informed consent to participate was obtained from all interviewees.

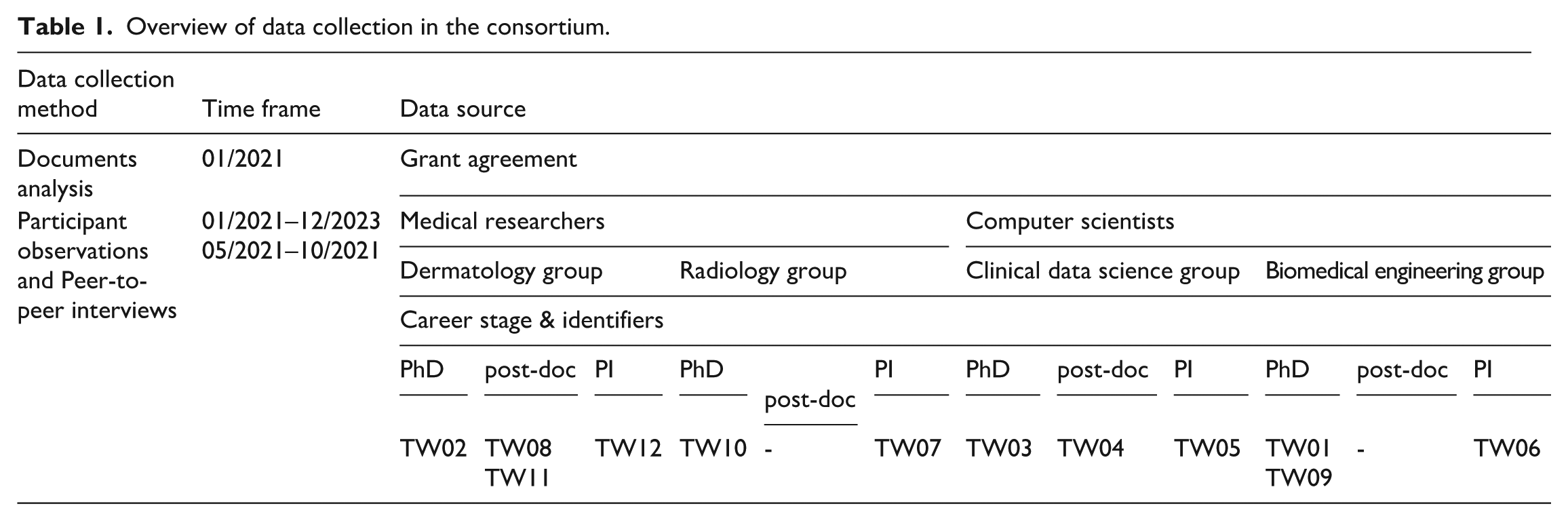

Table 1 provides an overview of the data collection in the consortium. We focused on two participant groups, who are referred to within the manuscript as (1) medical researchers – including the dermatology and radiology groups; and (2) computer scientists – including the clinical data science and biomedical engineering groups. Participants were employed at one of the consortium partners: an academic dermatological group, an academic radiological group, an academic clinical data science group from the same department as the radiological group, and an academic bioinformatics group. Throughout this manuscript, we refer to the clinical data scientists and the bioinformatics researchers as computer scientists. We refer to the radiological and dermatological researchers as medical researchers. We include participants’ career stage where relevant, for example, early-career medical researcher.

Table 1. Overview of data collection in the consortium.

A pilot interview, which had shown the reliability of the semi-structured interview guide, was included for analysis. Due to the COVID-19 pandemic, all interviews were conducted via Zoom. All interviews were conducted between May 2021 and October 2021, that is, in the first year of the consortium activity. Interviews lasted between 60 and 90 minutes, 70 minutes on average. The interviews were audio recorded. After each interview, TW created interview notes to supplement the audio material with inaudible interview details, relevant observations, and first reflections on the interviews. Data saturation was limited by the number of participants in the project. However, theoretical saturation was reached within groups of interviewees. The interviews were transcribed, then pseudonymized. The transcripts were not returned to participants, and they did not provide feedback on the findings.

All data – the project governance documents, fieldnotes from participant observations during meetings, and the interview transcripts – were organized in Atlas.ti. TW conducted the data processing and analysis following the grounded theory methodology (Charmaz, 2006). The data was coded inductively, line-by-line, and axially in multiple rounds. During coding, analytical memos were created and continually refined and updated whenever newly analyzed material touched on topics the memos already covered. Analysis accounts were compared with new material to extract analytical themes from the coding. The document analysis focused on the project’s goals and how they were framed, serving as a baseline to later determine in what ways individuals’ goals diverge. Recurring discussions on team members’ timelines and agenda setting during project meetings revealed a contrast between the analyzed goals in the governance documents and the researchers’ individual goals. 2 Recurring discussions emphasizing gaps in responsibility for the project’s outcomes, if they ever translated to clinical practice, helped us sharpen our research focus and arrive at “responsibility” as our sensitizing concept.

Empirical analysis

Case description: The project and its goals

The project at the focus of this analysis is a German academic consortium researching dermatological and radiological ML, publicly funded by the German Federal Ministry of Health. 3 In this consortium, computer scientists were researching and developing novel ML algorithms, while the two medical partners – one dermatological and one radiological research group – provided the necessary domain knowledge and data to develop and train ML models. Our analysis of the consortium’s core documents showed that the joint project’s rationale was to improve patient outcomes by creating ML tools for clinical workflows that exceed existing dermatological and radiological ML models regarding the accuracy of diagnostic decision support. To achieve the overall goal, three subgoals were defined in the grant agreement: First, to retrieve image data from the two participating clinical partners’ archives. Second, to provide human and technical resources to anonymize and prepare archive data for ML training, including labeling the images with field-specific pathologies. Based on these newly created data sets, the scientific partners’ third subgoal was to develop, train, and publish multiple diagnostic radiological and dermatological ML models. 4 The objectives were built on the idea that, given recent advancements in ML-based medical diagnostics, it is now timely to (a) improve the quality of training datasets and (b) increase the utility of ML models for clinical practice to improve the consistency, efficiency, and overall quality of ML in both disciplines.

Suspended responsibility

Since the project’s grant agreement explicitly articulated the ambition to improve real-world clinical practice, we asked researchers in our interviews whether they felt responsible for the potential effects of the project’s outcomes. Principal investigators (PIs), in particular, responded like this clinical data science PI: “I’m a deputy project manager (laughs), that’s the job description” (TW05), closely connecting their scientific role to what they feel responsible for. Scientists carrying out the consortium’s operative tasks, usually PhD students, answered, “it’s my code” (TW01) or “it’s my model” (TW03). With these statements, they, too, anchored their responsibility in what they consider their role or output. When considering their responsibilities, none of the interviewees mentioned feeling responsible for the downstream effects of their outputs. On the contrary, it was important for many of our interviewees to clearly distance themselves from responsibility for downstream effects. Many mentioned that they worked on a

These observations point towards a strong situatedness of responsibility within the scientific profession and a predominant classical understanding of scientific responsibility that does not reach beyond the scientific realm as our interviewees constitute it. In some cases, the scientific setup of the project even led to the responsibility for ethical and social implications being considered negligible, despite one work package of the project having the specific aim to “embed ethics” into the other work packages: “You know, honestly, we don’t have time for ethics now. Our project has so few implications [. . .] we first need to put something out there, then we can think about ethics.” (TW08, mid-career dermatologist who operatively led the dermatological group in the project)

Still others connected the constraints of their responsibility to the boundaries of scientific research to potential risks for patients. They emphasized the need for

From where responsibility suspends

We found inevitable tensions between official project goals and scientists’ individual goals 5 to be a nutrient for responsibility suspension. These tensions help to explain the limits of the perceived responsibilities elaborated on in the preceding section. When we interviewed researchers regarding their goals within their project, their answers, at first, expectedly, mirrored the goals outlined in the grant agreement. PIs cited content-focused objectives, such as advancing academia or patient outcomes. In addition to these more obvious goals, the interviews showed how the individual goals of the researchers played a significant role in shaping their actions within the project. For example, PIs described the primary reasons for participating in the project as career-related and ML as a future-oriented topic. By working on ML-related topics, the PIs hoped to create outputs considered institutionally or career-wise valuable, such as grant money or publications. One PI shared with us their rationale for working on ML, despite having expressed significant skepticism about the technology itself:

“I admit that I don’t think much of machine learning. I refused to do anything with machine learning for years. (. . .) It’s another tool in the box. [. . .] So (. . .), after it became clear that I would get my own group two years ago, I strategically oriented [my work] a bit differently and also took up machine learning topics. Simply for the reason (. . .) that [for ML] there are jobs everywhere at the moment, funding everywhere, for everything else not. All my proposals without machine learning in them were rejected.” (TW06 – PI biomedical engineering group)

Notably, PIs’ accounts contained little discussion of conflicts between strategic decision-making and the responsibilities they assume for the effects of their projects. Instead, these aspects seemed intertwined, highlighting how PIs balance multiple, potentially conflicting responsibilities. Securing funding is one such obligation – and it may involve strategic decisions that diverge from personal research interests. However, PIs must also consider obligations to their team members, constituting an intricate balancing act of multiple responsibilities. This balancing act shifts attention away from the effects of their research objects after clinical translation.

Early-career scientists cited other limiting factors for feeling responsible for the downstream effects of their scientific outputs during the project. Computer science early-career researchers reported that, before working on the project, they had not committed to the medical domain, recounting a comparatively high degree of flexibility in choosing their domain. For example, TW01 recalled during our interview that in their quest for a PhD position, they had applied for various positions in different ML imaging domains, including autonomous driving, facial recognition, and livestock monitoring, and reported having felt overwhelmed by the wealth of domains to choose from. They framed their project task as solving machine learning problems and positioned themselves as unable to verify parts of their interdisciplinary research outputs. Their lack of medical knowledge served as a rationale for TW01’s limited willingness to assume responsibility for scientific outputs downstream.

Early-career medical researchers reported divergent professional realities with different limitations for assuming responsibility for the downstream effects of their research outputs. For example, TW02 – a dermatologist who left only a few months after the project had started, with a PhD thesis only slightly related to the project – lacked intrinsic motivation to work on the project. In addition, they were overwhelmed by other clinical tasks. By having to juggle shiftwork and research, medical early-career researchers on the project could not devote as much time to their project tasks as the computer scientists who had, both by contract and in practice, more hours reserved for the project. TW02 illustratively told us how they came to work on the project and how little flexibility they felt in their project choice:

“Yeah well. (laughs) I’ll tell you honestly: I was forced to work here. My senior physician came to me, telling me ‘we have no money for your position. You have to work there now, then you may continue to work in dermatology two days a week.’ [. . .] I found the project super exciting (..) but how I ended up here, now working 60 percent, that was really not what I signed up for.” (TW02, early-career dermatological scholar)

A dominant reason for the discrepancy between the medical and the computer science early-career researchers’ attitudes towards their work seemed to be that medical expertise is not placed center-stage in healthcare ML projects. Medical researchers in our project across all career stages reported experiencing some of their project-related tasks as repetitive, tedious, and less prestigious than those of computer scientists. For example, anonymizing or labeling archive images is a necessary preparation considered to have high value for the quality of trained ML models, yet it was described as “time-intensive,” “boring” labor (TW07, TW02). This tension explains why early-career medical researchers in the project were likely to find themselves more in the passenger seat and, hence, were hesitant to assume responsibility for any effects that might later follow from the research project’s outcomes.

Agential, local, and temporal shifts

On top of tensions around discipline choices and role considerations, we found specific shifts occurring during the translation of scientific results to clinical practice. These shifts – agential, local, and temporal – suspend responsibility, significantly exacerbating the challenge for scientists creating the underlying scientific foundations of ML models to assume responsibility for clinical outcomes.

Agential shifts

The healthcare sector works based on well-established agential responsibility divides. Independent authorities are entrusted with certifying medical products for quality control, and treating physicians are assigned ultimate responsibility for patient outcomes – shifting responsibility for the (ML-based) medical tools’ functioning from the techno-scientific actors who are involved in the research and development of an ML model to actors who themselves were not involved in the application’s genesis. In our interviews, this division of responsibility was used as a rationale to demarcate scientists’ responsibility for the results they create. For instance, the clinical data science PI (TW05) argued that research typically intends to prove principles, “but then someone else should somehow properly certify it.”

Agential shifts, however, raise the question of which aspects of established certification processes need to be revisited to guarantee their reliability in the context of opaque statistical models that may display performance differences across population subgroups, some of which may already be marginalized. When prompted with this question, the same clinical data science PI argued that “the doctor is still responsible in the end because he or she also approves the findings” (TW05). However, they also pointed out why this agential shift is problematic, referring to the issues that arise with implicit programming and the so-called black box nature of ML models: [T]he physician must be able to see whether he or she can rely on the comprehensibility and validation [. . .]/ can the model for these data even make a prediction? / or has it never seen such data? (TW05, PI clinical data science)

Yet, they ended their statement by saying that “if the model can be validated and is comprehensible and transparent, then the physician can definitely take responsibility, in my opinion” (TW05).

The opacity of the tools, however, particularly undermines the logic necessary to hold treating physicians responsible. Physicians, as users of ML models in clinical practice, are contextually different actors from scientists, engineers, and other actors involved in the research and development of the ML model. Therefore, they cannot be held ultimately responsible if there is no way to assess whether a model has been validated as comprehensible and transparent, and whether it allows treating physicians to assess the context-dependent reliability of outputs for their individual patients. Further, it is currently unclear who should validate, what sufficient validation is, and what comprehensibility and transparency mean.

Local shifts

The second, local responsibility suspension is closely related to the first, agential responsibility suspension, but additionally emphasizes the locational differences of ML models in development and application: When an ML model is used in clinical practice, it is removed from the physical environment and socio-technical context in which it was developed and, importantly, tested. Illustrating the local shift, one computer scientist on the project (TW01) explained to us how so-called out-of-distribution data – for example, unexpected images in the model’s worklist, differing patient demographics, or images created with data collection devices other than those used for the model’s training data – is known to lead to erroneous outputs for individuals or patient groups.

Arguably, it is the task of the researchers and developers – alongside regulatory bodies, accreditors, and industry actors – to consider potential application scenarios during the development of ML tools. While the researchers we interviewed acknowledged the importance of anticipating future uses, they also emphasized the difficulty of doing so in practice. For example, TW01 told us that “the significant variations among clinics, their departments, and procedures make it challenging to predict local differences” (TW01). This complexity – arising from the shift between the controlled environment in which an ML model is developed, trained, and tested, and the diverse contexts in which it may ultimately be deployed – reduces researchers’ control over how the model functions in practice, constituting one lever of responsibility suspension.

Temporal shifts

Temporal shifts defer questions of responsibility from basic research(ers) to a later point in the development chain at which basic research results are translated into clinical practice. Our interlocutors work on what they perceive as elusive scientific objects that become scientific publications, and they see a stark difference between these and medical products, which are ready to use in clinical practice. When we asked them how the ethical implications of the project could be mitigated, our interlocutors repeatedly argued that mitigation would not be necessary at this stage because “it’s not about a medical product at the moment, but about research” (TW01), or, as mentioned previously, that they do not “have time for ethics now” (TW08).

This distinction draws an all too clear line between basic and applied research. Working in basic research comes with academic freedom, which might make scientists feel that they do not have to worry about the potential negative consequences of their research. In the specific project at the center of our analysis, however, this instrumentalization of basic science seemed artificial: If research to “develop, train, and publish multiple diagnostic radiological and dermatological ML models” (cf. Section 1. Case description) is basic research, the question arises as to where applied research begins. Moreover, if – to avoid having to take over responsibility for downstream effects – the threshold of what counts as

Discussion

With this work, we asked how researchers imagine the distribution of responsibility for potential errors based on erroneous ML model outputs that emerged in and through their work. We found a disconnect between how the research project was positioned in its grant agreement – as directly influencing patient outcomes – and how individual researchers understood their own responsibility. Despite a link to patient outcomes in the project agenda, researchers felt ill-positioned to assume responsibility for the downstream effects of their work. Beyond this, we identified a series of tensions rooted in both individual scientific roles and broader systemic conditions of the academic sector, and agential, local, and temporal shifts that researchers used to explain how responsibility for ML-supported decisions is suspended.

Our findings empirically illustrate how and why assuming responsibility for the downstream effects of their work may not be self-evident for researchers (Sigl et al., 2020). They revealed a nuanced appreciation of the structural incompatibilities within the current organization of the research sector that ultimately served to disavow responsibility for the downstream effects of researchers’ outputs. For some scientists in our study, the absence of perceived responsibility for potential clinical errors was related to their self-perception as laypeople in the project domain. Computer science PhD students, for instance, viewed themselves in this way. While this is understandable given that they were still enrolled in PhD training, it also highlights the knowledge limitations of interdisciplinary researchers regarding their colleagues’ domains (Sultan and Müller, in preparation). Such disparities in expertise among collaborating disciplines have been criticized as a fundamental shortcoming of complex sociotechnical systems (Mainzer, 2011). This phenomenon seems to increase with the complexity and opacity of the tools under development, as well as with increasing proximity to translation and implementation in clinical practice, and aligns with what Berber and Srećković (2023) have called “two-way-nescience.” Furthermore, medical researchers perceived themselves as somewhat subservient in ML projects, for example, when labeling vast amounts of data. This can be connected to a novel wave of literature investigating hidden labor behind emerging medical ML technologies and their integration into clinical practice (Elish and Watkins, 2020; Fiske et al., 2019). It implies that medical researchers’ essential contribution to highly accurate ML models needs to be made transparent and valued.

In addition to tensions within scientists’ roles, our findings extend claims that some scientific projects revolve around non-content-focused reasons connected to grant pressure, academic job market pressure, and publication pressure (Waaijer et al., 2018), and that the possibility to exploit results for one’s own career goals is a primary motivator in academia (Suominen et al., 2021). In our study, these tensions served as a rationale for researchers to distance themselves from ethical deliberation. These findings on tensions within and across academic roles do not necessarily imply an ethical imperative for researchers to assume specific responsibilities for downstream tasks. Rather, they expose the trouble of integrating researchers into shared responsibility models and, thus, reinforce existing calls for structural reforms within the academic sector.

Because many of these tensions lie beyond researchers’ immediate sphere of influence, their current tendency to reject responsibility for effects beyond the scientific domain becomes intelligible. As has been observed earlier, our data indicated that this is the case even though researchers might be aware of the potential societal influences of their work (Felt et al., 2009), and despite longstanding discussions challenging the notion of science as a value-free space and persistent calls for researchers to take responsibility for the social and other consequences of their work (e.g., Toulmin, 1985). These findings challenge the assumptions underlying responsibility-focused research frameworks, such as RRI (Von Schomberg, 2013), that have been introduced to shift ethics upstream, making researchers accountable and holding them responsible for the downstream effects of their work. This, in turn, helps explain why shared responsibility models that aim to include researchers – based on a similar rationale as RRI – have not yet gained a foothold.

The agential, local, and temporal shifts we conceptualized as key dynamics when ML research results are translated to practice act to suspend responsibility at multiple levels. Even when researchers express willingness to assume responsibility for the downstream effects of their work, these shifts present significant challenges for implementing viable shared responsibility models. Each shift complicates the basis for attributing responsibility to researchers, operating through distinct institutional logics and practical constraints.

Agential responsibility shifts resonate with longstanding STS debates on research as practice (Latour, 1988), and the (governance of) co-produced scientific results with distributed agency (Jasanoff, 2004, 2011). These shifts underscore the systemic fragmentation of responsibility in technoscientific networks and bring into focus the responsibility-related challenges that emerge when research is co-produced across multiple institutions and domains. In such environments, traditional expectations of individual responsibility become increasingly untenable.

Local responsibility shifts underscore the limitations of research paradigms that privilege generalizability over context-specific validity. To mitigate the challenges posed by local shifts, ML knowledge production must embrace context-sensitivity – taking into account the specificities of the environment in which ML tools are implemented, such as intended use and patient characteristics, institutional infrastructures, and intended users’ expertise. This may require relocating aspects of research into the environments where ML tools are intended to be used. Emerging “living labs” attempt to address this by constructing real-world settings in which to perform research. However, these labs do not replicate the world “as is”; they build new socio-technical realities, with their own tensions and epistemic constraints (Engels et al., 2019). Flexible approaches, such as participatory design (Simonsen and Robertson, 2013), could be a purposeful addition to living lab approaches, bringing their research closer to lived experiences. However, much of the research performed in living labs is translational, leaving basic research knowledge production still largely detached from its future application sites.

Temporal responsibility shifts, finally, reinforce existing critiques of science policy’s persistent reliance on the distinction between basic and applied research (Narayanamurti and Odumosu, 2016). In our study, this distinction functioned as a narrative that allowed researchers to deflect responsibility for downstream effects by locating ethical concerns outside the perceived remit of basic science. These findings point to the need to re-examine the intended uses and effects of scientific categories used to organize and govern science when used, for example, in institutional mechanisms such as funding schemes.

While the shifts we found are not unique to AI research translation, our findings suggest that AI research’s interdisciplinary nature and its tools’ opacity significantly amplify their effects. As a result, the suspension of responsibility reaches a degree of intensity that may be particularly pronounced in this domain, demanding renewed attention to how responsibility can be distributed and enacted with shared responsibility models. Consequently, our findings indicate that the design of shared responsibility models should consider researchers’ epistemic cultures. They should be built on considerations of scientific norms, structural boundaries of scientific roles, and the epistemic opacity of the effects for which responsibility should be shared.

Future research should revisit our findings in broader group settings, such as focus groups, where researchers can collaboratively explore and reflect on designs for shared responsibility models and how agential, local, and temporal shifts could be minimized. Further, these discussions could deepen the understanding of what systemic changes are needed to make shared responsibility models practical and sustainable. Additionally, future studies might explore whether researchers’ goals – intrinsic (e.g., patient outcomes) or extrinsic (e.g., career incentives) – influence their sense of responsibility, and how scientists can better align these motivations with responsible research practices. Given the increasing role of private-sector researchers in ML (Gibney, 2024), expanding the inclusion of participants beyond public-sector researchers is essential. Moreover, applying these questions to other technological domains and societal contexts could offer comparative insights and help clarify which dynamics are specific to ML in healthcare and which are more broadly applicable.

Conclusion

Functioning shared responsibility models for ML-aided decisions that include all relevant actors, including researchers who lay the knowledge foundations that underpin these technologies, remain aspirational and are yet to be established. Speaking to this issue, the primary objective of this study was to show how medical AI researchers position themselves on issues of responsibility regarding errors, such as misdiagnosis with ML-supported decision making, that could be linked to their research outputs.

Drawing on a document analysis of a clinical ML research consortium’s grant agreement, participant observations over the course of the same consortium’s three-year project, and peer-to-peer interviews with participating researchers, we identified a complex interplay of factors that complicate researchers’ assumption of responsibility. These include systemic tensions embedded in research roles, institutional structures, and sectoral norms, as well as three key shifts – agential, local, and temporal – that suspend responsibility as ML research moves from lab to practice.

Our findings help explain why researchers tend to allocate their responsibilities within the boundaries of the academic sector and why efforts to build and implement shared responsibility models that include them struggle to take root. To advance shared responsibility models, we suggest their design should be based on careful consideration of researchers’ epistemic cultures and the systemic conditions that shape their work. Shared responsibility for ML-based decisions should indeed include the researchers who contributed foundational research. However, such inclusion must be calibrated to the norms, role boundaries, and institutional logics that govern academic practice, as well as the epistemic opacity of the effects for which responsibility is to be shared.

Acknowledgements

We thank Bettina Zimmermann and the members of Ruth Müller’s group, particularly Maximilian Braun, Svenja Breuer, and Dominic Lammar, for their valuable comments on earlier versions of this manuscript. We also extend our gratitude to Sven Nyholm for the opportunity to present this work at his AI ethics seminar and for the insightful feedback the session generated. Finally, we express our thanks to all our project partners for their collaboration throughout this project, and to our interlocutors for generously sharing their time and perspectives.

Author note

Theresa Willem is now affiliated with Helmholtz Munich, Munich, Germany.

Ethical considerations

Ethical approval for this study was obtained from the ethics commission of the Technical University of Munich (approval ID: 288/21 S-EB).

Consent to participate

Written informed consent to participate was obtained from all interviewees.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research presented here was funded by the German Federal Ministry of Health under grant agreement no. 2520DAT920, within the funding program

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data supporting this study is not publicly available to protect the anonymity of the interviewees.