Abstract

In the last few decades, the assumption that the implementation of standardized tests might cause washback effects on language teaching and learning has become a certainty. Researchers and practitioners report an increased use of ‘teaching to the test’ (TTT) practices. However, the current literature lacks consensus about which practices this includes, and operationalizations vary greatly across studies. Further, even though TTT is assumed to impair meaningful learning, no study has so far examined students’ perspective. The current study therefore used a multi-method approach to theoretically and empirically define TTT and develop a uniform measurement instrument, focusing on students’ perspective of their English language teachers’ practices. Results suggest that TTT is a multidimensional construct consisting solely of test preparation practices and not of changes in teaching style. Multilevel analyses showed adequate fit for two equally plausible models, good psychometric properties, and full measurement invariance of the instrument across gender, first language, and school type. The theory-based and empirically supported instrument therefore represents a valid and reliable measure for uniformly assessing perceived TTT in the language learning context. Our study paves the way for future research studying the effects that this specific type of washback might have on students’ learning outcomes.

Keywords

I Introduction

Language learning represents the process of gaining the ability to communicate (written and orally), express ideas, read and comprehend literature of all types in the original language, and further encompasses gathering knowledge about different cultures. Assessing all components adding up to the mastery of a language in a standardized and – nonetheless – meaningful way is, hence, difficult.

A rising number of countries have begun using standardized tests for performance assessment to enhance the comparability of test results between students, schools, regions, and countries (Copp, 2018; Maag Merki, 2015). In particular, there are steadily growing efforts to evaluate the quality of schools and teachers using the results of centralized school leaving examinations and standardized achievement tests such as PISA or TIMSS. As a consequence, studies report so-called washback or backwash effects (Alderson & Wall, 1993; Spratt, 2005): the implementation of standardized tests influences teachers, classrooms and students (Alqahtani, 2021; Cheng et al., 2004). In recent years, numerous researchers examining washback effects reported teachers feeling obliged to change their teaching to a more exam-focused instruction and increase their use of test preparation strategies to help their students score high, especially when stakes associated with the test are high (e.g. the admission to specific universities; Benmoussat & Benmoussat, 2018; Copp, 2018; Green, 2006; Jennings & Bearak, 2014).

Even though scoring high on standardized tests might benefit students in the near future, many educators fear that this so-called ‘teaching to the test’ (TTT) approach might have negative consequences for students’ learning behavior, language acquisition, and lifelong learning (Lobascher, 2011; Welsh et al., 2014). Changing teaching to a mere test preparation strategy is often said to ruin a healthy and meaningful way of learning (Menken, 2008; Zakharov & Carnoy, 2021), including in language learning. If language learning becomes test (item) learning, students might not fully acquire the required skills: memorizing grammatical rules, vocabulary and spelling, understanding the underlying concept, connecting it to cultural backgrounds and traditions as well as applying it to real-world situations (Ho, 2009; W. Klein, 1986; Spratt, 2005). This might have severe implications for their future education and careers, as those students might find themselves in settings that require considerable communicative skills in that language (e.g. university, international jobs), which those trained test takers might lack (Benmoussat & Benmoussat, 2018; Lobascher, 2011; Muñoz et al., 2019). Although these issues have been known for a considerable time, three issues remain open in current studies. First, TTT lacks a clear conceptualization: the current literature varies widely as to which practices TTT consists of. Whereas only a few studies describe TTT as a single test preparation technique (e.g. excessively practicing with prior exam items) or change in teaching style (e.g. being more authoritarian), most researchers state that it consists of several aspects (Benmoussat & Benmoussat, 2018; Volante, 2004; Welsh et al., 2014). However, there seems to be no consensus about which practices this phenomenon ultimately consists of. 
Further, while some researchers describe TTT as changes in teaching content or style due to the implementation of standardized tests (and hence as a form of washback effect; see, for example, Cheng et al., 2004), others argue that the construct comprises only teaching practices focused on test preparation to get students scoring as high as possible (Welsh et al., 2014; Zakharov & Carnoy, 2021). The plethora of conceptualizations calls for a unification of this concept, as studies that aim to measure the consequences of TTT cannot, so far, be meaningfully compared. Second, current studies of TTT have focused solely on teacher statements, ignoring students’ perspectives (Green, 2006). This neglects the possibility that students perceive TTT not as something negative, monotonous, or stultifying but rather as a helpful and clearly structured teaching strategy for language acquisition. Third, the possible impacts of TTT on student variables like their skill acquisition or motivation remain an under-examined aspect, especially in language learning.

The primary purpose of this study is to provide researchers and practitioners with a measurement instrument with which they can assess TTT practices. While existing measurement instruments measure TTT by teachers' self-reports (Copp, 2018; Hamilton et al., 2007; Jäger et al., 2012), our measurement instrument is intended to measure perceived TTT via information provided by students. In other words, our measurement instrument assesses whether students perceive TTT practices in the context of foreign language classrooms under the circumstances prior to a standardized exam.

Developing a measure of TTT requires the construct’s conceptualization, which includes two steps: (a) a nominal definition of the construct (includes terms for abstract ideas or mental representations of real-world phenomena and what they mean) and (b) an operational definition of the construct (consists of a set of instructions on what exactly and how to measure the real-world concepts empirically; Bernard, 2013; Grützmacher et al., 2025; Neuman, 2013). These two steps, in the form of the nominal and operational definition, represent the link between theory and empiricism. This study aims to conceptualize the construct based on a comprehensive literature review, validated by empirical testing, and to develop a measurement instrument with a focus on students' perceptions.

II Literature review

The application of TTT in the language learning classroom can be understood as a form of washback effect that occurred due to the implementation of standardized exams and the pressure on schools, teachers, and students to perform well (Cheng et al., 2004). In teaching research, a distinction is made between good teaching and effective teaching (Berliner, 2005; Fenstermacher & Richardson, 2005). Good teaching refers to appropriate, age-appropriate and morally justifiable teaching content and methods that are used or taught with the aim of increasing learners’ skills (normatively defined quality characteristics). TTT is widely criticized for its potential negative consequences for students’ learning behavior, language acquisition, and lifelong learning (Lobascher, 2011; Welsh et al., 2014). Changing teaching to a mere test preparation strategy is often said to ruin a healthy and meaningful way of learning (Thanh Ha, 2019; Zakharov & Carnoy, 2021). Effective teaching, on the other hand, refers to teaching that enables learners to reach a certain level of achievement. In order to determine effective teaching, a purpose must be (normatively) defined. Under this premise, teaching to the test could be seen as something positive if it helps students reach a high level of achievement (as was partly found in Zakharov & Carnoy, 2021). However, good teaching does not necessarily lead to effective teaching and effective teaching does not necessarily result exclusively from good teaching practices (Berliner, 2005; Fenstermacher & Richardson, 2005).

Teaching effectiveness research (TER) is mainly concerned with examining the effects of teaching on outcomes (such as the effect of TTT on student outcomes). Such an effect implies that the student outcome changed due to teaching. For a single TER study, it is difficult to include more than a few outcomes, and there is still a strong focus on cognitive student outcomes, especially in subjects like mathematics or language. This practice of focusing on a few outcomes is often criticized because it implies that schools’ only aim is to promote cognitive skills (De Maeyer et al., 2010; Grützmacher, 2022). This one-sided focus on achievement criteria can lead to schools and lessons being geared solely towards these goals or tests that measure these goals. This can lead to overlooking other educational objectives because they appear less relevant. Considering the primary purpose of TTT (i.e. helping students score high on a standardized test), TTT is largely considered a negative teaching practice.

In the literature, several differing conceptualizations of TTT exist. The most extensive review of TTT known to the authors to date is Au’s (2007) qualitative research synthesis. He concluded that the consequences of standardized tests for educational processes can be summarized in three main categories, namely ‘content control’, ‘pedagogic control’, and ‘formal control’, referring to changes in the curriculum, teaching style, and how information is given in the classroom, respectively. In the following, we describe the characteristics and practices that contribute to those main categories, expand Au’s synthesis with quantitative studies, and additionally include the practice of excessive test preparation in the literature review, as this practice is most often associated with the term TTT.

1 Dimensions of teaching to the test

a Content control: Changes in the curriculum

In qualitative studies, TTT is predominantly described as changes within the curriculum, mostly manifested as reducing topics (Au, 2007). To cover the test content within a restricted time, many educators feel compelled to reduce the variety of topics in their lessons and emphasize topics that are known to occur in the exam, cutting short or even omitting those that are known (or assumed) not to be included (Berliner, 2011; Rahman et al., 2021). On the other hand, students have also been found to be reluctant to engage with topics known not to be covered in the test, resulting in them not paying attention as they experience this to be ‘a waste of time’ (Tsang & Isaacs, 2022, p. 39). In quantitative studies, too, TTT is often operationalized as a narrowing of the curriculum (Copp, 2018; Jäger et al., 2012). Educators, as well as researchers, propose manifold consequences of the change in the curriculum. The reduction of topic variance within the subject not only affects teachers’ degrees of freedom in planning and preparing their lessons but might also entail gaps in students’ knowledge and higher-order thinking and skills, as they are merely taught ‘skills as they are to be tested rather than as they are to be used in the real world’ (Berliner, 2011; Darling-Hammond & Wise, 1985, p. 320). Further, teachers and students lack the possibility to include daily hot topics or topics that are of particular personal interest and value to students. As a result, some educators describe themselves as merely teaching to the test rather than teaching ‘the real curriculum’, which implies that the perceived loss of a say in the curriculum and the focus on test content is mainly seen as a negative outcome in their profession (Anagnostopoulos, 2003). 
Even though a focus on test content and a subsequent narrowing of the curriculum could help students feel more secure about which topics they will face in the exam, educators and researchers fear that it will lead to a generation that has very specific knowledge about certain topics but lacks a broad set of skills – such as communicative proficiency in a foreign language – that is required in modern society and economy (Berliner, 2011; East & Scott, 2011; Welsh et al., 2014).

However, Clarke et al. (2003) state that some teachers perceived the changes in the curriculum as a positive effect in that unneeded content was removed from their subjects, making it possible to add other important topics they have had no time to teach until then. This finding is further supported by Au (2007), who also found that, in some cases, the subject matter content expanded.

b Pedagogic control: Changes in teaching style

The pressure to cover and sufficiently anchor the test content in their students’ minds might lead teachers to change their methods from various teaching strategies to a more teacher-centered, lecture-based teaching style (Au, 2007). Whereas teaching the ‘real’ curriculum is said to leave room for developing and applying innovative and creative instruction methods like project-based learning, in-depth discussions, and cooperative learning (Au, 2007; Rodríguez-Muñiz et al., 2016), a mere focus on test-content is considered to cause a more authoritarian teaching style and to make it more repetitious (Au, 2007; Blazer, 2011). In their qualitative study, Welsh et al. (2014) reported that teachers tried to include instruction approaches similar to how the test addresses the objectives. To help their students remember rules, vocabulary, and rote procedures, instruction focused on specific skills required to solve test items (Berliner, 2011). This might take away any opportunity for creative and in-depth learning approaches, lead to monotonous lessons, and erase all forms of motivation and potential interest rooted in the students (Thanh Ha, 2019).

On the other hand, other studies also found increased student-centered instruction in response to the implementation of standardized tests (Au, 2007; Welsh et al., 2014). As teachers cannot predict how the knowledge will be assessed in the exam and further know that each of their students might require a different learning approach, those teachers focused on applying as many teaching strategies as possible while teaching one specific topic relevant to the test.

c Formal control: Changes in information transfer

Interrelated with changes in teaching style, the categorization ‘formal control’ refers to an attempt of teachers to match the transfer of knowledge with how this knowledge will be tested in the exam (Au, 2007). The standardized test format often encompasses specific, on-point questions and tasks (e.g. PISA, TIMSS). Current studies found teachers changing their teaching style to giving new information in little, isolated pieces directly related to the exam rather than applying an integrated approach in which they link the new knowledge to the previous subject matter within or across subjects (Anagnostopoulos, 2003; Blazer, 2011). This change in teaching style is what Welsh et al. (2014) call ‘decontextualized instruction’, leading to ‘artificially inflated test scores’ (p. 98) even for students who might not understand the concepts that are assessed in the exam (Jennings & Bearak, 2014). However, a small but noteworthy number of teachers reported adapting to integrating rather than fragmenting knowledge in the classroom in response to the implementation of high-stakes standardized tests (Au, 2007).

d Excessive test preparation

As the term ‘teaching to the test’ suggests, studies also found an enhanced use of test preparation strategies and materials in and outside the language learning classroom (Benmoussat & Benmoussat, 2018; Zakharov & Carnoy, 2021). TTT might hence be partly described as direct, test-oriented preparation for the exam that includes, for example, practicing with old exam questions, an extensive drill on test items, teaching test-taking strategies, and an increased number of interim tests that mirror the format of the standardized exam (Menken, 2008; Tsang & Isaacs, 2022; Zakharov & Carnoy, 2021). The tendency to practice with test preparation materials increases, especially when the stakes for students and pressure on educators are high (Berliner, 2011). This type of washback has also been found for students, who tended to focus merely on tasks and materials related to the test rather than on skill acquisition when learning for a standardized test (Allen, 2016; Thanh Ha, 2019). Furthermore, students were found to evaluate intensive paper-and-pencil drills and test-oriented teaching positively, eagerly awaiting feedback on their performance (Tsang & Isaacs, 2022).

The implementation of standardized tests entailed using new, very specific test formats that would not usually be taught in lessons. Familiarizing students with the test format by practicing similar items hence might be a valid strategy to avoid students scoring low due to formal difficulties while filling in the examination sheets (Brunner et al., 2007; Wagner & Koch, 2021). Furthermore, students especially value their teachers for using test preparation strategies that aim to show them how to answer test-like questions (Tsang & Isaacs, 2022). However, there is a fine line between ethical and unethical practice. This distinction mainly refers to the validity of the standardized test. While familiarizing students with the test format to a moderate extent might increase test validity, the excessive use of test-like items, giving specific advice on how to answer exam questions, and a generally decontextualized instruction on test items might indeed artificially inflate test scores and hence bias the validity of the test (Messick, 1996; Wagner & Koch, 2021). Standardized tests ought to measure whether students gained knowledge about the core concepts of the tested subject. However, with excessive test preparation, the opposite may occur: teaching what is tested rather than testing what was taught. This raises concerns that test preparation practices may enable students to answer exam questions correctly without genuinely mastering the subject matter (Gordon & Reese, 1997; Hamilton et al., 2007; Welsh et al., 2014). If so, the test may no longer accurately reflect students’ true understanding or abilities, compromising its validity as it fails to assess the intended knowledge or skills.

2 Teaching to the test in language learning

The literature on washback effects and especially on TTT in language learning is inconclusive. Several qualitative studies found language arts teachers reporting significantly reducing the content taught in their classrooms – including certain types of writing – to what would be on the test to help their students score high (Au, 2007; Jones et al., 1999). In general, the largest extent of narrowing the curriculum happened in social studies and language arts classrooms in secondary schools (Au, 2007). On the other hand, other studies find that teachers, due to the narrowed curriculum, were spending more time teaching literacy and writing (Lobascher, 2011; Rahman et al., 2021).

Changes in the curriculum were also accompanied by changes in teaching style. As in the general context, evidence exists that in language arts classrooms, teaching styles changed to a more teacher-centered pedagogy (Au, 2007) and that students were drilled on finding mistakes in written work rather than being encouraged to write their own texts or that – if the test included a writing component – teachers increased their instruction on revising text characteristics (Jones et al., 1999; Rahman et al., 2021). This closely relates to the increased amount of time spent on teaching test-taking strategies to prepare for the test. In language arts, teachers reported ‘teaching to the rubric’, which means that they instructed their students to include specific phrases or text structures that are known to increase test scores (Jennings & Bearak, 2014).

Regarding the fragmentation of knowledge, some studies find that tasks like writing were split up into a step-by-step approach with a clear, test-format structure (Tsang & Isaacs, 2022), while others reported that language arts teachers were now able to focus more on the process of coherently creating a text, rather than giving a procedural writing tutorial (Clarke et al., 2003).

3 Summary of the literature review

In the current literature, there is a variety of nominal definitions of TTT, which leads to different measurements in studies and, consequently, non-comparable study results. Descriptions range from mere changes in the curriculum through changes in how knowledge is transferred in the classroom to the notion that TTT is mere cheating due to excessive test preparation strategies. In sum, when focusing on teacher statements, a categorization of changes in teaching content and teaching practice seems plausible. Changes in teaching content address a narrowing of the curriculum by reducing the variance of topics in the classroom lessons to topics known to be assessed in the standardized exam. Changes in teaching practice encompass an increased tendency to make the lessons more teacher-centered (pedagogic control; Au, 2007; Welsh et al., 2014), test-focused (by repeatedly referring to the test whenever new content is taught and by teaching test-taking strategies; Jennings & Bearak, 2014; Tsang & Isaacs, 2022), repetitive (by repeatedly practicing with exam-like questions; Blazer, 2011; Tsang & Isaacs, 2022; Welsh et al., 2014) and to transfer knowledge in little, isolated pieces (fragmentation of knowledge; Anagnostopoulos, 2003; Au, 2007; Blazer, 2011). Further, researchers also reported an increased use of test-like materials (Berliner, 2011) and mock tests (Zakharov & Carnoy, 2021). However, these categorizations rely exclusively on teacher reports, completely neglecting the students’ perspectives. While teachers might evaluate the reported changes as undesirable, students might not even recognize them or – on the contrary – experience them as something positive if they feel like the applied practices effectively prepare them for the exam. Including students’ perspectives allows for a more complete understanding of how test preparation impacts learning from both sides and sheds light on the washback effects experienced by the learners themselves. 
Therefore, a multi-informant approach should be used to reduce the teachers’ self-report bias (Reynolds, 1982) and to finally include the students’ perspectives in the picture.

Based on an extensive literature review, we developed a nominal definition of TTT: Teaching to the test represents a conglomerate of changes in teaching content and teaching practice aimed at extensive preparation for a standardized test.

Accordingly, we recognize TTT as a specific form of washback effect in the language learning classroom (Spratt, 2005). However, the studies that this nominal definition is derived from exclusively focus on teacher reports and leave out students’ perspectives.

III Study aims and research questions

Considering the inconclusive literature on TTT in general and especially in language learning, the current study aims to shed light on what practices and teaching characteristics TTT consists of to pave the way for further studies. The aim of the study, therefore, is a general conceptualization of TTT as examined from the students' perspective.

The overarching research questions for the study, as driven by the need for a uniform measurement instrument for TTT, are as follows:

Research question 1: How can the operational definition of TTT from the learner’s perspective be specified and empirically supported?

Research question 2: Does the newly developed measurement instrument represent a valid and reliable tool for assessing TTT from the learners’ perspectives?

The present study focused on the subject English as a foreign language. It was conducted in the context of Austrian secondary schools, where the subject is usually taught from grade 5 on and included in the standardized school leaving examination at the end of 12th grade. The exam has low to middle stakes (E.D. Klein et al., 2009; detailed information about the testing context can be found in the supplemental material), as a lower or higher score has no substantial consequences, but students need to pass the exam to attend university.

IV Methods

1 Participants and procedure

The present study drew its data from a larger longitudinal research project (‘Identification of school success factors’; Lüftenegger et al., 2024) aiming to identify the relative importance of school success factors at the end of secondary education in Austria. To obtain a representative sample, 30 academic secondary schools (= ‘Gymnasium’) were recruited for the project, representing approximately 11% of all upper-secondary schools in Austria’s highest academic track (a detailed description of the sampling procedure is provided in the preregistration of the project). This study used data from wave one of the four measurement waves, which took place in May 2022. Data were collected via an online survey that the participants filled in during a regular classroom lesson supervised by trained assistants. Participants were provided with written informed consent and a data protection statement and asked for permission for data processing. If permission was not given, the questionnaire ended, ensuring participation was voluntary. Schools and students did not receive compensation for their participation; however, schools did receive a school-specific project report after the project was finished. The study was authorized and approved by the ethics board of the University of Vienna (ethics board reference number: 00724) and pre-registered before data collection (Muth & Lüftenegger, 2022).

In wave one, 1,625 11th-grade students learning English as a foreign language filled in the online questionnaire. During data preparation, 33 students were excluded because they stated that they did not answer the questionnaire honestly. The final sample consisted of 1,592 students (60.1% female, 1.1% diverse) from 103 English classes of 30 secondary schools of the highest track from all nine federal states of Austria. Participants’ age ranged from 15–20 years (MAge = 17.08, SD = 0.79). Twenty-three students (1.5%) had English as their mother tongue, whereas 273 students (17.2%) indicated that someone had communicated in English with them regularly during their childhood.

2 Scale development

Following the guidelines of Gehlbach and Brinkworth (2011), the construction of the measurement instrument consisted of seven steps, where the first six steps represent a qualitative multimethod study to develop the items for the new instrument (see Figure S1 in supplemental material). Step seven then corresponds to a quantitative study to assess the instrument's psychometric properties.

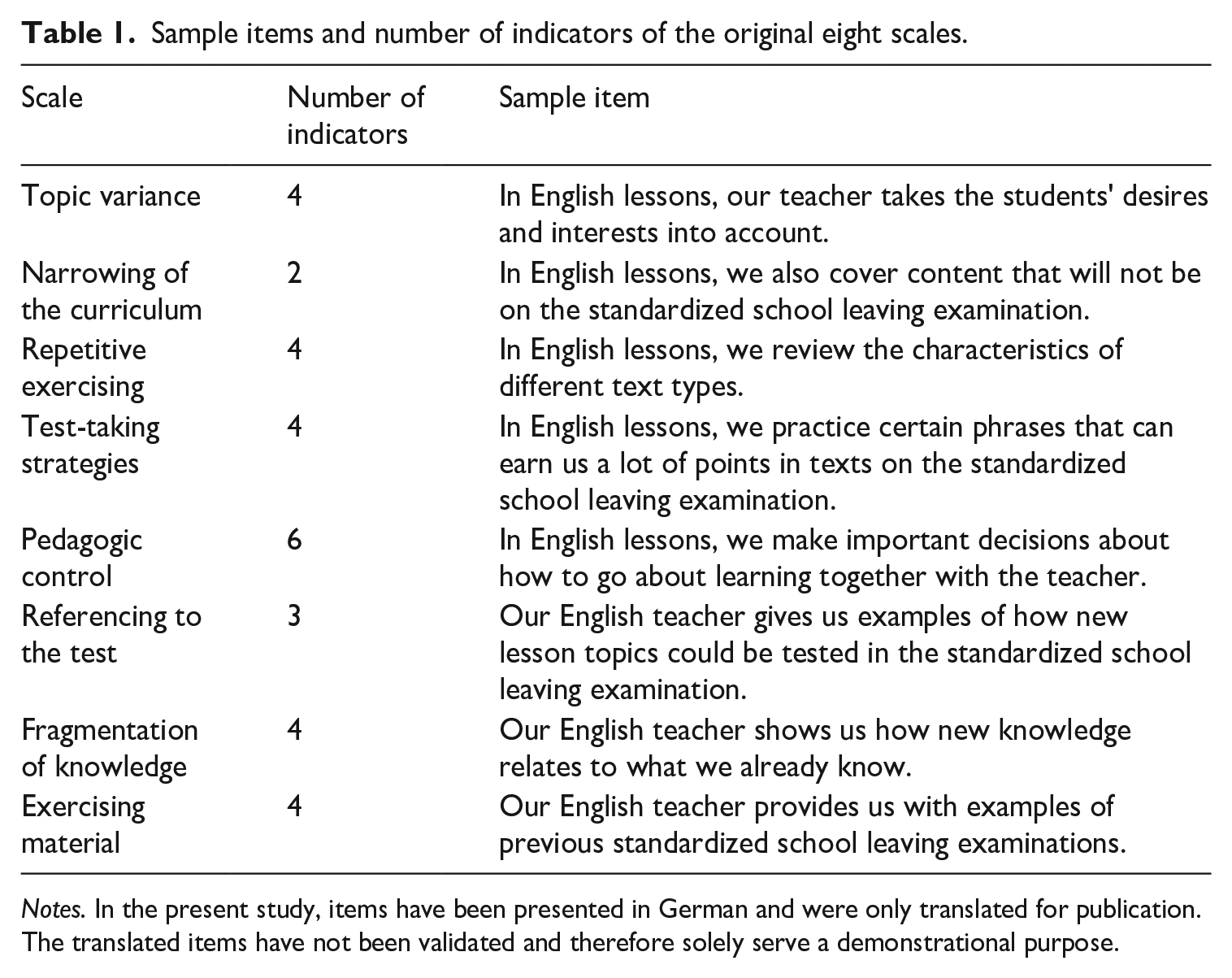

The first step involved extensively reviewing the literature (see Section II) to gain a conceptual understanding of teaching to the test, the operationalizations that have been used so far, and to derive relevant indicators. In step two, semi-structured interviews with three English teachers from secondary schools not participating in the study were conducted to gain insight into their experiences with and conceptualizations of TTT and to identify indicators relevant to their teaching practice. Step three consisted of synthesizing the indicators derived from the literature review and the interviews. In step four, we formulated a total of 44 items within 10 scales. Ten items were based on the literature review and represent adapted versions of items by Baumert et al. (2009), Hamilton et al. (2007), Jäger et al. (2012), and Copp (2018). Three items were taken from an existing scale from Lüftenegger et al. (2017), while the other 30 items were newly created based on the indicators derived from the interviews. In the fifth step, to ensure content validity, we revised these items using feedback from two experts in the field of educational research who were not involved in the initial formulation of the items. This resulted in deleting 11 items and restructuring the instrument, leaving us with 33 items in eight scales. In step six, we conducted cognitive pretesting with five 11th-grade students (aged 17 years) from a school not included in the sample. Their comments were used to further improve the item pool by optimizing item wording, deleting items that seemed conceptually inconsistent, adapting the rating scale, and allocating some items to another scale, resulting in a total of 31 items (see Table S1 in supplemental material) within eight scales (see Table 1).

Table 1. Sample items and number of indicators of the original eight scales.

Notes. In the present study, items were presented in German and were translated only for publication. The translated items have not been validated and therefore serve illustrative purposes only.

In an additional step (also advised by Gehlbach & Brinkworth, 2011), this item pool was pilot-tested in three high-school classes (N = 61 students, 71.4% female, MAge = 16.38, SD = 0.86; grades 10 and 11) of a school not participating in the main study. Through this, we were able to uncover the last inconsistencies and make final adaptations to the items (i.e. reversing items from negative to affirmative statements to avoid confusion, adapting the rating scale to a 5-point Likert scale, ranging from 1 (‘never’) to 5 (‘always’), with an ‘I don’t know’ answer for the three scales topic variance, narrowing of the curriculum, and repetitive exercising).

3 Quantitative data analysis

Before analysing the data, we defined the ‘I don’t know’ answer category, which was present for three scales, as a missing value because this category did not contain meaningful information for the planned analyses (for an overview of the frequency of missing values, see Table S2 in supplemental material). Further, we recoded negatively worded items so that higher numeric answers would represent more perceived teaching to the test for all items. Descriptive statistics were calculated in R (R Core Team, 2023).
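The recoding described above can be sketched in a few lines. The following Python snippet is only an illustration and not the authors' R code: the numeric code 6 for the ‘I don’t know’ category and the function name `recode` are assumptions made for this example, while the 1 to 5 response range follows the rating scale described in the scale development section.

```python
from typing import Optional

# Assumption for illustration: 'I don't know' is stored as 6.
# The source does not state how this category was coded.
DONT_KNOW = 6
SCALE_MIN, SCALE_MAX = 1, 5  # 1 = 'never', 5 = 'always'


def recode(response: Optional[int], reverse: bool = False) -> Optional[int]:
    """Return the analysis value for one raw item response.

    'I don't know' and already-missing answers become None (missing).
    Negatively worded items are reversed so that higher values always
    indicate more perceived teaching to the test.
    """
    if response is None or response == DONT_KNOW:
        return None
    if not SCALE_MIN <= response <= SCALE_MAX:
        raise ValueError(f"response outside rating scale: {response}")
    if reverse:
        return SCALE_MIN + SCALE_MAX - response
    return response


# Hypothetical raw answers to one negatively worded item.
raw_answers = [1, 2, DONT_KNOW, 5, None]
recoded = [recode(answer, reverse=True) for answer in raw_answers]
```

Treating ‘I don’t know’ as missing rather than as a scale midpoint keeps the category from distorting item means, and it lets a full-information estimator handle these cases together with other missing values.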

Since the construct’s factors were identified based on the literature, which obtains its findings primarily from teachers’ self-reports, it is crucial to test whether the assumed structure of the construct TTT with the identified factors applies when assessed from a student perspective. For this purpose, the dimensionality and construct validity of the scales and whole instrument were assessed by calculating multilevel confirmatory factor analyses (ML-CFAs) using Mplus 8.10 (Muthén & Muthén, 2023). Maximum likelihood estimation with robust standard errors was used to estimate all models. Missing values (between 0.31% and 13.11% on item level) were handled via the full information maximum likelihood (FIML) approach implemented in Mplus.

To assess the goodness of fit of the CFA models, we used the comparative fit index (CFI), Tucker-Lewis index (TLI), the root mean square error of approximation (RMSEA) and the standardized root mean square residual (SRMR), following the guidelines suggested by Hu and Bentler (1999) regarding cutoff scores for adequate fit to the data: SRMR < .08; RMSEA < .08; TLI > .90; CFI > .90. In the case of poor model fit, residual covariances that arose from similar item wording were specified (Bandalos, 2021). Items that showed a standardized factor loading of λ > .39 were considered valid indicators of the factor.
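As a minimal sketch of how these decision rules operate, the Python snippet below encodes the four cutoffs and the loading threshold stated above and applies them to the fit indices reported for Model 1 in the results section. The function names and the two item loadings are hypothetical and serve only as illustration; the actual models were estimated in Mplus.

```python
# Cutoffs as stated in the text: SRMR < .08; RMSEA < .08; TLI > .90; CFI > .90
CUTOFFS = {
    "SRMR": ("<", 0.08),
    "RMSEA": ("<", 0.08),
    "TLI": (">", 0.90),
    "CFI": (">", 0.90),
}
LOADING_THRESHOLD = 0.39  # items need a standardized loading above .39


def check_fit(indices: dict) -> dict:
    """Return, per fit index, whether the stated cutoff is met."""
    result = {}
    for name, (direction, cutoff) in CUTOFFS.items():
        value = indices[name]
        result[name] = value < cutoff if direction == "<" else value > cutoff
    return result


def weak_items(loadings: dict) -> list:
    """Return the items whose standardized loading does not exceed .39."""
    return [item for item, lam in loadings.items() if lam <= LOADING_THRESHOLD]


# Fit indices reported for the eight-factor two-level CFA (Model 1).
model_1 = {"RMSEA": 0.048, "CFI": 0.824, "TLI": 0.799, "SRMR": 0.063}
fit_summary = check_fit(model_1)

# Hypothetical loadings, not taken from the study.
flagged = weak_items({"item_a": 0.52, "item_b": 0.31})
```

Checking each index separately makes visible that a model can satisfy the residual-based criteria (RMSEA, SRMR) while failing the incremental ones (CFI, TLI).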

To answer research question two, in addition to assessing the construct validity of the new instrument with multilevel CFAs, we evaluated the instrument’s psychometric qualities in Mplus in terms of (1) reliability, by calculating McDonald’s (multilevel) composite reliability coefficients (McDonald, 1970); and (2) (multilevel) measurement invariance, to demonstrate that the instrument can also be applied in different subgroups of the population. Invariance testing was conducted by calculating six increasingly strict two-level multigroup latent class analyses (LCA), further developing the approach of Rudnev et al. (2018). Models were calculated for the binary latent class indicators gender (male vs. female), first language (German vs. non-German), and school type (eight years vs. four years). Due to the complexity of the mixture models, we used numerical integration via the Monte Carlo method to make the calculations more time-efficient. For model comparison, the Akaike information criterion (AIC), Bayesian information criterion (BIC) and/or chi-square difference testing based on loglikelihood values and scaling correction factors (Satorra, 2000) were used.
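For readers unfamiliar with difference testing under robust maximum likelihood, the following sketch shows the commonly documented computation of the scaled difference statistic from loglikelihoods, scaling correction factors, and numbers of free parameters. It is an illustration under that assumption, not the authors’ code, and the numbers in the example are hypothetical.

```python
def scaled_loglik_difference(l0, c0, p0, l1, c1, p1):
    """Scaled chi-square difference test from loglikelihoods.

    (l0, c0, p0): loglikelihood, scaling correction factor, and number of free
    parameters of the nested (more restrictive) model; (l1, c1, p1): the same
    quantities for the less restrictive comparison model.
    """
    if p1 <= p0:
        raise ValueError("the comparison model must have more free parameters")
    cd = (p0 * c0 - p1 * c1) / (p0 - p1)  # difference-test scaling correction
    trd = -2.0 * (l0 - l1) / cd           # scaled difference statistic
    return trd, p1 - p0                   # statistic and degrees of freedom


# Hypothetical values for two nested invariance models.
trd, df = scaled_loglik_difference(l0=-2606.0, c0=1.450, p0=39,
                                   l1=-2583.0, c1=1.546, p1=47)
```

The statistic is then referred to a chi-square distribution with `df` degrees of freedom; a non-significant result favours the more restrictive (more invariant) model.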

V Results

1 Preliminary analyses

Descriptive statistics and bivariate correlations for all eight a priori assumed scales are shown in Table S3 in the supplemental material. Intraclass correlations (ICCs) for the 31 initial items ranged from 0.044 to 0.347 (see Table S4 in supplemental material), and only two items had ICCs below 0.10. This indicates substantial between-cluster variation and, hence, a need for multilevel data analyses.
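To make the meaning of the ICC concrete, the sketch below computes ICC(1) from a one-way ANOVA decomposition of scores nested in clusters. This estimator is an assumption chosen for illustration; the text does not state how the reported ICCs were estimated, and model-based estimates may differ slightly. The function name `icc1` and the toy data are hypothetical.

```python
def icc1(clusters):
    """ICC(1) from a one-way ANOVA; `clusters` is a list of lists of scores."""
    sizes = [len(c) for c in clusters]
    n_total = sum(sizes)
    n_groups = len(clusters)
    grand_mean = sum(sum(c) for c in clusters) / n_total
    means = [sum(c) / len(c) for c in clusters]
    ss_between = sum(n * (m - grand_mean) ** 2 for n, m in zip(sizes, means))
    ss_within = sum((x - m) ** 2 for c, m in zip(clusters, means) for x in c)
    ms_between = ss_between / (n_groups - 1)
    ms_within = ss_within / (n_total - n_groups)
    # Average cluster size, adjusted for unequal cluster sizes.
    k = (n_total - sum(n * n for n in sizes) / n_total) / (n_groups - 1)
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Toy data: students within a class agree perfectly, classes differ.
print(icc1([[1, 1], [3, 3]]))
```

Values near zero indicate that ratings vary mostly between students within the same class, whereas larger values indicate that classmates tend to rate their teacher similarly.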

2 Multilevel confirmatory factor analyses

Given the high ICCs, we fit a two-level CFA with the eight a priori assumed factors (Model 1). The model results were insufficient, showing poor model fit (RMSEA = .048, CFI = .824, TLI = .799, SRMR = .063) and standardized factor loadings that failed to reach the threshold of λ > .39 for several items (see Table S5 in supplemental material). Because the initial structure of our measurement instrument was based on an extensive literature review and expert interviews, we decided to deviate from our preregistration by not calculating an exploratory factor analysis. Instead, we took a content-based approach and re-assessed the items regarding topic similarities.

In particular, items three and four of the scale ‘topic variance’ were very similar in content to items four, five, and six from the scale ‘pedagogic control’, as all these items broadly refer to how much autonomy/authority students perceive during English lessons and were partly taken from Lüftenegger et al. (2017). We, therefore, merged the items into a new scale, representing the authority students perceived within the classroom lessons (as they were all recoded). Further, the two items of the scale ‘narrowing of the curriculum’ were similar in content to the remaining two items of the scale ‘topic variance’ and were hence merged.

Before fitting another full model, we examined the construct validity of the assumed scales separately. Model fit indices were excellent for all models (RMSEA = .00 – .032, CFI = .985 – 1, TLI = .956 – 1, SRMR = 0 – .016), except for the scale ‘Material’, for which modification indices indicated a high correlation between items one and two on both levels. We therefore specified residual covariances for those items and obtained an excellent model fit. However, even though all models fit the data excellently, several standardized item loadings did not reach the threshold of λ > .39. For the scale ‘Fragmentation of knowledge’, we were able to explain the low loading of the third item (λ = .226) through item wording and omitted the item. For the scales ‘topic variance’ and ‘pedagogic control’ – which both showed multiple insufficient item loadings – the item content was deemed already covered by the other scales. We therefore decided to omit both scales. Descriptive statistics for the remaining scales can be seen in Table S6 in supplemental material.

In the next step, we examined whether the remaining six factors could be confirmed in a more complex model (Model 2), in which we also specified the residual covariance for the two items of the ‘Material’ scale on the within level. Standardized factor loadings were sufficient for all items but one, and the model fit was not optimal (RMSEA = .044, CFI = .912, TLI = .896, SRMR = .046).

Before fitting the next model, we again inspected the modification indices, which indicated high correlations between the third item of the scale ‘material’ and the first item of the scale ‘repetitive exercising’ on the within level, as well as between items one and two of the scale ‘material’ on the between level. We consequently specified those covariances in the next model. Based on further considerations about the content of the scales, we realized that four scales generally represent test preparation strategies (i.e. repetitive exercising, test-taking strategies, referencing to the test, and material), while the remaining two (authority and fragmentation of knowledge) represent teaching styles. In the next step, we therefore specified a multilevel model with two second-order factors (‘test preparation’ and ‘teaching style’; Model 3). The model showed an acceptable fit to the data (RMSEA = .040, CFI = .922, TLI = .912, SRMR = .046), a high negative correlation between the two second-order factors (r = –.773, p < .001), and standardized factor loadings of λ > .39 for all but two items (within: item one of ‘Strategies’; between: item four of ‘Repetitive exercising’).

However, two considerations argued against retaining both second-order factors in the main model. First, from an empirical point of view, a higher-order factor that is composed of only two first-order factors poses serious disadvantages: such a model requires an equality constraint to be identified, and there is a higher risk of introducing bias into the calculation of (hierarchical) psychometric properties of the model (Gignac, 2016; Zinbarg et al., 2007). Second, from a theory-based point of view, the term ‘teaching to the test’ most often and most strongly refers to ‘excessive test preparation’. When thinking about defining the construct, a less autonomous teaching style, as well as the fragmentation of knowledge, may accompany the rigorous test preparation but might not be inherently defining aspects in a narrow sense. Consequently, we decided to exclude the second-order factor ‘teaching style’ with the two associated first-order factors from the main model and tested which model type consisting of the four factors ‘repetitive exercising’, ‘test-taking strategies’, ‘referencing to the test’ and ‘material’ would fit the data best.

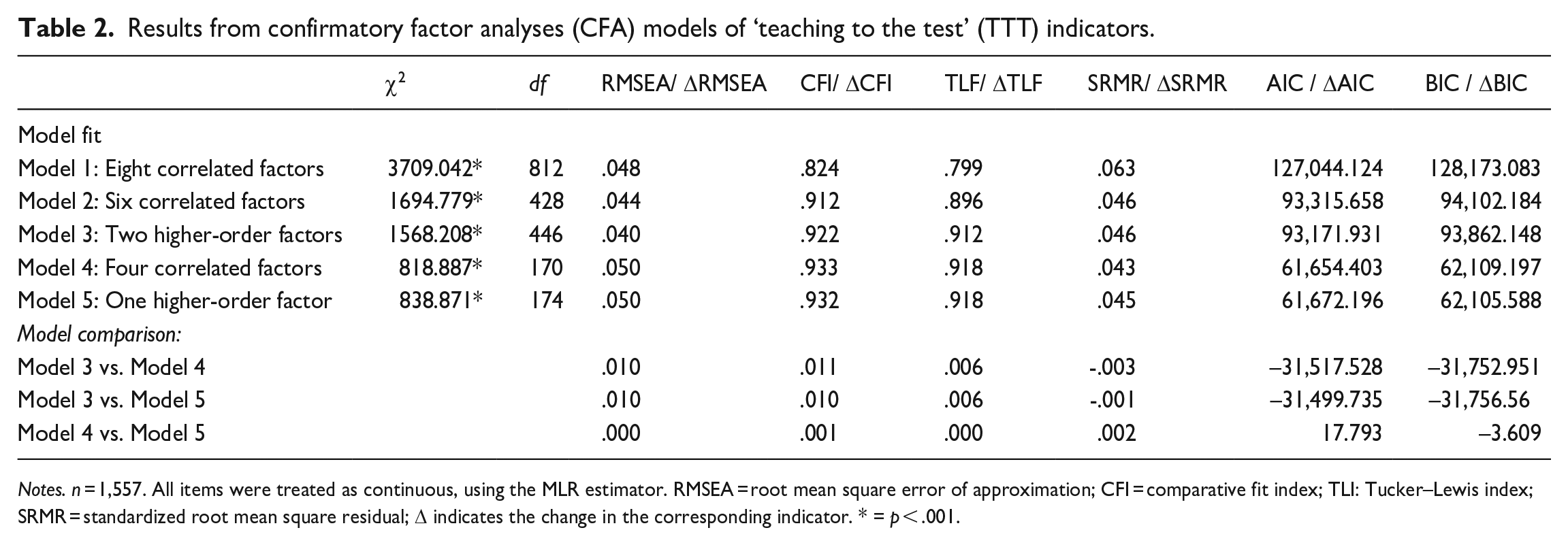

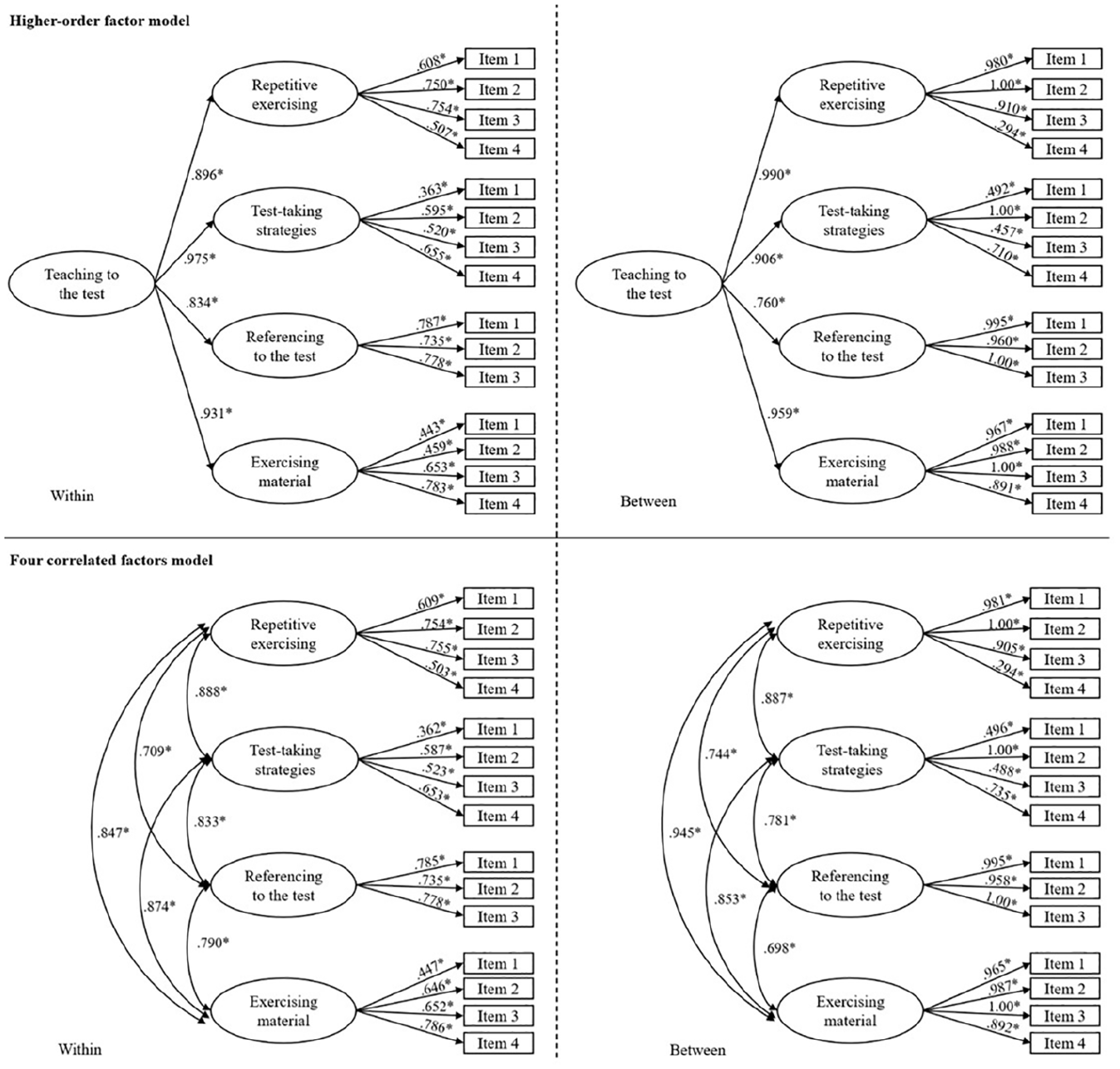

In the last step, we therefore fitted two models: one correlated factor model (Model 4), and one higher-order factor model (Model 5). Residual covariances were specified only on the within level for the same items as in Model 3. Both models showed good model fit indices (see Table 2) and standardized factor loadings of λ > .39 for all but two items (see Figure 1). However, as those two items measure unique aspects within the scales, we decided to keep them. Comparing the models in terms of AIC and BIC revealed no substantial advantage of one model over the other.

Table 2. Results from confirmatory factor analyses (CFA) models of ‘teaching to the test’ (TTT) indicators.

Notes. n = 1,557. All items were treated as continuous, using the MLR estimator. RMSEA = root mean square error of approximation; CFI = comparative fit index; TLI = Tucker–Lewis index; SRMR = standardized root mean square residual; Δ indicates the change in the corresponding indicator. * = p < .001.

Figure 1. Final models.

Regarding the purpose of this study to define TTT, the results justify the presumption that, in a narrow sense, TTT can be conceptualized as a multidimensional construct whose facets represent practices for excessive test preparation. However, the acceptable model fit of Model 3, the high negative correlation between the second-order factors, and the standardized factor loadings of λ > .39 suggest that, in a broader sense, the phenomenon also encompasses changes in teaching style that might accompany the excessive test preparation.

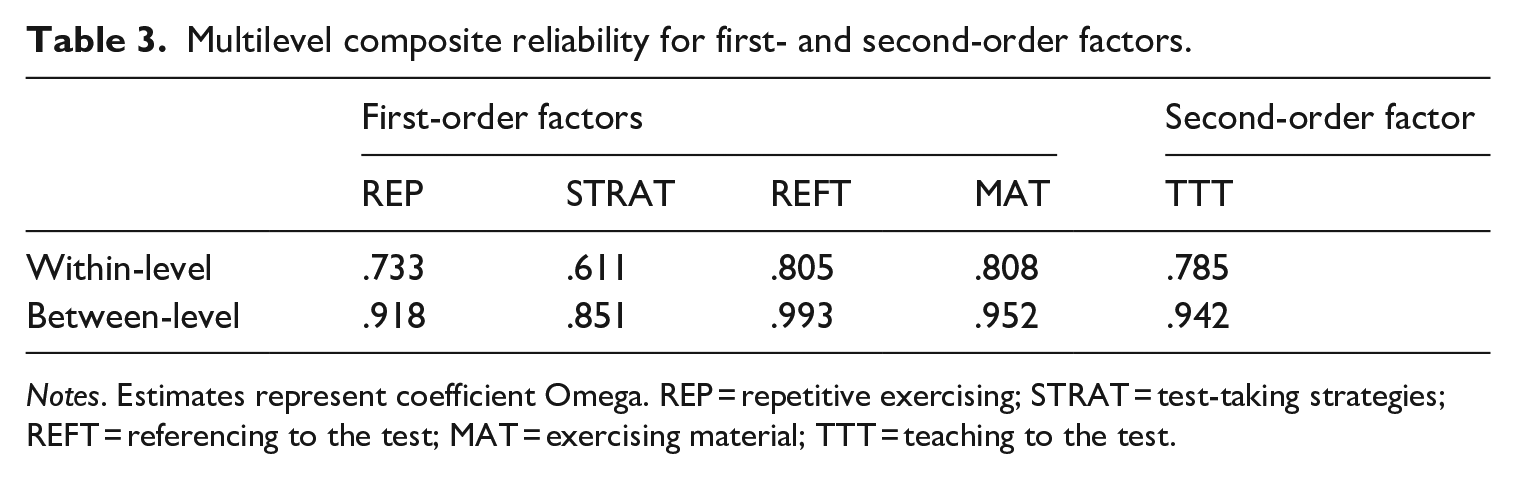

3 Reliability and validity of the newly developed instrument

Demonstrating that a multi-item instrument has good score reliability is an essential prerequisite for analysing (especially) hierarchical data (Appelbaum et al., 2018). However, it has been common practice to conflate within- and between-group reliability measures, thereby ignoring potential differences between the levels of the data structure. Most researchers applying this practice justify it by referring to classical test theory, which classifies reliability as a property of observed test scores (see, for example, Geldhof et al., 2014). As between-level composite scores are unobserved, calculating between-level composite scores might overestimate the true reliability at the between level (Lai, 2021). Therefore, to deal with this overestimation issue, the type of multilevel model must be taken into account when calculating multilevel composite reliability scores (Lai, 2021). For our newly developed measurement instrument that assesses TTT in the narrow sense, we, therefore, considered the classifications of multilevel models by Stapleton et al. (2016), categorizing TTT as a configural construct (see also Stapleton & Johnson, 2019), and calculated multilevel composite reliability scores (coefficient omega) for the first- and second-order factors. All scales showed acceptable to excellent omega values (see Table 3), indicating moderate to strong reliability of all scales. In second-order models, the reliability of the overall scale score is of particular interest. For our model, omega values on the within as well as on the between level were sufficiently high, indicating strong reliability for the whole instrument. Results from the reliability testing for the accompanying scales can be found in Table S7 in supplemental material.
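As background, the basic single-level form of coefficient omega for one factor can be sketched as follows. This is only the textbook composite reliability formula under the assumption of standardized loadings and uncorrelated residuals; the multilevel coefficients for configural constructs described by Lai (2021) partition variance across levels and are not reproduced here. The function name `omega` and the loadings are hypothetical.

```python
def omega(loadings, residual_variances=None):
    """Composite reliability: (sum of loadings)^2 / (that + sum of residual variances)."""
    if residual_variances is None:
        # With standardized loadings, each residual variance is 1 - loading^2.
        residual_variances = [1.0 - l ** 2 for l in loadings]
    true_part = sum(loadings) ** 2
    return true_part / (true_part + sum(residual_variances))


# Three hypothetical items, each with a standardized loading of .70.
print(round(omega([0.7, 0.7, 0.7]), 3))
```

The formula shows why scales with few items or low loadings yield lower omega values: the squared sum of loadings shrinks relative to the accumulated residual variance.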

Table 3. Multilevel composite reliability for first- and second-order factors.

Notes. Estimates represent coefficient Omega. REP = repetitive exercising; STRAT = test-taking strategies; REFT = referencing to the test; MAT = exercising material; TTT = teaching to the test.

4 Measurement invariance testing

To assess whether the instrument can be applied universally across groups, we tested measurement invariance of the second-order factor model across the binary class variables gender, first language (German vs. non-German), and school type (eight years vs. four years). Results of all two-level multigroup latent class analysis models are presented in Table S8 (in the supplemental material).

In sum, the results indicate that all first-order factors as well as the second-order factor can be considered invariant across gender, first language, and school type, implying equal meaning of TTT and the associated scales across subgroups. As scalar invariance could also be established, the results support the notion that means can be compared with confidence across the groups.

VI Discussion

The aims of this study were (1) to conceptualize the construct teaching to the test (TTT) based on a comprehensive literature review and validated by empirical testing, and (2) to develop a measurement instrument. By examining the students’ perspectives, the study contributes to assessing this special type of washback on the teacher from the perspective of one of the most directly concerned and – so far – under-researched groups: the learners.

To answer research question 1, we used the literature-derived nominal definition (‘Teaching to the test represents a conglomerate of changes in teaching content and teaching practice aimed at extensive preparation for a standardized test’) to develop an operational definition of the construct. Testing the indicators to see whether the operational definition was also empirically plausible revealed that, in general, this was the case. However, considering the methodological prerequisites for complex models and reconsidering results from the literature suggested that the concept of TTT can be defined in a broader and a narrower sense, at least from the students’ point of view. We found that, even though in the literature educators report washback effects on their teaching style due to the implementation of standardized tests (e.g. being less autonomy-supportive, giving information in isolated pieces rather than making connections to prior knowledge; Blazer, 2011; Hamilton et al., 2007; Larsson & Olin-Scheller, 2020), these aspects do not represent defining aspects of TTT in a narrow sense but rather are accompanying changes due to the excessive focus on test preparation. Consequently, based on theoretical and psychometric findings, we conclude that – from a student’s point of view – TTT in a narrow sense can be nominally defined as a ‘conglomerate of teaching practices especially focused on (excessive) test preparation’. However, from our data it is evident that changes in perceived autonomy during learning as well as teachers’ tendency to fragment knowledge during teaching are important related factors that can (and should) justifiably be examined in conjunction with the test preparation strategies.

The four strategies found in this study represent a specific type of washback and are in line with studies examining students’ test preparation strategies with respect to standardized language exams, showing that students usually engage in learning activities that include practicing with past exam questions (Xu & Liu, 2018), drilling language skills that are known to be on the test (Gao, 2006), or repeatedly rehearsing materials such as text characteristics and vocabulary lists (Gao, 2006; Liu & Yu, 2021; Xu & Liu, 2018). Even though these learning strategies might share features with deep learning strategies, even in language learning, Liu and Yu (2021) advocate for distinguishing them from ‘normal learning’ because research has found dissimilar relations of both learning types with test outcomes. However, in our opinion, this should only hold true if the test is not measuring what it is intended to measure, namely language proficiency in terms of integrated communicative ability (written or spoken). Alqahtani stated in a recent study: ‘To accomplish learning objectives, an exam’s context and format must overlap with curriculum content’ (2021, p. 22), not vice versa. If TTT is (narrowly) defined as specific test preparation strategies and considered a form of washback, the question is how standardized exams can be changed so that students score high in a test that assesses real-life language proficiency. Language learning requires integrating several skills (East & Scott, 2011). It therefore does not suffice to teach students those skills in isolation; educators also need to help them connect the skills and understand the greater contextual background in which the language developed and is used. Language educators, however, will be hesitant to do so if they fear being faced with negative consequences of low student achievement in a test that does not focus on communicative language assessment, even though this might run against their general principles regarding language learning approaches (Spratt, 2005). The pressure exerted on teachers therefore automatically reflects on students and opens the door for excessive TTT that might indeed hinder purposeful language learning.

Regarding the learners’ perspectives, our results show that secondary school students do indeed perceive these teaching practices within their English language lessons. So far, however, we know neither how they evaluate these practices nor how the practices affect their learning, motivation, and achievement.

Washback effects may be positive or negative, depending on whether they lead to learning that fosters authentic language production and perception or to mere test drilling that is aimed at producing high scores but leaves the students unprepared for language use outside the classroom by deterring deep learning (Alqahtani, 2021; Messick, 1996). Considering this classification, TTT might be categorized as a negative form of washback for language learning (Liu & Yu, 2021), but this, as mentioned before, highly depends on the test it prepares the students for. If the test assesses language proficiency (and hence leads to communicative language teaching) rather than the ability to tick the correct answer (i.e. grammar–translation), TTT might as well be good language pedagogy (East & Scott, 2011). Further, students may want their teachers to teach to the test, either because they value the test outcome (more extrinsically motivated; see, for example, Liu & Yu, 2021), because they feel better prepared and hence less stressed or anxious, or because they experience the preparation as a chance to improve their language ability (Spratt, 2005). If educators aim to adequately prepare their students for the test and promote communicative proficiency, they should focus on task-oriented learning, learner autonomy, and continuous (self-)assessment of the desired skills (East & Scott, 2011; Spratt, 2005). At the policy level, policy makers should focus on creating tests that assess not mere grammar–translation but communicative and integrated skills and provide educators with conditions in which meaningful language learning can take place.

Research question 2 concerned the psychometric qualities of the developed measurement instrument. The results of the two final models showed a good fit to the data, supporting the construct validity of the instrument. All scales and the second-order factor demonstrated acceptable to excellent reliability. Further, measurement invariance could be established for the binary classes gender, first language, and school type, indicating that researchers can be optimistic that TTT is measured consistently across different sub-groups of the student population. For research practice, the two equally plausible models pose an opportunity for researchers to strategically design their studies. Higher-order models display an important restriction, known as the proportionality constraint, resulting from non-freely estimated first-order factors (Gignac, 2016). Therefore, before conducting their study, researchers need to decide whether they want to examine research questions related to the facets of TTT or to the general phenomenon. If, for example, researchers want to examine indirect washback on learners in general, using the second-order factor model might be the better choice. If, on the other hand, researchers aim to examine differential effects of the respective TTT practices on motivation, willingness to communicate or language skills in productive or receptive domains, we would advise basing analyses on the correlated factor model.

VII Conclusions, limitations, and future directions

In a narrow sense, teaching to the test is a special form of washback focused on (excessive) test preparation. To understand the possible impacts these test preparation strategies might have on purposeful language learning, studies examining the effects on student variables in the context of different low- to high-stakes standardized exams are needed. The present study provides researchers with a newly developed, psychometrically sound measurement instrument that can be used to tackle these essential research gaps. The study also provides researchers with reliable scales to measure two important teaching style aspects that are considered to change alongside the excessive focus on test preparation, hence making it possible to also examine perceived TTT in a broader, more holistic sense.

Although clearly informative and generative, the present research is also limited in certain aspects. First, our sample consisted solely of secondary school students of grade 11 in general schools of the highest track in Austria and therefore represented a relatively homogeneous group. To further validate the measurement instrument, future studies should apply it to other age groups, school grades, and school types. Second, in this study, we only considered content validity and assessed the construct validity of the scales by calculating confirmatory factor analyses. Even though this might be a sufficient first step, evidence for other validity types would further strengthen the validity of our measurement instrument.

Regarding future studies, researchers should examine students’ evaluation and the frequently proposed effects of TTT on their learning, well-being, and language proficiency, preferably in the context of different standardized test conditions. Washback effects were found to be limited in the context of low-stakes tests and to increase as the stakes rise (Liu & Yu, 2021; Shih, 2007). Further, future studies should examine whether students or especially their parents want teachers to teach to the test, depending on the stakes, and what values, beliefs and aspirations underlie this desire. Future studies could also investigate the emergence and volatility of TTT practices before an examination and differentiate them from teachers’ regular teaching styles. Lastly, forthcoming research endeavors should also examine whether different amounts of TTT influence learning-related variables and learning outcomes to a different extent. By providing a multi-dimensional measurement instrument, this study paves the way for investigating the questions above and the all-too-often proposed (detrimental) effects that TTT might have on meaningful language learning.

Supplemental Material

sj-docx-1-ltr-10.1177_13621688251351250 – Supplemental material for Teaching to the test in the English language classroom: Development and validation of a measurement instrument

Supplemental material, sj-docx-1-ltr-10.1177_13621688251351250 for Teaching to the test in the English language classroom: Development and validation of a measurement instrument by Joy Muth, Luisa Grützmacher and Marko Lüftenegger in Language Teaching Research

Footnotes

Acknowledgements

The authors would like to thank Elouise Botes and Gholam Hassan Khajavy for their insightful advice on the preregistration and manuscript, respectively.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This article was written as part of the Identification of School Success Factors in General Secondary Schools project (principal investigator: Marko Lüftenegger), which was funded by the Austrian Federal Ministry of Education, Science, and Research [no grant number].

Supplemental material

Supplemental material for this article is available online.

References
