Abstract

This study examines the washback of the National Matriculation English Test (NMET), a high-stakes English proficiency test in China, on students’ English learning outcomes. Employing a mixed-methods approach, data were collected from 3105 senior high school students via a questionnaire survey and from 21 students and 6 teachers through semi-structured interviews. Findings reveal that the washback of the National Matriculation English Test was asymmetric across three key dimensions: language knowledge, language skills and language learning-related elements. Among these, the test predominantly influenced students’ English language knowledge, followed by language skills, with the weakest impact on learning-related elements. In addition, the washback of the NMET varied significantly across learner subgroups defined by gender, grade level and school status, highlighting the stratified nature of its washback effects. These findings shed light on the complex and multifaceted nature of test washback, whose formation, scope and intensity are mediated by a range of contextual factors and learner characteristics, and also call attention to the potential of high-stakes testing to reproduce educational inequalities.

Introduction

In the field of applied linguistics and educational assessment, the influence of testing on teaching and learning is commonly referred to as test washback (Alderson and Wall, 1993). Building on foundational washback theories and models (e.g. Green, 2007; Shih, 2007), empirical investigations of test washback have expanded significantly over the past few decades. However, relatively few studies have focused on how language tests affect students’ learning outcomes (Green, 2013; Zhang, 2024), a term that encompasses intended and unintended changes in students’ linguistic competence, cognitive engagement and study behaviors resulting from their test preparation. Meanwhile, test washback is widely recognized as a complex, multifaceted phenomenon. Previous research has shown that a test may exert uneven or imbalanced effects on different aspects of language teaching and learning (e.g. Shih, 2007; Zhang, 2024). In addition, test washback may vary systematically across subgroups of learners defined by characteristics such as gender, first language (L1) background or second language (L2) proficiency level (e.g. Dong et al., 2023; Cheng LY et al., 2011; Fan et al., 2014). In this paper, we refer to the former pattern as ‘asymmetric washback’ and the latter as ‘stratified washback’.

Despite their significance, asymmetric and stratified washback effects have received limited systematic attention in the literature. To address this gap, the present study investigated the asymmetric and stratified washback effects of the NMET (National Matriculation English Test), a high-stakes English proficiency test in China, on students’ English learning outcomes. In this study, learning outcomes are conceptualized broadly to include three interrelated dimensions: language skills, language knowledge and language learning-related elements, which are discussed in detail in the ‘Test Washback on Students’ Learning Outcomes’ section.

Literature review

Test washback on students’ learning outcomes

In the context of high-stakes testing, washback on language learning outcomes refers to the specific linguistic and cognitive gains students achieve as a result of preparing for a language test. These gains encompass both the content knowledge and skills acquired and the quality of learning (Shen et al., 2024). Understanding washback on learning outcomes is crucial because it reveals not only what students learn for the test, but also how their overall language proficiency develops in the process. Despite its importance, relatively few empirical studies have directly examined how preparing for a language test translates into tangible learning gains. Existing washback studies conceptualize learning outcomes in relatively narrow terms, often focusing on test scores (Green, 2007) or improvements in specific language skills (Zhang, 2024), while overlooking other essential aspects, such as affective or motivational factors, which have been recognized as integral to language learning (You and Dörnyei, 2016). In this study, we adopted a broader conceptualization of learning outcomes to include three dimensions that were considered central to students’ language learning: language skills, language knowledge and language learning-related elements (i.e. affective and motivational factors). These dimensions were grounded in the core components of the New English Curriculum Standards (NECS; Ministry of Education, 2013), the de facto policy document guiding English teaching and learning in Chinese secondary schools. Specifically, the language skills dimension encompasses the four core language skills (i.e. listening, speaking, reading and writing). The language knowledge dimension refers to systematic components of language knowledge in the NECS, with vocabulary and grammar knowledge the most salient. The learning-related elements dimension addresses the affective and motivational aspects of language learning, including students’ learning motivation, confidence and interest in language learning, and positive attitudes towards English and intercultural communication.

Existing studies on test washback on learning outcomes have commonly employed two methodological approaches. The first approach investigates learners’ self-reported perceptions of improvement in their language abilities. Studies using this method typically employ surveys and/or interviews to examine whether students believe that preparing for a high-stakes language test has indeed enhanced their language proficiency (e.g. Zhang, 2024; Zhang and Bournot-Trites, 2021). For instance, Zhang (2024) surveyed 20,062 first-year undergraduates from 103 universities in mainland China, finding that the NMET improved students’ English skills, including areas not directly tested by the NMET. The second approach to examining washback on learning outcomes uses comparative designs to measure students’ performance gains across different instructional conditions or cohorts (e.g. Andrews et al., 2002; Green, 2007). Such studies aim to provide more objective evidence of a test's impact on learning outcomes than those based on self-report data. However, isolating the specific effect of test preparation is difficult due to confounding factors such as differences in teacher expertise or student motivation. These complications make causal attributions challenging, obscuring whether performance differences directly result from test effects (Andrews et al., 2002). As such, it is important to employ methodological and data source triangulation in washback research on learning outcomes.

In this study, we adopted the first approach, drawing on students’ self-reported data to explore their perceived learning gains from NMET preparation. To complement the questionnaire survey, we also conducted interviews with students and teachers to provide more in-depth and contextualized accounts (see ‘Methodology’ section). Although self-reports cannot directly capture actual performance gains and may be subject to biases such as over- or under-estimation, they provide a valuable window into learners’ lived experiences and subjective understandings of how test preparation shapes their learning. By combining a large-scale survey with qualitative interviews, this study contributes significant insights into how students experience and interpret the washback effects of the NMET on their learning outcomes.

Asymmetric and stratified washback

Washback has traditionally been conceptualized within a binary framework, classified as either positive or negative (Watanabe, 2004). However, this dualistic conceptualization oversimplifies the complex and multifaceted nature of test washback. Recent research suggests that test washback is not a fixed or uniform phenomenon but rather a dynamic and context-dependent process that is influenced and/or mediated by multiple factors, including test design, learner characteristics and broader educational, social and policy contexts (e.g. Dong et al., 2023; Zhao, 2024). Consequently, rather than merely classifying test washback as positive or negative, scholars have advocated moving beyond binary classifications to explore washback mechanisms and mediating factors (Dong, 2020; Cheng LY et al., 2011; Tsang and Isaacs, 2022). Within this framework, the concepts of asymmetric and stratified washback have become important, as they push washback research beyond a simple binary conceptual model.

Given that washback has been recognized as an important consideration in contemporary validity frameworks (e.g. Bachman and Palmer, 2010; Messick, 1996), asymmetric washback has emerged as a critical validity concern. Grounded in Messick's (1996) argument that tests must reflect the full complexity of the target construct, asymmetric washback occurs when a test disproportionately influences certain aspects of the construct while neglecting others. Empirical evidence consistently supports the existence of asymmetric washback. For instance, Zhang and Bournot-Trites (2021) revealed that NMET preparation in China inadequately addressed listening and speaking skills, despite students’ overall positive evaluations of the NMET's impact. Like asymmetric washback, stratified washback raises legitimate concerns over test validity. When a test has differential washback effects on identifiable groups of learners, these effects compromise validity by introducing inequalities in test preparation and learning outcomes (Xi, 2010). Such disparities suggest that access to learning opportunities and test-related activities may not be equitably distributed among test takers. Empirical research has provided evidence for the existence of stratified washback along various learner variables, such as gender (e.g. Fan et al., 2014; Dong et al., 2023; Hung and Huang, 2019), language proficiency (e.g. Cheng LY et al., 2011) and grade level (e.g. Cheng X et al., 2021). For example, Cheng X et al. (2021) found that students with higher English proficiency levels were more actively engaged in listening and speaking activities than their lower-proficiency peers, and that test preparations varied significantly by grade level.

In this study, we focused on three learner variables – gender, grade level and school status – that we considered particularly salient for generating stratified washback effects in the context of the NMET. Gender was included as previous studies show it mediates learning approaches and psychological responses to high-stakes assessments, potentially leading to stratified washback effects (e.g. Fan et al., 2014; Hung and Huang, 2019). Grade level was treated as a temporal indicator of proximity to the NMET, as previous research suggests that both the nature and intensity of washback may fluctuate as the test approaches (Watanabe, 2004). Finally, school status was examined as a crucial contextual factor in China's stratified education system, where key and non-key schools differ substantially in resources and academic expectations, thereby shaping test washback (e.g. Dong, 2022). Together, these variables enabled us to investigate how the stratified washback effects of the NMET operate across different learner and institutional contexts.

The present study

Research context: the NMET in China

This study was conducted in the context of the NMET, one of the most high-stakes English tests in China, as its scores are used for university admissions. Designed by the National Education Examinations Authority (NEEA), the NMET has multiple versions, with a national version used by most provinces and provincial-level regions, and local versions developed by municipal education authorities. This study focuses on the NMET variant used in Chongqing, one of the four major municipalities. The NMET is developed in accordance with the NECS (Ministry of Education, 2013) and comprises a written test and an oral test. The written component, scored out of 150 points, is divided into four sections: listening comprehension (20%), reading comprehension (26.7%), language knowledge/use (30%) and writing (23.3%). Whereas reading comprehension, language knowledge/use and writing are standardized across all NMET variants, the inclusion of listening and speaking sections varies by region (Zhang and Bournot-Trites, 2021). At the time of this study, the listening subtest in Chongqing was administered twice annually and separately from the other subtests as part of a pilot reform exploring multiple NMET administrations per year. The speaking subtest, although offered to some candidates, was not included in their overall NMET scores.
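
To make the section weightings concrete, the following minimal Python sketch converts the reported percentages into raw points on the 150-point written test. The point values are derived here by rounding and are an assumption; the text itself reports only the percentages and the 150-point total.

```python
# Percentages and the 150-point total are taken from the paragraph above.
# The rounded per-section points are derived here, not stated in the source.
TOTAL_POINTS = 150
weights_percent = {
    "listening comprehension": 20.0,
    "reading comprehension": 26.7,
    "language knowledge/use": 30.0,
    "writing": 23.3,
}


def section_points(total, weights):
    """Convert percentage weights into raw points, rounded to whole points."""
    return {name: round(total * pct / 100) for name, pct in weights.items()}


points = section_points(TOTAL_POINTS, weights_percent)
for name, value in points.items():
    print(f"{name}: {value} points")
```

Under this rounding assumption, the four sections add up to the full 150 points.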

Research questions

The present study, as part of a larger research project, explored the NMET's asymmetric washback effects (i.e. across the three dimensions of language skills, language knowledge and learning-related elements) and stratified washback effects (i.e. on learner subgroups defined by gender, grade level and school status) on students’ English learning outcomes. Specifically, it addressed the following two research questions:

RQ1: How does the NMET exert washback effects on senior high school students’ English learning outcomes across different learning dimensions?

RQ2: How does the NMET exert washback effects across learner subgroups defined by gender, grade level, and school status?

Methodology

This study consisted of two stages. Stage 1 involved a questionnaire survey of 3105 senior high school students to examine their perceptions of the NMET's impact on different aspects of English learning outcomes. Stage 2 consisted of interviews with 21 students and 6 teachers. In what follows, we detail the research methodology adopted at each stage of the study.

Participants

In Stage 1, a purposive sampling method was employed to ensure participants’ representation across all three grade levels and from municipal-, district- and county-/township-level schools. The final sample included 1402 male students (45.2%) and 1703 female students (54.8%). In terms of their grade distribution, 1199 students were in Grade 1 (38.6%), 1098 in Grade 2 (35.4%) and 808 in Grade 3 (26%). By school tier, 1145 (36.9%) attended municipal-level schools, 1193 (38.4%) district-level schools and 767 (24.7%) county- or township-level schools. These tiers of schools differ significantly in terms of resources, teacher qualifications, student demographics, learning environments and governance structures (Dong et al., 2023). For example, municipal-level schools tend to receive more funding, employ more highly qualified teachers and enroll students from higher socioeconomic backgrounds compared with their district- and county-level counterparts.

To gain in-depth and contextualized insights into the findings from the questionnaire survey, we recruited 21 student participants (10 males and 11 females) and 6 teachers (3 males and 3 females, with 6 to 32 years of teaching experience) in Stage 2, using a purposive sampling method. This qualitative component aimed to capture diverse viewpoints and to explore the mechanisms underlying the patterns identified in the survey. To ensure confidentiality, pseudonyms were assigned to both student and teacher participants when reporting the results.

Instruments

Stage 1 draws on data from one section of a questionnaire developed for a large national research project examining the impact of the NMET on English learning in Chinese secondary schools (Dong, 2020; Dong et al., 2023). This section focused on students’ perceptions of how the NMET facilitates different aspects of their English learning outcomes. Its design was primarily informed by the NECS (Ministry of Education, 2013; see ‘Research Context: The NMET in China’ section), which outlines the goal of English learning at the secondary school level; that is, to cultivate students’ comprehensive ability to use English, including five key aspects: English language skills, language knowledge, emotion and attitudes, learning strategy and cultural awareness. All questionnaire items were rated on a 5-point Likert scale ranging from ‘1’ (not at all) to ‘5’ (a very large degree). A pilot study with 179 valid responses demonstrated satisfactory reliability (Cronbach's α = .926).
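
For readers unfamiliar with the reliability coefficient reported above, the sketch below shows one common way to compute Cronbach's α from item-level responses, using only the Python standard library. The function name `cronbach_alpha` and the toy ratings are hypothetical illustrations; they are not the pilot data, and this is not necessarily how the coefficient was computed in the study.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    Sample variances (n - 1 denominator) are used throughout.
    """
    k = len(responses[0])
    item_columns = list(zip(*responses))  # one tuple of scores per item
    item_variance_sum = sum(variance(col) for col in item_columns)
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Hypothetical 5-point Likert ratings: five respondents, three items.
toy_responses = [
    [1, 2, 1],
    [2, 2, 3],
    [3, 4, 3],
    [4, 4, 5],
    [5, 5, 5],
]
alpha = cronbach_alpha(toy_responses)
print(f"alpha = {alpha:.3f}")
```

Values closer to 1 indicate that the items vary together, which is what a high coefficient such as the one reported for the pilot study reflects.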

Following the questionnaire survey, semi-structured interviews were conducted to gain in-depth qualitative insights into participants’ perceptions of the NMET's washback effects. An interview protocol with a parallel design for students and teachers was developed, structured around three core domains derived from the quantitative survey and aligned with RQ1. Participants were invited to reflect on the extent and nature of the NMET's impact on specific areas, including language skills, language knowledge and language learning-related elements. Sample questions included: Do you think that the NMET's preparation has promoted your English speaking skills? If so, could you elaborate on how the NMET has influenced English speaking skills? Although the interview questions directly mirrored the constructs in the survey, they were designed to elicit more elaborated and nuanced accounts. By aligning the interview protocol with the survey dimensions, the qualitative data served to confirm, extend and enrich the patterns identified in the survey findings.

Data collection

The questionnaire data were collected with the assistance of English teachers from the six participating senior high schools. Students who consented received a link to the questionnaire from their teachers, which was administered through a survey platform in China. Once completed, the data were downloaded, cleaned and prepared for analysis. The interviews were conducted by the first author based on the interview protocols. All interviews were conducted in Chinese, the participants’ L1. With participants’ consent, the interviews were audio-recorded and later transcribed verbatim for analysis. The interviews with both students and teachers lasted an average of 30 minutes.

Data analysis

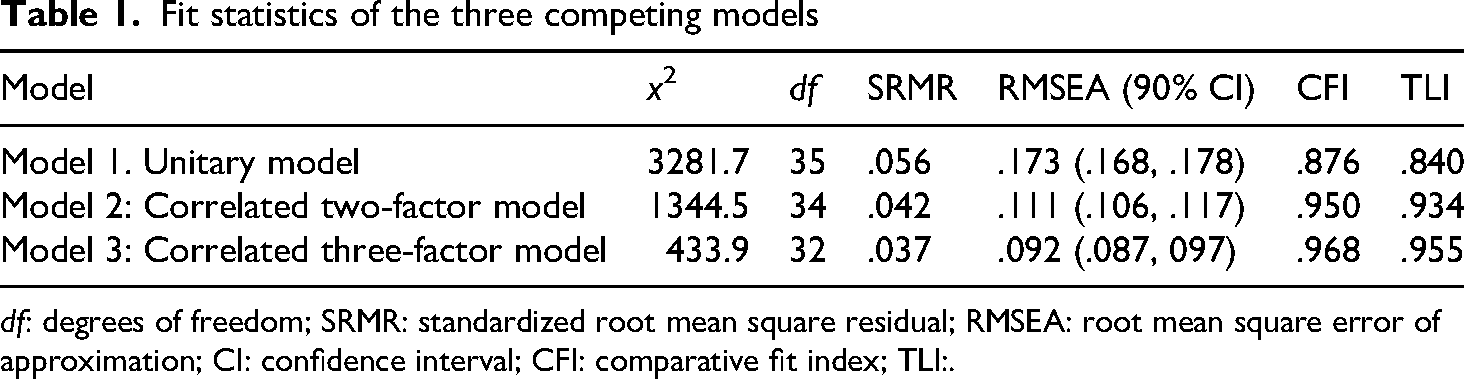

First, confirmatory factor analysis (CFA) was performed using AMOS 21 to verify the factorial structure of the questionnaire. At the stage of questionnaire development, we hypothesized that students’ perceptions of the NMET washback consisted of three dimensions: language skills, language knowledge and language learning-related elements (i.e. affective and motivational factors). To validate this structure, we proposed and compared several CFA models to determine whether the data supported our hypothesized model. When evaluating the model-data fit and comparing competing models, we used five fit indices: the chi-square statistic, comparative fit index (CFI), Tucker-Lewis index (TLI), root mean square error of approximation (RMSEA) and standardized root mean square residual (SRMR). Specifically, a smaller chi-square value indicates better fit, while CFI and TLI values above .90, together with RMSEA and SRMR values below .10, suggest a good fit (Kline, 2011).
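
The cut-offs described above can be written as a simple check. The Python sketch below is illustrative only: the function name `meets_fit_criteria` and the example index values are hypothetical and are not the values obtained in this study.

```python
def meets_fit_criteria(cfi, tli, rmsea, srmr,
                       incremental_min=0.90, residual_max=0.10):
    """Return True when CFI and TLI exceed .90 and RMSEA and SRMR fall below .10."""
    return (
        cfi > incremental_min
        and tli > incremental_min
        and rmsea < residual_max
        and srmr < residual_max
    )


# Hypothetical index values for two imaginary models (not from the study).
hypothetical_good = dict(cfi=0.95, tli=0.93, rmsea=0.06, srmr=0.04)
hypothetical_poor = dict(cfi=0.85, tli=0.82, rmsea=0.12, srmr=0.09)

print(meets_fit_criteria(**hypothetical_good))
print(meets_fit_criteria(**hypothetical_poor))
```

The chi-square statistic is not part of this check because it is used comparatively: among competing models, the one with the smaller value is preferred.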

To address RQ1, we calculated the descriptive statistics at the item and factor levels to provide an overview of the NMET's washback on students’ English learning outcomes across the three dimensions. To examine whether the NMET exerted asymmetric washback effects on the three different dimensions of students’ learning outcomes, we conducted a repeated-measures analysis of variance (ANOVA). When a significant difference was detected, post hoc pairwise comparisons with Bonferroni correction were performed to identify specific differences. To address RQ2, we first employed a multivariate analysis of variance (MANOVA) to investigate whether the three independent variables (i.e. gender, grade level and school status) had significant multivariate effects on the three dependent variables, representing students’ perceived learning outcomes. Following the MANOVA, we conducted separate univariate ANOVAs for each dependent variable to further explore the individual effects of gender, grade level and school status on the three outcome dimensions. Where significant main effects were detected, post hoc tests were used to identify group differences. All analyses were performed in SPSS 21.0.
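
As a rough illustration of the RQ1 analysis, the sketch below computes the F statistic of a one-way repeated-measures ANOVA and applies a Bonferroni adjustment to a set of pairwise p-values, using plain Python. It is a simplified sketch under stated assumptions: the analyses in this study were run in SPSS 21.0, the function names and toy ratings are hypothetical, and no sphericity correction or p-value computation is included.

```python
def repeated_measures_anova(scores):
    """One-way repeated-measures ANOVA.

    scores: list of respondents, each a list with one rating per condition.
    Returns (F, df_conditions, df_error); no sphericity correction is applied.
    """
    n = len(scores)      # respondents
    k = len(scores[0])   # conditions (e.g. the three outcome dimensions)
    grand_mean = sum(sum(row) for row in scores) / (n * k)
    condition_means = [sum(col) / n for col in zip(*scores)]
    subject_means = [sum(row) / k for row in scores]

    ss_total = sum((x - grand_mean) ** 2 for row in scores for x in row)
    ss_conditions = n * sum((m - grand_mean) ** 2 for m in condition_means)
    ss_subjects = k * sum((m - grand_mean) ** 2 for m in subject_means)
    # Error is what remains after removing condition and respondent effects.
    ss_error = ss_total - ss_conditions - ss_subjects

    df_conditions = k - 1
    df_error = (k - 1) * (n - 1)
    f_stat = (ss_conditions / df_conditions) / (ss_error / df_error)
    return f_stat, df_conditions, df_error


def bonferroni(p_values):
    """Multiply each raw p-value by the number of comparisons, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


# Hypothetical ratings: four respondents, three dimensions each.
toy_scores = [[4, 3, 2], [5, 4, 3], [3, 3, 1], [4, 2, 2]]
f_stat, df1, df2 = repeated_measures_anova(toy_scores)
print(f"F({df1}, {df2}) = {f_stat:.2f}")

# Hypothetical raw p-values for the three pairwise comparisons.
adjusted = bonferroni([0.01, 0.02, 0.5])
print(adjusted)
```

Because the same respondents rate every dimension, between-respondent variability is removed from the error term, which is what distinguishes this design from a between-groups ANOVA.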

To elucidate our findings, we conducted thematic analysis of the interview data, using NVivo 12 (QSR International, 2018). A preliminary coding scheme was generated through repeated readings of a subset of the transcripts. Next, two researchers independently applied this coding scheme to coding about 20% of the dataset. Inter-coder reliability was assessed using Cohen's Kappa (κ = 0.83), with discrepancies resolved through discussion. One researcher then coded the remainder of the data with the coding scheme. Since the interviews were conducted in Chinese, all interview excerpts presented in this paper were translated from Chinese to English.
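
The inter-coder agreement statistic mentioned above can be sketched as follows. This is a minimal standard-library illustration of Cohen's Kappa for two coders; the function name `cohens_kappa` and the code labels are hypothetical and do not reproduce the study's coding scheme or data.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labelled the same segments.

    kappa = (observed agreement - chance agreement) / (1 - chance agreement)
    """
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    labels = set(coder_a) | set(coder_b)
    # Chance agreement: product of each coder's marginal proportions per label.
    expected = sum(freq_a[label] * freq_b[label] for label in labels) / n ** 2
    return (observed - expected) / (1 - expected)


# Hypothetical labels for ten interview segments (illustrative only).
coder_a = ["skills", "skills", "knowledge", "knowledge", "affect",
           "affect", "skills", "knowledge", "affect", "skills"]
coder_b = ["skills", "skills", "knowledge", "knowledge", "affect",
           "affect", "skills", "knowledge", "affect", "knowledge"]

kappa = cohens_kappa(coder_a, coder_b)
print(f"kappa = {kappa:.3f}")
```

Unlike raw percentage agreement, kappa discounts the agreement that two coders would reach by chance given how often each of them uses each code.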

Results

In this section, we present the results. We begin by comparing the three hypothesized models using CFA. We then report the washback effects on each of the learning dimensions to address RQ1 (asymmetric washback). Finally, we examine washback effects across different learner groups, defined by gender, grade level and school tier (RQ2, stratified washback).

CFA

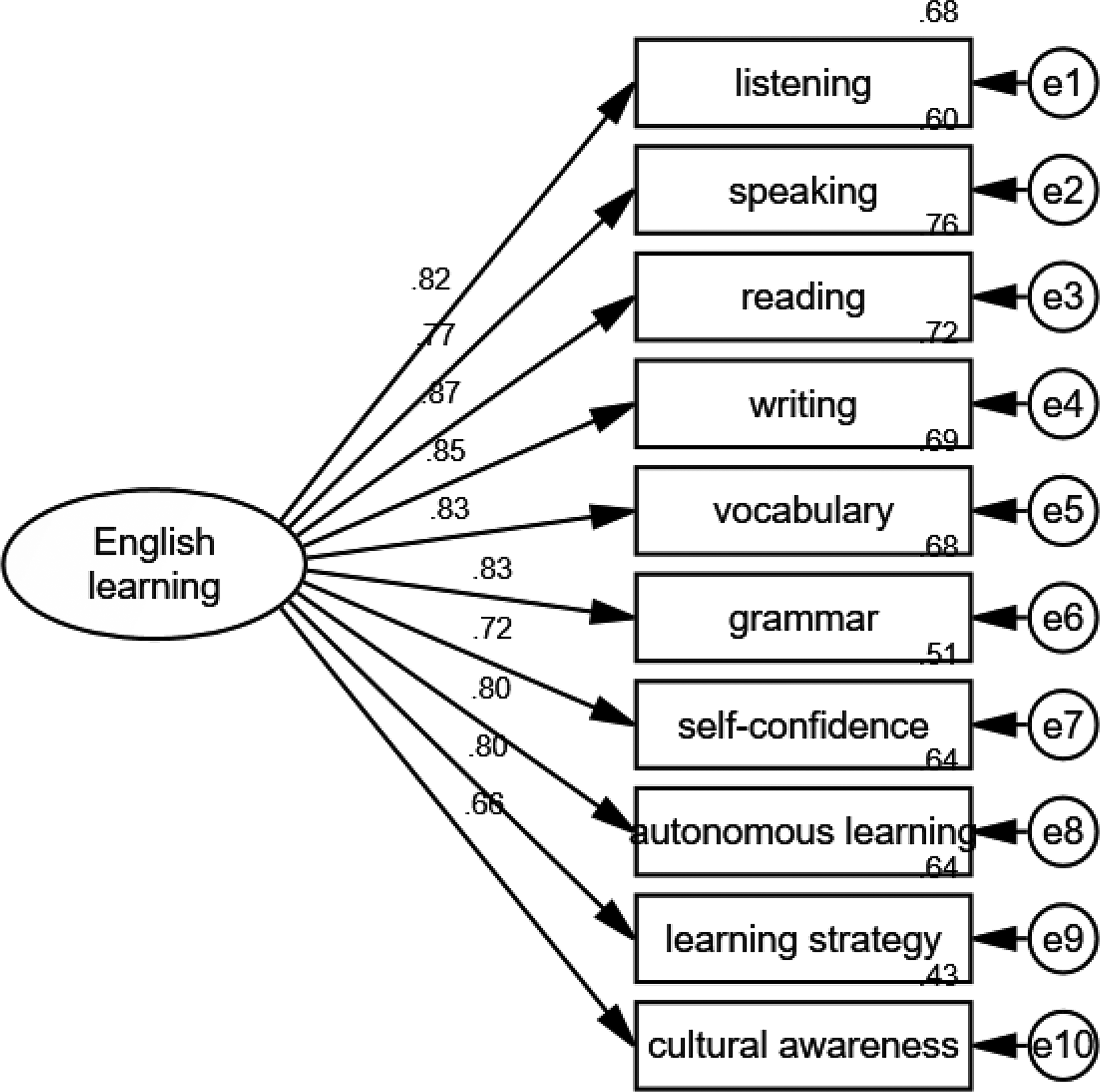

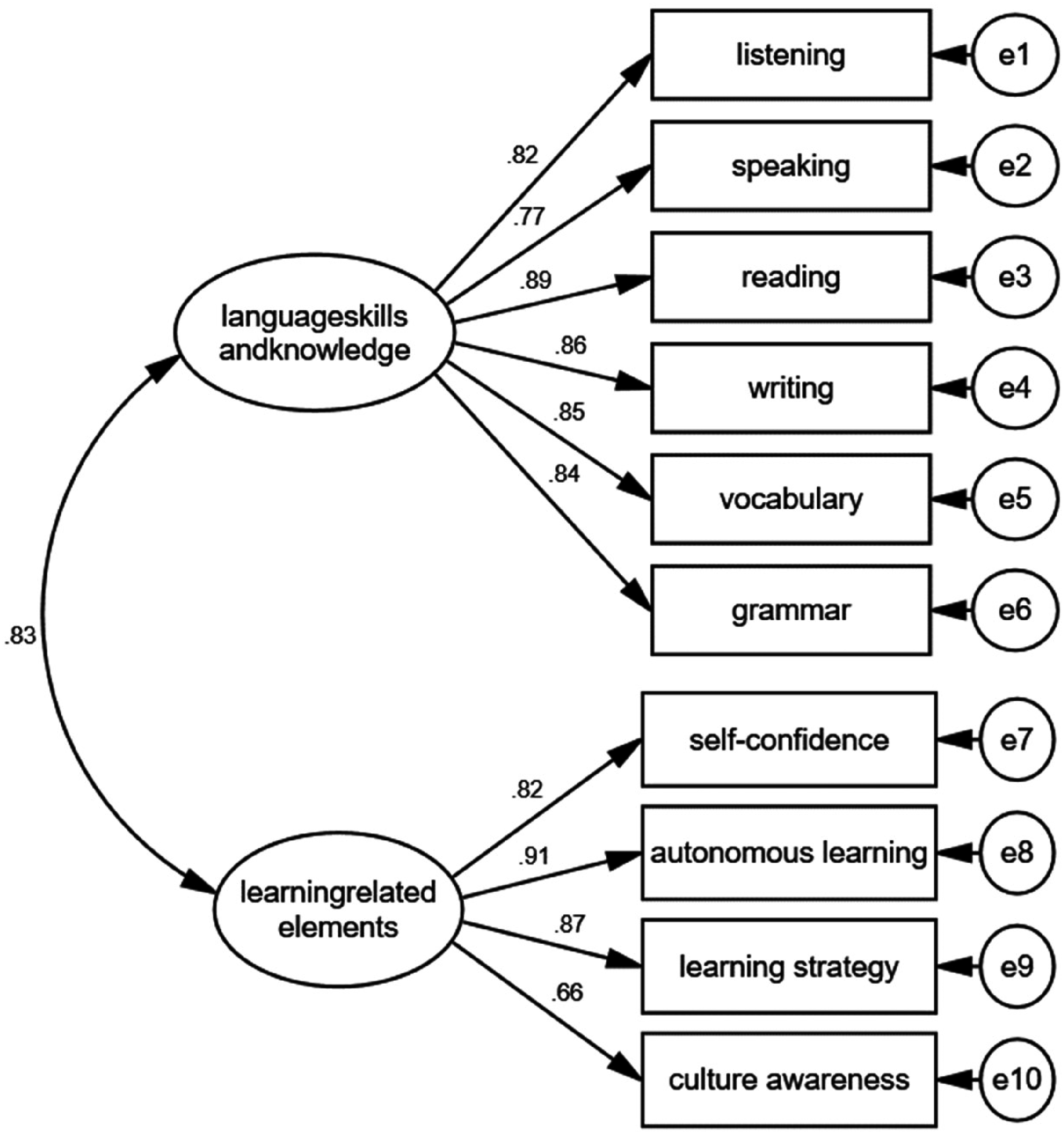

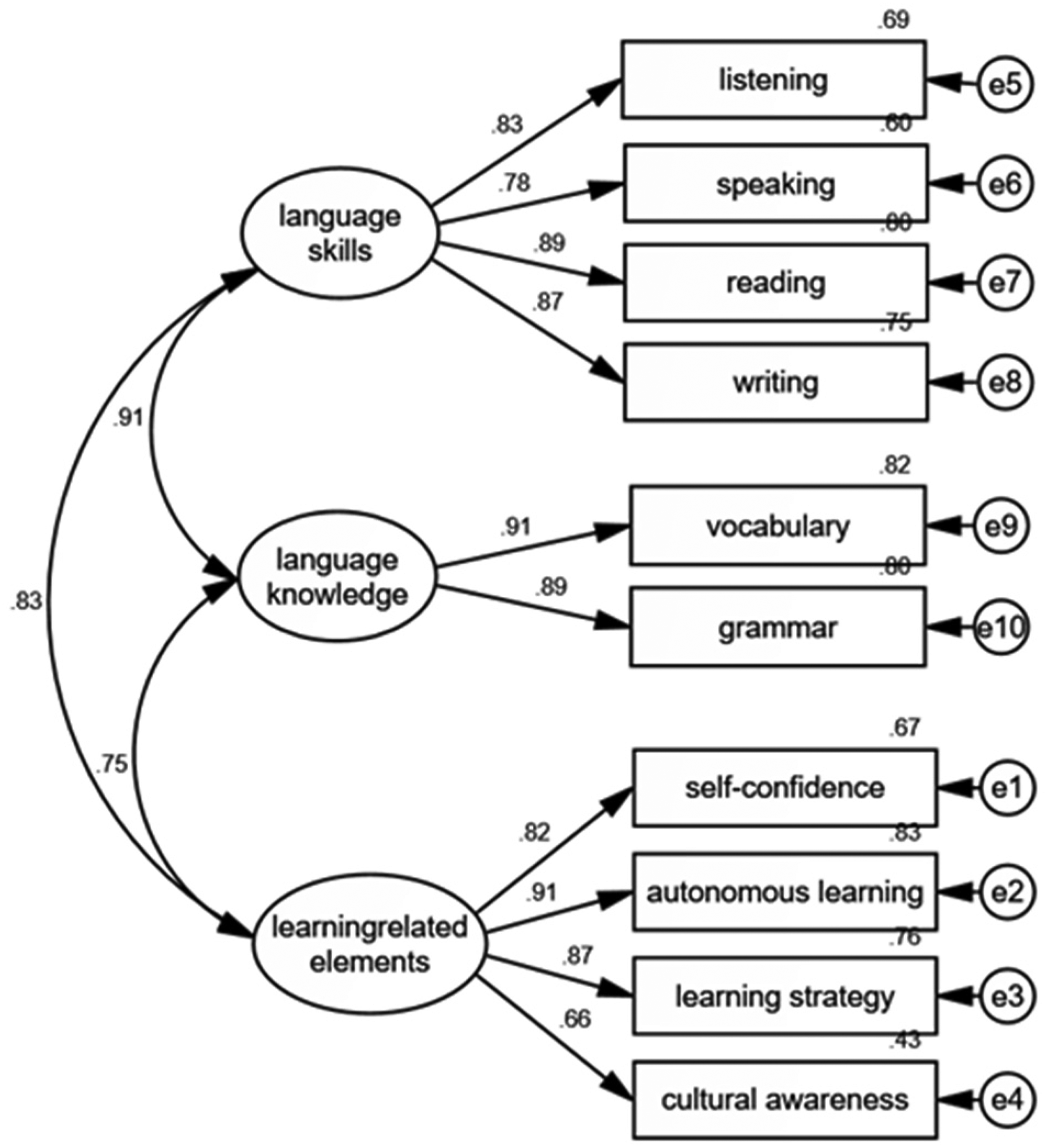

We specified and tested three competing models. The first was a unitary model, in which all questionnaire items were hypothesized to load onto a single underlying construct (see Figure 1). The second was a correlated two-factor model, in which questionnaire items were hypothesized to load onto two correlated latent factors: language skills and knowledge, and learning-related elements (see Figure 2). The third was a correlated three-factor model, which posited three distinct but correlated latent factors: language skills, language knowledge and learning-related elements (see Figure 3). The three models were tested to determine the model with the best fit to the data. As indicated in Table 1, the unitary model (Model 1) did not fit the data satisfactorily (e.g. both CFI and TLI values are below .90). Therefore, this model was rejected. Although Models 2 and 3 both demonstrated acceptable fit, Model 3 exhibited a better fit to the data, with a substantially lower χ² value and an RMSEA below .10. Therefore, this model was selected as the best fitting model. All observed variables load significantly on their respective factors (standardized factor loadings ranging from .66 to .91), further supporting the construct validity of this instrument.

Figure 1. Unitary model (Model 1).

Figure 2. Correlated two-factor model (Model 2).

Figure 3. Correlated three-factor model (Model 3).

Table 1. Fit statistics of the three competing models

df: degrees of freedom; SRMR: standardized root mean square residual; RMSEA: root mean square error of approximation; CI: confidence interval; CFI: comparative fit index; TLI: Tucker-Lewis index.

Washback effects on different learning dimensions (RQ1)

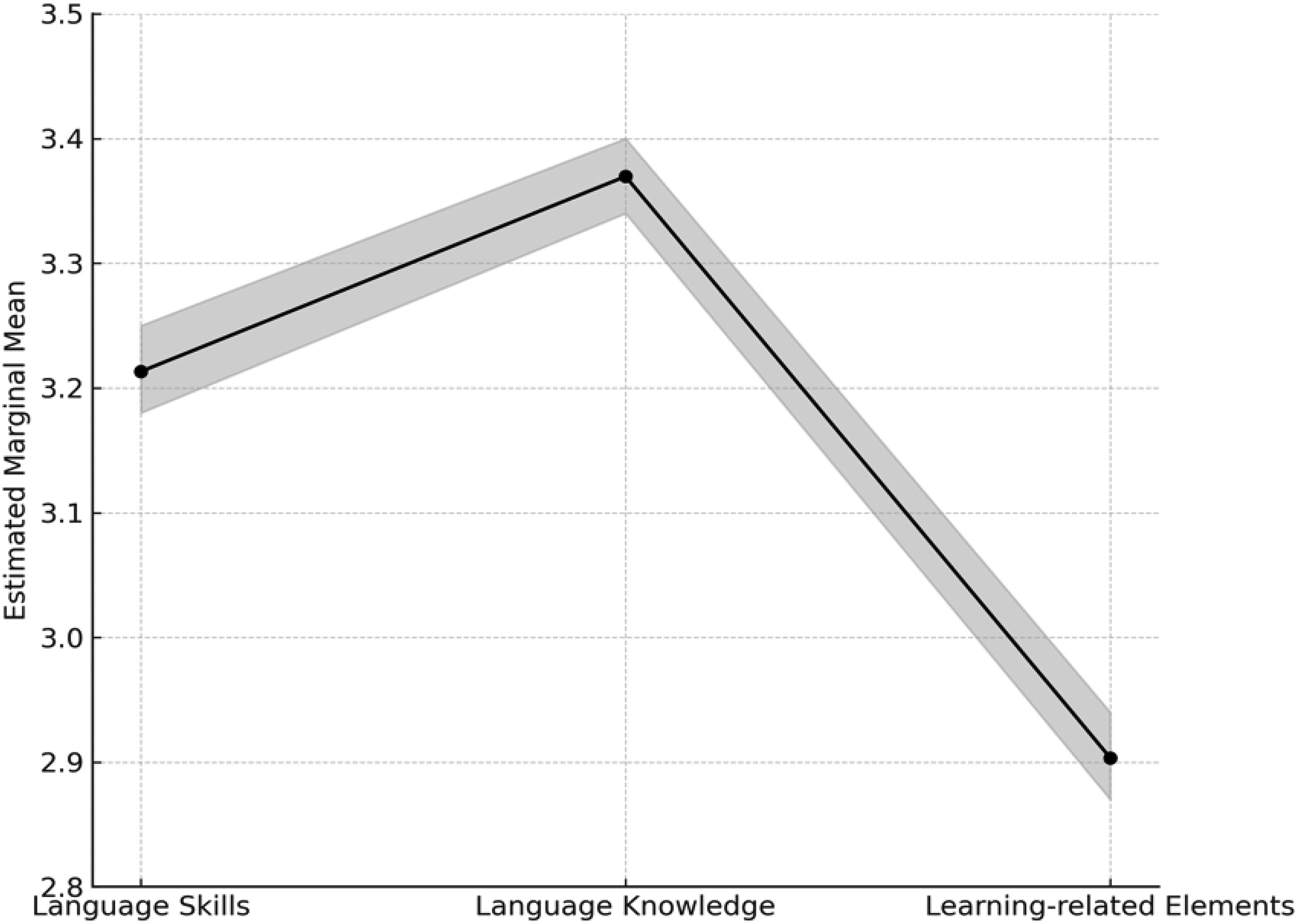

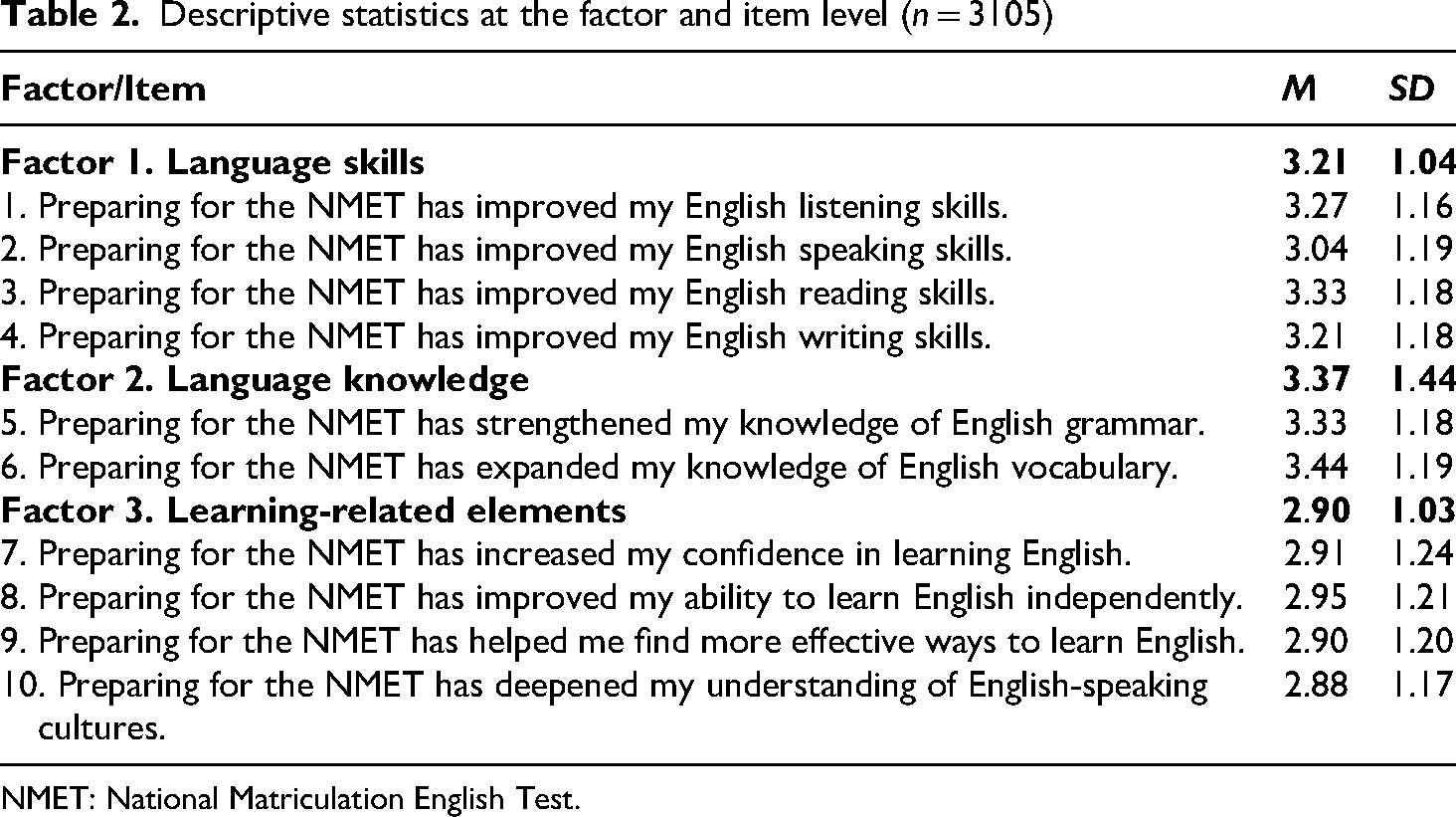

Descriptive statistics at the factor level (see Table 2) revealed that students reported the lowest endorsement for ‘language learning-related elements’ (M = 2.91, SD = 1.03) compared to ‘language knowledge’ (M = 3.37, SD = 1.14) and ‘language skills’ (M = 3.21, SD = 1.04). A repeated-measures ANOVA showed significant differences in students’ perceptions across the three dimensions (F = 619.35, p < .001; see also Figure 4). Since Mauchly's test of sphericity was significant (χ² = 390.97, p < .001), indicating a violation of the sphericity assumption, Greenhouse-Geisser corrections were applied. Post hoc comparisons indicated that students perceived the greatest impact of the NMET on ‘language knowledge’, followed by ‘language skills’, with the least impact on ‘learning-related elements’. All pairwise comparisons were statistically significant (p < .001), confirming that the NMET exerted an asymmetric washback effect, with different degrees of impact perceived by students on different aspects of their English learning outcomes.

Figure 4. Estimated marginal means of the three dimensions (with 95% confidence intervals).

Table 2 indicates that among the four language skills, ‘reading’ had the highest mean value (M = 3.33, SD = 1.18), followed by ‘listening’ (M = 3.27, SD = 1.16) and ‘writing’ (M = 3.21, SD = 1.18), while ‘speaking’ had the lowest mean value (M = 3.04, SD = 1.19). A repeated-measures ANOVA revealed significant differences in students’ perceptions of the NMET's impact across the four language skills (F = 117.04, p < .001). Post hoc comparisons showed that students reported significantly greater improvement in reading than in listening, writing and speaking (p < .001). Listening received significantly higher endorsements than writing and speaking (p < .001), and writing exceeded speaking (p < .001). Both items focusing on language knowledge (Items 5 and 6; see Table 2) received high mean endorsements from students, although ‘vocabulary’ (M = 3.44, SD = 1.19) was rated higher than ‘grammar’ (M = 3.30, SD = 1.22), and the difference was statistically significant (t = 10.66, p < .001). Table 2 also shows that the four items associated with language learning-related elements have similar mean values, ranging from 2.88 to 2.93. A repeated-measures ANOVA revealed a statistically significant result (F = 3.28, p < .05). Post hoc comparisons showed that students reported significantly greater improvement in learning autonomy (p < .05) and learning strategy (p < .01) than in cultural understanding. No other pairwise differences were statistically significant.

Table 2. Descriptive statistics at the factor and item level (n = 3105)

NMET: National Matriculation English Test.

The interview data with students and teachers provide insight into the quantitative findings. Perhaps not surprisingly, given the high-stakes nature of the NMET, both groups identified achieving a high score as the primary goal for students and a central focus of instruction. Both groups attributed the NMET's strongest impact on reading skills to the high weighting of the reading subtest (26.7%; see ‘Research Context: The NMET in China’ section). For example, 20 of the 21 students reported prioritizing reading practice in and out of class. Similarly, all six teachers indicated that they emphasized reading instruction from the beginning of senior high school. In contrast, speaking received the least attention, as it is excluded from the overall NMET score. Fourteen students and five teachers reported investing little effort in speaking practice. As Teacher Li remarked:

Although speaking is very important, we don’t invest much time and energy in it. Since speaking scores are excluded from the overall NMET scores, if we still invest time and energy in speaking, it deviates from the major aim of teaching, which is to boost students’ scores.

Interestingly, however, seven students and one teacher (Teacher Ni) maintained that they still valued speaking, arguing that the ultimate goal of language learning is to develop communicative competence, with speaking being a crucial skill. Both students and teachers expressed concerns about the writing subtest, criticizing the simplicity and predictability of its design, which influenced how writing was taught and practiced. These negative perceptions appeared to influence their preparation for writing and, by extension, their learning outcomes in writing skills. As Teacher Luo noted:

Writing is seldom practiced, especially in the first and second year. When the NMET is approaching in the third year, we usually require students to memorize some model essays and sentence patterns to prepare for the test. Students can obtain satisfactory writing scores through these ways.

Vocabulary and grammar were consistently emphasized as essential for success in the test. Despite the recognized importance, several teachers criticized the NMET's reliance on multiple-choice questions to assess these areas, arguing that such formats may inhibit students’ ability to apply language knowledge in authentic communicative contexts. Although both student and teacher participants recognized the value of elements such as learning confidence, strategy use and cultural awareness for overall language learning experience, students generally did not perceive them as directly contributing to their NMET performance. As a result, they felt that preparing for the test did little to improve these aspects. Twelve students reported that the NMET had minimal impact on their understanding of English-speaking cultures, as such content was either absent or only marginally represented in the test, a concern echoed by the teachers. That said, 12 students reported that in striving for higher NMET scores, they worked to develop more effective learning strategies, such as time management in test preparation and targeted practice to strengthen weaker language knowledge and skills.

Washback effects across learner subgroups (RQ2)

To investigate whether the NMET exerts differential washback effects on student subgroups defined by gender, grade level and school status, a MANOVA was first conducted to examine the possible main effect of the three independent variables on the three dimensions of learning outcomes. The results of Box's M test were significant (p < .001), indicating violation of the assumption of equal covariance matrices; thus, Pillai's trace was used. The MANOVA revealed statistically significant multivariate effects for gender (Pillai's trace = .064, F = 69.77, p < .001), grade level (Pillai's trace = .004, F = 2.31, p < .05) and school status (Pillai's trace = .065, F = 34.61, p < .001). Due to the significant multivariate effects, follow-up univariate ANOVAs were conducted to further explore the differential washback effects across learner subgroups.

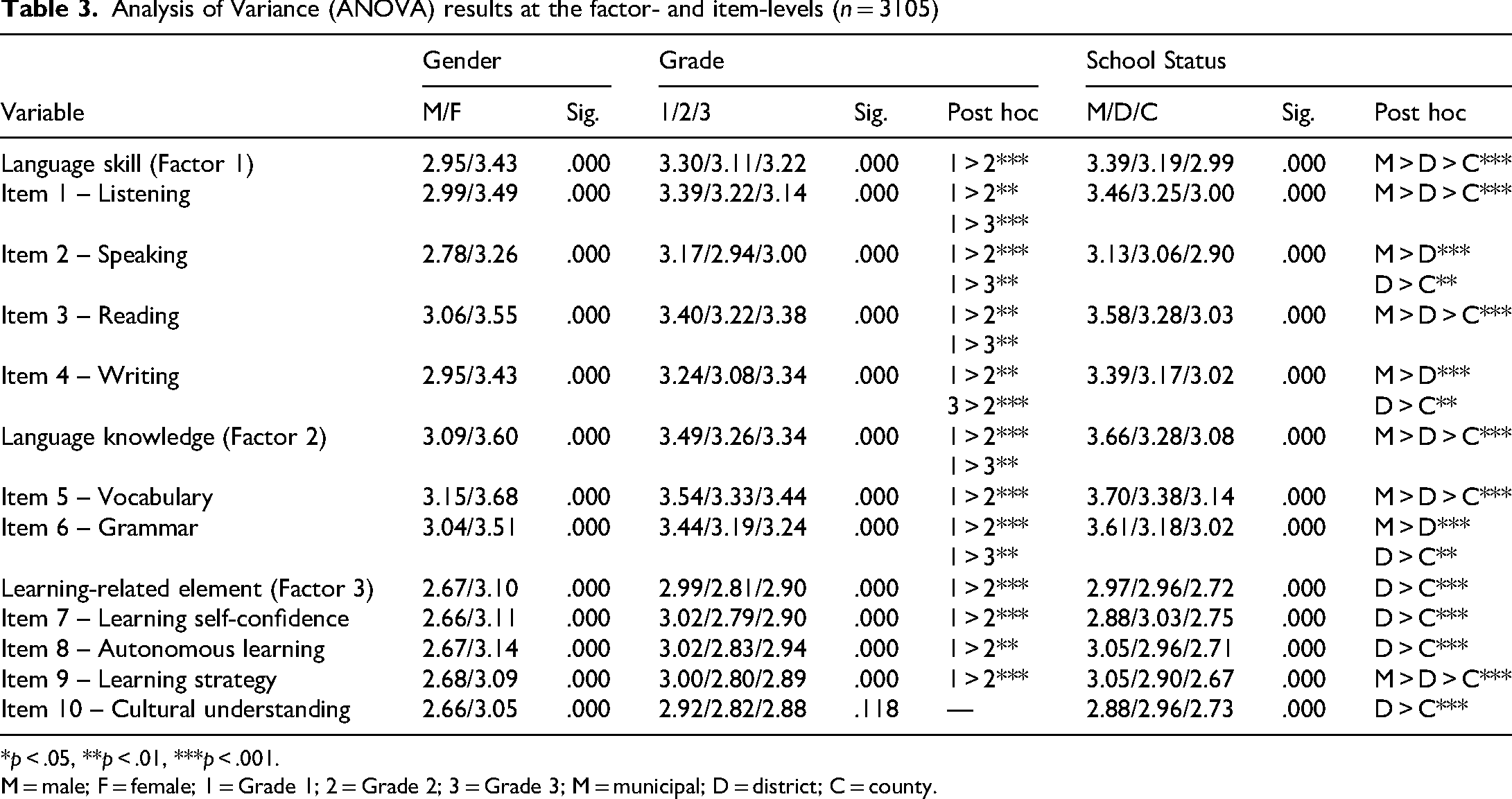

As shown in Table 3, gender had a statistically significant effect across all three dimensions of washback. Female students consistently reported higher mean scores than male students in language skills (F = 178.97, p < .001), language knowledge (F = 197.93, p < .001) and learning-related elements (F = 141.22, p < .001). This gender-based stratification was also evident at the item level (p < .001 in all cases), indicating that female learners perceived a stronger impact of the NMET on their language learning outcomes. Grade level also significantly influenced perceptions of washback across all three dimensions. Overall, students in Grade 1 reported stronger washback effects than those in Grades 2 and 3, with statistically significant differences across nearly all items (p < .001).

Table 3. Analysis of variance (ANOVA) results at the factor and item levels (n = 3105)

*p < .05, **p < .01, ***p < .001.

M = male; F = female; 1 = Grade 1; 2 = Grade 2; 3 = Grade 3; M = municipal; D = district; C = county.

The most substantial stratification was observed for school status, with significant differences found across all three factors and nearly all items (p < .001; see Table 3). Students from municipal schools reported the highest levels of washback on learning outcomes, followed by those from district- and county-level schools. This pattern was especially evident in the language knowledge dimension (F = 67.73, p < .001), compared with language skills (F = 35.56, p < .001) and learning-related elements (F = 16.84, p < .001). At the item level, the strongest differences emerged for vocabulary (F = 56.35, p < .001) and grammar (F = 66.86, p < .001), again favoring students from higher-tier schools. Similar patterns were found for language skills, particularly in reading (F = 71.95, p < .001) and listening (F = 48.79, p < .001). For learning-related elements, although the differences were less pronounced, students from municipal- and district-level schools reported higher impacts than those from county- and township-level schools in ‘autonomous learning’ and ‘learning strategy’ (p < .001).

Discussion and conclusions

The findings related to RQ1 reveal asymmetric washback effects of the NMET. Survey findings indicated that participants perceived the NMET as having the most pronounced impact on language knowledge, followed by language skills, with the least impact on learning-related elements. This pattern reflects the NMET's focus on discrete language components, particularly vocabulary and grammar (Dong et al., 2023), and raises concerns about its construct validity, notably the underrepresentation of key language constructs in test design (Messick, 1996). Perceptions of test importance directly influenced instructional focus and student behavior, which helps to explain the asymmetric washback effects observed in this study. For example, reading received the strongest endorsements from students, largely due to its perceived weight and importance in the NMET, a finding consistent with Zhang and Bournot-Trites (2021). However, washback is not shaped solely by test stakes or content; it is also mediated by the values and beliefs of students and teachers (Zhao, 2024). For instance, several participants reported integrating regular speaking practice into their teaching and learning routines because they believed speaking was a critical language skill, despite its exclusion from the NMET scores.

Beyond the asymmetric washback effects, our findings also clearly indicate that the NMET's washback is stratified, highlighting the need to interpret test washback in relation to learner characteristics and the broader educational and social contexts in which learners are embedded. In terms of gender, female students reported significantly more positive perceived washback on learning outcomes than male students across all three dimensions. This finding aligns with previous washback studies (e.g. Zou and Xu, 2014) and may reflect stronger intrinsic motivation and engagement among female learners in second language acquisition (e.g. You and Dörnyei, 2016). Regarding grade level, our analysis revealed that Grade 1 students reported the strongest washback, a finding that may appear counterintuitive. However, it is plausible that in the early stages of senior secondary education, the NMET serves more as a broad organizing principle than an immediate pressure point. This may allow for a more general, and perhaps more positive, engagement with language learning. Notably, school status emerged as a particularly influential variable in shaping students’ experiences of the NMET's washback effects. This finding can be interpreted in relation to structural differences among schools, such as unequal access to high-quality educational resources, that mediate how test preparation is approached and how students engage with language learning. Students in higher-tier schools are more likely to respond positively to the demands of the NMET and engage in more meaningful, sustained learning activities. In contrast, those in lower-tier schools often face compounded disadvantages, with their test preparation becoming narrowly focused and anxiety-driven (Dong et al., 2023). These findings suggest that school status not only stratifies test preparation practices but also reproduces educational inequalities through differential washback effects, thus raising important questions about the fairness and justice of the NMET, and of high-stakes testing more broadly (cf. Xi, 2010). The findings also reinforce the argument that test washback is socially mediated and must be understood within the stratified realities of the school system.

By examining the NMET's asymmetric and stratified washback effects, this study extends previous research on NMET washback (e.g. Cheng X et al., 2021; Zhang, 2024) in several important ways. First, it investigated asymmetric washback across three interrelated dimensions, covering not only language skills and language knowledge, which have been examined in previous studies, but also affective and motivational factors. The findings draw attention to the underrepresentation of constructs critical to students’ language development, such as cultural knowledge and awareness. Second, it examined stratified washback through three learner variables (gender, grade level and school status), showing how high-stakes testing can reproduce or exacerbate educational inequalities. This perspective moves beyond the traditional dichotomy of positive versus negative washback and foregrounds the socially mediated nature of test washback effects. Finally, by integrating asymmetric and stratified perspectives, the study highlights both the technical and social dimensions of language testing, offering a more comprehensive account of NMET washback than previous studies.

This study offers several implications for language teaching, learning, assessment and policy, both for the NMET in China and for other educational contexts. Theoretically, it reinforces the view that washback is not a static, binary phenomenon, but a complex and dynamic process shaped by multiple contextual and individual factors. It also calls attention to the socially mediated nature of test washback and the potential for high-stakes testing to reproduce educational inequalities (McNamara et al., 2019). Practically, to promote more positive washback, the NMET should adopt more communicative and performance-based tasks as well as culturally rich content. Importantly, speaking should be included as a compulsory, scored component in the NMET regime. More broadly, large-scale testing programs should carefully consider construct representation to avoid underrepresenting important aspects of communicative language competence or privileging the constructs that are more easily measured (Messick, 1996). In addition, the stratified washback effects identified in this study illustrate how high-stakes testing can reinforce existing educational inequalities. These findings point to the need for a more equity-oriented approach that combines systemic reforms to tackle structural inequalities with attention to practical considerations in educational policy formulation and appropriation. For example, policies should ensure that lower-tier institutions receive targeted support, such as financial, material and teaching resources, to mitigate the differential impacts of the NMET and to help prevent the self-perpetuation of educational inequalities.

This study has several limitations. First, it relies primarily on students’ self-reported perceptions of the NMET's washback on their learning outcomes. The findings should therefore be interpreted with caution. Future research could adopt longitudinal designs incorporating both test performance data and classroom observations to track learning trajectories. Second, although the sample was large, the questionnaire contained a small number of items. These items aimed to capture three key dimensions of learning outcomes, but they inevitably oversimplify what is in reality a complex, multidimensional construct. In addition, the items related to learning-related elements may appear abstract to students, potentially affecting interpretation and responses. Future research can broaden and refine the questionnaire to better capture learning development. Third, as the data were collected in Chongqing, regional policy and sociocultural differences may shape asymmetric and stratified washback effects; hence, caution needs to be exercised when generalizing the findings to other regions or NMET variants. Finally, although this study highlights the complex and multifaceted nature of test washback, it did not examine how various factors interact to shape washback effects. Future research could examine these interrelationships to provide a more nuanced understanding of washback mechanisms.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research work reported in this article was supported by the 2024 Key Research Initiative of Sichuan International Studies University (Grant Number: sisu202401).