Abstract

Realist evaluation is an established approach in evaluation. Its central question is how, for whom, and under what conditions an intervention works. Realist evaluators address this question by empirically examining the mechanisms through which an intervention generates outcomes within a particular context, that is, by explicating the underlying context–mechanism–outcome configurations. Despite the central role of context in realist evaluation, systematic knowledge about how context is conceptualized and applied in practice is limited. Drawing on a systematic review of 195 realist evaluations published between 1997 and 2017, this article unpacks how context is conceptualized in realist evaluations, the types of contextual factors examined as part of context–mechanism–outcome configurations, and the methodological challenges evaluators experience when examining these. The article concludes with a discussion of conceptual and practical developments for future applications of realist evaluation.

Introduction

Realist evaluation is an established approach in evaluation. Following Pawson and Tilley’s (1997) introduction of realist evaluation, a steady stream of publications, books (Emmel et al., 2018), and even conferences (the International Realist Conference 2021) dedicated to the topic have emerged. As we show below, the number of published articles on realist evaluations continues to grow, both within and outside evaluation-specific journals. The realist tradition continues to evolve.

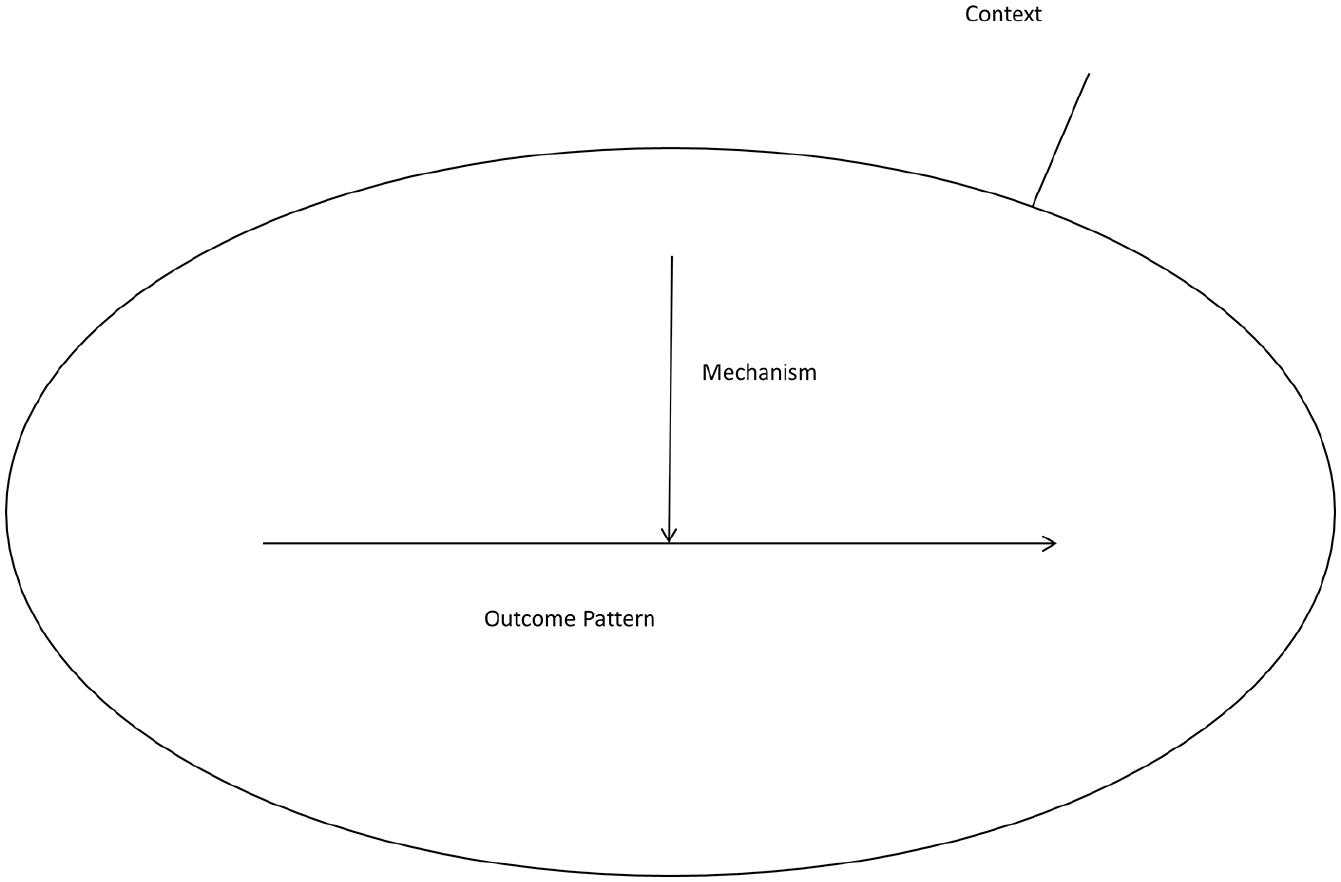

The main question driving realist evaluation is how an intervention 1 works, for whom, and under what conditions (Pawson and Tilley, 1997). The realist approach assumes that interventions are “theories incarnate” (Pawson and Tilley, 1997). As depicted in Figure 1, the theoretical structure underlying realist evaluation takes the form of context–mechanism–outcome (CMO) configurations, which describe the generative processes (mechanisms) 2 that produce one or more psychological, attitudinal, or behavioral changes among the intervention’s participants (outcomes) within a given setting (context). As Pawson and Tilley (1997) posit, explicating these CMO configurations is a prerequisite for sound evaluation.

Figure 1. Generative causation.

Emerging from the growing literature on realist evaluation, several reviews of realist evaluations have been published over the years, each focusing on a different aspect of realist evaluation. These include how realist evaluation has been applied in health systems research (Marchal et al., 2012), how mechanisms have been conceptualized and applied in realist evaluations (Lacouture et al., 2015; Lemire et al., 2020), underlying ontological and epistemological variations in the conceptualization of context (Greenhalgh and Manzano, 2021), and the practical challenges of using the realist evaluation approach in general (Ridde et al., 2012) or in the particular context of knowledge transfer interventions (Salter and Kothari, 2014). Considered collectively, these reviews shed light on several important aspects of realist evaluation.

The present review both builds on and reaches beyond previous reviews of realist evaluations by focusing specifically on the concept of context, a key component of CMO configurations. Despite the central role of context in realist evaluation, recent contributions suggest that context is often neglected or treated in problematic ways (Greenhalgh and Manzano, 2021; Marchal et al., 2012; Renmans et al., 2020). A particular challenge for realist evaluators is the complexity of context and the difficulty of identifying what is relevant for a particular inquiry (Coldwell, 2019; Pawson, 2013; the Realist And Meta-narrative Evidence Syntheses: Evolving Standards (RAMESES) II Project, 2017). As part of the RAMESES II Project (2017), an effort to establish methodological and reporting standards for realist reviews and evaluations, eminent scholars sought to clarify the realist conceptualization of context. They emphasized that context is constituted by preexisting conditions for an intervention, has multiple layers and multiple factors, can be either enabling or disabling, and, through its interaction with mechanisms, can constitute new contexts.

Despite these valuable contributions, systematic knowledge about how context is operationalized and applied in realist evaluation, as well as about the methodological challenges of examining context, is still limited. Notwithstanding a recent contribution (Greenhalgh and Manzano, 2021), our understanding of how context is conceptualized and applied rests on a limited empirical foundation.

The aim of this article is to shed light on the conceptualization and application of context in published realist evaluations. The empirical foundation for this examination is a systematic review of 195 realist evaluations published between 1997 and 2017 (the two decades following Pawson and Tilley’s seminal publication of Realistic Evaluation). The following three questions guided the review:

How is context conceptualized in published realist evaluations?

What types of contextual factors are examined as part of CMO configurations in published realist evaluations?

What are the methodological challenges experienced by evaluators when examining context in published realist evaluations?

The article is in four sections. In the “Conceptualizing context in realist evaluation” section, we examine the concept of context in realist evaluation and discuss three frameworks that we find particularly relevant for unpacking context: Pawson et al.’s (2004) distinction between four levels of context (the four Is), the RAMESES II Project’s (2017) elaboration of what constitutes a contextual condition, and Durlak and DuPre’s (2008) empirically derived framework of implementation factors. In the “Review methodology” section, we describe the purpose, scope, and methodology of the present review, including its limitations. The “Findings” section presents the findings of our review, structured around the three guiding questions. Informed by the findings, the final part of this article discusses future conceptual and practical developments for advancing the use of context in realist evaluations and beyond.

Conceptualizing context in realist evaluation

The concept of context has a long and rich history in evaluation. In an issue of New Directions for Evaluation dedicated to the topic, Fitzpatrick (2012) identified 902 citations for the word context in the American Journal of Evaluation alone. The importance of context to the practice and profession of evaluation is evident in the Guiding Principles for Evaluators (American Evaluation Association, 2018), in books (Hood et al., 2005), and even in conference themes dedicated to the role of context in evaluation (such as the 2009 American Evaluation Association conference). Despite this sustained attention to context, Fitzpatrick (2012: 8) pointedly observes,

Yet the various contextual factors that influence evaluation are rarely considered in much depth in the evaluation literature. Nods and admonitions are given to the importance of considering context and to its impact on evaluation plans, methods, implementation, and use, but few develop the construct in depth.

In a similar vein, Rog (2012) observes that evaluation lacks a unified understanding of context, let alone a comprehensive theory of it. Echoing Jennifer Greene (2005), Rog notes that understandings of context often hinge on the evaluator’s methodological orientation: quantitative evaluators tend to treat context as a set of factors to be controlled for, whereas qualitative evaluators consider context inseparable from the actors’ experiences, the intervention, and its outcomes. To give just one example of this diversity, Coldwell (2019), reflecting on context in theory-based evaluation, argues that context can be dynamic, agentic, relational, historically located, immanent, and complex.

Realists in turn consider context as an irreducible source of explanation (Greene, 2005). Accordingly, the concept of context plays a central role in realist evaluation. As described by Pawson and Tilley (1997), context is an irreducible set of factors influencing when and how an intervention is delivered and how mechanisms are triggered. Thus, recognizing such contextual conditions that enable or impede mechanisms is crucial to realist evaluation (Pawson and Tilley, 1997). Pawson et al. (2004) have subsequently suggested that contextual factors can be identified at four different levels:

The individual capacities of the key actors and stakeholders such as interests, attitudes, knowledge, and skills.

The interpersonal relationships required to support the intervention, such as lines of communication, management, and administrative support, as well as professional relations and contracts.

The institutional setting in which the intervention is implemented, such as the culture and norms, leadership, and governance of the implementing body.

The wider (infra-)structural and welfare system, such as political support, the availability of funding resources, as well as competing policy priorities and influences (Pawson et al., 2004: 7–8).

This typology of contextual factors, referred to as the four Is framework, offers a valuable high-level conceptualization of context that is useful for grouping the influential factors included in CMOs.

Another conceptualization of context in realist evaluation was introduced as part of the RAMESES II project, wherein the authors propose that

Contexts are therefore bound up with the mechanism(s) through which programmes work, and need to be understood as an analytically distinct but interconnected element of a Context-Mechanism-Outcome configuration. (The RAMESES II Project, 2017: 3)

Building on this, the authors describe context as (1) preexisting conditions for an intervention, (2) consisting of multiple layers and (3) multiple factors, (4) enabling or disabling mechanisms, and (5) potentially constituting new contexts through its interaction with mechanisms (The RAMESES II Project, 2017).

While these conceptualizations of context are valuable, they remain high-level and somewhat removed from the practical examination of context in local evaluations. For a more practical framework of what context is at the operational level, the growing body of literature on program implementation is informative, especially when the aim is to understand how context and implementation affect outcomes. 3

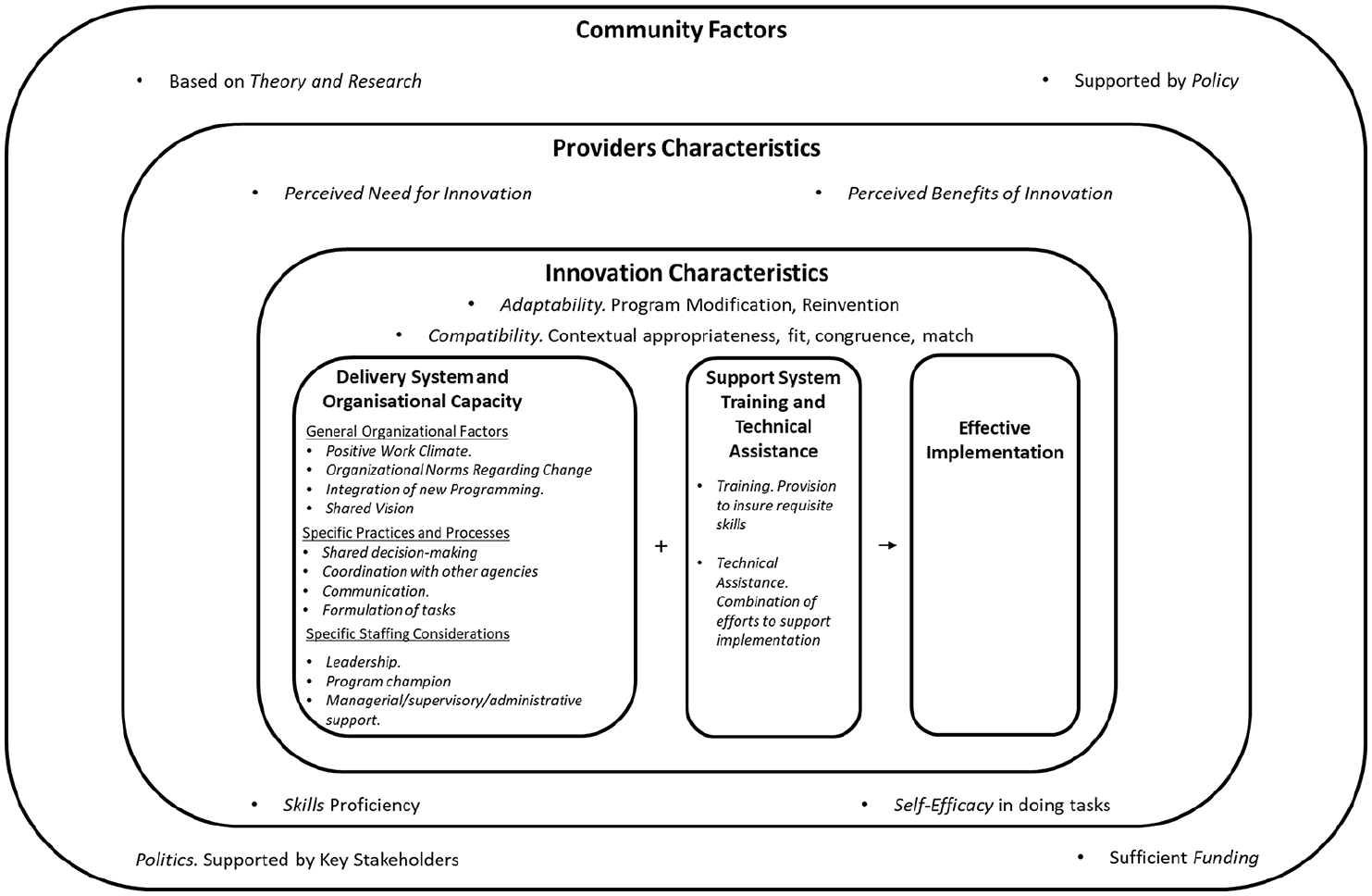

Over the past decades, a broad range of frameworks for gauging policy and program implementation (and fidelity) have emerged in implementation research. Our purpose here is not to provide a comprehensive review of the large and still growing literature on implementation research (see Fixsen et al., 2005, for an exemplary review). Because the vast majority of the realist evaluations in the present review were conducted in the health sector, we applied Durlak and DuPre’s (2008) framework for effective implementation, which is particularly relevant to this sector. In developing their framework, Durlak and DuPre (2008) reviewed factors influencing implementation and program outcomes in 542 health promotion and prevention programs. From this review, the authors extracted 23 factors, each identified in five or more studies. These factors were positively associated with high implementation fidelity and program outcomes. The authors cross-referenced these factors against three other reviews with a somewhat similar scope (Fixsen et al., 2005; Greenhalgh et al., 2005; Stith et al., 2006) and found a medium to high level of correspondence. The framework also adopts the multilayered, multifactorial approach emphasized by realist scholars. As such, it offers an empirically derived and relevant taxonomy for identifying which contextual features were applied in realist evaluations. The framework and its associated implementation factors are presented in Figure 2.

Figure 2. Framework for effective implementation.

According to Durlak and DuPre (2008), the 23 factors influencing effective implementation can be divided into five levels: (1) community factors, (2) provider characteristics, (3) innovation characteristics, (4) factors relevant to the delivery system and organizational capacity, and (5) factors related to the support system. While space does not permit a detailed account of all 23 factors (see Durlak and DuPre, 2008, for a comprehensive description), a brief description is provided here.

Community-level factors

Four factors may drive, or in their absence potentially impede, effective implementation: (1) whether the intervention is based on evidence from scientific research, (2) support from key stakeholders, (3) sufficient funding for the intervention, and (4) alignment with, or support from, policy.

Provider Characteristics include (5) whether there is a perceived local need for the intervention and (6) whether key actors perceive and experience potential benefits from the intervention. The (perceived) competencies of the key actors delivering the intervention also matter, such as (7) the providers’ perceived self-efficacy in delivering the tasks and (8) their skill proficiency.

Characteristics of the Innovation also matter, including (9) whether the intervention is compatible with the provider’s mission and values, and whether it is (10) adaptable to align with provider preferences and practices.

Durlak and DuPre (2008) divided Factors Relevant to the Prevention Delivery System: Organizational Capacity into three different themes:

General organizational factors, which relate to (11) a positive work climate, (12) the organization’s openness and norms regarding change, (13) its ability to integrate new programming into existing practices and routines, and (14) whether organizational members share a vision of the purpose of the intervention.

Specific practices and processes. These include (15) shared decision-making, that is, local ownership and involvement of key actors in planning and delivering the intervention; (16) whether local agencies collaborate and coordinate in delivering the requisite services; (17) clear and open communication; and (18) the formulation and planning of tasks with clear roles and responsibilities.

Specific staffing considerations. According to their review, (19) leadership is important in many aspects of the implementation process, as is (20) the presence of internally respected program champions who support the intervention. Finally, (21) the requisite managerial/supervisory/administrative support is needed throughout the implementation.

Factors related to the support system include (22) training, which is particularly important for ensuring providers’ skill proficiency and sense of self-efficacy, and (23) technical assistance, in the form of resources and support offered throughout the implementation.
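To make the structure of the framework concrete, the 23 factors and their five levels can be encoded as a simple lookup table, for instance when preparing a coding sheet. The sketch below is a hypothetical Python representation of our own: the abbreviated factor labels paraphrase the descriptions above, and the names `FRAMEWORK` and `level_of` are illustrative rather than part of Durlak and DuPre’s (2008) publication.

```python
# Hypothetical encoding of Durlak and DuPre's (2008) 23 implementation factors.
# Keys are the five levels; values map factor numbers to abbreviated labels.
FRAMEWORK: dict[str, dict[int, str]] = {
    "community": {
        1: "evidence base",
        2: "stakeholder support",
        3: "funding",
        4: "policy alignment",
    },
    "provider characteristics": {
        5: "perceived need",
        6: "perceived benefits",
        7: "self-efficacy",
        8: "skill proficiency",
    },
    "innovation characteristics": {
        9: "compatibility",
        10: "adaptability",
    },
    "delivery system and organizational capacity": {
        # General organizational factors
        11: "positive work climate",
        12: "openness to change",
        13: "integration of new programming",
        14: "shared vision",
        # Specific practices and processes
        15: "shared decision-making",
        16: "coordination with other agencies",
        17: "communication",
        18: "formulation of tasks",
        # Specific staffing considerations
        19: "leadership",
        20: "program champion",
        21: "managerial/supervisory/administrative support",
    },
    "support system": {
        22: "training",
        23: "technical assistance",
    },
}


def level_of(factor_number: int) -> str:
    """Return the framework level to which a numbered factor belongs."""
    for level, factors in FRAMEWORK.items():
        if factor_number in factors:
            return level
    raise KeyError(f"No factor numbered {factor_number} in the framework")


if __name__ == "__main__":
    total = sum(len(factors) for factors in FRAMEWORK.values())
    print(total)         # 23
    print(level_of(20))  # delivery system and organizational capacity
```

Keeping the three organizational-capacity themes as comments rather than as a further nesting level mirrors the five-level division described above while still recording the thematic grouping.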

As mentioned above, the Durlak and DuPre framework is relevant for the present review for several reasons. First, the framework is aligned with other widely cited frameworks in implementation research (Wandersman et al., 2008). Second, the framework is empirically derived from a comprehensive review of over 500 studies covering a broad range of interventions. This means that it rests on empirical evidence rather than on a purely theoretical model and is therefore more likely to apply to a broad range of interventions. Finally, its focus on health and public health interventions makes it particularly applicable to realist evaluations, as most published applications of realist evaluation are in health and public health settings, despite potential differences within those arenas.

Review methodology

This review of context in realist evaluations emerges from a broader review of theory-based evaluations (Lemire et al., 2020) but focuses exclusively on realist evaluations. The three questions guiding the review are the following:

How is context conceptualized in published realist evaluations?

What types of contextual factors are examined as part of CMO configurations in published realist evaluations?

What are the methodological challenges experienced by evaluators when examining context in published realist evaluations?

Search strategy

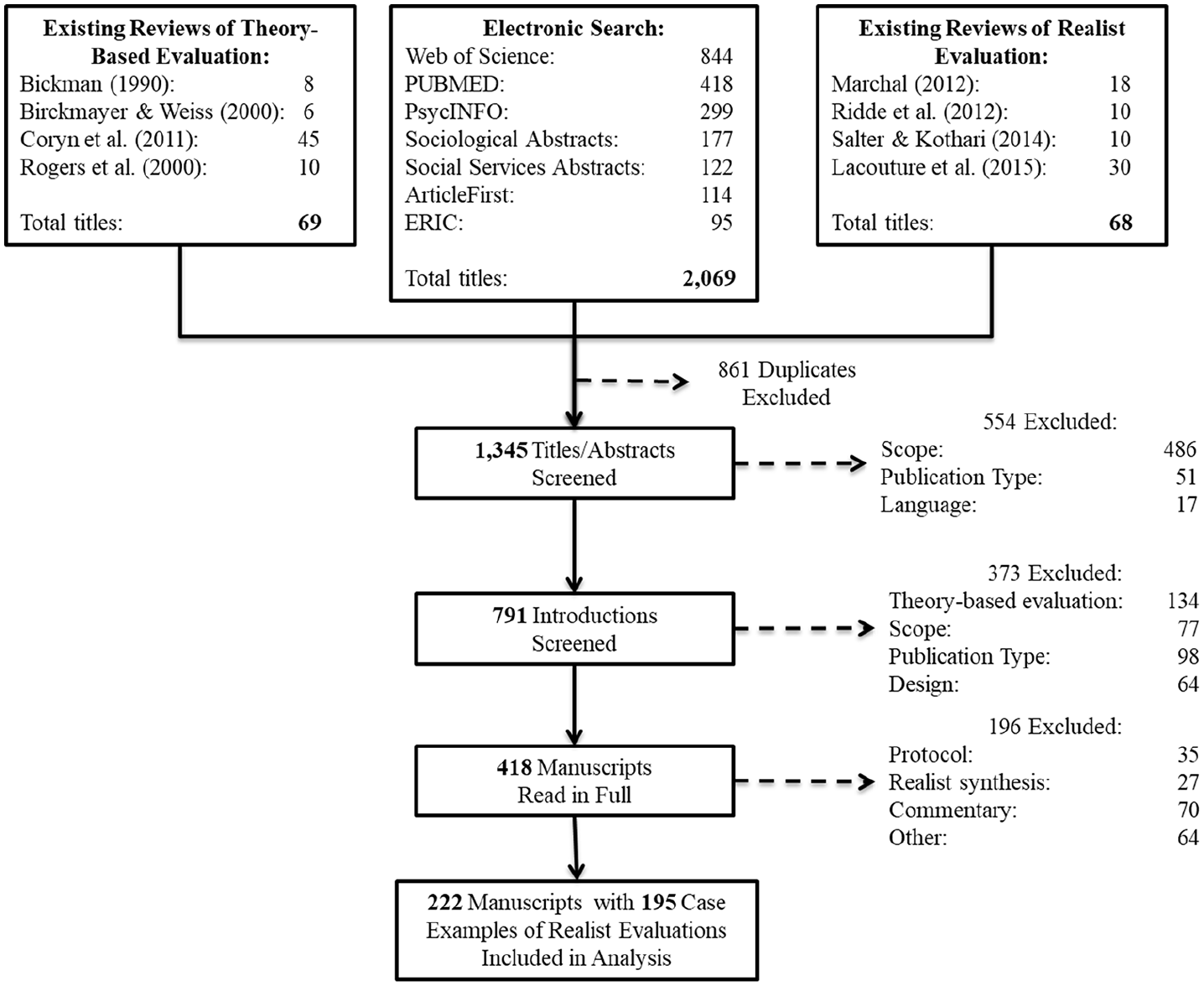

To address these questions, the review used a multipronged sampling strategy. The primary search strand was an electronic search for realist evaluation studies published between 1997 and 2017, the two decades after Pawson and Tilley’s seminal publication on realistic evaluation. The following databases were covered: Web of Science, ArticleFirst, PsycINFO, Social Work Abstracts, Sociological Abstracts, ERIC, and Medline (PubMed). The following search terms (and related variants) were used to identify relevant studies: “realist,” “realistic,” “theory-driven,” “theory-based,” and “contribution analysis.” These search terms were combined with the term evaluation to enhance relevance. The electronic search and retrieval, conducted in July 2018, resulted in 2069 manuscript titles.

In a parallel search strand, we identified 68 realist evaluations included in four (of the five) published reviews of realist evaluations considered above. 4 Of these, only one manuscript provided a case description of a realist evaluation not already identified in the electronic search. 5 In this way, the manual search of past reviews served both to identify relevant publications on realist evaluations and to test the coverage of the electronic database search.

The results of the search and retrieval are presented in Figure 3. As the figure shows, a total of 1345 unique manuscripts were identified across the multiple search strands.

Figure 3. Search and retrieval flowchart.

The manuscripts were first screened on the basis of their titles and abstracts. This screening resulted in the exclusion of 554 manuscripts, primarily on the basis of scope (486 manuscripts) and publication type (51). Exclusions based on scope often involved manuscripts that used the term realistic evaluation without referring to Pawson and Tilley’s evaluation approach. In general, the screening erred on the side of inclusion at this stage: if an abstract could in any way be interpreted as potentially relevant, the manuscript was retained for further consideration.

The introductions of the remaining subset of 791 manuscripts were then carefully screened. Informed by this screening, 373 manuscripts were excluded because they reported on generic theory-based evaluations (134) or because of publication type (98), scope (77), or design (64). The remaining 418 manuscripts were read in full. Of these, 196 manuscripts were excluded because they were study protocols (35), realist syntheses (27), commentaries (70), or for other reasons (64).

The final set of 222 manuscripts (1) self-identified as realist/realistic evaluations, (2) contained a case description of a realist evaluation, and (3) were written in English. These 222 manuscripts were included in the final analysis.
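The screening counts reported above can be checked for internal consistency with a few lines of arithmetic. The following Python sketch simply re-derives the number of manuscripts remaining after each stage from the reported totals; the stage labels, variable names, and the helper `remaining_after_each_stage` are our own shorthand, not part of the review protocol.

```python
# Reported counts from the search and screening process.
IDENTIFIED = 1345  # unique manuscripts across search strands
EXCLUDED_PER_STAGE = {
    "title/abstract": 554,
    "introduction": 373,
    "full text": 196,
}
# Reported exclusion reasons. For the title/abstract stage, the text lists only
# the two primary reasons, which account for 486 + 51 = 537 of 554 exclusions.
REASONS = {
    "title/abstract": {"scope": 486, "publication type": 51},
    "introduction": {"generic theory-based": 134, "publication type": 98,
                     "scope": 77, "design": 64},
    "full text": {"study protocol": 35, "realist synthesis": 27,
                  "commentary": 70, "other": 64},
}


def remaining_after_each_stage(start: int, excluded: dict[str, int]) -> dict[str, int]:
    """Return the number of manuscripts still included after each screening stage."""
    remaining = {}
    current = start
    for stage, n_excluded in excluded.items():
        current -= n_excluded
        remaining[stage] = current
    return remaining


if __name__ == "__main__":
    print(remaining_after_each_stage(IDENTIFIED, EXCLUDED_PER_STAGE))
    # {'title/abstract': 791, 'introduction': 418, 'full text': 222}
    for stage, reasons in REASONS.items():
        print(stage, sum(reasons.values()), "of", EXCLUDED_PER_STAGE[stage])
```

The remaining counts (791, 418, and 222) match those reported in the text, and the itemized reasons account for all exclusions at the introduction and full-text stages. At the title/abstract stage, the two listed reasons cover 537 of the 554 exclusions, consistent with their description as the primary reasons.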

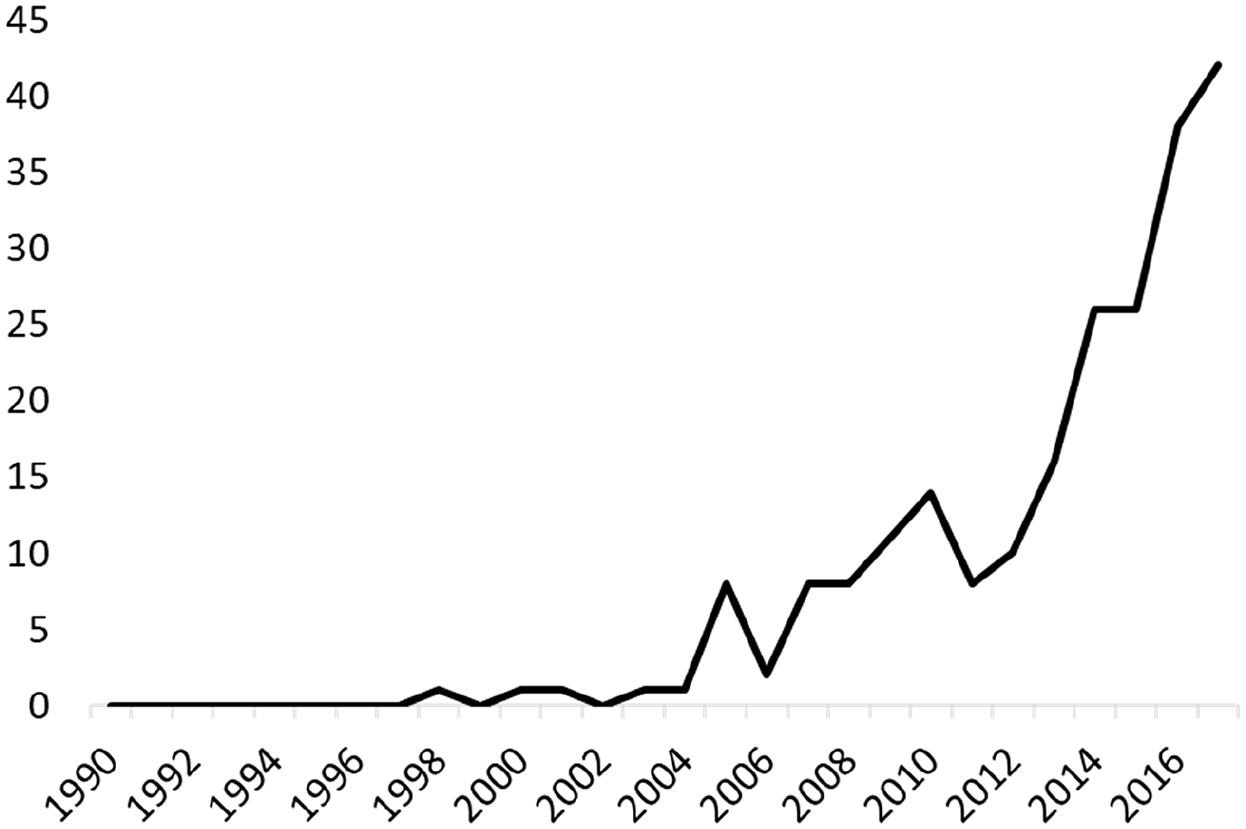

The 222 manuscripts described 195 individual realist evaluations (some authors published more than one article on the same realist evaluation). Figure 4 presents the number of published applications of realist evaluation per year from 1997 to 2017. As the figure indicates, the publication rate (number of publications per year) remained stable between 1997 and 2004, with just a few published case examples per year. Starting in 2007, the number of published applications per year rose almost continuously, reaching 42 in 2017.

Figure 4. Published applications of realist evaluations (1997–2017).

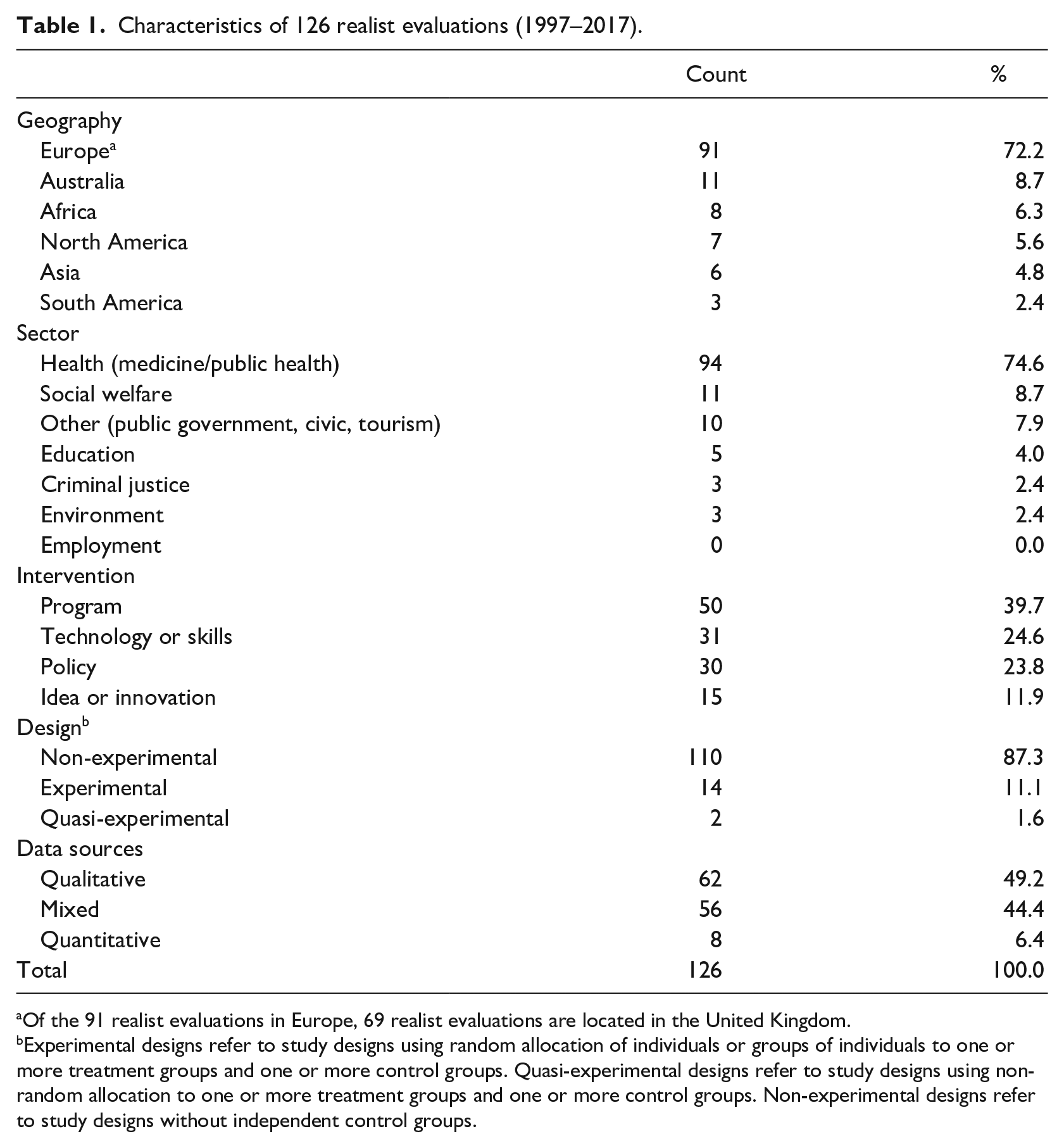

Of the 195 published case examples of realist evaluations, a total of 126 realist evaluations presented one or more CMO configurations. These 126 realist evaluations comprised the data set for the analysis of context. Table 1 provides an overview of the basic characteristics of the 126 case applications. As the table shows, the realist evaluations are primarily from Europe (72.2%). Of these 91 realist evaluations, 69 are from the United Kingdom. A noticeable proportion of evaluations are from Australia (8.7%) and, to some extent, Africa (6.3%) and North America (5.6%).

Table 1. Characteristics of 126 realist evaluations (1997–2017).

Of the 91 realist evaluations in Europe, 69 realist evaluations are located in the United Kingdom.

Experimental designs refer to study designs using random allocation of individuals or groups of individuals to one or more treatment groups and one or more control groups. Quasi-experimental designs refer to study designs using non-random allocation to one or more treatment groups and one or more control groups. Non-experimental designs refer to study designs without independent control groups.

Realist evaluation appears to have gained particular traction within the (public) health sector, which accounts for 74.6 percent of the realist evaluations. Published applications of realist evaluations also come from the social welfare sector (8.7%), education (4.0%), and criminal justice (2.4%), among other sectors. The “other” category includes public government in general, tourism, and civic sectors.

In terms of types of interventions (Coffman, 2010), the largest share of realist evaluations in our review involve programs (39.7%), such as service delivery models, treatments, and professional development. Interventions in the form of policies (23.8%) and technology or skills (24.6%) are also common. The vast majority of the realist evaluations use non-experimental designs (87.3%) and rely on qualitative data (49.2%) or mixed-methods data (44.4%).
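The regional shares in Table 1 follow directly from the counts reported earlier in this section. As a minimal check, assuming the shares are computed over the 126 evaluations and rounded to one decimal place, the Python helper below (the name `share` is our own) reproduces the European share and also expresses the United Kingdom’s contribution relative to Europe and to the full sample.

```python
def share(part: int, whole: int, digits: int = 1) -> float:
    """Return `part` as a percentage of `whole`, rounded to `digits` decimals."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return round(100 * part / whole, digits)


N_WITH_CMOS = 126  # realist evaluations presenting at least one CMO configuration
EUROPE = 91
UNITED_KINGDOM = 69

if __name__ == "__main__":
    print(share(EUROPE, N_WITH_CMOS))          # 72.2 (as reported in Table 1)
    print(share(UNITED_KINGDOM, EUROPE))       # 75.8 (UK share of European evaluations)
    print(share(UNITED_KINGDOM, N_WITH_CMOS))  # 54.8 (UK share of all 126)
```

The last figure shows that a single country accounts for more than half (54.8%) of the 126 evaluations analyzed.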

Coding and analysis

To address the three main research questions, the first and second authors coded the 222 included manuscripts according to a prespecified coding framework covering the characteristics of the realist evaluations (i.e. year, country, sector, study design, data methods, and sources), definitions of key concepts (context and mechanism) and the sources of these definitions, and the contextual conditions presented in the CMO configurations. In addition, information on methodological issues and lessons learned about examining context in realist evaluation was extracted for further analysis.

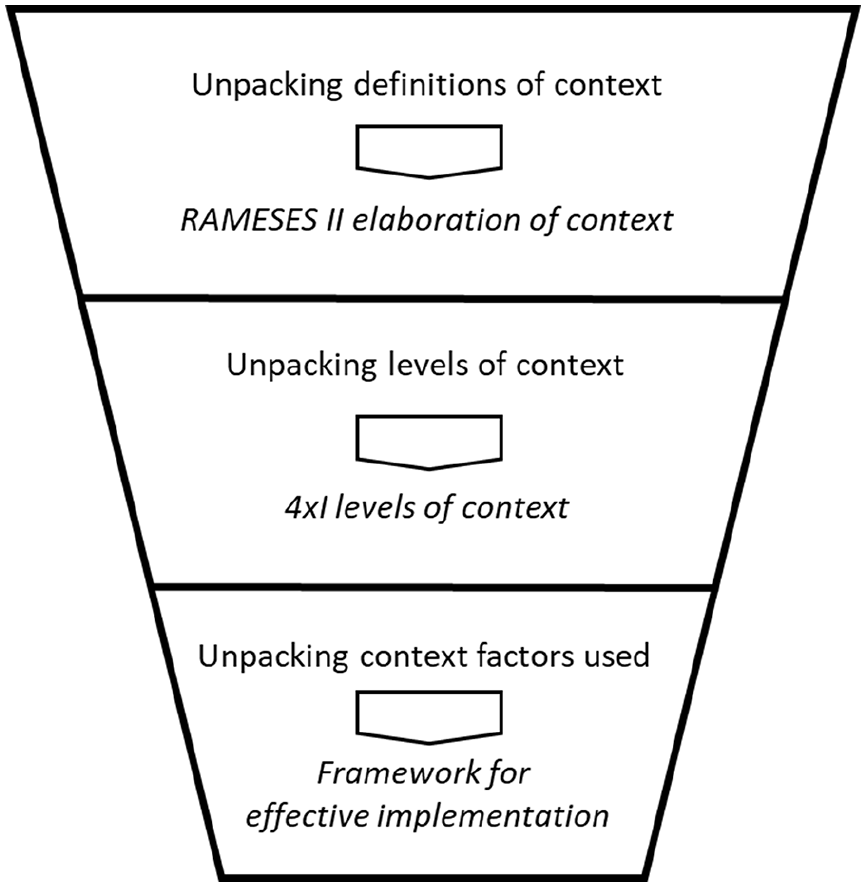

The coding and analysis of context were structured around three levels (Figure 5). At the conceptual level, we used the RAMESES II Project (2017) understanding of context to unpack the definitions of context provided by the authors. We also categorized the definitions as simple or elaborate, depending on the level of specification and elaboration each offered. We then coded the context factors included in the CMOs. Here, we first coded which levels of context were applied in the CMOs, using Pawson et al.’s (2004) distinction between four levels of context (the four Is framework). Finally, we coded the contextual factors in the CMOs using Durlak and DuPre’s (2008) empirically derived framework for effective implementation.

Figure 5. Analytical frameworks for context.
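The second and third levels of this coding scheme amount to assigning each context factor a category under each framework and tallying the resulting distribution. The Python sketch below illustrates that step under simplifying assumptions of our own: each factor receives exactly one code per framework, and the example records are invented for illustration rather than drawn from the reviewed evaluations. The record type `ContextFactor` and the function `distribution` are hypothetical names.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextFactor:
    """A single context factor extracted from a CMO configuration (hypothetical record)."""
    evaluation_id: str
    description: str
    level: str           # four Is level (plus "intervention"), after Pawson et al. (2004)
    implementation: str  # level in Durlak and DuPre's (2008) framework


def distribution(factors: list[ContextFactor], attribute: str) -> dict[str, float]:
    """Percentage of factors coded to each category of the given attribute."""
    counts = Counter(getattr(factor, attribute) for factor in factors)
    total = sum(counts.values())
    return {category: round(100 * n / total, 1) for category, n in counts.most_common()}


# Invented example records, for illustration only.
EXAMPLE = [
    ContextFactor("E1", "supportive ward manager", "institutional",
                  "delivery system and organizational capacity"),
    ContextFactor("E1", "staff confident in new skills", "individual",
                  "provider characteristics"),
    ContextFactor("E2", "dedicated national funding", "infrastructural", "community"),
    ContextFactor("E2", "team culture open to change", "institutional",
                  "delivery system and organizational capacity"),
]

if __name__ == "__main__":
    print(distribution(EXAMPLE, "level"))
    # {'institutional': 50.0, 'individual': 25.0, 'infrastructural': 25.0}
    print(distribution(EXAMPLE, "implementation"))
```

A factor that plausibly fits more than one category would require either an explicit decision rule or a multi-label variant of this scheme.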

No review is without its limitations. One limitation of the present review is that it pertains solely to published realist evaluations. These published applications represent only a subsample of all realist evaluations conducted during the period, and they may differ in important ways from unpublished realist evaluations. For this reason, generalization of findings beyond the boundaries of the sample should be approached with caution.

Another limitation pertains to the uneven description of contextual conditions in the manuscripts. While some manuscripts provided detailed descriptions of contextual conditions, others gave them only cursory attention. As a result, the strength of the contextual evidence varied across the manuscripts and, in turn, across our coding of them. To make matters more complex, and echoing Rog’s observation that there is no unified understanding of context in evaluation, coding contextual conditions consistently and precisely is difficult, as the coding itself confronts the conceptual ambiguity found across the included manuscripts.

Despite these limitations, the position we take is that the present review provides important and useful insights into the operationalization and application of context in published realist evaluations.

Findings

This section presents the main findings of our review of the 126 published applications of realist evaluations—the subset of realist evaluations that presented one or more CMO configurations. The section is structured around the three guiding research questions.

How is context conceptualized in published realist evaluations?

The first step in our analysis was to examine how authors conceptualized or defined context as part of their evaluation. This information is usually provided early in the article, where authors describe their evaluation approach. Across the 126 case applications, 60 cases (48%) contained an explicit definition of context. This finding aligns with Greenhalgh and Manzano’s (2021) review of realist evaluation (45% explicit definitions), with studies of how context is defined in evaluations using other research designs (Bamberger, 2008; Kaplan et al., 2010), and with a recent review of another key realist term, mechanism, which documented that only 54 percent of the manuscripts included a definition of the construct (Lemire et al., 2020).

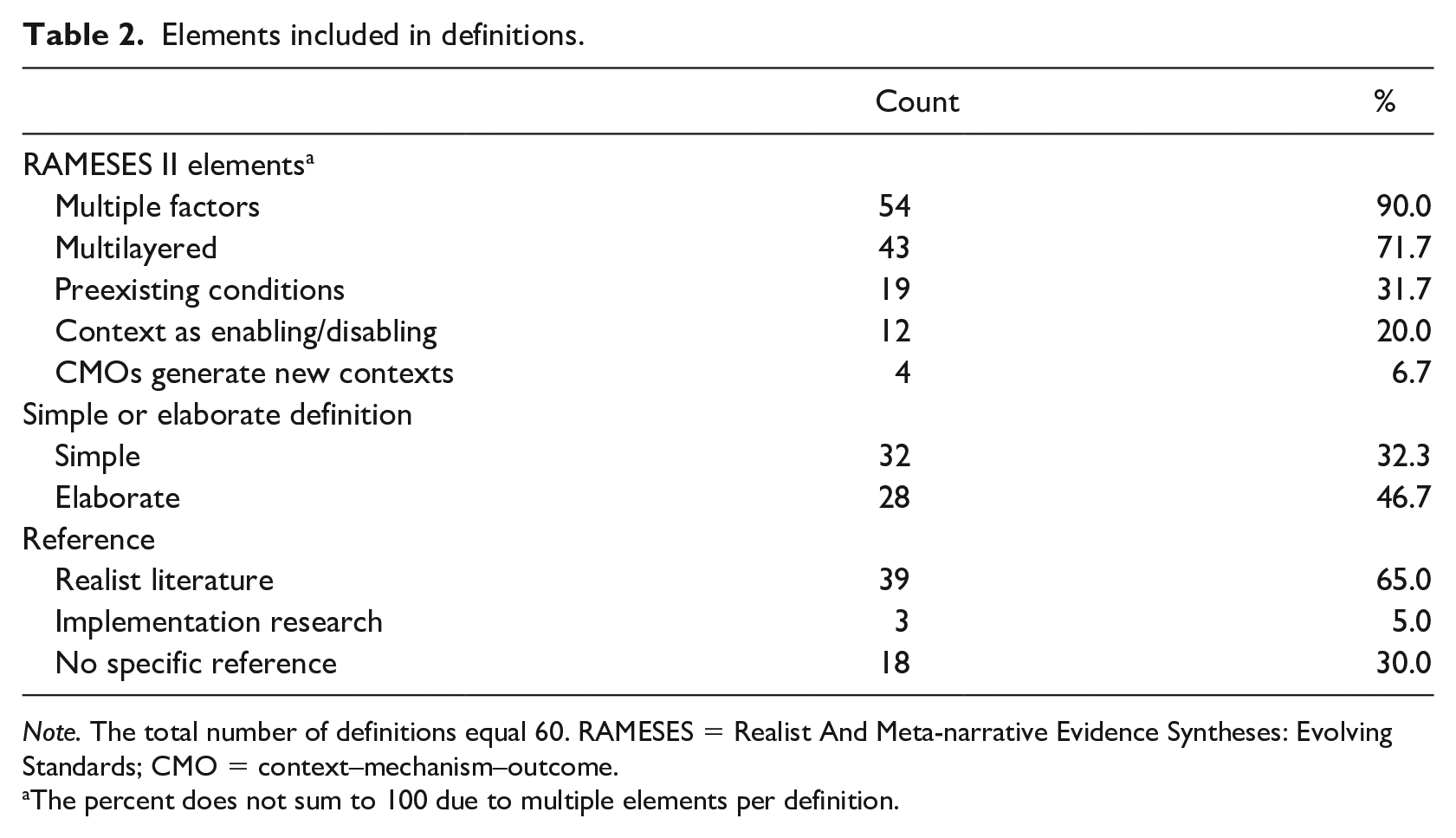

Next, we examined the 60 definitions against the key elements of the RAMESES II elaboration of the construct, categorized the definitions as simple or elaborate (depending on the level of theoretical elaboration and definitional specification), and examined whether authors explicitly cited realist or implementation research literature as a source for their definition. The results are shown in Table 2.

Table 2. Elements included in definitions.

Note. The total number of definitions equals 60. RAMESES = Realist And Meta-narrative Evidence Syntheses: Evolving Standards; CMO = context–mechanism–outcome.

Percentages do not sum to 100 because a definition could include multiple elements.

The table shows marked differences in the elements included in the definitions. Most definitions characterized context as consisting of multiple factors (90.0%) and layers (71.7%), whereas fewer described context as preexisting conditions (31.7%) or as enabling/disabling mechanisms (20.0%). Context as interacting with mechanisms and generating new contexts was the least common element (6.7%).
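Because Table 2 reports percentages of the 60 definitions, each percentage should correspond to a whole number of definitions. The Python sketch below back-calculates these implied counts; the counts are our own derivation from the reported percentages rather than figures stated in the table, and the element labels and the function name `implied_count` are shorthand of our own.

```python
N_DEFINITIONS = 60

# Reported shares of the 60 definitions that include each RAMESES II element.
REPORTED = {
    "multiple factors": 90.0,
    "multiple layers": 71.7,
    "preexisting conditions": 31.7,
    "enabling/disabling mechanisms": 20.0,
    "generative of new context": 6.7,
}


def implied_count(percent: float, total: int) -> int:
    """Back-calculate the whole count behind a percentage rounded to one decimal."""
    count = round(percent * total / 100)
    # The implied count must reproduce the reported percentage after rounding.
    if round(100 * count / total, 1) != percent:
        raise ValueError(f"{percent}% of {total} does not match a whole count")
    return count


if __name__ == "__main__":
    counts = {element: implied_count(p, N_DEFINITIONS) for element, p in REPORTED.items()}
    print(counts)
    print(sum(counts.values()))  # 132 element mentions across 60 definitions
```

The implied counts (54, 43, 19, 12, and 4) sum to 132 rather than 60, an average of 2.2 elements per definition, which is why the percentages in Table 2 do not sum to 100.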

We further distinguished between simple and elaborate definitions by examining how thoroughly each definition addressed the various elements of the concept. As shown in Table 2, the definitions were almost evenly split between simple (53.3%) and elaborate (46.7%) definitions. Simple definitions of context mostly took the form of a single sentence offering a surface-level description of the general meaning of context. More elaborate definitions went beyond the meaning of the term to describe its function in relation to mechanisms, different types of contextual conditions, and the ways in which context can be dynamic, agentic, and temporal (Coldwell, 2019).

Finally, we examined the sources cited for the definitions. Most definitions (65%) made explicit reference to realist authors, most often Pawson and Tilley (1997). However, almost one-third of the definitions contained no explicit reference or source (30.0%). Referencing implementation research literature was less common (5.0%).

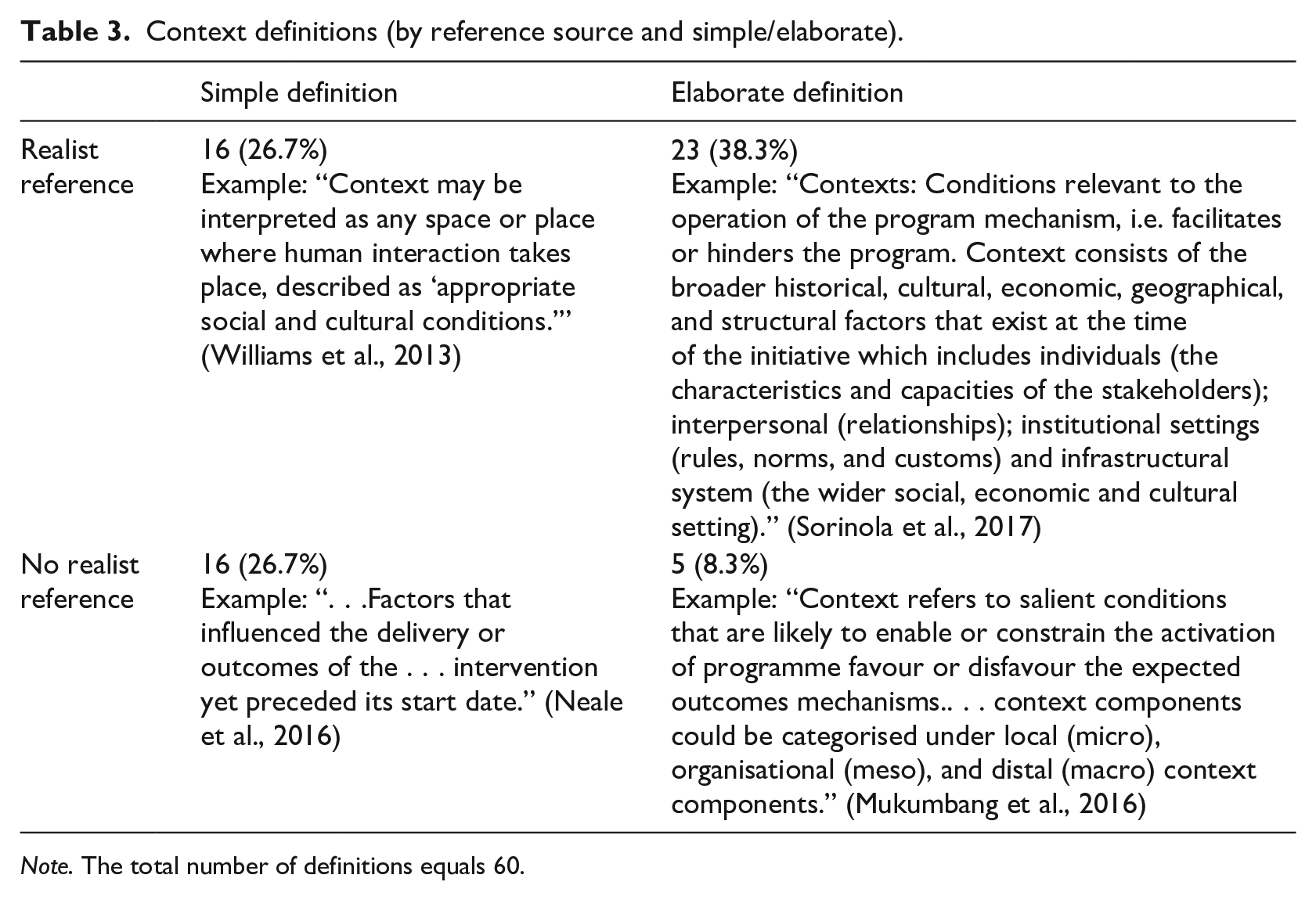

Building on these analyses, we also examined whether we could detect an association between reference sources and simple/elaborate definitions. As shown in Table 3, definitions with an explicit realist reference tended to be more elaborate (38%).

Table 3. Context definitions (by reference source and simple/elaborate).

Note. The total number of definitions equals 60.

What types of contextual factors are examined as part of CMO configurations in published realist evaluations?

As documented above, only about half of the case applications provided an explicit definition of context. However, all the case applications—to a greater or lesser extent—included contextual factors in the CMOs presented. Across the 126 case applications, we identified a total of 517 CMO configurations. These CMO configurations included 850 context factors.
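These totals imply simple averages that help convey the scale of the coded material. The Python lines below compute them; the averages are our own derivation rather than results reported in the review, and means of this kind can mask considerable variation across evaluations.

```python
N_EVALUATIONS = 126      # evaluations presenting at least one CMO configuration
N_CMOS = 517             # CMO configurations identified across those evaluations
N_CONTEXT_FACTORS = 850  # context factors coded within those configurations

cmos_per_evaluation = N_CMOS / N_EVALUATIONS
factors_per_cmo = N_CONTEXT_FACTORS / N_CMOS
factors_per_evaluation = N_CONTEXT_FACTORS / N_EVALUATIONS

if __name__ == "__main__":
    print(f"CMOs per evaluation: {cmos_per_evaluation:.1f}")              # 4.1
    print(f"Context factors per CMO: {factors_per_cmo:.1f}")              # 1.6
    print(f"Context factors per evaluation: {factors_per_evaluation:.1f}")  # 6.7
```

On average, then, each CMO configuration specified fewer than two contextual factors.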

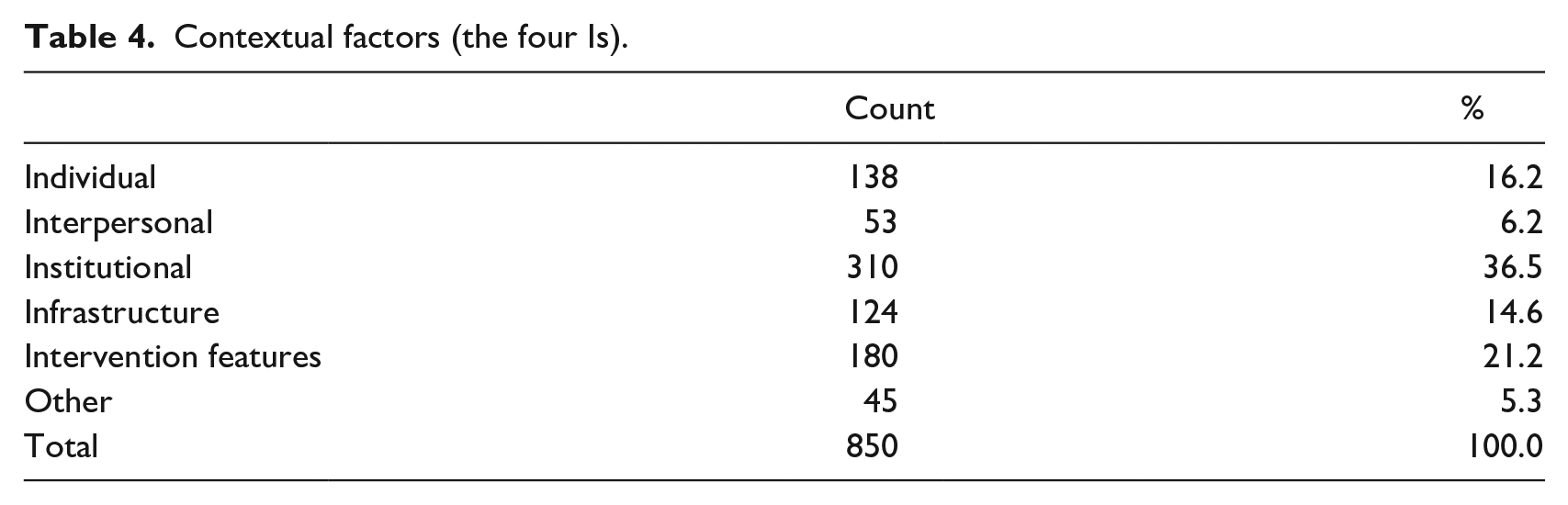

To answer the second research question, we first explored how the contextual conditions presented in these CMOs matched the four Is conceptualization of context proposed by Pawson et al. (2004). In coding the context factors, we found that authors often identified intervention features (21.2%) as context. For this reason, we added a fifth “I,” intervention, to the four Is framework. The most common types of contextual factors were institutional factors (36.5%) and, to a lesser extent, individual (16.2%) and infrastructural factors (14.6%) (Table 4).

Table 4. Contextual factors (the four Is).

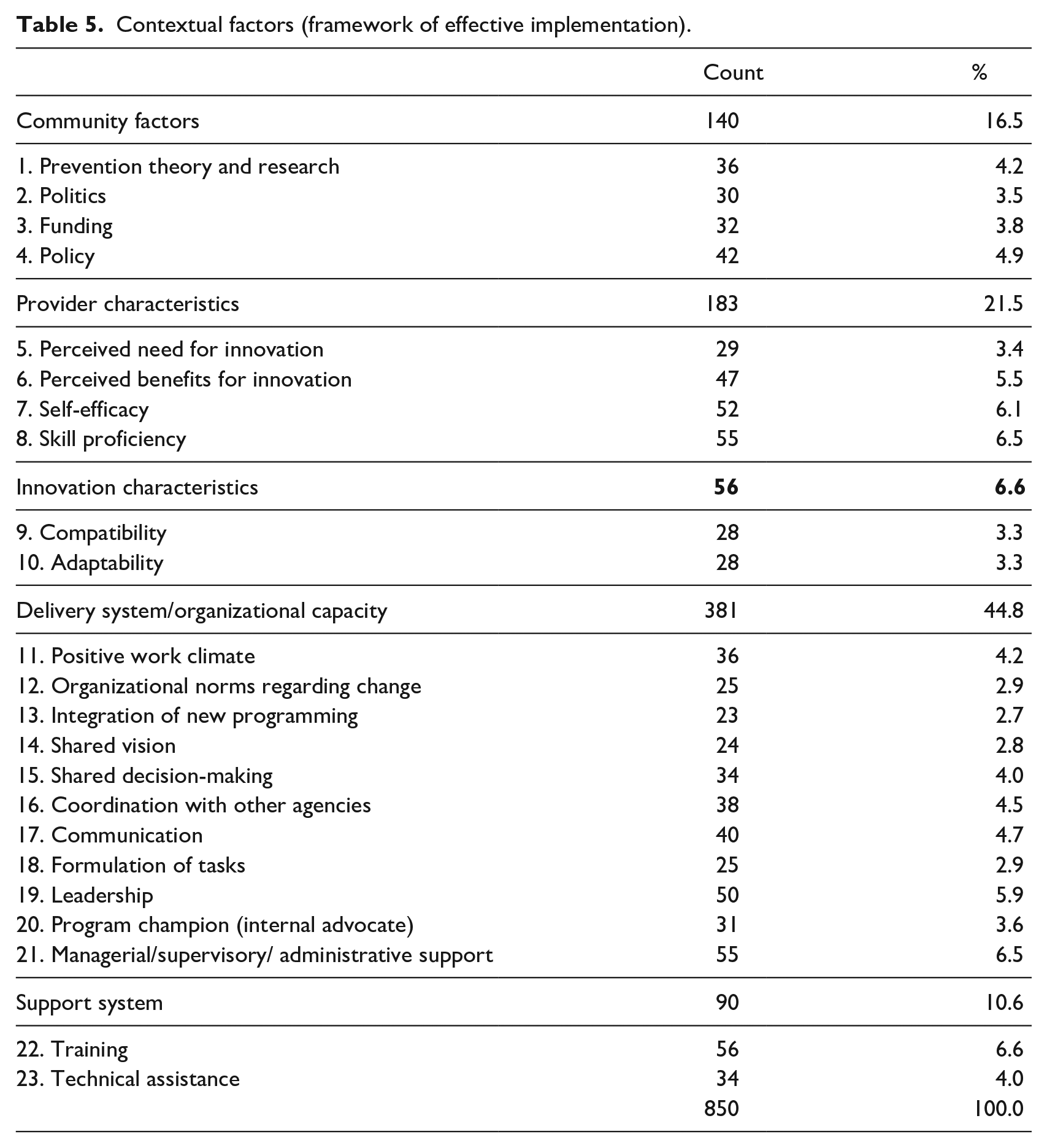

We then examined how the contextual conditions in the CMOs mapped onto Durlak and DuPre’s (2008) framework for effective implementation. The results are presented in Table 5. The table shows that the contextual conditions in the realist evaluations primarily revolve around factors in the delivery system and organizational capacity (44.8%) and provider characteristics (21.5%), both of which represent the immediate organizational context within which interventions are embedded. Within the delivery system and organizational capacity, the emphasis is primarily on managerial/supervisory/administrative support (6.5%) and leadership (5.9%). Within provider characteristics, the most common factors were skill proficiency (6.5%) and self-efficacy (6.1%), broadly speaking the capabilities of the staff implementing the intervention. Another prevalent factor is training (6.7%) within the support system, which reaffirms the emphasis on staff capabilities. Much as intervention features were sometimes presented as context, we found it difficult in some cases to differentiate between training as the intervention itself and training as part of the support system. Differences in applied categorizations notwithstanding, the framework’s categories largely covered the contextual factors identified in the cases.

Table 5. Contextual factors (framework of effective implementation).

In addition, we examined whether the different types of definitions identified under the first research question affected how context was operationalized. More specifically, we examined whether the number of contextual factors varied depending on whether an evaluation’s definition cited realist sources or was more (or less) elaborate. We found no clear pattern indicating that more elaborate definitions, or those explicitly referencing realist sources, were associated with more context factors (data not shown). At least quantitatively, there appears to be no detectable connection between the elaboration and source of a definition and the operational application of contextual factors in the CMOs.

What are the methodological challenges experienced by evaluators when examining context in published realist evaluations?

To better understand the methodological challenges realist evaluators experience when examining context, we examined the case applications for any discussion thereof. Methodological challenges were often discussed in the limitations and discussion sections of the manuscripts. Only a few authors reported methodological challenges in applying context as an analytical construct. In comparison, about one-third of the cases discussed challenges concerning mechanisms, another key concept in realist evaluation (Lemire et al., 2020). Echoing Greenhalgh and Manzano (2021), we found that mechanisms take center stage in methodological discussions.

The few challenges identified can be grouped into three broad issues: identifying the most salient contextual conditions, analytically differentiating between context and mechanisms, and describing the interplay between contextual factors, mechanisms, and the outcomes of interest.

In terms of identifying salient contextual conditions, Prashanth et al. (2014) observed that if social reality is viewed as an open system, in which the boundaries of what counts as context are themselves open, there is no end to the rival explanations that could potentially be included when examining the contextual conditions of an intervention. Accordingly, evaluators must prioritize which contextual factors are most relevant and important to include. In a similar vein, other authors reflected on the difficulty of identifying a set of relevant context factors (Cheyne et al., 2013; Marchal et al., 2010). None of the authors provided strategies or guidance on how contextual factors can be prioritized and subsequently included in CMOs. To be sure, there is no simple recipe for making these decisions; sound judgment is called for. However, more formalized guidance on how to select the most relevant contextual factors in a systematic and transparent manner would be of considerable value.

In terms of analytical differentiation, Bartlett et al. (2014) reported challenges in defining mutually exclusive categories of context but did not report how this challenge was resolved. The difficulty was not limited to distinguishing among contextual constructs: some authors also found it difficult to distinguish context from mechanisms (Bartlett et al., 2014; Sorinola et al., 2017) and from intervention components (Alvarado, 2017). This aligns with previous findings on the difficulty of differentiating between mechanisms, context factors, and intervention features (Lemire et al., 2020). Reflecting on these challenges, Sorinola et al. (2017) rightly suggested that tight definitions and operationalizations of the key concepts comprising CMOs could remedy some of these differentiation challenges.

In terms of describing the interplay between context factors, mechanisms, and outcomes, Marchal et al. (2010) noted the challenge of isolating the influence of context from that of the intervention on the outcome of interest. This challenge might stem from difficulties in clearly differentiating between context factors and mechanisms (Lemire et al., 2020), from unclear conceptualizations of context, and from how these conceptualizations are operationalized in the evaluation's design and data collection methods. Several authors noted the importance of mixing methods and drawing on multiple data sources (Kovacs and Corrie, 2016; Martin and Tannenbaum, 2017) when identifying and describing salient contextual factors and their interplay with mechanisms and outcomes. This observation resonates with the prevalence of qualitative and mixed-methods data sources in the realist evaluations (see Table 1). Even so, our practice would benefit from further development of analytical strategies for describing the interplay between context, mechanisms, and outcomes, and for gauging the relative influence of context versus the intervention on the outcome of interest.

Discussion

This review examines how context is conceptualized and operationalized in published realist evaluations. Three questions guided the review: (1) How is context conceptualized in published realist evaluations? (2) What types of contextual factors are examined as part of CMO configurations in published realist evaluations? and (3) What are the methodological challenges experienced by evaluators when examining context in published realist evaluations?

In what follows, we briefly consider the main findings for each question and discuss their implications.

Conceptualizing context in realist evaluations

As documented in the preceding pages, context is explicitly defined in only about half of the published case applications of realist evaluations. The definitions provided tend to emphasize the multilayered and multifaceted nature of context. Furthermore, those with an explicit definition tended to cite foundational realist literature, such as Pawson and Tilley's (1997) book Realistic Evaluation. Conceptually, only a few cases drew on existing implementation theory and research as sources for defining context.

The lack of conceptual clarity across many of the published realist evaluations is noteworthy given the central role of context in realist evaluations (Coldwell, 2019; Greenhalgh and Manzano, 2021). However, the finding aligns with the lack of definition of the equally important term mechanism in published realist evaluations (Lemire et al., 2020). Despite the central role and sustained interest in realist evaluation, conceptual ambiguity about the meaning and role of key terms, such as context and mechanism, persists.

We posit that the relative dearth of elaborate definitions, and the resulting ambiguity surrounding the term context, is unfortunate for several reasons. First, as Greenhalgh and Manzano (2021) observe, differing ontological and epistemological assumptions about what context is, and about its role in configurational causation, may lead to very different research designs and applications of methods, and ultimately to different understandings of what realist evaluation is (De Weger et al., 2020; Van Belle et al., 2016).

Second, the conceptual ambiguity surrounding the term results in limited transparency about the types of context examined within and across realist evaluations. By extension, unless we pursue and achieve hard-earned clarity, we run the risk of furthering fragmentation, as opposed to integration, in our collective thinking on and use of context in realist evaluation.

Finally, the conceptual ambiguity also poses an impediment to the ambitions of Pawson (2002) to catalog CMO configurations and accumulate knowledge of how they work across a broad range of contexts. If we are to pursue this idea of knowledge accumulation across realist evaluations, we will need some degree of shared conceptual grounding.

The motivation and purpose of the present contribution are not to promote a one-size-fits-all definition of what context is or should be. Such standardization would only stifle reflective evaluation practice and would inevitably fall short of accommodating the broad range of contextual conditions in which real-world interventions are embedded. Rather, the aim of the present contribution is to promote a more disciplined and transparent use of the term context, one in which its definitional implications in terms of ontology, epistemology, and elements are made clear. We suspect that future conceptualizations of context can be aided by stronger theoretical integration between realist evaluation and implementation research. In particular, we found that Durlak and DuPre's implementation framework provides a useful model for mapping which elements realist evaluators empirically treat as contextual factors. This avenue of theorizing should be explored further, and the underlying ontological assumptions discussed above should be clarified in the process. Coldwell (2019) and, in part, Blamey and MacKenzie (2007) have drawn attention to the methodological implications, and potential pitfalls, of such underlying assumptions.

Operationalizing context in realist evaluations

When realist evaluators operationalize context as part of CMO configurations, a broad range of contextual factors are included. Emphasis is commonly placed on organizational factors, especially managerial, supervisory, and leadership support, as well as staff capabilities/skills and training. At the same time, the 850 context factors identified in the present review covered every element of Durlak and DuPre's (2008) implementation framework, including factors such as leadership, political environment, technology, culture and climate, and norms and practices. It is also worth noting that more than 20 percent of the context factors were features of the intervention itself (see Table 5), suggesting a potential conflation of context and intervention features. Adding further complexity, what constitutes part of an intervention in one evaluation may be considered context in another (Blamey and MacKenzie, 2007). Furthermore, because context is complex, dynamic, relational, and temporal (Coldwell, 2019), contextual conditions may themselves change over the course of an intervention, whether through configurational changes within them or through changes in conditions that fall outside the evaluation's scope.

Greenhalgh and Manzano (2021) point to wider theoretical and methodological implications that stem from the underlying assumptions about context, and how contextual conditions interact in configurations. Despite the complexity of the effort, they call for an understanding of context as part of a configurational understanding of causation and with an emphasis on the explanatory value of capturing context.

Our position is that several types of causal reasoning could, and likely would, be relevant when capturing context in realist evaluations. In fact, thoughtfully combining generative causation with configurational and/or probabilistic causal reasoning would, in our view, enhance the explanatory strength of realist evaluations and strengthen the evidentiary grounding for conclusions about the interplay between context, mechanisms, and outcomes.

Furthermore, the large number of contextual conditions, and the conflation of context and intervention features, only make the call for further theorizing on context more pressing. In the context of theory-based evaluation, Funnell and Rogers (2011: 351) have advocated for "program archetypes," that is, "classes of interventions that are used to activate mechanisms for change." Common archetypes include information, incentives and sanctions, case management, community capacity building, and direct service delivery programs. The idea of archetypes is promising and could be pursued in relation to both mechanisms and context factors. Pursuing it might alleviate some of the methodological challenges raised by realist evaluators in relation to context and potentially create a conceptual vantage point for exploring demi-regularities in CMO configurations.

Methodological challenges related to examining context in realist evaluations

Notably, only a few authors discuss methodological challenges related to conceptualizing or applying contextual factors in realist evaluations, let alone address the above considerations. The challenges raised by realist evaluators primarily concern identifying the most salient contextual conditions, analytically differentiating between context and mechanisms, and describing the interplay between contextual factors, mechanisms, and the outcomes of interest.

Arguably, any evaluation study, realist or not, should be explicit and systematic in how key constructs are defined and operationalized. One key methodological challenge, identified but seldom addressed explicitly and systematically, is how to identify the most salient contextual factors to include in the CMOs. In fact, none of the articles in the present review provided a detailed account of how contextual factors were included in or excluded from the CMO configurations.

This challenge is not unique to realist evaluation. Lemire and his colleagues identified a similar challenge in identifying salient influencing factors and alternative explanations in contribution analysis (arguably a hybrid of theory-based and realist evaluation) (Lemire et al., 2012). They went on to propose the Relevant Explanation Finder, a rubric-based procedure in which the influence of contextual factors (and alternative explanations) is gauged against five criteria: certainty, robustness, range, prevalence, and theoretical grounding. Other evaluators have since adapted the technique further (Biggs et al., 2014). To be sure, many other approaches and analytical techniques are available for determining the relative salience of different context factors and, relatedly, for describing the interplay between context factors, mechanisms, and outcomes. These include case-based approaches (e.g. qualitative comparative analysis and process tracing), statistical analyses (e.g. structural equation modeling), and qualitative coding techniques (e.g. causation coding), among others. Modeling techniques that combine CMO configurations with causal loop diagrams or stock and flow diagrams are also worth considering.

The overarching aim of this article is to draw attention to the conceptualization, operationalization, and methodological challenges related to context in realist evaluations. Our review begins to reveal the range of ways in which context is conceptualized and applied in published realist evaluations. Informed by these findings, and toward realizing the full potential of context in realist evaluation, we recommend a move toward more disciplined and transparent use of context in realist evaluation. Such a move may allow for greater conceptual clarity and perhaps even alleviate some of the ontological, epistemological, and methodological challenges related to the use of context in realist evaluation.

Footnotes

Acknowledgements

The authors thank Francois Lemire for linguistic support.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by institutional resources alone.