Abstract

Realist evaluation and experimental designs are both well-established approaches to evaluation. Over the past 10 years, realist trials, evaluations that purposefully combine realist evaluation and experimental designs, have emerged. Informed by a comprehensive review of published realist trials, this article examines to what extent and how realist trials align with quality standards for realist evaluations and randomized controlled trials and to what extent and how the realist and trial aspects of realist trials are integrated. We identified only a few examples that met high-quality standards for both experimental and realist studies and that merged the two designs.

Introduction

There is a long-standing tradition of using experimental designs in evaluation. The primary purpose of experimental designs, commonly referred to as randomized controlled trials (RCTs), is to provide an accurate estimate of intervention impact. The defining feature of RCTs is that individuals (or groups of individuals) are allocated at random to either an intervention or a control group. The underlying logic of random allocation is that any differences among participants, observable or unobservable, are on average evenly distributed between the two groups. Accordingly, any observed outcome differences between the two groups can reasonably be attributed to the intervention being studied.
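The balancing logic of random allocation can be illustrated with a small simulation. The following is a minimal sketch, not part of the review: the function name `simulate_balance`, the sample size, and the standard-normal "unobserved trait" are all assumptions chosen purely for illustration.

```python
import random
import statistics


def simulate_balance(n=10_000, seed=0):
    """Randomly allocate n individuals to two arms and return the
    difference in the mean of an unobserved trait between the arms."""
    rng = random.Random(seed)
    # Hypothetical unobserved characteristic (e.g. motivation), drawn
    # from a standard normal distribution for illustration only.
    trait = [rng.gauss(0.0, 1.0) for _ in range(n)]
    # Simple random allocation: shuffle the individuals, then assign
    # the first half to the intervention arm and the rest to control.
    ids = list(range(n))
    rng.shuffle(ids)
    half = n // 2
    intervention = [trait[i] for i in ids[:half]]
    control = [trait[i] for i in ids[half:]]
    return statistics.mean(intervention) - statistics.mean(control)


if __name__ == "__main__":
    diff = simulate_balance()
    print(f"Difference in mean unobserved trait: {diff:.3f}")
```

With a large sample, the difference between the arms is expected to be close to zero even though the trait was never measured or used in the allocation. In small samples, chance imbalances are more likely, which is one reason baseline equivalence is checked in practice.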

There are several benefits to RCTs. If designed and implemented well, RCTs provide the least biased mean estimates of intervention impact. In this way, RCTs can help determine whether a cause–effect relation exists between the intervention and the outcome. There are also limitations. As Jamal et al. (2015) argue,

RCTs of such interventions are often criticized as failing to open the “black box”—that is, they examine quite crude questions about “what works” without explaining the underlying processes of implementation and mechanisms of action, and how these vary by contextual characteristics of person and place. (n.p.)

The realist evaluation (RE) tradition originally gained momentum in evaluation circles as an alternative to experimental designs—as a counter to so-called “black box evaluations” (Pawson and Tilley, 1997). The main question driving RE is to uncover how an intervention works, for whom, and under what conditions (Pawson and Tilley, 1997). In line with this thinking, RE structures the data collection and analysis around context–mechanism–outcome (CMO) configurations. These CMO configurations are intended to capture the generative processes (mechanisms) that in a specific setting (context) contribute to one or more changes (outcomes) among intervention participants. The RE approach is theory-driven, which gives a flexibility that is particularly appropriate for the evaluation of complex interventions in complex settings, such as interventions that are developed and refined during implementation, vary in activities depending on setting, and/or are tailored to specific needs of individuals comprising the intervention group.

Over the past 10 years, realist trials have been proposed as an evaluation approach that combines the strengths of RCTs in providing evidence on

However, this proposition is not without controversy. Leading realist scholars have argued that RE and RCTs are incompatible at the ontological and epistemological level, citing differences in the underlying understanding of causation and closely related mechanisms, as well as the inability of RCTs to account for context and complexity of interventions (Marchal et al., 2013; Van Belle et al., 2016).

Despite extensive debates, published case examples of realist trials continue to emerge. As part of a recent review of published REs (Lemire et al., 2020; Nielsen et al., 2022), the authors of the present article identified more than a few evaluations combining an experimental design with an RE approach. Motivated by the identification of these real-world case applications, the purpose of the present article is to examine and describe the methodological features of realist trials to further our knowledge on how to evaluate complex interventions in practice. We hope that these examples may provide a fertile middle ground for further exploration. Specifically, we were interested in examining the following two questions:

To what extent and how do realist trials align with quality standards for RE and RCTs?

How are the RE and RCT aspects of realist trials integrated?

Toward answering these two questions, we reviewed published realist trials and systematically coded these according to the type of integration and established quality criteria for REs and RCTs.

The article is structured as follows. In the first section, we briefly describe current debates about the compatibility of REs and RCTs, highlighting specific ontological and epistemological points of contention related to integrating them in the form of realist trials. In the second section, we describe the review methodology that provides the empirical foundation for the article, including a description of the quality standards applied in the coding of the realist trials. In the third section of the article, we present the main findings of the review structured around the two guiding research questions. Finally, we conclude the article with a discussion on the implications of our findings for realist trials.

What is a realist trial—Unicorn or oxymoron?

Before presenting the empirical findings of our review, we introduce the current debates about the compatibility of REs and RCTs and the main positions taken in them.

The theoretical debate on the possibility of realist trials emerged with an article by Bonell et al. (2012), proposing that conventional critiques of experimental designs made by realist scholars should be reconsidered and that RCTs could be adapted and used to evaluate complex social interventions while staying within the theoretical tenets of realism (Bonell et al., 2012). Toward this aim, the authors suggested that realist trials should place emphasis on understanding the effects of program components separately as well as in combination, examine the underlying mechanisms of change, describe the effects of interventions and their components in different contexts, integrate qualitative and quantitative research methods, and serve to build and validate program theories of interventions (Bonell et al., 2012). In a parallel publication, Porter and O’Halloran (2012) similarly argued that RCTs could

The proposition of realist trials provoked an immediate response from a number of eminent realist scholars (Marchal et al., 2013), who labeled realist trials an “oxymoron” and argued that the “adjective ‘realist’ should continue to be used only for studies based on a realist philosophy and whose analytic approach follows the established principles of realist analysis” (p. 124). This critique was followed by a series of rejoinders (Bonell et al., 2013; Jamal et al., 2013) and rejoinders to the rejoinders (Bonell et al., 2016; Porter, 2015a, 2015b; Van Belle et al., 2016). The debate continues (Bonell et al., 2018; Porter et al., 2017a). Scanning across these exchanges, there appear to be three main points of contention in relation to integrating REs and RCTs: (1) differences in relation to the ontological and epistemological foundations of causation, (2) differences in conceptualization and operationalization of mechanisms, and (3) differences in the ability to account for context and complexity of interventions. We will consider these in turn.

The first point of contention emerges from the ontological and epistemological differences between RCTs and RE. RCTs are grounded on a successionist account of causation, whereby causality can be demonstrated based on the observed regularity between a particular intervention and a particular outcome. Accordingly, as Marchal et al. (2013) observe, RCTs rely on the use of counterfactuals in order to demonstrate attribution (“What would have happened in cases without intervention?”) and on statistical analyses to quantify the causal impact of interventions.

In marked contrast, RE rests on a generative account of causation, whereby a sequence of unobserved entities, so-called mechanisms, are activated in specific contexts to generate one or more outcomes. Moreover, RE recognizes that an effect is sometimes produced by a complex combination of causes (or causal packages), which is why a configurational approach to understanding and explaining how and why interventions work is imperative. Accordingly, realist causal analysis focuses on identifying “the configuration that links the outcome to mechanism(s) triggered by the context, often combining quantitative and qualitative data” (Van Belle et al., 2016: n.p.). Because of these ontological and epistemological differences, RCTs and REs are arguably paradigmatically incommensurable (Marchal et al., 2013; Van Belle et al., 2016). Labeling realist trials an “oxymoron,” Marchal et al. (2013) conclude: “The resulting methodological choices regarding causality make it difficult to infuse RE principles in RCT designs” (pp. 124–125). In response, proponents of realist trials reject the successionist/generativist dichotomy on causation, which they consider a category error.

In extension of these ontological and epistemological differences, another point of contention concerns how REs and RCTs conceptualize and operationalize mechanisms, a central term in RE. Since the primary aim of RCTs is to determine the impact of an intervention, examining the underlying mechanisms of an observed change is largely outside their purview. If examined, mechanisms tend to be conceived as variables in statistical analysis (such as mediator analyses), where the primary aim is to examine how mechanisms influence the impact of an intervention (Marchal et al., 2013; Van Belle et al., 2016).

In RE, mechanisms are central to understanding how, for whom, and under what circumstances an intervention works. As evidenced in past reviews (Dalkin et al., 2015; Lemire et al., 2020), the term mechanism has been defined and operationalized in many different ways. In general terms, mechanisms refer to unobservable entities that are activated in specific contexts to generate one or more outcomes (Van Belle et al., 2016). These mechanisms are a combination of a change in people’s reasoning (e.g. in their values, beliefs, or attitudes) and/or resources (e.g. information, skills, material resources, and support) available to them. As Van Belle et al. (2016) argue, this generative conceptualization moves the examination of mechanisms beyond variables in statistical analysis and toward a configuration-oriented perspective that acknowledges the complex causation adopted in scientific realism.

This brings us to the third point of contention: differences in the ability of RCTs and REs to account for the context and complexity of interventions. RCTs require interventions to be relatively stable and consistent in their implementation across different settings and contexts. Recognizing that context may influence the impact of interventions, RCTs may use statistical techniques to adjust the impact estimates for the most salient context factors (e.g. urban vs rural schools) and may apply different statistical techniques to examine impact estimates for specific subgroups. The primary aim is to account for the influence of contextual factors by distributing variations in context evenly through random allocation.

Realists in turn consider context as an irreducible source of explanation (Greene, 2005; Nielsen et al., 2022). Accordingly, the concept of context plays a central role in RE. Wong et al. (2017) describe context as (1) preexisting conditions for an intervention, (2) consisting of multiple layers and (3) multiple factors, (4) enabling or disabling of the mechanisms, and (5) through its interaction with mechanisms potentially constituting new contexts. Accordingly, examining context in an RE reaches beyond variable-based statistical adjustments to include a more fine-grained analysis of the interaction between a mechanism and the context within which it is embedded. A recent review of published RE studies documents a high reliance on qualitative data (Renmans and Pleguezuelo, 2023).

Irrespective of these largely theoretical exchanges on the foundational differences between RE and RCTs, realist trials continue to be published. As such, we are faced with an apparent methodological paradox: While realist trials are arguably paradigmatically incompatible, published case applications of realist trials continue to emerge. Are these published realist trials methodologically highly desirable unicorns? Or are published realist trials, as suggested by Marchal et al. (2013), merely oxymorons that ignore key tenets of the RE approach? Toward unpacking this apparent paradox, we conducted a review of published realist trials to empirically examine the extent to which and how realist trials align with quality standards for REs and RCTs, as well as how the realist and trial aspects of realist trials are integrated.

Review methodology

This review of realist trials emerges from a broader review of REs (Lemire et al., 2020; Nielsen et al., 2022). Unlike that broader review, the present review focuses exclusively on realist trials. A more detailed description of the review methodology is provided in Supplemental Materials.

Search and screening

The review used a multipronged search strategy with the purpose of identifying RE studies in the time period from 1997 to 2017—the two decades after Pawson and Tilley’s seminal publication on realistic evaluation. Based on a full-text review of 122 publications describing REs, we identified a total of 16 REs that combined RE with an RCT design. While the RCT design was mentioned in the RE publication, a detailed description of the design was usually presented in a separate publication. For this reason, we identified these “twin” publications describing the RCT design for each of the 16 REs. The 31 publications describing the 16 published case applications of realist trials are listed in the references and in Table S1 in Supplemental Materials.

Coding and analysis

To address the two main research questions, the articles were coded by authors 2 and 3 according to a coding framework structured around (1) the quality of the RE and RCT design features of the realist trials and (2) the characterization of the integration of the realist and RCT aspects of the realist trials. The coding frameworks are available in Tables S3 to S5 in the Supplemental Materials.

Quality of RCT design

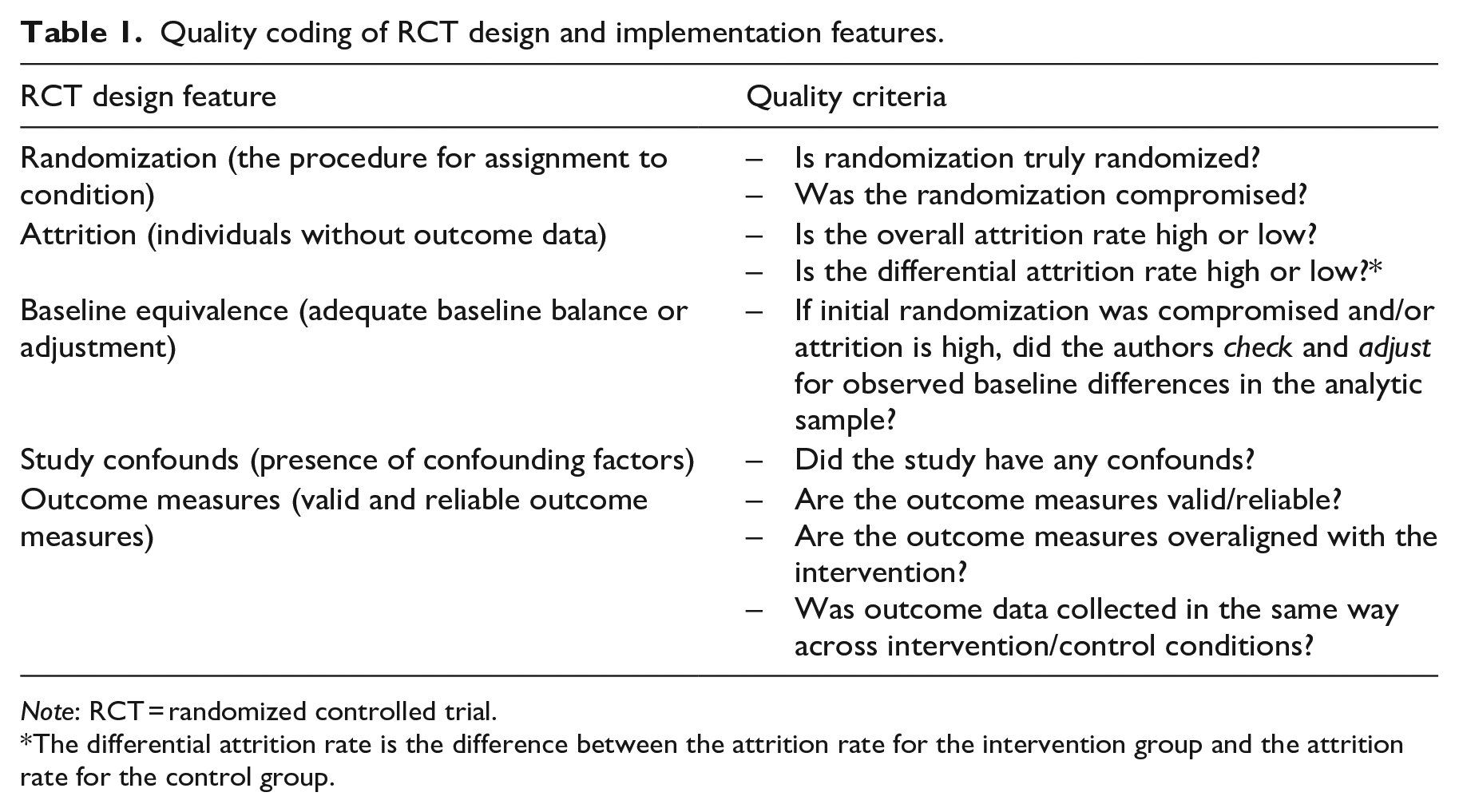

The coding of the quality of the RCT design was informed by the What Works Clearinghouse Group Design Standards (Version 4.1) (What Works Clearinghouse, 2020) and the Cochrane Collaboration Standards (Higgins et al., 2021), both of which are well-established standards for RCTs in education and health, respectively. For the present purposes, we focused our coding on five key design features of RCTs that are central to both standards: randomization procedure, attrition, baseline equivalence, study confounds, and outcome measures. These design features and associated quality criteria are outlined in Table 1. A more detailed description of the coding framework is provided in Table S5 in the Supplemental Materials.

Table 1. Quality coding of RCT design and implementation features.

The differential attrition rate is the difference between the attrition rate for the intervention group and the attrition rate for the control group.
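The attrition measures used in the coding can be written out as a short worked example. This is a minimal sketch under stated assumptions: the function names and the example counts are hypothetical, and taking the absolute value of the difference is an assumption made here so that the result does not depend on which group is listed first.

```python
def attrition_rate(randomized, analyzed):
    """Share of randomized units that are missing from the analytic sample."""
    if randomized <= 0 or not 0 <= analyzed <= randomized:
        raise ValueError("need 0 <= analyzed <= randomized and randomized > 0")
    return (randomized - analyzed) / randomized


def attrition_summary(n_int, a_int, n_ctl, a_ctl):
    """Overall, per-group, and differential attrition for a two-arm trial.

    n_* are the numbers randomized, a_* the numbers in the analytic sample.
    """
    r_int = attrition_rate(n_int, a_int)
    r_ctl = attrition_rate(n_ctl, a_ctl)
    return {
        "overall": attrition_rate(n_int + n_ctl, a_int + a_ctl),
        "intervention": r_int,
        "control": r_ctl,
        # Difference between the two group rates (absolute value: assumption).
        "differential": abs(r_int - r_ctl),
    }


if __name__ == "__main__":
    # Hypothetical trial: 200 randomized per arm, 170 and 180 analyzed.
    summary = attrition_summary(200, 170, 200, 180)
    for name, rate in summary.items():
        print(f"{name}: {rate:.1%}")
```

In this hypothetical trial, the intervention group loses 30 of 200 participants and the control group 20 of 200, so the two groups differ in attrition by five percentage points while the overall attrition is 50 of 400.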

Based on our coding of these five dimensions, we categorized each RCT design into one of three groups:

These three groups reflect the What Works Clearinghouse categorization of group design studies.

Quality of realist design

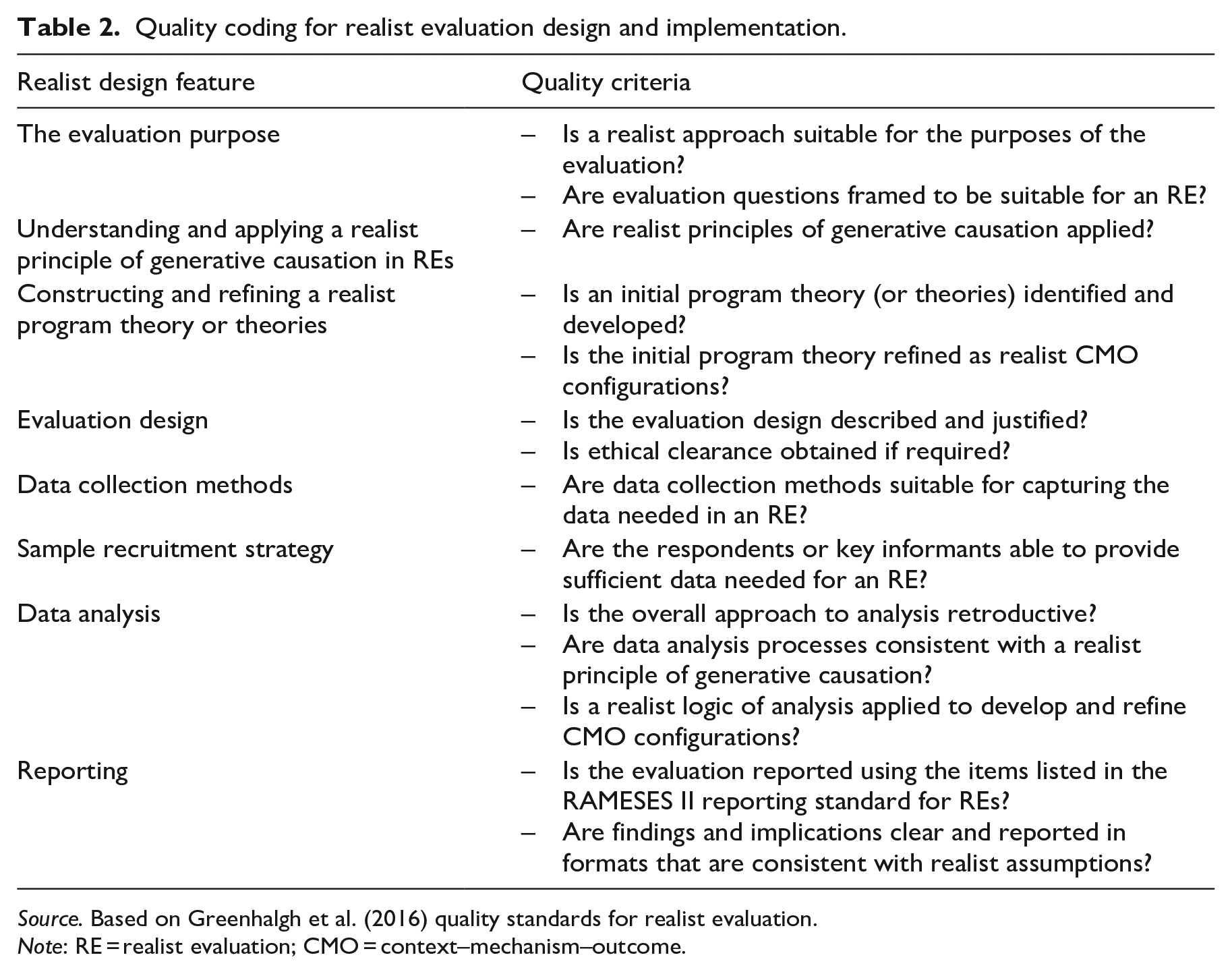

The quality coding of the realist design was based on the Quality Standards for Realist Evaluation—so-called RAMESES standards (Greenhalgh et al., 2016). The quality design standards are structured around eight key features of REs: the evaluation purpose, understanding and applying a realist principle of generative causation, constructing and refining a realist program theory or theories, evaluation design, data collection methods, sample recruitment strategy, data analysis, and reporting. Each of these features involves one to three quality criteria, which are scored according to a rubric with four categories: inadequate, adequate, good, and excellent adherence to the standard. The quality features and corresponding criteria are presented in Table 2 and detailed in Table S4 in the Supplemental Materials. See Greenhalgh et al. (2016) for a more detailed description of the quality criteria.

Table 2. Quality coding for realist evaluation design and implementation.

Based on our coding of these eight dimensions, we categorized each RE into one of three groups:

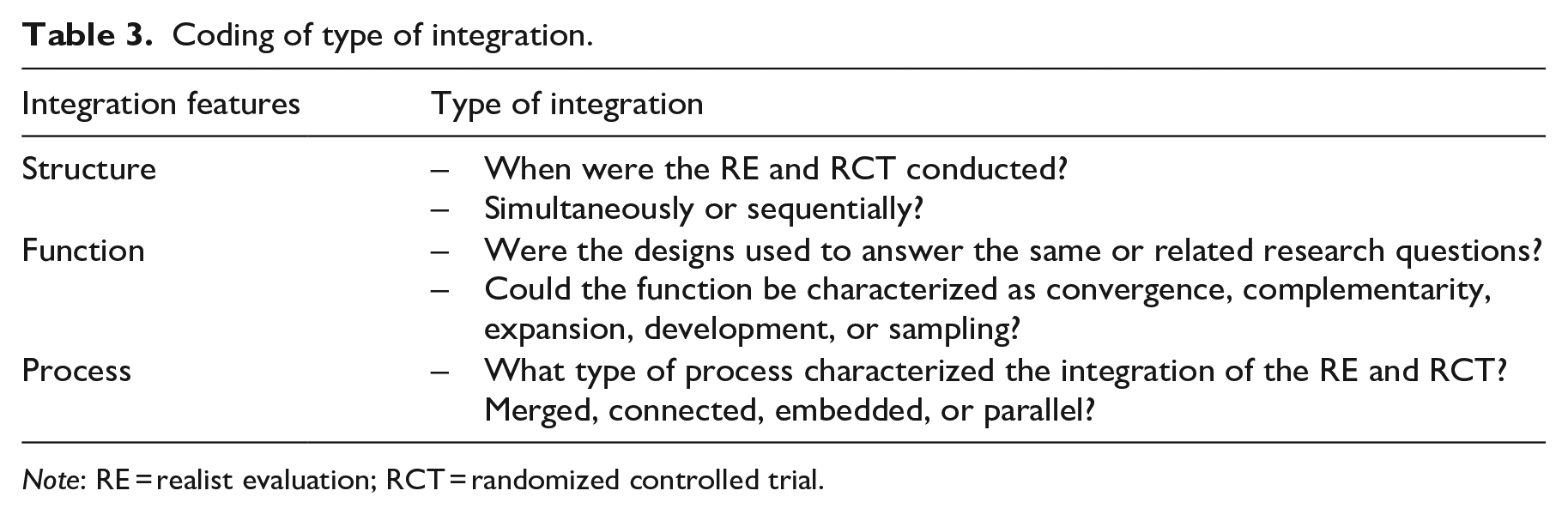

Integration of REs and RCTs

When attempting to grasp how RE and RCTs are integrated, it is useful to draw inspiration from efforts to catalog mixed-methods designs, as REs and RCTs tend to rely on qualitative and quantitative data, respectively. Palinkas et al. (2011) used, and revised, existing taxonomies for classifying how mixed-methods designs can be integrated according to (1) structure (timing of designs as either sequential or simultaneous), (2) function (how designs were used to answer the same or related research questions), and (3) process (strategies for combining designs) of mixed-methods studies.

We found this typology useful and, with minor adaptations, applicable to categorizing how realist RCT studies were integrated in terms of structure, function, and process. Adaptations included the redefinition of categories to study designs and the addition of one process category, “parallel,” to reflect the existence of parallel studies. These features are presented in Table 3.

Table 3. Coding of type of integration.

For each of the integration features it was possible to choose one of the subcategories. We refer to Table S3 in the Supplemental Material for a detailed description of each category. We used the framework to characterize

Review limitations

No review is without its limitations. The design standards for RCTs are adapted from existing design standards. The RAMESES standards allow for considerable variation, which also implies that quality ratings may vary. The integration rating has been adapted to the current purpose and has not been applied before. Currently, no consensus quality standards for realist trials exist.

Furthermore, this review solely pertains to published REs. These published applications represent a smaller subsample of all REs conducted during the time period. Moreover, the published subsample of REs may differ in important ways from non-published REs. For this reason, the generalization of findings beyond the boundaries of the sample should be approached with caution. Furthermore, insofar as realist trials may have been published without using RE in title, keywords, or abstract, they may have been inadvertently excluded. Despite these limitations, we maintain that the present review provides important and useful insights into the nature of published realist trials.

Findings

This section presents the main findings of our review of the realist trials. The section is structured around the two guiding research questions.

To what extent and how do realist trials align with quality standards for realist evaluations and randomized controlled trials?

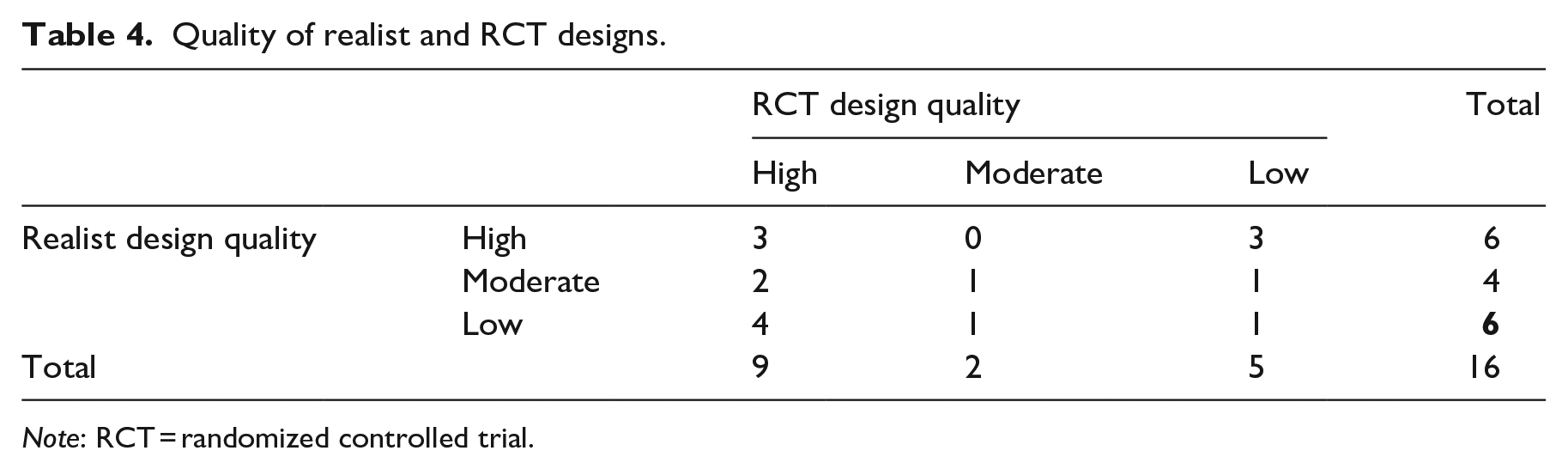

The results of the study design quality coding are summarized in Table 4. As the table shows, the realist designs were evenly distributed across the design quality categories (i.e. from low to high quality).

Table 4. Quality of realist and RCT designs.

Six RE designs were of high quality with good or excellent adherence to all realist design standards. These REs were in their design, data collection, and analysis explicitly and consistently aligned with realist principles.

Another four RE designs were of moderate quality with adequate or good adherence to most realist design standards. The purpose and research questions in these REs were consistent with RE and involved CMO configurations. However, the sampling, data collection, and analysis stages were not explicitly consistent with realist methodology.

Finally, there were six low-quality realist designs, characterized by low adherence to most of the realist design standards. The purpose and guiding evaluation questions for these evaluations did not align with a realist explanation of how and why interventions work. The evaluations involved significant misunderstandings of realist generative causation, and it was unclear how the data collection and analysis connected with the CMO configurations.

The RCT designs were generally of high quality. Nine RCT studies were of high quality, characterized by true randomization, low overall/differential attrition, no study confounds, and valid-reliable outcome measures. Another two RCT designs were of moderate quality because the studies had high overall/differential attrition, but the authors adjusted for baseline differences in the analytic sample. The two studies had no study confounds and used valid-reliable outcome measures. Finally, there were five RCT designs of low quality. Three of the studies had high attrition rates, and the authors did not adjust for baseline differences in the analytic sample. The other two studies were of low quality due to a compromised randomization procedure and the presence of a study confound.
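The grouping described in this paragraph can be summarized as a simple decision rule. The following is an illustrative sketch only, not the coding framework itself (which is given in the Supplemental Materials): the class `RctCoding`, the function `rate_rct`, and the boolean fields are hypothetical, and treating any compromised randomization, confound, or weak outcome measure as an automatic low rating is an assumption inferred from the cases described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RctCoding:
    """Hypothetical yes/no coding of the five RCT design features."""
    true_randomization: bool
    low_attrition: bool            # low overall and differential attrition
    baseline_adjusted: bool        # adjusted for baseline differences
    no_confounds: bool
    valid_reliable_outcomes: bool


def rate_rct(c: RctCoding) -> str:
    """Return 'high', 'moderate', or 'low' following the pattern in the text."""
    # Assumption: these three features are treated as necessary conditions.
    if not (c.true_randomization and c.no_confounds and c.valid_reliable_outcomes):
        return "low"
    if c.low_attrition:
        return "high"
    # High attrition: moderate only if baseline differences were adjusted for.
    return "moderate" if c.baseline_adjusted else "low"


if __name__ == "__main__":
    example = RctCoding(True, False, True, True, True)
    print(rate_rct(example))
```

The example codes a trial with sound randomization and measures but high attrition and baseline adjustment, which corresponds to the moderate-quality group described above.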

Of particular interest in the present context, we found three realist trials (Alvarado et al., 2017; MacKenzie et al., 2009; Martin and Tannenbaum, 2017) that adhered well to quality standards for both REs and RCTs, respectively.

We now consider how the 16 studies integrated the realist and RCT aspects of their designs.

How are the RE and RCT aspects of realist trials integrated?

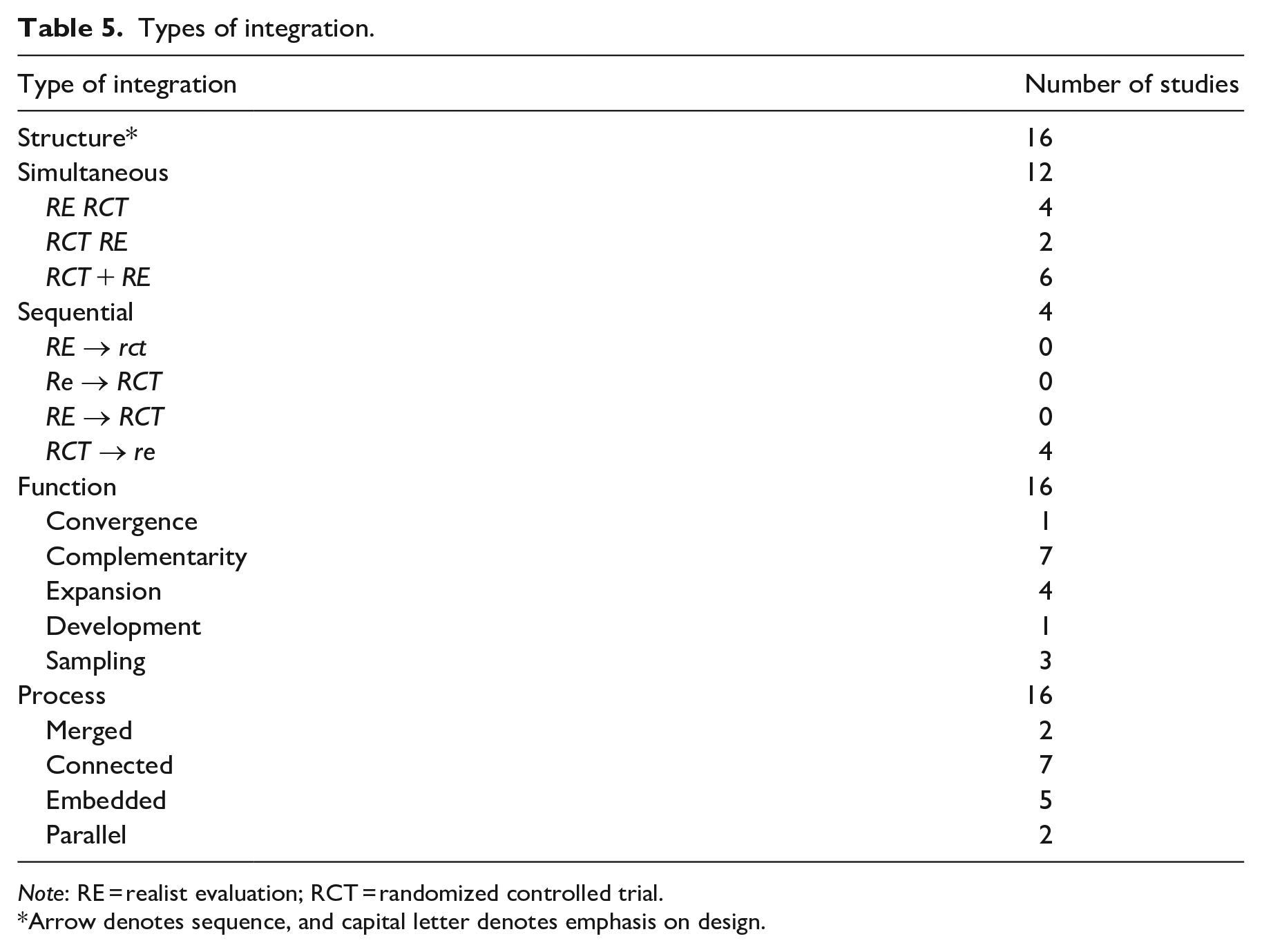

The results of the integration coding are presented in Table 5.

Table 5. Types of integration.

Arrow denotes sequence, and capital letter denotes emphasis on design.

The table indicates that the majority of the studies were conducted with a simultaneous structure, albeit weighted differently. Functionally, in about half of the studies the RE and RCT served complementary purposes, and one-third used one design to expand upon questions raised by the other design. Correspondingly, the process of integration tended toward connected or embedded studies, in which one dataset built upon the other or played a supportive role. Across these trials, it stands out how differently the studies were carried out.

We then analyzed

Fused integration: Integrating RE and RCT design, analysis, and conclusions

In the first type of integration, the realist trials integrate the design, analytical processes, and conclusions of the RCT and RE. In this category, we identified four realist trials. As exemplars of two different approaches, we highlight two studies.

Martin and Tannenbaum (2017) carried out an RE alongside an RCT of the EMPOWER intervention targeting older chronic benzodiazepine consumers. The RE and RCT were reported separately. The RE focused on the intervention group and was designed around a program theory based on Michie’s capability, opportunity, and motivation model for behavioral change as mechanisms triggered by the intervention. The EMPOWER intervention consisted of a brochure containing a presentation of evidence of risks associated with drug use and a self-assessment component. The RE used both quantitative and qualitative methods for data collection. Quantitative methods were used to collect baseline, endline, and follow-up data used for both the RE and RCT. For each mechanism and outcome variation, they conducted statistical tests (independent

Von Thiele Schwarz et al. (2017) report on the use of participatory, rapid-cycle Kaizen processes to improve employee well-being in two cases (postal services and a hospital). The authors argue that the use of Kaizen is a mechanism driving improvement in both organizational and employee objectives. Both studies were designed as cluster RCTs (one with a wait-list design). Quantitative data on contexts, mechanisms, and outcomes were collected at baseline and two follow-up periods. A program theory involving proximal, intermediate, and distal outcomes was developed, and hypotheses testing the CMO configurations were formulated. The authors used structural equation modeling (SEM) to test the program theory and its associated hypotheses for CMO configurations. Effectively, they treated the mechanism (use of Kaizen boards) as a variable in an SEM analysis based on quantitative questionnaire data. The two studies vary in that one treated the use of Kaizen boards as a mechanism for implementing action plans for employee well-being and prior use of Kaizen boards (for productivity purposes) as a context potentially activating that mechanism. Further findings from the studies were reported separately.

The exemplars highlight different ways through which the program theory and its hypothesized CMO configurations were analyzed. One used quantitative data and SEM to test hypotheses, while the other applied mixed methods to identify contextual factors influencing variations in outcome. In both cases, the program theory was central to designing the study. While Martin et al. only looked at the intervention group to conduct the RE analysis, Von Thiele Schwarz et al. directly used the control group results to analyze whether the same structural model (for relations between context, mechanisms, and outcomes) held equally for the intervention and control groups.

Nested integration: RE nested within RCT or RCT nested within RE

This type of integration can be described as an RE nested within an RCT: The RCT and RE are planned to be conducted alongside each other, and data are collected

Common to these studies is the explicit statement that RE can explain outcomes, but with limited analytical integration between context and mechanisms on the one hand and outcomes of the RCT on the other hand.

One such exemplar is MacKenzie et al. (2009) (see also Koshy et al., 2012), who applied an RCT as a tool to assess impacts and an RE to further understand variations. Contrasting outcomes were used to purposively sample participants from both intervention and control groups for the analysis of CMO configurations. The study concerned a weight management intervention informed by the transtheoretical model targeting smoking cessation participants from deprived areas in Glasgow, Scotland, over 24 weeks. Quantitative results from the trial, which showed no significant change in weight loss or smoking cessation, were reported separately, but the two sets of results were related to each other. The study identified physiological and psychological change mechanisms. Based on differing outcomes, a qualitative analysis identified emergent themes shedding light on how mechanisms worked in different contexts. The authors concluded: “participants who attempted behavior changes simultaneously were more likely to succeed at one or more changes as compared to those who preferred a sequential approach” (Koshy et al., 2012: 8).

Successive integration: Realist evaluation conducted after RCT to explain the results; conclusions are not integrated

The third type of integration is a successive design wherein an RCT was conducted to establish intervention impact, while an RE study was carried out afterward to explain

The majority of the studies in this group (Bhanbhro et al., 2016; Byng et al., 2005; Keurhorst et al., 2016b; McQueen et al., 2017)

Alvarado et al. (2017) conducted an RE as part of the ROLARR trial focused on surgical outcomes from robotic surgery versus laparoscopic surgery for rectal cancer in the United Kingdom. There is little indication of integration between the RE and RCT components other than that the RE was conducted with the explicit aim of opening the black box of the ROLARR trial.

The RE study was based on qualitative interview data in a teacher–learner cycle. The findings from the RCT and RE were reported separately. They set out to elicit how, why, and in what circumstances robotic surgery impacts teamwork in the operating theater, and to what effect. Based on literature review and interviews, they identified a number of CMO configurations, of which the most salient (according to stakeholders) were selected for testing in iterative stages. According to the authors: “Surfacing realist mechanisms take understanding beyond intervention description into explanation . . . [RE] enabled us to surface mechanisms that explain how and why collaborative activities were successfully performed during robotic surgery using strategies” (Alvarado et al., 2017: 459).

Generally, these studies lack integration of conclusions or findings. Although some of them build on cases from the RCT, data collection was carried out or planned after the RCT, so that the REs to some extent resemble post hoc explanations of failed interventions.

In sum, across the different types of integration—fused, nested, and successive—a diverse range of approaches became apparent. Some were driven by analytical approaches such as SEM, retroductive, thematic, and framework analysis, whereas others utilized intermediate results and steps in the data collection to stratify sub-samples of the target population or multiple (contrasting) cases.

Across the 16 studies identified, there are considerable variations in design, types of methods, and analytical strategies. Overall, the studies that fused RE and RCT designs showed the highest level of integration in terms of structure, function, and process. As noted above, this does not necessarily make it the superior design option.

In this group of fused integration studies, we also identified studies that combined both quantitative and qualitative data and analyses and integrated configurational hypothesis testing into the design of the RCT.

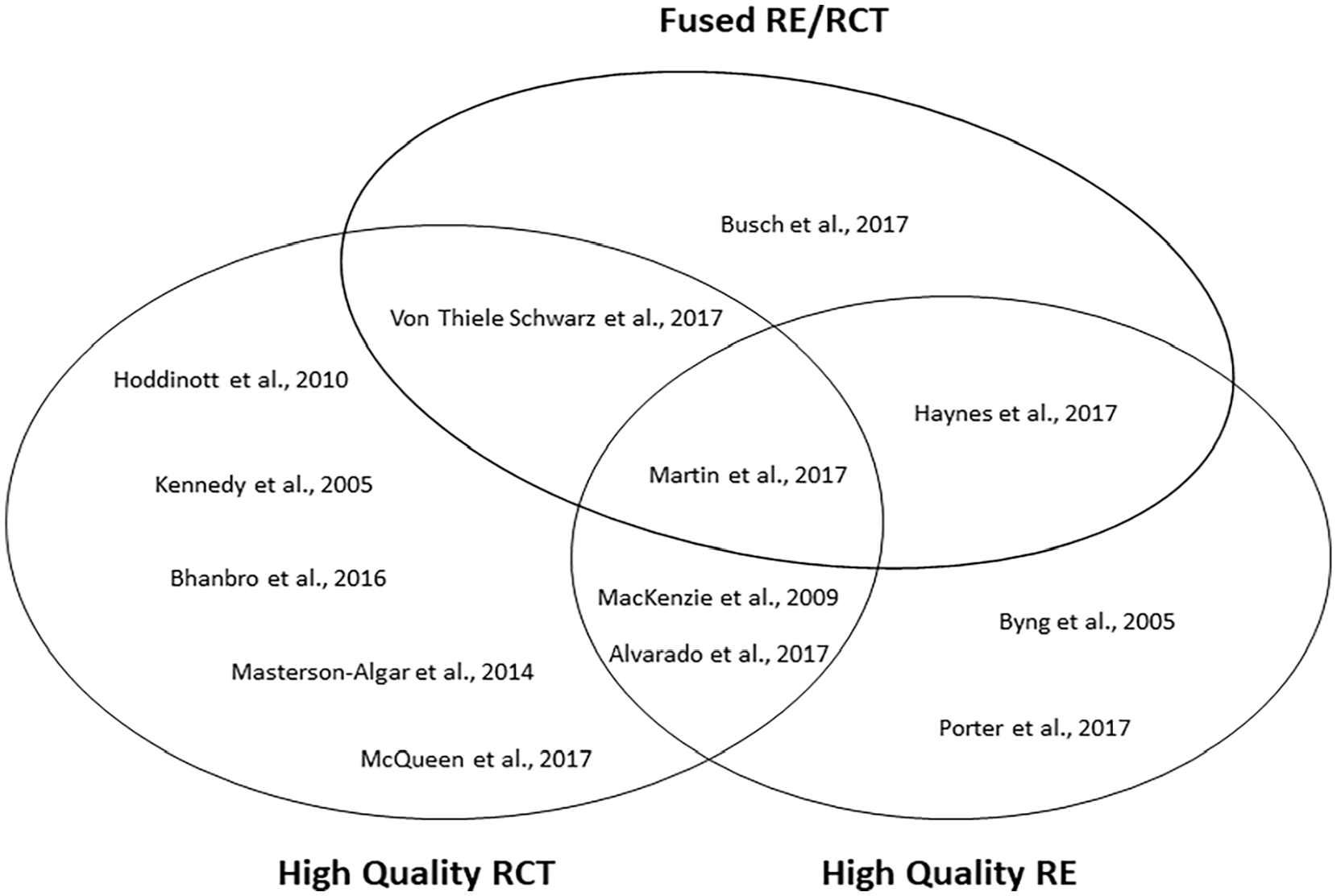

We then analyzed how this was related to the quality rating of the RCT and RE designs respectively. In Figure 1, an interesting pattern emerges that sheds further light on the findings presented above (see Table 5) which documented that there is a design trade-off between adhering to RCT and RE quality standards.

Figure 1. Venn diagram of high-quality RCTs, REs, and fused design.

Of the 16 realist trials, 9 involved a moderate- or high-quality RCT design, while 5 involved a moderate- or high-quality RE. We found three realist trials that adhered well to quality standards for both REs and RCTs. Unicorns do exist, but they are rare.

Only one of these studies fully fused its RE and RCT design in terms of structure, function, and process. It attests to the difficulty in adhering to high-quality design and reporting requirements for both RE and RCT (Martin et al., 2017).

It is worth noting that the higher-quality RCTs are published, and reported separately, in journals with RCT reporting standards. Similarly, the higher-quality REs tend to follow, and even make explicit reference to, the RAMESES design and reporting standards. Likely, the RAMESES standards will serve to promote higher-quality applications in a similar fashion in the future. We caution that such reporting standards may prove prohibitive for methodological innovations such as combinations of RE and RCT.

Discussion

Informed by our review and its findings, we will return to the three points of contention regarding RCTs and REs discussed in the introduction and relate our findings to the wider debate.

Are realist trials ontologically/epistemologically incompatible?

This is largely a theoretical point of contention, which cannot be resolved by this review. While the realist trials explicitly state the epistemological and ontological underpinnings of their studies, the authors largely refrain from discussing any epistemological or ontological issues related to integrating an RCT design and an RE as part of the same evaluation. Notable exceptions are Porter et al. (2017b) and Masterson-Algar et al. (2014).

We posit that evaluators can purposefully integrate an RE approach with an RCT design and that there are potential benefits to doing so. Although they are rooted in different ontological/epistemological camps, methods and designs can be combined in the same evaluation study. Operationally, integration occurs when applying methods for data collection and analysis. Evaluators do this routinely in mixed-methods studies, and the benefits may be both practical and heuristic. The same is the case for realist trials. In fact, scientific breakthroughs often stem from challenging conventional thinking.

To be clear, this integration of RCT designs and REs does not entail that the RCT part itself is transformed to be realist, nor does it entail that the RE becomes successionist. Evaluation, as an applied form of social scientific research, may help address pertinent evaluation questions within a single study that integrates multiple perspectives. As we see it, this consideration trumps philosophical purity. Some studies, for example, Martin et al. (2017), show that the RCT and the RE can be designed and implemented in adherence to their respective epistemological and ontological underpinnings and design standards. The pragmatic solution they applied was to collect outcome data that could be used for both analyses, thereby letting the two ontological approaches (or views on causality) co-exist within the same study. The question of whether randomization is compatible with a realist ontology was answered in the same pragmatic way: randomization was used for the positivist RCT analysis (to determine whether impact occurred), and only the intervention group sample was used for the realist analysis (to explain how, why, and for whom impact occurred).
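The division of labor described above, with one shared outcome dataset feeding two analyses, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the records are synthetic, the field names (`arm`, `context`, `outcome`) and both function names are hypothetical, and a simple breakdown of outcomes by context merely stands in for a realist analysis, which in practice would draw on qualitative data to explain mechanisms. It is not the procedure used by Martin et al. (2017).

```python
from statistics import mean

# Synthetic, illustrative records only; not data from any reviewed study.
# The field names ("arm", "context", "outcome") are hypothetical.
records = [
    {"arm": "intervention", "context": "high_support", "outcome": 8.0},
    {"arm": "intervention", "context": "high_support", "outcome": 7.0},
    {"arm": "intervention", "context": "low_support", "outcome": 4.0},
    {"arm": "intervention", "context": "low_support", "outcome": 5.0},
    {"arm": "control", "context": "high_support", "outcome": 4.0},
    {"arm": "control", "context": "high_support", "outcome": 5.0},
    {"arm": "control", "context": "low_support", "outcome": 4.0},
    {"arm": "control", "context": "low_support", "outcome": 3.0},
]


def trial_effect(rows):
    """RCT-style estimate: difference in mean outcome between randomized arms."""
    treated = [r["outcome"] for r in rows if r["arm"] == "intervention"]
    control = [r["outcome"] for r in rows if r["arm"] == "control"]
    return mean(treated) - mean(control)


def realist_breakdown(rows):
    """Realist-style description: mean outcome per context, intervention arm only."""
    by_context = {}
    for r in rows:
        if r["arm"] != "intervention":
            continue  # the control arm is not used in this part of the analysis
        by_context.setdefault(r["context"], []).append(r["outcome"])
    return {ctx: mean(vals) for ctx, vals in by_context.items()}


effect = trial_effect(records)          # uses both arms
breakdown = realist_breakdown(records)  # uses the intervention arm only
print(effect)
print(breakdown)
```

The point of the sketch is the data flow rather than the statistics: the same outcome records answer the question of whether impact occurred (both arms) and give a first indication of for whom it occurred (intervention arm only).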

This review sketches both the difficulty of doing so and a number of potential design and analytical techniques that hold promise, for example, using variations between control and intervention groups to elicit variations in CMO configurations for further analysis. We recognize that this integration may be difficult in practice, but theoretical purity should not trump heuristic promise.

Can realist trials conceptualize and operationalize mechanisms in realist terms?

Researching social phenomena empirically requires operationalizing abstract concepts by identifying constructs and indicators, so that their presence can be established empirically and hypotheses can be tested. This also applies to key realist terms.

Our position, grounded in evidential pluralism, is that evaluators should apply different analytical strategies and data sources across the qualitative and quantitative traditions to fully explicate causal mechanisms. As the studies included in this review showcase, this is already widely practiced. For example, Von Thiele Schwarz et al. (2017) applied quantitative data and statistical methods, such as SEM, to test the configurational processes in which mechanisms were activated in various sequences of the program theory.

Some scholars (e.g. Astbury and Leeuw, 2010) have argued that mechanisms should not be treated as variables in statistical analysis. However, if the mechanism is to become a useful construct for empirical social science (here, program evaluation), it must be more than a theoretical construct. Evaluators must be able to establish empirically observable indicators (quantitative or qualitative) as proxies for these unobservable mechanisms and, by extension, be able to test hypotheses about them (quantitatively or qualitatively) so as to better understand the workings of the mechanisms in CMO configurations. We are not convinced that this can only be done using case-based or qualitative methods. When testing such hypotheses, indicators invariably become variables, both in variance-based inference (such as SEM) and in case-based inference (such as qualitative comparative analysis). This aligns well with the realist push for methodological pluralism (Van Belle et al., 2016). Hopefully, a wider range of quantitative and qualitative methods and analyses will stimulate how we test hypotheses about CMO configurations with converging lines of inquiry.

Can realist trials account for the context and complexity of interventions?

The debate on impact evaluation and attribution has evolved since the 1990s and 2000s. Today, there is widespread acknowledgment of the need for different evaluation designs for different evaluation contexts, purposes, and questions.

In the most recent update to the British Medical Research Council guidance on evaluating complex interventions, the authors acknowledge that complexity has many origins. It may stem from the intervention itself, from flexibility in service delivery, and from the intervention’s interaction with its context. Interventions are best viewed as part of complex adaptive systems. The authors argue that a multiplicity of research perspectives must be embraced and bolder methodological innovations must be explored (Skivington et al., 2021). Realist trials probe such boundaries and hold the potential of straddling different purposes. However, their ability to (better) account for context in complex interventions remains more a promise than a proven track record. Indeed, our findings show that most published examples have compromised on design quality standards for the RCT, the RE, or both. Yet, the potential should not be stymied by philosophical differences. Our findings also indicate that it is rare but possible to combine approaches in fused and nested designs.

Future directions for realist trials

Realist trials are breaking new, contentious ground. Along with new ideas and new approaches, questions will inevitably arise. While the current review does not resolve underlying philosophical differences, it did reveal relatively few cases that managed to design both a high-quality RCT and a high-quality RE. If realist trials are to evolve from their infancy, there is a clear need to catalog designs that integrate RE and RCT and, along with them, analytical approaches that make full use of quantitative and qualitative data. Recent developments in RE using Qualitative Comparative Analysis and process tracing methods could provide guidance in this endeavor. In this review, we found some cases that successfully merged the two designs, or embedded REs within RCTs, without compromising design quality. Continuing to insist that merging is not possible would therefore entail a responsibility to bring forward exemplary cases and rigorous methods that advance RE on its own. Finally, as design and reporting standards emerge for both RCT and RE, standards amenable to the particular subfield of realist trials will most likely need to be developed. Such standards should consider the structure of the research design, the function and integration of methods and sources, the analytical techniques applied, and not least how CMO configurations were developed, tested, and refined through realist trials.

Conclusion

In this article, we reviewed the published examples of realist trials. We identified 16 published cases. The designs vary considerably in how RCT and RE are integrated. Most often, RE and RCT findings were published separately. When assessing the quality of the studies against established methodological standards, we identified only three high-quality studies adhering to both established RE and RCT design standards.

Supplemental Material

Supplemental material for The curious case of the realist trial: Methodological oxymoron or unicorn? by Steffen Bohni Nielsen, Sofie Østergaard Jaspers and Sebastian Lemire in Evaluation:

sj-docx-1-evi-10.1177_13563890231200291

sj-docx-2-evi-10.1177_13563890231200291

sj-tif-3-evi-10.1177_13563890231200291

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
