Abstract

Objectives

To establish how quality indicators used in English community nursing are selected and applied, and their perceived usefulness to service users, commissioners and service providers.

Methods

A qualitative multi-site case study was conducted with five commissioning organizations and their service providers. Participants included commissioners, provider organization managers, nurses and service users.

Results

Indicator selection and application often entail complex processes influenced by wider health system and cross-organizational factors. All participants felt that current indicators, while useful for accountability and management purposes, fail to reflect the true quality of community nursing care and may sometimes indirectly compromise care.

Conclusions

Valuable resources may be better used for comprehensive system redesign, to ensure that patient, carer and nurse priorities are given equivalence with those of other stakeholders.

Introduction

Domiciliary care provided by trained nurses is a cornerstone of community-based healthcare in the United Kingdom (UK) and internationally.1,2 In England, community care is delivered by qualified nurses registered with the Nursing and Midwifery Council; additional care may be provided by allied health professionals, unregistered care workers and/or patients’ relatives or friends. 3

Increasing financial pressures on English hospital services and a growing older population have resulted in greater demand for domiciliary healthcare. This ranges from straightforward medication administration to highly skilled, tailored care for patients with complex conditions. 4 This continuing escalation in demand has raised concerns about care quality in some areas. 4 Poor care is unacceptable; the means of assessing quality in healthcare must therefore be robust. 5

Care quality has been described as the interplay between provider–patient interaction, healthcare outcomes and care delivery mechanisms. 5 Its assessment is often extremely complex, involving challenges in aligning processes with priorities of different stakeholders, including those of service users (patients and/or their informal carers, usually relatives or friends). 5,6 Methods for assessing healthcare quality usually include applying quality indicators, typically quantitative performance measures requiring specified outcomes or activities, whose application is assumed to drive quality improvement; 7 however, this assumption has been challenged. 8 Moreover, the focus on measuring quality and the use of quantitative metrics can result in unintended negative consequences for patients and staff – for example, prioritizing achievement of targets above patient preference, or privileging one area of care over others. 9,10

English healthcare is commissioned by Clinical Commissioning Groups (CCGs), which are government-mandated bodies, from service providers employing healthcare professionals. These providers include National Health Service (NHS), private (for-profit) and not-for-profit organizations. Quality is typically assessed using contractually developed indicators or pay-for-performance measures mandated by NHS England (NHSE), a public body setting priorities and standards for the NHS. 11 Providers are also inspected regularly by the Care Quality Commission (CQC), a government-sanctioned independent regulator. 12 However, providers are not obliged to align methods of quality measurement with those of the CQC.

Following a key report in 2013, 13 a focus on patient safety has improved English hospital nursing care quality. 14 However, little comparable focus on community nursing care quality has occurred. Assessing domiciliary care quality is particularly difficult. 15 Frequently the only witnesses to episodes of care are practitioners, service users and possibly relatives or friends. Moreover, patients are often frail older people with complex and/or deteriorating conditions where suitable health outcomes are hard to identify. 4 A framework for assessing community nursing quality has been published, but it is not known how widely it is being used. 4

Recent changes to the English care landscape have introduced further complexity to the processes of assessing care quality. Social care is provided by government-funded local authorities. However, since their introduction in 2016, mandatory sustainability and transformation plans (STPs) have required CCGs, healthcare providers and local authorities to collaborate in delivering and monitoring health and social care. 16

Very little research exists about assessing quality in community nursing. An American Nurses Association report 17 details the development of nursing-sensitive indicators for community care. Other papers present the validity and feasibility of purpose-designed community nursing quality indicators in England, 18,19 and competencies which nurses in Wales 20 and Northern Ireland 21 consider appropriate for quality assessment. In the Welsh study, nurses expressed concern that indicators designed for hospital settings were being applied in the community. 20

There has been no exploration of how quality indicators are used in community nursing in England, nor to what extent they affect care quality. If satisfactory care is to be delivered making best use of finite resources, methods of measuring quality must be transparent and fit for purpose. The aim of the project reported here is to establish how community nursing quality indicators are selected and applied in England, and their perceived usefulness to service users, commissioners and provider staff.

The project was undertaken in distinct phases. The first involved a national survey of CCGs to identify their community nursing service providers and relevant quality measures and has been reported elsewhere. 22 The second phase comprised a qualitative multi-site case study. 23 This paper reports findings from this phase, detailing variations in perspectives and priorities between commissioners, service provider managers, nurses and service users.

Design and methods

The case study objectives were to discover:

How are quality indicators selected?

How are indicators applied?

How useful are indicators to service users, commissioners and community provider staff?

The case-study method allowed an exploration of relevant issues from different perspectives and triangulation of data from multiple sources and sites, resulting in enhanced analytic validity. 23

Ethics

The study was approved by Yorkshire & The Humber – Leeds West NHS Research Ethics Committee (14/YH/1059).

Sample

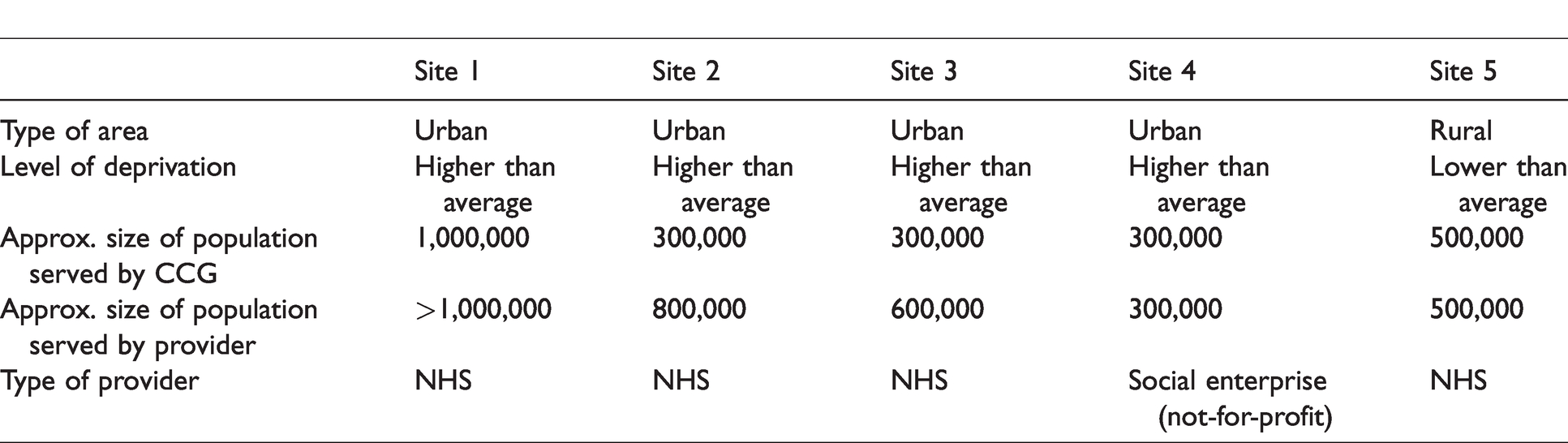

The case study sites comprised pairs of CCGs and their local community nursing service providers. They were identified in phase 1, 22 following the principle of maximum variation, 23 aiming for representation from organizations with different characteristics: urban/rural, NHS/other providers, different degrees of affluence. Five sites were recruited (Table 1); this number afforded the required degree of variation, while remaining feasible within the study constraints. Initial approaches were made to the CCGs in each site.

Table 1. The case study sites.

The following stakeholders were recruited through purposive snowball sampling: commissioners, provider managers, community nursing team leaders, community nurses (any registered nurse providing domiciliary care) and service users.

Data collection and analysis

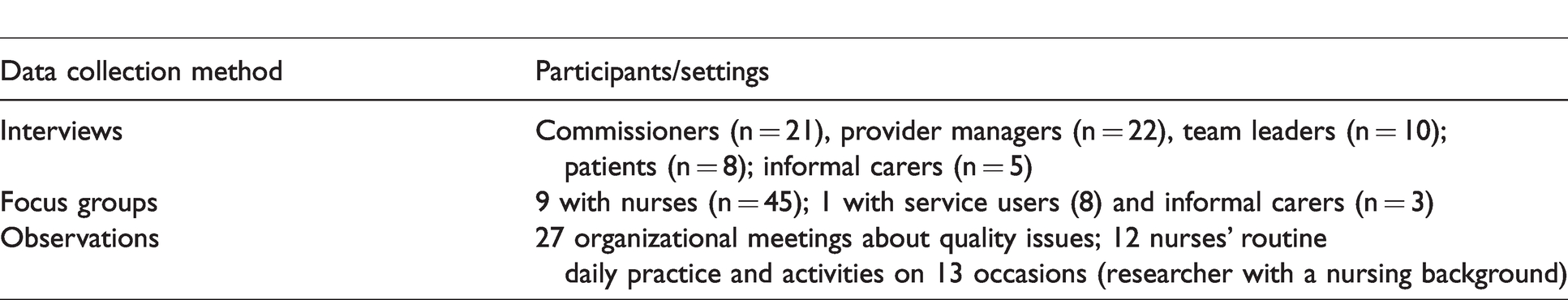

A researcher led data collection in each site, focusing on the selection and use of quality indicators (see Table 2).

Table 2. Data collection.

Interview and focus group data were recorded and transcribed verbatim. Observational data were recorded in field notes.

Data were coded and analysed for emerging themes using NVivo 10, following a coding framework relating to the study objectives devised by four researchers. Establishing inter-researcher reliability entailed common analysis of a small set of interview transcripts and field notes. Each researcher subsequently analysed cross-site data from distinct sets of participants. Merging the resulting NVivo projects provided definitive within-case and cross-case analysis. 23 The latter revealed only minimal differences in context: all the sites were struggling with reorganization, new computer systems and high rates of staff sickness and attrition. The researchers therefore focused on cross-case analysis.

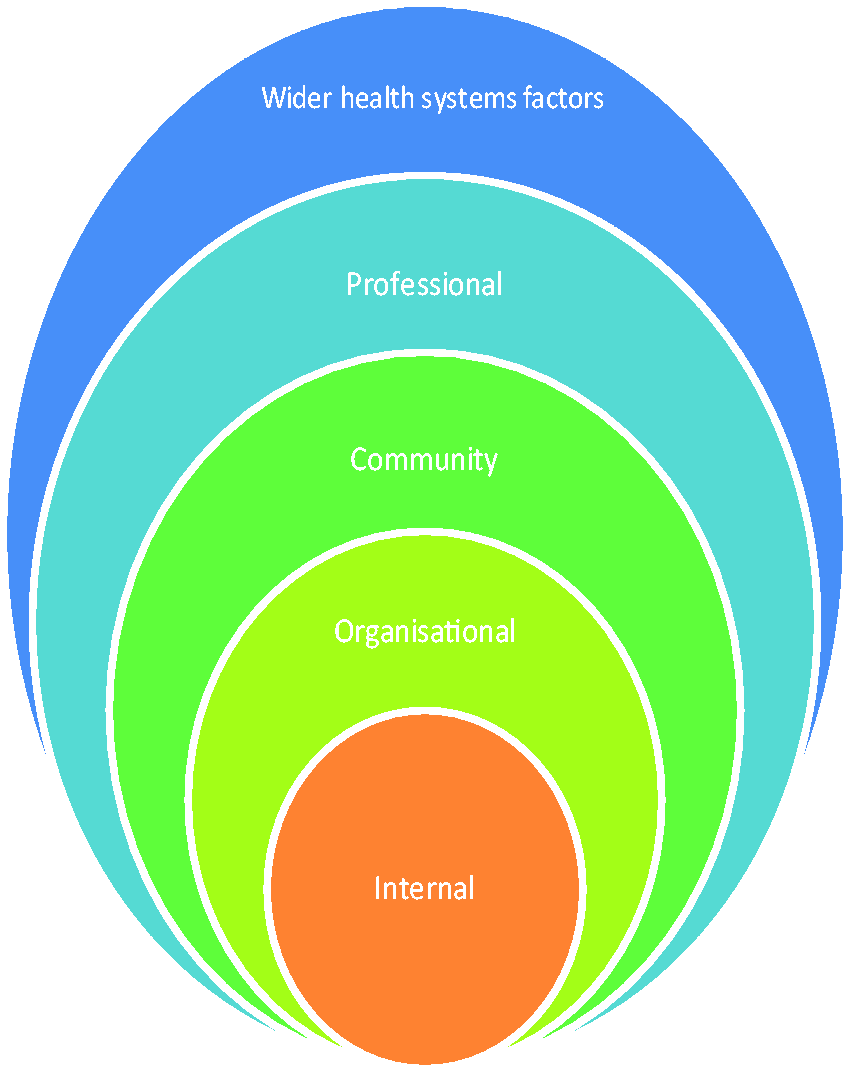

Interpretation was aided by a framework developed to explain the motivation of public health sector workers, 24 subsequently adapted for a national evaluation of pay-for-performance indicators. 25 This draws together explanatory contextual constraints and facilitators affecting attitudes and behaviour of healthcare managers and practitioners, namely factors operating in the spheres of wider health systems, professionality (for example, autonomy), communities (including service users), organizations and individuals’ internal values (Figure 1).

Figure 1. Adapted framework used to aid analysis. 25

Testing of findings

Key findings were tested with stakeholders in ten public workshops across England, publicized through CCGs, where small mixed groups of commissioners, provider managers, community nurses and service users (n = 266) discussed the relevance of findings for their local situations. See the final study report for more details. 22

Findings

Themes identified mapped to the three research questions: selection of indicators, application of indicators and usefulness of indicators. Within each theme, data were considered in relation to the interpretive framework (see Figure 1).

Selection of indicators

Drivers for selecting indicators were substantially linked to the wider health system sphere, such as the need for accountable management and organizational change. Selection commonly involved protracted negotiations between commissioners and provider managers. The latter articulated concerns about their relative vulnerability, as commissioners were perceived to control the process. Some commissioners acknowledged this power differential, but reported seeking constructive relationships and aiming to set mutually acceptable targets. However, commissioners stressed they were accountable for public funds, and required providers to show continuous improvement by setting targets involving concrete changes in activity: ‘Our focus is more on what are we getting for our money’ (Commissioner, Site 4).

Conversely, providers argued that such targets were often unrealistic with no solid statistical basis: ‘What’s the feasibility of being able to achieve that [target]?…[Last year] we were penalized for not attaining a target that was unattainable!’ (Provider Manager, Site 2).

Other power differentials could also affect indicator selection. One provider manager explained general medical practitioners’ (GPs’) power in this regard: ‘Even if [the CCG] have approved it, if the resistance gets too much, they can and have stopped developments before because of the backlash from [GPs]’ (Provider Manager, Site 3).

In response to wider agendas, all the sites were planning or implementing integrated care across different disciplines and organizations, aiming to promote joint assessment of care quality. Both commissioners and provider managers felt that developing indicators for care delivered collaboratively could be problematic:

You have to be careful about the performance measure that you’re putting in place… [to ensure that] the [provider organization] is not solely dependent on another organization delivering it. (Commissioner, Site 3)

The Director of Nursing said that there appears to be some anxiety among providers about who will carry ultimate responsibility for delivery of such [an indicator]. (Meeting Observation Notes, Site 2)

One commissioner acknowledged the difficulty of devising monitoring systems that accurately reflect issues for patients across different services and organizations: ‘We see the importance of having that link [with social care]…but it is hard to actually pick that out and quantify it’ (Commissioner, Site 4).

Another commissioner spoke about the necessity of developing new ways of contracting: ‘It might be three different providers…providing one service…there’s a consultation now about an alliance agreement…one contract with all three of them, it’s a very different way’ (Commissioner, Site 1).

In contrast to commissioners and provider managers, nurses’ perceptions appeared to be grounded in professional and/or organizational factors. There was widespread suspicion among nurses that indicators were imposed due to perceived shortfalls in care, highlighted by terminology such as ‘provision of harm-free care’: ‘It’s negative from the start, because they’re trying to find out how much harm you’ve created’ (Nurse, Site 4).

Such fears could arise from experience. For example, a patient with a new pressure ulcer might trigger a serious incident investigation involving lengthy interviews and a panel discussion including the patient’s relatives. Ostensibly a learning opportunity, this was perceived as punitive by nurses involved: ‘I had a pressure ulcer that was identified in May and the investigation took [over a year]…People do feel scapegoated. I did…I wasn’t even here when the woman developed it’ (Nurse, Site 2).

There was no evidence of community or internal factors influencing indicator selection, in which neither nurses nor service users were involved.

Application of indicators

Findings about application of indicators related mainly to professional and organizational spheres; occasional influence of wider health system factors was also discernible. Commissioners and managers regularly monitored performance against targets. Doubts were expressed on both sides concerning the completeness of available data: ‘There have been big issues with getting the level of data that we need…[the provider is] not submitting complete data sets’ (Commissioner, Site 1).

Providers reported that nurses did not value data collection sufficiently to take care recording and reporting accurate data. Some managers offered targeted support in this regard: ‘We’ve managed to give some support to the nurses around [data entry] and that has helped improve performance. Because obviously, if they don’t put the data in, the performance looks very poor’ (Provider Manager, Site 3).

There was much criticism of current indicators; nurses felt that commissioners and/or policy makers did not understand that indicators designed for hospital settings were not appropriate for community care. For example, nurses argued they cannot control how often patients move or how they use pressure-relieving aids. Moreover, nurses said patient sampling protocols were inappropriate for community use, resulting in oversampling of some patients and omission of others. Staff were obliged to record numbers of patients with catheter-acquired infections and new grade three pressure ulcers on a monthly basis for a nationally mandated indicator. However, as patients were often on the caseload for a long period and there was a set day for data collection, some patients were repeatedly sampled whilst others were not: ‘You end up using the same patient [over and over]’ (Nurse, Site 3).

Nurses suggested alternative ways of measuring quality in this regard:

[Record] any pressure ulcers on your caseload that month, how many were attributed to hospital admissions, how many were attributed to patients that are in your care, and how many did you acquire from patients that weren’t in your care…how many did you heal in that next month. (Nurse, Site 3)

We haven’t got the FRAX [UK Fracture Risk Assessment Tool], we’ve only got the FRAT [international Fracture Risk Assessment Tool]…I mentioned [it] to [our rehabilitation colleagues]…They looked at me like I’d come down in the last shower…I said, ‘So, you’re not doing it then?’ (Nurse, Site 5)

‘Their fax machines were burning out…The amount of abnormal blood sugar levels you find, the system would crash’ (Nurse, Site 1).

‘We had to [send nurses out to] start visiting patients at risk of developing pressure ulcers far more frequently…so things that weren’t life threatening, like a continence reassessment…the work to achieve [the indicator visit] would take priority’ (Provider Manager, Site 4).

Both nurses and service users had reservations about staff being required to collect data about patient experience. It appeared that patients might not be honest about sub-optimal care received for fear of negative consequences: ‘[The nurses] will maybe discuss it and decide that they are a bit against me grumbling or something, you know’ (Patient, Site 5).

Usefulness of indicators

Within the context of whole system or organizational factors, commissioners and provider managers appeared to value indicators. While commissioners questioned the validity of some data collected, overall there was consensus among them that, by using indicators, they could hold provider organizations to account for services delivered. Service managers thought that using quality indicators helped them to monitor the quality of care. They also felt that introduction of new quality indicators helped raise staff awareness of relevant issues.

However, in a view relating to community factors, one manager thought that indicators should be developed to support individual patients to meet specific goals tailored for their particular needs – for example, climbing a specified number of steps, a suggestion also made in the service user focus group. One nurse stated that quality should be measured in relation to ‘patient feedback and time spent’ and outcomes that make a difference, for example, ‘successful referrals to voluntary groups which provide additional support to lonely patients’ (Shadowing Observation Notes, Site 3).

Service users and nurses expressed their opinions in relation to community, professional and internal factors. Some service users argued that linking clinical assessment to quality indicators does not measure care quality, emphasizing that action taken is more important. Consensus was found across nurses and service users that health outcomes – such as catheter-acquired infections – were important to record, but did not in themselves reflect quality: ‘I am not sure that [record of urinary tract infection] highlights the quality of the care of the catheter as it does not indicate the bags have been changed regularly’ (Informal Carer, Site 2).

‘She’s in her mid-90s and she doesn’t have anyone to chat to apart from me or whoever comes to see her twice a week’ (Nurse, Site 3).

‘You can see there are key points coming out as part of the conversation…They can’t just turn up and dress a leg ulcer and then go; there’s a lot more to it’ (Commissioner, Site 5).

I suppose there’s what you actually do to a patient but…how they feel they’re treated and respected… that’s very difficult [to measure]. (Commissioner, Site 1)

I don’t think [indicators] are a true reflection of what [we do]…They’re very task orientated…the true quality of the service isn’t necessarily around the tasks; it’s how the tasks are delivered. (Provider Manager, Site 4)

Discussion

Our data revealed that indicator selection was typically a lengthy and complicated exercise relating to whole system and organizational factors. Considering the current focus on economic difficulties across the NHS, 26 it was unsurprising to learn that meeting mandatory targets for both service delivery and financial performance was high on commissioners’ and managers’ agendas. However, nurses and service users – with perceptions and priorities clearly located within community, professional and internal spheres – were concerned about the overall quality of care, including its ‘softer’ aspects; these stakeholders had virtually no input into indicator selection. Notably, no participants considered current indicators to be truly reflective of community nursing care.

Considering the wider context, the data demonstrated that inter-organizational power differentials, exemplified by the differing levels of control enjoyed by GPs, commissioners and provider managers, affected the processes of indicator identification and selection to varying degrees. Given the well-documented difficulties associated with inter-organizational dynamics, 27 it can be argued that the ongoing drive towards collaborative practice and mandatory requirements associated with STPs 16 may further complicate the protracted process of indicator selection.

The validity of using methods of quality measurement in community nursing, driven by whole system and organizational factors, must also be considered. This study has provided further evidence of unintended consequences that can arise from their application. Healthcare provision in domiciliary settings is complex by default; 15 as in other contexts,9,10 the findings presented here reveal how applying indicators without sufficient understanding of the care context can indirectly affect care quality detrimentally. Our data also indicate inherent difficulties involved in designing service-specific quality measures across professional and organizational boundaries. It is self-evident that, if services are to be assessed on the quality of the care provided, measures used must be sufficiently discrete to be within the control of a service to implement.

Considering the findings in relation to community and internal spheres as defined in the interpretive framework, 25 services’ lack of responsiveness to patients appears key. Responsiveness was characterized in our findings as relating to ‘softer’ aspects of care, highly valued by both frontline staff and service users. It is known that the priorities of service users and of healthcare providers are not necessarily aligned; 6 however, in an age when delivering ‘patient-centred care’ is the stated aim of English and many other healthcare services, 28,29 it is ironic that none of the systems in place for measuring community nursing care appear to have been designed with service user priorities in mind. Despite ongoing debates concerning the definition of ‘patient-centred care’, 29 it is arguably the case that there should at least be provision for the patient voice to contribute to the identification and selection of measures designed to assess care quality. 4,21 Our data showed no systematic patient engagement in developing quality indicators.

The other obvious voice lacking was that of nurses, who often regarded the indicators being applied as flawed. Given the widespread difficulties affecting services at all levels, 4,22 it is arguably a poor use of time and resources for hard-pressed staff to collect data for indicators which they consider unfit for purpose. The findings suggest close alignment between nurse and service user priorities, as reported elsewhere; 4 where the patient voice is difficult to record, formal engagement with staff could be an effective proxy.

Implications for domiciliary care

A notable finding was the universal acknowledgment that most indicators cannot reflect the true quality of English community nursing care, an issue recently reported elsewhere regarding community services generally. 21 A specific concern is the fact that applying inappropriate indicators not only wastes valuable resources, but in doing so, may also actually diminish care quality. The consistency of our findings across the sites and with other research,4,9,10,17,20 suggests the existence of deeply rooted issues which are unlikely to be amenable to short-term change, particularly in the ongoing economic climate. 26

Factors affecting care quality assessment appear to involve those arising from a mismatch between perspectives and priorities located in different spheres, as defined within the interpretive framework. 25 Adding to the known difficulties related to assessing care quality, 5 especially in community settings, 10 our data indicate that an extra layer of complexity is introduced when assessment is conducted across and by different organizations. These problems are not limited to England. Internationally, the drive to develop community-based collaborative care continues 27 along with an acknowledged need to develop suitable patient-centred care assessment processes4,6,29 and nursing-sensitive indicators for community-based nursing care.17,20 Additionally, it is known that applying unsuitable indicators can result in unintended consequences with concomitant problems for staff and service users.9,10 In the absence of suitable measures, developing indicators tailored to individual patient outcomes may be helpful, as suggested by some study participants.

For domiciliary care quality assessment to achieve the validity required, differences in stakeholder priorities located within different spheres must be addressed and more flexible approaches to quality assessment developed. It is gratifying to note that since this study was conducted, the rigidity associated with nationally mandated English indicators has been somewhat reduced. 30 However, for suitable quality assessment in a landscape involving multi-organizational and/or multi-professional domiciliary care delivery, comprehensive system redesign is required. Notably, frontline staff and service users should be consulted about how to define and assess care quality. Investigating links between, for example, nursing interventions and health outcomes using nursing-sensitive indicators, might better demonstrate contribution to care and enable more staff to inform policy.4,8,17,21 However, such changes will require active collaboration between all stakeholders.

Study limitations

The case study investigated only five CCG-provider pairs using a self-selected sample, which may have produced inherent bias. However, similar findings emerged across all five sites, irrespective of geographical location, size, type of organization or nature of community served, and were subsequently endorsed by all delegates attending the national workshops. In particular, during feedback from the small group discussions, the majority of community nurses and service users attending the workshops agreed with, and had personal experience of, key opinions and issues emerging from the study. 22 Moreover, difficulties affecting all the case study sites, particularly staff shortages and organizational restructuring, reflected the current national context. 4 Data were collected from a range of stakeholders, ensuring that all perspectives were represented.

Conclusion

This project aimed to explore how community nursing quality indicators are selected and applied, and how useful stakeholders consider them to be. The findings showed that indicators served only a limited purpose and were commonly beset with flaws. Moreover, stakeholders’ priorities were located within different spheres, for example, organizational versus internal. Whilst managers and planners appreciated their usefulness in relation to accountability and raising awareness of important issues with nurses, indicators were often perceived by the latter and by service users as punitive and/or a tick box approach to quality. All participants agreed there was a failure to reflect community nursing quality accurately. A better use of valuable resources may require comprehensive system redesign incorporating tailored, personalized patient-centred measures; the voices of patients and informal carers, either directly or through proxy, and those of frontline staff, must be given equivalence with those of other stakeholders.

Footnotes

Acknowledgements

The authors would like to thank all the study participants for their engagement with this research. Thank you also to Jo Hartland, Paul Roy and Katalin Bagi from NHS Bristol, North Somerset and South Gloucestershire CCG for their support.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the National Institute for Health Research (NIHR) HS&DR Programme (project number HS&DR 12/209/02). The views expressed are those of the authors and not necessarily those of the NHS, the NIHR or the Department of Health.