Abstract

Generative Artificial Intelligence (AI) has drawn widespread public and academic attention for its capabilities and transformative potential. Despite this growing industry and scholarly focus, the existing literature remains fragmented and lacks a cohesive framework for understanding the technology’s complexity and underlying dynamics. This study addresses this gap by presenting a conceptual framework derived from a review of 98 peer-reviewed articles and conference papers published since 2020. It provides an overview of generative AI’s role across the film, television, gaming, animation, extended reality (XR), and live performance sectors, offering an initial snapshot of the state of research and laying a foundation for future studies in this evolving field. Drawing on this analysis, the review maps the key themes of the generative AI literature and explores their conceptual relationships. It identifies areas of progress, highlights gaps in existing knowledge, and proposes directions for further research. In doing so, this study contributes to a more nuanced and informed perspective on generative AI’s integration and impact within the screen and live performance industries.

Keywords

Introduction

Generative Artificial Intelligence (AI) is a specialised domain within AI dedicated to creating systems that can produce original and meaningful content, including text, images, audio, and video (Cao et al., 2023). Unlike established AI or Machine Learning (ML) approaches, which primarily focus on analysing data to classify, predict, or make decisions, generative AI is designed to synthesise new outputs that resemble the patterns and structures of its training data. This capability is achieved through advanced computational techniques that enable these models to generate outputs that are both novel and contextually coherent (Marr, 2023). Owing to its advancement in recent years, generative AI has attracted broad attention across academic, industrial, and public domains. Its growing prominence is also reflected in market predictions, with the global generative AI market projected to grow steadily from 2025 to 2031, increasing by USD375.2 billion (Statista, 2025).

Generative AI, exemplified by commercial tools such as DALL-E and ChatGPT, has demonstrated transformative potential across diverse contexts, including education (Baidoo and Ansah, 2023), healthcare (Meskó and Topol, 2023), and art and humanities (Rane, 2023). Fuelled by rapid market growth and increasing adoption across industries, AI is expected to create 20 to 50 million new jobs globally by 2030 (Manyika et al., 2017).

This research focuses on the screen and live performance industries, two key sectors where generative AI is poised to have a disruptive impact. The screen industry holds substantial economic importance: in the United Kingdom (UK), for example, it contributes £13.48 billion to the economy and supports 219,000 jobs (BFI, 2021). The global live music market (one part of the live performance sector) is projected to grow from USD34.84 billion in 2024 to USD38.58 billion in 2025, and to reach USD62.59 billion by 2034 (Kharrati, 2025).

This economic relevance, combined with a strong openness to adopting new technologies, makes these industries ideal settings to explore the potential and impact of generative AI.

The study examines the literature associated with how generative AI is applied within the screen and live performance industries, assessing the impacts of these technologies, and addressing key questions about their adoption and implications. In doing so, this study comprehensively reviews, analyses, and synthesises findings from 98 peer-reviewed articles and conference papers. 1

By consolidating these insights, the research develops a conceptual framework, which is a new model grounded in a critical analysis of existing literature, presenting novel relationships and conceptual insights (Torraco, 2005). Therefore, this framework could contribute to integrating fragmented knowledge, clarifying key themes and their interconnections, and yielding new questions and directions for further research, thereby offering a structured and nuanced understanding of generative AI in the screen and live performance industries.

Scope of the review

An introduction to generative AI

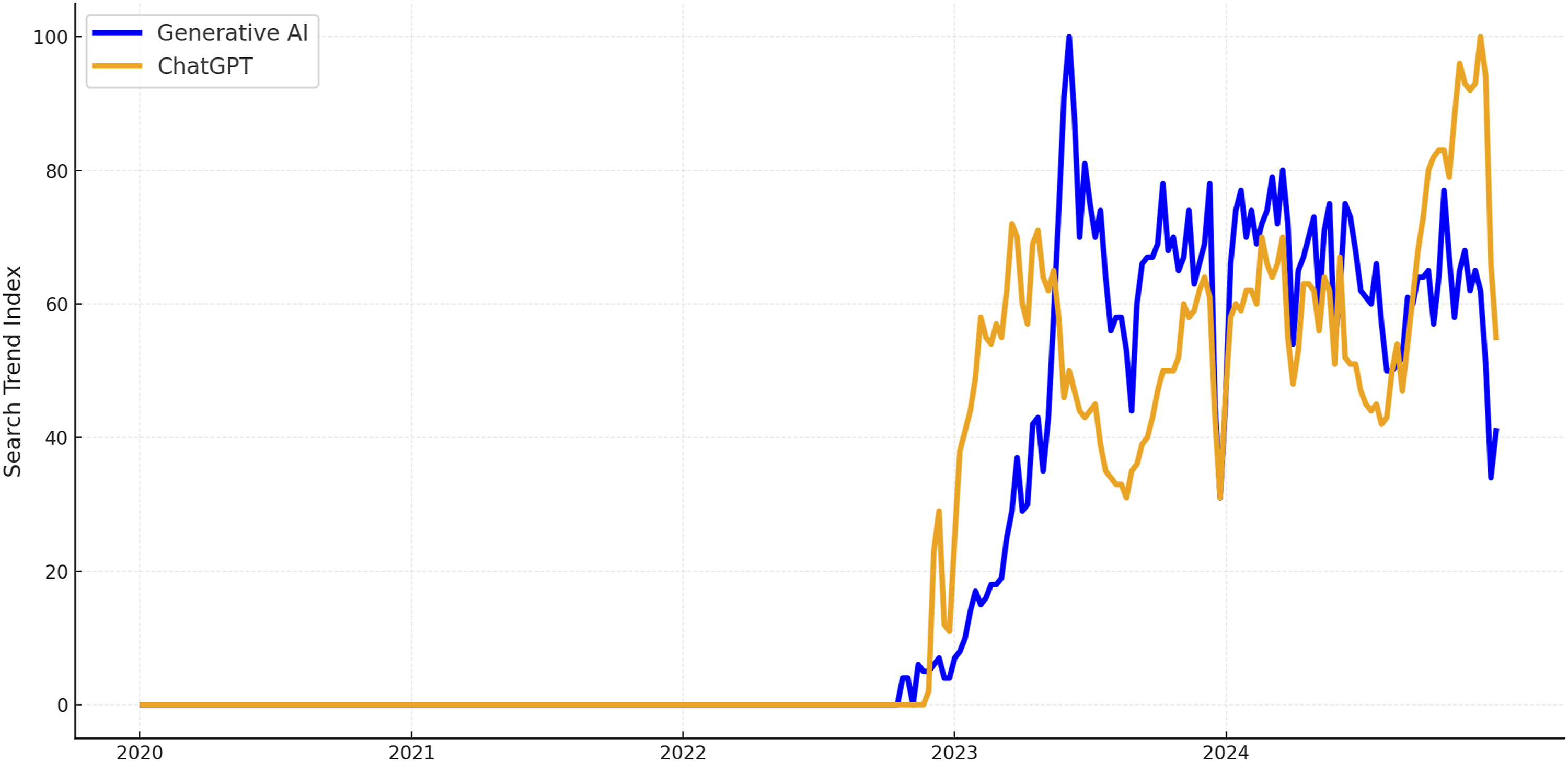

The launch of ChatGPT in 2022 brought generative AI into the spotlight, sparking widespread public interest and significantly increasing Google searches for the terms ‘ChatGPT’ and ‘Generative AI’ (Figure 1). This heightened attention can be largely attributed to ChatGPT’s ability to demonstrate the practical uses and transformative potential of generative AI (Bengesi et al., 2024).

Figure 1. ChatGPT and Generative AI search trends on Google.

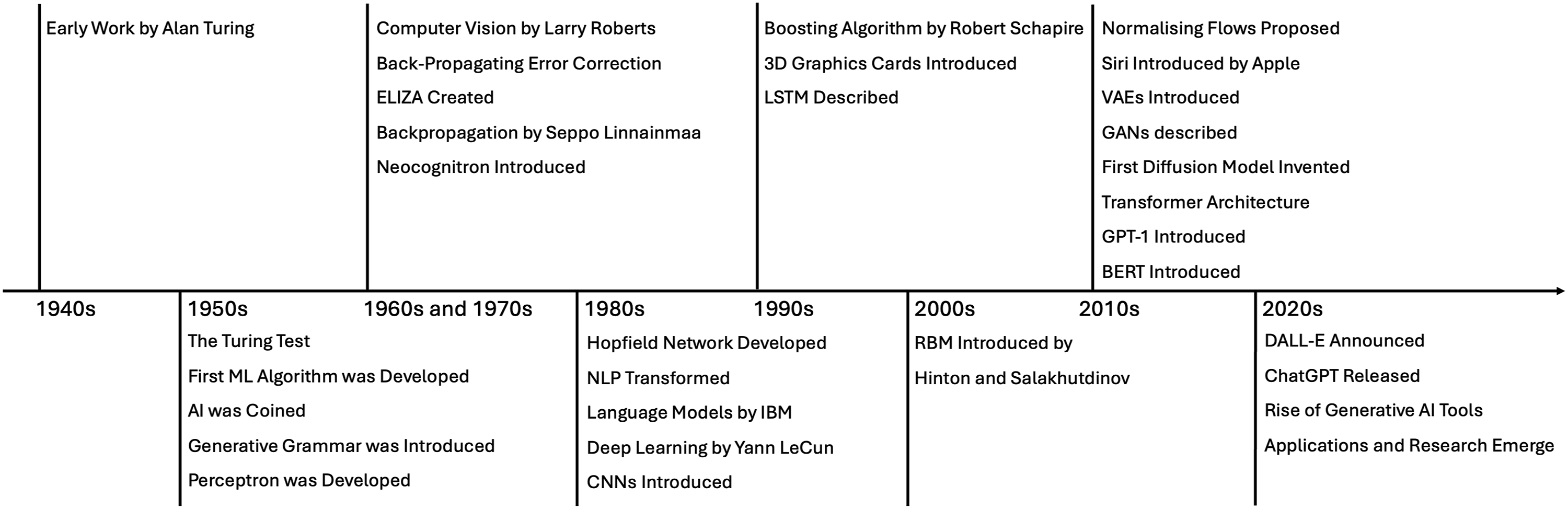

The success of ChatGPT is not a sudden phenomenon; instead, it is the result of decades of development and refinement in underlying technologies (Cao et al., 2023). While predicting the future trajectory of generative AI is challenging, tracing its development can reveal the foundational technologies and innovations that continue to shape its evolution. Therefore, this research first takes a historical perspective on generative AI (Figure 2).

Figure 2. The history of generative AI.

The roots of AI extend back to the 1940s when Alan Turing explored the potential of developing computer programs capable of demonstrating intelligence (Turing, 1948). In the 1950s, Turing introduced the concept of the ‘Turing Test’, examining whether machines could mimic human intelligence (Turing, 1950). This test serves as an early conceptual example of AI, broadly defined as the simulation of human cognitive processes, including learning, understanding, and problem-solving (Konar, 2018). During the same period, early machine learning algorithms were developed for playing checkers (Samuel, 1959), and the term ‘artificial intelligence’ was formally coined (McCarthy et al., 2006). These early advancements laid the groundwork for subsequent AI innovations.

Natural language processing (NLP) emerged by combining AI and linguistics, with generative grammar frameworks introduced to translate natural language into structured formats interpretable by computers (Chomsky, 1956). Expanding on this, ELIZA, one of the earliest chatbots, demonstrated that computers could simulate conversational exchanges (Weizenbaum, 1966). Early machine learning algorithms in the 1980s introduced prediction-based language processing that allowed computers to learn patterns and predict words based on context (Jones, 1994; Rosenfeld, 2000).

This progression was further enhanced by the development of recurrent neural networks (RNNs) in the 1990s, specifically the long short-term memory (LSTM) architecture, which enabled machines to recognise dependencies across sequences and became crucial for applications like speech recognition and natural language understanding (Hochreiter, 1997). NLP is now widely applied across various domains, such as scriptwriting, script analysis, game development, interactive storytelling and scene generation, demonstrating its growing influence on the creative sectors (Clocchiatti et al., 2024).

Computer vision began as an exploration into enabling machines to interpret visual data, with early work in the 1960s developing methods to extract 3D information from 2D images (Roberts, 1963). In the 1980s, the Neocognitron, a neural network with convolutional layers, was capable of recognising stimulus patterns without being affected by positional shifts or minor shape distortions (Fukushima, 1980). Significant progress came with the introduction of convolutional neural networks (CNNs) trained with backpropagation, allowing for spatial processing while preserving image structure (LeCun et al., 1989). CNNs enabled machines to identify patterns and objects in complex visual data and are now widely employed in tasks that support creative processes such as image classification, segmentation, object detection, video analysis, and have applications in NLP and speech recognition (Purwono et al., 2022).

In the 2010s, generative models achieved significant progress. For example, normalising flow models used reversible mappings to simplify complex data distributions, enabling more flexible image synthesis and manipulation (Tabak and Vanden, 2010). Variational Autoencoders (VAEs) use an encoder to condense data into a latent space and a decoder to reconstruct it, capturing data patterns for applications like image creation and data compression (Kingma and Welling, 2019). Generative Adversarial Networks (GANs) use a generator to create samples and a discriminator to distinguish real from generated ones (Goodfellow et al., 2014).

Around the time GANs were emerging, diffusion principles were applied to develop a generative modelling algorithm that simplifies complex images into noise and then trains the model to reverse this process (Sohl et al., 2015). The introduction of the transformer architecture (Vaswani, 2017) replaced recurrent layers with self-attention mechanisms, enabling parallel sequence processing, accelerating training, and leading to the development of subsequent Large Language Models (LLMs).

Leveraging the transformer architecture, Bidirectional Encoder Representations from Transformers (BERT) was released as a bidirectional model capable of capturing complex contextual representations by attending to preceding and following word contexts (Devlin, 2018). Generative Pre-trained Transformer (GPT) models, applying unsupervised learning across extensive text corpora, can generate coherent and contextually nuanced text (Radford, 2018).

Building on these foundations, a broad range of generative AI tools, such as text generators ChatGPT, Bard, Gemini, and Claude (Shopovski, 2024); image generators Stable Diffusion, Midjourney and DALL-E 2 (Borji, 2022); video generators Sora and Luma (Vahdati et al., 2024); and audio generators Suno and ElevenLabs (Murray, 2024), reveal great potential in media content creation, content enhancement, and transforming creative workflows (Anantrasirichai and Bull, 2022).

This timeline review underscores the extended evolution of generative AI technologies, highlighting their progressive role in supporting diverse creative tasks over time. Within this broader technological landscape, the present research now narrows its focus to the applications and practical impacts of generative AI within the screen and live performance industries. Before exploring specific use cases and effects, it first defines the scope of these industries to clarify the areas under consideration.

Scope of screen and live performance industries

Both live performance and screen are broad terms encompassing multiple forms of creative practice. Live performance typically involves performers and audiences sharing the same time and space, including concerts, music festivals, dance, opera, and comedy (Auslander, 2012). Given that ‘screen’ is an electronic means of practice, this study will conduct a detailed examination of its different manifestations to understand the various sectors within the screen industry.

To do so, this research gathered information from journals, industry reports, and website articles, using keywords including ‘screen sector’ and ‘screen industry’. Related terms were then consolidated into broader categories. For example, ‘TV’, ‘high-end television’, ‘TV animation programmes’, ‘children’s TV programmes’, and ‘television drama’ were grouped under ‘television’, while terms like ‘video games’ and ‘interactive narrative video games’ were categorised under ‘games’.
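The consolidation step described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the procedure actually used in the study: the mapping dictionary contains just the terms quoted in the text, and the function name `consolidate`, the variable names, and the sample input are all hypothetical.

```python
from collections import Counter

# Hypothetical mapping from raw terms to broader categories. Only the terms
# quoted in the text are listed; the dictionary itself is an assumption.
TERM_TO_CATEGORY = {
    "TV": "television",
    "high-end television": "television",
    "TV animation programmes": "television",
    "children's TV programmes": "television",
    "television drama": "television",
    "video games": "games",
    "interactive narrative video games": "games",
}


def consolidate(terms):
    """Count category mentions; unmapped terms are kept under their own label."""
    return Counter(TERM_TO_CATEGORY.get(term, term) for term in terms)


# Illustrative input only, not data from the review.
sample_terms = ["TV", "television drama", "video games", "film"]
category_counts = consolidate(sample_terms)
```

Counting consolidated categories per source and per year in this way would yield the kind of frequency table that a sector-by-year chart summarises.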

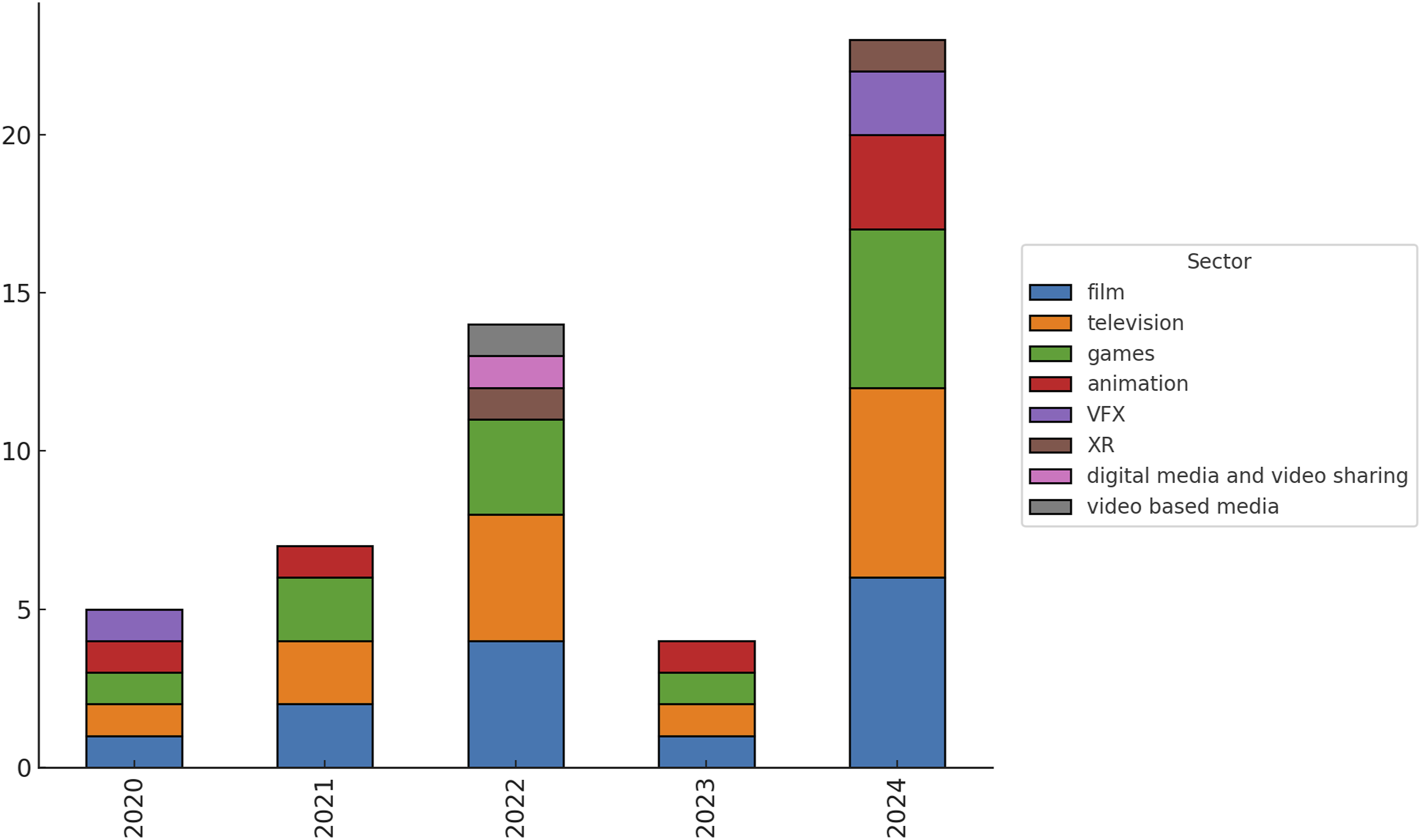

The resulting categorisation (Figure 3) illustrates the frequency of sectoral mentions across the screen industry from 2020 to 2024. This figure identifies several dominant sectors, particularly film, television, and games, which appear consistently and frequently across the years (BFI, 2021; BFI, 2022; BFI, 2023; Brien et al., 2021; British Screen Forum, 2022; Carey et al., 2024; Into Film, 2024; Johnson et al., 2022; Ozimek, 2020; Sissons and Godwin, 2024; Willment et al., 2024).

Figure 3. The scope of the screen industry from 2020 to 2024.

From Figure 3, film and television emerged as the most frequently mentioned sectors, highlighting their roles as traditional pillars of the screen industry (Caldwell, 2009). Both sectors have long been central to mass storytelling and cultural impact, as established in media research (Lotz, 2014). Despite their traditional roots, these industries have demonstrated adaptability within new contexts, particularly with digital technologies that have expanded their accessibility and audience engagement (Lotz, 2017; Tryon, 2013).

Figure 3 reveals a growing presence of the games sector over recent years, suggesting an expanding role within the screen industry. A key driver of this growth is the adoption of new technologies, enabling immersive gaming experiences and expanding the user base (Goh et al., 2023). Unity’s report further substantiates that 62% of surveyed studios utilise AI in their workflows, primarily for rapid prototyping, concept development, asset creation, and world-building (Unity, 2024).

Animation and visual effects (VFX) maintain a consistent presence in the industry, contributing across pre-production, production, and post-production stages. Additionally, the scope includes XR and digital media (a category that also covers video sharing and video-based media), which reflect emerging forms of immersive and interactive media.

The screen industry has shown strong adaptability and openness to new technologies, positioning it as a potential early adopter and making it a promising context for implementing generative AI. Preliminary findings from an ongoing analysis of mapping AI tools across various stages of the filmmaking process further support this notion, with a sample version available online. 2

This research finally defines the scope of the screen industry by focussing on film, television, games, animation, and XR while excluding VFX and digital media. VFX is excluded as it is closely integrated with film, games, and animation, often functioning as a supporting component rather than a standalone domain. Digital media is not included due to its overlapping roles with other sectors.

Having defined the scope of the screen and live performance industries, it is vital to recognise that, while often considered distinct, these various creative sectors are increasingly interconnected. First, shared workflow components such as motion capture demonstrate this convergence, enabling consistent performance capture and animation processes across film, gaming, animation, XR and live performance (Kitagawa and Windsor, 2020). Second, technological convergence has created cross-sectoral foundations, with game engines, initially central to game development, now widely adapted for virtual production in film (Willment et al., 2024), for creating immersive backdrops in live performance, and for developing interactive content in XR (Gunkel et al., 2023). Third, transmedia storytelling creates hybrid content formats where narratives span across interactive games, cinematic experiences, and immersive performances, utilising the same AI-driven content creation tools for character development and world-building (Cake et al., 2015). Additionally, shared hardware such as XR headsets enables immersive experiences across the screen and live performance sectors. This technological and creative convergence suggests that generative AI innovations will not develop in isolation across these interconnected sectors, making their collective study essential for understanding AI’s impact on contemporary creative industries.

Methodology

Thus far, this study has reviewed the history of generative AI technologies and defined the scope of the live performance and screen industries. Given the notable potential and inherent risks associated with generative AI, its integration demands careful consideration, prompting substantial yet fragmented academic discourse.

Research questions

This study aims to critically review current literature, identify key research themes, trace scholarly developments, and synthesise existing knowledge to inform future research directions. The study is guided by the following research questions. RQ1: What is the state of research on the adoption and impact of generative AI in the screen and live performance industries? RQ2: What is the main focus of the current literature, and how are the key research themes related? RQ3: What are the key findings of this review, and what are the future implications?

Literature retrieval

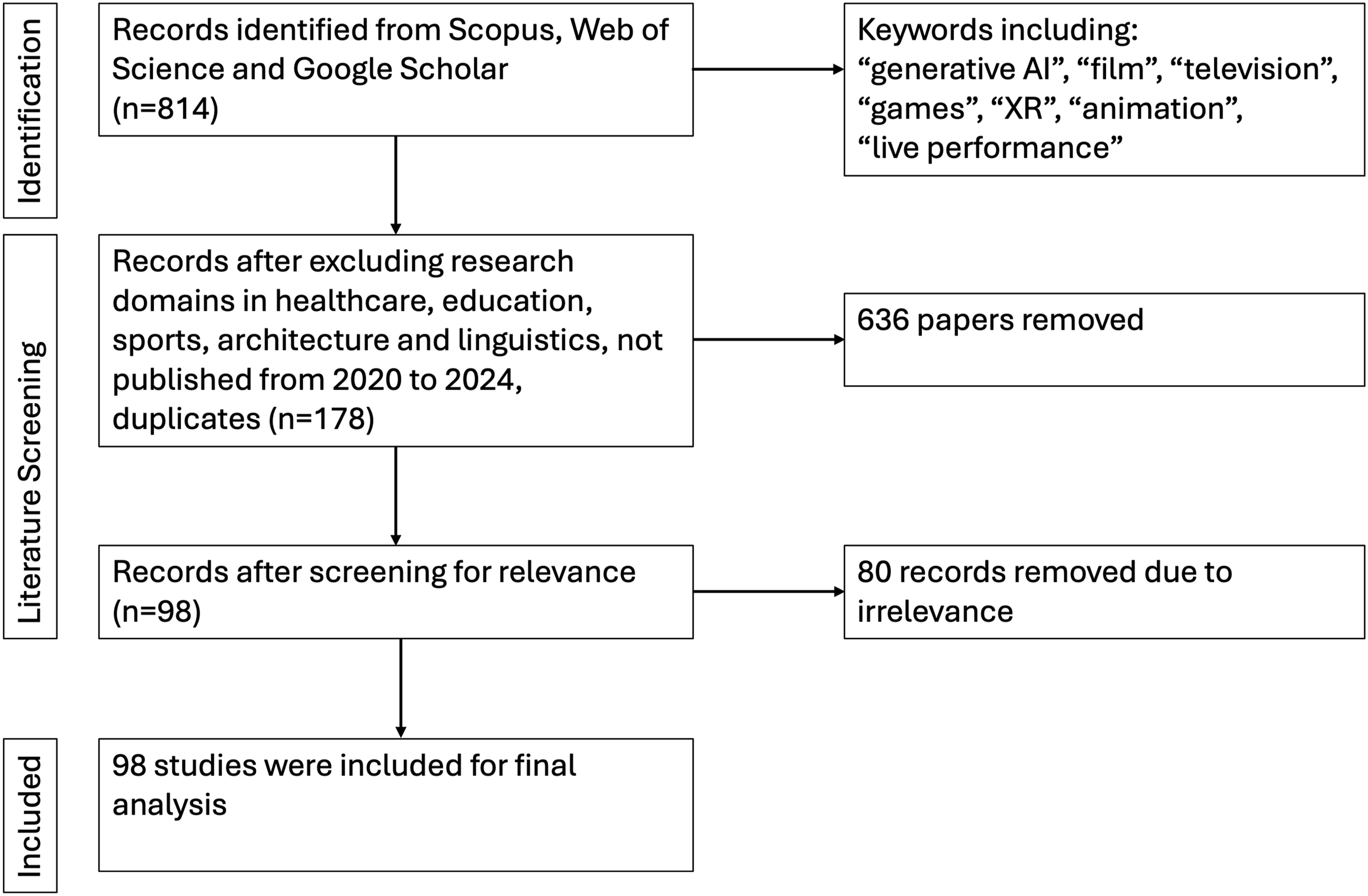

To systematically address these questions, a structured literature review process was followed (Figure 4).

Figure 4. The process of literature retrieval and screening.

To obtain a comprehensive understanding of generative AI in the screen and live performance industries, Scopus, Web of Science, and Google Scholar were selected as the primary databases. Their coverage of diverse publication types allows access to both established findings and emerging insights, which is essential for a rapidly evolving field like generative AI and provides a grounded basis for this review. Notably, Scopus includes trade journals, which offer insights into industry practices and trends, ensuring that industry-related materials were also considered within the review.

The search strategy was based on the previously identified scope of sectors, using primary keywords including ‘generative AI’, ‘film’, ‘television’, ‘games’, ‘XR’, ‘animation’, and ‘live performance’.

To capture timely insights, the review includes conference papers and preprints alongside journal articles. Given the rapid pace of AI development, conference papers and preprints often present novel findings that may not yet be available in journals. By including these sources, the review integrates both existing knowledge and recent advancements, providing a fuller picture of generative AI’s trajectory in the screen and live performance industries.

Literature screening process

The initial stage of the literature screening process focused on refining the timeframe to include studies from a 5-year period (2020–2024), a period that reflects the rapid advancement of AI technologies. By focussing on recent publications, the study captures the latest innovations, trends, and challenges associated with generative AI in the targeted industries.

In the next stage, studies from fields such as education, healthcare, sports, architecture, and linguistics were excluded, along with duplicates. This step refined the dataset to strictly align with the review’s scope and relevance.

After carefully studying each article, a final assessment was made to determine its direct relevance to the research questions. Studies were excluded if they lacked a direct focus on the role of these technologies within the targeted areas. This evaluation resulted in a refined set of 98 papers, establishing a focused basis for further analysis.
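The three screening stages described above can be expressed as a simple filtering pipeline. The following Python sketch is a hedged illustration rather than the study's actual tooling: the `Record` type, its fields, the `relevant` flag (standing in for the manual full-text assessment), and duplicate matching on normalised titles are all assumptions, and the sample records are invented for demonstration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    title: str
    year: int
    field: str
    relevant: bool  # hypothetical flag standing in for the manual relevance check


# Fields named in the text as excluded during screening.
EXCLUDED_FIELDS = {"education", "healthcare", "sports", "architecture", "linguistics"}


def screen(records):
    """Apply the three screening stages in order and return the retained records."""
    # Stage 1: keep only the 2020-2024 publication window.
    in_window = [r for r in records if 2020 <= r.year <= 2024]

    # Stage 2: drop out-of-scope fields and duplicates.
    # Matching duplicates on a normalised title is an assumption of this sketch.
    seen = set()
    in_scope = []
    for r in in_window:
        key = r.title.strip().lower()
        if r.field in EXCLUDED_FIELDS or key in seen:
            continue
        seen.add(key)
        in_scope.append(r)

    # Stage 3: keep only records judged directly relevant to the research questions.
    return [r for r in in_scope if r.relevant]


# Invented records for illustration only.
records = [
    Record("LLMs in game design", 2023, "games", True),
    Record("llms in game design ", 2023, "games", True),     # duplicate
    Record("AI tutors", 2022, "education", True),             # excluded field
    Record("Early neural film tools", 2018, "film", True),    # outside window
    Record("AI in theatre marketing", 2024, "live performance", False),
]
retained = screen(records)
```

Keeping the stages as separate, ordered steps makes it straightforward to report how many records remain after each one.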

Sample analysis

To prepare for a detailed analysis of the selected literature, a systematic approach was employed to capture essential attributes of the dataset. This initial stage recorded key bibliometric details, including publication dates, types, and sources, offering an overview of publication trends and commonly chosen venues in the field. Then, the methodologies and theoretical frameworks across the studies were reviewed. This preliminary work supports the subsequent analysis, where themes and key findings will be examined in greater depth.

Current Landscape of the Literature

To address RQ1, this section investigates the current state of research on generative AI in the screen and live performance industries by providing an overview of publication trends over time, the types and platforms of publications, their locational context, and the methodologies employed.

Publication year

To assess the state of research on generative AI in the screen and live performance industries, it is essential to examine the distribution of publications over time.

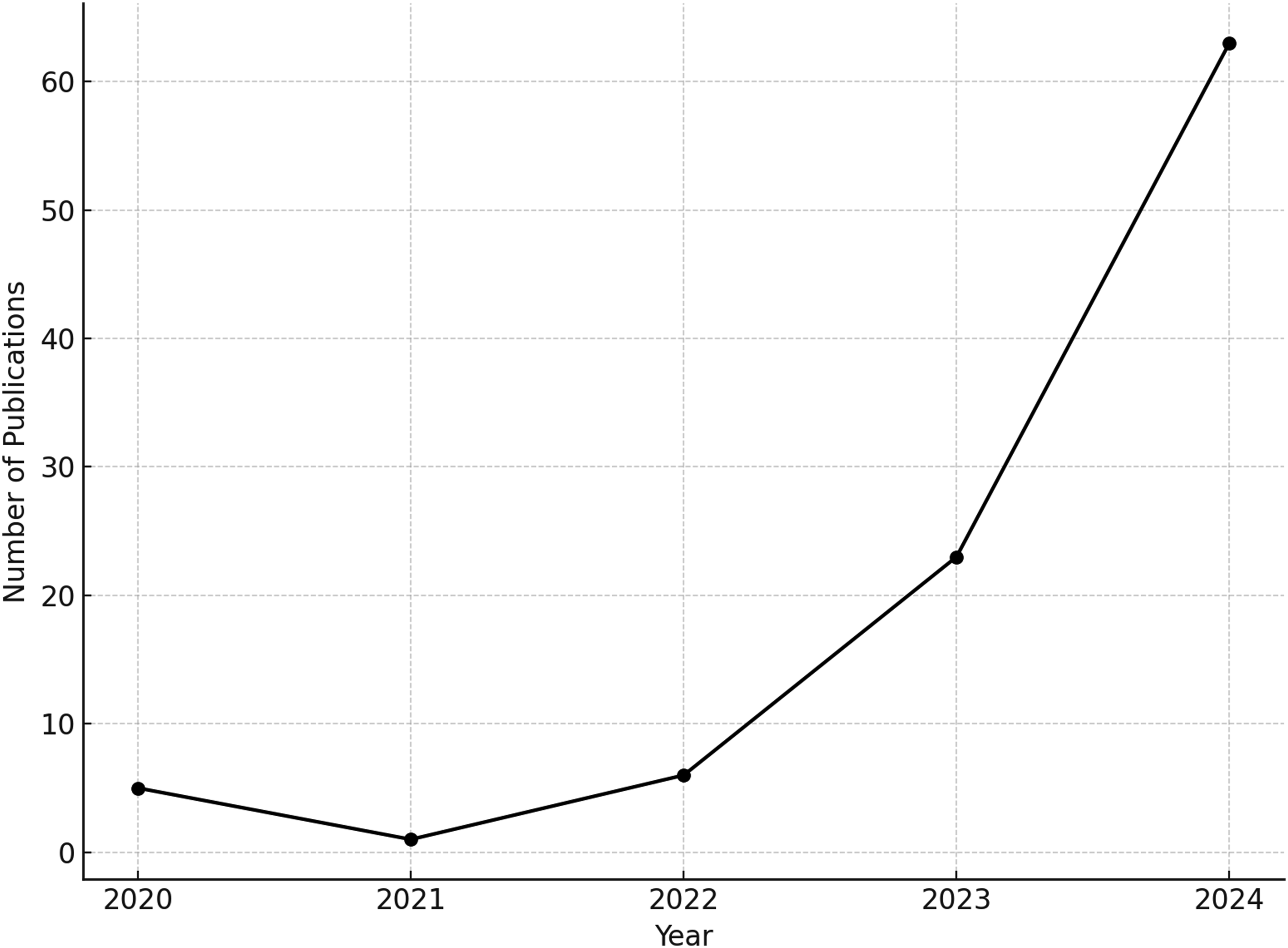

As shown in Figure 5, publications on this topic have increased markedly over the observed period. Of the total sample of 98 papers, only 1.0% (n = 1) was published in 2021, compared to 64.3% (n = 63) in 2024. This upward trend indicates an expanding field that is attracting growing scholarly interest and engagement.

Figure 5. Publication trend.

Publication types

Figure 6 shows the distribution of publication types within the sample, highlighting the preferred formats for disseminating research in this field.

Figure 6. Publication types.

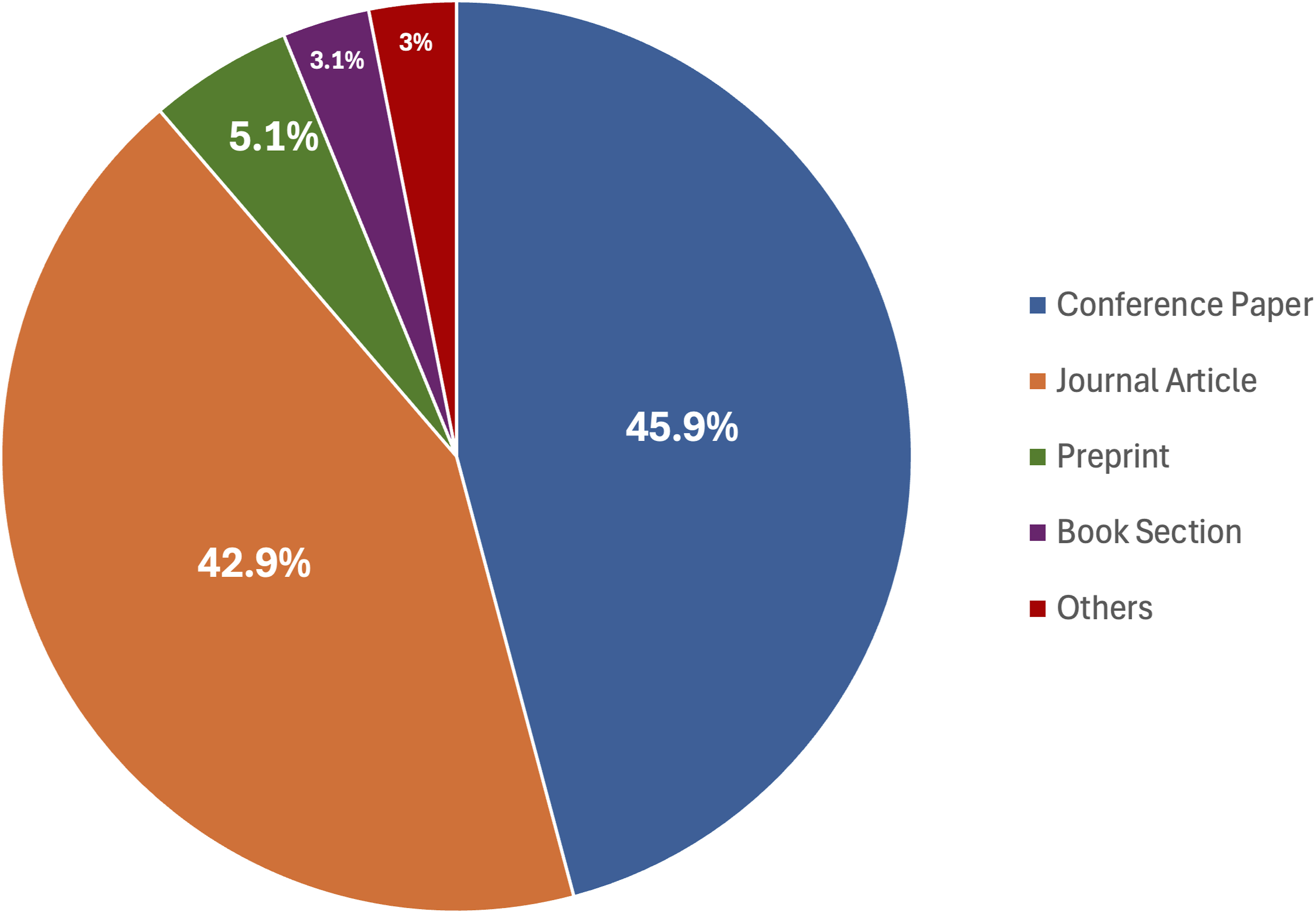

Conference papers represent the largest portion of the literature, accounting for 45.9% of publications. Journal Articles follow closely, comprising 42.9% of the sample, indicating that academics have long recognised the importance of this topic.

Preprints make up 5.1%, reflecting interest in sharing early-stage research to foster timely discussions. Book sections constitute only 3.1%, suggesting that the field prioritises faster publication platforms to keep pace with ongoing advancements. In addition, the sample includes 4 industry-related papers from sources such as DeepMind (Mirowski et al., 2024), DeepMotion (He et al., 2024b), Google (Guajardo et al., 2024), and Comcast and Amtrak (Ramagundam and Karne, 2024), which introduce or evaluate their own prototypes. These contributions provide valuable insights from leading industry innovators, showcasing rapid development and testing, and bridging the gap between academic research and real-world industry practice.

Publication platforms

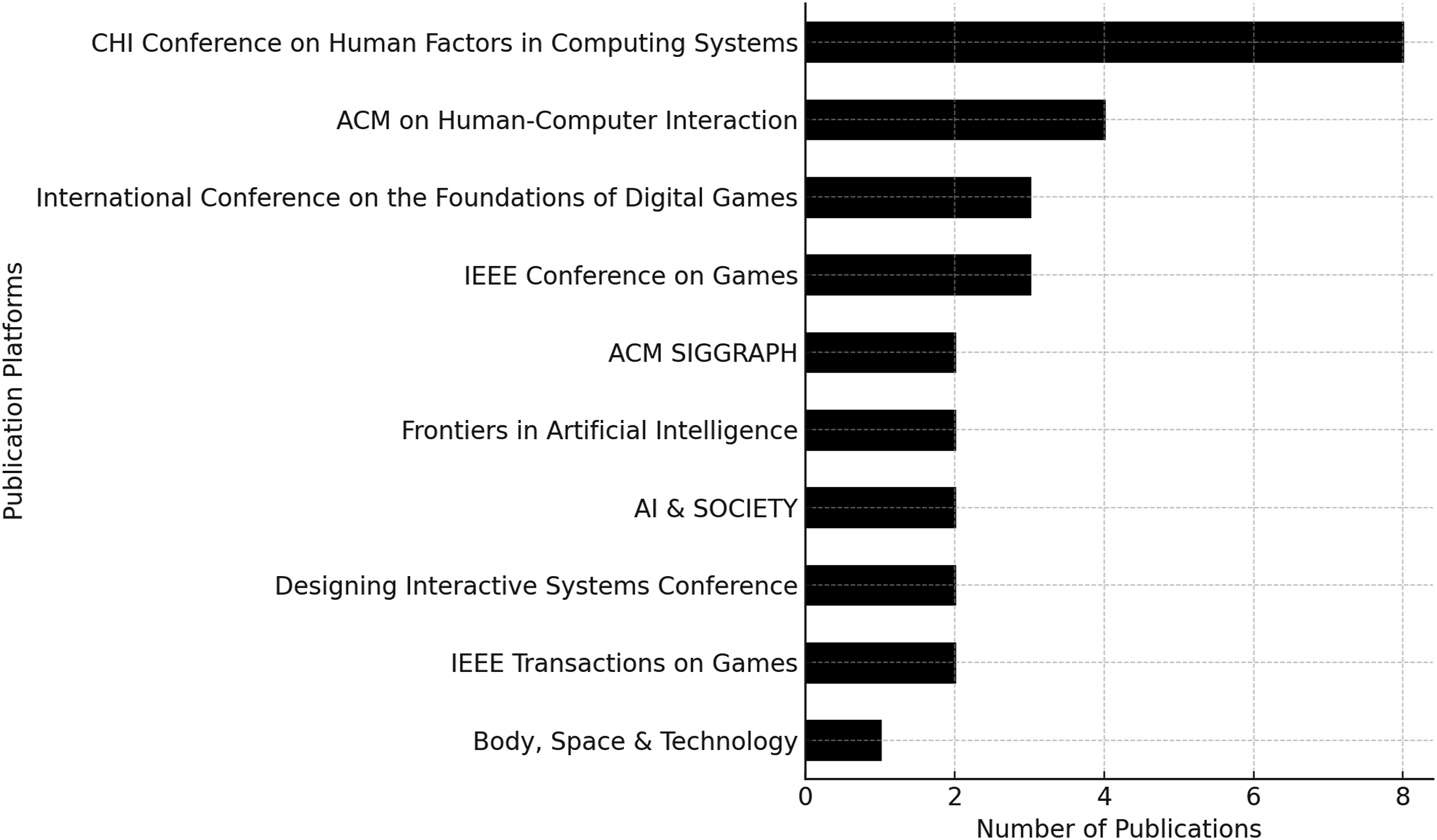

Figure 7 shows that the CHI Conference on Human Factors in Computing Systems is the most frequently used platform (n = 8), followed by the ACM on Human-Computer Interaction (n = 4).

Figure 7. Top 10 publication platforms.

While these top platforms indicate a strong HCI presence, the literature review spanned various disciplines and identified relevant research from other academic fields. This includes contributions from journals such as Organisation Theory, Journal of Human Rights Practice, the Yale Law Journal Forum, and Media Practice and Education. Such disciplinary inclusion allows for a broader understanding of generative AI’s implications beyond a single academic domain.

The top ten publication platforms primarily consist of conferences, including proceedings and extended abstracts. Platforms in this list, such as the IEEE Conference on Games, International Conference on the Foundations of Digital Games, and IEEE Transactions on Games, indicate that the games sector might be a primary area of research interest.

Locational context

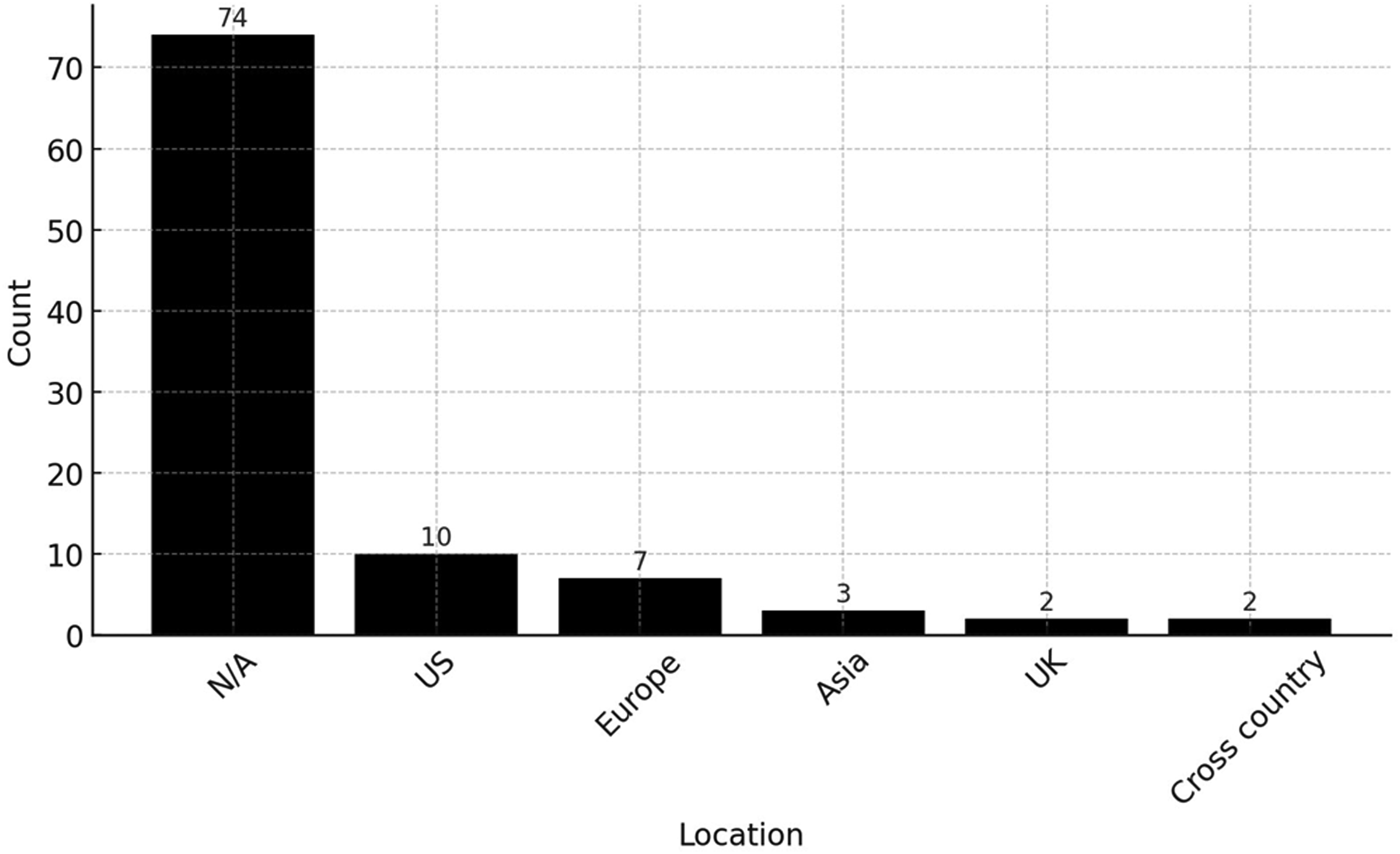

Figure 8 shows the locational distribution of the studies included in the sample, based on the research design and dataset used in the analysed literature. A substantial proportion of studies (75.5%, n = 74) did not specify a clear locational focus. Among the remaining studies, the United States (US) accounted for the largest share (n = 10), followed by Europe (n = 7) and Asia (n = 3). Studies focussing on the UK (n = 2) and those adopting a cross-country perspective (n = 2) were limited.

Figure 8. Locational distribution.

The relatively high number of US-focused studies is unsurprising, given the country’s pioneering role in generative AI development and deployment (Chopra, 2023). The limited number of regionally focused studies indicates a potential gap in understanding how local industry structures, cultural practices, or policy environments impact the use of generative AI. Future research may benefit from exploring more geographically grounded insights into generative AI use.

Methodology employed

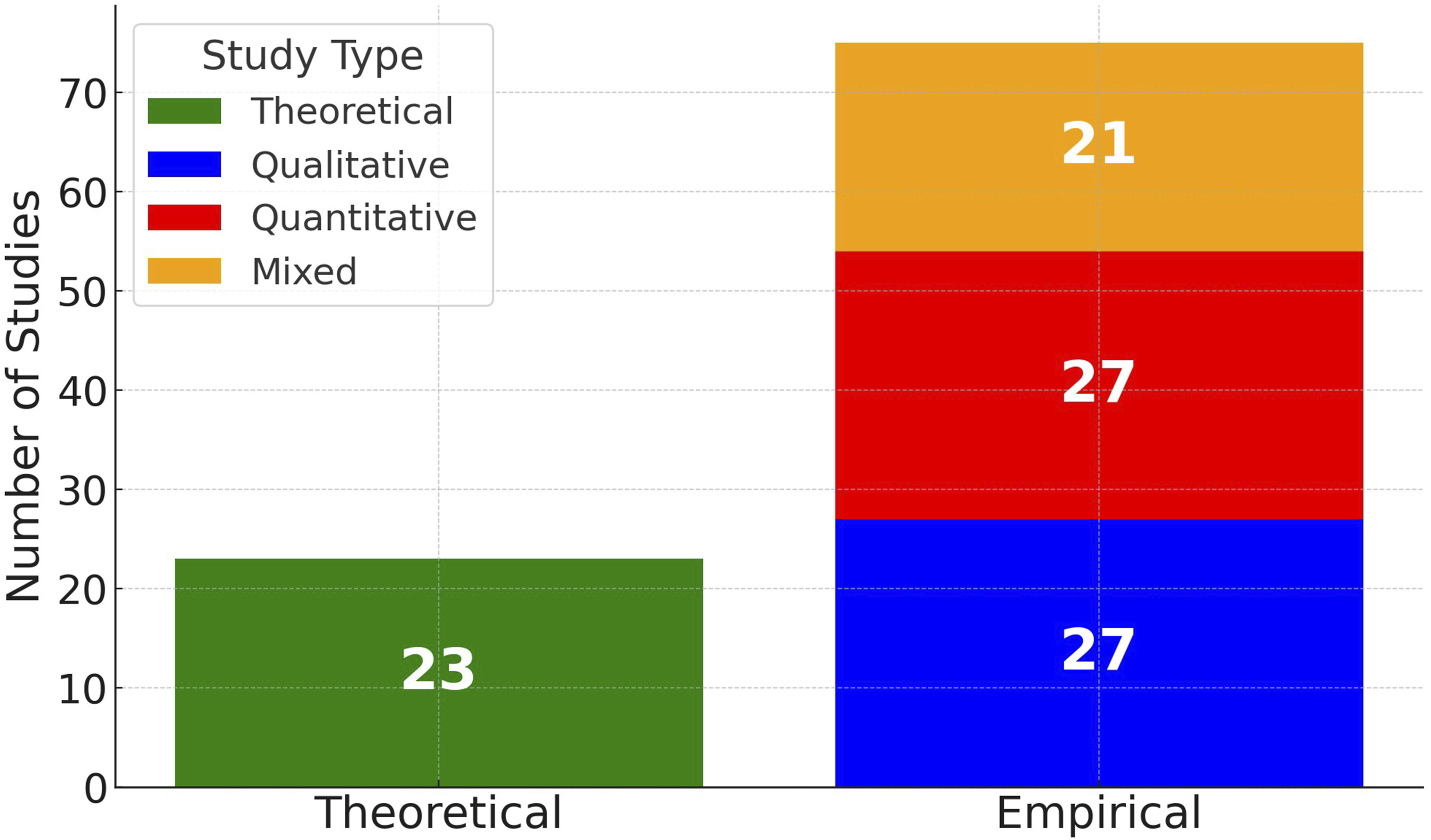

The analytical methodologies employed across the reviewed studies are broadly categorised into theoretical and empirical approaches (Ortony et al., 1978). As illustrated in Figure 9, of the 98 papers in the sample, 23 studies are theoretical, primarily involving conceptual analyses, theoretical frameworks, or literature reviews. Among these, 4 are review-type papers focussing on specific aspects, including ‘deepfake’ (Chauhan et al., 2024), generative AI in visual arts (Vidmar et al., 2024), generative AI in Metaverse (Chamola et al., 2024), and LLMs in games (Gallotta et al., 2024). This demonstrates the value of the present review in providing a broader overview of generative AI across the creative field.

Figure 9. Methodology employed by the analysed studies.

Empirical studies account for the majority, with 75 in total. Within the empirical studies, quantitative, qualitative, and mixed-methods approaches are all represented (Creswell and Creswell, 2017). Quantitative and qualitative studies each account for 27 papers, while mixed-methods studies comprise 21 papers. Most of these empirical studies focus on developing prototypes, models, or new approaches, often quantitatively testing their functions or recruiting participants for evaluation, reflecting a practical and applied research interest in creating actionable tools and solutions within the screen and live performance industries.
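As a quick consistency check on the reported counts, the arithmetic can be reproduced in a short Python sketch. The counts are taken from the text; the percentage shares are derived here for illustration and are not figures reported by the study, and all variable names are hypothetical.

```python
# Counts as reported in the text.
TOTAL = 98
counts = {
    "theoretical": 23,
    "quantitative": 27,
    "qualitative": 27,
    "mixed methods": 21,
}

# The three empirical categories should sum to the reported empirical total of 75,
# and theoretical plus empirical should cover the whole sample of 98.
empirical = counts["quantitative"] + counts["qualitative"] + counts["mixed methods"]
assert empirical == 75
assert counts["theoretical"] + empirical == TOTAL

# Derived shares of the full sample, in percent, rounded to one decimal place.
shares = {name: round(100 * n / TOTAL, 1) for name, n in counts.items()}
empirical_share = round(100 * empirical / TOTAL, 1)
```

Under these counts, empirical studies make up roughly three quarters of the sample, with the remainder being theoretical.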

In contrast, only 11 studies explore users’ interactions with existing generative AI systems. The field could therefore benefit from more empirical research on how stakeholders like creative workers and studios use current generative AI tools like ChatGPT and Midjourney in their projects and their experiences with these tools, especially now that so many generative AI tools are available and evolving so quickly.

Results

With respect to RQ2, this study presents the results derived from analysing the sample through a systematic coding process. Five key themes were identified and subsequently synthesised into a cohesive framework that examines their interconnections, showing how the current literature understands the role and impact of generative AI and how the identified themes are connected.

Prototypes in the production process

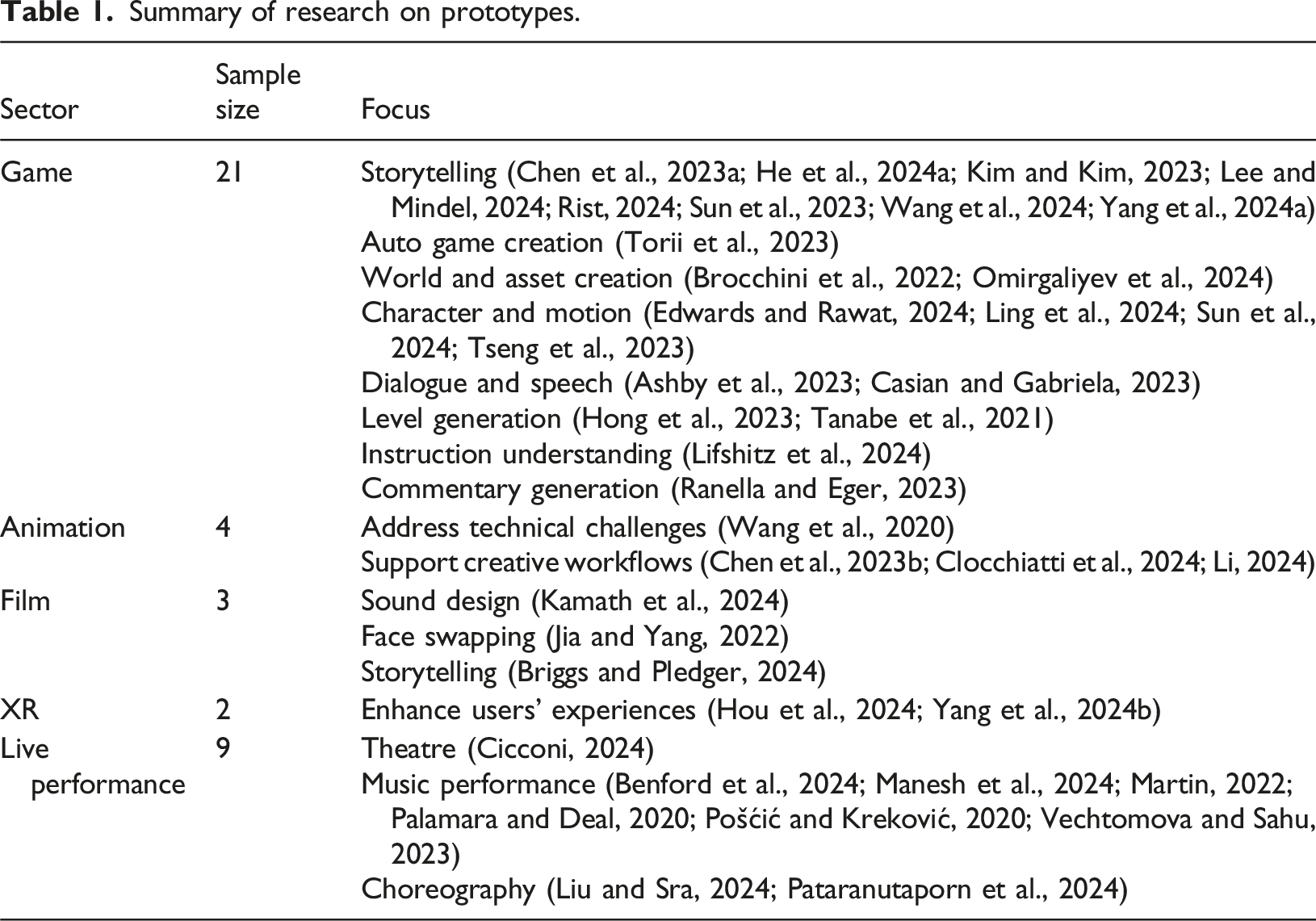

Summary of research on prototypes.

Prototypes are most prominent in the games sector, comprising 53.8% (21 out of 39) of the relevant studies.

Key areas of innovation include interactive and personalised storytelling (Chen et al., 2023a; He et al., 2024a; Kim and Kim, 2023; Lee and Mindel, 2024; Rist, 2024; Sun et al., 2023; Yang et al., 2024a), with examples like Closer Worlds (Lee and Mindel, 2024), which employs ChatGPT-4 and DALL-E 2 to enhance storytelling and collaborative world-building. Automated game design is exemplified by Lottery and Sprint (Torii et al., 2023), a board game designed through a combination of human creativity and AutoGPT, showcasing playability and enjoyment through AI-human collaboration.

A deep learning-based system leverages generative models and a combination of convolutional GANs and Pixel2Mesh architecture to automate the creation of 3D gaming assets, streamlining their integration into new gaming environments with small manual adjustments (Brocchini et al., 2022). SleepWalker introduces a refined method for text-to-motion generation, utilising contrastive learning to produce personalised 3D human motion from natural language inputs with just a few examples (Edwards and Rawat, 2024).

To enhance the understanding of open-ended text instructions in gaming, generative models are optimised to handle sequential decision-making tasks and reduce costs through self-supervised learning and minimising reliance on extensive labelled datasets (Lifshitz et al., 2024). Level generation (Tanabe et al., 2021) is exemplified by applications like Angry Birds, where levels are encoded sequentially as text to improve stability and diversity.

Prototypes in the animation sector represent 10.3% (4 out of 39) of the reviewed studies. Studies examine the technology’s potential to address technical challenges (Wang et al., 2020) and support creative workflows (Chen et al., 2023b; Clocchiatti et al., 2024; Li, 2024).

To overcome limitations in existing methods, Wang et al. (2020) introduced a self-supervised framework for image animation that enables domain-independent pose adaptation, improving tasks like facial expression transfer and human pose retargeting through GANs.

In workflow improvements, a short-to-long video diffusion model is designed to extend short videos into long formats using a random mask approach to generate coherent, high-quality transitions directed by text inputs, with applications in image-to-video animation and auto-regressive video prediction (Chen et al., 2023b). Clocchiatti et al. (2024) presented a high-level pipeline that uses ChatGPT to produce character descriptions from screenplays and Stable Diffusion to create scene-specific animations, enabling energy-efficient 2D cutout animations for film previsualisation, 2D games, and XR content while supporting sustainable production.

Prototypes in film explore generative AI’s capabilities in sound design (Kamath et al., 2024), face swapping (Jia and Yang, 2022), and storytelling (Briggs and Pledger, 2024), showcasing its contributions to different production stages.

Generative AI supports sound designers for film through interactive Creative Support Tools that enable rapid iteration, generate unique sound elements, and reduce reliance on field recordings, enhancing creativity but presenting challenges for task-oriented workflows and informing design considerations for effective integration (Kamath et al., 2024).

DeepFaceLab functions as a high-quality face-swapping framework that uses a training model and scale-invariant face detection to extract multi-scale facial features, achieving seamless replacements with minimal source and destination loss at real-time speeds, making it valuable in film, entertainment and art industries (Jia and Yang, 2022).

Projects like Tomorrow’s Pasts demonstrate how generative AI transforms Indigenous storytelling by constructing alternate histories that address themes of racism, colonisation, and Indigenous knowledge systems, using deepfake and text-to-video tools to expand narrative potential while challenging authenticity and collaborative artistry (Briggs and Pledger, 2024).

Prototypes in XR highlight generative AI’s potential to enhance user experience. A new deployment framework was proposed to enhance users’ experiences by employing generative AI for semantic communication, improving multimodal data collection, analysis, and delivery to create more efficient and immersive wireless XR environments (Yang et al., 2024b).

Another spatial communication prototype uses advanced 5G networks, distributed computing, and AI to tackle XR challenges by optimising volumetric data handling, tailoring user experiences via behaviour-based management, and employing pre-digitised 3D models to enable seamless real-time interaction (Hou et al., 2024).

Prototypes in live performance constitute 23.1% (9 out of 39) of the analysed works. In immersive theatre, Cicconi (2024) introduced the Augmented Total Theatre framework, which synthesises theatrical performance with AR to produce immersive representations that surpass conventional theatre, with prospective advancements enabling real-time, cost-effective generation of complex, dynamic environments that intensify audience perception and sensory engagement.

In music performances, Palamara and Deal (2020) presented an AI-based system that resolves limitations in digital music technology by simulating human sensitivity to musical context and incorporating a generative programme that leverages a stochastic model to support improvisation. SHARP, a version control system, was designed to improve live coding musicians’ preparation and performance, allowing them to explore new ideas and create musical styles (Manesh et al., 2024).

In choreography, an interactive AI-powered system was introduced to support creative ideation by generating diverse dance sequences, enabling iterative refinement and prototyping, and reducing the physical demands of idea experimentation (Liu and Sra, 2024).

Given the early stage of generative AI’s practical implementation, the reviewed studies illustrate an exploratory and experimental phase. This body of research focuses on developing prototypes tailored to the specific demands of the screen and live performance sectors, reflecting a practical orientation aimed at testing, adapting, and refining generative AI capabilities. By addressing sector-specific challenges, these studies pave the way for the broader integration of generative AI into creative and production contexts. Meanwhile, most of the research adopts a balanced and critical approach, recognising not only the benefits of this transformative technology but also its risks, emphasising the importance of careful and responsible design for future practical uses.

Real-world applications

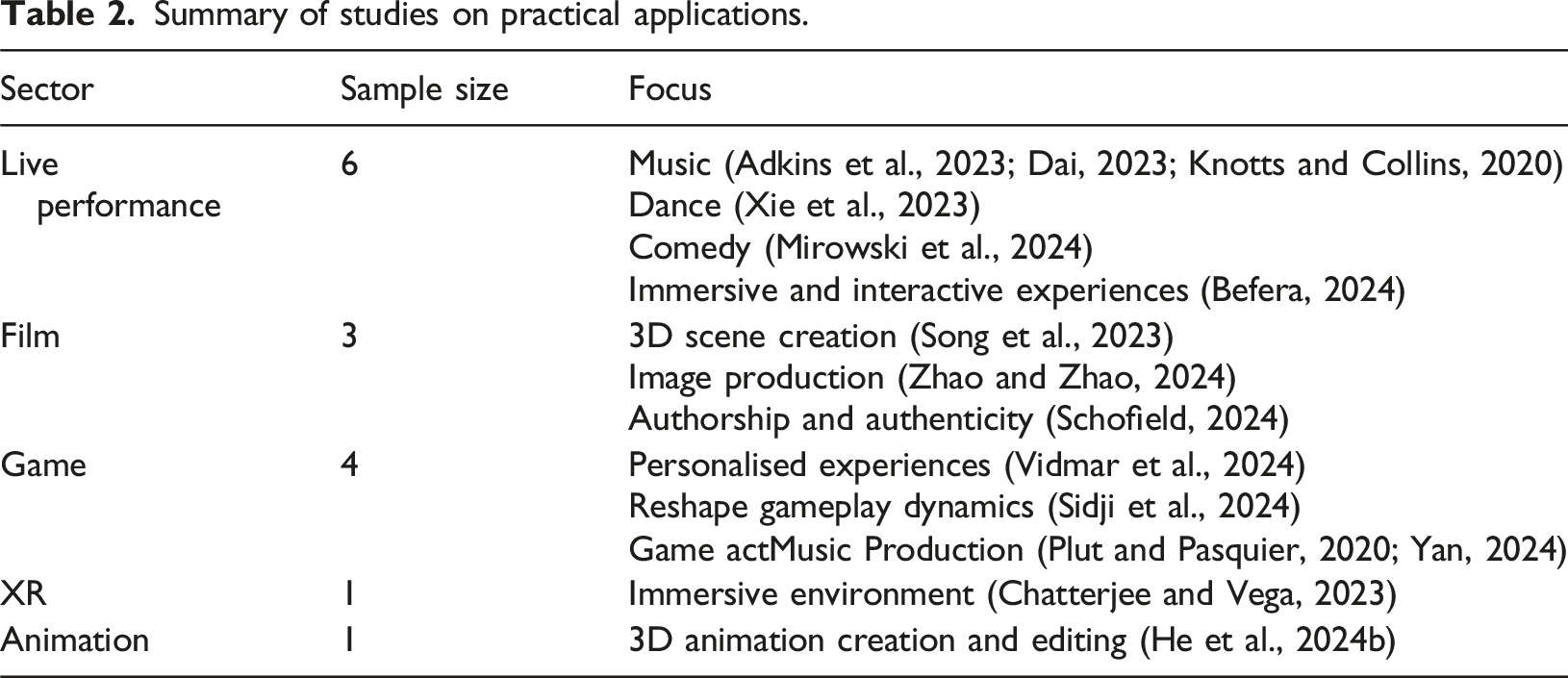

Summary of studies on practical applications.

In live performance, the literature examines the application of AI in music (Adkins et al., 2023; Dai, 2023; Knotts and Collins, 2020), dance (Xie et al., 2023), comedy (Mirowski et al., 2024), and the creation of interactive experiences (Befera, 2024). In music, AI allows diverse applications, including live performance, recording, and generative production, offering tools that facilitate creative processes without requiring extensive programming knowledge (Knotts and Collins, 2020). Adkins et al. (2023) demonstrate how AI supports live coding by generating loopable, high-density musical outputs tailored to specific instrumentation, key, time signature, and bar length, offering flexibility for varied musical contexts.

In wheelchair dance, AI leverages gesture recognition and body segmentation to merge the dancer’s movements with the wheelchair, creating adaptive visual expressions that amplify creative agency and enable personalised storytelling (Xie et al., 2023). Beyond fixed outputs, AI enables dynamic, adaptive connections across text, movement, and sound, creating interactive experiences that integrate diverse content, environments, human participants, and artificial entities (Befera, 2024). Mirowski et al. (2024) evaluated LLMs in comedy performance and observed that moderation strategies reinforce dominant viewpoints and filter minority perspectives and that the generative models fail to enhance creativity as they produce uninspired, biased content.

In film, the literature discusses how generative AI enhances creativity and production processes while raising critical questions about its broader implications. Song et al. (2023) exemplified generative AI’s role in optimising 3D scene creation through generative models, AI-powered rendering, and 2D-to-3D transformations, expanding the expressive boundaries of traditional media.

Similarly, Zhao and Zhao (2024) demonstrated how deep convolutional GANs advance film image production by enabling high-quality virtual characters, scenes, style transformations, and dynamic enhancements. Although generative AI shows potential, its integration challenges traditional photographic ontology, prompting a reconsideration of authorship and framing as benchmarks for authenticity in a medium increasingly shaped by media convergence and AI-driven practices (Schofield, 2024).

In games, the literature investigates the use of generative AI tools like ChatGPT, Stable Diffusion, and Midjourney to enhance immersive, personalised experiences through dynamic dialogue, visuals, and interactive narratives, advancing customisation while raising ethical considerations in game development (Vidmar et al., 2024). Moreover, in applications like Codenames, AI fosters creative thinking but reshapes gameplay dynamics, influencing team cohesion and interaction patterns (Sidji et al., 2024).

Generative AI also supports game music production by offering adaptive, diverse soundscapes for immersive gameplay but faces limitations in expressive range and labour-intensive data management that constrain its broader integration in industrial and academic systems (Plut and Pasquier, 2020). Yan (2024) assessed the capabilities of Jukebox, a music generation model, and found that although people preferred human compositions, the model was capable of producing genre-consistent outputs and showed potential for collaborative music creation.

In XR and animation, the literature addresses how generative AI advances the production process in these sectors. In XR, realistic and immersive content is created using a computer vision system that generates 3D environments from Red-Green-Blue images and a Hyper-converged infrastructure system that enables efficient rendering (Chatterjee and Vega, 2023). SayMotion, a generative AI text-to-3D animation platform, applies deep generative learning and physics simulation to transform text into realistic human motion for gaming, XR, and film, using a fine-tuned language model and proprietary motion model for efficient 3D animation creation and editing (He et al., 2024b).

The reviewed literature examines the complexity of adopting generative AI technologies within the screen and live performance industries, identifying practical challenges such as bias and censorship. These studies suggest that the adoption of such technologies requires more than a focus on technical advancements; it also necessitates aligning these advancements with cultural values and ethical considerations. However, the literature predominantly identifies challenges, with limited exploration of potential solutions, requiring more in-depth research to develop strategies that address these issues.

Impact of generative AI

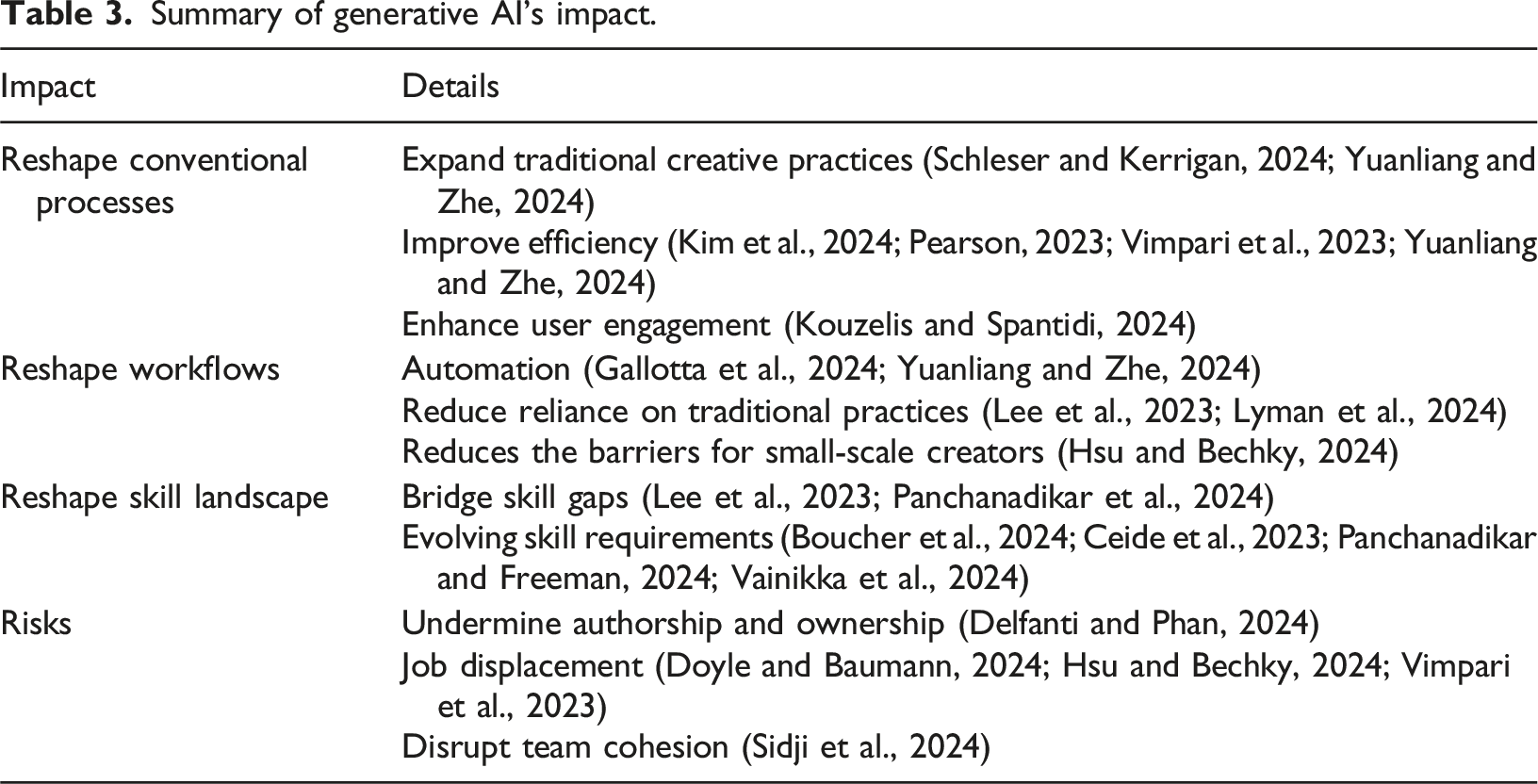

Summary of generative AI’s impact.

Current literature reflects generative AI’s role in reshaping conventional processes within the screen and live performance industries (Schleser and Kerrigan, 2024; Vimpari et al., 2023; Yuanliang and Zhe, 2024) by expanding traditional creative practices and enhancing productivity and user engagement (Kim et al., 2024; Kouzelis and Spantidi, 2024; Singh et al., 2024; Yuanliang and Zhe, 2024).

For example, a conceptual analysis suggests that generative AI expands the definition of documentary beyond the conventional ‘creative treatment of actuality’ and establishes new cultural conditions for the genre (Schleser and Kerrigan, 2024). Leveraging vast storage and rapid computing capacities, AI can generate artworks that surpass traditional boundaries, enabling complete replication and reshaping the concept of artistic authenticity (Yuanliang and Zhe, 2024).

Generative AI facilitates the integration of 2D and 3D styles while improving production efficiency and accuracy and fostering diverse, interactive artistic expression (Pearson, 2023; Yuanliang and Zhe, 2024). In XR, quantitative findings show that generative AI can enhance users’ experiences by employing refined prompt engineering techniques such as emotional amplification and fact-anchoring to improve context understanding, reduce inaccuracies, and add emotional depth to AI-generated dialogues, enabling more immersive and interactive engagements (Kouzelis and Spantidi, 2024).

Several studies delve deeper into how generative AI reshapes workflows, with blended creation enabling automation and semi-automation in traditional animation to reduce costs by handling repetitive, time-consuming tasks (Yuanliang and Zhe, 2024), allowing animators to concentrate on story development and characterisation (Guajardo et al., 2024). A theoretical perspective suggests that generative AI tools, as design assistants, can streamline creative workflows by minimising manual effort, expediting development, fostering collaboration, and stimulating user creativity, with current applications in game design offering auto-completion and varied options, and future advancements aiming to position AI as a dynamic collaborator for idea exchange (Gallotta et al., 2024).

In addition, generative AI transforms workflows by reducing reliance on traditional practices. Empirical findings in game design indicate that tools like Midjourney, Stable Diffusion, and ChatGPT enable designers to visualise ideas, conduct market sensing, and create demo games, reshaping the production process and diminishing the need for external departments (Lee et al., 2023). Similarly, Cardistry, a generative AI storytelling card app, replaces the traditional dependence on multiple interviews and thematic analysis with a user-driven process, where GPT models generate storytelling cards directly from user input, enabling rapid personalisation (Lyman et al., 2024).

Theoretically, the adoption of generative AI also reduces the barriers for small-scale creators to develop high-quality productions (Hsu and Bechky, 2024) and enables gaming companies to personalise quests, storylines, and characters based on user preferences and social connections, also allowing users to create customised text-based games (Chamola et al., 2024). However, Hsu and Bechky (2024) noted that generative AI is likely to reinforce a cycle where studios rapidly produce content similar to popular offerings, distribute it in personalised formats, and use consumer preferences to further refine AI-driven production, resulting in oversaturation and diminished innovation.

Findings from interviews suggest the risks of remix labour industrialisation, where tools like Synthesia and Runway Gen-2 automate creative processes, prioritise corporate ownership, obscure authorship, and relegate creative workers to routine tasks, with marginalised freelancers still performing standardised, algorithm-driven roles (Delfanti and Phan, 2024). This automation raises concerns about job displacement (Doyle and Baumann, 2024; Hsu and Bechky, 2024; Vimpari et al., 2023). With AI tools in teamwork, qualitative findings show that the AI assistant may disrupt team cohesion, redefining gameplay norms and reshaping social dynamics by weakening internal team bonds (Sidji et al., 2024).

The literature also reflects on the changes in the skill landscape and career development brought about by generative AI. Panchanadikar et al. (2024), in their qualitative study, note that generative AI can bridge skill gaps and democratise game production in indie game teams by providing technical support and alleviating time and financial constraints. Insights from qualitative research indicate that game designers who have access to generative AI tools like Midjourney and are able to craft effective prompts can become ‘super game designers’, capable of visualising ideas, creating playable prototypes, and testing market responses, thereby transforming the game development process and business structure (Lee et al., 2023).

According to a theoretical analysis by Doyle and Baumann (2024), the film and television sectors demand expertise in AI programming, excellent post-production skills, data analytics, and AI-based content management. Meanwhile, creative workers increasingly regard proficiency in using AI tools as an essential professional skill (Boucher et al., 2024), yet they acknowledge the substantial effort required to effectively integrate AI into creative workflows, a process that may limit their creative expression and constrain career development opportunities (Panchanadikar and Freeman, 2024; Vainikka et al., 2024). In contrast, employers, particularly in the broadcasting sector, place greater emphasis on adaptability and interpersonal skills over purely technical expertise, reflecting the evolving and transferable skill demands driven by the rise of AI implementation (Ceide et al., 2023).

The reviewed literature examines the impact of generative AI, including enhanced efficiency and user engagement and the reshaping of traditional workflows. However, much of the existing research remains at the phase of describing the observable changes resulting from workflow transformations rather than analysing the underlying connections and the broader impact chain of generative AI on workflow dynamics. This suggests a need for a more thorough investigation into the deeper and interconnected effects of generative AI within these processes.

Technology limitations and ethical concerns

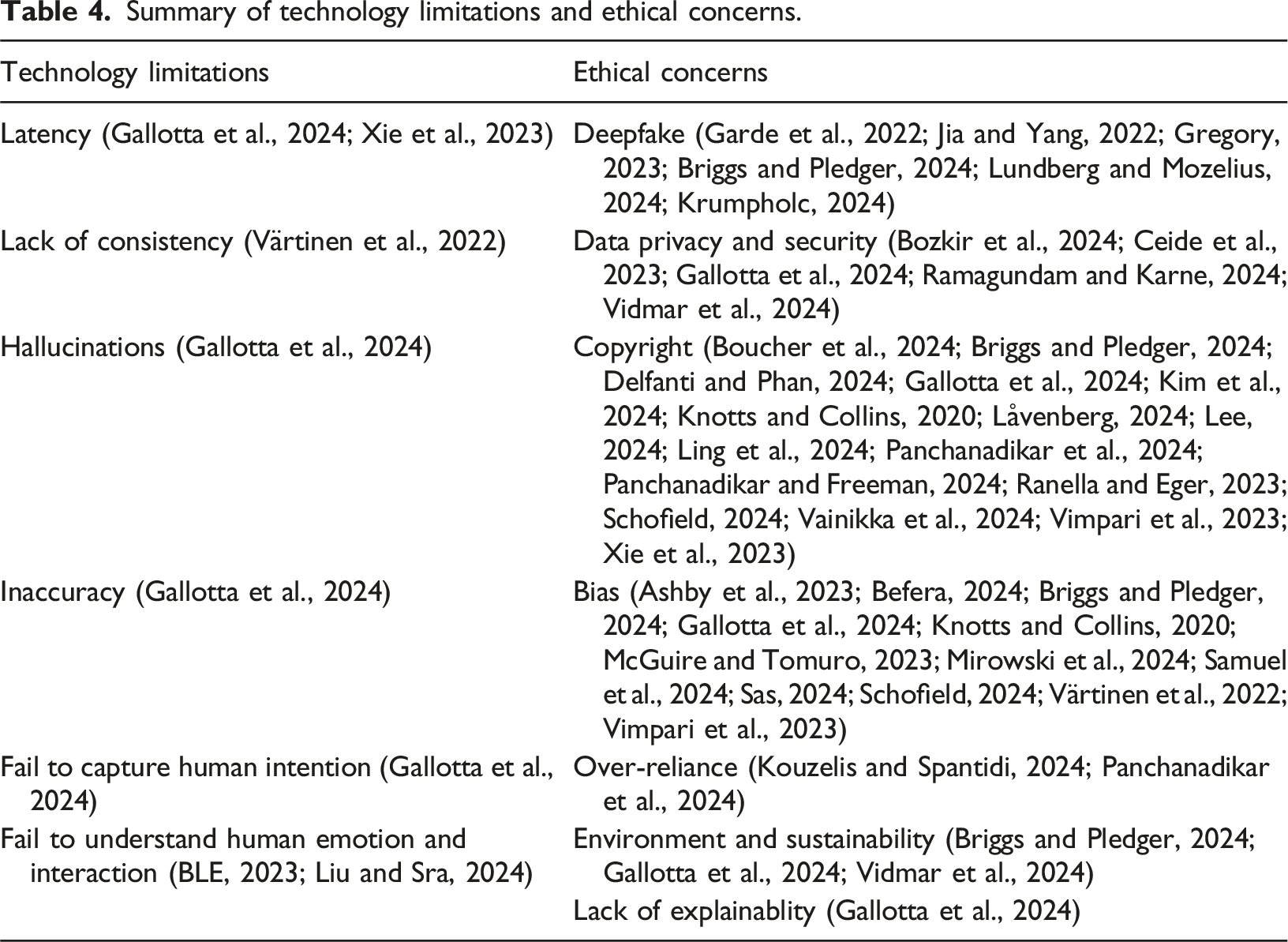

Summary of technology limitations and ethical concerns.

Technical constraints include latency issues due to cloud service stability, internet connectivity, and local processing, as well as the requirement for high-contrast backgrounds for precise body segmentation (Xie et al., 2023). Mixed-methods research by Värtinen et al. (2022) points out that another challenge faced by generative AI is handling complex entities and producing logically consistent text, suggesting future improvements through dataset expansion, model refinement, and industry-academy collaborations.

A theoretical analysis of generative AI in video games identifies limitations including hallucinations, factual inaccuracies, challenges in capturing user intent, restricted memory for long-term interactions, high computational costs, and response delays, all of which hinder reliable and seamless integration into gaming experiences (Gallotta et al., 2024). In live performances, generative AI fails to capture the emotional depth and energy characteristics (Liu and Sra, 2024) and struggles to replicate the improvisation and interaction between musicians and audiences (BLE, 2023).

Compared to the technology limitations, ethical concerns are more complicated.

One major concern is deepfakes, which have practical applications in film and speculative content creation, such as video game graphics and animation. However, they pose significant risks, including misuse for harassment, misinformation, and the erosion of trust in digital media, with recommendations for increased source criticism and advanced watermarking to safeguard media integrity (Garde et al., 2022; Gregory, 2023; Lundberg and Mozelius, 2024; Krumpholc, 2024). According to a theoretical analysis by Chauhan et al. (2024), current deepfake detection technologies often fail to capture all variations of fraudulent facial images, necessitating systems that target GANs’ limitations in rendering real-world features like colour and spatial accuracy to address evolving manipulations.

Data privacy and security are longstanding concerns, and generative AI introduces novel challenges that require close attention. The integration of LLMs in XR and generative AI in tailored ad creation raises privacy concerns, since immersive engagement and targeted content may disclose sensitive information, amplified by sensor data in XR and ethical issues like manipulation and misinformation in ad systems, necessitating in-depth investigation into user privacy expectations, privacy-enhancing solutions, and transparent, accountable deployment frameworks (Bozkir et al., 2024; Gallotta et al., 2024; Ramagundam and Karne, 2024).

The literature also addresses the ethical concerns surrounding copyright, art ownership, and creative agency, particularly among artists and game developers. Artists often resist the use of generative AI in game production due to the lack of copyright protection, potential corporate misuse, and insufficient compensation for original creators, reflecting a broader unease about its ethical impact and the erosion of creative ownership in favour of corporate interests (Boucher et al., 2024; Delfanti and Phan, 2024; Gallotta et al., 2024; Panchanadikar et al., 2024; Vimpari et al., 2023).

On the other hand, Låvenberg (2024) viewed the authorship of The Frost, an AI-generated film, as shared between humans and AI, with AI functioning as a production team member by producing visuals and crafting animations. From a qualitative perspective, Lee (2024) suggests that copyright law should remain technology-neutral, uphold musicians’ freedom to use any instrument, embrace evolving authorship to include AI-generated works and rely on Congress and courts to periodically reassess its scope and address AI-related challenges.

Qualitative findings reveal that bias in generative AI systems remains a critical concern; AI chatbots, for instance, exhibited biases by associating Muslims with potential terror threats at the Paris 2024 Olympics, intensifying and replicating Islamophobic stereotypes from traditional media (Samuel et al., 2024). This is because algorithmic responses often draw on biased training data without disclaimers, underscoring the need for responsible algorithmic design to minimise biases (Gallotta et al., 2024; McGuire and Tomuro, 2023; Samuel et al., 2024).

Other ethical concerns include the over-reliance on AI, as expressed by indie game developers who also fear the replacement of human roles by AI tools (Panchanadikar et al., 2024; Vimpari et al., 2023). Additionally, environmental impacts, sustainability concerns, and the lack of explainability in AI-driven video game development present further challenges (Gallotta et al., 2024).

A few studies further explore how to address these ethical issues. A decolonising framework was proposed to reimagine the integration of AI within the art ecosystem, focussing on four key areas: recognising access as a mechanism of power, addressing biases embedded in AI systems, evaluating the effects of AI on marginalised communities, and disrupting dominant narratives to allow for diverse voices (Baradaran, 2024). Sas (2024) and Wong (2024) make the case that comprehensive legal regulations are needed. Theoretically, Wong (2024) further discusses how governments struggle to regulate generative AI due to fears of democratic erosion, dis/misinformation from AI ‘hallucinations’, rapid technological advances outpacing policymaking, and tech investments complicating antitrust oversight, calling for collaboration between tech companies and policymakers to address these complexities and drive sustainable industry growth.

Studies indicate that the limitations of generative AI become increasingly evident as these technologies process larger datasets and are applied in more diverse contexts. The ethical issues discussed in the current literature show that generative AI amplifies existing concerns, such as data privacy and copyright complexities, makes bias more deeply rooted and introduces new challenges, including over-reliance on these technologies. While the literature covers many aspects of these concerns, further investigation into the unique ethical issues specific to generative AI could add greater clarity. Additionally, tailored academic evidence to inform regulations for specific industries and countries could be highly valuable in addressing these challenges.

Attitudes towards generative AI

Although the adoption of generative AI is still emerging, studies indicate that attitudes and acceptance towards the technology are varied.

A survey by Knotts and Collins (2020) indicates that while many musicians regard AI as a collaborative tool, experienced users express scepticism, concerned that its widespread adoption may result in job losses, homogenisation of music, and a stagnation in the evolution of musical expression. Employees in the creative industries express resistance to generative AI due to concerns over job security, but employers see its potential for cost-cutting and replacing human roles. This conflict was especially evident in Hollywood strikes, where demands for limits on AI scriptwriting highlighted broader anxieties about protecting human creativity (Wong, 2024). This employee-employer divide regarding generative AI continues to expand. Employers’ efforts to integrate AI aim to prepare creators for future demands; however, interviews with creatives reveal that many perceive these initiatives as prioritising managerial efficiency over fostering creativity (Boucher et al., 2024).

Through interviews and observations, Boucher et al. (2023) found that attitudes towards generative AI also differ across job roles, with programmers finding practical value in tools like GitHub Copilot for efficiency, whereas artists feel vulnerable to job displacement, experience reduced satisfaction, and struggle with generative AI’s limitations in conveying specific emotions and achieving game-ready assets. Instead of adopting an overly optimistic or resistant stance, interviews reveal that Finnish screenwriters exhibit a cautious yet pragmatic acceptance of generative AI, admitting its potential as a supportive tool in early script development but prioritising human creativity and final control in storytelling (Vainikka et al., 2024).

Only three studies examine customer acceptance. Through experimental research, Chen et al. (2024) found that generative AI in game recommendation enhances perceived content quality and user support intentions, with users preferring AI-driven recommendations over those by real individuals, reflecting generative AI’s potential to evoke social and emotional responses similar to those of human interaction. In live performances, audiences show increasing interest in AI-driven entertainment and human-AI interaction, with varied expectations about AI’s conversational skills and creative support (Branch et al., 2024; Chopra, 2023).

The reviewed studies reveal variations in producers’ attitudes toward generative AI. Investigating these differing perspectives in greater depth could provide insights to guide the integration of generative AI in ways that balance the diverse needs of all stakeholders. Furthermore, the reviewed studies focus more on the perspectives of producers than on those of audiences. This suggests a need for further research to explore the voices of audiences, whose experiences are critical in determining the value of generative AI-powered products.

Thematic overview

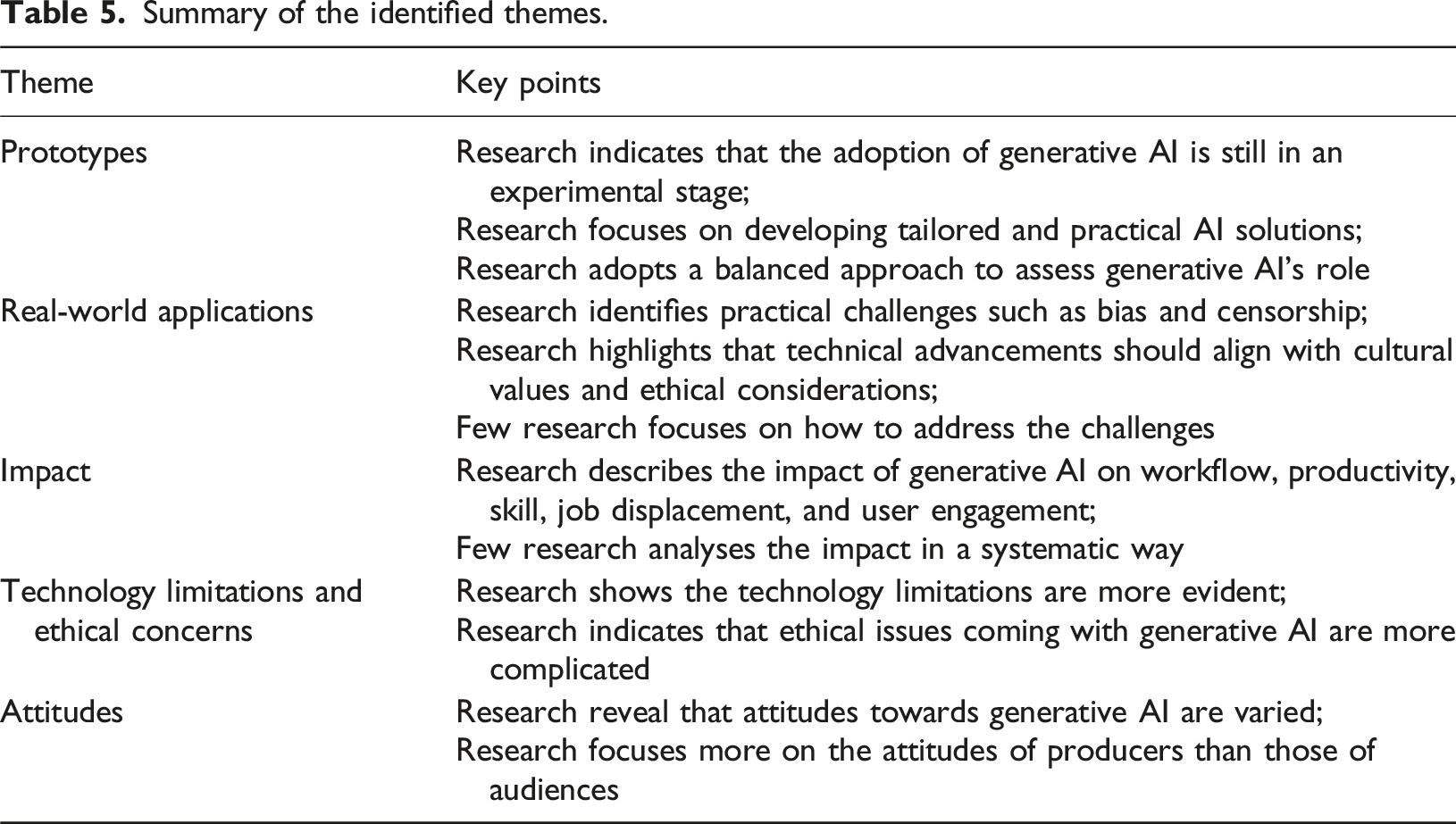

Summary of the identified themes.

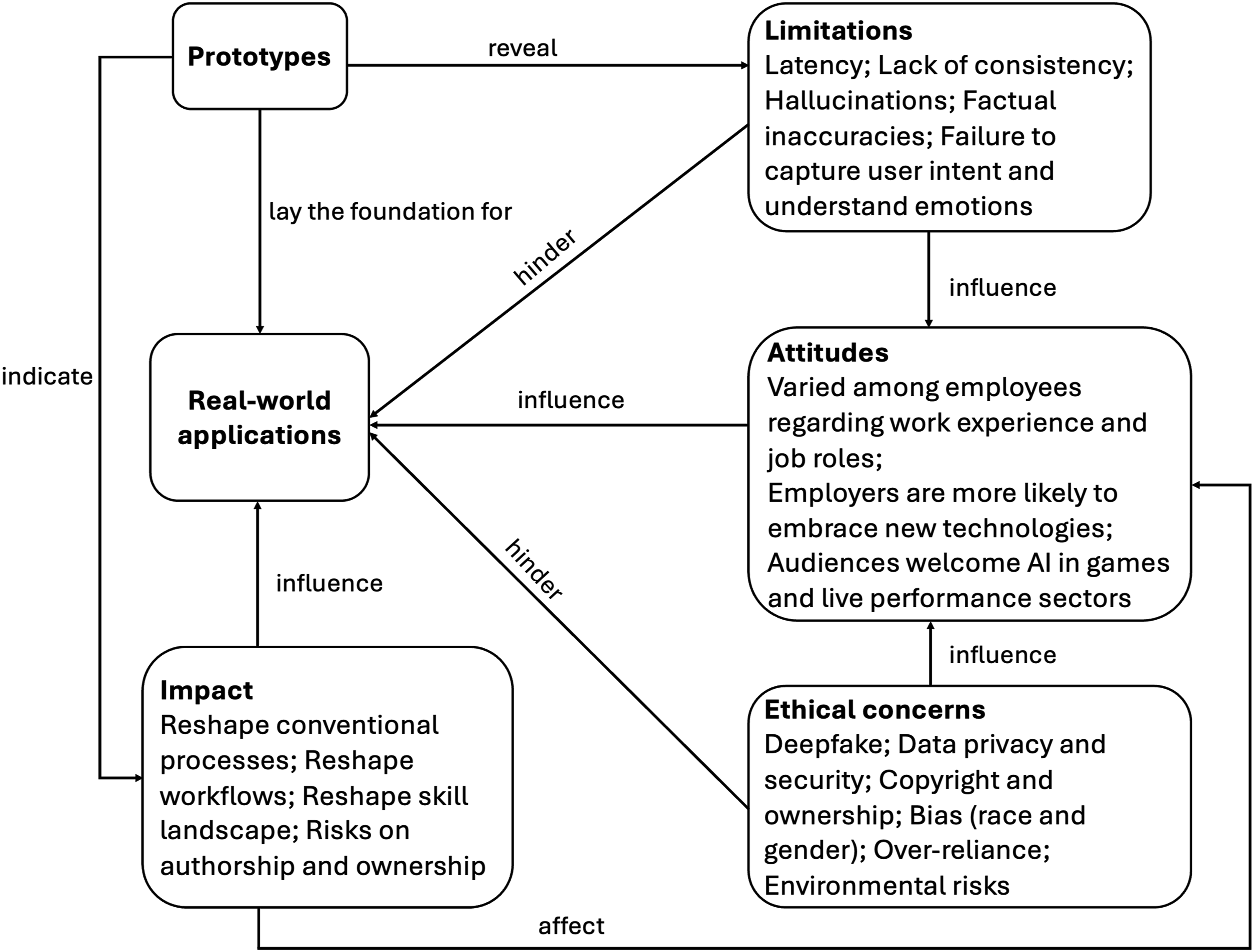

As previously discussed, this study aims not only to categorise the identified themes but also to synthesise them into a conceptual framework that explores their interconnections.

The proposed framework (Figure 10) for mapping generative AI studies within the screen and live performance industries demonstrates that prototypes serve as critical tools for testing the capabilities of generative AI across diverse domains, establishing a foundational basis for the real-world adoption and integration of generative AI. Proposed conceptual framework to map generative AI studies.

The adoption of generative AI is not an easy process, constrained by technology limitations and ethical concerns that shape attitudes and acceptance. These attitudes, along with the broader impacts of the technology, directly affect its usage. The impact of generative AI, both positive, such as enhancing efficiency and user engagement, and negative, such as necessitating new skills and causing job displacement, may shape the direction of people’s attitudes and perceptions.

Overall, this framework illustrates the intricate interconnections between the identified themes. Guided by this analysis, the study further provides insights into current research trends and proposes directions for future investigations.

Current research trends and directions for future research

To answer RQ3, this section explores current research trends, existing gaps in the literature, and suggested directions for future investigations.

Research trends

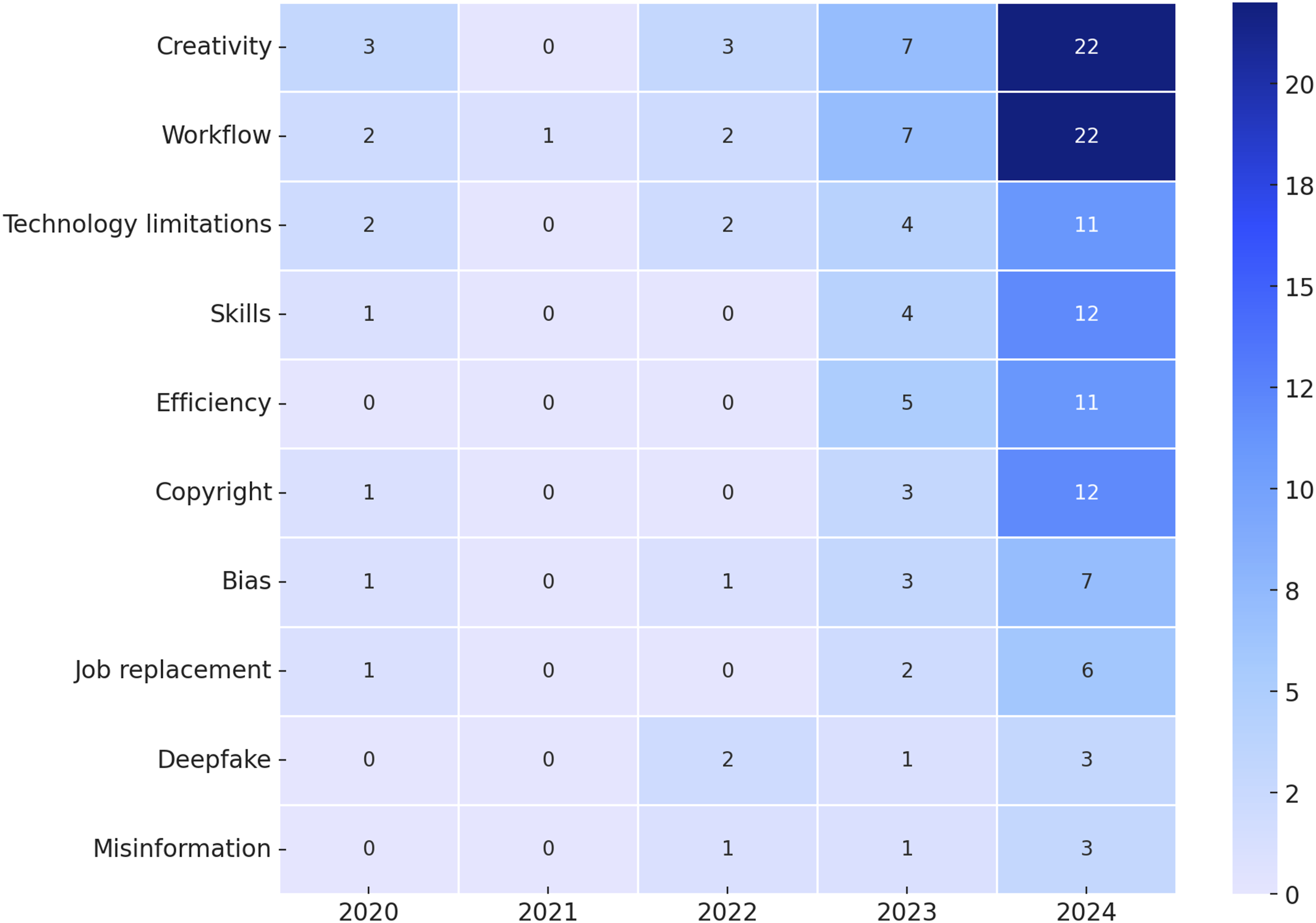

Building upon the analysis of current research themes, this section explores the research trends by focussing on specific keywords that capture the evolving discourse around the adoption and impact of generative AI in the screen and live performance industries. To provide deeper insights, this study identifies recurring terms in the literature, quantifies their frequency, and selects the top 10 keywords that represent critical dimensions of the field, as shown in Figure 11. Research trends (identified by the frequency of keywords).
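The keyword counting step described above can be illustrated with a minimal sketch. It is not the procedure used in this review; it assumes that each reviewed study has been coded as a publication year plus a list of keywords, and the function name `keyword_trends` and the three sample records are hypothetical placeholders rather than data from the analysed sample.

```python
from collections import Counter


def keyword_trends(papers, top_n=10):
    """Count how many papers mention each keyword, overall and per year.

    `papers` is an iterable of (year, keywords) pairs. Keywords are
    normalised to lower case and counted at most once per paper.
    """
    overall = Counter()
    per_year = {}
    for year, keywords in papers:
        unique = {k.strip().lower() for k in keywords}
        overall.update(unique)
        per_year.setdefault(year, Counter()).update(unique)

    # Keep the top_n most frequent keywords across all years.
    top = [kw for kw, _ in overall.most_common(top_n)]
    # For each retained keyword, list its yearly frequency (0 if absent).
    table = {
        kw: {year: per_year[year][kw] for year in sorted(per_year)}
        for kw in top
    }
    return top, table


# Hypothetical coded records, for illustration only.
papers = [
    (2020, ["Creativity", "Workflow"]),
    (2023, ["Creativity", "Copyright", "Bias"]),
    (2024, ["creativity", "Workflow", "Skills"]),
]
top, table = keyword_trends(papers, top_n=2)
print(top)
print(table["workflow"])
```

Counting each keyword once per paper, rather than once per occurrence, is a design choice in this sketch: it keeps a single long article from dominating the yearly totals.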

The general trend for the top 10 keywords from 2020 to 2024 shows an upward trajectory. ‘Creativity’ and ‘Workflow’ represent relatively established areas of research, demonstrating high frequencies across the years. In contrast, keywords such as ‘Technology limitations’, ‘Skills’, ‘Efficiency’, ‘Copyright’, and ‘Bias’ reflect emerging areas of focus. This shift shows an evolution in research priorities, transitioning from established topics to a broader engagement with the technological, practical, and ethical dimensions of generative AI integration.

In the current literature, ‘Creativity’ has been widely discussed. Generative AI’s capacity to produce novel content and provide inspiration challenges traditional concepts of creativity, positioning it not just as an assistant but increasingly as a co-creator, blurring the boundaries between human ingenuity and machine-generated innovation (Bhambri and Khang, 2024). This blurring raises academic discussions on whether generative AI enhances, diminishes, or redefines creativity, and whether creative industries prioritise innovation over the art of creativity (Zhou and Lee, 2024).

‘Workflow’ is another key area of research. Through this and related keywords such as ‘Efficiency’, ‘Skills’, and ‘Job replacement’, the literature examines the disruptive impact of generative AI on work dynamics, exploring how these tools streamline workflows, empower productivity, and reshape traditional roles. Critical discussions also address challenges such as over-reliance on automation and emerging skill gaps, prompting questions about how to balance technological advancement with the preservation and enhancement of human expertise.

The fear of ‘Job replacement’ is evident in the literature, showing anxiety among creative workers. This concern is not unfounded, as the introduction of ChatGPT led to a 21% decrease in job postings on writing and coding while image-generating AI caused a 17% reduction in image-related job opportunities (Demirci et al., 2023). Given this evidence, it is unsurprising that some creatives exhibit resistance to generative AI tools due to concerns over job security and diminished job satisfaction. The necessity to acquire new technological skills to remain competitive may also impose an extra burden, potentially intensifying their reluctance (Einola and Khoreva, 2023).

With substantial attention on generative AI, the literature looks into its ‘Technology limitations’ and ethical issues, including ‘Copyright’, ‘Bias’, ‘Deepfake’, and ‘Misinformation’, rather than solely embracing the technology. Technology limitations may be resolved more readily as advancements lead to continual upgrades and maturation; however, ethical issues present greater complexity.

For example, the capability of generative AI to generate new content complicates copyright issues. Bias, embedded in training models, risks amplifying existing stereotypes, posing challenges to fairness and representation (Samuel et al., 2024). Deepfake and dis/misinformation challenge the reliability of information and undermine public trust in digital media content (Vaccari and Chadwick, 2020).

Although the studies touch on various aspects of generative AI’s role within the field, research gaps persist, underscoring the need for deeper exploration.

Research gaps

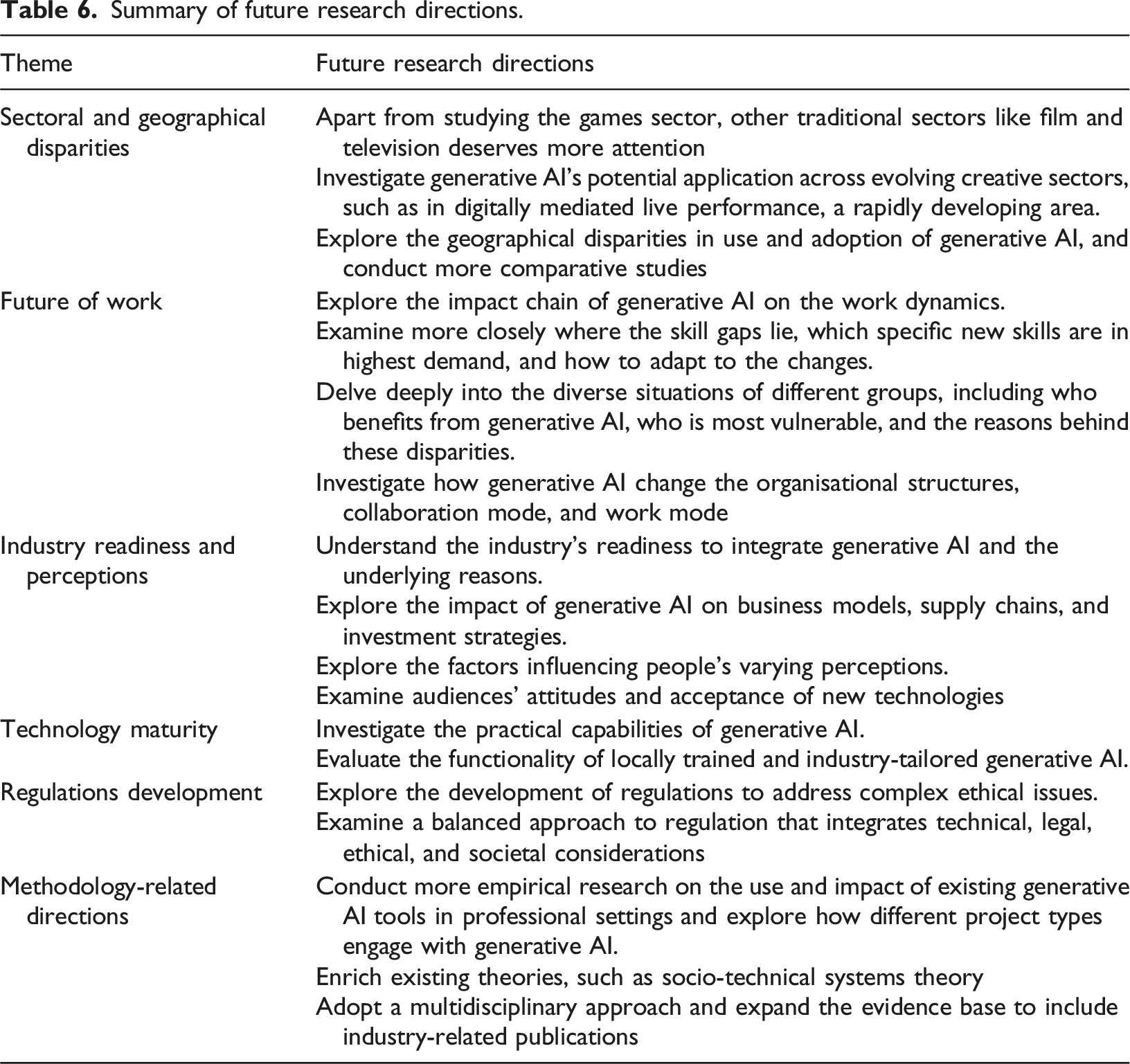

Summary of future research directions.

Sectoral and geographical disparities

The literature exhibits an unbalanced focus across sectors, with the gaming sector receiving substantial attention, while the television sector, despite its traditional importance in the screen industry, is surprisingly underexplored. In addition, there is a need for further investigation into generative AI’s application across evolving creative sectors, such as digitally mediated live performance, where new forms are rapidly emerging. Studies have primarily focused on co-present performance, yet there is a growing scope for AI in digital live experiences where performers and audiences do not share the same physical space (Auslander, 2022). ABBA Voyage, which connects audiences with digital avatars rather than physically present performers, exemplifies this shift and shows the potential for advanced technologies to contribute to such formats (Matthews and Nairn, 2023). Understanding how generative AI is, or can be, integrated into these evolving forms of live performance represents a promising area for future research.

Beyond sectoral differences, most of the studies in the analysed sample do not have a specific country perspective on the adoption of generative AI. While some studies do show a country context, they often describe different aspects of generative AI adoption and its impact, which makes direct comparisons difficult. Given that issues related to generative AI’s use and implications form a complex landscape, this gap underscores the need for future studies to investigate more country-specific experiences, best practices and challenges. Following this, comparative analyses can be conducted to understand how factors such as infrastructure, cultural attitudes, and regulatory frameworks influence the use of generative AI across different regions.

Future of work

Although many studies acknowledge the impact of generative AI on work dynamics, further research is necessary to explore the connection between AI-driven workflow changes and evolving skill requirements. There is a limited understanding of which skills will be in the greatest demand and how individuals and organisations will adapt to these new requirements.

Moreover, limited attention has been given to identifying who stands to benefit from these tools and who is most at risk of job displacement, with freelancers being particularly underrepresented in the discourse. Additionally, the broader implications of these changes, including their potential to reshape departmental structures and collaboration modes, remain unclear.

The development of solutions, such as targeted training programmes, remains unexplored. While this review excludes the application of generative AI in educational contexts, its potential to enhance training and skill development should not be overlooked and deserves more exploration.

Industry readiness and perceptions

The current body of research provides a limited evaluation of the industry’s readiness for adopting generative AI. This leaves gaps in understanding the capacity for integration and whether industry practitioners are in the early stages of adoption or scaling its use. The enablers of and barriers to adoption lack sufficient examination, as does the impact of generative AI on business models, supply chains, and investment strategies.

Future studies are also needed to explore the underlying reasons for practitioners’ mixed perceptions of generative AI, identify the factors that shape these views, and enhance communication among different stakeholders to bridge differing expectations in AI adoption.

In addition, audience attitudes and acceptance of generative AI should be examined more closely, as their willingness to engage with AI-generated content could influence the industry’s decision-making regarding using these technologies.

Technology maturity

Research has shown the limitations and ethical concerns associated with generative AI adoption; however, there remains a gap in understanding the capabilities of these technologies within the screen and live performance industries, as well as a lack of clarity about which tools and technologies are useful and which are not.

Further research is necessary to explore the development of locally trained, industry-specific models. By focussing on tailored solutions, future studies can provide more actionable insights into how generative AI technologies can be effectively integrated within these industries.

Regulations development

A critical research gap lies in the formulation of regulations that address the unique challenges posed by generative AI. For example, the emergence of new forms of copyright raises complex questions about how copyright issues should be understood and assessed. Efforts such as the EU AI Act and the UK AI Opportunities Action Plan represent significant steps toward addressing AI challenges.

Therefore, future research is essential to understand in more depth the implications of generative AI, provide robust academic evidence for policymakers, evaluate existing regulatory frameworks, and support the development of policies that balance ethical, legal, and societal considerations with the need to foster innovation.

Methodology-related directions

Although the reviewed studies are mainly empirical, further empirical research is needed on the use and impact of existing generative AI tools in professional contexts. Further research could also investigate how different project types, such as traditional, VR, and experimental films, engage with generative AI. Such investigations could further contribute to theoretical development, for example, by enriching socio-technical systems theory in relation to generative AI adoption in creative sectors.

Additionally, a multidisciplinary approach drawing from management, law, and the humanities would deepen the understanding of generative AI adoption and its implications. Future research could also benefit from expanding its scope beyond peer-reviewed articles to include more industry-related sources. This expansion would foster a more holistic understanding of market practices, providing insights for practitioners and policymakers on how advancements shape the industry and introduce new opportunities and challenges.

Conclusion

In conclusion, this study reviewed 98 peer-reviewed articles and conference papers, published between 2020 and 2024, on generative AI and its integration into the screen and live performance industries. The analysis revealed five themes: prototypes, real-world applications, impacts, technology limitations and ethical concerns, and attitudes. These themes were then synthesised into a conceptual framework to illustrate their interconnections. Furthermore, the study identified current research trends by analysing the frequency of keywords and provided potential directions for ongoing exploration in this field.

This research contributes to a deeper understanding of the current literature landscape surrounding generative AI in the targeted industries, but it has several limitations. First, the focus on literature published between 2020 and 2024 means that the findings are constrained to a specific timeframe. As generative AI is a rapidly evolving field, future research is likely to introduce new perspectives and findings that may necessitate updates to the conclusions drawn in this work. This evolution is occurring not only in AI development itself but also across creative sectors such as live performance, where new digital forms are emerging and opening up avenues for the intersection of generative AI and artistic expression. However, the studies analysed in this research primarily consider live performance within the traditional definition of shared time and space between performers and audiences, and thus this review may not fully account for these emerging developments.

Second, the analysis is based on sources retrieved from Scopus, Web of Science, and Google Scholar. While these databases are widely recognised for their academic rigour, the inclusion of additional databases, including industry-specific ones, could provide a more comprehensive understanding of the research landscape, capturing a wider range of studies and viewpoints.

Finally, this study is limited to works published in English, which excludes valuable research conducted in other languages. Expanding the scope to include non-English publications would offer diverse cultural and regional insights.

This work will continue, aiming to advance understanding of the intersection of generative AI and the screen and live performance industries, now that the low-maturity but fast-moving and promising nature of this area has been recognised.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Loughborough University, as part of the AHRC CoSTAR programme (AH/Y007433/1).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.