Abstract

This article examines the social technology company Meta’s public communication on problematic content, via their official ‘Meta Newsroom’, within the context of growing regulatory scrutiny. For nearly a decade, the Meta Newsroom has been a major outlet for Meta company announcements, and since 2016, the Newsroom has increasingly become a key source for company responses to concerns regarding mis/disinformation and other kinds of problematic content on Meta’s platforms. Using a mixed-methods approach informed by discourse analysis, this article critically examines Newsroom posts from 2016 to early 2021. It asks: how is Meta framing ‘problems’ on its platforms? How is Meta identifying ‘solutions’ to those problems? And is Meta ‘nudging’ policymakers in specific conceptual directions? Overall, we find that Meta is framing content moderation issues through four key frames – ‘authenticity’, ‘political advertising’, ‘technological solutions’, and ‘enforcement’ – that benefit Meta, as they shift responsibility while also demonstrating that Meta is an active and capable problem-solver.

Introduction

Since the early 2010s, the official Meta Newsroom has been an outlet for a variety of corporate announcements from Meta, the operator of Facebook, Instagram, and WhatsApp. However, following the 2016 US Presidential election, the Newsroom has also served as a key outlet for company responses to concerns about the spread of ‘fake news’, mis/disinformation, conspiracy theories, and other kinds of problematic content on Meta’s platforms (Kurtzleben, 2018). Some notable Newsroom announcements in this regard include the 2018 newsfeed algorithm change (Mosseri, 2018), aimed at ‘bringing people together’ by reducing news content in the Facebook newsfeed. Another was the decision to suspend former US President Donald Trump’s account following the Capitol siege on 6 January 2021 (Rosen, 2021). In this paper we examine how, as a major public relations outlet for one of the world’s largest social technology companies during a period of tumultuous social, political, and technological upheaval, the Newsroom has framed the problem of problematic online content on its platforms. We also discuss the implications of these frames for how publics and policymakers understand and tackle problematic content on platforms.

We critically examine Newsroom posts from 2016 through to early 2021, focussing specifically on a discourse-theory-oriented analysis of posts that address issues relating to problematic content – specifically, misinformation, disinformation, hate speech, and conspiracist content – on Meta platforms. Combining topic-modelling with close readings, we identify key themes and concepts from these posts. We ask: how is Meta framing problems on its platforms? How is Meta identifying solutions to those problems? And is Meta attempting to nudge public discussion of these problems in specific conceptual directions?

This article closes by discussing the implications of Meta’s discursive frames for the general public, and more specifically for mis- and disinformation policy, including voluntary co-regulations. In this regard we examine policy in regions which have had active regulatory developments in the past few years, such as the EU and Australia (the latter of which is our home country). Measuring the reach and impact of Newsroom frames is beyond the scope of our study, and we do not claim that the Newsroom is determining public discussion or policy development. Instead, we note that Meta could be attempting to frame discourse around problematic content on its platforms in ways that suit the company’s self-interest. Most clearly, we find that the Meta Newsroom has framed problematic content as emerging from ‘outside’ actors misusing Meta platforms, while simultaneously communicating that Meta is competently detecting and removing such content. With regard to policy, we identify some thematic alignments between regulatory priorities and Newsroom posts, especially around concerns about ‘inauthenticity’ and ‘political advertising’.

Background

Company-run ‘newsrooms’ are not unusual. Other major social media companies – such as X, formerly Twitter (nd) and TikTok (nd) – also operate blog-like newsrooms to highlight community events, announce changes to their platforms or new features, and respond to controversies. These newsrooms function as public relations platforms: they produce a self-image beneficial to the company and strategically manage public relationships. Simultaneously, they attempt to limit the range of topics circulating around specific issues of interest, by shaping and controlling the discursive fields. In other words, what gets publicly discussed – and how – is shaped by the newsrooms. Over the past 15 years, these blog-like newsrooms have been part of a widespread transformation of traditional public relations tools, such as press releases, for the interactive and social Web (Wang et al., 2021). These newsrooms even have their own terminology in public relations research: ‘social media newsrooms’ (Zerfass and Schramm, 2014).

Therefore, it is not in itself significant that Meta has a public relations-oriented digital newsroom. However, over the past decade, as Facebook and other Meta-owned social media platforms have grown immensely in popularity and profitability, the Meta Newsroom has become a key source of company announcements that impact billions of users. Significant announcements here include changes to Facebook’s newsfeed algorithms: for example, algorithm changes in 2014 (El-Arini and Tang, 2014), 2016 (Peysakhovich and Hendrix, 2016), and 2017 (Babu et al., 2017) that addressed the prevalence of ‘clickbait’ in the newsfeed, which was generating significant negative attention during these years. In 2018, the aforementioned major algorithm change, which shifted the newsfeed away from news content and towards ‘meaningful’ posts from ‘friends and family’, was also announced via the Newsroom (Mosseri, 2018). Many news outlets suffered a significant decline in audience engagement following this algorithm change (Grygiel, 2019).

Moreover, since 2016 the Newsroom has played a significant role in Meta’s struggle with content moderation. As mentioned above, in late 2016 there were reports that the US Presidential election result that year had been influenced by ‘fake news’ proliferating on Facebook (Allcott and Gentzkow, 2017), as well as disinformation operations backed by other states (Broderick, 2019). Furthermore, Facebook was increasingly under scrutiny for allegedly enabling the growth of hyperpartisan news outlets such as Breitbart and InfoWars (Warzel, 2019). These concerns were raised again during and following the 2020 US Presidential election, and throughout the ongoing COVID-19 pandemic, due to Facebook’s role in amplifying misinformation and conspiracist content about the virus (Gallagher, 2021). These growing concerns have also prompted increased regulatory scrutiny from the EU, Australia, the US, and other countries (Meese and Hurcombe, 2020), as governments over the past half-decade have begun to consider or implement laws and regulations that attempt to clamp down on problematic content on platforms.

The Newsroom has been a prolific channel through which Meta has attempted to demonstrate that it takes these content moderation issues seriously. Since 2018 the Newsroom has released semi-regular reports on actions taken against ‘coordinated inauthentic behaviour’, a term Meta has adopted to describe coordinated information operations on their platforms, 1 and Meta has used the Newsroom to release its reports on community standards enforcement (Rosen, 2021). Major content moderation decisions have also been announced and justified within the Newsroom. During the 2020 election, this included the decision to ban groups and pages that celebrated violence, or which constituted a ‘militarised social movement’ (Meta, 2020c). Prominent conspiracist movements, such as the pro-Trump ‘QAnon’ movement, were included within this category. More significantly, the unprecedented decision to suspend the former US President on all Meta platforms was also officially announced and explained via the Meta Newsroom (Rosen and Bickert, 2021). Issues around news quality have also been addressed through the Newsroom: for instance, in August 2020 Meta announced it was adding ‘additional context’ to COVID-19-related news content (Hegerman, 2020).

The Newsroom is not the only communicative tool at Meta’s disposal, though. Other channels include official social media accounts, such as Mark Zuckerberg’s Facebook page. Meta has also responded to content moderation issues by partnering with factchecking and news organisations Snopes and the Associated Press, amongst others (Ananny, 2018), and by offering grants to academics researching mis- and disinformation (Meta, nd). Famously, it has even appeared in front of governments to answer thorny questions about democracy and ‘free speech’ (Watson, 2018).

In this respect, there have been many avenues through which Meta has responded to content moderation issues. However, over the past decade, the Meta Newsroom has consistently played a prominent role as a mouthpiece for the company. Certainly, this is how the Newsroom is treated by news outlets, who often report on Meta announcements (e.g. Hern, 2018). These news reports on Meta Newsroom announcements also work to amplify Newsroom content beyond audiences who actively follow the Newsroom website. Furthermore, favoured terminology from the Meta Newsroom – such as ‘coordinated inauthentic behaviour’ (Gleicher, 2018) – has also been popularised outside of official Meta communications. For instance, this term has since been picked up by academic researchers (Giglietto et al., 2019) as well as US government representatives (Douek, 2020). This term also appears in the 2022 ‘strengthened’ EU Code of Practice on Disinformation (CPD), and a variation (‘inauthentic behaviours’) is a keyword in the glossary of Australia’s current DIGI Code of Practice on Disinformation and Misinformation (CoP). This is despite Meta’s preferred term having been criticised for focussing on the vague concept of ‘inauthenticity’ (Douek, 2020).

This all suggests that the Newsroom, by framing these issues strategically, may be attempting to nudge policymakers, as well as journalists, researchers, and users, in certain directions when it comes to understanding and tackling problematic content on Meta’s platforms. At the same time, the Newsroom could be working to exclude and limit possible alternative discourses around issues faced by Meta. For instance, the Meta Newsroom, by emphasising ‘coordinated inauthentic behaviour’, may be trying to direct public attention towards instances of ‘fake’ and/or automated malicious activity – for example, bots and state-backed information operations – over examples of problematic and yet ‘organic’ behaviour (e.g. misinformation spread by ordinary users). Some scholars have noted how, following the introduction of ‘coordinated inauthentic behaviour’ as official Meta terminology, Meta started to shift focus to ‘individual bad actors’ (such as Russian troll farms) in its public communication regarding mis- and disinformation (Acker and Donovan, 2019).

However, so far little scholarly attention has been paid to the Meta Newsroom or similar tech company PR outlets. Some studies have used Newsroom articles as examples of how Meta demonstrates a form of ‘enhanced self-regulation’ (Medzini, 2022), and others have examined Newsroom posts in the context of Meta CEO Mark Zuckerberg’s ‘future imaginaries’ (Haupt, 2021). Scholars have also examined how Meta’s ‘elite discourse’ circulates in news outlets (Lucia et al., 2023), as well as how it is constructed in Mark Zuckerberg’s own utterances in a variety of media (Hoffmann et al., 2018). Yet, the discursive role of the Meta Newsroom itself has not been studied in detail. In this article, by combining discourse analysis with a quantitative computational approach, we begin to fill this gap by focussing on the discursive character of Newsroom content.

Theoretical framework

The mixed-methods approach taken in this study is theoretically informed by discourse analytical approaches, particularly Laclau and Mouffe’s (1985) discourse theory (Discourse Theory), and insights from critical discourse studies (CDS), specifically, the discursive strategies identified within the Discourse-Historical Approach to CDS (Reisigl and Ruth, 2005; Reisigl and Wodak, 2016). It is not possible to discuss the complex theory of discourse put forward by Laclau and Mouffe in full in this article. Therefore, what follows will aim to briefly introduce the core aspect of the theory, and what implications it has for this research. Discourse Theory argues that discourses – networks of meaning – are formed around central signifiers (i.e. nodal points). These nodal points, though, do not necessarily have fixed meanings, and are in a contingent state, with different discourses competing to ascribe their own meaning to them. In other words, they are ‘overflowed with meaning’ (Torfing, 1999: 301). One such example, as can be seen later in this article, is the signifier ‘authenticity’ and how it is used by Meta. In this way, any meaning attached to a signifier potentially excludes all other possible meanings. In turn, any discursive network formed around these central signifiers is a totality of meaning that excludes all other possibilities from the formation. These possible meanings, therefore, inhabit a space outside of a discourse, known as the ‘field of discursivity’ (Laclau and Mouffe, 1985: 112).

It is here where one can observe the role of framing by various actors in the formation and maintenance of discourses. Through strategic and tactical framing, actors aiming to hegemonise their discourse can keep certain signifiers outside the discursive formation, mainly via foregrounding of agendas, themes, topics, and signifiers that they favour. In this article, we trace this process through an examination of the nodal points in the discursive formations set by Meta’s Newsroom. We observe Meta’s active and strategic shaping of the network of meaning formed around the problems faced by and on the platform, and the solutions to them. By actively framing the narrative, particularly through intensification and mitigation, or foregrounding and backgrounding strategies (Reisigl and Wodak, 2016), the Meta Newsroom emphasises and foregrounds certain signifiers as the nodal points of the discourse. This ‘foregrounding’ strategy, in turn, also simultaneously plays the role of a ‘mitigation’ strategy (ibid.) of all the other problems faced by Meta. That is, the Meta Newsroom strategically avoids discussing certain signifiers and issues, and keeps them outside the network of meaning, effectively positioning them within the field of discursivity.

The methodological process in this study aims to deconstruct this meaning-making by the Meta Newsroom through a large-scale computationally aided analysis of words, themes, and signifiers foregrounded by Meta. Research within this space, such as Baker et al. (2008), Baker (2012), Törnberg and Törnberg (2016), and Wiedemann (2013), has shown the usefulness and rigour of combining computational approaches with discourse-oriented ones. We then compare and contextualise these insights and show which signifiers are within Meta’s discourses, and which others are strategically kept outside.

Approach

We manually collected 181 Newsroom posts 2 between January 2016 and February 2021 relating to problematic content issues on Meta’s platforms. This period was selected as it covers a time in which problematic content on Meta’s platforms became an issue of increasing public and regulatory concern. Different terms for conceiving the content moderation ‘problem’ fluctuated during this period; however, we were interested in what keywords were most prominent over time. We did not account for geographic patterns in this data. However, as an English-language outlet for a US company, there tended to be a US-centric bent to Newsroom output, although in the latter half of our time period Meta did regularly release content moderation reports on specific non-US countries. Within this dataset, some posts were attributed to authors while others were not. We chose not to consider authorship as our focus was on the discursive role of the Newsroom as a company outlet. The 181 posts were manually divided (coded) into specific issue categories: ‘disinformation’ (71 posts), ‘inciting violence’ (31), ‘news quality’ (26), ‘election integrity’ (25), ‘general community standards’ (15), ‘hate speech’ (4), ‘transparency’ (5), and ‘content quality’ (4). To identify the key concepts and assist in our analysis of these issue categories, the text from all posts was collated and analysed using Leximancer.

Leximancer (Smith and Humphreys, 2006) is a popular visual text analytic technique that has been widely used to support mixed-methods content analysis. Leximancer uses word occurrence and co-occurrence statistics through a Bayes-inspired algorithmic process to model major conceptual content from an input text corpus, outputting metrics and visual concept maps. Bayesian statistical models, like those employed by Leximancer, build probabilistic models of belief in the likelihood of an event, in our case specific words repeatedly appearing in proximity to one another. The repeated proximity of these words implies their conceptual ‘connectedness’.
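As a rough illustration only, the kind of occurrence and co-occurrence statistics described above can be sketched in a few lines of Python. This is not Leximancer’s actual algorithm: the function names, the use of whole text blocks as the unit of proximity, and the simple conditional-probability estimate are all assumptions made for the sake of the example.

```python
import re
from collections import Counter
from itertools import combinations


def cooccurrence_stats(blocks):
    """Count how many text blocks each word, and each pair of words, appears in.

    `blocks` is a list of short text segments (e.g. sentences). Treating a
    block as the unit of 'proximity' is an assumption of this sketch.
    """
    word_counts = Counter()
    pair_counts = Counter()
    for block in blocks:
        # Unique lower-cased words in this block, sorted so that each pair
        # is always stored in the same (alphabetical) order.
        words = sorted(set(re.findall(r"[a-z]+", block.lower())))
        word_counts.update(words)
        pair_counts.update(combinations(words, 2))
    return word_counts, pair_counts


def conditional_prob(a, b, word_counts, pair_counts):
    """Estimate P(b appears in a block | a appears in that block)."""
    if word_counts[a] == 0:
        return 0.0
    pair = tuple(sorted((a, b)))
    return pair_counts[pair] / word_counts[a]


# Hypothetical toy corpus, not drawn from the Newsroom dataset.
toy_blocks = [
    "fake accounts removed",
    "fake accounts detected",
    "political ads transparency",
]
wc, pc = cooccurrence_stats(toy_blocks)
print(conditional_prob("fake", "accounts", wc, pc))
```

Word pairs with a high estimated conditional probability are those that repeatedly appear together, which is the intuition behind treating proximity as a signal of conceptual ‘connectedness’.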

Leximancer’s concept modelling process is grounded wholly in the statistics of the input corpus that is the subject of the analysis. No external corpora or dictionaries are used in building this language model. A concept in the Leximancer-sense is a unique set of word–weight pairings where these weights provide more or less evidence for the presence of the concept in a piece of text. The interactive visual interface provided by Leximancer provides a trained analyst with a useful mechanism to survey the emergent concepts at a whole, or sub-corpus level, while also delving into specific concepts to reflexively determine their grounded meaning via key text passages from the input corpus.
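The idea of a concept as a set of word–weight pairings can be illustrated with a minimal sketch. The weights, concept names, threshold, and function names below are hypothetical and chosen purely for illustration; Leximancer learns its own weights from the corpus and its scoring procedure may differ.

```python
import re


def concept_evidence(text, concept_weights):
    """Sum the weights of the concept's words that are present in the text."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return sum(weight for term, weight in concept_weights.items() if term in words)


def tag_concepts(text, concepts, threshold=1.0):
    """Return the names of concepts whose accumulated evidence meets the threshold."""
    return [
        name
        for name, weights in concepts.items()
        if concept_evidence(text, weights) >= threshold
    ]


# Hypothetical word-weight pairings; positive weights add evidence for a
# concept, and a negative weight would count against it.
example_concepts = {
    "inauthenticity": {"fake": 0.9, "inauthentic": 1.2, "accounts": 0.3},
    "advertising": {"ads": 1.1, "advertisers": 0.8},
}
print(tag_concepts("We removed fake accounts.", example_concepts))
```

In this sketch, a passage is tagged with a concept only when enough weighted evidence accumulates, which mirrors the description of weights providing ‘more or less evidence’ for a concept’s presence in a piece of text.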

Leximancer is ideal for studies of discourse due to its ability to provide an automated and reliable means of identifying key themes and concepts within large text datasets, and the aforementioned methodology has been utilised in similar studies, including: critical discourse analysis of media representations of digital-free tourism (Li et al., 2018); Australian media representations of asylum seekers (Hebbani and Angus, 2016); and, in understanding the discursive processes of mediatisation such as in the case of the 2011 Ed ‘Miliband loop’ (Rintel et al., 2016). This is because the clusters of words and concepts provided by Leximancer can be used as ‘windows’ to prominent discursive formations in the underlying texts.

We applied Leximancer in two stages. First, we inductively interrogated the emergent high-level clusters of concepts that Leximancer identified across the entire corpus, supplemented by a qualitative examination of the texts themselves, to identify major discursive formations – or themes – across our Newsroom dataset. This first analysis was performed without reference to any of the eight manually pre-identified issue categories; instead, the number of themes was determined automatically by Leximancer using default settings that balance thematic generality and specificity. This inductive process was then complemented by a second, directed examination of the high-level clusters of concepts identified as being highly prominent within each of the eight manually identified issue categories, six of which are displayed in the Appendix. This directed analysis still relies on the same base conceptual statistics generated by Leximancer in the first analysis; however, it uses the pre-determined issue categories to aggregate these conceptual statistics for each of the eight categories.

Findings

Overall, we find that there are four consistent themes within the Meta Newsroom’s commentary on issues related to content moderation. We have identified these distinct but related themes as ‘Inauthenticity’, ‘Political advertising’, ‘Technological solutions’, and ‘Enforcement’. The four themes constitute the nodal points around which Meta Newsroom’s discourse of problematic content is formed.

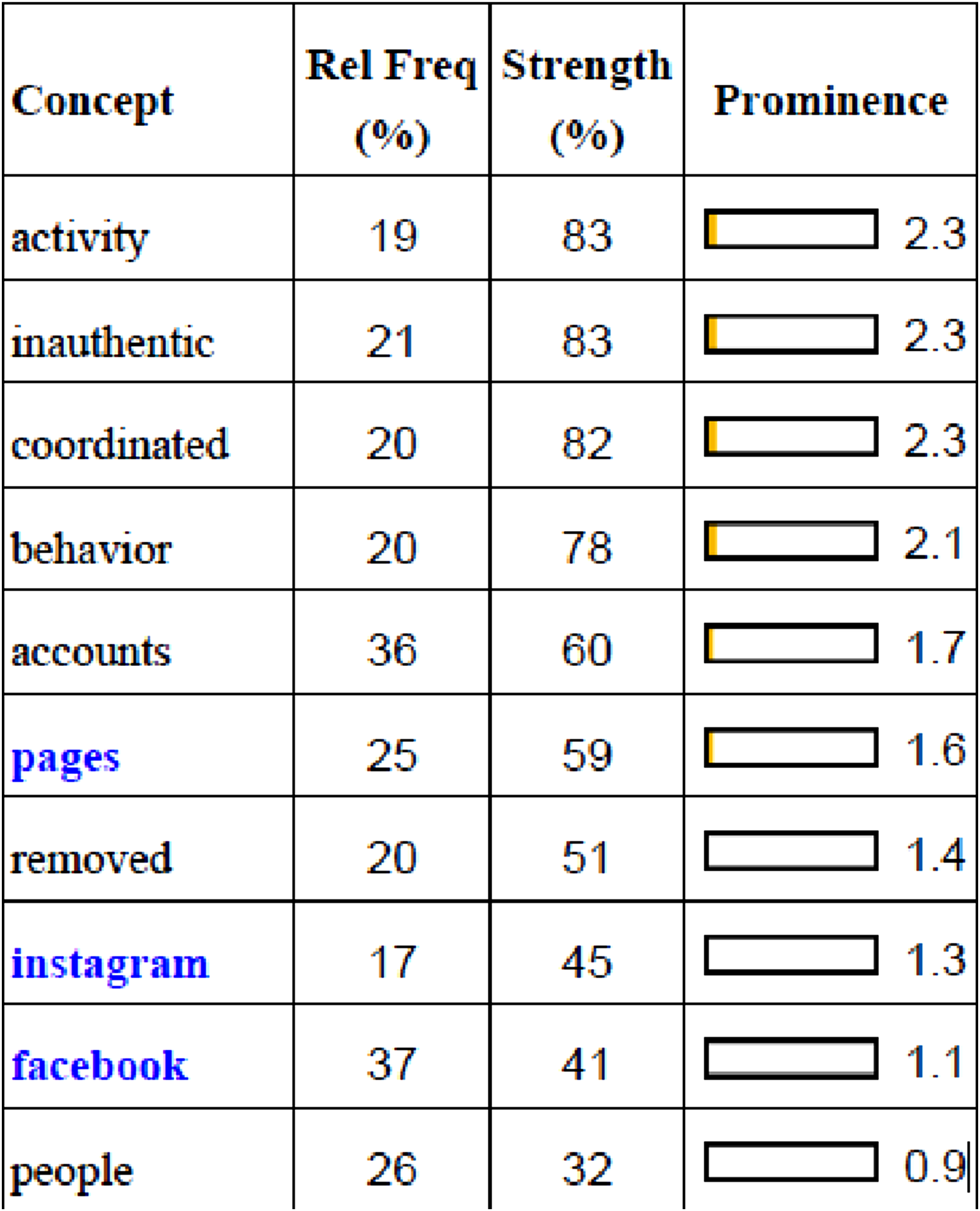

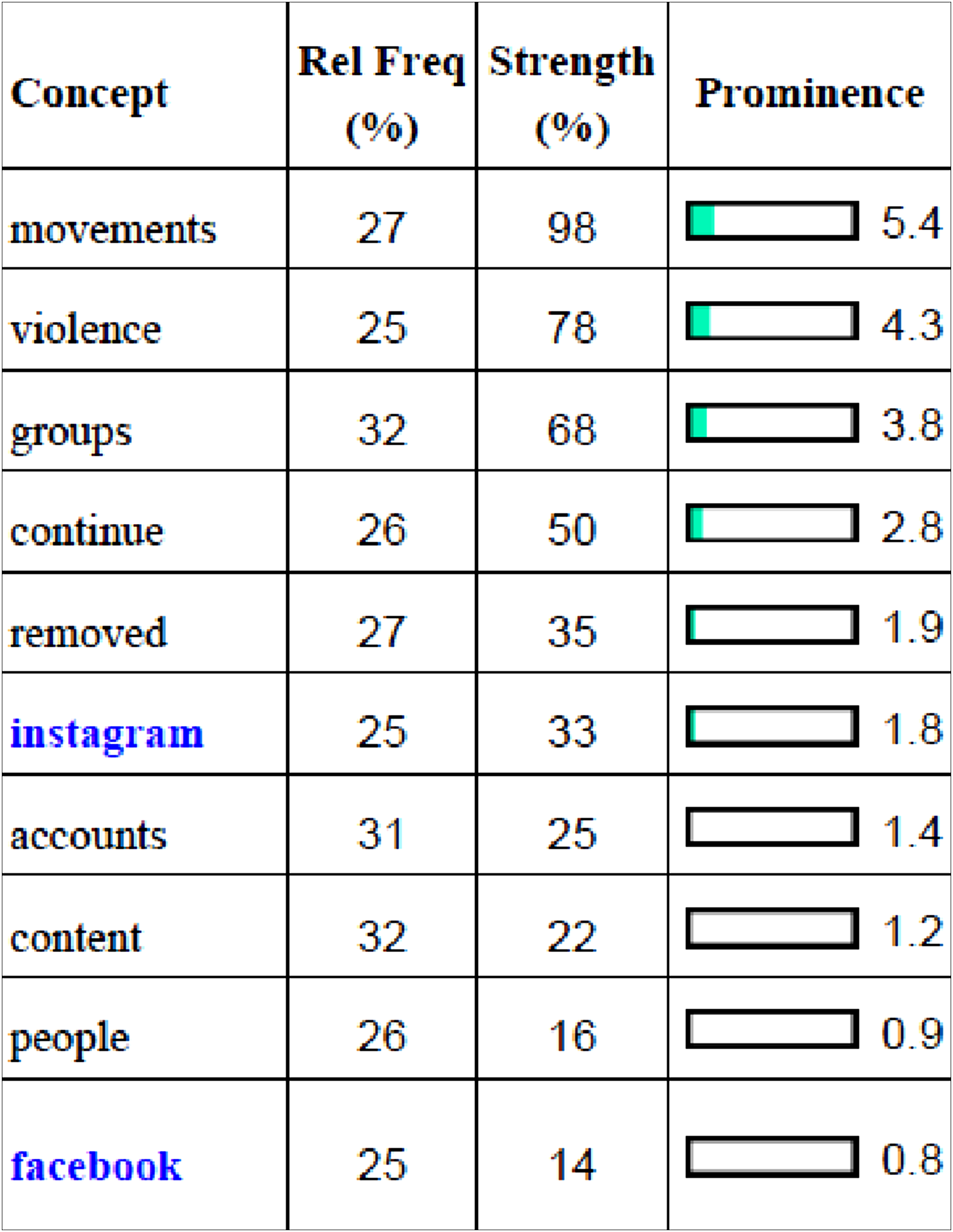

Inauthenticity is the problem

A recurring theme within the Newsroom is ‘inauthenticity’, whether this takes the form of ‘inauthentic’ accounts and user behaviour, or deceptive advertising. Most clearly, this can be seen in the dominance of the term ‘coordinated inauthentic behavior (CIB)’ in Newsroom posts relating to disinformation (see Figure 1). The frequency of this term within the Newsroom’s posts can be explained by Meta’s semi-regular reports into CIB, which it has been releasing since 2018. These reports typically share insights into Meta’s detection and removal of various disinformation networks operating on the company’s platforms. The prominence of CIB in these reports, and of ‘inauthenticity’ and ‘fake’ as keywords in disinformation-related Newsroom posts overall, indicates the Newsroom’s emphasis on deceptive outside actors as sources of disinformation. The Newsroom’s CIB reports, for instance, note that CIB operations tend to ‘mislead people about who they are and what they are doing while relying on fake accounts’ (Meta, 2020b). Different forms of ‘deceptive’ behaviour, as Meta put it (Gleicher, 2020), have also been the subject of Meta’s Newsroom reports: for instance, Meta distinguishes unsophisticated ‘inauthentic behaviour’ from CIB, using the former to describe ‘much less sophisticated’ and often ‘financially motivated’ behaviours like spam and ‘fake engagement’ (Meta, 2020a). Meta has likened this more ‘financially motivated’ form of inauthentic behaviour to spam or clickbait. This emphasis on combatting ‘inauthenticity’ is also linked to quality control in these posts. Meta states in a report on Iranian state-backed coordinated networks, for instance, that they ‘are constantly working to detect and stop this type of activity because we don’t want our services to be used to manipulate people’ (Gleicher, 2019).

We can also see concerns about ‘inauthenticity’ in Newsroom posts about highly engaged Facebook pages and profiles. For example, a May 2020 Newsroom post reports on Meta’s efforts to verify the identity of profiles whose posts ‘start to go rapidly viral in the US’, and which have a ‘pattern of inauthentic behavior on Facebook’ (Joseph and Paselli, 2020). These efforts build on an earlier requirement for pages with large audiences to undergo ID verification (Joseph and Paselli, 2020). Meta explained these new efforts by stressing the value the company places on authenticity: ‘we want to ensure the content you see on Facebook is authentic and comes from real people’, the May 2020 post stated, ‘not bots or others trying to conceal their identity’ (Joseph and Paselli, 2020). Other kinds of ‘inauthentic’ practices Meta has discussed include ‘manipulated media’ such as ‘deepfakes’ – the use of artificial intelligence techniques to create videos that ‘distort reality’ (Bickert, 2020) – as well as ‘deceptive’ forms of advertising (Goldman, 2017).

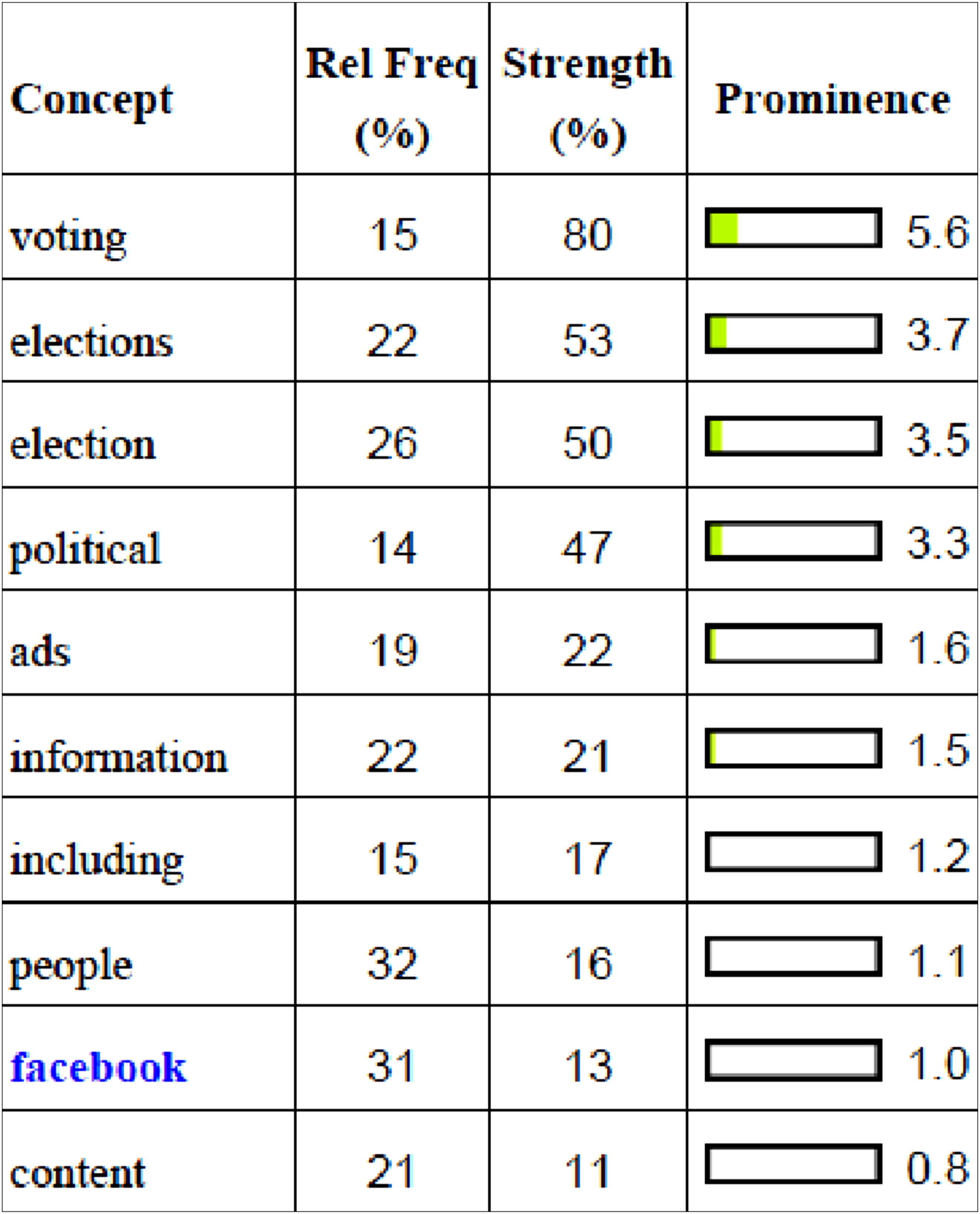

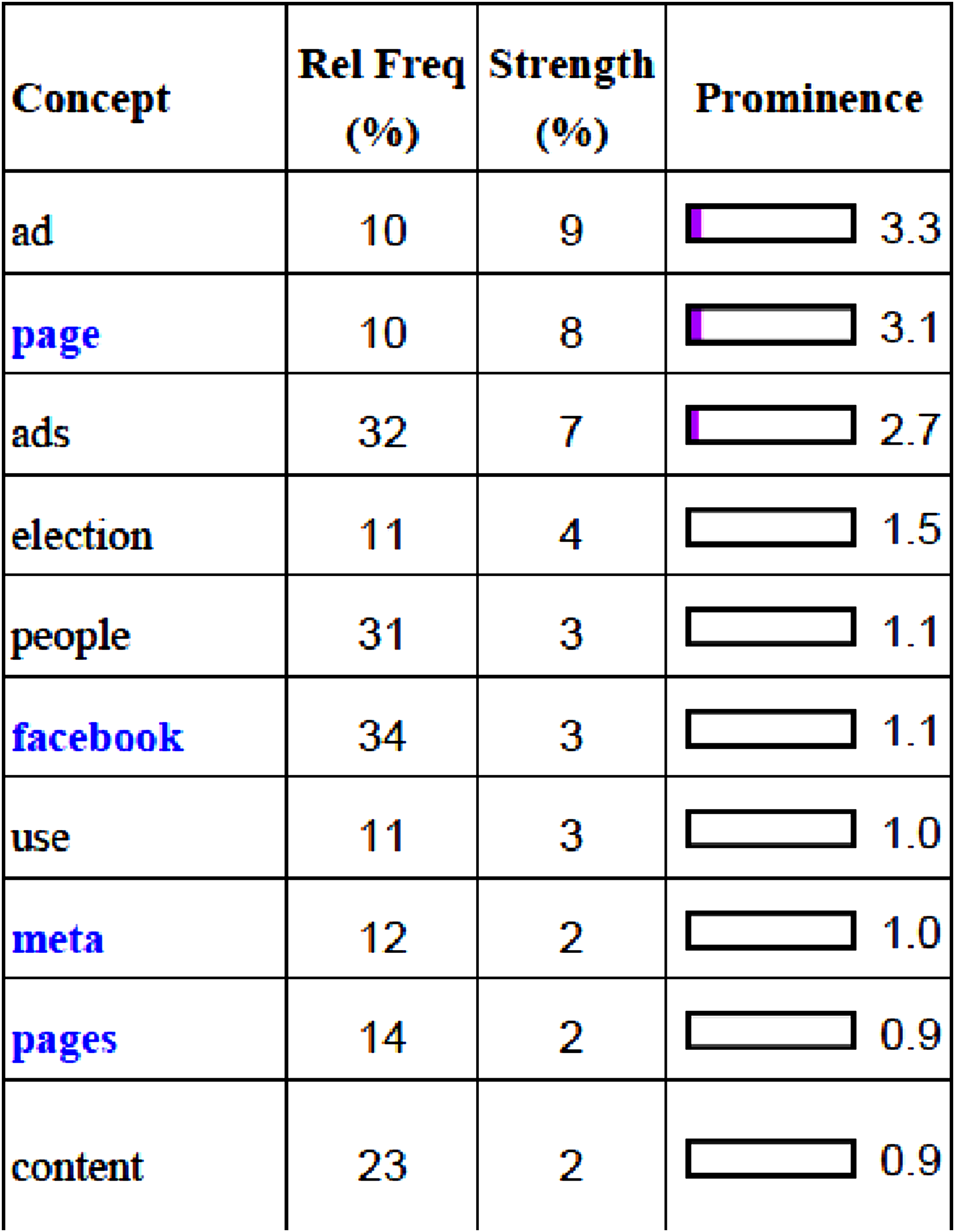

Making political advertising accountable

A second major theme in Meta’s Newsroom is political advertising. Meta often reports on efforts to make political advertising on its platforms more transparent, by providing information about who is running and paying for such advertising. This is especially seen in posts relating to election integrity (see Figure 2). In these posts, ‘ads’ was a major keyword. For instance, prior to the 2020 US Presidential election, Meta had announced numerous measures to increase ‘transparency’ around political advertising (Rosen, 2020b). Early on in the campaign, this included adding labels to ads run by ‘state-controlled media’, establishing a presidential candidate ad ‘spend tracker’, and requiring advertisers running ‘issue, electoral, or political ads’ in the US to assign a Page Owner (Rosen et al., 2020). Later, Meta announced that they would be temporarily suspending all issue, electoral and political ads in the US after the polls close on November 3, ‘to reduce opportunities for confusion or abuse’ (Rosen, 2020b), and in September, Zuckerberg announced that Meta would not be accepting new political ads in the week before the election (Zuckerberg, 2020). That same month, the Newsroom also announced that Meta would be banning ads that ‘praise, support, or represent’ militarised movements and the pro-Trump conspiracist group QAnon (Meta, 2020c). Outside of the US, Meta has also made announcements about ad transparency measures, such as for the 2019 EU parliamentary elections. These measures have included the requirement for EU advertisers to submit documents and use ‘technical checks to confirm their identity and location’, as well as requiring all political and issue ads in the EU on Facebook and Instagram to have ‘paid for by’ disclosure labels (Website). Indeed, ‘election’ was a major keyword in posts relating to ‘transparency’ issues overall (see Figure 6).

The launch of the Ad Library – previously called Ads Archive – in 2018 also indicates Meta’s emphasis on political advertising. The service launched by focussing entirely on ads related to political issues, although in 2019 this expanded to non-political ads (Shukla, 2019). Still, the Library continues to prioritise political advertising by providing richer targeting information on this specific advertising category, storing inactive political ads (and only these ads), and only allowing search functions for non-political ad categories while those ads are still active on the platform (Angus et al., 2024). Meta’s Newsroom also continued to make announcements about updating the Ad Library to improve electoral integrity: for instance, ahead of the 2019 EU parliamentary elections, Meta announced that the Ad Library would now show more detailed information about Pages running ads in the Library (Website). As with posts relating to CIB, Newsroom posts announcing updates to the Ad Library have been framed around product quality and service delivery: for instance, a 2020 update is described as a way to ‘help journalists, lawmakers, researchers and others learn more about the ads they see’ (Rosen et al., 2020).

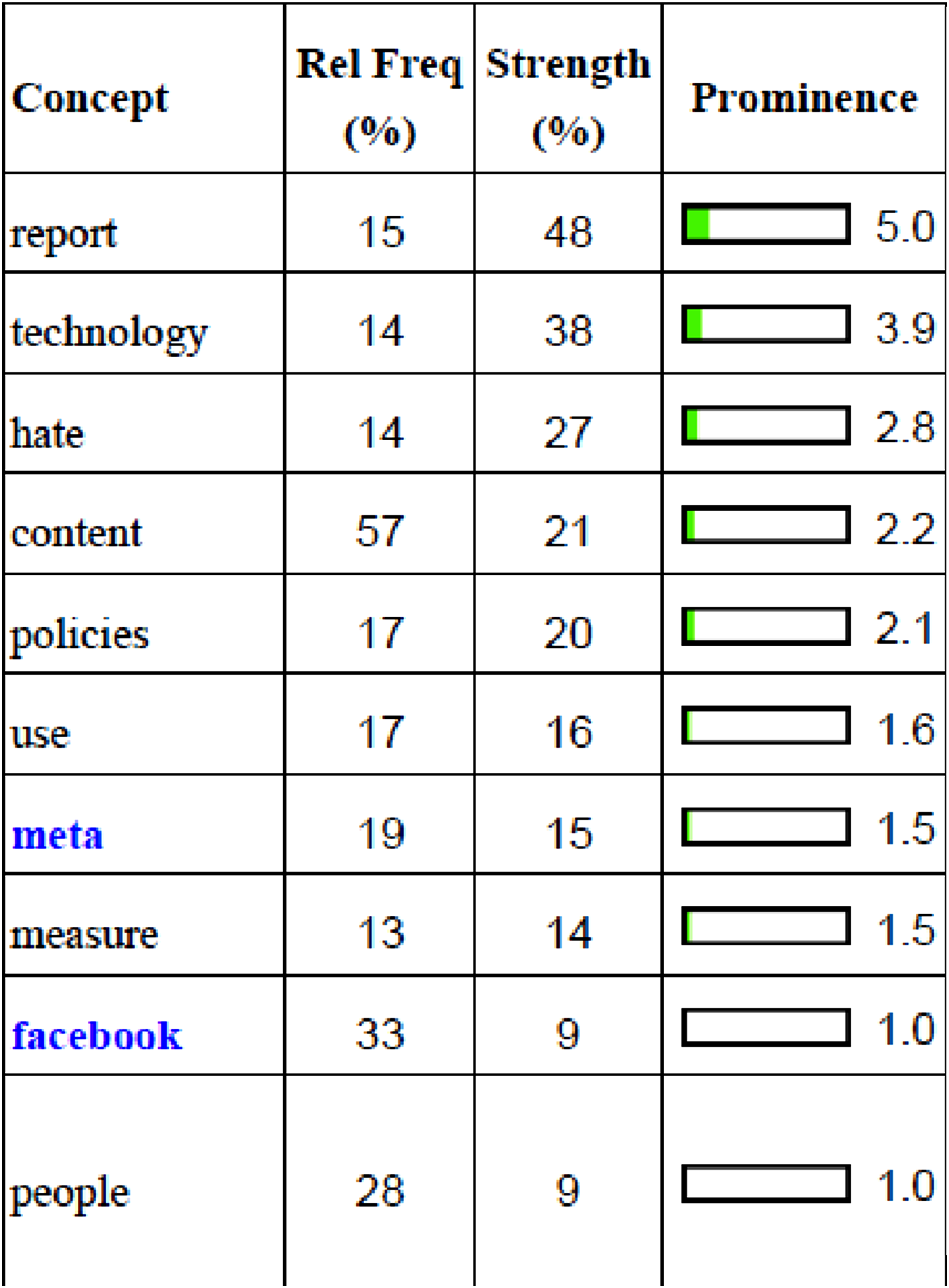

Meta has the technological solutions

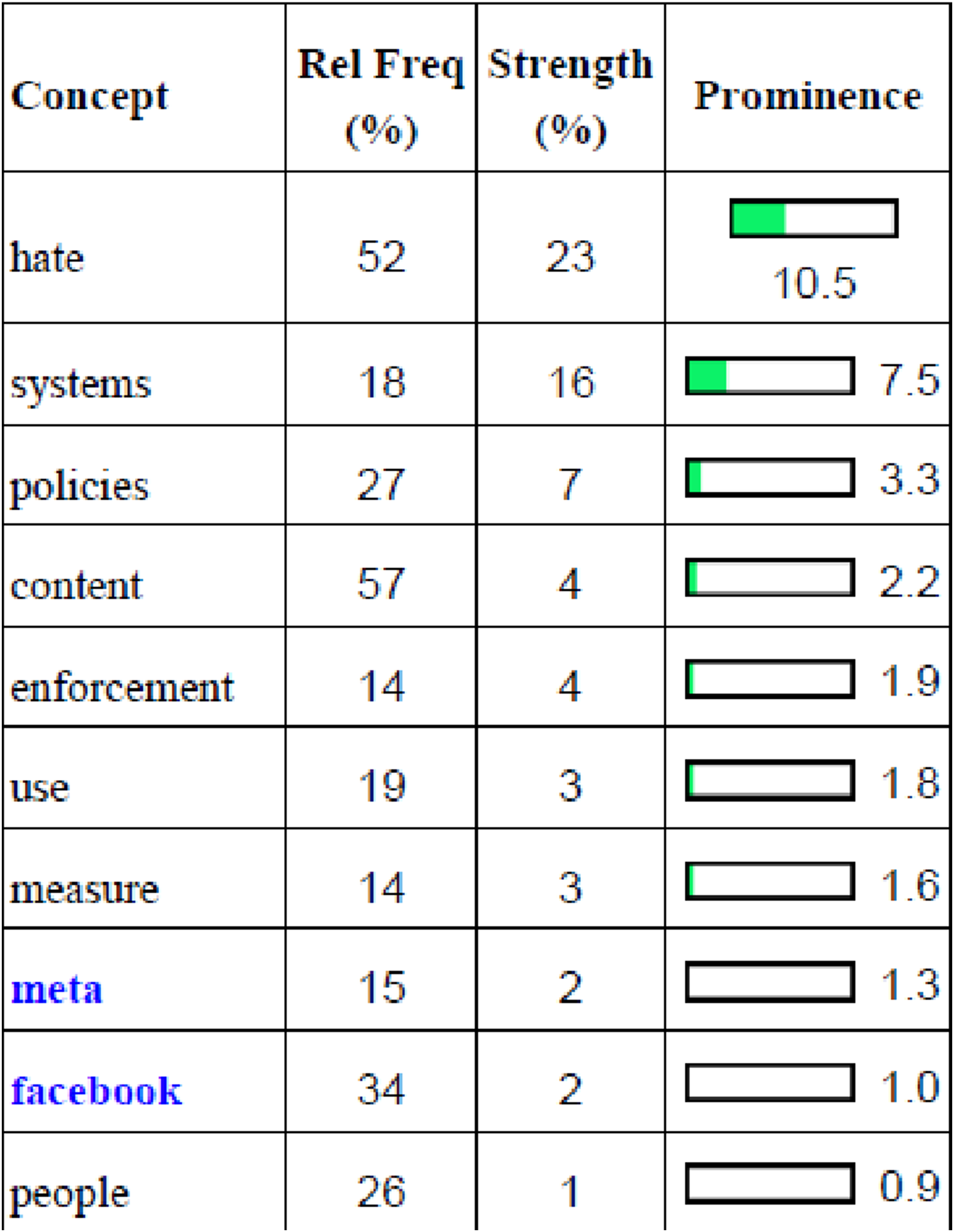

A third major theme in the Meta Newsroom is ‘technological solutions’. Meta, for instance, states that by ‘building better technology’ the company demonstrates how ‘committed’ they are ‘to continually improving to stay ahead’ of complex content moderation problems (Meta, 2020d). Meta therefore often promotes its own technology as a solution to social problems on its platforms, especially regarding issues relating to community standards (see Figure 3) and hate speech (see Figure 4). For posts about community standards on Meta’s platforms, ‘technology’ was a major keyword. For instance, some Newsroom community standards reports detail Meta developing technology to ‘proactively’ or ‘automatically detect’ and remove content that violated their standards (Rosen, 2020a). Others report on how Meta has ‘expanded’ or ‘improved’ upon existing technology to do those same tasks, as in an August 2020 ‘community standards enforcement report’ that describes technological improvements ‘to take more action’ on problematic content during the COVID-19 pandemic (Rosen, 2020b). Earlier ‘community standards’ reports in 2018 and 2019 also promoted using technology developed by Meta to ‘proactively’ detect and remove ‘violating content’ (Rosen, 2018a, 2019b). In both these reports, Meta reports on an increase in ‘proactive’ detection, and attributes this to ‘continued improvements in our technology’ (Rosen, 2018a). Beyond these reports, the Meta Newsroom has also made other announcements about new content moderation technology. These include a 2018 announcement about using machine-learning technology to identify and remove child exploitation material (Davis, 2018), as well as another 2018 post about new artificial intelligence technology to help remove larger amounts of problematic content ‘faster’ (Rosen, 2018b).

Posts relating to hate speech on Meta’s platforms similarly promoted technological solutions. As with posts relating to general community standards, these posts often promoted the development of artificial intelligence tools to ‘proactively’ detect and remove ‘hate speech at scale’ (Kantor, 2020). The centrality of artificial intelligence tools to Meta’s content moderation is reflected in the growing percentage of hate speech content that these tools reportedly ‘proactively’ detect. In 2019, Meta reported that AI was proactively detecting ‘65%’ of content it ultimately removed – ‘up from 24%’ the year before (Rosen, 2019a). In 2020, Meta reported that they were using AI even more, with these tools detecting ‘95%’ of hate speech content that was removed (Kantor, 2020). Meta has also boasted about their ongoing improvements in AI tools, such as the growing ability of its tools to recognise the highly ‘contextual’ nature of hate speech when reviewing posts (Kantor, 2020).

Enforcing policies

The last major theme in Meta Newsroom posts is the enforcement of the company’s content moderation policies. This can be seen in how keywords relating to enforcement – such as ‘removed’, ‘policies’, ‘systems’, and ‘enforcement’ itself – are prominent in posts about disinformation (Figure 1), general community standards (Figure 3), hate speech (Figure 4), and inciting violence (Figure 5). Where the theme of enforcement comes through most strongly, however, is in the regular publication of the coordinated inauthentic behaviour and community standards reports discussed above. These reports not only promote certain ways of framing content moderation problems on Meta’s platforms, and certain solutions to those problems, but more fundamentally frame the problems on Meta’s platforms as in the process of being solved. The 2018, 2019, and 2020 ‘community standards enforcement’ reports promoting the use of technology to detect and remove problematic content fit within this enforcement theme. These reports also tend to provide statistical data to demonstrate the success of Meta’s automated detection and removal tools and its content policies more broadly. The coordinated inauthentic behaviour reports are similar in this regard: they often detail what CIB networks Meta recently identified, how they identified them, and how many Meta has removed. The ‘enforcement’ rhetoric, for example, is clearly identified in a February 2020 CIB report which declares: ‘[Meta is] constantly working to find and stop coordinated campaigns… in the past year alone, we’ve taken down over 50 networks worldwide’ (Meta, 2020a). These reports also include screenshots of the offending posts and accounts they identified as ‘coordinated’ and an explanation of their content moderation process, as a way of demonstrating Meta’s expertise at detecting and removing suspect content.

Beyond these reports, we can also see the enforcement theme in posts relating to transparency, as well as in high-profile content moderation decisions. In the first regard, we can see Newsroom posts reporting on transparency measures relating to advertising, such as posts in 2017 and 2018 announcing more information about pages running ads (Goldman, 2017; Leathern, 2018). The ‘enforcement’ theme is clearly present in statements within these posts, such as the claim that Meta is ‘investing heavily in more people and better technology’ to ‘proactively identify’ pages that run misleading ads (Leathern, 2018). In the second regard, we can see how the Newsroom is often used as Meta’s platform for major content moderation decisions. Examples include the 2020 decision to remove content relating to QAnon (Meta, 2020c), the 2021 decision to remove content promoting the pro-Trump conspiracist ‘stop the steal’ narrative following the 2020 US Presidential election (Rosen and Bickert, 2021), and most famously, Meta’s decision to ultimately ban the former US president from the platform following the Capitol riots in early 2021 (Rosen and Bickert, 2021).

Discussion

Our analysis indicates that Meta’s Newsroom has tended to frame content moderation issues on its platforms as the work of outside ‘threats’ – whether these be ‘inauthentic’ accounts and ‘coordinated’ behaviour, or opaque political advertisers – which Meta is acting on, often with the help of its ever-evolving technology. In other words, when it comes to problematic content and accounts on its platforms, the Newsroom is discursively positioning Meta as not part of the problem, but instead the company with the solutions to the problem and which is already fixing the problem.

This discursive framing can also be identified by what is left out of the Newsroom, that is, the ‘constitutive outside’ (Howarth, 2000: 123). There were two main absences in our findings: the first is the role of highly followed hyperpartisan accounts in stoking political conflict, and the second is the relationship between Meta’s platform features and ‘organic’ forms of problematic content and behaviour. We consider these absences notable because both issues have received considerable media attention as apparent vectors of disinformation and ‘polarisation’ (Parks, 2021; Wadman, 2020). Highly followed hyperpartisan accounts, which include conservative personalities such as US commentator Ben Shapiro and far-right news outlets like Breitbart, have thrived on Facebook by creating ‘engagement machines’ that prosper from controversial, and often inflammatory, political content (Gogarty, 2021; Parks, 2021). These accounts are not only highly followed (Shapiro, for example, has over 9.1 million Facebook followers) and engaged (Ben Shapiro’s The Daily Wire, for instance, regularly tops Facebook engagement charts in the US (Parks, 2021)), but also often ‘verified’, which is one of Facebook’s most prominent markers of ‘authenticity’.

In such ways, these accounts tend to be intertwined with Meta in ways that state-backed disinformation campaigns, for instance, are not, as they are enmeshed both officially via verification and within Meta’s engagement and advertising-centric business models. This could explain the Newsroom’s seeming reluctance to comment on these accounts, or on how such actors contribute to problems facing the platform, and its tendency instead to keep them outside its discourse of ‘authenticity’. While some of these figures have been banned from Meta’s platforms due to their outrageous content – the 2018 deplatforming of far-right conspiracist agitator Alex Jones is the most famous example here (Coaston, 2018) – many others have continued to grow with tacit approval from Meta.

In effect, by foregrounding those features of the platform that can address some of the ‘outside’ threats, Meta is excluding the alternative problems arising from within the platform. The AI models used by the platform can relatively easily identify words and phrases that the platform construes as hate speech. They do not, however, perform as well in identifying dogwhistles, coded language, loaded terms, or discursive markers that have specific meanings among particular communities. These sorts of problematic content have been strategically left out of the discursive formations promoted by Meta’s Newsroom. Instead, the platform relies on vague definitions, or at times, non-definitions of outside threats, and foregrounds the technological solutions taken by the platform in ‘solving’ those issues. What has been left out are problems facing the platform – or faced by it – that cannot easily be solved using technological means such as better AI models or detection algorithms.

Meta’s platform features, especially its ‘Groups’, have also been identified in media reports as significant sources of problematic content and behaviour on Facebook. Groups, for example, played a major role in spreading anti-vaccination content early into the COVID-19 pandemic (Kalichman et al., 2022), as well as in spreading false narratives around ‘election fraud’ following the 2020 US Presidential election (Bond and Allyn, 2021). The affordances of Groups – the ability to be semi-private, and thereby make content moderation difficult, as well as being a shared space for like-minded users to congregate – can be especially conducive to problematic content and behaviour.

Meta did remove some problematic Groups in mid-2020 and early 2021, such as those associated with QAnon and the so-called ‘Stop the Steal’ movement, although these individual take-downs lacked a broader strategy to deal with problematic Groups. The lack of posts within the Newsroom relating to problematic Groups, compared to other identified ‘threats’, could be due to two reasons. The first is that the ‘organic’ nature of problematic behaviour within Groups, with otherwise ordinary and often real-name users sharing and discussing mis- and disinformation, is at odds with the Newsroom’s emphasis on ‘inauthentic’ and ‘coordinated’ outside threats. The second is that the Newsroom may be reluctant to highlight the role of Groups within Facebook’s mis- and disinformation ecology due to Meta’s own attempts at pushing users into Groups in the late 2010s (Newton, 2020).

There is also a contingent, reactive quality to the Newsroom. This can be seen in the high-profile content moderation decisions that have been made in response to issues attracting significant attention, usually from the media. There were a number of these reactive decisions in 2020 and 2021, such as the move to ban ‘militarised social movements’ and QAnon in August 2020, following media attention about how such militarised and conspiracist movements were organising on Facebook (Wong, 2020). Meta was also reactive when it suspended former President Trump following the January 6 Capitol riots. In a similar fashion, the Newsroom’s emphasis on ‘coordinated inauthentic behaviour’ and political advertising reveals a reactive quality. As argued above, these issues appear to be useful for Meta in so far as they discursively push blame for problematic content away from the company (instead of, for instance, focussing on Meta’s promotion of Groups). But Meta only responded to these issues after claims of ‘inauthentic’ and ‘coordinated’ disinformation campaigns during the 2016 US Presidential election gained significant media and political attention (Broderick, 2019).

The effort by Meta to regularly report on the ‘coordinated’ networks it has identified and removed – and which are typically suggested to be state-backed in these reports – can be seen as an effort to demonstrate how Facebook is solving these high-profile issues. Other Newsroom posts declaring, for instance, the removal of accounts linked to the troll farm run by the Russia-based Internet Research Agency (Stamos, 2018) can be viewed within this reactive context. Likewise, the provision of an advertising transparency dashboard, albeit an incredibly limited one (Angus et al., 2024), can be seen as a reaction to an ‘abuse’ of Meta’s targeted advertising model by what Meta paints as a small cadre of unscrupulous political actors, while the reality of problematic advertising and the need for greater transparency on the wider platform are downplayed.

Other posts, such as those relating to privacy and transparency following the 2018 Cambridge Analytica data misuse scandal, are similarly reactive. The organisation of Meta’s discourse around nodal points such as technological solutions and enforcement of the platform’s terms, together with the restriction of the discursive field to outside problems and threats such as ‘inauthenticity’ or political advertising, therefore indicates that the company is attempting to set the limits of what meaning relations can be formed. That is, the range of the problems faced by Facebook is represented as originating from the ‘outside’, while the platform takes the role of an enforcer, moderator, and solution-seeker. These solutions, however, are limited to what can be solved technologically or reactively, such as the introduction of better AI to proactively identify ‘hate speech’ or the take-down of problematic content and users in reaction to external pressure. What is left out of this discourse is the range of social and technological issues that cannot be solved as easily, either due to the inability of technological solutions to address them, or due to the financial interests of Meta in maintaining high user engagement, such as what organically happens in problematic Facebook Groups.

Setting discursive limits also enables Meta to use ambiguous terminology to address only those issues and problems that it is willing and able to solve. Although Meta frequently refers to coordinated inauthentic behaviour as an outside threat that should be solved, the meaning of the term, and its limits, are open to interpretation. At times, inauthenticity is limited to automated and/or fake accounts. At other times, it expands to also include real, yet highly problematic accounts. In such ways, Meta appears to be strategically taking advantage of the ambiguity and ambivalence associated with ‘authenticity’ and ‘inauthenticity’. The same is true for coordination, which mainly seems to refer to the automated promotion of content, rather than the other meanings that coordination can entail: in the case of QAnon, for instance, one can see highly coordinated crowds and information campaigns, yet it is not as easy to argue that the majority of the accounts involved are indeed fake or automated. This fluidity and lack of consistency in the meaning and definition of such signifiers effectively ‘overflows them with meaning’ (Torfing, 1999) to the extent that they become the central signifiers in the network of meaning that Meta aims to shape and set the agenda for. Simultaneously, they exclude all other problematic content and behaviours, solutions to which may not be found as easily using artificial intelligence or automated content moderation. This self-selective and opaque content moderation approach is also consistent with how Meta has operationalised ‘harm’, and other symbolically loaded terms such as ‘violence’, as dynamic and elusive ‘floating signifiers’ (DeCook et al., 2022).

Policy implications

By attempting to set the limits of discourse around issues to do with problematic content on Meta platforms, and by promoting itself as an active ‘self-regulator’ (Medzini, 2022), the Newsroom’s activities can also be viewed within the context of growing regulatory scrutiny. Over the past decade, misinformation has become a ‘top-level policy issue’ (Meese and Hurcombe, 2020) around the world, with governments in Europe, the US, Australia, and elsewhere actively considering or in the process of implementing regulations that seek to tackle mis- and disinformation on mainstream platforms.

As key examples of these regulatory developments, the EU CPD, the Australian DIGI CoP, and Australia’s most recent Combatting Misinformation and Disinformation Bill 2024 leave clues to a possible discursive relationship between policy and official communication from Meta. There are thematic links between elements of these Codes and our Newsroom findings. Political advertising, for instance, is a major concern of the EU CPD, in both the 2018 and ‘strengthened’ 2022 versions of the Code. The first 13 signatory commitments in the 2022 CPD explicitly relate to ‘political’ or ‘issue’ advertising, with a focus on transparency. Transparency in political advertising is also an objective of the Australian DIGI CoP. ‘Inauthenticity’ is also a keyword in how both the EU CPD and Australian DIGI CoP conceptualise ‘disinformation’. The 2018 CPD commits signatories to prioritising ‘relevant, authentic, and authoritative information’ in ‘automatically ranked distribution channels’, and Commitment 14 in the 2022 CPD explicitly requires signatories to limit, among other ‘fake’ accounts and content, ‘coordinated inauthentic behaviour’. The DIGI CoP, on the other hand, names ‘inauthentic behaviours’ as the defining feature of ‘disinformation’ in the Code’s glossary. Moreover, these Codes are thematically linked to our Newsroom findings, in so far as they focus on ‘bad actors’ and platform ‘misuses’ rather than more organic, and indeed even verified, sources of problematic content (Hurcombe and Meese, 2022). In the case of the Australian Combatting Mis/disinformation bill, there are even significant carve-outs for ‘professional news content’ accounts, meaning they will not be subject to attention or enforcement action under the bill. This would potentially exclude accounts that produce news content but consistently fail to enforce robust editorial standards and ethics in their reporting. This could include accounts that question the legitimacy of electoral processes without evidence, or promote anti-vaccination sentiment, as in the case of the Facebook account of the Murdoch-owned Australian broadcaster Sky News Australia (Wilson, 2020).

Apart from the EU and Australian Codes, other state-led attempts at countering problematic content, such as the so-called ‘fake news laws’ in Germany, France, Malaysia, Taiwan, and other countries (Meese and Hurcombe, 2020), are underpinned by a thematic focus on ‘authenticity’. In 2020, Brazil also proposed a law on online content that sought to target ‘automated’ accounts and increase transparency around political advertising.

It is beyond the scope of our study to pinpoint causal links between official communication from Meta, via its Newsroom or other official channels, and international policy development. In some instances, it is difficult to identify whether Meta is pushing its own conceptualisations of the mis- and disinformation problem, or instead focussing on problem-framings that are beneficial to its own interests. As mentioned above, foreign interference is clearly a concern for many governments, and in that respect, it is unsurprising that Meta would want to demonstrate its commitment to addressing such interference via political advertising or clandestine ‘coordinated behaviours’. The fact that Codes like the CoP and CPD are being co-developed by industry may also explain thematic links between our Newsroom findings and problem-framings currently existing within the Codes. It is also possible that policy is influenced by lobbyists and other more direct yet clandestine forms of pressure from Meta (Feiner, 2024).

Nonetheless, we note that contemporaneous developments within this policy space suggest that stakeholders may be pushing back against these problem-framings. In Australia, for instance, the Australian Communications and Media Authority (ACMA) has recommended that an updated CoP include messenger apps, such as the Meta-owned Facebook Messenger and WhatsApp (Karp, 2023). Concerns around these private messaging apps were absent from our Newsroom findings. This increasing regulatory pressure is further highlighted in the Combatting Mis/disinformation bill, which states that while the content of private messages will be exempt from its powers, ACMA ‘may make digital platform rules in relation to records’ to understand the ‘measures implemented by digital communication platform providers to prevent or respond to misinformation or disinformation… including the effectiveness of the measures’ (Australian Government, 2024: 24).

Limitations

This study has some limitations. Firstly, by focussing on one technology company – Meta – we are unable to analyse the discursive strategies of other large technology companies. Secondly, our analysis focused solely on Newsroom content related to problematic issues on Meta platforms; we acknowledge that the Meta Newsroom has a broader discursive function beyond (re)framing issues to do with problematic content, and that other channels, such as Zuckerberg’s official Facebook page, also carry public statements about content moderation. Thirdly, this study focused solely on Meta’s outputs, and we did not systematically analyse how other actors – journalists, academics, policymakers, and so on – engaged with Meta’s discourse around problematic content. This final point ties in with the Leximancer analysis, which treats all posts as having equal importance in formulating lexical statistics and the resulting concept and thematic prominence. This is advantageous in terms of providing a full and even-handed account of the breadth of Meta’s Newsroom discourse, but analysis using additional external content such as that mentioned above (news, trade press, academic articles, etc.) is required to know the greater significance of specific concepts in terms of their global reception and ultimate reach.

Conclusion

Our analysis has indicated that there are dominant themes in how Meta has framed responses to issues regarding problematic content on its platforms. We argue that these thematic frames – ‘authenticity’, ‘technological solutions’, ‘political advertising’, and ‘enforcement’ – benefit Meta, in so far as they limit the scope of issues and shift responsibility while also demonstrating that Meta is an active problem-solver. There is a need for further research, however. While this study has limited itself to the Newsroom’s output, the implication that Meta is shifting discourse in certain conceptual directions would be worth examining in detail by, for instance, systematically researching the uptake of keywords like ‘coordinated inauthentic behaviour’ in policy and academic outputs. Furthermore, it is worth considering whether Meta is an outlier in its promotion of technological solutionism, or whether other large tech companies also frame content moderation problems in similar ways.

All these issues remain pressing, as platforms do not just host and shape everyday (political) communication – they are also large companies that are powerful discursive forces in and of themselves. We look forward to further research examining platforms and tech companies in this respect.

Footnotes

Funding

This work was supported by the Australian Research Council Discovery Project DP200101317 Evaluating the Challenge of ‘Fake News’ and Other Malinformation.

Appendix

Issue: Disinformation. Issue: Election integrity. Issue: General community standards. Issue: Hate speech. Issue: Inciting violence. Issue: Transparency.