Abstract

In recent decades, a revolutionary transformation has substantially altered the way people access information, and online social media platforms have become a significant facet of our daily lives. The intersection of political discourse with the proliferation of what is commonly termed “fake news” on these platforms has given rise to an environment that does not necessarily foster independent and critical thinking, posing substantial risks to individuals, industries, and governments. While Facebook has implemented novel strategies to counteract this challenge, their efficacy remains questionable. Drawing on an analysis of fact-checking activities conducted during two Brazilian elections, this study aims to identify the key focal points in how corrective information is applied on Facebook and to assess its impact. Using Meta’s tool CrowdTangle in this qualitative study, our examination also highlights the lack of standardized measures on the platform. Finally, we offer insights into policy alternatives that could be adopted to address this phenomenon.

Background

Over the past few decades, a revolutionary shift has transformed the way we access information, with online social media platforms emerging as integral components of our daily lives. These digital platforms serve as arenas for sharing expertise, exchanging recommendations, and connecting individuals from diverse spaces and ideologies. While numerous social media sites have emerged and faded, certain platforms, notably Facebook, have stood the test of time. As of September 2020, Facebook’s active user base had surpassed 2.7 billion, signifying a substantial global presence. 1 Facebook and its subsidiaries dominate the social media landscape, accounting for a staggering 59% of global users (von Abrams, 2020). This dominance extends beyond user numbers, shaping contemporary social interactions and influencing what content people see online.

Consequently, people have changed how they gather information, moving away from traditional sources like newspapers and television toward online social media platforms. However, this change has raised a dual concern regarding exposure to news and civic content mediated through these digital tools. On the one hand, these platforms facilitate dynamic literacies and enable a porous exchange of knowledge. On the other hand, they create an environment inundated with information, exposing individuals to an unprecedented array of options that are not always trustworthy. As a result, users are challenged to discern the quality of the content they encounter in digital environments (Pennycook and Rand, 2019).

These platforms have evolved into “third spaces,” originally designated for non-political discussions but now serving as arenas for political conversations (Wright, 2012). This has reshaped information flows within these networks, with potential implications regardless of how these political conversations turn out. Amid this environment of ambiguity and unstable meaning, so-called “fake news,” denoting false or misleading information with potential adverse consequences (Farkas and Schou, 2018), is expected to flourish. While the literature offers several terms to characterize information disorder in online environments, including misinformation, disinformation, and malinformation, scholars argue that these terms offer an overly simplistic framework (Wardle, 2023). Therefore, in this study we use the term “disinformation” to encompass the bulk of what is typically labeled “fake news.”

These platforms rely on network externalities, meaning their value grows with the number of users. Users have thus recognized the advantages of engaging with friends and family members by sharing content, which increases engagement and, in turn, draws more members to the platform (Lin and Lu, 2015). Online social networks also operate on the principles of homophily and popularity, which serve as the primary determinants of social interactions (McPherson et al., 2001).

However, contentious content is also strategically curated to generate higher traffic and foster organic growth. When false stories are shared by “friends,” they acquire an air of legitimacy, aligning with widely accepted understandings or myths within the platform. In the digital era, individual passions and assumptions wield greater influence in shaping opinions than objective facts. This has led to a decline in trust in traditional media, as individuals increasingly turn to their social networks on these platforms as primary sources of information and truth (Nicolaou and Giles, 2017).

The amalgamation of political discourse and the rise of disinformation on these platforms has created an ecosystem that discourages independent and critical thinking, posing significant risks to individuals, industries, and governments. As there is no simple antidote to disinformation, addressing this challenge requires collaborative efforts from society as a whole, including platform owners (Recuero et al., 2019).

During the 2016 US presidential election, the top-performing false election stories on Facebook garnered over 8.7 million interactions (shares, comments, and likes), surpassing interactions with the top news articles from mainstream media (Silverman and Singer-Vine, 2016). Under mounting pressure to address community concerns, social media companies face scrutiny from civil society and governments to develop tools that improve content quality (Tenove, 2020).

In response, these platforms have introduced various features to help users assess content accuracy. For example, responding to criticism of its role in propagating disinformation, Facebook introduced a new flagging policy in early 2017, initially in the US (Oeldorf-Hirsch et al., 2020). Its highly skilled multidisciplinary teams could devise tailored solutions to counter the techniques used to spread disinformation. This approach involved tagging hoax stories or false content as “disputed” for certain users, 2 content that was then evaluated for accuracy by third-party fact-checkers (Silverman and Singer-Vine, 2016). In 2019, Facebook took a more proactive stance by labeling content as false or partly false when debunked by third-party fact-checkers. 3 Although the overlay system was modified over the years, the aim was to present more evidence from fact-checkers and to replace the “disputed” label and red flag (BBC, 2017). These strategies aimed to empower users to make informed decisions about the content they engage with, read, trust, and share (Pangrazio, 2018).

Similarly, Twitter and Instagram introduced badges for “verified accounts,” initially for public figures and notable entities, visually distinguishing them with a checkmark to confirm their authenticity. While this initially curbed trolls and disinformation accounts, its efficacy in reducing the risks of disinformation remained limited, as even verified accounts shared such content (Oeldorf-Hirsch et al., 2020). Twitter, now X, has since replaced its previous badge system with a subscription-based model for verified accounts.

Research has indicated that warning labels on false stories can effectively reduce the likelihood of sharing disinformation (Mena, 2020). Moreover, presenting related articles that correct disinformation content on Facebook has been found to alleviate misperceptions (Bode and Vraga, 2015). However, the effectiveness of corrective information depends on the nature of the corrective and the prominence of the label (Oeldorf-Hirsch et al., 2020).

Nonetheless, the effectiveness of corrective information is also influenced by users’ values, attitudes, and beliefs. Ideological views can lead to the “backfire effect” (Nyhan and Reifler, 2010), where exposure to evidence-based corrections reinforces rather than corrects misconceptions.

Given these complexities, further research is needed to refine warning tag approaches. To this end, this article examines Facebook’s response to disinformation, particularly political and health content (the latter due to the COVID-19 pandemic), which becomes especially pertinent during periods of uncertainty such as elections (Coddington et al., 2014) and health crises (Ceron et al., 2021). Facebook’s warning message for COVID-19-related posts, which redirects users to the official WHO information center, is one step toward mitigating the propagation of disinformation. Because assessing content authenticity and quality requires a certain level of knowledge and skill, it is essential to address gaps in existing practices and propose effective solutions.

Methodology

This study adopts a multi-method approach composed of three independent yet interconnected steps, addressing the main research objective of this article: understanding the flaws in Facebook’s measures against disinformation. As a starting point, we selected disinformation narratives published in Brazil, given the country’s political crises and the political-ideological alignment between Donald Trump and Jair Bolsonaro, who held contradictory positions in the fight against COVID-19. Nevertheless, some of the focal points of attention described here are easily found in other nations and languages. First, we mapped debunks rated false or misleading by two reputable, independent Brazilian fact-checkers (Lupa and Aos Fatos). Two topics were used to filter these debunks: the two Brazilian elections (2018 and 2020) and COVID-19 vaccines. We collected debunks published between August 2018 and January 2021. In total, 167 stories were mapped for this study: 76 related to elections and 91 concerning COVID-19 vaccines.

Second, we used CrowdTangle, a Meta-owned tool that tracks interactions on public content, to search for terms from vaccine- and election-related disinformation narratives that were still available on the platform. To ensure the quality of the search, we identified the exact combination of strings most representative of each false or misleading piece of content debunked by the two fact-checking initiatives, whether an image (meme) or text, and used it to run optimized searches on CrowdTangle. From these queries, we extracted the posts and their metadata, which were then combined into the analysis dataset. This dataset served as the basis for a quantitative analysis of the posts with the most interactions (shares and reactions).
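The merging and ranking step described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the pipeline used in this study: the record fields (url, query, topic, shares, reactions) are hypothetical stand-ins for whatever the CrowdTangle exports contained, and we assume that a post matched by several queries should be counted only once.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    """One post returned by a search query (all fields are hypothetical)."""
    url: str
    query: str      # the exact string combination used in the search
    topic: str      # "election" or "vaccine"
    shares: int
    reactions: int

    @property
    def interactions(self) -> int:
        # Interactions are counted here as shares plus reactions.
        return self.shares + self.reactions


def merge_and_rank(result_sets: list[list[Post]], top_n: int) -> list[Post]:
    """Combine per-query results, drop duplicate URLs, and rank by interactions."""
    by_url: dict[str, Post] = {}
    for results in result_sets:
        for post in results:
            # A post can match several queries; keep the copy with the higher count.
            current = by_url.get(post.url)
            if current is None or post.interactions > current.interactions:
                by_url[post.url] = post
    ranked = sorted(by_url.values(), key=lambda p: p.interactions, reverse=True)
    return ranked[:top_n]
```

Deduplicating by URL before ranking keeps a widely matched post from occupying several slots in the list of most-engaged content.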

Lastly, of the thousands of pieces of vaccine- and election-related disinformation shared on Facebook, we qualitatively examined more than 100 Portuguese-language pieces with the most interactions. We considered four levels of observation: overlays, related stories, “become a supporter” buttons, and links to fact-checks.

By examining a range of disinformation content, this study draws attention to points the platform needs to address regarding such content and makes suggestions for implementing solutions. In the following section, we present the most concerning points found during the analysis.

Focal points of attention

Unbalanced Facebook overlays

In 2019, Facebook announced that content that “has been rated false or partly false by a third-party fact-checker” would receive more prominent labeling, allowing users to make informed decisions about “what to read, trust, and share.” 4 This was initially introduced on Facebook and later extended to Instagram, another platform owned by Meta. Concurrently, the company implemented a temporary reduction in the distribution of content pending review by third-party fact-checkers. Despite these measures, Facebook applies fact-check labels to false posts inconsistently, even for content debunked by its partners.

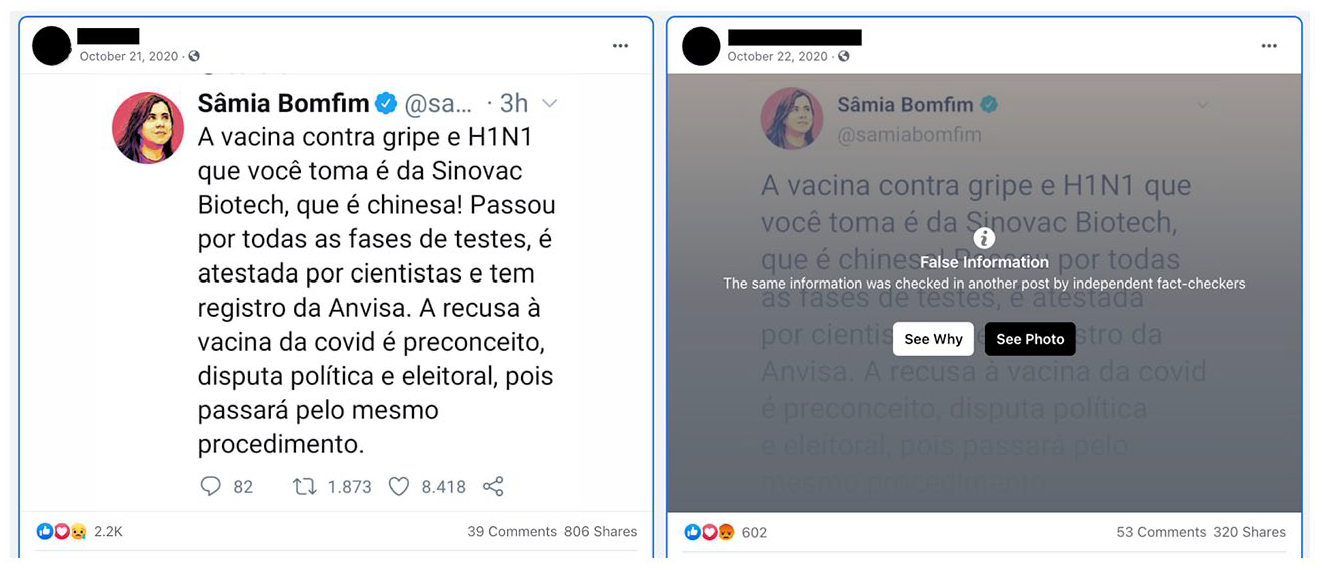

Figure 1 presents an example of a post that was not appropriately labeled as false information on the network. This content pertains to the COVID-19 vaccine and was fact-checked by an organization participating in Meta’s Third-Party Fact-Checking Program. Lupa debunked a claim by Brazilian politician Sâmia Bomfim that the H1N1 vaccine administered in Brazil is produced by Sinovac Biotech (a COVID-19 vaccine producer in the country). This is factually incorrect; the H1N1 vaccine used in Brazil is produced locally. An examination on CrowdTangle shows that Facebook applies its measures inconsistently: some posts display warnings and links to the debunk, while others spread without an overlay.

Facebook’s inconsistency: two identical posts display the same claim, but only one carries a “false information” warning.

Facebook’s inconsistent application of warning overlays to debunked content undermines its fact-checking efforts. When some posts lack warnings, as seen in Figure 1, users remain exposed to harmful disinformation.

Facebook’s overlays aim to shield users from disinformation, but applying them inconsistently fuels its spread. The coexistence of posts with and without these overlays creates an unbalanced situation that allows disinformation to propagate on the platform, leaving users vulnerable. Facebook’s artificial intelligence (AI), which is adept at image recognition, should ensure that these warnings are applied consistently, preventing the algorithm itself from becoming a conduit for disinformation.
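As a minimal sketch of consistent label propagation, the Python below flags unlabeled posts whose claim text matches a post that already carries a fact-check overlay. It assumes the claim text has already been extracted (for example, from the caption or by OCR on the image) and relies on simple normalization plus exact matching; it does not perform image recognition, and the function names are hypothetical.

```python
import re
import unicodedata


def normalize_claim(text: str) -> str:
    """Reduce claim text to a comparison key: case-folded, accents and punctuation removed."""
    decomposed = unicodedata.normalize("NFKD", text.casefold())
    # Drop combining marks so that, e.g., "é" and "e" compare equal.
    without_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return " ".join(re.findall(r"\w+", without_accents))


def posts_missing_label(posts: dict[str, str], labeled: set[str]) -> set[str]:
    """Return IDs of unlabeled posts whose normalized text matches an already labeled post.

    posts: hypothetical mapping of post ID -> extracted claim text.
    labeled: IDs of posts that already carry a fact-check overlay.
    """
    flagged_keys = {normalize_claim(posts[post_id]) for post_id in labeled}
    return {
        post_id
        for post_id, text in posts.items()
        if post_id not in labeled and normalize_claim(text) in flagged_keys
    }
```

Normalizing accents and punctuation matters for Portuguese-language content, where the same claim is often retyped with or without diacritics.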

Absence of labels on debunked content

The lack of “debunked” labels on posts proven false or misleading exposes a major flaw in Facebook’s fight against disinformation. We found instances where debunked content remained unlabeled, highlighting Facebook’s limitations in curbing its spread.

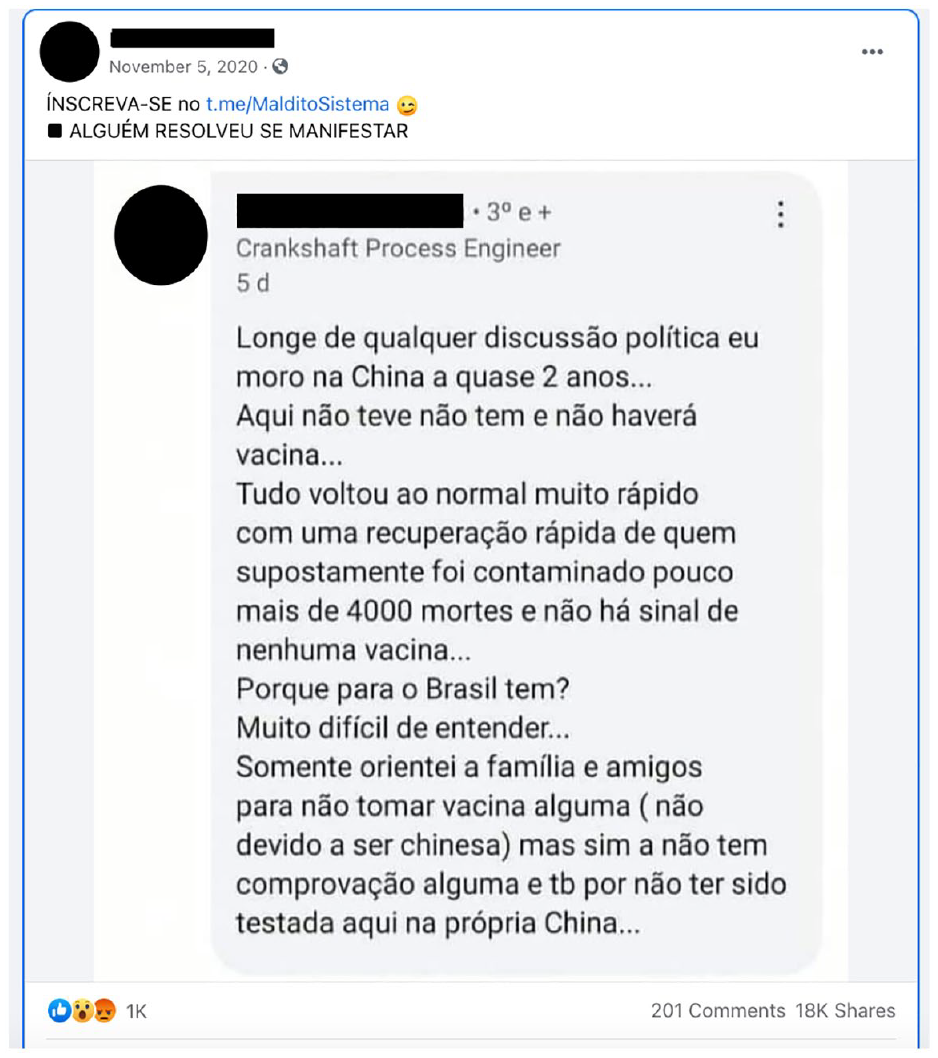

Figure 2 shows a claim about China’s plans to distribute emergency COVID-19 vaccines in the near future spreading freely, even though Lupa had debunked it (Soares, 2020). Despite the claim’s proven falseness, none of the posts identified on the platform using CrowdTangle displayed any overlays or warning labels. This lack of action undermines efforts to combat the spread of harmful information and highlights the need for more robust policies and enforcement.

False COVID-19 information, already refuted by a participant in Meta’s Third-Party Fact-Checking Program, displayed without an overlay.

When disinformation circulates unchecked, people are more likely to believe it. Labeling debunked claims as false is crucial, as it discourages people from sharing or accepting them (Oeldorf-Hirsch et al., 2020). These labels act as warnings, prompting users to fact-check or at least pause before engaging with the content. Without them, users encounter disinformation head-on, potentially falling victim to its harmful narratives.

Inadequate display of debunked content

Facebook’s inconsistent labeling is not the only issue. Variations or deformations of images on the platform confuse the algorithm and allow disinformation to spread through visual elements.

A notable distinction is evident between timeline mode and photo viewer mode (Facebook’s theater mode). While overlays can be added to photos and videos in timeline mode, this is not uniformly done in photo viewer mode. If a user clicks on a URL originating from another platform or website, they are redirected to the image in photo viewer mode, where Facebook’s measures, such as overlays or warning labels, are absent. Users are thus exposed to the content without any protective measures and cannot opt out of viewing it. Additionally, there is no link to the fact-checker’s assessment.

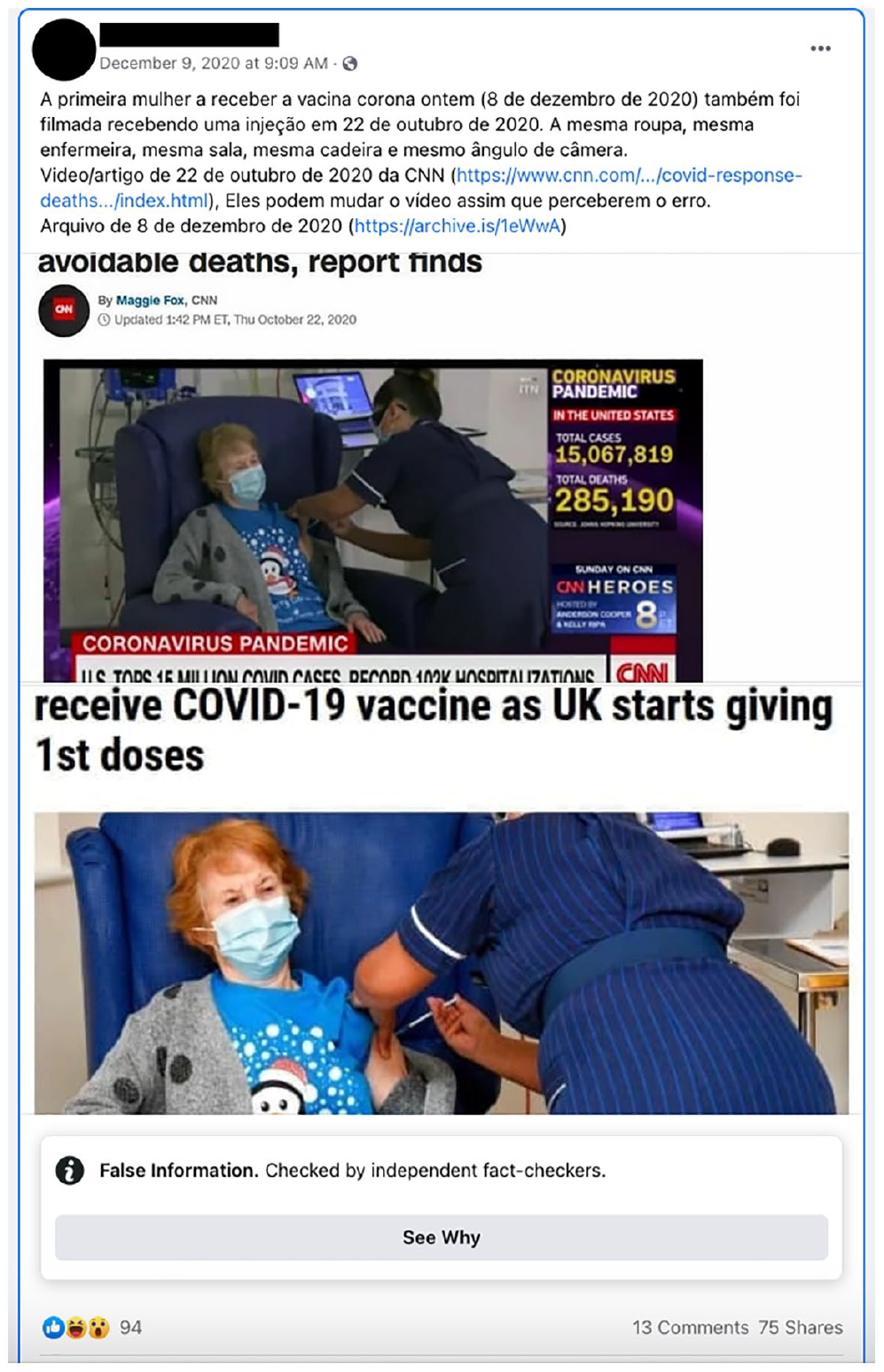

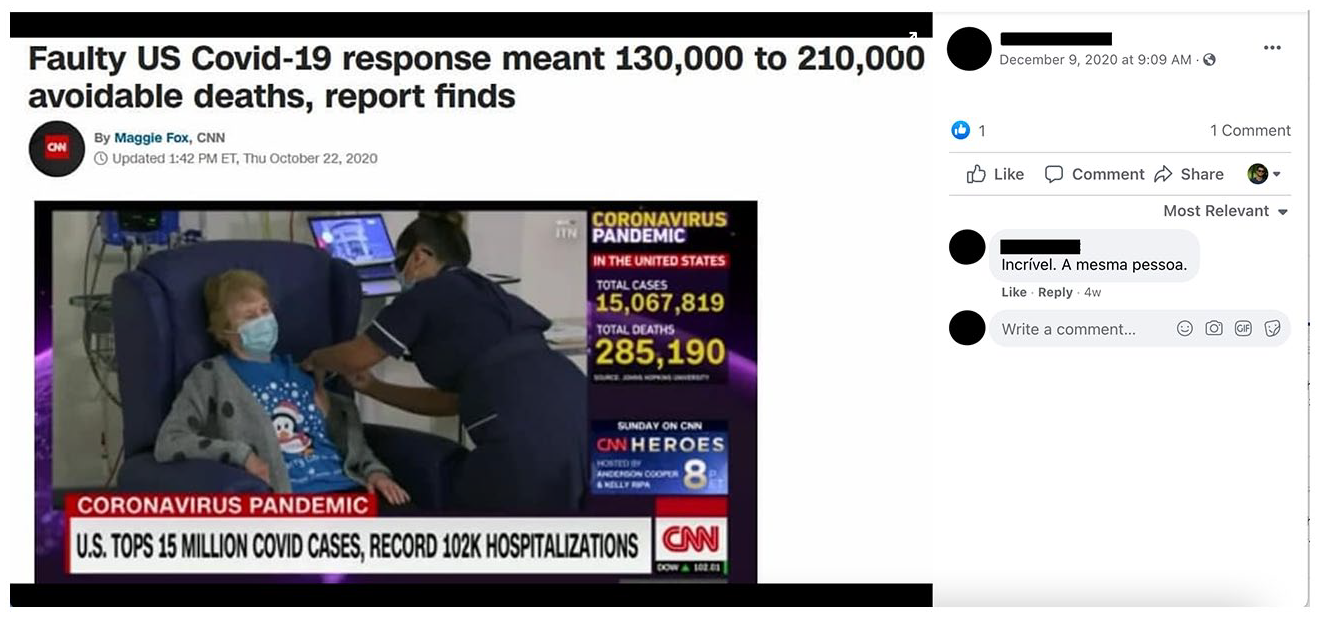

Misleading narratives flourish due to inconsistencies in Facebook’s image handling. Figure 3 shows a post about the first person to receive the Pfizer COVID-19 vaccine. According to the debunk (Costa, 2020), although the image was taken on December 8, 2020, it also appeared in a CNN news story published on October 22, 2020. This occurred because the image was part of a carousel on the CNN website that updates automatically, even in older stories. Both images in the post carry an overlay, as depicted in Figure 3. However, while the post itself has a warning, clicking the images bypasses it, as demonstrated in Figure 4. This inconsistency weakens Facebook’s fight against disinformation.

In the timeline mode, the post is clearly marked as containing false information.

The photo in theater mode lacks any warning signifying that the content is untrue.

Disrupting financial incentives for disinformation

Facebook’s “become a supporter” feature, which allows pages to earn money from fans, creates a potential conflict with its efforts against disinformation. The feature enables supporters to make monetary contributions ranging from 0.99 to 99.99 USD, granting them access to premium content through a fan subscription. 5 Facebook states that creators receive the entire revenue amount after taxes, which establishes a potential financial incentive linking the upstream and downstream aspects of disinformation through both public and exclusive content. To combat disinformation effectively, Facebook may need to address the financial incentives linked to its spread.

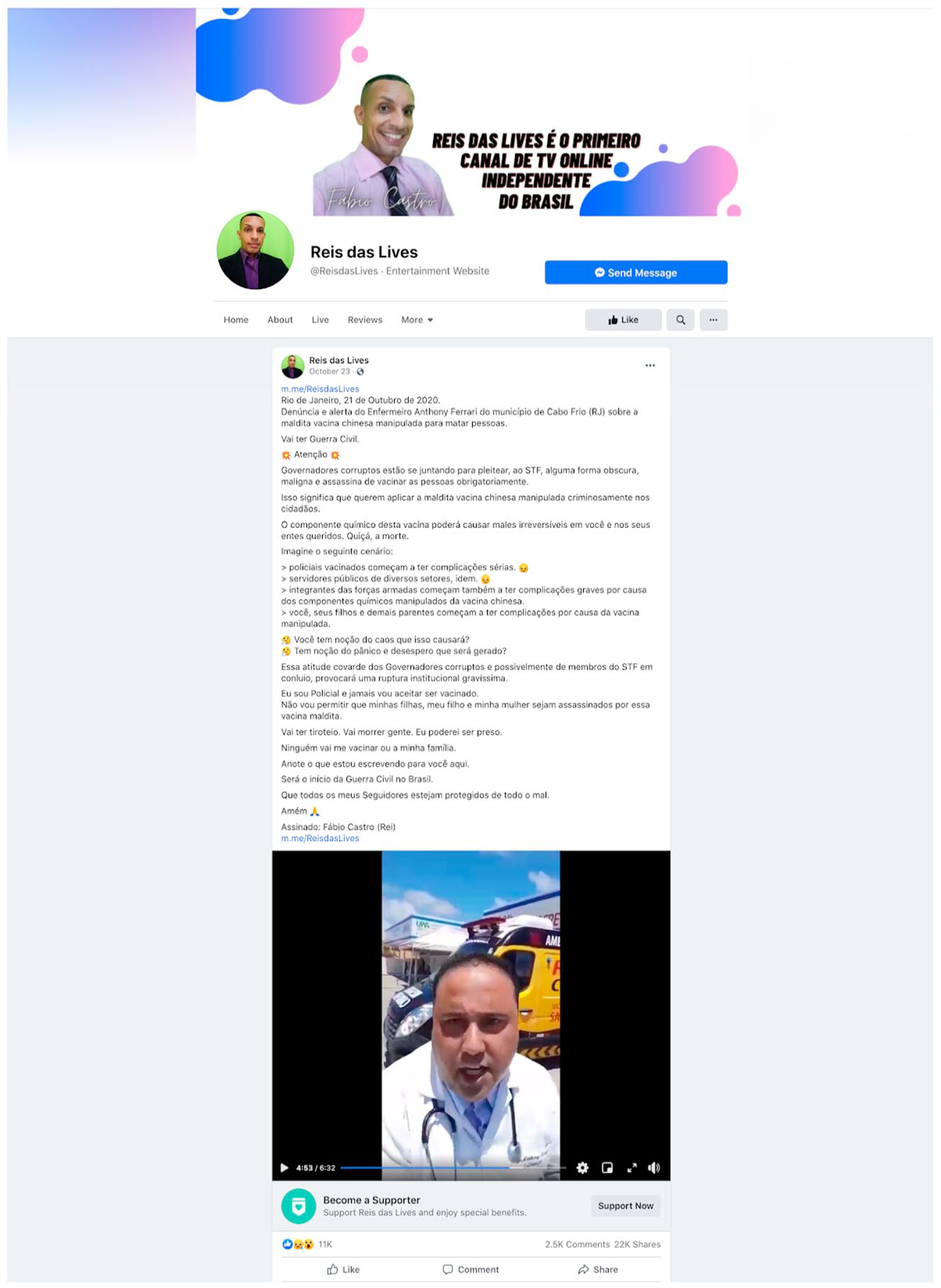

For instance, Figure 5 shows a post on the page “Rei das Lives” featuring misleading information about the CoronaVac vaccine, debunked by Lupa (de Almeida, 2020a). The post contains a video of a Brazilian nurse making a series of statements about CoronaVac, one of the COVID-19 vaccines produced in Brazil through a collaboration between the Chinese pharmaceutical company Sinovac Biotech and the Butantan Institute (based in São Paulo). The nurse’s statements include dubious information about placebo administration and the vaccine’s production technologies. Notably, the page offers “become a supporter” options, potentially linking financial gain to the spread of disinformation.

A video sharing false COVID-19 information, with the “become a supporter” button placed below it and no accompanying overlay.

Improving fact-check linkages for posts

The absence of fact-check overlays on disinformation for which relevant debunks exist raises concerns about user exposure. Figure 5 illustrates a post that lacks an overlay, even though fact-checkers had debunked the misleading claims about CoronaVac made by the nurse in the video. According to Lupa, the nurse’s claims about CoronaVac, the COVID-19 vaccine developed by the Chinese company Sinovac Biotech, were all proven false (de Almeida, 2020b).

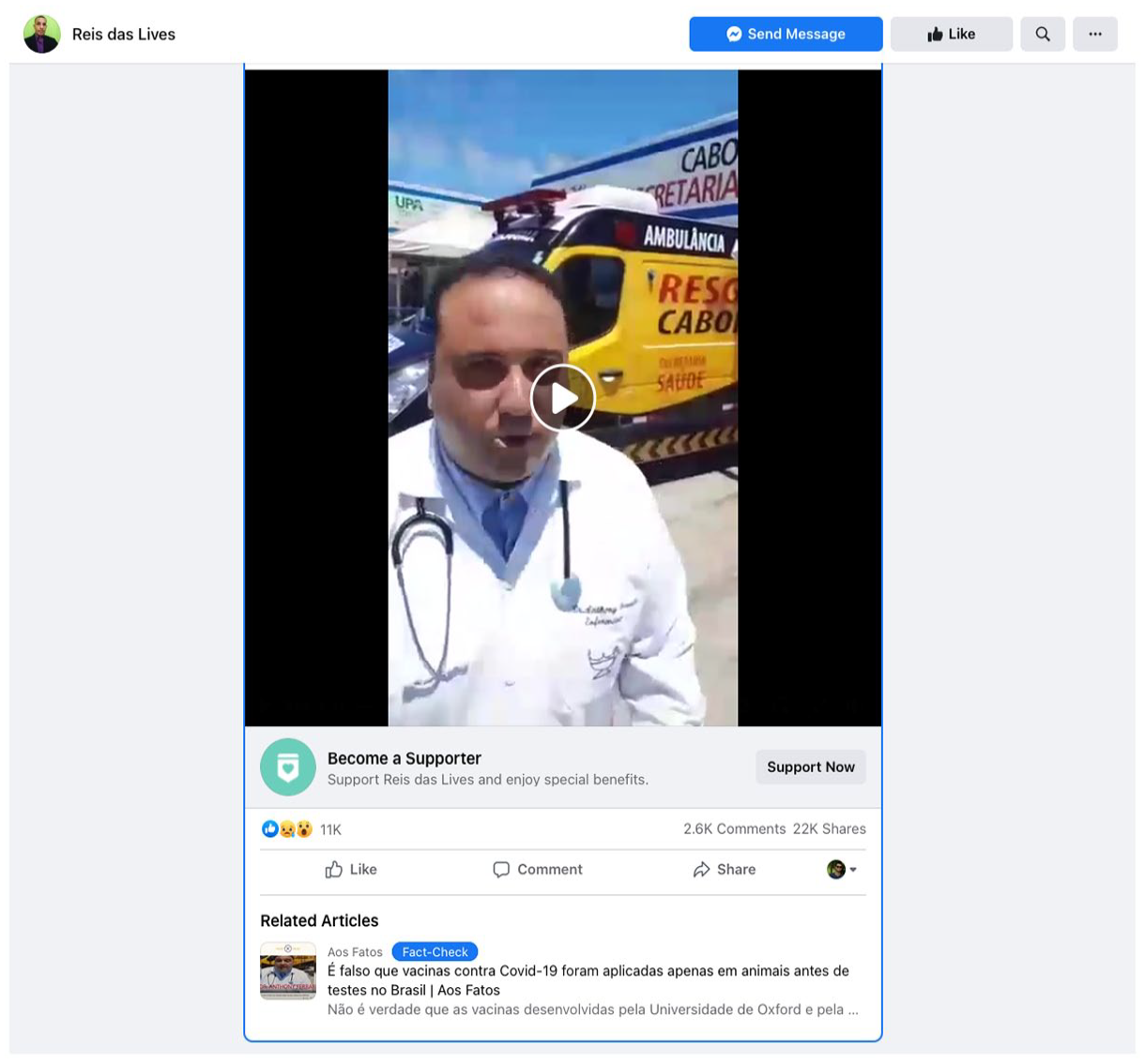

Instead of an overlay, the post containing the video features a section labeled “Related stories” at the bottom (as illustrated in Figure 6). This section highlights a fact-check of a different false story, propagated by the same entity but with a distinct claim. The fact-checking organization Aos Fatos concluded that the assertion in that story (that the vaccine had been tested only on animals and that Brazilians would be the first human participants in the trials) was false (Menezes, 2020). Furthermore, the fact-check is not immediately noticeable, given that Facebook posts generally feature an expanded comments section. These two issues could confuse users and hinder their ability to distinguish disinformation from accurate information.

Instead of being displayed as an overlay, the fact-check is shown as a related article that pertains to a different story.

Thumbnail, a tool to disinform

Our analysis uncovered evidence of another potential avenue for the dissemination of false content on Facebook: thumbnails in shared links. Introduced by the platform to enhance user engagement, link thumbnails offer a visual preview of the content behind a URL. This tool has become particularly valuable for pages and groups seeking to attract user attention and direct users toward certain websites. Unfortunately, our study revealed that this feature is also being exploited to spread false news content. Given that not all users click on these links (Ellison et al., 2020), the content displayed in the thumbnail itself becomes a vector for misleading and false narratives.

In September 2018, Lupa debunked a misleading news story from Jornal da Cidade claiming that a professor had said electronic ballot security codes were given to Venezuelans and denied to Brazilian auditors. The original report omitted key context, including the disqualification of the Venezuelan company from the electronic ballot auditing process and the Federal Supreme Court’s June 2018 ruling that the mandatory printed vote was unconstitutional.

After the 2018 debunking, Jornal da Cidade updated the headline to read, “TSE

The misleading 2018 article resurfaced in early 2020, shared across different groups despite the headline correction. These posts displayed the original thumbnail headline without the correction. As seen in Figure 7, Facebook previews still showed the false thumbnail, with no warnings or labels. This allowed the disinformation to continue spreading.

When the same story is reshared, its thumbnail is updated; however, older posts retain the original text.

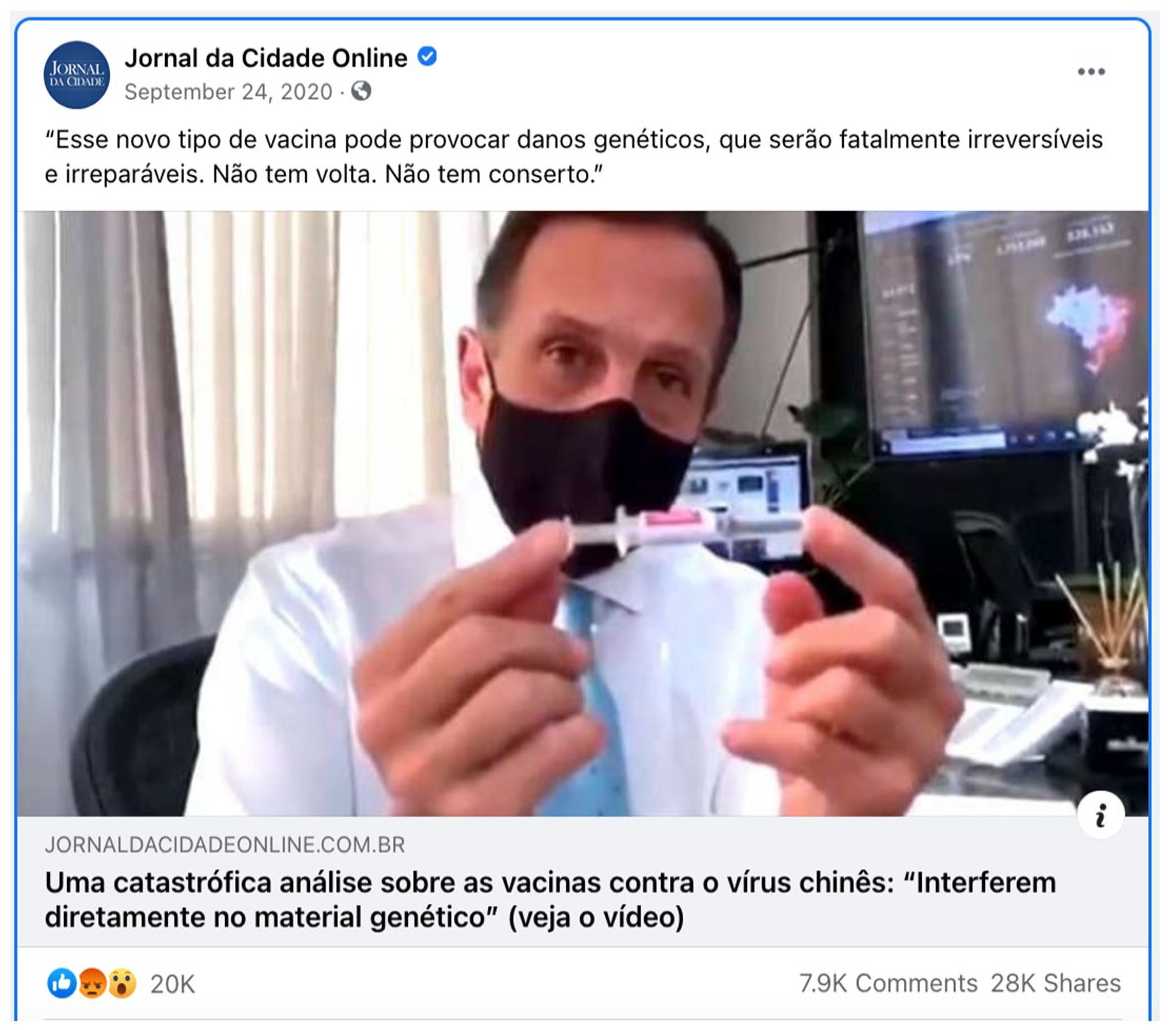

This is not an isolated case. Similar instances of thumbnail misuse were found in other Jornal da Cidade posts. For example, a story from September 2020 falsely alleged that COVID-19 vaccines alter human genetic material. As depicted in Figure 8, a number of posts circulated the false headline, later debunked by Lupa (Passarini, 2020), with no overlay, displaying thumbnail text that proclaimed: “A catastrophic analysis about the vaccines against the Chinese virus: ‘They interfere directly with the genetic material’.” Although Jornal da Cidade corrected the headline and appended an acknowledgment of the false information, Facebook thumbnails displaying the false claim (“vaccines alter human genetic material”) persisted, fueling disinformation.

Another misleading thumbnail without any Facebook measure.

Solving the issue: Recommendations

This study aims to contribute evidence-based insights to policymaking on the issues identified on Facebook. In total, seven focal points of attention were identified, spanning various levels of complexity. Our recommendations for mitigating the impact of disinformation on the platform are not a definitive solution but rather an opportunity to enhance users’ ability to promptly identify false or misleading information, with the goal of reducing the spread of disinformation.

While previous studies have demonstrated that labeling content can temporarily reduce belief in false claims or limit the dissemination of such narratives (Oeldorf-Hirsch et al., 2020), we propose an alternative approach: Show the Facts to all individuals who have viewed or engaged with disinformation, including retroactively. This measure could ensure that Facebook users, including members of public groups, gain access to accurate information if they were exposed to debunked disinformation or misinformation. A significant portion of the posts identified in our research lacked clear labels indicating that the content had previously been fact-checked; this needs to be addressed promptly.
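The retroactive element of Show the Facts can be illustrated with a small sketch. It assumes a hypothetical log of (user, post) view or engagement events and a hypothetical mapping from debunked post IDs to fact-check URLs; neither reflects a documented Meta data structure. The function collects, for each user, the fact-checks that user should be shown.

```python
from collections import defaultdict
from collections.abc import Iterable


def fact_checks_to_show(
    exposures: Iterable[tuple[str, str]],
    debunked: dict[str, str],
) -> dict[str, set[str]]:
    """Map each user ID to the fact-check URLs they should be shown.

    exposures: hypothetical (user_id, post_id) pairs for views or engagements.
    debunked: hypothetical mapping of debunked post_id -> fact-check URL.
    The whole log is scanned, so users exposed before a debunk was
    published are included as well (the retroactive part).
    """
    to_show: defaultdict[str, set[str]] = defaultdict(set)
    for user_id, post_id in exposures:
        fact_check = debunked.get(post_id)
        if fact_check is not None:
            to_show[user_id].add(fact_check)
    return dict(to_show)
```

Using a set per user means that someone who saw the same debunked post several times is notified once.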

These measures are not solely about presenting debunked content; they also involve transforming platform functionality. Detoxifying the Algorithm means identifying and reducing the visibility of well-known disinformation and of all content originating from consistent disinformation spreaders. Facebook should reduce the distribution and reach of any content that third-party fact-checkers have debunked, along with all content from pages and groups that systematically distribute disinformation. Furthermore, Facebook should eliminate any revenue streams that incentivize and sustain the dissemination of false or misleading information on the platform. If a post has been labeled false or partly false, it should not feature the “become a supporter” button. We recommend that pages sharing more than three pieces of disinformation within a specific period be prohibited from seeking funds from their audience for a defined time frame, as an encouragement to produce reliable content.
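The proposed fundraising restriction can be expressed as a sliding-window rule. In this hypothetical sketch, the threshold of three pieces follows the recommendation above, while the 90-day window and 30-day suspension are placeholder values, since the text leaves both the period and the time frame unspecified.

```python
from datetime import date, timedelta


def supporter_button_allowed(
    debunk_dates: list[date],
    today: date,
    threshold: int = 3,
    window: timedelta = timedelta(days=90),      # hypothetical "specific period"
    suspension: timedelta = timedelta(days=30),  # hypothetical "defined time frame"
) -> bool:
    """Return False while a page is barred from the "become a supporter" feature.

    Every date on which the page's count of debunked posts within the trailing
    `window` exceeds `threshold` (re)starts a suspension lasting `suspension`.
    """
    dates = sorted(d for d in debunk_dates if d <= today)
    start = 0
    for end, current in enumerate(dates):
        # Advance the left edge until the window spans at most `window`.
        while current - dates[start] > window:
            start += 1
        in_window = end - start + 1
        if in_window > threshold and today < current + suspension:
            return False
    return True
```

A sliding window, rather than fixed calendar periods, prevents a page from evading the rule by timing its posts around period boundaries.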

The computer vision (CV) algorithm (de-Lima-Santos and Ceron, 2021) integrated into the platform should be trained to recognize that memes can be altered, cropped, or manipulated. By using this technology as an additional layer in the process of identifying false information, Facebook could detect memes that differ only minimally from one another. Moreover, the platform should consistently apply labels and provide accurate fact-check links. Uniformity is crucial; however, this study shows that Facebook does not consistently apply labels to similar posts. If the computer vision algorithm can identify these posts on CrowdTangle, Facebook should replicate this capability within the platform.
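To make the idea of matching minimally altered memes concrete, the sketch below implements average hashing (aHash), a standard perceptual-hashing technique, on a grayscale image given as a list of pixel rows. It is a swapped-in illustration, not the CV algorithm cited above: aHash tolerates uniform brightness shifts, recompression, and resizing reasonably well, but it is not robust to heavy cropping, which a production system would need to handle. The 5-bit distance threshold is an assumption.

```python
def average_hash(pixels: list[list[float]], size: int = 8) -> int:
    """Average hash (aHash) of a grayscale image given as rows of 0-255 values.

    The image is shrunk to size x size cells by block averaging, and each bit
    records whether a cell is brighter than the mean of all cells.
    """
    height, width = len(pixels), len(pixels[0])
    if height < size or width < size:
        raise ValueError("image must be at least size x size pixels")
    cells = []
    for i in range(size):
        r0, r1 = i * height // size, (i + 1) * height // size
        for j in range(size):
            c0, c1 = j * width // size, (j + 1) * width // size
            block = [pixels[r][c] for r in range(r0, r1) for c in range(c0, c1)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | int(value > mean)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of bits that differ between two hashes."""
    return bin(a ^ b).count("1")


def near_duplicate(a: list[list[float]], b: list[list[float]], max_distance: int = 5) -> bool:
    """Treat two images as the same meme if their hashes differ in few bits."""
    return hamming(average_hash(a), average_hash(b)) <= max_distance
```

Because the hash compares each cell only with the image mean, a uniformly brightened or rescaled copy yields the same or a very close hash, while a visually different image diverges in many bits.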

Similarly, Facebook’s algorithm should Automatically Update Thumbnails. This research reveals that old thumbnails containing disinformation are being reshared months after their initial publication to propagate false narratives. While we acknowledge that this requires increased computational resources, it is essential to counteract this new form of disinformation on the platform. User perceptions of credibility, information sharing, and information seeking should not be shaped by thumbnails that may not accurately represent the current content of a URL. One could argue that automatically updating thumbnails would add to the environmental impact of data infrastructure. Data may be the “oil” fueling a data-driven society, but its infrastructure involves more than wells and mines: data centers, essential for processing and storing this data, contribute to global warming through their cooling systems, acting as the “carbon emissions” counterpart in this analogy (Lemos et al., 2020). Beyond these material concerns, however, the real “pollution” associated with data lies in disinformation. Unlike physical waste, its harms extend far beyond environmental damage, causing significant societal repercussions (Ceron et al., 2021).
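One inexpensive way to decide when a link preview needs refreshing is to compare the cached title with the page’s current Open Graph `og:title` tag. The sketch below assumes the page HTML has already been fetched; fetching, re-rendering the thumbnail, and Facebook’s actual scraper behavior are outside its scope, and the function names are hypothetical.

```python
from html.parser import HTMLParser
from typing import Optional


class OpenGraphParser(HTMLParser):
    """Collects <meta property="og:..." content="..."> tags from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.og: dict[str, str] = {}

    def handle_starttag(self, tag: str, attrs: list[tuple[str, Optional[str]]]) -> None:
        if tag != "meta":
            return
        attributes = dict(attrs)
        prop = attributes.get("property") or ""
        content = attributes.get("content")
        if prop.startswith("og:") and content is not None:
            # Keep the first occurrence of each Open Graph property.
            self.og.setdefault(prop, content)


def preview_is_stale(cached_title: str, current_html: str) -> bool:
    """True if the cached link-preview title no longer matches the page's og:title."""
    parser = OpenGraphParser()
    parser.feed(current_html)
    parser.close()
    current_title = parser.og.get("og:title")
    if current_title is None:
        return False  # nothing to compare against; leave the preview as is
    return current_title.strip() != cached_title.strip()
```

Checking only the title keeps the comparison cheap; a fuller check could also compare `og:image` and `og:description` before deciding to regenerate a thumbnail.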

In the ongoing defense against disinformation targeting our democracies, governments, civil society, and the public must be well informed about the nature and scale of the threat and about the actions taken to mitigate it. Improving Transparency is therefore imperative. Meta must provide comprehensive periodic reports, categorized by country and/or language, detailing the disinformation found on its platforms, the number of identified bots and inauthentic accounts (see de-Lima-Santos and Ceron, 2023), actions taken against those accounts and bots, user-reported instances of disinformation, signs of coordination within public groups and pages, and more. These reports must illuminate the platform’s efforts to address disinformation, ensuring transparency about the nature and magnitude of the threat. Although Meta has produced such reports in recent years, they should be more frequent and accompanied by real-time dashboards. Furthermore, digital platforms should make their data more accessible to researchers and journalists. The EU Digital Services Act (DSA) seeks to establish this requirement in Europe, but such transparency should apply globally. Similarly, dedicated electoral information portals6,7 have been made available in certain countries, while others were left out.
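A country- and language-level breakdown of the kind proposed could be produced by a simple aggregation. The record type and category names below are hypothetical illustrations, not fields from any Meta report.

```python
from collections import Counter
from typing import NamedTuple


class EnforcementRecord(NamedTuple):
    """One hypothetical enforcement or report record."""
    country: str   # e.g. "BR"
    language: str  # e.g. "pt"
    kind: str      # e.g. "disinformation_post", "inauthentic_account", "user_report"


def build_report(records: list[EnforcementRecord]) -> dict[tuple[str, str], Counter]:
    """Count records of each kind for every (country, language) pair."""
    report: dict[tuple[str, str], Counter] = {}
    for record in records:
        key = (record.country, record.language)
        report.setdefault(key, Counter())[record.kind] += 1
    return report
```

Keying on the (country, language) pair keeps, for example, Portuguese-language activity in Brazil separate from Portuguese-language activity elsewhere.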

In an increasingly digital world, ensuring the safety and security of users’ online experiences and information consumption is paramount. This study contributes to this discussion by presenting points that digital platforms need to address to make their environments safer for users. By identifying key areas for improvement and proposing practical solutions, this paper aims to close gaps in platforms’ measures and to promote a more trustworthy environment for information consumption.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was partially supported by RPA’s Human(e) AI and by the European Union’s Horizon 2020 research and innovation program AI4Media under grant 951911.