Abstract

The popularization and normalization of conspiracy theories over the last decade are accompanied by concerns over conspiracy theories as irrational beliefs, on the one hand, and their advocates as radical and extremist believers, on the other. Building on studies emphasizing that such accounts are one-sided at best, and pars pro toto stigmatizations at worst, we propose to study what we call “participatory conspiracy culture”: the everyday, mundane online debates about conspiracy theories. Based on a 6-month ethnography of Reddit’s r/conspiracy subreddit, an analysis of 242 selected discussions, and supported by the digital methods tool 4CAT, this article addresses the question of how people participate in online conspiracy culture. It shows that among the plethora of conspiracy theories discussed online, discussions are heterogeneous, and their participants relate to each other primarily through conflict. Three epistemological positions occur: belief (particularly leading to constant discreditation of others’ beliefs), doubt (particularly as opposed to belief), and play (particularly with the fun of entertaining conspiracy theories without taking them too seriously). We conclude that the participatory conspiracy culture of r/conspiracy is not a homogeneous echo chamber of radical belief, but a heterogeneous participatory culture in which belief is fundamentally contested rather than embraced.

Introduction

From JFK and 9/11 to theories about vaccinations, Illuminati and shape-shifting lizards: conspiracy theories have become popular in modern society as alternative explanations for horrifying events. Motivated by the adages “trust no one,” “nothing is what it seems,” “everything is connected,” and “the truth is out there,” advocates of conspiracy theories argue that secret groups are active within modern institutions like governments, media and science (Knight, 2000; Melley, 1999). The popularization and normalization of conspiracy theories over the last decade are accompanied by a moral panic in media and academia alike. This is not new. Ever since the early works of Karl Popper (1945), and Richard Hofstadter (1964), conspiracy theories have been portrayed as “irrational” accounts of modern society or “pseudo-religious” beliefs, and their advocates as radical and extremist fanatics endangering democratic society. Nowadays, this image is particularly salient in the literature on the effects of social media where algorithmic “filter bubbles” (Pariser, 2011), and online “echo chambers” (Nguyen, 2020), allegedly construct self-enclosed spaces where misinformation and conspiracy beliefs are shared on different platforms (e.g., Theocharis et al., 2021).

Building on studies emphasizing that such accounts are one-sided at best and, at worst, forms of academic “boundary work” or pars pro toto stigmatizations, we propose to empirically study what we call “participatory conspiracy culture”: the everyday, mundane online debates people have about conspiracy theories. A “participatory culture” is particularly connected to social media, where lay people can freely express and share ideas about public concerns (Jenkins, 2006). Our open research question in this study is accordingly: How do people participate in online conspiracy culture? More specifically, we focus on the topics discussed, the involvement of participants, the communication and relations between participants, and their epistemological engagements. To answer these questions, we empirically studied discussions about conspiracy theories in a large conspiracy community on Reddit. This online platform is, more than other platforms (e.g., Twitter, Facebook), a space that affords debate based on bottom-up concerns, issues and interests.

Literature review

Radical beliefs? The academic construction of conspiracy theories

Conspiracy theories have been considered a social problem ever since they were studied in the humanities and social sciences. This “pathologization of conspiracy theories” (Harambam, 2020) and their portrayal as “dangerous” or “anti-democratic” lean on two, intimately related assumptions that developed in tandem, and are still a mainstay in the academic literature. On the one hand, epistemologically, conspiracy theories are held to be irrational “beliefs” akin to religious faith; while, on the other hand, conspiracy theorists are generally considered radical, extremist and fundamentalist in their convictions.

Early and classical texts, in this respect, are those of Karl Popper (1945) and Richard Hofstadter (1964). In The Open Society and its Enemies (1945), Popper dedicated attention to what he called “the conspiracy theory of society” and critically pointed out, like many contemporary academics, the epistemological and methodological fallacies in this popular type of reasoning (1945: 306). Essentially, he argued, it is a pseudo-religious form of belief or “a typical result of the secularization of a religious superstition. The Gods are abandoned. But their place is filled by powerful men or groups – sinister pressure groups whose wickedness is responsible for all the evils we suffer from.” (Popper 1945:306)

The historian Richard Hofstadter, in turn, pointed out in The Paranoid Style in American Politics (1964) that what he called the “conspiratorial fantasy” is not an innocent personal conviction, since it has serious social and political implications. He discussed radical historical cases (from paranoid political leaders like Hitler and Stalin to the anti-communist propaganda of Senator McCarthy in the early 1950s) to argue that conspiracy thinking engenders a Manichean, fundamentalist and extremist worldview that seriously threatens the workings of an open democracy of rational deliberation. Informed by such portrayals, various academics have considered the spread of conspiracy theories an “epidemic” (Robins et al., 1997), a “plague” (Showalter, 1997) and “a poisonous discourse” that “encourages a vortex of illusions and superstitions” (Pipes, 1999).

In the most recent literature this image of conspiracy theories as radical, dangerous beliefs is often reproduced: on the one hand, the recent emergence of anti-vaccination movements, QAnon, and the spread of conspiracy theories around and by former US president Donald Trump may have contributed to this claim—particularly since these extreme examples show that conspiracy theories have become more mainstream, and motivate social and political action (Fuller, 2018). On the other hand, the radicalization of conspiracy beliefs is attributed to the workings of social media. Conspiracy theorists find, quite literally, a platform to express, amplify and spread their radical beliefs on Facebook, YouTube, Twitter and messaging groups (Theocharis et al., 2021). These algorithmically empowered platforms allegedly construct “filter bubbles” of information (Pariser, 2011) where individual belief is confirmed, and “echo chambers” (Nguyen, 2020) where like-minded people radicalize into dangerous activists. Particularly YouTube has been singled out in popular debates as the “great radicalizer” (Tufekci, 2018; cf. Mahl, et al., 2023), leading people into “rabbit holes” of more and more extreme (mis)information and conspiracy theories (ibid.; Grusauskaite, et al., 2023; Marwick and Lewis, 2017; Sutton and Douglas, 2022).

Pars pro toto generalization?

A point of departure of this study is that we question the portrayal of conspiracy theories and their theorists as, respectively, radical beliefs and believers. We are not denying that conspiracy theory can be, or is, a radical belief in many cases, but we want to critically address this picture as a pars pro toto generalization that takes an extreme minority to be representative of conspiracy culture as a whole (Elias and Scotson, 2008). This also explains much of the moral concern. When Hofstadter wrote about the “paranoid style” in 1964, for instance, he argued explicitly that “this term is pejorative, and it is meant to be; the paranoid style has a greater affinity for bad causes than good” (1964:77). However, by emphasizing radical examples from history, Hofstadter and others construct a theoretical frame in which radical extremism is equated with conspiracy theories in general.

There are both theoretical and empirical arguments to problematize this position. First of all, we should question the claim of the “radical believer” because conspiracy theories have become normalized, popular narratives in contemporary culture. They feature, among others, in mainstream news, films, television series like The X-Files, Homeland, House of Cards and Stranger Things, and elsewhere (Butter, 2020; Kellner, 2003), while more and more people “routinely” theorize and speculate about the “truth out there” and the possibility that there might be actual conspiracies within the complex, globalized network of society (Birchall, 2006; Fenster, 2008; Knight, 2000; Melley, 1999). Is everyone involved in these “paranoid” activities a full-fledged conspiracy believer? Such boundaries between “irrational” conspiracy beliefs and “rational” skepticism are hard to maintain nowadays, if they ever could be maintained. As Knight rightly states, “conspiracy theories have become […] the lingua franca of many ordinary Americans […] a regular feature of everyday political and cultural life […] as part and parcel of many people’s normal way of thinking about who they are and how the world works” (Knight, 2000:2). In a similar way Uscinski claims: “Conspiracy theories are not fringe ideas, tucked neatly away in the dark corners of society. They are politically, economically, and socially relevant to all of us. They are intertwined with our everyday lives in countless ways” (2018: 6). Stretching this point to the extreme, Fenster even argued that now, in the late-modern globalized world, “we have all become conspiracy theorists” (Fenster, 2008:7).

Given this popularization and normalization it becomes hard to maintain that those engaged with conspiracy theories are exceptions in modern democratic societies. More specifically formulated, it is (and perhaps always was) problematic to situate the “conspiracy theorist” at the other end of typical modern dichotomies between healthy/insane; rational/irrational; fact/fantasy; reason/belief; and relativism/fundamentalism. It can even be argued in this respect that academics writing about conspiracy theories in such a moral, pejorative and dismissive sense are involved in a form of scientific “boundary work” to “purify” the social sciences and sustain its epistemic authority in a broader context of knowledge production (Harambam and Aupers, 2015). Be that as it may, there are also clear empirical reasons to problematize the conception of those engaging with conspiracy theories as “radical believers” and to open up the study of conspiracy culture more broadly as an open “participatory” network. Quantitative surveys trying to map the popularity of engagement with conspiracy theories in Western countries are instructive here (Douglas et al., 2019; NPR/Ipsos, 2020; Oliver and Wood, 2014). In their survey on conspiracy belief in the United States, Oliver and Wood (2014), for instance, show that no less than half of the public endorses at least one conspiracy theory: 20% believed their government to be responsible for the 9/11 attacks, while 28% believed that a secret elite is conspiring to establish a New World Order (ibid.). Indeed, these descriptive numbers are impressive and indicate the popularization and normalization of conspiracy theory. But it is particularly important for our study that a large part of the studied population in these surveys is neither fully endorsing (or believing) nor rejecting (non-believing) conspiracy theories. 
For instance, one of the most widely endorsed conspiracy theories, a theory about the financial crisis that 25% of US citizens endorsed in 2011, was neither endorsed nor rejected by a large group: the theory was known to 47% of respondents, and 38% “neither agreed/disagreed” with it (ibid.). Based on these statistics we can argue that unconditional and radical belief in conspiracy theories (measured on a Likert scale) may or may not be significantly prominent in Western societies, but more importantly that there is an understudied majority that is familiar with conspiracy theories and participates in conspiracy culture without (straightforwardly) believing its theories.

Other empirical studies confirm that there is a heterogeneity in the conspiracy milieu and various forms of engagement with conspiracy theories that problematize the generalization of “radical belief” (e.g., Schäfer et al., 2022). In terms of worldviews, we can distinguish different conspiracy (sub)cultures in the milieu—from politically engaged “activists” and spiritual “retreaters” to postmodern “mediators,” often doubting any truth claim (Harambam and Aupers, 2017). In a quantitative study of online engagement with COVID-19 theories, Schäfer et al. distinguish six types of conspiracy engagement that form a spectrum from “extreme believers” to “lingering believers,” “hype cynics,” and “profit cynics” (2022). The latter two engage with conspiracy theories, but do not ‘believe’ in the theory that the virus is man-made or even planned by the elite (Ibid.:2896). Other studies witness “the new conspiracism” or “conspiracy rumours” (Rosenblum and Muirhead, 2019): the spread of conspiracy narratives that are primarily considered to be entertaining, fun and encourage a more “playful” engagement (e.g., Aupers, 2020; Birchall, 2006)—despite being no less serious and potentially harmful for it. Nonetheless, it is clear that a heterogeneity of conspiracy culture can not only be found in its worldviews, but also in its diverse epistemological engagements—varying from belief to playful entertainment.

Participatory conspiracy culture

Based on our review of the literature, we hence problematize the overly generalized picture of conspiracy theories and their advocates as “extremist” beliefs/believers, and theorize that conspiracy culture is normalized, mundane and heterogeneous, consisting of diverse participants, worldviews and epistemological engagements. This study researches not just the heterogeneity of “beliefs” in the online forum of Reddit, but also the communication and discussion between those engaged in this community. Our main sensitizing theoretical concept in this respect is what we call participatory conspiracy culture. The concept is an adaptation of Henry Jenkins’s concept of “participatory culture,” developed to capture the agency of fans, audiences and consumers and their creative appropriation of mass media texts (2006). Jenkins argued that the “Web 2.0” exemplified a full-fledged participatory culture (ibid.). Unlike legacy media (print, radio, television), the Internet is in this perspective considered a free, non-hierarchical arena that affords “low barriers to artistic expression and civic engagement, strong support for creating and sharing one’s creations” (Jenkins et al., 2009:3; Massanari, 2015).

The concept of participatory culture as developed by Jenkins has been critiqued for being overly idealistic and optimistic (e.g., van Dijck, 2009; Quandt, 2018). There is, Quandt argues, a “bleak flipside to the utopian concept” of participatory culture (2018:40), since citizens are not just expressing, connecting and civically engaging in a constructive way. Participatory culture sometimes hosts publicly expressed worldviews that can be labeled as “fake news” or “disinformation,” or what Quandt refers to as “dark participation” (2018). Online discussions about conspiracy theories (on the JFK assassination, the 9/11 attacks, COVID-19 origins, the “great reset,” etc.) are examples of such forms of participation, in which users express diverse views and doubts about democracy, truth, the state and society (Harambam, et al., 2022). To bracket moral judgments on real or fake news, good or bad information, and light or dark participation, we choose the more neutral analytical term participatory conspiracy culture as the sensitizing concept in our empirical research. We particularly focus on its manifestation on Reddit because this platform, more than others, affords heterogeneity, participation and debate.

Most generally, our research question is “How do people participate in participatory conspiracy culture on Reddit?” More specifically, our research questions cover four aspects of this participatory culture on Reddit: the topics discussed, the involvement of participants, the communication and relations between participants, and their epistemological engagements.

Method

To answer these questions, the first author performed a 6-month ethnography of an online community called “r/conspiracy,” introduced in more detail below, which hosts over 1.9 million self-described conspiracy theorists, “free thinkers” and “truth seekers.” A three-part multi-method qualitative approach was employed.

First, an online non-participant observational ethnography was chosen to study at length, and to get to know over the course of 6 months (from 1 February to 31 July), the group dynamics and attitudes of this online conspiracy-theoretical community. This entails “iterative-inductive research” based on “direct and sustained contact with human agents, within the context of their daily lives (and cultures)” (in this case the users and culture of r/conspiracy) in order to engage in what Karen O'Reilly calls simply “watching what happens, listening to what is said, [...] producing a richly written account that respects the irreducibility of human experience” (2008: 3). In addition, this ethnography is specifically performed digitally/online, that is, “in mediated contact with participants rather than in direct presence,” with all the platform- and culture-specific forms that digital ethnographers are still exploring, as prompted by their subjects and objects of study (Domínguez et al., 2007; Pink et al., 2015); again, in this case, the users of r/conspiracy on Reddit. We chose non-participant observation for three reasons. First and foremost, non-participation is preferred in cases where researchers do not want to interfere in the culture under observation. In this case, since the key dynamic we are studying is participation (i.e., in a participatory culture), it was not desirable for the research design, or indeed the ethnographer, to influence participation directly by, literally, participating (for example, by prompting, probing, or otherwise eliciting interaction); the aim was instead “capturing social action and interaction as it occurs” (Caldwell and Atwal, 2005; Parke and Griffiths, 2008). Second, but relatedly, to participate in a community of over 1.9 million people would mean to further skew our sample toward the interests, expertise and biases of the ethnographer, although we will add some notes on data selection, collection and handling below. 
Third, non-participant observation is preferred when researchers want to keep a distance from the community studied, or its behavior, such as when studying gambling, drug-using and other “deviant” communities (Becker, 2008; Parke and Griffiths, 2008). Although conspiracy-theoretical communities are absolutely not engaging in such behaviors, the ethnographer personally deemed it advantageous and more sincere not to “go native” for this study of participation in conspiracy-theoretical discussions.

In a second phase of analysis, relevant discussions were “theoretically sampled” (Glaser and Strauss, 1967), with an emphasis on empirical richness (that is, longer or more controversial discussions that prompted more replies) and diversity of topics. Once in the “field” of r/conspiracy, the ethnographer spent 4 hours per day reading and making notes on common topics, discussions, popular users, their interactions with each other, and anything else that was deemed potentially relevant. Four hours is well above the average 15 min spent on Reddit (Clement, 2022), and close to the average 4 h 15 m that posts stay on the front page (Datastories.com, 2016). The extra time was used to take field notes, to archive the top 75 posts of every 24 h for reference, and, in the case of 242 discussions (with on average 142 comments, totaling 34,364), to archive whole discussions selected for coding. These were then iteratively coded through open, then axial and closed coding according to a “constant comparative method” following the principles of Grounded Theory (Charmaz, 2006; Glaser and Strauss, 1967). We focused on finding common themes and patterns in the data to incrementally develop higher-level concepts that are (Weberian) “ideal-typical reconstructions” (Inglis, 2016:33). We used the qualitative data analysis software NVivo to systematize our analysis throughout the iterations from data to final concepts. At the end of this coding process, we arrived at the final analytical categories that form the backbone of the analysis section.
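The fieldwork volumes implied by these figures can be tallied with a short Python sketch. It is an illustration rather than part of the original analysis, and it rests on three assumptions: that the observation window ran from 1 February to 31 July 2022 (the year is taken from the figure caption, not from this passage), that the 4-hour session and the archiving of 75 top posts took place on every day of that window, and that the reported average of 142 comments per coded discussion is exact. The function name `fieldwork_totals` is hypothetical.

```python
from datetime import date

# Assumed observation window; the year 2022 is taken from the figure caption.
START, END = date(2022, 2, 1), date(2022, 7, 31)

HOURS_PER_DAY = 4          # daily reading and note-taking, as reported
TOP_POSTS_PER_DAY = 75     # top posts archived every 24 h, as reported
CODED_DISCUSSIONS = 242    # discussions archived in full for coding
MEAN_COMMENTS = 142        # reported average comments per coded discussion


def fieldwork_totals():
    """Return the totals implied by the reported daily figures."""
    days = (END - START).days + 1  # +1 so that both end dates are counted
    return {
        "days": days,
        "observation_hours": days * HOURS_PER_DAY,
        "archived_top_posts": days * TOP_POSTS_PER_DAY,
        "coded_comments": CODED_DISCUSSIONS * MEAN_COMMENTS,
    }


if __name__ == "__main__":
    for name, value in fieldwork_totals().items():
        print(f"{name}: {value:,}")
```

Under these assumptions the tally gives 181 days, 724 hours of observation, 13,575 archived top posts and 34,364 coded comments; the last figure matches the total reported for the coded discussions.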

Thirdly, to supplement the theoretical sampling of this qualitative method, we used “4CAT” (Peeters and Hagen, 2021), which is a digital methods tool developed at the University of Amsterdam to “capture data from a variety of online sources […] and analyze the data through analytical processors” (ibid.). While it is not without its limitations—4CAT provides a large overview rather than qualitative detail, and its Reddit analyses depend on the Pushshift API with its well-documented inaccuracies and shortcomings (Baumgartner, et al., 2020, cf. Gaffney and Matias, 2018)—4CAT was used to gain a more complete overview of the users, topics and trends across all 104,449 posts made between 1 February and 31 July on r/conspiracy, which we triangulated with the available data from two specialized websites that track subreddit trends: frontpagemetrics.com, and subredditstats.com. For reasons of anonymity, quotes are accompanied not with a username, but the relevant post’s or comment’s score at time of data collection, for example, “we like thinking that the elite are lizards” (+6640).

Finally, a note on the quotes used throughout this article, including in the previous sentence: while Reddit is an open platform, and r/conspiracy is a public subreddit, we have chosen to omit usernames for general reasons of anonymity, and for specific reasons regarding the nature of what is discussed and confessed. Instead, we have replaced the usernames with post scores. The number in parentheses behind quotes thus represents the net total of “up-” and “downvotes” for the post or comment from which the quote originates. This score, known as “karma,” is usually seen as an indication of the relevance, popularity and/or agreement of the post or comment in question.

r/conspiracy

Before we analyze the participatory culture of r/conspiracy, some context for our case selection is needed. We took to r/conspiracy because, at 1,946,989 subscribers (as of revising in May 2023), it is likely the biggest, and still growing, community of self-described “free thinkers” online. As a “subreddit” of the larger social media platform Reddit, users start discussions on topics they deem relevant to what r/conspiracy’s moderators describe as “a forum for free thinking and for discussing issues which have captured your imagination.” The community description clearly states an intention to be a place for all kinds of different conspiracy theories and opinions, and to be a place for non-judgmental, open discussion: “**The conspiracy subreddit is a thinking ground. Above all else, we respect everyone’s opinions and ALL religious beliefs and creeds. We hope to challenge issues which have captured the public’s imagination, from JFK and UFOs to 9/11. This is a forum for free thinking, not hate speech. Respect others’ views and opinions and keep an open mind.** **Our intentions are aimed towards a fairer, more transparent world and a better future for everyone.**”

Accordingly, the subreddit lists 10 rules below this description, which are aimed at guaranteeing “free thinking, not hate speech” and “respect” within the community. These rules are actively enforced by a team of 13 moderators who have the power to remove posts, users, and comments from the subreddit. They include a ban on bigoted slurs, ad hominem arguments, spam, stalking, “misleading” headlines (determined, supposedly, at the discretion of moderators), and memes; as well as the requirement to include a two-sentence “submission statement” with URLs and images to explain their relevance.

Analysis: participatory conspiracy culture

Topics: from JFK to Aliens

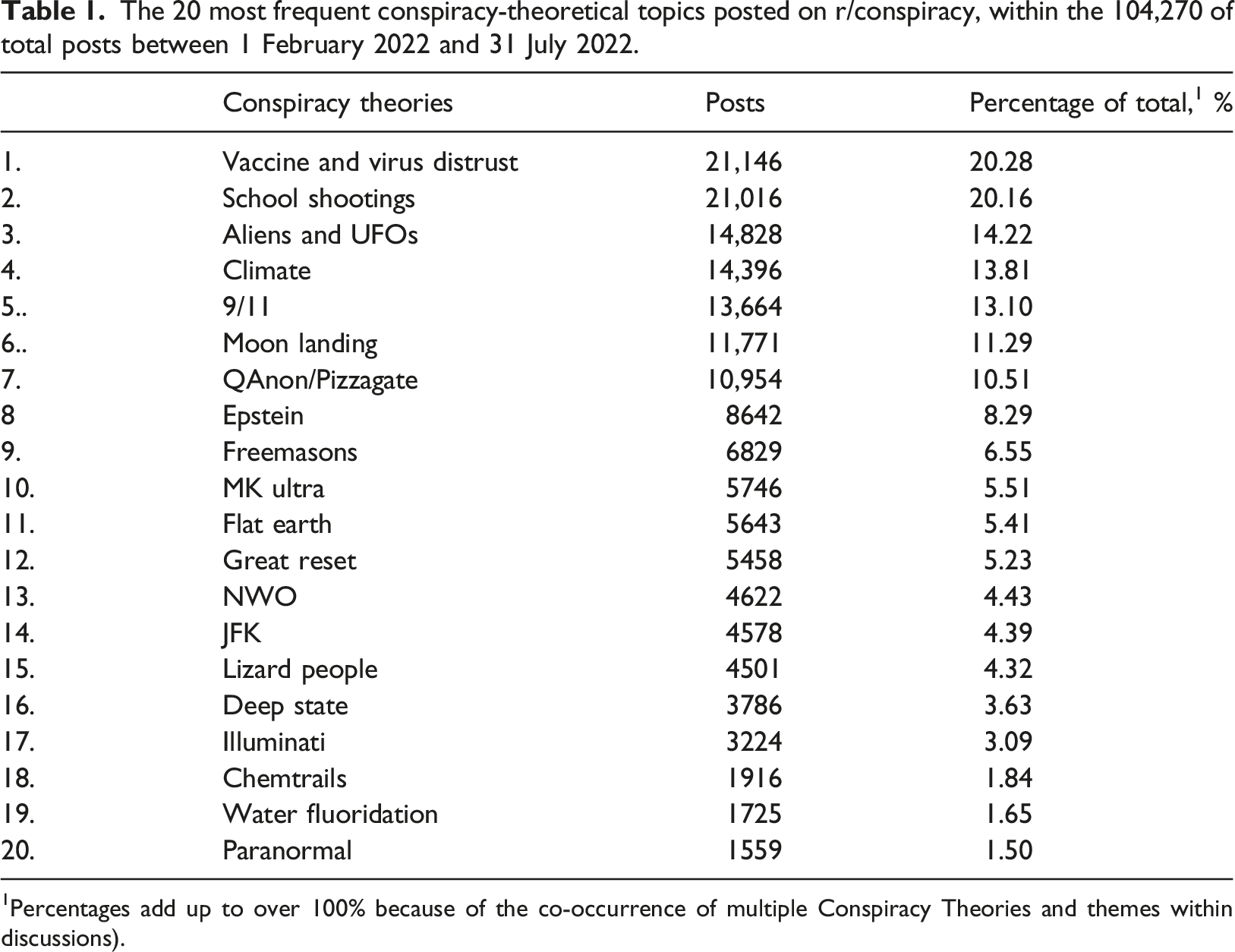

The 20 most frequent conspiracy-theoretical topics posted on r/conspiracy, among the 104,270 total posts between 1 February 2022 and 31 July 2022.

Note: Percentages add up to over 100% because multiple conspiracy theories and themes co-occur within discussions.

Participation: the loud minority

A first observation when studying participatory conspiracy culture on Reddit is that while there might be many participants, there are different degrees of participation. Perhaps surprisingly, only a small portion of the >1.9 million users is responsible for actively posting, while the majority is evidently interested but quietly participating by subscribing, (up- and down-)voting, lurking and listening. According to metrics taken toward the end of data collection, most people simply do not talk: 0.335% of 1,946,989 subscribers are “active,” meaning they comment more than once a month. Subscribers respond to discussions with an average of 0.000268 comments per subscriber per day, and the average number of posts per subscriber (that is, the start of a discussion rather than an addition to an existing one) is even lower, at 0.000011 per subscriber per day (frontpagemetrics.com, 14-06-2022; subredditstats.com, 14-06-2022).
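To give a sense of scale, the per-subscriber rates quoted above can be converted into absolute counts. The Python sketch below is an illustration, not part of the original analysis. It assumes that all three rates apply to the same base of 1,946,989 subscribers, although the tracker sites may define their denominators differently and the rates and the subscriber count may have been recorded at different moments. The function name `implied_counts` is hypothetical.

```python
SUBSCRIBERS = 1_946_989               # subscriber count reported in the text
ACTIVE_SHARE = 0.00335                # 0.335% comment more than once a month
COMMENTS_PER_SUB_PER_DAY = 0.000268   # reported average comment rate
POSTS_PER_SUB_PER_DAY = 0.000011      # reported average post rate


def implied_counts(subscribers=SUBSCRIBERS):
    """Convert the reported per-subscriber rates into absolute figures.

    Assumption: every rate uses the same subscriber base as its denominator.
    """
    return {
        "active_subscribers": round(subscribers * ACTIVE_SHARE),
        "comments_per_day": round(subscribers * COMMENTS_PER_SUB_PER_DAY),
        "posts_per_day": round(subscribers * POSTS_PER_SUB_PER_DAY),
        "silent_share_percent": round(100 * (1 - ACTIVE_SHARE), 1),
    }


if __name__ == "__main__":
    for name, value in implied_counts().items():
        print(f"{name}: {value}")
```

Under these assumptions, the reported rates correspond to roughly 6,500 active subscribers, about 520 comments and about 20 new posts per day, and a silent share of about 99.7%. These are implied magnitudes only and should not be read as independently measured counts.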

Yet we cannot conclude that this silent majority is non-participatory, since they participate and influence the conversation in different ways. They decide which discussions rise to the top and are worthy of conversation, through “upvoting” and “downvoting”: an affordance similar to Facebook’s “likes” that indicates whether users appreciate a post or comment, or that they deem it relevant to the subreddit. The sum of all the up- and downvotes in r/conspiracy’s top monthly and yearly discussions is 4,719,474. Given that all users only get to vote on each post once, the scale of this participation is not trivial; nor is its impact: up- and downvotes represent implicit (dis)approval of which posts and comments are seen as relevant by a larger majority of users, and thus function as a sort of agenda-setting.

By contrast, the “loud minority” of users consists of about 100 top posters and only slightly more commenters who contribute most of r/conspiracy’s content. Those at the lower end of the top 100 start only three discussions per year that reach the monthly top 50 posts, whereas the top five posters are responsible for 233 of the top 50 monthly posts in the past year. These are not just avid discussion starters: their discussions receive most of the attention. The top 100 posters of r/conspiracy get most of the upvotes, while the diversity of people responding to these posts (“commenters”) is only slightly more diffuse. The take-away is this: a small group of people dominates the participatory conspiracy culture of Reddit, to which only a slightly larger group responds through comments. The majority is overwhelmingly silent, making themselves known only through the appreciation of upvotes or, when they disagree, downvotes. Notwithstanding their passivity in the debate itself, many of them nonetheless contribute to the agenda-setting and trending topics and themes.

Community: between cohesion and fragmentation

A descriptive way of looking at r/conspiracy, then, is as a rough hierarchy of about 100 most active posters, a larger group of people who respond, and a majority of 99.7% of users who comment less than once a month, but many of whom contribute in setting the agenda. On a more sociological note, however, we can say that the platform boasts an open community that attracts like-minded people interested in conspiracy theories. The conspiracy talk of r/conspiracy functions, literally, as an open platform or forum where people can step outside the mainstream world to debate, discuss or simply read about alternative, conspiratorial views on the official truths of institutions, political leaders, topical events and news.

In other words, within the loosely bound participatory culture of r/conspiracy, users share a general interest in “stigmatized knowledge” and “claims to truth” that are discredited in mainstream society and are considered interesting to discuss and evaluate despite (or because of) the “marginalization of those claims by the institutions that conventionally distinguish between knowledge and error – universities, communities of scientific researchers and the like” (Barkun, 2013:26). To many users, this makes r/conspiracy a safe space: “I don’t subscribe to all of the ideas on this sub but this has become somewhat of a safe space for me and has definitely impacted my life in a measurable way” (+31). Indeed, every once in a while, a thread shows up in which participants state that the tension between their alternative worldview and the values, norms and demands of everyday life is increasing. This somewhat paradoxically reveals that outside of the usual mundane conspiracy talk, many have a troubled offline life because of their online interests. There is support and understanding for users who talk about “sever[ed] relationship with family and friends” (+50). When they “feel like I am on my own” because “my family and some friends think I am a conspiracy theory nut now” (+48), the immediate responses are of recognition and support: “You’re not alone. I have family who feel the same way about me” (+9), and “You’re not alone. There are far more of us out there than you think. Please find some comfort in this” (+17). Users routinely share such stories, whether they cannot deal with their recent “awakening,” are losing friends or jobs, or face other problems. Someone will “feel like I’m the only sober person at the bar” (+22), or they “feel most [people] close to me wouldn’t understand in the slightest” (+0), and the responses are in solidarity, with users replying “you’re not alone” (+17), “ditto” (+5), or “you’re not the only one cursed with knowledge” (+23). 
As one user writes succinctly elsewhere: “I have a lot of friends and family who are plugged into the day to day life that is expected of a ‘normal’ productive member of society. Which has made me very cautious about who I open up to about my ideas and beliefs.” (+25)

Quotes such as these indicate that participants of r/conspiracy consider the platform a veritable community of like-minded, alternative thinkers, and that the social cohesion of this community is grounded in a shared critical stance against an imagined and reified “mainstream.”

Epistemology: debating belief, fact, and fiction

We have demonstrated above that r/conspiracy is in many ways a cohesive community where people share a love for alternative, stigmatized knowledge—or even consider it a safe space for conspiracy talk outside of the mainstream. However, does this imply that r/conspiracy is indeed a homogeneous community, or even a digital “echo chamber” in which participants are socialized into shared, radical beliefs? In other words, is r/conspiracy a “great radicalizer” (Tufekci, 2018), leading people into “rabbit holes” of more and more extreme (mis)information and conspiracy theories (ibid.; Marwick and Lewis, 2017)?

Such a position is hard to sustain. Despite the shared interest in “stigmatized knowledge” and conspiracy theories, r/conspiracy proves to be, first of all, a heterogeneous community in which consensus about specific conspiracy theories is an exception: internal debate and discussion are the rule, and participants are internally divided between different interests and perspectives on truth. In the open participatory culture of r/conspiracy, some people are drawn to discussions of freemasonry, others to theories about chemtrails, and yet others to COVID conspiracies, theories about shape-shifting lizard people, and other topics of interest. More importantly: between these groups, there is constant conflict about which beliefs and which truths are (il)legitimate.

Indeed, “belief” itself is, emically, one of the main topics of discussion leading to conflicts in the community. In lay-discussions about the epistemological foundations of conspiracy theories, different groups were either discrediting each other’s specific beliefs; were prioritizing doubt over belief itself; or were just out there to “have fun” with conspiracy theories as entertainment.

Believe me: “you are discrediting the more realistic and factual conspiracies.”

One common form of conflict is over the validity of others’ beliefs. Users will frequently join in to comment that discussions on certain theories will discredit the conspiracy-theoretical community as a whole. For instance, one user takes aim at “lizard people”-theorists, who propose that politicians, CEOs and other members of the cultural elite are de facto shape-shifting lizard people, writing: “Yeah, this is the type of thinking that makes people less likely to believe the more factual or plausible conspiracies. Conspiracy just means people conspiring to do things, it doesn’t mean wacky ridiculous theories. Critics of conspiracy theorists use this type of ridiculous lizard people shit to help discredit the more realistic and factual conspiracies.” (+80)

The quote makes clear that users each support their own theories, while discrediting those of others. In this divisiveness, they frequently express the fear that the “mainstream” might “use” the more eccentric and radical theories of other users to discredit the whole of conspiracy culture. As such, they are emically concerned about the pars pro toto stigmatization and generalizations discussed in our theoretical section. Although lizard people theories are a frequent target in this respect (“go talk about lizard people on another forum” says one user [+15]), this happens between any group. Sometimes it is even argued that such outlandish theories are spread consciously and intentionally by outsiders such as (government) shills and bots, with the purpose of discrediting conspiracy thinking. Thus, QAnon—the theory that President Trump is secretly prophesying the downfall of the deep state in coded messages online—is by one participant considered a “disinformation campaign to broadly define truth seekers and conspiracy theorists as far right nuts” (+947). More often, however, the perceived radical and eccentric ideas of others that are seen to discredit users’ own preferred theories are just considered to be the result of “stupidity.” As one participant notes: “Pizzagate”—the theory that the U.S. Democratic party is made up of human traffickers and child sex rings—and theories on “adrenochrome […] and all the other stuff is just bad, a lot of older people fall for this” (−2). Another summarizes the point by saying: “the more we listen to the dumber conspiracies, the more weight is taken from things that could rlly rlly be real. Like if we let every flat earther speak their mind w out dissent, then the real shit like [theories about convicted child trafficker Jeffrey Epstein] is seen as just as stupid. Im srs we could change the world w some of this shit” (+6)

In short, the lizard people theorists might find the flat earthers’ theories outlandish, the flat earthers find the QAnon theories outlandish, the QAnon theorists find the lizard people theories outlandish, and so on—each discrediting the other users’ theories in favor of their own. Such blatant heterogeneity, subcultural diversity and debate between groups reign on r/conspiracy, suggesting that academic assumptions about homogeneous echo chambers of conspiracy theories should be considered one-sided.

Doubt everything: “there is no ‘we’.”

Additionally, while users like the one quoted above still emphasize “the real shit like theories about convicted child trafficker Jeffrey Epstein” over the “dumber conspiracies,” others are less sure in general. Instead, for such users, discussions should not be about truth and belief but rather about doubting official narratives until things are proven. In the words of one such user: “there is no ‘we’. This is a common space that’s all, don’t try to turn this into a collective. If evidence, leaks and investigation would indeed prove that ‘the elites are lizards’ so be it but ultimately [this subreddit is] about critical thinking and all the fake narratives pushed against the public” (+66)

Such pre-occupations with critical thinking, false narratives and debunking official truths are inherently part of the r/conspiracy community.

This is not a collective of believers: epistemological insecurity haunts conspiracy culture itself—it divides, fragments, and results in internal diversity. Just as commonly as users cast doubt on others’ theories and their validity, users cast doubt on the sincerity of belief in general. Under a highly popular post (918 comments, 3267 upvotes) about how one user became more aware of government conspiracies after using psychedelic drugs, one comment goes: “Guys, how do you expect anyone from the ‘normal’ world to take you seriously with this kind of approach…? That’s why the media mocks on conspiracionism, is a trend followed by stoned people, traumatized people who barely can differentiate between ‘truth’ and ‘belief’.” (+100)

Users make such arguments to distinguish what they see as crazy or insincere people from “the real truthers” (+82). The reason for discrediting such problematic theories may be a lack of empirical support, drug use or accusations of plain stupidity.

Even community members themselves—beyond just their theories—are to be doubted and distrusted. Such users are either problematized because of their outlandish theories or called trolls, shills, or bots. People claim they have “found the NPC” (+12), or that people are “trolling the sub” (+3), often spelling the downfall of the community in which there “seems to be a lot more trolling here lately” (+6), claiming it is plagued by an “invasion from bots and shills” who are ruining r/conspiracy (+4). The assumption that unfounded theories may as well be spread by human trolls, NPCs or software bots illustrates more than anything else that participants of r/conspiracy are not only debating epistemics (what is belief, fact or fiction) but are also displaying a wider insecurity in their engagement with both other conspiracy theorists and their theories: are those people really real or actually non-human bots? Doubt thus becomes the epistemological position from which such users reason.

Ludic conspiracies: “when conspiracy theories were fun.”

The discourse of discrediting each other’s beliefs, or even preferring doubt over any belief on epistemological grounds, can thus be seen as a vital part of conspiracy talk. It shows that the portrayal of conspiracy culture as consisting of “radical believers” is reductionist, and confirms that conspiracy culture is not as homogeneous as it is often held to be. Notwithstanding the shared critique of the mainstream, debate on r/conspiracy is open and internally divided.

Whereas the discrediting of one another’s beliefs and the complete embrace of doubt are pivotal in understanding participatory conspiracy culture, there is a third epistemological position present in these discussions that problematizes the labeling of conspiracy theory as a matter of “belief.” Different participants make the point that they consider conspiracy theories above all a source of fun and games. Hence, one user argues that r/conspiracy “isn’t intended to be your news source,” but rather that people need to entertain as many alternative theories as possible, explaining: “see….we like thinking that the elite are lizards, we do hope that tom hanks is exposed as a satanic pedophile, we want to find the cia behind vegas shooting etc etc etc im tired of people saying theories are dumb or stupid or illogical…that’s the point” (+6640, emphasis added)

This is a common response to talk about the legitimacy of the subreddit in general, which a vocal part of its users see as in decline not just because of the aforementioned perceived increase in “bots and shills,” but because the forum used to be less serious and pretentious: “there was way more ‘cool’ stuff back in around 2012 when I first started visiting Reddit and /r/conspiracy” (+8). Such “cool stuff” includes “UFO’s, underground military complexes where they did crazy experiments and yes bigfoot. Politics and government were definitely not the main focus back then” (+8). Summarizing the sentiment, another user adds that “I miss when conspiracy was fun

Indeed, such arguments imply that facts and values, truth and hopes, reality and dreams are hard to disentangle, so that we should take conspiracy theories more lightly. We realize that naming play or “fun” an epistemological position in this case needs some explanation. What makes the emphasis on fun here, first, playful and, second, epistemological, is that these users take an ambiguous (playful) position in discussing their knowledge (epistemologically) with regard to the “official” truth. This does not mean that these users are trivializing the thought that official truths might be wrong; they are entertaining the thought. In other words, they are considering a truth or hypothesis without fully accepting it, which we choose to understand (and elaborate in the conclusion) within the tradition of what psychologist Brian Sutton-Smith called the “ambiguity of play” (2009: 85), and historian Johan Huizinga called simply “play,” regarding it as central to the workings of truth, law, and societies at large (1938: 10): holding a temporary reality as true within the delineated space and time of, in this case, r/conspiracy; an epistemological position of which sociologist Roger Caillois wrote that “all play presupposes the temporary acceptance, if not of an illusion (indeed this last word means nothing less than beginning a game: in-lusio), then at least of a […] imaginary universe. […] The subject ‘makes believe’.” (1961: 19).

Regardless of such thinkers, it is clear that emically, at least, those who emphasize the fun of participatory conspiracy culture are engaging in neither radical belief nor doubt. They come to be entertained by and to entertain conspiracy theories, and as a consequence help spread them.

Conclusions: participatory conspiracy

We started this paper by proposing to study the everyday, mundane online debates about conspiracy theories, which take place in what we call “participatory conspiracy culture”: the online expression, (civic) engagement with and discussion of various conspiracy theories. Our premise to study participatory conspiracy culture more broadly rested on two arguments. First, we argued that studying conspiracy theories as (radical) beliefs is a pars pro toto generalization in the literature. Instead, we see in several ways that conspiracy culture contains not only convinced believers but that conspiracy theories are additionally engaged with more broadly in non-committal ways. Conspiracy theories are no longer “fringe ideas” but are “politically, economically, and socially relevant to all of us,” and “intertwined with our everyday lives” (Uscinski, 2018:6; cf., Fenster, 2008; Knight, 2000). Second, it follows from this that we must study participatory conspiracy culture as an open-ended discussion of conspiracy theories in a heterogeneous group of people rather than as homogeneous online “filter bubbles” or “echo chambers” in which users radicalize.

Reddit’s sub-forum r/conspiracy, a self-described “forum for free thinking,” is a prime example of such open-ended, non-committal engagement with conspiracy theories. Through a combination of online non-participant observation, inductive analysis of discussions, and supplementary use of digital methods tool 4CAT, we addressed the question:

How do people participate in online conspiracy culture on Reddit?

Specifically, what are these online discussions about—what are the topics of interest? How do members participate? Firstly, a lot of them do not talk at all: a silent majority of conspiracy culture (99.7% of >1.9 million users) participates through silent means such as upvotes and downvotes, to approve of what is deemed relevant and interesting. Those who do talk set the agenda: about 100 top posters initiate discussions, and an only slightly larger group responds to these posts in discussion threads about various topics. Secondly, what those groups talk about represents a plethora of common conspiracy theories (Table 1), with vaccines and school shootings being most common during the period of our analysis, followed by “classic” theories concerning aliens, climate change, 9/11 and the moon landing.

How do participants in such participatory conspiracy cultures communicate and socially relate to one another? The participatory conspiracy culture of r/conspiracy is highly heterogeneous, and characterized by conflict over each other’s beliefs. Participants of r/conspiracy consider the platform a “safe space” with like-minded, alternative thinkers, while the social cohesion of this community is grounded in a shared critical stance against an imagined and reified “mainstream,” as much as against each other. That is, while participants assert their own position within this overarching contestation of mainstream culture and its official truths, they do so by also constantly contesting each other, through conflict over what is true and what is false (or whether that question even matters).

The latter finding demonstrates that symbolic boundaries and tensions among engaged members are not primarily grounded in ideological difference but in epistemological debates. We articulated three analytically distinct epistemological positions that users of r/conspiracy take: belief (the conflict over true and false), doubt (the conflict over whether anything can be known, preferring instead to doubt all received truths), and play (the conflict over whether that is even the point, preferring to entertain conspiracy theories for the fun of engaging with them). Without wanting to overemphasize the importance of ironic participation—belief and doubt are, still, at least as important to what makes r/conspiracy a participatory conspiracy culture—this latter position of playful participation is theoretically relevant and underexplored, although some authors have recently started to explore it (de Zeeuw and Gekker, 2023). By empirically mapping it, we respond to calls to address what several authors cited above have previously identified as a blind spot of conspiracy research. To continue Knight’s line of thinking on the everydayness of conspiracy theories, they “are now less a sign of mental delusion than an ironic stance towards knowledge and the possibility of truth, operating within the rhetorical terrain of the double negative […] The rhetoric of conspiracy takes itself seriously, but at the same time casts satiric suspicion on everything, even its own pronouncements” (2000:2).

Similarly, Clare Birchall notes that “taking ‘belief’ out of the equation means that conspiracy theory can be marketed to, and parodically adopted by, those concerned with a generalized, rather than specific, conspiracy or injustice” (2006:40); and Jaron Harambam adds that “too little attention has been given to the emotional dimensions of conspiracy theorizing, and future research might seek to find out in more empirical detail what sort of satisfactions a hermeneutic of suspicion engenders in conspiracy theorists” (2020:217). Fenster had already theorized conspiracy theorizing as “a form of play” which induces “a sense of pleasure” (2008:14), and Steve Fuller more recently described post-truth broadly as a “game” (2018). At this point, we should emphasize that theorizing such participation as a form of play does not trivialize the impact of conspiracy culture. Indeed, most theories of play argue against play as something frivolous, emphasizing instead its ability to seriously transform cultures and societies (Caillois, 1961; Huizinga, 1938; Sutton-Smith, 2009). Adrienne Massanari’s seminal study of Reddit applies such conceptions of play as “a means of power exchange, a way of reinforcing community identity, and a protest against the everyday ways of being” (2015:22). Following these analyses, we cannot overstate the seriousness and potential impact of Redditors playing with conspiracy theories. QAnon is but one typical example of how a “game” turned into a “serious” movement with far-reaching impact (Davies, 2022; Zuckerman, 2019). QAnon theories and talking points have been seriously taken up in the US Congress, and famously contributed to an insurrection—with many self-proclaimed QAnon followers participating in the January 6 United States Capitol attack.
Play—especially what Clifford Geertz (1972) and Diane Ackerman (2011) call “deep play”—can transform society at large, and at the very least “spreads [a] thing just as quickly as sharing it sincerely would” (Phillips, 2020). Indeed, the playful engagement we observed on r/conspiracy likely contributes to the virality of conspiracy theories online at least as much as believing and doubtful engagement do.

In conclusion, this article has provided a qualitative, empirical basis for what we thus call “participatory conspiracy culture.” It should be noted that we took a specific case study—Reddit’s >1.9 million-count r/conspiracy subreddit—and that it remains to be seen whether our findings and conclusions translate to other platforms and communities. As others have shown, platforms and communities do matter: they shape the quality and quantity by which conspiracy theories are shared differently on Reddit, Twitter, Facebook, or other platforms (Theocharis et al., 2021; cf. Samory and Mitra, 2018). Reddit is, as Massanari has shown (2015), set up for this kind of debate, with a large degree of anonymity, and an emphasis on conflict—if only due to the affordance of being able to upvote as well as downvote. Following Massanari’s ethnography of Reddit, which analyzes it as equally play, community and platform among other things (2015:22–25), a limitation of this study is our underemphasis of Reddit’s platform politics and of “the ways in which this culture is contested by its members as well as shaped by the politics of its platform” (169); while instead taking up Massanari’s suggestion to focus on studying “the cultures of individual subreddits [which] are deserving of exploration” (ibid.). That being said, r/conspiracy is a very sizeable community to say the least, and likely one of the places anyone with an interest in conspiracy theories (and access to the internet) will eventually pass by. Future platform-comparative research needs to show whether the participatory conspiracy culture analyzed here is typical of r/conspiracy, and whether and how it manifests itself on other platforms.

Notwithstanding these limitations, our study has the following theoretical implications: the heterogeneity of worldviews, positions and engagements that make up the participatory conspiracy culture is fundamentally at odds with that of an “echo chamber” (Nguyen, 2020), “rabbit hole” (Sutton and Douglas, 2022), or “filter bubble” (Pariser, 2011). Those in the participatory conspiracy culture of r/conspiracy do not enter into a homogeneous or self-enclosed community of radical believers, but participate in a heterogeneous and open participatory culture with belief, doubt and playful engagement. In r/conspiracy, beliefs are not consolidated nor are believers radicalized; indeed, counter to pars pro toto generalizations of individual conspiracy theorists as extremist believers, this is a community in which belief is, above all, contested rather than embraced.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Arts and Humanities Research Council (AH/V001213/1), and is part of the AHRC-project “Everything Is Connected: Conspiracy Theories in the Age of the Internet.”