Abstract

The rapid rise to prominence of ChatGPT, one of the most successful generative artificial intelligence tools to date, presents both important challenges and opportunities for management education. Specifically, while it improves prospects in many areas (e.g. remote learning, asynchronous communication, online collaboration, gamification, student engagement and assessments), it also poses significant challenges, particularly in relation to academic integrity and traditional forms of assessment (e.g. ‘open-book’, non-invigilated essays). Drawing on insights from social epistemology, I argue that this exogenous shock to the educational system provides opportunities for epistemic evolution, particularly in fields like management education, where essays have traditionally been the dominant form of assessment. I conclude by proposing potential responses to this disruption that can enable educators, students and institutions to succeed in this new environment.

ChatGPT is one of those rare moments in technology where you see a glimmer of how everything is going to be different going forward.

Artificial intelligence (AI) is disrupting management education in 2023 via tools like chat APIs and GPTs. 1 At the forefront of this phenomenon is ChatGPT, now in its fourth iteration, a programme that became an Internet sensation after its unveiling on 30 November 2022, reaching one million users in its first week and attracting considerable media and user interest. So, what challenges and opportunities do ChatGPT and other AI tools present for management education, and more importantly, should we embrace or resist their influence?

Background on AI and ChatGPT

AI refers to the simulation of human intelligence in machines designed to perform tasks that normally require human cognition, such as perceiving, synthesizing and inferring information from audio or visual stimuli. One of the major characteristics of AI is its ability to perform multiple tasks and learn from past experience (Goodfellow et al., 2016), with notable applications in speech and image recognition, language translation, input mapping and decision-making.

While in the works for decades, AI technology finally came into bloom in 2022, with significant developments in fields such as machine learning, computer vision and natural language processing (Shneiderman, 2022). Some of its most promising uses include algorithms that improve diagnoses and provide treatment recommendations in healthcare (Sharma et al., 2019), fraud detection, forecasting and risk management in finance (Zhang and Lu, 2021), and traffic optimization, safety and emissions reductions in transportation (Wu et al., 2022). Overall, recent advancements (e.g. superior algorithms, larger data sets and more computing power) have made AI models more accurate and effective, spurring adoption and applications across many industries.

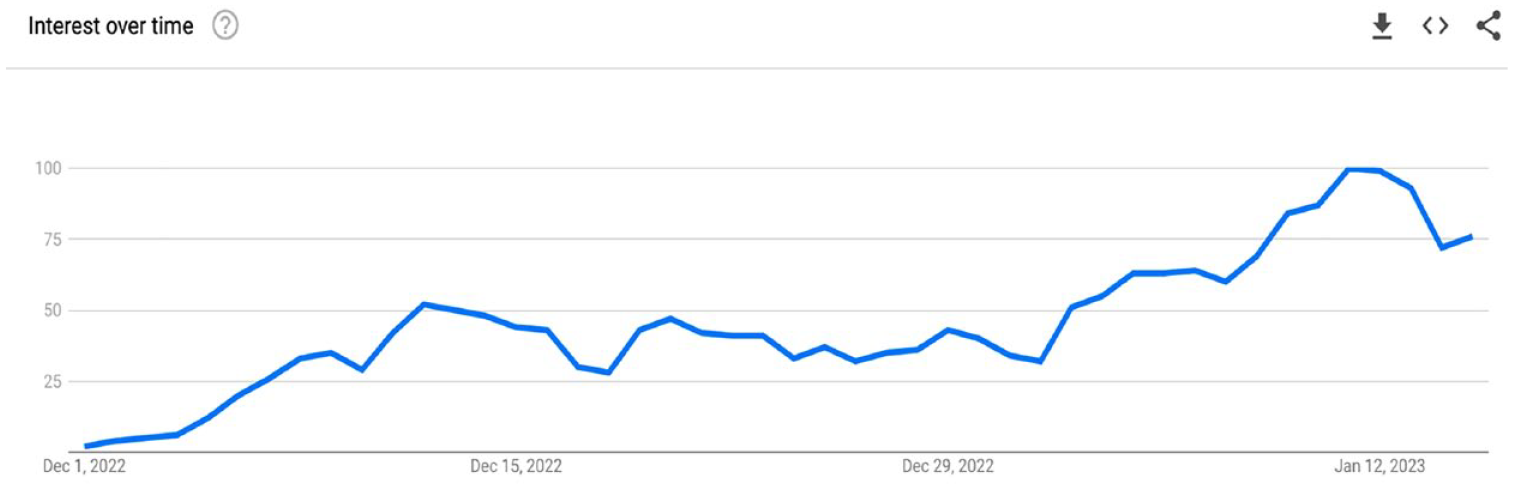

Against this backdrop, AI tools are beginning to make their disruptive potential felt in management education as well. At the forefront is ChatGPT, a free, easy-to-use large language model capable of generating human-like text in response to prompts from users. Developed by OpenAI (https://openai.com/), an American company based in San Francisco, ChatGPT can be used for a variety of natural language processing tasks, such as language translation, text summarization and question answering. Heralded as the fastest-growing platform in history in terms of users (Reuters, 2023), ChatGPT achieved instant popularity (see Figure 1), dwarfing previous champions in this area like Facebook, Netflix or Instagram (Yahoo Finance, 2022). This unprecedented public interest often meant that OpenAI’s servers could not keep up with demand, prompting a series of quirky messages to entertain idling users (Figure 2).

Figure 1. Global popularity of ChatGPT as measured by Google Trends (1 December 2022–20 January 2023).

Figure 2. ChatGPT’s ‘full capacity’ error message.

The core of ChatGPT’s commercial advantage resides in business applications such as customer service (e.g. help chatbots) or marketing (e.g. content analysis, product descriptions and generation of marketing content) (Ma and Sun, 2020). Yet its ability to write essays in a human-like fashion, and its capacity to learn and improve from interactions, have also allowed it to make a successful leap into the education domain, where it serves as a new medium for generating summaries, essays, articles and lines of code in mere seconds. But do not get too excited. OpenAI’s official disclaimer clearly states that ‘ChatGPT sometimes writes plausible sounding but incorrect or nonsensical answers’, a phenomenon known as hallucination. Nevertheless, despite these limitations, many see ChatGPT as a game changer for much of education.

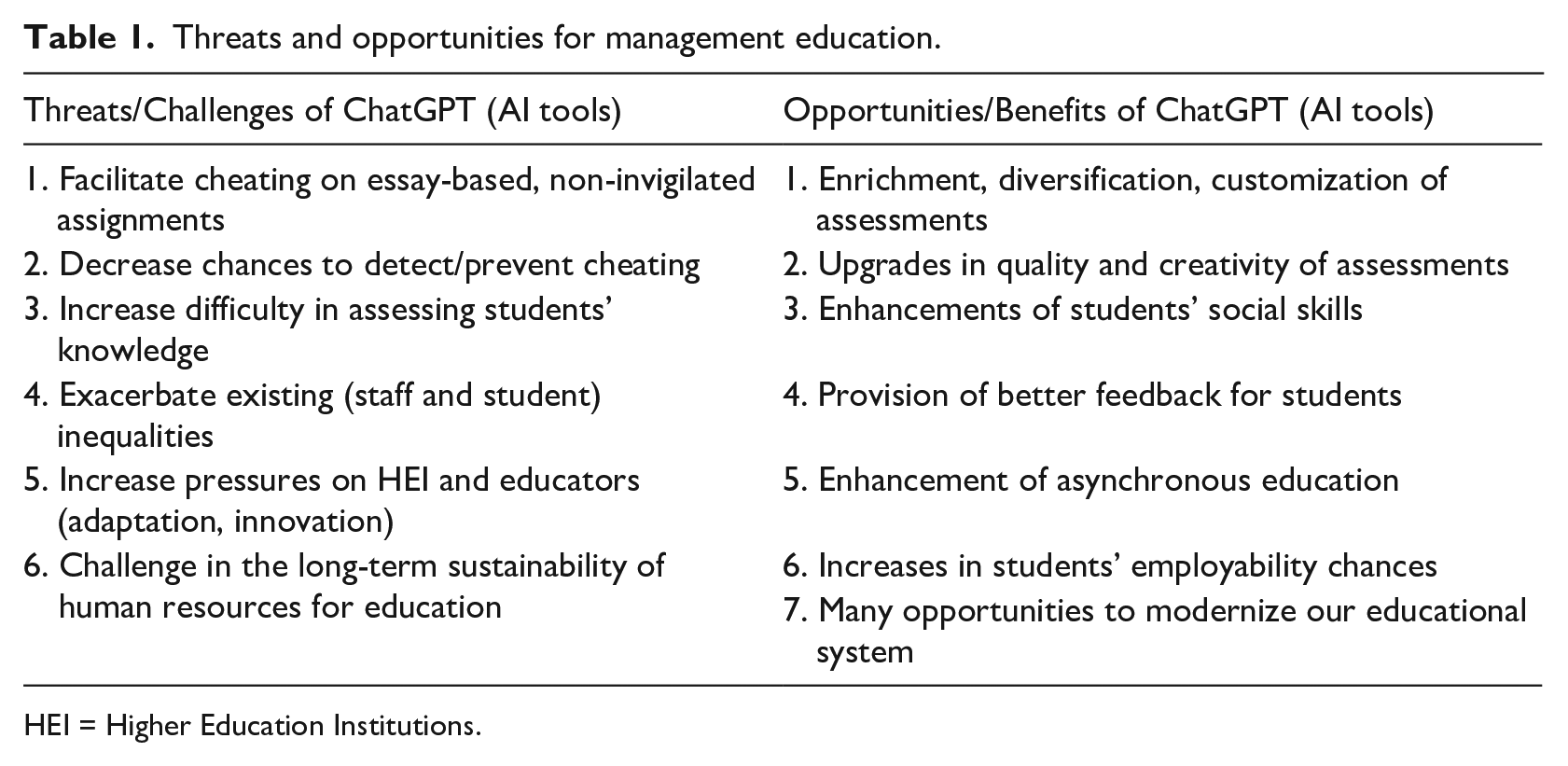

In the following, I employ a social epistemological framework to discuss some of the most salient issues raised by ChatGPT and other forthcoming AI tools. To this end, I use the ‘red pill-blue pill’ allegory from the film The Matrix (Wachowski and Wachowski, 1999) to highlight the stark choice facing management education between embracing, adaptive attitudes and defensive, preservationist ones in response to the challenges of AI, which can accordingly be interpreted as threats or opportunities (see Table 1).

Table 1. Threats and opportunities for management education.

HEI = Higher Education Institutions.

The blue pill scenario: containing the threats

While many fields employ essay-based assessments, management education relies heavily on open-ended questions that ask students to develop arguments and competing views on a given phenomenon or scenario (Cram et al., 2022; Kelley et al., 2010). 2 Combined with open-book, online delivery, lack of invigilation and sizeable cohorts, this reliance presents significant opportunities for cheating (Awdry and Ives, 2023; King et al., 2009; Sweeney, 2023). Enter ChatGPT, with its potential for further disruption along several avenues.

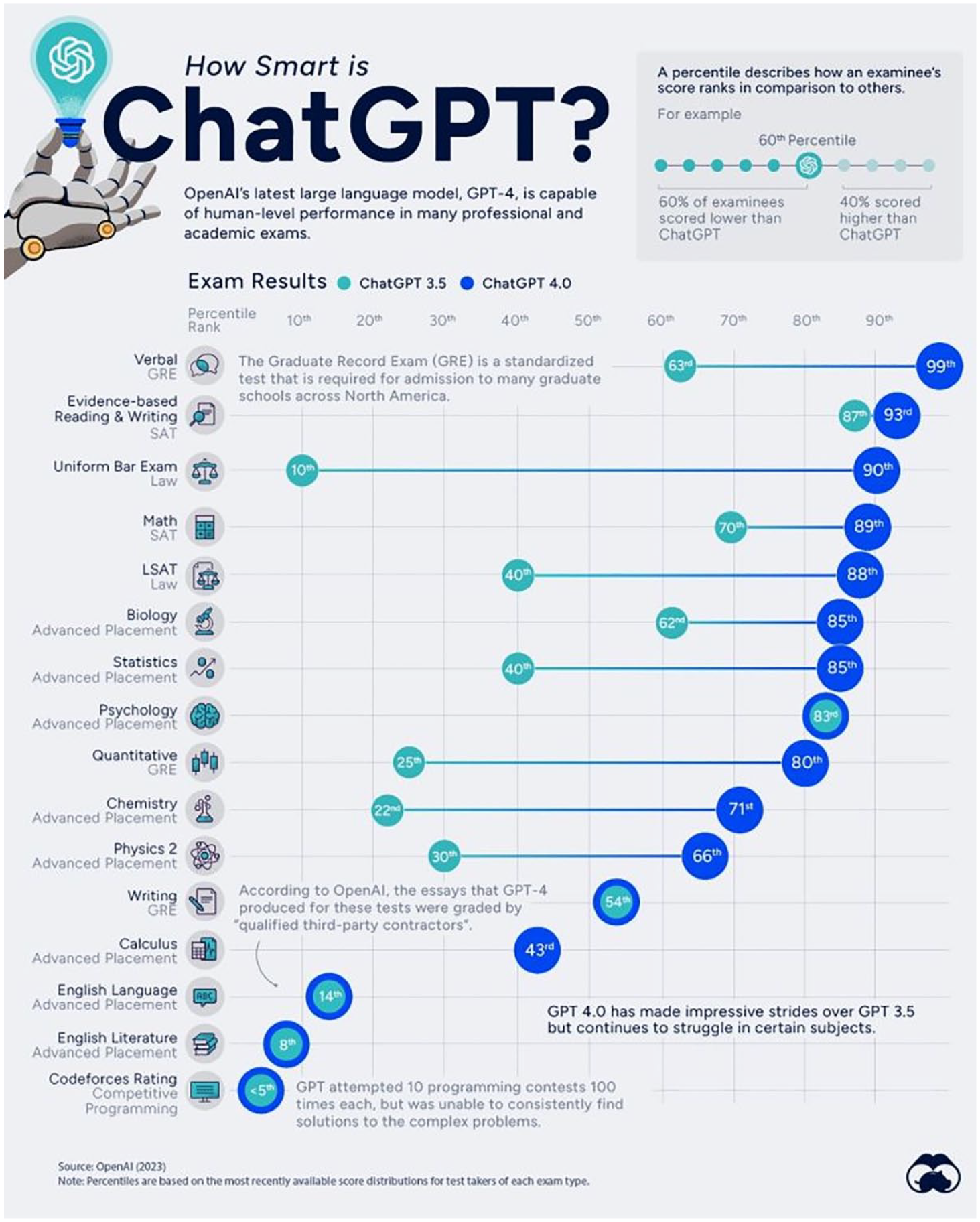

First, ChatGPT provides an easier and cheaper option for cheating on essay-based, take-home assessments (Cram et al., 2022), 3 defeating the quintessential epistemic role of education, namely the promotion of new knowledge to students (Goldman, 1999). The tests I conducted using ChatGPT 3.5 (February 2023) and later ChatGPT 4 (May 2023) all confirmed the programme’s ability to produce full essays in a matter of seconds without requiring any subject knowledge, post-editing skills or prompting prowess, delivering essays that are creditable in terms of quality, readability and avoidance of plagiarism detection 4 (see Items 1 and 2 in Appendix 1). Impressively, ChatGPT itself improved from version 3 to version 4 in all these areas (see Figure 3). 5 Left unaddressed, AI tools make illicit assessment submissions easy to produce, deeply eroding the value added of current management education, which still relies heavily on non-invigilated, online assessments (Sweeney, 2023). In the extreme, this may even lead to a devaluation of business degrees, resulting in revenue losses and sector-wide disruptions (Barros et al., 2023; Estermann et al., 2020). 6 This is even more unsettling considering the current challenging economic environment dominated by a cost-of-living crisis (Neves and Stephenson, 2023), and business schools’ reliance on increasing student numbers (Lomer et al., 2021; Parker, 2018), both for their own growth and for scaffolding other, less commercially viable disciplines within universities (Halachmi, 2011).

Figure 3. Comparing ChatGPT3 with ChatGPT4 in different subject areas.

Second, the use of ChatGPT makes plagiarism both easy to commit and difficult to detect, which is particularly concerning given business students’ well-documented involvement and inventiveness in cheating (Bartlett, 2009; Cronan et al., 2018; King et al., 2009; McCabe and Trevino, 1995; McCabe et al., 2006; Medway et al., 2018). This is compounded by the widening gap between the sophistication of AI technologies and the lagging performance of traditional plagiarism detection tools. My own checks throughout 2023 (Item 3, Appendix 1), using both established (i.e. Turnitin) and recent (i.e. ZeroGPT) tools for similarity checks, confirm what is almost a consensus by now, namely that detection is likely a futile endeavour (Mollick, 2023a). Ceteris paribus, this widening gap will therefore steer us further away from the veristic conception of education, one aligned with a tradition of enlightenment and truth (Goldman, 1999).

Third, AI technology is likely to exacerbate existing inequalities in education among both staff and students. ChatGPT may give an unfair advantage to students who are able to access and employ it, compared to those relying solely on their own capabilities. This will likely benefit those with financial means (Neville, 2012), who can afford both the best AI tools for higher quality (such as GPT-4, currently available through a subscription priced at $20 per month) and multiple tools at the same time (to reduce the risk of being caught). ChatGPT can also exacerbate existing inequalities among educators by worsening workload pressures, which are driven mostly by increasing student numbers, abrupt internationalization, quality issues (e.g. lower or no entry standards) or lack of resources. AI tools are simply the latest addition to these pressures, and the top-down design and implementation of educational programmes will restrict the type and scope of potential responses by individual educators. Consequently, educators with larger classes (Beattie and Thiele, 2016) will be most affected, as they will face serious practical AI-related challenges (Cassell et al., 2009), including logistics, motivation and evaluation.

Finally, the rising prominence of AI in many creative settings raises significant concerns about the substitution of human workers by AI technologies and its implications for the quality of the final product. 7 Education is a sector that is more than ever focused on achieving performance targets across an increasing number of domains, such as research impact and prestige (Krammer and Dahlin, 2023), student satisfaction (Cheng and Marsh, 2010), international rankings (Johnes, 2018) or external funding (Laudel, 2006). Within this paradigm of ‘performative’ (Jones et al., 2020), ‘capitalist’ (Preston, 2022) universities, it is relatively easy to imagine scenarios in which AI tools would replace human educators to increase both the efficiency and the commodification of current educational offerings. However, given the limitations of AI technologies (e.g. hallucinations, biases, lack of common sense and lack of creativity), such a radical shift would have a severe impact on the quality of knowledge transmitted and on overall trust in our educational systems (Siegel, 2004).

The red pill scenario: embracing opportunities

While the rise of AI technologies poses significant challenges to our profession, it also presents several important opportunities to rethink, revise and improve it.

First, AI technology can enrich our assessment toolkits by addressing some of the limitations of traditional forms of assessment. In management education, we still mostly use exams, papers, projects and presentations to assess learning and skills, in either individual or group settings (Kelley et al., 2010). In the wake of the COVID-19 pandemic, many of these assessments migrated online, a trend that persists, despite inadequacies in some instances, even after the great return to ‘face-to-face’ teaching 8 (Cram et al., 2022). While no assessment is perfect, the (over)reliance on open-book, non-invigilated, low-difficulty essays (due to very large 9 and heterogeneous 10 classes) is a big red flag. ChatGPT provides a unique opportunity for creating customized assessments that fit the various types of students in a large course and can significantly improve their critical thinking and language skills (Bird et al., 2021; Firat, 2023). Accordingly, universities should invest in developing the AI proficiency of their staff and enable them to put it into practice for both carefully creating and evaluating assessments, with the aim of increasing the amount and sophistication of knowledge transfer. Such aims align well with the social epistemic goal of improving collective understanding (Dede, 2010).

Second, AI tools can also increase the diversity and creativity of our assessments, moving them into the upper echelons of Bloom’s taxonomy. While the COVID-19 pandemic ushered ‘online’ and ‘hybrid’ educational offerings into the mainstream, AI tools can push this even further by prompting us to reconsider what management education should be, what its objectives are and what new, better ways exist to achieve them. For instance, custom-tailored and playful gamification (via game-based or interactive tools) provides a novel ecosystem for learning, one that has the potential to maximize the effectiveness of our educational tools by better meeting the needs and aspirations of our students, as well as capturing performance via novel and perhaps better metrics (Cao, 2023; Huang and Soman, 2013).

Third, AI tools can also develop complex scenarios for testing and enhancing students’ communication and collaboration abilities. Research on online collaboration suggests that GPT is a reliable aid for understanding and improving collaborative classroom practices (Phillips et al., 2022). Notably, its ability to accurately summarize conversations in real time allows teachers to better allocate their effort and attention towards students who are disengaged, confused or frustrated, and to successfully re-engage them with the task at hand. Moreover, AI tools have a clear advantage in assisting students in their learning process, as they can provide real-time feedback and additional resources (e.g. notes and links) in response to any student queries or requests regarding a particular subject (Mollick and Mollick, 2023). In this regard, one can easily envision outsourcing some basic activities (such as knowledge dissemination and comprehension) to a virtual AI assistant, leaving the educator with more time for guidance, critical thinking and fostering collaborative learning environments (Arnett, 2016).

Fourth, educators can also employ AI tools to grade assessments and provide better feedback on them, which can enhance epistemic virtues like open-mindedness, curiosity and intellectual humility (Turri et al., 1999). As the technology advances, training AI bots to grade exams, first by looking for keywords, concepts and arguments, and second by assessing the degree of language sophistication, writing quality and proper use of relevant academic references, would be a valuable addition to any educator’s toolbox. For this essay, I also tested this idea in practice by asking ChatGPT to grade the essay it had produced and to provide short feedback (see Item 3 in Appendix 1). Both version 3 and version 4 were generous (scoring the essays 90 and 92, respectively, out of 100), but the fourth-generation GPT had clearly made significant improvements that justify the difference (I scored the essays 65 and 77, respectively). The feedback provided was also quite good, which is encouraging for the educational process, as proper feedback is a great enabler of learning.

Fifth, an important advantage of AI technologies is their potential to democratize education by providing more inclusion, accessibility and quality for all students (Au and Apple, 2007; Freire, 2000). Educators can employ AI to seamlessly customize and update their courses with news, databases, academic references and other resources. They can also harness it to develop course topics, populate slides with content or generate new presentation output (Barros et al., 2023). 11 Although content curation and training will be crucial for the success of such AI-driven courses, the future possibilities are truly exciting. Chatbots can provide students with an excellent platform for communication and learning by answering questions and providing real-time examples, feedback and alternative assessments. In addition, they will be able to bridge language differences through fluent translation, thus stimulating class participation and facilitating communication among all students, regardless of background. Likewise, AI will be able to facilitate remote learning and better cater for students with special needs, such as disabilities or mental health issues, bringing us closer to a true democratization of education.

Sixth, AI literacy and skills will become an important asset for employability. Part of our responsibility as educators is to prepare our students for this brave new world, where they should be able to use AI technologies proficiently to enhance their other skills (Mollick, 2023b). 12 Soon, AI technologies may become as ubiquitous in our daily routines as calculators and computers have become in mathematics and science (McMurtrie, 2023). Consequently, the benefits of engaging with these technologies early will be substantial and long-lived (Sharples, 2022). Given its versatility, AI’s impact on education is also likely to differ across levels: for example, undergraduates may experience more automation, plus more adaptive and immersive learning experiences; graduate students can benefit via simulations, real case studies and mentoring; while doctoral students could use it for syntheses and reviews of the literature or for advice on coding and programming. Moreover, its impact will likely extend beyond standard educational offerings into dedicated corporate training and professional development programmes. Again, all these new opportunities will require skilled technicians and educators to develop and implement such programmes, resulting in more creation and sharing, but also more practical evaluation, of knowledge (Arnett, 2016; Cassell et al., 2009).

Finally, AI could also be an opportunity to shift away from the ‘terror of targets and performance’ that characterizes many universities today (Jones et al., 2020). The competitive edge (i.e. the success and prestige) of higher-education establishments rests predominantly on their human capital endowments and capabilities (e.g. overall number, qualifications, intellect, creativity, expertise and aspirations), none of which can be matched by any AI. Thus, thoughtful engagement with AI tools can result in greater productivity and a more enjoyable educational experience for both students and educators. Moreover, cultivating and retaining high-quality individuals in organizations should remain a priority (Dill, 2009), irrespective of these technological advancements.

Bottom line: resist or ada(o)pt?

The great economist Joseph Schumpeter popularized the phrase ‘creative destruction’ to capture the power of technology and innovation to revolutionize economic structures from within. We have seen this phenomenon time and again, from Henry Ford’s assembly line to recent disruptors like Uber or Netflix. Now, AI appears to have finally matured enough to affect a variety of industries and activities, including education.

As such, like Keanu Reeves’ character Neo in The Matrix, we find ourselves at a major crossroads, weighing up our options in response to this unexpected challenge. On the one hand, we can take a classic incumbent’s approach (i.e. the ‘blue pill’ option) and ignore or fight off this disruption to the best of our ability in order to prolong our existing educational paradigms. Thus, we can certainly cast AI as a ‘negative resource’ (Lindebaum and Ramirez, 2023), useful only for unethical practices by students, and focus on deterring this ‘vice’ from our classrooms. This would require a coalescence of factors, including (a) the development of reliable, AI-specific plagiarism tools (either by upgrading existing ones like Turnitin or by developing new ones such as ZeroGPT or Copyleaks); (b) strong enforcement of penalties for foul play, in particular AI-related foul play; and (c) the option to revert to traditional assessment methods (such as in-class, closed-book, invigilated exams) or to use a combination of assessments (e.g. an oral group presentation and an individual in-class exam) to reduce the appeal of AI cheating.

In this way, from an epistemic perspective, we will be able to preserve the quality of knowledge transmitted and the existing trust in our educational system (Siegel, 2004) by insulating ourselves against the perils and intrusion of AI tools. However, such an autarkic approach would, at best, maintain the status quo, without any real chance of future progress. Moreover, these defensive adjustments would need to be backed by significant investments from higher education institutions (in training, monitoring and technological upgrades), which they are unlikely to make, as suggested by other episodes of disruption (e.g. the infamous ‘essay mills’) that went largely unaddressed (Bartlett, 2009; Lancaster, 2020).

Yet it is difficult to imagine a future for education free of any AI interference. AI technologies have evolved tremendously in the last decade, and ChatGPT is currently the pinnacle of these developments. But technology evolves rapidly, and AI is the new gold rush (Financial Times, 2023), as evidenced by Microsoft (Copilot), Google (Bard) and other tech giants that have rushed to claim a seat at the AI table since the first version of this essay was written (January 2023). Thus, seeking AI-proof assessments will prove impossible, and we likely need to accept that such ‘augmentation’ will occur and focus on better ways to capture educational achievement in this brave new world.

On the other hand, we can follow in Neo’s footsteps and take the ‘red pill’. We can embrace AI tools as potent additions to our educational toolkits, playing to their strengths while staying mindful of their pitfalls. 13 While a bot can write essays on most topics, provide convincing arguments and write them up at a decent level of quality, it cannot completely replace the human component, especially when it comes to complex topics (Thorp, 2023). Moreover, AI-generated responses still display inherent limitations (e.g. hallucinations, biases, inaccuracies, obfuscation), which carry major penalties in terms of critical thinking, originality and legitimacy. This implies that we need to develop and adapt our assessments to the needs of this new world by raising the bar (i.e. new epistemic standards) in terms of what is asked in an exam and how it is viewed by the educator (Cassell et al., 2009). In parallel to these adjustment efforts, we also need to continuously educate students on plagiarism and be consistent and serious about enforcing appropriate penalties. 14

Finally, we must recognize that these adaptation efforts will require significant time and expertise, which should be addressed through institutional strategies and dedicated support and resources. Outsourcing the burden of dealing with this potential shock exclusively to educators (a ‘laissez-faire’ approach) is both ethically unfair and irresponsible in the long term, given the significant differences across classes, subjects and capabilities. 15 Not all educators will possess the techne (i.e. technical know-how) and phronesis (i.e. practical application) required (Cassell et al., 2009) for successfully delivering the next generation of AI-proof assessments. At the same time, as the recent COVID-19 pandemic demonstrated, institutions have the resilience, creativity, expertise and resources to address major challenges when their survival is at stake. It is all about staying in tune with, or ahead of, the times, rather than the herding strategies we commonly see in higher education. Thus, from an epistemic perspective, this shock presents significant opportunities for advancing knowledge leadership and power (Bourdieu, 1977), with tangible long-term benefits for early-adopting institutions (Huser et al., 2021).

Recommendations for management education

If ignored, ChatGPT may shatter our assessment strategies and reduce the overall impact of our educational offers. So how can we address it and harness it to improve them? In the following, I present several recommendations for the management education sector, while maintaining a neutral and objective view on the suitability of the ‘red pill’ and ‘blue pill’ alternatives.

The first mandatory item on the agenda is the development of new formal academic policies regarding the use (or not) of AI tools. While some pundits may believe that AI refinement is still far off (Marche, 2022), 2023 has shown us how fast this technology evolves, both in sophistication and in its applications. 16 However, almost one year after the breakthrough release of GPT3 to the public, educational establishments have yet to develop and implement a concrete plan to address AI technologies in education. While some universities in the United States and the United Kingdom initially prohibited the use of AI by students, 17 they have since reversed their positions (for example, the Russell Group declaration of the top UK universities). 18 While clarity on the direction of travel is much appreciated, it can quickly turn into a red herring, as details of implementation, support and timelines have so far remained unaddressed. And reconfiguring the educational system to embrace and harness these new AI powers requires significant planning and investment in infrastructure, curriculum development and staff training, all of which are still unaccounted for.

Second, regardless of the chosen strategy (blue or red), these new policies and instruments should reduce or eliminate the possibility of unethical behaviour via AI tools. On the blue route, they can draw on traditional assessment forms, such as a mixture of ‘pen and paper’ (Cassidy, 2023), in-class, closed-book (Lindebaum and Ramirez, 2023) or oral examinations (Allen, 2022), to avoid AI interference. On the red route, we can develop new assessment forms that encourage the use of AI but still reward human qualities, personal experiences or interests (McMurtrie, 2023). Critical thinking, the ability to integrate different views and the provision of relevant examples are all areas where AI is less likely to excel.

Third, we need to educate students on the benefits and limitations of using these AI tools, particularly in light of academic integrity, reliance and personal development. Acquiring literacy in this domain may prove to be a very valuable skill for students upon graduation, and therefore a worthy investment (Zhai, 2022) that spurs their creativity (Lim, 2022) and coding skills (Zhai, 2022). By doing so, students will be better equipped for entering the job market, and will acquire technical skills that provide them with greater flexibility in terms of jobs. Conversely, students should be aware of the common pitfalls of using AI tools, for example, cheating, taking the output at face value or being unable to complete a task correctly without its assistance. Such mishaps carry major consequences (academic misconduct or loss of reputation/employment); thus, focusing on the positive (and allowed, encouraged and creative) uses of these technologies can provide significant and sustainable benefits.

Fourth, we need scientific evidence on the benefits and pitfalls of AI technologies vis-à-vis other educational tools. This is where we (management scholars) come in: by collecting data, running experiments, surveying students and so forth, all with the grand aim of assessing how we can best employ AI in our classes while complementing the inherent strengths of the human instructor (Pardos and Bhandari, 2023). 19 For instance, a couple of growing areas of interest revolve around optimal prompt engineering (Mollick, 2023b; White et al., 2023), 20 and parsing and highlighting errors in AI’s responses (Pardos and Bhandari, 2023). Regardless, finding the appropriate place for AI in our educational delivery must be driven by empirical inferences drawn from extensive data and analyses. Thus, more empirical investigations of these issues are needed, and our classrooms provide the perfect experimental setting for them.

Conclusion

With the rise of tools such as ChatGPT, AI appears to have finally secured mainstream success after a decade of lacklustre performance. Throughout 2023, its potential and user appeal have remained very high, and with surging competition in this space, we can expect more and better AI tools to emerge regularly. Gloomy predictions suggest that these developments might wipe out a large portion of knowledge workers, generating massive unemployment in certain sectors of the economy (Krugman, 2022; Roose, 2022), including higher education.

However, history suggests that we should embrace change rather than fight it. When handheld calculators emerged, they raised significant doubts about people’s ability to acquire mathematical skills (Hembree and Dessart, 1986); similarly, when the COVID-19 pandemic hit, many business schools predicted a rough patch ahead in terms of dwindling student numbers due to the lack of face-to-face educational offers (Laasch et al., 2022). ChatGPT and other AI tools are only the latest ripple to affect education. Clearly, their arrival and disruptive effect require substantial responses from all stakeholders, not just from educators. Resources need to be invested, coherent strategies need to be developed and new educational ecosystems should embed rather than ban AI. Educators and senior management of institutions need to collaborate and coordinate these efforts to ensure a smooth and productive transition into the next phase of modern education.

Most importantly, the rise of AI also presents significant pedagogical opportunities to democratize (Freire, 2000), enlighten (Adorno, 1963) and personalize education (Reber et al., 2018), all for the greater benefit of meeting the needs of all students more accurately and comprehensively. From an epistemic perspective, AI presents significant opportunities to complement our existing educational excellence, push the boundaries of our knowledge further (Mollick, 2023a) and enhance our value proposition to students via triangulation of multiple, high-quality knowledge sources (Bernhard et al., 2019; Schaap et al., 2011). A positive and coordinated pedagogical approach to these disruptive technologies can provide important opportunities to include AI tools in our classrooms and further enrich our education.

Footnotes

Appendix 1

Acknowledgements

I would like to thank Deborah Brewis and Ajnesh Prasad for feedback and comments on prior drafts of this work. I am also grateful to Herman Aguinis and Nicolai Foss for some fun, interesting conversations on the role of AI in societies and our educational domain. Thanks to Tao Chen for running a couple of prompts for me on ChatGPT4 to test its essay-writing abilities. Emma, David and Jamie – this one is for you, hopefully growing up with AI will make you better learners and citizens of this brave new world.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.