Abstract

This article analyses how the use of artificial intelligence shapes the way news gets produced and distributed, based on 143 interviews with news workers at 34 leading publishers in the United States, United Kingdom and Germany. Drawing on gatekeeping theory and the concept of rationalisation, it describes and explains the use and effects of the technology in the news. Artificial intelligence (AI) is used in all parts of the gatekeeping process to drive efficiency gains, optimise processes and bring about greater effectiveness, with these effects being real but task-dependent and hard to quantify. Overall, AI reshapes and retools the production and distribution of news by providing publishers with new means in the service of achieving existing ends, rationalising the work of news organisations in the process, and pushing it more strongly towards logics of efficiency, predictability and calculability. I discuss these findings with respect to their impact on the public arena and the reconfiguration of power and control within the information ecosystem.

Introduction

Artificial intelligence (AI) is increasingly being adopted in the news industry, but there are still few studies on the specific implications of AI, particularly on how it reconfigures news organisations’ gatekeeping processes, shapes the news people get to see and thus ultimately transforms the public arena, also known as the information environment. This article offers a holistic and long-term view of how AI has come to transform the work of news organisations. Drawing on 143 interviews with news workers across editorial, commercial and technological domains at 34 leading national and international publishers in the United States, the United Kingdom and Germany, conducted in two waves (June 2021 to December 2022 and January 2023 to January 2024), I find that AI is increasingly being adopted across all parts of news organisations and along the entire chain of gatekeeping – the socio-technical process by which information becomes news – from news gathering to distribution, affecting multiple departments. While there is no single dominating AI system, various AI systems reshape the news as part of a broader trend towards more automation, providing publishers with new means in the service of achieving existing ends: AI, in other words, retools the gatekeeping process and the news.

This article, however, does not just map the uses of AI but also provides a theoretical contribution that explains how the use of AI is reshaping and retooling journalism more broadly. Though its precise effects are hard to quantify in some areas, AI is a form of rationalisation in the Weberian tradition, pushing news organisations and the public arena towards logics of greater efficiency, predictability and calculability. Concretely, AI in news organisations can be understood as an extension of a broader process of rationalisation that is about the ‘intensification or extension of applying scientific knowledge [and techniques]’ to an ever-growing set of domains in human life and society (Schroeder, 2020: 3). This rationalisation comes in the form of a concern with developing or improving techniques of greater efficiency, predictability and calculability (Brubaker, 1991) and applying them in the hopes of greater control, here over the process of how the news is made and distributed. A common result of a stronger rationalisation is that traditions, emotions and other non-quantifiable values as motivators and drivers for behaviour and processes in various social domains are increasingly replaced (partially or wholesale) with concepts based on rationality and rules to bring about greater efficiency, coordination and control (Mau, 2019; Petre, 2021; Simon, 2024: 56). As we will see, this also applies to the use of AI in the news.

This article’s main contribution is combining empirical findings from leading news organisations in three media markets with this theoretical lens of gatekeeping and rationalisation. These not only help to explain where and how ‘AI is happening’ but, crucially, also what a key overarching effect of the technology is. Tying together the disparate elements we can observe with the increasing use of AI in news organisations, this article closes by providing expectations for the broader effects of this shift on our information environment, the public arena. I suggest that AI reconfigures who holds power in the news ecosystem – shifting control at least partially from traditional journalistic gatekeepers to AI systems, and by extension empowering technology companies and organisational decision-makers, with knock-on effects on how public information is curated and disseminated.

Literature review and theoretical framework

Definitions: what is AI (in the news)?

The definition of ‘true’ AI is contested, with the term itself drawing criticism for its value-laden nature. I define AI as a diverse range of applications and techniques at different levels of complexity, autonomy and abstraction. These are concerned with the computational emulation of human capabilities in tightly defined areas, most commonly through the application of machine learning approaches. Machine learning is a subset of AI in which machines learn from data, their own performance, or forms of human feedback (Lewis and Simon, 2023; Mitchell, 2019). This learning happens on a scale between supervised and unsupervised, with the exact configuration differing between AI systems and approaches but improving the system’s performance on a task over time. Importantly, these systems are not (yet) able to operate beyond the ‘frontier of [their] own design’ (Diakopoulos, 2019: 243). This is even true for so-called foundation models, which are at the core of what has been termed ‘generative AI’: artificial intelligence systems capable of producing new forms of data such as text or audio-visual output (Mitchell, 2023). In news and journalism, AI is often used to describe various uses of off-the-shelf AI products, AI-as-a-Service (or AIaaS, the accessing of specific AI capabilities through the cloud) and applications of machine learning systems (which includes foundation and large language models). These are increasingly applied in news production tasks such as story discovery, transcription, translation, summarisation, or verification. Other uses on the production side include content management (e.g. automated tagging and subtitling, archive management and discovery, re-formatting) and increasingly content production (e.g. creating article drafts, summaries, or simplifying complicated writing). On the business side AI has been used in content distribution, content recommendation, audience analytics and dynamic paywalls, to name just a few examples.

Gatekeeping and public arena theory

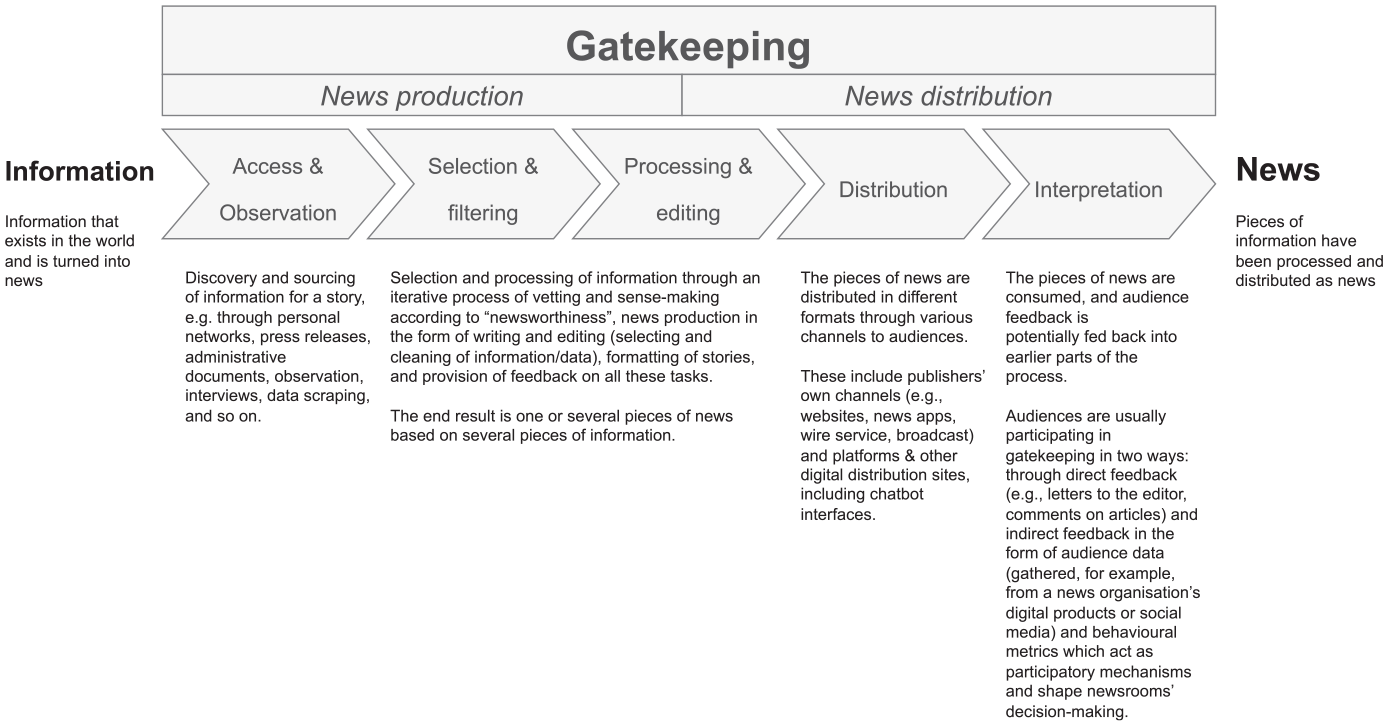

To understand how AI shapes the production and distribution of news, it is first necessary to have a conceptual model of the process by which information becomes news. This allows us to see where AI can come to bear. A broadly accepted model of how information becomes news (and ends up in the public arena, see next section) is gatekeeping theory (Shoemaker and Vos, 2009). Traditional gatekeeping theory frames the organisation of news as an abstract mechanistic model in which pieces of information travel through several chokepoints or gates before they are distributed and consumed as news, with gatekeeping being ‘the process of selecting, writing, editing, positioning, scheduling, repeating and otherwise massaging information to become news’ (Shoemaker and Vos, 2009: 73). Helpful here is Domingo et al.’s (2008) classification of this as a five-stage process (see Figure 1).

Figure 1. A schematic visualisation of the gatekeeping process in the news based on the categorisation by Domingo et al. (2008).

The gatekeeping process in news organisations is a complementary mixture of social and technological factors – a socio-technical process. Professional and organisational norms, rules and rituals and various technological tools and infrastructures all help bring about decisions that affect how a piece of information ‘becomes the news’. To give an example, journalists use their sense for ‘what is news’ and the selection criteria they have learned (such as assumed impact, social relevance, geographical proximity, or timeliness) to prioritise what to cover (De Maeyer, 2020), but they might also be aided by internal and external metrics (e.g. Chartbeat analytics or trending topics as provided by a search engine) that indicate if a topic is particularly salient and therefore perhaps merits coverage (see, for example, Petre, 2021). At a later stage, an audience editor might then use a mixture of experience and data analytics to pick a headline and optimise the stories for search engines. The speed with which select pieces of information are processed and turned into a news item of a certain format and quality is determined by these various elements, with technological systems and their configurations an important but not the only component.

In the last three decades, news organisations’ gatekeeping processes have become intricately intertwined with the large technological system of digital media – ‘the institutions and infrastructures that produce and distribute information encoded in binary code’ (Jungherr et al., 2020: 7) and as such the traditional gatekeeping model has become more complex, as, for example, Blanchett (2021), Wallace (2018) and Bro and Wallberg (2015) have noted. News organisations and their production and distribution processes belong to this system, but so do audiences, technology companies and their platforms, as well as various other technological artefacts or components (e.g. the cables and servers which make up the backbone of the Internet). Especially platforms and search engines play a role in agenda-setting (here defined following Schroeder (2018: 14) as the capacity to shape which actors and issues receive attention by the public), as news stories gain visibility based on user engagement and sharing and are shaped by algorithmic recommendation systems, with the competition for audience attention intensifying. At the same time, users have become more central as generators of content and active participants on and around news. All this has already reshaped gatekeeping processes, with, for example, the selection of news stories driven or assisted by computational tools and audience analytics software but also adding new tasks such as the verification of user-generated content and the management of online communities (see, for example, de Haan et al., 2022; Petre, 2021). However, as various scholars have shown (see, for example, Bro and Wallberg, 2015; Nechushtai and Lewis, 2019; Wallace, 2018) this role of algorithmic gatekeeping is complex and not free of conflict. 
With such systems often programmed to select and prioritise news based on criteria related to user preferences and engagement metrics rather than traditional journalistic standards, this shift has raised concerns about potential biases creeping into news and a loss of human editorial control, and has brought to light the challenge of establishing clear normative standards for algorithmic systems in journalism.

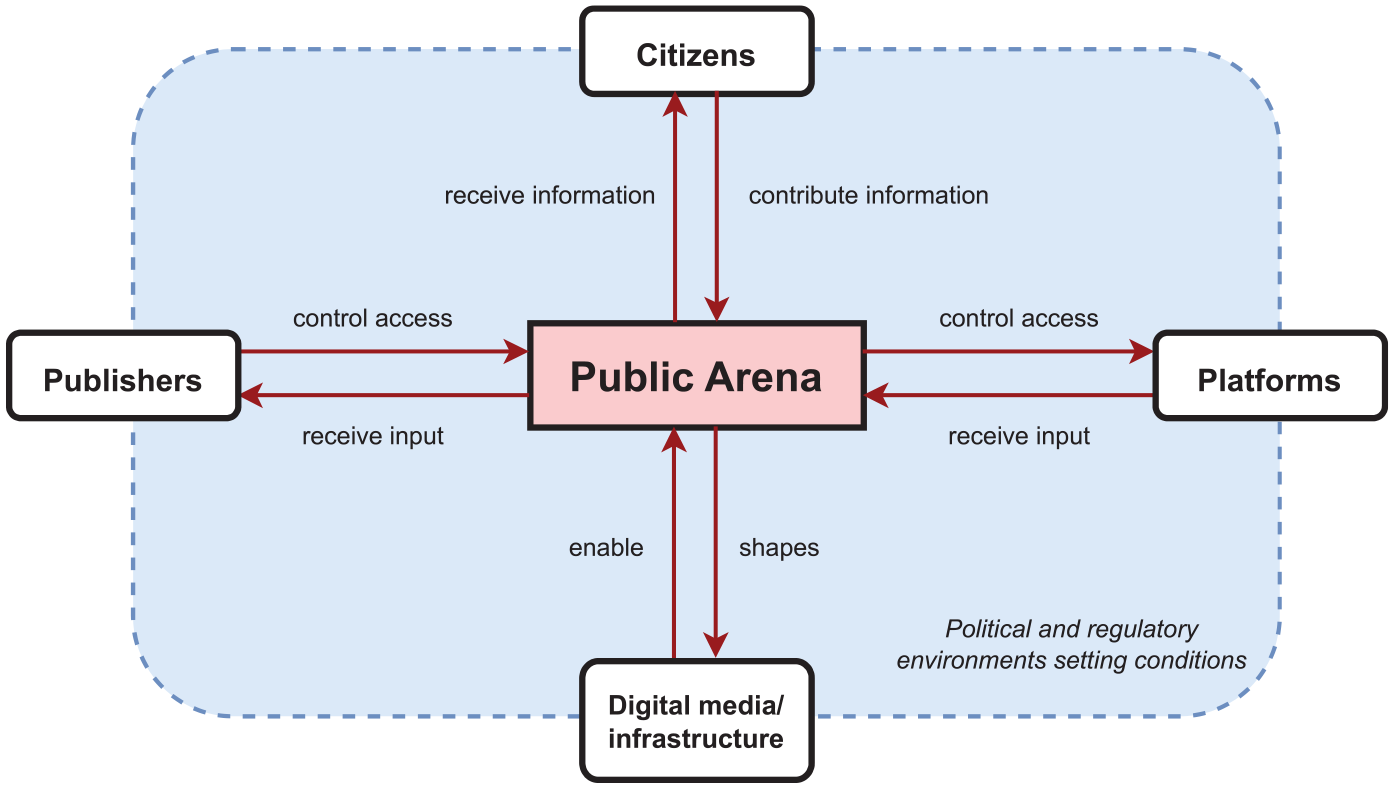

Each technological wave has not only influenced the speed and reach of news dissemination but has also introduced new considerations and challenges for news organisations as gatekeepers to navigate in their role as mediators between information and the public. News organisations are still important gatekeepers of the public arena – the common mediated shared space, where different actors exchange information and discuss matters of common concern, often referred to as the information environment (see, for example, Jungherr and Schroeder, 2023). They are so thanks to the attention afforded to them as sources of information, which translates into an ability to set the agenda and determine who and what receives wider attention. While in theory everyone can make themselves heard to various publics and (political) elites, the public arena is contested because it is effectively marked by a limited attention space (our attention, for which there is intense competition; see Taylor, 2014) and thus resembles a zero-sum game: only so many actors and issues can feature at the same time – something that many people experience as ‘issue visibility’ (for a visualisation see Figure 2). However, while news organisations continue to hold a great deal of control over what does and does not end up as news in public, it is important to note that their control has been strongly weakened in recent years. The reason is a fundamental re-structuring of the way information can be produced, received and consumed through digital media – ‘institutions and [technical] infrastructures that produce and distribute information encoded in binary code’ (Jungherr et al., 2020: 7), which include platforms, search engines and social media services – and the increasing reliance on the same by large swathes of the public for news and information consumption.

Figure 2. A schematic visualisation of the public arena and its components.

As the gatekeeping process on the side of publishers changes – for example, through new technologies and the actors thereby entering the journalistic field – so potentially does (1) how and what kind of news ends up in the public arena and (2) the position and control that various actors (the news, technology companies, the public) have over the public arena, thus ultimately reshaping the same and, with it, general informedness and political opinion. As artificial intelligence presents such a technological change, I ask the following:

How does AI shape the gatekeeping process of the news in terms of production and distribution?

Within this, I ask the following:

RQ1: Where does AI come to be used in news organisations writ large?

RQ2: Which effects arise as part of AI’s adoption and use in the production and distribution of news?

Case selection, data and methods

Sampling of cases and participants

To produce a dataset with some meaningful variation that allows for a limited general analysis, I adopted a multiple-case study design, focusing on commercial and public service news organisations in the United States, the United Kingdom and Germany, which represent three different media systems in the scheme of Hallin and Mancini (2004). I identified 45 organisations where AI was in use as sites for recruitment through extensive desk research in industry publications, outlets’ websites, commentary and coverage on social media platforms, recommendations from colleagues and other researchers in the field, and calls for information on social networks Twitter and LinkedIn. I then employed a mixture of purposive sampling followed by snowball sampling to recruit interview participants from these organisations. I reached out to people who (a) have worked with or on AI in the broadest sense (as indicated by, for example, their job description or publicly available information), and (b) came from editorial, product development, audience, technology and strategic/management roles, using a mixture of emails, messages on platforms such as LinkedIn, Twitter, letters and telephone calls.

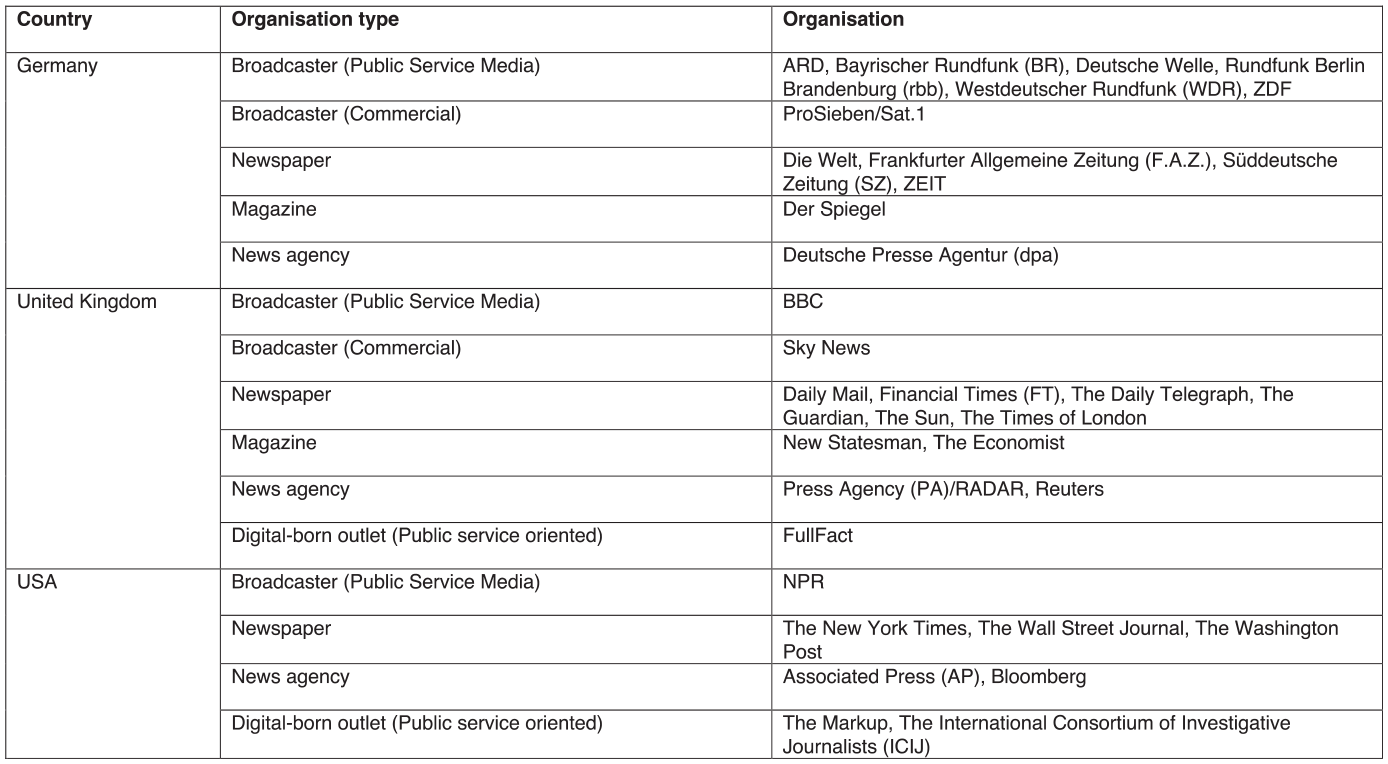

In total, I contacted 312 news workers and ended up interviewing 143 from 34 organisations out of the 45 organisations I approached (Figure 3). While I reached saturation earlier, I conducted further interviews past the saturation point to better explore the depth and breadth of the subject and validate the consistency of the data. My interviewees held roles such as reporter, editor, data scientist, software engineer, audience analytics manager, or product manager from more junior to senior positions. 28% of the sample were from the United States, 38% from the United Kingdom and 34% from Germany. I did not ask participants how they identified in terms of gender. To be eligible to participate, individuals had to be 18 years of age or older. However, not all participants provided their age. From personal judgement, most participants were in their 30s and 40s. In addition to official interviews, I also had various off-the-record background conversations with the same and additional news workers from these organisations as part of in-person and virtual industry events, conferences, workshops and newsroom visits which informed the analysis.

Figure 3. An overview of the respective news organisations.

Interview conduct, analysis of data and ethical considerations

The majority of interviews were conducted between June 2021 and December 2022. However, due to the rise in interest in AI after the public release of OpenAI’s ChatGPT in November 2022, I conducted further interviews with a smaller group of new participants between January 2023 and January 2024 to capture these developments, with 121 in the first wave and 22 in the second wave. Interviews were conducted using the same semi-structured interview instrument in both waves to address the research questions in a standardised way, while providing enough flexibility to discuss aspects and topics which arose spontaneously. Guided by gatekeeping theory, I formulated interview questions to probe how and where AI systems come to be used in the flow of information, focusing on different stages, as well as tasks, motivations and effects. Sample questions included, for example, ‘Can you please explain to me which AI systems your news organisation has implemented/which AI systems you are working with?’ and ‘Can you describe to me what this/these application/s does/do?’ as well as ‘In your organisation/department, how has AI affected: [stage of gatekeeping process/tasks]?’. I kept extensive notes and wrote short reflective memos after each interview which both formed part of the corpus used for the analysis. Interviews lasted on average 75 minutes, with the shortest 25 minutes and the longest interview 2½ hours. All interviews were recorded, transcribed verbatim and coded for themes in NVivo, using a mixture of inductive (RQ2) and deductive coding (RQ1 and RQ2), drawing on the research questions and the theoretical framework. To improve my sense for the findings, assess the credibility of what interviewees told me, and spot inconsistencies, I cross-checked the interviews and final analysis with other primary and secondary sources from the organisations (where available) and external, publicly available sources such as news and industry reports.
For each thematic section, I assembled a selection of quotes from participants across organisations and countries that illustrated aspects of the broader findings particularly well, only a fraction of which could be included in the final manuscript.

Research ethical approval for the study was granted by the University of Oxford’s Central University Research Ethics Committee (CUREC, Approval Reference: SSH_OII_CIA_20_71). All participants were provided with an information sheet and signed a consent form. To maintain the trust and confidentiality of my participants I offered them anonymisation and anonymised them throughout. All illustrative quotes and examples as well as descriptions of roles were carefully checked to make sure they do not reveal the organisation or individual (unless the information was already public).

Findings

AI uses along the gatekeeping process

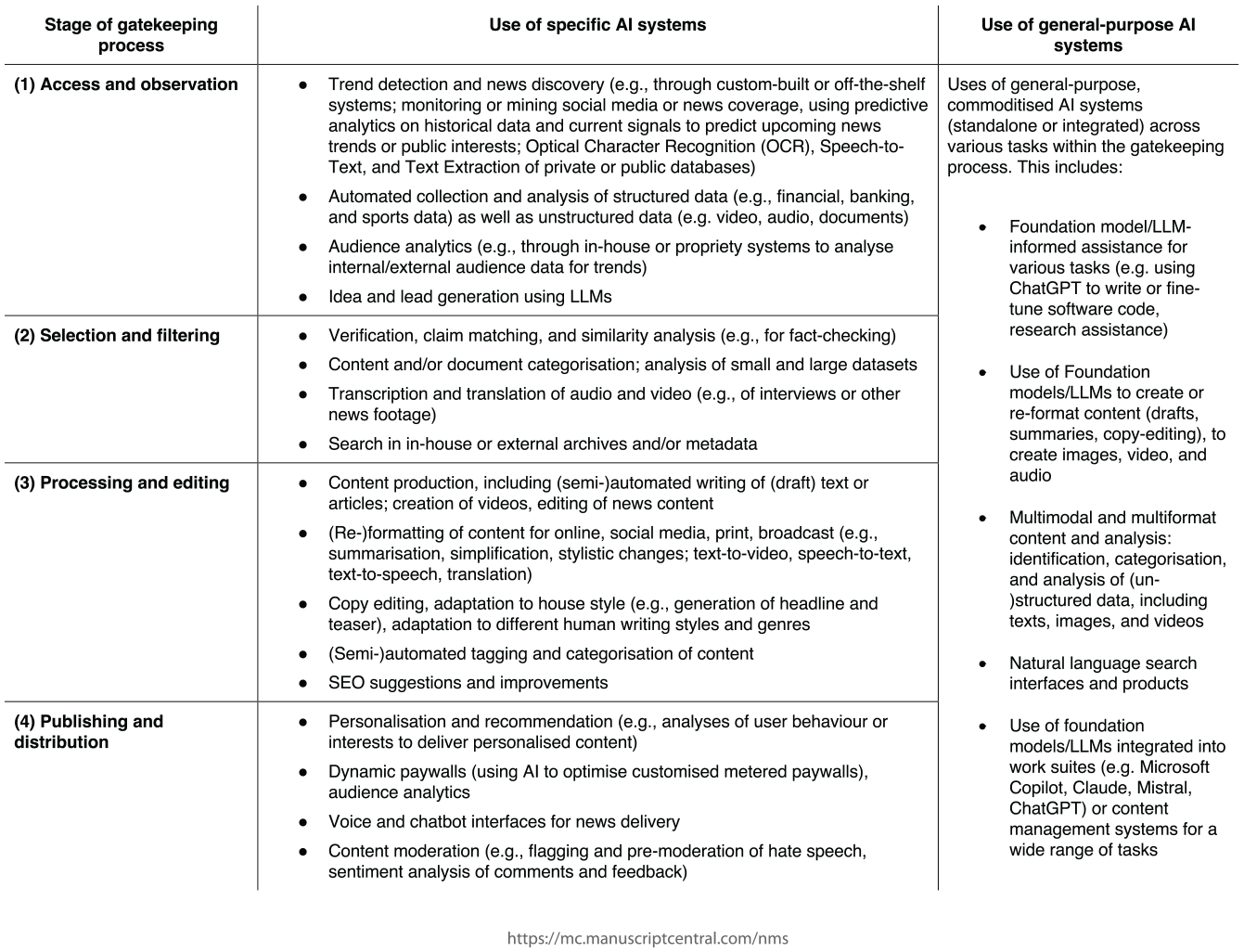

Across the news industry in these three countries, artificial intelligence is used at all points of the gatekeeping chain (RQ1), although not in all organisations to the same degree (see Figure 4, for a categorisation).

Figure 4. A schematic and non-exhaustive overview of AI uses in different stages of the gatekeeping process.

My interviews suggest that at least up until the more public emergence of ‘Generative AI’ in late 2022, the use of AI was more pronounced on the business side of most of these news organisations, where its integration aligned with – and often was the natural continuation of – existing business cases and audience analysis practices, with more immediate and pragmatic use cases for what AI could deliver at the time. Nevertheless, even before the winter of 2022, AI had gradually extended to other parts of these news organisations as well, particularly in areas characterised by heavy reliance on, or availability of, quantitative data and by higher data and computing literacy, for example in investigative journalism, or the nascent visual- and data-investigation teams at some of the organisations in my sample. However, the advent of AI-as-a-Service models, coupled with the emergence of generative AI technologies, has lowered the barriers to AI integration at many organisations. The ease of access and the reduced complexity offered by these new AI models have, according to my interviews, democratised their use. Consequently, there is a broader uptake of AI across various parts of news organisations that are directly involved in the creation and distribution of news.

AI’s shaping: efficiency, effectiveness and optimisation

A central question is not just where and what but also how AI shapes the gatekeeping process (RQ2). Across the organisations studied, three themes emerged: AI is used to drive efficiency gains, to optimise processes, and to bring about greater effectiveness in achieving certain aims. Often used interchangeably, these concepts differ. While efficiency is about achieving an objective with minimal effort, optimisation is about finding the best way to do so within certain limits, and effectiveness is about the degree to which predetermined objectives or goals are achieved. In practice, this often overlaps but it is a useful distinction to understand the effects of AI in various gatekeeping processes.

The most prominent theme that emerged from the interviews was the use of AI to drive efficiency gains through further automation of existing processes. A majority of respondents indicated that their organisations were using AI approaches to automate repetitive, high-volume tasks that are labour-intensive and time-consuming when performed manually. As one senior editor from a news agency explained,

I started at in 2014. And they had recently embarked on a project to automate the production of corporate earning stories. And that was really [about] the power of basic data to text automation, to make news production more efficient.
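To make the idea of 'basic data to text automation' concrete, the following is a minimal sketch of template-based story generation from structured earnings data. It is an illustration under stated assumptions, not a description of the interviewee's system: the `EarningsReport` fields, the sentence template and the example figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EarningsReport:
    # All fields are hypothetical; real earnings feeds carry many more attributes.
    company: str
    quarter: str
    revenue_musd: float        # revenue in millions of US dollars
    prior_revenue_musd: float  # revenue in the same quarter a year earlier


def describe_change(current: float, prior: float) -> str:
    """Turn two figures into a short phrase describing the relative change."""
    if prior <= 0:
        raise ValueError("prior revenue must be positive")
    change = (current - prior) / prior * 100
    if abs(change) < 0.05:
        return "was flat"
    verb = "rose" if change > 0 else "fell"
    return f"{verb} {abs(change):.1f}%"


def render_story(report: EarningsReport) -> str:
    """Fill a fixed sentence template with values from the structured data."""
    trend = describe_change(report.revenue_musd, report.prior_revenue_musd)
    return (
        f"{report.company} reported revenue of ${report.revenue_musd:,.1f} million "
        f"for {report.quarter}. Revenue {trend} compared with the same quarter "
        f"a year earlier."
    )


if __name__ == "__main__":
    # Hypothetical example values, not data from the study.
    print(render_story(EarningsReport("Example Corp", "Q2 2014", 110.0, 100.0)))
```

The efficiency gain in such a setup comes from the fact that the template is written once, while the structured data can be refreshed for every company and quarter without further manual writing.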

A second example of AI bringing about an efficiency gain comes from a US-based news organisation which sought a way to find hidden laws within congressional bills. According to the interviewee, this process can be done manually, but it is cumbersome and time-consuming. To address this, the organisation’s editorial technology team designed and trained a neural network:

[The system] could find them with quite a bit of efficiency. But the downside was, it meant that a reporter had to now go report on this. It created an entire new line of work, that a reporter was not currently covering. It’s like now I have to cover all of these crazy injected bill laws.

The clearest and most common example of AI being used to drive efficiency increases was around the transcription, translation, and increasingly the re-formatting or co-creation of content. The use of different AI tools, including LLMs and various multimodal foundation models, towards these ends was mentioned by interviewees in all organisations in my sample, with interviewees stressing that the time savings from the use of the technology were significant and measurable.

Meanwhile, a UK journalist at a large newspaper pointed out the merits of automated AI-translation in speeding up both reporting and editing processes:

Automatic translation, it doesn’t have to be perfect for it to be useful. And I don’t think people always necessarily think about it like that, but that is true. If it gives you a gist of it, what’s trying to be said, that’s enough for you to do. And people are aware that that’s not perfect [. . .] If it’s something that’s going to be published or something, you get someone who actually speaks the language to check over it, for example.

Using AI to drive efficiency gains is, however, not limited to the first two stages of the gatekeeping process, access (Stage 1) and selection (Stage 2), but also matters in the later stages of processing (Stage 3) and distribution (Stage 4), as various examples from these organisations demonstrate (see Figure 1). For instance, a broadcaster in Germany has implemented a system that assists their staff to more efficiently find and work with video material from a range of different channels, again something that was possible manually but took more time:

[We have] our own search engine with which we make our content intelligently searchable in order to quickly find videos from our portfolio on a specific topic. This is achieved by first creating a transcription of a video using Speech-To-Text and then further processing this transcription with a Large Language Model. Two AI models are used for our content search. The speech-to-text part is carried out using the Whisper model from OpenAI and an LLM from the German AI company Aleph Alpha is used to embed the transcripts.

The use of this system not only comes to bear in the production of new material, but also in the more efficient and quick distribution of existing content across various online platforms.
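The two-step pipeline the interviewee describes (transcribe, then embed the transcripts for search) can be sketched as follows. This is a simplified illustration, not the broadcaster's implementation: it assumes the transcripts already exist as text, and the `embed` function is a stand-in that uses plain word counts, whereas a production system would call a speech-to-text model and a neural embedding model at these points. All function names and sample transcripts are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Stand-in embedding: a bag-of-words count vector.

    A production system would call a neural embedding model here instead.
    """
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(transcripts: dict[str, str]) -> dict[str, Counter]:
    """Embed every transcript once so that later queries are cheap."""
    return {video_id: embed(text) for video_id, text in transcripts.items()}


def search(index: dict[str, Counter], query: str, top_k: int = 3) -> list[tuple[str, float]]:
    """Return the video ids whose transcripts are most similar to the query."""
    query_vec = embed(query)
    scored = [(video_id, cosine(query_vec, vec)) for video_id, vec in index.items()]
    matches = [(video_id, score) for video_id, score in scored if score > 0]
    return sorted(matches, key=lambda pair: pair[1], reverse=True)[:top_k]


if __name__ == "__main__":
    # Hypothetical transcripts standing in for speech-to-text output.
    index = build_index({
        "v1": "flood warning issued for the river region",
        "v2": "parliament debates the new budget",
        "v3": "river levels fall after flood",
    })
    print(search(index, "flood river"))
```

The design point is that the expensive steps (transcription and embedding) run once per video, after which each search only compares the query vector against the stored vectors.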

These efficiency gains, many of my interviewees argued, do matter, with many minor improvements adding up to make a difference for the whole organisation, although this is not always straightforward and depends on the specific tasks being automated. In some cases, AI could also end up decreasing efficiency, for example, if something produced by AI ends up needing to be laboriously checked by a human because its output cannot be fully trusted – a pressing concern especially with forms of ‘generative’ AI which are prone to producing responses which contain false, misleading, and made-up information presented as factually accurate due to their systems architecture (see Ji et al., 2023). These considerations could also limit the scope to scale up certain products or processes that use automation.

A second consistent theme was that AI is bringing about greater effectiveness and scaling. AI allows these publishers to produce certain results or achieve certain tasks that were previously impossible due to various constraints, including the limits of human abilities. This includes, for example, telling stories from datasets that are too large to be analysed by humans alone (due to the time it would take or the impossibility of cognitively processing the volume of data), or producing and distributing content tailored for specific audience segments or individuals based on their behaviour or preferences at scale. Again, this happens across all stages of the gatekeeping process. For example, one senior AI strategist from a leading US publisher explained the efforts at his organisation around news gathering (Stage 1):

One focus on AI in journalism innovation is on news gathering. So how can you use [. . .] natural language processing for mining these [digital] data sources, structuring them [so] it makes sense of what’s happening in a particular area. Same thing with climate data, so looking at emissions or temperatures or extreme weather events. [. . .] as the world moves to become more quantitative, this is a new type of information [we can use].

Another interviewee at a different US organisation recounted how AI was used to provide story ideas over a longer time frame, too:

We were able to create a machine learning model that is able to give us ideas or leads for multiple stories over a longer period of time. And we might turn it off. We might turn it on. We might ignore the stories when we’re very busy. We might follow up the leads on a high frequency when we’re not so busy.

The use of AI also brings about greater effectiveness where it enables more personalised and targeted distribution of news (Stage 4) or a better understanding of audiences that can inform editorial production, both of which were previously unattainable at scale. One interviewee described the use of AI in audience analytics at their organisation as follows:

Well, it’s crucial because you have a deep understanding of the audiences [thanks to AI], and you can time the. . . the timing of your outreach in the types of promotions and the type of conversion channels or funnels that you can create are very sophisticated at scale.

In all these cases, the capacity of AI systems to analyse large corpuses of multimodal data – whether audience data, archive material, or data gathered for investigations – at scale enabled newsworkers to achieve existing goals (e.g. finding stories, carrying out reporting tasks, and tailoring content distribution) through the application of techno-scientific methods which would otherwise have not been possible due to the scale or complexity of the task. However, effectiveness – while often about scale – extends beyond the same. For example, AI systems to improve copy-editing or detect stereotypes in news content are not about scaling up the task of writing or editing but instead aim to help news workers be more effective on a qualitative dimension – embedding a level of critique into the workflow that was not as easily possible before. The ability to increasingly tap into these previously inaccessible capabilities with the help of AI is reshaping how these news organisations can gather, analyse and distribute news.

Finally, the use of AI leads to increased optimisation – making existing systems or tasks as functional as possible within given constraints – at these organisations for various tasks, especially on the distribution and business side (Stages 3 and 4). A growing number of publishers, including several commercial news organisations in my sample, rely on dynamic paywalls as a core part of their revenue strategy, with these systems individually limiting what online audiences get access to (in comparison to unrestricted access, as is the case with, for example, public service media) but also bringing in new subscribers. Such systems commonly rely on a large number of data points for each individual user – from the time of their visit, the device used, what content they read, for how long, and so forth – to predict how likely they are to become a paying subscriber – and adapt the paywall accordingly.
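The general logic of such a paywall (score each user, then adapt access) can be illustrated with a minimal sketch. Since interviewees did not disclose the mechanics of their systems, everything here is an assumption made for illustration: the feature names, the weights, the logistic scoring function and the thresholds are hypothetical, and a real system would learn its parameters from historical data rather than hard-code them.

```python
import math

# Hypothetical weights; a real system would estimate these from historical data.
WEIGHTS = {
    "articles_read_last_30d": 0.08,
    "avg_minutes_per_visit": 0.15,
    "is_returning_visitor": 0.9,
}
BIAS = -4.0


def subscription_propensity(features: dict[str, float]) -> float:
    """Logistic score in (0, 1): the estimated likelihood of subscribing."""
    z = BIAS + sum(WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def free_articles_allowed(propensity: float) -> int:
    """Map the score to a meter size.

    Assumption for illustration: users judged likely to subscribe see the
    paywall sooner, while casual visitors get more free articles.
    """
    if propensity >= 0.5:
        return 1
    if propensity >= 0.2:
        return 3
    return 6


if __name__ == "__main__":
    casual = {"articles_read_last_30d": 2, "avg_minutes_per_visit": 1, "is_returning_visitor": 0}
    loyal = {"articles_read_last_30d": 30, "avg_minutes_per_visit": 6, "is_returning_visitor": 1}
    for name, user in (("casual", casual), ("loyal", loyal)):
        score = subscription_propensity(user)
        print(name, round(score, 3), free_articles_allowed(score))
```

Whether a publisher tightens or loosens the paywall for high-propensity users is a strategic choice; the sketch fixes one direction only to show how a prediction can be translated into an access rule.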

The news workers interviewed at these publishers showed great caution in not disclosing the precise mechanics of these systems, specifically regarding the algorithms employed or the nature of the data inputs. This reservation was particularly pronounced in relation to their efficacy in improving conversion rates (the percentage of users who take a specific desired action as a result of engaging with news content, for example, non-subscribers becoming subscribers). The interviewees provided estimations of enhanced conversion effectiveness ranging from 2% to 10% relative to a non-targeted approach. While I am unable to independently verify these figures, the fact that across interviews within and between organisations similar figures came up suggests that they are broadly reflective of what these systems can achieve.
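To clarify what an improvement 'relative to a non-targeted approach' would mean if the reported figures are read as relative uplift (an interpretation, since interviewees did not specify), the following sketch works through the arithmetic. The visitor and conversion counts are hypothetical and chosen only to make the calculation easy to follow.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Share of visitors who took the desired action (e.g. subscribed)."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return conversions / visitors


def relative_uplift(baseline_rate: float, targeted_rate: float) -> float:
    """Improvement of the targeted rate over the baseline, as a fraction of the baseline."""
    if baseline_rate <= 0:
        raise ValueError("baseline rate must be positive")
    return (targeted_rate - baseline_rate) / baseline_rate


if __name__ == "__main__":
    # Hypothetical figures: 200 vs 220 new subscribers per 10,000 visitors.
    baseline = conversion_rate(200, 10_000)
    targeted = conversion_rate(220, 10_000)
    print(f"baseline {baseline:.2%}, targeted {targeted:.2%}, "
          f"relative uplift {relative_uplift(baseline, targeted):.0%}")
```

On this reading, an uplift at the top of the reported range corresponds to a small absolute change in the conversion rate, which is one reason such effects are hard to observe from outside the organisation.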

Another central area where AI systems are used to optimise is in audience analytics and distribution (Stages 2, 3 and 4). Using computational methods to sift through user data and interaction trends, many news organisations in this study reported acquiring a more nuanced understanding of audience preferences and behavioural patterns, enabling more optimised content promotion, subscriber targeting and churn reduction. As two interviewees from publishers in the United States and Germany elaborated about their use of machine learning in this context:

We do a lot of audience and traffic analysis and we try to understand every day what stories are performing well and why, and how we can repeat that success so that involves pulling in lots of audience data, performing the analysis, doing the pivot and then trying to statistically assess whether that was what we expected it would do or not.

We saw the introduction of these technologies for distribution, so optimising when you publish stories or building automated AB testing of headlines in packages through machine learning.

While many interviewees reported that editorial judgement remains paramount in content prioritisation, AI-informed audience analytics are providing important directional signals at various commercial outlets in all three countries:

[We will continue to] be very manually-curated and editorially-curated using what those platform editors believe to be the best news agenda of the day. So, we might give them feedback that says this story isn’t performing as well as we think something in position two should be doing, and then it is up to them to say that doesn’t matter.

It should be noted that although my interviewees say that the focus will continue to be manual and ‘editorially human’, they may or may not see (or disclose) how AI content recommendation and related systems in fact creep into this part of the gatekeeping process over time. Again, it was difficult to obtain reliable and independently verifiable information on how big a difference the use of AI for optimisation in the context of content recommendation and distribution makes, apart from the conviction of interviewees across all organisations in my sample using such approaches that it does make a difference. To give one example, the figures that interviewees mentioned for AI-informed recommendation of news fell in the range of a 10–30% improvement in click-through rates (CTR) against a baseline of recommendations made by human editors, which suggests that the effects are real and not just assumed.

Discussion

AI as part of the long history of automation in gatekeeping

The integration of algorithmic systems – and later AI – in news production has a longer history against which the findings presented in this article have to be seen. As a rich body of scholarship across different organisations and media systems has demonstrated, these earlier systems have themselves already altered the traditional understanding and practice of gatekeeping, a concept historically tied to journalists controlling the flow of information from sources to audiences. Previously characterised by a one-way model, where journalists served as the primary arbiters of news production and dissemination, gatekeeping has evolved into a more dynamic and multifaceted process (Blanchett, 2021). While journalists still select, edit, and prioritise news items, work over the last decade has emphasised the growing role of audiences, digital intermediaries and algorithmic systems in this process.

The use of AI, and the findings presented here, fit into this longer trend. Take, for example, the use of AI to increase efficiency – a goal which did not just arise with the arrival of artificial intelligence, but one which can already be found in the deployment of earlier computer systems in the news (Nechushtai and Lewis, 2019; Parasie, 2022). Here, too, the aim was to perform gatekeeping tasks more efficiently and on a larger scale than with, for example, human editors. Likewise, as Hansen and Møller Hartley (2023) argue, the challenging economic realities facing the news industry were and are a key driver of automation adoption – and therefore increasing rationalisation – as is the use of algorithmic systems to optimise processes, for example when tailoring content to search engines and social media platforms. Again, as my findings demonstrate, AI has to be understood variously as a continuation and intensification of these ongoing processes.

AI retools the gatekeeping process

In light of this, it is time to return to the questions set out at the beginning: How does AI reshape the gatekeeping process of the news in terms of production and distribution? My findings suggest that the answer is fivefold:

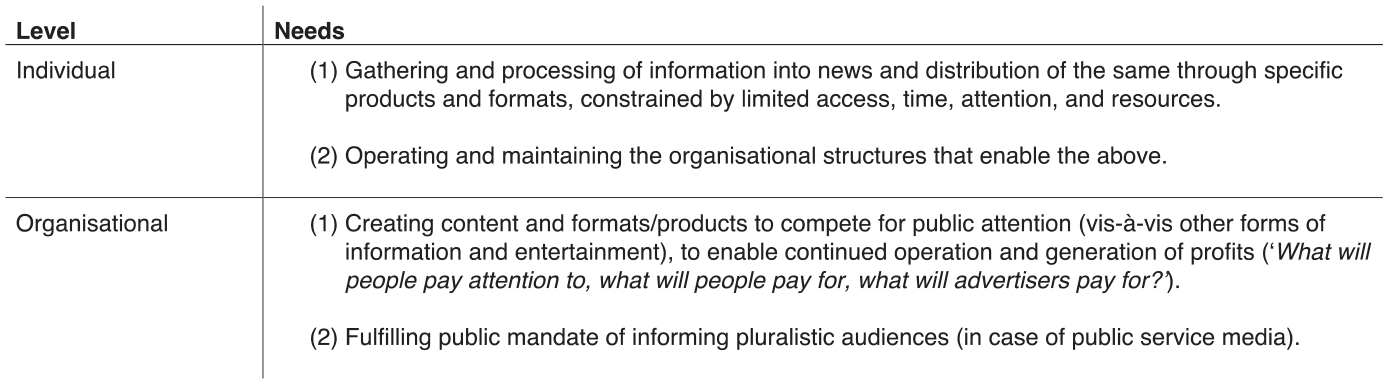

First, AI reshapes the production and distribution of news by providing publishers with new means in the service of achieving certain existing ends (see Figure 5). AI, for now, does not fundamentally revolutionise the essence of journalism. Rather, akin to what Jungherr et al. (2020: 28) have described for the role of digital media in politics AI and what previous studies on the changing nature of gatekeeping have shown, AI can be seen as a form of retooling, where the use of this technology in news organisations for now does not necessarily lead to changes in the fundamentals of why and towards which ends they operate; it mainly reshapes how they are pursued.

A schematic representation of the needs and motivations of news organisations on the individual and organisational level.

Second, this reshaping can often be barely visible up close but becomes apparent when things are looked at in the aggregate and from a distance. Previous studies have demonstrated how the reshaping of the news through technology is often slow and can be difficult to see at first for those involved (see, for example, Hansen and Møller Hartley, 2023; Wu et al., 2019). Partially, this is due to the somewhat opaque workings of some of these systems, partially because only select groups have control over and access to them within news organisations, with many others only getting to see some of the effects, but not the full picture (see, for example, Hansen and Møller Hartley, 2023: 937).

Third, there is no one single AI that imprints itself on and retools the gatekeeping process. Instead, AI is best understood as an assemblage of different techniques, systems, and approaches, loosely grouped together under the definition outlined in the beginning, namely that these systems learn and carry out tasks that were previously thought to be reserved to humans. However, AI increasingly comes to bear across the entire lifecycle of a news item, from information gathering and processing to distribution and consumption, and affects multiple departments within news organisations that are involved in the production and distribution of news. While AI is often discussed in public in the context of editorial work, such a framing loses sight of the larger socio-technical structure in news organisations on which AI comes to bear.

Fourth, the adoption of AI is part of a longer trend of automation in news production and distribution happening across all these countries. This matters with respect to the speed with which AI has been adopted in different parts of news organisations. My data shows that AI is in some areas part of a more natural evolution of existing approaches (as described earlier with recourse to other studies), especially in data journalism and audience and business analytics, and in others more revolutionary, especially in more traditional forms of news production. While recent developments in the field of ‘generative AI’ have accelerated existing efforts, or introduced them wholesale in some organisations, overall, my findings indicate that AI is better understood as part of the broader, gradual digitisation in the news and the gatekeeping process as described by scholars such as Boczkowski (2005) and more recently Blanchett (2021), Nechushtai and Lewis (2019) or Petre (2021). The technological shaping of AI as described here is similar across the range of settings I observed, both in terms of countries and organisations. The findings suggest an isomorphic trend among news organisations with respect to AI, partially as a result of AI’s coercive force. This trend is characterised by analogous motivations, objectives, uses, and effects, with organisations diverging primarily on the basis of individual organisational needs and operational frameworks rather than geographical position. This sign of isomorphism ties in more broadly with historical trends around previous algorithmic systems where news organisations have congealed in their structure and operations (Christin, 2020; Petre, 2021) across media systems.

Fifth, the effects of AI are real, but it is often still difficult to quantify them, and this study does not comment on the size of the effects it describes. However, ongoing efforts within news organisations and in academic research show the promise of providing better answers to questions of gains in efficiency and productivity, as well as quality measured in various ways.

AI and the ongoing rationalisation of the news

Beyond an account of where AI comes to work (in all parts of the gatekeeping process, but to different degrees) and how (by providing new means or tools to achieve certain tasks, completely or partially), what is still missing is an explanation of the shaping power of the technology itself. Added up, what is the overarching effect of this technological shaping? Or to put it differently: What does AI do?

As I suggested in the beginning, a useful theoretical lens to think through this is to see AI as a form of rationalisation in the Weberian tradition. Digital technologies have already extended and deepened the digitalisation and calculability of audiences (Napoli, 2010; Schroeder, 2018) on the one hand and news work on the other (Parasie, 2022). AI, my empirical findings strongly suggest, is another layer in this process: In all three countries analysed here, artificial intelligence further rationalises the work of news organisations and pushes it more strongly towards logics of efficiency, predictability, and calculability. While my analysis did not focus on assessing the different degrees to which these rationalisation tendencies play out in the three countries, the general tendency was notably present throughout.

That this should be so makes better sense when one considers the genesis of AI itself. First, AI as a scientific field and set of approaches is imbued with notions of the rational. The standard model of AI that emerged in the 1940s is founded on the idea that ‘a machine is intelligent to the extent that its actions can be expected to achieve its objectives’ (Russell, 2022: 44). Arguably, this definition is overbroad as it can theoretically include everything from a cement mixer to a Large Language Model – both ultimately machines concerned with certain objectives. It will also likely have to be rethought as specifying objectives completely and correctly becomes more difficult owing to the large number of tasks future AI models are expected to carry out (Russell, 2022: 43). Nevertheless, it is what drives much of AI to this day. This fact alone marks out the technology’s effects in the news as a form of rationalisation.

Second, a central way in which Weber saw rationalisation, as Schroeder (2020) notes, was in terms of calculability and ‘an intensification or extension of applying scientific knowledge’ to an ever-growing set of domains (p. 3), and as my findings show, this can be said for the news, too. AI is a form of scientific knowledge as it is grounded in statistical and computational theories, derived from fields like computer science, mathematics, and cognitive science. Its wide application in news organisations as demonstrated here extends the application of this systematic, scientific knowledge to the traditionally more subjective domain of journalism, where human judgement and intuition long reigned supreme.

Third, the rationalisation of the news and the gatekeeping process through AI becomes, however, most clearly visible in my findings where forms of what Weber called instrumental rationality are concerned: the pursuit of goals in the most efficient and effective way possible. One recurring pattern across these organisations in all three countries is not just that the motives for the adoption of AI predominantly fall under the mantle of achieving more efficient or effective outcomes, but that the actual use of AI (which of course can differ from stated aims and motives) itself seems mostly oriented towards the same. This is also corroborated by recent surveys of the news industry’s motives for – and use of – the technology (see, for example, Newman and Cherubini, 2025).

That increasing rationalisation can be a double-edged sword has been observed in other contexts and we can see this in the context of the news and AI, too. The increasing use of tools and technologies devoted to greater rationality also holds the potential to undermine the ‘substantive human capability for consciously achieving one’s goals’ (Collins, 1994: 84). The pursuit of instrumental rationality also assumes the disregard of other values, ethics, or broader implications of those actions (what Weber called ‘value rationality’) that might conflict with rational logics of efficiency and maximum utility. In other words, the scope of human inputs and outputs is reduced while that of machine inputs and outputs, which are instrumentally calculable rather than value-rational, is expanded. In the news organisations studied here, both play out in how and why they use AI. We also see this, for example, in the establishment of AI guidelines at news organisations, which are set up not just in response to the uncertainty around the technology and its trajectory, but because some of the effects of AI as used in journalism are in tension with some fundamental journalistic norms and ethics (Becker, Simon and Crum, 2025).

Whither the public arena?

The increasing use of AI in these organisations rationalises them overall inasmuch as it disenchants not necessarily their entire world, but existing routines and tasks along the gatekeeping chain that previously used to be the reserve of news workers, creating both an ‘iron cage’ (by structuring and constraining the actions of news workers) on the one hand and an ‘exoskeleton’ on the other that enables tasks which were previously difficult to achieve and renders existing ones more efficient (see also Schroeder, 2018: 32).

This, of course, raises the question of the ‘So what?’ Isn’t it just a form of technology reshaping an industry that has always changed with and through technology? The answer to this is both yes and no. As argued in the beginning, news organisations still play a central – albeit diminished – role as gatekeepers of the public arena, which binds citizens to elites and which acts as a conveyor belt for information, thus playing a central part in the functioning of deliberative democracies. As the gatekeeping process changes, so potentially does the kind of news that ends up in the public arena. AI also restructures the existing position and control various actors (the news industry, technology companies, the public, the state, but also new entrants) have over the public arena, thus ultimately reshaping the same and with it, general informedness and by extension political opinion.

Looking at this again through the lens of AI as a form of rationalisation, two points emerge. First, AI further strengthens those elements in news organisations that have already been rationalised, namely the distribution side and data-oriented practices in newsrooms. By leveraging AI, publishers can tailor their content and services more efficiently and effectively to their audiences, thus not just leading to improved engagement and performance in (online) revenue, but also potentially to either better serving and reaching pluralistic audiences or maximising their overall audience share by tapping into as of yet unexplored audience segments. Meanwhile, the use of AI for improvements in efficiency and effectiveness of news work can lead to and aid the production of better news content (in terms of, for example, rigour, depth and quantity). None of this, however, automatically guarantees citizens will be better informed and that the quality of information within the public arena will improve.

While the rise of ‘material and technological practices [in news production] that strive for [greater] neutrality and factuality’ (Parasie, 2022: 6) has arguably allowed for the better analysis of complex and systematic phenomena, and thus improved the quality of information available to the public, this is not, as I argue elsewhere (2024, doi:10.1080/21670811.2024.2431519), ‘a […] conclusion’, depending instead on ‘decisions made by the set of actors who wield control over the conditions of news work – executives, managers, and journalists’ (p. 37). Similarly, while AI offers the potential for improved audience understanding and content tailoring, its use can also risk prioritising quantifiable engagement metrics over less-easily quantifiable journalistic values (Petre, 2021), potentially skewing journalistic content towards sensationalism or neglecting less quantifiable aspects of public interest (see, for example, Napoli, 2010). The effectiveness of AI in serving diverse audiences is also contingent upon the data and algorithmic systems used, which can be opaque or reflect existing societal biases and power imbalances, thereby potentially reinforcing them rather than diversifying reach.

Whether AI will be used to improve the public arena – both in terms of raising the quality of available news and a structural reinforcement of publishers as providers of factually accurate and trustworthy information – therefore depends not just on the capacity of the technology itself, but also on the decisions of organisations and news workers to use it towards these ends. On its own, AI does not guarantee either.

Second, structurally, AI shifts the balance of power within the public arena. Already, the technology empowers certain actors which wield control over the public arena more than others. As Simon (2022, 2023) has found, the increasing use of AI at publishers goes hand in hand with an increase in control of large technology firms – for example, Google, Microsoft, but also OpenAI or other AI firms – over the news, as these largely control parts of the technology, important infrastructure and the conditions for the use of both. As gatekeeping gets reshaped by AI, ‘outsiders’ in the form of these companies gain more control over the technical elements of this process, too. At the same time, news organisations’ use of their services ultimately also often ends up improving the technology, given the learning that is central to AI and requires vast amounts of data, thus allowing technology companies to build better general-purpose AI products and services in return. This brings us to the second facet. Technology companies also deploy AI at scale on their own services and products to become more efficient in handling information internally and in structuring and providing information, including news, to the world at large. Platforms are already among the most important gateways for audiences to news and information writ large. Two insights follow from this. First, it serves as a reminder that the way news organisations use AI is one part of the story. How platforms, technology companies, and other actors empowered through the tools and services of the former use AI will matter just as much, if not more, in how the public arena will be reshaped in the years to come.
Second, a dual strengthening of the position of technology companies through AI could not only grant them greater control over the public arena and its conditions; it would also further empower a set of actors which have proven difficult to democratically control and regulate – and which compete with the news for audiences and gatekeeping power. But for the public arena to function properly, its public ‘assessibility’ and transparency need to be ensured (Jungherr and Schroeder, 2023); it requires its workings, adherence to norms, and infrastructures to be open for scrutiny and debate by all, including academics, elites and the public – and whether this is guaranteed in a future where it is more strongly mediated by AI is so far unclear.

Conclusion

This study has contributed to existing research by addressing key questions around the introduction, use and effects of AI in news organisations with rich data from three countries, connecting empirical evidence with a theoretical framework that explains and frames the phenomena. This article’s main contribution is to provide the theoretical lenses of gatekeeping and rationalisation, which jointly tie together the disparate elements we can observe with the increasing use of AI in news, something that has so far been lacking.

This study has limitations. First, while my selection allows for an examination of three different media systems and organisations with significant global influence, it also narrows the study’s scope, potentially overlooking the dynamics present in media systems in the Global South or in other regions with differing regulatory, economic, and cultural contexts. Second, the exclusion of regional and local news organisations narrows the scope further. Local and regional media, with their distinct economic and technological challenges, audience relationships, and resource constraints, could have a harder time integrating AI to the same degree as larger organisations, leading to a reduced impact of the technology. At the same time, some smaller news organisations might have the advantage of flatter hierarchies and a greater ability to experiment, which would suggest a similar or greater effect than elsewhere. Subsequent studies should explicitly investigate these contexts to find answers to these and other questions. Qualitative interviews also have limitations in describing and assessing journalistic practices, mainly because of the gap between self-reported behaviours and actual conduct. In addition, while this study can describe the channels by which AI reshapes the gatekeeping process and where, it is limited in what it can say about actual effects and their size. Only further research will be able to establish how big a difference AI makes in the situations and settings I describe.

While there is no single AI system dominating newsrooms, various AI systems reshape the news as part of a broader and longer historical trend towards more automation and a reshaping of the entire gatekeeping process, providing publishers with new means in the service of achieving existing ends. Though the precise effects are hard to quantify, AI pushes news organisations and the public arena towards logics of greater efficiency, predictability, and calculability. In rationalising the news, it also reconfigures who holds power in the news ecosystem.

Acknowledgements

This research would not have been possible without all the news workers at various organisations who were willing to lend me their time and talk to me. I am also greatly indebted to Michelle Disser, Ralph Schroeder and Ekaterina Hertog for helping to shape the article from the outset, taking the time to read various drafts and providing excellent comments. Caitlin Petre and Carl Benedikt Frey, too, provided excellent feedback. Finally, I am grateful to Steve Jones and the anonymous reviewers for their very helpful comments which made this a much better paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: The author would like to thank the Leverhulme Trust and the OII-Dieter Schwarz Scholarship for supporting his doctoral studies. As well, the author gratefully acknowledges support for this article from a Knight News Innovation Fellowship and grant at Columbia University’s Tow Centre for Digital Journalism, the Minderoo-Oxford Challenge Fund in AI Governance and the Balliol Interdisciplinary Institute grant. Any opinions, findings and conclusions or recommendations expressed in this material are those of the author and do not necessarily reflect the views of these bodies.