Abstract

This study, based on 841 surveys of 18- and 19-year-old teenage girls who live, work, or attend school in the Greater Baltimore area, investigated their social media use and the kinds of harassment they are subjected to on different platforms. Racialized sexual harassment was rampant, with girls of color inundated with requests for nudes and sexual comments, especially on Facebook. Participants reported facing harassment on Instagram irrespective of race; prior studies have shown that Instagram has a distinct bias against users of color. Harassment directed at girls of color promoted harmful racial stereotypes, and American Indian participants were also deeply affected. Unrelenting online harassment made participants feel uncomfortable and uneasy (45%), racially discriminated against (40%), and hated (12%) on the platforms they use to socialize and seek information on topics of interest.

Introduction

As of 2018, 88% of young adults aged 18 to 29 years reported having used some form of social networking service (SNS), including Facebook, Instagram, or Twitter, with about 81% of them using these platforms on a daily basis (Rideout & Fox, 2018; Smith & Anderson, 2018). Academic publications, news reports, and crime databases globally have identified that more than 50% of teenage girls are sexually harassed on social media platforms (Francisco & Felmlee, 2021; WionNews, 2022).

The Federal Bureau of Investigation (FBI) estimates that as many as 750,000 child and teen sexual predators are active online in the United States and may engage in the sex trafficking of children and teenagers (Crimes Against Children/Online Predators, 2022). Maryland, which has sizable populations of Black residents and other traditionally marginalized minorities, illustrates the problem: it ranks 44th out of 50 states in providing prevention, resources, and support to victims and survivors of child sex trafficking (Bernard, 2021). In February 2022, three men in Maryland, one from the city of Baltimore, were charged with using social media accounts and mobile phones to coerce a minor into sexual activity (U.S. Department of Justice, 2022). The limited resources for victims and survivors, together with the increase in racial and sexual violence on social media platforms, suggest that online training that empowers teenage girls and young adults to identify, resist, and challenge racialized and sexualized misogyny is a much-needed intervention. Because there is little research assessing online racialized sexual violence and its impact on teenage girls from marginalized communities, this study surveyed 18- and 19-year-old girls who live, work, or attend school in the Greater Baltimore area (Baltimore City, Baltimore County, and Howard County, Maryland) to investigate their social media use and the types of harassment they are subjected to on social media.

Literature Review

Youth and Social Media

Research published by the Centers for Disease Control and Prevention (CDC) in 2023 indicates that “America’s teen girls are engulfed in a growing wave of sadness, violence, and trauma” (George et al., 2023). This study, the first of its kind conducted after the pandemic, drew on high school students’ accounts of growing up in a culture heavily mediated by social media, which promotes hate and bullying and imposes pressures from hard-to-attain beauty standards, all further exacerbated by the isolation of the pandemic.

Abuse Is Intersectional

Social media is heavily implicated in the politics of gender, identity, and race (Litchfield et al., 2018). As Senft and Noble (2013, pp. 107–125) have stated, “Racism is part of life on the internet; in a networked world, it is now a global reality.” In 2018, Amnesty International published a study of gender-based abuse on Twitter (now X), concluding that on the platform, racism and misogyny go hand in hand. Online abuse toward women and girls of color is racially stereotypical, focuses on their attractiveness and promiscuity, and reinforces traditional negative stereotypes (Francisco & Felmlee, 2022). Black girls navigate the online world acutely aware of this bias and know that they are perceived as less attractive than their White counterparts (Weser et al., 2021). As heavy consumers of social media, young Black girls often find identity and self-development support online; at the same time, the stereotypical portrayals of them as hypersexual and aggressive that abound offline have also been reproduced on social media (Charmaraman et al., 2022). Social media may have enhanced the public participation of girls and women, but it has also intensified the gendered public and private negotiations they must navigate, since being female marks them out as objects of judgment and scrutiny (Salter, 2016). An ADL report published in 2023 showed that hate against Asian Americans increased in 2022, but Black or African American individuals experienced the highest rates of harassment among racial groups. Studies have shown that women of color often respond by calling out trolls and countering lies and hate while caring for and validating the experiences of other women, especially women of color (Ortiz, 2023). Little, however, is known about the responses of 18- and 19-year-olds. Instagram and TikTok are among the most popular platforms among Black teenagers, with users logging on multiple times a day (J. Anderson, 2022), yet these platforms are biased against Black teenage users (Broadway, 2022).

Through a thorough analysis of media, text, and paid online advertising, Noble (2018) argues that racism and sexism mediate what we find and see online. The internet is not a level playing field when it comes to race, and this discrimination is exacerbated because search engines and digital media platforms are built and operated by large corporations focused on profit. For example, in 2020, Black TikTok creators complained that their content was suppressed after they posted material supporting Black Lives Matter (McCluskey, 2020).

This is similar to the experiences of Asian American adolescents, who have excellent access to the internet, and therefore to digital media platforms, but are also exposed to extreme racial discrimination earlier than adolescents from other minority groups (Huynh et al., 2023). Asian Americans also use social media differently from other ethnic groups, as indicated by surveys by the Associated Press-NORC Center for Public Affairs Research (2020).

For African American, Latinx, and Asian American girls and women, these aspects of harassment have important implications given the trauma that young people suffer. Studies have revealed that 10%–25% of adolescents of color have experienced individually targeted online racial discrimination and that 33%–70% have experienced online racial discrimination vicariously (Duggan, 2017; Rideout et al., 2016). The Nation’s Report Card (National Assessment of Educational Progress, 2019) has indicated that Black girls may have low technical literacy and proficiency relative to their peers in the United States, yet the Associated Press-NORC Center for Public Affairs Research has shown that Black teens are extremely active on social media (AP-NORC, 2020) and even adopt social media at younger ages than other ethnic groups (Zhai et al., 2020). Mobile technology use is particularly high among Asian American, African American, and Latinx communities (Smith, 2020). Education has little to no influence on cyber safety (St. George, 2023). Black and Hispanic youth have reported high levels of exposure to violent and sexual content (Stevens et al., 2019). Compared with their White or Hispanic counterparts, Asian Americans aged 18–24 years are significantly more likely to keep incidents of cyberbullying to themselves to save face and prevent embarrassment (Charmaraman et al., 2018).

Social media on smartphones can also be used to enhance socialization and as a tool for activism and outreach (Xue, 2021). However, using social media for activism often exposes young people to exceedingly severe harassment, known as “trolling,” which exacerbates the mental health issues that afflict young people from traditionally marginalized socioeconomic groups and negatively shapes their understanding of how social media works and how they respond to it (Broadway, 2022). Studies have confirmed that violent content negatively affects children from all communities and ethnicities, with children of color at a greater disadvantage (Kirk, 2022), and that teens of color may not be getting the security they need online. For example, among Asian American youth, especially Chinese Americans, the pandemic saw an accelerated rise in racial profiling and animosity (Croucher et al., 2020).

Cyberbullying and Harassment

The main appeal of social media is built on interactivity, which encourages sharing private details and extending one’s reach beyond geographical boundaries (Papacharissi & Gibson, 2011). Researchers have argued that social networking sites have become important components of the grooming process, allowing groomers to make contact, develop trust, and then inject sexual themes into communication (Choo, 2020). Although platforms such as Instagram, Twitter, Facebook, Snapchat, and TikTok require users to be 13 years or older, teenagers have reported that young users circumvent this requirement by using false birthdays to create accounts (J. Anderson, 2022). Often this happens with parental consent, with parents persuaded through written essays or presentations (J. Anderson, 2022). Surveys have found that 62% of Americans considered online harassment a problem and wanted social media platforms to take more considered action to prevent it (Pew Research Center, 2020). Kindred by Parents, a community support group for Black parents, contacted social media platforms such as Snapchat, TikTok, and Instagram to understand the safety precautions they had in place to protect users of color (Broadway, 2022). According to its report, only Snapchat had a statement on the “intersection of being Black and a teen through its policies.” Separately, a 2022 poll from Piper Sandler found that teenagers preferred Snapchat over Facebook (Beer, 2022). On TikTok, Black girls find community support but also risk extreme racial and sexual hatred (Whaley, 2020), especially on content that celebrates the experiences of Black women. The equity team at Instagram “was formed to address the challenges that people from marginalized backgrounds may face on Instagram,” and it specifically focuses on concerns raised by Black users and communities on the platform. It also seeks to prioritize “promoting fairness on the platform through our technology and automated systems.”¹

There is growing awareness of the mental health impact of social media platforms, and even schools are now taking on media corporations. For instance, following a lawsuit led by Seattle Public Schools, school districts in Pennsylvania, Florida, New Jersey, and California also went to court, suing technology companies over youth mental health problems (St. George, 2023). Much more research is needed, however, to understand how teenagers of color use digital platforms and how they respond, converse, and participate online (Atske, 2021; M. Anderson & Jiang, 2018). A scoping review of online abuse of women (Watson, 2024) shows that, because the issue is complex and multidimensional, addressing it effectively requires focused personal, societal, and organizational action. As Schoenebeck et al. (2021) have shown, supporting survivors of online hate and finding ways to deter online aggression is important but complex, since measures that help some racial and ethnic groups may not help others.

Some studies have examined social media encounters through an intersectional lens, but media research generally tends to generalize from White populations (Charmaraman et al., 2022). Lauricella et al. (2015) found that adolescents of color spend over 4 hr per day using mobile phones and other forms of media, more than their White counterparts; it is therefore imperative to understand how racially motivated discriminatory practices toward them operate online. To amplify these left-out voices and illuminate the diverse identities in digital media research (Stevens et al., 2017), this study focused on the Baltimore region, analyzed the involvement and experiences of teenagers of color, explored their understanding of how social media works, sought to determine whether they know how to keep themselves safe online, and characterized their digital platform experiences.

Setting the Context: Baltimore

Baltimore is the most populous city in the US state of Maryland, and the Greater Baltimore area studied here comprises the demographically diverse Baltimore City, Baltimore County, and Howard County (U.S. Census Bureau Facts, 2022). The largest ethnic group is Black (60.9%), followed by White (27.3%), Asian American (7.7%), and Hispanic (5.6%). Baltimore has one of the highest crime rates in the United States, and 15.3% of Baltimore city/county families live in poverty (U.S. Census Data, 2022). Youth Risk Behavior Surveillance System (YRBSS) data from 2019 indicate that a high percentage of young people in Maryland have reported being bullied via texts and social media platforms such as Facebook and Instagram. In Maryland, cyberbullying, which includes elements with “intent to harass, alarm or annoy,” is a criminal misdemeanor. In 2019, Governor Lawrence J. Hogan Jr. (Republican) signed Grace’s Law 2.0, which gives families in Maryland exceptional protections against the online harassment of their children (DePuyt, 2019). The law is named after 15-year-old Grace McComas, who died by suicide after being bullied online. The American Civil Liberties Union criticized the law for focusing on “communication as opposed to conduct” (WBOC, 2019). While bullying in schools might have decreased, cyberbullying through various online channels remains an issue (Van Asdalan, 2021). A Cyberbullying Research Center (2019) report found that 41.5% of those interviewed were survivors of cyberbullying. Maryland is cognizant of this and has programs such as Digital Kindness Week and the Baltimore County Anti-Bullying Task Force to combat it. Recently, the Maryland Kids Code was signed into law, requiring digital platforms to provide privacy and protection for children by default. Teenagers aged 18 to 19, however, fall outside its scope. It nonetheless remains important to determine how effective such measures are in improving the participation and presence of youth of color online.
Baltimore’s ethnic composition provides an excellent milieu to explore these questions, accordingly contributing to a growing body of research examining social media pursuits among communities of color and increasing our understanding of how being social and online contributes to and moderates their lived experiences. It is in this light that the main research question of this study emerges: What kinds of online harassment do teenage girls of color experience in the greater Baltimore area?

Methods

This study, based on an anonymous survey, investigated the extent of racialized sexual violence on social media platforms experienced by adult teenage girls, specifically girls of color, living, working, or attending school in the Greater Baltimore area of Maryland, which includes Baltimore City, Baltimore County, and Howard County. Data collection for this project was approved by the Institutional Review Board. Participants were a convenience sample of adult teenagers aged 18 and 19; the institutional review board required that the sample be limited to adult participants. We designed the survey to include both descriptive, open-ended qualitative questions and quantitative items, giving participants space to reflect on their experiences of sexual and racial harassment and moving toward a mixed-methods design. This design also helped us triangulate participants’ responses. Researchers have defined mixed methods as an approach that draws on more than one way of knowing about a topic to achieve a better understanding (Burke et al., 2007; Greene, 2006). Combining qualitative and quantitative methods has proven useful for obtaining richer data through triangulation (Johnson et al., 2007).

The data measures included race, age, ethnicity, the number of hours spent on social media sites, and the types of social media platforms used.

To characterize the online harassment that teenage girls of color experience in the Greater Baltimore area, the survey asked questions related to sexual and racialized harassment, including the following (among others):

“Has any text, photo, video, link, GIF made you uncomfortable on social media platforms?”

“Were they linked to your race?”

“Have you received direct messages (DMs) from strangers that mentioned race or activities that made you uncomfortable?”

The survey, created on Qualtrics, had 38 questions combining Likert-type scale, multiple-choice, and open-ended items on the use of social media sites and experiences of racialized sexual harassment, with a focus on differences in cultural values in participants’ social media experiences (Litt et al., 2020).

Between February and May 2023, the survey link was shared on the researchers’ social media accounts (Facebook, Twitter, LinkedIn, and Instagram), as well as on one researcher’s institutional website and listservs.

Participants could sign up through a separate link to enter a raffle for US$50 gift cards, awarded to 100 participants.

The survey received 1,158 responses, of which 841 were valid, with 65% of these from girls of color. Reasons for invalid responses included the following:

Incomplete answers: because of Institutional Review Board (IRB) requirements, we could not make any question mandatory;

Not accepting the consent form; and

Not responding to the questions on race or age.
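The three exclusion reasons above amount to a simple validity filter. The Python sketch below illustrates one way such screening could be expressed; it is not the study’s cleaning procedure. The record fields (`consent`, `race`, `age`, `items_answered`) and the `min_items` completeness threshold are hypothetical, since the section does not state how many unanswered items made a response incomplete, and the sample records are invented.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SurveyResponse:
    # Hypothetical fields for illustration; a real Qualtrics export
    # uses its own column names.
    consent: bool            # accepted the consent form?
    race: Optional[str]      # None if the race question was skipped
    age: Optional[int]       # None if the age question was skipped
    items_answered: int      # how many of the 38 items were answered


def is_valid(r: SurveyResponse, min_items: int = 1) -> bool:
    """Apply the three stated exclusion reasons.

    min_items is an assumed completeness threshold, not a value
    reported by the study.
    """
    if not r.consent:                    # did not accept the consent form
        return False
    if r.race is None or r.age is None:  # skipped the race or age question
        return False
    if r.items_answered < min_items:     # incomplete answers
        return False
    return True


def valid_responses(responses: List[SurveyResponse],
                    min_items: int = 1) -> List[SurveyResponse]:
    """Keep only responses that pass every exclusion check."""
    return [r for r in responses if is_valid(r, min_items)]


# Invented records, NOT study data.
sample = [
    SurveyResponse(consent=True, race="Black", age=18, items_answered=38),
    SurveyResponse(consent=False, race="Asian", age=19, items_answered=38),
    SurveyResponse(consent=True, race=None, age=18, items_answered=20),
    SurveyResponse(consent=True, race="White", age=19, items_answered=0),
]
print(len(valid_responses(sample)))  # prints 1
```

Because no question was mandatory, the completeness threshold is a judgment call; keeping it as an explicit parameter makes that choice visible when the cleaning step is reported.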

Following is the racial breakdown of the survey participants:

Measures and variables: The following types of variables were measured both quantitatively and qualitatively in the survey to address the research questions:
• Demographic and identity variables: age, race, location, and ethnicity.
• Platform usage variables: use of different social media platforms, preference among platforms, frequency of use of each platform based on preference, and overall frequency of social media use.
• Harassment experience variables: frequency of harassment on different social media sites, knowledge of how to report harassment on different platforms, and the type of sexual and racial harassment on different social media sites.
• Post-harassment support-seeking variables: comfort in reaching out to a trusted circle of individuals such as parents, friends, or teachers, that circle’s knowledge in providing advice and recommendations, and the outcome of implementing that advice.

Results and Analysis

The survey indicated that participants’ use of social media platforms differed by race. For example, in a rank-order question on preferred social media sites, the majority of Asian girls used Snapchat, while the majority of African American girls used Facebook and Instagram. The main research question motivating this study concerned the types of online harassment that teenage girls of color experience in the Greater Baltimore area, and the results showed that most girls of color reported being sexually harassed on social media platforms, in forms ranging from private messages to nude pictures.

Other themes that emerged from the data are explained in the following sections.

Popular Social Media

Concerning the type of social media used by adult teenagers, on average, girls of color used Facebook, Snapchat, and Instagram the most. Asian American teenage girls used Snapchat the most; American Indian teenage girls used Facebook the most; Black teenage girls used Facebook, Instagram, and TikTok the most; and White teenage girls used Facebook the most. Most participants were college students, and White female college students used both Facebook and Instagram to follow information on sororities.

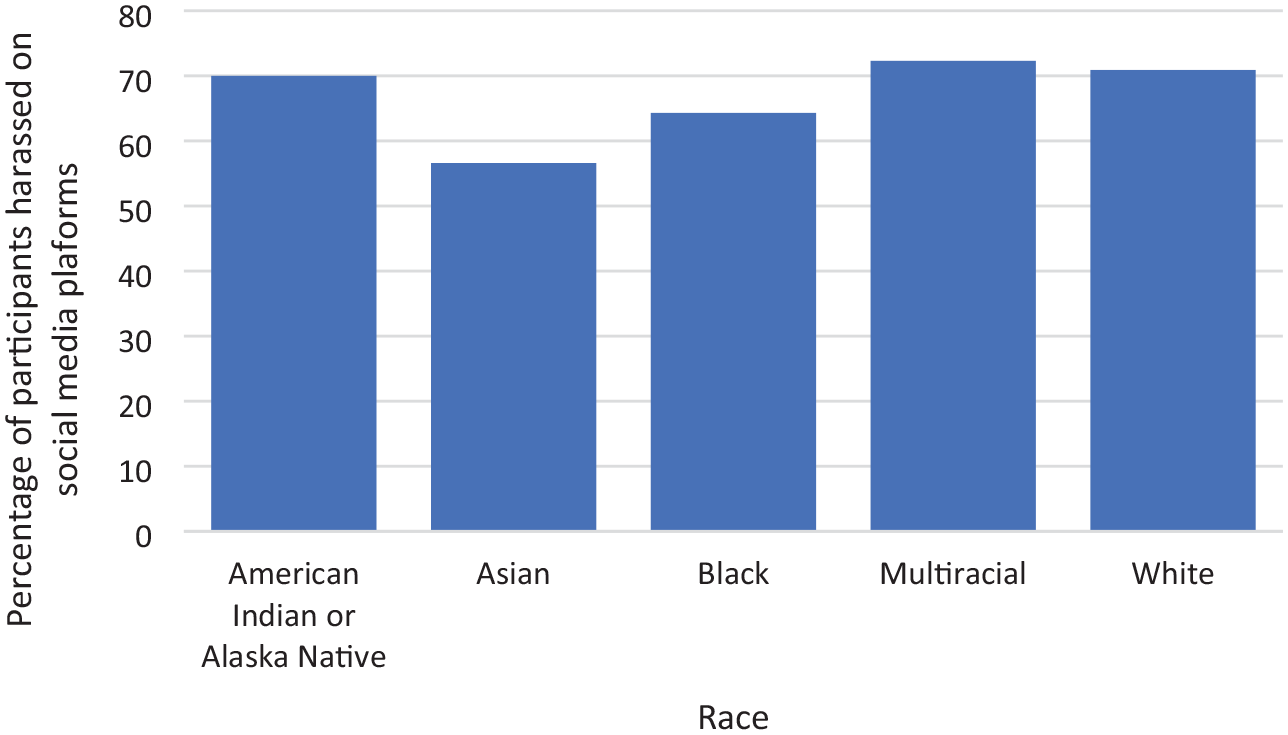

The data suggested that across all races, teenage girls aged 18 and older experience sexual harassment on social media platforms. Figure 1 shows responses to the question of whether participants had been harassed on social media platforms; participants chose from Yes, No, or Unsure. A considerable percentage of Asian participants chose Unsure, whereas American Indian and multiracial participants predominantly chose Yes.

Percentage of participants who were harassed on social media, by racial identity.
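Figure 1 reports, for each racial identity, the share of participants answering Yes, No, or Unsure. A minimal Python sketch of that kind of tabulation follows. The records are invented placeholders rather than the study’s data, and skipped answers (recorded here as `None`, an assumption about how missing responses are stored) are excluded from each group’s denominator because no survey item was mandatory.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Optional, Tuple


def percent_by_group(
    records: Iterable[Tuple[str, Optional[str]]],
) -> Dict[str, Dict[str, float]]:
    """Return {group: {answer: percent}}, rounded to one decimal place.

    Each record is a (racial identity, answer) pair. Answers of None
    mark a skipped item and are left out of the denominator.
    """
    counts: Dict[str, Counter] = defaultdict(Counter)
    for group, answer in records:
        if answer is None:  # item skipped; questions were optional
            continue
        counts[group][answer] += 1

    table: Dict[str, Dict[str, float]] = {}
    for group, tally in counts.items():
        total = sum(tally.values())
        table[group] = {
            answer: round(100 * n / total, 1) for answer, n in tally.items()
        }
    return table


# Invented example records, NOT the study's data.
records = [
    ("Asian", "Unsure"), ("Asian", "Yes"), ("Asian", "Unsure"), ("Asian", None),
    ("Asian", "No"),
    ("Multiracial", "Yes"), ("Multiracial", "Yes"), ("Multiracial", "No"),
]
print(percent_by_group(records))
```

Reporting each group’s denominator next to its percentages would also show how many participants in that racial group actually answered the item.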

The following data relate to our core research question, “What kinds of online harassment do teenage girls of color experience in the greater Baltimore area?”

Most teenage girls also agreed that they were harassed on Instagram irrespective of race, with the proportion among American Indians and Alaska Natives particularly high.

When the participants were asked about the platforms where they were harassed the most, Instagram topped the list, as seen in Figure 2, irrespective of their racial identity. The Likert-type scale options for this question were:

The types of sexual harassment included receiving DMs, being sent nude photographs, solicitations for sexual favors, and comments on body image. Most participants reported that comments on body image occurred on Facebook, Instagram, and Twitter. Most comments focused on what the girls wore and how their bodies looked in their clothes; for instance, several participants reported receiving DMs describing their breasts and other body parts.

Aggregate data of the social media platforms on which the participants were sexually harassed.

Racialized Online Sexual Harassment

When asked whether race and sexually explicit comments were mentioned together in chats and comments, all participants of color acknowledged that this was an overwhelming part of the discourse directed at them, as seen in Figure 3.

The responses to the mention of race in direct messages and chats were categorized by racial identity.

It is important to note that not all participants responded to all questions. For ethical and compliance reasons, and as directed by the IRB, participants were not required to answer a question before proceeding to the next item in the survey.

In responding to how racial and sexually harassing comments on social media made them feel, the participants indicated the following:

The researchers and a research assistant coded the open-ended responses separately and then analyzed their codes together to compare them. Three themes emerged from the qualitative responses on the kinds of direct message content that made participants feel uncomfortable in their social media engagement: racist comments and messages; violent sexual messages, including pornography; and sexually suggestive messages.

This research focused on the qualitative data from the survey, with a descriptive analysis of participants’ online experiences. We did not test hypotheses or conduct correlation analyses, as the focus was on the descriptive themes in participants’ accounts of sexual and racial harassment on social media.

Discussion and Conclusion

Racialized sexual harassment is a growing phenomenon on social media platforms that is often overlooked because of a lack of data on teenage girls. Teenagers are a growing and consistent population of social media users, so gender- and race-based harassment must be studied in depth as part of technology-based research. Awareness of the issue is growing: policymakers and advocacy groups are urging major technology companies to recognize the detrimental impact social media is having on teens nationwide and emphasizing the need to educate teens on cyber safety and security. President Joe Biden raised this issue in the 2023 State of the Union address, calling for legislation to protect children’s privacy and safety online. Similarly, the UN’s 67th Session of the Commission on the Status of Women (2023) focused on an open, safe, and equal cyberspace for girls and women. As teenagers of color spend more than 4 hr a day on phones and social media platforms, it is important to understand the kinds of responses and racially retaliatory messages and practices they encounter online (Lauricella et al., 2015; Marwick & Boyd, 2014). Baltimore’s ethnic composition provides a fitting setting to explore the online experiences of its diverse communities and to contribute to a growing body of research that goes beyond the mental health impact of social media platforms to understand how being social and online affects the quality of participation (M. Anderson & Jiang, 2018).

The data from this study provide an analysis of adult teenage girls facing racial and sexual harassment on social media platforms. Participants’ responses to racist and sexually harassing experiences on social media are similar to Ortiz’s (2023) findings that White women spread awareness by focusing on emotional impact, whereas women of color “call out” harassers with facts. However, we found that Asian female teenagers were the least likely to report harassment to the social media platform. In their qualitative responses, Asian teenage girls shared that they chose to ignore the perpetrators in the hope that the harassment would stop; in most instances, however, it did not. Conversations with family and friends comforted them and encouraged them to ignore the harassment first, which is unsurprising given recent research indicating that Asian Americans are the least likely to report harassment to authorities (Lantz & Wenger, 2022).

Compared with their White or Hispanic counterparts, Asian Americans aged 18–24 are at significantly greater risk of being cyberbullied and of keeping such experiences to themselves to save face and prevent embarrassment (Charmaraman et al., 2018).

While policymakers have identified the issues surrounding technology-facilitated gender-based violence (TFGBV), limited primary source data are available for investigating them in depth. Our participants’ reactions ranged from anger to feeling discriminated against when racist and sexist comments were used to denigrate them, which creates a barrier to girls of color using these spaces for their well-being and social progress. For instance, one participant mentioned that “Some of the unkind comments made me self-doubt,” and another shared, “Other people’s comments can affect your mood,” showing that online racialized sexual harassment affects the overall well-being of female users.

Consistent with the literature, our findings show that Black and Asian American teens are extremely active on social media (AP-NORC, 2020), using Facebook, Snapchat, and TikTok; Asian American teenagers used Snapchat and Facebook more than other groups did, while Facebook was clearly the platform of choice among White teenage girls. A 2023 CDC report indicated that “America’s teen girls are engulfed in a growing wave of sadness, violence and trauma” (George et al., 2023), and our data showed that across all races, teenage girls aged 18 and older felt that they were sexually harassed on various platforms.

Instagram was the platform on which our respondents said they were most harassed, irrespective of race. Studies have shown that Instagram and TikTok are very popular among Black teenagers, with users logging on multiple times a day (J. Anderson, 2022), but these platforms have identifiable biases against Black teenagers (Broadway, 2022), and Noble (2018) has shown that keyword searches for “Black girls” surface negative stereotypes and hypersexual imagery. Given research showing that nearly 81% of teenage users use some form of SNS nearly every day (Rideout et al., 2018), this harassment is clearly representative of “a crisis in American girlhood” (George et al., 2023; Pedalino & Camerini, 2022). In 2020, a study found that 57% of females aged 18 to 34 years had received sexually explicit images (M. Anderson & Vogels, 2020; Online Hate and Harassment: The American Experience, 2023), which is in line with our findings of harassment in the form of requests for nudes and sexual favors, along with unwanted comments on body image. Comments on body image occurred mostly on Facebook, Instagram, and Twitter, indicating, as Amnesty International has shown, that certain social media sites are places where “racism, misogyny, and homophobia are allowed to flourish basically unchecked” (Amnesty International, 2018). Prior research has shown that social media has enabled women and girls of different races to participate more publicly, but the gendered harassment they are subjected to reduces the value of this participation, opening them up to greater judgment and scrutiny (Salter, 2016). Social media today is an integral part of communication that encourages development and self-expression and thus deeply influences how youth perceive society and evolve (Charmaraman et al., 2022).
Violence affects all communities, but the sexual violence that Black and other teenagers of color encounter online (Kirk, 2022) shows that they are not being provided the safety features they need on social media platforms. Sexual harassment made our participants feel uncomfortable and uneasy (45%), racially discriminated against (40%), and hated (12%) online. Social media platforms clearly exacerbate the politics of race and gender (Litchfield et al., 2018), leaving vulnerable communities open to harm. It is also apparent that racial stereotypes of Black girls as hypersexual have been carried over online (Charmaraman et al., 2022). This raises important questions about the motives of social media platforms and their ability and actions in keeping users safe. Snapchat had a statement on the “intersection of being Black and a teen through its policies” (Broadway, 2022), and in our data, Snapchat was the platform on which teenagers were harassed the least. Instagram, where harassment crossed racial boundaries, has an equity team that “was formed to address the challenges that people from marginalized backgrounds may face on Instagram.” Although Instagram has publicly declared “promoting fairness on the platform through our technology and automated systems”² a priority, its users clearly have a different experience. Facebook, which owns Instagram, did little to curb Instagram’s impact on young girls’ body image issues and still appears to do little in this regard (Wells et al., 2021). Evidently, the internet is not a level playing field when it comes to race, and this discrimination is distinctly exacerbated because digital media platforms are managed by corporations that prioritize profit.

The study has certain limitations. Because it is set in Baltimore, its generalizability is narrow. Its sample size at the time of writing was also limited, but the findings provide critical insights into gaps in the understanding of racial and sexual harassment of girls of color. There is limited understanding of how social media platforms support teenage girls in protecting themselves against racialized sexual harassment. Feelings of discrimination and hate add to participants’ anxiety and harm their mental health, as they are forced to deal with unwanted sexist and racist paradigms on social media platforms. Most participants agreed that they enjoyed spending time on social media engaging with favorite topics such as entertainment videos, cooking, and fashion. However, they were also scared and concerned about the constant harassment. As the data show, American Indian participants were also deeply affected. In another study, Asian Americans aged 18–24 years were found to be at greater risk of significant cyberbullying than their White and Hispanic counterparts, and they often kept such experiences to themselves, preferring to suffer in silence to save face (Charmaraman et al., 2018). This aspect needs further exploration, and we invite scholars to probe such questions in future research.

Footnotes

Acknowledgements

We thank Kristin Lloyd for her assistance in the first round of data collection and analysis for this project in Spring 2023.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research is funded by an internal grant from the School of Emerging Technology, Towson University.