Abstract

Many scholars find the peer-review process to be a puzzling, non-transparent, and subjective exercise. Many emerging scholars also learn about the peer-review and publishing process through painful and time-consuming trial and error while still students or as early-career researchers rather than through formal training or guided supervision. Yet many pitfalls exist in this process for new and veteran scholars alike. With this study, grounded in the communication field, we aim to pull back the curtain on this opaque process and assist scholars in their publishing ambitions while also providing suggestions, primarily for journal editors and those who train future reviewers, about how the peer-review process can be improved for collective benefit. To do so, this grounded theory study reviews a year's worth of reviews from a communication journal to explore which issues reviewers identify within the submitted research, to explore how the reviewer feedback reveals their implicit understanding of their role in the peer-review process, and to identify how clear reviewers and editors are regarding which feedback is most important. Taken together, this allows for an understanding of how reviewers and editors engage in the social construction of research. The results inform the training of communication scholars, reviewers, and editors.

Keywords

Many scholars find the peer-review process to be a challenging experience where uncertainty, a lack of transparency, and subjective decision-making seem to reign (Atjonen, 2018). It is often a confusing endeavor rife with misunderstandings, varied expectations, and variable outcomes (Brezis and Birukou, 2020). Yet it can also be an important tool for ensuring research quality and encouraging research that is worthwhile and ethical and that adds to the sum of human knowledge (Kelly et al., 2014). Despite its importance, long history, and ubiquity across academic contexts, few academics have ever had a class in how to navigate the peer-review process. Indeed, scholarly peer-review has been called the skill that “almost no one is teaching” (Goodman, 2022, n. pag.). A lucky few graduate students have supervisors who take them aside and offer to complete a peer review together or provide guidance on how to respond to a review that's been received. Many beginning academics, however, are forced to learn about the peer-review process individually and slowly over the years, at potentially significant personal cost, as they try to publish and piece together the limited, often absent (in the case of desk rejections), or conflicting advice they receive through the process (Gravett et al., 2020).

Many pitfalls exist in the peer-review process and choosing the wrong journal or spending too long with work under review presents a significant opportunity cost with the potential for real and lasting damage to one's career prospects and opportunities (Huisman and Smits, 2017). Likewise, not knowing how to conduct a peer review or how to respond to one can also deepen inequalities and can hamper career progression and development (DiDomenico et al., 2017). With this study, grounded in the productively fragmented communication field and informed by a constructivist theoretical viewpoint (Bornmann, 2008), we aim to pull back the curtain on this opaque process and assist burgeoning scholars in their publishing ambitions while also providing suggestions for reviewers, editors, and publishers alike about how the peer-review process can be improved for collective benefit. To do so, this study reviews a year's worth of reviews from a communication journal to explore:

- which issues reviewers identified within the submitted research;
- how the reviewer feedback reveals their implicit understanding of their role in the peer-review process; and
- how clear reviewers and editors are regarding which feedback is most important.

Taken together, this allows for an understanding of how reviewers and editors engage in the social construction of research. The results inform the training of communication scholars, reviewers, and editors alike.

This study begins with a literature review that defines what peer review is and briefly traces its evolution. We next outline our theoretical positioning, discuss peer review types and conventions, and summarize findings from other studies that have sought to learn from the peer-review process, both generally and within the communication discipline, which has received especially little research attention (Curtin et al., 2018). This section also addresses how reviewing and editing compare, which allows us to reflect more precisely on how reviewers implicitly see their roles in the peer-review process. The study's methods are then presented, followed by the findings and their implications.

Literature review

Peer review: what and when

Scholarly research builds on and generates knowledge. The enduring question of what defines knowledge has led to numerous ways to conduct research. Regardless of approach, research, ideally, prioritizes quality and integrity. To meet these ideals, some form of evaluation is required when academics seek project funding, submit conference abstracts or papers, and write books, book chapters and journal articles. One standard evaluation technique is peer review. In relation to journal articles, a simple definition one publisher offers is, “the independent assessment of your research paper by experts in your field” (Taylor & Francis, 2022). However, as research opportunities have expanded, so too have questions around the peer review process.

The history of peer review is not straightforward. It often begins with reference to seventeenth century scientific journals and the role an editor-in-chief played in determining which letters should be shared with the broader scientific community (Kelly et al., 2014; Tennant et al., 2017). In some instances, editorial boards were charged with a similar task. This version of events prompts initial discussions around “gatekeeping” and who gets to decide what is worthy for publication. However, historians suggest it was not the beginning of what we now refer to as peer review (Baldwin, 2015; Moxham and Fyfe, 2018). In relation to current practices, the nineteenth century offers a more credible starting point. A proliferation of journals and increased journal specialization created a need for more focused judgment, requiring a broader pool of expertise. Earlier appraisals relied on the knowledge and skills of individual referees rather than established criteria (Fyfe, 2019). Since the introduction of the term “peer review” in the 1970s (Moxham and Fyfe, 2018), reviews have become more explicit, dealing with research merit, innovation and ethics as well as overall presentation and writing style (Fyfe, 2019).

Theoretical positioning

Early understandings of social scientific work posited that academic manuscripts were the result of researchers’ efforts alone and that they solely deserved recognition rights (Merton, 1973). Later understandings, informed by a constructivist theoretical viewpoint, challenged this view. For example, those aligned to this theoretical perspective argue that academic manuscripts result not just from the work of the authors but also, importantly, from the contributions of reviewers and editors, as well (Bornmann, 2008). Knowledge of the peer-review process informs how scholars write prior to submitting academic work for review (Knorr-Cetina, 1981; Ali and Watson, 2016). In addition to this implicit influence, the reviewers and editors can also exert explicit force in shaping how a work develops through the peer-review process and, as disciplinary gatekeepers, whether it is judged worthy to be published or is rejected (Siler et al., 2015). Thus, this paper operates within the theoretical assumption that a peer-reviewed manuscript is socially constructed by both the author(s) and by the peer reviewers and/or editors. In line with this view—and by focusing on where reviewers target their feedback, what issues they identify, and the degree to which they identify issues only versus offering solutions or guidance on what to do next (as well as how the editor relays and/or mediates this feedback)—this study is then able to provide meaningful insights not only to authors about some of the pitfalls they might themselves encounter in the peer-review process but also to reviewers and editors about how they implicitly see their role in the construction of academic knowledge. The results of this exploration can inform practical guidance for authors, reviewers, editors, and publishers alike.

Peer review: how

The first part of this section addresses how scholars-in-training learn about peer review and develop their skills in it, while the second part discusses how resources and the effects of the COVID-19 pandemic are affecting the contemporary peer-review landscape. The third part overviews the expectations that are placed on reviewers, while the fourth and final part discusses peer review in the communication discipline, specifically, and ends with a comparison of reviewing and editing.

Training and development

Reviewers can gain knowledge and skills about the reviewing process from personal experience and from mentoring from other academics, similarly to on-the-job training (Chong and Mason, 2021). Different publishers and individual journals sometimes also provide advice for reviewers, offering both philosophical arguments and practical tips (though it is unclear how much uptake these have or how useful these are). As examples, SAGE offers a series of online videos, as well as a written 9-page training guide, on the peer review process and on how to conduct a peer review. It also offers live webinars on peer reviewing. The Web of Science Academy, hosted by Clarivate, offers free short courses on peer reviewing, with classes covering reviewer expectations, what to look for in a manuscript, and how to write a review (both independently and by co-reviewing with a mentor). Individual journals occasionally have their own sessions for reviewer training or host “ask the editor” sessions to provide a forum for discussion around peer review and a particular journal's aims.

Some scholars also try to learn about the peer-reviewing process through self-reflexive and systematic analysis of their own reviewing practices. Mason and Chong (2022), for example, analyzed 62 of their own peer reviews in the disciplines of education, applied linguistics, and bibliometrics, to evaluate where they focused their feedback and to assess the emotional tone of their writing. They found that they centered their comments around eight areas: 1) title, abstract, and key words; 2) preliminary information; 3) reporting of methods; 4) reporting of findings; 5) discussion and conclusions; 6) tables and figures; 7) referencing and formatting; and 8) language and wording. They focused most heavily on the methods section and literature review. What is less clear is which specific issues reviewers identify within the evaluated research, how the editor and reviewer feedback reveals the ways in which these parties see their role in the process, and how explicit reviewers and editors are regarding which feedback is most important. The present study aims to illuminate these points.

Resources and their effect on the process

The peer review process relies on the generosity of the academic community. It is promoted as a vital part of an academic's job to build on and advance new ideas. The good news is that a growing number of journals, each with a distinct disciplinary focus, has increased publication opportunities for researchers. However, this also puts a strain on the reviewing process due to reviewer fatigue, increased requests to review and competing academic demands (Breuning et al., 2015). As academics are already stretched, the decision to review is an added burden that may not be factored into workloads (either at all for adjunct academics who don’t have a “service” component to their workload or adequately for those who do but find this allocation insufficient for the number or type of requests they receive). Recently, COVID-19 has decreased the number of full-time academics and has exacerbated the situation (Thatcher et al., 2020). However, even before the global pandemic, the system was under strain with some journals suspending submissions to cope with the backlog (Inside Higher Ed, 2018).

Expectations placed on reviewers

Publishing groups and scholarly associations alike publish best practice guidance for scholarly peer review that outlines the responsibilities of and expectations for those who participate in this process (see, e.g., COPE, 2013; Taylor & Francis, 2023). Such responsibilities include a laundry list of expectations related to bias, conflicts of interest, confidentiality, timeliness, and academic integrity. However, the most germane to our exploration of the role and function of peer review are providing a “constructive, comprehensive, evidenced, and appropriately substantial peer review report” (Taylor & Francis, 2023) and being “objective and constructive in their reviews” (COPE, 2013, p. 1). Some journals provide explicit guidance to their reviewers on particular aspects to comment on or how to structure the review, but other journals leave this as an entirely open-ended process where the reviewer is invited to provide feedback without any specific prompts. This raises the often-cloudy issue of the role of peer review and what, exactly, a constructive and appropriately substantial peer review entails. The Committee on Publication Ethics also expects scholars to “make clear which suggested additional investigations are essential to support claims made in the manuscript under consideration and which will just strengthen or extend the work” (COPE, 2013, p. 4). However, we also lack an understanding of whether or how peer reviewers and editors indicate which feedback is more important and which is less so.

Scholarly peer review in the communication discipline

Empirical research about scholarly peer review, itself a sensitive and opaque process, as already acknowledged, is limited in comparison to other areas of scholarly communication and publishing (Jennings, 2006). Scholarship in this area tends to focus on those conducting peer reviews (see, e.g., Rowley and Sbaffi, 2018) or on the timing or perceived efficacy of the review process (see, e.g., Björk and Solomon, 2013; Etkin, 2014) rather than on the content of reviews themselves. The bulk of literature on scholarly peer review stems from the medical fields while complementary attention in the social sciences is “scant” (Curtin et al., 2018, p. 279).

Within the interdisciplinary communication field, the literature on scholarly peer review is smaller still. A study in this field, undertaken in 2018 and drawing mainly on American academics as participants, explored who reviews for the communication field and what their motivations are (Curtin et al., 2018). It also examined reviewer roles in different contexts and what communication academics perceived would improve scholarly peer review quality. It found that more than 75 percent of respondents (n = 547) supported reviewer training initiatives or “more complete [reviewing] guidelines” from journals (p. 291). Another study within the communication field found that flaws outweighed strengths in reviewer comments by a ratio of 10:1 (Neuman et al., 2008).

Scholarly peer review is nominally undertaken by peers or “equals.” Yet the anonymity of the process coupled with the vast need for reviewers (Kurdi, 2015), as previously acknowledged, means that academics of varying ranks, disciplinary traditions, and expertise levels are reviewing manuscripts. This has implications for reviewing quality and relevance and for parity among colleagues with different workloads and pressures. Of interest here, too, is the notion of disinhibition (Suler, 2004) afforded by anonymous peer review and the possibility that anonymity can result in more acerbic feedback.

Comparing reviewing and editing

Given the lack of training most reviewers receive in how to review and, indeed, the wide variance in how submitted reviews are structured, it is necessary to explore how reviewing and editing compare so that, later, once an exploration and analysis of the reviewers’ output has been attempted, we can reflect on how this output implicitly demonstrates how the reviewers see their role in the social construction of knowledge through the peer-review process and the degree to which they engage in editing work in addition to reviewing work. The literature acknowledges the ambiguity of what is meant by editing and the terms used to describe it (Fretz, 2017; Burrough-Boenisch, 2013). However, despite this ambiguity and, in some cases, lack of consensus on what editing entails, there is general agreement that editing can be seen from a vertical perspective (from shallow to deep), a weight perspective (from light to heavy), or a distance perspective (from micro to macro) (Burrough-Boenisch, 2013). We adopt the distance perspective in this paper and conceptualize editing as three types, which include, from micro to macro, proofreading and line editing, copyediting, and substantive or developmental editing. Though no consensus exists on what, exactly, is entailed in each type of editing (Fretz, 2017), we provide examples drawn from the literature to illustrate the general focus of each type of editing. The most micro form of editing, proofreading and line editing, generally focuses on identifying issues with grammar, punctuation, and spelling and can also include fact-checking and verifying the accuracy and appropriateness of claims and supporting evidence (Lozano, 2014). It can further include suggesting changes to sentence structure and word choice and (often) offering within-paragraph suggestions on the text's organization. The second level, copyediting, moves from the micro to the mezzo and macro perspective (Wates and Campbell, 2007).
Rather than identifying errors within words, sentences, or individual paragraphs, copyediting looks across paragraphs to ensure consistency of style and formatting. The third and final type of editing, substantive or developmental editing, is the most macro and adopts a “big picture” approach to consider the paper's organization, overall, and whether all necessary sections (such as a discussion of implications or a limitations and future directions section) are present and convincing. While proofreading can focus on the accuracy of individual sources, substantive or developmental editing is more concerned with the synthesis of sources and with a convincing and appropriate distillation of those sources across paragraphs and pages. It remains to be seen how reviewers see their role in terms of pure evaluation (as “reviewing”) or if they also see their role as evaluation coupled with guidance, which strays into the territory of editing.

Synthesis and research question

This study advances the following umbrella research question:

RQ: How do reviewers and editors at a communication journal engage in the social construction of research?

To answer this question, we explore the following three aspects:

- What issues do reviewers identify within the submitted research?
- How does the feedback of reviewers and editors reveal their implicit/tacit understanding of their role in the peer-review process?
- How clear are reviewers and editors regarding which feedback is most important?

Based on this literature review, the present study is able to respond to calls (Curtin et al., 2018) for reviewer training or guidance by identifying which issues are identified during the peer-review process. With this knowledge, training and guidance can be tailored to the most problem-prone parts of the research process. Similarly, identifying through their feedback how reviewers and editors implicitly see their role in the process allows us to continue a conversation about our role as reviewers and members of an academic community with a shared responsibility for promoting and advancing scholarship. Lastly, by identifying how clear reviewers and editors are about which feedback is most important, we can appreciate the extent to which these parties are meeting their expectations under COPE principles (COPE, 2013).

Methodology

Grounded theory: what, who, and why

Grounded theory is an approach that seeks to provide a “conceptual overview of the phenomenon under study” (Holton, 2008, n.pag.), in this case, the peer-review process as understood through an evaluation of peer reviews themselves. Grounded theory has been applied in myriad ways and with pragmatist (Locke, 2001), realist (Partington, 2002), and interpretivist (Lowenberg, 1993) orientations, among others. As a general research methodology, grounded theory is not confined to any particular epistemological or ontological perspective but can suit the philosophical perspective adopted by the research team (Holton, 2008), which, in this case, is interpretive and constructionist (Locke, 2001) and complements our understanding of the peer-review process as a socially constructed form of knowledge production. Grounded theory is appropriate for a little-studied topic like evaluating peer review within the communication discipline as it allows us to abstract to a conceptual level and, in doing so, theoretically explain rather than describe behaviour (Glaser, 2003).

Data pool and sampling

In January 2022, the study's first author contacted a communication journal editor about the possibility of conducting a study on peer reviewer comments for articles submitted to the journal over a one-year span (from January through December 2021). The editor provided in-principle support for the idea provided the identifiability of both authors and peer reviewers was protected. In February 2022, the research team obtained a de-identified copy of the data set for review and analysis. This data set included the reviewer comments as well as any comments made by the editor. The research team coded the data together as a team rather than individually.

This study examined 16,202 words of peer-reviewer feedback related to articles that were sent out for peer review in 2021. The range in length was from 45 words to 1,496 words. This particular journal, the editor said, generally seeks to obtain two reviews for each submission but occasionally solicits a third reviewer when mixed reviews are received.

Coding approach

The team followed Strauss & Corbin's (1990) open-axial approach to coding. Specifically, it first imported into NVivo a spreadsheet containing all reviews from 2021 and then, as a team, read through each discrete “section” of a review. A section might be as small as a sentence fragment or extend to multiple paragraphs. First, as part of the open coding phase of data analysis, each discrete review “section” was coded for any critical feedback present. This ranged from feedback about inappropriate methods and sampling issues to insufficient engagement with relevant literature and insufficient contribution to theory. Next, to explore the “intervening strategies” dimension within Strauss & Corbin's axial coding stage, the review was coded for any explicit guidance reviewers offered to resolve the issues they identified. This allowed us to understand how often reviewers only identified issues compared to identifying issues and offering guidance on how to resolve them. It also allowed us to examine how reviewers implicitly saw their role in the process, which is one of the “broad and general conditions that influence action/interaction strategies” (Vollstedt and Rezat, 2019, p. 88). Lastly, the team coded for any explicit references reviewers made to the relative importance of their feedback (e.g., that a suggestion was “essential” or would strengthen or extend the work but wasn’t required). This allowed us an understanding of the “action or interaction strategies” that reviewers directed to authors through their reviews and rounded out the final dimension of our axial coding process.
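The three coding passes described above can be pictured as one record per review section. The following is a minimal Python sketch only, not the team's NVivo workflow: the `CodedSegment` class, its field names, the `summarize` helper, and the two example segments are all hypothetical and invented for illustration.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CodedSegment:
    """One discrete review "section" after coding (hypothetical schema)."""
    text: str
    issues: List[str] = field(default_factory=list)    # pass 1: critical feedback
    guidance: List[str] = field(default_factory=list)  # pass 2: guidance offered
    importance: Optional[str] = None                   # pass 3: stated importance, if any


def summarize(segments):
    """Tally issue codes and count issue-only versus issue-plus-guidance segments."""
    return {
        "issue_counts": Counter(code for s in segments for code in s.issues),
        "issue_only": sum(1 for s in segments if s.issues and not s.guidance),
        "issue_and_guidance": sum(1 for s in segments if s.issues and s.guidance),
        "importance_stated": sum(1 for s in segments if s.importance is not None),
    }


# Invented example segments; these are not quotations from the data set.
EXAMPLE_SEGMENTS = [
    CodedSegment(text="The sample is too small.", issues=["sampling"]),
    CodedSegment(
        text="The review relies on dated sources; consult recent work.",
        issues=["insufficient engagement with literature"],
        guidance=["engage more deeply with the literature"],
        importance="essential",
    ),
]

summary = summarize(EXAMPLE_SEGMENTS)
print(summary)
```

Keeping issues, guidance, and stated importance as separate fields mirrors the distinction the analysis draws between reviewers who only identify issues and those who also offer guidance.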

Findings

To explore how reviewers and editors at a communication journal engage in the social construction of research, we first identified the specific issues that reviewers raised within the research they evaluated. Doing so helps us understand problematic parts of the research execution and presentation process that scholars can encounter with their own research.

Identified issues in peer-reviewed research

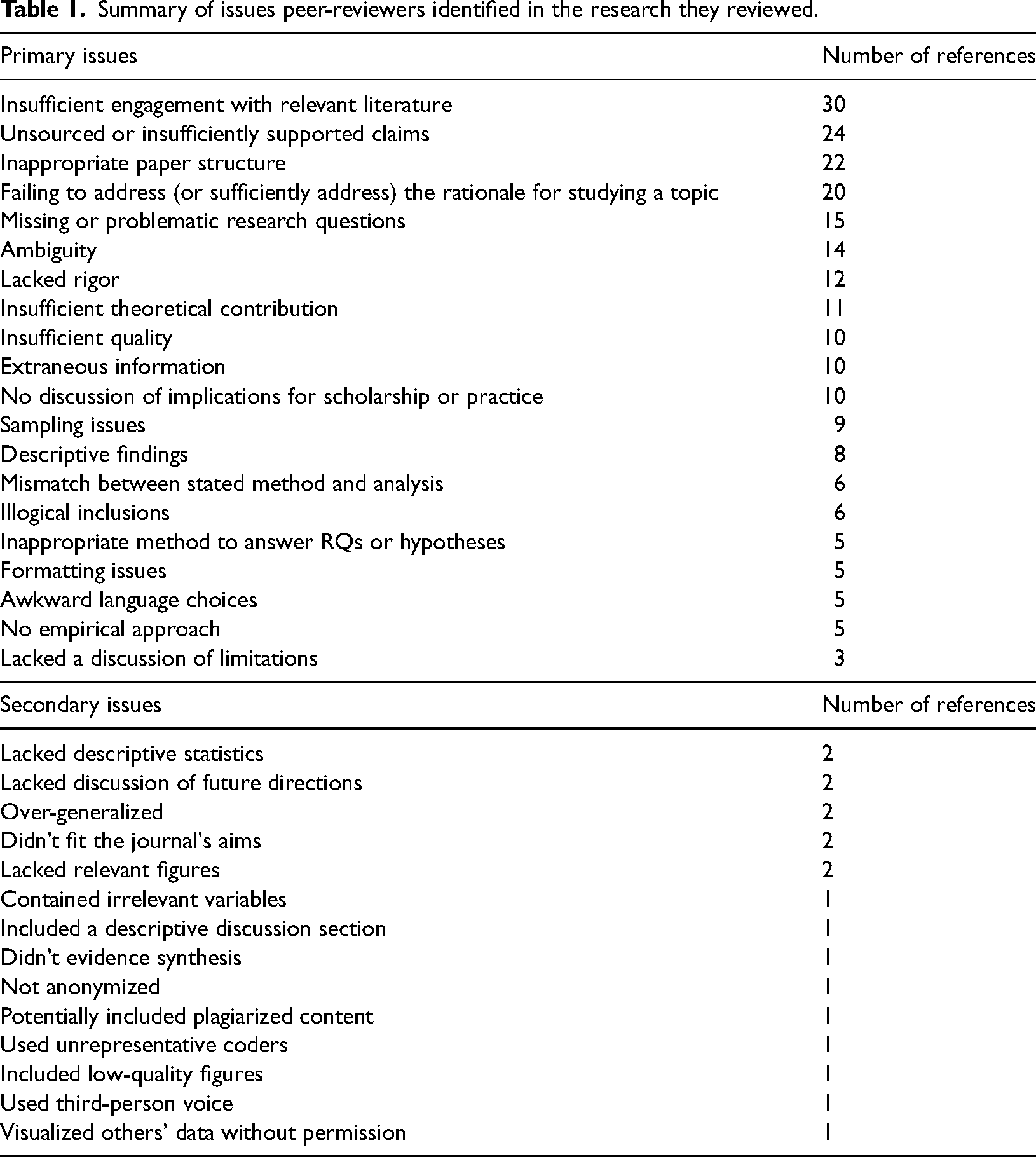

Reviewers in the sample identified 34 discrete issues with the articles they assessed (see Table 1). The first 20 of these were mentioned three or more times (and as many as 30 times) and are deemed primary issues. The remaining 14 were only mentioned one or two times and are deemed secondary, outlier issues. This categorization is not necessarily a judgment on the importance or lack of importance that the issue itself represents (e.g., a minor issue might appear in the primary issues section if it was mentioned frequently while a major issue might appear in the secondary issues section if it was only mentioned once or twice).

Table 1. Summary of issues peer reviewers identified in the research they reviewed.
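The primary/secondary split rests on a single threshold: three or more mentions versus one or two. A minimal Python sketch of that rule follows. The function name `classify` and the constant `PRIMARY_THRESHOLD` are hypothetical, and the first two entries in the example are placeholders rather than values from Table 1.

```python
PRIMARY_THRESHOLD = 3  # from the text: primary issues were mentioned three or more times


def classify(mention_counts):
    """Split issue labels into primary and secondary lists by mention count."""
    primary = [label for label, n in mention_counts.items() if n >= PRIMARY_THRESHOLD]
    secondary = [label for label, n in mention_counts.items() if 1 <= n < PRIMARY_THRESHOLD]
    return primary, secondary


# The first two labels and counts are placeholders (per-issue counts from
# Table 1 are not reproduced here). The last two counts are the ones reported
# in the discussion of secondary issues.
example_counts = {
    "placeholder issue A": 30,
    "placeholder issue B": 3,
    "over-generalized": 2,
    "used third-person voice": 1,
}

primary, secondary = classify(example_counts)
print(primary)    # labels with three or more mentions
print(secondary)  # labels with one or two mentions
```

Because the rule looks only at frequency, it says nothing about severity, which is the caveat the paragraph above makes about minor issues appearing as primary and major ones as secondary.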

Primary issues

The primary issue reviewers identified was that the author(s) of the submission they were reviewing had not delved sufficiently into the relevant literature. This included reliance on too-dated sources, reliance on popular over academic sources, and writing that revealed a shallow understanding of the topic at hand. The second primary issue was about unsourced or insufficiently supported claims. This included claims that could be supported by third-party references or by the author's own data (if the submission was empirical in nature). The third primary issue was about inappropriate structure. This related to how the paper was organized in whole or part and included the positioning of individual elements, such as figures, paragraphs, or sections, as well as readability concerns, such as a lack of sub-heads. The fourth primary issue centered on authors failing to address (or to sufficiently address) their rationale for studying the topic or for why the audience cares about a particular topic, event, or phenomenon. The fifth primary issue was feedback about missing or problematic research question(s). The sixth primary issue concerned ambiguity. This included lack of clarity around specific terms, hazy findings, or unspecific methodological details. The seventh primary issue was that the submission lacked rigor. This feedback was concerned with the execution of methods and a failure to adhere to research-methods best practices.

The eighth primary issue was insufficient theoretical contribution. Reviewers here questioned submissions that did not generate theoretical knowledge, add to existing theory, or reflect through a theoretical lens. In a three-way tie for the ninth primary issue were concerns about insufficient quality, extraneous information being included, and a lack of a discussion of implications. The 10th primary issue was related to sampling. The 11th primary issue was the author(s) presenting descriptive findings. Here, reviewers were concerned with findings being merely described rather than critically analyzed. In a two-way tie for the 12th primary issue was feedback about a mismatch between stated method and analysis and one or more illogical inclusions. In a four-way tie for the 13th primary issue was feedback that the paper's method was inappropriate or unsuitable to answer RQs and/or hypotheses, that the paper exhibited formatting issues, demonstrated awkward language choices, and lacked an empirical approach. The final category of feedback in the “primary issues” category was, in 14th place, that the submission lacked a discussion of limitations.
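The ranking above ends at 14th place even though it covers 20 issues because tied issues share a rank and the next rank follows without a gap (often called dense ranking). A minimal Python sketch of that convention is shown here. The function `dense_rank` and the example labels and counts are hypothetical and do not come from Table 1.

```python
def dense_rank(counts):
    """Rank labels by count, highest first; ties share a rank and no rank is skipped."""
    distinct = sorted(set(counts.values()), reverse=True)
    rank_of = {n: position + 1 for position, n in enumerate(distinct)}
    return {label: rank_of[n] for label, n in counts.items()}


# Hypothetical counts chosen only to show a three-way tie.
example = {"issue a": 9, "issue b": 7, "issue c": 7, "issue d": 7, "issue e": 5}

ranks = dense_rank(example)
print(ranks)
```

In this example the three tied labels all receive rank 2 and the next label receives rank 3, so five labels occupy only three ranks.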

Secondary issues

The secondary issues were outliers that were mentioned only two or fewer times. The first five were all mentioned twice and the remaining nine were each mentioned once. These secondary issues with two mentions each include that the submission lacked descriptive statistics; lacked discussion of future directions; over-generalized; was inappropriate for the aims of the journal; and wasn’t accompanied by relevant figures to visualize and make more concrete the author's argument. The secondary issues with only a single mention include that the submission contained irrelevant variables; lacked international comparison; included inaccurate tallying; undersold its innovation; included a discussion section (rather than a findings one) that was descriptive rather than analytical; didn’t evidence synthesis; was potentially not anonymized; potentially included plagiarized content; used unrepresentative coders; included low-quality figures; used third-person voice; and, finally, visualized others’ data without permission.

Implicit understandings of editor and reviewer roles

To continue exploring how reviewers and editors at a communication journal engage in the social construction of research, we next evaluated how the reviewers’ feedback reveals their implicit understanding of their role in the peer-review process. To do this, we explored the degree to which reviewers only identified issues versus identifying issues and offering solutions or guidance on how to resolve them. This reveals two different understandings, which we call in this paper “critics” and “guides,” of the role of scholarly peer review. Reviewers spent roughly 10,158 words (62.7 percent of the total sample) describing the submission or identifying issues in it. They spent the remaining 6,044 words (37.3 percent of the total sample) providing guidance on how to resolve the identified issues. When doing so, reviewers provided 42 discrete types of suggestions. Of these, 13 were mentioned more than five times by reviewers and were operationalized as primary types of feedback. The remaining 29 types were operationalized as secondary types of feedback and were mentioned by reviewers five or fewer times.
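The two word counts reported above sum to the 16,202-word sample, and the corresponding shares can be checked with simple arithmetic. The short Python sketch below uses only the figures given in the text. The variable names and the `share` helper are hypothetical.

```python
description_words = 10_158  # words describing the submission or identifying issues
guidance_words = 6_044      # words offering guidance on resolving issues
total_words = description_words + guidance_words


def share(part, whole):
    """Return part as a percentage of whole, rounded to one decimal place."""
    return round(100 * part / whole, 1)


critic_share = share(description_words, total_words)
guide_share = share(guidance_words, total_words)
print(total_words, critic_share, guide_share)
```

Rounded to one decimal place, the shares come to 62.7 percent and 37.3 percent of the sample.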

By number of raw instances, the most frequent guidance-focused feedback peer reviewers offered was line edits. The second most popular type of guidance-focused feedback was to clarify meaning or purpose and resolve ambiguity and refine the paper's structure. The third most popular type of guidance-focused feedback was to engage more deeply with the relevant literature. About half of this feedback was generic while the other half offered specific literature, scholars, theories, or concepts to consider consulting. The fourth most popular type of guidance-focused feedback was to provide more evidence to support a claim. This included providing statistics to back up the narrative claims being made in the findings section, feedback to not generalize (or over-generalize) from a single case, or to strengthen an argument that was weak or could be interpreted or explained multiple ways. In a three-way tie for sixth primary type of guidance was feedback to adopt an empirical approach, increase the rigor of the method(s), and remove extraneous information. In a three-way tie for seventh primary type of guidance was feedback to add a discussion of implications, use a different method, or discuss in greater depth.

The remaining 29 types of guidance are classed as secondary and were mentioned five or fewer times by reviewers. They included guidance to:

- provide more context (in the abstract or introduction)
- present findings in visual form
- provide examples
- polish written expression
- add a discussion of limitations
- remove inferences not supported by data
- refine sampling
- include descriptive statistics
- use preferred formatting
- describe claims more accurately
- add research questions
- add depth to implications
- engage an external editor
- submit as a book chapter rather than a journal article
- provide international comparison
- retally presentation of findings
- emphasize innovation
- discuss future directions
- submit to a different disciplinary journal
- present statistical tests appropriately
- strengthen argument
- consult external expert-method
- analyze rather than describe
- tighten scope
- refine visuals
- shift balance to improve relevance
- change voice: third person to first
- refine research questions
- not visualize others’ data.

By analyzing the reviewers’ output, we see that slightly more than half the reviewers (52 percent) focused on developmental or substantive editing and, therefore, that these reviewers saw their role chiefly as providing feedback on big-picture issues in the manuscripts they reviewed. This is evidenced by the feedback they offered focused on structure, engaging more deeply with the relevant literature, and adjusting research methods or increasing the rigor of their application. In addition to identifying big-picture issues, another 43 percent of the reviews focused on the other end of the spectrum and concerned themselves with proofreading and line editing. This manifested through micro-level suggestions about unsupported claims, extraneous information, and awkward word choices. A minority of the reviews (4 percent) focused on the copy-editing level. Those that did were concerned with formatting, visualizing findings, and achieving consistency across pages. Thus, based on an analysis of their reviews, reviewers implicitly saw their role not only as evaluating the submitted research but also as helping to edit how it was presented (though primarily at the micro rather than at the mezzo, copy-editing level).

Clarity regarding which feedback is most important

To continue our exploration of how reviewers and editors at a communication journal engage in the social construction of research, we lastly examined how clear these parties are about which feedback they perceive as the most important. Reviewers didn’t often provide clarity on which aspects of their feedback they perceived as most important (or, if they did, they provided this feedback confidentially to the editor and not to the author). Indeed, reviewers provided this clarity in only 20 percent of the submissions. They were not always specific about their concerns, however. As examples, some reviewers included generic statements about an inappropriate overall research design, a paper's insufficient “execution,” a study's lack of “strength,” and references to papers not meeting “publishable standards.” When the reviewers were more specific about the issues they deemed the most important, they focused their feedback chiefly on methods (including concerns about an inadequate description of the method employed, sampling concerns, concerns about the validity of the method, and concerns about the generalizability of the findings, which is related to the method as well as the presentation of findings and resulting discussion). Other specific aspects reviewers deemed as the most important included: inadequate definitions of terms; a lack of significance; an inadequate contribution to the field; a lack of implications; and findings that were descriptive rather than critical-analytical.

Given this inconsistency on when reviewers provided clarity on which issues were most important (and on how specific they were with this type of feedback), we turned to an examination of the decision letters, containing the editor's personalised comments, if any, to see if they helped mediate the feedback provided for the authors. However, the editor used the default decision template letter and let the reviews speak for themselves in all cases except for desk rejects where there was no peer review feedback to contextualize the decision. In these cases, the editor most frequently mentioned concerns that the submission fell beyond the journal's scope or aims. Other reasons given included concerns about a “lack of reliability,” having “just recently published a similar piece,” and being unable to find reviewers for the submission.

As foreshadowed earlier, academic publishing is the product of author(s), reviewers, and editors rather than just the author(s) alone (Bornmann, 2008). In addition to the questions about what issues reviewers identified and how clear they are with which feedback is most important, the reviews in this study also provide an opportunity to evaluate how reviewers implicitly see themselves in this process and how they contribute to the construction of knowledge in doing so. This insight can then inform the training of reviewers, authors, and editors alike. As evidenced through the feedback provided, reviewers saw their role in the process as disciplinary experts (calling for greater engagement with the relevant literature) as well as pseudo-editors (suggesting ways to improve the paper's organization and writing, which is evidenced by the high number of line edits reviewers offered). Indeed, reviewers’ fixation with offering line edits calls into question how much of that type of editing should occur during the peer-review process (as facilitated by reviewers) versus through the production process (as facilitated by the publisher's professional editing service following a manuscript's acceptance). From their comments, reviewers were more concerned with the execution of research methods and the engagement with the literature compared to the theoretical contribution (if any) a paper made. This focus on technical competence at the expense of scholarly contribution should not be overlooked. Based on the proportion of feedback that only described or identified issues versus providing guidance on how to resolve them, reviewers also largely saw themselves more as critics—describing the work and its flaws—rather than as guides suggesting ways to an improved manuscript. 
This orientation raises questions about how emerging scholars learn about their role through formal or informal training and experience and then later communicate it to their own students as they mature in their careers. It also provides a rich opportunity for discussion so that a clearer understanding of author-reviewer-editor roles, aims, and contributions can be achieved.

Discussion

The literature (see, e.g., Fyfe, 2019) indicates that early forms of peer review relied on instinct more than on pre-determined evaluation criteria. This study presents findings from a communication journal where instinct is still the way that peer-reviewed research is assessed: no explicit criteria are provided to reviewers when a reviewing invitation is sent to them. Due to a lack of evidence in the scholarly literature, it's unclear how widely this practice holds across communication journals but, from our anecdotal experience, having collectively reviewed hundreds of submissions in the communication discipline in multiple countries and across several decades, we estimate that structured evaluation criteria are only used about a quarter of the time. Given the variance in the structure and substance of the reviews evaluated in this study, this might suggest the need for more standardisation to provide a more consistent experience for those involved in the peer-review process and to allow for clearer expectations around what, precisely, will be evaluated.

Overall, peer review can be a confusing, frustrating, and opaque process for all stakeholders involved (editors, authors, and reviewers). These aspects are compounded in cases where journals aren’t explicit about the specific role of the reviewer and how they see themselves in relation to the overall knowledge production process (Bornmann, 2008; Ali and Watson, 2016). It can also, as this study highlights, include feedback of varying length and specificity or feedback that focuses too much on identification of flaws or line edits in contrast to feedback (a la developmental or substantive editing) that offers guidance on how to productively respond to identified problems. These issues could be reduced by setting clearer expectations around reviewer roles and establishing evaluation guidelines that are then actively modeled and taught. As will be detailed further in the recommendation section, these improvements would ensure that a focus on quality control is accompanied by a focus on peer support and present a strong case for institutional resources and backing to ensure that time is available to produce quality reviews and, likewise, time is not “wasted” by the wrong person undertaking a type of editing that is someone else's responsibility.

The number and types of deficiencies the reviewers identified, and the wide distribution of comments about these, underscore the nuance required and the challenge of publishing peer-reviewed research. These aspects also implicitly highlight the different roles reviewers saw themselves fulfilling, which is of particular importance to an interdisciplinary field like communication (He et al., 2023). There is no easy solution. Rather, academics need to master or at least demonstrate a wide variety of skills from conceptual knowledge (of literature and theories) to logic, research methods (both selecting appropriate ones and executing them appropriately), and the presentation of material (through aspects like fluency of expression, clarity, and parsimony), as well as knowing which roles exist and which they are being expected to fulfil. The blinded nature of peer-review means that the specific expectations reviewers have are constantly changing and difficult to predict, which underscores the importance of journals being more proactive and explicit with their expectations so authors and reviewers alike can benefit from this transparency.

If navigating the peer review process is difficult for seasoned academics, what about emerging ones? Reflecting on the present study and its first aim (to explore which issues the reviewers identified through their peer reviews) allows us to indirectly consider the skills our post-graduate students require and to reflect on how effective we are at providing them. Specifically, the relatively equal emphasis (by number of words) that reviewers placed on the three core sections of literature review, methods, and findings underscores the deeply interdependent nature of quality and publishable scholarship. A study can be executed well from a technical standpoint but can lack a sufficient contribution to make it interesting or publishable if it isn’t grounded in the literature and doesn’t have awareness of how it builds on (or replicates) past work. Likewise, a study can evidence a deep understanding of the literature and pose meaningful questions for exploration or testing but can fall short if the right method or methods aren’t selected or if these aren’t executed properly. Publishable scholarship, then, based on these reviewer comments, requires a diverse skillset. This includes 1) solid research methods training, 2) understanding, and ideally awareness, of relevant literature and associated theories and concepts, and 3) a curiosity and drive that foments innovation and contribution. At the same time, innovation can be in tension with established literatures and theories and reviewers, as gatekeepers, can play a role in reproducing existing understandings of what “counts” as knowledge or can police certain worldviews and epistemologies for the betterment or detriment of future scholarship.

This study revealed that, at least for one communication journal at a specific point in time, it was more common to identify issues with submissions compared to identifying issues and offering guidance on how to resolve them. There are likely multiple, inter-related reasons for this. One is time (Breuning et al., 2015). Reading a submitted article is an investment of time in and of itself. Understanding the arguments made takes additional time and cognitive effort. Identifying flaws and documenting these also takes time but not all flaws are equal. Line edits—the most common type of feedback by number of instances—provide relatively little substantive feedback and are also the “low-hanging fruit” of the peer-review process (Lozano, 2014). More substantive feedback requires a greater cognitive effort but is arguably more valuable. Substantive feedback coupled with constructive guidance takes even more time but raises the question of whose job it is to provide this feedback and when and how journal authors should receive it.

A second potential reason for the low prevalence of reviews that identified issues and provided guidance on how to resolve them is the level of expertise required to do this. This underscores the importance of the abstract as it is this small paragraph that dictates who accepts reviewer invitations. If the abstract doesn’t completely or accurately describe the study's components, the likelihood of a mismatch between the topic and the reviewer's expertise area increases. It also raises the issue of workload and how many review invitations specialists receive and must decline, which forces editors to rely on academics with more generalist knowledge (Breuning et al., 2015).

A third issue, how the role and purpose of peer review are understood, returns to the study's theoretical positioning and acknowledges the contributions that many stakeholders beyond authors make to the scholarship that is published (He et al., 2023). If reviewers understand peer review as merely an exercise in identifying flaws, it is not at all surprising that so many reviews lacked guidance on how to resolve them. However, if they instead see peer review as an opportunity to improve the quality of the submitted research (and further develop the skills and contributions of the person or persons submitting it), it underscores the importance of incorporating this developmental editing perspective formally into post-graduate education and training. At the same time, it also requires editors, publishers, and research organizations to rethink guidance and expectations communicated to reviewers. To this end, we offer the following recommendations.

Recommendations for journal editors, universities, and authors

The normative understanding some publishers have of peer review (e.g., as being “constructive, comprehensive, evidenced, and appropriately substantial”; Taylor & Francis, 2022, n. pag.) seems to contrast with how peer reviewers see their role and responsibility (or at least how these are demonstrated based on an evaluation of the reviewers’ raw output: the reviews themselves that were analyzed in this study). Contrasting expectations about the purpose of peer review, possibly also affected by limited institutional support to complete quality peer reviews (Breuning et al., 2015), can affect author satisfaction with the process. Thus, we recommend that journal editors and publishers (in consultation and collaboration with academics and universities):

Develop and publish consistent understandings about the peer review process that are clearly communicated to and adopted by the relevant reviewers to achieve the dual purposes of quality control and peer support. To do so, this would likely require active training and guidance in addition to the passive resources and guidance currently available. This requires a conversation in the discipline about whether the role of peer review is to identify issues only (the “critic” role) or whether it is to identify issues and propose solutions or ways forward (the “guide” role). It also includes reaching consensus about editing terminology (what, precisely, is meant by proofreading and line editing, copy editing, and developmental or substantive editing) and whose role it is to engage in which type of editing.

Provide resources or recognition to ensure quality peer reviewing and to acknowledge the role that reviewers and editors have in shaping the research that is published. Recognition is already being provided by third-party organizations like Publons and universities should ensure that they sufficiently support and recognize this labor in hiring, promotion, and leadership decisions. Likewise, universities need to allocate appropriate workload for this academic “service” work to happen (with commensurate accountability to ensure it doesn’t fall disproportionately on the shoulders of under-represented academics). Doing so will help to upend older but still prevalent conceptualizations of peer-reviewed research as being only the product of the submitting author(s) rather than also the reviewers and editors who also contribute to the published research.

Be clearer about what particular aspects journals, journal editors, and editorial board members expect in submissions. For example, whether empirical approaches are expected, whether the journal's primary aim is to advance theory, whether research methods innovation is especially valued, whether comparative approaches are privileged, etc. This specificity and transparency is needed because reviewers in this sample seemed to, at times, express arbitrary expectations that weren’t clearly communicated in the journal's aims and scope (e.g., one reviewer asked an author of a study focused on Japan to contrast Japanese politics to those of the U.S. and U.K.). Without knowing what reviewers expect, authors will understandably struggle to provide this. Being more transparent and specific about aims and expectations will help potential authors determine if a journal is an appropriate fit. Likewise, being more explicit about what journals privilege also reinforces to academics the qualities and skills that they need to teach their graduate students so they can produce this kind of research.

Adopt a social constructionist mentality from both the reviewer-editor and author perspectives. Consciously adopting this perspective has the potential to increase the constructive nature of reviews (whether the reviewer sees their role as critic or guide) and to foment an intent of helpfulness, thereby reducing the likelihood of reviewers providing acerbic or antagonistic feedback. Applying this mentality for editors ensures they see their role not just as transmitters of the reviewers’ feedback but also as active agents with an important role in mediating that feedback. Likewise, seeing the peer-review process as a collaboration rather than a battle encourages authors to more genuinely consider reviewer feedback and use it to enhance their scholarship.

Conclusion

Recalling that peer review has been called the skill that “almost no one is teaching” (Goodman, 2022), future lines of enquiry should focus on evaluating the effectiveness and helpfulness of the limited philosophical and practical tips that journal publishers or individual journals or academics provide. Additional lines of enquiry can be found in evaluating the political dimensions of reviewer feedback. One example is how reviewers use tone or terminology to discipline manuscripts, a question that can be couched within a broader focus on civility and toxicity within scholarly peer-review. Other research on dynamics in peer-review between the global north and global south and on unconscious bias would also be welcome. Given the results of this study and depending on how closely it converges with the practices and experiences of other communication reviewers and authors, a shift from passive guidance on journal publisher or journal websites to active training and more explicit expectations around peer-reviewing would seem warranted.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.