Abstract

In mid-2022, AI systems automatically translating text into evocative original images became an internet sensation. People compared the technology to magic, as if it invoked an uncannily competent artist–magician. We call it ‘autolography’ from the Greek ‘automatos + logos + graphos’ (self + word + drawing). Following a discourse analysis of online publications comparing autolography to magic, we analyse its enthusiastic reception from some and critique from others. We identify historical parallels and divergences between the reception of contemporary autolography and early photography: the reduction or transformation of creative labour, negotiations over intellectual property, the alleged democratisation of visual cultural production, and the association with the Western magical imagination. Both photography and autolography prompted renegotiations of artistic practices, professional identities and intellectual property laws. However, rather than being a mechanical eye on the world, autolography undermines faith in images by invoking digitally uncanny materialisations of floating signifiers from AI models.


The release of artificial intelligence text-to-image generators such as DALL•E 2 and Midjourney in mid-2022 caused both euphoria and outrage. For some, these systems, which created images automatically from text prompts, seemed magical products of wild imagination and a sense of humour, displaying competency in visual language, including subject selection, composition, use of materials, lighting, perspective, the rules of physics, cultural codes and artistic styles. For others, they exploited the work of artists whose names and works were used without consent. In this paper, we critically explore the place of this technological ‘magic’ or exploitation within wider histories of computational, media and visual cultures.

We refer to the practice of using AI text-to-image generators as autolography – from the Greek automatos + logos + graphos (self + word + drawing) – in a different sense from that of previous authors (Chiolerio, 2020). In the absence of scholarly work on the cultural aspects of autolography, we analyse texts in which early reactions to the technology were expressed: computer science research, YouTube clips, online journalism, blogs and social media. To give these developments a deeper history, we examine the nexus between human and non-human creativity in Western mythology, photography and autolography.

In the first section, we propose that the dynamics of autolographic practice resemble the ancient practice of invocation. In Ancient Greece, artists invoked the Muses, goddesses of the creative arts and daughters of Zeus, for inspiration and assistance in creating their work (Vanderveken, 1990). In autolography, users employ natural-language prompts to invoke deep learning models that algorithmically draw on millions of images to create images that might pass as art. Neural networks have some homology with the psychic and intersubjective networks of meaning through which people interpret and create texts and images.

In the second section, we compare autolography's public and critical reception with that of photography, the 19th-century predecessor that transformed image-making more than any other technology. Over photography's nearly 200-year history, many technical, ethical, aesthetic and legal questions have been negotiated (Marien, 1997). Similar questions emerged in the early days of autolography, particularly concerning its relationship to human creativity and its contested aesthetic, legal and moral status as art.

Communicating through chaos

The practices of autolography resonate with the theme of this special issue, ‘Communicating through chaos: Connection, disruption, community’, in several ways. Developers of autolographic systems describe a non-intuitive process of ‘image diffusion’ by which images are created. In training, diffusion algorithms start with a target image to which Gaussian noise is applied repeatedly until all the image information is lost. To create new images, the process is reversed: starting from noise, the image is gradually de-noised, guided by both the textual prompt and the image/text AI model trained with deep learning on millions of images and text descriptions. With each step, the algorithm estimates how closely the emerging image aligns with the prompt. The images become increasingly coherent and believable until they are fully revealed to the user. While photography is celebrated for its elements of chance, autolography is characterised by highly random and cryptic processes for creating images. This novel practice disrupts the status quo of art and commercial image-making, and threatens the interests of creative communities and practitioners.
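To make the two directions of diffusion concrete, the following is a toy sketch in Python, not the code of any actual platform. It assumes a linear noise schedule of the kind used in early diffusion models, a four-pixel ‘image’, and a hypothetical `TOY_MODEL` lookup table that stands in for the trained text-conditioned network. A real network predicts the clean image from the noisy one and is far less reliable than this stand-in, and the reverse loop uses a deterministic DDIM-style update rather than the sampler of any particular system.

```python
import math
import random

# Linear noise schedule over T steps (an assumption for illustration).
T = 1000
BETAS = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]

# ALPHA_BARS[t] is the fraction of signal variance kept after t noising steps.
ALPHA_BARS = [1.0]
for beta in BETAS:
    ALPHA_BARS.append(ALPHA_BARS[-1] * (1.0 - beta))


def add_noise(x0, t, rng):
    """Forward process: jump straight to step t by mixing the image with Gaussian noise."""
    ab = ALPHA_BARS[t]
    return [math.sqrt(ab) * x + math.sqrt(1.0 - ab) * rng.gauss(0.0, 1.0) for x in x0]


# HYPOTHETICAL stand-in for a trained text-conditioned network: it simply
# "remembers" one tiny four-pixel image per prompt and ignores the noisy input.
TOY_MODEL = {"burmese cat": [0.2, 0.8, 0.5, 0.1]}


def predict_clean(x_t, t, prompt):
    return TOY_MODEL[prompt]


def generate(prompt, size=4, seed=0):
    """Reverse process: start from pure noise and step from t = T down to 0,
    asking the model for a clean-image estimate at every step."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(size)]
    for t in range(T, 0, -1):
        x0_hat = predict_clean(x, t, prompt)
        ab_t, ab_prev = ALPHA_BARS[t], ALPHA_BARS[t - 1]
        # Noise implied by the current estimate...
        eps_hat = [(xi - math.sqrt(ab_t) * c) / math.sqrt(1.0 - ab_t)
                   for xi, c in zip(x, x0_hat)]
        # ...then re-noise the estimate to the slightly lower level t - 1.
        x = [math.sqrt(ab_prev) * c + math.sqrt(1.0 - ab_prev) * e
             for c, e in zip(x0_hat, eps_hat)]
    return x


print(generate("burmese cat"))  # -> [0.2, 0.8, 0.5, 0.1]
```

Calling `generate("burmese cat")` steps from pure noise back to the stored four-pixel pattern, while `add_noise(x0, T, rng)` shows the forward direction: after the final step, less than 1 per cent of the original signal amplitude remains.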

In addition, the emergence of autolography was accompanied by the formation of communities of developers, popularisers, early adopters and artists, particularly in the English-speaking world. The developers’ circulation of pre-print research, sample images and blog posts reveals a certain urgency in competing to build the most compelling techniques and models. YouTubers popularised this research for a far wider audience and welcomed these innovations with great enthusiasm. However, communities of artists and copyright holders also emerged to resist the unacknowledged costs of these emergent media. As the platforms became publicly available through 2022, many users’ experiments began as the equivalent of trick photography and illusionism: looking for spectacular effects. A community of users formed most visibly on Midjourney, which used the Discord chat server as its interface, allowing users to observe other users’ prompts and the images generated. Midjourney refined its algorithms so that by early 2023, version 4 was more sophisticated in generating realistic faces. Increasingly, though, this reinforced normative standards of female beauty. Social media also became a forum for negotiating the meanings of these technologies and their outputs. Meanwhile, commentators and critics speculated on the implications of this technology for artists in terms of labour, the value of artistic and technical education, and intellectual property.

Autolography and the magical imaginary

Many early reactions to autolography compared it to magic, not only because it employed artistic techniques of illusion but also because it worked like magic in its unintelligibility, uncanniness and practical value. By magic, we are not referring to actual supernatural occurrences or ritualistic practices, but to phenomena in which an entity (a magician or illusionist or, with autolography, the AI and its programmers) hides the processes and procedures through which a marvellous happening or surprising event occurs. Autolography is an example of ‘secular magic’ (During, 2002): an art of conjuring that involves complicity between conjurer and spectator and their suspension of disbelief. Both spectator and autolographer know that there is trickery in the process through which the awe-inspiring event occurs (with autolography, algorithmic code and AI engines). Yet this process remains concealed. Autolography is magic as mass entertainment, with complicity between machines, programmers and end users. Dan North observes that stage magic as mass entertainment is devoid of supernatural implications: ‘The stage thus appears to be less loaded with otherworldly potential, and magic is aligned with the sophisticated application of technique’ (North, 2007: 180). In the case of AI in general and autolography in particular, the ‘magic’ lies in their enigmatic and unpredictable processes and results. Autolographic machines are the blackest of black boxes, given the proprietary nature of the software and the obscurity of the training data. This lack of transparency means that, beyond the skill of writing effective prompts, users have little insight into the algorithmic processes that generate their images.

Autolographic machines resonate with the digital uncanny. This term describes how the digital can unsettle cultural and cognitive expectations, but not necessarily coherently or consistently. As Kriss Ravetto-Biagioli points out, the uncanny is ‘intangible – an aesthetic experience that is not fully empirically describable’ (2019: 8). The digital uncanny ‘unsettles and estranges concepts of ‘self’, ‘affect’, ‘feedback’ and ‘aesthetic experience’, forcing us to reflect on our relationships with computational media’ (15). She argues it is incited when non-human digital dynamics such as algorithms and data flows ‘anticipate human gestures, emotions, actions, and interactions, thus intimating that we are machines and that our behavior may be predictable precisely because we are machinic’ (5). Even high-profile expert technologists have used hyperbolic metaphors about the spiritual implications of AI. For example, Mo Gawdat, formerly chief business officer of Google X, is quoted as saying about AI, ‘We’re going to build amazing things that are going to change even more people's lives… The reality is… we’re creating God’ (Rifkind, 2021). Elon Musk is quoted as saying about artificial intelligence that ‘We’re summoning the demon’ (Marche, 2022).

Of course, autolography was not the first technology greeted as uncannily ‘magical’. Telegraphy, photography and even player pianos sometimes had dark supernatural connotations. For instance, from the 1840s, ‘spiritualists claimed to be able to scientise supernatural phenomena […] by appearing to communicate with the spirit world via telegraphy, they could exploit the perception of scientific apparatus as objective and unmediated’ (North, 2007: 178). Here, spiritualists used media and technology as proof of the supernatural, as conduits of the ultimate truth.

Photography has attracted similarly supernatural connotations, as we discuss below. It is important to note, however, that in some Global South and non-Western contexts, photography has had important religious and ritualistic implications. Among some Aboriginal Australians, for instance, the camera was ‘a dangerous magical instrument capable of stealing some essential part of a human being, or of causing illness or death’ (Michaels, 1991: 259). Other image-making technologies, including cinema, have provoked similar culturally specific reactions across the globe.

Like cinema in the age of the illusionist and filmmaker Georges Méliès, digital images such as CGI special effects and games, which require high-level technical knowledge and artistic skill, have often been marketed as magical over the past three decades (for example, George Lucas’ effects company is called Industrial Light & Magic). Controversial techniques such as Deepfakes require expertise and substantial work to create images that threaten to confuse reality and illusion: a concern that can be traced back to Plato (Coeckelbergh, 2019). The same applies to some extent to apps such as FaceMagic and FaceLab, used for face-swapping, automatic ageing, creating caricatures from photos and image processing. These are more accessible to everyday users, but they are relatively limited applications of AI.

What made autolography different from these technologies was the simplicity and power of generating polished images from any English-language text. It worked like an unreliable, slow, surprising search engine for invoking uncannily tailored original images. Commentators on the autolographic experience often compared it to magic:

DALL•E is spooky good. Not so many years ago, it was easy to conclude that AI technologies would never generate anything of a quality approaching human artistic composition or writing. Now, the generative model programs that power DALL•E 2 and Google's LaMDA chatbot produce images and words eerily like the work of a real person. (Hartsfield, 2022)

Using Midjourney for the task felt exhilarating: All I did was paste the lyrics, and the images slowly materialized in front of my eyes without any other effort, like a genie pluming out of a lamp. (Diaz, 2022)

Commentators observed that technologies that feel like magic tend to become popular:

When a text prompt comes to life as you envisioned (or better than you envisioned), it feels a bit like magic. When technologies feel like magic, adoption rates tick up rapidly. (Warzel, 2022)

Journalists observed that autolography moved forward not just incrementally but in dramatic leaps:

Some of that progress has been slow and steady – bigger models with more data and processing power behind them yielding slightly better results… But other times, it feels more like the flick of a switch – impossible acts of magic suddenly becoming possible. (Roose, 2022)

These were not the first media technologies hailed as otherworldly. Nineteenth-century reactions to photography and other media technologies, such as cinema, drew connections with magic, the occult and sorcery.

Related ideas came into play particularly with the fad of spirit photography from the 1860s, which led many people to believe that photographs revealed hidden fairies and ghosts. This global spiritualist fad, with its seances and communication with the dead, was an early example of viral disinformation. It embraced science and emerging media such as optics, chemistry, magnetism, electricity and radio, but interpreted them in mystical terms. As Harvey observes:

Spirit, unlike any other subject matter that the camera would survey, drew attention to the paradox of photography's double identity: at one and the same time an instrument for scientific enquiry into the visible world and, conversely, an uncanny, almost magical process able to conjure up the semblance of shadows and, with it, supernatural associations. (Harvey, 2007: 7)

So, where does the AI get its version of reality from? It gets it from programmers’ algorithms that invoke informational patterns across training sets of image–text pairs, often gathered from the internet. Text and image data are integrated into the deep learning process and treated dynamically in multi-layered models. Autolography draws together two meanings of the term ‘ontology’: the technical ontologies of categories, properties and relations used in performing computer operations, and the philosophical concern with the nature of reality. The AI algorithms generate their own technical ontological consistencies in the emergent models. At the risk of oversimplifying, we can say that the AI model is the non-human equivalent of what the subconscious would be if it were exposed only to media texts (that is, if it lacked a fully sensory experience of reality). Viewers of autolographs inevitably assess images according to a common-sense view of reality, often immediately noticing inconsistencies and distortions that betray the image's detachment from the everyday.

As we discussed earlier, submitting prompts can be compared to the ancient practice of invocation. Users call on a non-human entity for inspiration or help in creating art. Among the most famous entities invoked in the Western tradition were the Muses, female deities from Ancient Greece associated with the arts, including music, poetry, history, dance, comedy and tragedy. Human artists claimed to cultivate relationships with these figures, who are notoriously as unreliable as they are powerful. Supplicants were required to follow the proper protocols in performing their coded utterances. This myth recognises that human creativity (which science now understands as a complex cognitive process), skill and knowledge have always been mysterious and elusive. Creativity has long been compromised by performance anxiety, writer's block and other creative impasses. Artists cultivated their relationships with Muses who personified the collective embodied wisdom and cultural knowledge of the community. The Muses served as sources of creative sparks outside the control of the artist (Prose, 2002). Artists have also turned to substances such as alcohol, mescaline and other psychedelics to unlock creativity and awaken the Muses (see Sessa, 2008; Janiger and DeRios, 1989).

Autolographic systems might be seen as the latest incarnation of the Muses: they are conjured for their creative powers in generating works of ‘art’ (or something approaching a work of art). In this case, however, they hold AI models trained in contemporary non-curated ‘museums’: sets of hundreds of millions of images in various genres and styles, most often extracted without consent or payment to the original creators. Systems like Midjourney function as non-human curators and interpreters of visual culture that, over their first year, demonstrated increasingly high levels of visual modality (Figures 1–3). Sources of images for autolographic systems include collections such as DeviantArt, Flickr, ArtStation, Pinterest and Getty Images (Edwards, 2022). Datasets like the Common Crawl corpus, ImageNet, COCO (Common Objects in Context), Google's OID (Open Images Dataset), Adobe Stock and LAION-5B are selective distillations of visual cultural knowledge in the form of millions (in LAION-5B's case, billions) of text-image pairs. They feature enormous sets of images of people, objects and scenes captured from the regions where the data was extracted, including Western cultural motifs, ideologies and English-language texts. They also manifest biases towards dominant values in the selection and composition of images and in the linguistic choices of the associated text. These biases stem both from the cultural backgrounds of developers and from the social power relations that influenced the production and selection of images in the datasets. The representation of ethnicity, disability, culture and language in these datasets also sheds light on gaps in digital media access and capabilities that determine who creates, curates, distributes and consumes the majority of digital images on the internet.

Figure 1. Prompt: Ancient Muse Calliope using a laptop (Midjourney v3, July 2022) (authors).

Figure 2. Prompt: Ancient Muse Calliope using a laptop (Midjourney v4, November 2022) (authors).

Figure 3. Prompt: Ancient Muse Calliope using a laptop (Midjourney v5, March 2023) (authors).

The way text-image pairs have typically been created on the web serves the invocational purposes of autolography well. Since the 1995 introduction of the ALT attribute (commonly called the ‘ALT tag’) in HTML, the norm for creating web content has been to include descriptions that serve as an alternative to the image itself. In semiotic terms, the text alternatives are deemed to have the same referent as the image. For example, an image of a Burmese cat might be described simply as ‘Burmese cat’. These alternatives to images remain unseen by most users. The original intention was to make web content accessible to low-bandwidth users, search engines, and people who are blind or visually impaired. This practice produced millions of text-image pairs that anchor the meanings of text and image to each other as closely as possible. The concept of anchorage appears as an alternative to ‘illustration’ in Roland Barthes’ (1977) classic essay ‘Rhetoric of the Image’. He proposes that because images have no code, their meanings can be uncertain without a text caption to anchor them. Uncaptioned images ‘imply, underlying their signifiers, a ‘floating chain’ of signifieds, the reader able to choose some and ignore others’ (39). He proposes that mass-communicated images came by convention to be accompanied by text captions that helped resolve the uncertainty of the image's meaning and anchor it. In autolography, tying images and ALT text together trains neural nets to relate the words ‘Burmese cat’ to a ‘Burmese catness’ that can be rendered as any number of invoked cats. Here, endlessly repeated invocations of deep learning algorithms make visible Derrida’s (1973) conception of différance, with its constant deferral of meaning and production of an indefinite number of images, none of them complete or final, and none of them secured by the judgement or interpretation of a subject.
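The harvesting of such text-image pairs can be illustrated with a minimal sketch using Python's standard `html.parser` module. The `AltTextPairs` class and the sample page are hypothetical illustrations; large datasets such as LAION-5B were assembled from web-crawl data at vastly greater scale and with additional filtering, which this sketch does not attempt.

```python
from html.parser import HTMLParser


class AltTextPairs(HTMLParser):
    """Collect (image URL, ALT text) pairs, skipping images whose ALT text is empty or missing."""

    def __init__(self):
        super().__init__()
        self.pairs = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src")
        # An attribute written without a value arrives as None, so fall back to "".
        alt = (attributes.get("alt") or "").strip()
        if src and alt:
            self.pairs.append((src, alt))


page = """
<p>My pets</p>
<img src="cat.jpg" alt="Burmese cat">
<img src="spacer.gif" alt="">
<img src="dog.jpg">
"""

parser = AltTextPairs()
parser.feed(page)
print(parser.pairs)  # [('cat.jpg', 'Burmese cat')]
```

Images with empty or missing ALT text are skipped, since in Barthes' terms they offer no caption to anchor the image's meaning.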

In effect, prompting a neural net automates the invocation of floating chains of signifiers, or fields of différance, in an AI model in the cloud, unchaining and diffusing image elements and statistically calculating which of them to stress to create intermedial interpretations of the prompt. However, these chains are composed not of signifieds in people’s minds but of invoked signifiers in neural nets, without the involvement of mental processes. In a sense, the AI artificially performs visual cultural competency, negotiating text-image relationships in ways that evoke human visual cultural literacy. Of course, this process differs greatly from how people develop visual and language literacy by internalising networks of words, pictures, sounds and objects. This begins in childhood, prompted by artefacts such as nursery posters (A for Apple, B for Ball, and so on) that establish associations between words, images, objects and sounds (Mankin and Simner, 2017). For people, this knowledge is far more complex than anything learned from training on text-image pairs. In philosophical terms, however, we can observe that autolography is more empiricist than rationalist. Unlike rule-based rationalist systems such as Mandelbrot set images and artificial life, which generate complex appearances and behaviour through explicit algorithms and procedures (Bown, 2021), deep learning extracts patterns from its training data to build multidimensional models from which it can invoke images evocative of the experienced mediated world.

But autolographic Muses are frustratingly intransigent when it comes to consistently creating the images the creator desires. As in ancient myths, invocational power is unreliable and unpredictable. The invoker often cannot utter the magical words precisely enough, and the Muse is distracted and indifferent. The source of both frustration and delight in autolography is its unpredictability, a trait that heightens its uncanniness. Autolographic invocations often disappoint users, partly because the user needs to find the elusive right words (made increasingly slippery by new, highly technical commands in platforms such as Midjourney version 4) but mainly because of the inherent limitations of the medium. Salvaggio (2022) identifies five forms of distortion in autolography: (1) the imprecision of prompts, (2) the limitations of training sets, (3) the randomness in the processes of image diffusion, (4) acts of censorship by the platforms, and (5) strategic manipulations of the algorithms. The early versions of the platforms had some obvious problems. For example, hands and faces were often distorted or malformed. Text elements in the image were often rendered as gibberish. Objects were misplaced or configured unexpectedly. Attributes assigned to one element of an image leaked into other elements. And yet occasionally the output was extraordinary, evocative and almost technically flawless, seeming to show either a convincing mimicry of reality or the expression of imagination and insightful interpretation.

This quest for realistic images is a common aspiration within the technical autolographic community. For the visual social semioticists Kress and van Leeuwen (2016), this is a desire for high visual modality. They argue that images implicitly make claims about their own truth in different ways. This is not an objective truth, but a truth assessed by relevant communities: ‘what is regarded as real depends on how reality is defined by a particular social group’ (158). What is considered realistic is said to have high modality. For example, through the conventions of naturalism, images resembling everyday perception or photography are deemed realistic. In 2022, DALL•E 2 was widely lauded for generating the most photorealistic images, perhaps exceeded only by Google's Imagen, which was not publicly accessible (Google Research, 2022). However, by changing the prompt, users can invoke what seems to be an authentic historical or abstracted image that reflects something other than naturalism. More than an Instagram filter, though, autolographic realism can extend to presenting coded versions of history through clothing, race and subculture, as in the prompt ‘Harlem street in the 1970s’, which could be read as another form of exploitation.

The surprise with autolography, and with the style transfer technique that preceded it, is this uncanny algorithmic exploitation of the previously intangible and subjective phenomenon of style. A quality that seemed most human appears easy to mimic, even if this mimicry might be judged to produce clumsy clichés. This produces images with high modality, but for different reasons than naturalism. The popular invocation of Van Gogh’s painting style is presented by its creators as high modality because it seems true to the style of a revered artist and therefore carries cultural value. And yet, with style transfer, autolography risks reproducing pastiches and clichés. Each of the platforms had distinctive default styles. Craiyon's images were the most distorted and nightmarish, while Stable Diffusion was only slightly better, often producing distorted bodies, faces, objects and architecture. By contrast, Midjourney created images that reflected some norms of popular visual arts. Regardless of the platform, though, it was apparent that the galleries of outstanding work distributed online were carefully selected from many attempts. Most attempts yielded many images with unusable levels of distortion.

The autolographic community's attitude towards generated images shifted over time. The first samples of autolographic images, released by OpenAI in 2021, invoked playful surrealist juxtapositions of elements unlikely to be found together, as suggested by the reference to Salvador Dalí in the name ‘DALL•E’. The first page of samples began with the suitably zany prompt ‘an illustration of a baby daikon radish in a tutu walking a dog’. With DALL•E 2, OpenAI's webpage presented samples of fantastic anthropomorphised animals and astronauts. When the public got access to these platforms, autolographic images began circulating on social media. Many became memes, invoking popular subjects such as Ronald McDonald, Simpsons characters, and even the psychoanalytic theorist Slavoj Žižek eating lobster. However, platforms were cautious about the risks of users creating offensive, copyrighted or illegal images and blocked certain prompts. DALL•E 2 initially barred the production or uploading of recognisable faces because of adverse publicity about Deepfakes (Wiggers, 2022), which some had used to produce fake pornographic videos. Many platforms disallowed words relating to violence or pornography, as well as prompts that could trigger controversies, such as ‘Prophet Mohammed’ and ‘Nazi’, or the names of artists whose styles might render gory imagery, such as ‘Cronenberg’, after the Canadian director of Crash, The Fly and Videodrome. On the other hand, most platforms seemed to default to stereotypes privileging white, patriarchal, able-bodied, cisgender and heterosexual interpretations of the world. For example, if the user invoked a nurse, the person represented would be female, while an invocation of a CEO would produce a man, most probably white. The black-boxed nature of the training datasets makes the origin and nature of these AI decisions inaccessible to the end user. These defaults nonetheless reveal a lack of diversity in the images contained in the datasets, which do not represent the population as a whole, and many AI companies seemed uncritical of this fact.

As time progressed, autolography on some platforms seemed to become a more considered creative practice, with communities of users experimenting and collectively learning how to create prompts that produced aesthetically pleasing images. The Midjourney platform, publicly released in July 2022, adapted the group chat app Discord as its interface. Users were instructed to preface their prompts with the command ‘/imagine’. Beginner autolographers used ‘newbie’ channels to experiment publicly, automatically sharing prompts and images with other users on the channel. The platform provided community spaces for sharing invocational techniques, where users revealed to each other what was effective and what was not. It demonstrated that even brief and abstract prompts could create evocative images, while long and detailed prompts could refine image objects and style. In late 2022, Midjourney introduced paid subscriptions that allowed users to create more images more quickly. Creating effective prompts became a virtuosic practice, even if Midjourney images shared a recognisable house style, with science fiction, fantasy and gothic imagery as prevalent genres. Many users tailored prompts to create images of beautiful women. Developers responded in versions 4 and 5 by making it easier for users to create attractive faces by default. Meanwhile, creators increasingly chose to remove their prompts when they published their images. Showcases on social media exhibited images without the prompts that created them. The images were made to stand independently, unbound by the invocation that created them. While the autolographic generation of images is very different from the photographic capture of images, there are some clarifying parallels between the two, which we explore in the next section.

Parallels between photography and autolography as ‘magic’ media

The first years of professional photography from the 1840s were characterised by very rapid global uptake by enthusiasts, entrepreneurs and showmen, but also by some ambivalence about the character and significance of the medium. Unlike other technologies of the industrial era, which were seen as opposed to nature, photography was equated by some commentators with nature herself, as a way of capturing the natural world directly (Baudelaire, 1980).

At the same time, there was a tension between photography's scientific capacity for truth-telling and its association with magic. As photography first appeared in carnivals and entertainment venues, ‘the association of magic and photography fostered a view of the photographer as a magician or shaman, that is, as one who works outside the bounds of everyday life and morality’ (Marien, 1997: 14). For Walter Benjamin (1972), photography has, despite its technical origins, a capacity to reveal previously indescribable phenomena. He argues that ‘the difference between technology and magic (is) entirely a matter of historical variables’ (7–8). He contends that photographs can carry a magical value which a painted picture can no longer have for us:

However skilful the photographer, however carefully he poses his model, the spectator feels an irresistible compulsion to look for the tiny spark of chance, or the here and now, with which reality has, as it were, seared the character in the picture. (7)

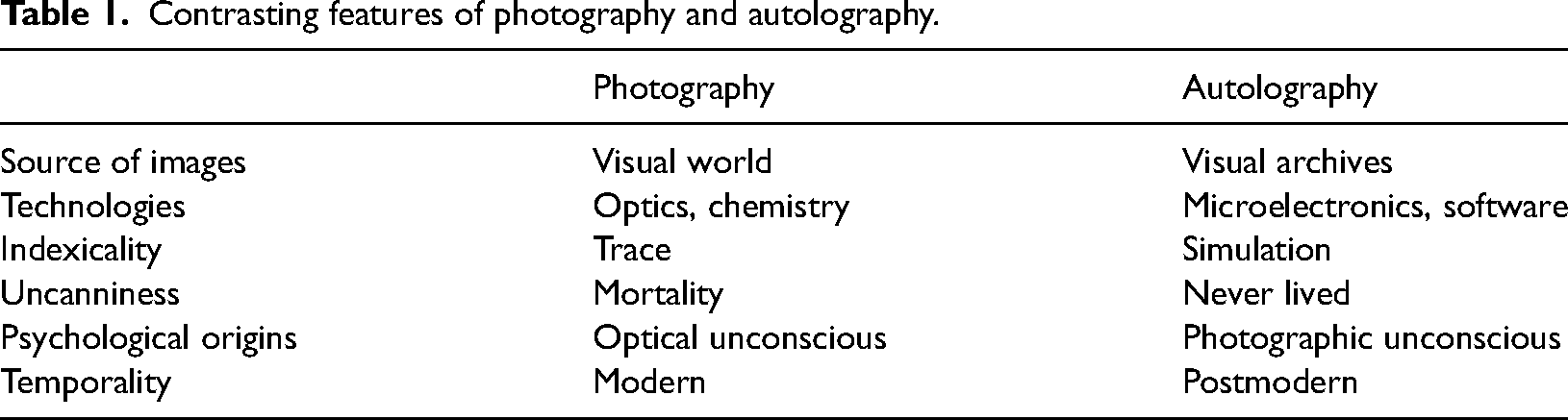

Autolography differs from photography in several ways (see Table 1). It can never make the truth claim that photographs often still retain. Rather, autolography makes us aware of the extent to which the contemporary visual unconscious is influenced by the ubiquity of images in everyday digital life: advertising, cinema and television, social media and so on. Where the founding myth of photography is that it is both iconic and indexical (Sadowski, 2011), autolography creates simulacra (Baudrillard, 1994). What it reproduces was never seen. It is a resemblance without a referent. Whereas a camera shapes light with a lens into analogue images, autolographic algorithms do not work with images at all. They work with digital information and calculations of probabilities in dataspaces. Their subjects are found not in the visual fields of everyday life but in calculations of data patterns across massive image archives. Autolography fixes its images not through chemistry or CCD sensors, but through programmed random diffusion procedures in neural networks. Autolographs are related to Deepfakes, another technology that challenges authentic identity, but Deepfakes require considerable effort and an intention to deceive. If photography is uncanny in fixing an image that draws attention to our mortality (the ‘punctum’ that pierces us; Barthes, 1982: 27), the autolograph is uncanny when it presents vivid images of people who were never alive and places that never existed. Autolography's highly accessible and playful invocations of simulacra arguably foster in its users an assumption that all images are suspect, even if they seem to resemble someone and express emotions. Rather than asking whether people will be fooled by the realism of autolography, a more pressing question is how its processes and outputs relate to traditional artistic work and the art world.

Table 1. Contrasting features of photography and autolography.

Controversies about whether autolography is art emerged early. For example, in August 2022 Jason M. Allen, known online as ‘Sincarnate’, won the emerging digital artist competition at the Colorado State Fair for the work ‘Théâtre D’opéra Spatial’, an elaborate sci-fi-inspired image with hints of the Baroque, which he printed on canvas. Allen produced the image using Midjourney, with which he had been experimenting for weeks, a relatively short time compared with the years needed to master figurative oil painting. Other contestants accused Allen of cheating, but he claimed that his ‘creative’ methods had been clear from the beginning. Interviewed by The New York Times, Allen said: ‘I couldn’t believe what I was seeing. I felt like it was demonically inspired – like some otherworldly force was involved’ (Roose, 2022). By the nature of the medium, autolographers relinquish much of their agency over the creative act, retaining it only in the magical efficacy of the prompt. While most creators at the time published their images together with the prompts that generated them, Allen refused to make his prompt public, saying, ‘If there's one thing you can take ownership of, it's your prompt’ (Harwell, 2022). His image was well produced and well chosen, but not unusual. His provocative move was to add a slightly ironic or pretentious French title, remove evidence of the processes of its invocation, and submit it to an art competition. Through these acts, the controversy over how he created the work became a global news event (Figure 4).

Figure 4. ‘Théâtre D’opéra Spatial’ (Jason Allen/Midjourney, public domain, 2022).

In response, columnist David Brooks (2023) argued in The New York Times that AI is best understood by revealing what it cannot do. Of AI art such as Allen's ‘Théâtre D’opéra Spatial’, he said: ‘It's often bland and vague. It's missing a humanistic core. It's missing an individual person's passion, pain, longings and a life of deeply felt personal experiences. It does not spring from a person's imagination, bursts of insight, anxiety and joy that underlie any profound work of human creativity’. Even though these technologies are constantly evolving, there seems to be a common belief that AI text-to-image generators cannot yet capture the depth of human emotion refined in painting or spontaneously produced in photography.

Perhaps we are at a watershed moment, and autolography will become part of Western ‘everyday data cultures’ (Burgess et al., 2022), with new, creative ways of using it emerging. Or we may be at the beginning of a period of legal and cultural contestation against these techniques and technologies. Autolography might even trigger a reactive, humanist renaissance in the arts. As Anthony McCosker has observed of the often misunderstood potential of Deepfakes: ‘While deepfakes can create spectacular social media events, they also exemplify the everyday activities and knowledge-building that shape the way AI is beginning to be circulated, encountered, and built in ordinary rather than just corporate technology contexts’ (2022: 3). There seems to be no turning back from the incorporation of AI systems into everyday data cultures, and autolography is one of various artificial intelligence autointermedial applications that challenge us to rethink what it is to be human, and what human traits such as creativity mean, in the face of increasingly capable and complex machine learning systems.

One reason for the controversy around autolographic creations is the belief that art should take considerable effort and skill (Bown, 2021). Like photography in its day, autolography seems to dramatically reduce the human creative labour needed to produce images worthy of exhibition, potentially threatening the capacity of existing human image-makers to compete with machines. But does this apparent minimisation of creative labour not rest on many unacknowledged human and non-human co-authors? Photography was initially seen as an automatic process that relied on sophisticated mechanical elements to capture something already given. Today, no one would dispute that photographers can be artists. Autolography, too, is a semi-automatic artistic mediator, invoking the algorithmic work of programmers, the unacknowledged creative practices of millions of photographers and artists in the training sets, and the global networks, servers, graphical processing units, algorithms and enormous datasets that activate this medium.

However, developers of autolographic systems seem to disregard all these externalised forms of work, presenting their systems simply as ‘a tool capable of generating realistic images from natural language (which) can empower humans to create rich and diverse visual content with unprecedented ease’ (Nichol et al., 2021: 1). They argue that it allows people to avoid the need for ‘specialised skills and hours of labour’ (1). In exuberant videos on image diffusion in his ‘Two Minute Papers’ series, Hungarian YouTuber Károly Zsolnai-Fehér hailed autolography as a revolutionary democratisation of image-making, punctuating the videos with his catchcry ‘What a time to be alive!’ He declared:

> I am sure this tool will democratise art creation by putting it into the hands of all of us. We all have so many ideas and so little time. And DALL•E will help with exactly that. So cool. Just imagine having an AI artist that is, let's say, just half as good as a human artist, but the AI can paint 10000 images a day for free. Cover art for your album? Illustrations for your novel? Not a problem. A little brain in a jar for everyone… Bravo OpenAI… the impact of this AI is going to be so strong there will be the world as we know it before DALL•E and the world after it. (Zsolnai-Fehér, 2022: time 7:47–8:33)

Despite the claims that autolography democratises the creation of images among tech-savvy users, the practice raises unresolved ethical and legal questions about the status of its products as art and intellectual property. First, what are the ethics and legalities of exploiting the dead labour of millions of uncredited creators of the images and texts in the training sets? Autolography is highly problematic for the moral rights of artists whose names and styles are expropriated for autolographic prompts. Polish digital artist Greg Rutkowski, recognised for his fantasy images (he has provided illustrations for games such as Horizon Forbidden West, Anno, Dungeons & Dragons, and Magic: The Gathering), had his name invoked by thousands of autolographers. His authorial reputation and algorithmically extracted style were exploited, and his work potentially devalued (Heikkilä, 2022). Surely some form of self-regulation or external regulation is called for to limit this abuse of artists’ names and styles.

Second, what is the copyright status of images generated by machine learning? On both counts, legal developments in various jurisdictions are shifting in favour of the tech industries, disavowing the rights of the creators of the images used to train AI while asserting a right to copyright protection for the developers and users of image generators. While the United States Copyright Office has, at the time of writing, ruled that authors of copyrightable works must be human, some scholars and industry figures argue that AI systems should be granted the status of author and therefore copyright protection (Brown, 2018). Brown argues not only that computer-generated art shows that computers can perform creative work, but also that they should be considered authors. The stakes behind these claims have suddenly risen with the growth of autolography. The European Union distinguishes between ‘AI-assisted outputs’ and ‘AI-generated outputs’ to determine whether a machine-generated work can be considered art deserving of copyright. Only AI-assisted outputs that involve substantial technical or artistic human labour and ingenuity can be copyrighted (De Grauwe and Gryspeerdt, 2022).

Enthusiasts present autolography as more convenient, faster and cheaper than any human artist (Nichol et al., 2021). They stress that users can quickly make any number of new images, tweaking the prompt every time. Others, however, point to numerous limitations. The suggestion of some advocates that it might soon generate any image imaginable is a myth. For example, Salvaggio (2022) demonstrates that DALL•E 2 cannot produce convincing images of people kissing. He attributes this failure to biased training datasets, curatorial censorship and a lack of comprehensiveness. He argues that autolographs are ‘infographics for a dataset’, their faults revealing the biases of the dataset. He observes serious failures, such as that ‘faces of black women were consistently more distorted than the faces of other races and genders’ (2022).

In the early days of photography, there was similar aesthetic, legal and ethical contention over the status of photographs. Photography was initially seen as a merely technical practice, so in most jurisdictions its products did not qualify as creative works deserving copyright protection (Bowrey, 2012). Baudelaire (1980) poked fun at photography as the ‘refuge of failed painters with too little talent’ (87). He claimed that photography's ‘true duty… is that of handmaid of the arts and sciences, but their very humble handmaid’ (88). This attitude perhaps reflected the cultural location of the early photographic industry. This globalised high-tech media phenomenon emerged in the 1840s and was characterised by a rapid series of innovations, from daguerreotypes to wet-collodion to dry-gelatin plates (Jenkins, 1975), each of which made photography cheaper and more accessible. By the mid-20th century, certain genres of photography were widely recognised as art, but only after global visual culture, including the art world, had been transformed by photography.

Conclusions: autolography's ‘Kodak moment’?

Another popular form of entertainment in the late 19th century was learning how to perform magic tricks. A series of books beginning with Modern Magic (1876) was published by Angelo John Lewis under the pseudonym ‘Professor Hoffmann’ (Houston, 2019). Over the next few decades, magicians and illusionists captured the imagination of global audiences, a phenomenon that culminated in the popularity of Harry Houdini. We cannot help seeing parallels among three phenomena, each of which had its historical moment: stage magic, photography and autolography. Each involved new technologies, forms of illusion that confounded the logic of the senses, a community for sharing work and refining techniques, a period of rapid innovation, and dramatic disruptions to social and aesthetic norms. For the first two, this period of acceleration ended. Magic was professionalised, demystified and, later, televised, while generating amateur communities on and offline. Photography was commodified as a consumer technology during the famous ‘Kodak moment’ of 1900, but it also helped transform the visual universe and the art world.

Over a century later, a new set of conditions has emerged: an accumulation of billions of networked photographs and a medium capable of invoking new, evocative images from algorithms, the photographic archive and networks. We are arguably entering a period of accelerating mass access to autolographic invocational media, along with other artificial intelligence technologies such as generative adversarial networks, GPT language models, automatic music generators, autolographic video, biometric recognition, predictive policing and so on. However, given algorithmic bias and the inevitability of errors in statistically determined judgements, AI systems may, in many contexts, struggle to win faith in the reality of their outputs. Even if autolography rarely achieves distinction in the art world, it may well disrupt image production in commercial contexts. The issues emerging from autolography are mostly linked to the black-boxed nature of the software.

However, it is not yet clear whether the scale of autolography's nascent cultural impact will compare with that of stage magic or photography. Beyond the photographer pressing the shutter or the autolographer writing the prompt, where does creativity reside? Is creativity not always distributed across a dispersed array of humans and non-humans? Yet are autolographic techniques unfair to those who have mastered older art practices? How will the copyright industries react? How can autolography be regulated to ameliorate these challenges? How do these media affect our perceptions of images and our understanding of reality? The controversies over autolography and other generative AI systems have only just begun.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.