Abstract

Objectives:

With the increasing application of high-throughput transcriptomic data in cancer research, identifying reliable cancer biomarkers in high-dimensional settings remains a major challenge. This study aims to systematically evaluate various regularized conditional logistic regression (CLR) methods under a matched case-control (MCC) design, focusing on their performance in variable selection, parameter estimation, and predictive accuracy. Special emphasis is placed on the importance of the matching design in reducing confounding effects and improving model interpretability.

Methods:

We utilize RNA-seq data from The Cancer Genome Atlas (TCGA), specifically datasets for liver, thyroid, and lung cancers, which include paired tumor and adjacent normal tissue samples. In our analysis, we apply 4 regularized CLR methods implemented in R packages—namely “clogitL1,” “pclogit,” “clogitLasso,” and “penalizedclr”—to analyze over 20 000 gene expression features. We evaluate the comparative performance of these methods based on metrics such as gene selection stability, predictive accuracy, and interpretability. Additionally, we employ a bootstrap resampling framework to estimate gene selection probabilities, which serve as a measure of gene importance.

Results:

Our results show that incorporating the MCC design significantly enhances feature selection performance by mitigating confounding noise. The regularized CLR models successfully identify several well-established cancer-related genes with high selection consistency and statistical significance. In contrast, models that ignore the matched design tend to miss critical biomarkers or produce excessive false positives, leading to potentially misleading interpretations.

Conclusions:

This study highlights the value of integrating a matched case-control design with regularized CLR methods for the analysis of high-dimensional transcriptomic data. The proposed analytical framework offers improved accuracy, robustness, and biological relevance, providing a practical and scalable approach for cancer genomics research. It also supports the advancement of precision medicine and translational applications.

Keywords

Background

Precision medicine is an emerging medical paradigm that tailors treatment and prevention strategies to individual patients based on their genetic, molecular, and environmental profiles. With the rapid advancement of gene sequencing and high-throughput technologies, precision medicine has become a transformative trend in both clinical practice and biomedical research, particularly in oncology, endocrinology, and cardiovascular medicine. Recent studies, such as the Taiwan Precision Medicine Initiative 1 and population-specific polygenic risk scores for Han Chinese ancestry, 2 provide strong evidence for the feasibility and utility of personalized approaches in large-scale and population-specific contexts. Rather than relying on a 1-size-fits-all strategy, precision medicine aims to deliver individualized therapeutic interventions while simultaneously enabling disease prevention through early risk assessment and lifestyle-based recommendations. In this context, predictive models are used to evaluate patients’ responses to treatment, whereas prognostic models help assess the risk of disease recurrence or predict survival outcomes. Both types of models are essential for guiding therapeutic strategies and monitoring disease progression.

Traditional diagnostic methods for cancer, such as imaging and histopathological examination, are widely used in clinical practice but may be limited by insufficient information or subjective interpretation by clinicians. 3 In contrast, genetic testing and molecular profiling provide earlier and more accurate diagnoses, helping physicians design personalized treatment plans that can improve patients’ quality of life and clinical outcomes. 4 Conventional pathological classifications, which mainly rely on tumor size and grade, offer some diagnostic value but lack molecular-level information and have limited predictive power for drug response. By analyzing gene mutations and molecular subtypes, it becomes possible to select targeted therapies according to the specific characteristics of individual tumors, thereby improving treatment accuracy and efficacy. 5 Molecular subtype classification integrating multi-layer data—such as global gene expression, pathway activity, and biological functions—forms the foundation of precision medicine and reveals the heterogeneity of cancers more comprehensively. Because cancer exhibits high molecular heterogeneity and complexity, 6 developing accurate diagnostic models that capture its biological characteristics has become a key research topic. Large-scale cancer genomic databases such as The Cancer Genome Atlas (TCGA) provide abundant and standardized data resources that greatly facilitate the development of such models.

In cancer genomics research, the Matched Case-Control (MCC) design has been widely adopted, especially in high-dimensional data contexts, as it helps control confounding factors, improve statistical power, and enhance feature identification.7 -9 MCC designs match key demographic variables (eg, age and sex) to ensure comparability between cases and controls in non-study variables. In genomic studies, tumor tissues and adjacent non-tumorous tissues are commonly used as matched samples for differential expression analysis. For example, in TCGA, “normal” samples generally refer to tissue adjacent to tumors that are histologically verified as non-tumorous, or to areas of the same organ without detectable pathological changes, serving as valid reference controls. Ignoring the matched design during analysis can lead to biased estimates and reduced statistical power.10,11

With the increasing prevalence of high-dimensional data such as transcriptomics, MCC design has become increasingly important, particularly in cancer biomarker discovery. For instance, TCGA provides gene expression data from paired tumor and normal tissues, enabling the identification of differentially expressed genes and subsequent gene enrichment and pathway analyses. 12 In the TCGA lung adenocarcinoma dataset, 506 patients are included, 58 of whom have matched tumor and normal tissue samples spanning over 20 000 gene expression profiles. Such designs offer increased statistical power and enhanced interpretability, providing a strong foundation for high-throughput transcriptomic studies. 9

However, high-dimensional modeling often faces the so-called “curse of dimensionality,” where the number of variables far exceeds the number of samples, leading to model overfitting and performance degradation. 13 To address this issue, feature selection methods are widely used to eliminate redundant and irrelevant variables while retaining key features associated with disease states. This not only enhances predictive performance but also aids in understanding underlying biological mechanisms. Although MCC designs can improve variable selection efficiency, many studies neglect the matching structure when applying feature selection methods, resulting in decreased selection accuracy and biased outcomes.8 -10

Conditional Logistic Regression (CLR) is a common statistical approach for analyzing MCC data, as it accurately estimates the relationship between covariates and disease status while accounting for matched pairs. 14 However, the application of CLR in high-dimensional data remains limited, particularly when the number of samples is small, the number of variables is large, and collinearity or noise is present—conditions that reduce the model’s ability to distinguish cases from controls. To overcome these limitations, several regularized CLR methods have been proposed, such as “clogitL1,” “pclogit,” “clogitLasso,” and “penalizedclr.”11,15 -17 Nonetheless, these methods have rarely been systematically compared and evaluated on real high-dimensional matched gene expression datasets. Moreover, since CLR relies on linear assumptions, its ability to discriminate between cases and controls decreases in the presence of collinearity, noise, or large-scale data. 18

This study aims to conduct a systematic comparison and analysis of various regularized CLR methods using high-dimensional transcriptomic data from 3 TCGA cancer datasets with matched designs. We evaluate each method’s performance in terms of variable selection accuracy, parameter estimation stability, and predictive ability, and apply a bootstrap resampling approach to assess the stability and biological relevance of selected genes—thereby validating their potential in cancer biomarker discovery.

The major contributions of this study are as follows: we systematically integrate and evaluate multiple existing regularized CLR methods in the context of high-dimensional MCC data and propose a comprehensive analysis framework suitable for real-world applications, encompassing data preprocessing, modeling, feature selection, and result validation. Through empirical analyses of 3 TCGA cancer datasets with matched transcriptomic samples, we identify potential differentially expressed genes and underlying biological mechanisms, aiming to provide researchers in the field of precision medicine with practical analytical tools and methodological recommendations of high reference value.

Materials and Methods

TCGA Transcriptomic Data Sources

Given The data used in this study comes from TCGA project, specifically transcriptomic data, which can be downloaded using the R package “UCSCXenaTools.” 19 The cancers studied include liver hepatocellular carcinoma (LIHC), thyroid carcinoma (THCA), and lung adenocarcinoma (LUAD). Data can be accessed via the UCSC Xena platform’s TCGA Hub database at https://tcga.xenahubs.net, with official data sourced from the TCGA project’s website: https://www.cancer.gov/ccg/research/genome-sequencing/tcga.

To provide a comprehensive overview of the data used in this study, we have added Table 1, which summarizes the sample characteristics of the 3 TCGA datasets analyzed. The table includes the total number of samples, age range, gender distribution, and the number of matched tumor–normal pairs. In addition, we report the proportion of genes (out of 20 501 genes) that follow a normal distribution under the paired-sample structure in each dataset, which was assessed using the Kolmogorov–Smirnov (KS) test. For instance, the TCGA LIHC dataset contains 370 tumor and 50 normal tissue samples, with 50 patients providing both. Each sample includes data for 20 501 genes. Our analysis focuses on these 50 paired samples, labeling tumor tissues as 1 and normal tissues as 0.

Summary of Sample Characteristics, Matched Tumor–Normal Pairs, and Gene Distribution in the 3 TCGA Datasets Analyzed.

We first constructed an analytical flowchart (Figure 1) to illustrate the key components of the study. Each step depicted in the flowchart was then comprehensively described to provide clarity on the methodology and rationale underlying the analysis.

Flowchart of regularized conditional logistic regression analysis.

Exploratory Feature Screening in High-Dimensional Genomics

To identify potentially relevant genes for further analysis, we first conduct a pre-screening step using the entire dataset. We apply differential expression analysis (eg, paired t-tests) across all MCC samples to select a subset of genes that show significant variation. This step aims to reduce dimensionality and focus subsequent modeling on features with a strong biological signal. Importantly, we emphasize that this pre-selection serves as part of an exploratory analysis and is not used to make direct predictive performance claims. All subsequent modeling procedures, including regularized conditional logistic regression and performance evaluation, are conducted on this fixed subset of genes using cross-validation. This allows for biologically meaningful hypothesis generation within a practical framework.

Given the high dimensionality of the original data, we begin by performing an initial feature screening to eliminate less relevant features prior to applying regularized regression. 20 In Differentially expressed genes (DEGs) analysis, conducting independent hypothesis tests on numerous gene expression values poses a substantial risk of false positives. To mitigate this issue, we apply the false discovery rate (FDR) control method to correct for multiple hypothesis testing. Using the Benjamini-Hochberg (BH) procedure, 21 we rank the P-values obtained from paired sample t-tests in ascending order and adjust each by multiplying it by m/j, where j represents the rank of the P-value and m is the total number of independent genes tested. The resulting adjusted P-values are referred to as q-values, and FDR serves as the primary criterion for selecting differentially expressed genes. Typically, we apply an FDR threshold of <.05 for filtering.

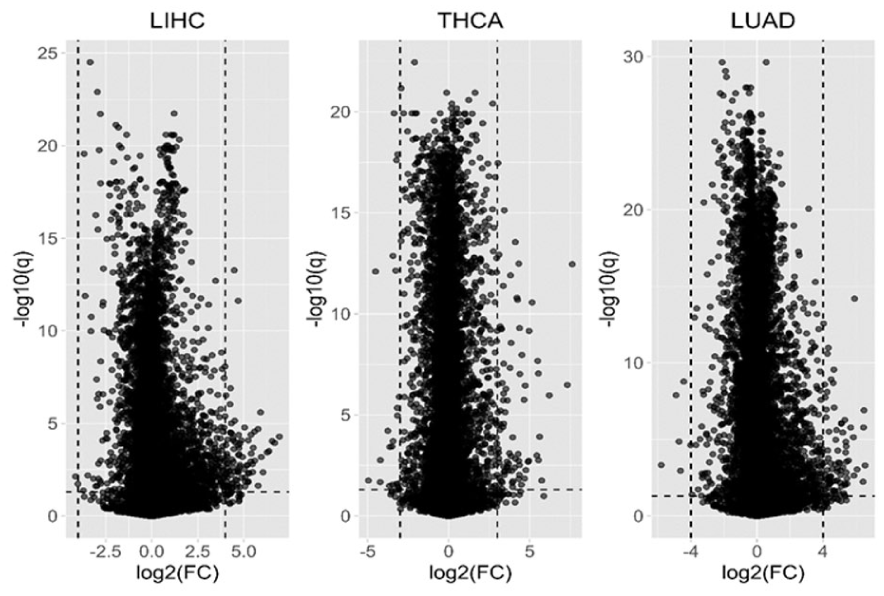

In practice, researchers often combine FDR with fold change (FC) in gene expression between 2 groups to improve biological interpretability. By taking the base-2 logarithm of FC, we obtain log2(FC). Genes with an absolute log2(FC) value exceeding a defined threshold are generally considered for selection, highlighting those that are strongly upregulated or downregulated. Based on these criteria, we select candidate genes that meet either FDR < 0.05 or |log2(FC)| >4 for further analysis.

A double filtering strategy was applied to identify variables with both statistical significance and substantial expression differences. The absolute log2(FC) threshold was empirically chosen by considering the sample size, the regularization properties of the model, and the desired balance between sensitivity and interpretability. This threshold was set to capture approximately 100 high-confidence variables while minimizing noise from marginal effects.

Regularized Conditional Logistic Regression for Matched Case-Control Data

CLR is a statistical model used to analyze paired or stratified data, primarily to address the non-independence of observations. It is applied to study the relationship between binary outcomes and multiple predictors while controlling for confounding variables. This model is typically used in case-control studies when the data is paired or stratified. In CLR, each case is usually paired with 1 or more controls, helping to account for potential confounding factors. The model is based on conditional probability, estimating the effect of predictor variables on the outcome given certain conditions. Maximum likelihood estimation is used to estimate the model parameters, allowing for the assessment of the influence of each predictor variable on the outcome.

In this study, the real data for TCGA-specific cancers consists of 1:1 MCC pairs, thus CLR is used. In the conditional logistic function, assuming there are n samples, with n/2 patients, there are n/2 = K independent strata (patients). Each stratum (patient) contains 2 samples: 1 from normal tissue and 1 from cancerous tissue.

The conditional logistic function can be expressed as

where the stratum effect is included by assigning a unique intercept

The full likelihood function for the 1:1 MCC study using CLR can be written as

As stated by Breslow and Day, 10 the maximum likelihood estimates (MLEs) can be computed using the likelihood function, and this can be implemented using the “clogit” package in R. However, when the predictor variables are high-dimensional and highly correlated, the performance of MLEs may not be optimal, even under the true model (oracle model). This phenomenon will be highlighted in the simulation study. Therefore, when dealing with high-dimensional paired case-control studies, it is recommended to use regularized regression.

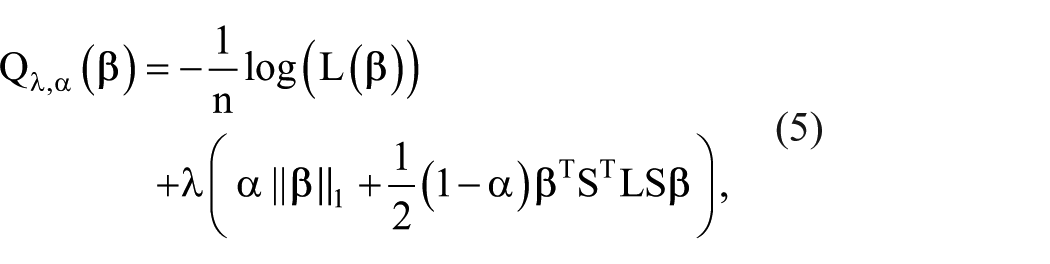

According to Reid and Tibshirani, 11 the penalized conditional logistic regression model with the elastic net penalty (as defined by Zou and Hastie 22 ) is given by

where

In biological data, biomarkers are often correlated with each other, so incorporating biomarker network information into the regularized regression model can enhance the model’s effectiveness.24,25 According to Sun and Wang, 15 the penalized conditional logistic regression likelihood with the network-based elastic net penalty function can be defined as

where the diagonal sign matrix

Conditional Likelihood for 1:M Matched Case-Control Data

To extend the MCC design beyond the traditional 1:1 matching, we also consider the general 1:M matching structure, in which each case is matched to M controls based on covariates such as age, sex, or other potential confounders. CLR provides a natural modeling framework for analyzing such stratified data, as it accounts for the matched-set structure by conditioning out the nuisance parameters (ie, set-specific intercepts) associated with each stratum.

Let the dataset consist of K matched sets (strata), where the k-th stratum includes 1 case and M matched controls. We can derive the CLR likelihood function for 1:M matching based on the likelihood function of 1:1 matching. The complete likelihood function can be expressed as:

The conditional log-likelihood function after taking the logarithm is

This formulation removes the stratum-specific intercepts through conditioning and enables estimation of the log-odds coefficients

Leave-One-Stratum-Out Cross-Validation (LOSOCV)

Next, the appropriate tuning parameters are identified using the leave-one-stratum-out cross-validation (LOSOCV) procedure, following van Houwelingen et al.

29

Given the tuning parameters

where

Prediction Using the Mean Method

The conditional logistic regression model eliminates the intercept interference parameters, but the parameters are crucial for predicting new data. Therefore, as per Reid and Tibshirani,

11

we use 2 methods for prediction. The dataset is partitioned into training and test sets, with 70% assigned to the training set and 30% to the test set. Using the training data, the best model for each method is fitted with the LOSOCV procedure, and the estimated

The average of all the linear predictors in the training set is then computed to form a threshold. For each data point in the test set, the feature vector is multiplied by the estimated

Prediction Using the Majority Voting Method

Using the training data, the best model for each method is fitted with the LOSOCV procedure, and the estimated

These 2 forecasting methods can also be naturally extended to 1:M matched case-control design. For example, in the mean method, we first calculate the average of M linear predictors for each stratum of the M controls in the training set, and then average them with the linear predictors of the case group in the training set to form a threshold. In the majority voting method, if the linear predictor from the testing set model exceeds 100*(M/(1 + M))% of the linear predictors from the training set model, the result is classified as a case; otherwise, it is classified as a control.

Finally, a confusion matrix is created based on the test set predictions, and prediction metrics (such as G-mean and AUC) are computed for each method. It is important to note that the G-mean is a comprehensive metric that considers both sensitivity and specificity, that is, the square of the product of sensitivity and specificity, while the receiver operating characteristic curve and the corresponding area under the curve are used to adjust the penalty tuning parameters. 11 In addition to comparing model prediction performance, we can also evaluate variable selection (G-mean) and parameter estimation (root mean squared error, RMSE) performance using simulated data.

Real Data Analysis for Gene Importance

In real data analysis, we first identify the best regularization condition logistic regression method for a specific cancer using the flowchart in Figure 1. Then, we apply the bootstrap method to randomly sample the 50% entire dataset (using strata as the sampling unit), perform variable selection using the best regularized CLR method, and repeat this process 100 times. This results in calculating the frequency selection probability for each gene. The probability is then used to define the gene importance. 17 We note that this bootstrap method is flexible and robust, making it less susceptible to the influence of specific samples. In other words, different samples may lead to the selection of highly different genetic combinations. The bootstrap method can alleviate this issue, resulting in more accurate biomarkers.

Summarizing the Research

Currently, there are 4 R packages (“clogitL1,” “pclogit,” “clogitLasso,” “penalizedclr”) available for performing penalized CLR (Lasso, Ridge, Elastic Net). These 4 R packages are summarized in Table 2. We have established a related workflow for this research topic.

Reviews on Regularized Conditional Logistic Regression (a Partial List).

The first research objective is to conduct a systematic comparison and analysis of the penalized conditional logistic regression methods in these 4 R packages, evaluating criteria including variable selection, parameter estimation, and model predictive performance. Next, we will demonstrate through a series of simulation studies that, in high-dimensional matched case-control studies, the penalized CLR method is more effective than traditional maximum likelihood estimator and penalized ordinary logistic regression. A comprehensive statistical analysis will be performed, with the goal of providing biologists with a better understanding of current regularized conditional logistic regression methods. Finally, the study will focus on MCC study data from the TCGA project, specifically concerning LUAD, LIHC, and THCA.

Finally, we state that the reporting of this study conforms to the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) Statement (STROBE_checklist_v4_combined). The completed STROBE checklist has been submitted as a Supplemental File.

Results

Simulation Studies: Synthetic Dataset

Following the simulation framework of Sun and Wang, 15 we constructed a 1:1 MCC dataset consisting of 150 strata of data. Among them, 100 strata were used for training and 50 strata for testing. Each individual had 2000 variables. The dependent variable Y was binary, with cases coded as 1 and controls as 0. To mimic biological signal heterogeneity, we specified different mean vectors for cases and controls:

The case and control means differ on specific subsets of variables to represent signal regions, similar to the settings described in Sun and Wang, 15 who generated high-dimensional MCC methylation datasets with 1000 CpG sites for computational feasibility and to maintain consistency with their simulation design.

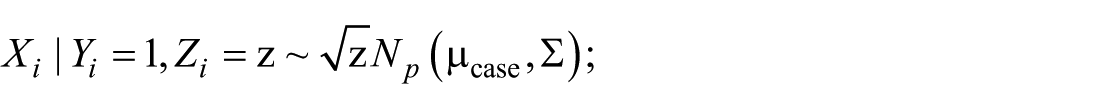

Additionally, the stratum-level variable

We assumed that the independent variable

where

Hub: A central node is connected to multiple other variables, forming a Hub structure.

Band: Variables are connected in a band-like structure.

Cluster: Variables form multiple clusters, with high correlations within each cluster.

Scale-free: The connections between variables follow a scale-free network characteristic, where a few variables have many connections, while others have fewer.

Figure 2 shows the 4 variable network structures. These network patterns and data distributions follow common settings in network-based high-dimensional simulation studies,15,30 providing a controlled environment to evaluate the proposed method under varying dependency structures.

The 4 gene network structures.

Considering the limited number of strata, after the initial feature selection (FDR < 0.05 or |log2(FC)| > 4), we controlled the number of features to approximately 100 and then explored several approaches. First, we considered MLE within the CLR framework, which can be implemented using the “clogit” R package. Additionally, we evaluated the penalized logistic regression method, which does not account for the paired structure of the data, and can be performed using the “glmnet” R package. We also examined 4 R packages specifically designed for penalized CLR: “clogitL1,” “pclogit,” “clogitLasso,” and “penalizedclr.” Finally, in our simulation study, we incorporated the oracle estimator, 31 which provides the MLE under the true model.

To investigate whether commonly used multiple testing procedures, such as the BH method, can effectively control the FDR under an MCC design, we conducted a simulation study mimicking the high-dimensional structure of gene expression data. In each simulated dataset, 2000 features were generated, of which only 15 were truly differentially expressed between matched cases and controls, while the remaining features followed the null distribution. Paired t-tests were applied to each feature to obtain P-values, and the BH procedure was subsequently used to control the FDR at a nominal level of .05. Features with adjusted q-values below 0.05 were considered significant. To estimate the empirical FDR, we calculated the proportion of false positives (null features incorrectly declared significant) among all selected features. This procedure was repeated 200 times, and the average empirical FDR was calculated across all simulations (see Supplemental Table S.2 for details). The results suggest that the BH procedure provides reasonable control of the FDR under the MCC design, with the observed empirical FDR remaining close to the target level of 0.05. These findings support the practical use of FDR-based thresholds for variable selection in high-dimensional matched-pair transcriptomic analyses.

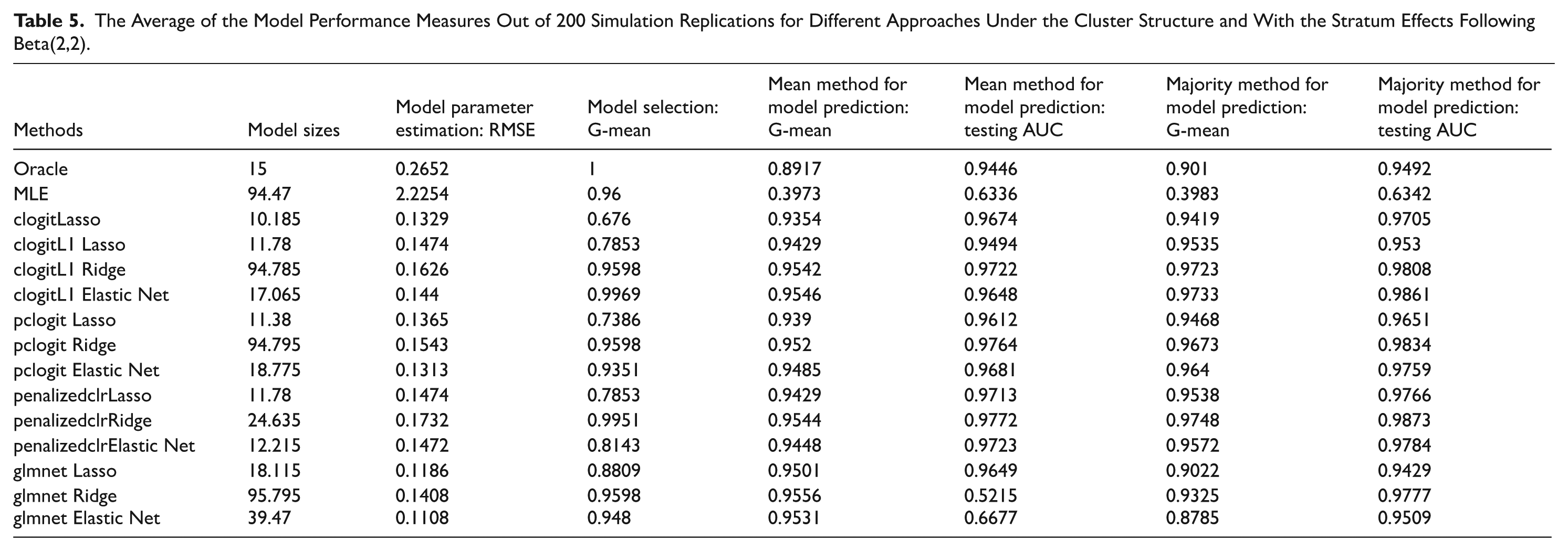

We take the average of the model performance measures out of 200 simulation replications. Based on the simulation results (Tables 3–5 and Supplemental Tables S.3–S.11), we draw several key conclusions. First, penalized CLR outperforms traditional maximum likelihood estimation in terms of parameter estimation and predictive accuracy, even in the ideal oracle estimation scenario, where the true model is known. Therefore, when there is a network structure among the features, it is recommended to use regularized regression. Second, penalized CLR outperforms penalized ordinary logistic regression in terms of predictive accuracy. Ordinary logistic regression ignores the paired structure of the data. Third, predictive accuracy varies with different levels of stratum effects, indicating that a larger beta distribution parameter value for the stratum effects leads to higher predictive accuracy. Finally, among the 4 penalized CLR R packages tested, the “penalizedclr” package demonstrated the most stable performance. It consistently provided reliable results, regardless of changes in stratum effects or network structures, making it the most robust choice across different simulation settings. Additionally, the “pclogit” package also delivered satisfactory results in terms of predictive accuracy.

The Average of the Model Performance Measures Out of 200 Simulation Replications for Different Approaches Under the Cluster Structure and With the Stratum Effects Following Beta(.5,.5).

The Average of the Model Performance Measures Out of 200 Simulation Replications for Different Approaches Under the Cluster Structure and With the Stratum Effects Following Beta(1,1).

The Average of the Model Performance Measures Out of 200 Simulation Replications for Different Approaches Under the Cluster Structure and With the Stratum Effects Following Beta(2,2).

This study further explores 1:M MCC data, expanding the simulated synthetic data to a 1:4 MCC dataset. A simulation analysis is conducted under a cluster structure with 3 different stratum effect distributions (Beta(.5,.5), Beta(1,1), Beta(2,2)). Table 6 and Supplemental Tables S.12 and S.13 present the average results from 100 repeated runs under the cluster structure with the 3 different stratum effect distributions, leading to similar conclusions as the previous 1:1 MCC dataset.

The Average of the Model Performance Measures Out of 100 Simulation Replications for Different Approaches Under the Cluster Structure and With the Stratum Effects Following Beta(2,2) under 1:4 Matched Case-Control Study.

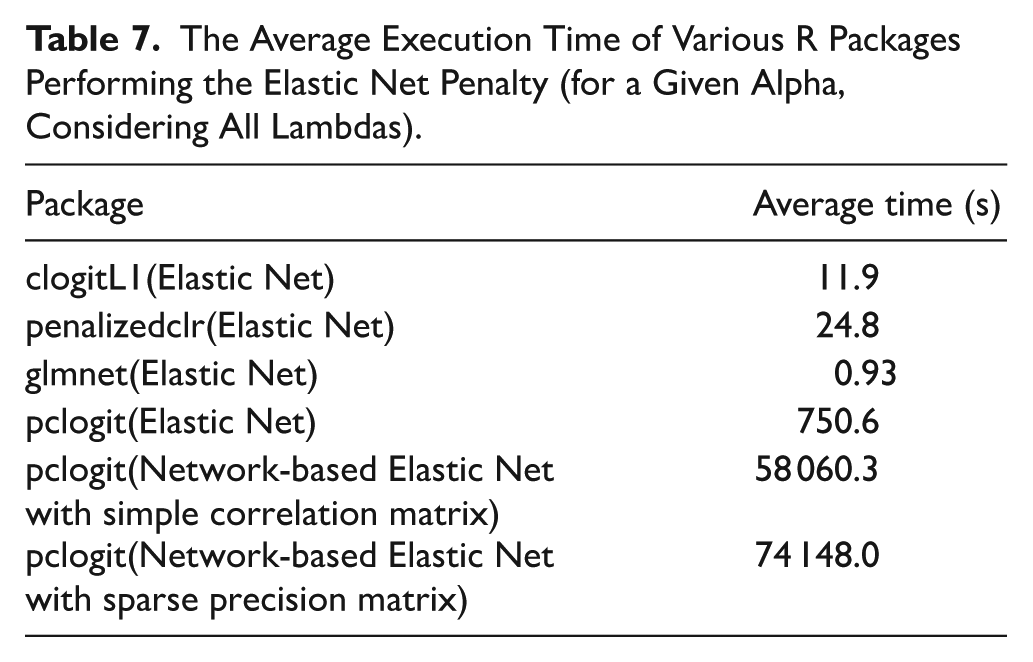

However, when using “pclogit” to run the network-based elastic net, we find that its statistical effectiveness is no better than simply running the elastic net. Additionally, the execution time is quite long. Table 7 presents the average execution time of various R packages performing the (network-based) elastic net penalty (for a given alpha, with all lambdas considered). Due to the concern about execution time, we decided not to proceed with the network-based elastic net.

The Average Execution Time of Various R Packages Performing the Elastic Net Penalty (for a Given Alpha, Considering All Lambdas).

For completeness, we also implemented a network-based elastic net model using the “pclogit” package, which combines an L1 penalty with a network smoothness term to incorporate correlations among predictors. However, this approach required substantially longer execution time and yielded limited improvement in predictive performance compared to the standard elastic net model. Therefore, it was not pursued further in the main analysis. Nevertheless, the method is briefly discussed for its theoretical value and potential applicability in future studies where biologically meaningful network structures can be more accurately defined.

In this study, we focused on 3 cancer types, LIHC, THCA and LUAD, because they have relatively large sample sizes in TCGA with matched tumor and normal tissue samples, represent different organ systems and biological characteristics, and are known to exhibit substantial gene expression differences. These features make them particularly suitable for differential expression analysis and model validation. Based on the KS test results reported in Table 1, approximately 99% of genes in the 3 TCGA 1:1 matched tumor–normal datasets were normally distributed, indicating that most gene expression changes are suitable for subsequent data analysis using paired t-tests.

Real Data Application: TCGA LIHC Data

Our analysis focuses on a subset of the TCGA LIHC dataset, which includes data from 50 patients and 20 501 gene expression markers, with both normal and tumor tissue samples provided for each patient. Since the number of genes likely associated with cancer is expected to be limited, we reduce the gene count before performing the training/test split for cross-validation. We adopted a double filtering procedure, 32 where genes are first filtered based on FDR results, and then further filtered based on the magnitude of log2(FC) changes. A well-known visualization tool in genomics for illustrating the results of double filtering (based on both statistical significance and fold change) is the volcano plot, which is widely used to visualize the results of genomic experiments. A volcano plot is a scatter plot that illustrates the relationship between –log10(q) and log2(FC). As shown in Figure 3, the most strongly upregulated or downregulated features located at the extremes of the X-axis, and the most statistically significant features at the top. The findings in the upper-left and upper-right corners are typically considered the most likely to be significantly differentially expressed genes. Using this double filtering process, we considered genes to be significant if they met both FDR < 0.05 and |log2FC| > 4. This approach identified 96 candidate genes for further analysis in the LIHC dataset. However, if only 1 of the 2 conditions is required to be satisfied, the number of selected genes increases to 11 022, which is too large and unsuitable for subsequent regularization analysis.

The volcano plots of TCGA LIHC, THCA, and LUAD.

We conducted 10 random splits, partitioning the entire dataset into a 7:3 training/test ratio, to assess the performance of all methods applied to the TCGA LIHC data. Using the 96 candidate genes selected through the double filtering procedure, we developed prediction models for each method. The average classification results for each method across the 10 random splits are shown in Table 8. The results show that the “pclogit” package with the ridge penalty performed best in the majority prediction method, while the elastic net penalty also performed well in both prediction methods. Since ridge does not allow for further variable selection, we used the well-performing elastic net method for the subsequent analysis. Then, we apply the bootstrap method to randomly sample 50% of the entire dataset (using strata as the sampling unit), perform variable selection using the “pclogit” with the elastic net penalty, and repeat this process 100 times. The selection frequency probability for each gene is calculated to define the importance of the gene, and a threshold of .5 is used, meaning that a gene must have a selection probability greater than .5 to be included in our final candidate model. Next, we apply the “pclogit” package with the elastic net penalty to the entire dataset and determine the optimal tuning parameters

Results (Average of Model Performance Measures) of Different Methods on the TCGA LIHC Data Over 10 Random Splits of 7:3 Training/Test Sets After Applying Double Filtering Procedure.

In the TCGA LIHC Dataset, Perform 100 Bootstrap Iterations to Calculate Gene Selection Probabilities, Retain Genes With Probabilities > .5, and Apply Elastic Net Penalty From “pclogit” to Estimate the Parameters.

Genes identified by both regularized CLR and unmatched LASSO models.

Among these 10 important identified genes, several have been proven in the literature to be related to liver cancer development or cancer genesis mechanisms. Ouyang et al 33 demonstrated that the EBF2 gene is a DEG and is upregulated in LIHC. Nasiri-Aghdam et al 34 found that CELF family members are linked to cancer proliferation and invasion, being potential tumor suppressors or oncogenes. The review highlights CELF protein regulation mechanisms and their role in cancer physiology. PAEP is one of the key genes identified in the study, 35 linked to angiogenesis and ferroptosis in hepatocellular carcinoma (HCC). It may serve as a potential biomarker for prognosis and immunotherapy response in HCC patients. Zhang et al 36 demonstrated that CSMD1 is linked to the stage and grade of alcohol-related HCC (HCC-A) and is associated with poorer disease-free survival. It may serve as a potential biomarker for predicting survival in HCC-A patients and contribute to disease progression.

Real Data Application: TCGA THCA Data

Our analysis focuses on a subset of the TCGA THCA dataset, which includes data from 59 patients and 20 501 gene expression markers, with both normal and tumor tissue samples provided for each patient. Since the number of genes likely associated with cancer is expected to be limited, we adopted a double filtering procedure, 32 where genes are first filtered based on FDR results, and then further filtered based on the magnitude of log2(FC) changes. Using this double filtering process, we considered genes to be significant if they met both FDR < 0.05 and |log2FC| > 3. This approach identified 103 candidate genes for further analysis in the THCA dataset. However, if only 1 of the 2 conditions is required to be satisfied, the number of selected genes increases to 13 188, which is too large and unsuitable for subsequent regularization analysis.

We conducted 10 random splits, partitioning the entire dataset into a 7:3 training/test ratio, to assess the performance of all methods applied to the TCGA THCA data. Using the 103 candidate genes selected through the double filtering procedure, we developed prediction models for each method. The average classification results for each method across the 10 random splits are shown in Table 10. The results show that the “pclogit” package with the elastic net penalty performed best in both prediction methods. Then, we apply the bootstrap method to randomly sample 50% of the entire dataset, perform variable selection using “pclogit” with the elastic net penalty, and repeat this process 100 times. The selection frequency probability for each gene is calculated to define the importance of the gene, and a threshold of .5 is used, meaning that a gene must have a selection probability greater than .5 to be included in our final candidate model. Next, we apply the “pclogit” package with the elastic net penalty to the entire dataset and determine the optimal tuning parameters

Results (Average of Model Performance Measures) of Different Methods on the TCGA THCA Data Over 10 Random Splits of 7:3 Training/Test Sets After Applying Double Filtering Procedure.

In the TCGA THCA Dataset, Perform 100 Bootstrap Iterations to Calculate Gene Selection Probabilities, Retain Genes with Probabilities >.5, and Apply Elastic Net Penalty From “pclogit” to Estimate the Parameters.

Genes identified by both regularized CLR and unmatched LASSO models.

Among these 20 important identified genes, several have been proven in the literature to be related to thyroid carcinoma development or cancer genesis mechanisms. For example, Liu et al 37 identified PNPLA5 as a potential driver gene in Chinese papillary thyroid carcinoma (PTC), alongside the BRAF mutation. Comparisons with the TCGA-THCA dataset revealed genetic differences, highlighting the unique profile of Chinese PTC patients and the need for ethnic-specific approaches in diagnosis and therapy. Liu et al. 38 explored the role of HTGPCRs, a group of 13 gene families associated with cancer progression. The study found that the expression of HTGPCRs is related to cancer stemness, immune response, and the tumor microenvironment, and suggested that HTR5A may have a cancer-suppressing function. Specifically, we find in Table 11 that the parameter estimate for the HTR5A gene is negative, indicating that the higher the expression of the biomarker, the lower the probability of THCA events occurring. Therefore, our findings align with those of Liu et al. 38

Real Data Application: TCGA LUAD Data

Our analysis focuses on a subset of the TCGA LUAD dataset, which includes data from 58 patients and 20 501 gene expression markers, with both normal and tumor tissue samples provided for each patient. Since the number of genes likely associated with cancer is expected to be limited, we adopted a double filtering procedure, 32 where genes are first filtered based on FDR results, and then further filtered based on the magnitude of log2(FC) changes. Using this double filtering process, we considered genes to be significant if they met both FDR < 0.05 and |log2FC| > 4. This approach identified 91 candidate genes for further analysis in the LUAD dataset. However, if only 1 of the 2 conditions are required to be satisfied, the number of selected genes increases to 13 403, which is too large and unsuitable for subsequent regularization analysis.

We conducted 10 random splits, partitioning the entire dataset into a 7:3 training/test ratio, to assess the performance of all methods applied to the TCGA LUAD data. Using the 91 candidate genes selected through the double filtering procedure, we developed prediction models for each method. The average classification results for each method across the 10 random splits are shown in Table 12. The results show that the “penalizedclr” package with the ridge penalty performed best in both prediction methods, while the “clogitL1” package with the elastic net penalty also performed well. Since ridge does not allow for further variable selection, we used the well-performing elastic net method for the subsequent analysis. Then, we apply the bootstrap method to randomly sample 50% of the entire dataset, perform variable selection using “clogitL1” with the elastic net penalty, and repeat this process 100 times. The selection frequency probability for each gene is calculated to define the importance of the gene, and a threshold of .9 is used, meaning that a gene must have a selection probability greater than .9 to be included in our final candidate model. Next, we apply the “clogitL1” package with the elastic net penalty to the entire dataset and determine the optimal tuning parameters

Results (Average of Model Performance Measures) of Different Methods on the TCGA LUAD Data Over 10 Random Splits of 7:3 Training/Test Sets After Applying Double Filtering Procedure.

In the TCGA LUAD Dataset, Perform 100 Bootstrap Iterations to Calculate Gene Selection Probabilities, Retain Genes With Probabilities > .9, and Apply Elastic Net From “clogitL1” to Estimate the Parameters.

Genes identified by both regularized CLR and unmatched LASSO models.

Among these 28 important identified genes, many have been shown in the literature to be associated with lung cancer development or the mechanisms of cancer genesis. Here, we list a few of them. For example, Xu et al 39 analyzed the transcriptional expression of several genes in lung squamous cell carcinoma (LUSC) and LUAD. They pointed out that the 2 genes, GNGT1 and KRTAP4-1, identified by our method, show significant upregulation in both types of lung cancer. Fan et al 40 also noted that GNGT1 is overexpressed in LUAD patients and is associated with poor prognosis. The expression of GNGT1 is significantly correlated with gene alterations and a low methylation status of its promoter. High GNGT1 expression in LUAD patients is linked to advanced lymph node metastasis and more immune cell infiltration.

Li et al 41 indicated that PDX1 is an important gene for predicting the prognosis of LUAD patients. It was included in a model that helps doctors predict survival and make more informed treatment decisions for LUAD patients. Chen et al 42 found that FGF19 is overexpressed in non-small cell lung cancer (NSCLC) and its high levels are linked to poorer overall survival. FGF19 could serve as a potential biomarker for predicting worse outcomes in NSCLC, especially LUAD. Wu et al 43 found that INSL4 is one of the key genes linked to LUAD prognosis. It was included in a predictive signature that helps stratify patients into high- and low-risk groups, correlating with survival outcomes and immune cell infiltration in the tumor microenvironment. Szmajda-Krygier et al 44 examined the role of the AP-2 transcription factor family (TFAP2A to TFAP2E) in LUAD. The study highlighted the differential expression of these factors in LUAD and LUSC, their impact on survival, and their interactions with immune cells. It emphasized the significance of TFAP2A, TFAP2C, and TFAP2D in LUAD. GDF2 (also known as BMP9), a member of the TGF-β superfamily, plays a role in LUAD by promoting vascular normalization and delaying tumor growth. Overexpression of GDF2 in LUAD tumors leads to reduced hypoxia and increased immune cell infiltration in the tumor microenvironment. 45 Specifically, we find in Table 13 that the parameter estimate for the GDF2 gene is negative, indicating that the higher the expression of the biomarker, the lower the probability of LUAD events occurring. Therefore, our findings align with those of Viallard et al. 45

Note that Tables 8, 10 and 12 summarize the predictive performance of our models on the testing set. All reported metrics were calculated using the testing set, providing an unbiased assessment of model performance. We also clarify that the focus of this study is on diagnostic genes distinguishing tumor from normal tissues. Although some genes may overlap with prognostic or predictive roles, our primary goal is the identification of diagnostic biomarkers.

Comparison With Conventional Methods Ignoring Matched Design

To further validate the practical value of the method proposed in this study, we conduct additional real-data analyses to directly compare our approach with traditional methods that ignore the MCC structure. Specifically, under a fixed analysis pipeline, we apply the conventional glmnet Lasso method. Among the glmnet Lasso, Ridge, and Elastic Net approaches, lasso demonstrates the best predictive performance across 3 cancer datasets.

In the LIHC dataset, 6 genes are identified with selection probabilities greater than .5: EBF2, B4GALNT2, PAEP, COX7B2, ANKFN1, and SLCO1C1. In the THCA dataset, 5 genes exceed the .5 selection probability threshold: DMBX1, PIK3C3G, CXorf64, IBSP, and PNPLA5. In the LUAD dataset, only 2 genes—ONECUT1 and C11orf86—have selection probabilities greater than .9.

We find that the gene sets identified by the conventional method are subsets of those identified by our proposed method. This finding suggests that ignoring the MCC structure may lead to the omission of biologically informative genes. Therefore, these results further support the practical utility and potential of our proposed method in high-dimensional matched-pair genomic studies.

Genes marked with an asterisk (*) in Tables 9, 11 and 13 were consistently identified by both the paired CLR and the unmatched LASSO analyses. These overlapping genes represent candidates that are more stably selected across different analytical approaches, suggesting higher reliability as potential cancer biomarkers. Specifically, in LIHC, 5 genes were consistently selected: EBF2, B4GALNT2, PAEP, COX7B2, SLCO1C1; in THCA, 5 genes overlapped: DXBX1, PIK3C2G, CXorf64, IBSP, PNPLA5; and in LUAD, 2 genes were consistently identified: ONECUT1, C11orf86. The consistent identification of these genes highlights their potential biological relevance and indicates that they are particularly worthy of further functional validation and mechanistic studies.

Sensitivity Analysis Using Variance-Based Pre-Screening

To address concerns regarding “using data twice,” which may introduce bias during feature selection and model evaluation, we conducted a sensitivity analysis using an unsupervised gene filtering strategy. Specifically, for each cancer type, we used only the 1:1 MCC pairs and calculated the variance of each gene across these matched samples. This variance-based pre-screening approach does not involve any case-control label information, thereby avoiding potential circularity in feature selection.

We then selected the top genes with the highest variance values, with the number of selected genes matching that obtained from the double-filtering procedure (96 for LIHC, 103 for THCA, and 91 for LUAD). These gene subsets were subsequently analyzed with the same 4 regularized CLR models (“clogitL1,” “pclogit,” “clogitLasso,” and “penalizedclr”) within the same MCC design framework.

The results, summarized in Supplemental Tables S14–S16, show that the predictive performance of the regularized CLR models varied across cancer types. In THCA, the double-filtering approach yielded better predictive performance, whereas in LUAD, the variance-based filtering performed better. For LIHC, both approaches produced comparable results. Overall, these findings indicate that our main conclusions remain robust under different gene pre-screening strategies. We also suggest that future studies incorporate unsupervised pre-screening sensitivity analyses to more comprehensively evaluate the stability and reliability of model performance.

Comparison With Standard Logistic Regression Using Unmatched Data

To evaluate the practical applicability of CLR compared with standard logistic regression, particularly when matched samples are limited, we conducted an additional experiment using unmatched tumor and normal samples from the TCGA dataset. Specifically, we excluded patients who were included in the 1:1 MCC analysis and retained all remaining tumor samples along with all available normal tissue samples, thereby constructing larger unpaired datasets for each cancer type.

On these unmatched datasets, we applied standard regularized logistic regression models with 3 types of penalties: Lasso, Ridge, and Elastic Net. To address the high dimensionality of transcriptomic data, we employed a variance-based feature filtering strategy, selecting the most variable genes for each cancer type (96 for LIHC, 103 for THCA, and 91 for LUAD), consistent with the number of genes used in our CLR analyses. Each model was evaluated over 10 repetitions of a 70/30 train-test split, and performance was assessed using classification accuracy and AUC.

The results are presented in Supplemental Tables S17–S19. Overall, we found that regularized CLR, which accounts for the matched structure, achieved superior predictive performance under the majority voting method, whereas standard regularized logistic regression applied to unmatched data performed better under the mean method, benefiting from the larger sample size. These findings indicate that CLR is advantageous when matched structures are present, while standard logistic regression can leverage larger unpaired datasets. This provides practical guidance for cancer researchers on when and how to apply conditional methods depending on the availability of matched samples.

Discussion

Discrepancy With Previous Network-Regularized Models

In contrast, our implementation of network-based elastic net was based on the “pclogit” R package, which uses an L1 penalty combined with a network smoothness term, rather than minimax concave penalty (MCP). It is worth noting that elastic net uses a combination of L1 and L2 penalties, and the network penalty also accounts for correlation among predictors. However, MCP is known to be less biased and better suited for feature selection in high-dimensional settings compared to the L1 penalty. 46 This difference in penalty choice likely explains why Ren et al 47 reported greater improvement over elastic net, while our L1-based network model did not outperform standard elastic net.

Moreover, we suspect that the limited performance gain observed in our results may also be attributed to 2 additional factors. First, the network structure employed in our analysis was constructed based on pairwise correlations between features, using a thresholding approach to define adjacency. While computationally straightforward, such a method may not adequately capture the true functional or biological relationships among genes, thereby limiting the effectiveness of incorporating network information into the model. Second, the cross-validation procedure used for tuning hyperparameters tends to favor sparse solutions driven by strong marginal effects. This selection bias may underutilize the contribution of the network-based penalty, especially when the added structure does not align well with the dominant individual signals in the data.

Finally, we acknowledge that execution time is a practical concern, particularly when exploring large lambda grids across cross-validation folds. While we chose not to pursue the network-based model further for computational efficiency, we agree that methodological rigor should not be compromised solely for this reason. Thus, we now cite and briefly discuss the work of Ren et al 47 to provide a broader context for readers interested in alternative network-regularized models.

The Significance and Limitations of Using TCGA Data

Although TCGA consists of samples from patients with already diagnosed cancers, the models developed based on this dataset are not intended to replace initial clinical diagnosis. Instead, they serve to complement traditional diagnostic approaches. Conventional clinical diagnosis relies heavily on imaging and histopathological evaluation, which can be limited by resolution, inter-observer variability, and difficulties in early-stage detection. 3 In contrast, TCGA provides comprehensive molecular and gene expression profiles that reveal tumor heterogeneity at the molecular level, offering a rich foundation for developing auxiliary diagnostic models.4,5 Such models can facilitate more refined cancer subtyping, predict drug response and patient prognosis, and ultimately assist clinicians in formulating personalized treatment strategies. With advancements in high-throughput technologies and non-invasive sampling methods such as liquid biopsies, gene-based diagnostic tools that support accurate single-sample prediction have strong potential to be integrated into clinical workflows and become an essential component of precision oncology. 6

From Tissue Samples to Non-Invasive Diagnosis: Feasibility of Applying the Method to Liquid Biopsy

Although this study was based on RNA expression data from tissue samples in TCGA and developed a regularized conditional logistic regression model to select stable and predictive cancer-related genes, the approach also holds potential for application in liquid biopsy. In recent years, liquid biopsy technologies have rapidly advanced, with circulating tumor RNA and exosomal RNA in blood providing tumor-derived gene expression signals. These serve as important non-invasive tools for cancer diagnosis and monitoring. Studies have indicated that circulating RNAs dynamically reflect tumor biological characteristics and have been utilized in the development of clinical biomarkers. 48 Therefore, applying our analytical framework to blood samples could aid in developing more accurate and minimally invasive diagnostic tools, advancing the implementation of precision medicine.

Moreover, this study used bootstrap resampling to estimate gene selection probabilities, enhancing the stability assessment of biomarkers, which is particularly suitable for the low-signal, high-noise environment characteristic of liquid biopsy samples. This method helps reduce false positive rates and provides a valuable basis for developing multi-gene panel biomarkers in future liquid biopsy applications. Overall, the model design and marker selection strategy presented here have practical value in promoting the development of non-invasive early cancer diagnostic tools, aligning with the demand for high accuracy and personalized diagnostics in precision medicine.

Large Datasets Integrations

Large Datasets Integrations. Several public human databases, such as the Gene Expression Omnibus (GEO) and the National Cancer Database (NCDB), are significant for assessing the reproducibility of our findings. We believe that conducting a meta-analysis could help discover and validate biomarkers, 49 and we plan to explore this area further in future research. Additionally, we have conducted simulation studies to perform one-to-many MCC data analysis, demonstrating that the analytical workflow we proposed is feasible. In the future, we plan to collect real one-to-many MCC data for meaningful analysis.

Although this study has not yet performed an external validation using independent datasets, analyses were conducted across 3 distinct cancer types in TCGA. These cancers represent different organ systems and biological characteristics, and are known to exhibit substantial gene expression differences. Therefore, the consistent performance of our workflow across these cancer types provides preliminary evidence of its generalizability. However, it is worth noting that paired case-control data comparable to TCGA are not readily available in other large public databases such as GEO or NCDB, which limits the possibility of direct external validation at this stage.

Revisiting Fold-Change Thresholds: Insights From TREAT

In this study, we applied a relatively stringent fold change threshold, determined empirically by considering the sample size and the advantages of regularized model selection. This double filtering approach allowed us to focus on approximately 100 variables with both statistical and biological relevance. It is worth noting that the choice of a fold-change threshold is not universal but rather context-dependent.

The method TREAT 50 provides a formal statistical framework for testing whether observed changes are greater than a specified biologically meaningful threshold, instead of testing against zero change. This approach emphasizes that fold-change thresholds should ideally be defined with respect to biological significance and experimental variability, rather than being arbitrarily chosen. In future studies, integrating the TREAT framework or adaptive thresholding methods could help refine differential expression criteria, balancing sensitivity and biological interpretability.

Limitations Regarding Clinical Covariates

One limitation of our study is that clinical variables such as age, sex, and tumor stage were not explicitly included in the analysis. Our workflow utilized TCGA 1:1 paired case–control data, where everyone contributed both tumor and matched normal tissue samples. This pairing inherently controls for inter-individual variability, reducing confounding effects due to baseline differences. Nevertheless, we acknowledge that other potential clinical confounders could influence gene expression and biomarker selection, and future studies integrating clinical covariates could further refine the predictive models.

Feature Pre-screening Prior to Penalized Regression

Before performing penalized CLR, we applied a preliminary feature filtering step based on differential expression analysis. Although penalized regression methods such as LASSO inherently perform variable selection and do not theoretically require pre-screening, we found that direct computation using all 20 501 genes was only feasible for the LASSO model on a standard workstation (Intel Core i7 CPU, 64 GB RAM). In contrast, several commonly used R packages for penalized CLR (“clogitL1,” “pclogit,” and “penalizedclr”) failed to complete computation under ridge and elastic-net penalties due to memory limitations and convergence issues.

To reduce dimensionality and alleviate computational burden, a differential expression–based pre-screening procedure was therefore applied before model fitting. This approach is a common and practical strategy in high-dimensional omics analyses and has been shown to improve model stability, interpretability, and statistical efficiency. While there is no strict theoretical upper limit on the number of predictors that can be included in a LASSO analysis, the actual computational feasibility depends strongly on data dimensionality, model structure, and available computing resources. In ultra–high-dimensional omics settings, performing an initial feature pre-screening step before penalized regression is generally necessary and has been recommended by previous methodological studies.17,20

Conclusion

This study systematically explores the application of high-dimensional transcriptomic data in cancer research through a regularized CLR method, emphasizing the importance of MCC designs in improving statistical efficiency and reducing confounding factors. Through both simulation and real data analysis, it is demonstrated that matched designs effectively improve the accuracy of model predictions and help more accurately identify cancer-related genes. The common issue of the “curse of dimensionality” in high-dimensional data analysis can also be effectively addressed through regularization methods, preventing overfitting and improving model stability. By comparing the performance of different R packages (such as “clogitL1”, “pclogit”, “clogitLasso” and “penalizedclr”) in penalized CLR, the study finds that the “pclogit” and “penalizedclr” packages perform best in model prediction, while “clogitL1” has the best execution time, helping researchers choose the most suitable data analysis tools based on specific needs. Additionally, combining gene network information with regularized CLR helps improve the accuracy of gene selection, thereby enhancing cancer prediction models. However, the computational time required for this method remains a challenge.

Finally, by applying the proposed analysis workflow to three 1:1 MCC cancer datasets from TCGA, we successfully identified important tumor-suppressing and oncogenic genes related to cancer development. Some of the statistically significant genes are supported by wet-lab studies, indicating that our method can accurately identify cancer-related genes and is consistent with previous research findings. These results enhance the credibility and validity of our study and provide a valuable resource for further research in cancer biology and precision medicine. It should be noted that the current workflow does not predict disease subtypes or clinical treatment responses, and its contribution lies primarily in the identification of cancer-related genes for research purposes.

Furthermore, we perform an empirical comparison to evaluate the impact of using an MCC design on cancer biomarker identification. We find that without precise matching, several well-established cancer-related genes fail to be identified or show substantially reduced statistical significance. This finding indicates that neglecting potential confounding factors can lead to misleading results and omission of important biomarkers. Therefore, an analysis framework based on MCC design not only improves statistical efficiency but also more accurately reflects gene-disease associations. Building on this foundation, our study investigates the performance and applicability of regularized CLR methods within such matched designs. Finally, to facilitate understanding of the mathematical formulations presented in this work, all symbols and their definitions are summarized in Supplemental Table S.20 (Supplemental Materials).

Supplemental Material

sj-docx-1-cix-10.1177_11769351251404255 – Supplemental material for Robust Cancer Biomarker Identification From Matched Transcriptomic Data Via Bootstrapped Regularized Conditional Logistic Regression

Supplemental material, sj-docx-1-cix-10.1177_11769351251404255 for Robust Cancer Biomarker Identification From Matched Transcriptomic Data Via Bootstrapped Regularized Conditional Logistic Regression by Jie-Huei Wang, Zih-Han Wu, Hui-Chen Lu and Tzung-Ying Guo in Cancer Informatics

Footnotes

Acknowledgements

Abbreviations

AUC: Area under curve; BH: Benjamini-Hochberg; CLR: Conditional logistic regression; DEGs: Differentially expressed genes ; FC: Fold change; FDR: False discovery rate; GEO: Gene expression omnibus; HCC: Hepatocellular carcinoma; HCC-A: alcohol-related HCC; KS: Kolmogorov–Smirnov test; Lasso: Least absolute shrinkage and selection operator; LIHC: Liver hepatocellular carcinoma; LOSOCV: Leave-one-stratum-out cross-validation; LUAD: Lung adenocarcinoma; LIUSC: Lung squamous cell carcinoma; MCC: Matched case-control; MCP: minimax concave penalty; MLEs: Maximum likelihood estimates; NCDB: National Cancer Database; NSCLC: Non-small cell lung cancer; PTC: Papillary thyroid carcinoma; RMSE: Root mean squared error; STROBE: Strengthening the reporting of observational studies in epidemiology; TCGA: The Cancer Genome Atlas; THCA: Thyroid carcinoma; UCSC: University of California, Santa Cruz.

Ethical Consideration

All data used in this research were sourced from publicly available and freely accessible repositories. Therefore, ethical approval was unnecessary for this study.

Consent to Participate

The study described in this manuscript did not include human or animal participants.

Consent for Publication

Not applicable.

Author Contributions

JH conceived and designed the experiments. JH and ZH collected and organized the analysis data. JH, ZH, HC and TY analyzed the data. JH wrote the first draft of the manuscript. JH made critical revisions and approved final version. All authors agreed with manuscript results and conclusions. All authors jointly developed the structure and arguments for the paper. All authors reviewed and approved of the final manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the grant NSTC 112-2118-M-194-003-MY2 and NSTC 114-2118-M-194-003 from the National Science and Technology Council of Republic of China (Taiwan). The funding body did not play any role in the design of the study and collection, analysis, and interpretation of data and in writing the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The study utilized TCGA transcriptomic data for LIHC, THCA, and LUAD, which were organized as 1:1 matched case-control pairs for the analyses. The data were obtained from the TCGA Hub repository (https://tcga.xenahubs.net), with the primary source being the TCGA website (![]() ). The R scripts used for both simulation and real data analyses are available from the corresponding author upon reasonable request.

). The R scripts used for both simulation and real data analyses are available from the corresponding author upon reasonable request.

Clinical Trial Number

Not applicable.

Declaration of AI and AI-Assisted Technologies in the Writing Process

During the preparation of this manuscript, the authors used ChatGPT solely for spelling and grammar checks. The selection of the research topic, the design of statistical methods, programing, simulations, and data analysis were all conducted independently by the authors and are entirely original. Any suggestions provided by the AI tool were carefully reviewed and, where appropriate, incorporated by the authors, who take full responsibility for the final submitted content.

Supplemental Material

Supplemental material for this article is available online.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.