Abstract

The complexity of cancer has long challenged the medical community, driving the need for improved early detection and treatment. Artificial intelligence (AI) has profoundly impacted oncology research in recent decades, resulting in innovative diagnostic and therapeutic approaches. This review synthesizes the critical applications of AI in oncology, focusing on 4 key areas: medical imaging, digital pathology, robotic surgery, and drug discovery. We highlight the role of AI in cancer diagnosis and treatment by reviewing key studies and machine learning methods, and we address the field’s current technical and ethical challenges. AI models have significantly enhanced the accuracy of medical imaging by efficiently detecting lesions and disease sites, leading to earlier and more precise diagnoses. In digital pathology, AI tools aid in risk prediction and facilitate the examination of extensive tissue sample sets for patterns and markers, simplifying the pathologists’ tasks. AI-powered robotic surgery provides different levels of automation, leading to precise and minimally invasive procedures that not only improve surgical outcomes but also lower readmission rates, hospital stays, and infection risks. Moreover, AI expedites the process of discovering cancer therapies by identifying potential lead compounds, predicting drug reactions, and repurposing current medications. In the past decade, several AI-developed drugs have successfully entered clinical trials. These significant advancements underscore the expanding role of AI in shaping the future of cancer diagnosis and treatment. Although standardization, transparency, and equitable implementation must be addressed, AI brings hope for more personalized and effective therapies.

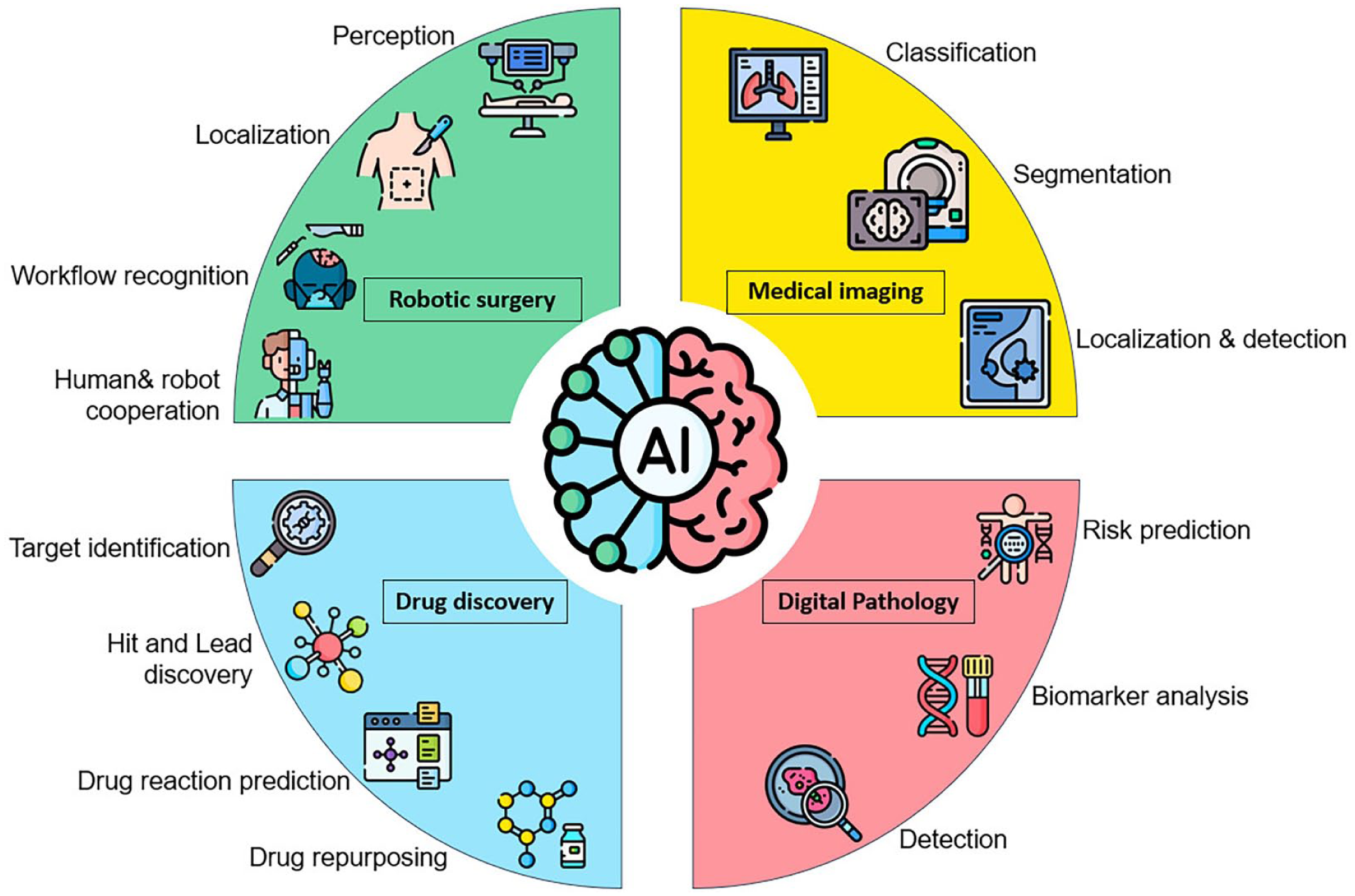

Graphical Abstract

This review examines the role of AI in cancer care, focusing on its applications in medical imaging, digital pathology, robotic surgery, and drug discovery.

Introduction to AI in the Field of Medicine

The integration of artificial intelligence (AI) in medicine marks a significant shift toward more accurate, personalized, and efficient healthcare practices, especially in cancer care. 1 AI refers to machines that perform tasks traditionally requiring human intelligence, such as understanding language, recognizing patterns, solving problems, learning from data, and improving performance over time. Key AI techniques include machine learning (ML) and deep learning (DL). ML uses algorithms to identify patterns and make predictions, while DL, a subset of ML, employs multi-layered neural networks inspired by the human brain, such as convolutional neural networks (CNNs). Other essential ML techniques include support vector machines (SVMs), decision trees, and K-means clustering algorithms. 2

In healthcare, AI is applied in diagnostic algorithms, treatment recommendation systems, patient monitoring, and care management tools. 3 AI can process and analyze vast amounts of healthcare data more efficiently than humans, leading to improved diagnostic accuracy, therapeutic interventions, and patient outcomes. 4

AI-Powered Precision Medicine: Advancing Personalized Treatment

Precision medicine stratifies patients in clinical trials according to their personal data. 5 The objective is to improve efficacy and safety outcomes, ultimately increasing the likelihood of clinical success and drug approval. 6 This approach acknowledges that individuals have unique genetic, molecular, and clinical characteristics that influence their treatment responses. By tailoring therapies to these individual differences, precision medicine aims to improve treatment outcomes and minimize side effects. 7

AI is vital in precision medicine, as seen in the genomic profiling of tumors that assists in making targeted therapy decisions for patients with breast or lung cancer. 8 AI-driven tools can also facilitate the rapid and accurate interpretation of genomic data, providing real-time recommendations for personalized treatments. 9

AI has been instrumental in genetic analysis by identifying transcription start sites, modeling regulatory elements, and accurately predicting gene expression from genotype data. These developments are crucial in understanding the relationship between genomic variations, disease presentation, treatment efficacy, and prognosis. 10,11 In medulloblastoma, AI analysis of numerous exomes has enabled the administration of precise and optimal treatments for pediatric patients. 12 AI-driven precision medicine holds the potential to change cancer care by offering more accurate diagnoses, predicting disease risks before symptoms manifest, and designing tailored treatment plans that prioritize safety and efficiency. 13 Integrating AI into clinical practice is expected to become more widespread as research advances, leading to even more significant advancements in cancer care. 14 Figure 1 illustrates the process of precision oncology. 15

The precision oncology process, in which AI plays a crucial role at each stage. 16 First, AI analyzes patient data, including molecular tests and medical images. 17 Second, AI algorithms scrutinize vast amounts of molecular data to identify genetic mutations and tumor biomarkers. 18 Third, AI stores personalized data and uncovers new insights from it. 19 Fourth, AI algorithms create predictive calculators to determine disease risk and recommend the most effective treatment targets and practices. 20 Finally, AI can access medical records to aid in monitoring patients. 21

The Historical Background of AI in Cancer Research

The exploration of AI in cancer research began in the 1970s, with early attempts at computer-aided diagnosis and the development of expert systems. These early AI programs primarily concentrated on diagnosing blood infections, which ultimately laid the groundwork for future medical applications. 22 Over the years, advancements in computational power, complex algorithms, and the availability of extensive biomedical datasets have propelled AI from basic pattern recognition to sophisticated ML and DL. 23 These models can identify subtle diagnostic signals in imaging, genomic, and clinical data, significantly advancing cancer diagnosis, prognosis, and treatment planning. 24

This study aims to review recent advancements and provide future directions for AI-driven oncology, focusing on 4 major fields: medical imaging, pathology, surgery, and drug discovery.

Methodology

Protocol Registration and PRISMA Adherence

This article comprehensively analyzes AI applications in oncology using a structured methodology incorporating PRISMA guidelines. This approach minimizes bias in literature selection and ensures rigorous identification of trends, gaps, and innovations in AI and oncology. To enhance transparency and reproducibility, this review has been prospectively registered on PROSPERO (CRD 42023384772). The methodology strictly followed the PRISMA 2020 checklist and its 2021 extension for search reporting, with detailed documentation of search strategies, study selection, and data synthesis.

Search Strategy

We searched 7 databases: Wiley, Taylor & Francis, Scopus, ScienceDirect, Sage, Google Scholar, and Springer. Additionally, citation tracking was performed in Google Scholar. The search covered January 2013 to March 2024 to capture AI advancements over the past decade. We combined the following keyword groups using Boolean operators:

AI/ML terms: “artificial intelligence,” “machine learning,” “deep learning,” “neural networks,” “predictive analytics”

Oncology terms: “oncology,” “cancer,” “tumor,” “malignancy,” “carcinoma”

Application terms: “diagnosis,” “prognosis,” “treatment planning,” “radiomics,” “genomics”
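The three keyword groups above can be assembled into a single Boolean query string. The sketch below is illustrative only: the source lists the terms but does not give the exact query syntax, so the layout (OR within a group, AND between groups) and the helper names `or_group` and `build_query` are assumptions, and individual databases may require different syntax.

```python
# Keyword groups copied from the search strategy.
# ASSUMPTION: terms are OR-ed within a group and groups are AND-ed together;
# the review does not state the exact query string that was submitted.
AI_TERMS = ["artificial intelligence", "machine learning", "deep learning",
            "neural networks", "predictive analytics"]
ONCOLOGY_TERMS = ["oncology", "cancer", "tumor", "malignancy", "carcinoma"]
APPLICATION_TERMS = ["diagnosis", "prognosis", "treatment planning",
                     "radiomics", "genomics"]


def or_group(terms):
    """Quote each term, join the terms with OR, and wrap them in parentheses."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(*groups):
    """Join one parenthesized OR-group per keyword family with AND."""
    return " AND ".join(or_group(group) for group in groups)


query = build_query(AI_TERMS, ONCOLOGY_TERMS, APPLICATION_TERMS)
print(query)
```

Keeping the groups as separate lists makes it straightforward to rerun the search with one family of terms changed while the others stay fixed.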

We expanded the search methodology to include gray literature and clinical trial registries, such as ClinicalTrials.gov and WHO ICTRP, following best review practices. We employed the PICO framework to refine search terms, focusing on population (cancer patients), intervention (AI applications), comparison (traditional methods), and outcomes (diagnostic accuracy and treatment efficacy).

Inclusion and Exclusion Criteria

We removed 85 duplicate and incomplete texts from an initial pool of 275 documents before screening, leaving 192 records for title and abstract screening. Inclusion criteria prioritized studies focused on AI applications in oncology, including diagnosis, treatment, and drug discovery. Only studies with full-text availability in English were considered. Exclusion criteria removed non-oncology studies (eg, AI in cardiology), non-peer-reviewed articles, conference abstracts, research lacking sufficient technical or clinical detail, and non-human studies. Duplicates were identified and removed using EndNote’s automated deduplication, followed by manual verification. Studies published before 2013 were excluded to focus on recent advances. This rigorous process ensured a relevant and high-quality dataset.

Study Screening and Selection

Two independent reviewers conducted title and abstract screening and full-text review using Rayyan’s AI-assisted platform. Disagreements were resolved by consensus. Inter-rater reliability was calculated using Cohen’s kappa coefficient (κ = 0.82), indicating strong agreement. After screening, 31 records were excluded for irrelevance at the title and abstract stage, leaving 185 full-text articles for eligibility assessment. After a full-text review, 14 additional studies were excluded for irrelevance or insufficient methodological detail, resulting in a final set of 171 studies included in this review. Figure 2 presents the PRISMA 2020 flow diagram illustrating the detailed study selection process.
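Cohen’s kappa corrects the raw agreement between two reviewers for the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e). The following is a minimal sketch of that calculation; the function name `cohens_kappa` and the include/exclude decisions are hypothetical illustrations, not the review’s screening data.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labeled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty item list")
    n = len(rater_a)
    # Observed agreement: fraction of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal label frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label for every item
    return (observed - expected) / (1 - expected)


# HYPOTHETICAL screening decisions for 10 records (not data from this review).
reviewer_1 = ["include"] * 4 + ["exclude"] * 6
reviewer_2 = ["include"] * 3 + ["exclude"] * 6 + ["include"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(f"kappa = {kappa:.3f}")
```

In this toy example the reviewers agree on 8 of 10 records, but because chance agreement is already 0.52, kappa is considerably lower than the raw 80% agreement.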

Overview of the review process. PRISMA 2020 flow diagram detailing the identification, screening, eligibility assessment, and inclusion of studies, with reasons for exclusions at each stage.

Data Extraction and Synthesis

We used a standardized template to extract data from the 171 included studies, focusing on the following areas:

AI methodologies (eg, model architectures, datasets, validation metrics such as AUC, sensitivity, and specificity)

Clinical applications (eg, diagnostic accuracy, treatment outcomes, workflow improvements)

Ethical considerations (eg, bias mitigation strategies, interpretability, regulatory compliance)

The extracted data were organized by theme to identify common patterns, such as the predominance of deep learning techniques in radiology, and to highlight areas that need improvement, such as the limited use of AI tools in actual clinical practice. To enhance transparency and reproducibility, key references and their insights are summarized in Table 1, and the framework of the review is outlined in Figure 3a.

Key References on the Impact of AI in Healthcare and Oncology.

(a) Conceptual roadmap outlining this review’s framework and thematic structure. (b) A visual map created with VOSviewer showing clusters of related research and trends, emphasizing connections between different fields and new areas in AI applications in oncology.

Quality Assessment

Each study was evaluated twice using a modified QUADAS-2 tool to assess the following aspects:

Technical domain: reproducibility, dataset diversity, external validation

Clinical domain: impact on patient outcomes, feasibility for adoption

Ethical domain: reporting of biases, data privacy measures, FDA/CE compliance

To acknowledge evolving methodologies in AI-oncology research, we retained low-quality studies (eg, small sample sizes or unreproducible methods), but flagged them in the analysis.

Network Analysis

The synthesis approach combined elements of systematic mapping and narrative synthesis. Each section brought together results from 20 to 30 key studies that were carefully examined for their role in linking different fields in AI and cancer. VOSviewer (version 1.6.2) was employed for bibliometric analysis and visualization of research trends, identifying clusters of related studies. Narrative synthesis was used to interpret and combine the findings in context, highlighting studies that show connections between different fields (Figure 3b).

Early Detection and Diagnosis

AI has substantially impacted the early detection and diagnosis of cancer, offering promising avenues for future research and patient care. 28 Timely cancer diagnosis is crucial, as survival rates are higher in the earlier stages when the cancer is smaller and has not spread throughout the body. 29 AI can analyze various patient assessments to provide more accurate information about survival prognosis and predict disease progression in cancer patients. Healthcare providers often use a predictive model known as the Breast Cancer Risk Assessment Tool (the Gail model) to estimate a woman’s risk of developing breast cancer. This model calculates the risk of developing breast cancer over the next 5 years as well as throughout her lifetime, up to the age of 90. 30

The Gail model takes into account 7 risk factors: age, age at the onset of menstruation, age at the birth of the first child (or whether the individual has not given birth), family history of breast cancer (in a mother, sister, or daughter), the number of past breast biopsies, the number of breast biopsies indicating atypical hyperplasia, and race/ethnicity. 31

Another example is a study by Koo et al that developed a novel model for individuals with metastatic or recurrent gastric cancer. This model incorporates 6 clinicopathological factors: high alkaline phosphatase level, poor Eastern Cooperative Oncology Group performance score, peritoneal metastasis, bone metastasis, high neutrophil-lymphocyte ratio, and low albumin level. 32

AI has made significant advancements in developing predictive models for disease progression, recurrence, and patient survival. These models draw on extensive clinical and molecular data, including a patient’s genetic makeup, disease characteristics, and anticipated response to therapy. 33 By incorporating this information, the models can pick up subtle diagnostic signals and provide more accurate risk assessments, thereby informing clinical decision-making and enhancing patient counseling. 34 Furthermore, predictive models can significantly impact treatment outcomes by facilitating personalized and precise treatment planning. 35 They assist oncologists in tailoring treatment regimens to individual patients, potentially resulting in improved treatment outcomes and reduced side effects. 36

AI-powered wearable devices and remote monitoring tools enhance patient care by continuously tracking vital signs and symptoms, enabling early intervention in case of adverse events or disease progression. 37

Medical Imaging

Medical imaging plays a crucial role in this process. 38 DL algorithms, particularly convolutional neural networks (CNNs), are highly accurate at identifying details in medical images such as mammograms, ultrasound images, radiographs, CT scans, PET scans, and MRI scans, which helps find tumors early. 39 In addition, transformer neural networks have recently begun to replace CNNs in image processing because of their improved reliability and efficiency in computer vision tasks. 40

AI analysis in medical imaging encompasses image classification, segmentation, detection, and localization. It offers details about the probability of cancer and identifies disease sites, such as lesions and metastatic tumors. Some AI models have demonstrated accuracy surpassing that of human experts. For instance, an AI model developed by McKinney et al has significantly reduced false positives and negatives in breast cancer predictions in the USA and the UK. This AI outperformed average radiologists, decreasing their workload by 80%. 41

In another study, a deep learning model trained on a large dataset achieved an accuracy of 82.9% in classifying images from digital single-operator cholangioscopy (DSOC), with a sensitivity of 83.5% and specificity of 82.4%. 42 Table 2 demonstrates other selected AI models in medical imaging.
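Accuracy, sensitivity, and specificity are all derived from the same four confusion-matrix counts. The sketch below shows the standard definitions; the function name `diagnostic_metrics` and the counts are hypothetical and are not taken from the DSOC study.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Standard diagnostic metrics from confusion-matrix counts.

    tp/fn: diseased cases classified correctly/incorrectly.
    tn/fp: non-diseased cases classified correctly/incorrectly.
    """
    total = tp + fp + tn + fn
    if total == 0 or (tp + fn) == 0 or (tn + fp) == 0:
        raise ValueError("need at least one diseased and one non-diseased case")
    return {
        "accuracy": (tp + tn) / total,    # all correct calls / all cases
        "sensitivity": tp / (tp + fn),    # true-positive rate
        "specificity": tn / (tn + fp),    # true-negative rate
    }


# HYPOTHETICAL counts for illustration only (not data from any cited study).
metrics = diagnostic_metrics(tp=80, fp=10, tn=90, fn=20)
for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```

Because accuracy depends on the proportion of diseased cases in the test set, sensitivity and specificity are usually reported alongside it, as in the study above.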

Selected AI Models in Medical Imaging From 2022 to 2024.

In 2024, Brancaccio et al assessed the effectiveness of AI in diagnosing skin cancer. Their review highlighted that while AI has been extensively used to analyze whole-body photographs for identifying changes in lesions, its integration into consumer-oriented smartphone applications and support for non-experts remain uncertain. Likewise, the role of AI in primary care is a topic of ongoing debate. General practitioners acknowledge the potential benefits of AI but emphasize the need for clear definitions concerning its specific applications. 54

Ha et al pointed out a significant drawback in using AI for analyzing mammograms, based on their study of more than 5700 women without symptoms who have dense breast tissue. The findings indicate that AI-enhanced mammography offers increased specificity, resulting in fewer false positives and a reduced need for follow-up imaging. However, combining mammography and supplemental ultrasound has proven to be a more effective strategy for detecting early-stage, node-negative cancers. Notably, ultrasound detected a significantly higher number of cancers, including cases that the AI system missed. These results suggest that for women with dense breasts, a combined screening approach utilizing both mammography and supplemental ultrasound may be more effective than relying solely on mammography augmented with AI. 55

Furthermore, AI models can become unstable when their inputs change slightly. It has been reported that minor perturbations, such as slight patient movements, can lead to significant errors in AI results and incorrect diagnoses. Because such perturbations are common during medical imaging, networks require frequent retraining for each subsampling combination, which makes the process impractical for a wide range of applications. 56

Innovations in Digital Pathology

Digital pathology revolutionizes cancer diagnostics by converting traditional histopathology, immunohistochemistry, and cytology slides into high-resolution digital images using whole-slide scanners. By leveraging AI tools on these images, we can automate time-consuming tasks traditionally performed by pathologists. This automation not only enhances efficiency but also enables quicker and more reliable diagnoses, allowing pathologists to focus more on high-level decision-making tasks. 57

In 2021, the FDA authorized the first AI-based software to detect prostate cancer (Paige Prostate). 58 The Paige Prostate AI digital diagnostic tool uses data from the digital slide archive at New York’s Memorial Sloan Kettering Cancer Center (MSKCC) to classify whole-slide images of prostate biopsies as either “Suspicious” or “Not Suspicious” for prostatic adenocarcinoma. 59

A critical aspect of pathology is biomarker detection, but traditional methods often face limitations in sensitivity, accuracy, and speed, especially when dealing with complex disease processes. 60

Niehues et al demonstrated that DL can effectively predict multiple biomarkers from histopathology images. 61 They evaluated 6 leading DL architectures and found that multiple instance learning (MIL) models consistently outperformed traditional approaches. However, while the predictions for MSI and BRAF mutations were clinically promising, those for KRAS, NRAS, and PIK3CA were not sufficiently reliable for clinical use. 62

AI in pathology enhances the precision of quantitative assessments and allows data to be placed in spatial context through spatial algorithms. 63 Adding spatial metrics to immunohistochemistry (IHC) methods can make biomarker detection more useful in a clinical setting. 64 For example, one study showed that adding spatial information through multiplex IHC and immunofluorescence (IF) predicted patient response to immune checkpoint inhibitors better than gene expression profiling (GEP) or conventional IHC alone. 65 However, some common factors can affect AI results, such as color variations and tissue preparation artifacts, including blur, tissue folds, and tissue tears. In addition, foreign objects not represented in the training set may be forcibly classified into predefined groups. 66 Human supervision is therefore crucial for ensuring the reliability of AI results. For example, Bodén et al showed that while digital image analysis reduced errors compared to visual methods, human-in-the-loop corrections were essential for addressing significant weaknesses, such as misclassification due to faint staining or incorrect separation of tumor and stroma regions. 67 Furthermore, many pathology AI models cannot be replicated because their data are unavailable, despite their satisfactory performance. As Wagner et al reported, around 70% of pathology AI studies fail to provide publicly accessible data for independent validation. 68

Overall, despite challenges, computational pathology can enhance oncology through multiple approaches. 69 Table 3 shows other selected models developed for different AI applications in pathology.

Selected Models Developed for Different AI Applications in Pathology From 2020 to 2024.

Robotic Surgery

AI has recently entered surgery, enhancing imaging, navigation, and robotics capabilities. This integration is poised to revolutionize surgical practices by linking real-time sensing with robotic control, facilitating varying degrees of autonomy in surgical procedures. 82

History of Robotic Surgery

Robotic systems in surgery have been a transformative leap in medical technology, with their use dating back over 20 years. 83 Integrating advanced hardware and AI has allowed these systems to assist in increasingly complex procedures, enhancing precision and improving patient outcomes. 84 Surgical robots like da Vinci represent a significant advance over traditional surgical methods. These systems give surgeons greater control, flexibility, and access to intricate anatomical structures through robotic arms equipped with specialized surgical instruments. 85 The da Vinci robot, in particular, achieved a historic milestone in 1997 as the first surgical robot to receive FDA approval and has been deployed in over 8000 facilities, facilitating more than 13 million procedures. 86 Further studies have optimized this robot. For example, Eslamian et al developed an algorithm that autonomously positions the camera on the da Vinci surgical robot to optimize surgical visualization and determine the correct zoom level. 87

Medtronic’s latest robotic system, launched in 2021, is called the HUGO™ RAS System. This modular system is an alternative to the current market leader in surgical robotics, the da Vinci system. 88 The HUGO™ RAS System comprises an “open” surgical console with a high-definition 3D passive display, a system tower, and 4 arm carts. Each robotic arm is independent and features 6 different joints for flexibility. 89 Moreover, the cart column allows the arms to be raised or lowered for vertical placement. 90 The arms attach to trocars, while specialized motors known as instrument drive units control the instruments. This system provides adaptable and versatile arm movements due to its multiple joints. 91 Figure 4 illustrates the historical development of surgical robots, showcasing their contribution to automated surgical tasks and intraoperative safety measures. 92-94

The timeline of surgical robots’ development and evolution.

AI Roles in Robotic Surgery

AI techniques for robotic and autonomous systems can be categorized into 4 groups: perception, localization and mapping, surgical workflow recognition, and human-robot interaction. 95

In perception, distinguishing between native tissue and non-native devices is a critical challenge in implementing AI. De Backer et al used deep learning networks to identify instruments during robot-assisted kidney transplantation, achieving a Dice score of 97.10%. 96 Ping et al developed a method for instrument detection in surgical endoscopy using a modified CNN and the You Only Look Once (YOLO) v3 algorithm, achieving a sensitivity of 93.02% for surgical instrument detection and 87.05% for tooltip detection. 97
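The Dice score used to evaluate instrument segmentation measures the overlap between a predicted mask and a reference mask: Dice = 2|A ∩ B| / (|A| + |B|). The sketch below applies this definition to flattened binary masks; the function name `dice_score` and the tiny masks are hypothetical, and returning 1.0 when both masks are empty is a convention assumed here rather than something stated in the cited study.

```python
def dice_score(pred, truth):
    """Dice coefficient for two equal-length sequences of 0/1 pixel labels."""
    if len(pred) != len(truth):
        raise ValueError("masks must have the same number of pixels")
    # Pixels marked as instrument in both the prediction and the reference.
    intersection = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 1)
    size_sum = sum(pred) + sum(truth)
    if size_sum == 0:
        return 1.0  # ASSUMED convention: two empty masks count as a perfect match
    return 2 * intersection / size_sum


# HYPOTHETICAL 6-pixel masks (1 = instrument, 0 = tissue/background).
predicted_mask = [1, 1, 1, 0, 0, 0]
reference_mask = [1, 1, 0, 1, 0, 0]
score = dice_score(predicted_mask, reference_mask)
print(f"Dice = {score:.3f}")
```

Unlike pixel accuracy, the Dice score ignores pixels that both masks label as background, which makes it more informative when instruments occupy a small part of the frame.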

To provide information on native tissue, Doria et al developed stiffness models using a wearable fabric to deliver haptic feedback during palpation of intrauterine leiomyomas in robotic surgery. 98

Accurate localization and mapping are essential in surgical oncology to ensure that tumors are wholly removed while preserving as much healthy tissue as possible. Marsden et al presented AI models using fluorescence imaging to generate an accurate heatmap of probable cancer location and guide cancer excision in oral and oropharyngeal surgery. 99 To identify safe dissection planes in tissues where they naturally separate, Kumazu et al utilized a sensitive deep-learning model that automatically segments loose connective tissue fibers during robot-assisted gastrectomy. 100

Studies have focused on higher levels of physical task automation in human-robot cooperation.

For instance, Saeidi et al used a CNN based on the U-Net architecture to locate the bowel and fully automate laparoscopic bowel anastomosis, rather than automating only a single suturing step. 101

To automatically remove the smoke produced by electrocautery devices during dissection and ligation, Wang et al proposed a convolutional neural network (CNN) combined with a Swin transformer. This approach rapidly generates smoke-free surgical footage. 102

Surgical workflow recognition (SWR) provides surgeons with real-time feedback during operations to help prevent errors and assist in surgical training. Kitaguchi et al developed an AI model using a dataset of 300 laparoscopic colorectal resection videos. The model accurately classified 9 different surgical phases, achieving an average accuracy of 81%, and demonstrated an accuracy of 83.2% in distinguishing between dissection and exposure actions. 103

Sahu et al took a different approach to SWR. They developed a recurrent neural network (RNN) that recognizes surgical phases by identifying the tools visible in videos. 104

To reduce the burden of the time-consuming labeling process, Shi et al proposed an active learning system for gallbladder surgery. 105

Patient Recovery Statistics

Robotic surgery has been associated with numerous patient benefits, including reduced chances of readmission, shorter hospital stays, smaller surgical scars, a lower risk of infections, and less pain during recovery. 106 A study published in JAMA found that robotic surgery reduced the chance of readmission by about half (52%) and the prevalence of blood clots roughly four-fold (77%). 107 Patients’ physical activity, stamina, and quality of life also improved significantly. 107 These findings demonstrate the benefits of robot-assisted surgery, indicating a safer surgery with fewer complications and a quicker return to daily activities. 108

While AI assistants have made significant strides in surgery, they can sometimes introduce time-consuming complexities. For instance, advancements in telesurgery offer promising capabilities; however, they often suffer from latency issues unless connected to high-speed, reliable networks. Latency exceeding 200 to 300 ms can compromise precision and safety, leading to increased fatigue for surgeons and a higher likelihood of errors. 109

Some studies have also reported low accuracies of AI in surgery. For example, in postoperative outcome prediction, an AI model predicted urinary continence after robot-assisted radical prostatectomy with a mean absolute error that ranged from 85.9 to 134.7 days. 110 A Random Forest model detected only 35.7% of adverse events intraoperatively, despite 99.6% specificity. 111 Similarly, an AI model trained for autonomous tissue retraction tasks had a 20% error rate in detecting tissue flaps, and performance dropped further due to variability in tissue positioning. 112

A systematic review conducted in 2021 analyzed 35 studies on the application of AI in robot-assisted surgery. It revealed that none of the models had been validated using datasets from multiple medical centers. Furthermore, models trained on small datasets, such as JIGSAWS and MICCAI EndoVis, demonstrated poor generalizability to real-world surgical situations and were limited in the tasks they could perform. 113 These findings show how AI may struggle with complex, dynamic, and unpredictable surgical environments.

Overall, the integration of AI in surgery has significantly impacted the field, offering enhanced precision and improved patient recovery statistics. 114 However, unlike screening and diagnosis, the implementation of AI in surgery is relatively new and requires further approval and standardization to ensure patient safety, optimal outcomes, and widespread adoption. 115

AI in Anti-Cancer Drug Discovery

In cancer treatment, the development of effective medications is a cornerstone. 116 However, developing new and successful anti-cancer drugs from scratch remains a strenuous, costly, and time-intensive endeavor that demands multidisciplinary collaboration spanning medicinal chemistry, computational chemistry, biology, pharmacology, and clinical research. 117 Bringing a new drug from inception to clinical application can take 10 to 17 years, with costs reaching almost $2.8 billion. Moreover, only about 10% of compounds tested in clinical trials reach the market. 118 Figure 5 illustrates the steps of drug discovery. 119,120

The typical steps of drug discovery.

Despite these challenges, AI has emerged as a powerful and promising technology for designing anti-cancer drugs more quickly, cost-effectively, and productively. 121 Table 4 lists anti-cancer drugs that were developed using AI and subsequently progressed to clinical studies.

Anti-Cancer Drugs Designed by AI That Have Reached Clinical Studies.

Many different algorithms are used in drug discovery. 128 Frequently used algorithms in drug design include support vector machines (SVMs), random forests (RFs), and convolutional neural networks (CNNs). 129 In drug screening, machine learning (ML), meta-heuristics, and similarity-based methods are mainly used. 130 In chemical synthesis, SVMs, RFs, artificial neural networks (ANNs), CNNs, recurrent neural networks (RNNs), and graph neural networks (GNNs) are common. 131 In drug repurposing, ML, deep learning (DL), network propagation, matrix completion, and matrix factorization are frequently used. 132 Figure 6 demonstrates the role of AI in drug discovery. 133-135

The role of AI in different steps of drug discovery.

Anti-Cancer Drug Target Identification

Identifying interactions between drugs and their targets is a crucial first step in the design of effective anti-cancer medications. 27 The strength of these interactions is commonly quantified through binding affinity measures such as the dissociation constant (Kd), inhibition constant (Ki), and half-maximal inhibitory concentration (IC50). 136 Because identifying these interactions experimentally is laborious and costly, computational prediction of drug-target interactions holds significant appeal. 137 Precise and efficient predictions can significantly bolster drug development efforts and expedite the discovery of promising hits or lead compounds. 138
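Affinity measures such as Kd, Ki, and IC50 are often put on a common logarithmic scale before being used as prediction targets, and an IC50 can be related to a Ki under specific assay assumptions. The sketch below is illustrative and not drawn from the cited studies: it shows the negative-log transform (for example Kd to pKd) and the Cheng-Prusoff relation, Ki = IC50 / (1 + [S]/Km), which holds for competitive inhibition. The function names and numeric values are hypothetical.

```python
import math


def to_p_value(constant_molar):
    """Negative log10 of an affinity constant given in mol/L (e.g. Kd -> pKd)."""
    if constant_molar <= 0:
        raise ValueError("affinity constants must be positive")
    return -math.log10(constant_molar)


def cheng_prusoff_ki(ic50, substrate_conc, km):
    """Estimate Ki from IC50 for a competitive inhibitor.

    ASSUMPTION: competitive inhibition; all arguments share the same
    concentration unit. Other inhibition mechanisms need a different relation.
    """
    if ic50 <= 0 or substrate_conc < 0 or km <= 0:
        raise ValueError("concentrations must be positive")
    return ic50 / (1 + substrate_conc / km)


# HYPOTHETICAL values: a 1 nM binder, and an assay run at [S] equal to Km.
pkd = to_p_value(1e-9)
ki = cheng_prusoff_ki(ic50=100e-9, substrate_conc=10e-6, km=10e-6)
print(f"pKd = {pkd:.1f}, Ki = {ki * 1e9:.0f} nM")
```

The second function illustrates why IC50 values from different assays are not directly comparable: the same inhibitor yields a higher IC50 when the assay uses a higher substrate concentration.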

AI can integrate multiple datasets to select the best targets. For instance, Tong et al integrated clinical data with gene expression profiles and protein-protein interaction networks to predict candidate drug targets in liver cancer. They achieved an AUC of 0.88 using a one-class support vector machine. 139 Madhukar et al developed a Bayesian-based machine learning method (BANDIT), which integrated 6 data types on more than 2000 molecules to achieve approximately 90% target prediction accuracy. 140
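The AUC reported for target-ranking models of this kind can be read as the probability that a randomly chosen true target is scored above a randomly chosen non-target. The following minimal Python sketch computes that quantity from scratch. The function name, labels, and scores are invented for illustration and are not taken from Tong et al or from any specific library.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via the pairwise (Mann-Whitney) formulation.

    labels: iterable of 0/1, where 1 marks a known target (positive).
    scores: model scores, higher meaning "more likely a target".
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half a win
    return wins / (len(positives) * len(negatives))


# Hypothetical candidate genes: 1 = validated target, 0 = non-target.
example_labels = [1, 1, 0, 1, 0, 0]
example_scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
example_auc = roc_auc(example_labels, example_scores)
```

In this toy example, 8 of the 9 positive-negative pairs are ordered correctly, so the AUC is 8/9. The pairwise form is quadratic in the number of samples and is chosen here for readability rather than speed.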

On the Way to Hit and Lead Compounds

Following the identification of therapeutic targets crucial for anti-cancer drug development, the next step involves screening for hit compounds, molecules possessing initial activity against specific targets or modes of action. 41 Computer-aided discovery of hit compounds primarily relies on high-throughput screening techniques. 141 This screening process can be carried out through 2 main approaches: structure-based screening and ligand-based screening. 142 Recent studies have demonstrated the efficacy of fragment-based screening methods in hit compound discovery. 143 While high-throughput screening has proven successful in numerous research and development endeavors, screening millions of compounds has encountered efficiency limitations and significant costs. 144 However, with the rise of GPU technology, enhanced computational capabilities, and swift advancements in AI, many virtual screening tools for hit compounds have emerged, enriching the arsenal of tools available for drug design. 145
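Ligand-based screening can be illustrated with a similarity search: compounds in a library are ranked by how closely their structural fingerprints resemble that of a known active molecule. The sketch below uses the Tanimoto coefficient on fingerprints represented as sets of "on" bit indices. The compound names, fingerprints, and the 0.5 threshold are hypothetical, and in practice fingerprints would be generated by a cheminformatics toolkit rather than written by hand.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity between two fingerprints given as sets of on-bits."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    if union == 0:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / union


def screen_library(query_fp, library, threshold=0.5):
    """Return (name, similarity) pairs at or above threshold, most similar first."""
    scored = [(name, tanimoto(query_fp, fp)) for name, fp in library.items()]
    hits = [(name, sim) for name, sim in scored if sim >= threshold]
    return sorted(hits, key=lambda item: item[1], reverse=True)


# Hypothetical fingerprints: each set holds indices of structural features present.
known_active = {1, 4, 7, 9, 12}
compound_library = {
    "cmpd_A": {1, 4, 7, 9, 13},   # shares 4 of 6 distinct bits with the query
    "cmpd_B": {2, 3, 5},          # no overlap with the query
    "cmpd_C": {1, 4, 7, 9, 12},   # identical to the query
}
ranked_hits = screen_library(known_active, compound_library)
```

Here `cmpd_C` and `cmpd_A` pass the threshold and `cmpd_B` is discarded. Structure-based screening, by contrast, would score each compound against the three-dimensional structure of the target rather than against a reference ligand.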

ML models, specifically DL models like CNNs, have been developed to predict binding affinity and even generate 3D structures of protein-ligand complexes. These models are trained on massive datasets of known protein-ligand interactions and structures.

In their 2022 study, Arul Murugan et al conducted a comparative analysis of various computational screening methods for assessing ligand-protein interactions. Their findings identified 4 standout models: DEELIG, BgN-score, PerSPECT-ML, and TNet-BP, which recorded impressive Pearson correlation coefficients (Rp) of .89, .86, .84, and .83, respectively. Notably, DEELIG emerged as the most effective model, utilizing a comprehensive array of features that characterize both the protein binding pocket and the properties of the ligand, enabling it to predict binding affinity accurately. 146
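The Pearson correlation coefficient (Rp) used to compare such models measures how closely predicted binding affinities track experimentally measured ones on a linear scale. A minimal Python sketch of the calculation follows. The affinity values are invented for illustration and are unrelated to the benchmarks in the cited study.

```python
import math


def pearson_r(predicted, measured):
    """Pearson correlation coefficient between predicted and measured values."""
    if len(predicted) != len(measured) or len(predicted) < 2:
        raise ValueError("need two equally long sequences with at least 2 values")

    mean_p = sum(predicted) / len(predicted)
    mean_m = sum(measured) / len(measured)

    # Sums of centered products and centered squares.
    cov = sum((p - mean_p) * (m - mean_m) for p, m in zip(predicted, measured))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_m = sum((m - mean_m) ** 2 for m in measured)
    if var_p == 0 or var_m == 0:
        raise ValueError("correlation is undefined when one sequence is constant")

    return cov / math.sqrt(var_p * var_m)


# Hypothetical binding affinities (for example, pKd) for four complexes.
predicted_affinity = [5.1, 6.0, 7.2, 8.1]
measured_affinity = [5.0, 6.3, 7.0, 8.4]
rp = pearson_r(predicted_affinity, measured_affinity)
```

A value of Rp near 1 indicates that the model ranks and scales affinities in close agreement with experiment. It does not by itself show that absolute errors are small, so it is commonly reported alongside an error measure.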

Hit compounds must be developed into lead compounds with refined and optimized properties suitable for preclinical testing. Consequently, their properties must be investigated thoroughly so that synthetic variants can be explored and optimized as far as possible. This investigation uses quantitative structure-activity relationship (QSAR) models, which predict the biological activity of molecules from their chemical structures. Additionally, quantitative structure-property relationship (QSPR) models are used to forecast physicochemical properties, such as solubility and permeability, which play a critical role in drug development. 147
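In its simplest form, a QSAR model is a regression from a numerical descriptor of chemical structure to a measured activity. The sketch below fits a one-descriptor linear model by ordinary least squares. It is a deliberately reduced illustration, not the method of any study cited here: the descriptor (a lipophilicity value), the activity scale, and all numbers are hypothetical, and practical QSAR models use many descriptors and nonlinear learners.

```python
def fit_linear_qsar(descriptor, activity):
    """Least-squares fit of activity = slope * descriptor + intercept."""
    n = len(descriptor)
    if n != len(activity) or n < 2:
        raise ValueError("need two equally long sequences with at least 2 values")

    mean_x = sum(descriptor) / n
    mean_y = sum(activity) / n
    sxx = sum((x - mean_x) ** 2 for x in descriptor)
    if sxx == 0:
        raise ValueError("descriptor values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(descriptor, activity))

    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict_activity(model, descriptor_value):
    """Predict activity for a new compound from its descriptor value."""
    slope, intercept = model
    return slope * descriptor_value + intercept


# Hypothetical training set: lipophilicity descriptor vs. measured activity.
train_descriptor = [1.0, 2.0, 3.0, 4.0]
train_activity = [5.2, 5.9, 7.1, 7.8]
qsar_model = fit_linear_qsar(train_descriptor, train_activity)
predicted = predict_activity(qsar_model, 2.5)
```

A QSPR model has the same shape, with a physicochemical property such as solubility in place of biological activity as the regression target.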

In 2023, Turon et al introduced ZairaChem, a tool designed to build quantitative structure-activity relationship (QSAR) and quantitative structure-property relationship (QSPR) models in low-resource settings. Using this fully automated, machine-learning-driven system, they implemented a virtual screening cascade for malaria and tuberculosis drug discovery, a significant advance in this area of research. 148

Prediction of Anti-Cancer Drug Reactions

The relationship between drug reactions and ADMET (absorption, distribution, metabolism, excretion, and toxicity) properties is crucial in determining drug sensitivity and toxicity. Understanding these interactions is essential for optimizing therapeutic efficacy and minimizing adverse effects. 149 Precise prediction of drug reactions can significantly improve the efficiency of clinical trials, anticipate adverse drug reactions, and improve patient outcomes. 150 The use of AI methods in drug design has grown with advances in AI technologies. 25 Past research has addressed various ADMET characteristics, such as Caco-2 permeability, carcinogenicity, blood-brain barrier permeability, and plasma protein binding. 151 For instance, to predict plasma protein binding, Mulpuru and Mishra developed a model of the fraction of unbound drug in human plasma using chemical fingerprints and a freely available AutoML framework. 152 In 2023, Swanson et al introduced ADMET-AI, described as the fastest web-based platform for ADMET predictions. The tool uses a graph neural network architecture, Chemprop-RDKit, and places its predictions in context by comparing input molecules with approved drugs. By streamlining this step of drug development and improving the accuracy of ADMET assessments, it sets a new standard in the field. 153

Anti-Cancer Drug Repurposing

Identifying novel applications for approved or well-established clinical drugs, a process commonly referred to as drug repositioning or repurposing, plays a crucial role in drug discovery. 154 At present, one of the primary approaches to drug repurposing involves predicting drug-target relationships. 155 For example, to enable personalized drug repositioning based on genomic data, Cheng et al introduced the Genome-wide Positioning System network (GPSnet) algorithm. 156 This technique uses patient-specific DNA and RNA sequencing data on specific targets to delineate disease modules for repurposing drugs. 157 Their research verified that ouabain, an established medication for arrhythmia and heart failure, effectively targets the HIF1α/LEO1-mediated cellular metabolic pathways in lung adenocarcinomas, hinting at potential anti-tumor properties. 156,157

Liu et al proposed the IDMCSS method to improve disease module identification by refining protein-protein interaction (PPI) networks. The method adds potential interactions and removes incorrect ones based on similarities between disease-associated proteins and their neighbors, thereby improving detection accuracy. 26

Forecasting interactions between drugs and diseases is crucial for disease-focused drug repurposing. 158 For instance, Jarada et al introduced an innovative deep-learning framework, SNF-NN, designed to anticipate novel drug-disease interactions by utilizing data on drug-related similarities, disease-related similarities, and existing drug-disease interactions. 159

Conclusion

Over the past decade, AI has profoundly transformed the field of oncology, enhancing early detection, precision diagnostics, and robotic-assisted surgery and expediting drug discovery. This review illustrates how AI systems have outperformed traditional methods in critical areas such as tumor classification and biomarker analysis. However, the integration of AI into clinical practice is not without challenges. Problems such as dataset biases, insufficient prospective validation, regulatory obstacles, and cost barriers require immediate attention and resolution.

Discussion

AI technologies in oncology are poised to bring significant advancements, including predictive analytics, targeted delivery, and image analysis. As shown in Table 5, AI-driven tools have demonstrated considerable potential in enhancing diagnostic accuracy, streamlining workflows, and personalizing treatment plans. However, several challenges must be addressed to ensure their reliable implementation in clinical settings.

A Comparative Analysis of AI and Traditional Methods in Cancer Care, Compiled From the Studies Reviewed in This Paper.

Present AI models often rely on limited datasets and have primarily undergone retrospective trials for testing. To establish their reliability, these models require further multicenter validation in prospective studies. Although patient outcome assessments are critical for validation in areas like robotic-assisted surgery, there has been a notable lack of research focusing on these assessments, particularly in cancer-related contexts. 113

The development of AI models must prioritize the use of diverse and representative datasets. For instance, many convolutional neural networks (CNNs) that demonstrate high accuracy in detecting skin lesions are trained on datasets where only 5% to 10% of the participants are Black. This results in a model accuracy for Black individuals that is roughly half that for White individuals, highlighting the critical need for diverse datasets to ensure equitable health outcomes. 160
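Disparities of this kind stay hidden when only overall accuracy is reported. One simple safeguard is to compute accuracy separately for each demographic subgroup, as in the following Python sketch. The group labels, diagnoses, and predictions are invented for illustration and do not reproduce the data of the cited study.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return a dict mapping each subgroup to the model's accuracy within it."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group_accuracy):
    """Difference between the best- and worst-served subgroup."""
    values = list(per_group_accuracy.values())
    return max(values) - min(values)


# Hypothetical lesion labels (1 = malignant) for two patient subgroups.
true_labels = [1, 0, 1, 1, 0, 1, 0, 1]
model_preds = [1, 0, 1, 0, 0, 0, 1, 1]
patient_group = ["group_1"] * 4 + ["group_2"] * 4

per_group = accuracy_by_group(true_labels, model_preds, patient_group)
gap = accuracy_gap(per_group)
```

In this toy example the overall accuracy is 62.5%, which conceals the difference between 75% in the first subgroup and 50% in the second.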

The integration of large and diverse datasets is crucial to addressing complex challenges in healthcare. This necessitates collaboration between technology companies and healthcare providers. However, the reliance on medical and personal information underscores the need for robust frameworks to enable secure and anonymous data sharing. One promising approach is federated learning, which processes data locally at each institution. By sharing only model updates instead of raw data, this method effectively preserves privacy and enhances security. 161
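The core of federated learning can be sketched in a few lines: each institution improves the shared model on its own data, and a coordinating server combines only the resulting model parameters, weighted by local sample size. The Python sketch below applies this federated averaging scheme to a small linear regression. The two "hospitals", their data, the learning rate, and the number of rounds are assumptions made for illustration, and real deployments add secure aggregation, multiple local steps, and other safeguards not shown here.

```python
def local_update(weights, features, targets, lr=0.05):
    """One gradient-descent step of least-squares regression on one site's data."""
    n = len(targets)
    grad = [0.0] * len(weights)
    for x, y in zip(features, targets):
        error = sum(w * xi for w, xi in zip(weights, x)) - y
        for j, xi in enumerate(x):
            grad[j] += 2.0 * error * xi / n
    return [w - lr * g for w, g in zip(weights, grad)]


def federated_average(site_weights, site_sizes):
    """Sample-size-weighted average of the sites' model weights."""
    total = sum(site_sizes)
    dim = len(site_weights[0])
    return [
        sum(w[j] * n for w, n in zip(site_weights, site_sizes)) / total
        for j in range(dim)
    ]


def federated_round(global_weights, sites, lr=0.05):
    """Each site trains locally; only weights and sample counts reach the server."""
    updates, sizes = [], []
    for features, targets in sites:
        updates.append(local_update(global_weights, features, targets, lr))
        sizes.append(len(targets))
    return federated_average(updates, sizes)


# Two hypothetical hospitals whose private data follow y = 2 * x.
hospital_a = ([[1.0], [2.0]], [2.0, 4.0])
hospital_b = ([[3.0]], [6.0])

model = [0.0]
for _ in range(200):
    model = federated_round(model, [hospital_a, hospital_b])
```

After training, `model[0]` approaches 2, the slope shared by both sites, even though neither hospital's raw records ever leave the `local_update` call.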

Future statistical studies should optimize workflows to minimize bias, including determining the necessary number of annotations or samples required for robust model training. Additionally, adherence to established guidelines for model reporting, such as the STARD-AI or TRIPOD-AI frameworks, is crucial for ensuring reproducibility and comparability across studies.162,163

A significant concern in AI-driven medicine is the "black box" nature of many systems. This term refers to models whose input features and algorithms lack transparency, making them difficult to interpret. Consequently, some clinicians hesitate to use AI tools, while others may follow AI recommendations blindly without fully understanding the underlying logic, potentially leading to inappropriate treatments.

For instance, an experimental study involving 28 pathology experts found that integrating AI improved overall diagnostic accuracy. However, it also resulted in a 7% bias rate, where initially accurate diagnoses were inaccurately altered following AI intervention. Notably, time pressure further amplified the reliance on incorrect AI outputs, underscoring significant risks associated with deskilling and over-dependence on technology. 164

A recent advancement in addressing the black box problem is the emergence of Explainable AI (XAI). This approach aims to make complex AI logic more interpretable for humans. By employing techniques such as data visualization and model simplification, XAI fosters greater trust and reproducibility in AI models. These developments are critical for enhancing transparency in AI systems, ensuring that users can understand and rely on AI-driven decisions. 165

Finally, the financial implications of integrating AI into medicine raise significant concerns. For example, the cost of hospital-wide AI implementation can exceed $36 billion annually, 166 creating challenges for resource-limited settings. The expenses of AI adoption may include costly infrastructure, such as high-performance computing and cloud storage; 167 maintenance and cybersecurity measures to protect sensitive genomic and imaging data; 168 clinician training to interpret AI outputs; 169 and rigorous multicenter trials. 170 Consequently, comprehensive cost-benefit analyses and pilot studies are urgently needed to substantiate the case for the widespread adoption of AI in healthcare.

Footnotes

Abbreviations

AI – Artificial Intelligence

ANN – Artificial Neural Network

AUC – Area Under the Curve

BRAF – B-Raf Proto-Oncogene (biomarker)

CNN – Convolutional Neural Network

CT – Computed Tomography

DL – Deep Learning

DSOC – Digital Single-Operator Cholangioscopy

EDL-BC – Ensemble Deep Learning for Breast Cancer

FDA – Food and Drug Administration

FGFR – Fibroblast Growth Factor Receptor

GEP – Gene Expression Profiling

GPSnet – Genome-wide Positioning System network

HDAC – Histone Deacetylase

HER2 – Human Epidermal Growth Factor Receptor 2

H&E – Hematoxylin and Eosin (staining)

IHC – Immunohistochemistry

Ki-67 – Proliferation Marker Protein

LDCT – Low-Dose Computed Tomography

ML – Machine Learning

MRI – Magnetic Resonance Imaging

MSKCC – Memorial Sloan Kettering Cancer Center

PET – Positron Emission Tomography

QSAR – Quantitative Structure-Activity Relationship

QSPR – Quantitative Structure-Property Relationship

RAS – Robot-Assisted Surgery

RF – Random Forest

RNN – Recurrent Neural Network

SVM – Support Vector Machine

TORS – Trans Oral Robotic Surgery

USP1 – Ubiquitin-Specific Peptidase 1

WSI – Whole-Slide Image

Author Note

The study was conducted without financial support or relationships that could influence its outcomes. It was designed, executed, and analyzed independently, ensuring objectivity and integrity in the findings. The authors affirm that their work was carried out solely in their personal and academic capacities, free from the involvement of any governmental or sanctioned entities. They adhered to publishing ethics and compliance with relevant sanctions laws throughout the research and publication process.

Author Contributions

M.A.I. designed the presented idea, supervised the project, and edited the manuscript. N.N.N. and H.H. wrote the manuscript. Additionally, N.N.N. provided all the figures and plots. All authors have read and approved the final version of the manuscript and have full access to all of the data in this study. They take complete responsibility for the integrity and accuracy of the data analysis.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data supporting the findings of this study are available from the corresponding authors upon reasonable request.