Abstract

A modified variant of the gray wolf optimization (GWO) algorithm, namely, the mean gray wolf optimization (MGWO) algorithm, has been developed by modifying the position update (encircling behavior) equations of GWO. The proposed variant has been tested on 23 well-known standard benchmark functions (unimodal, multimodal, and fixed-dimension multimodal), and its performance has been compared with that of particle swarm optimization (PSO) and GWO. The proposed algorithm has also been applied to the classification of 5 data sets to check its feasibility. The results obtained are compared with many other meta-heuristic approaches, ie, GWO, PSO, population-based incremental learning, ant colony optimization, etc. The results show that the modified variant is able to find the best solutions, with a high level of classification accuracy and improved local optima avoidance.

Literature Review

Many researchers have proposed various nature-inspired techniques to solve different types of real-life problems and to improve the quality of their solutions. The most popular meta-heuristic algorithms are discussed in this section.

Evolution strategies (ES) are evolutionary algorithms that date back to the 1960s and are most commonly applied to black-box global optimization in continuous search spaces. Evolution strategy was proposed by Rechenberg. 1 This population-based approach draws on ideas of evolution and adaptation: mutation, recombination, and selection are applied to a population of individuals, each representing a candidate solution, to evolve iteratively better solutions to the optimization problem.
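To make the mutation, recombination, and selection cycle concrete, the following is a minimal (μ, λ)-ES sketch in Python. It is illustrative only and is not Rechenberg's original formulation: the sphere objective, the initial box [-5, 5], the intermediate recombination of two parents, and the fixed geometric step-size decay (used here in place of self-adaptation) are all assumptions.

```python
import random


def sphere(x):
    """Test objective: sum of squares, minimum 0 at the origin."""
    return sum(v * v for v in x)


def evolution_strategy(f, dim, mu=5, lam=20, sigma=0.5, iters=200, seed=0):
    """Minimal (mu, lambda)-ES: recombination, Gaussian mutation, truncation selection."""
    rng = random.Random(seed)
    # Initial parents drawn uniformly from the assumed box [-5, 5]^dim.
    parents = [[rng.uniform(-5, 5) for _ in range(dim)] for _ in range(mu)]
    for _ in range(iters):
        offspring = []
        for _ in range(lam):
            # Intermediate recombination: component-wise mean of two random parents.
            p1, p2 = rng.sample(parents, 2)
            child = [(a + b) / 2 for a, b in zip(p1, p2)]
            # Gaussian mutation with the current step size sigma.
            offspring.append([c + rng.gauss(0, sigma) for c in child])
        # Comma selection: only the best mu offspring survive; old parents are discarded.
        offspring.sort(key=f)
        parents = offspring[:mu]
        # Fixed geometric decay of sigma (a simplification standing in for self-adaptation).
        sigma *= 0.98
    best = min(parents, key=f)
    return best, f(best)


best, value = evolution_strategy(sphere, dim=3)
print(value)
```

Comma selection (parents never survive) is one common ES choice; a (μ + λ) variant would instead select from parents and offspring together.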

The particle swarm optimization (PSO) algorithm was first introduced by RC Eberhart (an electrical engineer) and James Kennedy (a social psychologist). 2 Its fundamental idea was inspired by simulations of the social behavior of animals such as bird flocking and fish schooling. While searching for food, birds either scatter or move together before they settle in the position where food can be found. As the birds move from one position to another, there is always a bird that can smell the food very well, that is, a bird that perceives where the food can be found and holds the correct information about the food source. Because the birds keep sharing this information, particularly the useful information, throughout the search, the flock eventually converges on the position where the food can be found.
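The velocity and position update behind this "follow the best-informed bird" idea can be sketched as a minimal global-best PSO. The sketch assumes the later inertia-weight form of the velocity update (w = 0.7 and c1 = c2 = 1.5 are common but arbitrary choices here), a box-shaped search space, and a simple clamp that keeps particles inside it; it is not the exact formulation of the cited work.

```python
import random


def sphere(x):
    """Test objective: sum of squares, minimum 0 at the origin."""
    return sum(v * v for v in x)


def pso(f, dim, n_particles=30, iters=200, w=0.7, c1=1.5, c2=1.5,
        bounds=(-5.0, 5.0), seed=0):
    """Minimal global-best PSO (minimization)."""
    rng = random.Random(seed)
    lo, hi = bounds
    x = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    v = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in x]                 # each particle's own best position
    pbest_val = [f(p) for p in x]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]  # best position known to the swarm
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia + pull toward own memory + pull toward the swarm's best.
                v[i][d] = (w * v[i][d]
                           + c1 * r1 * (pbest[i][d] - x[i][d])
                           + c2 * r2 * (gbest[d] - x[i][d]))
                x[i][d] = min(max(x[i][d] + v[i][d], lo), hi)
            val = f(x[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = x[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = x[i][:], val
    return gbest, gbest_val


best, value = pso(sphere, dim=3)
print(value)
```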

Genetic algorithm (GA) was proposed by Holland. 3 This approach is inspired by Darwin’s theory of evolution, “survival of the fittest.” In this approach, each new population is created by mutation and recombination (crossover) of the individuals in the previous generation. Because the best individuals have a higher probability of participating in generating new candidate solutions, the new solutions are likely to be better than the previous ones.
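A minimal GA sketch on the OneMax problem (maximize the number of 1 bits) illustrates how fitter individuals get more chances to reproduce. Tournament selection, one-point crossover, bit-flip mutation, single-individual elitism, and all numeric settings are assumptions chosen for brevity, not details from the cited work.

```python
import random


def onemax(bits):
    """Fitness to maximize: number of 1 bits."""
    return sum(bits)


def genetic_algorithm(fitness, n_bits=20, pop_size=30, generations=100,
                      p_cx=0.9, p_mut=None, seed=0):
    rng = random.Random(seed)
    p_mut = p_mut if p_mut is not None else 1.0 / n_bits
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]

    def tournament(k=3):
        # Fitter individuals win more tournaments, so they reproduce more often.
        return max(rng.sample(pop, k), key=fitness)

    for _ in range(generations):
        new_pop = [max(pop, key=fitness)[:]]  # elitism: keep the current best
        while len(new_pop) < pop_size:
            a, b = tournament(), tournament()
            if rng.random() < p_cx:
                cut = rng.randint(1, n_bits - 1)  # one-point crossover
                child = a[:cut] + b[cut:]
            else:
                child = a[:]
            # Bit-flip mutation applied independently to each gene.
            child = [1 - g if rng.random() < p_mut else g for g in child]
            new_pop.append(child)
        pop = new_pop
    return max(pop, key=fitness)


best = genetic_algorithm(onemax)
print(onemax(best))
```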

Ant colony optimization (ACO) was proposed by Marco Dorigo et al. 4 This approach is based on the behavior of ants seeking a path between their colony and a source of food. The basic idea has since been diversified to solve a wider class of numerical problems and to improve the quality of their solutions.
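The positive-feedback mechanism behind ACO can be illustrated with the classic "double bridge" setting: two branches of different lengths connect the nest and the food, each ant picks a branch with probability proportional to its pheromone, pheromone evaporates at rate rho, and each ant deposits an amount inversely proportional to the branch length. This is a toy sketch under those assumptions, not Dorigo's full Ant System; the function name and parameter values are hypothetical.

```python
import random


def double_bridge(lengths=(1.0, 2.0), n_ants=20, iters=100, rho=0.1, alpha=1.0, seed=0):
    """Toy ACO on parallel branches; returns the final branch-choice probabilities."""
    rng = random.Random(seed)
    branches = range(len(lengths))
    tau = [1.0] * len(lengths)  # equal initial pheromone on every branch
    for _ in range(iters):
        # Each ant picks a branch with probability proportional to tau ** alpha.
        weights = [t ** alpha for t in tau]
        choices = rng.choices(branches, weights=weights, k=n_ants)
        # Evaporation: every trail loses a fraction rho of its pheromone.
        tau = [(1.0 - rho) * t for t in tau]
        # Deposit: each ant adds 1 / length, so the shorter branch is reinforced more.
        for c in choices:
            tau[c] += 1.0 / lengths[c]
    total = sum(t ** alpha for t in tau)
    return [t ** alpha / total for t in tau]


print(double_bridge())
```

Because the short branch collects more pheromone per ant, the colony's choice probability drifts toward it over the iterations.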

Population-based incremental learning (PBIL) was introduced by Shumeet Baluja. 5 It is a global optimization approach and an estimation of distribution algorithm. PBIL is an extension of the evolutionary GA, obtained by re-examining the performance of the evolutionary GA in terms of competitive learning. It is simpler than a GA and in a number of cases leads to better-quality solutions than a standard GA.
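PBIL replaces an explicit population with a probability vector. The sketch below assumes a binary encoding, a OneMax-style fitness (number of 1 bits), and a learning rate of 0.1: each generation it samples candidates from the vector and shifts the vector toward the best sample. Any mutation of the probability vector is omitted, so this is a simplified sketch rather than the full method.

```python
import random


def pbil(fitness, n_bits=20, n_samples=20, lr=0.1, iters=200, seed=0):
    """Simplified PBIL (maximization) without probability-vector mutation."""
    rng = random.Random(seed)
    prob = [0.5] * n_bits  # prob[i] = P(bit i == 1)
    best, best_fit = None, float("-inf")
    for _ in range(iters):
        # Sample candidate bit strings from the current probability vector.
        samples = [[1 if rng.random() < p else 0 for p in prob] for _ in range(n_samples)]
        winner = max(samples, key=fitness)
        if fitness(winner) > best_fit:
            best, best_fit = winner, fitness(winner)
        # Competitive-learning style update: move the vector toward the winner.
        prob = [(1 - lr) * p + lr * b for p, b in zip(prob, winner)]
    return best, prob


best, prob = pbil(sum)  # OneMax: fitness is the number of 1 bits
print(sum(best))
```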

Some of the most popular recent approaches are the gravitational search algorithm (GSA), 6 gravitational local search, 7 big bang-big crunch, 8 central force optimization, 9 artificial chemical reaction optimization algorithm, 10 charged system search (CSS), 11 ray optimization, 12 galaxy-based search algorithm, 13 black hole, 14 curved space optimization, 15 and small-world optimization algorithm, 16 among many others. These approaches differ from evolutionary algorithms in that a random set of search agents communicate and move around the search space according to physical rules.

Gray wolf optimization (GWO) algorithm was first proposed by Mirjalili et al. 17 It is a nature-inspired optimizer that mimics the leadership hierarchy and hunting mechanism of gray wolves in nature. Four types of gray wolves, alpha (α), beta (β), delta (δ), and omega (ω), are used to simulate the leadership hierarchy. The wolf nearest to the target is designated α, the second nearest β, the third nearest δ, and the remaining wolves ω. The 3 main stages of hunting, namely searching for the target, encircling the target, and attacking the target, have been implemented. The performance of this approach was tested on several benchmark functions and real-life problems, and on the basis of the results obtained, it was concluded that the approach is superior to other existing nature-inspired approaches such as PSO, differential evolution, GSA, ES, and evolutionary programming.
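Assigning the leadership hierarchy amounts to ranking the pack by fitness. A minimal sketch, assuming minimization and at least 3 wolves; the function name assign_hierarchy is hypothetical.

```python
def assign_hierarchy(wolves, fitness):
    """Rank wolves by fitness (lower is better) and split them into alpha, beta, delta, omegas."""
    if len(wolves) < 3:
        raise ValueError("need at least 3 wolves")
    ranked = sorted(wolves, key=fitness)
    return ranked[0], ranked[1], ranked[2], ranked[3:]


# Usage: 1-D wolves scored by f(x) = x^2.
pack = [[3.0], [0.5], [-2.0], [1.0], [0.1]]
alpha, beta, delta, omegas = assign_hierarchy(pack, lambda w: w[0] ** 2)
print(alpha, beta, delta, omegas)  # [0.1] [0.5] [1.0] [[-2.0], [3.0]]
```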

The GWO for training the multilayer perceptron was first proposed by Mirjalili. 18 Using this trainer, the author solved 8 standard data sets, 5 for classification and 3 for function approximation. The performance of the proposed trainer was compared with a number of existing nature-inspired algorithms such as PSO, GA, ACO, ES, and PBIL. The results showed that the proposed trainer provides competitive solutions in terms of improved local optima avoidance and also demonstrates a high level of accuracy in approximation and classification.

Some of the recent population-based nature-inspired training algorithms are social spider optimization, 19 invasive weed optimization, 20 chemical reaction optimization, 21 teaching-learning–based optimization, 22 biogeography-based optimization, 23 and CSS. 24 Several researchers have used these algorithms to solve real-life medical problems and have reported their high performance in approximating the global optimum. In this article, we also solve these real medical problems using the newly proposed mean gray wolf optimization (MGWO) algorithm, and we report that the quality of the solutions obtained with MGWO is better than that of other existing algorithms.

Two novel binary versions of the GWO (bGWO) algorithm were also proposed by Emary et al 25 for feature selection in wrapper mode. These algorithms were applied to feature selection in the machine learning domain using different initialization methods. The bGWO approaches were evaluated in the feature selection domain, and the results were compared against 2 well-known feature selection algorithms, PSO and GA.

Mittal et al 26 developed a modified variant of the GWO called modified GWO. An exponential decay function is used to improve the balance between exploitation and exploration in the search space over the course of generations. On the basis of the results obtained, the authors showed that the modified variant benefits from higher exploration than the standard GWO, and its performance was verified on a number of standard benchmarks and real-life NP-hard problems.

Sodeifian et al 27 used response surface methodology to study the efficiency of supercritical fluid extraction from Cleome coluteoides. Chemical compositions extracted by hydrodistillation and SC-CO2 methods were identified by gas chromatography (GC)/mass spectrometry and quantified by GC/flame ionization detector. Comparing the 2 techniques, the results showed a higher total extraction yield with the SC-CO2 method.

The rest of the article is organized as follows. The newly proposed MGWO algorithm, together with its mathematical model, is presented in section “MGWO Algorithm.” The benchmark functions and numerical experiments are presented in sections “Testing Functions” and “Numerical Experiments.” The parameter settings, the results and discussion on the standard benchmark functions, and the real-life problems are presented in sections “Parameter Setting,” “Analysis and Discussion on the Results,” and “Real-Life Data Set Problems,” respectively. Finally, the conclusions of the work are summarized at the end of the article.

Gray Wolf Optimization

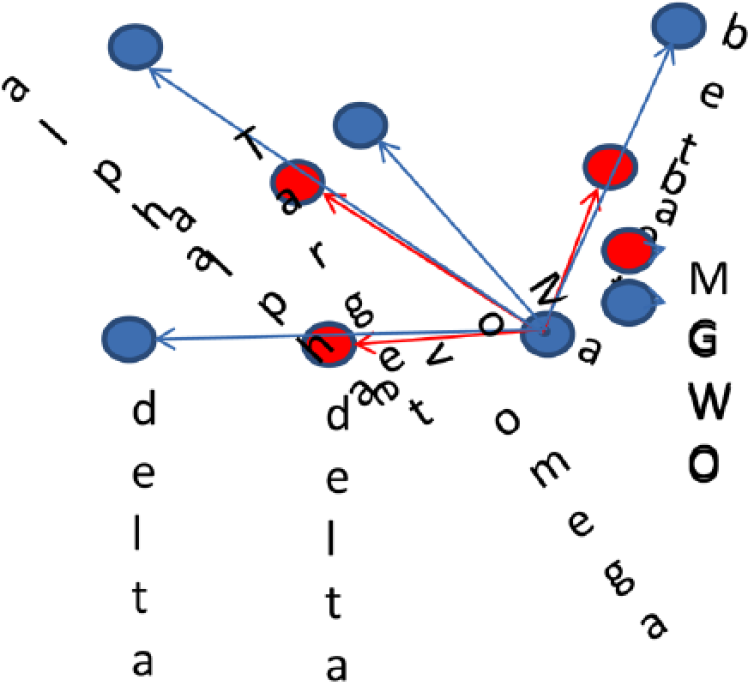

Mirjalili et al 17 proposed a new swarm-based meta-heuristic approach that mimics the hunting behavior and social leadership of gray wolves in nature. In this approach, the population is divided into 4 different groups (Figure 1).

Hierarchy of gray wolf (dominance decreases from top to down). Adapted from Mirjalili et al. 17

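The first 3 wolves in the best (fittest) positions are indicated as α, β, and δ, respectively, and the remaining wolves are denoted ω. During the hunt, the wolves encircle the prey, which is modeled as

$$\vec{D} = \left|\vec{C}\cdot\vec{X}_p(t) - \vec{X}(t)\right|, \qquad \vec{X}(t+1) = \vec{X}_p(t) - \vec{A}\cdot\vec{D}$$

where $t$ is the current iteration, $\vec{A}$ and $\vec{C}$ are coefficient vectors, $\vec{X}_p$ is the position vector of the prey, and $\vec{X}$ is the position vector of a gray wolf.

The vectors $\vec{A}$ and $\vec{C}$ are calculated as

$$\vec{A} = 2\vec{a}\cdot\vec{r}_1 - \vec{a}, \qquad \vec{C} = 2\vec{r}_2$$

where components of $\vec{a}$ are linearly decreased from 2 to 0 over the course of iterations, and $\vec{r}_1$ and $\vec{r}_2$ are random vectors in $[0, 1]$.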
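A minimal sketch of the standard GWO encircling step, D = |C·X_p - X| and X(t+1) = X_p - A·D with A = 2a·r1 - a, C = 2·r2, and a decreasing linearly from 2 to 0, is given below. The helper names (a_schedule, coefficients, encircle) are hypothetical, vectors are plain Python lists, and all products are taken component-wise.

```python
import random


def a_schedule(t, max_iter):
    """Control parameter a, decreased linearly from 2 to 0 over the run."""
    return 2.0 - 2.0 * t / max_iter


def coefficients(a, dim, rng):
    """Draw A = 2a*r1 - a and C = 2*r2 component-wise, with r1, r2 uniform in [0, 1)."""
    A = [2.0 * a * rng.random() - a for _ in range(dim)]
    C = [2.0 * rng.random() for _ in range(dim)]
    return A, C


def encircle(x, prey, a, rng):
    """One encircling move: D = |C*X_p - X|, X(t+1) = X_p - A*D (element-wise)."""
    A, C = coefficients(a, len(x), rng)
    D = [abs(c * p - xi) for c, p, xi in zip(C, prey, x)]
    return [p - ai * di for p, ai, di in zip(prey, A, D)]


rng = random.Random(42)
print(a_schedule(250, 500))  # 1.0
print(encircle([4.0, -3.0], [1.0, 1.0], 1.5, rng))
```

While a > 1, components of A can exceed 1 in magnitude and push a wolf away from the prey (exploration); as a shrinks, |A| < 1 and the wolves close in (exploitation).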

Hunting

To mathematically simulate the hunting behavior of gray wolves, we note that the hunt is usually guided by α, while β and δ may also participate occasionally. We suppose that α (the best candidate solution), β, and δ have better knowledge about the potential location of the prey.

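We save the first 3 best candidate solutions obtained so far and oblige the other search agents (including the ω wolves) to update their positions according to the positions of the best search agents. The following equations are used for this simulation:

$$\vec{D}_\alpha = \left|\vec{C}_1\cdot\vec{X}_\alpha - \vec{X}\right|, \quad \vec{D}_\beta = \left|\vec{C}_2\cdot\vec{X}_\beta - \vec{X}\right|, \quad \vec{D}_\delta = \left|\vec{C}_3\cdot\vec{X}_\delta - \vec{X}\right|$$

$$\vec{X}_1 = \vec{X}_\alpha - \vec{A}_1\cdot\vec{D}_\alpha, \quad \vec{X}_2 = \vec{X}_\beta - \vec{A}_2\cdot\vec{D}_\beta, \quad \vec{X}_3 = \vec{X}_\delta - \vec{A}_3\cdot\vec{D}_\delta$$

$$\vec{X}(t+1) = \frac{\vec{X}_1 + \vec{X}_2 + \vec{X}_3}{3}$$

where $\vec{A}_1, \vec{A}_2, \vec{A}_3$ and $\vec{C}_1, \vec{C}_2, \vec{C}_3$ are coefficient vectors drawn in the same way as $\vec{A}$ and $\vec{C}$ in the encircling step.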

The wolves update their positions randomly around the prey as represented in Figure 2. 17
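For reference, the standard GWO hunting update, in which each wolf moves to the average of 3 positions guided by α, β, and δ, can be sketched per dimension as follows. This follows the GWO equations of Mirjalili et al, not the modified MGWO equations; the function name gwo_hunt_step is hypothetical, and fresh random coefficients are drawn for each leader and each dimension.

```python
import random


def gwo_hunt_step(x, alpha, beta, delta, a, rng):
    """Standard GWO move: average of the three leader-guided positions X1, X2, X3."""
    new_x = []
    for d in range(len(x)):
        guided = []
        for leader in (alpha, beta, delta):
            A = 2.0 * a * rng.random() - a    # fresh A_k for this leader
            C = 2.0 * rng.random()            # fresh C_k for this leader
            D = abs(C * leader[d] - x[d])     # D_k = |C_k * X_leader - X|
            guided.append(leader[d] - A * D)  # X_k = X_leader - A_k * D_k
        new_x.append(sum(guided) / 3.0)       # X(t+1) = (X1 + X2 + X3) / 3
    return new_x


rng = random.Random(0)
# With a = 0 every A_k is 0, so the wolf lands exactly on the leaders' mean.
print(gwo_hunt_step([5.0, 5.0], [1.0, 0.0], [2.0, 0.0], [3.0, 3.0], 0.0, rng))  # [2.0, 1.0]
```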

Positions updated by the wolves in gray wolf optimization. Adapted from Mirjalili et al. 17

MGWO Algorithm

In this article, a modified variant, MGWO, is proposed to improve the accuracy, convergence speed, and time performance of the GWO algorithm. In the proposed variant, the mathematical equations of encircling and hunting have been modified. The remaining equations and procedure are the same as in GWO. 17

The main purpose of this variant is to improve the movement, or optimal path, of each wolf in the search space.

The MGWO approach is outlined in the following sections.

Encircling prey

Gray wolves encircle the prey during the hunt; in MGWO, this behavior is modeled by the following modified equation:

where µ is the mean,

The vectors

where components of

Hunting

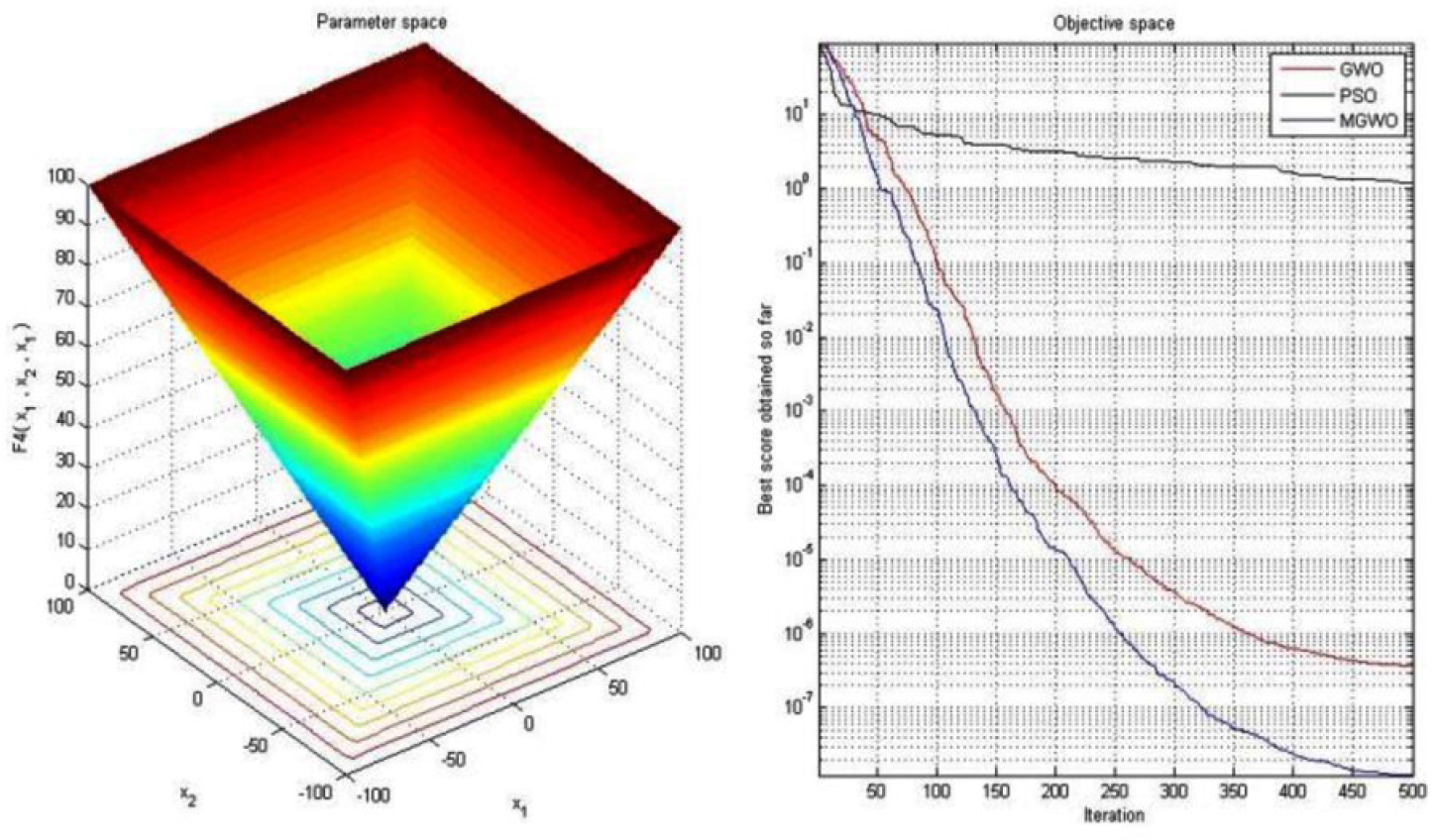

The hunting of prey is usually guided by α, and the β and δ wolves may participate occasionally. The first 3 best candidate solutions are referred to as α, β, and δ, and the remaining candidate solutions are denoted by ω. In MGWO, the position of each wolf in the search space is modified by taking the mean of the positions. The following modified equations are proposed in this regard (Figure 3):

(a) Performance index graph and (b) performance graph of PSO, GWO, and MGWO. GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

In the GWO and MGWO algorithms, the wolves update their positions randomly around the prey, as represented symbolically in Figure 4.

Positions updated in GWO and MGWO. GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization.

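The pseudocode of the MGWO algorithm:

Initialize the population $\vec{X}_i$ (i = 1, 2, ..., n)

Initialize $a$, $\vec{A}$, and $\vec{C}$

Calculate the fitness of each search agent of the population in the search space

$\vec{X}_\alpha$ = the best search agent, $\vec{X}_\beta$ = the second best search agent, $\vec{X}_\delta$ = the third best search agent

While (t < max. number of iterations)

For each search agent

Update the position of the current search agent using equation (16)

End for

Update $a$, $\vec{A}$, and $\vec{C}$

Calculate the fitness of all search agents

Update $\vec{X}_\alpha$, $\vec{X}_\beta$, and $\vec{X}_\delta$

t = t + 1

End while

Return $\vec{X}_\alpha$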
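The loop structure of the pseudocode can be sketched in Python. Because the MGWO position-update equation (16) is not reproduced in this text, the sketch uses the standard GWO update at that step and marks where the MGWO equation would go; it is therefore a GWO baseline, not the authors' MGWO implementation. The search bounds, the clamping, and the elitist retention of α, β, and δ across iterations are assumptions.

```python
import random


def gwo(f, dim, bounds=(-10.0, 10.0), n_agents=30, max_iter=500, seed=0):
    """GWO loop following the pseudocode; returns (X_alpha, f(X_alpha), convergence curve)."""
    rng = random.Random(seed)
    lo, hi = bounds
    # Initialize the population and pick alpha, beta, delta.
    wolves = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_agents)]
    ranked = sorted(wolves, key=f)
    alpha, beta, delta = (w[:] for w in ranked[:3])
    curve = []
    for t in range(max_iter):
        a = 2.0 - 2.0 * t / max_iter  # a decreases linearly from 2 to 0
        for i, x in enumerate(wolves):
            new_x = []
            for d in range(dim):
                total = 0.0
                for leader in (alpha, beta, delta):
                    A = 2.0 * a * rng.random() - a
                    C = 2.0 * rng.random()
                    total += leader[d] - A * abs(C * leader[d] - x[d])
                # Standard GWO update; MGWO's equation (16) would replace this line.
                new_x.append(min(max(total / 3.0, lo), hi))
            wolves[i] = new_x
        # Re-evaluate and update alpha, beta, delta, keeping the best found so far.
        pool = sorted(wolves + [alpha, beta, delta], key=f)
        alpha, beta, delta = (w[:] for w in pool[:3])
        curve.append(f(alpha))
    return alpha, f(alpha), curve


best, best_val, curve = gwo(lambda x: sum(v * v for v in x), dim=5, max_iter=100)
print(best_val)
```

Keeping the previous leaders in the selection pool makes the convergence curve non-increasing, which is convenient for plotting curves like those in the convergence figures.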

Testing Functions

The convergence and computation-time performance of the proposed variant have been tested on several types of standard functions, and the results obtained are compared with those obtained using other recent meta-heuristics. These classical functions are divided into 4 different groups, ie, unimodal, multimodal, fixed-dimension multimodal, and composite functions, and are listed in Appendix 1 (Tables A to C), where
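For illustration, 3 of the classical functions as defined in the original GWO benchmark suite (F1 sphere, F9 Rastrigin, and F10 Ackley) are sketched below. It is an assumption that Appendix 1 uses these same definitions and numbering; all 3 have a global minimum of 0 at the origin.

```python
import math


def f1_sphere(x):
    """Unimodal: sum of squares."""
    return sum(v * v for v in x)


def f9_rastrigin(x):
    """Multimodal: many regularly spaced local minima."""
    return sum(v * v - 10.0 * math.cos(2.0 * math.pi * v) + 10.0 for v in x)


def f10_ackley(x):
    """Multimodal: nearly flat outer region with a deep central basin."""
    n = len(x)
    s1 = sum(v * v for v in x) / n
    s2 = sum(math.cos(2.0 * math.pi * v) for v in x) / n
    return -20.0 * math.exp(-0.2 * math.sqrt(s1)) - math.exp(s2) + 20.0 + math.e


print(f1_sphere([0.0] * 30))  # 0.0
```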

Numerical Experiments

The MGWO, GWO, PSO, PBIL, and ACO algorithms are coded in MATLAB R2013a and run on a machine with an Intel Core i5-430M processor, 3 GB of memory, a 320 GB HDD, Intel HD Graphics, and a 15.6″ 16:9 HD LCD.

Parameter Setting

In MGWO, GWO, PSO, PBIL, and ACO algorithms, we have set the following parameters:

Number of search agents (candidate) = 30;

Maximum number of iterations (generations) = 500;

Analysis and Discussion on the Results

In this section, the effectiveness of the MGWO algorithm is checked. This is usually done by solving a set of benchmark problems. We have used 23 such classical functions to compare the performance of the modified variant with other recent meta-heuristics. These classical functions are divided into 3 types:

Unimodal

Multimodal

Fixed-dimension multimodal

The MGWO and GWO variants were run 30 times on each benchmark function. The numerical results (best solutions, minimum and maximum objective function values, standard deviation, mean, and time performance) are reported in Tables 1 to 18. Each algorithm (MGWO, GWO, and PSO) should be run more than 10 times to obtain reliable statistical results. It is common practice to run an algorithm many times on a function and to report the best solution, mean, standard deviation, computation time, and minimum and maximum objective function values of the best solution obtained in the last generation.
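The per-function statistics described above can be computed from the final values of independent runs, as sketched below. The sketch assumes each run returns its final best objective value and uses the sample standard deviation (n - 1 denominator); the text does not state which definition was used, and the names are hypothetical.

```python
import statistics


def summarize_runs(final_values):
    """Best, worst, mean, and sample SD of the final objective values of independent runs."""
    return {
        "best": min(final_values),
        "worst": max(final_values),
        "mean": statistics.mean(final_values),
        "std": statistics.stdev(final_values),  # sample SD (n - 1); needs at least 2 runs
    }


def run_trials(optimizer, n_runs=30):
    """Call optimizer(seed) once per seed and collect the returned final objective values."""
    return [optimizer(seed) for seed in range(n_runs)]


# Usage with a stand-in "optimizer" that just returns a number per seed.
values = run_trials(lambda seed: float(seed % 5), n_runs=30)
print(summarize_runs(values))
```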

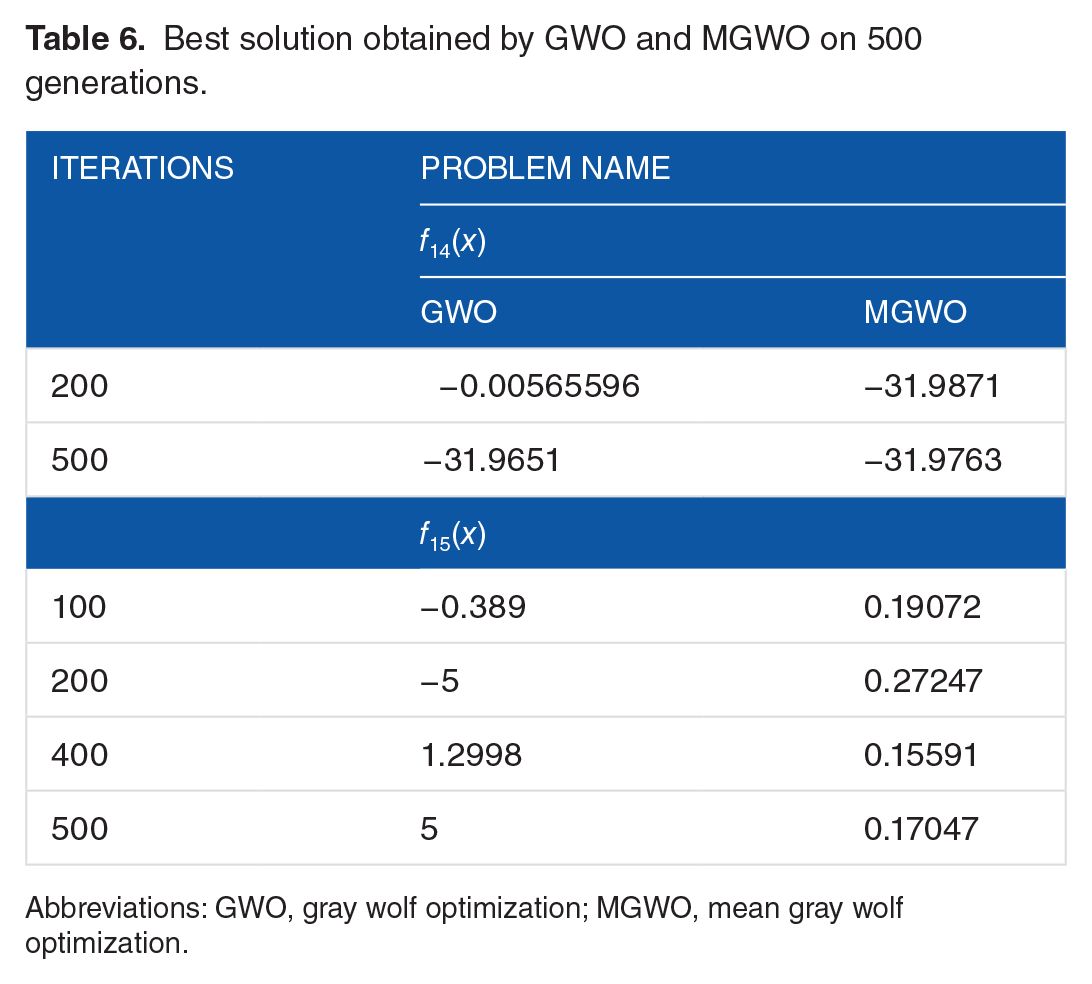

Best solution obtained by GWO and MGWO on 500 generations.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

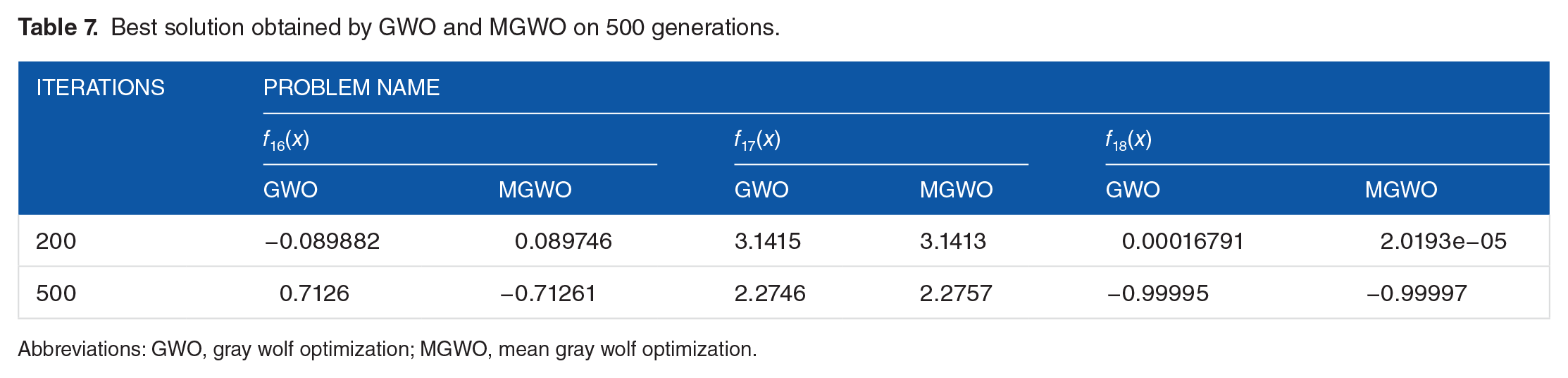

Best solution obtained by GWO and MGWO on 500 generations.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

Best solution obtained by GWO and MGWO on 500 generations.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

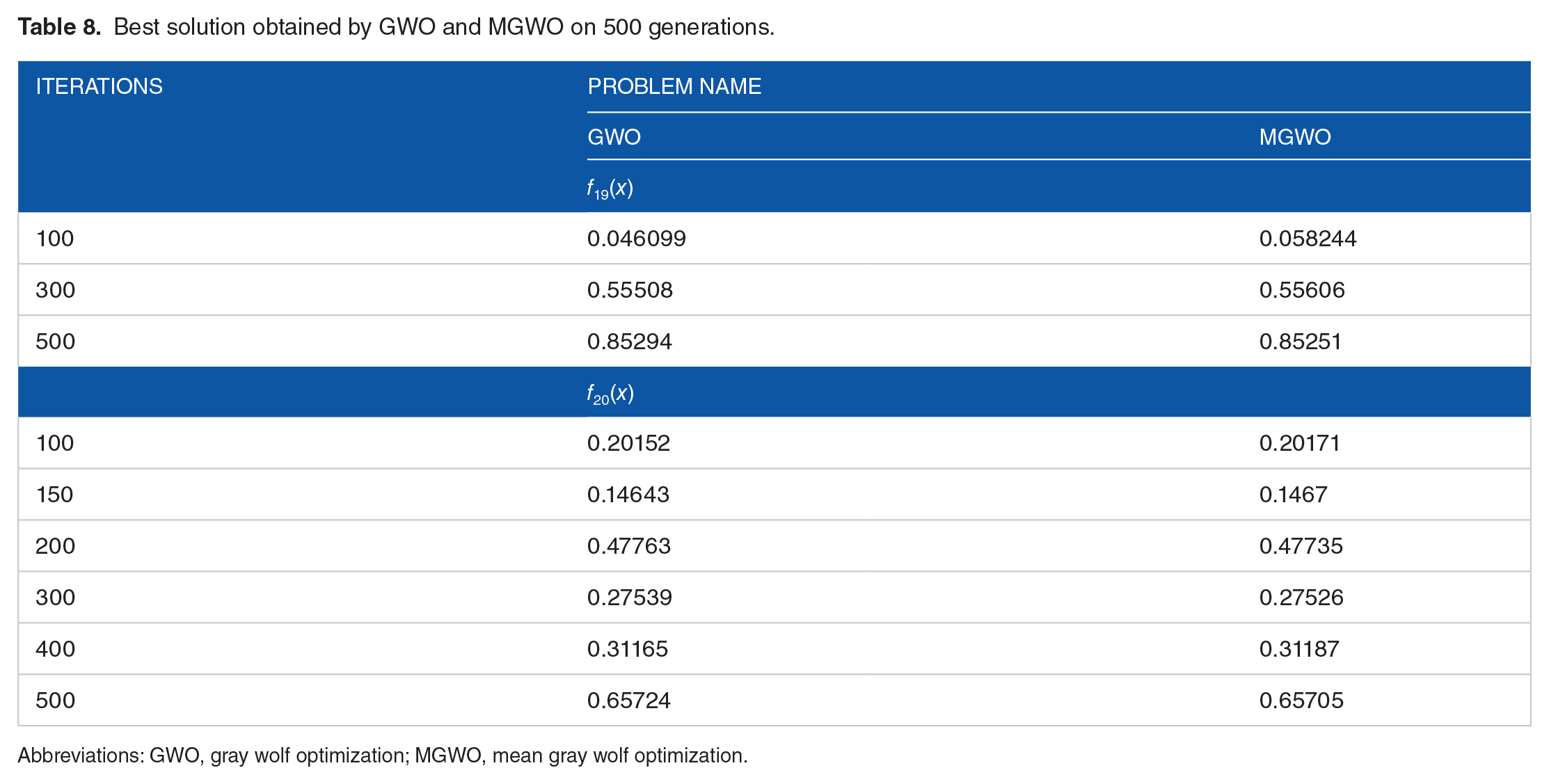

Best solution obtained by GWO and MGWO on 500 generations.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

Best solution obtained by GWO and MGWO on 500 generations.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

Best solution obtained by GWO and MGWO on 500 generations.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

Best solution obtained by GWO and MGWO on 500 generations.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

Best solution obtained by GWO and MGWO on 500 generations.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

Best solution obtained by GWO and MGWO on 500 generations.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

Results of unimodal benchmark functions (maximum and minimum).

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

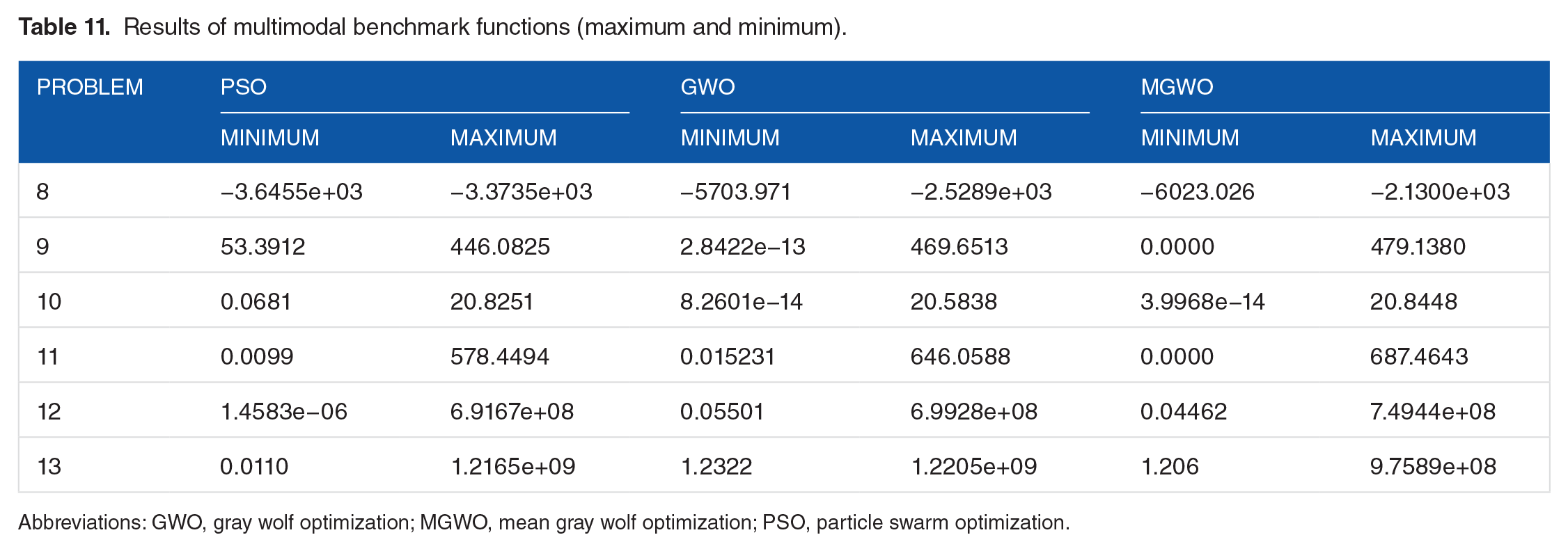

Results of multimodal benchmark functions (maximum and minimum).

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Results of fixed-dimension multimodal benchmark functions (maximum and minimum).

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

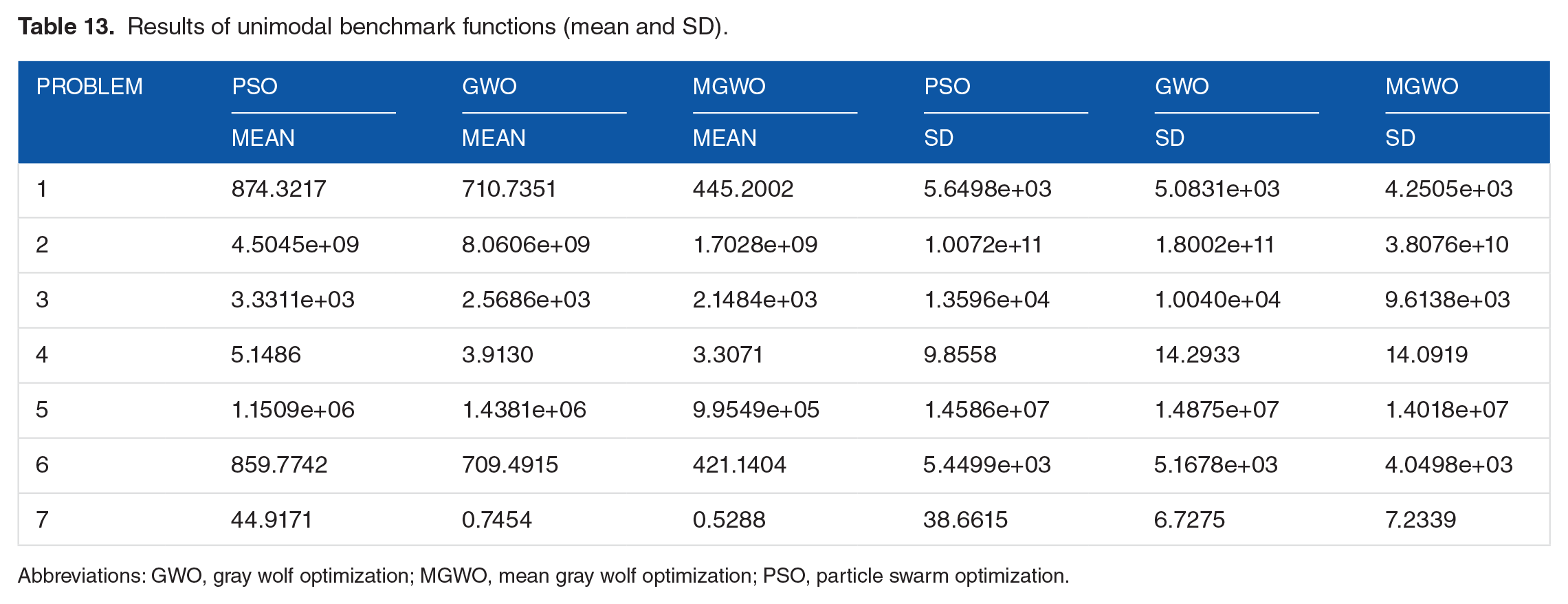

Results of unimodal benchmark functions (mean and SD).

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Results of multimodal benchmark functions (mean and SD).

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

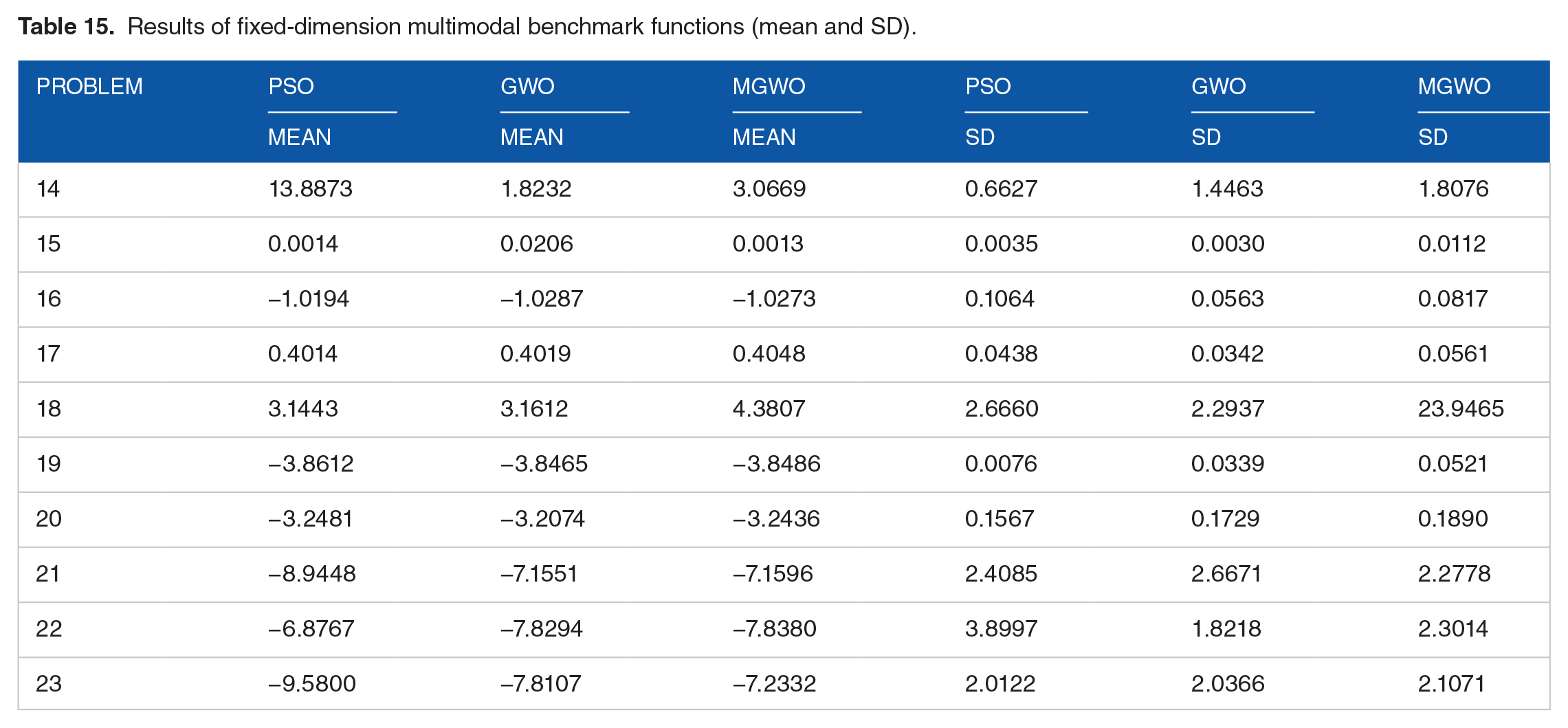

Results of fixed-dimension multimodal benchmark functions (mean and SD).

Computation-time results of unimodal benchmark functions.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Computation-time results of multimodal benchmark functions.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Computation-time results of fixed-dimension multimodal benchmark functions.

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Bold values highlight the results of proposed variant.

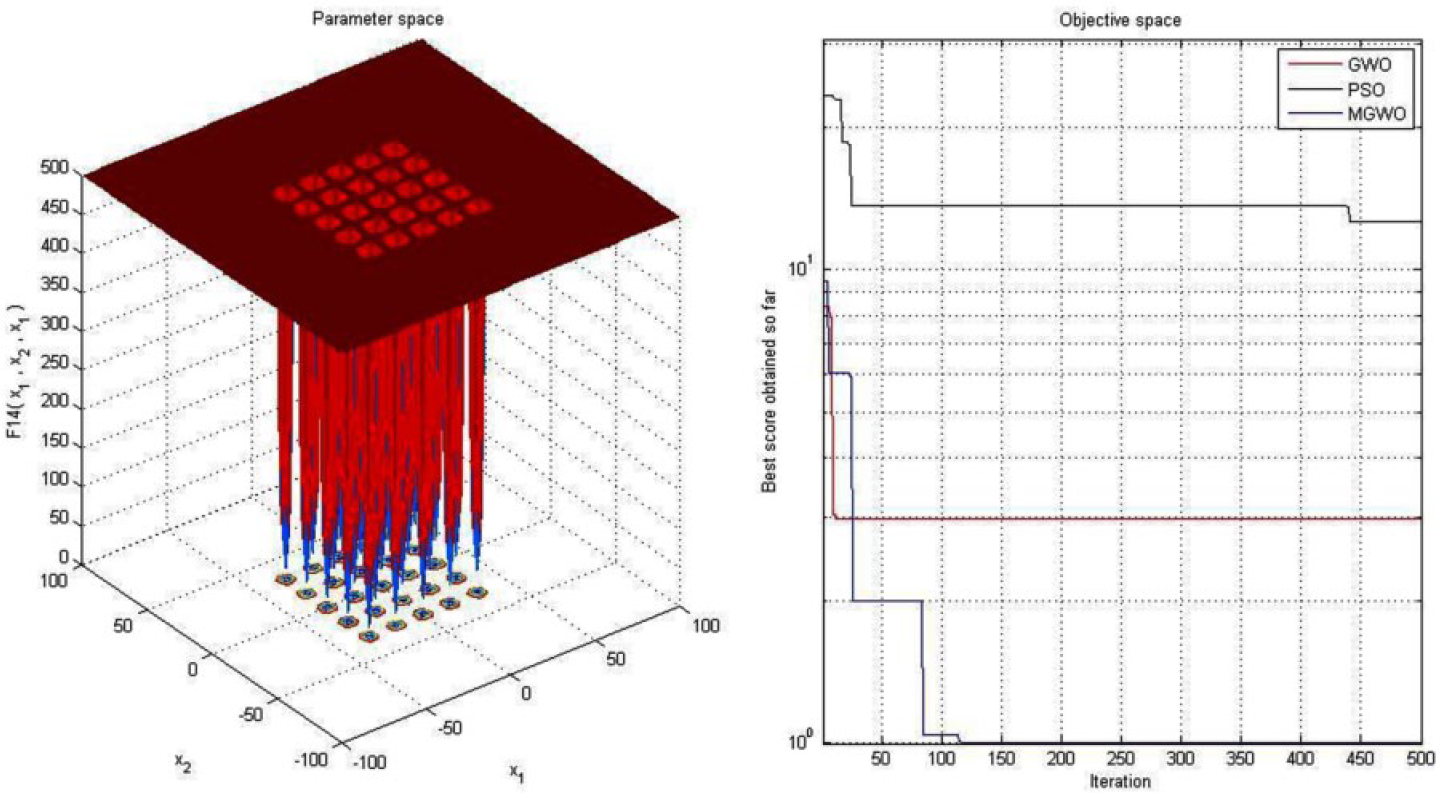

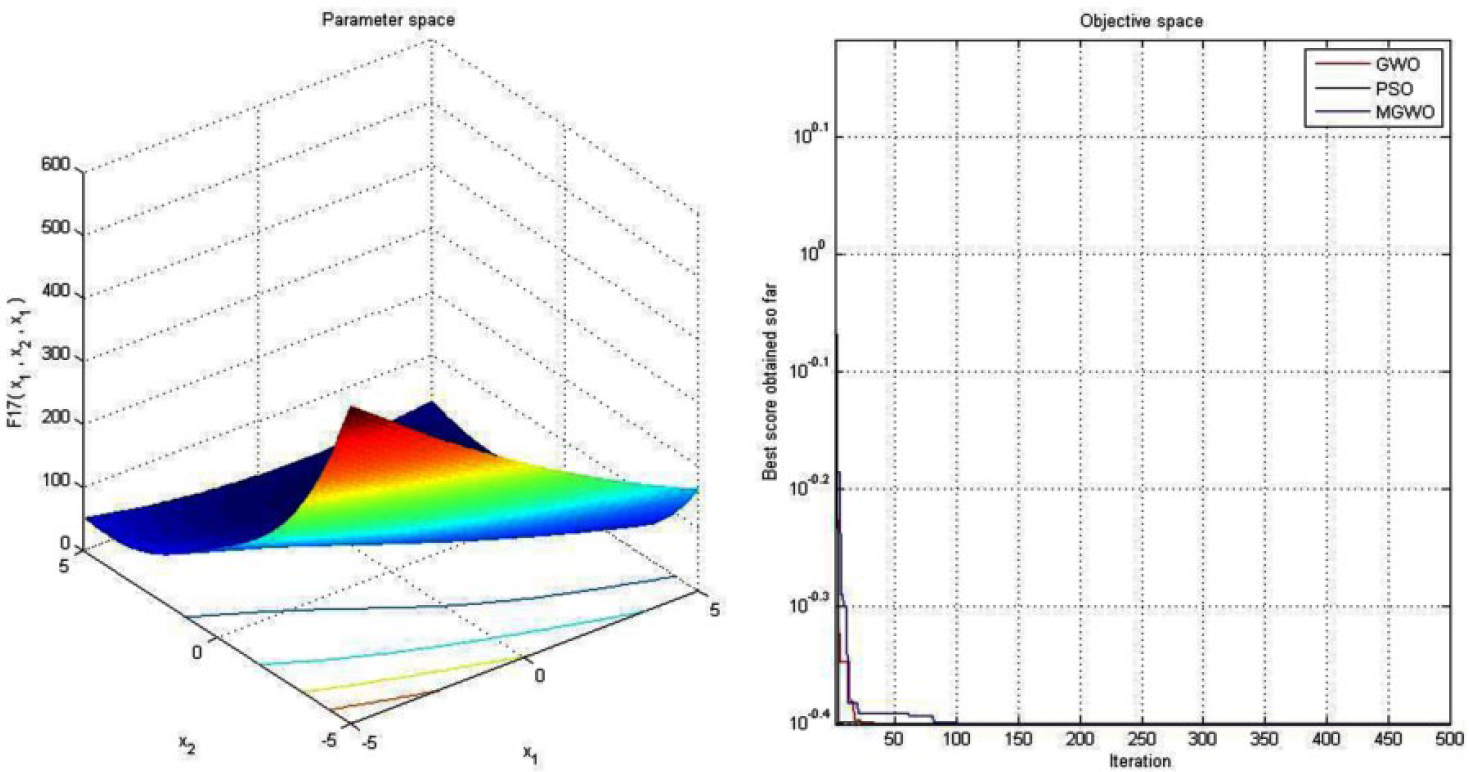

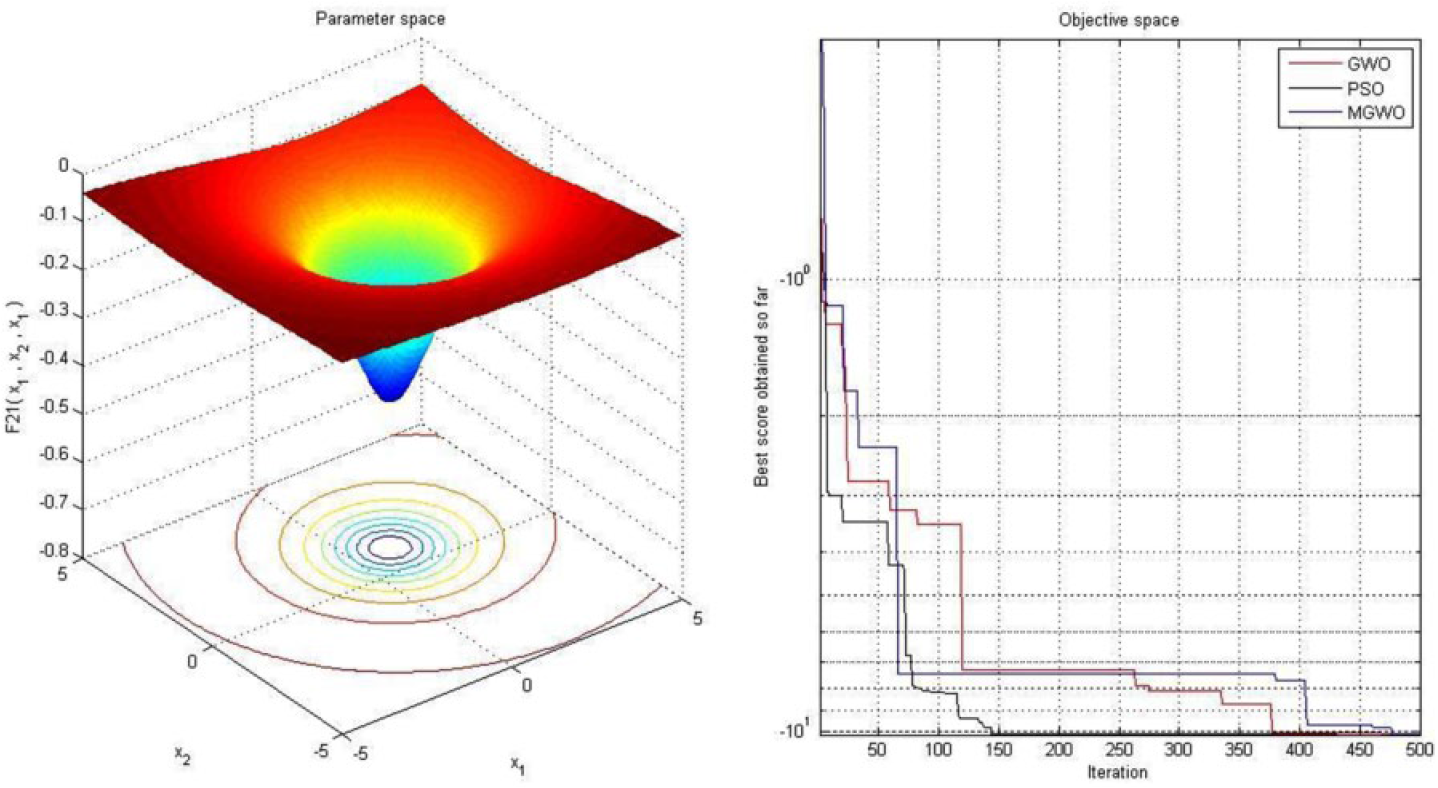

To verify the convergence and time performance of the MGWO variant, it is compared with the PSO and GWO variants. Here, we use 500 generations and 30 search agents for each variant. The convergence performance of PSO, GWO, and MGWO on the unimodal, multimodal, and fixed-dimension multimodal standard functions is given in Figures 5 to 27, and the results are presented in Tables 1 to 9. The simulation results in Tables 1 to 9 and Figures 5 to 27 show that the proposed variant is superior to PSO and GWO in terms of convergence rate and best optimal solution. Hence, the experimental results reveal that MGWO performs relatively better than PSO and GWO.

Convergence graph of unimodal benchmark function (F1). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of unimodal benchmark function (F2). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of unimodal benchmark function (F3). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of unimodal benchmark function (F4). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of unimodal benchmark function (F5). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of unimodal benchmark function (F6). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of unimodal benchmark function (F7). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of multimodal benchmark function (F8). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

(a) Convergence graph of multimodal benchmark function (F9) and (b) convergence graph of multimodal benchmark function (F9) from 0 to 15 iterations. GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of multimodal benchmark function (F10). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of multimodal benchmark function (F11). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of multimodal benchmark function (F12). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of multimodal benchmark function (F13). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F14). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F15). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F16). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F17). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F18). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F19). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F20). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F21). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F22). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

Convergence graph of fixed-dimension multimodal benchmark function (F23). GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization; PSO, particle swarm optimization.

The statistical results of the MGWO, PSO, and GWO variants on unimodal benchmark functions are shown in Tables 10 and 13. On the basis of these tables, we compare the performance of the modified variant with the GWO and PSO variants in terms of minimum and maximum objective function values, mean, and standard deviation. It can be seen that the modified variant gives highly competitive solutions compared with PSO and GWO on unimodal benchmark functions. As previously discussed, unimodal benchmark problems are suitable for benchmarking the exploitation of the variants. Hence, these results provide evidence of the high exploitation capability of the MGWO variant.

Furthermore, the numerical results of the proposed variant on multimodal test functions are shown in Tables 11 and 14. We observe that the modified variant performs better than other meta-heuristics on

Similarly, the statistical results of the modified variant on fixed-dimension multimodal functions are presented in Tables 12 and 15. For these functions, we have checked the convergence performance of the modified variant, PSO, and GWO in terms of minimum and maximum objective function values, mean, and standard deviation. The results are consistent with those of the other benchmark test problems: the modified variant gives highly competitive solutions compared with other meta-heuristics on these problems.

Finally, the time performance of the newly proposed algorithm has been measured using MATLAB's TIC and TOC (start and end time), CPUTIME, and CLOCK functions. These results are provided in Tables 16 and 17. It can be seen that the modified variant solved most of the benchmark functions in the least time compared with the other variants.
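In Python, a rough analogue of MATLAB's TIC/TOC and CPUTIME is time.perf_counter() for wall-clock time and time.process_time() for the CPU time of the current process. A minimal sketch; the wrapper name timed is hypothetical.

```python
import time


def timed(fn, *args, **kwargs):
    """Return (result, wall_seconds, cpu_seconds) for one call of fn."""
    wall0 = time.perf_counter()   # roughly analogous to tic
    cpu0 = time.process_time()    # roughly analogous to reading cputime
    result = fn(*args, **kwargs)
    wall = time.perf_counter() - wall0  # roughly analogous to toc
    cpu = time.process_time() - cpu0
    return result, wall, cpu


result, wall, cpu = timed(sum, range(1_000_000))
print(result, wall, cpu)
```

Wall-clock time includes waiting on other processes, while process time counts only this process's CPU use, so the two can differ noticeably on a busy machine.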

To sum up, the simulation results indicate that the modified approach improves the efficiency of the GWO in terms of both result quality and computational effort.

Real-Life Data Set Problems

In this section, the following 5 data set problems are employed: (1) XOR, (2) Balloon, (3) Breast Cancer, (4) Iris, and (5) Heart. These problems have been solved using the modified variant, and the results obtained have been compared with several meta-heuristics. The parameters used to run each meta-heuristic are listed in Appendix 1, Table E. The algorithms are compared in terms of average, standard deviation, classification rate, and convergence rate. Each data set problem is discussed in turn in the following sections.
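The two headline metrics for the data set problems can be sketched as follows. The sketch assumes that MSE is averaged over samples after summing squared errors over the output units of each sample, and that classification rate is the percentage of correctly classified samples; these are assumptions about how the tables were computed, and the function names are hypothetical.

```python
def mse(outputs, targets):
    """MSE averaged over samples, summing squared errors over each sample's output units."""
    total = 0.0
    for out, tgt in zip(outputs, targets):
        total += sum((o - t) ** 2 for o, t in zip(out, tgt))
    return total / len(outputs)


def classification_rate(predicted, actual):
    """Percentage of samples whose predicted class equals the true class."""
    correct = sum(1 for p, a in zip(predicted, actual) if p == a)
    return 100.0 * correct / len(actual)


print(mse([[0.9], [0.2]], [[1.0], [0.0]]))
print(classification_rate([0, 1, 1, 0], [0, 1, 0, 0]))  # 75.0
```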

XOR data set

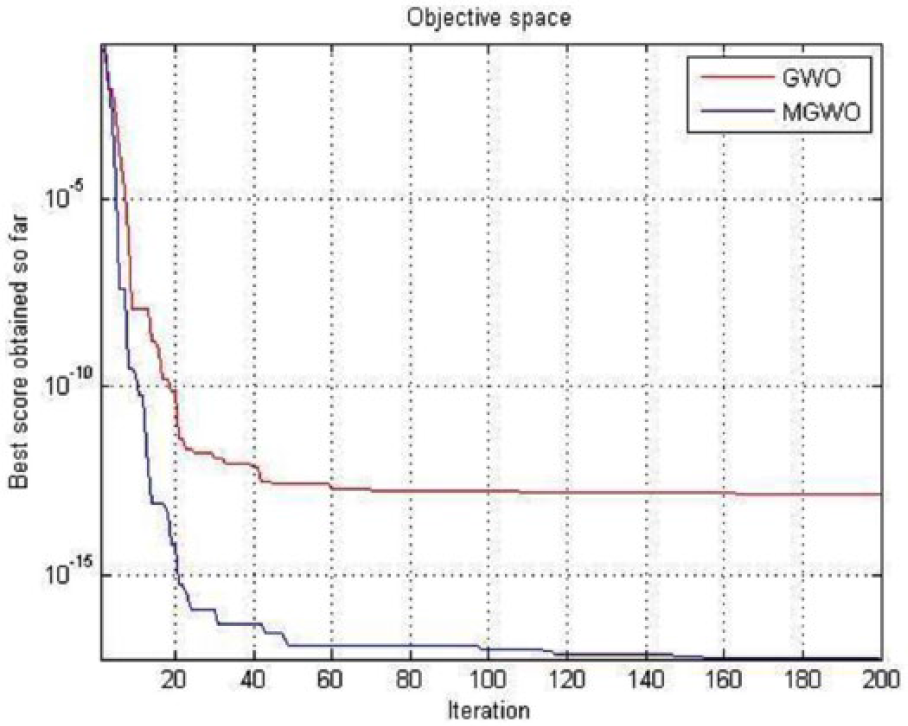

This data set has 3 attributes (inputs), 8 training samples, 8 test samples, 2 classes, and 1 output (Appendix 1, Table D 18). The numerical results obtained with MGWO, GWO, PSO, GA, ACO, ES, and PBIL on this data set are shown in Table 19, and the convergence performance of the GWO and MGWO variants is shown in Figure 28.
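The trainer setting can be made concrete with this problem. The sketch below assumes that the 3-attribute XOR data set is the 3-bit parity problem (consistent with 8 samples, 2 classes, and 1 output), a 3-7-1 sigmoid network, and a flat weight-and-bias vector as the position of each search agent, so any of the optimizers discussed in this article could minimize mlp_mse over that vector. All names are hypothetical.

```python
import itertools
import math


def xor3_dataset():
    """3-bit XOR (parity): 8 patterns, target 1 when an odd number of inputs are 1."""
    X = [list(bits) for bits in itertools.product([0, 1], repeat=3)]
    y = [sum(bits) % 2 for bits in X]
    return X, y


def sigmoid(z):
    # Numerically safe logistic function (avoids overflow in math.exp).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def n_weights(n_in, n_hidden):
    """Length of the flat vector: hidden weights + biases, then output weights + bias."""
    return n_hidden * (n_in + 1) + n_hidden + 1


def mlp_output(w, x, n_hidden):
    """Forward pass of a one-hidden-layer MLP whose parameters are packed in w."""
    n_in, k = len(x), 0
    hidden = []
    for _ in range(n_hidden):
        z = sum(w[k + i] * x[i] for i in range(n_in)) + w[k + n_in]
        hidden.append(sigmoid(z))
        k += n_in + 1
    z = sum(w[k + j] * hidden[j] for j in range(n_hidden)) + w[k + n_hidden]
    return sigmoid(z)


def mlp_mse(w, X, y, n_hidden):
    """Fitness to minimize: mean squared error of the network over the data set."""
    return sum((mlp_output(w, x, n_hidden) - t) ** 2 for x, t in zip(X, y)) / len(X)


X, y = xor3_dataset()
dim = n_weights(3, 7)                      # 36 parameters for the assumed 3-7-1 network
print(dim, mlp_mse([0.0] * dim, X, y, 7))  # 36 0.25
```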

Experimental results for the XOR data set.

Abbreviations: ACO, ant colony optimization; ES, evolution strategy; GA, Genetic algorithm; GWO, gray wolf optimization; MGWO, mean gray wolf optimization; MSE, mean squared error; PBIL, population-based incremental learning; PSO, particle swarm optimization.

Bold values highlight the results of proposed variant.

Convergence graph of XOR data set problem. GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization.

It is clear from Table 19 that the MGWO, GWO, and GA variants give better statistical results than the other meta-heuristics. These results indicate that MGWO, GWO, and GA have the highest ability to avoid local optima and are considerably superior to the other variants, namely PSO, ACO, ES, and PBIL.

The performance of these variants has also been compared in terms of average, standard deviation, and classification rate (Table 19) and convergence rate (Figure 28). A low average and standard deviation indicate superior local optima avoidance. On the basis of the results obtained, we conclude that the newly modified variant MGWO gives highly competitive results compared with other existing variants, and the convergence graph shows that MGWO gives better solutions than the GWO variant.

Balloon data set

According to Appendix 1, Table D, 18 this data set has 4 attributes, 16 training samples, 16 test samples, and 2 classes. The statistical and convergence results of the variants on this data set are shown in Table 20 and Figure 29.

Experimental results for the balloon data set.

Abbreviations: ACO, ant colony optimization; ES, evolution strategy; GA, Genetic algorithm; GWO, gray wolf optimization; MGWO, mean gray wolf optimization; MSE, mean squared error; PBIL, population-based incremental learning; PSO, particle swarm optimization.

Bold values highlight the results of proposed variant.

Convergence graph of balloon data set problem. GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization.

Here, we compare the accuracy of the algorithms in terms of average, standard deviation, classification rate, and convergence rate. First, we observe that all the variants give a similar classification rate. Second, on the basis of the statistical and convergence results, we observe that the modified variant gives highly competitive solutions compared with GWO, PSO, GA, ACO, ES, and PBIL. These convergence results are plotted in Figure 29.

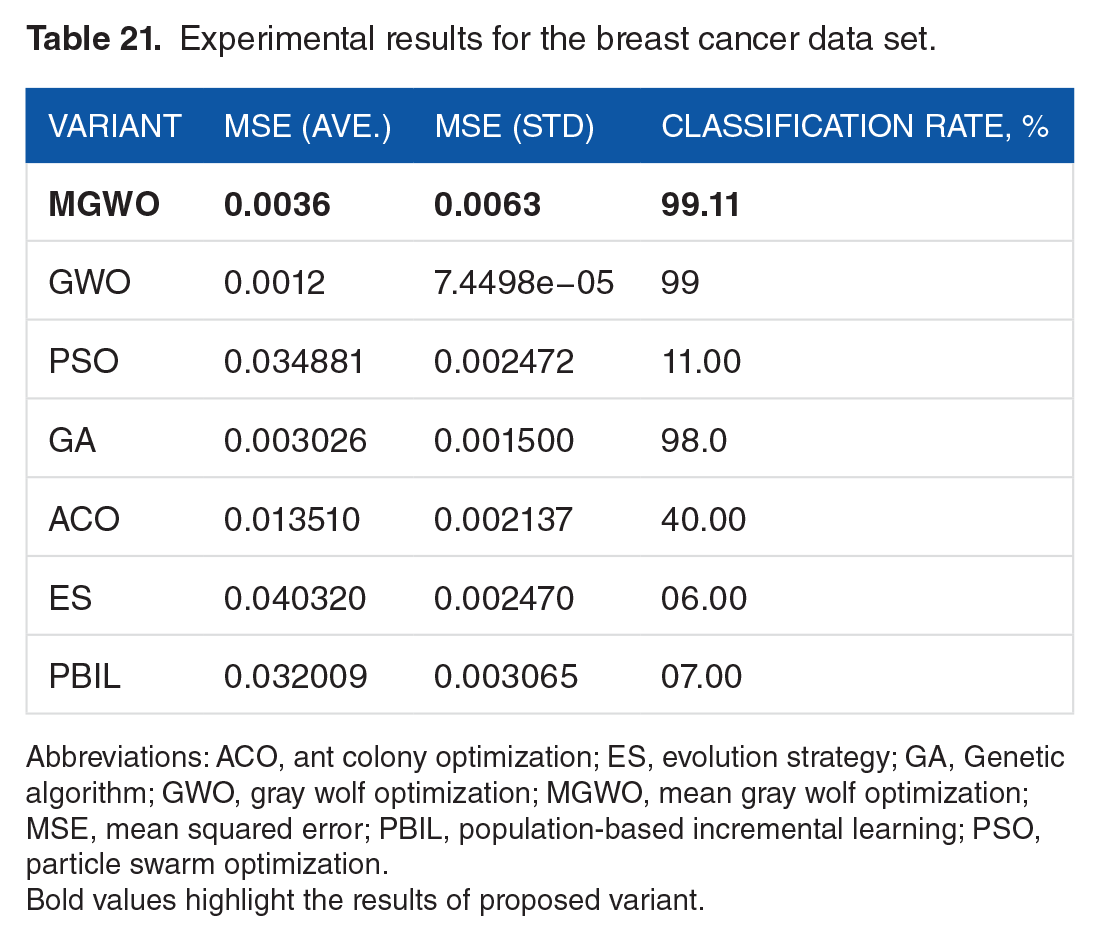

Breast cancer data set

This data set has 9 attributes, 599 training samples, 100 test samples, and 2 classes (Appendix 1, Table D). 18 All algorithms have been run 10 times on this data set. The numerical results are shown in Table 21, and the convergence performance on this data set is plotted in Figure 30.

Experimental results for the breast cancer data set.

Abbreviations: ACO, ant colony optimization; ES, evolution strategy; GA, Genetic algorithm; GWO, gray wolf optimization; MGWO, mean gray wolf optimization; MSE, mean squared error; PBIL, population-based incremental learning; PSO, particle swarm optimization.

Bold values highlight the results of proposed variant.

Convergence graph of breast cancer data set problem. GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization.

We observe that the modified variant (MGWO) achieves a 99.11% classification rate and better convergence (Figure 30), outperforming the other meta-heuristics.

Iris data set

This is another well-known test data set in the literature. It consists of 4 attributes, 150 training samples, 150 test samples, and 3 classes, as shown in Appendix 1, Table D. 18 The convergence performance of the MGWO, GWO, PSO, GA, ACO, ES, and PBIL variants is plotted in Figure 31, and the numerical results are shown in Table 22.

Convergence graph of iris data set problem. GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization.

Experimental results for the iris data set.

Abbreviations: ACO, ant colony optimization; ES, evolution strategy; GA, Genetic algorithm; GWO, gray wolf optimization; MGWO, mean gray wolf optimization; MSE, mean squared error; PBIL, population-based incremental learning; PSO, particle swarm optimization.

Bold values highlight the results of proposed variant.

These variants achieve the following classification rates: MGWO (91.334%), GWO (91.333%), PSO (37.33%), GA (89.33%), ACO (32.66%), ES (46.66%), and PBIL (86.66%). The modified variant achieves a better classification rate than the other variants.

The results confirm that the MGWO algorithm achieves better accuracy and local optima avoidance simultaneously.

Heart data set

The heart data set is one of the most popular data sets in the literature. It has 22 attributes, 80 training samples, 187 test samples, and 2 classes, as reported in Appendix 1, Table D. 18 The results of training with these variants are shown in Table 23, and the convergence performance of MGWO and GWO is plotted in Figure 32. A low average and standard deviation indicate superior local optima avoidance.

Experimental results for the heart data set.

Abbreviations: ACO, ant colony optimization; ES, evolution strategy; GA, Genetic algorithm; GWO, gray wolf optimization; MGWO, mean gray wolf optimization; MSE, mean squared error; PBIL, population-based incremental learning; PSO, particle swarm optimization.

Bold values highlight the results of proposed variant.

Convergence graph of heart data set problem. GWO indicates gray wolf optimization; MGWO, mean gray wolf optimization.

The results in Table 23 reveal that MGWO performs best on this data set in terms of mean squared error, classification rate, and convergence compared with the other meta-heuristics.

Figure 32 shows that the MGWO variant converges to better-quality solutions and outperforms the GWO variant.

Conclusions

This article proposes a modified variant of GWO, namely, MGWO, inspired by the hunting behavior of gray wolves in nature. A statistical mean is used to balance exploitation and exploration in the search space over the course of generations. The results reveal that the modified variant benefits from higher exploration than the PSO and GWO algorithms.

Moreover, the performance of the modified variant has also been tested on 5 data set problems, ie, (1) XOR, (2) Balloon, (3) Breast Cancer, (4) Iris, and (5) Heart. For verification, the statistical results of the MGWO algorithm have been compared with 6 other meta-heuristic trainers: GWO, PSO, GA, ACO, ES, and PBIL. On the basis of the results obtained for these data sets, we have discussed and identified the reasons for the poor and strong performance of the other variants. The experimental results show that the modified variant gives highly competitive solutions in terms of improved local optima avoidance and a high level of accuracy in mean, standard deviation, classification, and convergence rate compared with the GWO, PSO, GA, ACO, ES, and PBIL algorithms.

Footnotes

Appendix 1

The initial parameters of algorithms.

| Algorithm | Parameter | Value |
|---|---|---|
| MGWO | $\vec{a}$ | Linearly decreased from 2 to 0 |
| | Population size | 50 for XOR and Balloon, 200 for the rest |
| | Maximum number of generations | 250 |
| GWO | $\vec{a}$ | Linearly decreased from 2 to 0 |
| | Population size | 50 for XOR and Balloon, 200 for the rest |
| | Maximum number of generations | 250 |

Abbreviations: GWO, gray wolf optimization; MGWO, mean gray wolf optimization.

Peer Review:

Two peer reviewers contributed to the peer review report. Reviewers’ reports totaled 553 words, excluding any confidential comments to the academic editor.

Funding:

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author Contributions

SBS conceived the idea of developing a new variant of a nature-inspired technique that can outperform other metaheuristics in terms of solution quality and convergence. NS designed the numerical experiments, developed the code, and prepared the manuscript. Both authors revised and finalized the manuscript.