Abstract

Evaluations are considered of key importance for a well-functioning democracy. Against this background, it is vital to assess whether and how evaluation models approach the role of citizens. This paper is the first to present a review of citizen involvement in the main evaluation models commonly distinguished in the field. We present the results of both a document analysis and an international survey of experts who had a prominent role in developing the models. This overview not only has theoretical relevance, but can also be helpful for evaluation practitioners or scholars looking for opportunities for citizen involvement. The paper contributes first and foremost to the evaluation literature, but also aims to fine-tune available insights on the relationship between evidence-informed policy making and citizens.

Stop! First, let me make something clear; evaluators do not deal with stakeholder groups. Evaluators work with individuals who may represent or be part of different stakeholder groups. I think it is best to work with individuals—specific individuals (Alkin & Vo, 2017, p. 51).

Introduction

Whereas the importance of knowledge has been recognized for centuries (cf., for instance, Francis Bacon’s (1605) famous aphorism “knowledge is power”), its societal role has changed dramatically in recent decades. The preponderant source of wealth is no longer merely industrial and product related, but knowledge related. To be able to compete and succeed in this globalized world, societies are increasingly dependent on the knowledge of stakeholders to drive innovation and entrepreneurship. Accordingly, previous research has discussed how individuals and organizations can participate in evaluation processes (Brandon & Fukunaga, 2014; Cousins, 2003; Cousins & Whitmore, 1998; Greene, 1988; Sturges & Howley, 2017; Taut, 2008; Whitmore, 1998). Cousins and Earl (1992, p. 397) argue that the participation of stakeholders is an essential element in understanding evaluation utilization.

Starting in the 1980s, participatory methods became more popular in evaluations by “taking more inclusive, rights-based approaches to the design, implementation, monitoring and evaluation of community-based development interventions” (Kibukho, 2021, p. 3). In this context, participation became an instrument that promised to transfer power to the less privileged, and provided them with the opportunity to engage in the evaluation process (Cousins & Chouinard, 2012; Hilhorst & Guijt, 2006). While literature on stakeholder participation has a long tradition in research on evaluation, only a few studies have discussed the participation of individual citizens in particular. As they are often the main beneficiary group of social interventions, citizens de facto have a stake in many interventions and their evaluations, even as “ordinary,” “lay,” or “unaffiliated” individuals.

In evaluation scholarship, the term “citizen” is often mentioned in the same breath as “stakeholders,” which makes it difficult to distinguish the two concepts. The often inconsistent use of both terms, and the lack of explicit conceptualizations, contribute to this challenge. With Alkin and Vo (2017, p. 51), we adopt a rather general definition of stakeholders, and define them as all individuals who have an interest in the program that is evaluated. This includes clients of the evaluation, program staff and participants, and other organizations. Hanberger’s (2001) distinction between active and passive stakeholders is most insightful in this regard. While active stakeholders, or key actors, will try to influence a policy or program at different stages, passive stakeholders are affected by the policy or program, but do not themselves actively participate in the process. In his understanding, the evaluator needs to recognize and deliberately include the interests of passive stakeholders; otherwise the effects and value of the policy for inactive or silent stakeholders will be overlooked (Hanberger, 2001, p. 51).

In line with Alkin and Vo’s definition, and consistent with Plottu and Plottu (2009) and Falanga and Ferrão (2021), it can be argued that citizens are a subgroup of stakeholders. Citizens are typically conceived as functional members of a society by virtue of living within it and being affected by it (Kahane et al., 2013, p. 7). In contrast to organized stakeholder groups such as interest organizations, professional groups or public and private organizations that seek to promote the interest of a limited group, citizens are understood here as individual persons without an institutional or public mandate who are not members of an organized group (such as a political party). Whether citizens are active or passive stakeholders can be assumed to depend on the evaluation at stake, on the opportunities provided by the evaluator and, not least, on the evaluation model.

Theoretically, the added value of individual citizen participation for evaluation can be justified by referring to three key democratic values: legitimacy, effective governance, and social justice (Fung, 2015). First, citizen participation holds the promise to enhance legitimacy. Citizens may advance interests that are widely shared by other citizens (Bäckstrand, 2006; Fung, 2015). What is essential about lay or local knowledge is that it is embedded in a specific cultural and often also practical context (Juntti et al., 2009, p. 209). The input of citizens in the evaluation of interventions can therefore point to communal values that are broadly shared among local communities (Schmidt, 2013), and of which experts and evaluators themselves may not always be aware. Related to this, involving citizens can have epistemological advantages: citizens can be more open to new inputs, and are more aware of how social interventions work in particular communities (Fischer, 2002; Fung, 2015). A belief in communal and local knowledge is also one of the reasons explaining the increased investments in citizen science in recent decades (Irwin, 1995). Second, citizen participation can also foster effective interventions, particularly when so-called multisectoral wicked problems are at stake. Citizens, unlike political actors for instance, may be well placed to assess trade-offs between ethical or material values, or may frame a policy problem in a more viable way than experts (Fung, 2015). Citizens can advance new viewpoints or an alternative perspective on trade-offs between different types of values, which can foster the validity of certain policies (Juntti et al., 2009). Finally, citizen participation has the potential to mitigate social injustice (Fung, 2015). Viewed through a social justice lens, citizens can bring certain undemocratic biases to the surface in an evaluation.

Despite this potential, little is known about how much room evaluation theorists have given to citizens in their models. As evaluation theorists differ in their context and evaluation practice, their views on the purpose and the role of citizens also differ fundamentally. Which evaluation theories consider citizen involvement? How and at which stage of the evaluation process do citizens have a role? To date, the evaluation literature does not include such a systematic assessment. As a consequence, it can be difficult for evaluation commissioners and decision makers to know which models are appropriate for citizen involvement, should they be willing to engage in this. This article addresses this gap by presenting a review of citizen involvement in the main evaluation models circulating in the field. We distinguish between effectiveness models, economic models, and actor-oriented models, and study the state of citizen involvement per stage of the evaluation process. As such, we provide a comprehensive and nuanced assessment of the role of citizens. Method-wise, we triangulated an analysis of the original sources in which the models are outlined with an expert survey. Our aim is not to make a normative or judgmental assessment of the evaluation models, but to provide a toolbox which can be of use for evaluation practitioners and scholars looking for opportunities for citizen involvement. The article also fine-tunes available insights on the relationship between evaluation and citizens more generally.

The article is structured as follows: the next section introduces the typology of evaluation models, which we use as a heuristic to analyze the role of citizen involvement. The results of the document analysis and the expert survey are presented in section four. The last section summarizes our findings and discusses the implications for evaluation practice.

Evaluation Models

Evaluation models, sometimes referred to as theories or approaches, typically prescribe specific steps that an evaluator is expected to follow towards a particular goal that has been specified at the beginning of the evaluation (Alkin, 2017, p. 141). Of course, there is no such thing as “the” evaluation model. This is why previous scholars have tried to collect and classify different evaluation models. According to Madaus et al. (2000, p. 19), evaluation models are not directly empirically verifiable for a given theory. They are rather to be understood as an evaluation scholar’s attempt to characterize central concepts and ideal typical procedures which can serve as guidelines in evaluation practice. In general, the aim of a classification or a taxonomy is to better understand the core principles of different evaluation theories. These become especially clear when defining principles and characteristics are contrasted (Contandriopoulos & Brousselle, 2012, p. 67). Just as there are many evaluation models, there are quite a number of evaluation taxonomies, all prioritizing different dimensions. For the purposes of our article, we rely on the taxonomy by Widmer and De Rocchi (2012), which is itself based on the taxonomies of Vedung (1997) and Hansen (2005).

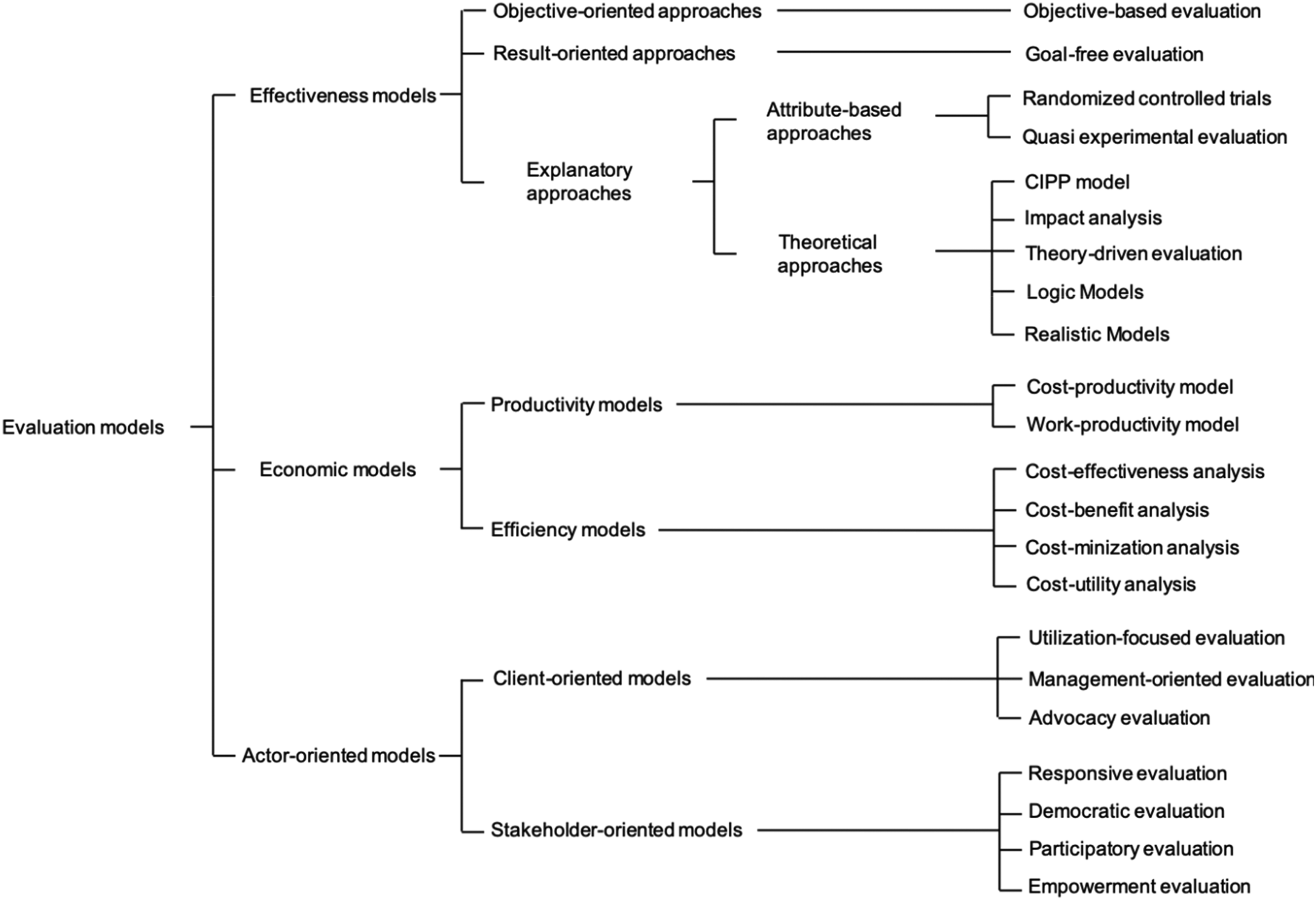

Vedung (1997, 2004) distinguishes between three major models that constitute the backbone for several subsequent taxonomies, including the one that we adopted for our analysis: (1) substance-only models that primarily address the substantive intervention content, outputs and outcomes, (2) economic models that focus on costs, and (3) procedural models that place intermediary values such as legality, representativeness, and participation at the center of the evaluation. Hansen (2005) has further built on this overview, and provides a more systematic and fine-grained taxonomy. In comparison to Vedung, she presents a typology of six evaluation models: results models, process models, system models, economic models, actor models, and program theory models. Widmer and De Rocchi (2012), finally, have attempted to integrate these typologies, and present a taxonomy with three basic types of models (see Figure 1). In doing so, they make a distinction between models that focus on the impact of a program (effectiveness models), the efficiency (economic models) or the interests and needs of the actors involved and affected (actor-oriented models). While effectiveness models focus on the effects of a program and address only the results of alternative interventions, economic models account for the relationship between program effects and costs. Finally, actor-oriented models put the emphasis on the actors involved, and were introduced as a separate category to account for the most recent developments in research about evaluation. Figure 1 presents their detailed taxonomy, with 22 models situated in one of the three overarching types.

Figure 1. Taxonomy of Evaluation Models by Widmer and De Rocchi (2012, p. 52).

Naturally, the position of specific models in the typology is open to debate. For instance, depending on one’s approach to advocacy evaluation (compare Sonnichsen’s seminal notion (1989) with the work of Julia Coffman (2007)), this model can be situated in a different category. Contandriopoulos and Brousselle (2012, p. 68) argue that models are “intellectually slippery beasts,” which makes it challenging to position them in certain categories. Despite these constraints, which are inherent to any typology, the typology by Widmer and De Rocchi (2012) is a useful heuristic tool for the purpose of our study. Our ambition is not to position these evaluation models definitively, but rather to have an aid for classifying different evaluation models and their consideration of citizens’ interests. We rely on this taxonomy for three reasons. First, to the best of our knowledge, Widmer and De Rocchi’s taxonomy attempts to be all-inclusive and does not focus on individual policy domains or disciplines. This makes it suitable for all kinds of social interventions. Second, the taxonomy makes a more fine-grained distinction between different actor-oriented models, which are particularly important for the participation of citizens. Third, this taxonomy puts predominant emphasis on the original models, and not on later variations or interpretations. While we acknowledge that models can be and have been adapted over time, our aim is to analyze the role of citizens as it has been conceived in the “core idea” of the models. This core idea, we believe, is best reflected by the original models, and not by their later developments. Evaluation models are supposed to guide practice. As a consequence, they have naturally been adapted over time by evaluators, to make them fit for a particular local purpose or context. Since such empirical applications are very diverse and sometimes depart considerably from the original “pure” nature of the models, we see most added value in staying close to the original purpose of the models, which best represents the common denominator inspiring the later empirical applications that grew from the same trunk.

The key question is then how “citizen involvement” is understood in each of these models. To be clear, and in contrast to the concept of citizen science (Irwin, 1995), we do not consider citizens as evaluators themselves per se, but rather review which role is given to citizens in evaluations in general. We simply review any role given to citizens to which reference is made in the models. To provide a comprehensive assessment, we do not restrict our analysis to a particular aspect of the evaluation process, and consider all stages of a typical policy evaluation. With Vedung (1997) and Hansen (2005), we break the evaluation process down into five basic stages: (1) delineating the evaluation context, (2) formulating evaluation questions, (3) data collection, (4) assessment and judgment, and (5) utilization of evaluation findings. As such, we provide a complete assessment of the role of citizens. Fischer (2002), for instance, stated that citizen involvement is quintessential during the entire research process, to ensure the validity of the findings produced (see also Juntti et al., 2009). As mentioned before, we do not argue for any particular role of citizen involvement. Again, our ambition is simply to take stock of the (theoretical) role given to citizens.

In what follows, we review how citizen involvement is conceived in the different models, per stage of the evaluation process. We focus on the general trends observed for effectiveness models, economic models and actor-oriented models as the three main types. Within the scope of the article, it is not possible to discuss all fine-grained nuances at the level of each of the 22 specific models, but we will refer to some clear examples per type to substantiate our point where relevant. The detailed findings of the document analysis can be consulted in Table A1 in the Appendix.

In addition to the review of the original texts of the models, we conducted an expert survey. These international experts were either the pioneers in introducing one or several of the 22 evaluation models, or, where the founder of a particular evaluation model was no longer alive or not available, scholars who strongly contributed to its development. Hooghe et al. (2010, p. 692) suggest that expert surveys are appropriate if reliable information is more readily obtained from experts than from documentary sources. The survey, conducted between September and December 2019, listed the same factors that were included in the document analysis (see Table A2 in the Appendix). About 60% of the experts contacted responded (see Table A3 in the Appendix for the overview), resulting in 12 completed surveys. While not all models are covered, the expert input helped us nuance the results of the document analysis and assess whether the criteria of the document analysis allow adequate conclusions.

Let the Models Speak: Findings of the Document Analysis and the Expert Survey

Delineating the Evaluation Context—Are Citizens’ Perspectives Considered?

A quintessential part of an evaluation process is to delineate the context factors that provide the evaluation framework. According to Alkin and Jacobson (1983, p. 18), context refers to the elements or components of the situational backdrop against which the evaluation process unfolds. Examples of context factors are fiscal or other constraints on the evaluation, the social and political climate surrounding the project being evaluated, and the degree of autonomy which the project enjoys. In other words, the context concerns the framework within which the evaluation is conducted (Alkin & Jacobson, 1983, p. 62). Mark and Henry (2004, p. 37) distinguish between the “resource context” that involves the human and other resources allocated to the evaluation and the “decision/policy context” that consists of the cultural, political and informational aspects of the organization involved in the policy implementation. Hence, delineating the evaluation context involves defining the rules of the game that an evaluator has to know prior to formulating the evaluation questions. As can be derived from the overview table, this broader evaluation context, and the role of citizens in particular, is reflected differently in the three main types of evaluation models, and with varying degrees of explicitness.

Starting with the effectiveness models, the picture is mixed. Some so-called theoretical approaches explicitly address the evaluation context, as it is at the center of their raison d’être. Stufflebeam’s (2004) CIPP model, whose acronym begins with “context,” distinguishes four steps: context, input, process, and product evaluation. Context, in this model, refers to the needs and associated problems linked to the evaluand. An evaluation can help identify the goals necessary for improving the situation. Pawson and Tilley’s (1997) realistic evaluation revolves around a related notion of context: in their model, social programs are conceived as influenced by the surrounding context. Some interventions might only work under certain circumstances. In the same vein, Hansen (2005, p. 450) argues that program theory models are used to analyze the causal relations between context, mechanism, and outcome. The same applies to logic models, where citizens’ perspectives can be a highly influential causal factor in realizing a certain outcome. In attribute-based approaches, by contrast, citizens’ perspectives are seldom considered, precisely because of their research focus on the generalizability of results. Randomized controlled trials and quasi-experimental evaluation (Campbell & Stanley, 1966) in particular aim to control the context, and therefore cut out the perspective of citizens, in order to increase internal and external validity. As one expert phrased it: “In the best case, I was part of a [research team] that randomly sampled schools and that over-sampled based upon theoretically driven questions regarding the context. (…) But this may be the exception that proves the rule.”

Citizen perspectives are also only peripheral in economic models such as productivity and efficiency models. These models focus strongly on the relationship between invested resources and output, and as such apply a rather narrow perspective, or none at all, to citizens’ considerations. Whether economic models take citizens’ interests into account is, as experts mentioned to us, entirely in the hands of evaluators. For instance, cost-effectiveness models can provide an opportunity for stakeholders to reach consensus on what the most important objectives are, and how to measure them. The latter can be influenced by citizens’ opinions, but most often it is not, partly because citizens’ responses have to be translated into monetary values. Evaluators can soften these conditions in order to give citizens a say in these evaluation models: “It is my belief that those factors that cannot be easily measured in monetary terms should continue to have a place in the analysis and this is perhaps where citizens can play a significant role.”

Both the document analysis and the experts confirmed that citizens’ perspectives can best be accounted for in actor-oriented models. Models of democratic evaluation (House & Howe, 2000; MacDonald, 1976) and empowerment evaluation (Fetterman, 2001; Fetterman & Wandersman, 2007) are by nature conceived for citizens and stakeholders more generally. Both models aim for an informed citizenry and community, in which the evaluator acts as a broker between different groups in society. For such societal groups, the evaluation can provide an opportunity to evaluate their performance and to accomplish their goals. In some cases, such as empowerment evaluation, citizens can even carry out the evaluation, with the support and facilitation of specialists. Moreover, citizens’ perspectives can serve as an important data source, also in the design, interpretation, and promulgation stages. This is not to say that actor-oriented models always consider citizens’ input, however. As for responsive evaluation models, for instance, it was stated “that there is no expectation of citizens having a decision-making or participative role in any stage.” Hence, although the label “actor-oriented” may suggest otherwise, it would be wrong to conclude that citizens per se have a stake in defining the framework within which the evaluation is conducted.

Evaluation Questions—Are Citizens’ Expectations Considered?

At its core, evaluation addresses questions about programs, processes, or products. Ideally, an evaluation provides information to a wide range of audiences that can be used to make better informed decisions, develop more appreciation and understanding, and gain insights for action. Evaluation questions typically consider the causal relationship between a program and an effect, but can also focus on the description of an object or a normative comparison between the actual and the desired state.

According to Preskill and Jones (2009), one way to ensure the impact of an evaluation is to develop a set of evaluation questions that reflect the expectations, experiences and insights of as many stakeholders as possible. They argue that all stakeholders are potential users of evaluation findings, which is why their input is essential to establish the focus and direction of the evaluation. Naturally, citizens are important stakeholders that evaluators have to account for. When citizens’ expectations are included at an early stage of the evaluation, citizens’ specific information needs are more likely to be addressed.

Again, and as expected, evaluation models vary strongly in the focus of their evaluation questions and in how they include citizens’ perspectives. Based on the review of original sources, effectiveness and economic models do not strongly consider citizens’ expectations. However, one should be careful in concluding that citizens have no role at all in this regard. According to some experts, citizens increasingly have a say in practice in effectiveness models, as evaluators “are driven by concerns with the ‘usefulness’ or ‘relevance’ of findings from RCTs for decision-making.” The same applies to economic models, where there seems to be an increased attempt to add evaluation questions that involve the citizen in the process of placing monetary values on various policy goals. However, this is again not a principle but a choice by the evaluators: “Citizens’ perspectives can be considered (and often are) in articulating the theory of action and planned implementation and also in hypothesizing the resources required to operationalize that theory.” Not all experts share this opinion. For some, the top-down structure of economic evaluation models, which are commonly decided on by a funding body or other decision maker, makes citizen input far less important than other interests.

Notwithstanding these nuances, actor-oriented models are indeed much more oriented towards citizens’ needs. As explained by Hansen (2005, p. 449), stakeholder and client-oriented models particularly focus on the question of whether stakeholders’ or clients’ needs are satisfied. In empowerment evaluations and participatory evaluations, citizens’ expectations are usually the driving forces in developing evaluation questions. In participatory evaluation, the interests and concerns of citizens with the least power are usually given some priority. Importantly, however, some experts argued that even these models cannot always include citizens’ interests: “Seldom in the past has 2% or more of the budget been warranted for inquiry into citizens’ expectations, experiences, and insights.” This observation itself is worth highlighting.

Evaluation Methods—Are Citizens’ Voices Considered?

In general, evaluators use a broad range of methods to address the evaluation questions formulated. According to Widmer and De Rocchi (2012, pp. 98–100), evaluation methods tend to differ from regular research activities in four respects. To start with, evaluations frequently use a combination of methods and procedures. In doing so, one can distinguish between a method mix and triangulation. Whereas the former refers to the use of different methods for answering different questions, the latter concerns the use of several different methods for answering the same question. Second, evaluations often deal with questions that focus on change, that is, whether a program has achieved a specific societal effect. However, these effects can often only be observed after a few months or even years. For this reason, comparisons over time, so-called before-and-after comparisons, are common in evaluation. Third, the generalization of evaluation results is often only of secondary importance, mainly since the commissioner of an evaluation aims to understand the consequences of an intervention in a given context. Fourth and last, target/actual comparisons, which focus on the extent to which a program has achieved its declared objectives, are frequently used in evaluations.

Theoretically speaking, citizens’ voices can be integrated in the implementation of evaluation methods in several ways. Citizens’ input can be directly used as a source of information, in particular in the context of triangulation. Where expert panels are used to assess the effects of an intervention, such results can in principle also be validated by ordinary citizens, given that social interventions often explicitly aim to change citizens’ behavior. In sum, the perceptions of citizens can be a useful tool to observe how and to what degree an intervention has contributed to changes. As one of the experts highlighted: “high quality evaluation design (see U.S. program standards, American Evaluation Association) considers citizen stakeholder perspective in all aspects of the evaluation architecture, including evaluation methods.” When reviewing the different models, however, the strong variability in considering citizens’ voices is again apparent.

For economic models, such as cost-effectiveness analysis, it is not common to see citizens’ voices directly in data collection and data analysis. For cost-benefit analysis in particular, it was mentioned that community activity does not always translate into monetary values. The same largely applies to effectiveness models, where the inclusion of citizens is the exception rather than the rule.

At the other end of the continuum, one can again identify the actor-oriented models, but these also have different traditions regarding the involvement of citizens in data collection. For participatory evaluation, for instance, it will depend on the type of evaluation whether stakeholders will be actively involved, beyond providing input for the design and implementation of the evaluation. As it was mentioned to us: “the more technical these activities, the less likely it is for stakeholders to be active participants in data collection and analysis.” In empowerment evaluation, by contrast, it is part and parcel of the model that citizens conduct the evaluation and determine what is a safe and appropriate method, in conjunction with a trained evaluator. The empowerment evaluator will not only make sure that citizens get the information they need through data collection, but will also help “with rigor and precision by helping them learn about the mechanics, ranging from data cleaning to eliminating compound questions in a survey.” Client-oriented models also generally lend themselves well to the consideration of citizens’ input. Utilization-oriented evaluation, for instance, is by design targeted at “identifying specific intended users for specific intended uses and engaging them interactively, responsively, and dynamically in all decisions, including methods decision.” Whether these intended users are citizens will then again depend on the particular evaluation or intervention at stake. Altogether, actor-oriented models offer the most potential to account for citizen input, but they too provide no guarantee that citizens’ voices will be actively heard.

Assessment—Are Citizens’ Values Considered?

To many, the rationale of evaluations is to assess public policies on the basis of known criteria. According to Scriven (1991b, p. 91), “the term value judgment erroneously came to be thought of as the paradigm of evaluation claims.” This theory of values can already be found in the early work by Rescher (1969, p. 72): “Evaluation consists in bringing together two things: (1) an object to be evaluated and (2) a valuation, providing the framework in terms of which evaluation can be made. The bringing together of these two is mediated by (3) a criterion of evaluation that embodies the standards in terms of which the standing of the object within the valuation framework is determined.”

However, Shadish et al. (1991, p. 95) note that the two authors use different terms (Scriven: standards; Rescher: criterion) to explain the basis of judgements. Stockmann (2004, p. 2) illustrates that the assessment of the evaluated results is not anchored in given standards or parameters, but in evaluation criteria that can be very different. From his stance, evaluations are often meant to assess the benefit of an object, an action or a development process for certain persons or groups. The evaluation criteria can be defined by the commissioner of an evaluation, by the target group, by the stakeholders involved, by the evaluator him/herself or by all these actors together. It is obvious that the evaluation of the benefits by individual persons or groups can be very different, depending on the selection of criteria.

As Shadish et al. (1991) argue, evaluation criteria can be modeled along the values of stakeholders, which can potentially also include citizens. It is up to the evaluator to identify these values, to use them in constructing evaluation criteria and to conduct the evaluation in terms of those. Scriven (1986), however, rejects this model. He particularly considers it the role of evaluators to make appropriate normative, political, and philosophical judgments, as public interest is often too ambiguous to rely on.

As the review shows, economic and efficiency models do not explicitly consider citizens’ values in the development of evaluation criteria, or do so only in an indirect way. As for effectiveness models, of which logic models are a case in point, citizens’ values can be decisive in assessing the practical utility and related return of any policy, but whether they are indeed taken into account will depend on individual evaluators.

Economic models have not explicitly built in citizen values but only indirectly address them. For instance, in cost-utility analysis, “citizens’ values are captured in the utility measure, but this is only in service to the overarching efficiency criterion”; or in cost-effectiveness analysis, citizen values are generally considered in analyzing distributional consequences or “who pays” and “who benefits.”

Conversely, actor-oriented models hold more promise for considering citizens’ input in establishing judgement criteria, as the document analysis and the expert survey revealed. Empowerment evaluation is most outspoken in this respect: community-based initiatives are evaluated against criteria that have been derived bottom-up, hence accounting for community values. As one of the experts explained: citizens “literally rate how well they think they are doing concerning the key activities that the group thinks they need to assess together (…) they also engage in a dialogue about their rating to ensure the assessment is meaningful to them.” Participatory evaluation is another example, where citizens’ input is a key concern in setting the criteria for what constitutes a “good” and “successful” program. As one of the experts stated: “the point of view of program participants can be quite different from the ‘expert’ view.” This resonates with the legitimacy and epistemological motives that we highlighted in the introduction.

Utilization—Are Citizens’ Interpretations of Findings Considered?

As Eberli (2019, p. 25) noted, evaluation utilization can be approached from multiple conceptual angles. While the terms “use” or “utilization” are applied synonymously for the use or application of evaluations (Alkin & Taut, 2002; Henry & Mark, 2003; Johnson et al., 2009), “utility” refers to the extent to which an evaluation is relevant to a corresponding question (Leviton & Hughes, 1981). “Usefulness,” for its part, reflects the subjective evaluation of the quality and utility of an evaluation (Stockbauer, 2000, pp. 16–17), and refers to the use of evaluation results by the evaluated institution with concrete consequences following the evaluation findings. In the evaluation literature, one can identify a large number of attempts to classify the use of systematically generated knowledge. One of the most common distinctions conceives three types of use (Alkin & King, 2016). Instrumental use refers to the direct use of systematically generated knowledge to take action or make decisions. Conceptual use points to the indirect use of systematically generated knowledge that opens up new ways of thinking and understanding, or that generates new attitudes or changes existing ones. In addition, one can distinguish symbolic use, which refers to the use of systematically generated knowledge to support a preconceived position in order to legitimize it, justify it, or convince others of it. Noteworthy is also the concept of process use, which authors such as Patton (2008, p. 90) added to the typology. Process use implies use that occurs due to the evaluation process and not due to its results.

In recent times, scholars have discussed the role of evidence in public discourse and how citizens can be included in this context (Boswell, 2014; Pearce et al., 2014; Wesselink et al., 2014). They argue that the use of citizen information in policy discourse, and an interpretive view of policy making, can lead to more reasoned debates. Schlaufer et al. (2018), for instance, empirically showed that the use of evaluations in direct-democratic debate provides policy-relevant information and increases the interactivity of discourse. They also revealed that evaluations are particularly used by elite actors in their argumentation, leading to a separation of deliberation between experts and the general public. El-Wakil (2017), however, showed that a facultative referendum, one of the instruments that can be used in a direct democracy, gives political actors strong incentives to think in terms of acceptable justifications. Considering these contributions, evaluators might not only include citizens when assessing evaluation results, but also encourage evaluation clients to deliberate on the use of evaluation with interested citizens.

Based on our analysis, it is clear that many models do not structurally involve citizens, generally speaking. We attribute this to the fact that debates about the use of evaluations only emerged after the development of many of the evaluation models. Take effectiveness models, where citizens are “rarely” involved in the interpretation, “except to include quotes or feedback directly on their experience using an intervention.” Also in efficiency models, the consideration of citizens is rather uncommon. The following expert quote for cost-benefit analysis (CBA) is illustrative: “this [the involvement of citizens in the interpretation of findings] is a critical question and sometimes ignored by CBA evaluators” (…) and “CBA often is less transparent on the assumptions that drove the analysis.” For cost-utility analysis, the expert indicated “I have never seen this done, though this is a principle I would support.”

At the other end of the spectrum, we can again situate actor-oriented models. Stakeholder-oriented models, such as empowerment evaluation, can be said to have the most built-in possibilities for citizen involvement. Empowerment evaluations serve in the first place the communities and stakeholders that are affected by a program. As they are the primary audience in evaluations, they can also be easily considered in the utilization stage. As one of the experts mentioned: citizens “self-assess by selecting the key activities they want to evaluate and then rate how well they are doing. Once they discuss what the ratings mean, they are prepared to move to the third step and plan for the future (…). The plans for the future represent their strategic plan.” MacDonald’s democratic evaluation model has likewise been designed particularly for the sake of informing the community. The evaluator “recognizes the value pluralism and seeks to represent a range of interests in its issue formulation. His main activity is the collection of definitions and reaction to the program” (MacDonald, 1976, p. 224). This is not to say that all actor-oriented models have guarantees for citizen involvement, or that all stakeholder-oriented models incorporate citizen input by definition. Especially for responsive evaluation, we were given a less positive image by experts than what we derived from the reading of the original models: “seldom have responsive evaluators or their clients been involved with formal procedures for citizen interpretation of the findings. The most common ending has been that the evaluand administrators or their superiors have moved beyond their concerns when the evaluation was commissioned, and have confidence that they can do what needs to be done.” Client-oriented models also do not necessarily pay much attention to citizens, or apply a relatively elitist perspective to the interpretation of the evaluation findings. Utilization-focused evaluation, for instance, is solely oriented towards the intended users of an evaluation, most often program managers (Patton, 2008). And when citizens are involved in utilization-focused evaluation, this is mainly restricted to the “primary intended users.” In sum, an actor orientation does not necessarily mean that there is room for citizen input, including in the utilization stage of an evaluation cycle.

Conclusion

To what extent do the various evaluation models consider citizens during the evaluation process? Whereas citizen participation in evaluation holds the potential to realize important democratic values, there is not much evidence as to whether and how existing evaluation models account for citizens’ considerations. To our knowledge, this article is the first that relies on a unique combination of document analysis and expert input in order to provide a review of the role of citizens in evaluation. The distinction between different dimensions of the evaluation process furthermore allows for a comprehensive and fine-grained perspective. Not surprisingly, actor-oriented models have the most built-in guarantees for a relatively extensive involvement of the public and communities throughout the entire evaluation process, as these models have been conceived particularly for these purposes. As our analysis revealed, substantial variation also exists across these various models, and across the varied stages of the evaluation process. At the risk of crude generalization, it can be said that citizens’ input is usually relatively well built into actor-oriented models when considering “context,” “evaluation questions,” and “assessment,” but this is much less evident for the stages of “data collection and data analysis” and especially for the “utilization of findings.”

It would be wrong to conclude that citizens’ considerations are completely absent in the other models. Many models make explicit reference to “stakeholders” as a broader denominator, but individual citizens are usually not targeted. True, many models were developed before the heyday of collaborative governance or citizen science, which can explain why citizen involvement is not at the center of many models.

Admittedly, many models have been revised over time. Since we have purposely focused on the original models instead of the newer developments, some models may have been assessed more negatively or positively than their later developments would warrant. For instance, Stufflebeam and Zhang (2017) prominently discuss the involvement of citizens in the updated CIPP evaluation model. In contrast, newer interpretations of democratic evaluation rather suggest focusing on macro-structures such as democracy institutions, democratic transitions, and democratic renewal instead of citizens (Hanberger, 2018). Our deliberate choice to focus on the original models, however, is based on the firm belief that basic principles such as the inclusion of stakeholders can be deduced from the original models. Given the richness of the evaluation field, it would not have been possible to address all the latest developments of the original models within the scope of this manuscript. We acknowledge, though, that this restriction is a limitation of this article.

This being said, the existing literature on evaluation models only seldom distinguishes between stakeholders and citizens. By making this distinction explicit, we have attempted to make an important contribution to evaluation practice. We raise three issues that are important when planning to include citizens in an evaluation. First, at the beginning of the evaluation project, evaluators should clearly define the purpose and the reason of the evaluation. Even though formative evaluations are more likely to target citizens’ input, this does not mean that the population cannot provide valuable feedback for summative evaluations. Our review provides a first orientation on available models that evaluators can choose from if they want to integrate citizens’ interest.

Second, evaluators need to partner with commissioners of evaluations and help them decide how to consider citizens’ interest. The state’s transformation towards modern technologies already provides public administrations with various modalities for the involvement of citizens. However, public servants are often unsure how and to what degree they should engage citizens in the assessment of public policies. Therefore, evaluators need to support clients with the decision on how to consider citizens’ interest. Typical citizen involvement includes community-based practices where citizens not only accompany evaluation processes but also deliberate about the content and findings of the evaluations.

Third, evaluators should reserve time for calibrating and testing the evaluation models when planning the timeline of the evaluation. No matter what type of model is adopted, evaluators should always, in consultation with their clients, pretest the actual experience of real participants. This allows them to improve the involvement of citizens and find the right balance between valuable inclusion and infinite discussion. While this article assumes that citizen involvement in evaluation could be important for democratic values, whether and how the link between the two actually materializes in practice is an empirical issue to study. To be clear, it is not our ambition to uncritically call for increased citizen participation in evaluation at all costs. Evaluation is an inherently contingent undertaking, in which many contextual factors will determine whether it is wise to involve citizens. The nature of the policy problem may be one element to consider in this regard. One may state, for instance, that more technical problems likely lend themselves less to citizen engagement. In the same vein, different civic epistemologies in various countries or policy sectoral settings (Jasanoff, 2011) can also mean that citizen input will be valued differently. In fact, to take it further, the decision to involve citizens in evaluation is not merely a matter of model or design choice, or of having the appropriate resources for it (Fung, 2015). The major decision is of a political nature in the first place, as evaluation commissioners should ultimately be willing to actively engage citizens in one or several stages of the evaluation process. With many evaluations being intrinsically political (Weiss, 1993), the decision whether and how to involve citizens is de facto also a decision about the allocation of power (Juntti et al., 2009).

Acknowledgments

A previous version of this paper was presented at the International Conference on Public Policy 2019 in Montreal, Canada, and at the European Group for Public Administration Annual Conference 2019 in Belfast, Northern Ireland. The authors thank all the participants for their feedback. We are grateful to Sebastian Lemire as well as the three anonymous reviewers for their valuable remarks.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.