Abstract

Collaborative approaches to evaluation (CAE) are evaluations where evaluators engage with program community members to coproduce evaluation knowledge. There has been much written about CAE theory and a plethora of empirical studies have been published. Yet little is known about the extent to which CAE practice corresponds with theory. This study is a systematic review of a set of 46 peer-reviewed studies of CAE practice published over the past 25 years. We draw from Cousins and Whitmore's descriptive theory of participatory evaluation to explore the extent to which and how the case examples reflect practical and transformative streams and how they map onto three fundamental process dimensions: control, diversity, and depth of participation. Results provide support for these descriptive theoretical propositions. We discuss their implications for the value and use of CAE evidence-based principles; ongoing research; CAE in government and international development sectors; and appreciation of reciprocal benefits.

Keywords

Introduction

Fifteen years ago, Daigneault and Jacob (2009, p. 337) claimed that the “… three process dimensions identified by Cousins and Whitmore (1998) can logically be described as necessary constitutive dimensions of [participatory evaluation].” Based on a thorough and penetrating conceptual analysis, the authors concluded that the three dimensions, namely control of evaluation decision-making, diversity in program community member (PCM) selection for participation, and depth of participation, are parsimonious, internally coherent, able to distinguish participatory from more conventional approaches to evaluation, and accommodating of practical and transformative interests. While these process dimensions enjoy strong theoretical support, how do they play out in practice?

Collaborative approaches to evaluation (CAE) have been with us for decades, many having originated in the context of international development, others having provenance in Western developed country contexts (Brisolara, 1998). While many varied genres and approaches fall under the CAE umbrella (Cousins et al., 2020; Shulha et al., 2016), the common denominator, and defining feature, is evaluators working in tandem with PCMs to coproduce evaluative knowledge. The range of approaches emanating from international development work includes the most significant change technique (Davies & Dart, 2005), participatory action research (Fals-Borda et al., 1991), and rapid rural appraisal (Chambers, 1981). In North America, CAE is commonly framed as collaborative evaluation (O'Sullivan, 2004; Rodriguez-Campos, 2005), participatory evaluation (practical and transformative) (Cousins & Whitmore, 1998), and empowerment evaluation (Fetterman, 1994). Some approaches naturally lend themselves to participatory engagement between evaluators and PCMs, although they need not include this collaborative element. Utilization-focused evaluation (Patton, 2008) and contribution analysis (Mayne, 2012) are two examples that come to mind.

Cousins and Whitmore (1998) laid down some enduring theoretical contributions associated with CAE purposes and processes. As Daigneault and Jacob (2009) observed, the chapter has had wide influence within and outside the field, and according to King (2007) it “is indeed one of the most cited chapters ever published in New Directions for Evaluation” (p. 336). Recently, we published a 25-year retrospective on the chapter (Cousins & Whitmore, 2024).

Christie (2011) joined a long list of contributors (e.g., Mark, 2008; Shadish et al., 1991; Smith, 1993) who have underscored the critical importance of empirical research to evaluation theory development and application. She draws attention to the theory-practice gap and to “the growing mistrust by practitioners that academic knowledge can offer anything of relevance to practice situations” (2011, p. 14). As in most domains of inquiry in the social sciences, the correspondence between theory and practice has long interested evaluation scholars. For example, Miller and Campbell (2006) published a systematic review to determine the extent to which empowerment evaluation can be readily distinguished from other collaborative approaches. They found that empowerment evaluation is not entirely distinct from other methods of evaluation and that its practitioners do not approach their work in identifiably similar ways. In an exploratory study of evaluation practice in France, Tourmen (2009) examined the logic underlying evaluators’ choices and the role evaluation theory played in those choices. Similarly, Christie (2003) empirically examined the extent to which evaluators’ practice aligned with eight different evaluation theoretical perspectives. Both Tourmen and Christie found only loose connections between practice and evaluation theory; evaluators are more likely to be guided by their own practical knowledge and wisdom, which develops through experience.

In this systematic review, we explore the extent to which there is correspondence between CAE theory and practice. Through an examination of empirical case examples of CAE purposes, practices, and consequences, we aim to assess the “theory-practice divide” in this domain. Notably, we see empirical research on evaluation (RoE) as a bridge between theory and practice that allows for mutual influence (Cousins & Chouinard, 2012). Others have acknowledged such mutual influence and have underscored the need to tap into evaluation practitioner wisdom to inform evaluation theory (Rog, 2015; Tourmen, 2009).

Theoretical Framework

CAE has great potential to accommodate contextual and cultural complexity and can help to integrate different ways of knowing into the evaluation process. In this section of the paper, we re-present our theoretical framework for participatory evaluation and CAE. First, however, we comment on two noteworthy developments in the field.

As mentioned, there exist myriad forms and approaches of CAE. In our view, the decision about whether to implement CAE depends on an a priori analysis of the program or intervention in context. Decisions about purposes to be achieved and appropriate processes to be implemented naturally follow. In this sense, concerns about appropriately naming the collaborative process and ensuring that practice conforms to a theoretical ideal are not priorities (Cousins et al., 2013, 2020; Shulha et al., 2016).

Given the wide array of available collaborative options, we undertook to develop and validate a set of evidence-based principles as an umbrella framework to guide CAE practice (Cousins, 2020; Cousins et al., 2013; Shulha et al., 2016). The resulting eight principles are presented in a loose temporal order ranging from “clarify motivation for collaboration” to “promote appropriate participatory practices” to “follow through to realize use.” The principles should be used as a set and applied in an iterative, contextually relevant fashion. They are not intended as a model or stepwise protocol to be followed. In that sense, they align well with the descriptive theory of participatory evaluation advanced by Cousins and Whitmore (1998). By descriptive theory, we mean an understanding of participatory and CAE as practiced in context, not how they should be practiced. On Horvath's (2016) ladder of inquiry, we would locate the stage of theory development between “discovery” and “explanation.” We now revisit the principal tenets of this descriptive theory.

Two Streams of Practice

In the original chapter, we considered the goals and interests of participatory evaluation and identified three main justifications for collaborative inquiry: pragmatic (program problem-solving; use of evaluation findings); political (ideological, amelioration of social inequity, marginalized group self-determination); and philosophical (epistemological, deep understanding of complex phenomena). Years later, Chouinard and Cousins (2021) added a fourth justification for the collaborative evaluation process: “ethics” (moral obligation, ethic of engagement).

Based on the original three justifications and our analysis of participatory evaluation in international development and Western developed contexts, we concluded that there exist two streams of participatory practice (Cousins & Whitmore, 1998). The first, which we labeled practical participatory evaluation (P-PE), draws predominantly from the pragmatic justification, although the other justifications factor in as well. The second, transformative participatory evaluation (T-PE), is driven predominantly by political and ideological considerations associated with the amelioration of social inequity. However, given our field's contemporary emphasis on transformational change and the transformational agenda (e.g., Chaplowe & Hejnowicz, 2021; Patton, 2019), we now argue that individual, team, organizational, and community capacity building are part and parcel of the T-PE stream.

We assert that these streams are not limited to participatory evaluation; they would apply to a wider range of approaches under the CAE umbrella (e.g., collaborative evaluation, empowerment evaluation, rapid rural appraisal). Further, we posit that the streams are not mutually exclusive; rather, they may be thought of as locations on a spectrum, perhaps a semantic differential scale. This is to say that P-PE may also generate transformative benefits just as practical gains may emerge from T-PE, even if secondarily.

Three Dimensions of Collaborative Process

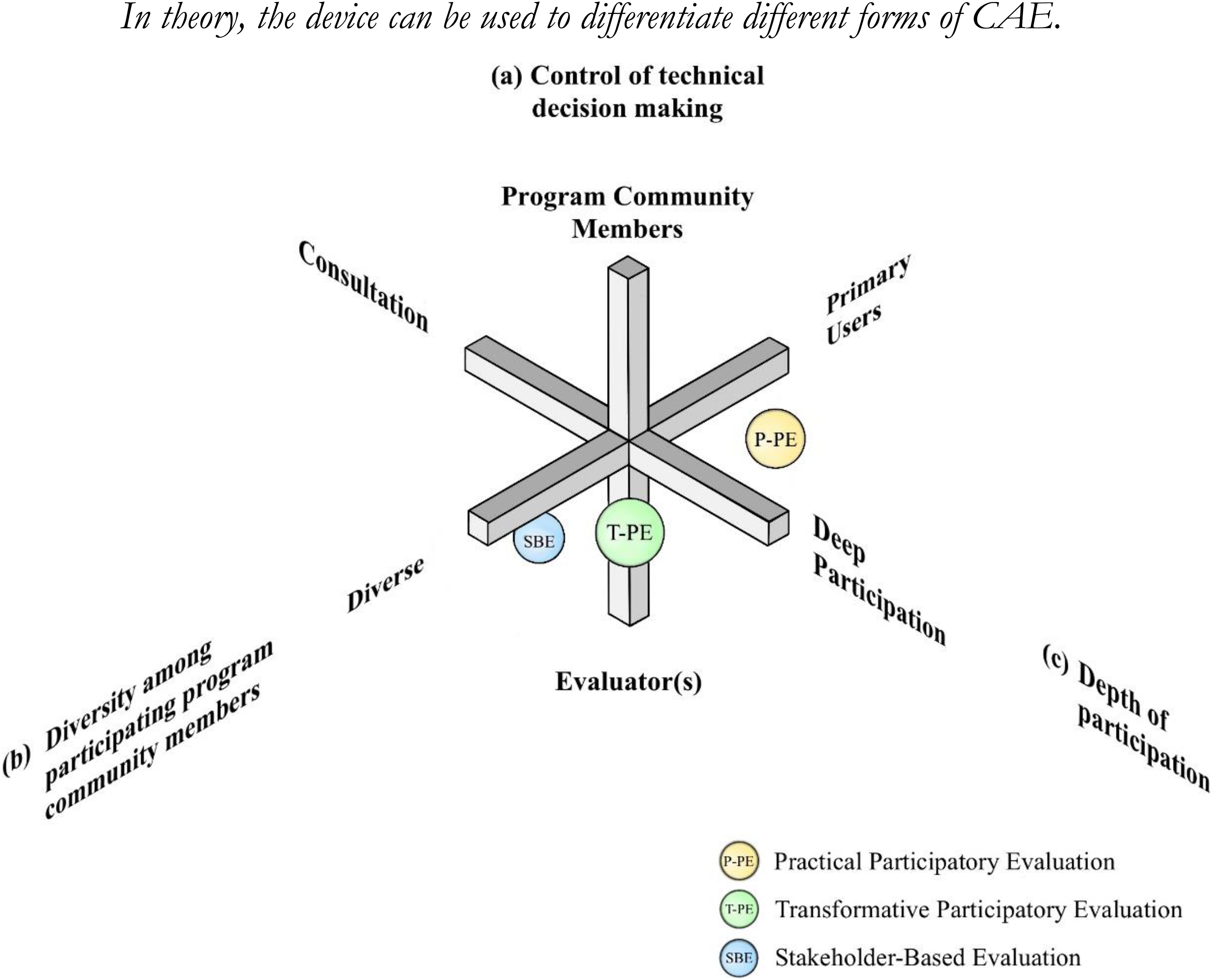

As referenced above, Cousins and Whitmore (1998) highlighted three fundamental process dimensions of collaborative inquiry; each can be conceptualized as a semantic differential scale with polar opposites. We argue that the dimensions are orthogonal and can be represented in three-dimensional space, as shown in Figure 1.

Figure 1. Three Dimensions of Collaborative Inquiry (Licensed Under CC BY-NC-ND 4.0).

The first dimension is control of evaluation decision-making. At one pole, the evaluator or evaluation team is in the lead; at the other, the evaluator is a facilitator, with PCMs driving and controlling the evaluation process. A more balanced form of control, with decisions made jointly by evaluators and PCM participants, would lie near the midpoint.

The second dimension is diversity of participating PCMs. Realistically, PCM groups could be quite heterogeneous and include program funders, developers, managers, and implementers, as well as intended beneficiaries and special interest groups. At one pole, we identify primary users, actors with a vested interest in the intervention and/or its evaluation who could be expected to leverage change on the basis of evaluation results (Alkin, 1991). At the other pole, a wider, maximally diverse group of PCMs would be implicated, including intended beneficiaries and special interest groups. Other configurations of PCM diversity might be located between the poles, some close to the midpoint.

The third dimension, which we labeled depth of participation, concerns the extent to which PCMs participate in the full methodological continuum of evaluation activities. This spectrum may range from PCM consultation (shallow) at one pole to serious engagement with the full range of evaluation methodological tasks (deep) at the other, with intermediate engagement somewhere in the middle. Note that the term “depth” refers to coverage of the methodological spectrum of evaluation activities as opposed to intensity of participation in any given activity (e.g., data collection).

Having affirmed the three dimensions as fundamentally constitutive of participatory evaluation, Daigneault and Jacob (2009) took a further step. They developed a three-level measure of participation, with higher scores corresponding to PCM control, wide diversity, and deep participation, thereby rendering their theoretical stance normative. On this point, we depart from our colleagues: we see the three-dimensional device appearing in Figure 1 as a descriptive tool, a way of profiling what participation looks like in any given CAE and at any given point or stage in the project. As an example, based on our own reading and experience, we differentiated P-PE and T-PE in three-dimensional space (see Figure 1). While they might be similar in terms of control (balanced) and depth of participation (deep), they are likely to differ in terms of diversity. Participation in P-PE is likely to be limited to PCMs who are primary users, whereas T-PE might engage a much broader range of PCMs, potentially including intended program beneficiaries and special interest groups. By contrast, we contend that stakeholder-based evaluation (SBE; Bryk, 1983) would be geographically separated in Figure 1 from practical and transformative processes by virtue of its approach to control (evaluator-led), diversity (intermediate), and depth of participation (consultative).
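To make the descriptive use of the three-dimensional device concrete, a CAE profile can be treated as a point (control, diversity, depth) on 1–5 scales oriented as in the note to Table 1 (1 = evaluator control, primary users, consultative; 5 = PCM control, diverse PCMs, deep participation). The sketch below is illustrative only: the numeric placements of P-PE, T-PE, and SBE are hypothetical translations of the qualitative descriptions above, and Euclidean distance is our own choice for quantifying “geographical” separation, not part of Cousins and Whitmore's (1998) account.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    """A CAE process profile: one point in the three-dimensional space of Figure 1."""
    control: float    # 1 = evaluator control, 5 = PCM control
    diversity: float  # 1 = primary users only, 5 = maximally diverse PCMs
    depth: float      # 1 = consultative, 5 = deep participation

    def __post_init__(self) -> None:
        # Each dimension is a semantic differential scale running from 1 to 5.
        for name in ("control", "diversity", "depth"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must lie on the 1-5 scale, got {value}")

    def as_point(self) -> tuple[float, float, float]:
        return (self.control, self.diversity, self.depth)


def distance(a: Profile, b: Profile) -> float:
    """Euclidean distance, treating the dimensions as orthogonal and equally weighted."""
    return math.dist(a.as_point(), b.as_point())


# Hypothetical placements translating the qualitative descriptions into numbers.
P_PE = Profile(control=3, diversity=1, depth=5)  # balanced, primary users, deep
T_PE = Profile(control=3, diversity=5, depth=5)  # balanced, diverse PCMs, deep
SBE = Profile(control=1, diversity=3, depth=1)   # evaluator-led, intermediate, consultative

print(f"P-PE vs T-PE: {distance(P_PE, T_PE):.2f}")  # 4.00
print(f"P-PE vs SBE:  {distance(P_PE, SBE):.2f}")   # 4.90
print(f"T-PE vs SBE:  {distance(T_PE, SBE):.2f}")   # 4.90
```

Under these assumed placements, the two streams differ on a single axis (diversity), whereas SBE departs from both on all three; a single distance figure understates that contrast, which is one reason to read profiles dimension by dimension.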

As stipulated above, we contend that differentiation by practical and transformative streams is enduring at an all-encompassing level of consideration and inclusive of a wide range of collaborative approaches. Hence, we now label the streams P-CAE and T-CAE, referencing practical and transformative primary intentions, respectively.

In the interest of our overarching goal of exploring the CAE theory-practice gap, we posed the following research questions:

1. To what extent can the intended purposes of the case applications be categorized as either practical (P-CAE) or transformative (T-CAE)?
2. How are case applications profiled on each of the three dimensions of collaborative process (control, diversity, depth)? To what extent is there geographical dispersion in Figure 1?
3. What are the observed outcomes of the CAE applications? Do they align with intended CAE purposes?
4. Can CAE practical and transformative purposes be differentiated by dimensions of collaborative process? If so, how?

The questions guiding this research imply that it aligns with the “classification” mode of inquiry from Mark's (2008) RoE taxonomy. With a focus on CAE purposes and processes, the study seeks to identify different types of CAE practice, the contexts in which they take place, and the outcomes to which they contribute. Answers to these questions could potentially provide empirical support for Cousins and Whitmore's (1998) descriptive theory and thereby provide a basis for ongoing research, theory development, and practical guidance.

Methods

Sample

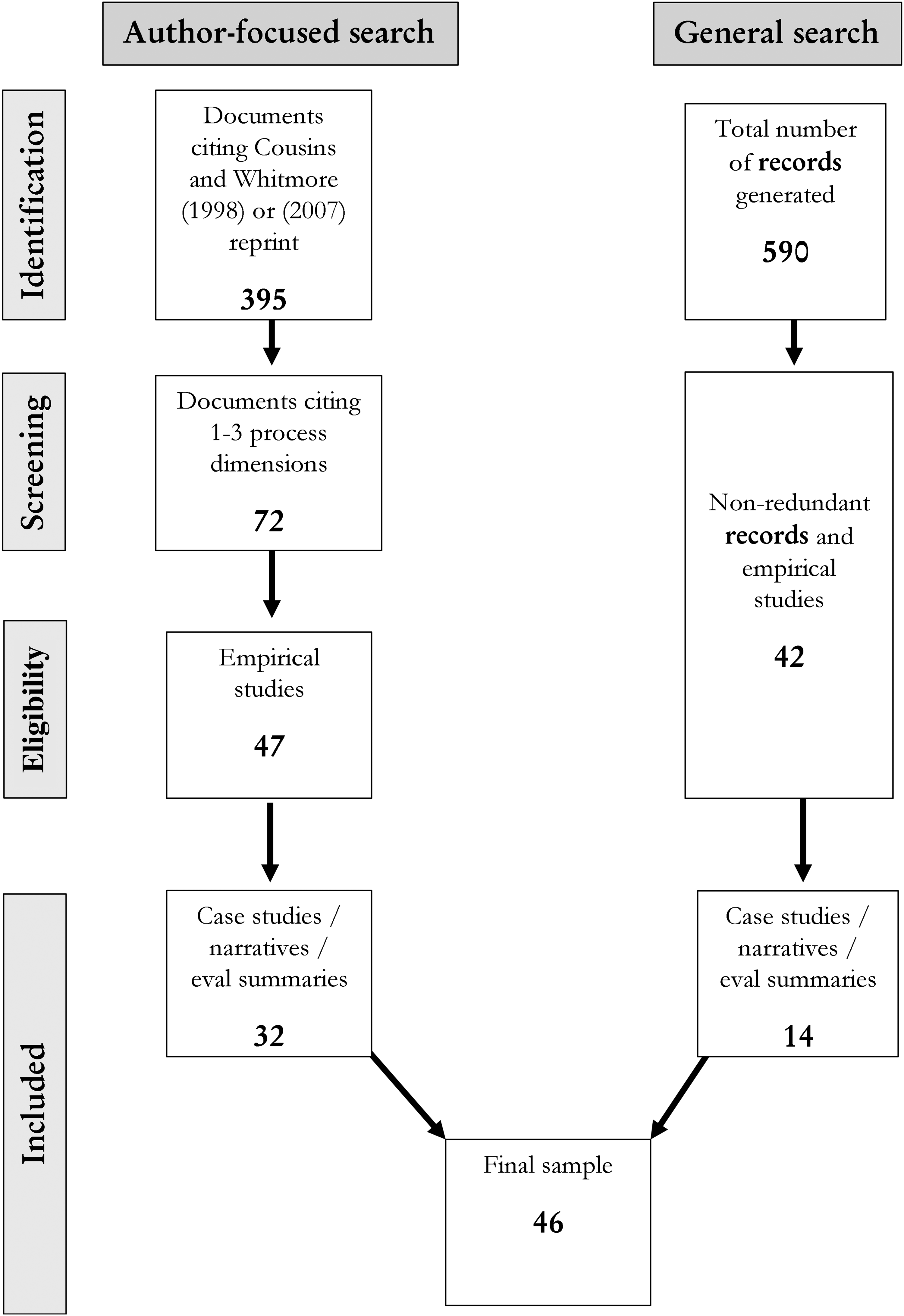

We searched for peer-reviewed case studies, reflective case narratives (RoE), and summaries of CAE projects appearing over the last 25 years. In addition to peer-reviewed journal articles, we also considered unpublished dissertations reviewed by thesis committees. As shown in Figure 2, we used two main search strategies: an author-focused search and a general search.

Figure 2. PRISMA-Style Flowchart Describing the Sampling Process.

Author-Focused Search

To be considered, studies had to cite either Cousins and Whitmore (1998) or the reprint of the original chapter in a commemorative issue of New Directions for Evaluation published in 2007. Given our interest in mapping CAE processes, we used both Google Scholar and the Web of Science Citation Index to identify studies appearing in the year 2000 and beyond. Self-citing articles were eliminated, meaning that studies published by the authors or members of the authors’ research group were excluded. In addition to these search platforms, the first author's account on ResearchGate (a social media platform for scholars) turned up further articles that had cited the chapter.

All in all, these sources identified 395 articles for analysis. A total of 373 of the studies were published in English; the remainder were published in French (10), Spanish (5), German (3), Portuguese (2), Slovakian (1), and Arabic (1). Our next step was to scan the articles to determine the nature of the citation. Seven studies were immediately eliminated because the original chapter was included in the reference list but was not cited in the article. Of the remaining 388 articles, we identified 72 that specifically mentioned one or more of the three collaborative process dimensions described above (i.e., control, diversity, and depth of participation). From that list, we culled a sample of 47 articles coded as empirical. Finally, we eliminated 15 articles that were not case examples of CAE application, for a final total of 32.

General Search

Following the author-focused search, we conducted a more general search of relevant databases for empirical articles on CAE. We used the keywords “participatory evaluation,” “collaborative evaluation,” “peer-reviewed,” and “empirical,” and searched a select list of databases (Google Scholar, PubMed, PsycINFO, and ERIC) from 2000 to the present. This process generated a total of 590 records. To avoid duplication, we compared qualifying studies (1) across databases and (2) against the master list of those already obtained from the author-focused search. This process narrowed the search to 42 potentially eligible articles, of which 14 could be classified as a case study, reflective case narrative, or evaluation summary. Of those eliminated, many were empirical studies of cross-cutting evaluation issues such as PCM power dynamics, valuing, and evaluation use; such studies provided scant details about the application of CAE. Thus, a final total of 14 studies resulted from the general search strategy.

Sample Description

These two search strategies identified a total of 46 items (42 peer-reviewed journal articles and 4 doctoral theses), which constitute our core sample. While the sample is not exhaustive, we are confident that it is reasonably comprehensive and reflective of CAE practice. A detailed table of descriptive characteristics of the core sample appears in Supplemental File 1 and the corresponding list of references in Supplemental File 2.
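The screening arithmetic reported for the two search strategies can be checked with a small PRISMA-style tally. This is a sketch only: the helper name `run_funnel` is hypothetical, the stage labels paraphrase the text, and the code does nothing more than confirm that the reported counts are internally consistent (395 records by language; 7, 316, 25, and 15 exclusions in the author-focused strand; 32 + 14 = 46 in the core sample).

```python
def run_funnel(name: str, start: int, stages: list[tuple[str, int]]) -> int:
    """Print each screening stage, check that counts never increase, return the final count."""
    print(f"{name}: {start} records identified")
    previous = start
    for label, count in stages:
        if count > previous:
            raise ValueError(f"{name}: '{label}' ({count}) exceeds previous stage ({previous})")
        print(f"  {label}: {count} (excluded {previous - count})")
        previous = count
    return previous


# Author-focused search: records by language of publication, as reported in the text.
by_language = {"English": 373, "French": 10, "Spanish": 5, "German": 3,
               "Portuguese": 2, "Slovakian": 1, "Arabic": 1}
author_final = run_funnel("Author-focused", sum(by_language.values()), [
    ("chapter cited in the body text", 388),
    ("mentions a process dimension", 72),
    ("coded as empirical", 47),
    ("case example of CAE application", 32),
])

# General search: de-duplicated across databases and against the author-focused list.
general_final = run_funnel("General", 590, [
    ("potentially eligible after de-duplication", 42),
    ("case study, narrative, or summary", 14),
])

core_sample = author_final + general_final
by_type = {"journal articles": 42, "doctoral theses": 4}
print(f"Core sample: {core_sample}")
assert core_sample == sum(by_type.values()) == 46
```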

Studies have appeared steadily since the turn of the millennium. We categorized the sample into 5-year intervals and observed that the largest share of studies appeared in 2010–2014 (33%), followed by the most recent interval, 2020–2024 (27%). Only 5 studies (11%) appeared in the 2015–2019 interval.
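The binning into 5-year intervals is simple enough to state as code. In this sketch the intervals are assumed to be anchored at 2000 (consistent with the 2010–2014, 2015–2019, and 2020–2024 intervals named above), and shares are percentages of the 46-study core sample rounded to whole numbers; the function names are hypothetical.

```python
def five_year_interval(year: int, origin: int = 2000) -> tuple[int, int]:
    """Return (start, end) of the 5-year interval containing `year`, counting from `origin`."""
    if year < origin:
        raise ValueError(f"{year} precedes the sampling window starting in {origin}")
    start = origin + 5 * ((year - origin) // 5)
    return start, start + 4


def share_of_sample(count: int, total: int = 46) -> int:
    """Percentage of the core sample, rounded to a whole number."""
    return round(100 * count / total)


print(five_year_interval(2012))  # (2010, 2014)
print(five_year_interval(2019))  # (2015, 2019)
print(share_of_sample(5))        # 11
```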

By far, most case examples took place in North America: USA (54%) and Canada (20%). Others were in Europe (15%; Spain 2, Netherlands 2, UK 2, Austria 1). Two case reports came from Australia, and one each from Iran and Afghanistan. One reflective case narrative (Apgar et al., 2024) was international, describing three applications (Mali 1, Colombia 2).
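The USA and Canada are reported only as percentages. Assuming those percentages were rounded half-up from whole counts out of 46, a short sketch can back out the counts they imply and confirm that, together with the counts stated for Europe, Australia, Iran, Afghanistan, and the international narrative, they sum to the core sample. The inferred counts are our deduction, not figures reported in the text, and `counts_matching` is a hypothetical helper.

```python
def counts_matching(pct: int, total: int = 46) -> list[int]:
    """Whole-number counts n whose share 100 * n / total rounds half-up to `pct`.

    pct - 0.5 <= 100 * n / total < pct + 0.5 is rewritten in integers as
    (2 * pct - 1) * total <= 200 * n < (2 * pct + 1) * total.
    """
    low, high = (2 * pct - 1) * total, (2 * pct + 1) * total
    return [n for n in range(total + 1) if low <= 200 * n < high]


reported_pct = {"USA": 54, "Canada": 20, "Europe": 15}
inferred = {region: counts_matching(pct) for region, pct in reported_pct.items()}
print(inferred)  # {'USA': [25], 'Canada': [9], 'Europe': [7]}

# Counts stated directly in the text.
europe_by_country = {"Spain": 2, "Netherlands": 2, "UK": 2, "Austria": 1}
other = {"Australia": 2, "Iran": 1, "Afghanistan": 1, "International": 1}

# The Europe percentage should agree with the country-level counts.
assert inferred["Europe"] == [sum(europe_by_country.values())]
total = inferred["USA"][0] + inferred["Canada"][0] + inferred["Europe"][0] + sum(other.values())
print(total)  # 46
```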

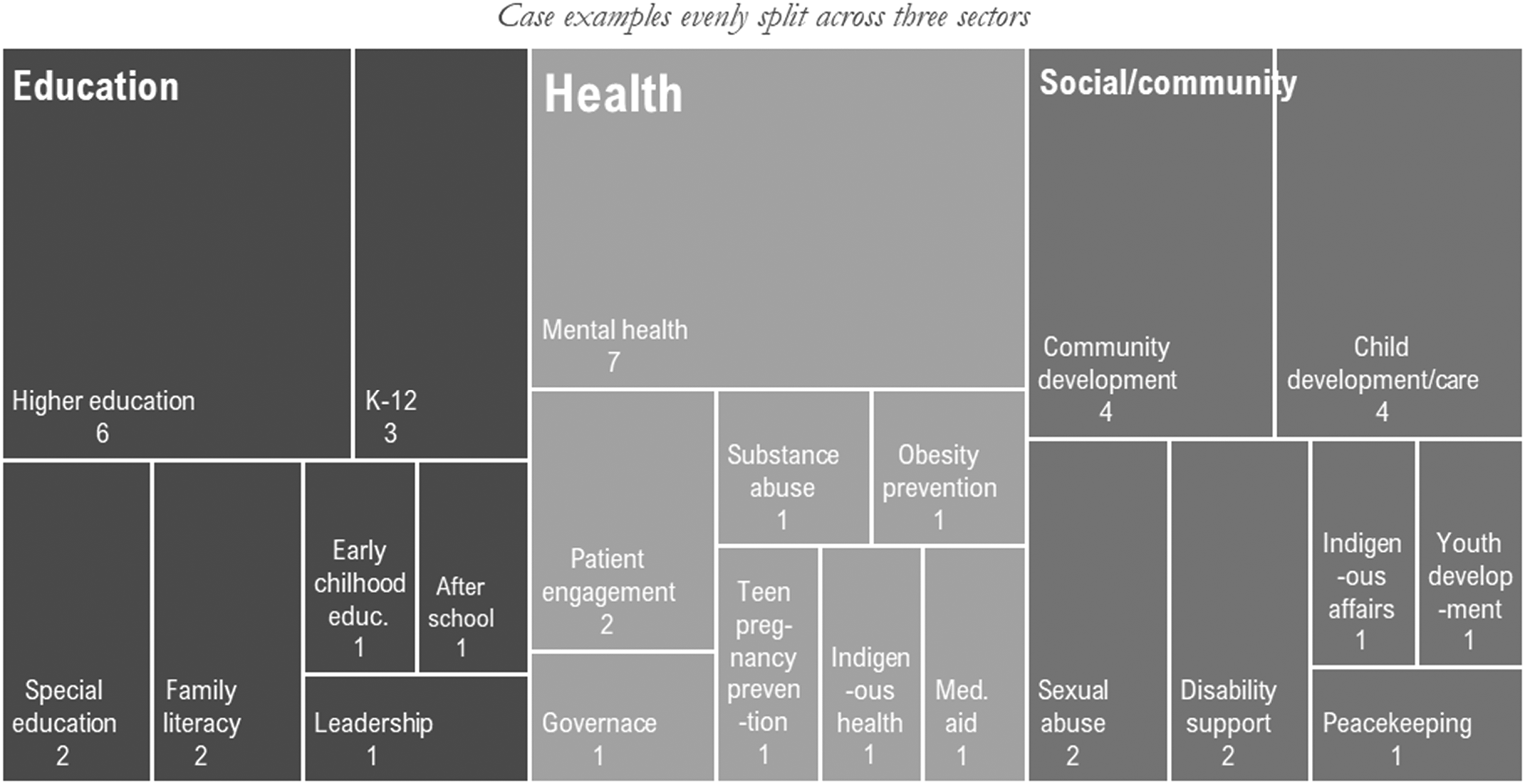

Figure 3 is a treemap, a space-filling display of tree-structured data that is preferred when there is an abundance of child nodes for consideration (Long et al., 2017). In the figure, child nodes (subdomains) are nested within parent nodes (sectors: education, health, and social/community) and weighted by area size. As evident in the figure, studies were quite evenly split across the three sectors. Within each sector, there was a range of subdomains, the most prominent being higher education (education); mental health (health); and child development and care, and community development (social/community).

Figure 3. Tree-Map of Published Article Themes within Sectors.

The case examples were a mix of reflective case narratives (48%), evaluation summaries (30%), and case studies (22%). Studies focused on a wide range of objectives, sometimes directly on CAE processes and effects but most often using CAE case examples to examine cross-cutting issues (e.g., evaluator roles, cultural relevance, process use, health system experiences). Most studies elaborated on how they defined CAE; in some cases, we made inferences based on the information provided. Typical categories were practical participatory evaluation, empowerment evaluation, collaborative evaluation, community-based participatory research, inclusive participatory evaluation, developmental evaluation, and the most significant change technique (see Supplemental File 1 for more detail).

Instrument and Data Processing

We developed a data summary template to summarize the relevant data from each study (see Supplemental File 3). Both authors engaged in the data summary process. The template provided several prompts associated with the main information of interest and included rating scales for CAE purpose and for the three dimensions of collaborative process (control, diversity, depth of participation). The rating scales are semantic differentials whose end points are understood to be polar opposites, in accordance with the theoretical specifications of Cousins and Whitmore (1998). Ratings were based on our analysis of each case relative to the scale end points.

To ensure analytic consistency, early in the coding process the two data analysts (the authors) independently coded three randomly selected studies for comparison. Both analysts agreed that one of the studies did not qualify for the core sample because it was not a case study, reflective case narrative, or evaluation summary. For the other two studies, we found a high level of agreement between our independent ratings of collaborative study purposes and of the processes employed. We discussed the justifications for the ratings and in a few instances agreed on a consensus rating (sometimes a midpoint). After coding a further portion of the sample, we repeated the process and again found an acceptable level of agreement on our coding of CAE purposes and processes. We were satisfied that intercoder agreement was sufficiently high for our purposes and confidently completed the independent coding of the 46 articles.
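The section does not name the agreement statistic used, so the following is only one simple possibility: the proportion of paired ratings that match exactly, or that fall within half a scale point of each other. The ratings below are hypothetical (four ratings, for purpose, control, diversity, and depth, for each of two studies), and a chance-corrected index such as Cohen's kappa would be a reasonable alternative.

```python
def agreement(coder_a: list[float], coder_b: list[float], tolerance: float = 0.0) -> float:
    """Proportion of paired ratings that differ by no more than `tolerance`."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("need two equal-length, non-empty rating lists")
    hits = sum(abs(a - b) <= tolerance for a, b in zip(coder_a, coder_b))
    return hits / len(coder_a)


# Hypothetical ratings for illustration only: purpose, control, diversity, depth for two studies.
analyst_1 = [1.0, 2.0, 3.0, 4.0, 1.5, 2.0, 3.5, 4.0]
analyst_2 = [1.0, 2.5, 3.0, 4.0, 1.5, 2.0, 3.0, 4.5]
print(agreement(analyst_1, analyst_2))       # 0.625 (exact agreement)
print(agreement(analyst_1, analyst_2, 0.5))  # 1.0 (within half a scale point)
```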

Analysis

For the analysis, we relied primarily on Dedoose®, supported by SPSS. Dedoose is a mixed-methods data analysis software package (Salmona et al., 2020) that, although quite powerful, has seen only limited use in evaluation and RoE (Cousins et al., 2024). The empirical case applications were coded according to the categories established in the data summary template (Supplemental File 3). Several of the codes were descriptive, but the codes involving rating scales were weighted. That is, each excerpt of text in the data summary was assigned the relevant code, and the number corresponding to the rating was attached as the weight.
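The weighted-coding scheme can be pictured as a simple data structure: each excerpt carries a code and, for rating-scale codes only, a numeric weight. The sketch below is tool-agnostic and does not reproduce Dedoose's actual export format; the class, function, study, and code names are hypothetical. It pivots weighted excerpts into one row per study, the kind of table that could then be handed to a statistics package.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CodedExcerpt:
    """One excerpt from a data summary, tagged with a code and an optional weight."""
    study: str
    code: str
    weight: Optional[float] = None  # rating for weighted codes; None for descriptive codes


def ratings_table(excerpts: list[CodedExcerpt]) -> dict[str, dict[str, float]]:
    """Pivot weighted excerpts into one row per study and one column per rated code."""
    table: dict[str, dict[str, float]] = defaultdict(dict)
    for excerpt in excerpts:
        if excerpt.weight is None:
            continue  # descriptive codes carry no rating
        row = table[excerpt.study]
        if excerpt.code in row:
            raise ValueError(f"{excerpt.study} has two ratings for {excerpt.code}")
        row[excerpt.code] = excerpt.weight
    return dict(table)


excerpts = [
    CodedExcerpt("Study A", "Control", 2.0),
    CodedExcerpt("Study A", "Depth", 4.0),
    CodedExcerpt("Study A", "Program context"),  # descriptive code, no weight
    CodedExcerpt("Study B", "Control", 1.0),
]
print(ratings_table(excerpts))
# {'Study A': {'Control': 2.0, 'Depth': 4.0}, 'Study B': {'Control': 1.0}}
```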

In addition to coding the textual excerpts as described, we also created “descriptors” in Dedoose using categorized variables. The first descriptor was for study demographics and included categorized versions of year of publication, country/region of publication, and the sector in which the program/intervention was located.

Finally, we created a descriptor in Dedoose corresponding to the rating for collaborative study purpose. For the purposes of this descriptor, we collapsed ratings of 1–2 into “practical,” 2.5–3.5 into “mixed,” and 4–5 into “transformative.” This categorized descriptor enabled mixed-method analyses specific to our research questions. Note that the quantitative ratings were imported into SPSS for further analysis. Consistent with Cousins and Whitmore's (1998) proposition, rating scales were given equal weight in the analysis.
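The collapsing rule for the CAE purpose descriptor can be written as a small function. We assume ratings take whole or half-point values (the text mentions ratings such as 1.5 and consensus midpoints), so no rating falls between 3.5 and 4; the half-point check and the function name are our assumptions, while the three cut-offs come from the text.

```python
def purpose_category(rating: float) -> str:
    """Collapse a 1-5 CAE purpose rating into the categorized descriptor."""
    # Assumes whole or half-point ratings, so no value falls between 3.5 and 4.
    if not 1 <= rating <= 5 or not float(rating * 2).is_integer():
        raise ValueError(f"expected a half-point rating from 1 to 5, got {rating}")
    if rating <= 2:
        return "practical"
    if rating <= 3.5:
        return "mixed"
    return "transformative"


for rating in (1, 1.5, 2, 2.5, 3, 3.5, 4, 4.5, 5):
    print(rating, purpose_category(rating))
```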

Findings

We present findings in the sequence of the research questions posed above.

Two Streams of CAE

Our first task was to determine the extent to which the case applications could be categorized as practical or transformative. According to our ratings, well over half of the studies fell into the P-CAE category (59%), about a quarter were T-CAE (26%), and the remainder were mixed (15%).

The practical case examples typically focused on the identification of program strengths and weaknesses, intended program improvements, and enhanced evaluation use (e.g., Connors & Magilvy, 2011; Edalati et al., 2023; Eversole, 2018; Landerholm et al., 2000; Magilvy, 2011). Fletcher-Hildebrand et al. (2023), in a study of an animal-assisted intervention for mental health, provide a good illustration: “The process evaluation focused on the assessment of program functioning, including program inputs, activities, and target audience. The evaluation was formative, with the objective of program improvement” (p. 5). Similarly, Dryden et al. (2010) wrote of their youth trauma intervention, which was intended to reduce the progression of substance use and prevent the transmission of infectious diseases: “From the outset, the Phoenix Rising director and evaluation team decided that the primary purpose of the local evaluation should be utilization-focused, with the intent to gather information that can be used on an ongoing basis for program assessment and improvement.” (p. 387). In a study of kinship caregivers, Moldow et al. (2023) asked questions such as: How are caregivers adjusting? What supports and resources are most needed? What are the program's strengths and areas for improvement?

Such studies were rated as 1 (P-CAE) on the 5-point scale, but other studies made specific reference to evaluation capacity building (ECB), usually organizational, as part of the intended purposes of the study (e.g., Clancy, 2011; King & Elhart, 2008; Owens, 2022; Themessl-Huber & Grutsch, 2003). In a multisite study on sexual assault interventions, Campbell et al. (2014) were interested in developing the evaluation capacity of program staff, but they identified the main purpose of the evaluation as enhancing understanding of the intervention's efficacy in generating intended outcomes. We typically rated such P-CAE studies as 1.5 or 2.

At the other end of the spectrum, about a quarter of the studies were coded as T-CAE. Studies rated as 4 or 5 on the CAE purpose scale placed a premium on empowerment and transformation. For example, Ucar et al. (2016) conceived of participatory evaluation as a community development strategy. Their work in lower-income communities near Barcelona was driven by empowerment and transformative evaluation approaches and sought to foster the “empowerment of people and groups participating in the evaluation.” (p. 297). Sullins’ (2003) dissertation focused on evaluating a drop-in center for people with emotional difficulties and homelessness issues; it was strongly empowerment oriented, involving both staff and consumers. In her words:

…their drop-in center could benefit from an evaluation, especially an evaluation that actively involved all the staff and consumers and could be continued after my work with them was complete. (p. 389).

As was the case with P-CAE, some of the studies rated at the transformative end of the spectrum also included some practical elements, although the main drivers were evidently transformative. The following excerpts help to illustrate:

The collaborative evaluation project was intended to act as a tool to evaluate the module and as a process where tutor and participants enhanced their learning about research and evaluation methodologies through active participation in evaluating the module. (Bovill, 2010, p. 144, higher education)

A primary purpose of the program accountability system was to build capacity for accountability among the county partnerships so that they could perform as learning organizations. (Flaspohler et al., 2003, p. 46, early childhood education)

Collaborative efforts at all levels contributed to providing a comprehensive self-assessment of the curriculum and placed stakeholders back in the driver's seat—providing them with forums and opportunities to become leading voices in setting priorities. (Fetterman et al., 2010, p. 187, higher education)

In the study by Vat et al. (2020) on patient engagement in Newfoundland and Labrador, Canada, we observed that although the project informed community development in practical ways, there was a definite change element defined in terms of community transformation in ways that respect the interests and values of community members. This is certainly also the case with the Clarke et al. (2022) study, which adopted a culturally responsive approach to evaluating service provision for elderly Indigenous persons in the USA. The team adhered closely to culturally responsive evaluation principles: (1) engagement with PCMs and a participatory ethic, (2) attention to cultural values and Indigenous ways of knowing, (3) recognition of and respect for tribal sovereignty, and (4) training to build local evaluation capacity.

We rated relatively few studies as mixed, that is, as blending practical and transformative intentions such that we were unable to locate them at either end of the CAE purpose spectrum (e.g., Baur et al., 2010; Bhattacharyya et al., 2017). For example, O'Sullivan and D’Agostino (2002) managed the collaborative evaluation of a county-wide early childhood initiative in North Carolina, USA. Although the evaluation had an improvement-oriented agenda, the authors used cluster networking on the assumption that programs with similar goals can strengthen their evaluation strategies and build evaluation expertise from within.

In summary, despite the modest set of studies that defied categorization at one end or the other, we see clear evidence of the two streams of CAE at play in practice. It is equally clear, however, that practical and transformative agendas need not be mutually exclusive. Readers are reminded that CAE purposes reflect plans or intentions, and that CAE implementation and effects can diverge from them.

Collaborative Process Profiles

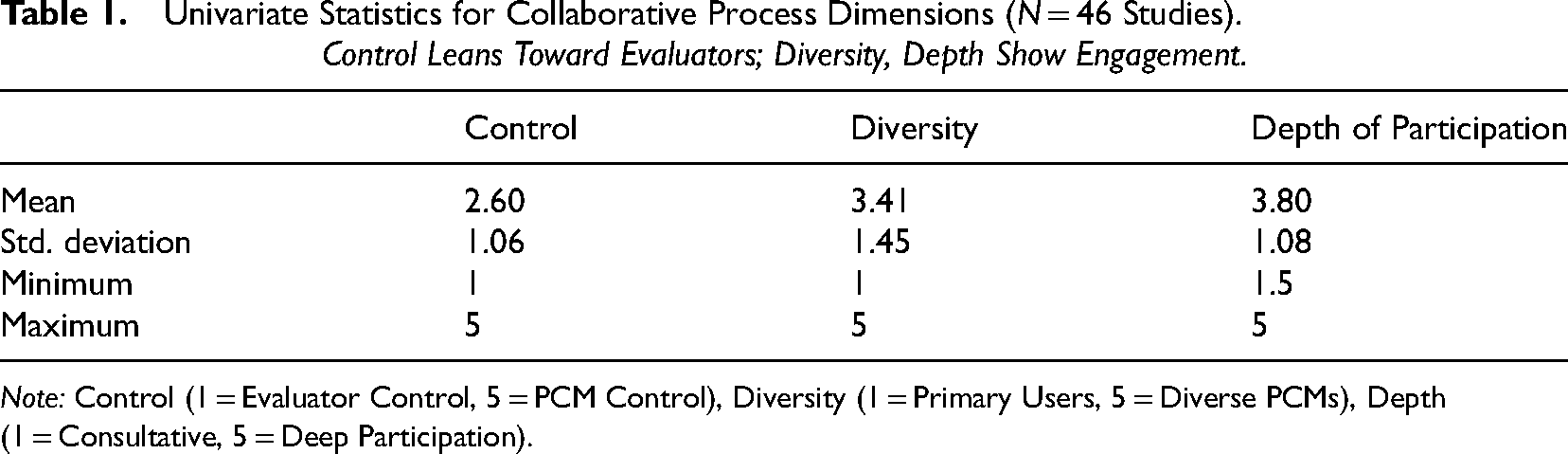

How do case applications of CAE fall out on our three process dimensions? Table 1 displays descriptive statistics for the ratings associated with each collaborative process dimension. In general, control of evaluation decision-making tended to lean toward the evaluator, PCM participation was reasonably diverse, and depth of participation tended toward the deep end of the scale. No study received the minimum rating on the depth of participation scale, suggesting that PCMs were engaged beyond merely commenting on proposed plans or offering opinions about findings. We now take a closer look at collaborative practice associated with each of these dimensions.

Table 1. Univariate Statistics for Collaborative Process Dimensions (N = 46 Studies). Control Leans Toward Evaluators; Diversity and Depth Show Engagement.

Note: Control (1 = Evaluator Control, 5 = PCM Control), Diversity (1 = Primary Users, 5 = Diverse PCMs), Depth (1 = Consultative, 5 = Deep Participation).
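Univariate statistics of the kind shown in Table 1 can be computed per dimension with Python's standard `statistics` module. This is a sketch, not the SPSS procedure used in the study: the ratings are hypothetical, the `describe` helper is our own, and we assume the sample (n − 1) standard deviation.

```python
import statistics


def describe(ratings: list[float]) -> dict[str, float]:
    """Univariate summary of one process dimension across studies."""
    return {
        "n": len(ratings),
        "mean": round(statistics.mean(ratings), 2),
        "sd": round(statistics.stdev(ratings), 2),  # sample standard deviation (n - 1)
        "min": min(ratings),
        "max": max(ratings),
    }


# Hypothetical depth-of-participation ratings, for illustration only.
depth = [2, 3, 4, 4, 5]
summary = describe(depth)
print(summary)  # {'n': 5, 'mean': 3.6, 'sd': 1.14, 'min': 2, 'max': 5}
```

A minimum above 1 on the depth scale is what a finding such as “no study received the minimum rating” would look like in this summary.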

Control of Evaluation Decision-Making

In many of the studies, CAE control was clearly evaluator-led (e.g., Acree, 2019; Dryden et al., 2010; Elicker, 2013; Eversole, 2018; Fletcher-Hildebrand et al., 2023; Fluhr et al., 2004; Landerholm et al., 2000; MacDonald et al., 2006; O'Sullivan & D’Agostino, 2002). This is not to say that PCMs did not have a significant role to play in decisions about evaluation knowledge creation. For example, in the context of a support program for home residents with disabilities, Robinson et al. (2014) reported that the evaluation was controlled by evaluators and funding agencies but that input from other PCMs on design, reporting, and oversight was collected and considered. Here are some direct illustrations of evaluator leadership from other CAE case examples:

The research team provided intensive training to each site that included standardized directions and step-by-step forms to complete when reviewing case files to determine case eligibility and sample cases. (Campbell et al., 2014, p. 612, sexual assault nurse examiner program)

Thus, the external evaluation team members could be seen as senior contributors to the evaluation process, while the programs made important but less sophisticated contributions. (O'Sullivan & D’Agostino, 2002, p. 377, county-wide ECE program)

…evaluators retain control while collaborating with stakeholders. This arrangement helps safeguard the credibility of evaluation products, while integrating collaboration into the design. (Chehaib et al., 2023, p. 236, youth mental health first aid program)

If this were to be envisioned on the 3-D participatory evaluation signpost (Cousins & Whitmore, 1998), technical control would fall slightly toward the evaluator. (Donnelly et al., 2014, p. 26, memory clinic services)

At the other end of the spectrum, there were a few instances where evaluators played a facilitating role while PCMs were active in shaping the evaluation process (e.g., Apgar et al., 2024; Baur et al., 2010; Ridde et al., 2012). Apgar et al.'s (2024) working group focused on developing an innovative and well-informed framework for inclusive rigor in the context of peacebuilding. The international group was composed of professionals across a range of organizations and institutions with interests in this domain. The authors described a need for a decolonized, “localized-led” approach to evaluation.

Finally, Connors and Magilvy (2011) reported on how control shifted to the nonevaluator participants as the evaluation of a college of nursing program progressed. Especially evident was the active control of PCMs in selecting topics for investigation and disseminating results to decision makers. Years earlier, Themessl-Huber and Grutsch (2003) made “control shift” the very focus of their research paper: the alteration of evaluation decision control throughout the course of a CAE. Their case focused on an evaluation of remedial services for families contending with physical handicap and ambulatory issues. As the project progressed, they observed a remarkable shift in the evaluators’ role, from control to facilitation.

Diversity of PCM Participation

Several of the studies in our core sample were rated as fairly midrange on the PCM diversity dimension (e.g., Campbell et al., 2014; Clancy, 2011; Fletcher-Hildebrand et al., 2023; Owens, 2022). Often these studies involved a wide range of PCMs but did not necessarily include intended beneficiaries. The study by Ridde et al. (2012) helps to illustrate. It focused on an international aid program in conflict-ridden Afghanistan that undertook different activities, including rehabilitating healthcare structures and providing medical training for healthcare workers. The evaluation team was balanced and had six members who had a stake in the program. Affected populations, or intended beneficiaries, were only consulted. According to the authors, who included medical program community participants, “The selection of the people participating in the process was undertaken from a practical perspective … This is why affected populations were consulted but did not participate” (p. 187). In another example, Robinson et al.'s (2014) evaluation of a residential support program for people with disabilities included a range of PCMs, including funding agencies and actors representing the interests of people with disabilities. People with disabilities were included, but more as sources of data than as participants in the evaluation. Robinson et al. were of the view that the perspectives of people with disabilities were adequately considered by those representing their interests, and they underscored the value of including that perspective.

Several studies did include intended program beneficiaries and scored highly on the diversity continuum. Writing about the evaluation of obesity prevention clubs in Iran, Edalati et al. (2023) noted that the inclusion criteria for evaluation team members were experience with and active involvement in obesity prevention clubs for at least 4–5 years, as well as willingness and ability to participate. The final evaluation team included community citizens, that is, volunteers in the program, as well as health managers at district and city levels. Gilbert and Cousins (2022) reported on the workings of a collaborative health services committee that embraced principles of patient engagement. Former cancer patients and family members of current patients were active in the collaborative needs assessment. In the K to 12 education sector, King and Ehlert (2008) reported on three separate CAE projects within a single school district that took place over multiple years. The projects were very well organized and structured, and a very wide range of PCMs was involved in each. Program community members involved in one or more of the CAE projects included principals, teachers’ union representatives, early childhood and curriculum specialists, paraprofessional staff, multicultural student advisors, parents, parent advocates, and service agency representatives. In a middle school evaluation project, a diverse group of students from each middle school participated, representing different abilities, races, ethnicities, and socioeconomic status groups. Another study that included intended program beneficiaries was an evaluation of an intercultural community intervention project in Spain (Vecina-Merchante & Morey-Lopez, 2024). The evaluation group consisted of seven professionals from health, social services, and education and two citizens linked to all sectors of the population by age and ethnocultural origin.

At the other end of the spectrum, there were several studies where diversity of PCMs was limited to primary users, or those in positions enabling them to act on evaluation findings and leverage programmatic change (Acree, 2019; Campbell, 2013; Eversole, 2018; O'Sullivan & D’Agostino, 2002; Robinson & Cousins, 2004). In Chehaib et al.'s (2023) evaluation of a school-based youth mental health first aid program, a range of actors was involved, but all were primary users: the director of student services, the senior manager of psychological services for the school system, and the director and trainer of the program under evaluation. In Dryden et al.'s (2010) evaluation of the Phoenix Rising program mentioned earlier, PCM involvement was, for the most part, limited to agency staff, presumably with some sway in program decision-making: the agency director, the program manager, and four case managers. Finally, Fluhr et al. (2004) evaluated 12 community-based teen pregnancy prevention projects. The evaluation team consisted of representatives from the College of Public Health, the State Department of Health, and project staff, all of whom could be considered primary users.

Depth of Participation by PCMs

It is interesting to note that the average score on the depth of participation dimension was relatively high, reflecting significant PCM involvement in many of the methodological stages of the evaluation. There was no shortage of studies that described deep levels of participation by PCMs (e.g., Campbell, 2013; Clarke et al., 2022; Edalati et al., 2023; O'Sullivan & D’Agostino, 2002; Parker et al., 2024; Robinson & Cousins, 2004). The following excerpts convey the substance of the depth dimension:

[The PCMs] were involved in selecting the evaluation questions, development of the measurement tools, piloting the survey, interpretation of study findings, and dissemination activities. (Vat et al., 2020, p. 5, patient engagement program)

[They] worked with the team as evaluation consultants, providing methodological expertise and facilitation of team meetings…The team made decisions regarding the targets for the evaluation, data collection instruments, and evaluation methods. (Quintanilla & Packard, 2002, p. 18, science enrichment program)

The role of the Evaluation Committee was to offer feedback and input into all aspects of the evaluation including the design, interpretation of data, and translation of findings into the program. (Donnelly et al., 2014, p. 26, memory clinic)

The [freed fieldworker] was involved in every step of the evaluative process and conducted most of the essential evaluation tasks: process initiation in certain cases, application elaboration (or participation in its elaboration), question finalization, elaboration of data collection tools, monitoring and/or participation in data collection, results analysis and interpretation, drafting of the final report and results presentation. (Jacob et al., 2011, p. 116, youth social services)

In some cases, PCMs were deeply involved in conducting evaluations while the highly technical activities were carried out by evaluation specialists, perhaps for obvious reasons. For example, in the Moldow et al. (2023) evaluation of the kinship navigator program, it was decided that the kin caregivers on the team would design the interview guide, conduct interviews, and interpret data. “Our full evaluation team examined the survey results. Once our statistician completed analyses, she brought aggregate data, without names or identifiers, to three separate group meetings to ask kin caregivers on our team to reflect on the findings together” (p. 6). This division of labor was fairly common across CAE projects, as might be expected given limits on PCM time and expertise.

Despite the relatively high average rating in this dimension, there were some projects where depth of participation was rather limited or consultative (e.g., Chehaib et al., 2023; Owens, 2022; Robinson et al., 2014). In some cases, evaluators consulted extensively with PCMs to enable the tailoring of the evaluation to the local context. Jones et al.'s (2022) evaluation of “PartnerSPEAK,” a peer support program for significant others of persons convicted of possession of child sexual abuse material, provides a good example. The evaluation methods were codesigned with the program CEO and key staff. The evaluators ran workshops to vet the survey instrument and interview schedule with PartnerSPEAK managers and peer support workers; they wanted to ensure that instruments adequately reflected the needs and experiences of the organization and their clients. On another front, Owens (2022) engaged members of the intended beneficiary group to help inform her collaborative study of learning experiences as an outcome of an instructional module designed to equip adults with learning disabilities with knowledge and confidence for self-care and managing common infections.

An evaluation described by de Hoop (2020) provides a case example that, although participatory in the main, was highly consultative in the involvement of citizens, civil society, and local governments. The evaluation was of a participatory project called Gamma Sense in the Netherlands, which aimed to democratize gamma radiation-related decision-making through participatory knowledge production. PCM group involvement was limited to consultation in the case of citizens and local governments, consultation/coproduction in the case of a civil society organization, and consultation in the case of the Dutch National Institute for Public Health and Environment.

In this section, we have attempted to bring to life the nature of participatory practice with respect to the three fundamental process dimensions of interest. We observe some variation on each dimension, but average ratings suggest that evaluator control was reasonably common, that PCM group involvement was relatively diverse, and that PCM depth of participation was quite substantial.

Collaborative Approaches to Evaluation Purpose-Outcome Alignment

Here, we provide an analysis of CAE project outcome patterns. Specifically, we wanted to get a sense of the nature of the outcomes but, more importantly, of how they aligned with the identified purposes of the collaborative project. Supplemental File 1 is particularly useful for this purpose: in the final column of that comprehensive table, CAE outcomes are identified in abbreviated form for each study. We reviewed the summarized outcomes and judged whether they (1) were aligned with the specified goals of the CAE or (2) also extended to secondary areas of accomplishment or effect. We monitored for unanticipated outcomes as well.

As a first observation, all studies reported positive impacts of CAE activities. This was true even for reflective narratives, which were usually research studies using CAE stories as a basis for reflection. Despite these positive impacts, our analysis did reveal a few downsides, which we discuss below.

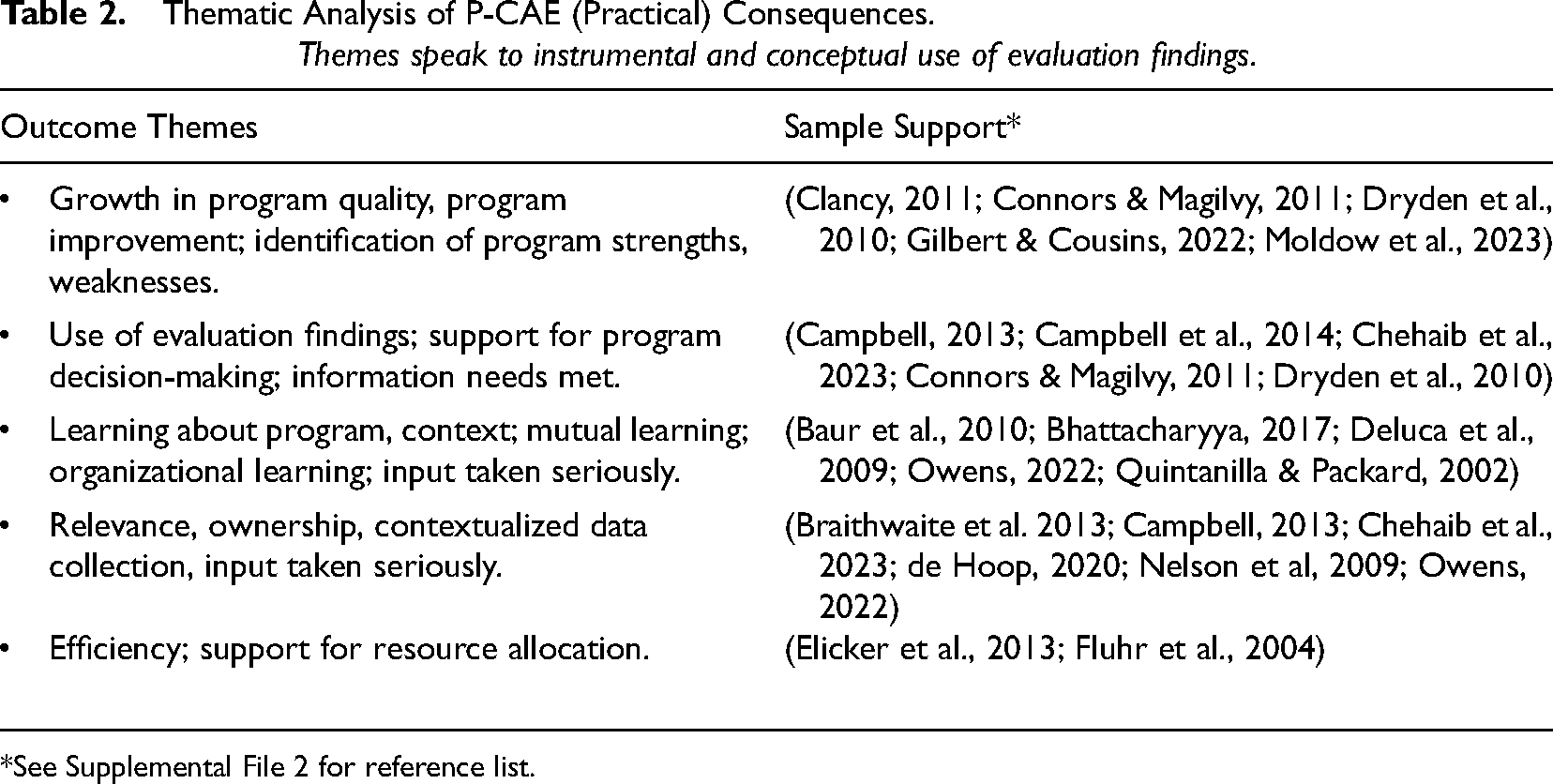

On the practical side, studies reported a wealth of outcomes and consequences of the evaluations. In Table 2, we provide a glimpse of the emergent themes, which are well aligned with expectations derived from practical perspectives.

Thematic Analysis of P-CAE (Practical) Consequences. Themes speak to instrumental and conceptual use of evaluation findings.

*See Supplemental File 2 for reference list.

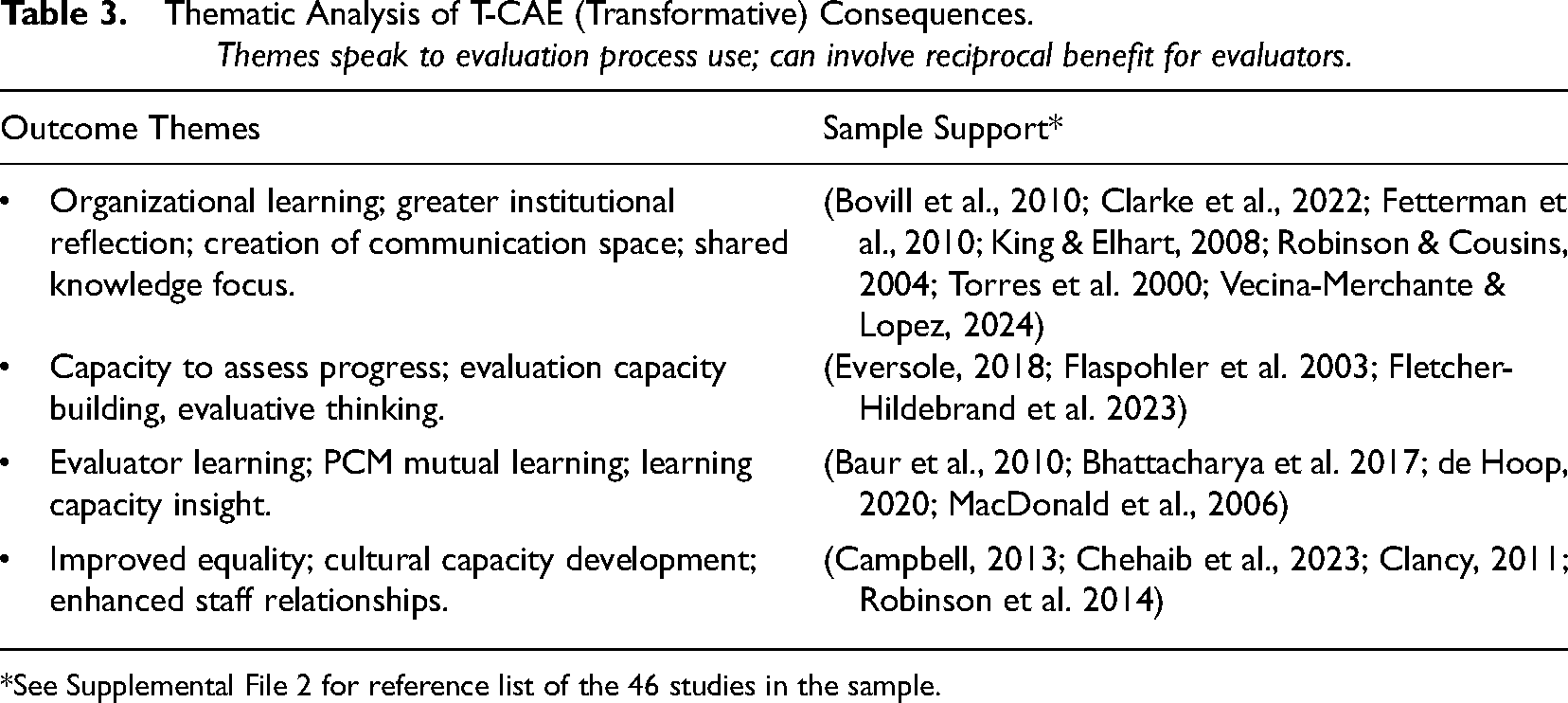

On the transformative side, the pattern of results was also highly congruent with expectations, as the list of themes in Table 3 illustrates. However, the outcomes laid out in the table offer limited evidence of transformation for marginalized populations and intended program beneficiaries. Most are aligned with capacity building, growth, and learning and are consistent with our revised definition of T-CAE.

Thematic Analysis of T-CAE (Transformative) Consequences. Themes speak to evaluation process use; can involve reciprocal benefit for evaluators.

*See Supplemental File 2 for reference list of the 46 studies in the sample.

Of high interest is the extent to which observed outcomes are in sync with intended CAE purposes. Of the 46 case examples, we coded 20 (43%) as being aligned with CAE purpose and 26 (57%) as reflecting additional outcomes that extend beyond those intended. An independent samples t test revealed no statistically significant relationship between CAE purpose and outcome; “aligned” or “expanded” coding was independent of CAE purpose ratings, although there was a slight tendency for expanded observed outcomes to be associated with practical (M = 2.75) rather than transformative (M = 2.25) intentions.
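The arithmetic behind the reported split, and the general form of an independent samples t test, can be sketched in Python as follows. The sketch assumes the test compared mean CAE-purpose ratings between the “aligned” and “expanded” groups. The group ratings and the `pooled_t` helper are hypothetical, and the code computes only the Student t statistic (pooled variance) and its degrees of freedom; obtaining a p-value would require a t-distribution CDF from a statistics package.

```python
from math import sqrt
from statistics import mean, variance

# Counts reported in the text: 20 aligned and 26 expanded out of 46 cases.
n_total, n_aligned, n_expanded = 46, 20, 26
pct_aligned = 100 * n_aligned / n_total    # about 43.5
pct_expanded = 100 * n_expanded / n_total  # about 56.5

def pooled_t(a, b):
    """Student's independent samples t statistic with pooled variance.

    Returns (t, df). No p-value is computed here.
    """
    na, nb = len(a), len(b)
    # Pooled estimate of the common variance.
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    t = (mean(a) - mean(b)) / sqrt(sp2 * (1 / na + 1 / nb))
    return t, na + nb - 2

# Hypothetical 1-5 CAE-purpose ratings per outcome group; NOT the review's data.
aligned = [2, 3, 1, 4, 2, 3]
expanded = [3, 2, 4, 3, 3, 2]
t, df = pooled_t(aligned, expanded)
print(f"aligned {pct_aligned:.1f}%, expanded {pct_expanded:.1f}%, t({df}) = {t:.2f}")
```

With real data, a small |t| relative to the critical value for the given degrees of freedom would be consistent with the absence of a significant relationship described above.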

While observed outcomes were, in the main, undoubtedly positive, we hasten to add that some studies identified downsides to CAE implementation. These included obstacles preventing full participation (Torres et al., 2000; Vecina-Merchante & Morey-Lopez, 2024), recommendations not implemented or delayed (Ridde et al., 2012), suboptimal configuration of PCM participation (Ucar et al., 2016), and weak or nonexistent experiential benefits to participants (Acree, 2019; Apgar et al., 2024; Robinson et al., 2014). Sullins (2003) reported that an empowerment evaluation failed and that, ultimately, she had to intervene with a more conventional evaluation approach. Staff and consumers at a mental health drop-in center were uninterested in embracing evaluation implementation. All this to say, CAE is certainly not without its own set of challenges and obstacles.

In sum, all studies produced outcomes aligned with their CAE focus, and most also yielded secondary effects extending to a broader range of outcomes than those intended. Yet we also saw modest evidence of disappointing or downside outcomes.

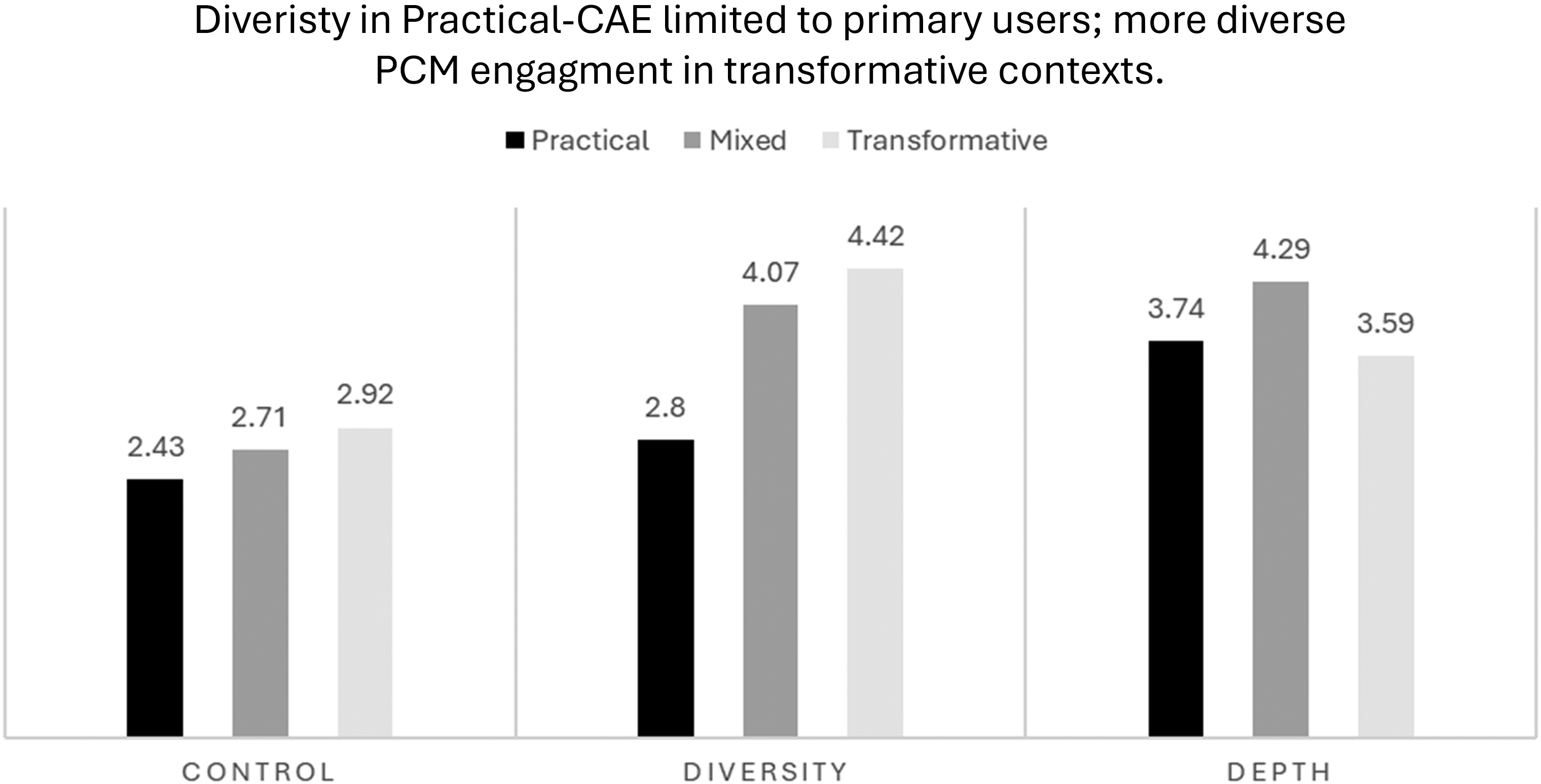

Collaborative Process Profiles by CAE Streams

Can P-CAE and T-CAE cases be differentiated by collaborative practice processes? If so, how? The foregoing sections give a good flavor of the nature of CAE practice and outcomes. In this section, we examine how collaborative process ratings were associated with ratings of CAE purpose. Figure 4 shows the breakdown of collaborative process dimensions by CAE purpose. In the left panel, we can see that projects with a practical focus tend to be controlled by evaluators, whereas mixed and transformative CAE examples exhibit a slightly more balanced approach. The differences are subtle and not statistically significant.

Average Collaborative Process Scores by Process Dimensions and CAE-Purpose.

In the middle panel, we see the breakdown for the PCM diversity process dimension. Here, we observe that practical approaches to CAE tend to involve PCMs with a vested interest in the program and its evaluation, perhaps in positions to contribute to programmatic decision-making and leverage change. Such primary users are also involved in mixed and transformative CAE case examples, but a wider range of PCMs was implicated in those studies. In the case of transformative approaches, it would appear that intended program beneficiaries are often involved. The differences across these three purpose categories were statistically significant: the F test and post hoc comparisons confirmed that diversity scores for practical CAE case examples were lower than those for the mixed and transformative case examples combined.
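The F test referred to here is a one-way analysis of variance across the three CAE-purpose groups. A minimal Python sketch of the statistic, F = (SS_between / df_between) / (SS_within / df_within), follows. The diversity ratings and the `one_way_anova_f` helper are hypothetical, and the post hoc comparison (practical versus the combined mixed and transformative groups) is not shown.

```python
from statistics import mean

def one_way_anova_f(*groups):
    """One-way ANOVA F statistic with its between- and within-group df.

    A p-value would require an F-distribution CDF, which is omitted here.
    """
    scores = [x for g in groups for x in g]
    grand = mean(scores)
    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    # Variation of individual scores around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(scores) - len(groups)
    f = (ss_between / df_between) / (ss_within / df_within)
    return f, df_between, df_within

# Hypothetical 1-5 diversity ratings by CAE purpose; NOT the review's data.
practical = [1, 2, 2, 1, 3]
mixed = [3, 4, 3, 4]
transformative = [4, 5, 4, 5]
f, df_b, df_w = one_way_anova_f(practical, mixed, transformative)
print(f"F({df_b}, {df_w}) = {f:.2f}")
```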

No statistically significant differences were found in average depth of participation scores. In the right-hand panel of Figure 4, we observe relatively deep levels of participation across all three categories of CAE purpose. The extent to which PCMs are involved in evaluative activities appears to be independent of the purpose or intent of the CAE.

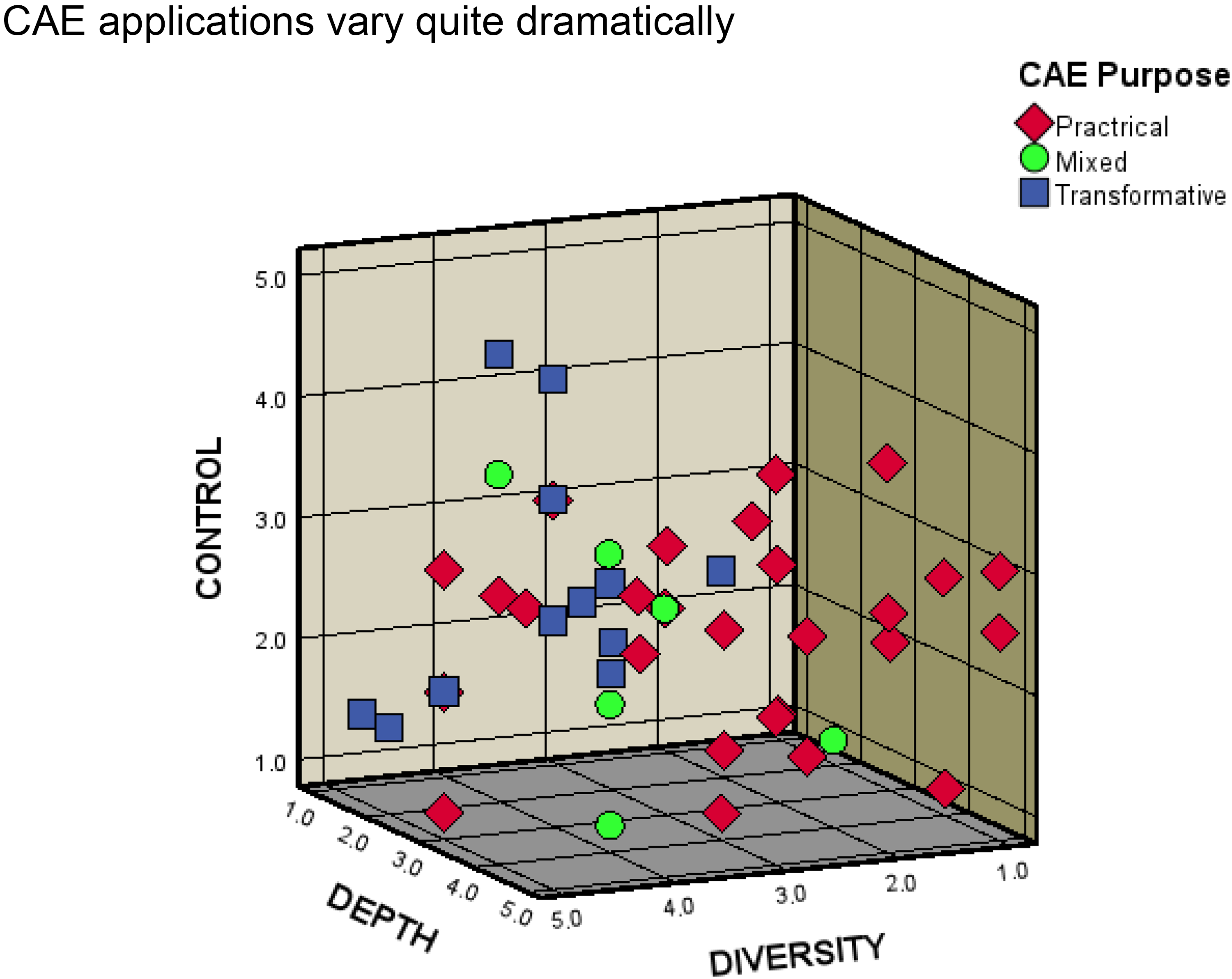

Finally, we were interested to see where CAE process profiles are located in three-dimensional space, as per the representation in Figure 1. Figure 5 shows how the 46 cases are distributed in a three-dimensional scatterplot. The spread of the 46 study data points shows an interesting pattern.

Three-Dimensional Scatterplot of Collaborative Process Dimensions by CAE Purpose (N = 46 Studies) (Control 1 = Evaluator, 5 = PCM; Diversity 1 = Primary Users, 5 = Wider PCM; Depth 1 = Consultative, 5 = Deep participation).

The dispersion of case examples across sectors of the three-dimensional plot is quite impressive. This finding affirms that the device is sensitive to profile differences across CAE case examples and that CAE can take on many forms. The figure also reiterates the differentiating properties of the diversity dimension, with an evident separation of practical from transformative projects, the former being more likely to engage only primary users. Finally, only two case examples were identified as PCM-led, with the majority reflecting balanced control or evaluator-led inquiry.

Discussion and Conclusions

In what follows, we summarize the main findings of the review and then consider their implications for CAE practice, theory, and research.

Principal Takeaways

The findings from this study corroborate propositions from our theoretical framework. First, the two streams of CAE were confirmed in practice. More of the studies were practical in orientation, and of those found to be transformative, most focused on ECB but did not necessarily signify the empowerment of marginalized groups as a goal. Further, P-CAE and T-CAE are not mutually exclusive. A limited number of case examples blended the two streams in their representation of CAE purpose, and a great many studies yielded outcomes suggesting that adherence to one orientation does not preclude adherence to the other.

Collaborative approaches to evaluation process profiles vary enormously, and our three-dimensional device (Figure 1) adroitly captures such variation. First, most projects leaned toward balanced or evaluator control, regardless of CAE purpose; only two studies appeared to be PCM-directed. Perhaps this latter observation is unsurprising given the practical wisdom and knowledge of evaluation logic and methods that evaluators bring to the table, relative to PCMs' limited experience with inquiry of this sort. Next, case examples tended to favor substantial depth of PCM participation, regardless of project purpose. It could be argued that deeper PCM engagement leads to more pervasive effects on evaluation relevance, ownership, learning, and proclivity to use findings. But perhaps even more importantly, deeper levels of participation may leverage PCM learning about evaluation, their development of evaluative thinking, and change in organizational or community culture toward inquiry mindedness. That observed outcomes in many studies expanded beyond those associated with identified purposes supports this conjecture; specifically, studies with a practical focus tended to generate outcomes that were not only practical but also transformative or capacity generating. Finally, consistent with expectation, diversity of PCM participation was differentiated by CAE purpose. Practically oriented CAE applications tended to favor engagement only with primary users, no surprise given their focus on program problem-solving and evaluation use. On the other hand, where the interests were more transformative, a wider diversity of PCMs was often in play, sometimes including intended program beneficiaries. Dialogue and deliberation, as well as understanding and appreciation of different voices, are valued aspects of T-CAE practice.

Two other findings are worthy of note. First, none of the studies derived from evaluation in government contexts, and very few came from international development evaluation settings. Second, in some cases, evaluator learning or mutual learning between evaluators and PCMs was evident. We shall have more to say about these observations below.

This study is not without limitations. First, our sample is not exhaustive and it is likely that our search strategies missed suitable case examples from important sectors and contexts (e.g., government, international development, Indigenous/culturally responsive). Still, we are satisfied that our achieved sample is suitably encompassing of the scope and application of CAE in a wide range of education, health, and social/community settings. Second, in limited instances, we oversimplified by aggregating ratings across communities or evaluation phases, despite knowing that process profiles can vary as CAE implementation evolves and conditions change as we observed in some case applications (e.g., Sullins, 2003; Themessl-Huber & Grutsch, 2003). Third, many studies relied on reflective case narratives about evaluation experiences, an approach to RoE that has its limits (Christie, 2011; Cousins & Chouinard, 2012; Daigneault & Jacob, 2009). In their systematic review, Brandon and Singh (2009), for example, bemoan the fact that narrative reflections did not include methods sections or report on how data were collected and analyzed. However, as we observed, in some cases, narratives were augmented by data from the focal evaluation or supplemental data collection (e.g., Acree, 2019; Themessl-Huber & Grutsch, 2003), and in others, narratives were coauthored by PCMs (e.g., Apgar et al., 2024; Elicker et al., 2013). These practices help to augment the validity of reflective case narratives, as we have observed previously (Cousins & Chouinard, 2012). Finally, and related, most of the evaluation summaries, narratives, and case studies were written from the perspective of the evaluators. This has been identified as a general limitation in RoE literature (e.g., Cousins & Chouinard, 2012). In the present instance, the absence of voice of PCMs as research knowledge producers put limits on our understanding, for example, of depth of participation. 
While we may have a rough estimate of the range of evaluation activities in which PCMs were engaged, an assessment of the nature and quality of such participation is missing. These limitations should be borne in mind in considering the findings and the ensuing discussion.

Implications

“There is nothing as theoretical as good practice,” the inverse of Kurt Lewin's adage (e.g., Lewin, 1946), seems an increasingly appropriate frame for our thinking about the relationship between theory and practice. 5 Whichever formulation one prefers, empirical research provides a conduit for thinking about evaluation theory development and practical guidance. Studies by Christie (2003), Rog (2015), and Tourmen (2009) all considered theory's relationship to practice from a prescriptive or normative perspective. However, in the present study, the focal theoretical framework is descriptive; despite the supportive evidence that emerged, myriad questions about guidance for CAE practice remain. Still, there is a good deal we can say that evaluators who are engaged in CAE should consider. We have ideas about ongoing CAE research priorities as well.

First and foremost, evaluators stand to benefit from relying on the set of eight evidence-based principles to guide CAE practice developed by our research group (see Box 1; Cousins, 2020; Cousins et al., 2013; Shulha et al., 2016). In the present study, only one case application explicitly referred to the CAE principles (Acree, 2019), although we are aware of several others including a set of eight case studies external to our sampling frame devoted to field testing them (Cousins, 2020). To some extent, this may be a function of the recency of publication and promotion of the principles as well as our decision not to include “self-citation” articles in our sample. 6 In developing the principles, we drew on the practical wisdom of 320 evaluators who practice CAE and reflected on experiences that were highly successful as well as some that were less successful than expected (Shulha et al., 2016). While the principles could be considered normative and intended to provide evaluators, and indeed participating PCMs, with guidance about CAE practice, at the same time they enable flexibility to decide what the evaluation is to accomplish and what shape it should take in practice. As mentioned, the principles are entirely consistent with the descriptive theoretical contributions provided by Cousins and Whitmore (1998).

Box 1. Evidence-based principles to guide CAE practice

Clarify motivation for collaboration

Foster meaningful relationships

Develop a shared understanding of the program

Promote appropriate participatory processes

Monitor and respond to resource availability

Monitor evaluation process and quality

Promote evaluative thinking

Follow through to realize use

As we have seen, CAE can adhere to different purposes and can take many different forms. It is paramount that significant attention is paid to a contextual analysis of the program and the setting in which it resides. Such analysis should inform a decision as to whether a CAE is appropriate, and if so, what it needs to accomplish (clarify motivation for collaboration), and what it should look like in practice (promote appropriate participatory processes).

A second implication from the current study is a call for continued research on CAE practice and theory development. Currently, practical guidance associated with each of the eight principles is offered in terms of a set of indicators or questions that evaluators and participating PCMs might consider (Cousins et al., 2020). But it would be beneficial to add to our understanding through a more nuanced approach to research that sheds light on the conditions, factors, and influences that may shape decisions throughout the CAE process. Also of interest would be a closer look at the extent to which features or aspects of the collaborative inquiry map onto the type and quality of observed outcomes. A more targeted and penetrating approach to research could help to inform decision-making about the principles and their application. Next, continued research on culturally responsive and Indigenous-led evaluations would enable a deeper understanding of theory-practice connections in transformative contexts. From a methodological perspective, a wider range of research designs and methodologies would be beneficial. For example, Indigenous-led methodologies and other culturally responsive approaches could be used to deepen our understanding of CAE and complexity. Additionally, more longitudinal case studies would help to illuminate the trajectory of CAE implementation and how processes may evolve over time. And innovative methods such as concept mapping could be used, for example, to explore PCM understandings of evaluation consequences. Finally, as mentioned, the vast majority of studies in our sample were written from the evaluator's perspective, although, we hasten to say, some studies were coauthored by PCMs. It is imperative that ongoing research focuses on the PCM perspective, which would help us to understand at deeper levels the meaning of control, diversity, and depth of participation.
For example, elaborated understandings of how PCMs value and perceive their engagement with a range of evaluation methodological activities would be beneficial. Were their experiences positive or negative, expected or unexpected? Why or why not? And what are the implications for practice?

We frame RoE as a bridge between evaluation practice and theory. Developing the foregoing streams of RoE could help CAE theory move up Horvath's (2016) ladder of inquiry from description to explanation. An explanatory theory would clarify why CAE works as observed or assumed and would provide stronger guidance for practice. With the ongoing accrual of an empirical base, CAE theory could potentially become formal, “validated and generalized across a range of inquiry areas and … transferable to different contexts” (Horvath, 2016, p. 218).

Third, relative to the observed paucity of governmental or international development case examples in our sample, we are drawn to Schwandt's (2018) argument that evaluation in the main is understood as a “collective, social undertaking” (p. 131). His arguments reframe evaluation logic as being driven by dialogic practice rather than by monologic adherence to conventional evaluation reasoning and methods. Schwandt advocates a model of professionalism where evaluators are obligated to serve the public as engaged, participating citizens, not as clients or PCMs with different roles. In his words, “…it is incumbent upon the evaluator to develop and foster forms of collective evaluative reasoning that ensure the inclusion of multiple perspectives in a dialogic, deliberative process” (Schwandt, 2018, p. 132). Despite the appeal of Schwandt's perspective, we observe that collaborative evaluation practice in government and international development contexts, at least within our sample of studies, is severely limited. Although our search strategy may have been a factor, no case examples in our sample came from government contexts and very few from international development evaluation.

In government, participatory prospects run up against a heavily entrenched culture of accountability associated with contemporary governance frameworks such as New Public Management or its variants (Andrikopoulos & Ifanti, 2020; Chouinard, 2013; Mathison, 2018). Evaluation is framed by private sector management principles and practices to improve the efficiency and effectiveness of public sector organizations and embraces principles of managerialism. Evaluation in this context can be framed as a technocratic enterprise, with the interests of the political elite at the forefront. A similar narrative applies to international donor agencies. According to Vaessen et al. (2020), independent evaluation offices (IEOs) in bi- and multilateral aid agencies “… generally would not have direct involvement with the intervention or its stakeholders. In practice, this means that several variations of participatory evaluation are not applicable. Nonetheless, creative applications of participatory approaches that safeguard independence and the efficient incorporation of stakeholder inputs into IEO evaluations are encouraged in most IEOs today.” (p. 68, our emphasis). Privileging the concept of independence, of course, is antithetical to Schwandt's (2018) thesis. Further to the point, Mathison (2018) asked “Does evaluation serve the public good?” Her conclusions were disquieting, to say the least. Mathison characterized allegiance to evaluation's purported independence as ultimately conserving and serving the status quo. In contrast, she acknowledged a proliferation of approaches that promise participation, emancipation, and transformation and that hold good potential to overcome evaluation's ineptitude in contributing to the public good. Her words, “speaking truth to the powerless . . . to empower the powerless to speak for themselves” (p. 118), are worthy of the field's serious attention.
Contributions by Carden (2007, 2010) and Hay (2010) are among many that have similarly argued that a broader set of interests than those of donor agencies is required to bring about meaningful change. Collaborative approaches to evaluation offer avenues and possibilities to engage with such interests.

It may be that CAE in these contexts is occurring but under labels other than participatory and collaborative (e.g., utilization-focused evaluation, most significant change technique). Further, it is possible that proponents in these sectors are not aware of the contributions of Cousins and Whitmore (1998) or employ a different theoretical orientation and therefore fall outside of our sampling scheme. We are aware, for example, of governmental initiatives in Canada that embrace CAE within the context of the evaluation of Indigenous services and programs, but these are mostly framed as Indigenous-led evaluation (e.g., Indigenous Services Canada, 2022) and may be too early in the process to have reported evaluations or case summaries. Alternatively, there may be a question of scale, as governmental and international development interventions tend to be relatively large and perhaps not overly conducive to CAE. However, participatory and collaborative approaches in such contexts are entirely possible, as illustrated by our own nationwide evaluation of teacher in-service practice in India (Cousins & RMSA Evaluation team, 2015). It may also be that CAE in these sectors is more rhetoric than reality. As a case in point, a master's thesis by Meier (1999) that focused on participatory research in sub-Saharan Africa found that the studies examined were hardly participatory and tended to rely on PCMs as mere sources of data as opposed to cocreators of evaluative knowledge.

Finally, we are reminded of Huberman's (1994) construct of “sustained interactivity” in the context of research use. Process use is a term that adequately captures the learning that CAE can engender in the program community by virtue of PCM proximity to, or participation in, evaluation (Patton, 1997). In our study, there was also evidence suggesting that evaluators learned and benefited from the collaborative process. Evaluators stand to develop their own practical knowledge and wisdom through their ongoing interactions with PCMs, that is, through sustained interactivity. As Huberman (1994) put it, “In other words, there are reciprocal effects, such that we are no longer in a conventional research-to-practice paradigm, but in more of a conversation among professionals bringing different expertise to bear on the same topic” (p. 9). Put simply, in evaluation theory and practice, it would be prudent to think more directly about reciprocal influences than has been the case to date.

In conclusion, this review and integration of RoE aligns with others such as Miller and Campbell's (2006) review of the application of empowerment evaluation and Coryn et al.'s (2011) review of theory-driven evaluation. Both reviews studied the extent to which published applications either repudiate or substantiate claims and theoretical ideals. Departing from those reviews, in the present case we examined the extent to which practice aligns with a descriptive theory of CAE. The empirical support provided here for the theoretical constructs Cousins and Whitmore (1998) identified over 25 years ago helps to strengthen the connection between CAE theory and practice. Yet, as we have discussed, there is much to be done in both theory development and the advancement of practical guidance for evaluators and PCMs who wish to embrace a collaborative approach. We look forward to an ongoing program of RoE that fosters such growth through its essential role in bridging the theory-practice divide.

Supplemental Material

sj-pdf-1-aje-10.1177_10982140251355140 - Supplemental material for Theory-Practice Connections in Collaborative Approaches to Evaluation: A Systematic Review of Practice

Supplemental material, sj-pdf-1-aje-10.1177_10982140251355140 for Theory-Practice Connections in Collaborative Approaches to Evaluation: A Systematic Review of Practice by J. Bradley Cousins and Yasmine Alborhamy in American Journal of Evaluation

sj-pdf-2-aje-10.1177_10982140251355140 - Supplemental material for Theory-Practice Connections in Collaborative Approaches to Evaluation: A Systematic Review of Practice

Supplemental material, sj-pdf-2-aje-10.1177_10982140251355140 for Theory-Practice Connections in Collaborative Approaches to Evaluation: A Systematic Review of Practice by J. Bradley Cousins and Yasmine Alborhamy in American Journal of Evaluation

sj-pdf-3-aje-10.1177_10982140251355140 - Supplemental material for Theory-Practice Connections in Collaborative Approaches to Evaluation: A Systematic Review of Practice

Supplemental material, sj-pdf-3-aje-10.1177_10982140251355140 for Theory-Practice Connections in Collaborative Approaches to Evaluation: A Systematic Review of Practice by J. Bradley Cousins and Yasmine Alborhamy in American Journal of Evaluation

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the University of Ottawa.

Supplemental Material

Supplemental material for this article is available online.

Supplemental File 1: Descriptive characteristics of the core sample of 46 studies
Supplemental File 2: Reference list of the core sample of 46 studies
Supplemental File 3: Data summary template

Notes

References
