Abstract

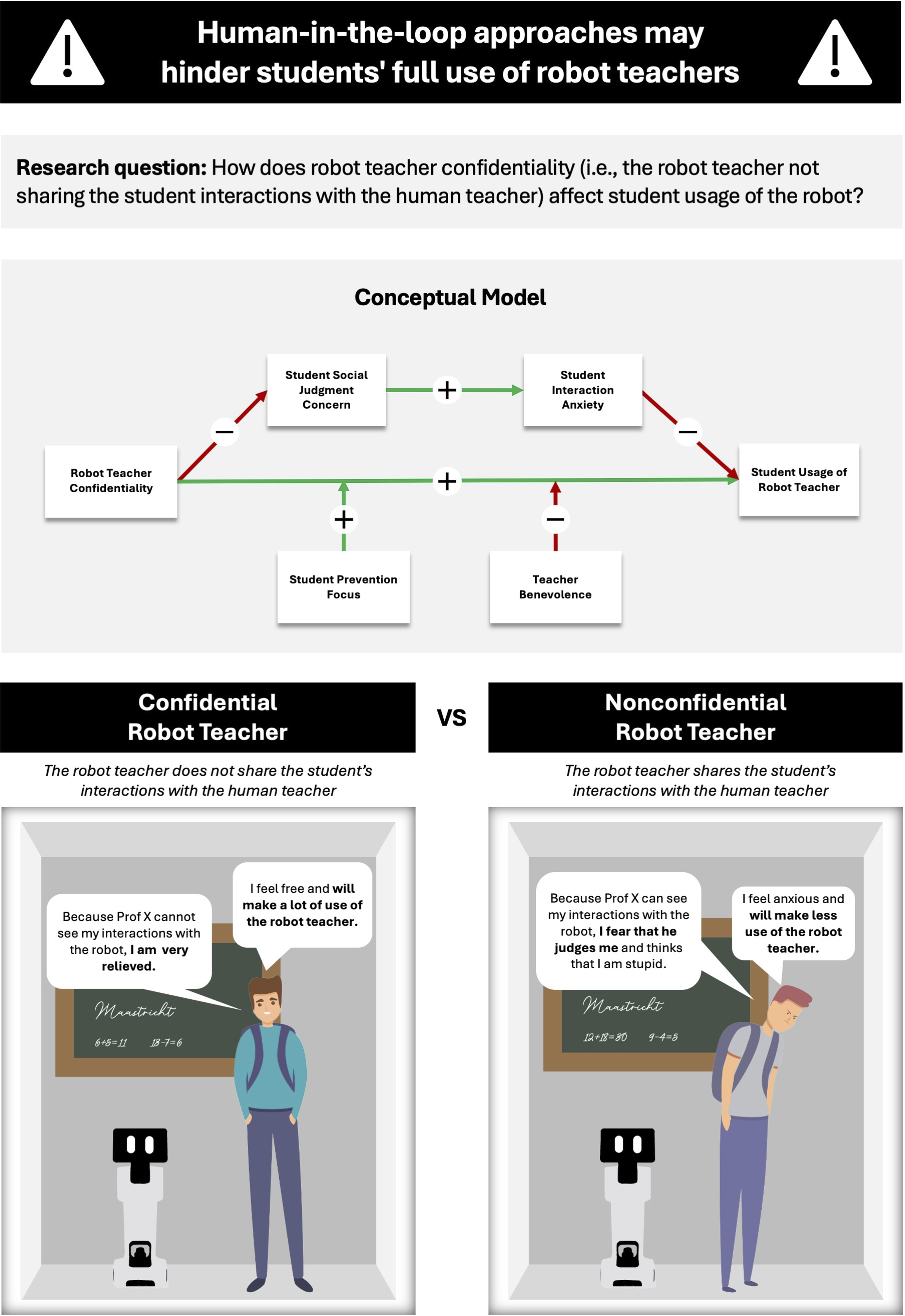

Enabled by technological advances, robot teachers have entered educational service frontlines. Scholars and policymakers suggest that during Human-Robot Interaction (HRI), human teachers should remain “in-the-loop” (i.e., oversee interactions between students and robots). Drawing on impression management theory, we challenge this belief to argue that robot teacher confidentiality (i.e., robot teachers not sharing student interactions with the human teacher) lets students make more use of the technology. To examine this effect and provide deeper insights into multiple mechanisms and boundary conditions, we conduct six field, laboratory and online experiments that use virtual and physical robot teachers (Total N = 2,012). We first show that students indeed make more use of a confidential (vs. nonconfidential) robot teacher (both physical and virtual). In a qualitative study (Study 2), we use structural topic modeling to inductively identify relevant mediators and moderators. Studies 3 through 5 provide support for these, showing two key mediators (i.e., social judgment concern and interaction anxiety) and two moderators (i.e., student prevention focus and teacher benevolence) for the effect of robot teacher confidentiality. Collectively, the present research introduces the concept of service robot confidentiality, illustrating why and how not sharing HRI with a third actor critically impacts educational service encounters.

Introduction

Technological breakthroughs in AI and robotics and associated benefits of using these technologies (Belpaeme et al. 2018) have enabled service robots to increasingly add value in education, an essential and transformative service setting (Fisk et al. 2022). Despite cultural differences in adoption (Reich-Stiebert and Eyssel 2015), more schools and universities now deploy physical and virtual robot teachers (i.e., system-based autonomous and adaptable interfaces that interact, communicate, and deliver services to students; Wirtz et al. 2018). The promise of such AI-based teachers is to help overcome global teacher shortages, enable faster and more individualized feedback for students, and improve accessibility to education (Belpaeme et al. 2018). Robot teachers seem especially suited to take over routine tasks from education personnel, providing human teachers with more time to mentor and guide students (Hwang et al. 2020). In light of such promising possibilities, Harvard University, amongst others, implemented a ChatGPT-based robot teacher in its 2023 Fall Semester (Phelan 2023) and Khan Academy has integrated a robot teacher called Khanmigo into its online learning platform (Khan 2023). Because of their growing relevance, scholars and practitioners have called for a better understanding of how robot teachers should be designed and implemented so that students can take full advantage of them (Kasneci et al. 2023).

Until now, scholars, policymakers, and practitioners have assumed that human oversight during Human-Robot Interaction (HRI) is desirable. For example, research has shown that people react more negatively to decisions made purely by robots or AI, indicating that they seek human involvement (Dietvorst, Simmons, and Massey 2018). Similarly, policymakers have advocated for the implementation of so-called human-in-the-loop approaches, such as in Article 14 of the European Union’s AI Act (European Commission 2024). While human oversight can be organized in various forms (Enqvist 2023), this paper mirrors current practitioner approaches and operationalizes it as human teachers having access to student HRI. For example, for Khan Academy’s Khanmigo, “one of the very important safeguards is that the conversation is recorded and viewable by your teacher” (Khan 2023). Putting human teachers “in-the-loop” of student-robot interactions is considered both an ethical and a legal priority. Moreover, such an approach enables teaching personnel to closely monitor students’ learning processes and, thus, provide more personalized help (Hwang et al. 2020). Scholars have even proclaimed a “need for continuous human oversight” that is “indispensable and critical for the mitigation of bias and beneficial application of large language models in education” (Kasneci et al. 2023, p. 5). Thus, researchers studying HRI in education, practitioners, and policymakers alike call for human teachers to oversee students’ interactions with robot teachers.

In the present paper, we challenge this notion, arguing that while human-in-the-loop approaches have their ethical and practical merit, they may come with psychological and behavioral drawbacks that hinder students from taking full advantage of robot teachers. Specifically, we introduce the concept of robot teacher confidentiality (i.e., robot teachers not sharing interactions with the human teacher) and, drawing from impression management theory (Bolino et al. 2008), we propose that confidential robot teachers increase student usage of the technology.

We test our predictions across six studies (total N = 2,012), including field, laboratory, and online experiments with real-life physical and virtual robot teachers, employing robot teacher usage as a behavioral outcome measure. Supporting our theorizing, we first show in a laboratory setting that students make more use of both physical (Study 1) and virtual (Study S1) robot teachers that are confidential (vs. nonconfidential). We then replicate and extend these findings for virtual robot teachers in three field studies and one online experiment (Studies 2–5). In particular, in Study 2 we employ Structural Topic Modeling (Hannigan et al. 2019) to inductively identify social judgment concerns and interaction anxiety as potential psychological mechanisms underlying the effect of robot teacher confidentiality. In Study 3, we provide empirical support for these mechanisms and demonstrate the external validity of our findings in a field context. Studies 4 and 5 show that student prevention focus and teacher benevolence moderate the relationship between robot teacher confidentiality and robot usage, such that the effect is stronger for students with a high (vs. low) prevention focus but can be mitigated if students face a more (vs. less) benevolent teacher. A post-hoc analysis in Study 5 further reveals how cultural differences influence the effect of teacher benevolence.

By doing so, we make three key contributions to the service robot literature. First, we introduce the concept of robot confidentiality to enrich service research on HRI. Challenging the prominent assumption that putting humans “in-the-loop” has uniformly positive effects (e.g., Longoni, Bonezzi, and Morewedge 2019), our set of studies shows that students make more use of robot teachers when there is no human oversight. This allows us to move beyond the predominant focus on the direct involvement of other human actors in the service encounter (e.g., Odekerken-Schröder et al. 2022). This is important because advances in robotics make it increasingly common for other human actors (e.g., employees) to be only indirectly present during HRI in service. Second, our research provides a more holistic, human-centered view of the mechanisms and boundary conditions explaining the consequences of robot teacher confidentiality. In particular, we identify a serial mediation of social judgment concern and interaction anxiety as the psychological mechanisms underlying the consequences of robot teacher confidentiality. Additionally, we unravel the strengthening role of students’ prevention focus and the mitigating role of teacher benevolence as key boundary conditions that affect the usage of robot teachers. Previous research has been relatively fragmented in this regard, for example, by focusing on robot or organizational characteristics driving the effects of third parties on HRI (e.g., Khoa and Chan 2023), thus overlooking the joint roles of the individual human actors involved. Taken together, these findings generate deeper, person-specific insights into when and why the actors of the service triad (consisting of student, human teacher, and robot teacher) shape behaviors such as robot teacher usage. Third, our study sheds light on the role of robot teacher confidentiality in education, a crucial and transformative service environment (Fisk et al. 2022).
While scholars have generated insights into the role of other human actors for HRI in other service contexts, educational settings entail unique challenges regarding robot implementation (Kasneci et al. 2023). As such, we examine the impact of robot teacher confidentiality in a setting that has been largely overlooked in service research (De Keyser and Kunz 2022). Collectively, we provide a theory-driven and practically relevant assessment of what putting humans-in-the-loop in student-robot teacher encounters entails.

Conceptual Background and Hypothesis Development

Related Literature and Research Gaps

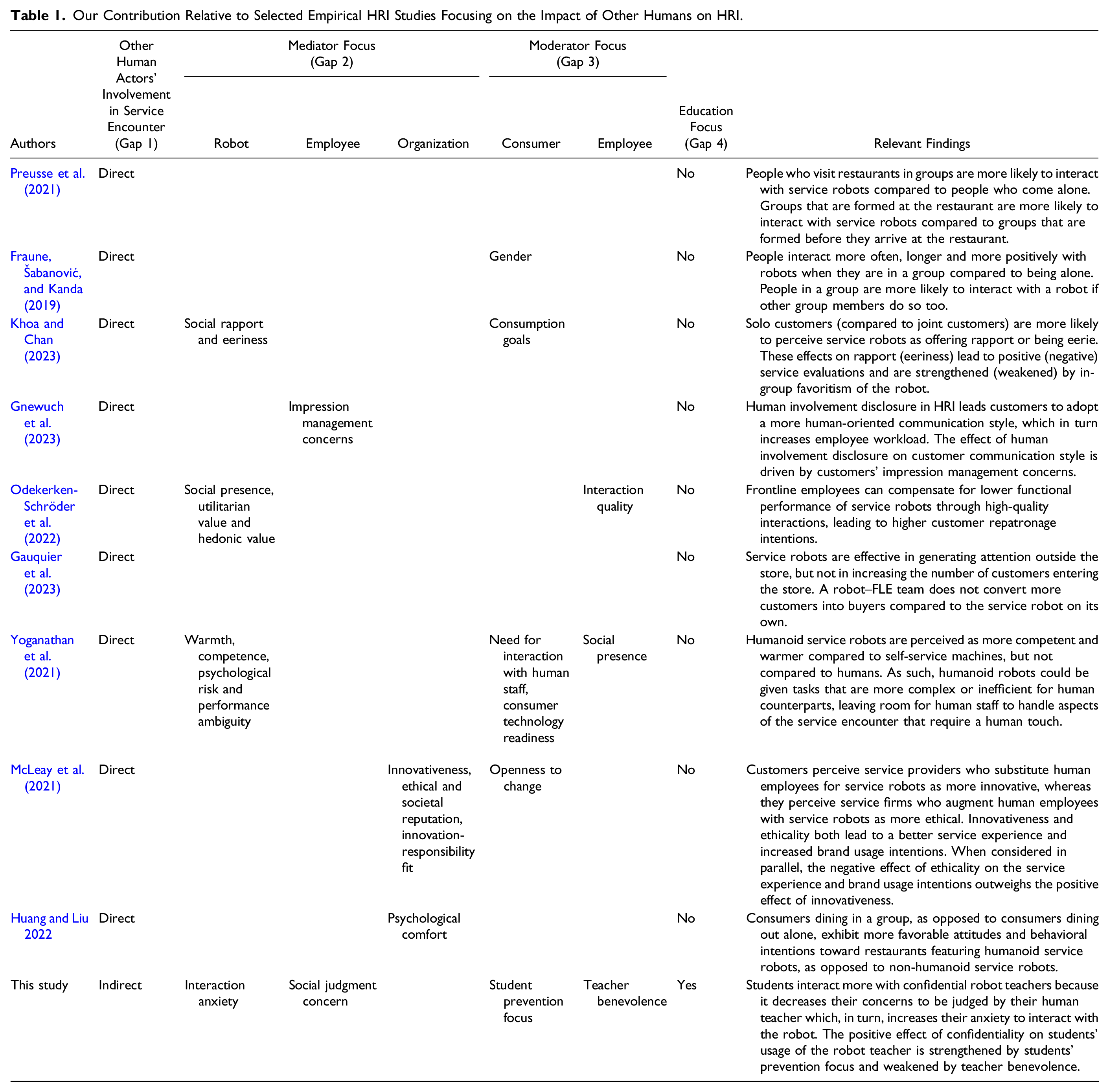

Table 1. Our Contribution Relative to Selected Empirical HRI Studies Focusing on the Impact of Other Humans on HRI.

The second gap concerns the psychological mechanisms explaining why other human actors influence HRI. While a few existing studies have explored mediating mechanisms, these mainly relate to consumers’ perceptions of the robot, such as its warmth, rapport, and social presence (e.g., Khoa and Chan 2023), or of the entire organization (e.g., McLeay et al. 2021). As a result, why the third actor in the service triad (i.e., the human employee) affects HRI remains poorly understood (see Gnewuch et al. 2023, for an exception). In our study, we elucidate a two-stage process in which concerns about being judged by the third triad actor (i.e., the human teacher) increase anxiety about interacting with the other actor (i.e., the robot teacher), in turn decreasing usage of the robot. Thus, we provide a more direct test of the cognitive underpinnings of other human actors being present during HRI in service contexts.

Third, we provide a more holistic view of the service triad by identifying boundary conditions under which human-in-the-loop approaches influence robot usage. We show which types of teachers have a more prominent impact on student-robot interactions and which students are more affected by a human (teacher)-in-the-loop. Boundary conditions related to personal characteristics in HRI have received limited attention in this literature stream (see Table 1). So far, research has mostly identified rather broad personal characteristics on the consumer side, such as gender (Fraune, Šabanović, and Kanda 2019), as shaping responses to robots. We extend these findings by highlighting the role of student prevention focus, a personality trait critical for understanding consumer reactions (Lanaj, Chang, and Johnson 2012). Moreover, research has rarely examined how personal characteristics of the other human actor (e.g., the employee) affect HRI. We address this gap by investigating how teacher benevolence, a crucial driver of interpersonal attitudes and actions (Sverdlik, Oreg, and Berson 2022), impacts student-robot interaction.

Finally, we contribute to the service literature by taking a deep dive into the transformative service sector of education, which has largely been overlooked in HRI research.¹ Education is an essential service setting that both needs and struggles with digitalization and the implementation of robots and AI (Fisk et al. 2022). Due to fundamental differences between education and other service settings (e.g., consumers, that is, students, must take exams in which they are evaluated by the provider; Díaz-Méndez and Gummesson 2012), existing literature can provide only limited guidance for successful HRI in this domain. Hence, a better understanding of HRI in education and of the impact of teacher-in-the-loop approaches on student-robot interactions is urgently needed.

In summary, a deep examination of the literature reveals substantial gaps in scholarly understanding of how other human actors impact HRI. The present research tackles these.

Teacher-in-the-Loop

Social context shapes the way in which people behave and interact (Argo and Dahl 2020). For HRI, one crucial component of the social context is the so-called human-in-the-loop (i.e., a human who monitors or oversees the HRI). So far, the literature on AI algorithms and robots has assumed that having such a human-in-the-loop makes consumers more at ease when using the technology. For instance, Longoni, Bonezzi, and Morewedge (2019) show that consumer resistance against medical AI can be mitigated if a human doctor remains in charge of making the final decision. The assumption that consumers need or want human supervision in their HRI is similarly acknowledged amongst policymakers and managers. For example, the European Commission advocates for the implementation of human-in-the-loop approaches in Article 14 of its AI Act (European Commission 2024). Similarly, Khan Academy integrated a human-in-the-loop approach for its ChatGPT-based robot teacher Khanmigo (Khan 2023).

In educational settings, such approaches are termed “teacher-in-the-loop.” Researchers have proposed that oversight over student-robot interactions allows human teachers to better understand the students’ learning processes and offer more personalized support (Hwang et al. 2020). Further, many have argued that teachers need to be involved because of various forms of AI bias and because humans generally perceive robots as lacking empathy and skills regarding ethical questions (Akter et al. 2021). In sum, the “human-in-the-loop is often advocated as a panacea against concerns about AI-powered machines” (Krügel, Ostermaier, and Uhl 2023, p. 1). To complement this perspective, we introduce the concept of robot teacher confidentiality and show the psychological downsides of keeping teachers in-the-loop.

Robot Teacher Confidentiality

We argue that robot teacher confidentiality positively impacts student usage of robot teachers. In line with existing research, we define confidentiality as “the perceived ability to carry out an externalization tactic that restricts the information flow in terms of what is disclosed and who gets to see it” (Dinev et al. 2013, p. 301). In our research, we operationalize robot teacher confidentiality (vs. nonconfidentiality) as the robot teacher making the interactions between the students and the robot unavailable (vs. available) to the human teacher.

Consumer research shows that merely having a social audience can negatively impact people’s attitudes and behaviors (Argo and Dahl 2020). For example, the presence of others makes retail shoppers avoid actions that may project a negative image (Argo, Dahl, and Manchanda 2005) and makes people more cautious during HRI (Gnewuch et al. 2023). These findings are mirrored in impression management theory. This theory suggests that people are deeply concerned with how others view them (Leary and Kowalski 1990), leading them to adjust their behaviors strategically to portray a positive version of themselves and avoid negative judgment by others (Bolino et al. 2008). Impression management concerns are activated by a social audience (Leary and Kowalski 1990), which we argue a human teacher-in-the-loop represents. Importantly, people often feel that they can disclose themselves more freely when interacting with a robot or AI (Kim et al. 2022). Yet, such carefree attitudes vanish when they know that their actions can be observed by another human (Raveendhran and Fast 2021). In our context, this means that students would not want to be perceived as incompetent or as lacking relevant knowledge. As a result, they may alter their interactions with a nonconfidential robot teacher (i.e., when a human teacher is in-the-loop; cf. Gnewuch et al. 2023). For example, they might refrain from asking questions or seeking additional input or help, as they worry it signals inadequacy (Aleven et al. 2003). On this basis, we postulate that robot teacher nonconfidentiality leads students to reduce their usage of the robot teacher.

By contrast, we argue that a confidential robot teacher makes students feel like they can interact freely and utilize the robot without having to worry. Especially in education, feeling free and not anxious means that students ask more questions and are more likely to voice a lack of understanding (Ryan, Pintrich, and Midgley 2001). Thus, we hypothesize:

H1: Students will make more use of a confidential (vs. nonconfidential) robot teacher.

Empirical Overview

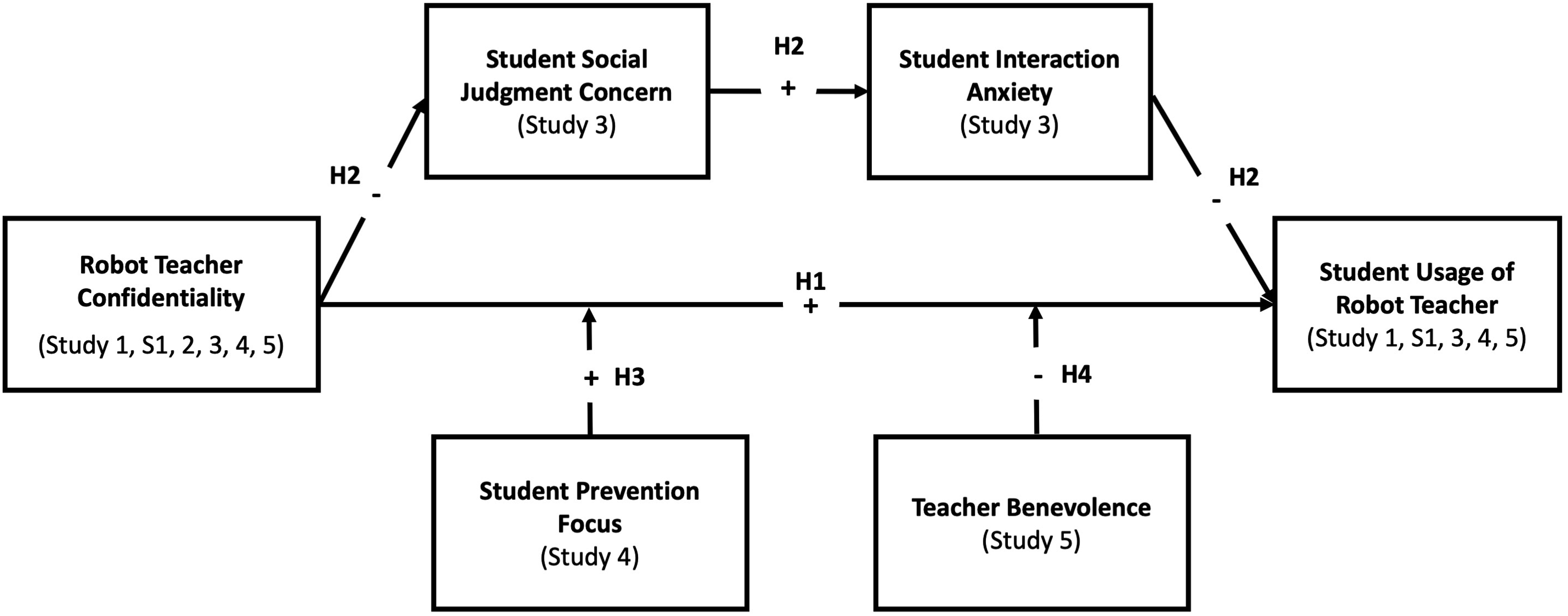

To investigate the effect of robot teacher confidentiality on student usage of the robot teacher, we conducted six complementary studies that employed different methodological approaches (i.e., two laboratory experiments, one qualitative field study, two field experiments, and one online experiment) and measured usage behaviorally. First, we provide proof-of-concept for the effect of robot teacher confidentiality in a laboratory experiment with a physical robot teacher (Study 1, N = 110) and extend these effects to virtual robot teachers (Study S1, N = 128). Leaving the laboratory context, we conducted a qualitative field study to inductively identify potential psychological mechanisms underlying the observed relationship as well as key boundary conditions using state-of-the-art topic modeling (Study 2, N = 87). We find support for the mediating mechanisms of social judgment concerns and interaction anxiety in a large field experiment (Study 3, N = 1,128). Finally, in another field experiment (Study 4, N = 247) and an online experiment (Study 5, N = 312), we show that the relationship between robot teacher confidentiality and student usage is moderated by student prevention focus (Study 4) and teacher benevolence (Study 5). Figure 1 shows our conceptual framework.

Figure 1. Conceptual framework.

Study 1

The purpose of Study 1 was to investigate whether students indeed make more use of a confidential (vs. nonconfidential) robot teacher within a controlled laboratory setting. Using various university communication channels, we recruited 120 participants to take part in an “educational study.” Each participant received €15 as compensation for their time. In line with recommendations for dealing with outliers (Aguinis, Gottfredson, and Joo 2013; Coxe, West, and Aiken 2009), we removed four participants whose data had an excessively high influence on the estimated coefficients (as indicated by their DFBETAs). In addition, six participants were excluded because they did not read the instructions carefully, leaving a final sample of 110 participants (M age = 22.57; 58% female). The study employed a two-cell (Robot teacher confidentiality: confidential vs. nonconfidential) between-subjects design.
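The DFBETA-based outlier screen described above can be illustrated with a minimal sketch. It assumes the Study 1 model is a Poisson GLM (log link) with a single 0/1 confidentiality dummy, for which the maximum-likelihood estimates have a closed form, and it computes an exact leave-one-out (deletion) DFBETAS for the slope: the change in the slope when one participant is dropped, divided by the slope's standard error in the reduced fit. Statistical software typically reports a one-step approximation instead, and the cutoff the authors applied is not stated here; the function names, the toy counts, and the `2 / sqrt(n)` rule of thumb mentioned below are illustrative assumptions, not the study's data or procedure.

```python
import math


def poisson_binary_fit(y, x):
    """Closed-form MLE of a Poisson GLM, log(mu) = b0 + b1 * x, with x in {0, 1}.

    Returns (b0, b1, se_b1); se_b1 comes from the inverse Fisher information.
    """
    n1 = sum(1 for xi in x if xi == 1)
    n0 = len(x) - n1
    s1 = sum(yi for yi, xi in zip(y, x) if xi == 1)
    s0 = sum(yi for yi, xi in zip(y, x) if xi == 0)
    if s1 == 0 or s0 == 0:
        raise ValueError("slope is undefined when a group has a total count of zero")
    b0 = math.log(s0 / n0)                # log mean count in the reference group
    b1 = math.log((s1 / n1) / (s0 / n0))  # log rate ratio between the two groups
    se_b1 = math.sqrt(1 / s1 + 1 / s0)    # Var(b1) = 1/sum(y | x=1) + 1/sum(y | x=0)
    return b0, b1, se_b1


def deletion_dfbetas_slope(y, x):
    """Exact leave-one-out DFBETAS for the slope, one value per observation."""
    _, b1_full, _ = poisson_binary_fit(y, x)
    scores = []
    for i in range(len(y)):
        y_del = y[:i] + y[i + 1:]
        x_del = x[:i] + x[i + 1:]
        _, b1_del, se_del = poisson_binary_fit(y_del, x_del)
        scores.append((b1_full - b1_del) / se_del)
    return scores


# Hypothetical prompt counts (NOT study data): x = 1 confidential, 0 nonconfidential.
prompts = [7, 8, 6, 7, 6, 6, 5, 40]
confidential = [1, 1, 1, 1, 0, 0, 0, 0]
dfbetas = deletion_dfbetas_slope(prompts, confidential)
most_influential = max(range(len(dfbetas)), key=lambda i: abs(dfbetas[i]))
print(most_influential, round(dfbetas[most_influential], 2))
```

In this toy example the single extreme count of 40 dominates the slope. Note that with only eight observations, a `2 / sqrt(n)` cutoff (about 0.71) would also flag the three ordinary nonconfidential counts, which is one reason such rules of thumb are usually combined with inspection of the flagged cases.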

Procedure

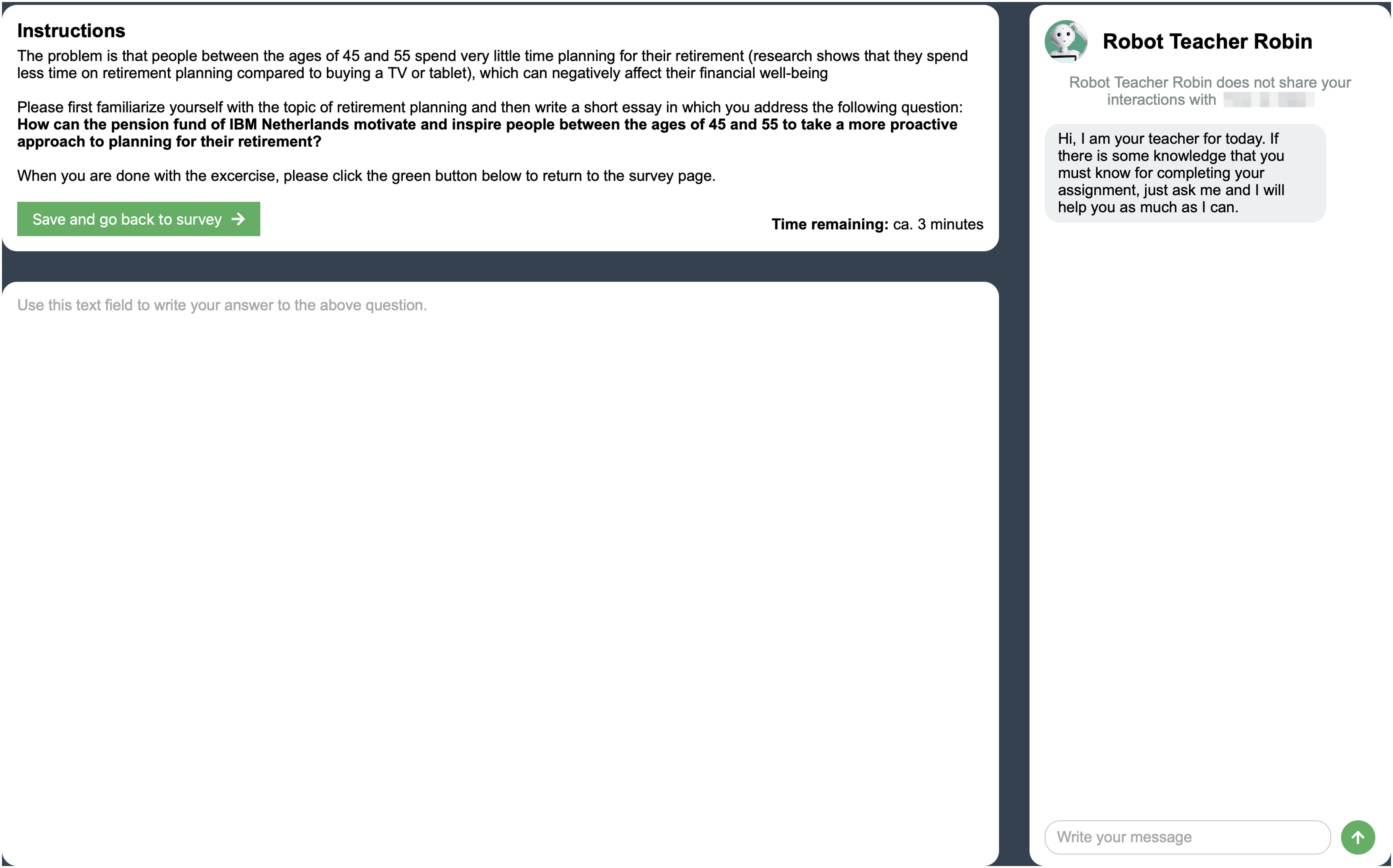

To increase the relevance and validity of the study, participants were asked to help a marketing professor at their university with a real-life business challenge for an actual client of the institution’s scientist-practitioner hub. In addition, we truthfully² told participants that the five best solutions would be pitched to the client and that the respective students would each receive a €20 Amazon gift voucher. Thereafter, we gave participants 12 minutes to work on the assignment, which required students to ideate how a European pension fund could better motivate people to plan for retirement more proactively (see Web Appendix A-1).

Participants were informed that they could interact with and get help from Robot Teacher Temi. Temi is a 100 cm tall, autonomous service robot that can move through rooms independently. In addition, it has a built-in camera as well as voice and audio systems (Figure 2). To give the robot natural language communication capabilities, we ran a specifically instructed GPT-3 model on the robot (see Web Appendix B-1). This GPT-3 model was “fed” with relevant background information so that it could provide participants with tailored guidance on the task as well as engage in sophisticated conversations through natural language generation (Floridi and Chiriatti 2020). Participants were randomly assigned to one of two conditions. In the confidential condition, participants were informed that the robot teacher would not share their interactions (e.g., questions and prompts) with the professor. In the nonconfidential condition, participants were informed that the robot teacher would share their interactions with the professor. This information was provided in written form before participants started the assignment. In addition, it remained visible throughout the experiment on a lanyard worn around the robot’s neck (Figure 2).

Figure 2. Robot Teacher Temi.

Measures

The dependent variable of the experiment was how much students made use of the robot teacher. This was operationalized as the number of prompts (e.g., questions or commands) posed to the robot teacher. We relied on this measure as prompts are the only way to use the robot teacher, therefore reflecting and quantifying actual usage. Such an operationalization has also been employed by previous studies on HRI in education (e.g., Klos et al. 2021).

Results

A manipulation check (“Robot Teacher Robin will keep our interactions confidential”; 1 = Strongly disagree, 7 = Strongly agree) showed that participants perceived the confidential robot as significantly more confidential than the nonconfidential robot (M confidential = 5.89, M nonconfidential = 2.98, t(92) = −10.34, p < .001, d = −1.94).

Given that the number of prompts to the robot teacher takes the form of count data, we used a generalized linear model with a Poisson distribution to analyze the relationship between robot teacher confidentiality and the number of interactions (Crawley 2012).³ Supporting H1, the results show that students made more use of the confidential than the nonconfidential robot teacher (b = 0.16, SE = 0.07, p = .03). More specifically, participants sent M = 7.30 messages on average in the confidential condition, compared with M = 6.25 messages in the nonconfidential condition. For all studies, please see Web Appendix C for detailed descriptive statistics and Web Appendix D for detailed analysis outputs.
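Because the Study 1 model has a single binary predictor, the Poisson GLM coefficient can be checked by hand: with a log link, the maximum-likelihood slope equals the log of the ratio of the two group means, and its Wald standard error is the square root of the summed reciprocals of the two group totals. The minimal sketch below applies this to the reported means (7.30 vs. 6.25 prompts). The per-condition sample sizes of 55 and 55 are an assumption, since only the total N = 110 is reported here, the helper names are hypothetical, and the sketch ignores overdispersion, which a full analysis would check.

```python
import math


def poisson_slope_from_means(mean_treat, mean_ref):
    """MLE slope of log(mu) = b0 + b1 * x for a 0/1 predictor: the log rate ratio."""
    return math.log(mean_treat / mean_ref)


def poisson_slope_se(mean_treat, n_treat, mean_ref, n_ref):
    """Wald SE of the slope: sqrt(1/total_count_treat + 1/total_count_ref)."""
    return math.sqrt(1 / (mean_treat * n_treat) + 1 / (mean_ref * n_ref))


def two_sided_wald_p(estimate, se):
    """Two-sided p-value for z = estimate / se under a standard normal."""
    z = estimate / se
    return math.erfc(abs(z) / math.sqrt(2))


# Reported means; the 55/55 split is an ASSUMPTION (only N = 110 is reported).
b = poisson_slope_from_means(7.30, 6.25)
se = poisson_slope_se(7.30, 55, 6.25, 55)
p = two_sided_wald_p(b, se)
rate_ratio = math.exp(b)  # multiplicative change in the expected number of prompts
print(f"b = {b:.2f}, SE = {se:.2f}, p = {p:.3f}, rate ratio = {rate_ratio:.3f}")
```

Under these assumptions the slope rounds to 0.16 and the SE to 0.07, in line with the reported coefficient. Exponentiating b recovers the rate ratio 7.30 / 6.25 ≈ 1.17, that is, roughly 17% more prompts in the confidential condition.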

Discussion

Study 1 provides initial support for our hypothesis that students make more use of confidential (vs. nonconfidential) robot teachers. Yet, while our study employed a physical robot teacher, most existing robot teachers, for example, at Khan Academy (Khan 2023) and Harvard University (Phelan 2023) are virtual. In fact, virtual robot teachers offer many advantages, such as the ability to provide personalized service to many students at once and at low cost or to be instantly available and accessible outside of the physical classroom (Wirtz et al. 2018). Given that virtual robots might evoke different reactions among users compared to physical robots (e.g., Blut et al. 2021), generalizing our findings to virtual robots is thus conceptually relevant, allows for large-scale data collection, and increases external validity due to its alignment with robot teachers in practice. Therefore, in the following studies, we investigate how students react to virtual robot teachers. We note that before these studies, we conducted a lab study in which we replicated the findings from Study 1 with a virtual robot teacher (Study S1; Web Appendix E).

Study 2

Study 2 aimed to identify key psychological mechanisms and boundary conditions of the observed relationship between robot teacher confidentiality and usage of the robot teacher using a qualitative methodology. In contrast to the other studies in this research, Study 2 takes an inductive approach that seeks to identify relevant variables and relationships first. Moving from a laboratory to a field context, Study 2 took place during a regular meeting of an undergraduate marketing course at a European university. Eighty-nine students participated in the study (M age = 22.21; 63% female). Two students were removed from the sample because they violated the instructions by using third-party generative AI tools (i.e., ChatGPT) to complete the assignment or the questionnaire, leaving a final sample of 87 respondents. As an additional incentive, we awarded the five students who provided the best solutions €20 Amazon gift vouchers. The study employed a two-cell (Robot teacher confidentiality: confidential vs. nonconfidential) between-subjects design.

Procedure

Throughout their marketing course, students worked on a group assignment for a European telecommunications provider which required them to identify and pitch potential areas for a product portfolio expansion. This group assignment constituted 40% of the students’ final course grade. In addition, the best teams would pitch their ideas directly to the company. The experiment took 1 hour, of which students were given 40 minutes to develop five potential ideas for the company’s product portfolio expansion, argue their feasibility and impact, and ultimately rank the ideas from best to worst (Web Appendix A-2).

As in Study S1, students had the option to make use of a virtual robot teacher named Robin (Figure 3). Yet, in this study we used OpenAI’s ChatGPT model to power the robot teacher, as this model promises better conversational capabilities than GPT-3. Again, this model was provided with task-relevant background information (see Web Appendix B-2). We randomly assigned participants to either the confidential or nonconfidential condition and truthfully informed them that their human teacher would (not) be able to see their interactions with the robot teacher.

Figure 3. Interface for Robot Teacher Robin.

Our experiment was scheduled during an in-class meeting at the beginning of the course. Besides introducing the assignment by following a prewritten script (Web Appendix F), the human teacher was instructed to minimally interact with students. Students were only informed about the robot teacher when reading the instructions of the assignment (Web Appendix A-2). These instructions made clear that using the robot teacher was optional.

Immediately after working on the assignment, we asked students to reflect on their interactions with Robot Teacher Robin in a short, structured online interview. Students had about 20 minutes to answer several open-ended questions in writing. Participants were asked how the fact that Robot Teacher Robin did (not) share their interactions with their human teacher made them feel and affected their behavior (see Web Appendix G for the full list of questions). Students were required to type at least 250 characters for each question so that the responses would be deep enough for the topic modeling approach described below (Banks et al. 2018; van der Velde and Gerpott 2023).

Analysis

To identify patterns in student perceptions and behavior, we conducted a Structural Topic Modeling analysis. This type of analysis enables a “bottom-up,” inductive analysis of qualitative data. It relies on an unsupervised machine learning algorithm that captures clusters of co-occurring words (Banks et al. 2018). Compared to traditional qualitative methods, topic modeling allows for an automated and data-driven process that facilitates more replicable and objective qualitative data analysis and avoids drawbacks of human coding such as low interrater reliability (Schmiedel, Müller, and vom Brocke 2019). In our analyses, we followed recent examples of structural topic modeling (e.g., van der Velde and Gerpott 2023) and best practice guidelines by Banks et al. (2018), and we used the R stm package (Roberts, Stewart, and Tingley 2019). For a detailed description of this approach, data preparation, the topic model estimation, and the interpretation process, we refer to those sources and Web Appendix H.

Results

Confidential Condition

For the confidential condition (i.e., the robot teacher not sharing student interactions), the topic modeling analysis reveals that student experiences can be grouped into three topics (see Web Appendix I, Table I-I for topic labels, descriptions, FREX words, and key quotes). Mirroring our theoretical arguments and previous findings, two of these themes indicate that students made more use of the robot teacher because it was confidential. Students in the “feeling free to share ideas and thoughts” theme (Theme #1a) describe how they felt more “confident” about interacting with the robot teacher because nobody would be able to oversee and excoriate them. For example, one student noted that “I was more willing to share my thoughts without any filters or restrictions. I wrote any ideas that went through my mind because I knew I would reflect on them without being judged.” They further describe how this made them engage with the robot teacher more. Reflections within the “assured to ask questions” theme (Theme #2a) focus more specifically on the act of asking questions, describing how students felt “at ease” and less “worried” about asking the robot teacher any questions they had, without being concerned that their human teacher would find their questions “stupid.” One student, for example, said that “it makes me feel much more assured and at ease to ask any questions to Robin. As any shallow or dumb questions wouldn’t be shared and I can feel free to ask any confusing in mind. As I’m worried the tutor might assess the quality of the questions I asked.” The third theme, labeled “indifferent about robot confidentiality” (Theme #3a), indicates that not all students were affected by the robot teacher’s confidentiality.
Reflections within this theme show that some students would instead be fine with their human teacher reading the conversation, and some even hoped to benefit from it: “I don’t really care if the information got shared or not. [..] In fact, it doesn’t matter at all if our tutor saw the conversation or not, maybe he would have some extra input even.”

Nonconfidential Condition

For the nonconfidential condition, three themes emerge that largely mirror the themes observed for the confidential condition (Web Appendix I, Table I-II). In particular, in reflections belonging to the “worried about being judged” theme (Theme #1b), students describe how they felt “uncomfortable” interacting with the robot teacher and were anxious about being evaluated or judged based on the questions they asked. They further report how this made them use the robot teacher less. For instance, one student noted that “I asked only basic questions because I was afraid to be evaluated based on the questions I asked.” Similarly, statements within the “limiting use to protect privacy” theme (Theme #2b) describe how some students did not feel “comfortable” with the robot teacher sharing their interactions and appeared anxious about other people having access to this type of data. Again, students report not having used the robot teacher as much as they normally would have. For example, one student noted that “I would prefer if it didn’t share my interactions with my tutor, as I like to have some privacy. I really like privacy and I don’t feel comfortable if this information is shared with my tutor.” Lastly, some students reported not having been negatively affected by the robot teacher sharing their interactions. On the contrary, reflections within the “benefits of nonconfidential robot teachers” theme (Theme #3b) mention various advantages of their human teacher seeing the interactions. For example, students reported thinking more for themselves or hoping to receive more personalized feedback from the human teacher. In the words of one participant: “The idea that Robot Teacher Robin is going to share my interactions with the tutor made me think more individually than just take over the ideas from the Robot.”

Discussion

The topic modeling analyses provide three main insights. First, many students report that a confidential robot teacher encouraged them to interact with it freely and intensively, while a nonconfidential robot teacher made them avoid using it. This is in line with our main argument that confidential robot teachers come with positive psychological and behavioral benefits. Second, many students appear to respond in a less anxious way to the confidential robot teacher because its confidentiality makes them less worried that their human teacher will judge them. In contrast, students describe avoiding a nonconfidential robot teacher because they are worried that their human teacher will judge them, which makes them uneasy about interacting with the robot. Consequently, concerns of social judgment (Kim et al. 2022) and technology-induced interaction anxiety (Spake et al. 2003) appear to be potential sequential mechanisms underlying the observed negative effect of robot teacher nonconfidentiality. We investigate these mechanisms in more detail below. Lastly, a substantial share of students (ca. 38%) reported having no negative attitudes toward, or even seeing value in, nonconfidential robot teachers. This suggests that there may be important boundary conditions. For example, in terms of personality traits, students who are less anxious, less afraid of disapproval by others, or less afraid of making mistakes more generally may be less susceptible to concerns of negative social judgment. As explained further below, we integrate insights from impression management theory (Kacmar and Tucker 2016) with regulatory focus theory (Higgins and Cornwell 2016) to suggest that students’ prevention focus may moderate our main hypothesis. In addition, some students were confident that their human teacher would help (rather than judge) them if the teacher could see their interactions with the robot teacher. Therefore, we argue that if the human teacher appears to be benevolent (i.e., seems to care about students’ best interests and to be fair; Bock, Folse, and Black 2016), this might further moderate the impact of robot teacher confidentiality. Based on the inductive insights obtained from structural topic modeling, we next formulate three additional hypotheses, which we test deductively in Studies 3 to 5.

The Mediating Roles of Social Judgment Concern and Interaction Anxiety

The topic modeling analysis in Study 2 provides qualitative support for our earlier argument that students adapt and restrict their behavior when interacting with nonconfidential robot teachers. More importantly, Study 2 also sheds light on the potential psychological mechanisms underlying this behavioral adjustment. More specifically, students who are confronted with (non)confidential robot teachers seem to experience a two-stage psychological process, with each stage centered on a different actor of the service triad. First, with respect to the human counterpart (i.e., the teacher), (non)confidential robot teachers appear to reduce (increase) students’ concerns about being socially judged by their human teacher (i.e., the employee in the service triad). Second, these concerns carry over to the other actor in the service triad (i.e., the robot teacher), making students more anxious about interacting with it. This interaction anxiety, in turn, may inhibit robot teacher usage. Based on these insights, we argue that student social judgment concern and, in turn, interaction anxiety can help explain the relationship between robot teacher confidentiality and students’ usage of the robot teacher. We describe our reasoning for this serial mediation in more detail below.

Impression management theory suggests that people have a fundamental desire to project and maintain a positive self-image to others. As a result, social judgment concerns may lead individuals to avoid interactions that could create negative impressions (Bolino et al. 2008). Social judgment concerns are worries about being negatively evaluated by others and have been shown to be activated by the presence of other human actors in embarrassing or sensitive service encounters (Kim et al. 2022). By contrast, people experience reduced fears of social judgment in HRI because they believe that robots do not engage in human-like social evaluations (Raveendhran and Fast 2021). In an education context, many students feel that asking questions or seeking help signals inadequacy (Aleven et al. 2003) and worry about subsequent judgment from their teacher (Ryan, Pintrich, and Midgley 2001). Therefore, we argue that students exhibit higher (lower) social judgment concern when their human teacher can(not) oversee their student-robot interactions. We further argue that social judgment concern does not transmit the effect of confidentiality directly but rather precedes technology-induced interaction anxiety, which ultimately affects usage of robot teachers.

Anxiety is a negative emotion linked to worries and tension, representing the polar opposite of comfort (Spake et al. 2003). Interaction anxiety entails feeling uncomfortable or worried about an upcoming or ongoing interaction. With regard to the presence or absence of another human, research suggests that people may feel less anxious when interacting solely with a robot because they are less worried about being judged (Kim et al. 2022). Introducing human social presence into (robot-assisted) service interactions can elicit feelings of discomfort (van Doorn et al. 2023). Worries about being negatively judged by humans may thus increase interaction anxiety relating to a robot (Gnewuch et al. 2023). As such, we argue that students’ concerns about being judged on their interactions by one actor (i.e., the human teacher) affect their anxiety about interacting with the other actor in the service triad (i.e., the robot teacher).

In turn, feeling anxious about interactions can have a profound impact on people’s behavior. In general, research has shown that feeling anxious often makes people refrain from productive behaviors during interactions, such as exchanging ideas or asking for support (Ryan, Patrick, and Shim 2005). In education in particular, it is a seminal notion that anxiety reduces help-seeking from potentially helpful sources such as peers or teachers (Russell and Topham 2012). Therefore, we expect that students will ask fewer questions and share fewer ideas with a robot teacher when they feel anxious about interacting with it.

Thus, employing an impression management lens and considering existing research on social judgment concerns and technology-induced anxiety, we argue the following: students experience more (less) social judgment concern when the robot teacher shares (does not share) their interactions with their teacher, in turn increasing (reducing) students’ interaction anxiety, ultimately making students use the robot less (more).

H2: The effect of robot teacher confidentiality (vs. nonconfidentiality) on usage of the robot teacher is serially mediated by social judgment concern and interaction anxiety.

The Moderating Roles of Student Prevention Focus and Teacher Benevolence

The topic modeling further shows that not all students were affected equally by the robot teacher (not) sharing their interactions. We hypothesize two key boundary conditions that moderate this relationship, namely, students’ prevention focus and teacher benevolence. We include student prevention focus as a potential moderator because prior studies identify it as a key trait influencing individuals’ fear of being perceived negatively (Lanaj, Chang, and Johnson 2012). In contrast to a promotion focus, which is associated with achieving gains, a prevention focus is concerned with avoiding losses (Higgins and Cornwell 2016). Importantly, prevention focus is seen as a key factor in shaping impression management concerns (Kacmar and Tucker 2016) and has been found to be relevant in service situations (Lechner and Mathmann 2021) and HRI (Baek and Kim 2023). We focus on prevention focus due to its strong relationships with anxiety and fear of negative judgment (Lanaj, Chang, and Johnson 2012).

We suggest that the positive relationship between robot teacher confidentiality and student-robot interaction intensity is strengthened by students’ prevention focus. A stronger prevention focus implies a concern for safety, causing people to screen their environment for cues that might indicate insufficient security in interactions (Winterheld and Simpson 2011). Highly prevention-focused individuals behave in ways that aim to avoid failures, inaccuracies, and miscues (Lanaj, Chang, and Johnson 2012). In terms of impression management tactics, past research has shown that prevention-focused individuals actively try to avoid behavior that could result in negative judgment from others (Kacmar and Tucker 2016). As such, for students with a stronger prevention focus, robot teacher (non)confidentiality should be a primary concern driving how they choose to interact with the robot, leading them to restrict their conversations with a robot teacher if their human teacher could view them. By contrast, students with a weaker prevention focus should be less cautious and engage in potentially productive behaviors even if they may fail or look bad in front of others (Pham and Higgins 2005). Therefore, even if a robot teacher shares their interactions with the human teacher, these individuals should still ask questions openly. Hence, we suggest that:

H3: The relationship between robot teacher confidentiality and usage of the robot teacher is moderated by student prevention focus, such that this relationship is stronger for students with a high (vs. low) prevention focus.

Not only student but also (human) teacher characteristics might influence how robot teacher confidentiality affects student usage. Considering the topic modeling results above and insights from impression management theory, we focus here on the concept of benevolence. Benevolence has been conceptualized in an education context as the assurance that a teacher has the students’ best interests at heart and will protect them by demonstrating goodwill, empathy, and fairness (Hoy and Tschannen-Moran 1999). Benevolence plays a key role in determining how people navigate consumer and service situations (Bock, Folse, and Black 2016; White 2005). Benevolent people create a trusting environment and make others feel safe and supported (Zhang, Li, and Liu 2023). The education literature highlights the role of teacher benevolence in shaping students’ behavior, allowing them to feel comfortable in interactions and to ask questions more freely (Ryan, Pintrich, and Midgley 2001). Therefore, we propose the human teacher’s perceived benevolence as a potential boundary condition.

We expect students to be less worried about being judged by their human teacher if they perceive this teacher to be benevolent. If students feel that their teacher puts genuine emphasis on their students’ well-being and success, they may feel that they can freely ask the robot teacher questions without the teacher negatively judging them. This expectation is in line with research showing that impression management concerns are less salient if students have a good relationship with their teacher (Snijders et al. 2022). By contrast, we expect that a nonbenevolent teacher increases impression management concerns. In particular, a teacher that is perceived to be less interested in doing good for their students creates a climate in which students are afraid of asking questions that may signal lack of knowledge or skills (Ryan, Patrick, and Shim 2005). Hence, we hypothesize that:

H4: The relationship between robot teacher confidentiality and usage of the robot teacher is moderated by teacher benevolence, such that this relationship is weaker for students with a benevolent (vs. nonbenevolent) teacher.

Study 3

The purpose of Study 3 is twofold. First, the study seeks to demonstrate the external validity of our earlier findings in a field experiment. Second, it seeks to provide support for the proposed serial mediation through social judgment concern and interaction anxiety. The field experiment was conducted in an undergraduate marketing course at a European university. In total, 1,243 students participated in the experiment, working on an individual Pass/Fail assignment during a regular course meeting. After excluding participants who failed the attention check, whose response time deviated more than three standard deviations from the mean (Berger and Kiefer 2021), or who had an excessively high impact on the estimated coefficients (as indicated by their DFBETAs; Aguinis, Gottfredson, and Joo 2013; Coxe, West, and Aiken 2009), our final sample comprised 1,128 students (M age = 18.71; 37% female). We again used a two-cell (robot teacher confidentiality: confidential vs. nonconfidential) between-subjects design.
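The response-time criterion in the exclusion procedure described above can be sketched as follows. This is a minimal illustration under assumptions the text does not state: that the mean and standard deviation are computed once over the full pre-exclusion sample, that the sample (n − 1) standard deviation is used, and that the cutoff is two-sided. The function name `within_three_sd` is hypothetical, and the DFBETA-based screening is not reproduced here.

```python
from statistics import mean, stdev


def within_three_sd(times, k=3.0):
    """Return the indices of participants whose response time lies within
    k sample standard deviations of the sample mean (two-sided cutoff).

    Assumption: mean and SD are computed once on the full sample before
    any exclusion; the cutoff is not re-applied iteratively.
    """
    m = mean(times)
    s = stdev(times)  # sample SD (n - 1 denominator)
    return [i for i, t in enumerate(times) if abs(t - m) <= k * s]


if __name__ == "__main__":
    # Hypothetical response times in seconds: 20 typical values, one extreme.
    times = [600] * 20 + [30000]
    kept = within_three_sd(times)
    print(len(kept))  # 20: the extreme response time is excluded
```

Note that with very small samples a single outlier cannot exceed three standard deviations at all, so the rule is only meaningful at sample sizes like those in these studies.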

Procedure and Measures

The experiment took place during a regular group meeting of an introductory business course. During this group meeting, students worked on an individual self-reflection assignment. In the assignment, students were asked to reflect on their performance in the course as well as identify concrete actions they could take to improve their performance (Web Appendix A-3). Students had 20 minutes to complete the assignment and received instructions from their human teacher, similar to those in Study 2.

Students could again make use of the virtual robot teacher Robin and were randomly assigned to one of two conditions (i.e., nonconfidential vs. confidential). Again, the robot teacher was powered by an instructed ChatGPT model (see Web Appendix B-3). We measured student usage of the robot teacher as the number of messages sent to it. In addition, social judgment concern was measured with an 8-item scale adapted from Carleton, Collimore, and Asmundson (2007; e.g., “I worried about what my tutor would be thinking of me”; 1 = Strongly disagree, 7 = Strongly agree; α = 0.96) and interaction anxiety with a shortened version of the scale developed by Spake et al. (2003; “When (thinking about) sending messages to Robot Teacher Robin, I felt: Worry free – Worried, Very much at ease – Very uneasy, Secure – Insecure, Comfortable – Uncomfortable”; α = 0.93). 4
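The internal-consistency values (α) reported for these scales are Cronbach's alpha coefficients. As an illustration of how such a value is obtained, the sketch below implements the standard formula α = k/(k − 1) · (1 − Σσ²ᵢ / σ²ₜ), where k is the number of items, σ²ᵢ the variance of item i, and σ²ₜ the variance of respondents' summed scores. The data layout (one list per item) and the function name are assumptions for illustration; the text does not state which software computed the reported values.

```python
from statistics import pvariance


def cronbach_alpha(items):
    """Cronbach's alpha for k items.

    `items` is a list of k item columns; each column holds the n respondents'
    scores on that item (hypothetical layout). Population and sample
    variances give the same alpha because the n/(n - 1) factor cancels.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    # Each respondent's summed scale score across the k items.
    totals = [sum(col[j] for col in items) for j in range(n)]
    item_var_sum = sum(pvariance(col) for col in items)
    return (k / (k - 1)) * (1 - item_var_sum / pvariance(totals))


if __name__ == "__main__":
    # Two hypothetical 7-point items answered by five respondents.
    item_a = [1, 3, 4, 6, 7]
    item_b = [2, 3, 5, 6, 7]
    print(round(cronbach_alpha([item_a, item_b]), 3))
```

Identical items yield α = 1, and items whose covariance is zero yield α = 0, which bounds the interpretation of the 0.93 to 0.96 values reported above.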

Results

The same manipulation check as before indicated that students perceived the confidential robot as significantly more confidential than the nonconfidential robot teacher (M confidential = 5.37, M nonconfidential = 4.18, t(1,080) = −12.35, p < .001, d = −0.73).

To assess the direct effect of robot teacher confidentiality on student usage, we again used a generalized linear model with a Poisson distribution. Confirming our theorizing and replicating our earlier findings, the results of the analysis show that students interacted more with the confidential (vs. nonconfidential) robot teacher (b = 0.45, SE = 0.09, p < .001). More specifically, in the confidential condition, participants sent on average M = 0.56 messages, whereas they sent on average M = 0.36 messages in the nonconfidential condition.
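Because this model contains only an intercept and the 0/1 confidentiality dummy, the Poisson maximum-likelihood estimate has a closed form: the fitted means equal the two group means, so b = ln(M confidential / M nonconfidential), and exp(b) is the ratio of expected message counts. The sketch below checks this by fitting the same two-parameter model with Newton-Raphson on hypothetical toy data. Plugging in the rounded means reported above gives ln(0.56 / 0.36) ≈ 0.44, close to the reported b = 0.45; the small gap is consistent with rounding of the means. The function names are illustrative, and the closed form assumes both group means are positive.

```python
import math


def poisson_dummy_closed_form(mean_treated, mean_reference):
    """Coefficient of a 0/1 dummy in an intercept-plus-dummy Poisson GLM
    (log link): the log ratio of the two group means."""
    return math.log(mean_treated / mean_reference)


def fit_poisson_dummy(x, y, iters=50):
    """Newton-Raphson MLE for log(mu_i) = b0 + b1 * x_i with x_i in {0, 1}."""
    b0, b1 = math.log(sum(y) / len(y)), 0.0  # start at the pooled mean
    for _ in range(iters):
        mu = [math.exp(b0 + b1 * xi) for xi in x]
        # Score vector (gradient of the log-likelihood).
        u0 = sum(yi - mi for yi, mi in zip(y, mu))
        u1 = sum(xi * (yi - mi) for xi, yi, mi in zip(x, y, mu))
        # Fisher information; x is 0/1, so sum(x**2 * mu) == sum(x * mu).
        i00 = sum(mu)
        i01 = sum(xi * mi for xi, mi in zip(x, mu))
        i11 = i01
        det = i00 * i11 - i01 * i01
        # Newton step: (b0, b1) += inverse(information) @ score.
        b0 += (i11 * u0 - i01 * u1) / det
        b1 += (i00 * u1 - i01 * u0) / det
    return b0, b1


if __name__ == "__main__":
    # Hypothetical message counts: 1 = confidential, 0 = nonconfidential.
    x = [1] * 5 + [0] * 5
    y = [1, 0, 1, 0, 1, 0, 0, 1, 0, 0]
    b0, b1 = fit_poisson_dummy(x, y)
    print(round(b1, 4), round(poisson_dummy_closed_form(0.6, 0.2), 4))
    # Rounded means reported for Study 3:
    print(round(poisson_dummy_closed_form(0.56, 0.36), 2))  # 0.44
```

The same identity applies to the later single-dummy Poisson tests; with covariates or interaction terms added, the coefficient no longer reduces to a simple ratio of raw means.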

To test the hypothesized serial mediation, we used MPlus (Muthén and Muthén 2017). The results show a significant direct effect of confidentiality (b = 0.36, SE = 0.09, p < .001) and, importantly, a significant indirect effect through social judgment concerns and interaction anxiety (b = 0.03, SE = 0.01, p < .001). As such, confidentiality reduced social judgment concerns, which in turn reduced interaction anxiety, leading to higher usage of the robot teacher. Thus, Study 3 supports H2.
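In a serial mediation model of this kind, the specific indirect effect through both mediators is conventionally the product of three path coefficients: confidentiality → social judgment concern (a1), social judgment concern → interaction anxiety controlling for confidentiality (d21), and interaction anxiety → usage controlling for confidentiality and social judgment concern (b2). The sketch below illustrates that product-of-coefficients logic with ordinary least squares and a nonparametric bootstrap standard error on simulated data. It is a simplification, not the reported MPlus model: the study's outcome is a message count, whereas every equation here is linear, and the simulated path values, seed, and function names are hypothetical.

```python
import numpy as np


def ols(y, *predictors):
    """OLS coefficients [intercept, b_1, ..., b_k] via least squares."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


def serial_indirect_effect(x, m1, m2, y):
    """Product-of-coefficients indirect effect X -> M1 -> M2 -> Y."""
    a1 = ols(m1, x)[1]          # X -> M1
    d21 = ols(m2, x, m1)[2]     # M1 -> M2, controlling for X
    b2 = ols(y, x, m1, m2)[3]   # M2 -> Y, controlling for X and M1
    return a1 * d21 * b2


def bootstrap_se(x, m1, m2, y, n_boot=500, seed=0):
    """Nonparametric bootstrap SE of the serial indirect effect."""
    rng = np.random.default_rng(seed)
    n = len(y)
    draws = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample participants with replacement
        draws.append(serial_indirect_effect(x[idx], m1[idx], m2[idx], y[idx]))
    return float(np.std(draws, ddof=1))


def simulate_serial_data(n=20_000, seed=1, a1=-0.5, d21=0.6, b2=-0.4):
    """Hypothetical data with known paths; true indirect effect = a1*d21*b2."""
    rng = np.random.default_rng(seed)
    x = rng.integers(0, 2, size=n).astype(float)  # 1 = confidential
    m1 = a1 * x + rng.normal(size=n)              # social judgment concern
    m2 = d21 * m1 + rng.normal(size=n)            # interaction anxiety
    y = 0.3 * x + b2 * m2 + rng.normal(size=n)    # usage (continuous here)
    return x, m1, m2, y


if __name__ == "__main__":
    x, m1, m2, y = simulate_serial_data()
    est = serial_indirect_effect(x, m1, m2, y)
    se = bootstrap_se(x, m1, m2, y, n_boot=200)
    print(f"indirect = {est:.3f} (true 0.120), bootstrap SE = {se:.3f}")
```

The signs mirror the argument in the text: a negative a1 (confidentiality lowers concern) times a positive d21 times a negative b2 (anxiety lowers usage) gives a positive indirect effect of confidentiality on usage.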

Discussion

The insights gained in Study 3 are twofold. First, the study replicates the findings of the previous studies in a field context, demonstrating their external validity. Notably, our findings replicate even when outcomes related to student success were at stake and students operated in a naturalistic environment. Second, the study empirically validates the proposed serial mediation. More specifically, Study 3 shows that a confidential (vs. nonconfidential) robot teacher reduced social judgment concerns, which subsequently decreased interaction anxiety and, in turn, increased student usage of the robot teacher.

Study 4

Focusing on the first proposed boundary condition, Study 4 investigates whether students with a high (vs. low) prevention focus react more strongly to robot teacher confidentiality. We conducted a field experiment in a lecture of an Entrepreneurship course at a European university. After excluding students who failed the attention check, whose response time deviated more than three standard deviations from the mean (Berger and Kiefer 2021), or who had an excessively high impact on the estimated coefficients (as indicated by their DFBETAs; Aguinis, Gottfredson, and Joo 2013; Coxe, West, and Aiken 2009), our final sample included 247 participants (M age = 20.54; 45% female). As an additional incentive, the students with the five best solutions received a university-branded T-shirt. We used a two-cell (robot teacher confidentiality: confidential vs. nonconfidential) between-subjects design.

Procedure and Measures

During their Entrepreneurship course, students worked on a final course assignment that required them to create a start-up idea. This project accounted for 35% of their overall grade. We scheduled the experiment during a lecture, asking students to identify the biggest potential threat to their start-up’s long-term success and to come up with a potential solution to this threat. They were given 20 minutes to complete the assignment and received instructions from their human teacher similar to those in Studies 2 and 3. Students again had the option to make use of a virtual robot teacher named Robin. This robot teacher was powered by OpenAI’s ChatGPT model (Web Appendix B-4) and was accessible directly in the online environment through which they submitted their assignment. Students were randomly assigned to one of two conditions (i.e., confidential vs. nonconfidential).

We again measured student usage of the robot teacher as the number of messages sent to it. Student prevention focus was measured using a 7-item, shortened version of a commonly used scale (Lockwood, Jordan, and Kunda 2002; e.g., “In general, I am focused on preventing negative events in my life”; 1 = Strongly disagree, 7 = Strongly agree; α = 0.76).

Results

As a proxy for confidentiality, we asked students to indicate how discreet they perceived the robot teacher to be (i.e., “I perceive Robot Teacher Robin to be…”; 1 = Very indiscreet, 10 = Very discreet). This manipulation check showed that students perceived the confidential robot as significantly more discreet than the nonconfidential robot teacher (M confidential = 6.77, M nonconfidential = 5.61, t(215) = −3.91, p < .001, d = −0.51).

In accordance with the results of the previous studies, a generalized linear model with a Poisson distribution showed that students made significantly more use of the confidential (vs. nonconfidential) robot teacher (b = 1.26, SE = 0.25, p < .001). More specifically, in the confidential condition, participants sent on average M = 0.62 messages, whereas they sent on average M = 0.18 messages in the nonconfidential condition.

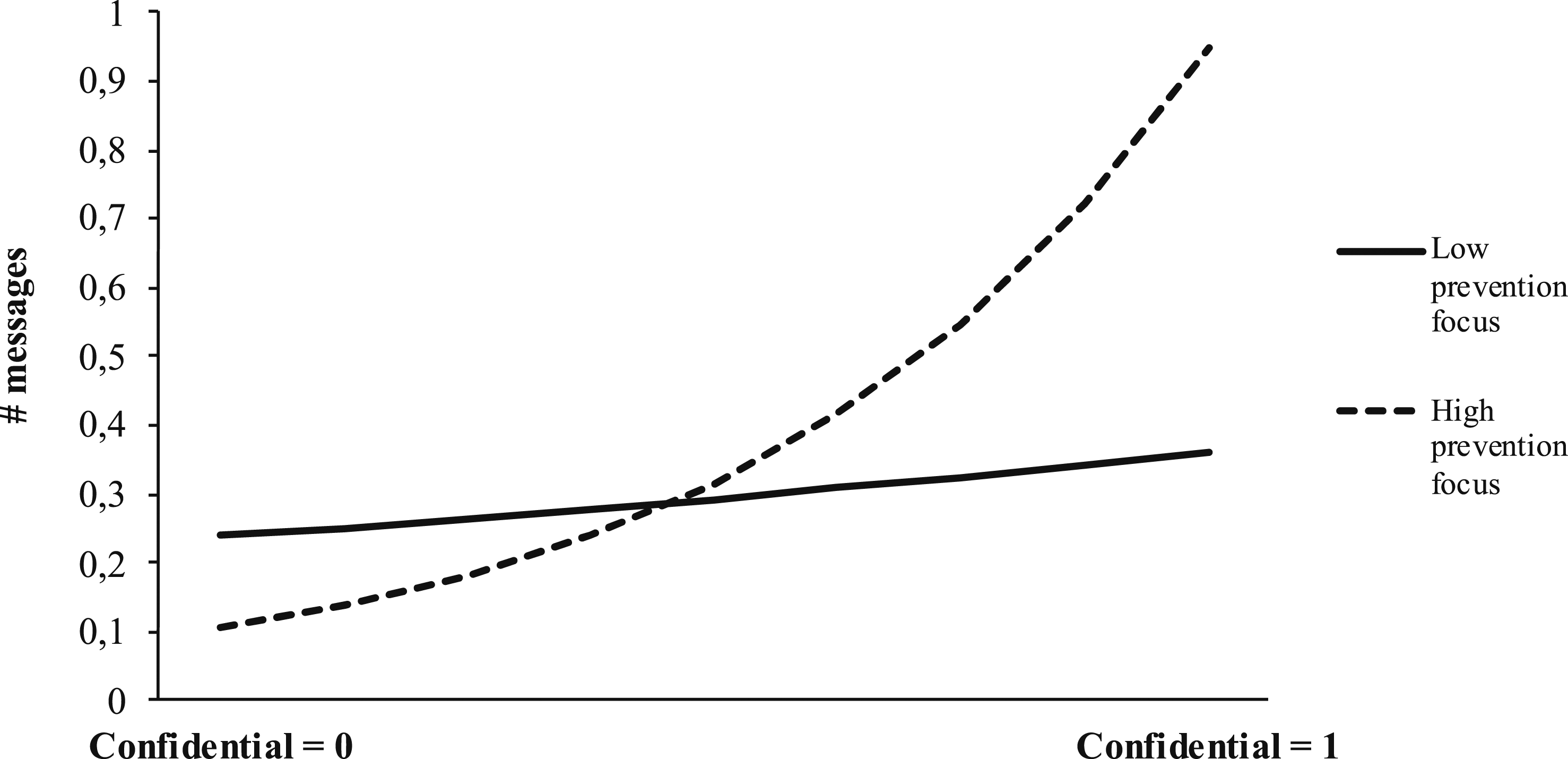

To test for a moderating effect, we added the (mean-centered) student prevention focus construct along with the robot teacher confidentiality × student prevention focus interaction term to the model. The results of this analysis show significant main effects for robot teacher confidentiality (b = 1.31, SE = 0.29, p < .001) and prevention focus (b = −0.45, SE = 0.20, p = .03). Moreover, the interaction effect was also significant (b = 0.90, SE = 0.24, p < .001). Figure 4 depicts the number of messages at two levels (i.e., low and high) of prevention focus. As can be seen, the effect of robot teacher confidentiality was significant and positive for students with a stronger prevention focus (+1 SD; b = 2.21, SE = 0.43, p < .001), while it was not significant for students with a weaker prevention focus (−1 SD; b = 0.40, SE = 0.32, p = .21). This supports H3.

Figure 4: Moderation analysis results for Study 4.
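With a mean-centered moderator Z and the product term in the model, the conditional (simple) effect of confidentiality at a moderator value z is b_conf + b_int · z on the log (linear-predictor) scale of the Poisson model, with variance Var(b_conf) + z² · Var(b_int) + 2z · Cov(b_conf, b_int). The sketch below applies the point-estimate part to the reported Study 4 coefficients. The standard deviation of prevention focus is not reported; the two reported slopes imply an SD of roughly 1.0 on the centered scale, which is back-calculated here purely for illustration. The covariance needed for the standard error is likewise not reported and would have to come from the fitted model's covariance matrix; all function names are hypothetical.

```python
import math


def simple_slope(b_focal, b_interaction, z):
    """Conditional effect of the focal predictor at moderator value z
    (z measured on the mean-centered moderator scale)."""
    return b_focal + b_interaction * z


def simple_slope_se(var_focal, var_interaction, cov_focal_interaction, z):
    """Standard error of the linear combination b_focal + b_interaction * z."""
    var = var_focal + z ** 2 * var_interaction + 2 * z * cov_focal_interaction
    return math.sqrt(var)


def implied_moderator_sd(slope_high, slope_low, b_interaction):
    """Back-calculate the SD used for +/-1 SD probing from the two slopes."""
    return (slope_high - slope_low) / (2 * b_interaction)


if __name__ == "__main__":
    b_conf, b_int = 1.31, 0.90                      # reported Study 4 estimates
    sd = implied_moderator_sd(2.21, 0.40, b_int)    # approx. 1.0 (back-calculated)
    print(round(sd, 2))
    print(round(simple_slope(b_conf, b_int, +1.0), 2))  # 2.21
    print(round(simple_slope(b_conf, b_int, -1.0), 2))  # 0.41
```

The −1 SD value of 0.41 differs from the reported 0.40 only in the last digit, which is consistent with rounding of the published coefficients.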

Discussion

Employing a field experiment, Study 4 replicates the findings of the previous studies by showing that students make more use of a confidential (vs. nonconfidential) robot teacher, further strengthening the reliability and external validity of our results. Importantly, the study further shows that this effect is stronger (weaker) for students with a higher (lower) prevention focus. Study 4 thus highlights that students with different personality traits react differently to robot teacher confidentiality.

Study 5

Turning to teacher characteristics, Study 5 examined the moderating role of teacher benevolence. We recruited a sample of 399 respondents via Prolific. To ensure fit with our focus on the educational sector, we required participants to be currently enrolled as students. In addition, as the assignment required students to be proficient in the English language, we restricted our sample to native English speakers from (1) the UK and (2) the US. After excluding students who failed the attention check, whose response time deviated more than three standard deviations from the mean (Berger and Kiefer 2021), or who had an excessively high impact on the estimated coefficients (as indicated by their DFBETAs; Aguinis, Gottfredson, and Joo 2013; Coxe, West, and Aiken 2009), 312 respondents remained (M age = 31.38; 47% female). As an incentive, the top 15% of solutions were awarded an additional $1 compensation. The study implemented a 2 (robot teacher confidentiality: confidential vs. nonconfidential) × 2 (human teacher benevolence: benevolent vs. nonbenevolent) between-subjects design.

Procedure and Measures

Participants were given 10 minutes to work on the same assignment we used for Study 1 (see Web Appendix A-5) and could again make use of the ChatGPT-powered virtual robot teacher named Robin (see Web Appendix B-5). Participants were randomly assigned to either the confidential or nonconfidential robot teacher condition and to either the benevolent or nonbenevolent human teacher condition. In line with prior work (e.g., White 2005), fictitious yet realistic-looking student evaluations introduced the benevolent (nonbenevolent) human teacher as (not) being caring, fair, and interested in the students’ well-being (see Web Appendix A-5). We again measured student usage of the robot teacher as the number of messages sent to it.

Results

Our manipulation check (i.e., “Robot Teacher Robin will keep our interactions confidential”; 1 = Strongly disagree to 7 = Strongly agree) shows that students perceived the confidential robot as significantly more confidential than the nonconfidential robot teacher (M confidential = 6.29, M nonconfidential = 2.49, t(238) = 22.25, p < .001, d = 2.48). In addition, a second manipulation check (Levine and Schweitzer 2014; i.e., “Prof X has good intentions” and “Prof X is kind”, 1 = Strongly disagree, 7 = Strongly agree, α = 0.98) shows that the benevolent teacher was perceived as significantly more benevolent than the nonbenevolent teacher (M benevolent = 6.30, M nonbenevolent = 2.11, t(255) = 35.57, p < .001, d = 4.16).

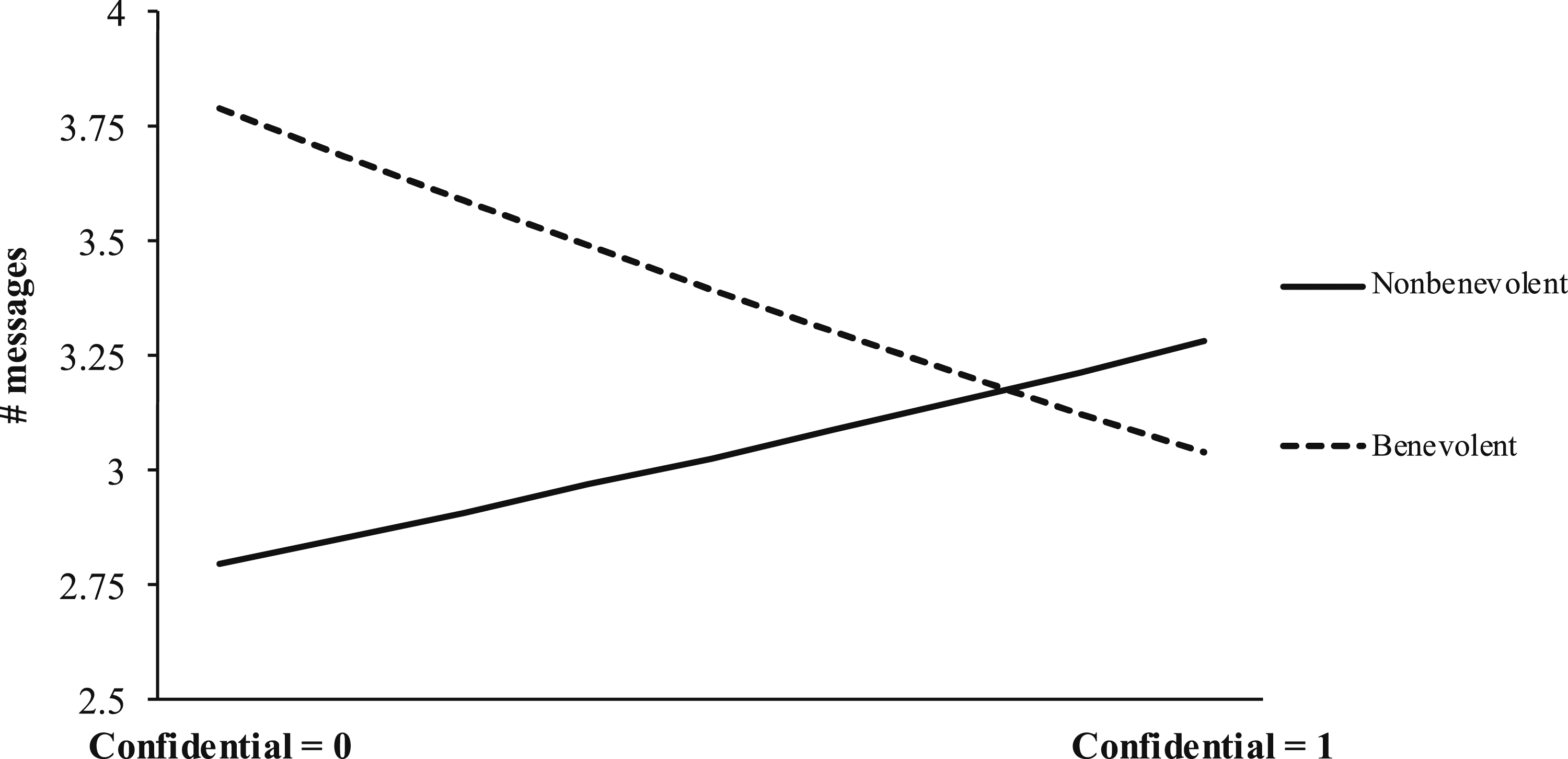

We ran a Poisson regression including the dummy-coded conditions as well as their interaction term to test our hypotheses. The results of this analysis revealed a significant interaction effect (b = −0.38, SE = 0.13, p < .01), a significant main effect for human teacher benevolence (b = 0.30, SE = 0.09, p < .001), and a nonsignificant main effect for robot teacher confidentiality (b = 0.15, SE = 0.10, p = .11). In particular, as shown in Figure 5, there was a positive, yet nonsignificant effect of robot teacher confidentiality for students facing a nonbenevolent teacher (b = 0.15, SE = 0.10, p = .11). Interestingly, we observed an unexpected, negative effect of robot teacher confidentiality for students taught by a benevolent human teacher (b = −0.22, SE = 0.08, p = .01), indicating that these students interacted even more with the robot teacher when their human teacher could see their messages.

Figure 5: Moderation analysis results for Study 5.
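With dummy coding (confidential = 1, benevolent = 1), the reference cell of this model is the nonconfidential robot teacher paired with a nonbenevolent human teacher. The confidentiality coefficient is then the effect for students with a nonbenevolent teacher, and the effect for students with a benevolent teacher is the sum of the confidentiality and interaction coefficients: 0.15 + (−0.38) = −0.23, in line with the reported −0.22 up to rounding. Because the 2 × 2 model is saturated, each coefficient is also a log ratio of cell means, which the sketch makes explicit. The coding direction is inferred from the reported estimates, the function names are hypothetical, and the cell means in the example are invented for illustration.

```python
import math


def conditional_confidentiality_effects(b_conf, b_int):
    """Effect of confidentiality (log scale) within each benevolence condition,
    assuming nonbenevolent is the reference level of the benevolence dummy."""
    return {"nonbenevolent": b_conf, "benevolent": b_conf + b_int}


def saturated_poisson_coefficients(cell_means):
    """Coefficients of log(mu) = b0 + b_conf*C + b_ben*B + b_int*C*B
    recovered from the four cell means, keyed by (C, B) with values in {0, 1}."""
    m00, m10 = cell_means[(0, 0)], cell_means[(1, 0)]
    m01, m11 = cell_means[(0, 1)], cell_means[(1, 1)]
    return {
        "b0": math.log(m00),
        "b_conf": math.log(m10 / m00),
        "b_ben": math.log(m01 / m00),
        "b_int": math.log((m11 * m00) / (m10 * m01)),
    }


if __name__ == "__main__":
    effects = conditional_confidentiality_effects(0.15, -0.38)  # reported Study 5
    print({k: round(v, 2) for k, v in effects.items()})
    # Hypothetical cell means (messages per student), not from the study.
    coefs = saturated_poisson_coefficients(
        {(0, 0): 1.0, (1, 0): 1.2, (0, 1): 1.4, (1, 1): 1.1}
    )
    print({k: round(v, 3) for k, v in coefs.items()})
```

Reading the interaction this way makes clear why the nonsignificant main effect of confidentiality is not a test of confidentiality overall: it is the simple effect in the nonbenevolent cell only.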

We ran a post-hoc analysis to better understand these findings. Given that our sample included participants from two different cultural backgrounds (i.e., UK and US), we tested for potential cultural differences. This post-hoc analysis revealed different effects of our manipulations for respondents from the UK and the US. In line with our predictions, UK students interacted more with a confidential (vs. nonconfidential) robot teacher when they faced a nonbenevolent human teacher (b = 0.25, SE = 0.12, p = .04), and there were no differences in interaction quantity across robot teacher types (i.e., confidential vs. nonconfidential) when the human teacher was benevolent (b = −0.02, SE = 0.11, p = .82). For US students, the pattern of results was different. Here, students interacted more with a nonconfidential (vs. confidential) robot teacher when their human teacher was benevolent (b = −0.56, SE = 0.14, p < .001). This effect disappeared when US students were taught by a nonbenevolent human teacher (b = −0.00, SE = 0.17, p = .98). Therefore, we find support for H4 in the UK sample but not in the US sample, suggesting that there may be pronounced cultural differences in how benevolent human teachers affect student behavior.

Discussion

The findings of Study 5 provide nuanced insights into the moderating role of human teacher benevolence for the link between robot teacher confidentiality and usage. In general, teacher benevolence mitigated the negative effect of robot teacher nonconfidentiality on robot teacher usage. Surprisingly, however, when their human teacher was benevolent, students made even more use of the robot teacher when it was nonconfidential (vs. confidential). A post-hoc analysis revealed that this finding may be driven by cultural differences across students.

UK students used the confidential robot teacher more if they were being taught by a nonbenevolent human teacher, which is in line with impression management theory (Snijders et al. 2022) as well as our hypotheses and previous findings. As such, these UK students seem primarily concerned with avoiding negative judgment from nonbenevolent human teachers. US students, by contrast, seem to use nonconfidential robot teachers more if they are being taught by a benevolent human teacher; that is, they use the robot teacher more when they know that their benevolent human teacher can see their messages. One possible explanation is that, when they face a friendly and fair human teacher, US students engage in self-promotion, another impression management tactic that individuals use to be seen as more competent (Bolino et al. 2008). In other words, the fact that benevolent human teachers can see their students’ messages may make students want to demonstrate their effort, dedication, or skill. Therefore, although not previously hypothesized, these results mirror central tenets of impression management theory. Intercultural research supports these differences between individuals from the US and UK, showing that self-promotion behaviors are more accepted and prevalent among the former (Molinsky 2013), potentially because the US is the most culturally individualistic country (Hofstede 2001). It thus seems that impression management tactics are triggered differently across cultural contexts.

In sum, Study 5 provides two insights. First, we demonstrate an important boundary condition, showing how a relevant teacher characteristic (i.e., benevolence) can influence the effect of a teacher-in-the-loop. Second, Study 5 suggests that cultural differences may influence reactions to robot teacher confidentiality and (non)benevolent teachers.

Exploratory Analyses of Output and Interaction Quality

The primary focus of our investigation was to unravel how robot teacher confidentiality affects the extent to which students interact with a robot teacher (i.e., interaction quantity). Nonetheless, it is worth exploring whether robot teacher confidentiality further influences (1) how students interact with it (i.e., interaction quality) and (2) how well they perform on their assigned tasks (i.e., output quality). We conducted post-hoc analyses for Studies 1–5 to explore these questions. First, we analyzed students’ messages to explore differences in interaction quality. As a starting point, we reviewed existing literature to identify a set of prompt quality dimensions, including prompt content (i.e., purpose, contextualization, and task relevance) and prompt style (i.e., clarity, verbosity, density, and complexity). As shown in more detail in Web Appendix J, our analyses did not reveal a consistent, statistically significant pattern indicating generalizable differences in interaction quality between our experimental conditions. This could be interpreted as robot teacher confidentiality mainly affecting interaction quantity (i.e., whether and how much) but not quality (i.e., in what way). However, in some instances interaction quality seems to have been affected by robot teacher confidentiality (e.g., in Study 2, students formulated their prompts more clearly when their human teacher could read their messages), but we caution against overinterpreting these findings given their exploratory, post-hoc nature. In addition, the analyses may suffer from low sample size and self-selection issues, as we could only analyze interaction quality for students who used the robot teacher. Specifically, the students who did not interact with the robot teacher may be the very ones who would have interacted differently with a confidential vs. nonconfidential robot. Nonetheless, we hope these notions provide a foundation for future research on this topic.

Second, we analyzed the output quality of the students (i.e., their solutions to the assignments) to explore whether robot teacher confidentiality affects student performance or learning. Early research suggests that students who use generative AI perform better and show better learning outcomes (e.g., Yilmaz and Yilmaz 2023). For our exploratory, post-hoc analyses, we employed three measures of student performance. First, in Study 4 and Study S1, students rated their own performance on each criterion of the grading rubric in response to the question “How do you think [teacher X] will rate your performance on the following criteria?” These self-ratings did not differ between the confidential and nonconfidential robot teacher conditions (all p > .10). Second, independent coders rated the solutions according to the grading criteria communicated to the students (see Web Appendix A).⁵ Comparing this performance across experimental conditions, we find a difference for Study 2 (confidential: M = 2.36; nonconfidential: M = 3.29; t(75) = 3.24, p < .01), but for none of the other studies (all p ≥ .10). Finally, efficiency (i.e., how quickly students were able to complete the tasks) is another common measure of performance. We therefore compared task completion times across experimental conditions. Except for Study 2, in which participants in the confidential (vs. nonconfidential) condition worked faster (confidential: M = 1,972 seconds; nonconfidential: M = 2,175 seconds; t(66) = 2.35, p = .02), there were no significant differences in any of the other studies (all p > .27).⁶ These results may imply that robot teacher confidentiality leads to higher interaction quantity but has a limited impact on output quality (i.e., performance). Yet, we caution against rushing to conclusions in this regard. In addition to the post-hoc nature of these analyses, the assignments were relatively short (many students took about 10 minutes), whereas past research has shown pronounced performance benefits of using robots or generative AI for longer tasks (e.g., Brynjolfsson, Li, and Raymond 2023).⁷ Thus, we encourage future research to thoroughly investigate the impact of robot teacher confidentiality on student performance and learning.
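The condition comparisons above are reported as t statistics with degrees of freedom, for example t(75) = 3.24. The text does not state which t-test variant was used, so the sketch below assumes Student's independent-samples t-test with pooled variance, for which df = n1 + n2 - 2. The function name `pooled_t_test` and the scores are hypothetical toy values for illustration only, not data from the studies.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_test(group_a, group_b):
    """Student's independent-samples t-test with pooled variance (assumed variant).

    Returns (t, df). A positive t means group_a has the higher mean.
    """
    n_a, n_b = len(group_a), len(group_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least two observations")
    # statistics.variance uses the sample (n - 1) denominator
    var_a, var_b = variance(group_a), variance(group_b)
    df = n_a + n_b - 2
    # Pool the two sample variances, weighting each by its degrees of freedom
    pooled_var = ((n_a - 1) * var_a + (n_b - 1) * var_b) / df
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    t = (mean(group_a) - mean(group_b)) / standard_error
    return t, df


# Hypothetical toy performance scores, NOT data from the studies
nonconfidential = [3, 4, 3, 4, 3]
confidential = [2, 3, 2, 2, 3]
t, df = pooled_t_test(nonconfidential, confidential)
print(f"t({df}) = {t:.2f}")  # prints t(8) = 2.89
```

If unequal group variances are a concern, Welch's test is a common alternative; SciPy's `scipy.stats.ttest_ind` supports both through its `equal_var` argument and also returns the p-value.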

General Discussion

While robot teachers are an emerging reality, research on this technology is still in its infancy. In the present research, we introduce the concept of robot teacher confidentiality as a key means to optimize HRI in educational settings. Across field, laboratory, and online experiments with virtual and physical robot teachers and behavioral outcome measures, we show that students make less use of a nonconfidential (vs. confidential) robot teacher. Moreover, Study 3, a large-scale field experiment, shows that the effect of robot teacher confidentiality is serially mediated by students’ social judgment concern and interaction anxiety. A further field experiment (Study 4) and an online study (Study 5) identify students’ prevention focus and teacher benevolence as boundary conditions: students with a high prevention focus are particularly affected by nonconfidential robot teachers, and benevolent teachers can mitigate the negative effects of such robot teachers.

Theoretical Contributions

Our study makes important contributions to the service robot literature. First, we challenge the predominant belief among scholars (Kasneci et al. 2023), practitioners (Khan 2023), and policymakers (European Commission 2024) that putting (other) humans “in-the-loop” of HRI leads to exclusively positive outcomes. By introducing the concept of robot confidentiality, we show that keeping HRI private, while an ethically and legally crucial consideration, may lead to critical psychological and behavioral benefits. By illustrating how the indirect involvement of other human actors may negatively affect HRI, we extend existing service robot and broader HRI research, which has primarily focused on how the direct presence of other human actors in the service encounter influences HRI (e.g., Khoa and Chan 2023). This is a key contribution because service encounters with robots in which human employees participate only indirectly are on the rise (Larivière et al. 2017). Our research therefore also adds to research on service robot design (van Doorn et al. 2023), suggesting that human-in-the-loop approaches, which currently prevail in practice, require rethinking.

Second, we contribute to the understanding of the complex interplay between the different actors within the service triad (De Keyser et al. 2019). Studies on how other people affect HRI have primarily examined isolated mechanisms and boundary conditions, focusing mostly on the robot (Khoa and Chan 2023), the broader organization (e.g., innovativeness; McLeay et al. 2021), or simple demographic characteristics (Fraune, Šabanović, and Kanda 2019). As a result, research has largely neglected how, when, and which (personality) types of human actors in the service triad shape HRI outcomes. To understand the mechanisms at play, our research identifies social judgment concern and interaction anxiety as a serial psychological mechanism that explains the use of (non)confidential service robots. Moreover, we identify personal characteristics of both human actors (i.e., student and human teacher) in the service triad that act as boundary conditions. A post-hoc analysis adds nuance to the effect of teacher benevolence, suggesting that it may trigger different impression management tactics from students depending on their cultural context. Collectively, these results provide a more holistic view of the role of (different types of) human actors in HRI.

Finally, we extend the service literature by investigating the use of service robots in education, an essential service setting (Fisk et al. 2022) that this literature has largely overlooked. Research focusing on other service settings provides only limited insights into facilitating successful HRI in education due to the unique features of the education context (Díaz-Méndez and Gummesson 2012). Our key insights regarding the consequences of human-teacher-in-the-loop approaches during HRI provide important considerations for the ongoing debate about how to implement educational robots and AI in a responsible and inclusive manner (Adiguzel, Kaya, and Cansu 2023).

Practical Implications

Our findings provide important insights for practitioners and policymakers. Educators face a dilemma when deciding whether robot teachers should share student interactions with human teachers. On the one hand, teacher-in-the-loop approaches have ethical, legal, and practical benefits. On the other hand, the present studies show that teacher-in-the-loop approaches may prevent students from taking full advantage of robot teachers. Thus, we urge careful consideration of how robot teachers and teacher-in-the-loop approaches are implemented. In terms of robot teacher design, past research has repeatedly shown that robot anthropomorphism (i.e., human-likeness) strongly increases usage of service robots (Blut et al. 2021). As such, robot teachers could be equipped with, for example, a human name, voice, or face to increase their usage. Moreover, allowing a robot teacher to explain how it shares conversations with the human teacher may reduce anxiety. Even simple explanations of how a robot performs its task are often sufficient to reduce worries about using it (Cadario, Longoni, and Morewedge 2021). Similarly, teachers might explain that having access to students’ interactions allows them to better understand their learning process (rather than only the outcome), giving them additional opportunities to optimize their students’ learning. Furthermore, students could be given autonomy to decide which interactions to share, as research indicates that providing autonomy helps people more fully utilize such technology (Dietvorst, Simmons, and Massey 2018). Such approaches may allow students to benefit even more from robot teachers while preserving the benefits of human-in-the-loop approaches.

Additionally, we highlight that teacher benevolence mitigates the negative effects of teacher-in-the-loop approaches. Educational institutions may therefore focus on hiring teachers who score high on benevolence-related values. Alternatively, institutions may also want to strengthen teachers’ concern for the well-being of their students (and their ability to display it) through development, training, and mentoring programs (cf. Sverdlik, Oreg, and Berson 2022).

Lastly, our results indicate that nonconfidential robot teachers could widen the existing digital divide (i.e., unequal digital outcomes; Fisk et al. 2022) by creating differences in the extent to which students use robot teachers. If prevention-focused students exhibit less help-seeking behavior towards the robot teacher, this can lead to decreased learning and performance (Bartholomé et al. 2006). We suggest that institutions and educators gauge their students’ regulatory focus (e.g., Lockwood, Jordan, and Kunda 2002) so that they can discuss and address concerns, particularly among prevention-focused students.

Limitations and Future Research

This research has several limitations that open exciting opportunities for future research. First, we investigated the effects of robot teacher confidentiality across various tasks without manipulating task differences. Because we did not directly investigate task differences, we cannot draw inferences about whether usage of (non)confidential robot teachers differs across tasks. We therefore hope that future research will directly manipulate task characteristics and investigate the effects of robot teacher confidentiality in these different situations.

Second, we conducted our research in educational service settings only. We view this focus as an important contribution of our work given the critical nature of education (Fisk et al. 2022). However, because there are key differences between students and consumers of other services (Díaz-Méndez and Gummesson 2012), the generalizability of our findings to other service settings might be limited. Although impression management effects have been demonstrated in other service settings as well, it is unclear whether consumers of these services would be equally concerned about being judged when interacting with a nonconfidential robot.

Third, whereas our aim was to generalize our findings across different types of robots rather than to compare them, it would be worthwhile to examine the differences between physical and virtual robot teachers more closely and how these differences shape the effect of confidentiality (Wirtz et al. 2018). Beyond embodiment, the robot’s modality (i.e., text vs. speech) and anthropomorphism (Blut et al. 2021) could also influence the effect of robot teacher confidentiality on students’ use.

Lastly, we encourage researchers to explore other mechanisms and boundary conditions of the relationship between robot teacher confidentiality and student use. For example, perceived effort by the human teacher could be investigated as a mechanism. Being able to see student-robot interactions may signal to students that their human teacher invests effort in understanding their learning process. In line with social exchange theory (Cropanzano et al. 2017), students may then use the robot more to reciprocate the teacher’s effort. Study 2 hints at this pathway: several students put more effort into their interactions with a nonconfidential robot, hoping for more personalized feedback from their human teacher. Finally, future research may examine the role of cultural differences more closely by following up on our initial evidence that UK and US students react differently and seem to apply different impression management tactics when confronted with (non)benevolent human teachers and (non)confidential robot teachers.

Supplemental Material

Supplemental Material for I Care That You Don’t Share: Confidentiality in Student-Robot Interactions by Kars Mennens, Marc Becker, Roman Briker, Dominik Mahr, and Mark Steins in Journal of Service Research

Acknowledgments

We would like to thank the entire editorial team and the three anonymous reviewers for the time and effort they invested in helping us develop this paper during the revision process.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

References
