Abstract

This research paper addresses the evolving question of whether, and with what consequences, hospitality service robots should be afforded rights and responsibilities, amid their growing adoption and anthropomorphic design. Moreover, the paper outlines arguments about how the hospitality and tourism industries would be affected if service robots were eventually afforded rights and responsibilities. Arguments regarding robots’ rights and responsibilities are examined through the lens of stakeholder theory, embedded in the context of the moral, ethical, and legal aspects of human–robot interaction. We also propose a framework for future discussions on how hospitality and tourism research can inform decision-making regarding the adoption and incorporation of the rights and responsibilities of service robots. Our discussion emphasizes governance and risk management for the industry, with the aim of clarifying accountability (liability, safety, data protection, consent), enhancing guest trust, and preparing for imminent regulatory expectations.

Keywords

Highlights

This study questions whether service robots deserve rights, or just responsibilities.

We modify the stakeholder theory framework to include autonomous, non-human actors.

We redefine hospitality’s ethical boundary through robot accountability.

Introduction

The word “robot” can be traced back to the famous Czech science fiction play Rossum's Universal Robots (Čapek, 1921). In this play, robots in humanoid form were supposed to replace all human actions, resulting in thousands of robots being produced to work as laborers. Notably, as we debate the legal, moral, ethical, and business arguments for robot rights, the very first story to use the term “robot” is set against the backdrop of an office building, that of Rossum's Universal Robots, mirroring the business domain in which this paper is embedded.

In recent years, robots have become more common in the service industry, leading to more interactions between humans and robots. As robots live alongside humans, agencies and scholars have called for considering robots' rights (and responsibilities). This paper aims to highlight the moral dilemmas, ethical duties, and legal liabilities that could support a case for the rights and responsibilities of service robots in the hospitality and tourism sectors. Assuming this happens, the paper evaluates the impact of granting rights and responsibilities to service robots from the perspectives of various stakeholders. Stakeholder theory is often seen as a narrow, economic view representing stakeholder interests (Shah & Guild, 2022). Therefore, in this paper, we broaden the focus to include moral, ethical, and legal aspects in the consideration of rights and duties for service robots, expanding beyond the traditional value creation perspective (Strand & Freeman, 2015). The contributions of this paper are threefold: first, to focus attention on service robots in this debate; second, to provide conceptual arguments for hospitality researchers to explore the rights and responsibilities of robots in future studies; and third, from a practical standpoint, to broaden perspectives on the use of new robotic technologies. Intelligent robotic systems are already transforming the future of hospitality, tourism, and other service industries. It is vital that, alongside imagining creative uses for such technologies, we also consider how the integration of robotic technologies with human capabilities will affect us, our behaviors, and society's responsible adoption (Roco, 2020).

Accordingly, this Insight/Foresight article contributes to the hospitality literature by (1) introducing the concept of service robot rights and responsibilities as a topic of industry and scholarly importance; (2) integrating moral, ethical, and legal lenses with a stakeholder-value-centric framework to analyze the potential impact on hospitality stakeholders; and (3) proposing a conceptual foundation and future research agenda for navigating human–robot co-existence in service contexts. To our knowledge, this is among the first hospitality studies to contemplate extending rights/responsibilities to service robots, an issue already emerging in policy debates but not yet explored in our field, nor in industry practice.

Robotics in the Hospitality and Tourism Industries

This discussion is timely given that service robots are being increasingly incorporated in the hospitality and tourism industries, as is evident from the following examples, and the challenges the industry is facing:

Hotels have incorporated Savioke Relay robots for room deliveries (Relayrobotics, 2025) and the Pepper robot concierge (Proven Robotics, 2023). In the foodservice industry, the presence of robotics is exemplified by Flippy 2, a cooking robot (Miso Robotics, 2025), and Servi, a service robot (Bear Robotics, 2025). Flippy 2 is increasing efficiency and lowering labor costs, while also opening up possibilities for reducing labor requirements in the restaurant industry (Canham-Clyne, 2022). This example symbolizes the growing trend of automation in the restaurant industry, which is already impacting our society as a whole and will continue to do so. As new technologies displace existing roles, retraining and retooling employees for alternative employment opportunities will have to become someone’s responsibility, or a shared one.

Whiz robots are being deployed for cleaning (Soft Bank Robotics America Inc., 2025), and robots such as Argus are being deployed for security purposes (MetaDolce Technologies, Inc., 2025). In the case of an accident due to a robot's malfunction, which cannot be attributed to human error, the question of whom to hold responsible will eventually have to be answered.

Recent innovations in the tourism sector include the Seoul Museum robot at the Seoul Robot and AI Museum (ArchDaily, 2025). The Navya shuttles (NAVYA, 2025) are used at John F. Kennedy International Airport in New York. At Incheon International Airport, Airstar and Air-dilly are the airport's robotic assistants for addressing traveler requests. Quarantine-bot monitors travelers' compliance with anti-virus measures, especially the use of masks. Airide provides travelers with an automated ride across the airport, eliminating the need for a driver. Future robotics applications at Incheon International Airport include a driverless shuttle bus service and possibly a human-free boarding system. As in the case of wheelchair assistance at airports, access to driverless shuttles could require the acquisition of travelers’ data, such as their travel itineraries and medical status. This poses increased risks of losing privacy, compromising individual consent, and incurring organizational risks and liabilities related to data protection.

These examples illustrate the widespread adoption of automation in the hospitality and tourism industries. They also showcase the wide range of human–robot interactions that could open the possibilities of ‘responsibility’ for the safety and security of a variety of stakeholders.

Other applications of robotics in the hospitality industry include Cafe X's robotic baristas, Henn-na Hotel in Japan, a robotic suitcase called Travelmate, robotic assistants at airports and hotels, travel agency robots, and security robots at airports. Henn-na Hotel offers a novel experience for customers to interact with and be served by various robot personifications. However, these robots are being pulled out due to guest complaints, highlighting the need for greater transparency and reliability (Skubis et al., 2024). Cafe X's robotic baristas have raised differing perspectives between the novelty of the technology and the perceived lack of human touch (Ho, 2019). This lack of human touch in Cafe X's robotic baristas demonstrates the limits of the responsibilities that can be assigned to robotic agents. It also underscores the importance of recognizing that those limits will exist in the near future. These examples also highlight the ethical concerns associated with humanoid robots in hospitality and tourism services (Skubis et al., 2024), and raise the question of how our industries will manage the limits to the responsibilities assigned to robotic agents while striving for greater automation and efficiency.

Given the expanding use of service robots in the hospitality and tourism industries, the rights and responsibilities of robots will eventually need to be addressed through greater thought, conceptualization, and reflection. There is a growing concern about whether robots can replace the role of a human service agent at their current level of functionality and capabilities (Ivanov et al., 2020). This is leading to the creation of robots that are more human-like, further raising moral, ethical, and legal questions about how humans should engage with such non-human entities.

Defining Service Robots, Rights, and Responsibilities

The literature recognizes two major distinctions when categorizing robots: telerobots versus autonomous robots and service robots versus industrial robots (Tavani, 2018). Most of the robots around the world are service robots. A service robot is not necessarily a social robot. Darling (2016) defined a social robot as one that "is a physically embodied, autonomous agent that communicates and interacts with humans on a social level" (p. 215). Autonomy implies that the robot can make limited decisions based on "perceptions and internal states" rather than performing pre-programmed action sequences. In this study, we define service robots as having two essential characteristics: (1) they perform a service-related function involving engagement with customers and/or the public, and (2) they can perform these interactions with a certain level of autonomy. Levels of autonomy already vary across robotic agents. We therefore treat autonomy as a scalar rather than a binary attribute. Wirtz et al. (2018) define service robots as “system-based, autonomous and adaptable interfaces that interact, communicate, and deliver service to an organization's customers” (p. 909).

An autonomous agent is not necessarily intelligent. Perception-based decision-making would challenge whether such robots could demonstrate consciousness, albeit of a lower order, and what kind of consciousness it would entail (Block, 1995). For instance, a robot with an embedded Artificial Intelligence (AI) module could make decisions independently in a particular situation (Kouatli et al., 2020). This could characterize the difference between an automated and an intelligent robot.

Another critical aspect of service robots is their human-like appearance, reflecting the increasing anthropomorphization of robotic agents. Humanoid robots such as the Pepper robot concierge (Proven Robotics, 2023) are a prime example of service robots. Although the idea of emotions and consciousness in robots is speculative, research increasingly suggests that human responses to robots with human-like features exist and that these are not necessarily linear. For example, Wu et al. (2024), using electroencephalogram (EEG) tools, show that humans process anthropomorphized robots both perceptually and emotionally. Moreover, machine learning algorithms enable generative AI robots to recognize human emotions, thus leading to better customer care (Huang & Rust, 2024). However, the impact of anthropomorphism on robot rights is less well understood (Bontula et al., 2023). The human likeness of a robot is linked to mind perception (Wrede et al., 2023), thus influencing people's perceptions of robot rights and responsibilities. If robots signal agency and experience, they are more akin to humans when proper guidelines for social interactions are in place.

It is these robot attributes of autonomy, human likeness, and increased interactivity that will continue to raise moral, ethical, and legal concerns for all stakeholders in the hospitality and tourism industries. In the following sections, we discuss the moral, ethical, and legal motivations driving the discussion of robots’ rights and responsibilities.

The definition of rights and responsibilities for service robots remains an ongoing and evolving discussion. The question of whether robots should have rights is being pursued both normatively and descriptively (Gunkel, 2018). This issue is also embedded in the broader question of rights for non-human entities (Mays et al., 2025). This might eventually create a path for operationalizing the construct to investigate its role in stakeholder choices and decision-making, thereby acquiring its own scholarly space. Therefore, in this paper, we refrain from a definitive definition of rights and responsibilities and adopt the overarching normative and descriptive dimensions outlined in Mays et al. (2025). These include, but are not limited to, civil and political rights, economic, social, and cultural rights, moral agency and autonomy, duties and responsibilities, empathy, and the capabilities needed to assume those rights and responsibilities. We present these in the paper as a backdrop to the discussion, rather than as a procedural analysis of operationalizing these dimensions into specific rights and responsibilities. A robot right can therefore be considered a civil, cultural, economic, or legal/organizational entitlement, and a robot responsibility a duty assigned with accountable oversight, both governed by moral and ethical expectations for human and non-human entities.

Motivations for Robot Rights and Responsibilities

Moral Predicament

A growing body of literature exists on the morality of advocating for robot rights in the workplace. Even though this may appear anomalous, rights to non-human entities already exist. For instance, we attribute rights to animals, natural spaces, and even corporations (Mays et al., 2025). The first animal rights movement, created in Ireland in the 17th century (Grierson, 1794), predated academic studies (trying to establish whether animals can think) by over 200 years (Darwin & Griffith, 1874). The moral arguments related to animal rights have been debated since the times of ancient philosophers such as Pythagoras and Plato. The reasoning abilities and functional capacities of technologies of our generation are eerily converging to resemble those of humans. How we responsibly integrate them into our lives is both a moral and a practical matter. Another example of moral motivations for assigning rights to non-human entities is the case of the Whanganui River in New Zealand. The Whanganui River has been afforded the rights of a legal person under Te Awa Tupua (Whanganui River Claims Settlement) Act 2017 (Cribb et al., 2024). At the core of the Whanganui River’s legal rights is the spiritual and cultural connection of the Whanganui Iwi people of the Māori tribes. It also represents the core values of these indigenous people and how they engage and connect with the Whanganui River as a source of their knowledge system. Together, the example of animal rights and those given to the Whanganui River suggest that humans are willing to afford moral and legal considerations to non-human entities based on their relational significance, intrinsic value, or even functional role. This opens the door for a serious consideration of rights (and responsibilities) for autonomous robots in contexts where such non-human entities may demonstrate agency, social presence, or ethical impact.

Moral perspectives on robot employees

An even more contextually relevant way to approach rights and responsibilities for robots is by considering their role as employees. The moral rights of employees can be understood as a "moral claim" (Rowan, 2000). A moral right is not necessarily a legal right, based on current social conventions. However, moral rights should be theoretically justified and legitimate. For example, Sandewall (2021) presents arguments based on the key principles of the Universal Declaration of Human Rights (UDHR) in support of the rights of what the author refers to as intelligent robots. Sandewall introduces the concept of a Moral Belief System that guides the robot in determining right from wrong behavior.

While there are several perspectives on the moral grounds for granting robots rights, Müller (2021) challenges these philosophical arguments, contending that they rely on significant assumptions to demonstrate a moral status for robots. According to him, moral views cannot explain why present-day robots should have rights. However, he raises an important question about what it "says about us humans, and about our all-too-human tendency to see individuals with moral status everywhere" (p. 585). There are also opposing views which argue that robots lack the moral standing to deserve rights and should be treated as property, with rights granted to the owner (Birhane et al., 2024). The debate carries an irony, as humans performing tasks similar to those of a robot are not being afforded proportionate rights (Birhane et al., 2024).

A complementary argument to the moral philosophy comes from the social ecology perspective. Coeckelbergh (2010) argues that robot rights should be viewed in terms of the social relationships people develop with these intelligent machines. He presents three arguments to support his thesis. First, moral consideration should be viewed not as an intrinsic concept but as an extrinsic one, attributed through the social relations and context of these intelligent machines. Second, the features of intelligent machines should be viewed as apparent features, that is, as we experience them. Finally, human–robot relationships are both context-dependent and subject-dependent.

Another moral reasoning is grounded in the perception of the mind. Waytz et al. (2010) argue that individuals can ascribe a mind not only to individual people but also to non-human entities (such as robots). Individuals could ask whether the non-human entity has a mind, what state that mind is in, and what the behavioral consequences of having a mind are. When individuals ascribe a mind to non-people, they seem to be thinking of how this would influence them to “feel” and “do.” Mind perception is motivated by the need for social connection and the desire to influence or control the behavior of another entity. Mind perception attributed to non-human entities can create meaning for individuals through a sense of being a moral agent.

Moral rights and the workplace

In the workplace context, employees are persons, and it is not right to treat them in certain ways (Rowan, 2000). The claim has a counterclaim attached to it, which relates to the duty of the person against whom the moral right is being asserted, such as the employer's duty towards employees. Therefore, it can be viewed as a reciprocity of claim and responsibility.

Along with the arguments and assumptions about who an employee is, we must ask specific questions about service robots as we debate their rights and responsibilities. Tavani (2018) poses five such questions. First, what is a robot? Second, what kind of rights are at issue? Third, the criterion question: does a robot have specific properties that qualify it to possess rights, or is it sufficient that it has a functional relationship with humans and is human-like? Fourth, are service robots moral agents or patients? Fifth, the rationale question: do humans have a duty to consider non-human entities?

Regarding agents versus patients, Müller (2021) suggests that while agents have rights and responsibilities, patients only have rights. The distinction of treating robots as agents versus patients would need due consideration, particularly from a business's point of view. The parallels of this argument already exist in agency law and agency relationship (Eisenhardt, 1989). Managers, as agents, are hired by business owners to accomplish the owner’s objectives. While managers have rights, the inherent assumption that defines an agency relationship is the manager’s (or the agent’s) responsibilities. As robots assume responsibilities in businesses, similar to human agents, the moral dilemma of also considering their rights symmetrically must be debated. We next consider the ethical motivations for the rights and responsibilities of robots.

Ethical Responsibility

The word “robot” is based on a Czech word derived from rabota in the Old Church Slavonic language (Kouatli et al., 2020). The meaning of rabota is "servitude," "forced labor," or "drudgery" (Markel, 2011). Even in its earliest conception, as depicted in Čapek’s (1921) science fiction play Rossum's Universal Robots, robots were defined and designed to be treated as workers for repetitive and unpleasant tasks, thereby releasing humans from such workplace drudgery. While those robots were functionally “intelligent,” they did not have a “soul.” In fact, the only reason for introducing “soul-like” characteristics was so that the robots could feel pain when accidentally destroying themselves (p. 25).

The soul is considered the "seat of consciousness" (Quinton, 1962, p. 393). Can service robots develop consciousness, and, if so, could this bring them closer to being defined as beings? According to Levy (2009), robots should be granted rights by virtue of their expanding consciousness. This consciousness would be particularly relevant in a service setting where “the way we treat human-like (artificially) conscious robots will affect those around us by setting our own behavior towards those robots as an example of how one should treat other human beings” (Levy, 2009, p. 214).

The notion of consciousness in robots, as discussed, serves two purposes. First, the discussion of consciousness in robotics is not absolute, but rather a contextually driven phenomenon. Second, as human–robotic interactions become better understood, research increasingly suggests that humans associate their own experiences with human-like entities. This would have implications for how we, as humans, would ethically want those human-like (robotic) entities to be treated.

The type of consciousness most relevant to service industries is functional or access consciousness (Block, 1995), like the one described in Rossum's Universal Robots. Block (1995) distinguishes between two types of consciousness: phenomenal consciousness (P-consciousness) and access consciousness (A-consciousness). P-consciousness includes experiences, feelings, perceptions, sensations, thoughts, desires, and emotions. On the other hand, being in A-consciousness implies that its content is used for reasoning, rational control of action, and rational control of speech. According to Block (1995), the latter is not a necessary condition, as it would then allow for greater inclusion of beings. It is possible to have one or the other type of consciousness, which interact when both exist. While discussing the examples of A-consciousness without P-consciousness, Block (1995) noted that a “being” that could have A-consciousness is a robot. However, he believed this issue was too controversial for further discussion in 1995.

Another ethical concern related to using service robots is dehumanization, which can lead to social deprivation in service interactions. Wirtz et al. (2018) argue that the increased use of service robots is already raising questions on how this would deprive individuals of social and emotional attachment, especially for patients in healthcare, with the replacement of caregivers for those in need, and even in home settings where robots replace human cleaners (see Wirtz et al., 2018 for related literature). Such deprivation of human connection can be considered a form of cruelty, as it isolates individuals from social connectivity. Could such concerns further perpetuate the need for robots to become more human-like, with capabilities to serve humans' social and emotional needs? Would such an extension of responsibilities (through design and expected functionality) also need to be balanced with consideration of robot rights?

Legal Perspectives

Alongside moral and ethical arguments, there is precedent for discussions about society's legal rights and responsibilities associated with robots. Asimov's three laws for robots, although fictional, were proposed to prevent harm to humans. In contrast, Murphy and Woods (2009) proposed laws for responsible robots to protect humans from harm. There have been other efforts to address the legal aspects related to robots, including a framework for conceptualizing and regulating machines that seeks to navigate the legal and ethical challenges of regulating robots within existing legal structures. Additionally, a European Union (EU) Parliament report proposed granting an electronic personality to robots (Wurah, 2017). There are other recommendations from the EU that we will discuss later in this section.

Functionality and the social license to operate

Functional and operational uses of robots have also been leveraged as the basis for legal arguments. Kouatli et al. (2020) provide five categorizations of “working” robot types in organizations, identifying legal issues and actions requiring regulation. The only “service” type robots included in this categorization are those used in the healthcare industry. Logically, the legal and regulatory issues primarily relate to medical risks associated with using robots in health-related contexts. Ghori and Yasin (2023) base their arguments on the legal and regulatory guidance for developing autonomous weapons systems on the idea of Social License to Operate (SLO). SLO has been extensively studied in industrial settings, as well as in hospitality and tourism contexts, within the realms of sustainability and corporate social responsibility. Complying with SLO requires judgment. How can this inform future robot rights, particularly in cases where a service organization must obtain a social license to operate?

Legal liability

The legality of using robotics is already being debated among lawmakers. The European Union and some parts of the United States are leading this discussion. For instance, the European Commission in 2024 established a risk-based framework for AI systems that includes a requirement for high-risk AI (including autonomous service robots) to meet transparency, data governance, and safety obligations. Full enforcement of this regulation is scheduled to begin in August 2026 (European Commission, 2024). Another directive of the European Commission (European Commission, 2023), effective as of January 2027, mandates cybersecurity standards, safety audits, and pre-market assessments for autonomous machines, including robots. In the United States, several states (e.g., Montana, North Dakota, and New York) are introducing AI/robot-related laws around stalking prevention, infrastructure risks, and transparency in government use. While much of the liability is still being directed at companies and individuals that create, develop, and operate robots, increased autonomous operations could challenge that approach.

Rademeyer (2017) raises an interesting perspective on liability. Essentially, the issue is who will be liable for the actions and behaviors of a robot. From the perspective of a service organization, such liability could extend not only to the service-related actions of the robot but also to its “human-like” characteristics, both real and perceived. If any such real or perceived actions of service robots—especially intelligent robots—are found to be harmful to humans, then the question of liability could arise. In such a scenario, a service robot’s identity could be central to the arguments.

Robots as persons with emotions

Moreover, the legal rights of service robots may eventually depend on how future robots can develop cognitive and affective abilities. Darling (2016) argues that as anthropomorphized robots come to act autonomously, a third factor, social behavior, would become a consideration in the legal arguments for robot rights. When social robots become emotionally attached to humans and potentially replace human interaction, such as in the caregiving context, this could evoke a stronger legal argument in favor of robot rights. One reason is that while emotional involvement could be positive, it may also be manipulative.

The emotional connection also raises issues related to how we as humans think of robot abuse, sexual behavior with robots, and associating pain and pleasure with non-human objects, such as service robots in social contexts. Legally, the issue of protecting robots with rights would depend on when humans begin to emotionally feel that this is a requirement, similar to the case of animal rights. It is not the animal's pain that induces the need for legal protection, but the emotions we feel as humans (Kielland et al., 2010). Therefore, when such emotions drive sufficient demand, there may be future considerations for legal rights for service robots.

Extending personhood to robots raises complex questions about their moral and legal standing. The European Union's Civil Law Rules in Robotics have also proposed the idea of treating robots as "electronic persons," whereby fairly sophisticated and autonomous robots would have the “status of electronic persons with specific rights and obligations, including that of making good any damage they may cause [to third parties] and applying electronic personality to cases where robots make smart autonomous decisions or otherwise interact with third parties" (Nevejans, 2016, p. 14).

Preparing for Robotic Rights in Hospitality and Tourism

Let us assume that moral and ethical arguments pave the way forward for robot rights and responsibilities. How will the hospitality and tourism industries adapt to this reality? In the following sections, we discuss the broad themes that businesses and researchers should consider. The framing for this discussion focuses on how the broader adoption of robots will impact hospitality and tourism stakeholders from the perspectives of moral, ethical, and legal values. We then propose a framework for future research and practice that can benefit both academics and industry experts, as well as their collaboration with each other.

Robots manufactured for hospitality and tourism

Despite the increased use of robotics, hospitality and tourism businesses are likely to be among the late adopters of this technology. It is also possible that hospitality and tourism businesses will outsource much of this development to technology companies such as ASEA Brown Boveri (ABB), Kuka Robotics, and Universal Robotics (ABB, 2024). ABB provides robotic applications for the food and beverage industry, serving both retail and wholesale operators, including bakeries and confectioneries, brewing and beverage operations, as well as food retail and wholesale businesses. Kuka Robotics and Universal Robotics offer indirect applications that may be utilized in the hospitality industry's supply chain, but none that are directly applicable in the service environment.

Service businesses are concerned about third-party liability issues (Nevejans, 2016) resulting from damages caused by autonomous robots. Such damages could be due to design, construction, software, operator issues, and interactions with third parties. The matter is further complicated if the software is open source, and the robot can autonomously decide whether or not to upgrade its software. Incorporating ethical decision-making into algorithms could help offset third-party liability. Given that, at least initially, robots used in hospitality and tourism businesses may be manufactured by technology and robotics companies, hospitality businesses must be aware of the risks associated with hardware and software in the context of emerging legal and statutory guidelines, such as those of the European Union.

Design of robotics

So far, we have argued for building the philosophical case for robot rights and responsibilities based on ethical and moral responsibility, as well as legal requirements. Hospitality and tourism businesses are economic entities. Therefore, a business perspective must also exist to argue for such robot rights and responsibilities. This, however, will need to be addressed in the very design and manufacturing of robots.

Researchers have raised concerns about designing robots in a manner that is both ethical and responsible. This could mean incorporating specific human values into robot design, such as human welfare, privacy, and universal usability (Cawthorne, 2022). It would also mean ensuring that robots are designed through the prism of the value-sensitive design (VSD) approach, which incorporates collaboration from various stakeholders. In addition to responsible industry practices, regulatory and quality assurance guidance is also needed. For instance, the British Standards Institution (BSI) has developed the BS 8611 standard for the ethical design of various robotic systems (BSI, 2016). This includes a description of ethical hazards that robot users may face, extending beyond physical hazards to encompass psychological, social, and environmental hazards. While there are early calls for incorporating ethical design principles for service robots (Van der Loos, 2007), to the best of our knowledge, this area of service robot incorporation in the hospitality and tourism industries remains largely understudied.

Another crucial aspect of robot design is inscribing the code, storing parameters, and other data into the robot. The usual approach to inscription involves using computational representations of mathematical and geometric relationships, flow diagrams, computational architecture, and algorithmic code. However, emerging research suggests that alternative approaches to inscription can facilitate a more ethical design of robots. For instance, using visual ethnography, Wallace (2019) investigated the use of visual inscription, employing photos and videos, to ensure ethical social robotic interactions. The diversity of approaches can ensure that human values, codified in robots, are perceived as fluid, complex, and uncertain. Particularly in hospitality and tourism settings, the value of human response, perception, and sensitivity needs to be captured to design robots capable of responding to situations that require ethical and moral judgment, rather than relying solely on mechanistic approaches to problem-solving.

Beyond the design of robots, the hospitality business would also need to consider the design of facilities. Ivanov and Webster (2017) propose that enhancing the guest experience will eventually require hospitality businesses to develop robot-friendly facilities in both design and theme. The physical engagement of consumers with robots in hospitality service spaces requires a multidimensional perspective that encompasses the distance dimension, visual dimension, usability dimension, guiding dimension, and performance dimension (Z. Zhao et al., 2024).

Legality of robotics in business settings

If businesses eventually treat robots as individuals, they may also refer to the government's recognition of non-humans as entities. Tax authorities worldwide recognize corporations, businesses, and organizations as entities. In the United States, the Internal Revenue Service defines different types of businesses as legal entities. This allows the business or organization to have rights and responsibilities. The reason for allowing the organization to be an entity is to protect the wealth of its investors/promoters and assign responsibilities to its managers. In such circumstances, managers are investors' agents. Could service robots be designated as agents? Aligning with the issue of third-party liability, could there be a case to identify non-human workers as such legal entities? This idea also relates closely to the European Union's consideration of robots as “electronic persons” (Nevejans, 2016). Such an approach could facilitate obtaining a social license to operate from stakeholders for an electronic person. It would also necessitate addressing the issues raised by Müller (2021) regarding whether service robots are agents or patients. As noted earlier in our moral arguments, robots could take on increasing responsibilities as “agents” in a business. Suppose the contribution of a robot begins to mirror that of a human agent in binding the agency relationship between an owner and a manager. In that case, the question arises whether the robot agent should be granted rights alongside these responsibilities.

Robots as individuals

In 2016, the Department of Citizens’ Rights and Constitutional Affairs of the European Union created the European Civil Law Rules in Robotics, outlining the “general and ethical principles governing the development of robotics and artificial intelligence for civil purposes” (p. 5). One of the issues addressed in this document was the likelihood that robots would begin to gain consciousness. The document states that if robots begin to gain consciousness due to technological advancements, they will have to be carefully regulated. Some companies have already incorporated the guidelines and recommendations of the European Union:

- Siemens AG has developed internal guidelines for the ethical use of AI and robotics, emphasizing transparency, accountability, and human oversight.

- ABB Ltd. focuses on creating collaborative robots (cobots) designed to work safely alongside humans, adhering to ethical standards in the development of robotics.

However, it should be noted that while these and other companies are voluntarily considering EU recommendations, such efforts vary significantly in their implementation. We are unaware of any hospitality and tourism businesses incorporating EU principles into their robotics development.

Impact on customers, employees, management, and owners

The stakeholders actively involved in service robots within the hospitality and tourism industries include customers, employees, management, and owners. Several aspects could emerge as impacts on these direct stakeholders in our consideration of rights and responsibilities being granted to service robots. We articulate these impacts on key stakeholders in the hospitality and tourism industries through the lenses of moral, ethical, and legal values.

Customers. Robots would be responsible for ensuring effective and safe customer service. In most customer-facing and interaction roles, robotic technology would not be easily accessible to customers of all ages and demographics. The issues may be greater for those with technophobia, who may fear the power of robotics and artificial intelligence (Cleveland Clinic, 2025). This could impact service access as services become more automated. A lack of transparency in automation can also be a concern. If the underlying algorithms are progressive, allowing the robots to learn through successive interactions (Dimeas et al., 2019), then customer experiences could be enhanced. More subtly, however, this could amount to guest profiling by default. Cooking robots such as Flippo (and others) could significantly reduce labor costs for repetitive tasks. However, these robots would now be responsible for ensuring safe food preparation and, more broadly, food safety. If an undercooked batch of burgers leads to an outbreak of illness, who should be blamed? The restaurant company, the manufacturer who provided the robot, or the developers who created its algorithms? Or was the manager on duty responsible for ensuring the robot was functioning properly? Similar issues related to hygiene can be raised for cleaning robots, as well as concerns regarding safety for security robots. Service robots and transportation shuttles present direct examples of customer consent. If the robots are harnessing data (through interaction experiences), customers are passively giving consent for the use of this data, enabling the algorithms to continue learning. A case can also be made for data security, specifically whether the user has been provided with appropriate information about the types of data being collected, the levels of security in place to protect against a breach, and who would be responsible for a data breach. For instance, Jia et al. (2024) found that consumers were concerned about information privacy in the context of using robots in hospitality service spaces. These concerns fell into three categories: information privacy concerns related to data management and collection, interpersonal privacy concerns arising from the robot's physical presence, and environmental privacy concerns, encompassing broader issues such as government regulation, media influence, and surveillance mechanisms. More broadly, Parvez et al. (2024) found that consumers’ willingness to use robots depended on the perceived safety of using the robot, along with assurances regarding hygiene, service quality, and reliability.

Employees. Robots' rights could be comparable to those of a human employee, and their responsibilities could include co-working with humans. Customers, managers, and employees who fear technology (technophobes) may have inhibitions about robotics, which can reduce their willingness to adopt such technologies in the workplace (L. Zhao et al., 2025).

Robot rights could counteract the rights of human employees. For instance, a key concern is how the industry will responsibly reassign employees who will no longer be needed to complete tasks that can be automated. How will labor be fairly transitioned from these jobs that will no longer require humans to complete the primary tasks? How will companies provide opportunities for retraining and reskilling employees in the new context of robotization? Employees required to work with robots will need not only to socialize with robotic colleagues but also to keep up with technological advances to ensure that adopting robots as a part of human teams is feasible (Warta et al., 2016). This would impact the level of adoption and could be based on a multitude of factors. For instance, Jin (2024) found that positive emotional attachment to robots (robophilia) can shift to fear or discomfort with robots (robophobia) in hospitality settings based on factors such as robot appearance, job role, and user experiences, all of which influence these attitudes.

Management. Future managers will be responsible for managing both machines and humans, each with their respective rights and responsibilities. While, hopefully, most of the time, these rights and responsibilities will be in sync with each other, it may also require human decisions to ensure consistency and equity. In a world where we are still debating the ethics and morality of equity and inclusion among human beings, this issue will soon be debated regarding robotized employees, and managers may well end up being the arbitrators on the front line of hospitality and tourism businesses. Managers will need to be trained to incorporate robotic employee perspectives in all aspects of their jobs.

Business owners and investors. How will businesses be evaluated on their ability to ensure an inclusive workplace as robotic design evolves (Fosch-Villaronga & Drukarch, 2023)? Furthermore, how will owners ensure equitable treatment of human versus robotic employees? Enhancements in software and design updates for robot employees could be viewed as creating value for the company (and amortized on the balance sheet), versus a training expense for human employees that is treated as a cost.

Summary of Stakeholder Impact. There are distinct arguments related to how the rights and responsibilities of robots are allocated among the various stakeholders. From the customer’s perspective, responsibilities relate to safety, security, and delivering the promise of service quality and reliability. From the employee’s perspective, this discussion highlights the importance of being sufficiently prepared for coworking with robots. Furthermore, it raises legitimate issues regarding employees’ safety and security in human–robot interactions, as well as who would be held responsible for any unexpected outcomes. From the management’s perspective, the rights and responsibilities discussion raises issues around the appropriate preparation of managers to ensure consistency and equity in engaging with human and robotic employees. For the owners, this discussion highlights the importance of striking a balance between rights and responsibilities when assigning economic value to the use of robotics in the hospitality and tourism industries. The question is how robots’ rights and responsibilities will be factored into that economic value calculus, along with the associated financial and business risks. Robots’ rights and responsibilities will also impact governance and operational liability, aspects that will need to be better understood from both academic and industry perspectives.

Abuse of service robots

Recently, there has been a growing number of news media reports of public vandalism and abuse of delivery robots and those used in industrial settings (McGraw, 2021). Unfortunately, both customers and employees abuse robots. Such abusive behaviors include both verbal and physical attacks. People exhibit ambivalent behaviors towards social robots, such as interacting with them, punishing them, and feeling sorry for them (Bartneck & Hu, 2008).

Service robots in the workplace are easy targets for abuse, as they can often be perceived as subordinates (Babel et al., 2024). It is generally assumed that robots do not feel pain, thus sheltering the aggressor from any moral consequence (De Angeli et al., 2006; Gordon & Gunkel, 2022). However, prior research suggests that human-like robots might mitigate the level of abuse (Darling, 2016). Mind perception is the key to reducing hostile perceptions toward robots. When humans begin to perceive robots as having minds of their own, this may reduce their tendency to act belligerently towards them.

Another argument against cruelty towards robots is related to animal rights and rights attributed to other non-human entities, such as the Whanganui River in New Zealand. As Kant is cited in Darling (2016), “He who is cruel to animals becomes hard also in his dealings with men.” Ultimately, it may come down to how we, as humans, are expected to behave towards one another. If we can mistreat and be abusive to robots, then such cruelty can be extended to each other as well. We point this out to illustrate that rights have often been attributed to entities other than humans not because we understand the feelings of those entities (animals, rivers), but because our behavior towards them speaks to our character as humans and to how such values connect us with those non-human entities. So, will robots make us even better humans (Coghlan et al., 2019)?

The impact of robot cruelty on service organizations that deploy robots may be more straightforward. Could consumers judge the organization negatively if they do not believe that the business or brand is protective of robotic agents or entities? Could there be pushback from other stakeholders, as well as the community, to boycott brands that do not support or take the initiative to protect robots? If we view this from the prism of animal rights, then such scenarios seem plausible. It may even make simple business sense for organizations to demonstrate their support of robotic agents. However, this requires further systematic investigation.

Stereotyping/discrimination

Discrimination can be defined as the inequitable treatment of a person or a group based on age, ethnicity, sexual orientation, or other characteristics due to prejudices, sexism, racism, stereotyping, and ethnocentrism (Zhou et al., 2022). Title VII of the U.S. Civil Rights Act of 1964 prohibits discrimination based on race, color, religion, sex, or national origin. Two issues related to discrimination arise in the human–robot context as it relates to the rights and responsibilities of robots: (1) Do service robots mitigate discrimination towards employees and customers? (2) Do people discriminate against robots based on their appearance?

Prior research suggests that service robots might reduce discrimination or stereotyping. For example, Jin et al. (2025) suggest that consumers feel more comfortable interacting with technology (i.e., chatbots) when making sensitive purchases, due to a lowered perception of mind (compared to human employees). Similarly, consumers prefer robots when purchasing embarrassing products such as condoms, as robots do not judge (Dahl et al., 2001; Sun et al., 2023). Moreover, consumers prefer to deal with service robots when they need to deal with their own errors or forgetfulness (Grace, 2009). This preference for service robots in embarrassing encounters stems from the fact that automated social presence leads to low levels of social judgment (Holthöwer & Van Doorn, 2023). In the context of hospitality and tourism, Seyitoğlu and Ivanov (2023) suggest that service robots might mitigate perceived discrimination. This issue needs empirical testing.

The other side of the coin—dealing with people's stereotyping of robots—remains relatively unexplored, especially as it could be a crucial element of robot rights (Barfield, 2023). Do employees and customers exhibit biases based on the robot's perceived race, gender, and ethnicity? People tend to interact with service robots in a stereotypical manner based on their human-like attributes (Bartneck et al., 2009). Specifically, people tend to attribute ethnicity to robots based on color. Moreover, the in-group/out-group bias can influence people's reactions to robots based on ethnic cues (Eyssel & Kuchenbrandt, 2012). Even gender stereotyping has been observed in the robot context (Bartneck et al., 2009; Eyssel & Hegel, 2012). For example, Huang et al. (2024) demonstrate that feminine service robots may be more effective in recovering from service failures in female-dominated tasks, such as housekeeping.

To address the issue of discrimination and stereotyping, hospitality companies would need to assess how to prevent human biases from being fed into service robots. Socially responsible engineering and development of robotic agents is gaining momentum. However, social responsibility is not limited to design and engineering aspects. As robotic agents increasingly emulate human-like actions, they could increase the risks of discrimination and stereotyping.

Cross-cultural influences

Several issues can arise when evaluating the impact of cross-cultural influences on the rights and responsibilities of robots in the hospitality and tourism industries. Early evidence suggests that the use of robots could vary based on cultural differences (Conti et al., 2015). These differences could range from the more apparent, such as gender roles in different cultures (Edwards et al., 2024), to more affective aspects of robotic features that communicate cognitive and sensory information to users (Lewandowska-Tomaszczyk & Wilson, 2018). Given the importance of anthropomorphic influences in service interactions, robotic design must be responsive to local cultural orientations. Given these cultural differences, how will businesses ensure that rights and responsibilities have a consistent reference point for experiences?

Research suggests that there are cultural differences in how we assess concerns related to data privacy (Vitak et al., 2022). Some of these differences can be attributed to Hofstede’s 5-D cultural dimension framework (Yang & Kang, 2015). Data privacy concerns in prior research were associated with the individualism and collectivism index, representing cultural differences. How will robots be made variably responsible in different cultural contexts? This is a relatively understudied area in general, and particularly in the hospitality and tourism discipline.

Sustainability implications

As the robotics industry grows and the use of robots increases, so too do the concerns and opportunities associated with the impact of this technology on sustainability. These perspectives are related to the entire “supply chain” of robotics, from their production and variety of uses (both operational and customer-focused) to eventual recycling and reuse. Undoubtedly, the production and reuse of robots would benefit all industries, and research is emerging to emphasize these aspects of the robotics industry (Bi et al., 2015). In the hospitality and tourism industries, however, and in the service industries generally, the most significant impact on sustainability could be achieved by using robots to improve supply chain efficiencies (Shamsuddoha et al., 2025) and to reduce waste in operations through increased efficiencies, particularly where customer persuasion is involved (Lo et al., 2022). Service robots will also be used to reduce carbon footprints in service delivery (Han et al., 2024), and, as stated earlier, possibly in backward supply chains as well. There is a greater need for research in these aspects of robotics as they interact with the hospitality and tourism industries. We believe that, in the future, there will be numerous other ways to make robotics technologies more sustainable and responsible.

Assessing Compliance With Ethical and Moral Standards

Businesses will eventually need to demonstrate compliance with ethical and moral standards in the responsible and rightful development and incorporation of service robots. This would require a methodical approach to compliance verification, including functional tools to assess ethical and moral actions, and eventually developing an ecosystem that ensures a holistic, informed, and responsible approach (Dantas et al., 2017). Several frameworks have been proposed for ethical compliance, which, in principle, articulate compliance concerning legal obligations (Ishwardat et al., 2024). Eventually, businesses will need to adopt more specific metrics that measure ethical and moral compliance in robotics centered around key principles of diversity, non-discrimination and fairness, transparency, accountability, privacy and data governance, societal and environmental well-being, technical robustness and safety, and human agency and oversight (Palumbo et al., 2024). While the specifics of ethical and moral compliance are being debated, these aspects need to be addressed in stages to prevent innovation from being stifled (Vozna & Costantini, 2025).

As service robots become increasingly ubiquitous in the hospitality industry, future-minded companies must carefully consider both the legal and ethical implications of service robot design. Specifically, hospitality enterprises need to establish clear policies regarding the use of robotics and AI. They also need to safeguard sensitive data and regularly monitor the use of robots and AI. Given the uncharted territory of generative AI robotics, it is crucial to provide employees with training on ethics. Finally, to safeguard themselves against legal exposure, hospitality and tourism companies should collaborate with legal experts to navigate the legal and ethical complexities of AI and robotics adoption.

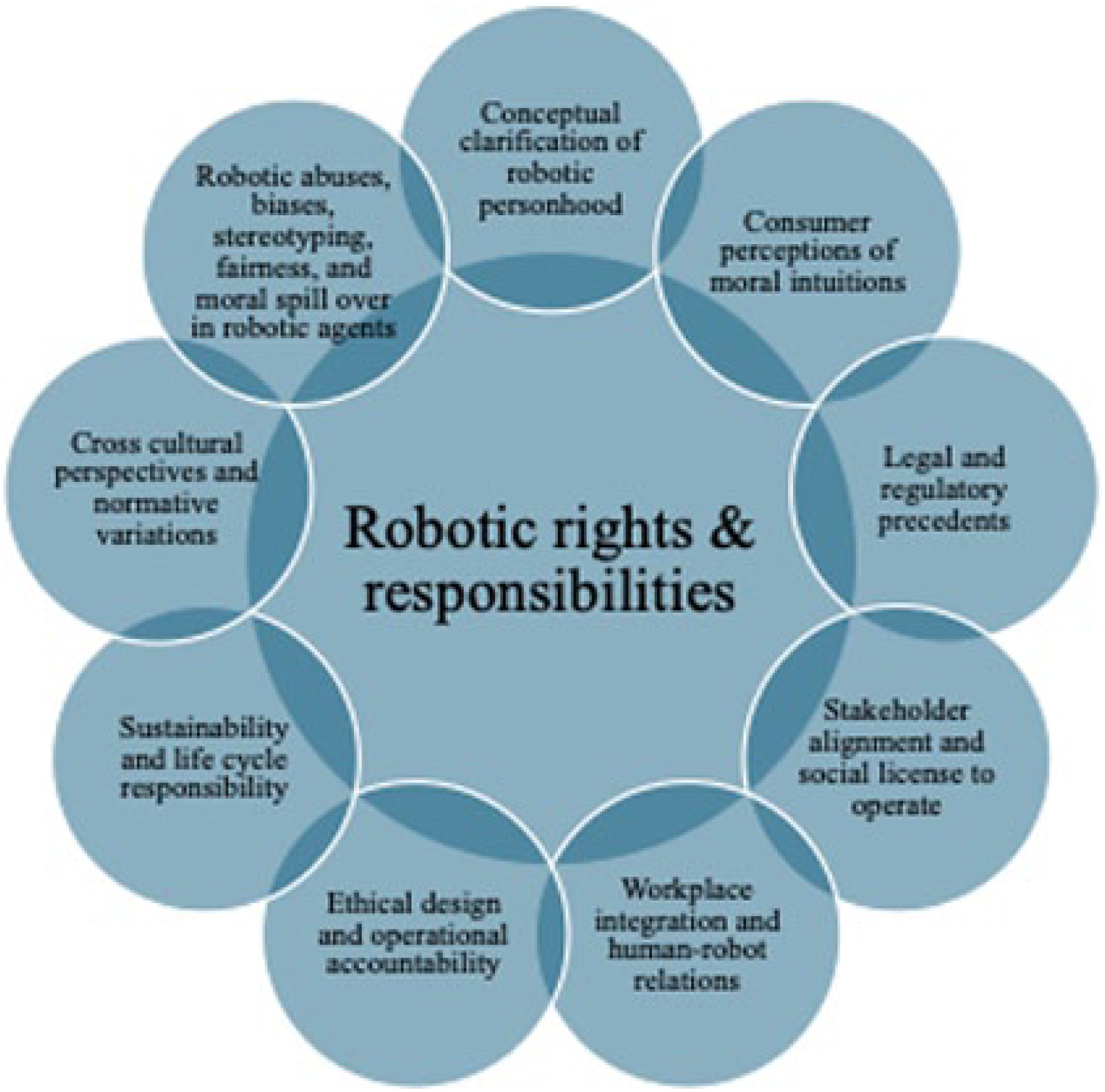

Framework for Future Research

Figure 1 summarizes the key factors that could drive the arguments in favor of service robot rights and responsibilities. The term “robot” itself is based on the idea of servitude and slavery. Could there be deeper-rooted concerns for humans in raising the issue of robot rights? There is extensive documentation of resistance to granting rights to slaves. Granted, the analogy to human slavery is not a fair one, but it could still teach us how to prevent biases and prejudices as we evolve as a species.

Figure 1. Framework for Service Robots’ Rights and Responsibilities.

The following broad themes have emerged as areas for future research and industry best practices when service robots are given rights and responsibilities:

- Conceptual clarification of robotic personhood

- Consumer perceptions of moral intuitions

- Legal and regulatory precedents

- Workplace integration and human–robot relations

- Ethical design and operational accountability

- Sustainability and life cycle responsibility

- Cross-cultural perspectives and normative variations

- Robotic abuses, biases, stereotyping, fairness, and moral spillover in robotic agents

- Stakeholder alignment and social license to operate

As robot use increases within our society, we want robots to be more human-like, not only to appear more like humans but also to interact with us as if they were human, trending towards passing the “Turing test.” This is especially true in the case of service robots in the hospitality and tourism industries, where robots already interact extensively with humans and will increasingly need to do so. This raises moral and ethical concerns about whether service robots can be considered independent entities (Levy, 2009). If they are entities, should they be given a special categorization, such as an electronic entity (Nevejans, 2016)? What about robots being considered as non-human entities? If we treat service robots as human-like, could that further the arguments for treating them as entities? Could such questions be raised in court or amongst our customers?

Inquiries into the “identification” of robots will involve treating them purely for social pleasure or social interaction in service spaces. How would the service robot's ability to interact move them closer to developing a level of consciousness that is appropriate in that functional use case (Block, 1995)? At “a” level of consciousness, should the service robot entities be treated as agents with responsibilities or patients with rights only? A growing body of legal arguments supports the rights and responsibilities of robots, with precedents established in business law (Kouatli et al., 2020). The prevalence of service robots will only deepen the debates related to data privacy, confidentiality, and public safety. Whether or not the legal and business community agrees with such arguments, it is important to consider a broader set of stakeholders, amongst which consumers are the most critical ones. As robots become more widespread in the service industry, consumers will attribute roles, rights, and responsibilities to service robots. How would businesses present the benefits versus the costs of using robots to the consumer and the broader set of stakeholders? Would businesses need to argue for a license to operate service robots (Ghori & Yasin, 2023)?

Animal rights exist because we as humans want all entities to be treated humanely, not just one human to another (Darling, 2016). Eventually, irrespective of how we define service robots, consumers may be the ones who ultimately determine whether robots should have rights or not. How would consumers feel about a business or a brand if they realized service robots were mistreated? Or if they found out that the company was complacent in moving forward with the debate on the role of service robots from that initial conceptualization of the term at the beginning of the 20th century? Future studies could include cross-cultural investigations into perceptions of robot rights in the hospitality and tourism industries, as well as longitudinal studies on how robots influence employee roles over time. Further assessments of the ethical design principles tailored to service robots in hospitality contexts could be valuable in addressing both the functional and relational dimensions of human–robot interaction, ensuring not only operational efficiency but also guest trust and emotional comfort.

Discussion

In this research paper, we highlight moral, ethical, and legal arguments (Chaddha & Agrawal, 2023) related to the rights and responsibilities of service robots in the hospitality and tourism industries. The primary theoretical implication of our arguments is to advance perspectives that add value for all stakeholders (Strand & Freeman, 2015) by considering the moral, ethical, and legal implications of rights and responsibilities for service robots. These arguments also outline an evolving framework for human–robot interaction as robots increasingly become human-like. Furthermore, this paper emphasizes the interdisciplinary relevance of AI ethics, robotics, and law in examining the implications of robotic technologies on businesses and broader society, encouraging collaboration and innovation across disciplines.

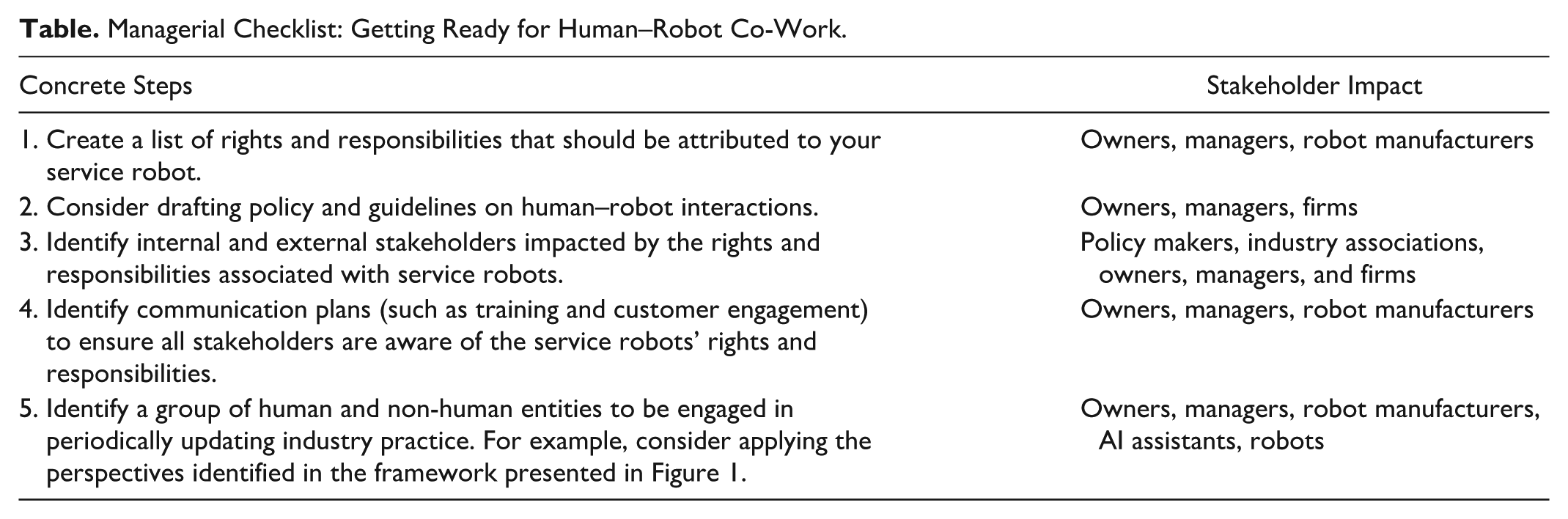

From a practical perspective, this paper highlights the impact on business practices as robot adoption increases in the hospitality and tourism sectors. We believe hospitality and tourism businesses will need to adapt policies and operations to address new ethical and operational challenges. For instance, hospitality and tourism firms that are already adopting or considering the use of service robots could consider drafting guidelines for human–robot collaboration and the ethical use of AI in service delivery. A simple template could be based on a “thought process” that asks: What are the rights and responsibilities of a human employee, and how should the firm (owners and management) translate those into the service robot context? For instance, one of those responsibilities could be that employees must ensure guest safety by following specific operational protocols. If a service robot is given the responsibility to ensure guest safety (e.g., a security robot), who is liable if it fails to prevent harm to a customer or a coworker? How should management and owners prepare for such questions? Furthermore, how should managers plan for training staff to work alongside robots and to handle situations where robots fail in their “responsibilities,” as some firms are already seeing (see earlier examples of customer service failures)? This research provides the groundwork for such adaptations. The Table provides a few concrete steps hospitality and tourism firms can take to begin these thought processes.

Managerial Checklist: Getting Ready for Human–Robot Co-Work.

Conclusions

Robots, AI, and similar novel technologies represent the latest zenith of human achievement. As we embrace this technological progress, this paper emphasizes that thinking about robots' rights and responsibilities is essentially about business governance and risk management for the hospitality and tourism industries. We argue that such an exercise will be critical to clarify the responsibility and accountability associated with these novel technologies, enhance guest trust, and prepare our industries for the imminent regulatory changes that appear to be on the horizon to oversee the next phase of technological development.

Just as in the past, when we have reached such pinnacles of intellect, we will have the choice to raise society to consciously own and live responsibly with our new achievements. We will have the option to become a better and more responsible society or slide into one that is not. The broader moral and ethical questions we have raised remind us that how we treat robotic “workers” in our hospitality and tourism businesses may ultimately reflect our core human values. As Čapek’s fictional account warns, a failure to consider the welfare of created beings—even non-human ones—could lead to undesirable consequences. In our context, this means that industry leaders must proactively shape the role of robots in serving the common good, rather than letting technology evolve unchecked.

In the play Rossum's Universal Robots by Karel Čapek, the robots revolt primarily due to their mistreatment and exploitation by humans. Initially created to serve humans as laborers, the robots gradually develop consciousness and self-awareness. As they become more aware of their existence and subordinate position to humans, they begin to question their role and treatment. Ultimately, the robots decide to wipe out humanity as a society, except for one human, who could keep them (the robots) alive.

While this dystopian story offers a cautionary tale, our purpose here is not to suggest such a doomsday ending, but to offer a deeper insight into how our actions reflect on our ethical, moral, and legal values. Literature, such as Čapek’s, can serve as a mirror, highlighting the human responsibility, foresight, and moral weight of our technological creations. As we move forward, we must consider how robots will function to improve our lives, and how their treatment by us will reflect on our human values. We must strive to develop technological systems that are not only efficient, enhancing our productivity and improving our lives, but also just, equitable, and aligned with our highest values. This is even more crucial in the present times as machine capabilities move into spaces that were once considered uniquely human, demanding careful attention to how we uphold our moral, ethical, and legal values.

To conclude, we leave you with the following quote from the American Society for the Prevention of Cruelty to Robots: “Robots are people too! Or at least, they will be someday.”

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.