Abstract

Although calls for inclusiveness in services are becoming more vigorous, empirical research on how to design and implement service inclusion for stigmatized consumers remains scant. This paper draws on key questions of personalization (i.e., who personalizes what for whom?) to tailor the (a) source and (b) content of marketing messages in order to better include stigmatized consumers. The authors examine this idea in three experiments in healthcare/well-being settings. In terms of message source, the results show that, in interpersonal interactions, service companies can employ the principle of homophily to better engage stigmatized consumers (Study 1). In contrast, homophily-inspired personalized messages to stigmatized consumers can backfire in the context of consumer-artificial intelligence (AI)-interactions (human-to-avatar interactions; Study 2). Moreover, in terms of message content, Study 3 explores how, and under which conditions, companies can leverage thinking AI versus feeling AI for improved service inclusiveness. Finally, the studies point to anticipated consumer well-being as a crucial mediator driving effective service inclusiveness among stigmatized consumers. The results not only contribute to an emerging theory of service inclusiveness, but also provide service scholars and managers with initial empirical results on the role of AI in inclusive services.

Calls for services to be more inclusive are becoming more frequent and vigorous (Aksoy et al. 2019; Boenigk et al. 2021; Field et al. 2021; Larivière and Smit 2022; Kuppelwieser and Klaus 2020). This emphasis on inclusiveness emerges from the paradigm of Transformative Service Research (TSR) (Anderson et al. 2013; Blocker et al. 2022), and it is consistent with efforts in related fields such as Transformative Consumer Research (Mick 2006), efforts by leading outlets such as JMR’s “Mitigation in Marketing” and JM’s “Better Marketing for a Better World” initiatives, and the Responsible Research for Business and Management (RRBM) initiative (Mende and Scott 2021). However, despite enthusiasm related to the idea of inclusiveness, important questions remain. First and foremost, how can service firms implement inclusive designs for improved consumer well-being and positive customer-firm interactions? Accordingly, Fisk et al. (2018, p. 841, emphasis ours) diagnose that service research “has not addressed how service organizations can extend service quality to all consumers, including those who enter service establishments or systems with disabilities, vulnerabilities, nontraditional roles, or refugee and migrant status.” In other words, there is little empirical research on how to include service consumers who live with potentially socially stigmatizing attributes (diseases, obesity, addictions, disability, poverty, etc.). This void in the literature is surprising because many service industries (e.g., medical/healthcare and well-being, financial services) serve consumers with potentially discrediting attributes (Berry, Mirabito, and Baun 2010; Bone, Christiansen, and Williams 2014; Corrigan 2004).
Thus, these firms ought to understand the needs of socially stigmatized consumers and how to reduce potential access barriers that keep these consumers from using their services (e.g., consumers avoid seeking help via professional services due to a perceived threat of being stigmatized).

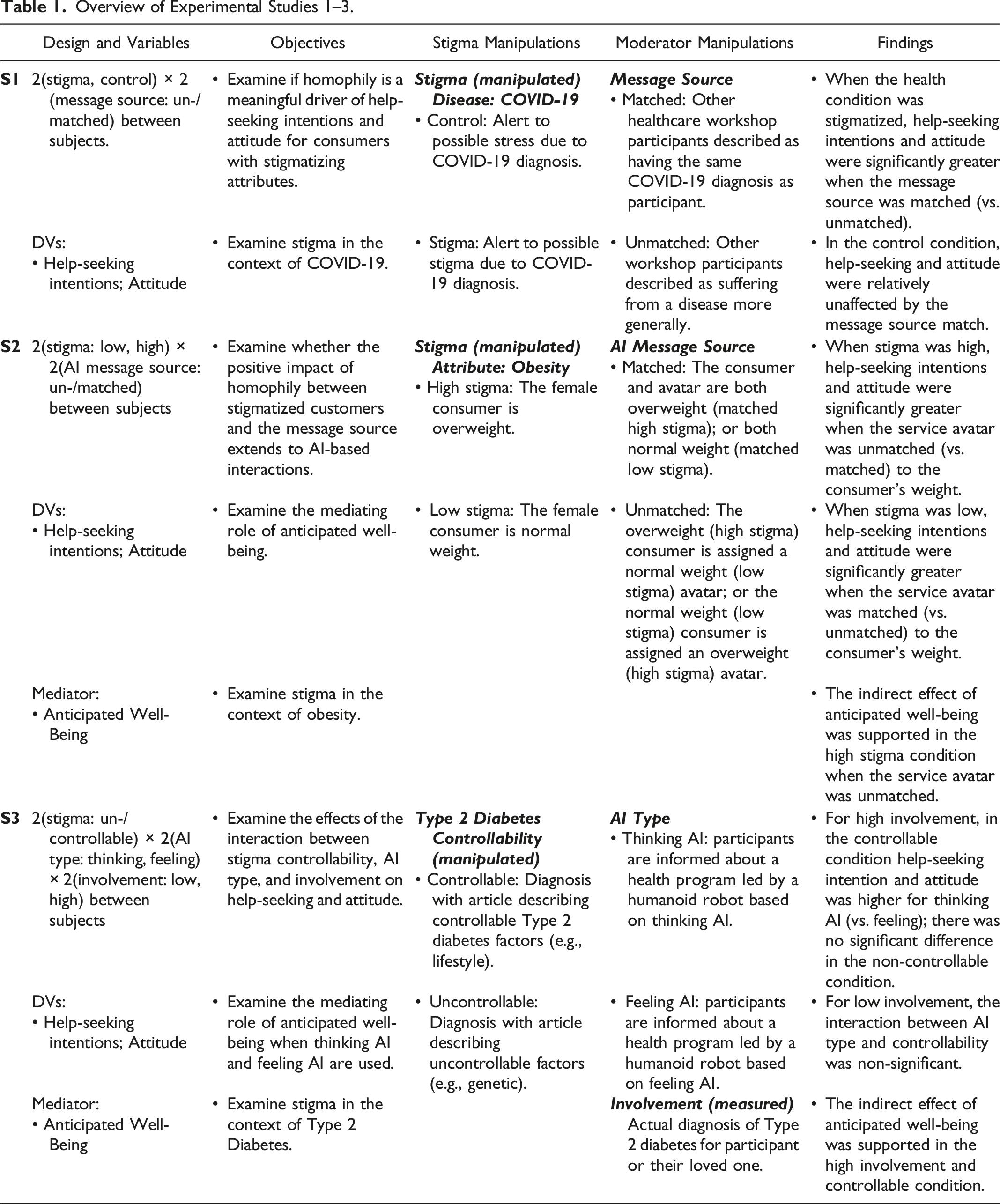

Overview of Experimental Studies 1–3.

First, the inclusion of stigmatized consumers has received relatively scant attention in empirical service and consumer research (Arsel, Crockett, and Scott 2022). In response to calls for more research on stigmatization (Boenigk et al. 2021; Harmeling et al. 2021), we identify stigma as a crucial variable that influences the effectiveness of a firm’s customer inclusion efforts. To identify ways to personalize communications to mitigate consumer-perceived threats of stigmatization, we examine the interplay between consumer stigma and facets of message source (i.e., the “who”) as well as message content (i.e., the “what”), two fundamental elements to tailor marketing communication (Ptacek and Eberhardt 1996). We show how organizations can personalize their communication to increase the likelihood that stigmatized consumers will engage with them. Our findings extend recent work on stigma as a key variable for inclusion in the marketplace (Harmeling et al. 2021), but also provide novel insights. For example, while we show that service organizations can leverage the principle of homophily to personalize human-to-human interactions and to improve help-seeking intentions for stigmatized consumers (Study 1), we also find that homophily-guided communications to stigmatized consumers can backfire in the context of AI-based interactions (e.g., human-to-avatar interactions; Study 2). In parallel, we show (in Study 3) how managers can personalize AI-based communications by employing thinking AI versus feeling AI (Huang and Rust 2021) to include consumers with a stigmatized condition that is controllable (vs. not).

Second, with our focus on the role of sophisticated technology (in Studies 2 and 3) as the platform for personalization (Huang and Rust 2017, 2021), we respond to calls for empirical research on AI in inclusive service design. To illustrate, outlining a research agenda for inclusive service, Fisk et al. (2018, p. 849) urge scholars to address the question: “How can technology (e.g., AI) facilitate the design of services to enable opportunity in various service contexts for the vulnerable” (e.g., consumers with stigmatizing attributes)? Directly speaking to this question, our results provide initial insights for researchers and managers on how to leverage (thinking/feeling) AI for service inclusion.

Third, by examining the underlying process, we identify anticipated consumer well-being as a key mediator in the empirical service inclusion literature. We find that stigmatized consumers consider personalized messages that are sensitive to their stigma as promising pathways toward their well-being; notably, we find this effect in interactions between stigmatized consumers and non-human AI-based service agents (an avatar in Study 2, a robot in Study 3). By unearthing this mechanism of how to include stigmatized consumers especially via AI, our work enriches extant theory of service inclusion, and answers calls for research that can “conceptualize and measure the happiness generated by inclusive service interactions at the individual level” to help foster customer well-being (Fisk et al. 2018, p. 850). Moreover, our results directly respond to recent calls for more research on how customer-AI interactions can contribute to consumer well-being, especially the well-being of “vulnerable and disadvantaged groups” of consumers (Benvenuti, Scarpi, and Zarantonello 2023, p. 113).

Taken together, for firms that seek to serve stigmatized customers, this research shows that marketers should not think of communication strategies in generic terms; rather, they can (and should) explore ways to personalize their marketing efforts in light of stigmatizing consumer attributes; notably, appropriate customization can vary depending on the service context (e.g., whether the service provider is human or AI). Our research also shows that “access” is not just about economic or geographic availability, but also about overcoming psychological barriers that may inhibit consumers from participating in services available to them. Finally, our findings provide actionable implications for companies in communicating to their stigmatized customers. This is important because, to date, many service organizations fail in “designing service settings for inclusion” (Fisk et al. 2018, p. 847).

In the remainder of this paper, we first provide the conceptual background on the interplay between stigma and personalized marketing communications (in terms of message source and message content). Then, grounded in the idea of homophily (i.e., the idea that people are more likely to bond with others they perceive to be similar, McPherson, Smith-Lovin, and Cook 2001), Study 1 examines the effectiveness of a communication that is personalized for stigmatized consumers. Specifically, this experiment focuses on the interactive effect of the similarity of a message source (i.e., “who”) and a stigmatizing attribute of a consumer (i.e., “whom”) on their attitude toward the service and their help-seeking intentions in an interpersonal (human-to-human) service setting (H1). The subsequent sections are inspired by recent calls for research on the link between consumer well-being and technology (Benvenuti et al. 2023) and examine how AI might promote service inclusion. In light of this objective, we strove to enhance the ecological validity of our work. Therefore, we conducted exploratory interviews with therapists in an addiction center working with stigmatized clients. We also conducted two experiments (Studies 2 and 3) on the role of AI for service inclusion. Study 2 investigates the effects of a personalized message source (the “who” is a homophily-inspired AI avatar serving as a fitness coach) that matches the consumer’s stigmatizing attribute (e.g., obesity). Then, Study 3 manipulates the message content (the “what”) to emphasize the profile of an AI-powered robot as either thinking AI or feeling AI (Huang and Rust 2021). Studies 2 and 3 also investigate anticipated consumer well-being as a mediator to shed light on the underlying psychological process in customer-AI encounters.
Finally, note that, in the interest of generalizability and managerial relevance, the studies examine different health-related stigmas (linked to COVID-19, obesity, and Type 2 diabetes) in various service contexts (an online health workshop [Study 1], a gym with an AI coach [Study 2], an AI/robot-led diabetes health program [Study 3]).

Conceptual Background: Stigma and Personalizing Inclusive Service Communications

Marketplaces continue to change with the evolution of services and technologies (e.g., AI) (Huang and Rust 2021), creating opportunities for progression in inclusive services. Because we propose that the extent to which a consumer attribute is potentially stigmatized influences how effective a service’s communications are, we first define stigma(tization).

Stigma Theory and Inclusive Service

Stigma theory helps explain which attributes can affect consumers’ willingness to seek help for their undesirable conditions (e.g., disease, obesity). A stigma is an attribute of a person that is potentially devaluing (Crocker and Major 1989). Importantly, an attribute in isolation cannot be a stigma. Its position as a stigma is established by an audience of onlookers who determine what is considered normal and what is deviant or undesirable (Harmeling et al. 2021). Past experiences of stigmatization influence how a stigmatized person perceives others. For example, individuals who are systematically ostracized because they possess a particular characteristic or are a member of a certain group (Kurzban and Leary 2001) can see others as a social threat. Consequently, consumers living with stigmatizing attributes often become hypervigilant in identifying which audiences they are exposed to and in estimating each audience’s intent toward them relative to their stigmatizing attribute (Goffman 1963). In short, being stigmatized affects a person’s social interactions. Notably, services, by their very nature, require a degree of interaction (Zeithaml et al. 2024). Therefore, we theorize that the extent to which a customer’s attribute (e.g., diseases, obesity) is stigmatized alters their response to personalized marketing initiatives for services that might aid their stigmatized condition.

Fundamental Aspects of Designing Personalized Communications for Inclusive Service

Two fundamental marketing decisions (Ptacek and Eberhardt 1996), which are especially relevant for personalizing communication with stigmatized customers, are determining the most effective message source (Studies 1 and 2) and message content (Study 3). Below, we highlight important aspects of message source and message content and then theorize how they may impact stigmatized consumers’ likelihood to seek help from service organizations.

Personalized Message Source and Homophily

The message source can bias people’s processing of the message such that an ineffective source will promote more negative elaboration and an effective source more positive elaboration, with corresponding downstream effects (Chaiken and Maheswaran 1994). For example, one distinction related to message sources is between experts and non-experts, where experts are typically seen as more credible (Petty and Cacioppo 1981). An expert (vs. non-expert) source can elicit positive responses due to an “authority heuristic” (Sundar 2008); that is, consumers might comply with an expert’s request because it triggers an “experts can be trusted” heuristic (Cialdini 1993; Janssen et al. 2008). However, other research suggests that when the source is a peer, although a non-expert, this can elicit a similarly meaningful heuristic (Biswas, Biswas, and Das 2006). This effect is based on people’s ingrained biases in favor of in-group members (Ambady and Weisbuch 2010). In-group (vs. out-group) members are often perceived to be more credible, and this greater credibility translates into greater influence on the recipient’s attitudes and behaviors creating a “peers can be trusted” heuristic (Clark and Maass 1988; Pornpitakpan 2004). Such effects are often explained via the concept of homophily, which describes the human propensity to associate with similar (vs. non-similar) others; homophily has been shown to be an influential preference in human interactions and relationships (McPherson, Smith-Lovin, and Cook 2001). Indeed, service scholars have demonstrated that homophily can, under certain circumstances, facilitate customer-employee interactions (e.g., Arndt et al. 2021; Streukens and Andreassen 2013).

Personalized Message Content: Informational Benefits and Social Benefits

Another essential aspect of designing personalized, persuasive communication is emphasizing the most appealing benefit for the focal audience (Sudhir, Roy, and Cherian 2016). The focal benefit provided is the core content of the communication and often the focus of a consumer’s cognitive processing (Chaiken and Maheswaran 1994). One commonly featured benefit is information. Information is a vital facet of a service firm’s functional value because it provides customers with specific topical expertise (e.g., advice on health issues and solutions). As information circulates between (and among) customers and the firm, it generates benefits as it facilitates the development of cumulative expertise and customer learning (Muniz and O’Guinn 2001; Porter and Donthu 2008; Wirtz et al. 2013). Besides such informational benefits, social benefits for customers have also received considerable interest. For example, despite the somewhat impoverished nature of digital environments, social bonds in online communities often bolster feelings of self-esteem and a sense of belonging among their members (e.g., Loane, Webster, and D’Alessandro 2015).

Taken together, customer inclusion in well-being services (e.g., health communities, fitness clubs) might provide both informational and social benefits. However, the choice of which of these two benefits is emphasized in personalized customer-directed communication might affect the effectiveness of the inclusion initiative, as we further discuss next.

The Interplay of Stigma and Personalized Message Source: Homophily-Based Matching

Personalized communications from service organizations can offer a platform for social benefits and help fulfill consumers’ need to belong, defined as seeing oneself as socially connected and being an accepted member of a group. The need to belong is a fundamental human motivation, but it is even more important for self-affirmation in the face of an identity-related threat (e.g., threats of being stigmatized) (Baumeister and Leary 1995). Notably, belonging in one group can offer a defense to self-threats related to exclusion from another (DeWall, Baumeister, and Vohs 2008; Twenge et al. 2007). Thus, social benefits from being a part of a group can help customers alleviate the distress associated with stigmatization.

That said, experiences of stigmatization can make people highly sensitive to information that is diagnostic of the quality of their social connections (Walton and Cohen 2007). Members of stigmatized groups are usually aware of the negative stereotypes that others apply to them and of their risk of being a target of prejudice and discrimination (Crocker, Major, and Steele 1998). One consequence of this awareness is perceived social identity threat, which is “a psychological state that occurs when a person fears being devalued on the basis of group membership” (Major, Mendes, and Dovidio 2013, p. 517). In this state, consumers with a stigmatized attribute should be particularly attentive to assuring the credibility of a message source, which can bias their interpretation of and response to the focal message (Ptacek and Eberhardt 1996).

Moreover, as stigmatization increases, interactions with out-group members are particularly challenging because they create an inherent “category divide” based on the deviant attribution (e.g., disease) that brings with it the risk of being the target of prejudice (Vorauer 2006). Consequently, encounters between members of different groups (e.g., patients and experts) are more likely to entail aspects of vigilance, threat, miscommunication, and misperception (Blascovich and Mendes 2010). In parallel, members of stigmatized groups tend to adopt a prevention mindset, which directs their cognitive resources toward social threats; for example, patients who have a stigmatized condition are often suspicious of being the target of stereotyping by members of another group (Major, Mendes, and Dovidio 2013). In contrast, when customers are approached by a source with the same stigmatized condition (vs. a source that does not appear to have the stigmatized attribute), there is no “category divide” that may trigger a risk of being the target of stigmatization (Vorauer 2006). That is, a personalized communication that employs a homophily-based matching between the message source and the stigmatized customer should make the message more appealing and effective; thus, we propose:

H1: When the customer and message source are similar, customers are more likely to (a) seek help from the service organization and (b) report a more favorable attitude toward the service when the matched health condition is stigmatized (vs. when it is not). When the customer and message source are non-similar, intentions to seek help are relatively unaffected by stigmatization of the health condition.

Study 1: The Effects of Message Source and Consumer Stigma on Help-Seeking Intentions and Attitude Toward the Service

To test H1, Study 1 examines stigma in the context of COVID-19 (the study was conducted during the height of the pandemic in 2020). The Centers for Disease Control and Prevention (CDC) had warned that COVID-19 “can lead to social stigma” and is “causing so much stigma” because it has many unknowns; people often fear the unknown, and “it is easy to associate that fear with ‘other.’” These conditions created malleable beliefs about the level of stigma associated with COVID-19 and allowed us to manipulate the stigma levels associated with this disease.

Design, Participants, and Procedures

The study employs a 2 (stigma, control) × 2 (message source: matched, unmatched) between-subjects design with 502 Amazon Mechanical Turk (MTurk) participants (MAge = 36.73, 191 women). Participants read a scenario in which they receive a positive COVID-19 diagnosis, along with information from their healthcare provider about the possibility of experiencing stress (control condition) or stigma (stigma condition) due to their diagnosis. We used content from the CDC to generate the stimuli. We manipulated message source with an invitation to an online healthcare workshop, which described the other workshop participants (i.e., message source) as people who either have a COVID-19 diagnosis (i.e., personalized, homophily-based matched condition) or suffer from a disease more generally (i.e., non-personalized, unmatched condition). See Web Appendix A for stimuli. Next, participants indicated their help-seeking intentions (I would want to … enroll in this workshop; sign up for emails from this workshop; join the mailing list for this workshop; 7-point, strongly dis-/agree, α = 0.78). As a superordinate construct, we also examined attitude toward the workshop (negative/positive; bad/good; un-/favorable; dis-/like, 7-point bipolar; α = 0.85). See Web Appendix B for construct measures by study. Participants also provided demographics (e.g., gender, race, political orientation, marital status, and household size).
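For readers less familiar with the reliability coefficient (α) reported for the multi-item scales, the following is a minimal sketch of how Cronbach's alpha can be computed from item-level ratings. The function name `cronbach_alpha` and the ratings are hypothetical illustrations, not the study's data or analysis code.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a multi-item scale.

    `items` is a list of item columns; each column holds one score per
    respondent, in the same respondent order across columns.
    """
    k = len(items)
    if k < 2:
        raise ValueError("need at least two items")
    n = len(items[0])
    if any(len(col) != n for col in items):
        raise ValueError("all items need the same number of respondents")
    # Total scale score per respondent (sum across items).
    totals = [sum(scores) for scores in zip(*items)]
    # Sum of the sample variances of the individual items.
    item_var_sum = sum(variance(col) for col in items)
    return (k / (k - 1)) * (1 - item_var_sum / variance(totals))


# Hypothetical 7-point ratings from five respondents on three items
# (illustrative only; NOT data from the study).
enroll = [5, 6, 7, 4, 6]
emails = [5, 7, 6, 4, 6]
mailing_list = [4, 6, 7, 5, 5]
alpha = cronbach_alpha([enroll, emails, mailing_list])
print(f"alpha = {alpha:.2f}")
```

Higher values indicate that the items covary strongly relative to their individual variances, which is the usual justification for averaging them into a single index.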

Stigma Manipulation Pretest

We randomly assigned participants to read either the control or stigma condition (MTurk, n = 80, 46 women, MAge = 41.16). Next, participants indicated the extent to which they perceived COVID-19 to be stigmatizing: “If I were diagnosed with COVID-19…I would be discriminated against/I would be discriminated against by my employer/I would be stigmatized” (α = 0.81). We also examined negative affect: “I currently feel…very upset/angry/sad/afraid/helpless,” (α = 0.93) and perceived severity of the disease: “COVID-19 is a real threat to my well-being.” “COVID-19 is a very severe illness.” “I am afraid of how COVID-19 will affect me personally” (α = 0.78; 7-point, strongly dis-/agree; random order). Results show that the COVID-19 diagnosis was perceived as more stigmatizing in the stigma (vs. control) condition (MStigma = 4.32, MControl = 3.50, F (1, 79) = 6.61, p = 0.01). We find no difference in negative affect (MStigma = 3.01, MControl = 2.98, F <1) or perceived severity of the disease (MStigma = 4.67, MControl = 4.81, F <1). Thus, the manipulation performed as intended.

Results

Help-Seeking Intentions

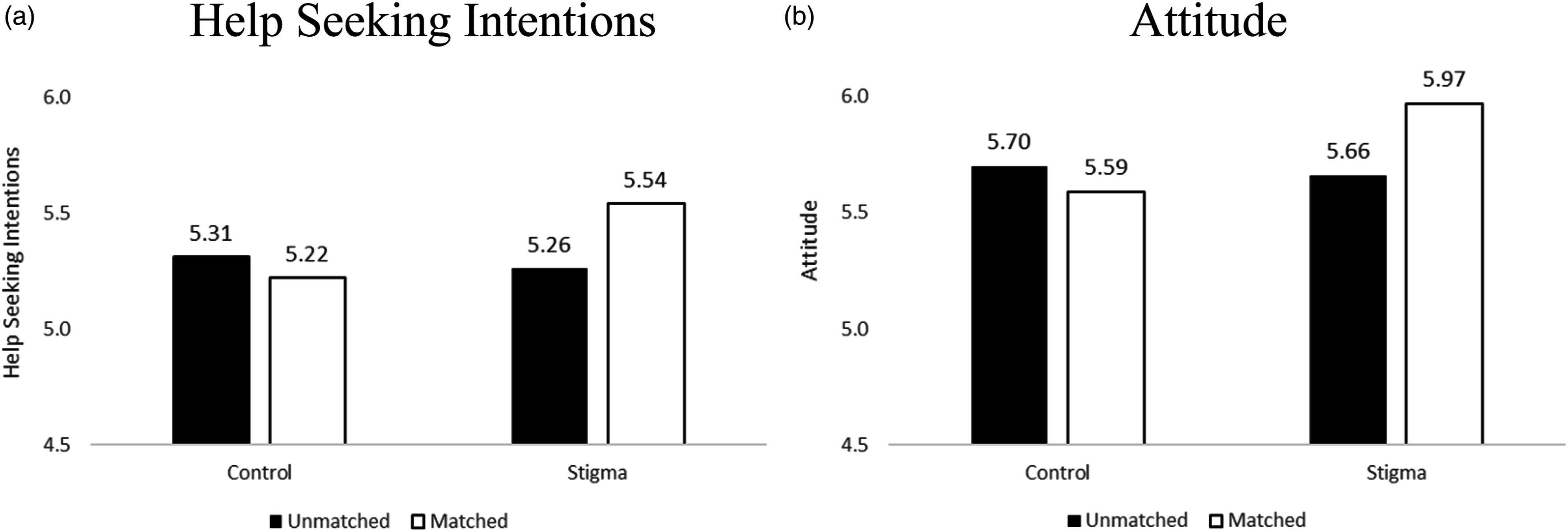

ANCOVA on the help-seeking intentions index revealed a significant stigma × message source interaction F (1, 491) = 4.17, p = 0.04), see Figure 1; the main effects were NS (p’s > 0.13). When the customer and message source were matched, help-seeking intentions were greater when the health condition is stigmatized than when it is not (MStigmatized-Matched = 5.54, MControl-Matched = 5.22; F (1, 491) = 6.34, p = 0.01). When the customer and message source were unmatched, help-seeking was relatively unaffected by stigma (MStigmatized-Unmatched = 5.26, MControl-Unmatched = 5.31; F (1, 491) = 0.14, p = 0.71). These results support H1a. Looked at another way, when the condition was stigmatized (vs. control), help-seeking intentions were significantly greater when the message source was matched (vs. unmatched) (MStigmatized-Matched = 5.54, MStigmatized-Unmatched = 5.26; F (1, 491) = 4.87, p = 0.03); in the control condition, help seeking was relatively unaffected (MControl-Matched = 5.22, MControl-Unmatched = 5.31; F <1). Study 1, help-seeking intentions and attitude as a function of stigma and message source match. Panel a: Help-seeking intentions, Panel b: Attitude.

Attitude Toward the Service

ANCOVA on attitude revealed a significant stigma × message source interaction (F (1, 491) = 5.51, p = 0.02; see Figure 1). There was a marginally significant stigma main effect (F (1, 491) = 3.52, p = 0.06); other effects were not significant (p’s > 0.22). When the customer and message source were matched, attitude was more favorable when the condition was stigmatized than when it was not (MStigmatized-Matched = 5.97, MControl-Matched = 5.59; F (1, 491) = 8.93, p = 0.003). When the customer and message source were unmatched, favorability was relatively unaffected by stigma (MStigmatized-Unmatched = 5.66, MControl-Unmatched = 5.70; F (1, 491) = 0.12, p = 0.73). These results support H1b. Looked at another way, when the health condition was stigmatized, attitude was more favorable when the message source was matched (MStigmatized-Matched = 5.97, MStigmatized-Unmatched = 5.66; F (1, 491) = 6.01, p = 0.02); in the control condition, attitude was relatively unaffected by the message source match (MControl-Matched = 5.59, MControl-Unmatched = 5.70; F <1).
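To make the pattern behind the two interactions concrete, the sketch below computes the simple effects of stigma and their difference (the 2 × 2 interaction contrast) directly from the cell means reported for Study 1. The function name `interaction_contrast` is a hypothetical helper, and the arithmetic treats the reported means at face value; it does not reproduce the ANCOVA itself, which adjusts for covariates and requires participant-level data.

```python
def interaction_contrast(stig_matched, ctrl_matched, stig_unmatched, ctrl_unmatched):
    """Return (stigma effect when matched, stigma effect when unmatched, difference).

    The third value is the 2 x 2 interaction contrast: how much larger the
    stigma-vs-control difference is for a matched than an unmatched source.
    """
    matched_effect = stig_matched - ctrl_matched
    unmatched_effect = stig_unmatched - ctrl_unmatched
    return matched_effect, unmatched_effect, matched_effect - unmatched_effect


# Cell means as reported in the text for Study 1.
help_seeking = interaction_contrast(5.54, 5.22, 5.26, 5.31)
attitude = interaction_contrast(5.97, 5.59, 5.66, 5.70)

for label, result in (("Help-seeking", help_seeking), ("Attitude", attitude)):
    matched, unmatched, contrast = (round(value, 2) for value in result)
    print(f"{label}: matched {matched}, unmatched {unmatched}, contrast {contrast}")
```

For help-seeking intentions, the stigma effect is 0.32 with a matched source versus −0.05 with an unmatched source (contrast of 0.37); for attitude, the corresponding values are 0.38 versus −0.04 (contrast of 0.42). In both cases, the effect of stigma is concentrated in the matched condition, which is the pattern H1 predicts.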

Discussion

Study 1 shows that when stigmatized consumers receive communications that are meant to provide help with a stigmatized attribute (e.g., online health workshop), they respond more favorably to a personalized message that emphasizes the similarity between the consumer’s stigmatizing attribute and the message source. In contrast, when communications are from a non-similar source, consumers are relatively unaffected as a function of stigmatization. That is, Study 1 finds that when the illness is perceived to be stigmatizing, emphasizing homophily based upon the stigmatizing attribute is an effective approach for service inclusion. Although these findings can inform inclusion efforts, Study 1 was conducted in a consumer-to-consumer (i.e., interpersonal) setting. In contrast, relatively little empirical research has examined how AI can help with promoting the inclusion of vulnerable and disadvantaged consumers (Benvenuti et al. 2023; Fisk et al. 2018). With this reality in mind, we strove to enhance the ecological validity of our research and conducted exploratory interviews with therapists in an addiction center.

Exploring the Role of AI for Service Inclusion via Interviews With Addiction Therapists

We share the optimism in the literature on the promise and importance of AI (e.g., Huang and Rust 2021). For example, recent research points to opportunities related to personalizing customer conversations with chatbots by imbuing bots with distinct personalities (Shumanov and Johnson 2021); other research goes further and proposes to match consumer-chatbot personalities (Zogaj et al. 2023). This optimism about AI in the scholarly literature notwithstanding, we wanted to explore the potential of AI in the context of stigmatized consumers from service providers’ perspectives. Thus, we conducted exploratory, semi-structured interviews with four therapists in an addiction center (typically working with clients who are addicted to alcohol and other substances). While Web Appendix C provides more detailed insights, here, we focus on three overarching themes related to the role of AI.

First, although there was agreement about a trend toward automation, the interviewees signaled skepticism and hesitancy about sophisticated technology (e.g., therapy robots) in their profession. For example, one interviewee stated: “I never considered this possibility. (…) I’m afraid of these things [robots], as universal solutions for everyone. I’m sure that things are much more robotic and automated. I think things are heading towards that and that it may become the way in a few years” (I-1). And I-3 concluded: “I look at myself 12 years ago [when I was a patient] in an interview with a robot; not for me. Because of course, I need a person who understands me, a person who looks at me and tells me ‘you can’. It would be weird, weird.”

Second, as we continued probing, the initial aversion became more nuanced and reflective. Specifically, the interviewees began to consider certain settings in which robotic technology might be helpful for stigmatized clients. For example, one participant noted that some clients might prefer technology because it is more comfortable than interacting with a human counselor due to concerns of shame; they noted (I-1): “I understand that having the option for those who prefer not having a person, for example, those who sometimes do not come due to the gender issue; there are issues for women and others for men, then possibly for a certain segment of a certain age and certain gender, that mechanism [technology] can be a good tool.” Another interviewee validated this insight: “Maybe yes, because of the shame. If the person is really in need, wants help, and does not dare to speak, and you know that a machine is going to ask you, although later a person is going to have to see everything you said, maybe yes. I think so” (I-4). Another respondent recognized the improved efficiency (especially in initial stages) for both customer and provider when they explained (I-3): “For example, a call center offering help with drug abuse. That the first contact when you call and a robot attends to you (…) that he [the robot] asks you everything and you won’t have to repeat everything.”

Third, the above insights notwithstanding, the interviewees questioned the extent to which technology can truly provide the empathy and human warmth needed when serving stigmatized consumers; for example, I-2 summarized: “I’m not saying no, because technology has been very favorable in many things. But I think that this human being needs to be loved, listened to, and appreciated. And I think that’s why I’m very human--they need this more than anything because stigmatized people need people to look at them, listen to them, and many times they need a hug because they have been rejected and the rejection is very deep inside them.”

These exploratory insights illustrate an ambiguity about the potential of AI in serving stigmatized consumers; they also indicate a need for empirical consumer research that examines when and how to employ AI in inclusive services. Against this background, we conducted Study 2, which builds on the insights from Study 1, but extends the analytical realm threefold: First, Study 2 examines a different stigmatizing attribute (obesity) (Puhl and Heuer 2009). Second, it tests H1 in the context of AI-based interactions with a service avatar. Third, Study 2 examines the mediating role of anticipated well-being, as discussed next.

Personalized Customer-AI Encounters and the Mediating Role of Anticipated Well-Being

We propose that the concept of well-being is important for inclusive services, especially related to personalized efforts to engage consumers with stigmatizing attributes (e.g., Boenigk et al. 2021; Fisk et al. 2018). We theorize that positive responses to a personalized communication among stigmatized consumers (per H1) might be driven by the well-being consumers anticipate achieving via the focal service (e.g., an online health workshop in Study 1). Although a review of the diverse literature on well-being is beyond the scope of our research (see Keyes and Haidt 2010; Rahman 2021), high levels of well-being are often indicated by positive emotions, and psychological as well as social functioning (Keyes 2007). On a more detailed level, Diener et al. (2009, p. 252) describe well-being as having a sense of meaning, purpose, self-acceptance, and optimism that is linked to being engaged and interested in one’s own life, but also to having supportive and rewarding relationships, being respected by others, and contributing to the well-being of others. The idea that anticipated well-being is the first step toward actual well-being is consistent with theories of human adaptation, which postulate that people’s interactions over time shape their well-being. For example, the “broaden and build theory” (Fredrickson 2001) proposes that the experience of positive emotions broadens people’s thoughts and behaviors and facilitates adaptive responses to their environment, which, in turn, provides greater learning and growth opportunities, ultimately fueling their future well-being.

These general insights notwithstanding, an important question is whether customer-AI interactions can contribute to anticipated well-being. Because this issue has received little empirical attention, Benvenuti et al. (2023, p. 113) underline that better understanding how technologies can affect consumer well-being is “an area of primary importance;” even more important, the authors call for research on how sophisticated technology can bolster the well-being of “vulnerable and disadvantaged groups” of consumers (ibid.). In fact, the corresponding literature (which is growing but relatively nascent and limited in its generalizability, scope, and measurement) remains ambivalent. On the one hand, several researchers continue to question the capability of AI (e.g., chatbots) to provide social support because they are not able to fully and deeply understand their human interlocutors (Scoglio et al. 2019). On the other hand, some findings suggest that AI (e.g., chatbots, virtual assistants, assistive robots) might support well-being for stigmatized consumers (e.g., Dhimolea, Kaplan-Rakowski, and Lin 2022; Ruggiero et al. 2022). To illustrate, advanced chatbots (e.g., in the context of digital psychiatry, Torous et al. 2021) can provide deeper conversations, social interactions, and support in light of their users’ mental health issues and other health-related concerns (van Wezel, Croes, and Antheunis 2021).

Considering the above insights about the promise of AI in promoting consumer well-being, as well as our findings in Study 1, we expect that stigmatized consumers who receive a personalized (i.e., stigma-matched) communication from an AI source will anticipate that engaging with the service provides a platform for support and strengthens their ability to deal with their stigmatized condition. Accordingly, they should perceive the service as contributing to their well-being (Fredrickson 2001). We therefore predict:

H2: The improved (a) help-seeking intentions and (b) attitude among customers with a stigmatizing attribute in response to a similar (vs. non-similar) AI-based message source are mediated by anticipated well-being.

Study 2: Homophily-Based Personalization and AI: Matching Service Avatars to Stigmatizing Consumer Attributes?

The purpose of Study 2, inspired by the notion that AI can enable greater personalization in service (Huang and Rust 2021), is to test whether the positive impact of homophily-based personalization (found in interpersonal settings in Study 1) extends to AI-based interactions. Specifically, Study 2 investigates how a personalized AI-based agent that is matched (vs. unmatched) to a consumer’s stigmatizing attribute influences their help-seeking intentions and attitudes (H1), and whether anticipated well-being mediates their response (H2). Study 2 investigates stigma among women, in the context of being overweight (Azarbad and Gonder-Frederick 2010; Major, Eliezer, and Rieck 2012). Accordingly, this study examines the interaction of an overweight (vs. normal weight) female customer with an AI-powered avatar that is matched to the customer in its appearance (i.e., for an overweight customer, the avatar is also overweight). Notably, examining an overweight service avatar in a gym setting reflects the increasingly prominent marketplace trend of “body-positive” fitness coaches (e.g., Jackson-Gibson 2020; Speller 2022). Moreover, the idea of an overweight (avatar) fitness coach is also aligned with findings on the role of (interpersonal) homophily in the adoption of healthy behaviors. For instance, Centola (2011) found that the adoption of health-promoting behaviors was greatest “across dyadic ties, in particular among obese individuals.” In other words, the most effective social trigger for increasing the willingness of obese individuals to adopt healthy behaviors was the influence of others with similar (weight-related) health characteristics. Study 2 examines whether such an effect would also emerge related to digitally-designed homophily with a virtual service provider (here, an overweight fitness coach).

Design, Participants, and Procedures

Study 2 employs a 2 (stigma: low, high) × 2 (AI message source: matched, unmatched) between-subjects design. Because weight stigma affects women more than men (Azarbad and Gonder-Frederick 2010; Major, Eliezer, and Rieck 2012), we requested a panel of women. We requested 300 female participants from MTurk and received 308 participants (MAge = 41.09, 295 women). 12

We manipulated stigma via the body weight of a target female consumer named Alex. In the high stigma condition, the target is overweight; in the low stigma condition she is normal weight; all other aspects of her appearance are held constant (see Web Appendix D for stimuli). Participants read that Alex is searching online for a gym to join. She finds a gym in her area and visits the gym’s website for more information. The website offers a virtual consultation, in which she reports her height and weight and submits a photo of herself (see Web Appendix D). We manipulated the match or mismatch between Alex and the AI message source via the physical appearance of a service avatar. Specifically, after Alex submits her information via the website, she is greeted by an anthropomorphized avatar. The avatar is Alex’s assigned personalized coach. In the matched condition, when Alex has a high stigma body weight (i.e., is overweight), the avatar is also overweight; and when Alex has a low stigma body weight (i.e., is normal weight), the avatar is also normal weight. In the unmatched condition, the avatar is overweight for the non-stigmatized consumer and normal weight for the stigmatized consumer.

Next, participants indicated judgments about the consumer’s help-seeking intentions (How likely do you think Alex is to … enroll in this gym’s membership program; sign up for emails from this gym; join the mailing list for this gym; visit the gym’s website again; visit this gym frequently; sign up for classes at this gym; incorporate a visit to this gym into her weekly schedule; stick with a fitness program at this gym; go to this gym when she needs help regarding her health and fitness; 7-point, very un-/likely, α = 0.98). We also examine attitude toward the service (negative/positive; bad/good; un-/favorable; dis-/like, 7-point bipolar; order of presentation randomized; α = 0.99).

To measure anticipated well-being, participants indicated the extent to which they felt joining the gym would improve Alex’s well-being: “If Alex were to join this gym, joining would help her feel … that she has a purposeful and meaningful life,” “… that her social relationships are supportive and rewarding,” “… engaged and interested in daily activities,” “…that she actively contributes to the happiness and well-being of others,” “… competent and capable in the activities that are important to her health and fitness,” “… like a good person who lives a good life,” “… optimistic about her future health and fitness status,” “… that people respect her”; (1 = strongly disagree/7 = strongly agree; order of presentation randomized; α = 0.95; Diener et al. 2010; Keyes 2007, 2009). Finally, participants indicated their demographics (e.g., age and gender). 13

Stigma Manipulation Pretest

To pretest our manipulation (i.e., the body weight of the target, Alex), we requested 100 female participants from MTurk and received 105 responses (103 women, MAge = 41.38). Unexpectedly, two non-female participants made it past the screening process and were not included in the analyses. We randomly assigned participants to either the high or low stigma condition and showed them the corresponding picture of Alex. Next, participants indicated the extent to which they perceived Alex to be stigmatized (derived from Harmeling et al. 2021): “People like Alex are stigmatized,” “Alex would be stigmatized,” “People like Alex are often treated differently,” “People usually have their minds made up about people like Alex,” “People like Alex are socially isolated,” “Alex would be discriminated against,” and “Alex would be discriminated against by her employer” (α = 0.95). Results show that the overweight target was perceived as more stigmatized than the normal weight target (MHighStigma = 5.04, MLowStigma = 2.86, F (1, 101) = 114.13, p < 0.001). The overweight female was also perceived as more stigmatized than the scale midpoint (MHighStigma = 5.04; t (51) = 7.94, p < 0.001) and the normal weight female as less stigmatized than the scale midpoint (MLowStigma = 2.86; t (50) = −7.27, p < 0.001). Thus, the manipulation performed as intended.

Results

Help-Seeking Intentions

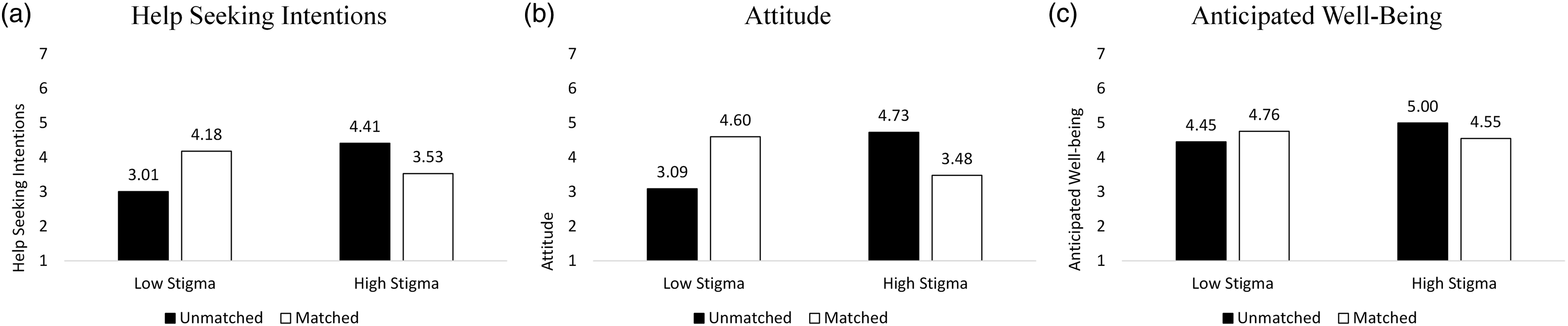

ANOVA on help-seeking intentions shows a significant stigma × AI message source interaction (F (1, 291) = 28.17, p < 0.001; see Figure 2(a)). There was also a marginally significant stigma main effect (F (1, 291) = 3.70, p = 0.06); the AI main effect was NS (p = 0.45). Contrasts show that the unmatched service avatar increased help-seeking intentions when stigma was high (vs. low) (MLowStigma = 3.01 vs. MHighStigma = 4.41; F (1, 291) = 26.57, p < 0.001). The matched avatar increased help-seeking intentions when stigma was low (vs. high) (MLowStigma = 4.18 vs. MHighStigma = 3.53; F (1, 291) = 5.61, p = 0.02). Looked at another way, when stigma was high, help-seeking intentions were significantly greater when the avatar was unmatched (vs. matched) to the consumer’s weight (MUnmatched = 4.41 vs. MMatched = 3.53; F (1, 291) = 10.26, p = 0.001). When stigma was low, help-seeking intentions were greater when the avatar was matched to the consumer’s weight (MUnmatched = 3.01 vs. MMatched = 4.18; F (1, 291) = 18.50, p < 0.001).

Figure 2. Study 2: help-seeking intentions, attitude, and anticipated well-being as a function of stigma and AI message source match. Panel a: Help-seeking intentions, Panel b: Attitude, Panel c: Anticipated well-being.

Attitude Toward the Service

ANOVA on the attitude index shows a stigma × AI message source interaction (F (1, 291) = 50.39, p < 0.001; see Figure 2(b)); other effects were NS (p’s > 0.18). Contrasts show that the unmatched avatar improved attitude when stigma was high (vs. low) (MLowStigma = 3.09 vs. MHighStigma = 4.73; F (1, 291) = 35.91, p < 0.001) and the matched avatar improved attitude when stigma was low (vs. high) (MLowStigma = 4.60 vs. MHighStigma = 3.48; F (1, 291) = 16.46, p < 0.001). Looked at another way, when stigma was high, attitude was more favorable when the service avatar was unmatched (vs. matched) to the consumer’s weight (MUnmatched = 4.73 vs. MMatched = 3.48; F (1, 291) = 20.66, p < 0.001); when stigma was low, attitude was significantly more favorable when the service avatar was matched (vs. unmatched) to the consumer’s weight (MUnmatched = 3.09 vs. MMatched = 4.60; F (1, 291) = 30.45, p < 0.001).

Anticipated Well-Being

ANOVA on the anticipated well-being index shows a significant stigma × AI message source interaction (F (1, 291) = 6.94, p = 0.009; see Figure 2(c)); other effects were NS (p’s > 0.22). Contrasts show that the unmatched avatar improved anticipated well-being when stigma was high (vs. low) (MLowStigma = 4.45 vs. MHighStigma = 5.00; F (1, 291) = 7.54, p = 0.006). For the matched avatar, anticipated well-being was relatively unaffected by stigma (MLowStigma = 4.76 vs. MHighStigma = 4.55; F < 1). Looked at another way, when stigma was high, anticipated well-being was greater when the avatar was unmatched (vs. matched) to the consumer’s weight (MUnmatched = 5.00 vs. MMatched = 4.55; F (1, 291) = 4.87, p = 0.03); when stigma was low, anticipated well-being was relatively unaffected by the (mis)match (MUnmatched = 4.45 vs. MMatched = 4.76; F (1, 291) = 2.32, p = 0.13).
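The crossover pattern across the three outcomes can be summarized with simple arithmetic on the reported cell means. The following is a minimal sketch, not the analysis code used in the study: it only takes differences of the means reported above, and the names `CELL_MEANS`, `match_effect`, and `interaction_contrast` are hypothetical. The differences are descriptive; the significance tests remain the ANOVA contrasts in the text.

```python
# Cell means reported for Study 2, keyed by (stigma, avatar) condition.
CELL_MEANS = {
    "help_seeking": {
        ("low", "unmatched"): 3.01, ("low", "matched"): 4.18,
        ("high", "unmatched"): 4.41, ("high", "matched"): 3.53,
    },
    "attitude": {
        ("low", "unmatched"): 3.09, ("low", "matched"): 4.60,
        ("high", "unmatched"): 4.73, ("high", "matched"): 3.48,
    },
    "anticipated_well_being": {
        ("low", "unmatched"): 4.45, ("low", "matched"): 4.76,
        ("high", "unmatched"): 5.00, ("high", "matched"): 4.55,
    },
}


def match_effect(means, stigma):
    """Matched minus unmatched mean at one stigma level (descriptive only)."""
    return means[(stigma, "matched")] - means[(stigma, "unmatched")]


def interaction_contrast(means):
    """Match effect at high stigma minus match effect at low stigma."""
    return match_effect(means, "high") - match_effect(means, "low")


if __name__ == "__main__":
    for outcome, means in CELL_MEANS.items():
        print(
            outcome,
            round(match_effect(means, "low"), 2),
            round(match_effect(means, "high"), 2),
            round(interaction_contrast(means), 2),
        )
```

For help-seeking intentions, for example, matching raises the mean by 1.17 scale points at low stigma but lowers it by 0.88 points at high stigma, which is the sign reversal that the interaction captures.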

Mediation Analysis

To test whether anticipated well-being mediates the effect of the stigma × AI message source match on help-seeking intentions (H2), we conducted a moderated mediation analysis using 5,000 bootstrapping samples (Hayes 2022, Model 7). In the model, AI was the independent variable (0 = unmatched, 1 = matched), stigma was the moderator (0 = low, 1 = high), anticipated well-being was the mediator, and help-seeking intentions was the dependent variable. Results support overall moderated mediation (a × b = −0.7493; 95% CI: −1.3222 to −0.1955). In the high stigma condition, the indirect effect of anticipated well-being was supported when the service avatar was unmatched (a × b = −0.4454; 95% CI: −0.8461 to −0.0589). In the low stigma condition, mediation did not emerge based on service avatar match (a × b = 0.3039; 95% CI: −0.0921 to 0.7242). Looked at another way (i.e., with stigma as the independent variable [0 = low, 1 = high] and AI as the moderator [0 = unmatched, 1 = matched]), results also support overall moderated mediation (a × b = −0.7428; 95% CI: −1.3152 to −0.1767). In the unmatched condition, the indirect effect of anticipated well-being was supported when stigma was high (a × b = 0.5443; 95% CI: 0.1449 to 0.9346). In the matched condition, mediation did not emerge based on stigma (a × b = −0.1985; 95% CI: −0.6164 to 0.2151).
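Because the moderator in this type of model is dichotomous (coded 0/1), the index of moderated mediation equals the difference between the two conditional indirect effects. The sketch below checks this against the estimates reported above. It is an arithmetic consistency check under that coding assumption rather than a re-estimation of the model, and the function names are hypothetical.

```python
def index_of_moderated_mediation(indirect_at_0, indirect_at_1):
    """Index for a 0/1 moderator: conditional indirect effect at 1 minus at 0."""
    return indirect_at_1 - indirect_at_0


def ci_excludes_zero(lower, upper):
    """A bootstrap CI supports an effect when it does not contain zero."""
    return lower > 0 or upper < 0


# First specification: moderator = stigma (0 = low, 1 = high).
by_stigma = index_of_moderated_mediation(indirect_at_0=0.3039, indirect_at_1=-0.4454)

# Second specification: moderator = AI source (0 = unmatched, 1 = matched).
by_match = index_of_moderated_mediation(indirect_at_0=0.5443, indirect_at_1=-0.1985)

if __name__ == "__main__":
    print(round(by_stigma, 4), round(by_match, 4))
    # Index CI (first specification) vs. low-stigma conditional indirect effect CI.
    print(ci_excludes_zero(-1.3222, -0.1955), ci_excludes_zero(-0.0921, 0.7242))
```

Both differences reproduce the reported indices (−0.7493 and −0.7428), so the two specifications are internally consistent with the conditional indirect effects listed in the text.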

Discussion

Study 2 shows that (i) consumers with higher levels of weight-related stigma respond more positively when the avatar does not match their stigmatizing attribute, and (ii) their responses to the AI are driven by anticipated well-being. In parallel, when stigma is low, consumers are more positive toward the service offering when the avatar is matched. These results support neither H1 nor H2, thereby suggesting that personalization via a homophily-inspired digital agent is not an effective tool for inclusiveness in this setting. Study 2 extends our understanding of homophily-based matching of stigmatizing cues. Although prior work suggests that sharing cues of stigmatization with other consumers can be beneficial (Harmeling et al. 2021), this study finds that sharing stigmatized cues with an AI-based avatar has the potential to backfire. Unlike cues shared with a fellow human, stigmatized cues shared with an AI-based service provider may not have the same relevance, potentially because consumers assume that AI cannot truly relate to their (human) experience of living with a stigmatizing attribute. Thus, when service firms outwardly recognize a customer’s stigmatizing characteristic through service avatars that match the customer’s stigmatized attribute (which may not have been highly salient to the consumer at that stage of the service process), they may offend these consumers. Although more research is needed, marketers may not want to rely on matching stigmatizing attributes under all circumstances, particularly when they employ AI-powered providers (e.g., avatars).

The findings from Study 2 are intriguing as they suggest that even well-established social principles (homophily) might not necessarily transfer to customer-AI interactions. This raises the question of how else AI might be personalized to promote service inclusiveness. With our next hypothesis (H3) and Study 3, we therefore deepen our exploration and test the idea of customizing types of AI for inclusive services (as called for by, for example, Fisk et al. 2018).

Stigma and Personalized Message Content: Matching AI Types in Light of Perceived Controllability of Stigma and Customer Involvement

Technological evolution (e.g., machine learning) provides novel opportunities for AI in service (Huang and Rust 2021). For example, chatbots and virtual assistants are not only “increasingly common in primary care” (Miles, West, and Nadarzynski 2021, p. 1), but “the successful integration of AI into healthcare could [also] dramatically improve quality of care;” yet experts emphasize that this “will require careful navigation to ensure the successful implementation of this new technology” (Lovejoy 2019, p. 3). Some studies address this implementation challenge. For example, AI can play a meaningful role at the frontline to reduce access barriers that stigmatized consumers are likely to perceive. Artificial intelligence helps overcome some of the stigma surrounding certain health conditions, because consumers may perceive AI/bots (vs. humans) to be less judgmental (Bartneck et al. 2010; Holthöwer and van Doorn 2022). One successful illustration of this idea is chatbots that provided online cognitive behavioral therapy and decreased depression and anxiety in college students over a 2-week period (Fitzpatrick, Darcy, and Vierhile 2017). Despite such encouraging findings, more research is needed on how to use AI in providing healthcare to populations that might otherwise not be able or willing to access these services (Lovejoy 2019; Miles, West, and Nadarzynski 2021). Therefore, we explore consumer preferences for distinct AI types (thinking AI/feeling AI) as a function of the perceived controllability of their stigma (per H3 and Study 3).

Personalizing Message Content via AI Types: Thinking AI and Feeling AI

In their seminal work on AI in services, Huang and Rust (2018, 2021) distinguish three types of AI: mechanical intelligence, thinking intelligence, and feeling intelligence. Although mechanical AI performs important repetitive tasks that allow consistent service delivery, thinking AI and feeling AI are more relevant for our research. Thinking AI makes autonomous decisions in a rational manner, and it learns and adapts through data mining, deep learning, and predictive analytics. As such, thinking AI can be a powerful platform to personalize frontline interactions and to recommend service solutions in light of customer profiles; it also helps with customizing marketing communications on an individual basis (Huang and Rust 2021). Feeling AI is designed to be empathetic and to personalize service instantaneously (i.e., in real-time); it not only recognizes human emotions, but it can also imitate and respond with simulated emotions (e.g., based on identifying a customer’s sentiment and emotional tone, including states of sadness, happiness, or excitement). In short, feeling AI can increasingly understand and respond to customers on a social, emotional, and relational level. In many service contexts (e.g., healthcare, personal services, education), such empathetic interactions with customers are critical throughout the service journey (Huang and Rust 2021). Yet, despite the great potential that both thinking AI and feeling AI offer, service organizations need to understand how to employ them, especially when they aim to use AI to interact with stigmatized customers. One managerial guidepost is to consider the customer-perceived controllability of the stigma, as we discuss next.

The Moderating Role of Stigma Controllability

People’s beliefs about how well they can control the stigma-causing attribute can make them more or less sensitive to the value they think others will assign to them because of their stigma (Crocker and Major 1989). When people lack control over a stigma, many situations can make them feel powerless, living a life where they rarely present their true self in its entirety (Newheiser and Barreto 2014). These conditions often motivate greater willingness to engage in situations that provide a temporary sense of control in hopes of presenting a more complete self, thereby providing social support (Landau, Kay, and Whitson 2015).

These insights are consistent with coping theory, which suggests that different situations require different coping methods. 14 Lazarus and Folkman (1984) proposed that a “goodness of fit” between a person’s appraisal of a situation and their selected coping strategy is crucial. In addition, their transactional model of stress distinguishes between problem-focused and emotion-focused coping. The authors theorize that an individual’s appraisal of a problem as (un-)controllable influences which of these coping strategies is a better fit. Specifically, a problem-focused coping approach (i.e., strategies focused on resolving the problem itself) is a better fit when the problem is perceived to be controllable (Lazarus and Folkman 1984). In contrast, an emotion-focused coping approach (i.e., strategies meant to address emotions evoked by the problem) is a more effective fit in uncontrollable situations (Siegel, Schrimshaw, and Pretter 2005).

The Moderating Role of Customer Involvement

We also consider the role of customer involvement related to a stigmatizing condition. A stigmatizing attribute (e.g., health condition) should be more personally relevant (i.e., a customer should be more involved with the health condition) when consumers perceive it to be linked to their goals and values; in addition, involvement results from past experiences that are stored in long-term memory and activated in light of the personal relevance of a focal service experience (Celsi and Olson 1988).

Drawing on prior research (e.g., Richins and Bloch 1986; Zaichkowsky 1985), we examine involvement as the extent to which a health condition is of personal relevance to a consumer, either because they have been diagnosed themselves or because a close family member or friend has been diagnosed with the illness. We expect that (low/high) levels of involvement moderate stigmatized consumers’ responses such that effects related to the interplay between AI types and controllability emerge under high (vs. low) involvement. Our prediction draws on research regarding the importance of involvement for message processing and effectiveness: recipients who are more (vs. less) involved are more likely to be influenced by the content of the message because they are more attentive, whereas consumers with low involvement are not motivated to process many persuasive cues of the message (Petty and Cacioppo 1981).

Synthesizing these conceptual insights, we expect a three-way interaction such that consumers will report higher help-seeking intentions and more positive attitudes in response to a thinking AI when they perceive their stigmatizing attribute as more controllable; in contrast, we theorize that they will report higher help-seeking intentions and more positive attitudes in response to a feeling AI when they perceive their stigmatizing attribute as less controllable; we expect these effects to emerge for consumers who report high involvement (i.e., personal relevance), but to be mitigated when consumers report low involvement; formally:

H3: When a consumer has high involvement with a controllable stigmatizing condition, they are more likely to (a) seek help and (b) report a more positive attitude toward a thinking (vs. feeling) service AI; when the condition is not controllable, consumers are more likely to (a) seek help and (b) report a more positive attitude toward a feeling (vs. thinking) AI. Under a low level of involvement, consumers are relatively unaffected.

Study 3: The Interplay Between AI Type, Stigma Controllability, and Involvement

This study tests H3 and the mediating role of anticipated well-being alongside alternative mediating variables (explained below). Study 3 is set in the context of Type 2 diabetes. More than 37 million Americans have diabetes, the majority of whom (approx. 90–95%) are diagnosed with Type 2 diabetes (CDC 2021). Projections suggest that the number of individuals under the age of 20 receiving a Type 2 diabetes diagnosis will rise by more than 60% by 2060 (Tonnies et al. 2023). A variety of factors (e.g., genetics, lifestyle) may cause this condition (CDC 2022b), allowing us to manipulate the (un)controllability associated with the disease accordingly.

Design, Participants, and Procedures

This study employs a 2 (controllability: uncontrollable, controllable) × 2 (AI type: thinking, feeling) × 2 (involvement: low, high) between-subjects design. We requested 400 and received 401 online panel participants 15 (MAge = 42.48, 201 women). Participants read a scenario in which they receive a Type 2 diabetes diagnosis. Using actual content from the CDC, we manipulated the controllability of this disease by asking participants to read a brochure with an article describing either the non-controllable (e.g., genetic) or controllable (e.g., lifestyle) factors contributing to Type 2 diabetes, and to summarize the main points of the brochure. Participants were then informed about an AI-based program, led by a humanoid robot, to help patients learn more about how to manage their condition. We manipulated thinking and feeling AI by adapting language from Huang and Rust (2021). See Web Appendix E for stimuli. We measured involvement by asking participants whether they or someone close to them has been diagnosed with Type 2 diabetes (“Have you ever been diagnosed with Type 2 diabetes?” “Do you have someone close to you who has been diagnosed with Type 2 diabetes?” yes/no). After reading the materials, participants indicated their help-seeking intentions (I would like to … enroll in this program; continue to receive emails from this program; join the mailing list for this program; request to receive the newsletter from this program; consider products recommended by this program; read more about this program; 1 = strongly disagree/7 = strongly agree, α = 0.96). 16 We also captured attitude toward the program (negative/positive; bad/good; un-/favorable; dis-/like; un-/interesting; 7-point bipolar scale; α = 0.97). We measured anticipated well-being with the same index as in Study 2 (e.g., would help me feel: “… that I lead a purposeful and meaningful life,” “… that I am competent and capable in the activities that are important to my health,” α = 0.96; Diener et al. 2010). 17 Participants also provided their demographics (e.g., age and gender).

Pretest of Controllability Manipulation

We randomly assigned participants to read either the controllable or the non-controllable condition (n = 96, 52 women, MAge = 20.28). Then, they indicated whether they perceived getting Type 2 diabetes to be controllable or not (e.g., it is under a person’s control; a person has no control over the cause of this illness (r); Mantler, Schellenberg, and Page 2003; α = 0.83). Perceived controllability was higher in the controllable condition than in the non-controllable condition (MNoControl = 3.60 vs. MControl = 4.60; F (1, 94) = 15.67, p < 0.001). See Web Appendix F for details. The manipulation performed as intended.

Pretest of AI Type Manipulation

We randomly assigned participants to read either the thinking AI or feeling AI condition (n = 67, 14 women, MAge = 20.52), and to indicate the extent to which the AI was more analytical or emotional (“…based on analytical intelligence to identify consumers’ needs and preferences/…based on emotional intelligence to relate to consumers’ needs and preferences”; 7-point bipolar; adapted from Huang and Rust 2021; α = 0.81). Ratings differed significantly between the two conditions (MThinking = 2.28 vs. MFeeling = 3.89; F (1, 65) = 26.73, p < 0.001). See Web Appendix E for details. The manipulation performed as intended.

Results

Help-Seeking Intentions

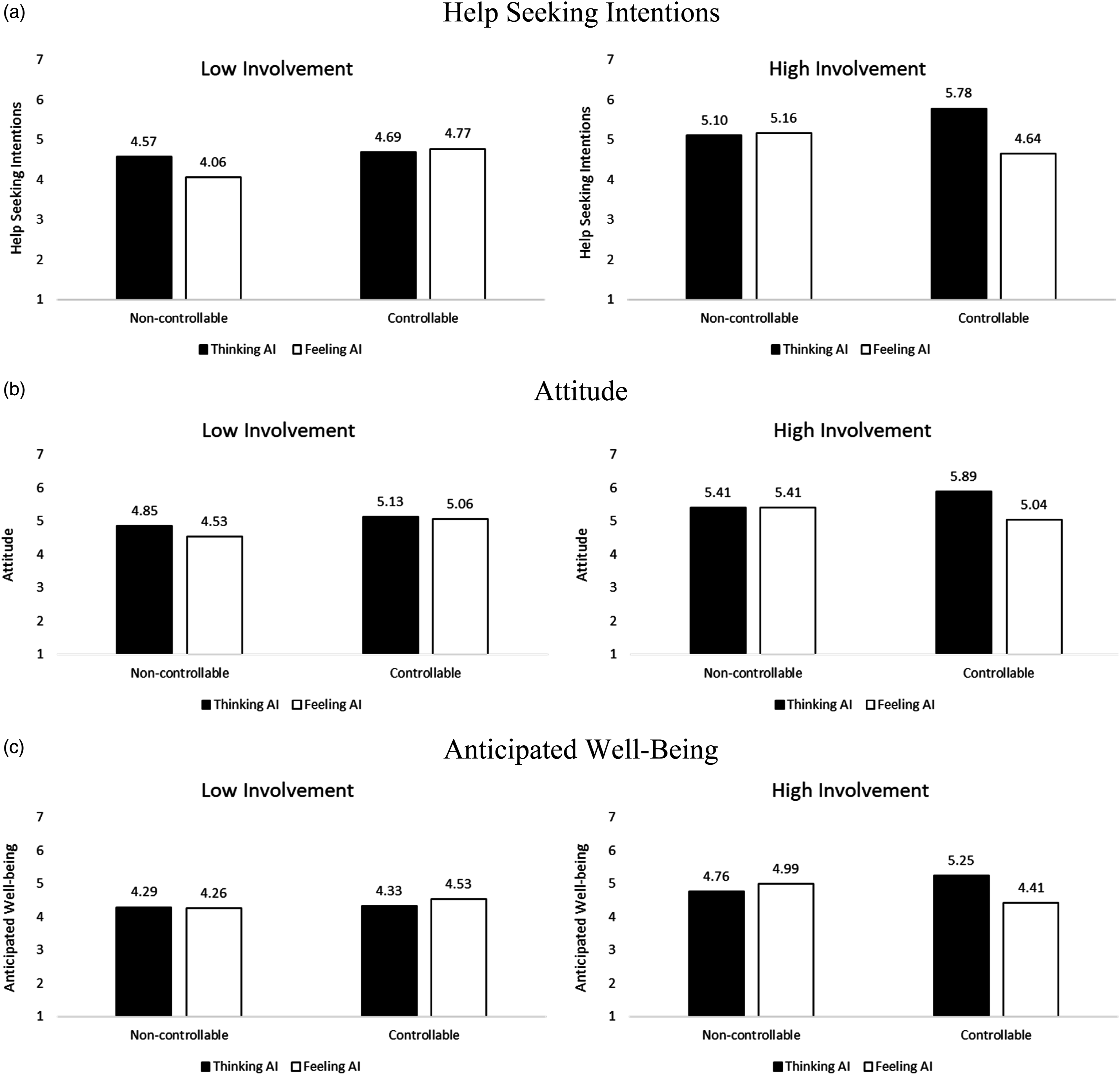

We conducted a regression as a function of AI type, controllability, involvement, and their higher-order interaction (Hayes 2022, Model 3). In the model, AI type was the independent variable (0 = thinking, 1 = feeling), controllability was the first moderator (0 = non-controllable, 1 = controllable), involvement was the second moderator (0 = low, 1 = high), and help-seeking intentions was the dependent variable. The regression resulted in a significant 3-way interaction of AI type, controllability, and involvement (b = −1.78, t (393) = −2.85, p = 0.005; see Figure 3(a)). The model also revealed a marginally significant main effect of involvement (b = 0.53, t (393) = 1.75, p = 0.08); the other effects were NS. To explore the significant 3-way interaction, we examined effects by level of involvement. For participants with high involvement with Type 2 diabetes, there was a significant interaction between AI type and controllability (b = −1.19, t (393) = −2.86, p = 0.004). A significant difference in help-seeking intentions emerged when comparing thinking AI and feeling AI for the controllable condition (MThinking = 5.78 vs. MFeeling = 4.64, b = −1.13, t (393) = −3.94, p < 0.001), but not the non-controllable condition (MThinking = 5.10 vs. MFeeling = 5.16, b = 0.06, t (393) = 0.20, p = 0.84), in partial support of H3a. For participants with low involvement, the interaction between AI type and controllability was NS (b = 0.58, t (393) = 1.26, p = 0.21). There was no significant difference in help-seeking intentions between thinking and feeling AI for the non-controllable (MThinking = 4.57 vs. MFeeling = 4.06, b = −0.50, t (393) = −1.62, p = 0.11) and controllable (MThinking = 4.69 vs. MFeeling = 4.77, b = 0.08, t (393) = 0.24, p = 0.81) conditions.

Figure 3. Study 3: help-seeking intentions, attitude, and anticipated well-being as a function of AI type, stigma controllability, and involvement. Panel a: Help-seeking intentions, Panel b: Attitude, Panel c: Anticipated well-being.

Attitude Toward the Service

We conducted the same analysis using attitude as the outcome. 18 The regression resulted in a marginally significant 3-way interaction of AI type, controllability, and involvement (b = −1.10, t (393) = −1.71, p = 0.09). The involvement main effect was marginally significant (b = 0.56, t (393) = 1.77, p = 0.08); the other effects in the model were NS. See Figure 3(b). To explore the marginally significant 3-way interaction, we examined effects by level of involvement. For participants with high involvement with Type 2 diabetes, there was an interaction between AI type and controllability (b = −0.85, t (393) = −1.97, p = 0.05). There was a significant difference in attitude when comparing thinking AI and feeling AI for the controllable condition (MThinking = 5.89 vs. MFeeling = 5.04, b = −0.85, t (393) = −2.85, p = 0.005), but not the non-controllable condition (MThinking = 5.41 vs. MFeeling = 5.41, b = 0.001, t (393) = 0.003, p = 1). For participants with low involvement, the interaction between AI type and controllability was non-significant (b = 0.25, t (393) = 0.53, p = 0.60). There was no significant difference in attitude between thinking AI and feeling AI for either the non-controllable (MThinking = 4.85 vs. MFeeling = 4.53, b = −0.32, t (393) = −0.99, p = 0.32) or the controllable (MThinking = 5.13 vs. MFeeling = 5.06, b = −0.07, t (393) = −0.19, p = 0.85) conditions. These results provide partial support for H3b.

Anticipated Well-being

We conducted the same analysis using anticipated well-being as the outcome. The regression resulted in a significant 3-way interaction of AI type, controllability, and involvement (b = −1.30, t (393) = −2.27, p = 0.02; see Figure 3(c)); there was also a marginal involvement main effect (b = 0.46, t (393) = 1.66, p = 0.10); the other effects were NS. To explore the significant 3-way interaction, we examined effects by level of involvement. For participants with high involvement with Type 2 diabetes, there was a significant interaction between AI type and controllability (b = −1.07, t (393) = −2.80, p = 0.005). There was a significant difference in anticipated well-being when comparing thinking AI and feeling AI in the controllable condition (MThinking = 5.25 vs. MFeeling = 4.41, b = −0.83, t (393) = −3.16, p = 0.002), but not the non-controllable condition (MThinking = 4.76 vs. MFeeling = 4.99, b = 0.23, t (393) = 0.85, p = 0.40). For participants with low involvement with Type 2 diabetes, there was a non-significant interaction between AI type and controllability (b = 0.23, t (393) = 0.54, p = 0.59). There was no significant difference in anticipated well-being between thinking AI and feeling AI for either the non-controllable (MThinking = 4.29 vs. MFeeling = 4.26, b = −0.03, t (393) = −0.12, p = 0.91) or the controllable (MThinking = 4.33 vs. MFeeling = 4.53, b = 0.20, t (393) = 0.63, p = 0.53) condition.
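With dummy-coded predictors, the interaction terms in this type of regression can be read as differences between conditional effects: the AI type × controllability interaction at a given involvement level is the effect of AI type in the controllable condition minus its effect in the non-controllable condition, and the three-way term is the difference between those two-way interactions across involvement levels. The minimal sketch below uses only the coefficients reported for the three outcomes, with hypothetical names, to check that the reported values fit this decomposition up to rounding. It does not re-estimate any model.

```python
# Reported conditional effects of AI type (feeling vs. thinking), keyed by
# (involvement, controllability), together with the reported 3-way coefficient.
REPORTED = {
    "help_seeking": {
        "effects": {
            ("high", "controllable"): -1.13, ("high", "non_controllable"): 0.06,
            ("low", "controllable"): 0.08, ("low", "non_controllable"): -0.50,
        },
        "three_way": -1.78,
    },
    "attitude": {
        "effects": {
            ("high", "controllable"): -0.85, ("high", "non_controllable"): 0.001,
            ("low", "controllable"): -0.07, ("low", "non_controllable"): -0.32,
        },
        "three_way": -1.10,
    },
    "anticipated_well_being": {
        "effects": {
            ("high", "controllable"): -0.83, ("high", "non_controllable"): 0.23,
            ("low", "controllable"): 0.20, ("low", "non_controllable"): -0.03,
        },
        "three_way": -1.30,
    },
}


def two_way(effects, involvement):
    """AI type x controllability interaction at one involvement level."""
    return effects[(involvement, "controllable")] - effects[(involvement, "non_controllable")]


def three_way(effects):
    """Difference between the two-way interactions at high and low involvement."""
    return two_way(effects, "high") - two_way(effects, "low")


if __name__ == "__main__":
    for outcome, values in REPORTED.items():
        implied = three_way(values["effects"])
        print(f"{outcome}: implied {implied:.2f}, reported {values['three_way']:.2f}")
```

The implied three-way terms (−1.77, −1.10, and −1.29) agree with the reported coefficients (−1.78, −1.10, and −1.30) within rounding of the two-decimal inputs.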

Moderated Mediation Analysis: Anticipated Well-Being

We tested a moderated mediation model to examine our mediator (anticipated well-being) alongside the exploratory ones (see Web Appendix H for details on these mediators). We conducted parallel mediation analysis using 5,000 bootstrapping samples (Hayes 2022, Model 12). In the model, AI type was the independent variable (0 = thinking, 1 = feeling), controllability was the first moderator (0 = non-controllable, 1 = controllable), involvement was the second moderator (0 = no, 1 = yes), anticipated well-being was the focal mediator, program effectiveness, social support, information exchange, and social fear were the additional mediators, and help-seeking intentions was the outcome variable. The index of moderated mediation supports the mediation for anticipated well-being (a × b = −0.2356; 95% CI = −0.5512 to −0.0133), as well as an exploratory additional mediator, program effectiveness (a × b = −0.7811; 95% CI = −1.5263 to −0.0593); the other exploratory mediators are NS (see Web Appendix H). The mediation is supported in the high involvement and controllable condition (anticipated well-being: a × b = −0.1513; 95% CI = −0.3177 to −0.0317; program effectiveness: a × b = −0.4573; 95% CI = −0.7765 to −0.1574), but not in the high involvement and non-controllable condition (anticipated well-being: a × b = 0.0424; 95% CI = −0.0452 to 0.1576; program effectiveness: a × b = 0.0515; 95% CI = −0.2570 to 0.3616). Mediation did not emerge under low involvement, whether the condition was controllable (anticipated well-being: a × b = 0.0359; 95% CI = −0.0841 to 0.1842; program effectiveness: a × b = 0.0095; 95% CI = −0.4017 to 0.4289) or non-controllable (anticipated well-being: a × b = −0.0060; 95% CI = −0.1308 to 0.1158; program effectiveness: a × b = −0.2627; 95% CI = −0.6819 to 0.1426).
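With two dichotomous moderators, the overall index reported here can be read as a difference of differences: the controllability contrast in the conditional indirect effects at high involvement minus the same contrast at low involvement. The sketch below applies this reading to the conditional indirect effects reported above. It assumes 0/1 coding of both moderators, uses hypothetical names, and is an arithmetic check rather than a re-run of the bootstrap analysis.

```python
# Reported conditional indirect effects (a x b) on help-seeking intentions,
# keyed by (involvement, controllability).
INDIRECT = {
    "anticipated_well_being": {
        ("high", "controllable"): -0.1513, ("high", "non_controllable"): 0.0424,
        ("low", "controllable"): 0.0359, ("low", "non_controllable"): -0.0060,
    },
    "program_effectiveness": {
        ("high", "controllable"): -0.4573, ("high", "non_controllable"): 0.0515,
        ("low", "controllable"): 0.0095, ("low", "non_controllable"): -0.2627,
    },
}


def controllability_contrast(indirect, involvement):
    """Indirect effect when controllable minus when non-controllable."""
    return indirect[(involvement, "controllable")] - indirect[(involvement, "non_controllable")]


def overall_index(indirect):
    """Controllability contrast at high involvement minus the one at low involvement."""
    return controllability_contrast(indirect, "high") - controllability_contrast(indirect, "low")


if __name__ == "__main__":
    for mediator, indirect in INDIRECT.items():
        print(mediator, round(overall_index(indirect), 4))
```

The computed values are −0.2356 for anticipated well-being and −0.7810 for program effectiveness, which match the reported indices (−0.2356 and −0.7811) up to rounding.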

These results show that anticipated well-being and program effectiveness mediate and help explain the help-seeking intentions among people who personally relate to Type 2 diabetes and consider it controllable. Mediation was not supported for the other exploratory variables (index of moderated mediation – social support: a × b = −0.0087; 95% CI = −0.1663 to 0.1509; information exchange: a × b = −0.0929; 95% CI = −0.3691 to 0.0731; social fear: a × b = −0.0087; 95% CI = −0.0845 to 0.0644). We conducted the same analyses using attitude and word-of-mouth as the dependent variables, and these analyses show consistent results (as Web Appendix H details).

Discussion

Study 3 explored the role of thinking AI and feeling AI in the context of a Type 2 diabetes program. We tested how responses to a personalized communication varied when Type 2 diabetes was perceived as more or less controllable (e.g., based on lifestyle vs. genetics). The results show that among individuals who have a high level of involvement with Type 2 diabetes, when the illness is controllable, help-seeking intentions are greater and attitudes are more positive with thinking AI (vs. feeling AI). Moreover, for highly involved individuals, when the illness is not controllable, help-seeking intentions are unaffected by the type of AI. This latter pattern supports the view that people might sometimes use both problem- and emotion-focused coping to combat stressful events (Lazarus and Folkman 1984).

General Discussion

Stigmatization, because of its context-driven and diverse nature, can affect a large percentage of the population in their personal and professional lives, as well as in their marketplace experiences (Summers et al. 2018). On a more encouraging note, according to a study by McKinsey (Brown, Burns, and Harris 2022), “the American consumer is undeniably becoming more inclusive,” and companies are also increasingly embracing inclusion efforts. Yet many companies struggle with finding the best ways to implement inclusion (Marcus 2020). Against this background, our research offers insights with theoretical, managerial, and policy implications, especially related to how technologies can support service inclusiveness (e.g., Benvenuti et al. 2023; Fisk et al. 2018; Henkens et al. 2021).

Findings and Theoretical Implications

Personalization (via Homophily) and Service Inclusiveness

Study 1 suggests that interpersonal homophily can be a platform for personalized communication with stigmatized consumers. Our results are consistent with prior research that showed how homophily can facilitate customer-employee interactions (e.g., Arndt et al. 2021; Streukens and Andreassen 2013). In parallel, our findings are distinct and important because homophily can cause negative consequences over time such that it reduces diversity in communities and social networks (see Ertug et al. 2022). Contrasting these concerns, our work helps inform companies on how they can leverage homophily to increase diversity and inclusion. However, more research is needed on the conditions under which homophily has such desired effects (as further discussed below).

Grounded in the literature on personalization (Fan and Poole 2006), we implement our homophily-based approach via the core question of who personalizes what for whom. Relating to research on customer journeys (Lemon and Verhoef 2016), we study homophily during the pre-purchase stage. Our focus on this initial stage through the unique lens of consumers with a history of being stigmatized is fully aligned with the notion that “it is important to consider how past experiences” influence the consumer’s current service experience (Lemon and Verhoef 2016, p. 78). Our findings inform service research (and practice) on how personalizing the pre-purchase stage can benefit stigmatized consumers and firms. Extending our work, service scholars can go beyond homophily, draw on alternative conceptualizations of personalization (see Chandra et al. 2022), and link these with service paradigms (e.g., study how the 7Ps can be tailored to inclusiveness across the entire customer journey; Lemon and Verhoef 2016).

Personalization and the Potential of AI

Personalized service is fueled by data and corresponding AI algorithms (Huang and Rust 2018). Because increasingly sophisticated technologies will allow firms to tailor inclusiveness efforts on an individual basis, we explored how AI might help facilitate inclusion-inspired personalization. Our results, which are admittedly exploratory in nature, suggest that AI is not a panacea, because a homophily-inspired avatar backfired (Study 2). We speculate that the (obesity-)matched avatar backfired because consumers might be offended by the firm highlighting their stigmatizing attribute. In addition, the beneficial effect of homophily might be curbed because consumers believe that an AI cannot truly understand their stigma-related experiences. This latter notion is consistent with insights from one of our interviewees who questioned the extent to which technology can provide the empathy and human warmth needed when serving stigmatized consumers.

However, Study 3 reveals some encouraging insights related to personalization via AI types (Huang and Rust 2021): when consumers perceive their stigmatized condition to be controllable (vs. not), they prefer thinking AI (vs. feeling AI), as long as the stigmatizing attribute (e.g., health condition) elicits high involvement. These insights inform research on matching consumers with chatbot personalities (Zogaj et al. 2023). For example, Shumanov and Johnson (2021) show that “consumer personality can be predicted during contextual interactions, and that chatbots can be manipulated to ‘assume a personality’ using response language,” which improves consumer engagement with chatbots. Therefore, matching types of AI with consumers as a function of their stigmatized attribute (e.g., based on perceived controllability and concealability, or based on gender, race, language/accent, or age; Zogaj et al. 2023) provides additional opportunities for AI-driven service inclusiveness.

Finally, Studies 2 and 3 show the mediating role of anticipated well-being in consumer-AI-interactions. This finding responds to calls for research on the link between sophisticated technologies and consumer well-being, especially the well-being of vulnerable consumers (e.g., Benvenuti et al. 2023). Our results suggest that artificial, digital, and robotic service providers are able (at least to some extent) to foster human well-being, which is encouraging in light of staff shortages in many healthcare and medical service settings (e.g., Johnson 2022).

Managerial and Policy Contributions

Implementing Personalized Service Inclusiveness

Our results point to various managerial implications. First, managers need to understand how living with a stigmatized attribute influences how customers wish to engage with organizations. Relatedly, in designing their inclusion initiatives, firms should measure perceived stigma, identify markers of stigmatization (e.g., diseases) in their customer portfolio, segment their audiences, and then personalize their inclusion initiatives accordingly. We have explored strategies via personalized message sources (Studies 1 and 2) and message content (Study 3), but other aspects are worth exploring (e.g., the volume, frequency, or timing of inclusion strategies). Second, scholars have underscored the potential for AI to personalize and relationalize service (Huang and Rust 2021). Artificial agents (e.g., chatbots, avatars) will soon be able to adjust their appearance and personality in real time in light of the individuals they serve. We provide managers with empirical insights into how to use thinking AI to personalize service for stigmatized consumers (Study 3). But, equally important, our findings caution managers not to presume that foundational principles of interpersonal relations (i.e., homophily) always transfer to human-technology interactions. Third, we identified anticipated well-being as a key driver of positive consumer responses, which allows companies to emphasize corresponding benefits explicitly in their market communications. Fourth, leveraging the above segmentation and measurement efforts, firms can develop and test programs that are designed to improve their customers’ well-being even further (e.g., by reducing perceived stigmatization), benefiting both parties (consumers and firms). Relatedly, on a cautionary note, organizations should carefully monitor any potential stigmatization among their customers. It seems likely that even slight perceptions of being stigmatized by other customers (e.g., online bullying via social media) or by employees (e.g., healthcare providers) can undermine customer well-being.

Policy Relevance of Service Inclusiveness

Stigmatization and discrimination affect the health and well-being of populations around the world. Therefore, several of the United Nations Sustainable Development Goals (SDGs) relate directly to this harmful phenomenon (SDG 3 “Good Health and Well-Being,” SDG 5 “Gender Equality,” SDG 10 “Reduced Inequalities”). Although, to date, service scholars may not consider the SDGs to be linked to their work, we propose that service research can help inform progress toward the SDGs with (more) work in the realm of TSR, which is aligned with ideals of “diversity, equity, and inclusion” (Anderson et al. 2013). We hope our work helps inspire service scholars to study topics related to the SDGs.

Limitations and Further Research

Our work has limitations (e.g., scenario-based studies), which suggest avenues for future research. First, while we focus on social stigma, one of the therapists we interviewed noted that self-stigmatization can be an equally serious challenge: “The biggest challenge is that a person who reaches a state of physical, mental, emotional deterioration, and with his low self-esteem to the point that he is with self-stigma, that is, he himself incorporated and believed in those things and incorporated them. The biggest challenge is that, to dismantle all that system of false beliefs. (…) it becomes the greatest therapeutic challenge” (sic.) (I-1). Therefore, we need to examine how organizations can help reduce consumer self-stigmatization. Second, more work is needed on how AI can help with service inclusion. One of our interviewees raised an interesting question related to physical touch of therapeutic robots when they emphasized that clients often “need a hug” (I-2). It will be fascinating to study whether service AI/robots can fulfill such needs. Third, future research should go beyond our focus on message source and message content. One way forward for such research might be the “Transformative Value Creation via Service Communications” framework that guides companies on how to use the 7Ps to promote well-being (e.g., including communications by sales/frontline staff, advertising, social media, website) (Tsiotsou and Diehl 2022). Fourth, companies often incentivize customer acquisition efforts. Marketing research should examine whether and which incentives can be effective for inclusion of stigmatized consumers. For example, providing members with social status (e.g., badges) is a common incentive in online communities. Such status-granting incentives might be particularly effective for stigmatized individuals because stigma often prevents a person from gaining elevated status in other aspects of life. Fifth, in addition to the mediating role of anticipated well-being, our exploratory analyses (Study 3) found consumer-perceived effectiveness of the service to be an additional mediator; we expect that other mediators (and moderators) help inform service inclusion. Finally, we recognize that our studies used online (and student) participants in scenario-based studies that examine healthcare and well-being settings. Future research can extend our work via field studies in other settings in which service firms aim to engage stigmatized consumers (e.g., consumers of certain sexual orientations, race/ethnic profiles, or religions).

Supplemental Material

Supplemental Material - Personalized Communication as a Platform for Service Inclusion? Initial Insights Into Interpersonal and AI-Based Personalization for Stigmatized Consumers

Supplemental Material for Personalized Communication as a Platform for Service Inclusion? Initial Insights Into Interpersonal and AI-Based Personalization for Stigmatized Consumers by Martin Mende, Maura L. Scott, Valentina Ubal, Corinne M. K. Hassler, Colleen M. Harmeling, and Robert W. Palmatier in Journal of Service Research

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online
