Abstract

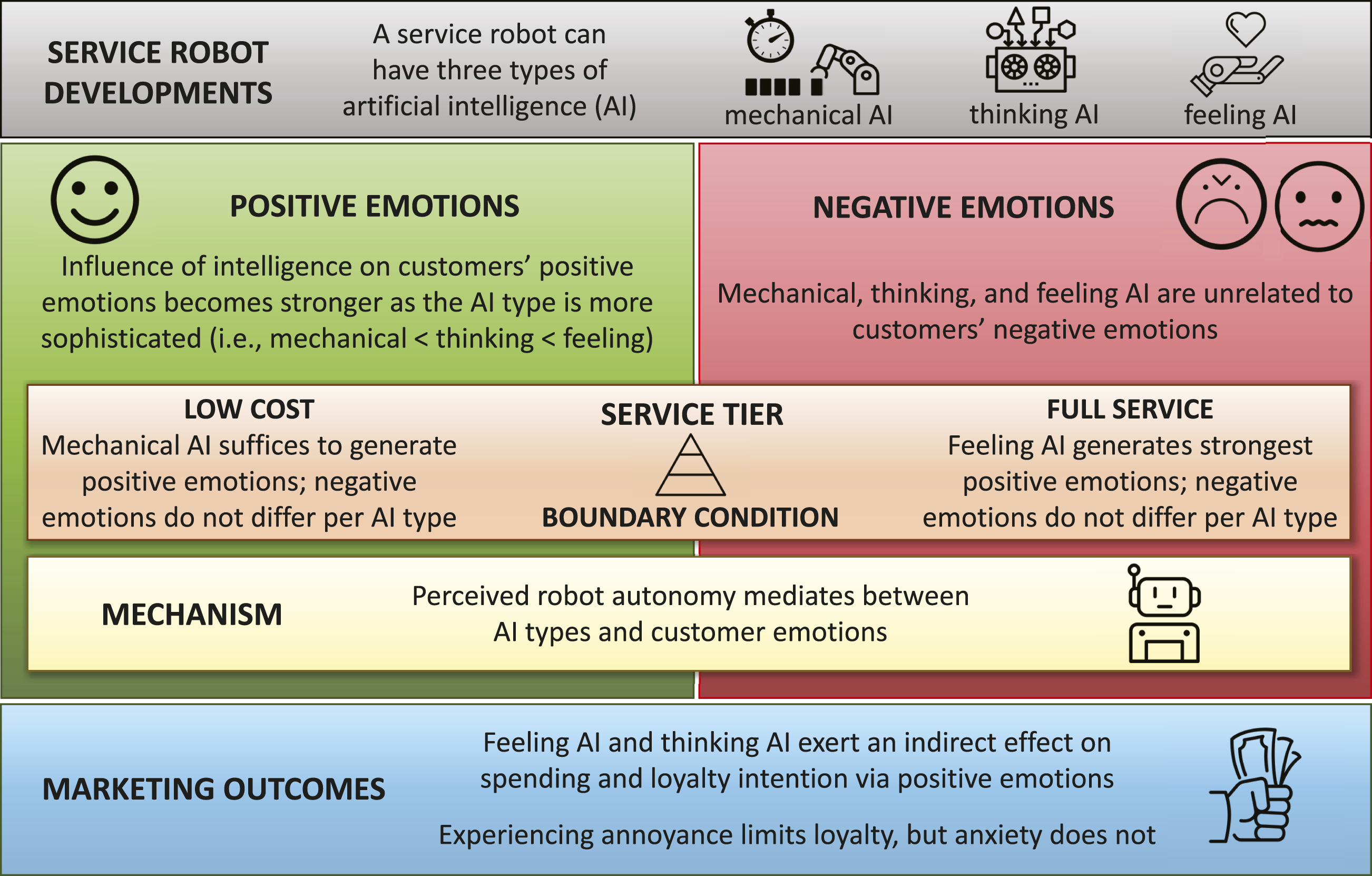

Service robots are taking over the frontline. They can possess three types of artificial intelligence (AI): mechanical, thinking, and feeling AI. Although these intelligences determine how service robots can help customers, not much is known about how customers respond to robots of different intelligence. This paper addresses this gap, builds on the appraisal theory of emotions, and employs three online experiments and one field study to demonstrate that customers have different emotional responses to the three types of AI. Particularly, the influence of AI on positive emotions becomes stronger as the AI type becomes more sophisticated. That is, feeling AI relates more strongly to positive emotions than mechanical AI. Also, feeling AI and thinking AI increase spending and loyalty intention through customers’ positive emotions. We also identify important contingency effects of service tiers: mechanical AI is more suitable for low-cost firms, whereas feeling AI mainly benefits full-service providers. Remarkably, none of the three intelligences are directly related to negative emotions; perceived robot autonomy is an important mediator in these relationships. The findings yield concrete managerial guidance as to how smart a service robot should be by pinpointing the right type of AI given the market segment of the service provider.

Introduction

On the frontline of many organizations, service robots enabled with artificial intelligence (AI) are transforming the service process. For example, waiter robots in China deliver between 50% and 100% more meals than a waiter employee at a lower cost than that of the employee’s annual salary (Hospitality and Catering News, 2019). Literature now predicts the gradual replacement of humans by robots, which goes hand in hand with the development of multiple types of AI (Huang, Rust, and Maksimovic, 2019). Service providers will use mechanical AI (automatic routine repetition) for standardization of transactional tasks, thinking AI (analytical information processing) for personalization of more data-driven services, and feeling AI (social interaction skills) for relationalization of experience-based hedonic services (Huang and Rust, 2021b).

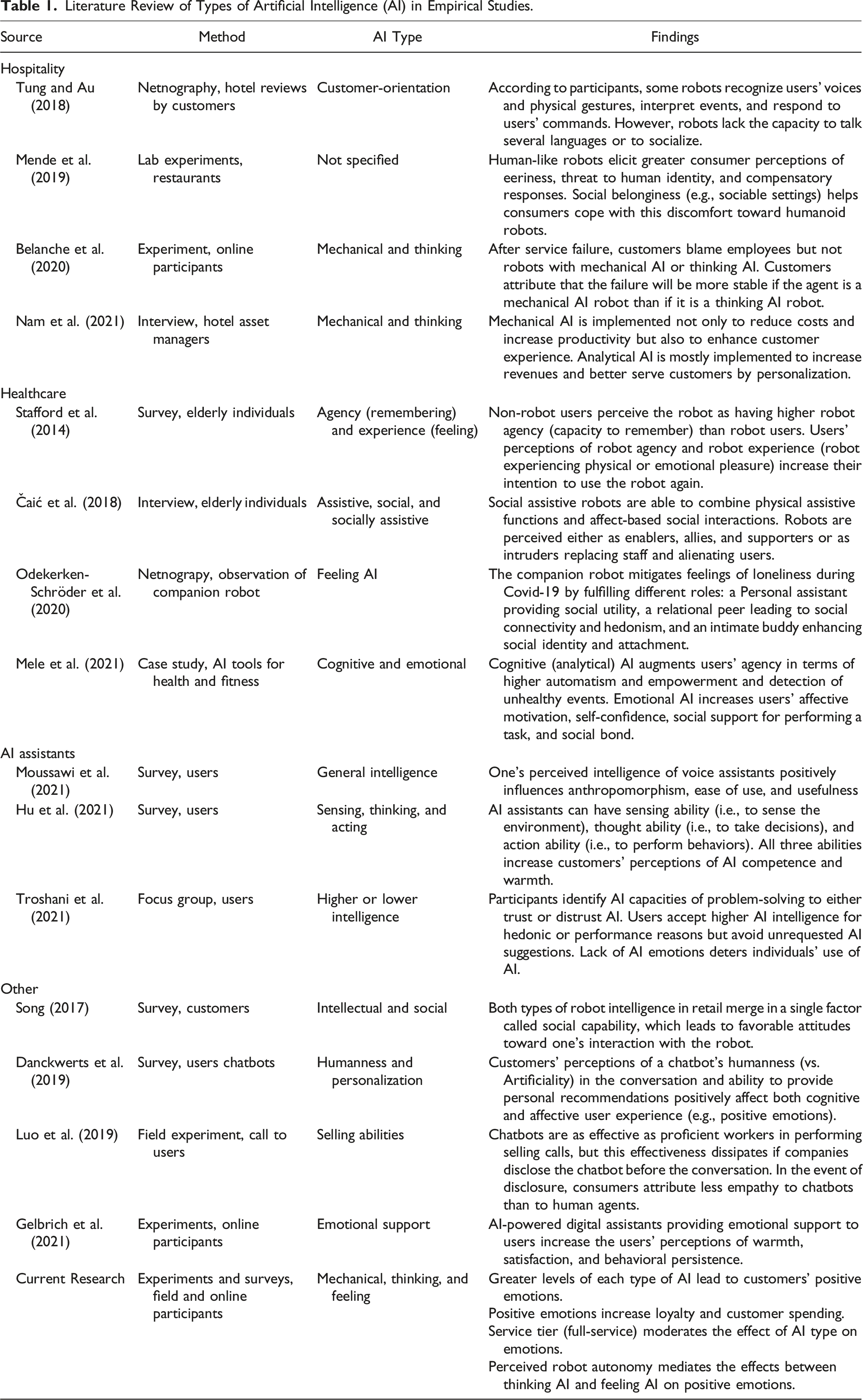

Table 1. Literature Review of Types of Artificial Intelligence (AI) in Empirical Studies.

Several clear observations flow from Table 1. First, despite the delineation of mechanical, thinking, and feeling AI rapidly becoming the dominant framework in service AI research (e.g., judging by the number of references to seminal works), very little is known about consumers’ responses to service robots incorporating different types of AI. The reasons are that some empirical works generate their own typology (e.g., Mele et al., 2021), while others identify “should-be” types or qualities (e.g., Odekerken-Schröder et al., 2020), and still others include some but not all AI types (e.g., Belanche et al., 2020). Second, the majority of studies adopt a cognitive perspective regarding customer responses, as evidenced by outcomes such as intention to use (e.g., Moussawi et al., 2021) or perceptions of ability (e.g., Hu et al., 2021). However, customers’ responses to innovative technologies, such as service robots, are often emotional in nature (Wood and Moreau, 2006). Especially when interacting with AI devices, individuals undergo an appraisal process before deciding on their future behavior (Gursoy et al., 2019). Not considering these appraisals and their associated emotions provides an inaccurate picture of customer behavior, such as spending or future loyalty, on the automated frontline (Lu et al., 2019). Finally, although scholars have noted that a company’s marketing strategy may determine the introduction of one or another type of AI (Rust and Huang, 2021), little is yet known about how customers across market segments respond to different types of AI. Given the importance of customers’ goals and expectations in their evoked emotions (Watson and Spence, 2007; Wood and Moreau, 2006) and the differences in customers’ goals and expectations in different segments, their emotional responses to service robots are likely to vary across segments.

This work aims to address the above-mentioned gaps and makes four main contributions. First, our research is among the first to empirically examine the framework of AI types (Huang and Rust, 2021b) and to assess customers’ responses to each form of intelligence in frontline service robots. We build on cognitive appraisal theory (Bagozzi et al., 1999; Johnson and Stewart, 2005; Watson and Spence, 2007), which predicts that customers evaluate or appraise the underlying characteristics inherent in service situations, experience positive and negative emotions as a result of this appraisal process, and behaviorally respond to the experienced emotions. Our effort not only extends studies listed in Table 1 but also adds an emotional perspective to a rapidly growing stream of literature that investigates how consumers respond to robot characteristics (e.g., Belanche et al., 2021; Blut et al., 2021; Mozafari et al., 2021; McLeay et al., 2021; Yoganathan et al., 2021).

Second, we add to nascent literature on service technology and emotions (e.g., Henkel et al., 2020; Rajaobelina et al., 2021) by uncovering the mechanism through which service robot intelligence relates to customer emotions. We build on cognitive appraisal theory’s proposition that the agency of the service agent (i.e., whether the robot has control over the service outcome) is one of the dominant appraisals to predict customer emotions. Accordingly, we hypothesize and find that perceived robot autonomy, “the ability of robots to perform intended tasks based on current state and sensing without human intervention” (Xiao and Kumar, p. 15), mediates between AI and evoked emotions. These findings also add to a discussion in which some scholars hold that emotions directly result from interacting with innovations (Wood and Moreau, 2006), while others suggest that a process of appraisals precedes experienced emotions (Watson and Spence, 2007). Our results indicate that positive emotions may be a direct result of customer interaction with technology, while negative emotions follow an appraisal of agent autonomy.

Third, we answer the question of whether the AI types are equally suited to serve customers in different market segments. We build on the common distinction between firms operating at low cost and those employing a full-service strategy (Chan and Tung, 2019; Treacy and Wiersema, 1993) and note that customers patronizing these firms in the associated service tiers have different goals and expectations of their service interactions (Saha and Theingi, 2009; Tsaur, Luoh, and Syue, 2015). According to cognitive appraisal theory, such differential customer preferences determine whether a marketing stimulus is appraised as desirable or undesirable and, thus, whether positive or negative emotions are evoked. This adds detailed new insights to the literature, which has mostly focused on robots’ anthropomorphism and its boundary conditions, such as task or sectoral characteristics (Blut et al., 2021), but has provided little insight for management regarding the role of service tiers (or segments) existing within sectors.

Finally, the business relevance of this paper lies in the fact that the cost of robot development and implementation rapidly increases with the sophistication of the AI type. Firms must thus know which type of service robot intelligence fits their profile or, more colloquially phrased, how smart a service robot should be. The answer is nuanced; low-cost companies could implement any of the AI types, but mechanical AI automation is sufficient. Full-service providers benefit from robots that are more characteristic of feeling AI than of mechanical AI because this enhances positive emotions and reduces negative emotions. Another route for firms toward desirable customer emotions is by influencing customers’ idea of the robot’s autonomy.

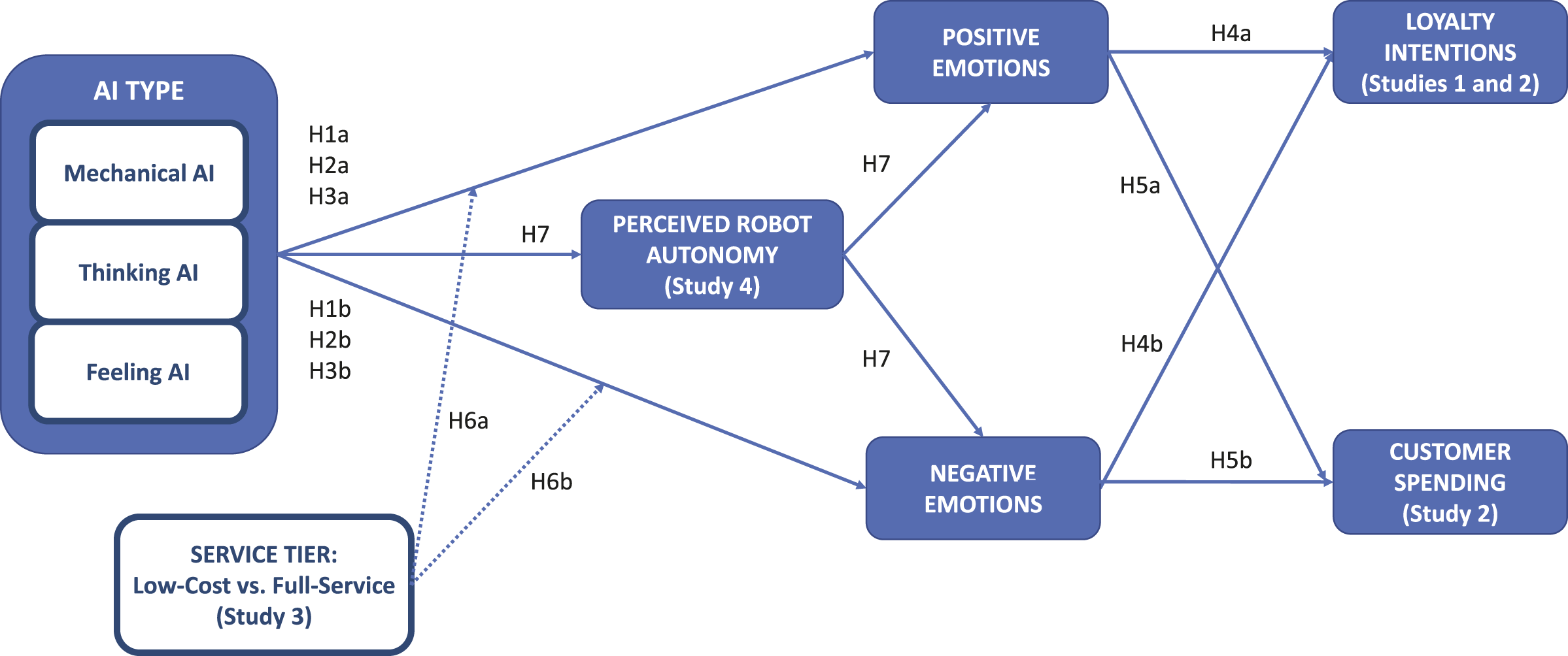

Organizing framework.

Figure 1 illustrates our conceptual framework consisting of seven hypotheses, which we empirically test by means of four studies. We focus on service robots, that is, autonomous agents providing customized services by performing physical and nonphysical tasks (Jörling, Böhm, and Paluch, 2019), and study concierge and waiter robots in particular because they require all three AI types (e.g., due to context and person variations, Huang and Rust, 2021b) and represent prototypical settings in frontline service studies (Belanche et al., 2020).

In Study 1, an online experiment featuring a hotel context (n = 314), we demonstrate that while the three AI types are unrelated to negative emotions, their influence on positive emotions becomes stronger as the AI type is more sophisticated. In Study 2, a field study in a real-life robot-operated restaurant (n = 311), we corroborate these effects and extend our implications to the outcome of customer spending. In Study 3, an online experiment (n = 334), we show that more positive emotions arise as the AI type increases in sophistication, but that this effect only holds for full-service restaurants, not for low-cost restaurants. Finally, Study 4, an online experiment again featuring a restaurant setting (n = 296), uncovers perceived robot autonomy as the mechanism through which different types of AI induce customer emotions.

Conceptual Development

Types of AI

Service robots avoid the limitations of human beings (e.g., temper, health issues, and tiredness) while including more advanced skills (e.g., multilingualism, memory capacity, collection, and analysis of information). Scholars have recently captured these skills in different types of “intelligences.” We adopt the well-cited framework proposed by Huang and Rust (2021b), which includes three types of intelligence: mechanical, thinking, and feeling AI. Each AI type should be considered as a separate category, since each delivers unique benefits and can be used differently for customer engagement. The three categories are not mutually exclusive; every robot may have specific levels of mechanical AI, thinking AI, and feeling AI.

Mechanical AI “concerns the ability to automatically perform routine, repeated tasks” (Huang & Rust, 2018, p. 158). Such mechanical operations do not require much creativity because repetition allows an agent to perform tasks with little or no extra thought (Sternberg, 1997). Thus, mechanical AI generally learns and adapts only to a minimal degree and is predominantly designed to maintain consistency and maximize efficiency. Thinking AI involves analytical or intuitive processes to learn and adapt from data (Huang and Rust, 2021b). It is based on skills such as information processing; logical reasoning; and algorithmic learning from observable artifacts, cross-sectional data, and longitudinal patterns (Huang & Rust, 2018). Thinking AI technologies are designed to explore customer diversity, identify meaningful patterns, and provide a more personalized service. Finally, feeling AI extracts customer emotions from data, and the outcomes are used in a technology’s interaction with customers through natural language (Huang and Rust, 2021b). Technology based on feeling AI is able to recognize and understand other people’s emotions and to respond appropriately or to influence others’ emotions. This includes interpersonal and social skills to be sensitive to others’ feelings and relational needs (Huang & Rust, 2018).

AI and Customer Emotions

An emotion reflects “a mental state of readiness that arises from cognitive appraisals of events or thoughts; has a phenomenological tone; is accompanied by physiological processes; is often expressed physically (e.g., in gestures, posture, and facial features); and may result in specific actions to affirm or cope with the emotion, depending on its nature and meaning for the person having it” (Bagozzi, Gopinath, and Nyer, 1999, p. 184). Emotions may be positive (e.g., happy, excited, and pleased) or negative (e.g., anxious, uneasy, and discontented) in valence. The cognitive appraisal theory of emotions holds that individuals consciously and unconsciously evaluate the characteristics of service encounters (Lazarus, 1991; Johnson and Stewart, 2005), particularly outcome desirability and agency (Watson and Spence, 2007). For instance, if one lends their car to a colleague who then damages it due to a malfunctioning lane assist function, although the outcome is undesirable, the colleague is not fully autonomous in operating the vehicle, such that the agency cannot fully be ascribed to the driver. Different appraisals of service encounters jointly lead to individuals experiencing positive or negative emotions. We first focus on the appraisal that, across studies, explains most variance: outcome desirability. Later, when we uncover the role of perceived autonomy, we will consider agency appraisal.

Robots combine different intelligences and can have higher or lower levels of mechanical, thinking, and feeling intelligence. We posit that as the level of each of these intelligences increases, so does the intensity of customers’ evoked emotions. Specifically, with regard to mechanical AI, literature indicates that customers tend to experience more positive emotions and fewer negative emotions when an agent provides a consistent, efficient, and competent service (Price et al., 1995; Delcourt et al., 2017). As a core service requirement for a satisfactory experience, customers demand an organized, capable, and efficient service (Zeithaml et al., 1990). A consistent service, as a key feature of mechanical AI, may facilitate a pleasurable service encounter by contributing to customers’ flow (Quach et al., 2020). By contrast, the absence of service consistency contributes to negative feelings because of heightened outcome uncertainty (Price et al., 1995). In summary, customers likely appraise the structure and consistency in enhanced mechanical AI as desirable, such that this increases positive and decreases negative emotions. Therefore, we hypothesize the following:

Customers interacting with service robots that capture thinking intelligence assume that a robot learns from previous experiences as an employee would do (Belanche et al., 2020). The improvement and adaptation of skills is appraised as a desirable service outcome and triggers positive emotions in humans. For instance, according to Lange and Pastau (2018), customers feel that restaurant recommendation agents (embodied by a smartphone app) with lexical diversity, grammatical complexity, and speech fluency are more affectionate and make better companions. Further evidence supports that thinking AI’s advanced abilities to innovate, understand humans, and solve problems result in customers’ increased trust (Troshani et al., 2021), such that customers become more open to the service outcome. For instance, personal assistants such as smart wearable devices include thinking AI to help customers exercise, control their daily food intake, or take care of their incapacitated relatives. The learning capabilities of these assistants contribute to people’s (positive) feelings of protection and general well-being and reduce (negative) feelings of loneliness and lack of self-control (Mele et al., 2021). The outcomes of thinking AI functionalities are therefore appraised as desirable. Combining these insights with the rationale from cognitive appraisal theory, we propose the following:

Feeling AI further brings relational skills such as empathizing with users or showing supporting behaviors, which generally increase customers’ positive affective states, such as liking, trust, and respect (Bickmore and Picard, 2005) as well as happiness and satisfaction (Gelbrich et al., 2021). When a robot recognizes, experiences, and reacts appropriately to others’ emotions, the robot is perceived as empathic. Such empathy is considered a desirable outcome because it is a universal value in human communication, which leads to greater affiliation and positive affect capitalization. In turn, a lack of empathy often leads to undesirable outcomes such as misunderstandings, hostility, and frustration (Preece, 1999). Analogously, (genuine) robot laughter discourages a range of negative affective responses among users (Jo et al., 2013), and the associated emotional competencies help customers to cope with any negative episode during the service encounter (e.g., a small delay or a rude fellow customer). In summary, customers likely appraise more intense levels of feeling AI as a desirable outcome, such that they experience more positive and fewer negative emotions. In other words,

Emotions and Marketing Consequences

Cognitive appraisal theory proposes that customers consider their emotions as information influencing their current and future actions. Indeed, literature demonstrates that emotions may drive consumer service experience and product choice (Bloemer and De Ruyter, 1999). As people generally strive to repeat the experience of positive emotions (Bagozzi et al., 2016), patronizing a service provider that initially sparked a positive emotion becomes more likely. In addition, individuals tend to reciprocate positive events (Blau, 1986); in service settings, this may manifest as increased spending or tipping behavior. By contrast, negative emotions may make customers skeptical of the service provider’s intention and thus more likely to withdraw from the service. In line with these works, we hypothesize the following:

Empirical Overview

We test our hypotheses in four empirical studies. In Study 1, we focus on testing H1–H4 in an online experiment. In Study 2, we corroborate the findings of the first study in a field setting, and we expand our empirical assessment to include H5. In Study 3, we expand our framework to study the contingency effect of service tiers. Finally, Study 4, focusing on full-service providers, uncovers the mechanism through which different AI types induce customer emotions.

Study 1: The Link Between AI Type and Customer Emotions

The objective of Study 1 was twofold. First, we aimed to provide initial evidence of the primary influence of AI types on customers’ emotions by examining customers’ responses to a hypothetical service encounter with a frontline robot presenting mechanical, thinking, or feeling AI. Second, we evaluated the influence of customers’ emotions on loyalty intention.

Method

We conducted a between-subjects online experiment with three scenarios focusing on mechanical, thinking, and feeling AI, respectively. Using the Prolific crowdsourcing platform, we recruited participants from a consenting representative sample of European adults (aged over 18). Participants were randomly assigned to one of the three conditions. After removing 17 participants who failed attention checks and five who provided an incomplete questionnaire, 314 individuals remained. Most participants were female (55.1%), between 25 and 34 years old (25.2%), with a university education (77.4%). Web Appendix 1 presents the full characteristics of the sample.

Participants were asked to imagine being in a hotel where they would be attended by a service robot that performs front-desk tasks. We manipulated the type of AI of the service robot by describing the robot’s behavior that would typically correspond to each of the categories. The full scenarios are described in Web Appendix 2, while an image of the robot shown to participants is displayed in Web Appendix 3. After being exposed to the stimulus, participants responded to a set of questions. Based on the definitions and characteristics provided by Huang and Rust (2021b), we developed three multi-item scales to measure respondents’ perceptions of each type of AI. Even though each scenario focused on one type of AI, customers may have perceived that the robot possessed more than one intelligence. Therefore, participants evaluated all three intelligences for each scenario. Then, we assessed respondents’ positive and negative emotions following their interaction with the service robot, employing the scales proposed by Bagozzi et al. (2016) and adapting them to our research context. We deemed this a valid approach as previous research has demonstrated that mental simulation also enacts emotions related to the use of service robots through the imaginative process (Lu, Cai, and Gursoy, 2019). The measurement of loyalty intention, capturing the intention to revisit the hotel and positive word-of-mouth intention, was adapted from Ryu, Lee, and Kim (2012). Three items adapted from Bagozzi et al. (2016) were used to measure the realism of the scenario (α = .844). Responses to all items were recorded on 7-point Likert scales; Web Appendix 5 details all survey items used in this study as well as the subsequent studies.

We first confirmed that participants reported levels of realism (M = 5.432, SD = 1.508) significantly higher than the midpoint of the scale (p < .01). We then confirmed that our manipulation of the AI types was successful; see Web Appendix 6 for more details. Then, we began the measurement validation process with a principal components analysis with varimax rotation to assess the dimensionality of each scale (with eigenvalues >1 and factorial loadings >.5). Most latent variables corresponded to one factor, but for negative emotions two factors were extracted. On the one hand, the emotions anxious, nervous, uneasy, tense, worried, and threatened formed one of the factors of negative emotions. We consider these emotions to be related to anxiety. On the other hand, upset, discontented, disappointed, ashamed, guilty, and regretful formed the second factor of negative emotions. We consider these emotions to be related to annoyance. This outcome aligns with previous studies that have found that emotions with the same valence could have different meanings (Raghunathan and Pham, 1999).
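The realism check can be retraced from the reported summary statistics alone. The sketch below assumes a one-sample t-test against the scale midpoint of 4 on a 7-point scale and assumes that all 314 retained participants answered the realism items; the text does not name the test used, so both points are assumptions, and the helper `one_sample_t` is a hypothetical name introduced only for illustration.

```python
import math


def one_sample_t(mean, sd, n, mu0):
    """Return the t statistic and degrees of freedom for H0: population mean == mu0."""
    standard_error = sd / math.sqrt(n)
    return (mean - mu0) / standard_error, n - 1


# Summary statistics reported for scenario realism in Study 1.
# mu0 = 4.0 is the midpoint of a 7-point scale (assumed test value).
t_stat, df = one_sample_t(mean=5.432, sd=1.508, n=314, mu0=4.0)
print(f"t({df}) = {t_stat:.2f}")
```

Under these assumptions the statistic is close to 16.8 with 313 degrees of freedom, which is in line with the reported p < .01.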

A confirmatory factor analysis using SmartPLS 3.0 (Ringle, Wende, and Becker, 2015) corroborated the initial factor structure. As can be seen in Web Appendix 7 (i.e., Table WA7.1), we obtained adequate levels of Cronbach’s alpha and composite reliabilities of all the reflective constructs. The average variance extracted (AVE) values were also above the benchmark of .5 (Fornell & Larcker, 1981) for all latent constructs, confirming convergent validity. Finally, discriminant validity was supported by checking that, for all pairs of constructs, the square root of the AVE was greater than the correlations among constructs (Fornell & Larcker, 1981) and that heterotrait-monotrait (HTMT) values were lower than .9 (Henseler et al., 2015).
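The validity criteria named above follow standard formulas and can be sketched in a few lines. The example below is illustrative only: the loadings and the inter-construct correlation are hypothetical values, not the estimates reported in Web Appendix 7, and the function names are introduced here for exposition. It computes the AVE as the mean squared standardized loading, composite reliability from the same loadings, and the Fornell-Larcker check that the square root of each AVE exceeds the correlations with other constructs.

```python
import math


def ave(loadings):
    """Average variance extracted: mean of squared standardized loadings."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """Composite reliability from standardized loadings (error variance = 1 - loading^2)."""
    total = sum(loadings)
    error = sum(1 - l * l for l in loadings)
    return total ** 2 / (total ** 2 + error)


def fornell_larcker_ok(ave_by_construct, correlations):
    """True if sqrt(AVE) of both constructs exceeds |r| for every correlated pair."""
    return all(
        math.sqrt(ave_by_construct[a]) > abs(r)
        and math.sqrt(ave_by_construct[b]) > abs(r)
        for (a, b), r in correlations.items()
    )


# Hypothetical standardized loadings and correlation (NOT the study's estimates).
loadings = {
    "positive_emotions": [0.82, 0.79, 0.85],
    "loyalty_intention": [0.88, 0.84, 0.80],
}
correlations = {("positive_emotions", "loyalty_intention"): 0.70}

ave_values = {name: ave(values) for name, values in loadings.items()}
cr_values = {name: composite_reliability(values) for name, values in loadings.items()}
discriminant_ok = fornell_larcker_ok(ave_values, correlations)

for name in loadings:
    print(f"{name}: AVE = {ave_values[name]:.3f}, CR = {cr_values[name]:.3f}")
print("Fornell-Larcker criterion met:", discriminant_ok)
```

With these made-up inputs, both constructs clear the .5 benchmark for AVE and the Fornell-Larcker criterion holds. The HTMT check is not covered by this sketch.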

Results and Discussion

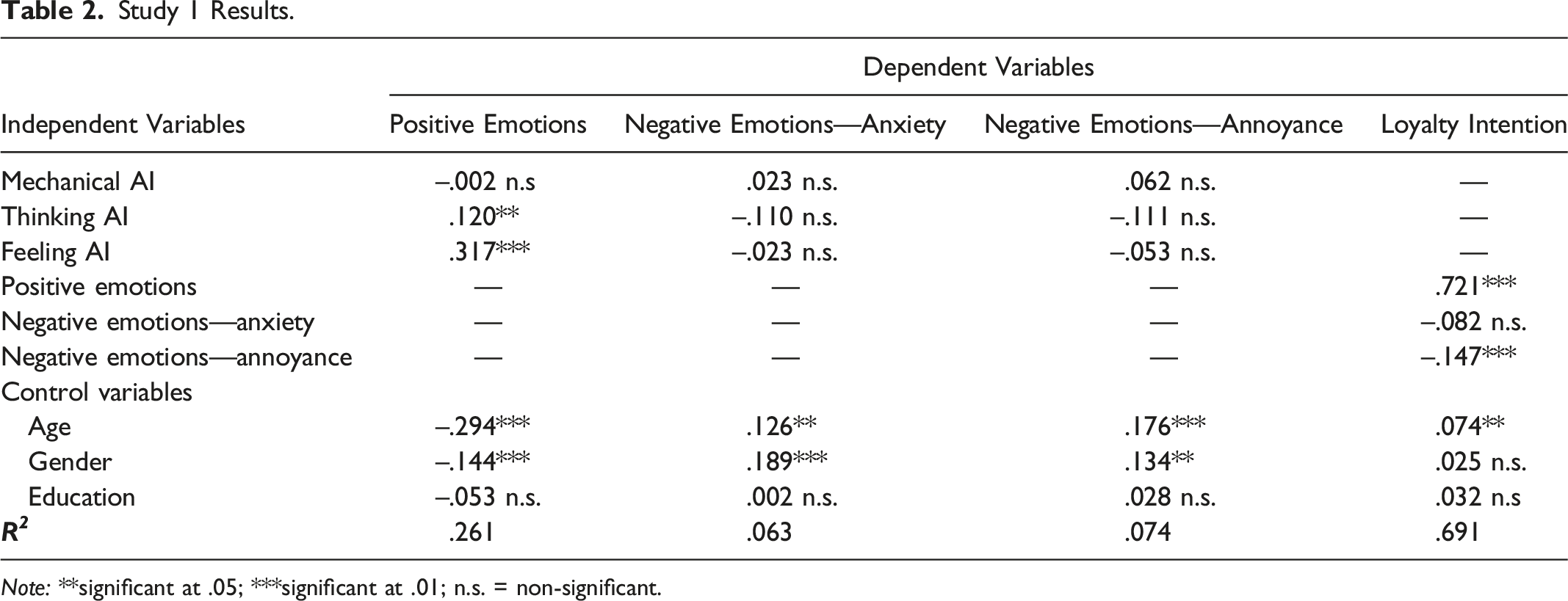

Study 1 Results.

Note: **significant at .05; ***significant at .01; n.s. = non-significant.

First, regarding the influence of each type of AI on positive emotions, we observed positive and significant effects of feeling AI (β = .317, p < .01) and thinking AI (β = .120, p < .05), whereas the influence of mechanical AI was non-significant (β = −.002, p > .05). Hence, H3a and H2a are supported, but H1a is not. These results also suggest that this influence becomes stronger as the AI becomes more sophisticated. This may be explained by the observation that when service robots become more sophisticated in their AI type, the technology seems more radical and complex to the customer. However, with highly intelligent robots, such as those built on feeling AI, the customer experience is usually more akin to a traditional service encounter compared to the experience with mechanical AI robots. Hence, even though customers expect that their service goal will be difficult to attain with more complex technology, it actually becomes easier. This unexpected ease of goal attainment is likely to be considered a desirable outcome and thus enhances positive emotions (Luce, Bettman, and Payne, 2001).

Second, the effects of mechanical AI, thinking AI, and feeling AI on negative emotions were non-significant; therefore, H1b, H2b, and H3b are not supported. In addition, we observed that positive emotions had a significant effect on loyalty intention (β = .721, p < .01), supporting H4a. However, H4b is only partially supported: while the effect of negative emotions related to anxiety on loyalty intention (β = −.082, p > .05) was non-significant, negative emotions related to annoyance exerted a significant negative influence (β = −.147, p < .01). We also found some significant effects of the control variables age and gender; these effects align with insights from past works on technology adoption (e.g., Morris and Venkatesh, 2000).

Finally, we calculated bias-corrected confidence intervals (CIs) to evaluate the indirect effect of types of AI on loyalty intention through emotions (Chin, 2010). We observed that while feeling AI (CI [.146, .326]) and thinking AI (CI [.005, .172]) exerted an indirect effect on loyalty intention via positive emotions, no significant indirect effect arose via negative emotions.
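The logic of a bias-corrected bootstrap interval for an indirect effect can be illustrated with a minimal sketch. It departs from the analysis above in two labeled ways: it uses a simple ordinary least squares mediation model (X to M to Y) rather than the PLS path model estimated in SmartPLS, and it runs on simulated data with made-up path coefficients rather than the study data. All function and variable names are hypothetical.

```python
import random
from statistics import NormalDist, fmean


def _cov(u, v):
    """Sample covariance of two equally long sequences."""
    mu, mv = fmean(u), fmean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)


def indirect_effect(x, m, y):
    """a*b for X -> M -> Y, where b is the slope of M in Y ~ X + M."""
    sxx, smm, sxm = _cov(x, x), _cov(m, m), _cov(x, m)
    sxy, smy = _cov(x, y), _cov(m, y)
    a = sxm / sxx
    b = (smy * sxx - sxy * sxm) / (sxx * smm - sxm ** 2)
    return a * b


def bc_bootstrap_ci(x, m, y, n_boot=1000, alpha=0.05, seed=0):
    """Bias-corrected bootstrap CI for the indirect effect (case resampling)."""
    rng = random.Random(seed)
    n = len(x)
    estimate = indirect_effect(x, m, y)
    boots = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        boots.append(indirect_effect([x[i] for i in idx],
                                     [m[i] for i in idx],
                                     [y[i] for i in idx]))
    boots.sort()
    nd = NormalDist()
    # Bias correction z0: based on the share of bootstrap estimates
    # that fall below the original sample estimate.
    share = sum(b < estimate for b in boots) / n_boot
    share = min(max(share, 1 / n_boot), 1 - 1 / n_boot)  # keep inv_cdf finite
    z0 = nd.inv_cdf(share)

    def bound(p):
        adjusted = nd.cdf(2 * z0 + nd.inv_cdf(p))
        return boots[min(n_boot - 1, max(0, int(adjusted * n_boot)))]

    return estimate, bound(alpha / 2), bound(1 - alpha / 2)


# Simulated data with assumed paths a = 0.5 and b = 0.7 (illustrative only).
data_rng = random.Random(42)
x = [data_rng.gauss(0, 1) for _ in range(200)]
m = [0.5 * xi + data_rng.gauss(0, 1) for xi in x]
y = [0.7 * mi + data_rng.gauss(0, 1) for mi in m]

estimate, lower, upper = bc_bootstrap_ci(x, m, y)
print(f"indirect effect = {estimate:.3f}, 95% BC CI [{lower:.3f}, {upper:.3f}]")
```

As in the intervals reported above, an indirect effect is read as significant when the interval excludes zero.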

In summary, Study 1 provides partial support for our theoretical framework and suggests that higher robot intelligence enhances customers’ loyalty by means of positive emotions. Nevertheless, AI type does not affect customers’ negative emotions. Interestingly, customer anxiety toward robots (e.g., spontaneous nervousness and thrill) may not be as bad as feelings of annoyance related to this service innovation. The General Discussion expands on these remarks.

Study 2: Field Study Corroboration and Spending Extension

A field study was set up to corroborate the findings of Study 1 in a real-life setting. Apart from re-assessing H1–H4, the setting also allowed us to test H5 (spending). In addition, we aimed to generalize our findings beyond the hotel setting and focused on a restaurant context where the frontline robot performed waiter tasks.

Method

We collaborated with a mid-price restaurant that employs the service robot presented in Web Appendix 3 to interact with its customers—note that we employed a picture of this same robot in our three online experiments. The waiter robot incorporates the three kinds of AI; among other skills, the robot greets customers, delivers the products ordered, takes the empty plates to the kitchen, and acts in a friendly manner. The restaurant is located in a major European city. In March 2021, customers of the restaurant were asked, at the end of their visit, to participate in a study to share their experiences with the service robot. An inclusion criterion was that customers at least had to place their order with and be directly served by the robot.

The questionnaire—accessed by scanning a QR code located at the exit of the restaurant—asked participants about their positive and negative emotions experienced while interacting with the service robot. The same scales to measure emotions and loyalty intention used in Study 1 were used in Study 2; see Web Appendix 5. In addition, based on Huang and Rust (2021b), one item measured customers’ perceptions of each type of AI. A short description of each intelligence preceded these questions. Finally, a question was included to measure the money spent (per person, for the respondent only) in the restaurant in the following intervals (in Euros): less than 5, 5–10, 11–20, 21–30, 31–40, 41–50, 51–60, and more than 60 Euros.

After removal of eight incomplete responses and four respondents who failed attention checks, 311 individuals remained. The restaurant does not target any specific sociodemographic group and receives guests for drinks, breakfast, lunch, and dinner. Most participants were female (53.7%), between 35 and 44 years old (29.9%), with a university education (67.2%); see Web Appendix 1 for more details.

To validate our measures, we estimated a confirmatory factor model in SmartPLS 3.0, where negative emotions were again included as two constructs: anxiety and annoyance. Web Appendix 7 (i.e., Table WA7.2) demonstrates adequate levels of Cronbach’s alpha and construct reliability, as well as satisfactory convergent validity and discriminant validity.

Results and Discussion

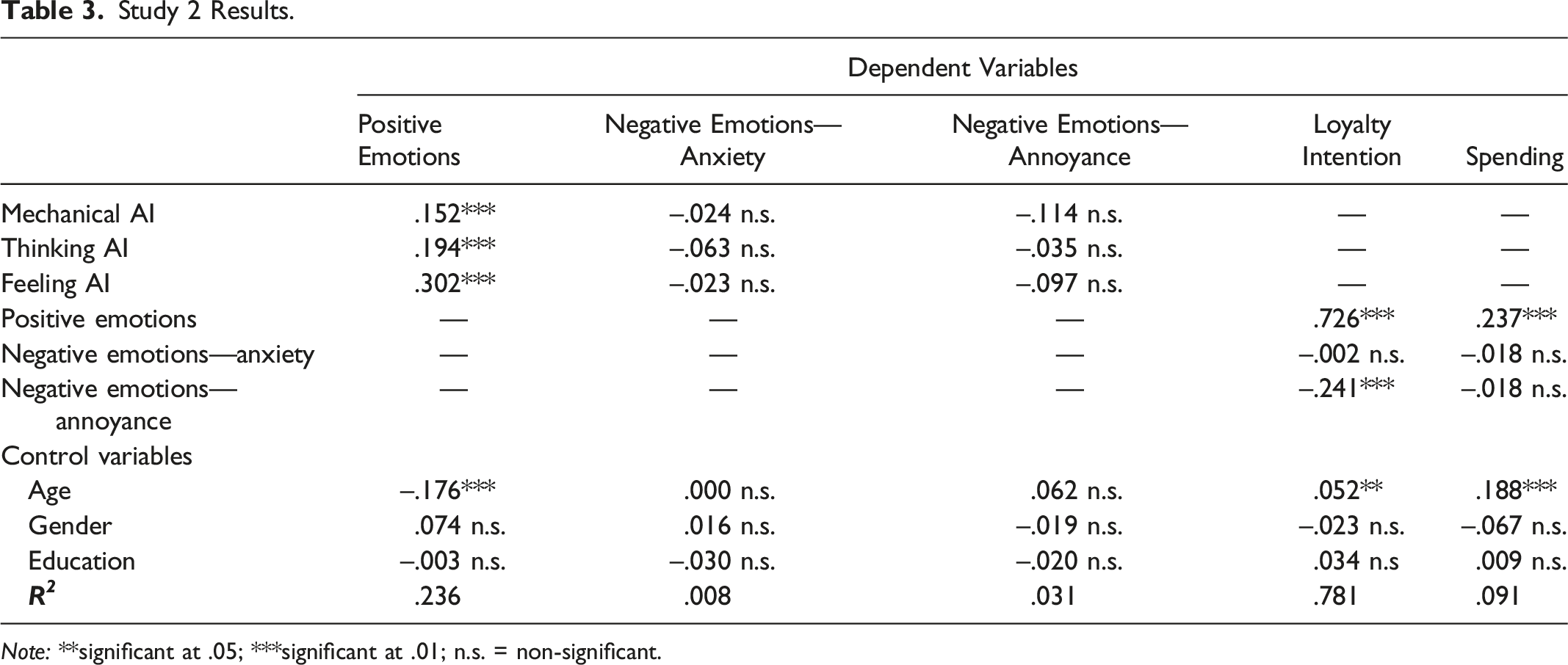

Study 2 Results.

Note: **significant at .05; ***significant at .01; n.s. = non-significant.

We observed that positive emotions had a significant effect on both loyalty intention (β = .726, p < .01) and spending in the restaurant (β = .237, p < .01). These results corroborate previous findings for H4a and support H5a, respectively. Similar to the results from Study 1, the effect of negative emotions related to anxiety on loyalty intention (β = −.002, p > .05) was non-significant, but negative emotions related to annoyance exerted a negative influence (β = −.241, p < .01). Thus, H4b is again partially supported. Customers’ spending in the restaurant was not affected by negative emotions related to anxiety (β = −.018, p > .05) or to annoyance (β = −.018, p > .05). Therefore, H5b is not supported. Regarding the influence of control variables, age exerted a negative effect on positive emotions (β = −.176, p < .01) and positive effects on both loyalty intention (β = .052, p < .05) and spending in the restaurant (β = .188, p < .01).

Finally, focusing on indirect effects, we observed that all AI types (mechanical AI: CI [.039, .180]; thinking AI: CI [.052, .228]; feeling AI: CI [.140, .303]) exerted an indirect effect on loyalty intention via positive emotions. We also found indirect effects on spending via positive emotions (mechanical AI: CI [.010, .076]; thinking AI: CI [.012, .096]; feeling AI: CI [.031, .126]). As in Study 1, no significant indirect effect arose via negative emotions.

In summary, the results of this field study are relatively consistent with those of Study 1 and confirm that (1) all types of AI relate to positive rather than negative emotions; (2) these relationships become stronger as the type of AI becomes more sophisticated; (3) positive emotions have positive consequences for the company in terms of loyalty and consumers’ spending; and (4) while negative emotions related to annoyance exert a negative effect on loyalty intention, this effect does not exist for negative emotions related to anxiety.

The Moderating Role of Service Tier

So far, we have built on cognitive appraisal theory to argue that customers appraise the outcome desirability of a service element—in our case, the AI type underlying a service robot. The theory accounts for the fact that the desirability of an outcome may be highly dependent on the construction of meaning from an individual’s context. To illustrate, “the winner and loser of a sporting event will probably have very different interpretations of, and emotional responses to, the same stimulus event” (Watson and Spence, 2007, p. 490). These different appraisals of the same stimulus stem from divergent goals and expectations (Johnson and Stewart, 2005). On the frontline, different customer preferences can be found between different service tiers.

Service tiers vary from low-cost companies employing low price strategies to full-service companies driven by superior services to customers (Rajaguru, 2016). Previous studies have hinted that service tier may influence how service robots relate to customer emotions. For instance, Chan and Tung (2019) found different customer responses to service robots in budget, midscale, and luxury hotels. Based on the service tier, customers make specific elements of the service provision more salient than others in their pattern of expectations. Specifically, patrons of low-cost companies expect efficient operations against a low price (Saha and Theingi, 2009). By contrast, customers of full-service establishments expect a more complete service experience, which emphasizes the importance of frontline agents’ skills and attributes (e.g., efficacy, pleasantness, and empathetic performance; Tsaur, Luoh, and Syue, 2015).

We posit that the more sophisticated an AI type is, the better it meets the expectations of customers of full-service providers. As a result, these customers are more likely to consider outcomes as desirable from feeling AI than from mechanical AI. Specifically, the lack of a personalized experience in a mechanical AI implementation is likely to be appraised as undesirable and therefore trigger less positive and more negative emotions. In other words, customers of a premium service do not welcome practices that do not correspond with its positioning (Moser et al., 2018). Mechanical AI is likely a better match with the goals of customers of low-cost providers. These customers typically expect efficient service, and mechanical AI is highly suited to efficiently scale up service operations, for instance, by transforming repetitive human service into mass production.

At the same time, customers of low-cost providers may appraise feeling AI functionality as undesirable (cf., Saha and Theingi, 2009; Tsaur, Luoh, and Syue, 2015). These customers may perceive the “feeling behavior” of a robot as not contributing to productivity, and they may even feel uneasy because they are not accustomed to receiving high levels of personal attention (cf. Mikulić and Prebežac, 2011). By contrast, customers of full-service providers are more likely to appreciate the hedonic qualities of personal attention and empathetic performance. Therefore, these customers are more likely to see feeling AI as a more desirable outcome than clients of low-cost providers would and consequently have less negative and more positive emotions.

Finally, because thinking AI robots occupy a position between mechanical intelligence and feeling intelligence, we posit that these robots evoke less extreme emotions in customers than their mechanical and feeling counterparts do. Formally, we hypothesize the following:

Study 3: Robots in Low-Cost vs. Full-Service Settings

Study 3 empirically examined whether the influence of AI type on customers’ emotions depends on service tiers. The objective of this study was twofold. On the one hand, we aimed to corroborate the pattern of customers’ emotional responses derived from each type of AI. On the other hand, we aimed to investigate which type of AI could be more suitable for low-cost providers and full-service providers.

Method

An online experiment with a 3 (type of AI: mechanical, thinking, and feeling) × 2 (restaurant type: low-cost vs. full-service restaurant) between-subject design was conducted. As in Study 1, we recruited participants from a consenting representative sample of European adults (aged over 18) active on Prolific. Participants were randomly assigned to one of the six conditions. After removing 11 respondents who failed attention checks and six incomplete responses, 334 participants remained; the smallest cell in the experiment consisted of 46 respondents. As can be seen in Web Appendix 1, most participants were female (50.9%), between 35 and 44 years old (32.0%), with a university education (65.3%).

Participants were asked to imagine being attended by a service robot in either a low-cost or a full-service restaurant. We showed a picture of a service robot (Web Appendix 3), which corresponds to the robot evaluated in the field study and was also employed in Study 1. We manipulated the type of AI of the robot by describing the robot’s behavior that would typically correspond to each of the categories outlined by Huang and Rust (2021b). The full scenarios are described in Web Appendix 4. After exposing respondents to the stimulus, we assessed their positive and negative emotions from interacting with the service robot. The same scales as in Studies 1 and 2 were employed to measure positive and negative emotions. We again also included the three multi-item scales to measure the respondents’ perceptions of each type of AI (mechanical, thinking, and feeling) based on Huang and Rust (2021b).

We first confirmed scenario realism (M = 5.395, SD = 1.337) to be significantly higher than the midpoint of the scale (p < .01). We then confirmed that our manipulation of the AI types was successful; see Web Appendix 6 for more details. Furthermore, a confirmatory factor model in SmartPLS3.0 again demonstrated that negative emotions were captured by two factors. Web Appendix 7 (Table WA7.3) demonstrates that scales exhibit adequate levels of Cronbach’s alpha and composite reliability. Convergent validity and discriminant validity were also verified.
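The realism check can be reproduced approximately from the reported summary statistics. The sketch below assumes a one-sample t-test against the scale midpoint and assumes a 7-point realism scale (midpoint 4); neither the test nor the scale length is stated in the text, so both are illustrative assumptions.

```python
import math

def one_sample_t(mean, sd, n, mu0):
    """t statistic for the null hypothesis that the population mean equals mu0."""
    standard_error = sd / math.sqrt(n)
    return (mean - mu0) / standard_error

# Reported in the text: M = 5.395, SD = 1.337, 334 participants.
# ASSUMPTION: realism was rated on a 7-point scale, so the midpoint is 4.
n_participants = 334
t_realism = one_sample_t(mean=5.395, sd=1.337, n=n_participants, mu0=4.0)
print(f"t({n_participants - 1}) = {t_realism:.2f}")
```

Under these assumptions the statistic is far above conventional critical values, which is in line with the reported p < .01.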

Results and Discussion

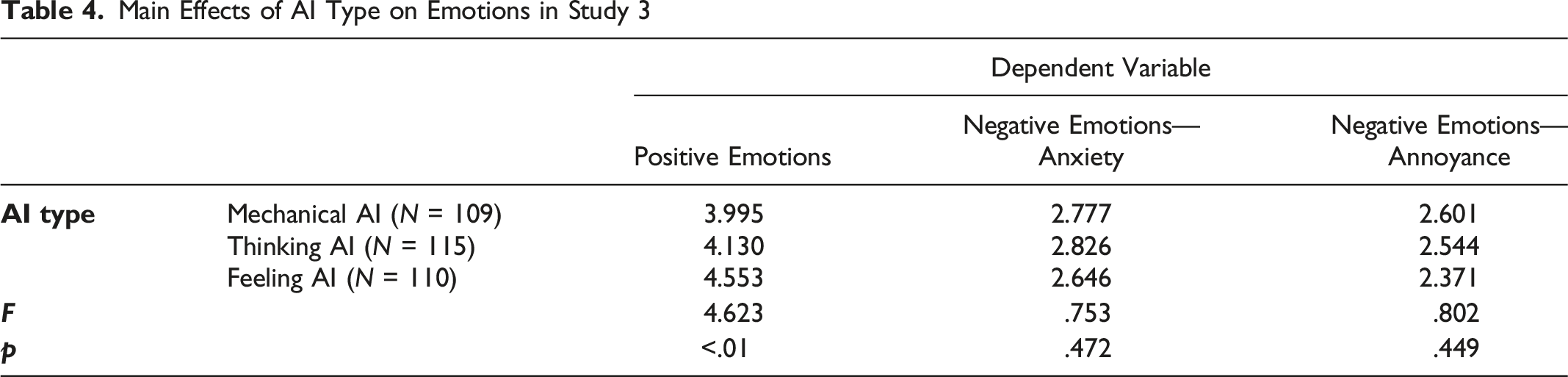

Main Effects of AI Type on Emotions in Study 3

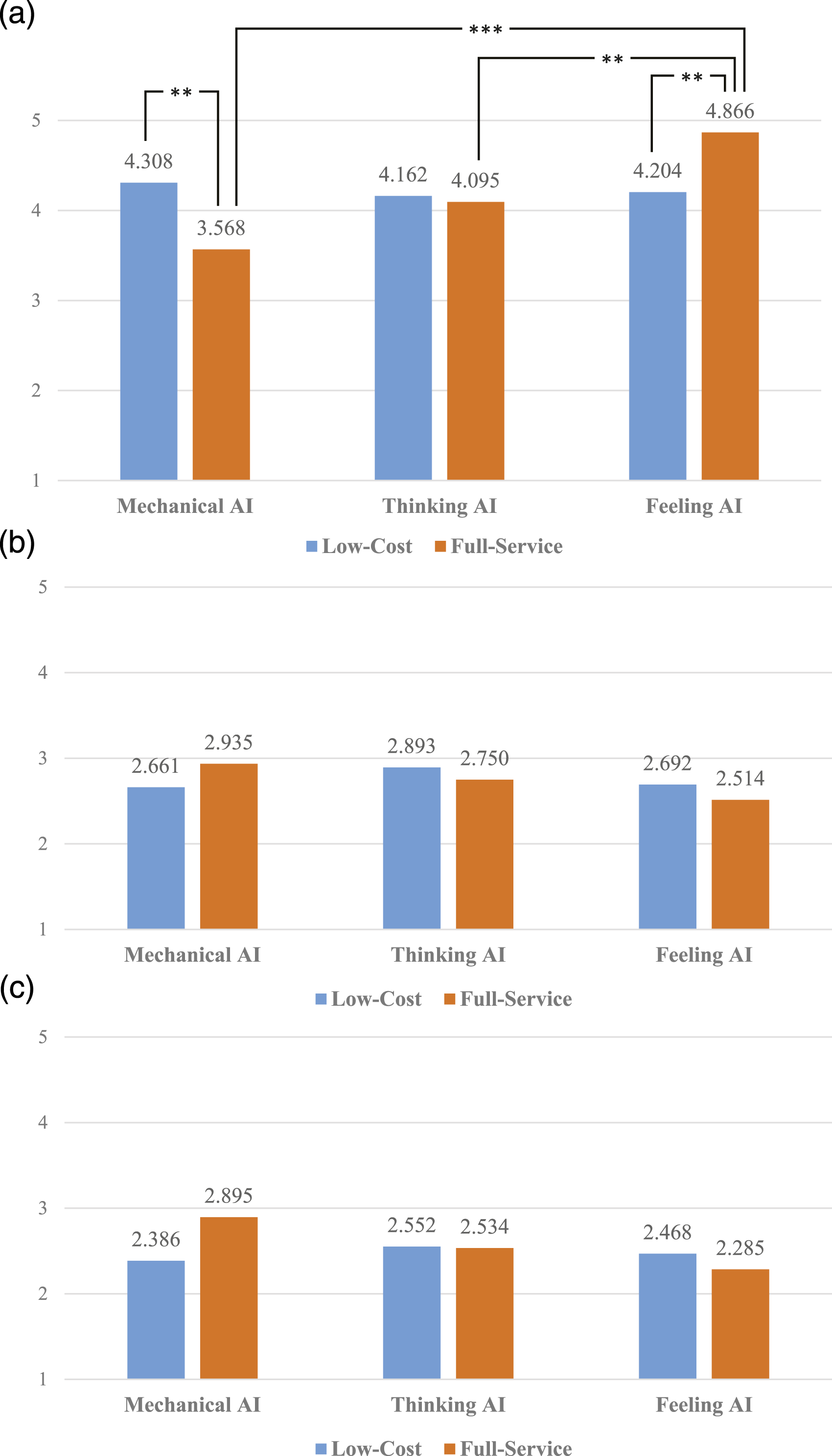

Second, a series of ANOVAs was conducted to test H6a and H6b. We found an interaction effect between type of AI and service tier on positive emotions (F = 6.065, p < .01), in support of H6a. Figure 2 illustrates that for full-service restaurants, more positive emotions emerged as the type of AI employed became more sophisticated. In turn, for low-cost restaurants, the differences in positive emotions between the AI types were not significant. Post-hoc Bonferroni tests revealed that for full-service restaurants, significant differences emerged in positive emotions between mechanical AI and feeling AI (Mmechanical = 3.568, Mfeeling = 4.866, p < .01), and between thinking AI and feeling AI (Mthinking = 4.095, Mfeeling = 4.866, p < .05), but not between mechanical AI and thinking AI (Mmechanical = 3.568, Mthinking = 4.095, p > .05). Furthermore, mechanical AI generated significantly higher positive emotions in low-cost than in full-service restaurants (Mlow-cost = 4.308, Mfull-service = 3.568, t = 2.572, p < .05), while feeling AI yielded the opposite pattern (Mlow-cost = 4.204, Mfull-service = 4.866, t = −2.312, p < .05).

Study 3 interaction effects. Panel A. Interaction effects for positive emotions.

No significant interaction effect between type of AI and type of restaurant was found for negative emotions; therefore, H6b cannot be supported. Panels B and C of Figure 2 illustrate that respondents in the full-service restaurant conditions did seem to feel less anxiety and annoyance with increasing types of AI; however, these interactions were not significant (F = .809, p > .05 for anxiety and F = 1.458, p > .05 for annoyance). Finally, we observed no main effect of service tier on positive (F = .088, p > .05), negative–anxiety (F = .010, p > .05), and negative–annoyance (F = .359, p > .05) emotions.
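The crossover pattern for positive emotions can be made concrete with a difference-of-differences computed from the four cell means reported in the text. This is only a descriptive sketch: it does not reproduce the ANOVA F test, which requires the raw data, and the function and variable names are hypothetical.

```python
# Cell means for positive emotions as reported in the text (Study 3).
cell_means = {
    ("mechanical", "low-cost"): 4.308,
    ("mechanical", "full-service"): 3.568,
    ("feeling", "low-cost"): 4.204,
    ("feeling", "full-service"): 4.866,
}

def simple_effect(tier):
    """Feeling-minus-mechanical difference in positive emotions within one tier."""
    return cell_means[("feeling", tier)] - cell_means[("mechanical", tier)]

effect_full = simple_effect("full-service")
effect_low = simple_effect("low-cost")

# The interaction contrast is the difference between the two simple effects.
interaction_contrast = effect_full - effect_low

print(f"full-service simple effect: {effect_full:+.3f}")
print(f"low-cost simple effect:     {effect_low:+.3f}")
print(f"difference of differences:  {interaction_contrast:+.3f}")
```

The simple effect is large and positive in the full-service tier and close to zero in the low-cost tier, which is the pattern an interaction effect on positive emotions describes.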

In summary, Study 3 supports our contention that the relationship between type of AI and positive emotions depends on service tier. We found that customers of low-cost service providers seem emotionally unaffected by the type of AI offered. Perhaps they feel that a robot serves the purpose of their visit by keeping operating costs low. The focus of these customers may therefore be on the mere presence of the robot rather than its capabilities (cf., Chan and Tung, 2019). Indeed, customers of low-cost providers tend to act on exchange norms, such that the service outcome is more diagnostic than the service process in their responses (Moser et al., 2018). Customers may thus be less immersed in the service encounter and more superficially process stimuli.

At the same time, Study 3 reconfirms that the type of AI does not seem to be directly related to customers’ negative emotions, nor does it support a moderating effect of service tier on this relationship. Although past work has documented customer resistance to innovations (e.g., Heidenreich and Spieth, 2013), this at least does not seem to directly relate to being confronted with higher service-robot intelligence. Perhaps consumers have become so accustomed to interactions with AI-based devices (e.g., Alexa, voice-controlled TV sets or car navigation, and smart thermostats) that negative emotions are not that easily triggered. However, next, we suggest another explanation: the relationship may not be direct, but rather mediated.

Perceived Robot Autonomy as the Mediating Mechanism

Surprised by the non-significant effects of AI types on negative emotions, we sought to uncover a mediating mechanism that may explain this link. We again build on cognitive appraisal theory, which posits that the agency of the service agent is one of the dominant appraisals to predict customer emotions. We expect agency to be especially important to explain AI’s relationship to negative emotions because, compared to positive events, negative events are more likely to trigger attempts to uncover why the event has occurred (Weiner, 2000). Nevertheless, we account for the possibility that agency mediates between AI and positive emotions, too.

To conceptualize agency, we focus on perceived robot autonomy, “the ability of robots to perform intended tasks based on current state and sensing without human intervention” (Xiao and Kumar, 2021, p. 15). Recent advancements have allowed some robots to achieve great levels of autonomy in sensing, thought, and action (Hu et al., 2021). Nevertheless, many robots still require employees to complement them in performing frontline tasks (Choi et al., 2020). Indeed, the interdependence between robots and employees has been identified as an issue of increasing interest in recent conceptual works in the service field (Li et al., 2021; de Keyser et al., 2019).

We thus assume that customers perceive differences in robot autonomy, and we posit that customers explicitly make this appraisal prior to experiencing emotions. More specifically, as robots’ thinking and feeling AI increases, they become more adaptable to different service situations, better able to customize the service to a specific customer (Huang and Rust, 2021b), and less likely to require human intervention or augmentation. They are thus more likely to be appraised as acting autonomously. Since agency, or autonomy, goes hand in hand with more sophisticated functionality (Mele et al., 2021), the effect on emotions will be beneficial such that more positive and less negative emotions are evoked. Indeed, past research has shown that autonomously operating devices elicit positive feelings, such as relaxation, from people because service tasks become easier, the operation requires reduced cognitive resources, and people have more free time to do other work (Rijsdijk and Hultink, 2009; Jörling, Böhm, and Paluch, 2019). Moreover, individuals feel less embarrassment (i.e., a negative emotion) when interacting with a fully autonomous robot than when interacting with a tele-operated robot (Choi, Kim, and Kwak, 2014). Summarizing our argument, we hypothesize the following:

Study 4: The Role of Perceived Robot Autonomy

Our final study explored the process by which AI types influence customers’ emotions. We focused on full-service providers because our previous study indicated that the effects of the AI types are specifically pronounced in these settings. Against this backdrop, we investigated perceived robot autonomy as a mediating mechanism, and we thus empirically examined H7.

Method

In a between-subject online experiment, we used the same scenarios presented in the full-service restaurant conditions of Study 3. We followed the same recruitment method as in Studies 1 and 3, and after removing nine participants who failed attention checks and four incomplete responses, we obtained a representative sample of 296 European adults (aged over 18). Most participants were female (52.1%), between 25 and 34 years old (20.9%), with a university education (73.3%); see Web Appendix 1 for details.

After exposing respondents to the scenario and the robot picture in Web Appendix 3, we assessed their perceptions of each AI type as well as their positive and negative emotions from interacting with the service robot, using the same scales as in previous studies. In addition, participants evaluated perceived robot autonomy using a three-item scale adapted from Rijsdijk and Hultink (2009) and Sims, Szilagyi, and Keller (1976; see Web Appendix 4).

Again, we first confirmed that participants reported levels of realism (M = 4.608, SD = 1.902) significantly higher than the midpoint of the scale (p < .01). We then confirmed that our manipulation of the AI types was successful; see Web Appendix 6 for more details. A confirmatory factor model in SmartPLS3.0 once more confirmed the validity and reliability of our measures (see Web Appendix 7, Table WA7.4). However, the scale of mechanical AI yielded a Cronbach’s alpha value slightly below the threshold of .7 (.644). Given the exploratory nature of our research setting, we still deemed this value above .6 to be acceptable.
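For readers less familiar with the reliability threshold discussed here, the following sketch computes Cronbach’s alpha from item-level ratings using the standard formula. The ratings are hypothetical and serve only to illustrate the calculation; the study’s own data are not reproduced.

```python
from statistics import variance

def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of respondents, each a list of k item ratings."""
    k = len(item_scores[0])
    items = list(zip(*item_scores))  # regroup ratings so each tuple holds one item
    item_variances = [variance(column) for column in items]
    total_variance = variance([sum(row) for row in item_scores])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)

# HYPOTHETICAL ratings: 6 respondents x 3 items (not data from the study).
ratings = [
    [5, 6, 5],
    [3, 4, 4],
    [6, 6, 7],
    [2, 3, 2],
    [4, 4, 5],
    [5, 5, 6],
]
alpha = cronbach_alpha(ratings)
print(f"alpha (hypothetical data) = {alpha:.3f}")

# The value reported in the text for the mechanical AI scale, checked
# against the conventional .7 threshold and the more lenient .6 threshold.
reported_alpha = 0.644
print("meets .7 threshold:", reported_alpha >= 0.7)
print("meets .6 threshold:", reported_alpha >= 0.6)
```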

Results and Discussion

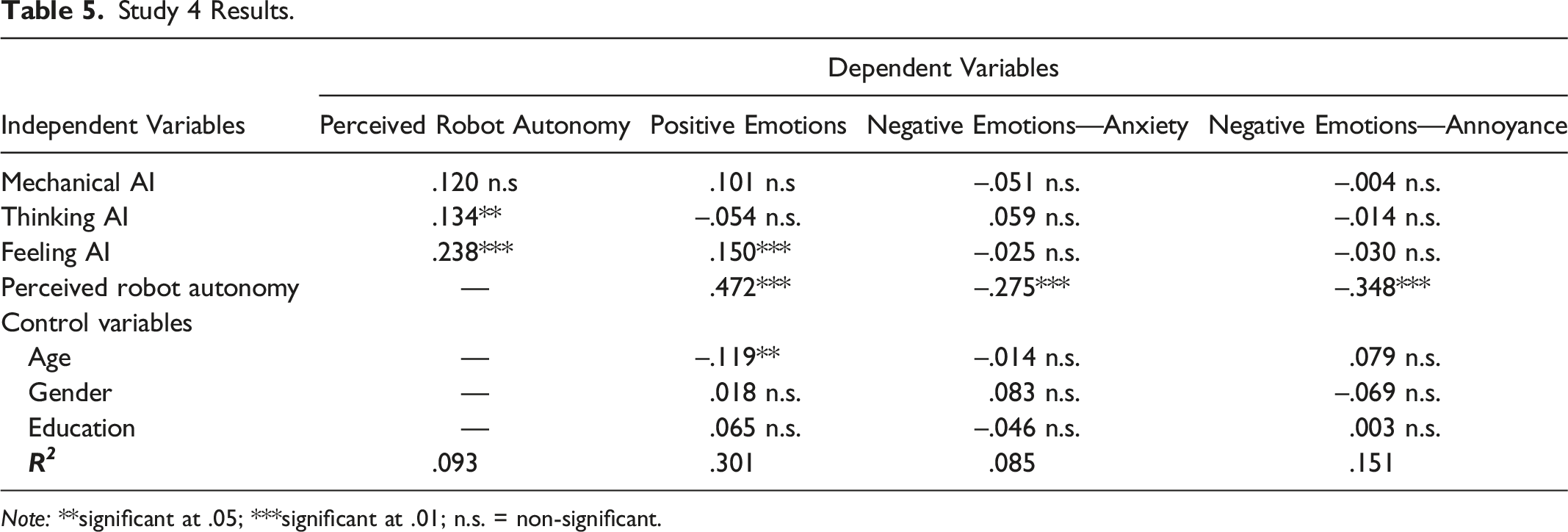

Study 4 Results.

Note: **significant at .05; ***significant at .01; n.s. = non-significant.

Additionally, we found a significant direct effect of feeling AI (β = .150, p < .01) on positive emotions. The direct effects of thinking AI (β = −.054, p > .05) and mechanical AI (β = .101, p > .05) on positive emotions were non-significant. As in the previous studies, the influence on positive emotions was the strongest for more sophisticated types of AI (both directly and indirectly in this case). However, the direct effects of mechanical AI, thinking AI, and feeling AI on negative emotions were non-significant; this result is consistent with our previous studies. Regarding control variables, only age was negatively related to positive emotions (β = −.119, p < .05).

In summary, these results partially support H7. Particularly, perceived robot autonomy partially mediates the influence of feeling AI on emotions, fully mediates the influence of thinking AI on emotions, and does not mediate the influence of mechanical AI on emotions.
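The labels used in this summary follow a common decision rule, sketched below for positive emotions: no significant indirect effect means no mediation, and a significant indirect effect means partial or full mediation depending on whether the direct effect remains significant. The direct-effect flags come from the coefficients reported in the text; the indirect-effect flags are an assumption inferred from the summary sentence, since the indirect coefficients themselves are not given in the text.

```python
def classify_mediation(direct_significant, indirect_significant):
    """Label a mediation pattern from the significance of direct and indirect effects."""
    if not indirect_significant:
        return "no mediation"
    return "partial mediation" if direct_significant else "full mediation"

# Direct effects on positive emotions as reported in the text:
# feeling AI significant (p < .01); thinking AI and mechanical AI non-significant.
# ASSUMPTION: indirect-effect flags are inferred from the summary sentence.
paths = {
    "feeling AI": {"direct_significant": True, "indirect_significant": True},
    "thinking AI": {"direct_significant": False, "indirect_significant": True},
    "mechanical AI": {"direct_significant": False, "indirect_significant": False},
}

labels = {ai_type: classify_mediation(**flags) for ai_type, flags in paths.items()}
for ai_type, label in labels.items():
    print(f"{ai_type}: {label}")
```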

General Discussion

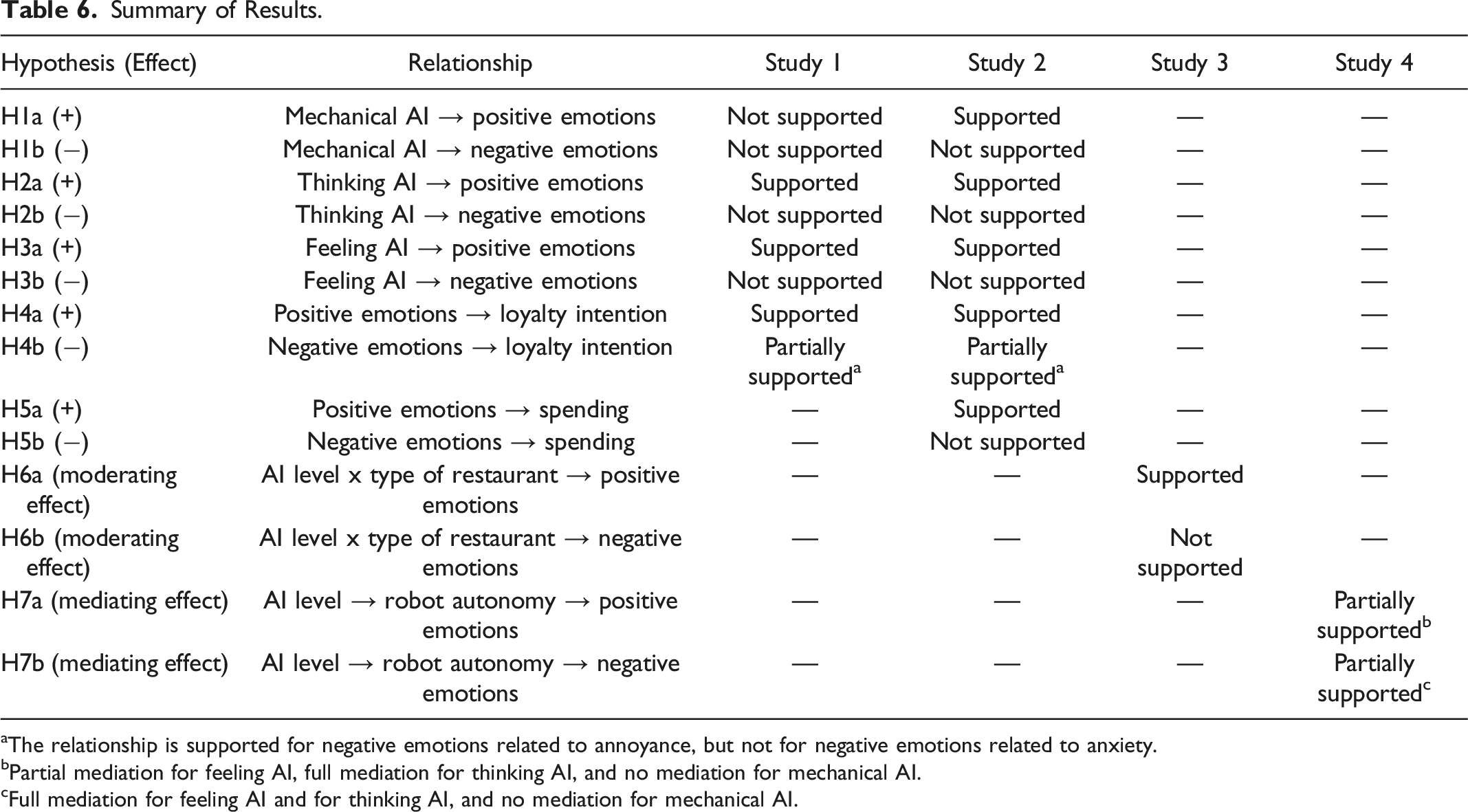

Summary of Results.

a. The relationship is supported for negative emotions related to annoyance, but not for negative emotions related to anxiety.

b. Partial mediation for feeling AI, full mediation for thinking AI, and no mediation for mechanical AI.

c. Full mediation for feeling AI and for thinking AI, and no mediation for mechanical AI.

Summary of findings in this paper.

Theoretical Implications

As a first contribution, our research is the first to empirically analyze the framework of AI types (Huang and Rust, 2021b) to assess customers’ responses to each form of intelligence in service robots. To date, recent efforts in this realm have either not built on the now dominant framework (e.g., Mele et al., 2021), identified intelligences that customers seek rather than how they respond to them (e.g., Odekerken-Schröder et al., 2020), or focused on a limited set of AI types (e.g., Belanche et al., 2020). We specifically add an emotional perspective to a rapidly growing stream of literature that investigates how consumers respond to robot characteristics (e.g., Belanche et al., 2021; Blut et al., 2021; Mozafari et al., 2021; McLeay et al., 2021; Yoganathan et al., 2021). These recent studies mostly build on insights from traditional technology adoption models, which do not consider the roles of individuals’ emotions in their behavior (Bagozzi, 2007). Extending beyond evoked emotions, we demonstrate that customers adapt their service behavior in response to the emotions they experience when interacting with a service robot. Across four studies, we find consistent support for the notion that higher levels of thinking AI and feeling AI in service robots trigger positive emotions in customers which, in turn, increase spending and loyalty intention. The influence of mechanical AI on positive emotions is less clear-cut, with non-significant effects in Studies 1 and 4, and a significant effect in Study 2.

As a second contribution, we add to nascent literature on service technology and emotions (e.g., Henkel et al., 2020; Rajaobelina et al., 2021) by uncovering the mechanism through which service-robot intelligence relates to customer emotions. Specifically, our results suggest that all AI types are directly related to customers’ positive emotions, but not to their negative emotions. Consistent with previous insights into the benefits of higher autonomy in AI (Jörling, Böhm, and Paluch, 2019; Belanche et al., 2020; Lucia-Palacios and Pérez-López, 2021), our research confirms that robot autonomy plays a mediational role between AI types and customer emotions. As such, autonomy especially explains how AI relates to negative emotions. Another contribution to this particular domain is our distinction between anxiety and annoyance as negative emotional reactions after a service encounter with robots. Different emotions can be characterized by their intensity (arousal) and valence (positive or negative; Westbrook, 1987). On the one hand, anxiety can be described as higher in arousal, while annoyance (or anger) is stronger in negative valence (e.g., Russell and Mehrabian, 1974). Customers could thus interpret anxiety as a momentary thrill or excitement related to interacting with a service robot. Indeed, there may be something positive in a negative emotion to offset potential detrimental effects; think about being scared by frightening movies or having an overwhelming amount of information in a new, stimulating job environment (Straube et al., 2010). On the other hand, annoyance represents a clearer and conscious emotional dissatisfaction with the service innovation, as an explicit negative reaction different from spontaneous and unconscious anxiety, as customers have both implicit and explicit negative reactions toward robots (Akdim, Belanche, and Flavián, 2021). This may explain why we find that anxiety, in contrast to annoyance, does not have negative effects on spending or loyalty intention.

Third, we uncover the boundary conditions of the effect of AI on customer emotions and find that the effect depends on a firm’s service tier. This aligns with cognitive appraisal theory, which holds that the context (i.e., the service tier) in which people interpret a stimulus determines their goals and expectations, and it thus affects the desirability of the stimulus. Our theorizing and results add to a laudable effort by Blut et al. (2021), who concentrated on contingencies from a related, yet different variable (i.e., service types as a sectoral characteristic) in a different relationship (anthropomorphism → intention to use). We provide further detail by demonstrating that more sophisticated service robot intelligence benefits the emotions of customers of full-service providers but that customers of low-cost providers display less emotional differentiation regarding the different AI types. Our results extend studies on human service delivery that show, for instance, that frontline staff quality is key to customer satisfaction in full-service but not in low-cost companies (Tsaur, Luoh, and Syue, 2015; Koklic, Kukar-Kinney, and Vegelj, 2017). Moreover, although one should be cautious about generalizing the findings beyond our research context, our results also align with Xiao and Kumar’s (2021) recent proposition that service robots are currently well equipped to provide efficient and predictable quality for customers of middle- or low-equity brands but that more sophisticated robots are needed to help customers of high-equity brands.

Managerial Implications

As a substantive contribution of our work, we provide managerial guidance on the implementation of mechanical, thinking, or feeling AI for different service tiers. With the plethora of AI options available, many service managers now contend with the question of the volume of resources to invest in the development and implementation of robots on their frontlines. We specifically address the question of whether a more intelligent robot, in terms of the three AI types, yields more desirable outcomes. In other words, how smart should a service robot be?

Our findings highlight a need to match the kind of AI employed in a frontline robot with customers’ expectations about the service provided by a firm. In particular, low-cost companies generally focus on standardization and efficiency. Although they could implement any of the AI types, the generally less-costly mechanical AI automation is sufficient, since customers do not demand a relational orientation but rather a fast and convenient service provision. In contrast, customers of a full-service provider have higher affective expectations and demand rapport-building skills in frontline agents. Firms in this segment benefit from robots that are more characteristic of feeling AI than of mechanical AI because the former type enhances positive emotions and reduces negative emotions. Notably for managers, although more sophisticated forms of AI require more advanced technologies, the type of AI should be distinguished from the technology that enables it (Bock, Wolter, and Ferrell, 2020). A robot may use sensors and big data to implement either mechanical, thinking, or feeling AI. However, it is the capability of the robot that matters.

Given the importance of customers’ perceptions of these capabilities, another route to benefit customer emotions involves influencing their idea of the robot’s autonomy. A robot’s autonomy is shaped by its capacity to incorporate sophisticated skills, such as the ability to accommodate environmental variations, without further input (Thrun, 2004). However, managers could enhance perceived autonomy in other ways, such as through marketing communications or by designing a fixed set of service scenarios that robots can complete autonomously.

Future Research and Limitations

As with every study, our research encountered some limitations that, at the same time, may provide opportunities for future research. First, across four studies, we found consistent support for the effects of thinking AI and feeling AI. The influence of mechanical AI on positive emotions was non-significant in Studies 1 and 4, but significant in Study 2. The latter study employed field data, and future research may establish whether this actual involvement of participants in the setting results in more fine-grained effects than lab studies do.

The positive effect of the emotional anxiety component on service enhancement also raises an interesting research avenue. In particular, as already evident in some theme parks, robots could be employed as an attraction for customers seeking to experience the ambivalent emotions of being served by a service robot. Further research should investigate how more sophisticated robots, in terms of their AI type, may generate those feelings and identify whether only sensation-seeking customers will be curious about this experience or whether this effect applies to a broader audience (Straube et al., 2010).

In addition, at the time of writing and data collection, the COVID-19 pandemic was raging. One could argue that customers would be happy to go out at all, after months of social distancing and lockdown. In addition, the hospitality industry eventually suffered from understaffing, and expectations of customers seemed to be low. These processes may potentially have affected our results. However, our studies were spread over time, and respondents came from a large geographical area. We thus expect such positive and negative influences to even out. Nevertheless, given the potential impact of COVID-19 on customers’ responses to robots as social actors or even companions (see, e.g., Kim et al., 2021; Odekerken-Schröder et al., 2022), our results should be considered in this light.

Finally, we adopted cognitive appraisal theory as our conceptual foundation and modeled consumer responses to service-robot intelligence accordingly. This forced us to make choices regarding the focal variables, but we must note other concrete ideas for studying the effects of the three AI types on consumers. For instance, all types of intelligence could lead to higher perceptions of a robot’s warmth and competence (Hu et al., 2021). These universal dimensions of social cognition appear central to many current service-robot studies (e.g., Yoganathan et al., 2021) but have yet to be linked with service-robot intelligence. Future work may also control for the influence of perceived novelty. For example, customers’ expectations about and emotional responses to the service provision may be influenced by both the AI type and the perceived novelty of the technology. The evaluation of the type of intelligence may even change over time as a function of service-robot novelty. This likely makes service-robot AI a topic that will continue to spark research interest for many years to come.

Supplemental Material

Supplemental Material for How Smart Should a Service Robot Be? by Jeroen Schepers, Daniel Belanche, Luis V. Casaló, and Carlos Flavián in Journal of Service Research

Footnotes

Acknowledgments

This work was supported by the Spanish Ministry of Science, Innovation and Universities under Grant PID2019-105468RB-I00; European Social Fund and the Government of Aragon (“METODO” Research Group S20_20R).

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: European Social Fund and the Government of Aragon (Research Group “METODO” S20_20R) and Spanish Ministry of Science, Innovation and Universities (Grant PID2019-105468RB-I00).

Supplemental Material

Supplemental material for this article is available online.
