Abstract

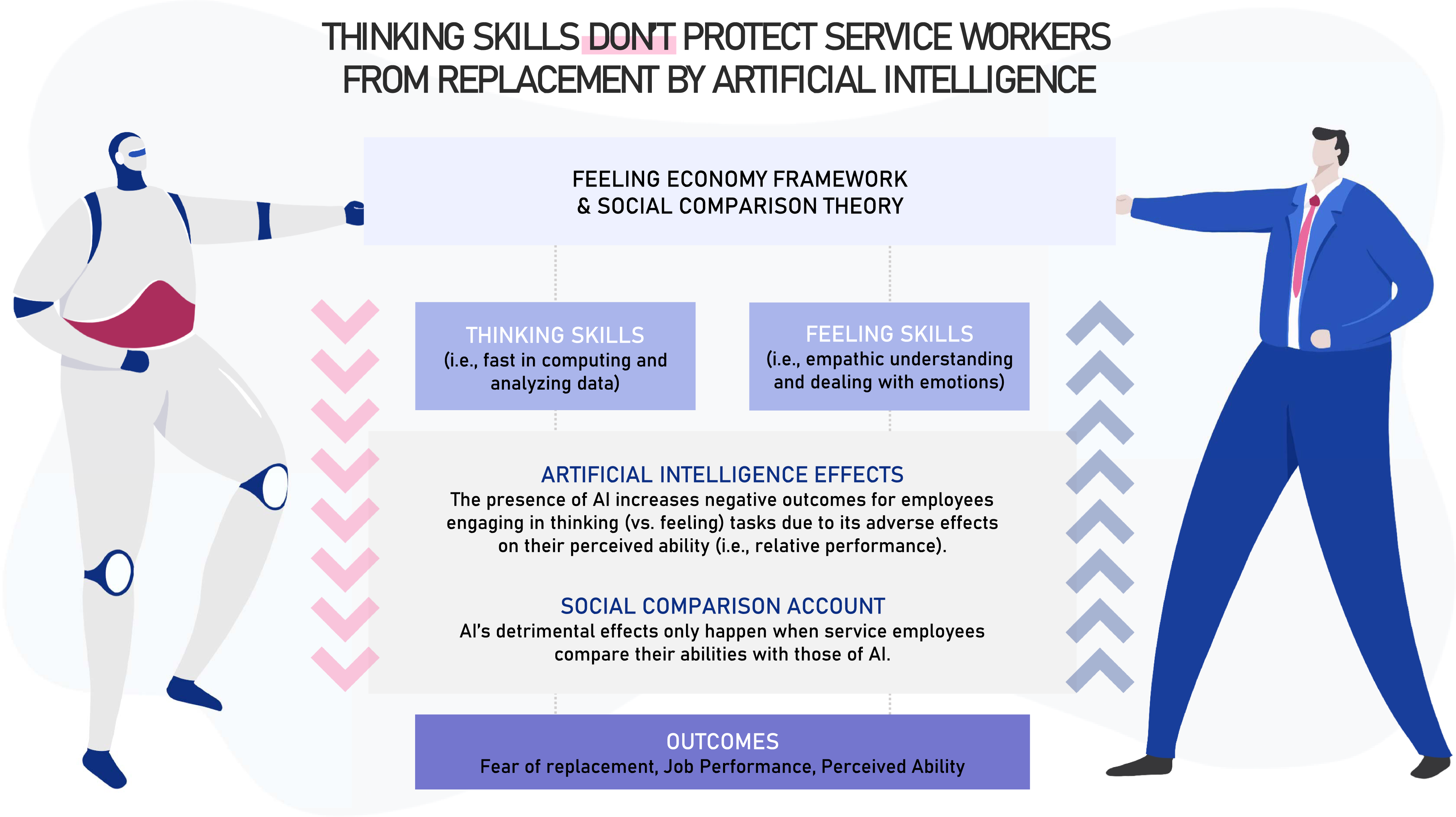

Despite the documented benefits of Artificial Intelligence (AI) to the service industry, the service employees’ fear of being replaced by AI continues to be a major concern as we transition to the Feeling Economy. This paper builds upon the Feeling Economy framework and the social comparison theory to examine how different service-related tasks (thinking vs feeling) distinctively impact the service employees’ feelings and behavior. Five studies reveal that the presence of AI increases negative outcomes for employees engaging in thinking (vs. feeling) tasks due to its adverse effects on their perceived ability (i.e., relative performance). Findings further indicate that these detrimental effects only happen when service employees compare their abilities with those of AI. This research provides important theoretical and managerial implications, helping to mitigate AI’s negative outcomes on employees’ fear of replacement and reduced job performance.

Introduction

Artificial intelligence (AI) has reshaped customer experiences across the service landscape (El Adl 2019; Hoyer et al. 2020; Huang and Rust 2018) influencing how we buy, entertain, work, play, and even eat (Puntoni et al. 2021). While most companies see AI as a major source of innovation (Pelau, Dabija, and Ene 2021), the rise of AI has also been a reason for apprehension among service employees and frontline workers (e.g., Bock, Wolter, and Ferrell 2020; McLeay et al. 2021; Schlögl et al. 2019; Wuest et al. 2020). Such concern with AI seems well-founded: AI has steadily evolved over the last few decades, transitioning from an artificial agent performing mostly mechanical tasks (i.e., repetitive tasks requiring minimal learning) to an agent capable of performing increasingly complex thinking tasks, showing a great ability to analyze, learn from, and make autonomous decisions based on data (Huang, Rust, and Maksimovic 2019).

Notwithstanding the progress in the AI’s capacity over the years, it still underperforms humans in a multitude of tasks, particularly on tasks requiring the ability to decode, emulate, and respond to human feelings and other interpersonal cues, or feeling tasks for the sake of simplicity (Huang, Rust, and Maksimovic 2019; Rust and Huang 2021). Such greater capacity of AI to perform thinking over feeling tasks has fueled a gradual increase in the demand for employees who can perform feeling tasks, giving rise to a new social-economic paradigm coined as the Feeling Economy (Huang, Rust, and Maksimovic 2019; Rust and Huang 2021). Importantly, however, whether and why such variability in the AI’s performance across different tasks impacts service employees’ fear of replacement and other downstream job outcomes is still largely unknown.

We start to fill this gap by bridging the literature on the Feeling Economy (Huang, Rust, and Maksimovic 2019; Rust and Huang 2021), social comparison theory (Alicke, Zell, and Bloom 2010; Wood 1989), and theory on the employees’ attitudes toward AI (Bock, Wolter, and Ferrell 2020; Granulo, Fuchs, and Puntoni 2019; Lu et al. 2020), to propose that the influence of AI on the employees’ perceived abilities, fear of replacement, and job performance hinges on the nature of the tasks they perform. Across five studies—one study using Twitter data, one study with local service providers, and three online experiments with actual service employees—we show that the presence of AI has deleterious effects on the employees’ feelings (i.e., increases fear of replacement) and performance (lower expected and intended performance) when they are engaged in thinking (relative to feeling) tasks. These studies also provide evidence that perceived ability is the mechanism underlying the effects, and that the effect is contingent on AI being a salient comparison target.

This research provides important theoretical and managerial implications. First, we contribute to research on the Feeling Economy (Huang, Rust, and Maksimovic 2019; Rust and Huang 2021) by examining how different job tasks might distinctively impact the employees’ fear of being replaced and their job performance. Second, this research contributes to recent studies on the interaction between artificial agents and employees (Granulo, Fuchs, and Puntoni 2019; Spatola and Normand 2021) by adding a more nuanced view of how different job tasks might affect job outcomes. Third, extant research on interpersonal behaviors toward non-human agents (Lewandowsky, Mundy, and Tan 2000; March 2021; Ruijten, Midden, and Ham 2015) has proposed that human-machine interactions mimic human-to-human dyads in several ways, with artificial agents showing the ability to engender social exclusion and build trust. We complement this work by proposing a novel aspect of human-to-human interactions—social comparison—that may also be reflected in human interactions with artificial agents. We extend their work by demonstrating that even when AI does not interfere directly with the employee’s work, its mere presence might be detrimental to the employee’s feelings and job performance, depending on the type of task the job entails. Finally, we provide guidelines to service providers by suggesting managerial approaches to minimize fear associated with AI and to maximize the outcome of AI-augmented services.

Theoretical Background

The concern with whether, how, and when technology might replace human labor traces to the early days of industrialism and has periodically reappeared across multiple domains (Fleming 2019). Economic studies on automation and job replacement, for instance, have examined how automation (including AI) increases inequality (Mokyr, Vickers, and Ziebarth 2015; Prettner and Strulik 2020), reduces wages (Acemoglu and Restrepo 2020; Lordan and Neumark 2018), causes technological substitution (Lu, Rui, and Seidmann 2018; Prettner and Strulik 2020), and increases unemployment (Acemoglu and Restrepo 2020; Mokyr, Vickers, and Ziebarth 2015). The social sciences and business literature have also tackled the subject using a multipronged approach, focusing on the impact of AI from the consumers’ (e.g., Luo et al. 2019; Mende et al. 2019), managers’ (Huang and Rust 2018, 2021a, 2021b, 2021c; Puntoni et al. 2021), and employees’ perspectives (Glikson and Woolley 2020; Granulo, Fuchs, and Puntoni 2019; Huang, Rust, and Maksimovic 2019).

A notable contribution to this debate, both at the macro and micro level, comes from the series of works by Huang and Rust, who propose a theoretical framework (Huang and Rust 2018; Rust and Huang 2021) accompanied by supporting empirical evidence (Huang, Rust, and Maksimovic 2019) describing the Feeling Economy and the process through which AI will replace humans as primary service providers (Huang and Rust 2018). According to their work, services typically involve different types of intelligence (mechanical, thinking, and feeling) that should be performed either by AI or by human intelligence. AI will replace service employees in the tasks at which AI performs better (Huang and Rust 2018; Huang, Rust, and Maksimovic 2019), such that, with increasing capabilities of AI, most tasks, if not all, will eventually be performed by AI (Huang, Rust, and Maksimovic 2019; Rust and Huang 2021).

Despite growing interest in AI and its effect on service employees’ feelings and beliefs (Granulo, Fuchs, and Puntoni 2019; Lu et al. 2020), more research is needed to fully understand how, why, and when AI affects job outcomes. As we theorize next, we propose that the effect of AI on employees’ job outcomes hinges on the type of tasks the employees perform.

Artificial Intelligence and Service Employees’ Tasks

Artificial Intelligence is a set of technologies that make machines able to understand, act, learn, and display features of human intelligence (Chi, Denton, and Gursoy 2020). Artificial Intelligence is still an emerging topic and its positive and negative effects on the workplace are an ongoing debate. On the one hand, AI can augment the employee’s abilities and minimize mechanical, repetitive tasks, thus improving the employees’ well-being (Lu et al. 2020). Consistent with this line of thought, human labor is irreplaceable (Bhargava, Bester, and Bolton 2020) and employees should be discharged from mechanical tasks and focus on more creative and intuitive ones (Jarrahi 2018; Nedelescu 2015). On the other hand, AI can replace humans even in the most advanced tasks (Huang, Rust, and Maksimovic 2019); with increasing AI capabilities, it is plausible that most tasks otherwise performed by humans will be performed better and more efficiently by AI, such that AI may eventually become a major constraint to human labor (Fast and Horvitz 2017).

Much of the theorizing on how AI will replace human labor comes from the work on the Feeling Economy (Huang, Rust, and Maksimovic 2019; Rust and Huang 2021), which examines how the replacement of human intelligence by AI might depend on the skills required by the tasks. According to Huang and Rust (Huang, Rust, and Maksimovic 2019; Rust and Huang 2021), AI and human intelligence range from mechanical, to thinking, to feeling; as AI replaces human intelligence in some tasks, employees should execute increasingly complex tasks (Rust and Huang 2021). For instance, recent research indicates that AI can outperform employees on thinking tasks in several areas, including STEM (science, technology, engineering, and mathematics) tasks (De Cremer and Kasparov 2021; Luo et al. 2020; Pesapane et al. 2020). As a result, the relative importance of feeling tasks (communicating/coordinating with others and establishing/maintaining interpersonal relationships) is growing across different industries and becoming more important to human workers than thinking tasks (Huang et al. 2019; Huang and Rust 2021; Strauss 2017).

In our research, we propose that the different abilities of AI might not only impact the trends in job replacement that characterize the Feeling Economy but might also have a distinct influence on the service workers’ feelings and performance on the tasks assigned to them.

How AI Presence and Task Type Shape Job Outcomes

There is plenty of evidence to suggest that social agents, particularly AI, can mimic interactions with other humans, eliciting interpersonal responses otherwise only observed in human-to-human interactions (Lewandowsky, Mundy, and Tan 2000; March 2021). For instance, artificial agents can engender feelings of trust during negotiations (Lewandowsky, Mundy, and Tan 2000), can cause people to feel lonely due to social exclusion (Ruijten, Midden, and Ham 2015), or even energize attentional control, effectively boosting the interactor’s performance during cognitive tasks (Spatola et al. 2018).

Since artificial agents with AI capabilities can engender interpersonal outcomes, it stands to reason that AI could also trigger performance and ability comparisons. People spontaneously compare their abilities and performances with others who have performed similar tasks (Harding et al. 2019; Wheeler, Martin, and Suls 1997; Wood 1989) or who are salient comparison standards (Alicke, Zell, and Bloom 2010; Harding et al. 2019). With the increasing overlap in tasks performed by AI and service employees (Huang and Rust 2018), we should expect AI to become a particularly salient standard in such comparison processes. This is important because a comparison standard’s ability in a specific domain tends to influence an individual’s perceived relative ability (i.e., “the AI is relatively better or worse than me in a given task”) as well as the individual’s absolute ability judgments (i.e., “I believe that I am more or less capable in performing a task now that I considered how well the AI does on a similar task”; Alicke et al. 2010; Downes et al. 2021).

If AI serves as a comparison standard to service employees, what effect will it have on the employee’s perceived ability? Self-other comparison processes often lead to poorer self-ratings with high-performing peers (Sun et al. 2021; Tariq et al. 2021), and better self-ratings with low-performing peers (Harding et al. 2019). It is widely accepted that AI has strong analytical and technical capabilities that are particularly suited to perform thinking tasks, and a weak ability to empathize with others and respond to social cues, both heavily recruited during feeling tasks (Rust and Huang 2021). Building on these differences and the theory on social comparison, we propose that, in service contexts involving AI, service employees might perceive that they possess a lower ability to perform thinking tasks and a higher ability vis-a-vis feeling tasks. As a result, we propose that, in service contexts where the AI is present, service employees working on thinking tasks will have a lower perceived ability than service employees working on feeling tasks. Further, we propose that such perceived ability will have important downstream consequences, affecting particularly the service employee’s fear of being replaced and job performance.

Recent studies show that service employees who have higher perceptions of AI’s abilities exhibit a higher intention to leave their job (Li, Bonn, and Ye 2019), a phenomenon likely rooted, at least in part, in the prevailing belief that AI’s superiority will eventually lead to labor displacement. Prior research indicates that employee-AI comparisons can engender lower perceived ability, which can be a source of job insecurity (Pensgaard and Roberts 2000), consequently inducing fear of replacement. Since we expect that, in service contexts involving AI, employees working on thinking (vs. feeling) tasks will have lower perceived ability, we propose that they will also have more fear of being replaced, a hypothesis that is both intuitive and in line with general predictions of the trends associated with the Feeling Economy.

Perhaps more importantly, an individual’s perceived ability also impacts the individual’s performance, such that individuals who doubt their ability tend to demonstrate decreased performance (Miller et al. 1993). Indeed, individuals with a lower perceived ability tend to predict worse future performance, become poorly committed to achieving performance outcomes, spend less energy to perform the task, and, ultimately, perform worse at the task (Harding et al. 2019; Wheeler et al. 1997). Thus, since we predict that, in service contexts involving AI, employees performing thinking (vs. feeling) tasks will have lower perceived ability, we also propose that they will perform worse at their jobs.

Overview of Studies

We test our predictions in five highly powered studies. Study 1a aims to provide a deeper understanding of the sentiment, both general and self-referenced, about AI service-related tasks (thinking and feeling) by analyzing Twitter posts (N = 18,869). Study 1b explores the influence of job-related tasks (thinking vs feeling) on participants’ feelings toward AI using a sample of managers of local service organizations. Study 2 provides initial causal evidence for the influence of task type on job outcomes when AI is salient by asking a sample of freelancers for human intelligence tasks (HIT) (i.e., Mechanical Turk workers) to focus either on the feeling or on the thinking tasks they perform at work and then assessing their expected job performance. In study 3, we examine whether the theorized influence of task type is exclusive to situations where people are thinking about AI (i.e., the presence of AI is salient), and examine perceived ability as the mechanism underlying their fear of replacement and perceived job performance. Finally, study 4 gives credence to our assertion that the effect of AI on job performance is guided by service employees’ spontaneous comparisons with AI by manipulating the player against which the task will be performed (AI vs. human player with high thinking intelligence vs. human player with high feeling intelligence) along with task type (thinking vs feeling). Study 4 shows that the relatively worse performance on thinking (vs. feeling) task that we observe when service employees compare themselves with AI is replicated when participants perform against a human player with high thinking intelligence (but reverses when the adversary is a human player with high feeling intelligence).

Consistent with pre-registration, in the online experiments we targeted a minimum of 80 participants per experimental condition. To circumvent issues associated with click-farms (Kennedy et al. 2021; Moss and Litman 2018), we built on prior work (Wongkitrungrueng et al., 2020) and screened out responses collected using online platforms that had identical geolocation (based on metadata automatically collected by the survey platform), collecting additional surveys until the target sample size was reached (AsPredicted studies #78042, #81506, and #80903).
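The geolocation screen described above can be illustrated with a short Python sketch. It is only an approximation of the procedure: the field names (`lat`, `lon`), the rounding precision, and the choice to drop every response that shares coordinates with another response (rather than keeping the first) are assumptions made for illustration, not details reported in the pre-registrations.

```python
from collections import Counter


def screen_duplicate_geolocations(responses, precision=4):
    """Keep only responses whose (lat, lon) pair is unique in the sample.

    `responses` is a list of dicts with hypothetical keys "lat" and "lon".
    Assumption: all responses sharing a location are dropped.
    """
    def location(response):
        return (round(response["lat"], precision),
                round(response["lon"], precision))

    counts = Counter(location(r) for r in responses)
    return [r for r in responses if counts[location(r)] == 1]


# Illustrative, made-up responses: the first two share a location.
sample = [
    {"id": 1, "lat": 38.7223, "lon": -9.1393},
    {"id": 2, "lat": 38.7223, "lon": -9.1393},
    {"id": 3, "lat": 40.4168, "lon": -3.7038},
]
kept = screen_duplicate_geolocations(sample)
```

In a workflow like the one described, data collection would continue after such a screen until each condition reaches its target sample size.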

Study 1a: Sentiment Analysis Toward AI Among Service Providers Engaging in Feeling and Thinking Tasks

Study 1a aims to provide an initial understanding of the broad public sentiment towards AI. To that end, tweets were collected via the Twitter API, and the sentiment analysis was performed using Python.

Design and procedures

A total of 27,517 tweets were collected from January 2018 through the end of August 2020 using the query: ‘(employment OR employee OR job OR work) AND (replace OR substitute OR eliminate) AND (“artificial intelligence” OR “AI” OR “machine learning” OR “ML” OR “deep learning” OR “DL” OR robot OR automation OR technology OR “intelligent machine” OR “virtual assistant” OR chatbot OR “bot”)’.

First, we preprocessed the dataset to prepare it for text mining (e.g., deleting URLs and punctuation marks and normalizing words). The tweets were then lemmatized (to return the base or dictionary form of each word), and repeated tweets (retweets) were eliminated, resulting in a final dataset of 18,869 unique tweets. Sentiment analysis was conducted using the VADER sentiment analysis package, which has high accuracy for tweet classification (Elbagir and Yang 2019). VADER gives each word in the tweet a sentiment score and then computes a compound score ranging from −1 (most extreme negative) to 1 (most extreme positive). The threshold values (Hutto and Gilbert 2014) used to convert the quantitative score into a qualitative label were the following: positive tweets (compound score ≥ 0.05), neutral tweets (compound score between −0.05 and 0.05), and negative tweets (compound score ≤ −0.05).
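To make the preprocessing and labeling steps concrete, the following Python sketch shows one way they could be implemented with the standard library alone. It is a simplified illustration, not the original pipeline: the regular expressions and function names are assumptions, lemmatization is omitted, and the VADER scoring itself is not reproduced (the compound score is taken as an input).

```python
import re

# Hypothetical patterns; the paper does not report its exact cleaning rules.
RETWEET_PREFIX = re.compile(r"^RT\s+@\w+:\s*")
URL = re.compile(r"https?://\S+")
PUNCTUATION = re.compile(r"[^\w\s]")


def clean_tweet(text):
    """Strip the retweet prefix, URLs, and punctuation; lowercase the rest."""
    text = RETWEET_PREFIX.sub("", text)
    text = URL.sub(" ", text)
    text = PUNCTUATION.sub(" ", text)
    return " ".join(text.lower().split())


def drop_repeats(tweets):
    """Keep the first tweet for each distinct cleaned text."""
    seen, unique = set(), []
    for tweet in tweets:
        key = clean_tweet(tweet)
        if key and key not in seen:
            seen.add(key)
            unique.append(tweet)
    return unique


def label_sentiment(compound):
    """Map a compound score in [-1, 1] to a label using the 0.05 thresholds."""
    if compound >= 0.05:
        return "positive"
    if compound <= -0.05:
        return "negative"
    return "neutral"
```

For example, `drop_repeats` treats a tweet and its retweet with an appended link as the same text, and `label_sentiment(0.0)` falls in the neutral band.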

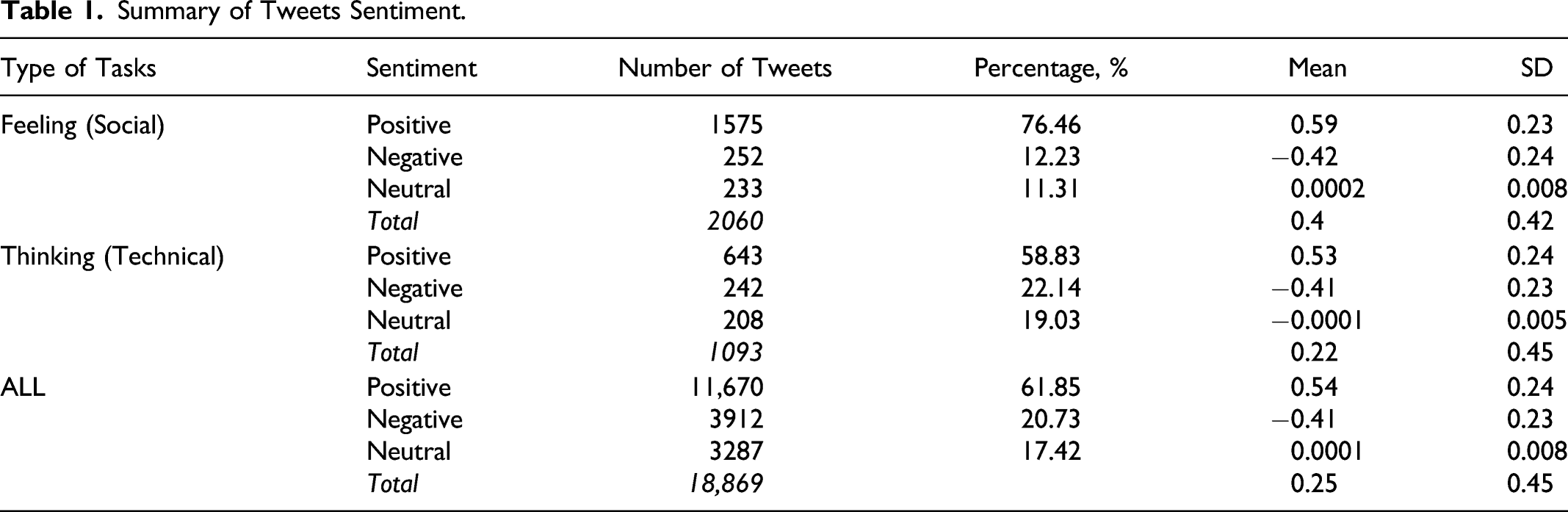

Summary of Tweets Sentiment.

Results and discussion

The overall sentiment analysis revealed that most of the tweets were positive (N = 11,670, 61.85%), followed by those with negative connotations (N = 3,912, 20.73%), and finally, those deemed to be neutral (N = 3,287, 17.42%). In the tweets related to thinking tasks, 58.83% of tweets were positive, 22.14% were negative, and 19.03% were neutral. In contrast, tweets related to feeling tasks were more predominantly positive (76.46%), with lower amounts of both neutral and negative tweets (11.31% and 12.23%, respectively). A Kruskal–Wallis test confirmed that the difference in the compound sentiment score between tweets related to feeling tasks (M = 0.40, SD = 0.42) and to thinking tasks (M = 0.22, SD = 0.45) is statistically significant (KW χ² = 129.97, p < .001), suggesting that tweets related to AI and job replacement based on feeling tasks had more positive sentiment than those based on thinking tasks. To gain more insight into our prediction that AI presence fosters more negative self-referencing feelings, we further performed the analysis on the subset of tweets that included explicit self-references (e.g., I, me, myself; n = 310). As expected, this robustness check indicates that self-referencing tweets related to AI and job replacement based on feeling tasks (M = 0.38, SD = 0.42) had more positive sentiment than those based on thinking tasks (M = 0.20, SD = 0.48, KW χ² = 10.05, p < .01). A similar robustness check that included all first-person pronouns (including plural, e.g., we, our) also replicated our basic findings. In sum, the analysis of tweets associated with AI collected over more than 2 years indicates that the sentiment towards AI replacement hinges on the nature of employees’ job tasks.
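For readers unfamiliar with the Kruskal–Wallis test used above, the following sketch computes the tie-corrected H statistic for two groups in plain Python. It is an illustration of the general technique, not the code used in the study; the function names are hypothetical, and the p-value shortcut via `math.erfc` is valid only for the two-group case (one degree of freedom).

```python
import math


def average_ranks(values):
    """Return 1-based ranks (ties get the average rank) and tie group sizes."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    tie_sizes = []
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        tie_sizes.append(j - i + 1)
        i = j + 1
    return ranks, tie_sizes


def kruskal_wallis_two_groups(group_a, group_b):
    """Tie-corrected Kruskal-Wallis H and its chi-square p-value (df = 1)."""
    pooled = list(group_a) + list(group_b)
    n = len(pooled)
    ranks, tie_sizes = average_ranks(pooled)
    rank_sum_a = sum(ranks[:len(group_a)])
    rank_sum_b = sum(ranks[len(group_a):])
    h = (12 / (n * (n + 1))
         * (rank_sum_a ** 2 / len(group_a) + rank_sum_b ** 2 / len(group_b))
         - 3 * (n + 1))
    # The correction is zero (and H undefined) if every value is identical.
    correction = 1 - sum(t ** 3 - t for t in tie_sizes) / (n ** 3 - n)
    h = max(h / correction, 0.0)
    # For df = 1, the chi-square survival function equals erfc(sqrt(h / 2)).
    p_value = math.erfc(math.sqrt(h / 2))
    return h, p_value
```

Applied to the compound scores of feeling-related and thinking-related tweets, a function of this kind would return the H statistic, which is referred to a chi-square distribution as in the results reported above.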

Study 1b: How AI Affects Fear of Replacement Among Service Providers Performing Thinking and Feelings Tasks

Study 1b aimed to provide corroborating evidence for the distinct impact of the presence of AI among service providers performing thinking and feeling tasks on the workers’ feelings, but now focusing specifically on their fear of being replaced.

Participants, procedure, and measures

We first contacted 680 service providers who attended an executive education session at a Portuguese university. Participants received a link to an online survey and were invited to volunteer to participate in a short study about the role of AI in the service industry. Four hundred and nineteen service executives voluntarily responded to the questionnaire (61.2% women; Mage = 25.3, SD = 7.4). First, participants indicated the tasks they usually engage in at work on a nine-point scale: 1-More focused on social/soft skills (feeling tasks); 9-More focused on technical/hard skills (thinking tasks). Accordingly, higher scores indicate stronger reliance on thinking (vs. feeling) tasks. Then, participants indicated their fear of AI replacement across 3 items (α = 0.69; Li et al., 2019) on a nine-point Likert scale (1-Strongly disagree; 9-Strongly Agree; see web appendix).
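The reliability coefficient reported for the fear-of-replacement scale is Cronbach's α. As a reminder of how it is computed, here is a minimal Python sketch using the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of the summed score). The function name and the example ratings are hypothetical and are not data from this study.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(rows[0])
    item_variances = [variance([row[j] for row in rows]) for j in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Made-up ratings on a nine-point scale (three items, five respondents).
example_ratings = [
    [7, 6, 8],
    [3, 4, 2],
    [5, 5, 6],
    [8, 7, 9],
    [2, 3, 1],
]
alpha = cronbach_alpha(example_ratings)
```

When every respondent gives the same answer to all items, the items covary perfectly and the formula returns 1; weaker inter-item covariance lowers the coefficient.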

Results and discussion

As predicted, participants’ reliance on thinking tasks was significantly correlated with fear of replacement (r = .211, p < .001). To illustrate, service employees more engaged in thinking tasks (i.e., above the median score) indicated higher fear of being replaced by AI (M = 5.83, SD = 2.02) than the employees more engaged in feeling tasks (i.e., below the median score; M = 5.18, SD = 2.36; t(330.416) = −4.717, p < .01). These findings complement study 1a by demonstrating that service employees do not simply have more negative sentiments towards AI, self-referenced or otherwise, but that such sentiments reflect a greater fear of being replaced by AI. In the next study, we demonstrate causal evidence that AI influences another important outcome for service employees: their projected job performance.

Study 2: How AI Affects the Expected Job Performance Among Service Providers Performing Thinking and Feelings Tasks

The primary goal of study 2 is to provide causal evidence for the negative impact of AI on the employees’ attitudes and feelings when they perform thinking (vs. feeling) tasks. We asked service providers to elaborate on thinking or feeling tasks related to their jobs while reminding them of the presence of AI in service environments and then asked them to indicate their expected job performance. The study design, procedures, and analyses were pre-registered (AsPredicted #78042).

Participants and design

We posted a HIT on Amazon Mechanical Turk inviting service providers to participate in a short survey in exchange for a small fee. After screening out duplicate geolocations (per pre-registered procedure), our final sample consisted of 242 service employees from the US (34.3% women; Mage = 35.0, SD = 9.9). The majority of our sample (55.7%) worked in mid-level management (e.g., team lead, account manager, and supervisor); their service jobs were primarily in business services (21.9%), finance and banking (20.3%), and communication services (17.5%). Study 2 used a one-factor (service tasks: thinking vs. feeling) between-subjects experimental design.

Procedure and stimuli

Participants were informed that the survey was about AI in the work environment and were provided with a definition of AI. They were then randomly assigned to one of the two experimental conditions (Bastos and Barsade, 2020; Wade and Parent, 2001). In the feeling (vs. thinking) task condition, participants were asked to recall and write about feeling/social (vs. thinking/technical) aspects of their current or previous job. Specifically, they read the following instruction: “In the box below, we will ask you to recall the social [technical] aspects of your current or your previous job and evaluate how you felt while you were working. Please think specifically about the social aspects of your job where you had to communicate a lot with customers, colleagues, partners, or end-users, where you were in charge of ensuring successful teamwork for instance [technical aspects of your job, where you had to use any software, do data analysis, coding and programming, project management or any mechanical task].”

Measures

Job performance was assessed by asking participants whether they agreed (1-Strongly disagree; 9-Strongly Agree) with three items borrowed from Xiaojun (2017); we averaged those items to form a positive job performance index (α = 0.68). As a manipulation check, we asked participants to indicate whether the job they described at the beginning of the study related more to technical or social skills on a nine-point scale (1-Social skills; 9-Technical skills). Higher (vs. lower) scores indicated reliance on thinking (vs. feeling) tasks.

Results and discussion

Results from an independent samples t-test with task type as the grouping factor and skill score as the dependent variable confirmed the effectiveness of our manipulation, with participants in the thinking condition reporting higher use of technical (as opposed to social) skills (M = 7.05, SD = 1.47) than participants in the feeling condition (M = 6.31, SD = 1.96; t(229.06) = 3.32, p = .001). A similar t-test on predicted job performance confirmed our predictions, with individuals in the thinking task condition reporting lower predicted job performance (M = 6.05, SD = 1.08) than participants in the feeling task condition (M = 6.44, SD = 1.20; t(239.72) = 2.71, p = .01).
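The fractional degrees of freedom in these tests are consistent with Welch's unequal-variance t-test. The sketch below computes Welch's t and the Welch–Satterthwaite degrees of freedom from summary statistics. Per-condition sample sizes are not reported in the text, so the equal split of 121 participants per condition used here is an assumption made only for illustration.

```python
import math


def welch_t(mean_1, sd_1, n_1, mean_2, sd_2, n_2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    var_term_1 = sd_1 ** 2 / n_1
    var_term_2 = sd_2 ** 2 / n_2
    t = (mean_1 - mean_2) / math.sqrt(var_term_1 + var_term_2)
    df = (var_term_1 + var_term_2) ** 2 / (
        var_term_1 ** 2 / (n_1 - 1) + var_term_2 ** 2 / (n_2 - 1)
    )
    return t, df


# Manipulation check from study 2; n = 121 per condition is ASSUMED.
t_check, df_check = welch_t(7.05, 1.47, 121, 6.31, 1.96, 121)
```

Under the assumed equal split, the statistic is approximately 3.32, in line with the reported value, while the degrees of freedom come out slightly below the reported 229.06, which suggests that the actual condition sizes were not exactly equal.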

In sum, Study 2 demonstrates that the presence of AI has a negative impact on service providers’ expected job performance when they focus on thinking tasks (versus feeling tasks). Further, and perhaps more importantly, in study 2 we show causal evidence in support of this relationship. The primary goal of the next study is to provide evidence for perceived ability as the mechanism underlying the observed effects while demonstrating the germane role of the presence of AI on the reactions of service employees engaging in thinking and feeling tasks.

Study 3: A Test of Perceived Ability as the Underlying Process

Based on findings from studies 1a, 1b, and 2, one could argue that the effects we observed are not unique to the AI context, but rather inherent to service employees’ relative preference for feeling over thinking tasks. Such a preference could arguably make them feel better able to perform such tasks, replicating the findings we observed in the first three studies. To provide evidence that negative feelings and hindered performance expectations among service providers engaging in thinking (vs. feeling) tasks are unique to the context where AI is salient, in study 3 we manipulated the salience of AI by reminding participants of AI and its capabilities in the high salience condition and contrasting it with a condition where they elaborate on their tasks without being reminded of AI and its abilities. Finally, study 3 provides evidence in support of service providers’ relative ability as the mechanism underlying our predicted effects.

Participants and design

We recruited service employees on Prolific Academic in exchange for a small fee to take part in the study. After screening out duplicate geolocations, our final sample consisted of 331 service employees (70.0% women; Mage = 40.2, SD = 11.5). The study had a 2 (service tasks: thinking vs. feeling) by 2 (AI salience: high vs control) between-subjects experimental design. The study design, procedures (including screening procedure), and analyses were pre-registered (AsPredicted #81506).

Procedure and stimuli

Participants were randomly assigned to one of the four conditions. We manipulated task type and AI salience together through a recall task. Task type was manipulated as in study 2. We manipulated AI salience by, after the recall task, reminding them that “Artificial intelligence (AI) is the capability of a computer program to perform tasks or reasoning processes that we usually associate with intelligence in a human being. It has the potential to improve productivity, efficiency, and accuracy across an organization. However, AI can be seen as a threat to replace human workers due to AI’s capabilities.” The AI salience information was not given for participants in the control condition.

Measures

We measured fear of AI replacement using the same 3 items as in study 1b (α = 0.91). Job performance was captured via a five-item scale used by Windle and colleagues (2018; α = 0.84; sample item: “I persevere with my work plans.”). To assess perceived ability, we borrowed four items from prior work (Nicholls et al., 1990; Pensgaard & Roberts, 2000; Sanbonmatsu et al., 2013; α = 0.77; sample item: “How do you evaluate your own ability at work compared with others [AI]?”). The manipulation check for task type was the same as in Study 2. The manipulation check for the salience of AI was assessed by asking participants to what extent their work was affected by or related to AI (1-Not at all related to AI; 9-Related to AI). We also asked participants to indicate which kind of service they provide, with an option for those who do not work in services. We ran robustness checks in all analyses by statistically controlling for whether or not the participant works in the service sector; results are consistent with those reported below.

Results and discussion

We first checked the effectiveness of our manipulations using independent samples t-tests; results revealed that people assigned to the thinking tasks condition reported more technical skills (as opposed to social; M = 5.74, SD = 2.18) than those in the feeling task condition (M = 3.81, SD = 2.17, t(327.9) = 8.08, p < .001). Moreover, those assigned to the AI condition thought their jobs were more affected by or related to AI (M = 6.40, SD = 2.84) than those in the control condition (M = 5.05, SD = 2.75, t(328.41) = 4.28, p < .001).

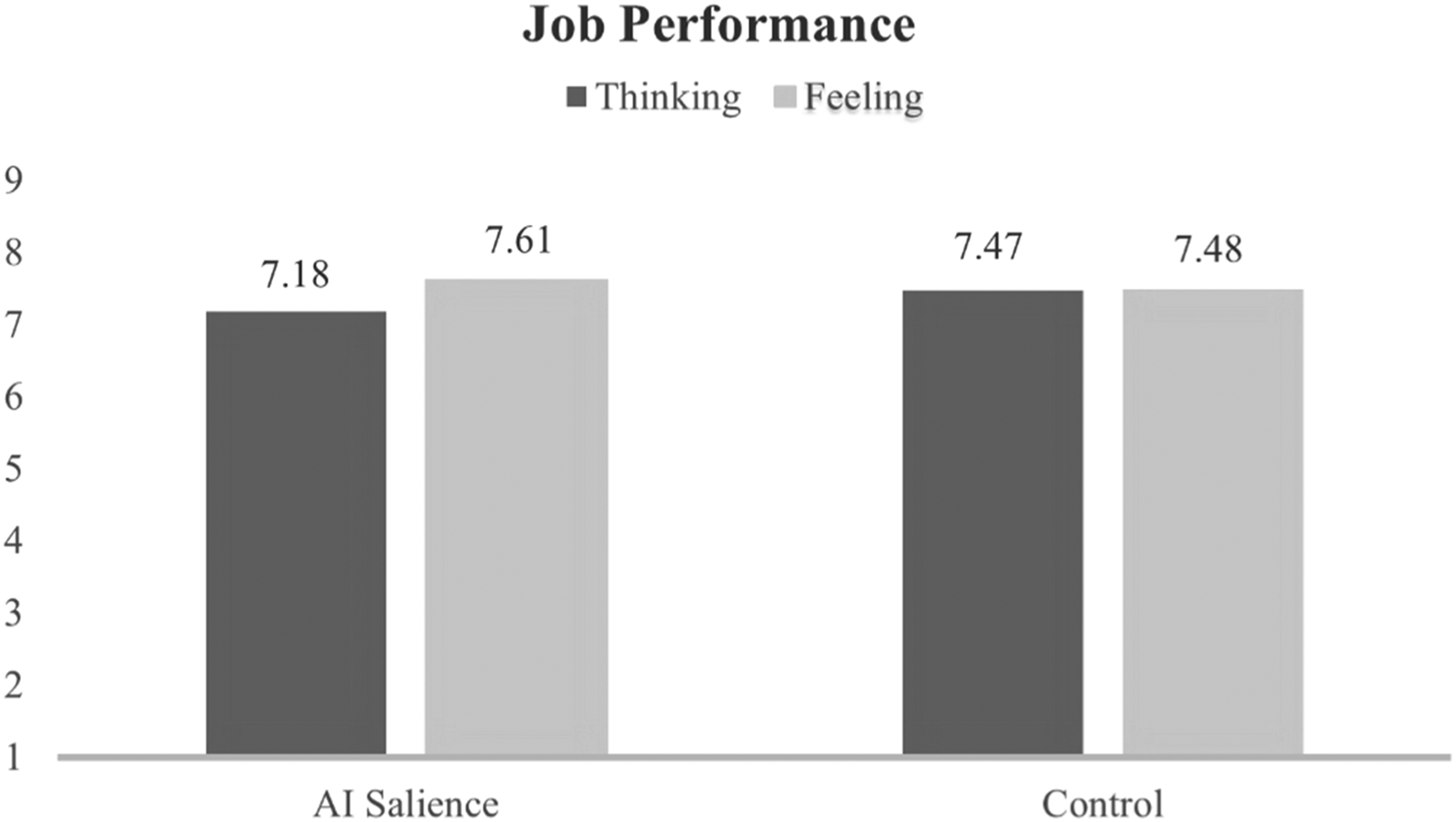

We then ran an ANOVA with expected job performance as the dependent variable and AI salience and task type as factors. Results reveal a marginally significant interaction (F(1, 327) = 3.28, p = .07) as well as a marginally significant main effect of task type (F(1, 327) = 2.77, p = .097). Most importantly, planned contrast analyses show that when AI is salient, participants elaborating on thinking tasks reported lower expected job performance (M = 7.18, SD = 1.21) than those in the feeling task condition (M = 7.61, SD = 0.97, F(1, 327) = 5.99, p = .01). Such differences are not significant in the control condition (Mthinking = 7.47, SDthinking = 1.13, Mfeeling = 7.48, SDfeeling = 1.11, F(1, 327) = 0.004, p = .95) (see Figure 1). Study 3 results–job performance.

Next, we ran a similar ANOVA with fear of replacement as the dependent variable. Results reveal only a significant interaction effect (F(1, 327) = 5.48, p = .02). Planned contrast analyses show that, in the AI salient condition, fear of replacement differed significantly between participants elaborating on thinking tasks (M = 2.36, SD = 1.47) and those elaborating on feeling tasks (M = 2.93, SD = 1.93, F(1, 327) = 4.12, p = .04); such difference was not significant in the control condition (Mthinking = 2.98, SDthinking = 1.86, Mfeeling = 2.63, SDfeeling = 1.81, F(1, 327) = 1.60, p = .21).

Finally, we ran an ANOVA with perceived ability as the dependent variable. There was a significant interaction (F(1, 327) = 11.08, p < .001), a significant main effect of task type (F(1, 327) = 14.25, p < .001), and significant main effect of AI salience (F(1, 327) = 15.66, p < .001). Planned contrasts again reveal differences in the AI salient condition, with participants who elaborated on thinking tasks perceiving that they had lower ability (M = 7.44, SD = 1.32) than those who elaborated on feeling tasks (M = 6.31, SD = 1.50, F(1, 327) = 25.86, p < .001); the difference was again not significant in the control condition (Mthinking = 6.32, SDthinking = 1.39, Mfeeling = 6.22, SDfeeling = 1.41, F(1, 327) = 0.25, p = .62).

To examine the underlying role of perceived ability in service providers’ fear of replacement and expected job performance, we ran two moderated mediation analyses, estimating 99% confidence intervals (CI) for the respective indirect effects from 10,000 bootstrap samples. When expected job performance was the dependent variable, we found a significant indirect effect of task type on job performance through perceived ability when AI was salient (β = 0.21, CI: [0.08, 0.38]), but not in the control condition (β = 0.08, CI: [−0.01, 0.22]). A similar pattern emerged with fear of replacement as the outcome: we found a significant indirect effect of task type on fear of replacement through perceived ability when AI was salient (β = −0.23, CI: [−0.47, −0.07]), but not in the control condition (β = −0.09, CI: [−0.28, 0.01]).
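For readers less familiar with bootstrapped indirect effects, the following is a minimal sketch of a percentile bootstrap for a simple mediation path (task type → perceived ability → outcome), which could be run separately within each AI salience condition. It is an illustration under stated assumptions, not the procedure or software used in this study: the function names (`indirect_effect`, `bootstrap_ci`), the ordinary least squares estimator, and the percentile interval are all choices made for the example.

```python
import random
from statistics import mean


def _cov(u, v):
    """Sample covariance of two equal-length sequences."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)


def indirect_effect(x, m, y):
    """Indirect effect a*b (hypothetical helper, not the authors' code).

    a: OLS slope of the mediator m on the predictor x.
    b: OLS slope of the outcome y on m, controlling for x.
    """
    sxx, smm, sxm = _cov(x, x), _cov(m, m), _cov(x, m)
    sxy, smy = _cov(x, y), _cov(m, y)
    a = sxm / sxx
    b = (smy * sxx - sxy * sxm) / (sxx * smm - sxm ** 2)
    return a * b


def bootstrap_ci(x, m, y, n_boot=10_000, level=0.99, seed=0):
    """Percentile bootstrap CI for the indirect effect (illustrative)."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        # Resample participants with replacement.
        idx = [rng.randrange(n) for _ in range(n)]
        xs = [x[i] for i in idx]
        ms = [m[i] for i in idx]
        ys = [y[i] for i in idx]
        try:
            estimates.append(indirect_effect(xs, ms, ys))
        except ZeroDivisionError:
            continue  # skip degenerate resamples with no variance
    estimates.sort()
    alpha = (1 - level) / 2
    k = len(estimates)
    lower = estimates[int(alpha * k)]
    upper = estimates[min(k - 1, int((1 - alpha) * k))]
    return lower, upper
```

Under this sketch, an indirect effect would be read as significant in a given condition when the resulting interval excludes zero, which mirrors how the intervals above are interpreted.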

In sum, Study 3 provides evidence that the negative effect of thinking tasks (relative to feeling tasks) on service employees’ fear of replacement and job performance is contingent on AI being salient. Importantly, study 3 also provides evidence that such an effect emerges due to lower perceived ability.

Study 4: The Effect of AI and Task Type on Real Job Performance

The goal of study 4 was twofold. First, we wanted to provide evidence for the negative effect of AI on real performance. We did so by designing a game that ostensibly relied either on thinking or on feeling and assessed the participants’ performance. Since AI reduces the perceived ability and expected performance when engaging in thinking (relative to feeling) tasks, such beliefs should influence the amount of effort participants put in the game, negatively impacting their actual performance (Renn, Steinbauer, and Biggane 2018). Second, we wanted to provide evidence that such reduced performance stems from employees comparing themselves with an agent (i.e., AI) with superior (inferior) thinking (feeling) ability. To that end, we mirrored Harding et al.'s (2019) procedures and contrasted the condition where the participant competes against AI with two conditions where the participant plays against a human player with superior thinking or feeling abilities. We predict that participants will perform worse in the thinking (vs. feeling) game when playing against the AI and the human with special thinking intelligence. In contrast, we expect participants will perform worse in the feeling (vs. thinking) game when playing against a human with special feeling intelligence. The study design, procedure (including sample screening), and analysis plan were pre-registered (AsPredicted #80903).

Participants and design

After filtering out identical geolocations, our final sample consisted of 585 service providers (44.1% women; Mage = 40.2, SD = 12.5) who answered an advertisement posted on Amazon Mechanical Turk in exchange for a small fee. Study 4 employed a 2 (task type: thinking vs. feeling) x 3 (adversary: AI vs. feeling task expert vs. thinking task expert) between-subjects experimental design.

Procedure and stimuli

Participants were informed that they would be playing a game that assesses skills essential to service providers against a randomly assigned opponent. The game consisted of identifying consumers’ facial expressions quickly and accurately, an ability of interest to service providers. Participants assigned to the feeling task condition were told that, in a service context, it is important that they have social skills, which we told them related to awareness of others, as well as the ability to deal with stress, problems, and criticism and to understand others’ needs. In contrast, participants in the thinking task condition learned that service contexts require strong technical skills, which we told them referred to the learned abilities acquired and enhanced through practice, repetition, and education related to analytical, mechanical, and computing abilities. Next, participants were informed that they would be playing this facial expression recognition game against either an AI player, or against a human player with strong thinking (i.e., fast in computing and analyzing data) or strong feeling skills (i.e., empathic, understanding, and good at dealing with emotions). Participants were then exposed to a set of five different facial expressions that, consistent with prior research (Dailey et al. 2002), represent five prototypical emotional expressions (see web appendix for the stimuli). They were then reminded that they should try to identify the emotional expressions as quickly and accurately as possible.

Measures

Upon seeing the facial expression, participants were given three options, presented in a randomized order: the correct emotion, an incorrect one, and “I don’t know” (Dailey et al. 2002). Higher (vs. lower) job performance is reflected in the proportion of correct choices. As a manipulation check for the three adversary conditions, participants answered two questions: (1) whether the opponent had more social (feeling) or technical (thinking) skills (1-Stronger social skills; 9-Stronger technical skills) and (2) whether the opponent in the game was a human or AI.
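As a minimal illustration of how the performance measure described above could be scored, the sketch below computes the proportion of correct choices across trials. The function name, the emotion labels, and the decision to score “I don’t know” as incorrect are assumptions made for the example, not details reported for this study.

```python
def accuracy(responses, answer_key):
    """Proportion of trials on which the chosen option matches the key.

    Any other choice, including "I don't know", is scored as incorrect
    (an assumption made for this illustration).
    """
    if len(responses) != len(answer_key):
        raise ValueError("responses and answer_key must have equal length")
    correct = sum(r == k for r, k in zip(responses, answer_key))
    return correct / len(answer_key)


# Hypothetical participant: five trials with made-up emotion labels.
key = ["happy", "sad", "angry", "fear", "surprise"]
chosen = ["happy", "sad", "I don't know", "fear", "disgust"]
score = accuracy(chosen, key)  # 3 of 5 trials correct
```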

We first examined the effectiveness of our comparison manipulation. An ANOVA with skills rating as the dependent variable and adversary as the factor revealed a significant main effect (F(2, 582) = 67.94, p < .001). As predicted, participants perceived that the human opponent with high thinking intelligence had higher thinking (over feeling) skills (M = 6.49, SD = 1.73) than the human opponent with high feeling intelligence (M = 4.65, SD = 2.16, F(1, 582) = 85.88, p < .001). AI received a score on thinking (over feeling) skills similar to that of the human opponent with a special thinking intelligence (M = 6.75, SD = 1.95, F(1, 582) = 1.64, p = .20) and higher than the human opponent with a special feeling intelligence (F(1, 582) = 113.57, p < .001). In addition, participants who played against the AI indicated that they played against an AI more often (M = 88.5%) than participants assigned to the two human player conditions (Msocial = 45.4%, Mtechnical = 56.1%, χ2(2) = 56.71, p < .001); while we hoped for lower proportions of participants who believed they played against an AI in the two human opponent conditions, the large discrepancy with the AI condition and the non-significant differences across the two human conditions allay our concerns about the effectiveness of the manipulation. Results from analyses where we eliminate participants assigned to the two human player conditions who reported having played against an AI replicate the findings reported below, further reducing concerns about the effectiveness of the manipulation.

To examine the influence of task type and adversary type on job performance, we first created two dummies to identify the adversary factor; the first dummy (i.e., AI dummy) received 1 for AI and zero for each human player, while the second dummy (i.e., Thinking dummy) received 1 for the opponent with special thinking intelligence and zero for the other two conditions. Accordingly, each dummy compares the condition receiving 1 (i.e., AI or Thinking player) with the condition that received zero in both dummies (i.e., the player with special feeling intelligence; see Aiken, West and Reno 1991 for details on the approach). We then ran a generalized linear model (GLM) whereby we regressed participants’ responses over the five trials on task type (contrast coded; feeling = 0.5, thinking = −0.5), the two dummies, and the interaction between each dummy and task type, assuming a Bernoulli distribution of the outcome and a logit link (see Crawley, 2012 for details on the analysis). Results revealed a significant interaction between task type and the AI dummy (β = 2.44, z = 5.91, p < .001), a significant interaction between task type and the thinking dummy (β = 1.59, z = 4.17, p < .001), and a significant effect of task type (β = −0.87, z = −3.27, p < .01); no other effects were significant. Planned simple slope analysis (Aiken, West and Reno 1991) indicated that participants who played against AI had significantly higher accuracy (M = 97.3%) when the game relied on feelings than when they learned the game relied on thinking (M = 88.2%; β = 2.07, z = 6.15, p < .001). A similar pattern emerged when participants played against the human opponent with high thinking intelligence, with better performance when the game relied on feelings (M = 95.4%) than when the game relied on thinking (M = 91.0%; β = 1.52, z = 5.00, p < .001). Finally, consistent with our proposition that performance is influenced by a relative comparison process, those playing against a human player with high feeling intelligence performed better when the game relied on thinking (M = 96.0%) than when the game relied on feeling (M = 90.9%; β = 0.99, z = 4.75, p < .001).
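The coding scheme described above can be sketched as follows. This is an illustrative reconstruction, assuming hypothetical condition labels (`"AI"`, `"thinking_expert"`, `"feeling_expert"`) and hypothetical helper names; the coefficients in the example are placeholders chosen for readability and are not estimates from this study.

```python
ADVERSARIES = ("AI", "thinking_expert", "feeling_expert")
TASK_CODES = {"feeling": 0.5, "thinking": -0.5}  # contrast coding


def design_row(task, adversary):
    """Predictors for one participant under the dummy coding scheme.

    The feeling expert is the reference condition (zero on both dummies).
    """
    if adversary not in ADVERSARIES:
        raise ValueError(f"unknown adversary: {adversary}")
    task_c = TASK_CODES[task]
    ai = 1 if adversary == "AI" else 0
    thinking = 1 if adversary == "thinking_expert" else 0
    return {
        "task": task_c,
        "ai": ai,
        "thinking": thinking,
        "task_x_ai": task_c * ai,
        "task_x_thinking": task_c * thinking,
    }


def simple_slope(coefs, adversary):
    """Effect of task type (on the logit scale) for a given adversary."""
    row = design_row("feeling", adversary)  # only the dummies are used
    return (coefs["task"]
            + coefs["task_x_ai"] * row["ai"]
            + coefs["task_x_thinking"] * row["thinking"])


# Placeholder coefficients for illustration only.
example = {"task": -1.0, "task_x_ai": 2.0, "task_x_thinking": 1.5}
slopes = {adv: simple_slope(example, adv) for adv in ADVERSARIES}
```

With the reference condition coded as zero on both dummies, the task type coefficient alone gives the slope for the feeling expert condition, and each interaction term shifts that slope for the corresponding adversary.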

In sum, the findings of this study suggest that even on a simple emotion recognition task with documented high accuracy scores (Dailey et al. 2002), when service employees compare their abilities with AI, they perform worse when they learn that the task relies on thinking (in which they tend to be inferior to the AI) than when they learn that the task relies on feeling (in which they tend to be superior to the AI). The replication of the pattern of results when participants thought that they were playing against a human player with special thinking intelligence and the reversal of the effects when they thought that they were playing against a human player with special feeling intelligence lend further credence to our framework.

General Discussion

In five studies using different data sources (text mining, field, and online studies), we demonstrate that the detrimental effects of AI on job outcomes hinge on the type of task performed by service employees. Specifically, our findings demonstrate that, in the presence of AI, service employees tend to have more negative feelings (study 1a), feel less capable (study 3), have a greater fear of being replaced (studies 1b and 3), have lower anticipated performance (studies 2 and 3) and perform worse (study 4) when engaging in thinking over feeling tasks. In doing so, this research provides important theoretical and managerial implications for the Feeling Economy account, the social comparison theory, and service literature.

Theoretical Implications

Our primary contribution is to the burgeoning work on the Feeling Economy (Huang, Rust, and Maksimovic 2019; Rust and Huang 2021). Our findings extend Huang and Rust’s paradigm by showing that AI might damage the job outcomes of service employees working on tasks relying on the processing and analysis of data. Conversely, jobs requiring feeling intelligence such as responding or reacting appropriately to collaborators’ or consumers’ feelings might have a brighter future. Interestingly, our findings also suggest that the shift in the type of tasks performed by humans in the emerging Feeling Economy (Huang, Rust, and Maksimovic 2019; Rust and Huang 2021) might eventually lead to the employees’ decreased fear of being replaced by AI and to better job performance. In that, we are the first, to the best of our knowledge, to propose and demonstrate how the Feeling Economy might impact the job outcomes of employees in any industry—service or otherwise.

Our research also contributes to the social comparison literature (Alicke, Zell, and Bloom 2010; Harding et al. 2019; Wheeler, Martin, and Suls 1997) by proposing that artificial agents, particularly those with AI capabilities, may serve as comparison standards in ability and performance judgments. According to Koopman et al. (2020), while the extant literature indicates that social comparisons influence employee outcomes (e.g., job performance), the role of AI has been thus far ignored. Along similar lines, this finding contributes to the burgeoning work on the interpersonal aspects of the relationship between humans and machines (Lewandowsky, Mundy, and Tan 2000; March 2021; Ruitjen, Midden, and Ham 2015) by demonstrating that artificial agents might engender social comparison processes.

We also add a more nuanced understanding of how the presence of robots might improve cognition and performance on certain tasks (Spatola et al. 2018). Interestingly, Spatola (Spatola et al. 2018; Spatola 2020) demonstrated that the presence of an anthropomorphized robot improved the individual’s performance on a cognitive task, which seems at odds with our framework and our findings. It is important to note, however, that in Spatola’s studies, the artificial entity served as an observer of the human performer and, as such, individuals might have assimilated rather than contrasted their abilities with those of the artificial agent. Future research could examine this interesting hypothesis, testing whether the role the AI plays in the organization (e.g., replacing jobs or augmenting job capabilities) might impact the individual’s tendency to assimilate or contrast their abilities with those of the artificial agent.

Finally, our research adds to the findings of Granulo, Fuchs, and Puntoni (2019) by suggesting that the negative outcomes of AI on job outcomes they documented might hinge on the type of task the employee performs. In a similar vein, we contribute to the literature on technostress (Ayyagari, Grover, and Purvis 2011; Saleem et al. 2021; Tarafdar, Pullins, and Ragu-Nathan 2015; Tu, Wang, and Shu 2005) particularly as it relates to social anxiety (e.g., fear of being replaced by AI; Abilleira et al. 2021); we show that AI can be a source of technostress for service employees performing thinking (versus feeling) tasks and it might negatively influence their performance and fear. Future research could extend our work by building on the literature on technostress to examine whether service employees engaging in feeling (vs. thinking) tasks might become more resilient to technostress.

Managerial Implications

Our findings have important practical implications in light of the growing interest in understanding service employees’ perceptions of AI (Schepers and van der Borgh 2020). It is well documented that AI might not only engender fear in service employees but that such fear might cause disruption and incapacitate behavior (Jamroz 2020). While it is crucial to explain to service employees the company’s strategy for AI integration and to be transparent with them in order to minimize fear of replacement by AI (Tyfting 2020), it is still largely unknown how best to communicate with employees to reduce such fear. Our findings from study 4 suggest that merely framing a job task as relying on feeling intelligence might be enough to counter the potentially deleterious effect that AI can have on employee performance.

Relatedly, a recent BCG report (Strack et al., 2021) underscored that companies’ growth in the near future will hinge on employees’ ability to upskill and retool, hinting that employees will not lose jobs, but rather will have different job descriptions and assigned tasks. This view is reflected not only in prior research documenting the increasing demand for feeling/social skills that characterize the Feeling Economy (Balcar 2016; Huang, Rust, and Maksimovic 2019; Lewis et al. 2008) but also in the findings from Google’s Project Oxygen, which revealed that the seven skills that most influence the company’s top employees’ performance are all associated with feeling intelligence (Strauss 2017). Given these irreversible trends and the findings we report in this research, service providers that incorporate AI in their operations could improve the performance of their workforce by underscoring the social and empathetic aspects of the employees’ assignments in their job descriptions, training, and potentially even in the performance evaluations. Similarly, our findings also echo the call from Rust and Huang (2021) for business schools to shift their focus from developing the students’ thinking intelligence to helping students nurture their more social and empathetic skills.

Limitations and Future Research

This study has some limitations that can offer directions for future research. Primarily, our data were collected in a unique context: the Covid-19 pandemic. Covid-19 has drastically influenced the relevance of thinking versus feeling tasks, given the lack of social interactions (Calbi et al. 2021). This could engender higher resilience towards AI integration and higher fear of AI replacement. Future research should investigate the long-term, post-pandemic effects.

Further, while AI can both augment and replace human tasks (Huang, Rust, and Maksimovic 2019; Larivière et al. 2017; McLeay et al. 2021), our studies depict the presence of AI without considering such framing; to remedy this limitation, future research could investigate the potential role of AI framing (fully vs. partially replacing human tasks) in our effects. Similarly, more research is needed to explore whether there are differences in reactions when the AI replaces someone’s job in the organization or when it replaces individual tasks (Granulo, Fuchs, and Puntoni 2019).

Interestingly, recent work suggests that AI is not immune to engaging in discriminatory behavior, such that the algorithms that guide the AI decisions might be inherently biased against certain minorities (Ukanwa and Rust 2020), potentially canceling some of the effects we observe in the present research. This idea is based on a wealth of research (Turner 1975) suggesting that threats to one’s identity might engender a form of social competition that produces selective social comparisons and, in turn, systematic biases. If employees feel discriminated against by the AI or feel that the AI is discriminating against members of relevant groups they feel part of, they could engage in social competition against the AI that may mitigate or even reverse some of the nefarious effects of the comparison processes we documented. Future work could explore this intriguing possibility.

Our findings suggest that AI affects feelings and job performance because it serves as a comparison standard for employees. While our data indicate that this is a byproduct of both upward and downward comparisons (i.e., the presence of the AI may engender both better and worse performance, depending on the task), future studies could examine the role of upward and downward social comparisons in more depth. Further, while our research shows evidence that upward and downward comparisons elicit contrast effects on the participants’ self-assessments, prior work (Zell et al. 2020) has suggested that assimilation effects might also be possible. Future research could theorize and demonstrate under what conditions assimilation effects are possible. For instance, one could examine whether perceptions of AI augmentation (replacement) role elicit assimilation (contrast) comparison processes.

Finally, it is worth noting that artificial agents might vary in the degree to which they are anthropomorphized, which may have both a positive (Glikson and Woolley 2020) and a negative impact on humans’ reactions to them (Laakasuo, Palomäki, and Köbis 2021). One could argue that if an AI appears eerily human, its differences might become more apparent, and employees might cease to use it as a comparison standard. While past research has documented persistent interpersonal effects of artificial agents despite the uncanny valley (Patel and MacDorman 2015), future research could examine this interesting hypothesis.

To conclude, since the adoption of AI-enabled services is inevitable, we must understand how to best maximize service outcomes from human-to-AI interactions. We hope that our research inspires future work dedicated to comprehending the various opportunities and disadvantages of AI integration in the service landscape to better manage service organizations as they gradually adapt to the emerging Feeling Economy.

Supplemental Material

Supplementary Material for Thinking Skills Don’t Protect Service Workers from Replacement by Artificial Intelligence by Darina Vorobeva, Yasmina El Fassi, Diego Costa Pinto, Diego Hildebrand, Márcia M. Herter, and Anna S. Mattila in Journal of Service Research

Footnotes

Acknowledgments

The authors would like to thank Nuno Antonio and Saleh Shuqair for their helpful advice and comments.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Foundation of Science and Technology (FCT Portugal) [DSAIPA/DS/0113/2019].

Supplemental Material

Supplemental material for this article is available online.
