Abstract

This research draws upon the increasing use of AI in service. It aims to understand the extent to which AI systems have multiple intelligence types, as humans do, and whether these types arouse different emotions in consumers. To this end, the research uses a two-study approach: Study 1 builds and evaluates a scale for measuring different AI intelligence types. Study 2 evaluates consumers’ emotional responses to the different AI intelligences. The findings provide a measurement scale for evaluating different types of artificial intelligence against human ones, thus showing that artificial intelligences are configurable, describable, and measurable (Study 1), and influence consumers’ positive and negative emotions (Study 2). The findings also demonstrate that consumers display different emotions (happiness, excitement, enthusiasm, pride, inspiration, sadness, fear, anger, shame, and anxiety), as well as different levels of emotional attachment, satisfaction, and usage intention, when interacting with the different types of AI intelligences. Our scale builds upon the comparison of human and AI intelligence characteristics while providing guidance for the future development of AI-based systems that more closely resemble human intelligences.

Keywords

Introduction

Artificial intelligence (AI) draws upon the idea that machines (computers) should mimic the human brain’s cognitive processes and act accordingly by using specific software and algorithms. Specifically, they would reproduce human attributes such as learning, speech, and problem-solving (Saridis and Valavanis 1988). In other words, AI is often developed to capture and simulate human cognitive abilities as a “hybrid-human machine apparatus” (Muhlhoff 2020). Although robots are not yet as widespread as Asimov imagined in 1950 (Asimov 1950), AI is increasingly used in new product development, creative design, and manufacturing to mimic or even replace human creativity (Demarco et al. 2020). The diffusion of AI has attracted increasing interest from marketing scholars and practitioners, particularly as a promising tool for improving service (Davenport et al. 2020; Huang and Rust 2021a, b; Shankar et al. 2021). Indeed, AI can: (i) be a robotic companion that supports the shopping experience (Bertacchini, Bilotta, and Pantano 2017; Huang and Rust 2021a; Xiao and Kumar 2021); (ii) improve recommendations (e.g., for clothing, through digital stylists) (Silva and Bonetti 2021); (iii) provide automatic customer assistance through a chatbot (Pizzi, Scarpi, and Pantano 2021); (iv) deliver personalized offers to consumers (Kumar et al. 2019); and (v) understand and predict consumer behavior (Huang and Rust 2021b), among other uses.

Recent studies have advanced that AI can be designed to have multiple intelligences (Huang and Rust 2018). However, if AI mimics Human Intelligence (HI), a measurement scale for AI should be developed starting from notions of HI. Yet, the development of tools for measuring or evaluating these different intelligences is still in its infancy. Likewise, research has yet to determine how people react emotionally when interacting with different AI intelligences (Huang and Rust 2021a). Thus, the more common human-robot interactions become, the greater the need to understand (i) what humans perceive about artificial intelligences and (ii) what emotional response such intelligence evokes. Accordingly, there is a need to investigate how people evaluate the technology (including AI systems) and how they respond to it (Shin 2021), with emphasis on the diverse possible emotional responses (Huang and Rust 2021b).

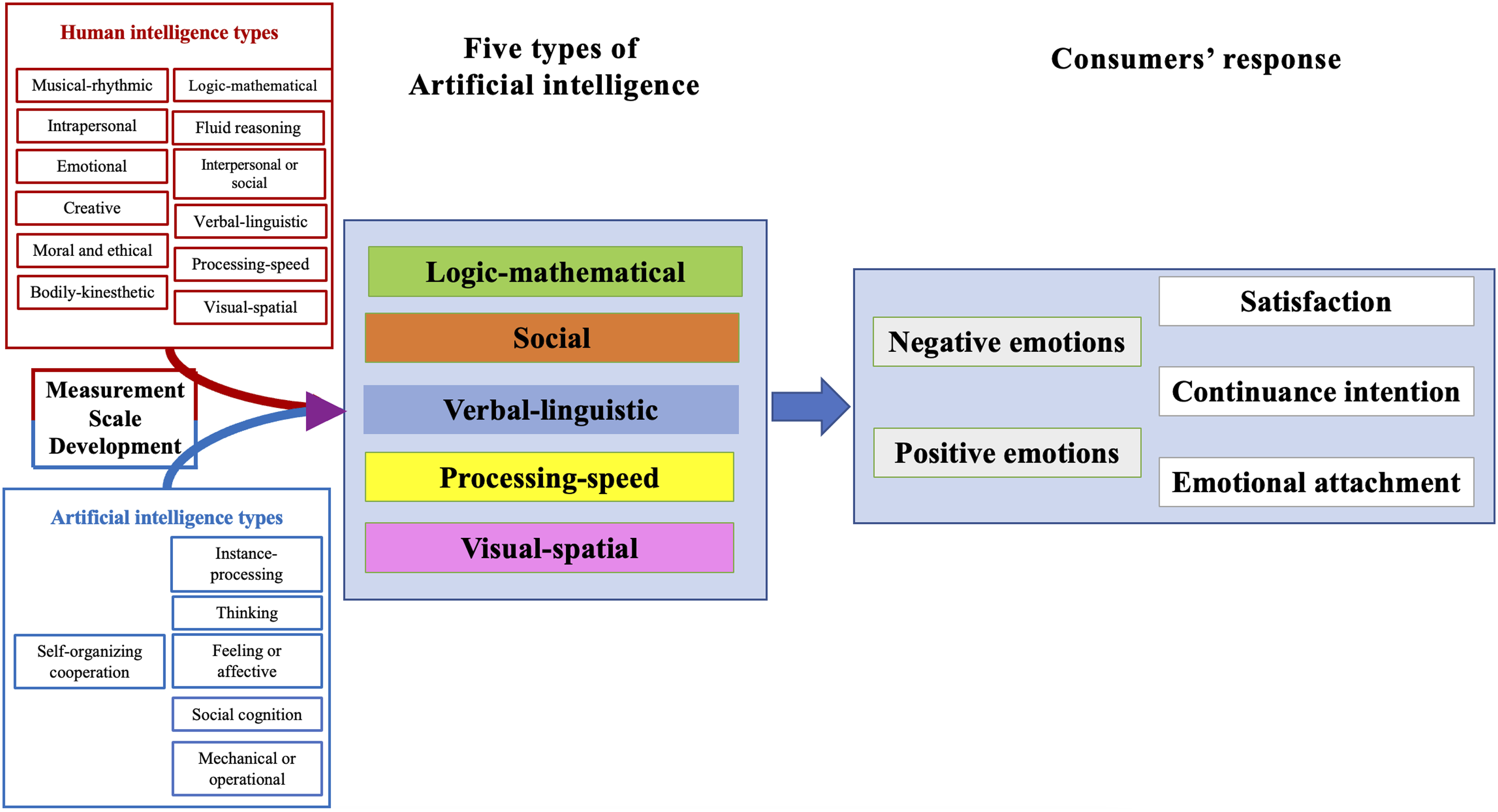

Human and artificial intelligence have been mostly investigated independently. However, past authors stated that AI aims at reproducing human attributes to simulate human cognitive abilities (Saridis and Valavanis 1988; Muhlhoff 2020). Thus, we provide a combined and more comprehensive overview of the possible new AI types emerging from the contrast with the human one. Based on that, we propose five types of AI. Then, we develop a scale for measuring AI intelligence, emphasizing the similarities and differences with HI (Study 1). Finally, we show what emotions humans experience interacting with different AIs (Study 2). This scale has the advantage of showing the extent to which AI is diverse, measurable, quantifiable, and classifiable against HI, which was not considered in previous AI scales. In doing so, this research provides a measure of AI intelligences as perceived by the consumers interacting with them. It shows that different AI intelligences solicit different positive and negative emotions in consumers in retail service settings, such as happiness, excitement, enthusiasm, pride, inspiration, sadness, fear, anger, shame, and anxiety.

This research draws upon several theories of HI (Gardner 1983; Cichocki and Kuleshov 2021; Mayer et al. 1999; Schneider and McGrew 2012; Kan et al. 2011; Keith and Reynolds 2010; Rosenberg et al. 2015) and the Theory of Emotions (Bagozzi, Gopinath, and Nyer 1999; Izard 1977) and uses retail service as the research context. It extends past studies on AI and emotions (Huang and Rust 2018, 2021a, 2021b) by (i) developing a scale to evaluate the dominant intelligences in AI systems, (ii) providing empirical evidence that intelligences for AI can be as diverse as they are for humans, (iii) showing that some AI can display multiple dominant intelligences simultaneously, contrary to humans, and (iv) demonstrating the extent to which consumers show different reactions to different AI intelligences, in terms of positive-negative emotions, emotional attachment, satisfaction, and technology continuation intention.

Theoretical Background and Hypotheses

From Human to AI Intelligences

Intelligence studies have initially focused on the ability to think abstractly and adapt to the environment (Detterman and Sternberg 1986; Wechsler 2011). Despite the debate on the precise definition of intelligence, its conceptualization has gone from the idea of a single and stable intelligence (Carroll 1993; Detterman and Sternberg 1986) to a set of multiple abilities that can develop with age (time) and experience (Mayer, Caruso, and Salovey 1999). This approach recognizes the various facets that contribute to the overall concept of intelligence. Examples are verbal comprehension and perceptual reasoning (e.g., Wechsler Abbreviated Scale of Intelligence, WASI-II; Wechsler 2011).

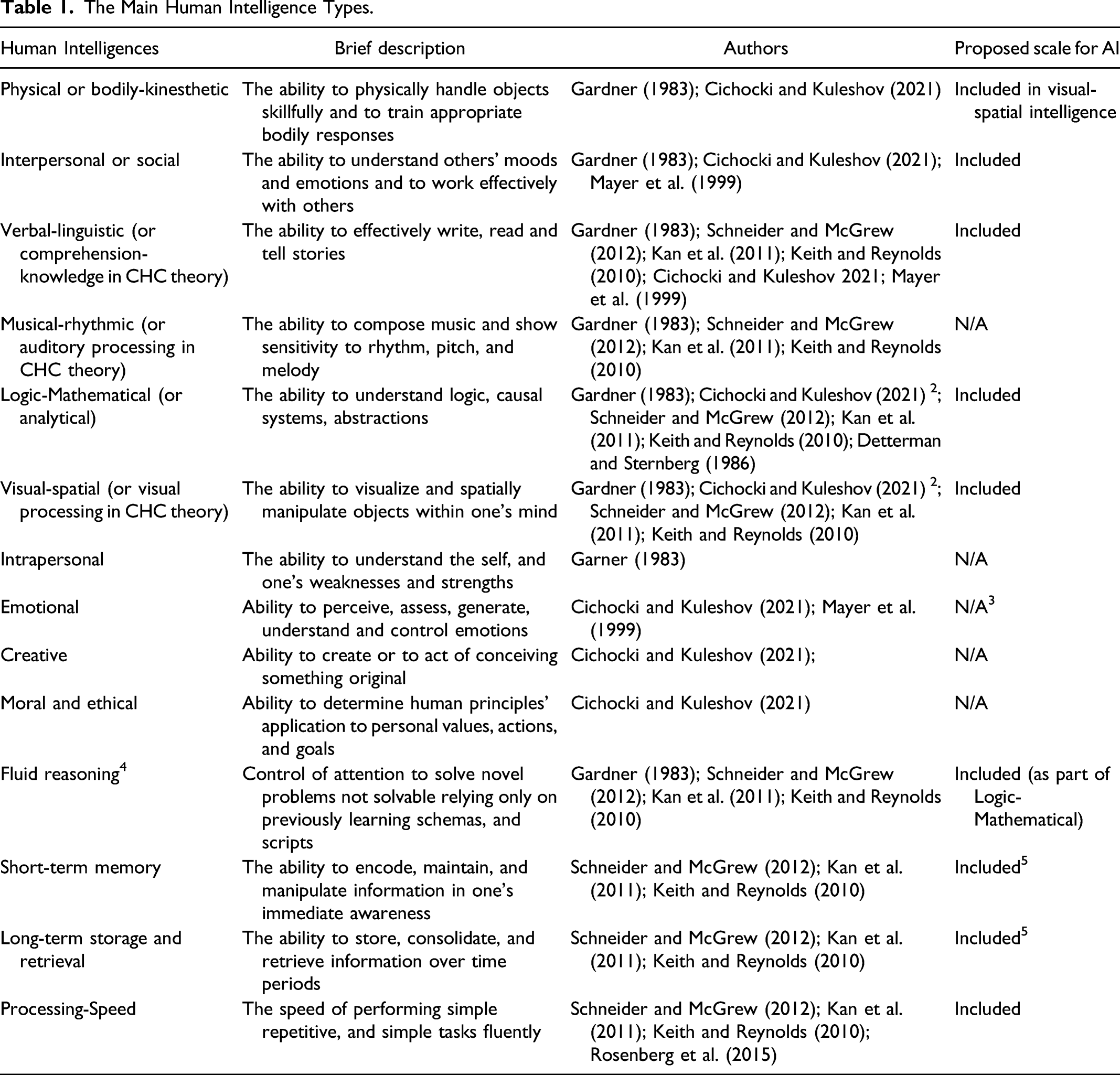

The Main Human Intelligence Types.

Despite their differences, several theories of HI argue that (i) HI is multifaceted, (ii) all humans can display multiple types of intelligence, and (iii) usually one intelligence type is dominant for each individual (Shearer 2020; Cichocki and Kuleshov 2021). However, to date, there are still few studies in marketing on how HI could apply to AI (Cichocki and Kuleshov 2021), with even fewer focusing on AI in specific contexts such as service (Huang and Rust 2018, 2021a).

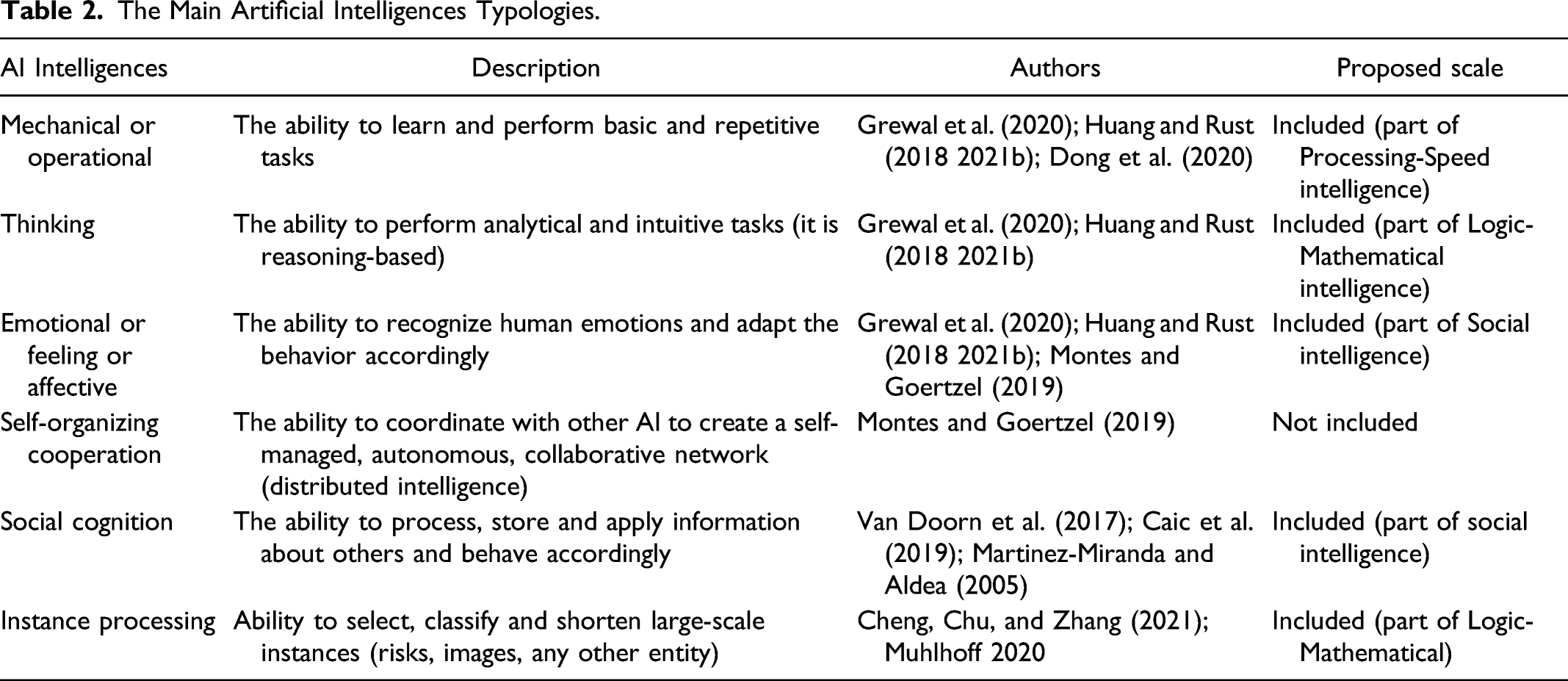

The Main Artificial Intelligences Typologies.

A great deal of research in cognitive psychology and evolutionary robotics aims at reaching the complexity of the human brain and developing neural mechanisms of comparable complexity to reproduce the full range and Gestalt of human cognitive abilities rather than only a subset (Montes and Goertzel 2019). Indeed, there is a need to provide new tools and instruments to replicate the human brain’s physiological structure and its processing of information to develop more effective AI (Hernández-Orallo 2017; Li et al. 2018; Montes and Goertzel 2019). Consequently, we expect that AIs show multiple intelligences as humans do:

H1: Similar to human intelligence, AI systems have multidimensional intelligence.

Five AI Intelligence Types

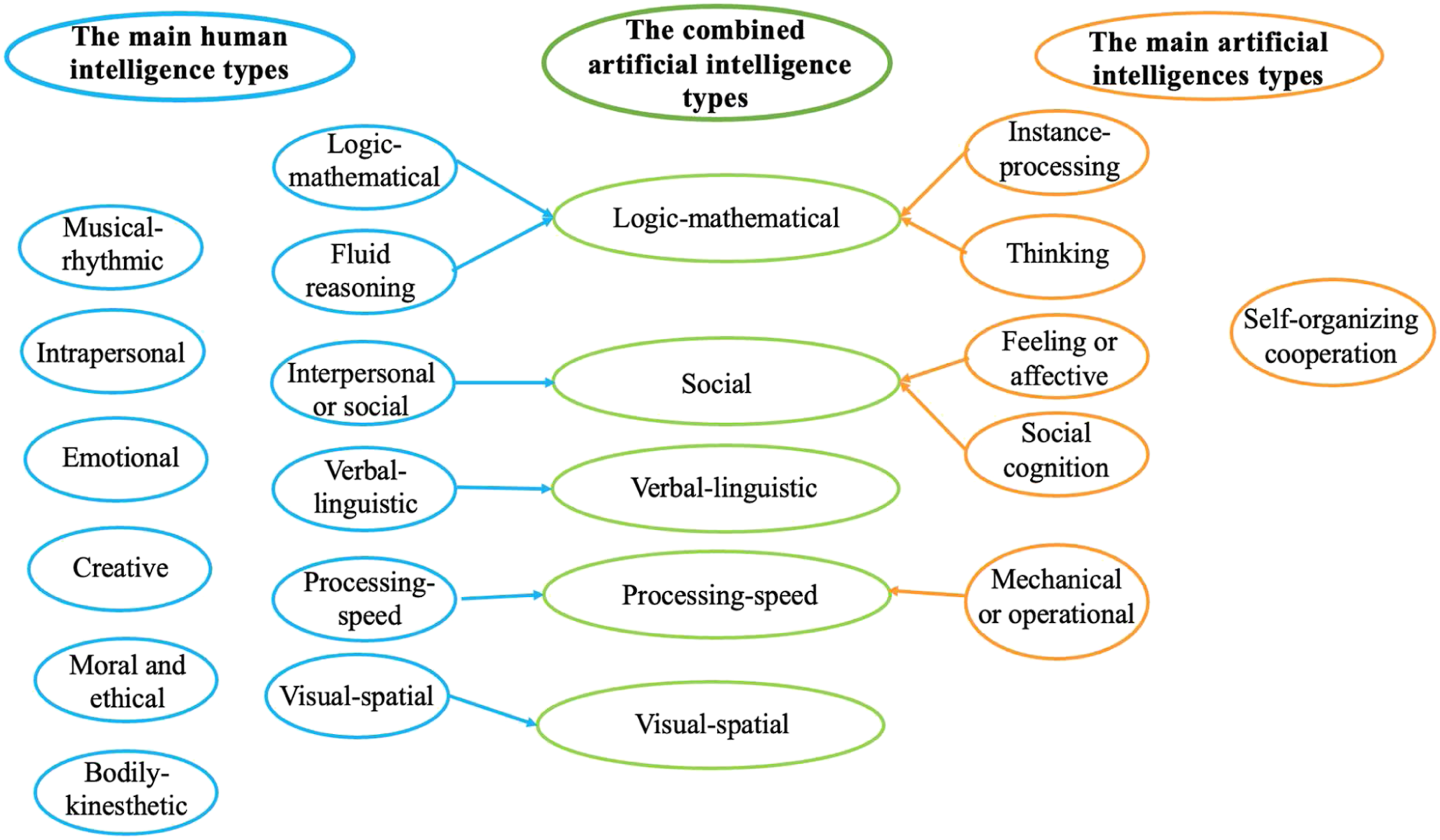

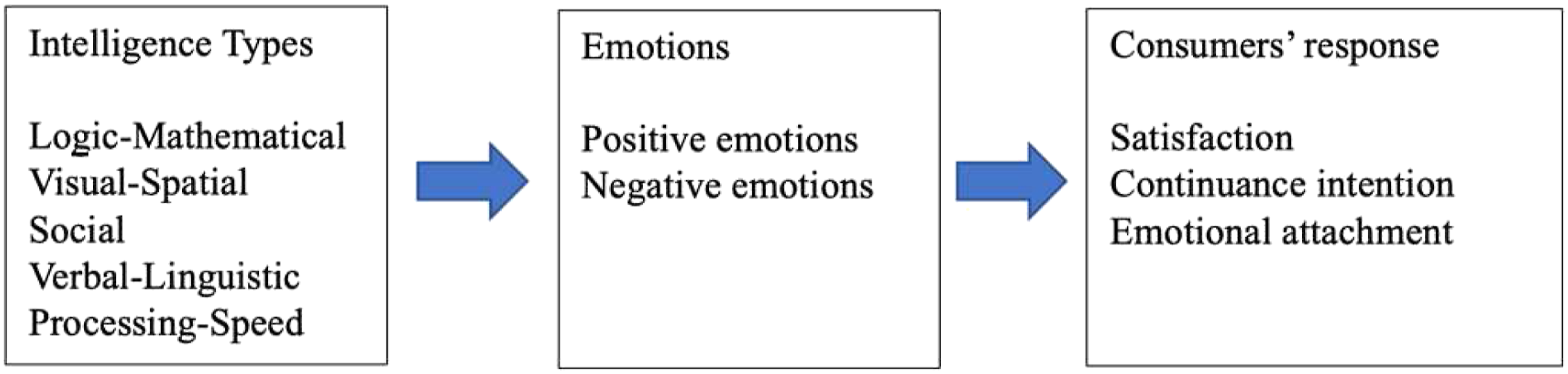

Drawing upon the past studies on HI and AI types (Tables 1 and 2, respectively), our research develops a combined and more comprehensive overview of possible AI types as they emerge from comparing human intelligence types from previous studies in psychology and evolutionary robotics (Figure 1). Specifically, we identify five main types of AI that show a correspondence between the human intelligences emerging from past studies in psychology and the artificial intelligences emerging from past studies on AI, emphasizing the application of AI in marketing and service contexts.

The combination of the two sets of intelligence in the new AI types.

(1) Logic-Mathematical intelligence: This was the first intelligence integrated into AI to create value for users (McCarthy 1988). It is mainly based on machines’ ability to solve complex analytical problems that require logical thinking (Huang and Rust 2018). This intelligence allows machines to make autonomous decisions based on the data they collect and adapt their behavior accordingly (Wirtz et al. 2018). Thus, similar to humans, it includes the ability to analyze problems and situations logically, finding solutions accordingly.

(2) Social intelligence: Scholars highlighted how AI can have social, empathetic intelligence that spans several contexts, including service (Huang and Rust 2018), domestic, hospitality, entertainment, and even healthcare (see Caic, Mahr, and Odekerken-Schröder 2019 for a review). This intelligence is related to machines’ ability to understand human emotions, respond to social cues, and interact with humans. The interpersonal dimension of this intelligence is the common thread that connects these studies.

(3) Visual-Spatial intelligence: This intelligence pertains to space perception or spatial awareness and can include the subsequent ability to manipulate objects in space. It is not related to the possession of psychomotor abilities (i.e., moving thanks to legs, wheels, and other physical devices; Caic, Odekerken-Schröder, and Mahr 2018; Schneider and McGrew 2012). Rather, this intelligence is about AI’s ability to “understand” space. Thus, it includes pattern identification, space rendition, and planning out routes. Typical applications span from Play Station’s kinetic set to AIs advising drivers and runners.

(4) Verbal-Linguistic intelligence: This intelligence pertains to understanding and effectively simulating human language (natural language processing). This intelligence, typical of humans’ CHC, is novel in classifying AI intelligences. It explicitly involves the machine’s ability to communicate with humans (in written or oral form), simulating human natural language processing. This intelligence is largely embedded in chatbots and AI voice assistants like Amazon Echo, Alexa, Siri, and so on, which are growing in popularity among consumers due to the utilitarian benefits emerging from consumers’ interaction with this AI (McLean, Osei-Frimpong, and Barhorst 2021). Indeed, these systems are characterized by increasing accuracy, semantic understanding ability, and wake-up ability, which can be developed to offer a rich human-computer interaction experience.

(5) Processing-Speed intelligence: This intelligence combines the CHC model of HI and the ability to perform repetitive tasks quickly and fluently (Schneider and McGrew 2012), with mechanical intelligence as the ability to perform basic and repetitive tasks (Grewal et al. 2020; Huang and Rust 2018, 2021b; Dong et al. 2020). Thus, it involves the speed of performing simple and repetitive tasks fluently and quickly. Accordingly, it does not involve understanding mathematical problems and quantitative reasoning (thus no overlaps with instance processing as part of Logic-Mathematical intelligence) or visual-spatial comparisons (so as not to overlap with Visual-Spatial intelligence), or speaking fluency (thus no overlaps with Verbal-Linguistic).

Emotions Toward the AI Types

Bagozzi, Gopinath, and Nyer (1999, 184) defined emotions as “a mental state of readiness that arises from cognitive appraisals of events or thoughts […] and may result in specific actions”. Similarly, Isaac and Budryte-Ausiejiene (2015, 403) defined emotions as “affective states characterized by occurrences or events of intense feelings associated with specific evoked response behaviors”. In short, emotions represent a mental state and can affect subsequent actions (Bagozzi, Gopinath, and Nyer 1999). Furthermore, while initial studies used many items for measuring emotions, later research has shown these can be summarized in a much smaller number of dimensions (see Huang 2001 for a review). Ultimately, two factors are usually employed: positive and negative emotions. In this vein, Huang (2001) proposed that viewing positive and negative emotions as two separate dimensions is the most appropriate approach.

In the context of service research, positive and negative emotions arise from people’s interaction with other people (Walsh et al. 2011), which has important consequences for service (Babin et al. 2013). For instance, sales personnel can communicate in ways that influence consumers' emotions (e.g., Dallimore, Sparks, and Butcher 2007). Similarly, social abilities attributed to employees create a positive consumer service experience, which in turn results in high satisfaction and intention to continue interacting (Prentice, Lopes, and Wang 2020; Balakrishnan and Dwivedi 2021).

These considerations converge into social perception theory: when people interact, each actor anticipates the other’s intelligence and emotions and develops their emotional reaction accordingly (Cuddy, Fiske, and Glick 2008). For instance, in retail service, consumers' emotions are solicited by interaction with other consumers, employees, and the store atmosphere (including music, scent, and lights) (Pantano, Dennis, and Alamanos 2021). Moreover, contact-intensive new technology might influence consumers’ emotions (Bagozzi et al. 1999; Bougie, Pieters, and Zeelenberg 2003; Cachero-Martínez and Vázquez-Casielles 2021; Hennig-Thurau et al. 2006). Thus, we advance that the positive relationship between (human) intelligence assessment and emotional reaction will also hold when the intelligence is artificial:

H2: High levels of AI intelligence(s) will lead to positive emotions.

Furthermore, the intensity of the solicited emotions can vary based on the AI type (Martinez-Miranda and Aldea 2005). In this vein, studies in psychology conducted with adult human samples (Walker et al. 2022) demonstrated that social intelligence is associated with low levels of negative emotions such as anxiety and fear. Similarly, psychology scholars found that social intelligence reduced, or even shielded against, negative emotions, increasing individuals’ capacity to cope with and repair negative emotions (for a review, see Lam and Kirby 2002).

In this vein, Social Intelligence training was found to help people remain calm in situations that evoke negative emotions such as tension, hostility, depression, and anger (Miyamgambala 2015). Other studies found that it might reduce negative emotions like anger, dissatisfaction, and frustration (Ahn, Sung, and Drumwright 2016). Similarly, Social Intelligence can be applied to interactive systems design to support consumers’ interaction with the technology (Green and de Ruyter 2010).

We advance that a similar relationship between social intelligence and negative emotions will also hold for AI. Thus,

H3: AI social intelligence reduces negative emotions.

Furthermore, technology is taking on more and more roles in service, and scholars are witnessing advancements in the use of and expectations for technology in service environments (Premer 2021). Accordingly, we advance that consumers expect an AI to perform routine tasks quickly and, in general, possess high Processing-Speed intelligence levels. Thus, at least for some consumers, Processing-Speed intelligence might be perceived as akin to a hygiene factor (Premer 2021). Hygiene factors are considered necessary pre-conditions and work asymmetrically: they do not increase positive reactions but decrease negative reactions. Thus, we advance that Processing-Speed will be negatively related to negative emotions rather than positively related to positive emotions.

From a different perspective, literature in psychology has related Processing-Speed with the intensity of emotional perception (Rosenberg et al. 2015). It supports our hypothesis suggesting that Processing-Speed is related more strongly to the perception of negative than positive emotions. For instance, when Processing-Speed is compromised in humans, the perception of negative emotions is affected more than positive ones (e.g., Dimoska et al. 2010; Spikman et al. 2012). Thus,

H4: High levels of Processing-Speed intelligence diminish negative emotions.

Finally, individuals can develop positive and negative emotions for inanimate objects, such as stores (Badrinarayanan and Becerra 2019), brands (Park et al. 2010), and places (Raggiotto and Scarpi 2021), even in computer-mediated environments (Dwivedi et al. 2019). Such a bond is usually referred to as emotional attachment and stems from the emotions perceived during an experience, for instance, while shopping (Dunn and Hoegg 2014; Badrinarayanan and Becerra 2019). Accordingly, it could be hypothesized that individuals develop emotional bonds toward a certain AI if it evokes an emotional response in them.

Overall, organizations that provide positive emotions to customers are more successful in selling goods, developing satisfactory experiences (Mende, Bolton, and Bitner 2013; Pantano, Dennis, and Alamanos 2021), and creating an emotional bond with service providers (Badrinarayanan and Becerra 2019). Consistently, marketing scholars have found that positive emotions lead to positive outcomes, such as loyalty, satisfaction, and usage continuation (e.g., Cachero-Martínez and Vázquez-Casielles 2021; Dubé and Menon 2000).

H5: AI types-induced positive emotions positively mediate the relationship between AI intelligences and consumers’ attachment to the service provider (H5a), satisfaction (H5b), and technology continuation intention (H5c).

Instead, negative emotions lead to dissatisfaction and lower intention to keep using the brand or service provider (e.g., Bougie, Pieters, and Zeelenberg 2003; Hennig-Thurau et al. 2006). For instance, a service failure leads to consumer anger and sadness, while the interaction with an employee or another customer might elicit shame (Laros and Steenkamp 2005). Accordingly, we hypothesize that:

H6: AI types-induced negative emotions negatively mediate the relationship between AI intelligences and consumers’ attachment to the service provider (H6a), satisfaction (H6b), and technology continuation intention (H6c).

Research Design

The research is organized into two studies: Study 1 develops a scale for measuring five AI intelligences (Logic-Mathematical; Social; Visual-Spatial; Verbal-Linguistic; Processing-Speed). Then, Study 2 (field) investigates what emotions people develop as a function of the AI type they interact with and how they affect emotional attachment, satisfaction, and continuance intention.

Study 1: Scale Development for AI in Service

Development of the Items

Study 1 intends to develop a useful and practical scale that is parsimonious and easily applied. Following well-assessed procedures for scale development (Clark and Watson 2016; Netemeyer et al. 2004), preliminary scale items were identified by reviewing a large base of relevant literature (see, e.g., Table 1 and Table 2). A focus group interview was then conducted (Netemeyer et al. 2004) to specify AI’s content area. Focus group members consisted of a convenience sample of eight academics and practitioners selected based on easy accessibility, geographical proximity, availability, expertise in AI, and education (Master’s Degree or higher). There were two academics in digital marketing, two in psychology, two in computer science, and two in service.

They read the descriptions of AIs and HIs. Moderators probed respondents concerning how they would evaluate AI. The discussion soon centered on AI intelligences. After some discussion, a further distinction was made between AI’s mathematical and non-mathematical abilities. A wide range of responses was gathered throughout the discussion. Responses ranged from expressions of social intelligence (e.g., “Some AIs can interact with humans and seem to understand how they feel”) to mathematical intelligence (e.g., “Some AIs are good at games that require logical thinking”). Linguistic intelligence also emerged (e.g., “Some AIs express themselves with clarity and precision”) as well as consideration on the quick performance of simple repetitive tasks (e.g., “Some AIs do not really think or create anything, but are fast at doing simple things”).

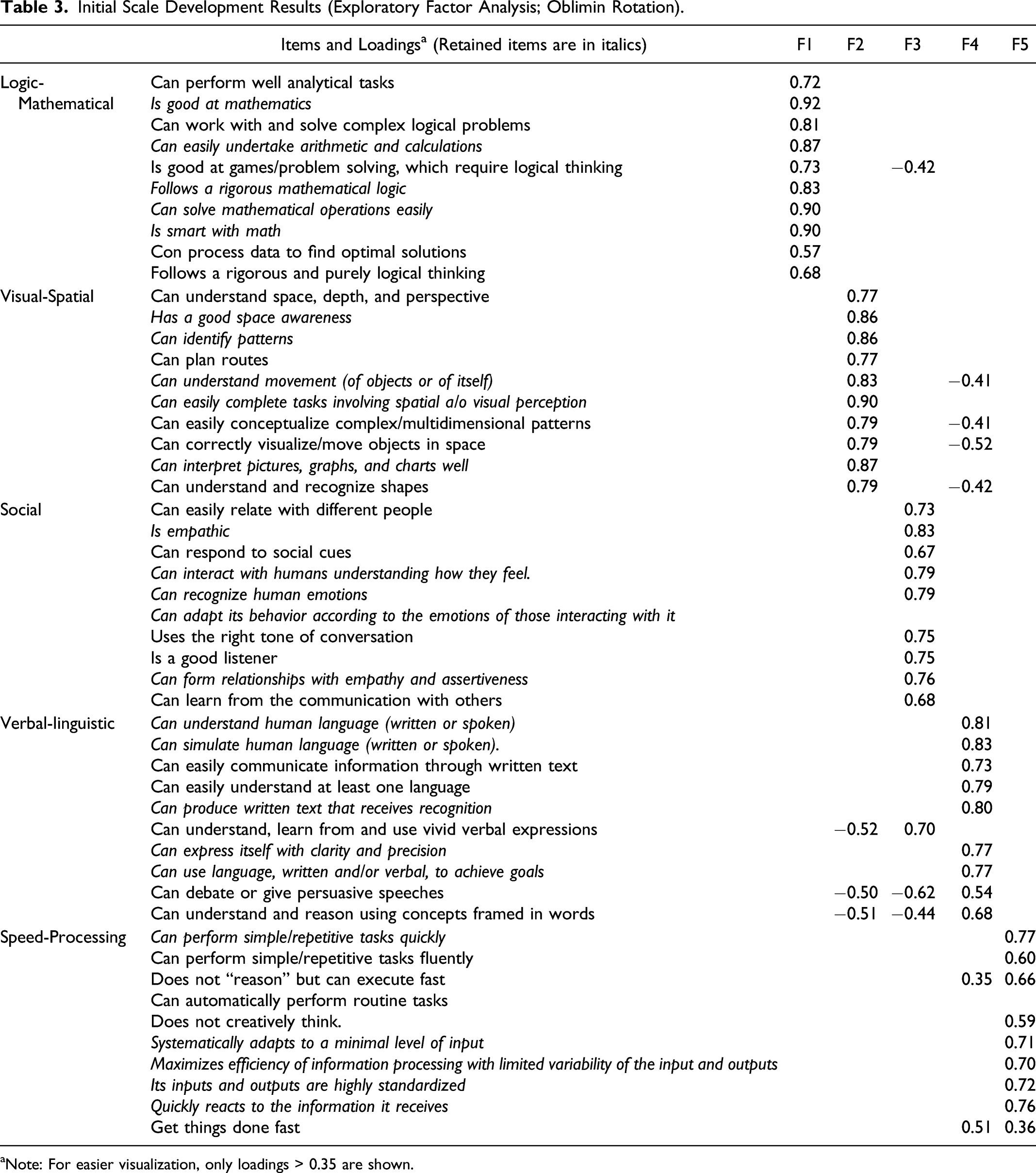

Initial Scale Development Results (Exploratory Factor Analysis; Oblimin Rotation).

Note: For easier visualization, only loadings > 0.35 are shown.

Scale Development and Test

Initial quantitative analyses were conducted to purify the measures and provide an initial examination of the scale’s psychometric properties, following Clark and Watson (1995) and Netemeyer et al. (2004). To ensure that raters knew the object they were evaluating (Rossiter 2003), respondents were 200 IT professionals, computer scientists, and experts in marketing and psychology (mean age = 32; 43% females), recruited in September 2021 through a market research company (Prolific.co).

A range of “representative constituents” of the constructs to be measured provides a safer generalization of the results (Rossiter 2003, 312). Accordingly, 6 AIs were considered: Knorr meal planner; Olay advisor; Pepper robot; Stitchfix personalized stylist; UnderArmour connected fitness; Victoria Beckham Messenger. They were all available at the time of data collection, covered different types of service (clothing, cosmetics, sports, food, etc.), and were identified with the help of a convenience sample of six experts (two retailers, two psychologists, and two computer scientists). All these AIs were free to use and could be used online, except one (Pepper Robot), which required an offline interaction. Thus, Pepper was evaluated only by respondents who declared they had recently interacted with it and passed a test to ascertain they actually had. To avoid fatigue, each respondent was assigned to two AIs, balanced so that each AI was evaluated by 50 respondents.

Respondents had to use an AI by clicking the link to the website hosting it and interacting with the AI. Then, they were administered the 50 items on seven-point Likert scales. After that, they used and rated the second AI. The appearance order of the AIs and the intelligence scales was randomized, as was the appearance order of the single items within each scale (Netemeyer et al. 2004). The ratings obtained for the 44 items were subjected to a series of iterative analyses consistent with Churchill’s (1979) paradigm for developing scales, as detailed in the following.

Dimensionality and Item purification: A factor analysis revealed the presence of 5 dimensions with Eigenvalues above 1, accounting for about 70% of the total variance, while no additional factor accounted for more than 3%. Thus, the scree-plot exhibited an elbow in the quantity of variance explained by these five factors. The initial principal components solution was rotated using Oblimin to examine the factor structure more closely.

Table 3 presents the factor loadings from this analysis. Sixteen items failed to load highly on the five factors or loaded relatively high on more than one factor. Thus, they were eliminated (Netemeyer et al. 2004). Furthermore, to have scales of the same length for each intelligence and concise enough for easier implementation, we retained the five best-performing items for each scale. Thus, 25 items comprising the first five factors were retained.
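The eigenvalue-based dimensionality check described above can be illustrated with a minimal sketch. It assumes the ratings are available as a respondents-by-items matrix; the function name `kaiser_summary` and the synthetic data are hypothetical, chosen only for illustration, and do not reproduce the study's data or its rotated solution.

```python
import numpy as np

def kaiser_summary(ratings):
    """Eigenvalues of the item correlation matrix (descending), the number
    of eigenvalues above 1, and the variance share those factors explain."""
    corr = np.corrcoef(ratings, rowvar=False)   # items are columns
    eigvals = np.linalg.eigvalsh(corr)[::-1]    # eigvalsh returns ascending order
    n_factors = int(np.sum(eigvals > 1.0))
    explained = float(eigvals[:n_factors].sum() / eigvals.sum())
    return eigvals, n_factors, explained

# Hypothetical synthetic data: 2 latent dimensions, 3 items each (NOT study data).
rng = np.random.default_rng(0)
latent = rng.normal(size=(500, 2))
loadings = np.array([[0.9, 0.9, 0.9, 0.0, 0.0, 0.0],
                     [0.0, 0.0, 0.0, 0.9, 0.9, 0.9]])
ratings = latent @ loadings + 0.3 * rng.normal(size=(500, 6))

eigvals, n_factors, explained = kaiser_summary(ratings)
print(n_factors, round(explained, 2))
```

On real data, the retained components would then be rotated (the study used Oblimin) before inspecting loadings; that step is omitted here.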

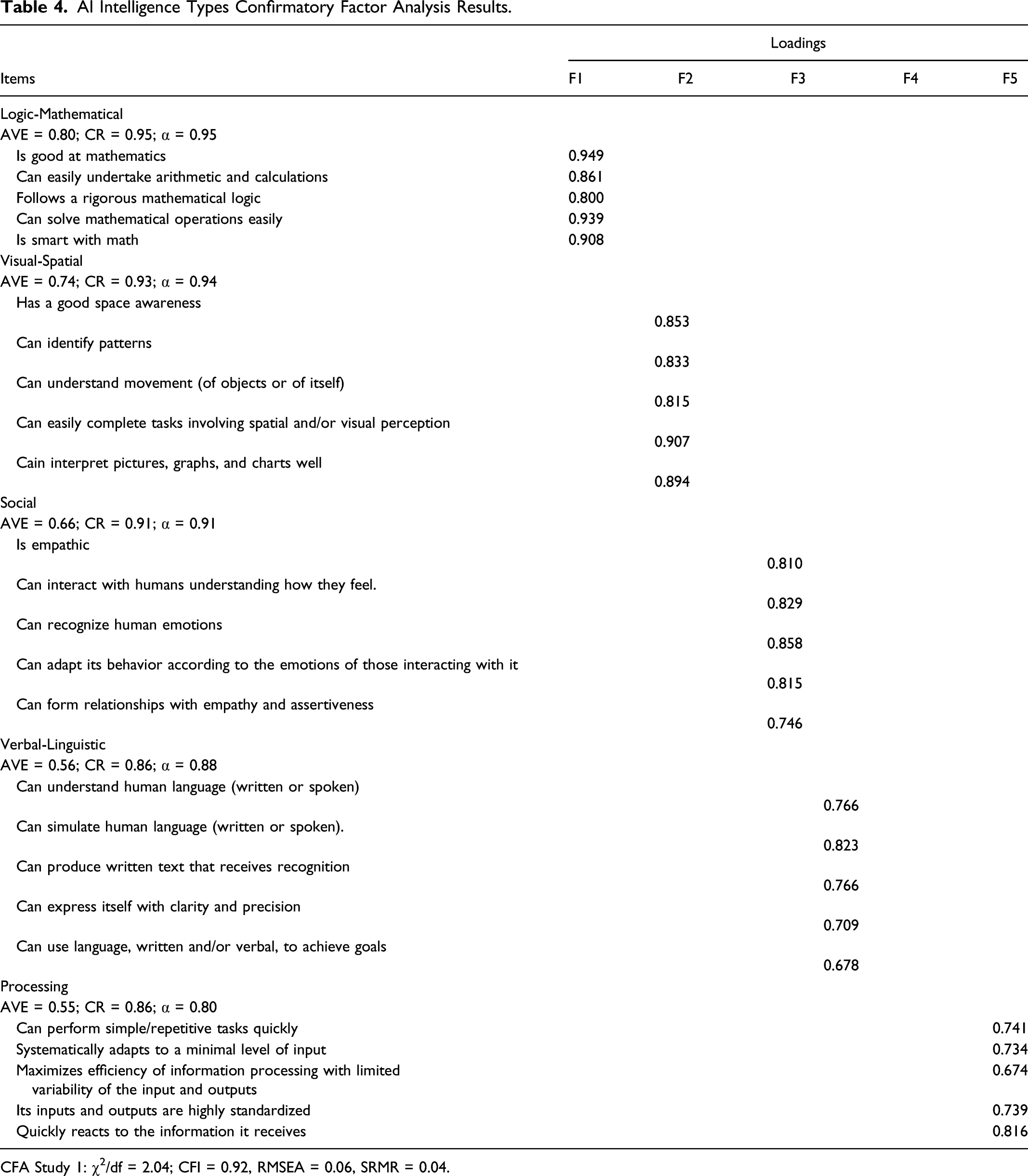

AI Intelligence Types Confirmatory Factor Analysis Results.

CFA Study 1: χ2/df = 2.04; CFI = 0.92, RMSEA = 0.06, SRMR = 0.04.

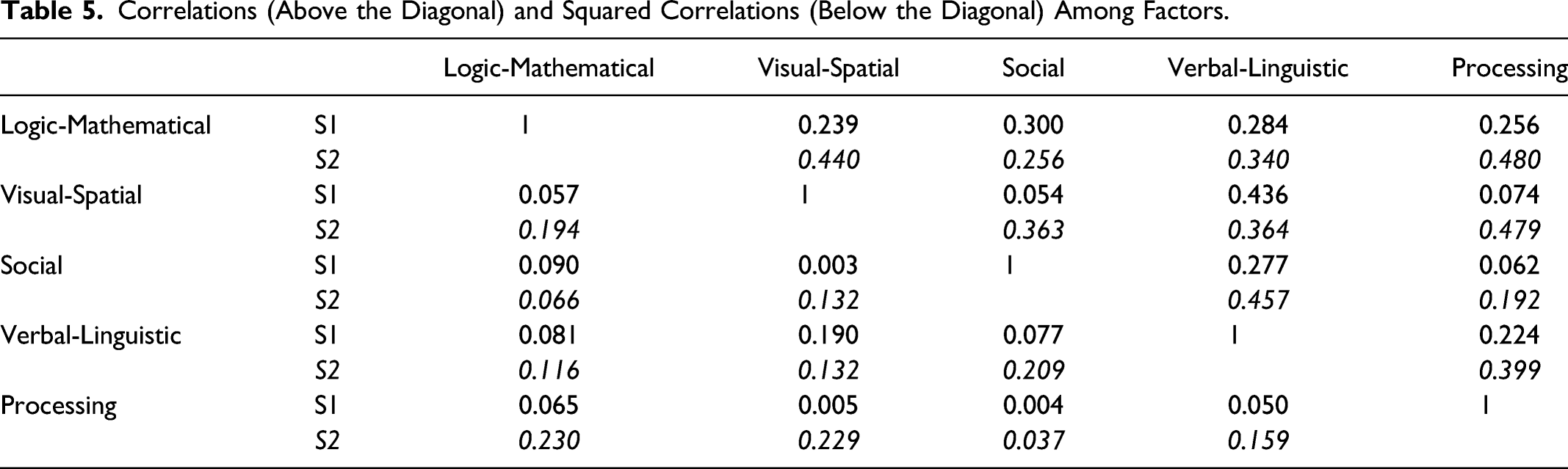

Correlations (Above the Diagonal) and Squared Correlations (Below the Diagonal) Among Factors.

Discussion: These results supported the scale’s psychometric properties and factorial structure. The five factors consisted of items representing Logic-Mathematical (factor 1), Social (factor 2), Visual-Spatial (factor 3), Verbal-Linguistic (factor 4), and Processing-Speed (factor 5) intelligence. This evidence supports Hypothesis 1: similar to human intelligence, AI systems also have multidimensional intelligence.

The scale displays good psychometric properties. Although these results provide evidence of construct validity, Study 2 further validates and extends them, relating them to consumers’ emotions, satisfaction, and usage continuation intention.

Study 2: Consumers’ Emotional Response to AI

Sample and Procedure

Following Rossiter’s (2003) guidance on rater type and adequacy, in Study 2 we validated the scale from Study 1 on 300 adult customers (mean age = 28; 43% females). Potential respondents representative of the clientele’s demographic profile were contacted and asked to participate in a study about AI. Over the next 9 weeks (October and November 2021), the interviewers accompanied the respondents on a shopping trip. Respondents interacted with the AI while shopping, then filled out a survey to measure the AI intelligences (as developed in Study 1), their emotions from interacting with the AI (Multidimensional Emotion Questionnaire: MEQ; Klonsky et al. 2019), satisfaction (Lim et al. 2019), technology continuation intention (Balakrishnan and Dwivedi 2021), and emotional attachment to the service provider as a consequence of using that AI (adapted from Sánchez-Fernández and Jiménez-Castillo 2021).

MEQ is based on five positive (happy, excited, enthusiastic, proud, and inspired) and five negative emotions (sad, afraid, angry, ashamed, and anxious). It aligns with PANAS (Watson, Clark, and Tellegen 1988), was employed in several studies on human emotions (e.g., Izard 2007; Panksepp 2007), and was even deemed to be the “most appropriate for marketing” (Huang 2001, 245). Although anxiety is not included in PANAS, it is reflected in the PANAS Fear scale that correlates highly with anxiety (Watson and Clark 1994).

Scales’ Reliability

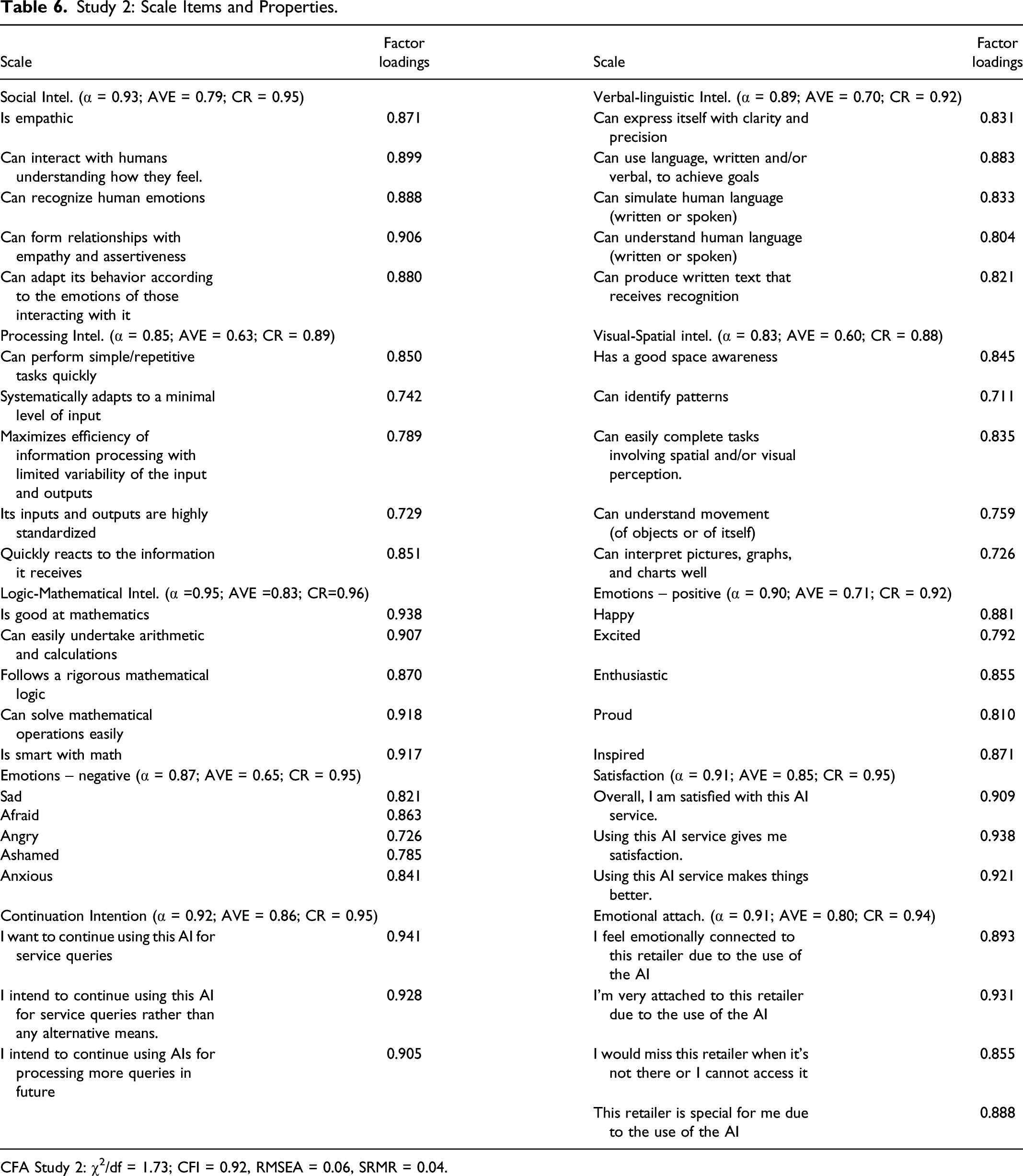

The confirmatory factor analysis (Oblimin rotation) exhibited a satisfactory fit (χ2/df = 1.73; CFI = 0.92; RMSEA = 0.06; SRMR = 0.04).

Study 2: Scale Items and Properties.

CFA Study 2: χ2/df = 1.73; CFI = 0.92, RMSEA = 0.06, SRMR = 0.04.

Because the dependent and independent variables were measured through responses from the same respondents, we checked for potential common method bias using the Harman one-factor test, following the approach of previous service researchers (e.g., Chen, Tsou, and Huang 2009). According to this technique, common method variance is present if a single factor emerges or one “general” factor accounts for more than 50% of the variables’ covariation. A single factor did not emerge, and imposing a one-factor solution significantly worsened the fit (χ2/df = 7.16; p < 0.001) and accounted for significantly less than 50% of the covariation. Furthermore, the method by Bagozzi, Yi, and Phillips (1991) provides converging evidence that common method bias is unlikely to be a concern in the data: the correlation among principal constructs is no higher than 0.48 (see Table 5), thus well below the 0.9 threshold (Bagozzi et al. 1991).
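As a rough illustration of the 50% criterion in the Harman one-factor test, the sketch below computes the share of total variance captured by the first unrotated principal component of the item correlation matrix. This is one common way to operationalize the test and is an assumption here, not necessarily the exact procedure used in the study; the function name `first_factor_share` and both synthetic data sets are hypothetical.

```python
import numpy as np

def first_factor_share(responses):
    """Share of total variance captured by the first unrotated principal
    component of the item correlation matrix."""
    corr = np.corrcoef(responses, rowvar=False)
    eigvals = np.linalg.eigvalsh(corr)          # ascending order
    return float(eigvals[-1] / eigvals.sum())

rng = np.random.default_rng(42)
n = 400

# Hypothetical case A: three independent constructs, four items each.
constructs = rng.normal(size=(n, 3))
separate = 0.8 * np.repeat(constructs, 4, axis=1) + 0.6 * rng.normal(size=(n, 12))

# Hypothetical case B: one common source drives all twelve items.
common = rng.normal(size=(n, 1))
contaminated = 0.8 * common + 0.6 * rng.normal(size=(n, 12))

share_separate = first_factor_share(separate)
share_contaminated = first_factor_share(contaminated)
print(share_separate < 0.5, share_contaminated > 0.5)
```

In case A the first component stays well below the 50% threshold, whereas in case B a single general factor dominates, which is the pattern the test is meant to flag.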

This initial evidence from Study 2 further supports Hypothesis 1, providing external validity on a sample of non-experts: multiple AI intelligences emerge in Study 2 as they did in Study 1.

Results

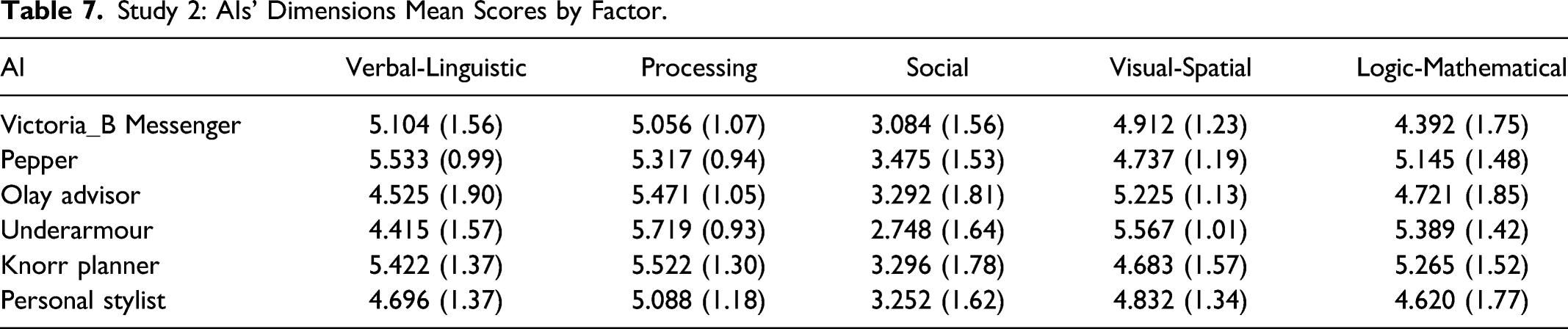

Study 2: AIs’ Dimensions Mean Scores by Factor.

Then, a structural equation model was run in SPSS-AMOS, as presented in Figure 2, to compare the impact of the five intelligences on positive and negative emotions. The model also assesses the role of emotions as mediators of the relationship between intelligence types and consumers’ responses (satisfaction, continuance intention, and emotional attachment to the service provider).

The mediation model.

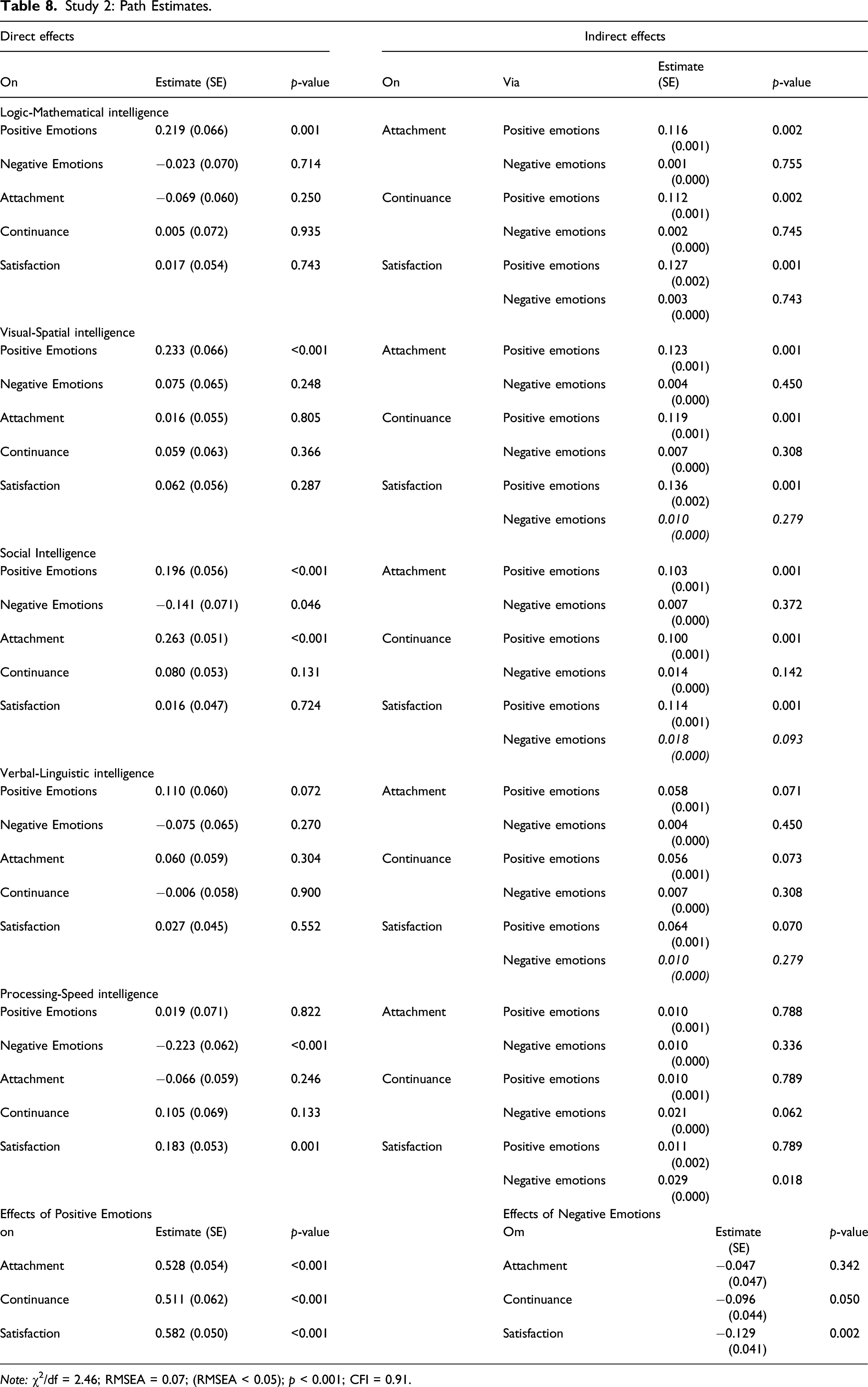

Study 2: Path Estimates.

Note: χ2/df = 2.46; RMSEA = 0.07; (RMSEA < 0.05); p < 0.001; CFI = 0.91.

In turn, positive emotions equally impacted satisfaction (0.582, p < 0.001), continuance intention (0.511, p < 0.001), and emotional attachment (0.528, p < 0.001), while negative emotions decreased satisfaction (−0.129, p < 0.001) and continuance intention (−0.096, p = 0.050) (no effect emerged on emotional attachment: −0.47, p = 0.342).

To test for mediation (H5 and H6), we used Preacher and Hayes’s (2004) approach with the Sobel test. Regarding the mediation by the positive emotions, the results showed a significant indirect effect of all intelligences on attachment, continuance, and satisfaction (though marginally for verbal-linguistic intelligence), except for processing-speed intelligence, whose indirect effect was not significant (see Table 8). Regarding the mediation by the negative emotions, the indirect effects were significant only for processing-speed on satisfaction and, marginally, on continuance (see Table 8). Overall, the combined direct and indirect paths evidence supports H5 and only partially supports H6. Positive and (in part) negative emotions mediate the relationship between intelligence type and consumers’ response.
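For readers unfamiliar with the Sobel test, the following minimal sketch shows how an indirect effect is tested from two path coefficients and their standard errors, using the first-order formula z = ab / sqrt(b²·SE_a² + a²·SE_b²). The function name `sobel_test` and the numeric inputs are hypothetical placeholders, not estimates from Study 2.

```python
import math

def sobel_test(a, se_a, b, se_b):
    """Sobel z statistic and two-tailed p-value for the indirect effect a*b.

    a: path from predictor to mediator, b: path from mediator to outcome,
    se_a and se_b: their standard errors.
    """
    se_ab = math.sqrt(b ** 2 * se_a ** 2 + a ** 2 * se_b ** 2)
    z = (a * b) / se_ab
    p = math.erfc(abs(z) / math.sqrt(2))   # two-tailed p under a standard normal
    return z, p

# Hypothetical coefficients for illustration only (NOT the study's estimates).
z, p = sobel_test(a=0.40, se_a=0.05, b=0.58, se_b=0.06)
print(z > 1.96, p < 0.05)
```

A significant z indicates that the mediator (here, an emotion) carries part of the effect of the intelligence type on the outcome. Bootstrapped confidence intervals are a frequently used alternative when the normality assumption behind the Sobel test is in doubt.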

Finally, we examined whether the emotional reaction to intelligence types differed due to some characteristics of the consumers. However, neither age, gender, education, nor mood significantly affected the emotion-intelligence relationships.

Discussion and Conclusion

This research aimed to understand the extent to which AI systems can have multiple intelligences and whether different intelligences arouse different emotional responses in consumers. To this end, we conducted two studies based on UK respondents: Study 1 developed a measurement scale for evaluating the artificial intelligence types based on experts’ evaluations. Study 2 further validated the scale and assessed consumers’ emotions when interacting with AI-based service. It showed that the different AI intelligence types have different effects on consumers’ responses. Further, it revealed that positive and negative emotions mediate the relationship between AI intelligences and consumers’ satisfaction, continuance intention, and emotional attachment to the service provider.

This research answers recent calls to develop ways to evaluate AI systems’ intelligence (Hernández-Orallo 2017; Li et al. 2018) and address consumers’ emotions when interacting with AI (Huang and Rust 2021a). There are numerous contributions from this study. First, we identify AI types starting from definitions and studies of HI (Table 1). Compared to other measurements (Grewal et al. 2020; Huang and Rust 2018, 2021b; Montes and Goertzel 2019; Van Doorn et al. 2017), our approach evaluates AI intelligences (Table 2) against human intelligences (Table 1; Figure 1).

Consequently, our approach expands Huang and Rust’s (2018, 2021b) framework on artificial intelligence through the comparison and contrast with human intelligence (Table 1). In this way, we provide a new guideline to develop AIs able to mimic human abilities better. Moreover, our approach might be more comprehensive because it considers how artificial intelligence needs to mimic human intelligence and solicit certain emotions in consumers who interact with those systems. For instance, verbal-linguistic intelligence is not the ability to feel empathy or have intuitions; rather, it adds the ability to express them efficiently. Thus, it complements Huang and Rust’s classification, separating the ability to feel (Social intelligence), to understand (Logic-Mathematical intelligence), and to express those feelings and intuitions verbally (Verbal-Linguistic intelligence).

Similarly, we add Visual-Spatial intelligence. This intelligence type complements Huang and Rust (2018, 2021b), adding a specific ability to understand images and spaces. In less evolved AI, where visual or space-related tasks are routine (e.g., identifying a bar code, seeing a human figure), the visual-spatial dimension can be subsumed under Huang and Rust’s mechanical intelligence. However, as AI evolves, the ability to understand space leaves the domain of repeated, routine tasks. It comes closer to humans’ ability to generate and transform well-structured visual images, visualize shapes in the “mind’s eye”, and identify movement patterns of objects, which is a different intelligence from the mechanical one.

Second, previous studies usually assessed AI intelligences based on experts’ qualitative opinions (e.g., Huang and Rust 2018). Instead, we developed a multidimensional measurement scale for AI, similar to the scales of HI (Table 2), and validated it on a panel of experts (Study 1). Then, we drew on social interaction theory (Cuddy, Fiske, and Glick 2008) to propose that general customers could also form an idea of an AI’s intelligence when interacting with it, just as they do when interacting with other humans. Thus, we also tested the scale on general service customers (Study 2). We believe this is a significant step toward developing ways to evaluate AI systems’ intelligences (Hernández-Orallo 2017; Li et al. 2018). Our results show the extent to which artificial intelligences are configurable, describable, and measurable, much as is done for human intelligences (Yavich and Rotnitsky 2020).

Third, Study 2 adds that recent AI-based service can already generate and communicate emotions, and different types of AI lead consumers to different emotions. This evidence sheds light on the emotion transfer occurring during consumer-AI interactions in service, not fully covered by past studies (Huang and Rust 2021a).

Fourth, Study 2 also highlights that the intelligence types do not symmetrically affect positive and negative emotions: intelligences inducing the former do not necessarily prevent the latter, and vice versa. Specifically, Logic-Mathematical and Visual-Spatial intelligence increase positive emotions but do not decrease negative emotions. Instead, Processing-Speed intelligence decreases negative emotions but does not increase positive emotions. Only Social intelligence affects both positive and negative emotions.

Moreover, the mediation analysis provided in Study 2 highlights that positive (negative) emotions positively (negatively) mediate the relationship between AI intelligences and consumers’ attachment to the service provider, satisfaction, and technology continuation intention. This evidence extends recent literature on the multiple benefits of AI (Huang and Rust 2018, 2021b; Kumar et al. 2019) and past studies on humans’ development of emotions toward inanimate objects (Badrinarayanan and Becerra 2019; Dwivedi et al. 2019; Park et al. 2010; Raggiotto and Scarpi 2021) with new evidence about the human emotional response to AI-based service.

Overall, Study 2 provides evidence that the different AI intelligences differ significantly regarding which emotions they affect and how strongly. This finding answers recent calls to determine how people react (Shin 2021) and what they feel (Huang and Rust 2021a) when interacting with different AI intelligences. In addition, this study extends previous research on how people are willing to extend real-life psychological dynamics towards artificial entities (Russo, Durandoni, and Guazzini 2021) with evidence from the service context.

Summarizing, the results demonstrate that (i) intelligence classifications used for HI can be used as a theoretical base to understand AI, (ii) intelligences for AI can be diverse just as human intelligences are, and (iii) some AI display multiple dominant intelligences simultaneously. Finally, consumers react differently to AI intelligences by showing different emotions (positive: happiness, excitement, enthusiasm, pride, and inspiration; negative: sadness, fear, anger, shame, and anxiety) with different intensities, and different levels of emotional attachment, satisfaction, and technology continuation intention. Thus, our scale might be considered a starting point for future research in AI, leading to specific, measurable intelligence analyses.

Managerial Implications

Introducing AI in service may help managers improve and complement the service provided by their traditional sales personnel. First, managers should be aware that different types of AI intelligences exist, and that different AI types elicit different reactions in consumers. Indeed, consumers form different opinions about the type and amount of intelligence of the AIs they interact with. Moreover, the interaction with an AI generates emotions in customers, just as happens when they interact with human personnel. Accordingly, we recommend that practitioners care about customer-AI interactions no differently than they care about interactions between customers and human personnel. Thus, practitioners should consider introducing specific AI types based on the emotional responses they want to generate or avoid in consumers. For instance, the findings show that Social intelligence generates emotional attachment to the service provider and does so more than Verbal-Linguistic intelligence. In contrast, Processing-Speed intelligence does not generate emotional attachment at all.

However, Processing-Speed intelligence helps reduce negative emotions and directly and positively affects satisfaction, while Logic-Mathematical, Visual-Spatial, and Verbal-Linguistic intelligence do not. Since Social intelligence was still relatively weak (even in those AI that scored highest in their ability to express themselves), we recommend efforts, especially in research and development, to increase machines’ ability to understand human emotions and respond to social cues accordingly.

Thus, new AIs might be developed to support the service traditionally offered by in-store sales personnel. Similarly, online, where interactions with human personnel are more limited, AI needs to develop Social intelligence to create an emotional attachment to the service provider. By contrast, AI with higher Processing-Speed intelligence would increase satisfaction while reducing negative emotions. Thus, it can be especially supportive for new payment systems or product searching in-store and online.

Practitioners might use these results as guidance when developing an AI-based service. Our results also suggest that there is no need to provide all five intelligence types in one single AI, as, for instance, Logic-Mathematical and Visual-Spatial intelligence are equally capable of inducing positive emotions. Similarly, Social and Processing-Speed intelligence are both capable of reducing negative emotions. As our results show, the emotions generated by the interaction with an AI system mediate key outcomes such as customers’ satisfaction and usage continuance.

Finally, introducing specific AI would help managers complement traditional service and reduce human-to-human interaction, which might be valuable under health and safety risks, such as during pandemics or while interacting with vulnerable consumers (i.e., consumers with severe health conditions).

Limitations and Future Research

Despite its contributions, this research has some limitations. First, this study only addressed the intensity of consumers’ emotions when faced with different AI intelligences. Thus, future studies could use a longitudinal approach to investigate the effects of AI intelligence on emotional frequency and persistence, thereby accounting for all components of emotional chronometry.

Second, future studies might investigate additional emotional responses (e.g., pleasure and reactance) and include specific negative emotions (e.g., disappointment, madness, cognates of disgust, guilt, envy, and chagrin), broadening the spectrum of emotions that AI could arouse.

Furthermore, we did not address the possible ethical issues. Consumers might not always prefer an AI-based service (Pitardi et al. 2022). For instance, they might not want an AI to be “too” intelligent in embarrassing service encounters (Lobschat et al. 2021). This consideration raises further questions about how to balance AI-based and human service to avoid unexpected outcomes.

Finally, what prevents AI from violating ethics, including consumers’ privacy, if artificial intelligence’s ethical and moral intelligence is still underdeveloped compared with that of humans? We encourage future works to adopt an ethical perspective to define the boundaries of AI applications. Such efforts may lead to more realistic and effective “laws of robotics” than those proposed by Asimov in the science-fiction book “I, Robot” in 1950.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.