Abstract

Prototypes—internalized knowledge structures of the most typical or characteristic features of a concept—are important because they influence cognitive processing. Yet prototype analysis, the method used to examine prototypes, appears relatively underutilized in organizational research. To introduce prototype analysis to a wider audience of organizational scholars, we conducted a critical methodological literature review following a six-step procedure. Seventy-three prototype analyses published in 35 journals were categorized and their content analyzed. A prototype analysis typically includes a sequence of independent studies conducted over two stages, recently referred to as the standard procedure. Our review makes several contributions, including development of a taxonomy of prototype analysis applications, clarification of the standard procedure of a prototype analysis and possible variations, and suggestions for organizational research. Benefits of undertaking a prototype analysis include improved understanding of abstract workplace concepts that are difficult to measure directly, the ability to compare cross-cultural prototypes, and an approach for investigating the issue of construct redundancy. We conclude with best-practice recommendations, implications for organizational scholarship, methodological limitations, and future research suggestions.

Organizational research faces a number of conceptual and measurement issues associated with the use of abstract concepts that prototype analysis can help address. Prototype analysis is an empirical method based on prototype theory (Rosch, 1973) used to identify and study concept prototypes (Fehr, 1982, 1986, 1988). As such, “a prototype analysis is a descriptive, not a prescriptive, analysis” (Fehr & Russell, 1984, p. 484). To illustrate, a prototype analysis can be used to “flesh out the content and structure of a particular concept” (Fehr, 2005, p. 185) and to compare related concepts from the same family (Jones et al., 2018; Kito, 2016). Hence, a prototype analysis could help reduce the proliferation of constructs and of their differing measures in organizational research (Jones et al., 2018). Psychometric measures derived from prototypes potentially offer greater precision than those developed using factor analysis and so may better meet construct validity requirements (Bergner, 2023). Prototype analysis results can also elucidate nuances in different cross-cultural cognitive structures (Sun et al., 2024a), evaluate competing theories about concept content and structure, and suggest new theoretical directions (Fehr, 2005). Yet while prototype analysis has been used for decades to capture the meanings laypeople attach to specific concepts (Fehr, 2005), the method appears relatively underutilized in organizational research. Further, prototype analyses vary in design and are dispersed across numerous disciplines, making it difficult to identify best practices and the full range of organizational research questions a prototype analysis can address.

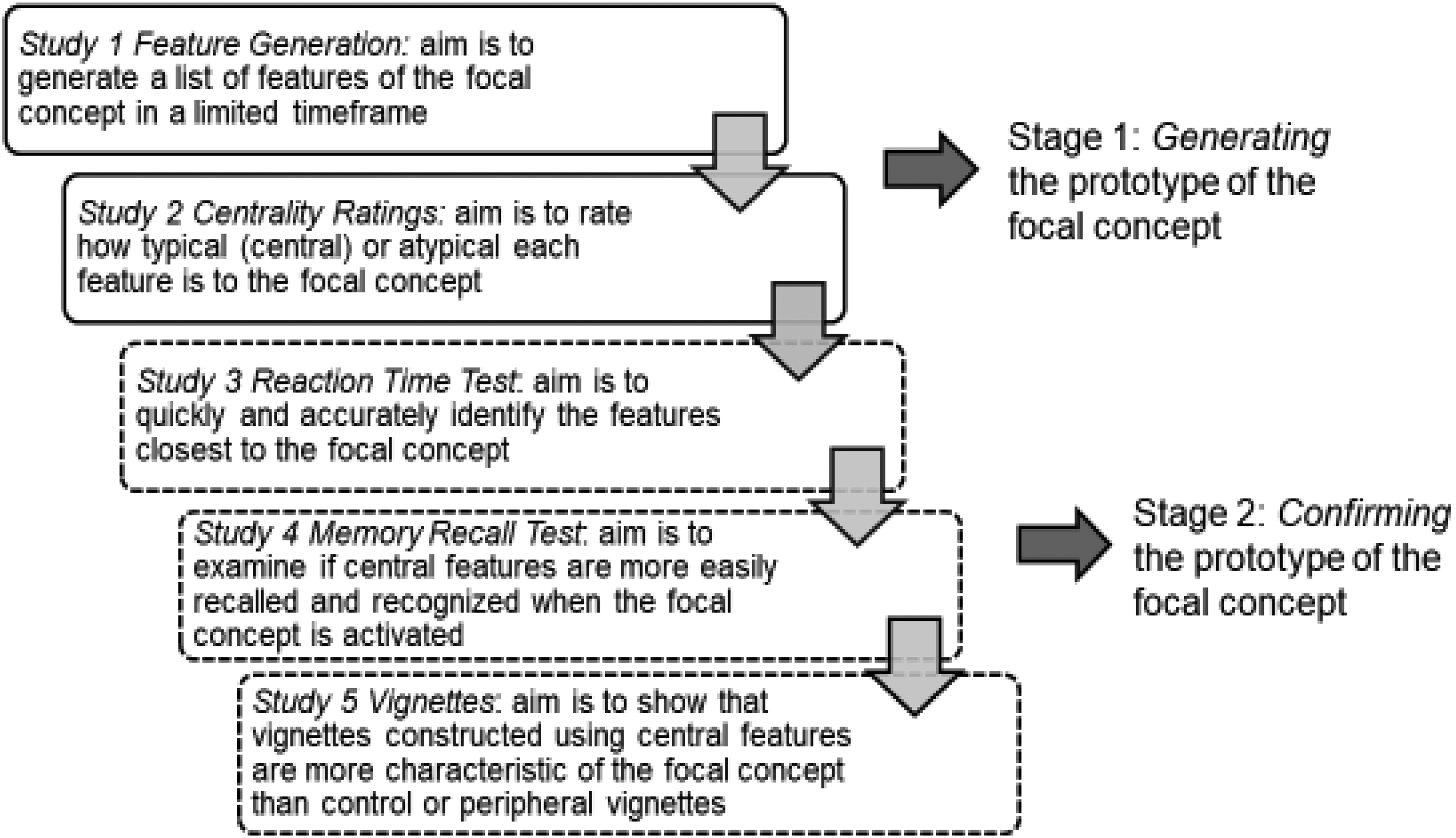

Prototypes, or people's internalized knowledge structures, are important because they influence cognitive processing (e.g., speed, memory recall, interpretation) (Baldwin, 1995; Fehr, 2005). Two conditions must be met in order for a concept to exhibit a prototypical structure: (1) individuals must be able to identify the features of the concept and reach consensus regarding their relative significance; and (2) the internal cognitive schema associated with the prototype ought to exert a discernible influence on the way in which individuals interpret and process information about the concept (Rosch, 1975). Prototype analyses are, therefore, typically structured as a sequence of independent studies over two stages that generate and then confirm the prototype, as depicted in Figure 1 (e.g., see Fehr, 1986; Fehr & Russell, 1984). This research design has recently been referred to in prototype analysis as the “standard procedure” (Luo et al., 2022, p. 5; Schreuder et al., 2024, p. 45; Schweiger Gallo, Görke, et al., 2024, p. 460; Sun et al., 2024a, p. 7), a term also adopted in this review.

Figure 1. Example design of the prototype analysis method with standard procedure: the horizontal dimension.

A prototype analysis, at its simplest, answers research questions of the forms what is X? and how does X relate to Y? Empirical examples of prototype-based methods occur in various research domains, including psychology (e.g., meaning of a “good person,” Smith et al., 2007), sociology (e.g., comparison of prototypical meat-eaters with vegetarians, Johnston et al., 2021), economics (e.g., prototypicality of forms of saving, Groenland et al., 1996), leadership (e.g., characteristics of perceived leaders, Lord et al., 1984), followership (e.g., characteristics of an “effective follower”/“ineffective follower” or subordinate, Sy, 2010), organizational behavior (OB) (e.g., differentiation of group rituals from group norms, Kim et al., 2021), human resource management (HRM) (e.g., understanding of employee well-being, Jarden et al., 2018), entrepreneurship (e.g., “business opportunity” prototype of experienced vs. novice entrepreneurs, Baron & Ensley, 2006), and marketing (e.g., assessment of the independence of consumers’ commitment to service providers, Jones et al., 2009). While these examples typically follow Rosch (1973), not all follow the standard procedure (e.g., Groenland et al., 1996; Johnston et al., 2021).

The purpose of this critical methodological review of the prototype analysis method is to provide a one-stop shop resource that will help introduce a wider audience of organizational researchers to this method, following the guidelines of Aguinis et al. (2023) to ensure its thoroughness, clarity, and usefulness. In organizational contexts, prototype analysis may diverge from other fields due to an emphasis on how prototypes influence outcomes (e.g., Baron, 2006) and a distinction between ideal and typical prototype forms (e.g., “effective followers” vs. “followers,” Sy, 2010). This suggests a potential methodological shift toward a more pragmatic and context-sensitive approach. Our categorization and content analysis of 40 years of prototype analyses make three major contributions: (1) development of a taxonomy of prototype analysis applications; (2) clarification of the standard procedure of a prototype analysis and possible variations; and (3) recommendations and implications for organizational scholarship. This review is timely given recent interest by organizational scholars in prototype analysis (e.g., Kim et al., 2021; Migge et al., 2020; Schreuder et al., 2024) and potential method advancements since Fehr (2005). The use of the prototype analysis method has also increased, with nearly half (48%) of the studies in this critical methodological review published in the last decade. The review begins with an overview of prototype theory (Rosch, 1973), contrasting it with alternative approaches to concept definition and its potential role in reducing construct proliferation. Major advances in the prototype analysis method are also highlighted. The procedure used in conducting the critical review, including the findings, is described next, followed by best-practice recommendations, implications for organizational research, methodological limitations, and future research suggestions.

Overview of Prototype Theory and Evolution of the Prototype Analysis Method

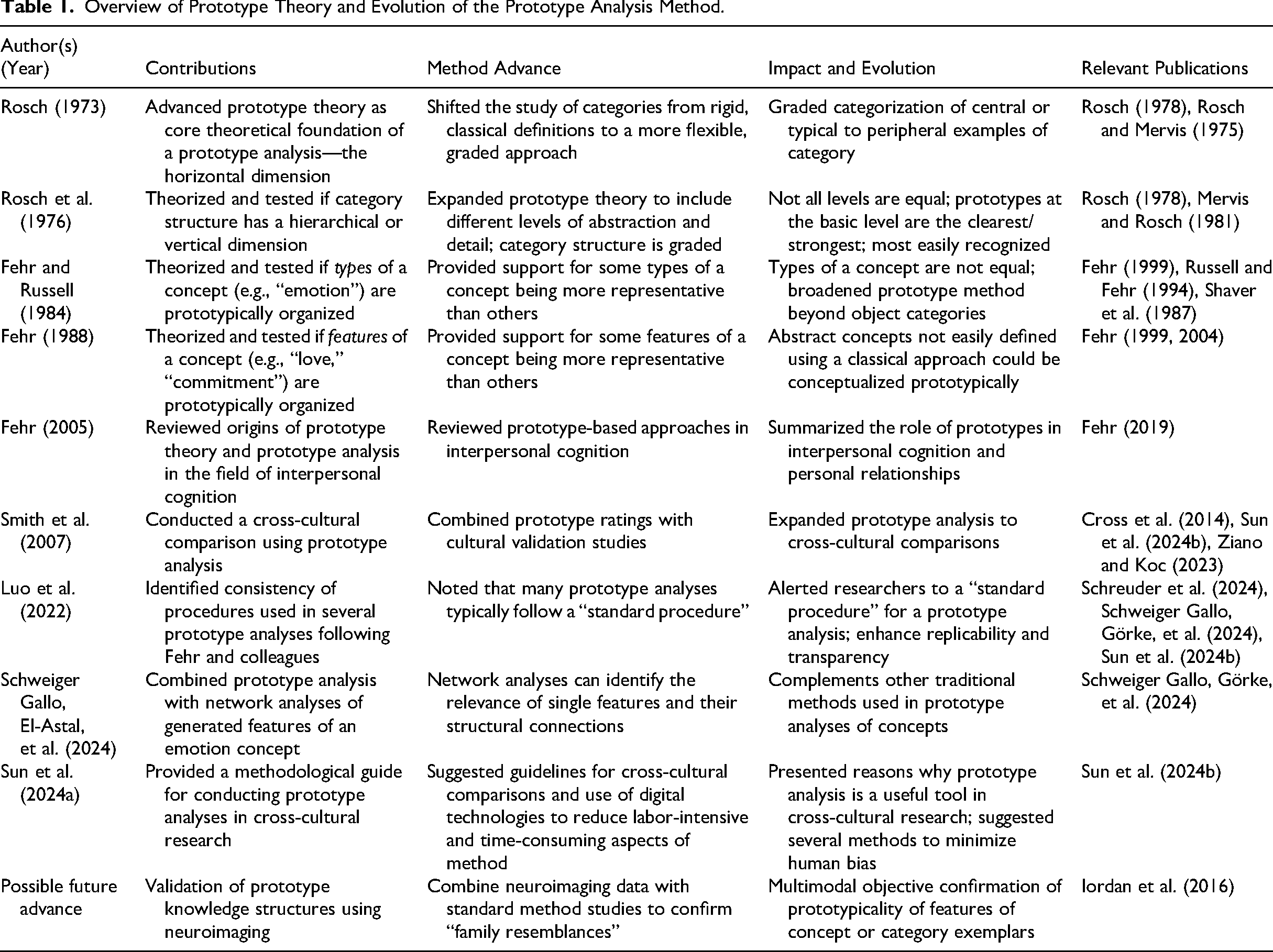

Rosch (1973) theorized that concept categories within perceptual domains are not arbitrary but rather are composed of the best examples or clearest cases of a category. Category members share many features, and the composite of the most frequent features defines the prototype. Since this initial theorization, further work has advanced both prototype theory and the prototype analysis method, as highlighted in Table 1.

Table 1. Overview of Prototype Theory and Evolution of the Prototype Analysis Method.

In prototype theory, there is a horizontal dimension describing typicality of features or members of a concept and a vertical dimension describing hierarchical relationships between concept categories (Rosch, 1973; Rosch et al., 1976). A major assumption in prototype theory is that the boundaries between horizontal and vertical categories are fuzzy or ill-defined (Rosch, 1973). In the horizontal dimension, not all category members or features are equal—some are more central or typical than others (e.g., for “love,” they could include “affection,” “attachment,” “caring”), and some are peripheral with more in common with an adjacent concept category (e.g., “admiration,” “gratitude,” “altruism”) or even a contradictory feature (e.g., “jealousy”), reflecting lived experiences. Each member within a horizontal category may share features with one or more other members; however, only a limited number of features may be shared by all members of the category. This overlapping similarity reflects “family resemblance,” which can be represented conceptually on a Venn diagram (e.g., see Jones et al., 2009; Lord et al., 1984). Figure 1 illustrates the design of a prototype analysis targeted at understanding the horizontal dimension of a concept (Fehr, 1988).
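The idea of overlapping similarity can be made concrete with a small scoring sketch. The function below weights each feature by the number of category members that possess it and sums those weights for each member, so members that share many common features score higher. This is one simple way to operationalize family resemblance, offered as an assumption for illustration rather than as the procedure of any study in this review; the function name and the toy members and features are hypothetical.

```python
from collections import Counter


def family_resemblance_scores(members):
    """Score each category member by how widely its features are shared.

    members: dict mapping a member name to a set of feature labels.
    Each feature is weighted by the number of members that have it;
    a member's score is the sum of the weights of its features.
    """
    # Count, for every feature, how many members possess it.
    feature_weights = Counter(
        feature for features in members.values() for feature in features
    )
    return {
        name: sum(feature_weights[feature] for feature in features)
        for name, features in members.items()
    }


# Hypothetical toy data, invented purely for illustration.
toy_members = {
    "romantic love": {"caring", "affection", "attraction"},
    "friendship love": {"caring", "affection", "trust"},
    "infatuation": {"attraction", "obsession"},
}

scores = family_resemblance_scores(toy_members)
for name, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(name, score)
```

In this toy example, no single feature is shared by all three members, yet the members still overlap pairwise, which is the pattern the family resemblance notion describes.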

Rosch et al. (1976) provided evidence for a vertical dimension to concept categorization, organized by the degree of abstraction and specificity. At the top is the superordinate level, the most general or abstract category (e.g., “emotion”); the basic level, such as “love,” is learned first language-wise, carries the most information, and is cognitively the most efficient; and the subordinate level is where finer-grade and more detailed distinctions are made (Rosch et al., 1976). For “love,” the subordinate level could distinguish between “romantic love,” “compassionate love,” and “filial love.” Categories tend to become narrower and more detailed with each step down the hierarchy (Fehr, 1999). Additional studies to those illustrated in Figure 1 are needed to assess the vertical structure of a concept (e.g., see Fehr, 1999). Prototype theory can also be applied to organizational concept categorization, such as “trust,” where some conceptual ambiguity persists despite considerable research attention (Dirks & de Jong, 2022). For example, there may be conceptual overlap between “trust” and adjacent concepts, such as “honesty,” “loyalty,” “communication,” and “caring” (Kito, 2016), and even “distrust” and “mistrust.” Vertically, “relationship quality” may form the superordinate category, with “generalized trust” being the most efficient and basic level of concept categorization; and at the subordinate level, there are potentially numerous other specific trust concepts including “trust in coworkers,” “trust in leaders,” or even “xinren (trust) in buyer–seller relationships” (Migge et al., 2020).

The application of prototype theory to concept definition has not, however, been without critique (Clore & Ortony, 1991; Johnson-Laird & Oatley, 1989), including from classical essentialist and cultural consensus approaches (Fehr, 2019; Hegi & Bergner, 2010; Heshmati et al., 2019). Both classical and cultural consensus approaches focus on developing a succinct concept definition by assuming a necessary and sufficient set of concept features exist; “essential” features must be present for X to relate to Y and achieve cultural consensus across society (Hegi & Bergner, 2010; Heshmati et al., 2019). Yet when considering the well-researched concept of “love,” Hegi and Bergner indicated that a necessary condition (investment in the well-being of another) is met but that this feature set was not sufficient to define “love.” Similarly, Heshmati et al. confirmed there is little cultural consensus for “felt love” due to the complex variety of signals for the different types of love. Consequently, how people organize knowledge varies between how they define love and what signals they use to construct a good relationship (Fehr, 2019). Prototypical features with high levels of category membership may not necessarily be perceptually available (Clore & Ortony, 1991) but can be used to develop scales around “how they [people] think about these concepts” (Fehr, 2019, p. 166). While the various approaches to concept definition differ, they can nevertheless complement one another (Armstrong et al., 1983; Clore & Ortony, 1991; Russell, 1991). For example, “grandmothers often have grey hair, but not necessarily. Grandmothers are also mothers of a parent—this is necessarily so and is known by simply knowing the meaning of the terms” (Russell, 1991, p. 38). Gray hair is a feature of the “grandmother prototype,” without precluding the classical definition that necessitates being a parent of a parent.

As highlighted above, in circumstances where the classical essentialist approach to concept definition is unsupported, prototype theory offers an alternative by viewing conceptual knowledge as organized around a prototype rather than a fixed set of defining features (Fehr, 1986). Not all features are equal or representative in prototype theory, with some features being more central to the concept. In organizational research domains where construct redundancy is a persistent problem, such as OB (Le et al., 2010) and leadership (Eva et al., 2025), prototype theory may offer new insights into how people think about abstract concepts. For example, a meta-analysis by Hoch et al. (2018) showed that “ethical,” “authentic,” and “transformational leadership” styles have overlapping variance, with little incremental validity. In prototype theory, some features of leadership may be thought of as central to all contexts, with others being more context sensitive (Lord et al., 1984). Hence, a more parsimonious conceptual map of leadership styles could potentially be derived from shared and unique features, rather than creating new constructs for marginal cases that everyday people find difficult to distinguish.

The Prototype Analysis Method

Fehr (2005) reviewed the role of prototypes in interpersonal cognition and concluded that two research streams have been particularly influential in advancing the method since Rosch (1973): (1) types of concepts (e.g., love, Fehr, 1994; Fehr & Russell, 1991) and (2) features of concepts, including complex relational patterns (e.g., love, Fehr, 1994; love and commitment, Fehr, 1988). Horizontal and vertical dimensions of concepts have been examined within both streams, although the vertical dimension potentially requires separate studies (Fehr, 1999). A significant advancement in the prototype analysis method has also been its application to cross-cultural research (e.g., see Smith et al., 2007), culminating in a recent detailed methodological guide on how to conduct such prototype analyses (Sun et al., 2024a). This and other method advancements in Table 1 are elaborated on later in the review.

Other Prototype-Based Methods

Other prototype-based methods, following Rosch (1973, 1978) but not necessarily the standard procedure, have been conducted in sociology (Johnston et al., 2012, 2021; Walters, 2002; Wulff, 2019) and economics (Groenland et al., 1996). The focus in these disciplines has largely been on developing cognitive prototypes used for classifying and/or comparing different “segments” of society. For example, Johnston et al. (2021) developed and compared cognitive prototypes for meat-eating and vegetarianism segments within Canadian ethnic groups. Each approach develops features either from existing literature or uses grounded theory approaches rather than undertaking feature generation and centrality rating studies, as in the standard procedure. Developing cognitive prototypes can involve other methods, including interviews and thematic analysis (Johnston et al., 2012, 2021), cluster analysis (Groenland et al., 1996), historical secondary data (Walters, 2002), and Q-sorting (Wulff, 2019).

Prototype-based approaches have also been used to understand concepts in HRM (Stone & Colella, 1996) and entrepreneurship (Baron, 2006; Baron & Ensley, 2006; Costa et al., 2018). Stone and Colella theorized that prototype analysis is a relatively unobtrusive method that could enhance understanding of a person with a disability because it may help avoid automatic and restrictive assumptions. In entrepreneurship, Baron and Ensley compared how experienced versus first-time entrepreneurs conceptualize the “business opportunity” prototype by analyzing responses to two open-ended questions, rather than a typical feature generation study. Costa et al. did not conduct a prototype analysis; rather, they showed how the features of the business opportunity prototype could be used in a training program to improve students’ recognition of viable business opportunities.

The application of prototype theory (Rosch, 1973) to leadership is a well-established line of inquiry, generating over 40 years of research primarily focused on understanding how characteristics of leaders (implicit leadership theories, ILTs) and followers (implicit followership theories, IFTs) affect outcomes, using a variety of methods (e.g., for reviews, see Epitropaki et al., 2013; Junker & van Dick, 2014; Lord et al., 2020). Lord et al.'s (1984) early study was influential in identifying the features of specific types of leaders. However, typical methods used in ILT and IFT diverge from the standard procedure, with some conducting an initial item (feature) generation study but then proceeding direct to exploratory and confirmatory factor analyses aimed at measure creation (e.g., Epitropaki & Martin, 2004; Offermann et al., 1994; Offermann & Coats, 2018; Sy, 2010). Some prototype-based studies in this stream also distinguish between ideal (goal-oriented) and typical (central tendency) prototypes (Barsalou, 1985), such as eliciting features of “effective followers” compared to “followers” (Sy, 2010). Fehr (1999) noted that differentiating between ideal and typical prototypes can be methodologically challenging since people may not have an explicit, available prototype when adjectives are used to qualify a concept. Likewise, Junker and van Dick (2014) concluded from their review that researchers need to be more consistent in defining, operationalizing, and interpreting the results of implicit leadership and followership studies. Given variations in other prototype-based methods, this review is focused on Fehr and colleagues’ approach to prototype analysis.

A Critical Methodological Review of the Prototype Analysis Method

The aims of this critical methodological review, following Rosch (1973) and Fehr (1982, 1986), are to categorize and content analyze published studies that use the prototype analysis method in order to develop a taxonomy of prototype applications, offer recommendations for best practice, identify implications for organizational scholarship, and highlight limitations and directions for future research (Aguinis et al., 2023). We followed Aguinis et al.'s systematic six-step procedure.

Step 1: Scope of Review

This review covers some 40 years of research, from the publication of Fehr and Russell (1984) to March 2024. Articles were selected for inclusion if the primary method broadly followed Fehr and colleagues’ approach (Fehr, 1982, 1986; Fehr & Russell, 1984, 1991). Early prototype-based studies of psychological concepts were outside the scope (e.g., Cantor & Mischel, 1977). Leadership studies that generated items but then followed conventional scale development methods in the subsequent studies were not included in the review (e.g., Offermann et al., 1994; Offermann & Coats, 2018; Sy, 2010). Studies where the prototype features were derived from interviews (e.g., Johnston et al., 2021) without further confirmatory studies were also excluded. Unpublished dissertations and conference papers were also excluded from the final article set since we were interested in best practice. Prototype studies conducted in a language other than English (LOTE) but published in an English-language journal (e.g., Chinese concept of xinren in buyer–seller relationships, Migge et al., 2020) were included in the review. In keeping with critical review processes, the final set of articles is broadly representative, rather than exhaustive (Paré et al., 2015).

Steps 2 and 3: Article and Journal Selection Procedures

Two selection procedures were followed to locate articles for the review. Keyword selection in Web of Science (WoS) was used rather than journal selection because of the multidisciplinary nature of the prototype analysis method. Business, management, marketing, sociology, economics, and psychology (i.e., general, applied, clinical, multidisciplinary, social) WoS categories were searched using six keyword terms: prototype analysis, prototyping analysis, prototypical conceptions, prototype approach, prototype methodology, and prototyping study. In line with Fehr (1982, 1986, 2005), we use the term prototype analysis throughout this review. Backward snowballing (reference lists) and forward snowballing (citations) in Google Scholar were also used to locate additional articles. The final article set comprised 73 prototype analyses published in 35 different journals. Journals that have published the greatest percentages of prototype analyses include Personal Relationships (16%), Journal of Personality and Social Psychology (12%), Personality and Social Psychology Bulletin (10%), and Cognition & Emotion (7%). Organizational journals that published the most prototype analyses were from the OB/HRM (7%) and marketing disciplines (7%).

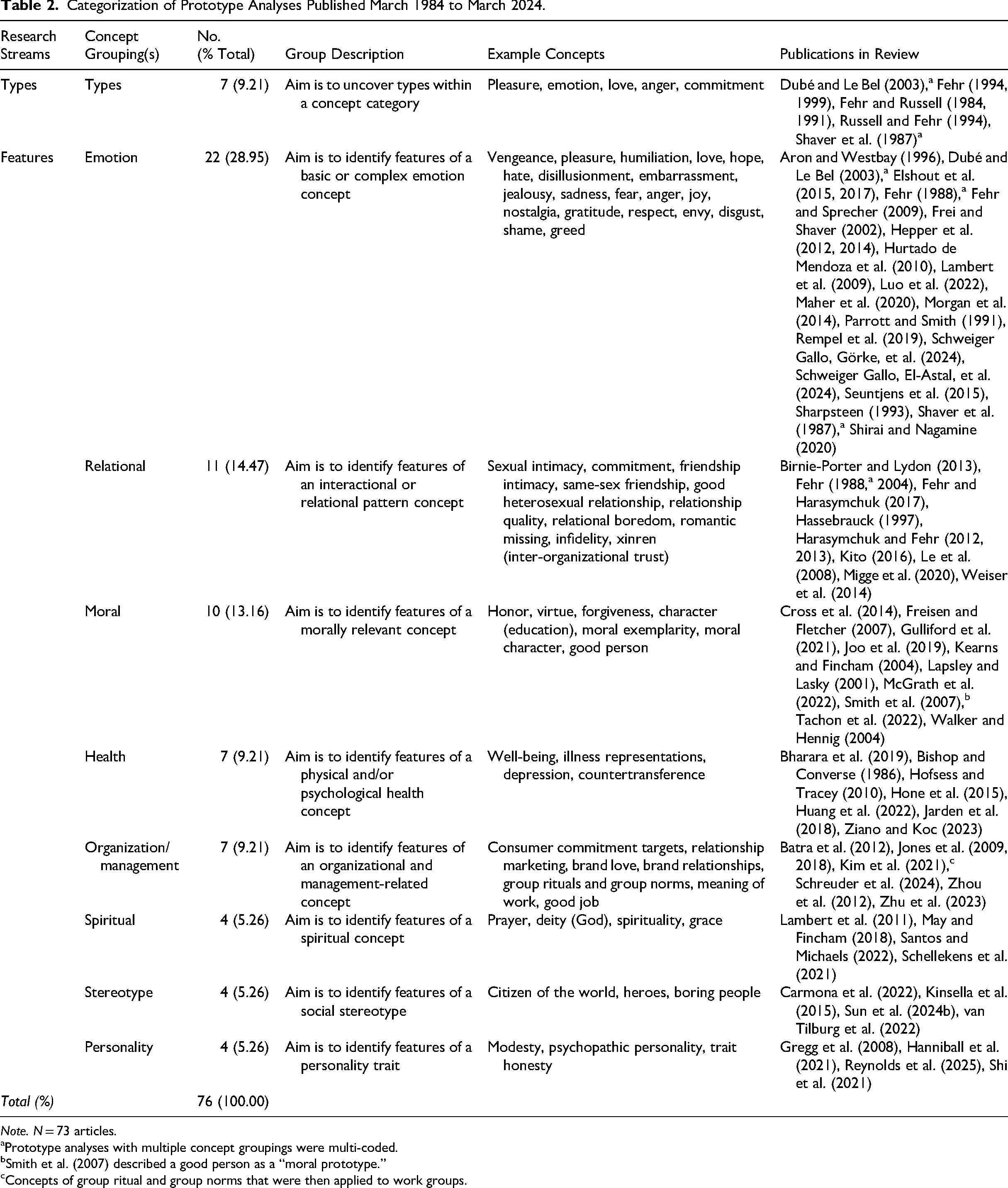

Step 4: Categorization of Prototype Analysis Concepts

Next, to better understand how prototype analysis has been used in concept definition, the first author read all articles and categorized them into Fehr's (2005) two previously mentioned concept groupings: types and features. Table 2 shows that 9% of the prototype analyses were coded to types and the remainder to features. The type studies were not further subcategorized since they were few in number, and the most recent was Dubé and Le Bel (2003). The first author then inductively coded the feature studies until all were classified into eight subgroupings, as described in the coding schema (Supplementary File S1). The second author independently reviewed the same articles and coded them using the coding schema. Areas of disagreement were resolved later through discussion and by revisiting the original author descriptions of the concept. Articles that prototyped more than one concept or conducted both type and feature studies of the same concept were multi-coded. This resulted in nine concept groupings (types plus the eight feature subgroupings), with the features of emotions most frequently studied (29%). Organization and management concepts comprised 9%. Five concepts were goal-oriented prototype forms (7%): good heterosexual relationship, relationship quality, good person, good job, and boring people.

Table 2. Categorization of Prototype Analyses Published March 1984 to March 2024.

Note. N = 73 articles.

Prototype analyses with multiple concept groupings were multi-coded.

Smith et al. (2007) described a good person as a “moral prototype.”

Concepts of group ritual and group norms that were then applied to work groups.

Step 5: Creation of a Taxonomy of Prototype Analysis Applications

This step identified additional dimensions that differentiated between studies, to develop a taxonomy of prototype analysis applications. In the second and third rounds of coding, an iterative inductive and deductive process was again followed by the first author, informed by Fehr (2005). As in Step 4, the first author identified differences and developed a coding schema (S1), the second author then coded independently, the results were compared, and disagreements were resolved through discussion. All coding and coding comparisons were conducted in Excel, including the final-round derivation of Cohen's (1960) kappa to assess interrater reliability (agreement rate = 0.92, κ = 0.69, and confidence interval [CI] = [0.40, 0.89]). Two additional dimensions were identified: relationship context and theoretical development. Concepts prototyped in multiple contexts and/or addressing multiple research questions were multi-coded. Next, we elaborate on the relationship context findings.
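Cohen's kappa adjusts the raw agreement rate for the agreement two coders would be expected to reach by chance, which is why it can be noticeably lower than the agreement rate when one category dominates. The sketch below shows the calculation for two coders who each assign one category per item. It is an illustrative assumption only: the review reports that the calculation was done in Excel, and the function name and toy codes here are hypothetical, not the review's data.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Return (observed agreement, Cohen's kappa) for two coders.

    coder_a, coder_b: equal-length sequences of category labels,
    one label per coded item.
    """
    if len(coder_a) != len(coder_b) or len(coder_a) == 0:
        raise ValueError("Both coders must rate the same non-empty set of items.")

    n = len(coder_a)
    # Proportion of items on which the two coders agree.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n

    # Chance agreement: product of the coders' marginal proportions,
    # summed over all categories either coder used.
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    categories = set(freq_a) | set(freq_b)
    expected = sum(freq_a[c] * freq_b[c] for c in categories) / (n * n)

    if expected == 1.0:
        # Both coders used a single identical category; kappa is undefined.
        return observed, float("nan")
    return observed, (observed - expected) / (1.0 - expected)


# Hypothetical toy codes for four articles (not data from the review).
first_author = ["generalized", "generalized", "close", "close"]
second_author = ["generalized", "close", "close", "close"]

agreement, kappa = cohens_kappa(first_author, second_author)
print(agreement, kappa)  # 0.75 0.5
```

Multi-coded articles, as used in this review, would need an additional decision rule (for example, treating each article-by-category pair as a separate binary item), which this sketch does not attempt.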

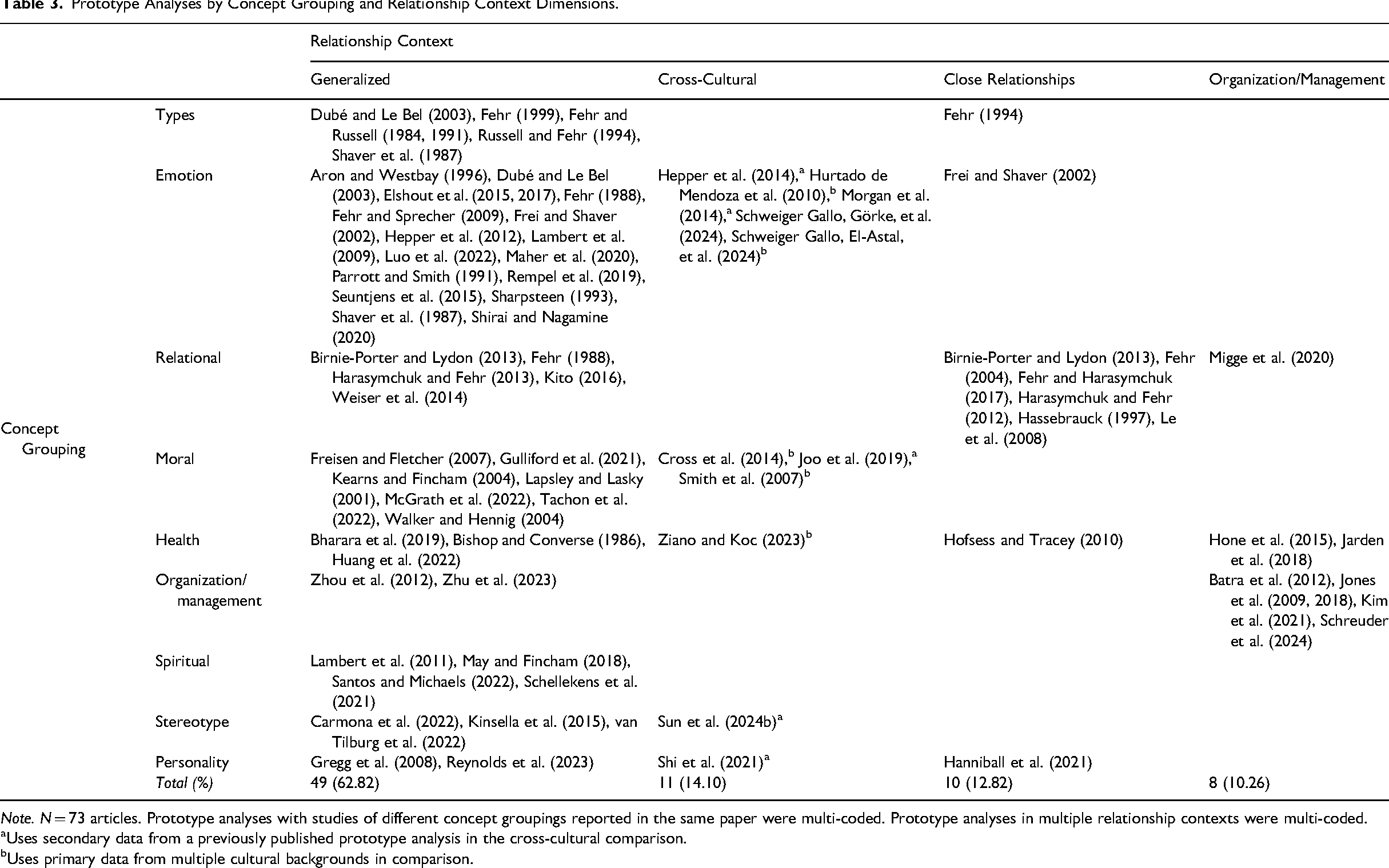

Relationship Context Dimension

In a prototype analysis, differentiating between generalized and relationship-specific knowledge structures is theoretically and practically important because they operate differently (Fehr, 1999, 2005). The context can shape which concept features are considered more important, or central, to the prototype. In the article set, four categories captured the relationship contexts, with generalized knowledge structures being the most frequently studied (63%), as presented in Table 3. Generalized is used in this context where the research is broad in scope and aims to draw conclusions applicable to everyday people/situations (e.g., students, laypeople), rather than to a specific group or context. Cross-cultural comparative studies involved participants from more than one country or ethnic group. The close relationship context included dating couples, friendships, romantic relationships, and caregivers. Organizational relationships included employees/workers, business-to-consumers, and buyer–sellers. Cross-cultural differences in envy (Schweiger Gallo, Görke, et al., 2024), respect in close relationships (Frei & Shaver, 2002), and xinren (trust) in buyer–seller relationships (Migge et al., 2020) illustrate concept grouping by relationship context. Next, we discuss the relevant organization findings for this dimension.

Table 3. Prototype Analyses by Concept Grouping and Relationship Context Dimensions.

Note. N = 73 articles. Prototype analyses with studies of different concept groupings reported in the same paper were multi-coded. Prototype analyses in multiple relationship contexts were multi-coded.

Uses secondary data from a previously published prototype analysis in the cross-cultural comparison.

Uses primary data from multiple cultural backgrounds in comparison.

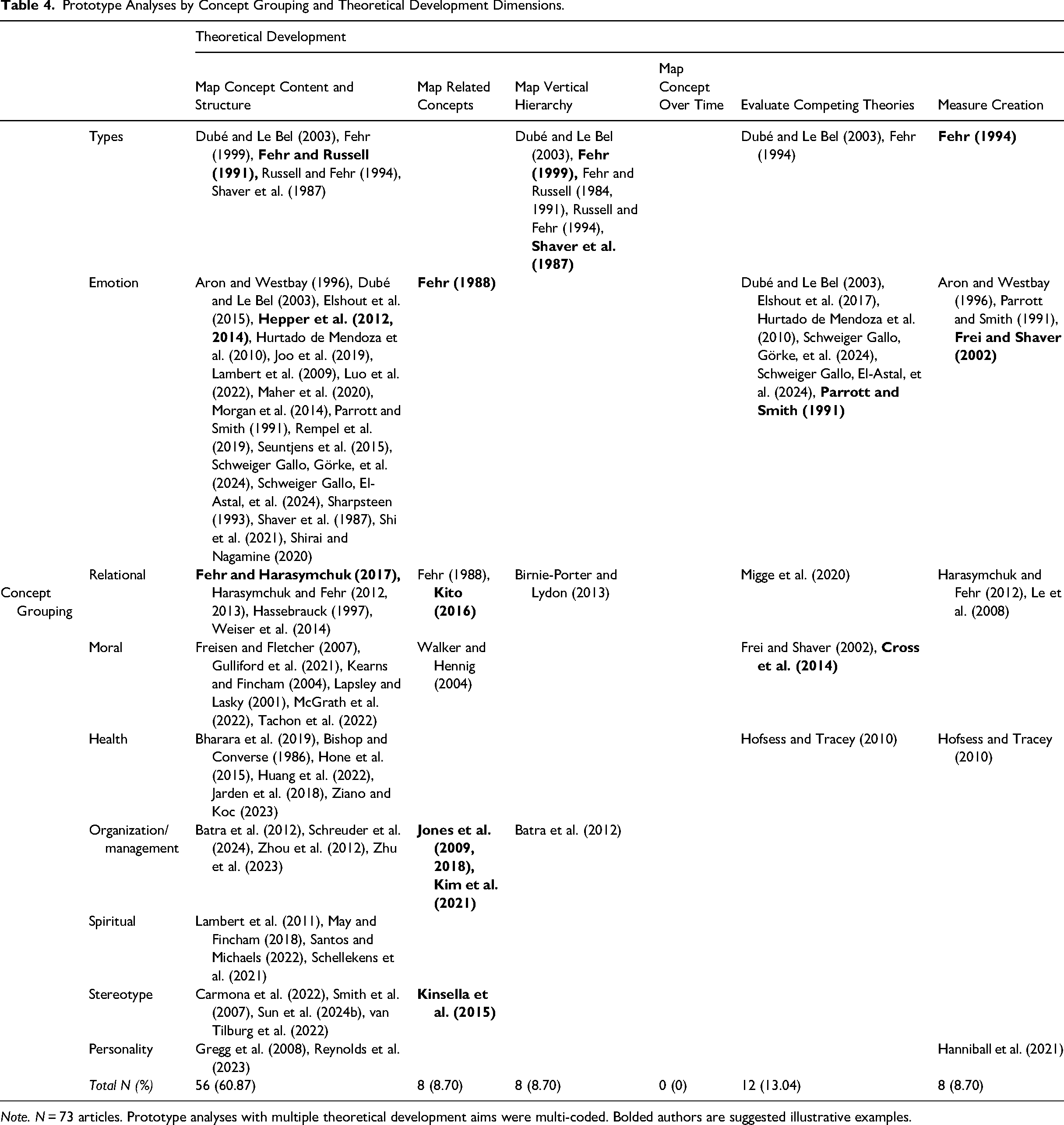

Theoretical Development Dimension

The third differentiating dimension in the taxonomy is the research question(s) being answered or how the prototype analysis helps advance theory (Fehr, 2005). Third-round coding resulted in six coded categories of theoretical development, with mapping the horizontal content and structure of the concept being the most frequent (61.5%), as presented in Table 4. The third round of coding resulted in a three-way taxonomy of prototype applications based on concept grouping, relationship context, and theoretical development. Next, we report the findings for how prototype analysis has been used in theoretical development in organizational research.

Table 4. Prototype Analyses by Concept Grouping and Theoretical Development Dimensions.

Note. N = 73 articles. Prototype analyses with multiple theoretical development aims were multi-coded. Bolded authors are suggested illustrative examples.

Step 6: Content Analysis of the Design and Procedure of the Prototype Analysis

The last step in this review was to content analyze the design and procedure(s) employed in a prototype analysis to provide best-practice recommendations. In this fourth round of coding, the first author again followed an inductive exploratory approach to identify the fields of interest. Supplementary File S2 lists the fields and data collated from the articles. Final fields included the authors and year, concept studied, number and type of studies, participant populations, and overall and individual study sample sizes. We present findings for the design and procedure first, followed by an overview of frequently used studies in a prototype analysis.

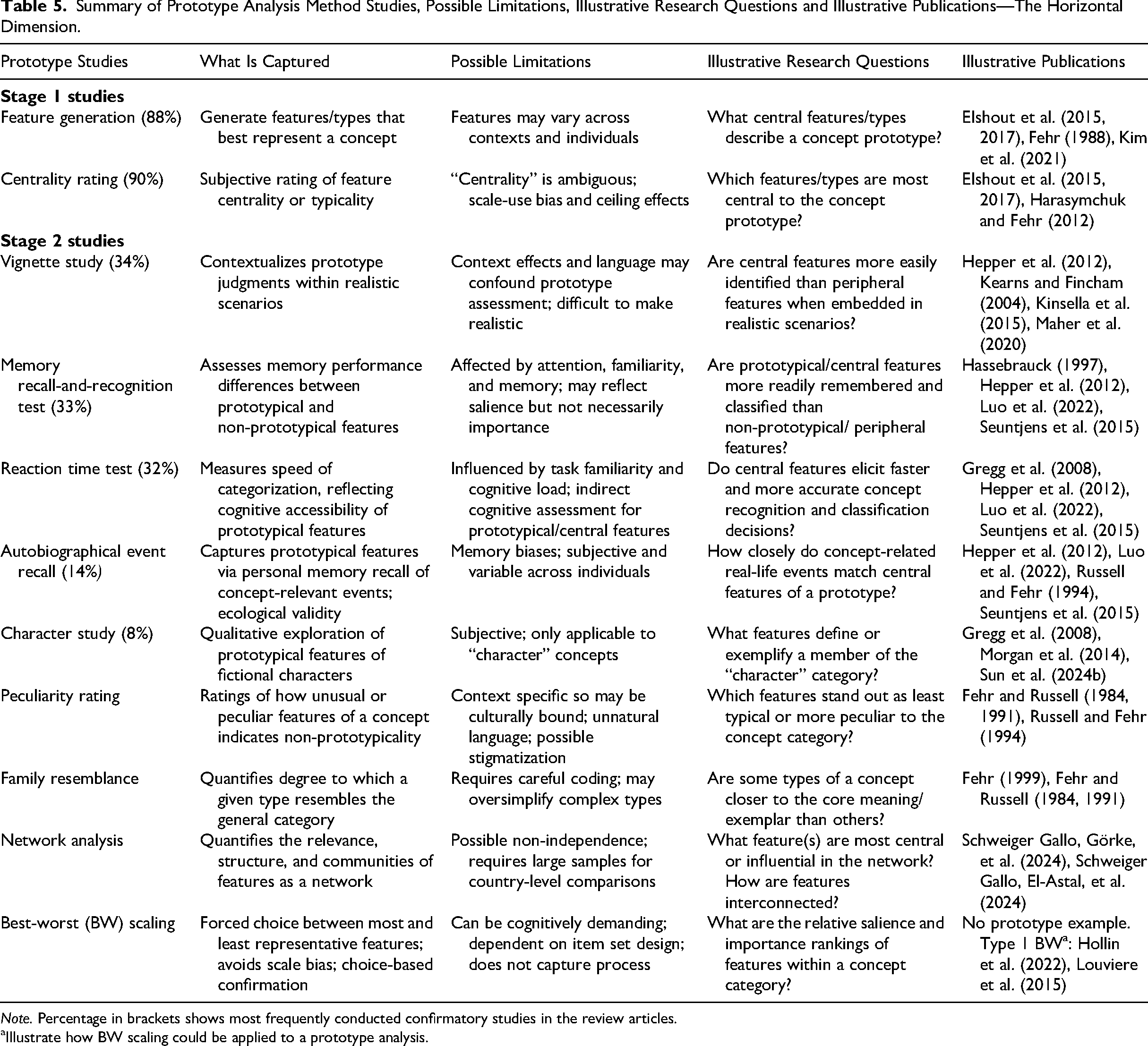

Overall Design of the Prototype Analysis

The average number of studies in the overall design of the prototype analyses in this review was four (SD = 1.65), with a range of [1, 8]. The average number of studies in the organization prototype analyses was lower (M = 3.3, SD = 0.95). Excluding the cross-cultural studies due to large sample sizes, the average total sample size was 645 (SD = 584). The total number of studies was only weakly related to total sample size (r = .24, p = .06). We present a summary of the most frequent standard procedure studies, their limitations, example research question(s) they answer, and illustrative publications in Table 5. Variations from a feature generation study included using features from a prior prototype analysis or deriving features from the literature or an existing measure (12%). The number of studies conducted in Stage 2 varied, ranging from one (e.g., McGrath et al., 2022) to six (e.g., Fehr, 1999). Only 40% of the organization prototype analyses conducted two or more confirmatory studies. The most common confirmatory studies in organization/management were vignettes (30%), reaction time (20%), and recall and recognition tests (20%). Next, we briefly elaborate on commonly used standard procedure studies.

Table 5. Summary of Prototype Analysis Method Studies, Possible Limitations, Illustrative Research Questions and Illustrative Publications: The Horizontal Dimension.

Note. Percentage in brackets shows most frequently conducted confirmatory studies in the review articles.

Illustrate how BW scaling could be applied to a prototype analysis.

Standard Procedure Studies

We next present best-practice recommendations for conducting a prototype analysis organized under three headings: overall design and preregistration, sampling strategy, and data analysis.

Best-Practice Recommendations

Overall Design and Preregistration

Number and Type of Studies

Consistent with prior research, we recommend that the overall design of a prototype analysis follow the standard procedure, including both Stage 1 and 2 studies. However, we found no definitive guidelines on the optimal number or types of studies to be included in a standard procedure prototype analysis; nor did we find clarification on whether the term “standard procedure” is intended to also encompass the vertical dimension, as theorized in prototype theory (Rosch, 1973; Rosch et al., 1976) and in the illustrative example of Fehr (1999). Studies in Table 5 inherently target the horizontal dimension of a prototype. Fehr’s (1999) detailed examination of the content and structure of laypeople's conceptions of commitment included separate studies targeted at understanding both its horizontal and vertical structures. To avoid possible confusion and inconsistencies in the operationalization of prototype theory in organizational research, we recommend the following: (1) the term standard procedure encompass both dimensions, albeit different studies (and/or analyses) are needed to assess each dimension (we return later to this point), and researchers clarify the dimension(s) being examined; (2) studies assessing the horizontal dimension include a minimum of four original studies, two in Stage 1 (i.e., type/feature generation and centrality rating studies) and two or more confirmatory studies (see Table 5 for the most frequently used); (3) at least one confirmatory study that does not rely on a self-report methodology is included, such as a reaction time test (Fehr, 2019); and (4) researchers consult the illustrative publications in Table 5 for guidelines on conducting individual studies. Future advances in the method might also include neuroimaging to complement self-report methods, as suggested in Table 1. Iordan et al. (2016) provided the first neural test of family resemblance using functional magnetic resonance imaging (fMRI) and found that typical examples of a category elicited clearer and more consistent brain activity than peripheral category objects.

We also encourage researchers to synthesize features/types from prior studies, as this is a variant of the standard procedure that can be advantageous. For instance, prototype analysis appears ideally suited to a “revisit” qualitative restudy (Köhler et al., 2025), whereby researchers could generate additional prototype data of a concept that can then be compared and contrasted with concept features in a previously published study. Examples include new samples for cross-cultural or new-population comparisons, or comparisons of “ideal” versus existing “typical” prototype forms. The standard procedure in prototype analysis provides a template for those unfamiliar with the method and helps support replication studies (Köhler et al., 2022). However, some variations are possible within the overall design of the prototype analysis.

Infrequent/New Confirmatory Studies

Infrequent confirmatory studies include a peculiarity rating using hedge words in statements (e.g., kind of, sort of, somewhat; Fehr, 1988, 1999; Russell & Fehr, 1994), a family resemblance study (e.g., see Fehr, 1999; Fehr & Russell, 1984, 1991), and network analyses (Schweiger Gallo, El-Astal, et al., 2024). Statements about central or prototypical features are expected to sound less natural (i.e., more peculiar) when hedged than statements about peripheral features. A family resemblance study aims to assess how strongly different members of a category, such as types of a concept, resemble the prototype. While not usually conducted as a separate study, cluster analysis has been shown to be useful for mapping the underlying structure of the generated features, such as the causes, symptoms, and resolution of embarrassment (Parrott & Smith, 1991). Network analysis is a recent data analytic development in prototype analyses (see Table 1), which has been used to confirm feature centrality in cross-cultural studies of envy and disgust (Schweiger Gallo, El-Astal, et al., 2024; Schweiger Gallo, Görke, et al., 2024). Network representations are a potentially useful addition to prototype analysis because they can help uncover structural connections, both within and between cultures. We have recommended network analysis as a possible confirmatory study in Table 5, ideally based on a separate data collection and independent sample.
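To illustrate what a network representation of features can look like, the following minimal Python sketch builds a feature co-occurrence network from participants' feature lists and computes each feature's node strength (the sum of its edge weights). This is only one simple way to construct such a network and is not necessarily the approach used in the studies cited above; the function names and the example data are hypothetical.

```python
from collections import Counter
from itertools import combinations


def cooccurrence_network(feature_lists):
    """Count how often each pair of features is listed by the same participant."""
    edges = Counter()
    for features in feature_lists:
        # Sort so that each undirected pair is always stored in the same order.
        for a, b in combinations(sorted(set(features)), 2):
            edges[(a, b)] += 1
    return edges


def node_strength(edges):
    """Sum the weights of all edges attached to each feature."""
    strength = Counter()
    for (a, b), weight in edges.items():
        strength[a] += weight
        strength[b] += weight
    return dict(strength)


# Hypothetical coded feature lists from three participants.
lists = [
    ["resentment", "comparison", "desire"],
    ["comparison", "desire"],
    ["resentment", "comparison"],
]
edges = cooccurrence_network(lists)
strength = node_strength(edges)
```

In this toy example, "comparison" has the highest node strength because it co-occurs with every other feature, which is the kind of structural information a network view adds to frequency and centrality ratings.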

To the best of our knowledge, best–worst (BW) scaling has not previously been applied in prototype analysis, despite its conceptual alignment with the graded structures assumed by prototype theory; it could help confirm the prototypical features (see Table 5). In BW experiments, participants would be presented with sets of features and then asked to choose the most and least prototypical features within each set (Louviere et al., 2015). Because BW forces discriminative judgments and elicits explicit trade-offs between features, it avoids the scale-use bias found in rating tasks, and we therefore propose its use as a complementary confirmatory method. BW applications to consumer preferences, travel behavior, health economics, and public policy suggest BW could provide valuable insights and efficiently confirm central features of the prototype. Moreover, the relative importance or centrality of each item is inferred through choice frequencies and model results, which distinguishes importance even among highly salient items; consequently, attributes most strongly associated with the prototype can be more clearly identified (“breaking ties”). There is evidence from cognitive psychology that forced-choice tasks align well with underlying memory strength and salience (Louviere et al., 2015). A BW study could also include programming to record participant reaction times for each set, such that a combined BW/reaction time test could be efficiently conducted. These more objective metrics and inferred rankings of the features can validate central features derived from other methods.
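As a rough illustration of how choice frequencies can be turned into a centrality ranking, the sketch below computes simple count-based BW scores: the number of times a feature was chosen as most prototypical, minus the number of times it was chosen as least prototypical, divided by the number of times it was shown. This is a descriptive scoring approach under stated assumptions; model-based estimation (e.g., choice models) is an alternative that is not shown here. The function name and the example responses are hypothetical.

```python
from collections import Counter


def bw_scores(choices):
    """Return count-based best-worst scores in the range -1 to +1.

    Each element of `choices` is (features_shown, best_pick, worst_pick).
    """
    best, worst, shown = Counter(), Counter(), Counter()
    for shown_set, best_pick, worst_pick in choices:
        if best_pick not in shown_set or worst_pick not in shown_set:
            raise ValueError("picks must come from the displayed set")
        if best_pick == worst_pick:
            raise ValueError("best and worst picks must differ")
        shown.update(shown_set)
        best[best_pick] += 1
        worst[worst_pick] += 1
    return {f: (best[f] - worst[f]) / shown[f] for f in shown}


# Hypothetical responses to three choice sets for the concept "trust".
choices = [
    (("honesty", "reliability", "warmth", "humour"), "honesty", "humour"),
    (("honesty", "reliability", "competence", "humour"), "reliability", "humour"),
    (("honesty", "warmth", "competence", "reliability"), "honesty", "competence"),
]
scores = bw_scores(choices)
ranking = sorted(scores, key=scores.get, reverse=True)
```

Features with scores near +1 are consistently chosen as most prototypical, and those near -1 as least prototypical, so the ranking can be compared against centrality ratings from Stage 1.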

Vertical Dimension Studies

Some studies in the review derived the hierarchical structure of the prototype using statistical techniques, rather than via independent studies targeted at participants themselves identifying the structure (for an exception, see Fehr, 1999). For example, pairwise combinations of features can be rated for their similarity or dissimilarity, and the resulting distance matrix can then be subjected to hierarchical cluster analysis to derive the structure (e.g., Shaver et al., 1987). Structural equation modeling has also been used to explore the presence of a hierarchical higher-order structural model for the concept of brand love (e.g., Batra et al., 2012). However, it remains unclear whether participants themselves can explicitly identify a vertical hierarchy to abstract concepts (e.g., see commitment, Fehr, 1999; love, Fehr & Russell, 1991; anger, Russell & Fehr, 1994) and, furthermore, what studies are most suited to examining this dimension. In light of this, we recommend that future prototype analyses targeted at understanding the vertical dimension of a concept conduct at least one independent study (e.g., card sort, Dubé & Le Bel, 2003; violation of rules of hierarchies, Fehr, 1999) and not rely solely on statistical means to test the hierarchical structure. Illustrative studies that have mapped the vertical dimension of a concept, using either statistical means or additional studies, are provided in Table 4.
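The statistical route described above (a dissimilarity matrix submitted to hierarchical cluster analysis) can be sketched in a few lines of Python. The example below is a minimal, assumption-laden illustration using average linkage on a small, made-up dissimilarity matrix; the linkage choice, the function name, and the data are hypothetical, and in practice researchers would typically use an established statistics package.

```python
def average_linkage(names, dist):
    """Agglomerative clustering with average linkage.

    `dist` is a symmetric matrix of pairwise dissimilarities between `names`.
    Returns the merge history as (cluster_a, cluster_b, distance) tuples.
    """
    index = {name: i for i, name in enumerate(names)}
    clusters = [(name,) for name in names]

    def between(a, b):
        # Mean dissimilarity over all cross-cluster pairs of features.
        total = sum(dist[index[x]][index[y]] for x in a for y in b)
        return total / (len(a) * len(b))

    merges = []
    while len(clusters) > 1:
        pairs = [
            (between(a, b), i, j)
            for i, a in enumerate(clusters)
            for j, b in enumerate(clusters)
            if i < j
        ]
        d, i, j = min(pairs)  # closest pair of clusters
        merges.append((clusters[i], clusters[j], d))
        merged = clusters[i] + clusters[j]
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)]
        clusters.append(merged)
    return merges


# Hypothetical mean dissimilarity ratings between four emotion terms.
names = ["joy", "contentment", "rage", "irritation"]
dist = [
    [0.0, 1.0, 6.0, 5.0],
    [1.0, 0.0, 6.0, 5.0],
    [6.0, 6.0, 0.0, 2.0],
    [5.0, 5.0, 2.0, 0.0],
]
merges = average_linkage(names, dist)
```

The merge history reads from the bottom of the hierarchy upward: the two positive terms join first, then the two negative terms, and only at a much larger distance do the two clusters combine, which is the pattern one would interpret as a superordinate split.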

Preregistration of Planned Studies

The best practice of preregistering studies is increasing in psychology, although its use in consumer behavior research has lagged behind (Simmons et al., 2021). Several authors preregistered all (or some) of their proposed studies in the prototype analysis, including plans for data collection and analysis, inclusion criteria, and determinations of sample sizes (e.g., see Kim et al., 2021; Luo et al., 2022; Schreuder et al., 2024; Ziano & Koc, 2023). The advantage of preregistration is that it promotes transparency and reduces the potential for false-positive findings. Preregistration could also help distinguish the confirmatory stage studies from the more exploratory studies (Logg & Dorison, 2021; Simmons et al., 2021). In a preregistration document, researchers would specify the overall design and number of studies planned in the prototype analysis, together with sample size(s) and procedures, and the data analysis plan (Simmons et al., 2021). Justification for the sampling strategy in the review articles was unclear or unconvincing at times, and hence, we consider this next.

Sampling Strategy

Determination of Sample Sizes

Since the first study in a prototype analysis is exploratory, it is difficult to determine the appropriate sample size. There is wide variability in sample sizes for Stage 1 studies, with the most common justifications being prior research (e.g., Schweiger Gallo, El-Astal, et al., 2024), followed by convenience (e.g., Rempel et al., 2019) and the desire to exceed earlier sample sizes (e.g., Reynolds et al., 2025). Based on our review, a suggested rule of thumb might be a minimum of 200 participants for each Stage 1 study, presuming the standard procedure is being followed (rather than a variant). A larger number of participants can make clearer the cut-off points for the frequency distribution of the elicited features. Sample sizes for the confirmatory stage studies are more easily determined because the studies are generally experimental and because the illustrative articles in Table 5 provide further information and guidelines for organizational researchers.

Use of Student Samples

Early prototype analyses relied primarily on university students to elicit lay people's views of a concept. Young adults make up the majority of undergraduate student cohorts, with ages generally in the range of 18–23 years. If students lack experience or exposure to the concept, they may omit central features or base their responses on social media or stereotypes (Le et al., 2008) and/or on descriptions in popular business or mainstream press (Baron & Ensley, 2006). Ayman-Nolley and Ayman (2005) also highlighted the nascent importance of age in determining prototypical knowledge structures with young children of differing ages defining their understanding of leaders early and differently. Larger samples, with all (18+) age cohorts represented, could help ensure participants have adequate exposure to the organizational concept being prototyped. Amazon's Mechanical Turk (MTurk) marketplace is one increasingly popular online data collection method that includes a larger and more demographically diverse participant pool, with typically more years of work experience than traditional student samples (Aguinis et al., 2021). Albeit more expensive than student pools, online platforms are increasingly popular because they allow relatively quick data collection from a larger number of diverse participants than studies conducted in one or a few organizations (Newman et al., 2021). In this review, approximately 31% of the prototype analyses published since 2018 reported using online participant platforms (e.g., MTurk, Prolific, Credamo), which is comparable to the Journal of Applied Psychology (2017–2021) (Zickar & Keith, 2023). Newman et al. (2021) highlighted a number of sampling concerns when conducting research using online platforms, including sample bias, data non-independence, in-group bias, and non-naivety. Detailed guidelines on how to overcome potential sampling and data quality issues, such as careless responding, inattentiveness, dishonesty, and bots are provided in these recent reviews (e.g., Newman et al., 2021; Zickar & Keith, 2023).

Use of Independent Samples

A few prototype studies in the article set used the same participants in more than one study (e.g., Gregg et al., 2008; Gulliford et al., 2021; Le et al., 2008; McGrath et al., 2022). Overlapping samples across the feature generation and confirmatory stages of a prototype analysis can inflate statistical associations and blur the existence of a prototype. To ensure the separation of the two stages of prototyping, the samples for each study ideally should be independent of one another, which also helps avoid contamination of participants’ responses. Some overlap in samples is possible during the confirmatory stage if, for example, the studies are separated by a distraction task and the presentation order is varied to reduce potential confounds and participant fatigue. Here, we again highlight the benefits of conducting at least two independent confirmatory studies, one of which does not rely on self-reports.

Exposure to the Concept

While category or concept formation may be universal, Rosch et al. (1976) noted that since the categories reflect real-world internalized views and the knowledge of the people doing the categorizing, then a person's knowledge of a particular category may differ among cultures, subcultures, and individuals. Hence, different amounts of knowledge about a category object or concept might change the classification or the features that are generated (Rosch et al., 1976). For example, Rosch et al. (1976) found that a former airplane mechanic produced a much longer list of attributes of an “airplane,” greater detail about more obscure attributes (e.g., engines of several types of planes), and a more unusual pictorial depiction of an airplane (i.e., underside and engines) than a lay person. In contrast, when participants lack exposure to the concept, they may base their ratings on stereotypes and/or assumptions. For example, the prototype of a “good job” for undergraduate students may be biased due to their lack of work experience (Zhu et al., 2023). Similarly, people from diverse cultures may have differing internalized knowledge structures related to that concept (Migge et al., 2020). Researchers, therefore, are advised to plan their sampling strategy with participants’ exposure to, or experience with, the focal concept in mind. English-language fluency and race/ethnic background should also be considered. Finally, since one of the advantages of a prototype analysis is that other researchers can use published features for later study comparisons, it is important to plan, collect, and report data on participants’ exposure to the focal concept.

Laypeople Versus Expert Conceptions

Purposive (expert) sampling, whereby participants are chosen because of their particular expertise or domain knowledge (Zickar & Keith, 2023), has been used in the prototype analysis method. For example, McGrath et al. (2022) conducted a prototype analysis to better understand educators’ perspectives of “character.” Experts have also been used to examine health and personality concept prototypes. Hofsess and Tracey (2010) examined the concept of countertransference with a sample of experienced psychologists, because it is unique to professional practice. Hanniball et al. (2021) also examined the content validity of a simplified version of the Comprehensive Assessment of Psychopathic Personality (CAPP-Basic) scale, with a sample of mental health professionals who had direct clinical experience with the disorder. Notably, Fehr (2005) concluded that laypeople and experts may each offer unique theoretical insights, rather than being mutually exclusive. Even within an expert domain, variation in the degree of “expertness” may influence the prototype. For example, Baron and Ensley (2006) found that the “business opportunity” prototypes used by experienced entrepreneurs showed greater clarity, were richer in content, and were more directly relevant to new ventures than those of novice entrepreneurs. Experienced entrepreneurs tended to exhibit enhanced pattern recognition that potentially could help them successfully start and run new business opportunities. One a priori consideration, however, is the feasibility of obtaining a sufficiently large, and matched if relevant, sample of experts. In line with Baron and Ensley (2006), we recommend coders be unaware of group characteristics when using matched groups (e.g., degree of expertness, laypeople vs. experts).

Data Analysis

New Analytical Tools

Most prototype analyses undertake manual coding in the feature generation study, following Fehr's (1988) procedure, whereas Maher et al. (2020) and Schellekens et al. (2021) used NVivo, a commonly available analytical tool. These data analysis techniques are labor-intensive and time-consuming and can introduce bias (Sun et al., 2024a; Table 1). Recent developments in natural language analysis, AI tools, and open-source software have introduced tools that researchers could use to overcome or minimize these limitations. For example, the Linguistic Inquiry and Word Count (Boyd et al., 2022) computer software was used in two studies in the review to quantify how much of participants' responses reflect various psychological themes (i.e., Kinsella et al., 2015; McGrath et al., 2022). Sun et al. (2024a) also suggested using R code to conduct the initial word count, online translation software for cross-cultural studies, ChatGPT to automatically organize categories, and word-embedding techniques to automatically arrange semantically similar words into features. Other possible techniques include latent Dirichlet allocation (LDA), a machine-learning topic model that automatically extracts central topics from patterns of word co-occurrence. Text-analysis workflows built around LDA typically also handle word counts and the normalization of word stems, and they can be combined with sentiment analysis. LDA output can be used to quantify the importance of central topics, thus giving an indication of the centrality of the features generated. LDA tools can be applied to text in many languages (100+ languages), simplifying cross-cultural analysis.
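As a simple illustration of an automated initial word count, the Python sketch below tallies how many participants mentioned each feature after mapping variant wordings onto a common feature label, and then retains features mentioned by at least a chosen share of participants. The synonym dictionary, the comma-separated response format, and the retention threshold are all hypothetical assumptions for illustration; they are not taken from the studies reviewed, and coding decisions would still need human checking.

```python
from collections import Counter


def feature_frequencies(responses, synonyms):
    """Count the number of participants who mentioned each coded feature."""
    counts = Counter()
    for response in responses:
        mentioned = set()  # count each feature at most once per participant
        for raw in response.split(","):
            term = raw.strip().lower()
            if term:
                mentioned.add(synonyms.get(term, term))
        counts.update(mentioned)
    return counts


def retain(counts, n_participants, min_share):
    """Keep features mentioned by at least `min_share` of participants."""
    return {f: c for f, c in counts.items() if c / n_participants >= min_share}


# Hypothetical free-listing responses, one string per participant.
responses = [
    "Honest, reliable",
    "honesty, Caring",
    "reliable, dependable",
    "funny",
]
# Hypothetical mapping from variant wordings to feature labels.
synonyms = {
    "honest": "honesty",
    "reliable": "reliability",
    "dependable": "reliability",
}
counts = feature_frequencies(responses, synonyms)
kept = retain(counts, n_participants=len(responses), min_share=0.5)
```

Counting each feature once per participant keeps the frequencies interpretable as the proportion of the sample that generated the feature, which is the quantity on which frequency cut-offs are usually based.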

Testing for Individual Differences

Evidence for the influence of individual differences, such as gender, age, education, ethnicity, cultural background, and religion, on prototypicality ratings is equivocal (Fehr, 2005). Gender has been commonly reported as binary in prototype studies, with relatively equal representation in the majority of studies (e.g., Kearns & Fincham, 2004), but overrepresentation of one group in others (e.g., Le et al., 2008). Some studies found no individual differences (e.g., Kito, 2016; Walker & Hennig, 2004), while others reported differences (e.g., Kearns & Fincham, 2004; Kinsella et al., 2015; Schellekens et al., 2021). Kinsella et al. (2015) found gender differences among 26 hero features, with males rating “fearless,” “saves others,” and “powerful” higher than females. Kearns and Fincham (2004) also found significant differences in the forgiveness prototype based on gender, race, and religion. Females rated “…letting go of anger, having peace of mind, not wanting revenge, learning from mistakes, doing the right thing…” (p. 845) as more central to forgiveness than males. Schellekens et al. (2021) also found significant differences in conceptualizations of “grace” based on age, gender, and religiosity. The data analytic strategy should, therefore, include tests for individual differences as part of the preregistration of the planned sampling strategy and data analysis approaches.
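One way such a test might look for a single feature is sketched below: a Welch's t statistic (which does not assume equal variances) and Cohen's d for the difference in centrality ratings between two groups. This is an illustrative choice rather than the analysis used in the cited studies; the ratings are invented, the function names are hypothetical, and obtaining a p-value would additionally require a t-distribution from a statistics package. Testing many features would also call for a correction for multiple comparisons.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and approximate degrees of freedom."""
    va = variance(a) / len(a)  # squared standard error of group a's mean
    vb = variance(b) / len(b)
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va**2 / (len(a) - 1) + vb**2 / (len(b) - 1))
    return t, df


def cohens_d(a, b):
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((len(a) - 1) * variance(a) + (len(b) - 1) * variance(b)) / (
        len(a) + len(b) - 2
    )
    return (mean(a) - mean(b)) / sqrt(pooled_var)


# Hypothetical centrality ratings (1-8 scale) of one feature by two groups.
group_a = [7, 8, 6, 7, 8]
group_b = [5, 6, 5, 4, 6]
t, df = welch_t(group_a, group_b)
d = cohens_d(group_a, group_b)
```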

Examining Cross-Cultural Differences

Prototype analysis has previously been used to better comprehend how a concept varies across cultures (Sun et al., 2024a). Hepper et al. (2014) took the 35 nostalgia features previously found by Hepper et al. (2012) and examined cross-cultural similarities and differences across 18 countries. Findings suggest that cross-cultural agreement among participants was high as to the features most closely related to nostalgia, except for the African countries of Uganda, Ethiopia, and Cameroon. Morgan et al. (2014) examined the concept of gratitude in the UK and then contrasted their findings with a US sample (i.e., Lambert et al., 2009). While there were some culturally ubiquitous features of gratitude, there were also some unique features. Smith et al. (2007) examined the concept of a “good person” across seven cultures, using both laypeople and expert views. Sun et al. (2024b) also used prototype analysis to study the differences in how heroes are depicted across individualistic and collectivistic cultures. Recent prototype analyses of “envy” and “disgust” also found that Western and non-Western countries did not agree about these concepts (Schweiger Gallo, El-Astal, et al., 2024; Schweiger Gallo, Görke, et al., 2024). Organizational researchers can consult Sun et al. (2024a) for guidelines on prototype analysis in cross-cultural research.

Implications for Organizational Scholarship

The findings from this methodological review suggest that prototype analysis could be used more often to advance organizational research through increased understanding of the content and structure of fundamental and emerging concepts. For instance, the concept of trust (and distrust) within the workplace has been studied for decades; but some conceptual ambiguities and measurement problems remain, even though trust is fundamentally important to numerous organizational relationships (e.g., leadership, team functioning, organizational justice, the psychological construct) (Dirks & de Jong, 2022). Emerging organizational concepts, such as “working from home,” could also be prototyped rather than approached from a classical essentialist perspective, and the results then compared to management's views (or policymakers). Moral concepts, previously studied with laypeople/students, are also of growing importance to leadership in today's workplaces (e.g., honor, moral exemplarity, moral character). Prototype analysis could also be used to elicit employees’ understanding of people with disabilities, as this could help to promote inclusion and avoid inaccurate assumptions and unfounded expectations (Stone & Colella, 1996).

A rapidly emerging topic of organizational scholarship is the relationship between humans and AI technologies, which has stimulated new questions for workers and their organizations (Bankins et al., 2024; Glikson & Woolley, 2020). People in organizations may have had limited exposure to AI, and yet the increasing use of AI suggests that studying relational patterns with technology using the prototype analysis method (e.g., trust in AI, Kaplan et al., 2023; worker attitudes towards AI, Bankins et al., 2024) could be helpful. Prototype analyses of “relational” technology concepts, such as trust in robots and automated systems in the workplace, could provide unique insights about developing internalized knowledge structures of the human–AI interface, beyond the understanding of human–human relational concepts. Uncovering such prototypical structures may be more challenging as the prototype may not yet be organized as a cognitive schema due to employees’ lack of exposure to the concept. Yet given the expected expansion of AI within the workforce, relying on human–human scholarship may not be fruitful (Kaplan et al., 2023).

Prototype analysis could also be more widely used in organizational scholarship to differentiate unique from overlapping concepts (e.g., employee engagement; work engagement; job involvement; transformational, authentic, ethical leadership styles). Mapping related concepts and examining similarities and differences in concept features can help identify unique, overlapping, and redundant constructs that would be of particular benefit to organizational research, thus addressing conceptual and measurement issues such as construct proliferation and concept redundancy (Eva et al., 2025; Fischer & Sitkin, 2023; Hoch et al., 2018; Le et al., 2010). Jones et al. (2018, p. 875) proposed three criteria for determining construct independence in a prototype analysis: (1) The extent to which each construct had unique attributes. (2) The extent to which these unique attributes were also central to the construct, employing the centrality ratings. (3) Comparison of the mean centrality of the unique items for each construct against the shared items for the construct—if the unique items have a higher centrality, then this is firm evidence for the independence of the construct.
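The three criteria can be summarized computationally once each construct has a list of features and mean centrality ratings. The Python sketch below is a minimal illustration, not Jones et al.'s (2018) own procedure: the function name and example data are hypothetical, and the use of a median split to decide which features count as central is an assumption made here for illustration.

```python
from statistics import mean, median


def independence_summary(centrality_a, features_b):
    """Summarize construct A's unique vs. shared features relative to B.

    `centrality_a` maps each feature of construct A to its mean centrality
    rating; `features_b` is the set of features generated for construct B.
    """
    unique = {f: r for f, r in centrality_a.items() if f not in features_b}
    shared = {f: r for f, r in centrality_a.items() if f in features_b}
    cutoff = median(centrality_a.values())  # assumed median split
    return {
        # Criterion 1: how many of A's features are unique to A.
        "unique_features": sorted(unique),
        "share_unique": len(unique) / len(centrality_a),
        # Criterion 2: which unique features are also central.
        "central_unique": sorted(f for f, r in unique.items() if r >= cutoff),
        # Criterion 3: mean centrality of unique vs. shared features.
        "mean_unique": mean(unique.values()) if unique else None,
        "mean_shared": mean(shared.values()) if shared else None,
    }


# Hypothetical features and mean centrality ratings for two related constructs.
work_engagement = {
    "energy": 7.1,
    "absorption": 6.4,
    "dedication": 6.8,
    "enjoyment": 5.0,
}
job_involvement_features = {"dedication", "enjoyment", "identification"}
summary = independence_summary(work_engagement, job_involvement_features)
```

In this invented example, the unique features have a higher mean centrality than the shared features, which under the third criterion would count as evidence for the independence of the first construct.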

Within leadership scholarship, Lord et al. (2001) argued that diverse situational contexts can activate different features of a leadership prototype, for example, contrasting expectations in a military culture compared to a research and development (R&D) organization. Other contemporary contexts might include working from home, distributed work, multi-national corporations versus family-owned businesses, and different types of professional services (e.g., arts/culture compared to engineering/scientific). A family resemblance study can be used in the prototype analysis method to understand styles of leadership, such as “authentic” and “ethical,” thus comparing the general or “typical” leadership prototype with non-typical kinds of leadership (see Fehr, 1999, for how to calculate family resemblance scores for a type-based prototype analysis). While this would be challenging, it could potentially better represent the reality that there is no one best leadership style (Lord et al., 2001); rather, there may be overlapping and unique features of leadership related to the context.
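For readers unfamiliar with family resemblance scores, the sketch below shows one common attribute-overlap formulation in the tradition of Rosch and Mervis (1975): each attribute is weighted by the number of category members that possess it, and a member's score is the sum of the weights of its attributes. This may differ in detail from the calculation described by Fehr (1999), which should be consulted for a type-based prototype analysis; the leadership styles and attributes below are invented for illustration.

```python
from collections import Counter


def family_resemblance(members):
    """Return an attribute-overlap score for each category member.

    `members` maps each member (e.g., a type of leadership) to the set of
    attributes generated for it.
    """
    weights = Counter()
    for attributes in members.values():
        # An attribute's weight is the number of members that share it.
        weights.update(set(attributes))
    return {
        name: sum(weights[a] for a in set(attributes))
        for name, attributes in members.items()
    }


# Hypothetical attribute lists for three leadership styles.
styles = {
    "authentic": {"integrity", "self-awareness", "transparency"},
    "ethical": {"integrity", "fairness", "transparency"},
    "transformational": {"vision", "integrity", "inspiration"},
}
fr_scores = family_resemblance(styles)
```

Members with higher scores share more attributes with the rest of the category and would therefore be expected to be rated as more typical examples of it.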

Given the importance of globalization and international markets, prototype analyses can also compare concepts across cultures and thereby help to identify the extent to which features are universal or culturally specific (Sun et al., 2024a). Cross-cultural studies are evident in some prototype-based organizational research, such as research on leadership (e.g., Brodbeck et al., 2000), but they do not necessarily follow the standard method reviewed here. In this methodological review, the cross-cultural relationship context was the second most frequent context after generalized, suggesting that it constitutes a growing and useful application of a prototype analysis. Since prototype studies typically publish concept features for a specific culture, cross-cultural comparisons can be made after collecting additional country-specific datasets (e.g., see Hepper et al., 2014; Morgan et al., 2014). Although not new to cross-cultural leadership research, the prototype analysis method potentially offers a structured and replicable approach for examining cultural differences in leadership and other organizational concepts, such as supplier/customer relationship management.

Prototype analysis has been used to map vertical hierarchies, thereby helping to advance knowledge by testing competing theories. Organizational scholars could consider conceptualizing vertical hierarchies for concepts, such as the aforementioned trust, and also brand relationships and value, where multiple conflicting conceptualizations exist. Job crafting has also been conceptualized as potentially hierarchically structured, encompassing the superordinate level of “job crafting orientation,” relative to the subordinate categories of form and content (Zhang & Parker, 2019). Prototype analysis could advance theory in job crafting research by examining whether there is a hierarchical structure to workers’ perspectives of the changes they make to improve their jobs and then contrasting those with subject matter experts.

Prototype analyses are helpful to the development of prototype-based measures (Fehr, 1994), with approximately 9% of the studies in this review creating a measure. A prototype-based measure can potentially be more concise due to the focus on “central features” and can also apply to a wider range of contexts because some items lack contextual information. Examples of concepts that could benefit from development of prototype measures include new contexts such as human–AI trust and culturally specific contexts in which measures were developed from Western perspectives (Migge et al., 2020). Broughton (1984) found that the prototype approach was statistically superior to other traditional strategies (e.g., empirical, factor analytical, rational, and internal consistency) in the construction of personality scales and urged further research into the application of prototype-based measures. Once items are generated using the feature words/phrases and the prototype features are confirmed, the standard approach to scale development can be followed, including item reduction and scale reliability and validity testing (convergent and discriminant validity) (Hinkin, 1995).

Finally, it is important to note that not all concepts in organizational research are suited to a prototype analysis. For instance, the concept of bullying in most Western organizational settings is defined by necessary and sufficient conditions for the purposes of legal applications; yet people's knowledge of this concept may be very different from such legal definitions based on their everyday experiences and exposure. These types of concepts may, therefore, require consideration of both classical essentialist approaches and prototypical approaches. For example, Smith (1991) found that everyday people had naïve knowledge of common crimes that included incorrect legal information. Therefore, organizational scholarship could assist in educating employees by eliciting their prior knowledge of concepts with legal and policy implications, like bullying, using the prototype analysis method. Thus, while classical approaches may be required for legal applications, managerial understanding of the central features of prototypical knowledge structures can inform organizational messaging, training programs, and policy development.

Methodological Limitations and Future Research Directions

This review has highlighted future potential applications of the prototype analysis method, particularly in relation to organizational research, through the development of a taxonomy of concept grouping, relationship context, and theoretical development. The taxonomic structure allows for the possible addition of new categories to each of its three dimensions as new research challenges emerge. Examples include cross-disciplinary concept groupings, such as leadership (Lord et al., 2001) and sustainability (Feeney et al., 2023); the human–AI relationship context (Bankins et al., 2024; Kaplan et al., 2023); and theoretical developments regarding how prototypes shape decision-making and behavioral outcomes (Seuntjens et al., 2015). The main advantage of the prototype analysis method is that it is a participant-driven approach, as opposed to researchers predetermining concept definitions and themselves distinguishing between related constructs. Overall, prototype analysis is an underutilized innovative qualitative research method that could be used more widely to study the interplay between employees’ and other stakeholders’ views of complex organizational phenomena.

There are some possible methodological limitations to prototype analysis. First, some concepts may be too abstract or unfamiliar (e.g., political concepts, atypical prototypes, expert concepts), such that people do not agree on a prototypical structure. Ideal prototypes were not often examined in this methodological review, but understanding such prototypes and their application appears important both to organizational practice and for the advancement of the method. Inconsistencies in the leadership prototype research led Junker and van Dick (2014) to conclude that careful attention should be paid to definitions, operationalizations, and interpretations of the results from a prototype analysis when examining ideal (goal-oriented) prototypes because they are less familiar than typical prototypes, a recommendation applicable to this review. While Fehr (1986) noted that a prototype analysis is worth considering when classical essentialist approaches to concept definition have been unsuccessful, we have also shown in this methodological review that prototype analysis can be used to map expert concepts, such as countertransference in psychology, assuming adequate sample sizes.

A second potential limitation is that prototype analysis is an inductive qualitative technique that makes no a priori assumptions about the causal ordering of generated features, including whether they are antecedents, consequences, or symptoms of the concept itself. Instructions usually do not limit participants’ thinking but, rather, might suggest that features may include “characteristics, components, functions, feelings, ideas, or behaviours—anything that helps define (X)” (Fehr & Russell, 1991, pp. 432–3). As such, a prototype analysis is considered a descriptive, rather than prescriptive, method (Fehr, 2005). Examining the generated feature words, in terms of negative and positive, cognitive and affective (feelings, emotions), characteristics and behaviors, adjectives and nouns, and motivations, may be the first step to better understanding the features of the prototype. One possible approach to address this limitation is for researchers to code features as antecedents, experiences, behaviors, and consequences or outcomes (e.g., Hurtado de Mendoza et al., 2010). Cluster analysis has also been used to provide a statistical solution to understanding how the features are related to one another (e.g., Hassebrauck, 1997; Kito, 2016). Finally, network analysis of the centrality data is a more recent technique used similarly (e.g., Schweiger Gallo, El-Astal, et al., 2024; Schweiger Gallo, Görke, et al., 2024). Hence, while a prototype analysis does not assume relationships between features, there are established statistical approaches that can provide insight.

The term standard procedure was used in articles in this review to refer to prototype studies that assess the horizontal content and structure of a concept. We pointed out the importance of also examining the vertical organization of concept categories. To promote consistent operationalization and in line with prototype theory (Rosch et al., 1976), we have suggested here that future research use the term standard procedure to encompass the content and structure of both the horizontal and vertical dimensions of concepts. There remains a choice within that standard procedure in terms of the types of studies included in the overall design, as suggested in our recommendations.

We found no examples where prototype analysis had been used to evaluate competing theories in organizational research (see Table 4), and so this represents a promising avenue for future research. Fehr (2005) suggested that prototype analyses are most useful in evaluating competing theories, such as those of experts versus laypeople. For example, Parrott and Smith (1991) showed that the prototype of “embarrassment” held by laypeople was related to two of five expert theories of “embarrassment”: “dramaturgic” and “social anxiety.” A prototype analysis can also involve comparing the elicited features of a concept to existing measures derived from a particular theoretical perspective (e.g., “gratitude,” Lambert et al., 2009; Morgan et al., 2014). Applying prototype analysis to organizational control versus trust-based theories of flexible work practices (e.g., remote work, working from home) offers a potentially promising avenue for examining competing theories of managers and employees.

Another avenue for the development of the prototype analysis method is examining how prototypes influence behavioral outcomes (e.g., behavioral implications of greed in organizations, Seuntjens et al., 2015), a question that has received little attention in prototype-based research (Fehr, 2005). One potential approach is that of Sun et al. (2024b), who replaced heroic features with heroic behaviors in a 3 × 2 vignette study (e.g., jumping onto railway tracks to save a person). Validated prototype-based measures of a concept also offer promise because they may indicate behavior. Hence, laboratory studies could provide a means to empirically evaluate the influence of a prototype on behavior using a measure developed from the prototype. More generally, the influence of prototypes on in-role performance, proactive behaviors, organizational citizenship, and withdrawal behaviors is of particular interest to organizational scholars, and so examining how a prototype influences such behaviors could help to advance theory and suggest new theoretical directions.

Finally, the content and/or structure of a prototype may change over a person's lifetime, and prototype analysis is arguably well suited to examining this question (Fehr, 1988, 2004, 2005). For example, the types of interactions that build intimacy in friendships might evolve over time (Fehr, 2004). Yet no examples of a longitudinal prototype analysis were identified in this methodological review. In the workplace, the various stages of an employee's career trajectory might influence the central features of a particular concept, such as organizational commitment. Organizational commitment, whose relevance may be changing, is one concept that might benefit from further examination using prototype analysis. For instance, a longitudinal prototype analysis could examine how organizational commitment potentially changes as new generational cohorts emerge. Since leadership is dynamic and highly contextually sensitive (Lord et al., 2001), prototype analysis might also provide insight into how leadership prototypes change over time with greater experience and ongoing training (Epitropaki & Martin, 2004).

Conclusion

As Bansal et al. (2018, p. 1194) observed, “the types of qualitative methods are rich and varied”; however, relying on a small subset of commonly used methods can limit insight and progress in a field. Prototype analysis has some adaptability and flexibility in its application, which is an important consideration when studying complex organizational phenomena (Köhler et al., 2022). Prototype analysis can be used to study abstract concepts that may share features with other concepts in the same family. A further advantage of the method is that prototype features can be used in later applied studies, such as measure creation, discriminant analysis, and model testing. Prototype analysis can also elicit expert and/or employee views of a concept, which is important for examining competing theories. Prototype analysis is not a new methodological approach in the field of applied psychology, but it could be more widely used in organizational research to better understand ill-defined work-related concepts and relational patterns, as well as their applicability to work both within and across cultural contexts. The taxonomy of prototype analysis applications presented in this methodological review suggests numerous ways organizational researchers could employ the method to improve concept clarity, advance theory, and address a variety of important research questions.

Supplemental Material

sj-docx-1-orm-10.1177_10944281251399210 - Supplemental material for Application of Prototype Analysis to Organizational Research: A Critical Methodological Review

Supplemental material, sj-docx-1-orm-10.1177_10944281251399210 for Application of Prototype Analysis to Organizational Research: A Critical Methodological Review by Sandra Kiffin-Petersen, Sharon Purchase and Doina Olaru in Organizational Research Methods

sj-docx-2-orm-10.1177_10944281251399210 - Supplemental material for Application of Prototype Analysis to Organizational Research: A Critical Methodological Review

Supplemental material, sj-docx-2-orm-10.1177_10944281251399210 for Application of Prototype Analysis to Organizational Research: A Critical Methodological Review by Sandra Kiffin-Petersen, Sharon Purchase and Doina Olaru in Organizational Research Methods

Footnotes

Acknowledgments

The authors sincerely thank the Associate Editor Dr. Justin DeSimone and the anonymous reviewers for their valuable feedback throughout the review process and Dr. Brett Smith for his helpful comments on an early draft.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
