Abstract

This article and the related Feature Topic at

Keywords

Introduction

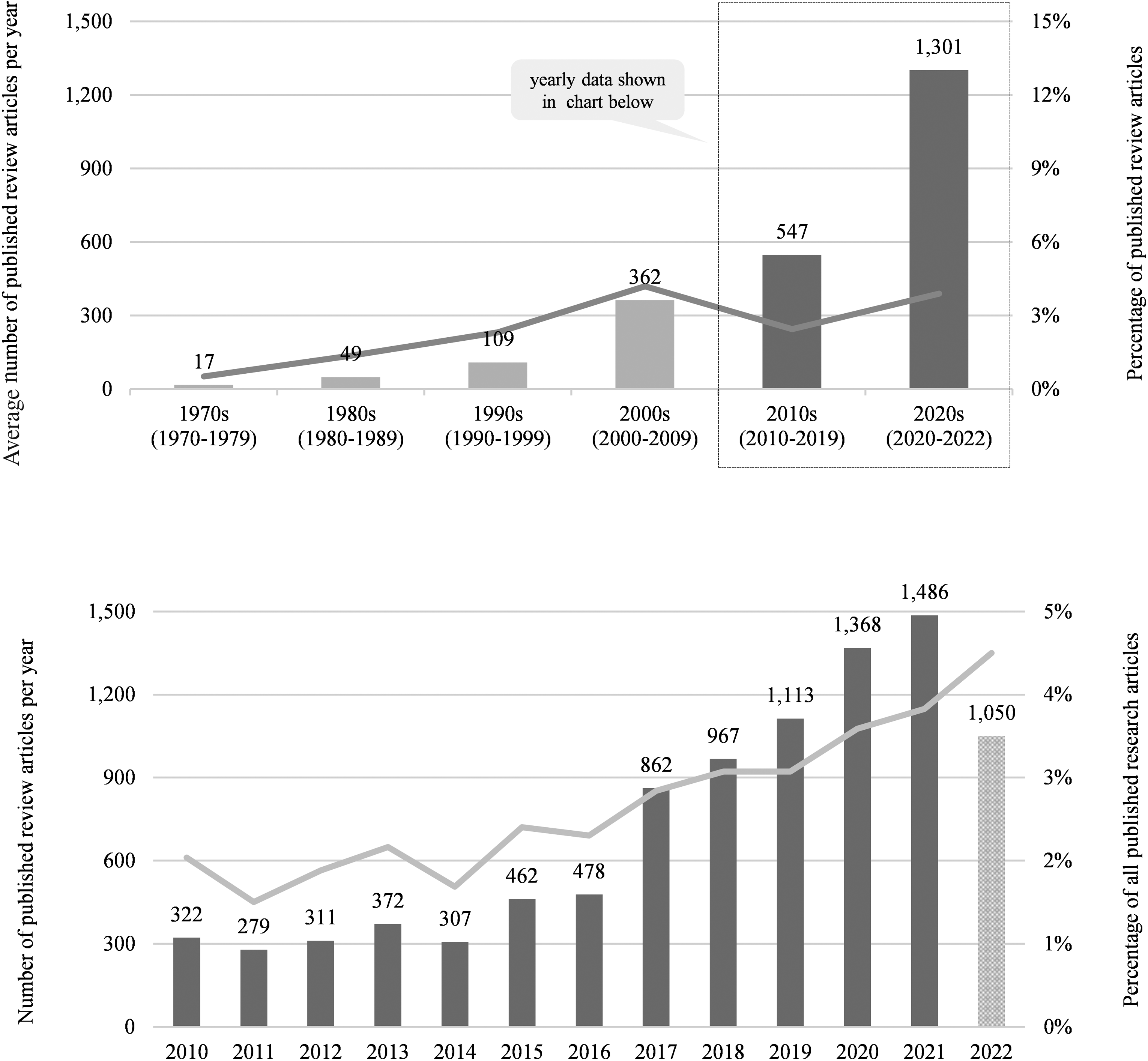

Although lagging behind their recognition in medical and some social science fields, review articles including research syntheses, meta-analyses, and integrative literature reviews have flourished in management and business scholarship in recent years. An analysis of the Web of Science database in the categories “business” and “management” reveals a substantial growth of review articles per year over time, as displayed in Figure 1. The importance and growing popularity of review articles have been recognized in multiple subfields in business and management, including accounting (Massaro et al., 2016), entrepreneurship (Combs et al., 2021; Kraus et al., 2020; Kraus et al., in press), human resource management (Callahan, 2014; Torraco, 2005), international business (Paul & Criado, 2020), organizational behavior and organization theory (Dasborough, 2020), marketing (Hulland & Houston, 2020; Palmatier et al., 2018), strategy (Durand et al., 2017; Ethiraj et al., 2017), and supply chain management (Durach et al., 2017; Seuring & Gold, 2012).

Figure 1. Number and percentage of published review articles over time.

There is also a growing recognition that producing a “high-quality” review article is a demanding scientific endeavor that requires multiple scientific skills and competences (e.g., Breslin & Bailey, 2020; Rojon et al., 2021; Wright & Michailova, in press). Indeed, a number of editorials and articles, including those in this Feature Topic, have discussed how to produce high-quality review articles. These works provide information on specific types of reviews, on steps in the review process including search and analyses, and on the specific competences that are needed to produce and ultimately publish high-quality review articles.

Although each of the prior works provides valuable insights, a holistic and comprehensive exposition of what constitutes high-quality review research is lacking. One can think of the focus on a singular aspect of quality as studying a single shard of glass in a kaleidoscope and missing the potential vista.

Moreover, the increasing amount of advice reflects a growing recognition that a plurality of review purposes and methods exist that build on diverse epistemological and ontological traditions (for taxonomies and typologies, see e.g., Cooper, 1988; Grant & Booth, 2009; Paré et al., 2015). This plurality of review purposes and methods can create confusion about what constitutes a high-quality review article (e.g., Greenhalgh et al., 2018), especially because different epistemological and ontological traditions mean that there is no single set of criteria to assess their quality. Because of this diversity, best practice recommendations for reviews in general risk doing more harm than good. Practices that work for some types of reviews may be dysfunctional for other types. For example, although the focus on prestigious peer-reviewed journals has become a standard practice in the article selection process, it can be detrimental for certain review purposes such as aggregating knowledge, identifying publication biases, or providing guidance for emerging topics.

In this article, we take a holistic and pluralistic perspective on review articles as a distinct form of scientific inquiry. We propose

Although no single set of criteria exists to assess the quality of review research, all forms of review research are concerned with rigor and impact. As one of the main themes for the Feature Topic at

In this article, we discuss the plurality of review purposes and methods to provide the context for the papers in this Feature Topic and establish review research as a credible and important scientific endeavor. First, we examine the history and advancement of review research outside of and within the management field to foster a better understanding about the evolution and diversity of review research. We also briefly introduce the articles included in the Feature Topic and clarify what review research is. Second, we discuss eight different purposes inherent in review research that not only consolidate previous work, but also generate unique knowledge contributions, critically evaluate previous work, and develop and extend theory. Third, we specify six aspects of rigor and impact and show how these help to provide the basis for assessing review research. By building on existing collective wisdom and the articles showcased in this Feature Topic, we highlight the importance of alignment between review purpose and review methods to produce rigorous and impactful contributions through review research.

Foundations and Evolution

To foster a better understanding about review research as a legitimate form of scientific inquiry, we trace the history and advancement of review research outside of and within the management field. In doing so, we show that review research can be rooted in multiple epistemological and ontological traditions.

Roots in Medicine and the Social Sciences

The foundations of review research can be linked to evidence-based practices as well as the push for research syntheses that curate existing knowledge. These review research foundations also reflect aspects of both “rigor” and “impact,” as we shall explore later in more depth. Movements for evidence-based practice, initially in medicine, and the introduction of research syntheses and meta-analysis in the social sciences, have spurred review research in our field.

Systematic Reviews in Medicine

Evidence-based medicine and systematic reviews were spurred originally by the work of Archie Cochrane, a British physician who had been imprisoned by Germany during World War II. While “caring for prisoners with tuberculosis, he lamented the lack of hard evidence” (Levin, 2001, p. 310) on which treatment was best to use. After the war he specialized in epidemiology, and in 1972 he published

In response to Cochrane's call for “systematic, up-to-date reviews of all relevant RCTs of health care” (Chalmers, 1993, p. 157), Iain Chalmers, an obstetrician, “set out in 1974 to create a comprehensive register of all randomized clinical trials in perinatal medicine (pregnancy through postpartum day 28)” (Levin, 2001, p. 310). He and his colleagues conducted systematic reviews on this topic, but ongoing research meant that the reviews continually had to be updated. Finally, in 1993, “Chalmers and an international group of about 70 others jointly announced the formation of the Cochrane Collaboration to prepare, maintain, and disseminate systematic reviews of the effects of health care interventions” (Chalmers, 1993, p. 159).

According to the Cochrane Collaboration, “A systematic review attempts to identify, appraise and synthesize all the empirical evidence that meets pre-specified eligibility criteria to answer a specific research question. Researchers conducting systematic reviews use explicit, systematic methods that are selected with a view aimed at minimizing bias, to produce more reliable findings to inform decision making” (Cochrane Library, 2022). As such, evidence from systematic reviews, meta-analyses, and critical appraisals (i.e., review research that evaluates and synthesizes the literature) can be regarded as a more important and valuable knowledge contribution than an individual, primary research study.

The use of evidence-based medicine has continued and expanded to include clinical judgments. For example, Guyatt et al. (2004, p. 90) noted that “The philosophy of evidence based medicine has evolved. Exponents increasingly emphasize the limitations of using evidence alone to make decisions, and the importance of the values and preference judgments that are implicit in every clinical management decision. They now see clinical expertise as the ability to integrate research evidence and patients’ circumstances and preferences to help patients arrive at optimal decisions.” Briner et al. (2009) discuss extensively the integration of different forms of evidence in decision-making.

Meta-Analyses in the Social Sciences

There have been attempts to combine research findings in the social sciences since at least the 1930s, such as work by Tippett (1931) and Fisher (1932) on statistical methods. However, combining findings did not become influential until the 1970s, with the development of meta-analysis. Meta-analysis emerged largely from a presidential address by Glass (2000) at the American Educational Research Association meeting in 1974. Glass, who had been trained in psychometrics and statistics, and who had been personally helped tremendously by psychotherapy, was seeking a way to counteract what he saw to be unsupported claims by Eysenck (1965) that psychotherapy was worthless. He developed an analytical method that included assessment of effect sizes across multiple studies of a phenomenon and ways of combining these studies even if they had differing characteristics (e.g., Glass et al., 1981; Smith & Glass, 1977). In the process, Glass and colleagues developed a way of showing the worth of psychotherapy.

Meta-analysis has spread to a large variety of social science fields over the years, including organizational psychology and management. The impetus for systematic reviews in management initially came from industrial-organizational psychology, especially work by Schmidt and Hunter that commenced in the 1970s and refuted conceptual notions of situational specificity (i.e., the belief that validities of any selection procedure were situationally specific and not general). Some were concerned that it was impossible to create replicable results across settings. However, the development and use of meta-analysis showed that the validity of selection procedures does generalize across settings (e.g., DeGeest & Schmidt, 2010; Schmidt & Hunter, 1977). Additional influential and trailblazing work that built upon Glass, including Hunter et al. (1982), Hedges and Olkin (1985), Schmidt (1992), and others, continued to spread its use. Meta-analysis has increased in importance (DeSimone et al., 2018; Geyskens et al., 2008; Steel et al., 2021; Stone & Rosopa, 2017) as the number of management studies has grown.

Light and Pillemer (1984) use the term

Consistent with Light and Pillemer's third theme, utilizing both quantitative and qualitative methods in systematic reviews has increasingly been supported, especially for policymakers and practitioners. Researchers have experimented with a range of methods for synthesizing diverse forms of evidence such as narrative summaries, thematic analysis, grounded theory, meta-ethnography, meta-studies, and realist syntheses (Boaz et al., 2006; Denyer et al., 2008; Dixon-Woods et al., 2005), a development that also took place in other fields such as medicine (e.g., see Barnett-Page & Thomas, 2009; Thomas & Harden, 2008).

Social scientists interested in creating social policy needed reviews beyond what was available in the Cochrane Collaboration. Several of them (Petrosino et al., 2001) formed the Campbell Collaboration, named in honor of Donald Campbell, a well-known psychologist (Campbell Collaboration, 2022). This equivalent of the Cochrane Collaboration “promotes positive social and economic change through the production and use of systematic reviews and other evidence synthesis for evidence-based policy and practice” (Campbell Collaboration, 2022).

Chalmers et al. (2002) presented evidence showing the growth in recognition of the scholarly importance of scientific reviews. They noted that Garfield (1979), whose work laid the foundation for the Web of Science, had recognized the importance of reviews for science and had even proposed a new profession, “scientific reviewer.” In addition, recent surveys of editors of core clinical medical journals by Meerpohl et al. (2012) and Krnic Martinic et al. (2019) found that most of the editors of such journals considered [systematic] reviews to be original research. That is, “scientific reviews” in fields such as medicine have moved beyond simply being sources of evidence for practitioners to make particular discrete decisions. They are increasingly being acknowledged by scholars and journal editors not only as compendiums of knowledge, but also as original contributions to knowledge.

Impetuses in Business and Management

In business and management, particularly notable impetuses for review articles are pushes for evidence-based management and for knowledge synthesis (such as fostered through the launch of the

Evidence-Based Management

Tranfield et al. (2003) introduced a methodology for systematic reviews. They proposed evidence-based management (EBMgt) to contribute to evidence-based practice approaches the UK government was taking at the time. They described (p. 208) how “an evidence-based movement has developed under New Labour,” and recognized that this movement had had a major impact in several areas of work in the UK. Pre-eminent was medicine, but other evidence-based approaches also emerged in government departments addressing education, crime reduction, environment, transport, and the regions. Tranfield et al. (2003) recognized that “systematic reviews have tended to be applied in, and to emanate from, fields and disciplines privileging a positivist tradition, attempting to do for research synthesis what randomized controlled trials aspire to do for single studies” (p. 210).

In her presidential address at the 2005 Annual Meeting of the Academy of Management, Rousseau (2006) turned a spotlight on evidence-based management (EBMgt). Her focus was on providing guidance on ways of translating organizational research into more effective management practice. She did not use the term “systematic review” in that address, but she did discuss the importance of “gather(ing) facts systematically in order to choose an appropriate course of action” (p. 260). Since then, Rousseau, Denyer and Briner have been involved in several initiatives to establish evidence-based management as an accepted approach by both scholars and managers. For example, Rousseau (2012) sponsored a series of conferences on evidence-based management that resulted in a handbook of evidence-based management. They have also established the approach in a series of publications for academics and practitioners (e.g., Rousseau et al., 2008).

Briner et al. (2009) defined evidence-based management in a way similar to evidence-based medicine: “Evidence-based management is about making decisions through the conscientious, explicit, and judicious use of four sources of information: practitioner expertise and judgment, evidence from the local context, a critical evaluation of the best available research evidence, and the perspectives of those people who might be affected by the decision” (p. 19). They defined a systematic review (p. 25) as “a replicable, scientific, and transparent approach that differs greatly from a traditional literature review.”

Several scholars have taken steps to make evidence-based management available to managers. For example, Barends and Rousseau (2018) have established a Center for Evidence-based Management (CEBMA.ORG) that is designed to foster the use of systematic searches for evidence by practitioners. Among other contributions, this center makes available to members several scholarly social science databases that may be helpful to them. As another example, Sharma and Bansal (2020) show that managers can not only be recipients of evidence-based work, but can also help create research reviews that guide practice.

Beyond their potential impact on practice, Denyer and Tranfield (2006) recognized that “(s)ystematic reviews have become regarded as the most reliable form of research review (Clarke & Oxman, 2001; NHS Centre for Reviews and Dissemination, 2001) due directly to the explicit and rigorous methods utilized (Mulrow, 1994)” (p. 217). However, they also recognized some limitations of using such reviews in the social sciences, given their positivist epistemology and the inability of meta-analysis to cope with variations in data sources. Rynes and Bartunek (2017) also raised questions about systematic reviews that reflect a purely positivist approach. Instead, they “particularly encourage integrative and explanatory reviews (which incorporate multiple types of evidence) and interpretative reviews (which synthesize qualitative research into higher-order theoretical constructs). (Further, they) encourage more aggregative reviews that start with a practical question (rather than with a “body of literature”)” (p. 252).

Knowledge Synthesis

The launch of the

Since then, several review journals and special review issues have been launched. Today, three broad types of outlets for review articles can be distinguished: First, there are several specialized review outlets, such as the

Methodological Advancements in This Feature Topic

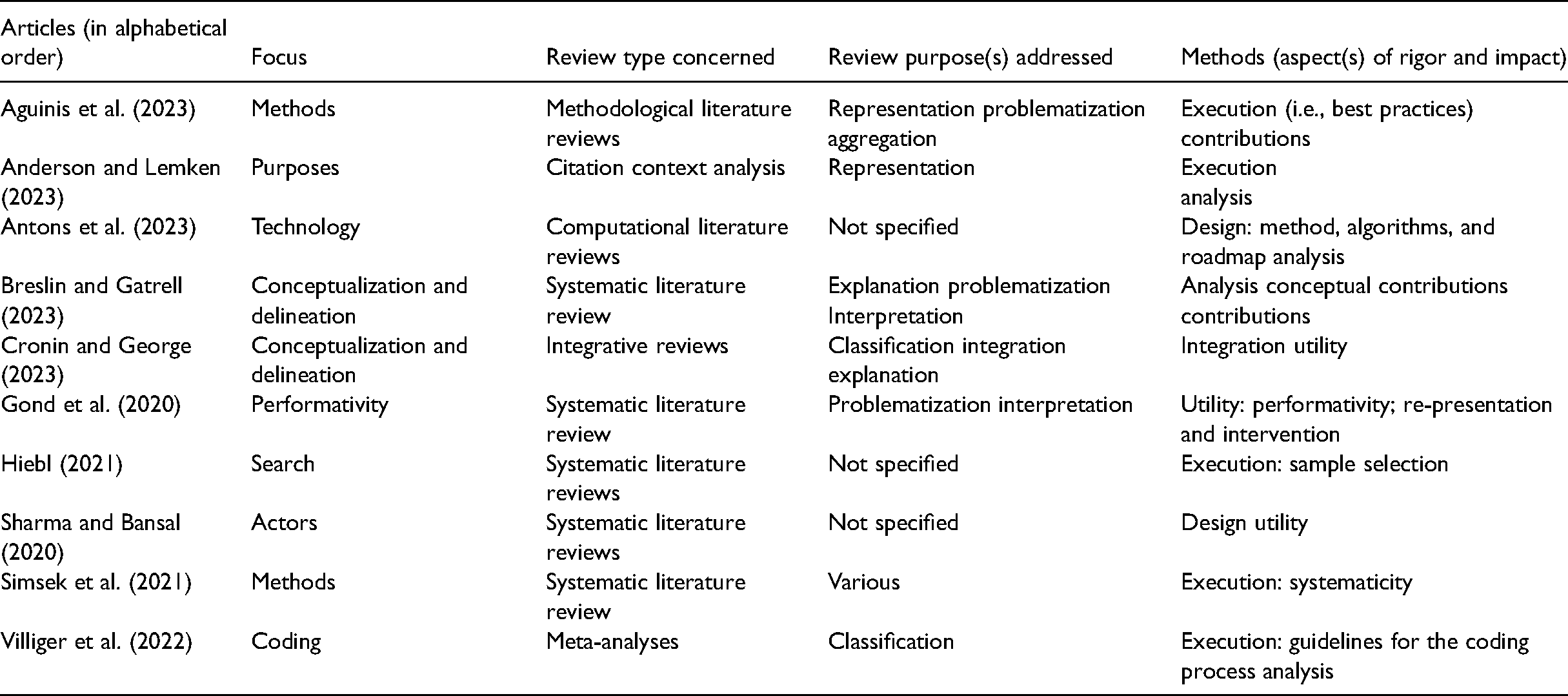

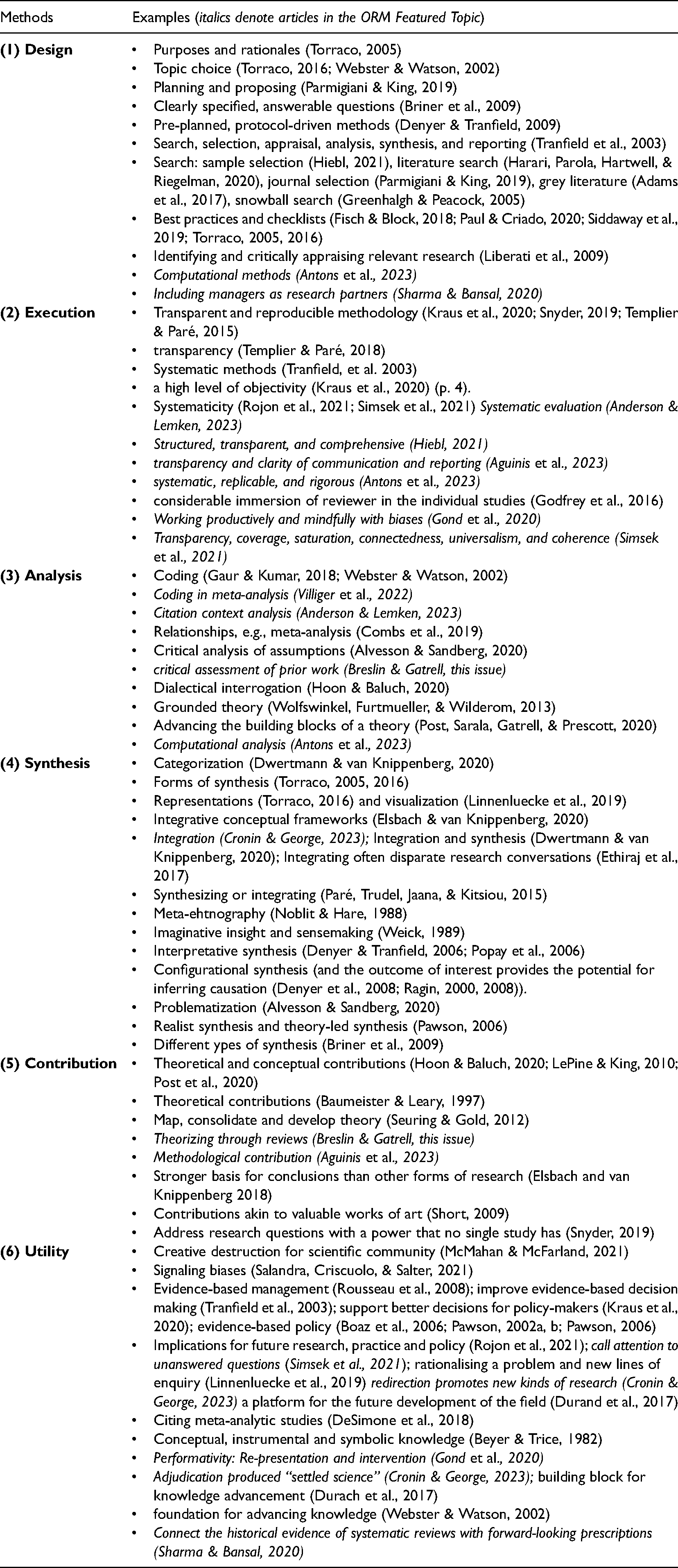

Since this Feature Topic is the first concerted effort for methodological advancement in reviews, we consider this Feature Topic and the articles published in it an important milestone in the development of review research, with the goal of further developing this mode of scientific inquiry as a legitimate form of scholarly research in and of itself. As shown in Table 1 and discussed below, the Feature Topic articles address multiple review purposes and cover different aspects of rigor and impact as overarching concerns in scientific endeavors. These articles aid us in comprehending what review research is (and is not), provide specific methodological guidance for conducting review research, and point to new review research directions.

Table 1. Summary of Articles in this Feature Topic.

First, several articles advance our understanding about the distinct nature of reviews as a form of scholarship: Breslin and Gatrell (2023) address the uncertain relationship between reviews and theory development. The authors develop a new classification scheme by invoking the metaphor of a “miner-prospector continuum.” Cronin and George (2023) outline an approach to writing an integrative review and provide insight by synthesizing the current state of knowledge from multiple, perhaps competing, paradigmatic perspectives and communities of practice on a topic. They describe in detail and walk readers through specific decision points to guide them in the writing of integrative reviews. Gond, Mena, and Mosonyi (2020) discuss the performative nature of reviews. Reviews are not simply a task of making sense of significant volumes of literature. Rather, the impact of research reviews is to reconstitute the literature the review encompasses. They illustrate this with the literature on corporate social responsibility.

Second, several articles provide methodological guidance for the research process and specific review types: Simsek, Fox, and Heavey (2021) develop a framework of systematicity as an overarching orientation toward the application of explicit methods in the practice of literature reviews. They describe its principles, practices and promises, and offer an assessment of published reviews in terms of how much the reviews make use of systematicity practices. Hiebl (2021) analyzes the sample selection process in systematic reviews. Building on an assessment of the reviews published in the

Finally, two articles point to new directions in review research: Sharma and Bansal (2020) argue that reviews of academic research have not impacted management practice to the degree hoped, in part because researchers and managers differ significantly in their knowledge systems, what they know, how they know it, and the kind of knowledge that they see as useful. They describe ways that researchers can navigate the tensions inherent in including managers as knowledge partners in the review endeavor, as well as the benefits of this partnership. Antons, Breidbach, Joshi, and Salge (2023) introduce computational literature reviews (CLRs) as a new review method that makes use of computational processes to go beyond the limits of human information processing. They describe the capabilities, identify critical design decisions, and provide practical guidelines that make CLRs accessible to novice and expert users alike.

A Holistic Definition of Review Research

Taken together, the aforementioned developments as well as the articles in this Feature Topic demonstrate that stand-alone “review articles” have become an important form of research in the field of business and management. A plethora of terms and definitions exist for review articles. They have been designated as “research reviews” (Durand et al., 2017; Light & Pillemer, 1984), “knowledge synthesis” (Chen & Hitt, 2021), “literature reviews” (Webster & Watson, 2002), “systematic (literature) reviews” (Denyer & Tranfield, 2009; Rojon et al., 2021), “structured reviews” (Massaro et al., 2016), “state of the art reviews” (Grant & Booth, 2009), “integrative reviews” (Elsbach & van Knippenberg, 2020), “problematizing reviews” (Alvesson & Sandberg, 2020), “narrative reviews” (Baumeister & Leary, 1997), “critical reviews” (Wright & Michailova, in press), “meta analyses” and “meta-syntheses” (Siddaway et al., 2019), “(academic) review articles” (Cropanzano, 2009; McMahan & McFarland, 2021; Short, 2009), and “review-centric work” (Hoon & Baluch, 2020). Although the terms and definitions emphasize different aspects of review articles, they all share the use of prior data as the common defining feature.

As already indicated in the introduction, we propose

Purposes

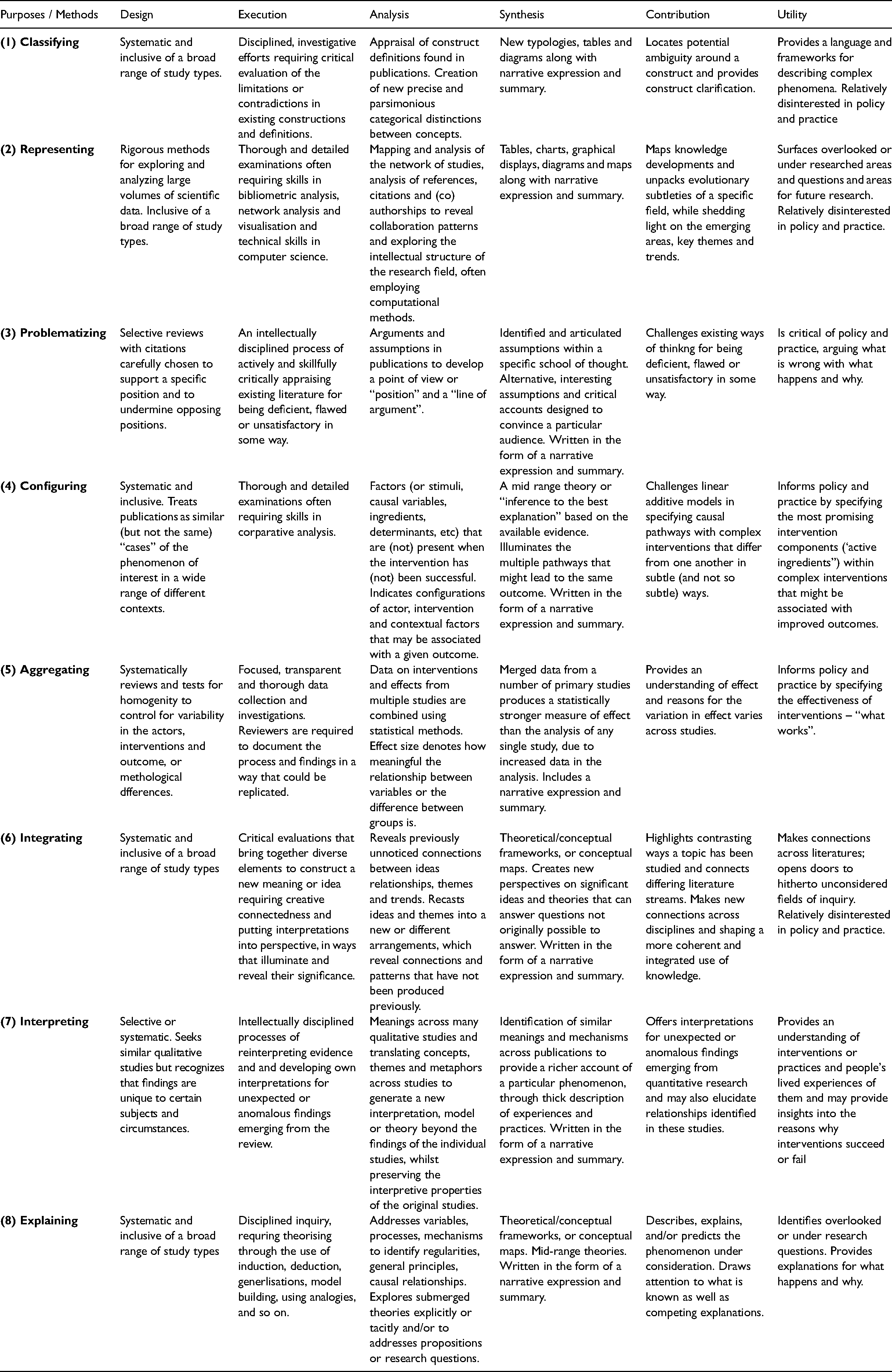

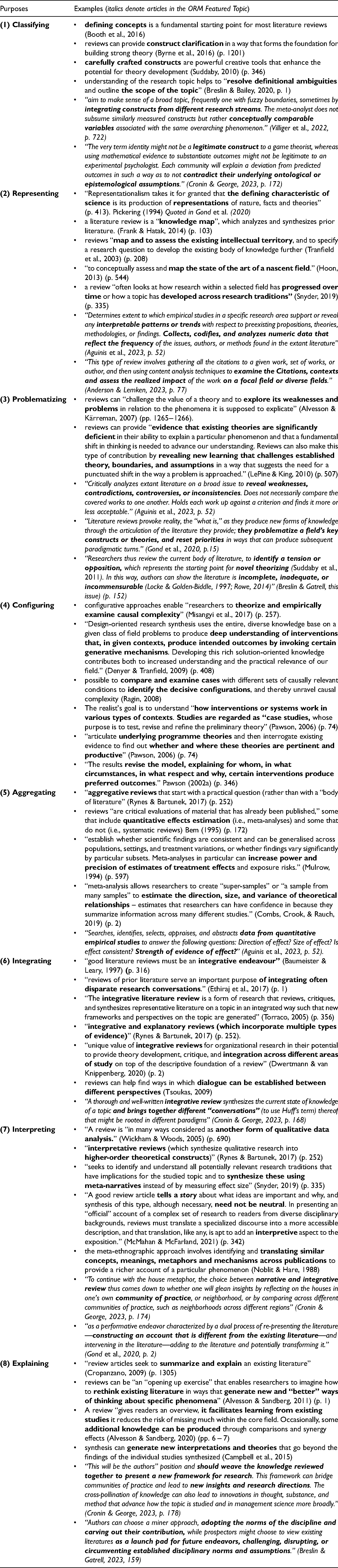

There is a growing recognition that review articles can serve a variety of purposes (Chen & Hitt, 2021; LePine & King, 2010; McMahan & McFarland, 2021; Rousseau et al., 2008). Our survey of the literature reveals eight general purposes inherent in review research, whether these are made explicit or not: (1) classifying, (2) representing, (3) problematizing, (4) configuring, (5) aggregating, (6) integrating, (7) interpreting, and (8) explaining (see Appendix 1 for examples). These are not mutually exclusive; in fact, some review research combines two or more complementary purposes. However, by specifying the review purposes, we can distinguish the outcomes authors intend to produce, the quality criteria for review articles, and the alignment of review methods with those outcomes.

(1) Classifying

We define classifying in review research as articulating, defining, and producing clear, precise, and parsimonious constructs drawn from multiple studies on a phenomenon of interest and the process of putting them into distinct groups based on ways that they are alike. Classifying aims to surface the nature, essential properties or characteristics of the concept or phenomenon under consideration. Almost all review research involves recourse at some point to clarifying and classifying (Booth et al., 2016). Classifying aims to advance conceptual understanding by locating potential ambiguity around a construct and providing construct elucidation in a way that forms the foundation for building strong theory (e.g., Byrne et al., 2016).

Cronin and George (2023) remind us that research communities may have divergent views of the same construct that reflect differing underlying ontological or epistemological assumptions. They note, for example, that the “very term identity might not be a legitimate construct to a game theorist, whereas using mathematical evidence to substantiate outcomes might not be legitimate to an experimental psychologist” (p. 172). Classifying can help distil such phenomena into robust conceptual generalizations, by making “sense of a broad topic, frequently one with fuzzy boundaries, sometimes by integrating constructs from different research streams” (Villiger et al., 2022, p. 722). Classifying involves describing and tabulating the alternative ways in which the phenomenon under consideration has previously been defined and showing how various constructs differ or how “conceptually comparable variables” can be associated with the same overarching phenomenon (Villiger et al., 2022, p. 722). Classifying may also map out relationships between the focal construct and other constructs to which it is related.

In one example of classifying in review research, Grant-Smith and McDonald (2018) produce a matrix which delineates four distinct forms of unpaid work along two dimensions – purpose of participation and level of participatory discretion. The review and resulting matrix provide conceptual clarity around unpaid work practices that informs future research. Hällgren et al. (2018) review the fragmented field of extreme contexts research, sharpen definitions and develop a context-specific typology to help differentiate between contributions from research into risky contexts, emergency contexts, and disrupted contexts. In yet another example, van Grinsven et al. (2016) develop a typology of four alternative approaches to translation and show how these are associated with institutional, rational, dramaturgical and political perspectives. In one final example, Shipton (2006) offers a typology for organizational learning research that categorizes the literature according to (a) its prescriptive/explanatory bias and (b) in line with the level of analysis, examining whether there is a focus on the organization as a whole or upon individuals and their work communities instead.

Classifying requires reviewers to reflect on extant theory and ideas with the aim of changing the way scholars understand and interpret the constructs under consideration. Skillful use of language is often needed to create, persuasively, precise and parsimonious categorical distinctions between concepts that are comprehensible to a community of scholars. Carefully crafted constructs produced by review research are powerful creative tools that enhance the potential for theory development (Suddaby, 2010, p. 346). Classifying provides a common terminology as well as guidance and direction for future research.

(2) Representing

Representing in review research is defined as analyzing the structural and conceptual relationships between data extracted from review research and producing a visual or graphical representation of the field of study. Representing attempts to produce an accurate depiction of a given field's components and relationships, making it possible for scientists to interact with complex fields that are not observable in other ways. For example, a citation network map can provide a topological overview of a complex field that details key contributions and how they are related. Novel visualization techniques assist with interdisciplinary research analytics and map common (and distinct) topics across publications from different disciplines. Representing is a valuable way to map knowledge developments over time, as well as trends of topics of interest in the research literature (Greenhalgh, 2004; Snyder, 2019).

Pickering (1994) notes that “representationalism takes it for granted that the defining characteristic of science is its production of representations of nature, facts and theories” (p. 413, as quoted in Gond et al., 2020). Representing typically examines the co-authorships, citations, and themes that occur in a field. Various authors highlight that an important outcome of review research is to “map and to assess the existing intellectual territory” (Tranfield et al., 2003, p. 208), to produce a “knowledge map, which analyzes and synthesizes prior literature” (Frank & Hatak, 2014, p. 103), and “to conceptually assess and map the state of the art of a nascent field” (Hoon, 2013, p. 544).

When several hundreds or even thousands of works on a topic exist, computational and bibliometric methods become increasingly necessary to identify key themes that have been impactful in terms of informing research agendas (Anderson & Lemken, 2023; Antons et al., 2023). Reviewers then undertake a quantitative, bibliometric assessment of the academic quality of journals, articles, or authors by indicators such as citation rates. Relevant data are extracted, such as the publications' contents, references, citations, and (co)authorships. Analysis of a set of publications in the domain is based on quantitative indicators such as its evolution over time, number of citations, most prolific authors, etc. (Antons et al., 2023).

As an example of representing, Zha et al. (2020) produce a visual map of the knowledge structure and thematic distribution of the literature on brand experience and provide a roadmap for future brand experience research. Similarly, Vogel and Güttel (2013) generate a visual citation network map of the dynamic capabilities view in strategic management. Several clusters of thematically related research are extracted from bibliographic networks, representing interconnected yet distinct subfields of inquiry. Network analyses of this kind (e.g., co-citation analysis, coupling analysis, collaboration analysis, or co-occurrence analysis) are used to find relationships between cited publications and the set of publications that cite them, and to create visual representations of the publication network. Extraction of themes from the underlying network is used to discover current research areas as well as their evolution through time (Anderson & Lemken, 2023).
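To make the bibliometric logic concrete, the following is a minimal sketch of how co-citation counts and bibliographic coupling strengths can be derived from citation data. It is an illustration under stated assumptions, not the procedure used in any of the cited reviews: the toy corpus, the dictionary name `citations`, and the function names `cocitation_counts` and `coupling_strength` are all hypothetical. Two references are co-cited when the same paper cites both; two citing papers are coupled when their reference lists overlap.

```python
from collections import Counter
from itertools import combinations

# Hypothetical toy corpus (an assumption for illustration only):
# each citing paper maps to the set of references it cites.
citations = {
    "P1": {"A", "B", "C"},
    "P2": {"A", "B"},
    "P3": {"B", "C"},
    "P4": {"A", "D"},
}


def cocitation_counts(citations):
    """Count how often each pair of references is cited together."""
    counts = Counter()
    for refs in citations.values():
        # Sort so that each pair has one canonical ordering.
        for pair in combinations(sorted(refs), 2):
            counts[pair] += 1
    return counts


def coupling_strength(citations):
    """Count shared references for each pair of citing papers."""
    strength = {}
    for p, q in combinations(sorted(citations), 2):
        shared = len(citations[p] & citations[q])
        if shared:
            strength[(p, q)] = shared
    return strength


cocited = cocitation_counts(citations)
coupled = coupling_strength(citations)

print(cocited[("A", "B")])   # A and B are cited together by P1 and P2
print(coupled[("P1", "P3")])  # P1 and P3 share references B and C
```

The resulting pair counts are the edge weights of a co-citation or coupling network. Clustering and visualization, as in the citation maps described above, would be a separate step applied to those weights.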

(3) Problematizing

Following Alvesson and Sandberg (2011), problematizing is defined as “a methodology for identifying and challenging assumptions underlying existing literature, and based on that, formulating research questions that are likely to lead to more influential theories.” To problematize means to “challenge the value of a theory and to explore its weaknesses and problems in relation to the phenomena it is supposed to explicate” (Alvesson & Kärreman, 2007, pp. 1265−1266). This purpose of review research aims to extend conceptual knowledge and practice by critically appraising and challenging the assumptions underlying existing theories. For example, Breslin and Gatrell (2023, p. 152) note that researchers “review the current body of literature, to identify a tension or opposition, which represents the starting point for novel theorizing.” Search is often undefined and subjective. Sources are selected for the review when they reveal prior theoretical omissions or inconsistent findings and anomalies and are “incomplete, inadequate, or incommensurable (Locke & Golden-Biddle, 1997; Rowe, 2014)” (Breslin & Gatrell, 2023, p. 152). Through critical analysis of a selected set of sources, reviewers attempt to surface and challenge the assumptions held by authors and taken-as-givens within the field and to “reveal weaknesses, contradictions, controversies, or inconsistencies” (Aguinis et al., 2023, p. 52). They next problematize the existing “position,” demonstrating the consequences of such assumptions or alternative assumptions on theorizing, then “problematize a field's key constructs or theories, and reset priorities in ways that can produce subsequent paradigmatic turns” (Gond et al., 2020, p. 15).

Problematizing lays out compelling, logical arguments to examine flaws, contradictions, interdependencies, (un)warranted assumptions, overly limiting boundary conditions or previously unquestioned assumptions that have a social or ethical dimension. Alvesson and Sandberg (2011) offer several types of assumptions which can be challenged, such as those within a specific school of thought (“in house”), broader images of a particular subject matter underlying existing literature (“root metaphor”), ontological, epistemological and methodological assumptions underlying existing literature (“paradigm”), political-moral and gender related assumptions underlying existing literature (“ideology”), and assumptions about a specific subject matter that are shared across different theoretical schools (“field”).

Iszatt-White and Kempster (2019), as one example, address what authors of published work perceive to be substantive flaws in the construct authentic leadership. They critically evaluate the development of the construct and propose the need for a radical re-grounding of authentic leadership. In another example, Gond et al. (2016) provide a critical review of performativity and reveal the uses, abuses and under-uses of the concept by organization and management theory scholars.

Problematizing is primarily an “endeavor to know how and to what extent it might be possible to think differently, instead of what is already known” (Foucault, 1985, p. 9; cited in Alvesson & Sandberg, 2011, p. 253). The approach requires the skillful use of language to persuasively create a critical account designed to convince a particular audience. It develops a point of view or “position” and then offers reasons to support that position through forthright claims and by undermining the opposing position (Breslin & Gatrell, this issue). Without reviews that challenge the assumptions that underlie existing theories, it is not possible to problematize them or construct research questions that may lead to the development of more interesting and influential theories (Breslin & Gatrell, this issue).

(4) Configuring

Configuring in review research is an approach that helps researchers look for patterns across multiple studies to better understand the combinations of factors and conditions that result in changes or other outcomes in some situations and not others. It enables the analysis of multiple existing studies in complex situations. It can be used in situations where there are either too few cases or insufficient homogeneity to apply conventional statistical analysis (see aggregating below). Configuring accommodates both quantitative and qualitative studies. It requires in-depth knowledge of the studies included in the review but is also capable of generating findings that can be generalized to other situations by means of developing and examining theory (Pawson, 2002a).

The first step involves the development of a nascent theory of change. Alternatively, an existing theory of change can be used. The task of the researcher is then to examine the combinations of initial conditions that lead to the outcomes of interest (Fiss, 2007; Ragin, 2000). The search involves finding all relevant studies, both qualitative and quantitative. These studies are then treated as “cases” (e.g., individuals, organizations, societies, policies, events) of a family of equivalent (but not identical) manifestations of the phenomenon of interest whose purpose is to test, revise, and refine a preliminary theory (Denyer et al., 2008). The project is based on case knowledge, whereby the researcher seeks to observe how different attributes of the cases consistently fit together to produce an outcome (Ragin, 2000). Configuring locates causal powers in the “combination” of attributes of these cases, leading to the outcome of interest (Ragin & Becker, 1992). It is possible that more than one set of conditions can lead to the observed outcome. Configuring compares studies with different sets of causally relevant conditions to identify the decisive configurations, and thereby unravel causal complexity (Ragin, 2008).
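The comparison of cases by their combinations of conditions can be illustrated with a small crisp-set truth table. The sketch below is only an illustration under stated assumptions: the studies, the two condition names (`training`, `redundancy`), the binary coding, and the 0.8 consistency threshold are all hypothetical and are not taken from any of the reviews cited here.

```python
from collections import defaultdict

# Hypothetical coded studies ("cases"): 1 = condition present, 0 = absent.
cases = [
    {"study": "A", "training": 1, "redundancy": 1, "outcome": 1},
    {"study": "B", "training": 1, "redundancy": 1, "outcome": 1},
    {"study": "C", "training": 1, "redundancy": 0, "outcome": 0},
    {"study": "D", "training": 0, "redundancy": 1, "outcome": 1},
    {"study": "E", "training": 0, "redundancy": 0, "outcome": 0},
    {"study": "F", "training": 1, "redundancy": 0, "outcome": 1},
]
CONDITIONS = ("training", "redundancy")


def truth_table(cases, conditions):
    """Group cases by configuration and report how consistently
    each configuration co-occurs with the outcome."""
    rows = defaultdict(list)
    for case in cases:
        key = tuple(case[c] for c in conditions)
        rows[key].append(case["outcome"])
    return {
        key: {"n": len(outcomes), "consistency": sum(outcomes) / len(outcomes)}
        for key, outcomes in rows.items()
    }


table = truth_table(cases, CONDITIONS)

# Assumed threshold: configurations whose cases show the outcome
# at least 80% of the time are treated as candidate explanations.
THRESHOLD = 0.8
candidates = sorted(k for k, row in table.items() if row["consistency"] >= THRESHOLD)

for key, row in sorted(table.items()):
    print(dict(zip(CONDITIONS, key)), row)
print("Candidate configurations:", candidates)
```

In this invented data set two different configurations pass the threshold, which mirrors the point that more than one set of conditions can lead to the observed outcome. A full qualitative comparative analysis would add steps, such as logical minimization, that this sketch omits.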

In one example of this approach, Denyer et al. (2008) undertook review research on High Reliability Organizations (HROs) (Roberts, 1990). By comparing studies of HROs in different contexts, Denyer et al. (2008) produced a set of propositions that explained what HRO practices in what conditions produced desirable organizational outcomes. The study by Denyer et al. (2008) highlights the potential of using qualitative comparative analysis in review research. Dust and Ziegert (2016) suggest that the context determines the effectiveness of a particular multi-leader team configuration, because each formation has unique internal team mechanisms. Zimmermann (2011) offers a configurational perspective on relationships in transnational, virtual teams (TNTs) and reveals how several characteristics of the team structure, organizational context, and socio-political environment may facilitate or inhibit relationship aspects. Ellwood et al. (2017) adopt a theory-led, realist synthesis of innovation speed research to develop a new time-based framework for categorizing the innovation-speed literature.

A similar form of theory-led review research, termed realist synthesis, has been used in other social science fields, such as criminal justice, to inform evidence-based policy and practice (Pawson, 2002a). The approach aims to “articulate underlying program theories and then interrogate existing evidence to find out whether and where these theories are pertinent and productive” (Pawson, 2006, p. 74). Each study of the phenomenon of interest is regarded as a case and the reviewer compares and contrasts the cases to identify the “combinations” of attributes (attribute sets) that explain the presence and absence of the outcome (Denyer et al., 2008). The result is a “model, explaining for whom, in what circumstances, in what respect and why, certain interventions produce preferred outcomes” (Pawson, 2002a, p. 346).

(5) Aggregating

We define aggregating in review research as the extraction of data from multiple, comparable, independent studies for the purpose of measuring the effect or covariation among independent and dependent variables. Given that aggregating projects seek to combine individual variables from multiple studies with outcomes of interest, it is crucial that variables are homogeneous (Aguinis et al., 2023). Where possible, meta-analysis is used in aggregative reviews to increase the power and precision of estimates of treatment effects and can address questions such as the “Direction of effect? Size of effect? Is effect consistent? Strength of evidence of effect?” (Aguinis et al., 2023, p. 52).

Aggregating must be accomplished by a review method that is designed and operationalized to minimize “bias” or “subjectivity.” Being thorough, careful, systematic, and logical contributes directly to this outcome (Briner et al., 2009). Such reviews begin with a predetermined scope of review questions and sub-questions. A comprehensive search is then performed to find all relevant studies. Explicit criteria are used to include and exclude studies, and usually include research beyond studies found in prestigious journals. Depending on the specific purpose, unpublished research studies found in the “grey” literature may be taken into account (Adams et al., 2017). A comprehensive set of recommendations for collecting studies can be found in Higgins et al. (2019). Established standards to critically appraise study quality are employed to ensure that the review research is based on the best available evidence. Specific methods for extracting and synthesizing study findings are developed and made explicit (Denyer & Tranfield, 2009). Data are then extracted, for example, from surveys or experimental trials contained within the review sources. The data are then combined/aggregated using statistical techniques to produce a single estimate of the effect of the factors under consideration (i.e., independent variables). Aggregating collates all that is known on a given topic and identifies the basis of that knowledge. The approach captures findings from the studies included in the review about the “outcome” or “output” or “result” or “effect” (i.e., dependent variable) that needs to be explained (Aguinis et al., 2023).
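One common way of combining study results into a single estimate is inverse-variance weighting under a fixed-effect model. The following is a minimal sketch, not a description of the procedure used in any review cited here: the function name `fixed_effect_pool`, the three effect sizes, and their sampling variances are invented for illustration, and a real meta-analysis would typically also consider random-effects models and artifact corrections.

```python
import math


def fixed_effect_pool(effects, variances):
    """Inverse-variance weighted mean effect and its standard error
    (fixed-effect model: all studies estimate one common effect)."""
    if len(effects) != len(variances) or not effects:
        raise ValueError("effects and variances must be non-empty and equal length")
    weights = [1.0 / v for v in variances]
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    se = math.sqrt(1.0 / sum(weights))
    return pooled, se


# Hypothetical effect sizes and sampling variances from three studies.
effects = [0.30, 0.10, 0.25]
variances = [0.01, 0.04, 0.02]

pooled, se = fixed_effect_pool(effects, variances)
ci_low, ci_high = pooled - 1.96 * se, pooled + 1.96 * se  # approximate 95% interval

print(f"pooled effect = {pooled:.3f}, SE = {se:.3f}, "
      f"95% CI = [{ci_low:.3f}, {ci_high:.3f}]")
```

More precise studies (smaller variance) receive larger weights, which is how aggregation increases the precision of the overall estimate relative to any single study.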

For example, a meta-analysis by Miller and Cardinal (1994) developed a comprehensive model that drew upon prior contingency frameworks that could explain the strategic planning and firm performance relationship. They found that methods factors accounted for most of the variance in the relationship. In another example, Miller et al. (1991) conducted a meta-analysis on the technology-structure relationship by examining popular and neglected moderators. The motivation for their aggregation study was that prior scholars studied single variables at a time.

In many fields of science, aggregating starts “with a practical question (rather than with a ‘body of literature’)” (Rynes & Bartunek, 2017, p. 252) and is employed to inform policy and practice by examining the efficacy of actions and interventions to improve practice. For example, Mulrow (1994) argues that aggregating can “establish whether scientific findings are consistent and can be generalized across populations, settings, and treatment variations, or whether findings vary significantly by particular subsets” (p. 597). Through aggregating, review research can resolve controversy between conflicting findings and provide a reliable basis for decision making (Tranfield et al., 2003).

(6) Integrating

Integrating in review research seeks to synthesize and build linkages and relationships across previously disconnected studies or schools of thought, such that new frameworks and perspectives on the topic are generated. Integrating seeks to merge ideas and findings from related areas (Macpherson & Jones, 2010, p. 109). Baumeister and Leary (1997) argue that “good literature reviews must be an integrative endeavor” (p. 316). Dwertmann and van Knippenberg (2020) suggest that the “unique value of [integrating] for organizational research is the potential to provide theory development, critique, and integration across different areas of study …” (p. 105). Through integrating, review research highlights the contrasting and complementary ways that researchers have studied the same or similar topics and connects different streams of literature (Durand et al., 2017). Cronin and George (2023) contend that a “thorough and well-written integrative review synthesizes the current state of knowledge of a topic and brings together different ‘conversations’ (Huff, 2009) thereof that might be rooted in different paradigms” (p. 168).

Integrating is particularly important in ambiguous and complex fields and phenomena that cannot necessarily be well understood from a single perspective. Diversity and a plurality of perspectives are a strength but can also lead to a lack of interaction and productive dialogue between research groups (Tranfield & Starkey, 1998). Integrating aims to draw connections across research domains and find ways to establish dialogue between different approaches (Tsoukas, 2009). In emergent research areas, integrating can connect research findings from disparate sources in original ways so that a new perspective or phenomenon emerges. In mature research areas, review research can help bridge fragmented areas where different research traditions are not sufficiently informing and drawing from each other.

Integrating involves linking theoretical angles that are embedded in and stem from different research paradigms (Burrell & Morgan, 1979) and approaches (Hardy & Clegg, 1997). It provides opportunities to map out important areas of agreement and disagreement, as well as uncover new insights and new research requirements. Constructively contrasting an emerging paradigm with an established domain can enable communication between different theoretical worldviews and reduce fragmentation of management theories (Chen & Hitt, 2021; Donaldson, 1998). Integrating review research might trace the historical development of a new perspective and reflect on how the perspective developed from and is embedded in social and intellectual processes. For example, as noted by Suddaby (2010), management scholars “often ‘borrow’ concepts from other disciplines, such as psychology or biology” (Whetten et al., 2009) or “take constructs developed at one level of analysis, such as the individual, and apply them to another level of analysis, such as the group, team, or organization (Floyd, 2009)” (pp. 348–349).

Examples of integrating include the following: Mowbray et al. (2015) connect studies on employee voice from a diverse range of disciplines; Miller et al. (2014) integrate studies from divergent theoretical perspectives and review the major enablers and barriers to corporate rebranding, with special attention to contextual factors; Menz (2012) integrates the dispersed knowledge about functional executives such as chief financial officers, chief information officers, chief marketing officers, and chief strategy officers in different fields; and Menz et al. (2015) synthesize the knowledge about the corporate headquarters in the fields of management and international business and develop an integrative framework.

Integrating requires the ability to draw meaning from seemingly disconnected ideas. Recognizing and engaging in dialogue and debate between a novel perspective and other alternative theoretical approaches (Hardy & Clegg, 1997) requires reflexivity (Alvesson et al., 2008; Johnson & Duberley, 2000). Reviewers using this approach need to have skill in “reading between the lines” and “connecting the dots” between different groups of literatures. They also need to reflexively step out of their own world-view, learn new vocabulary and methods, and view the topic from alternative perspectives.

(7) Interpreting

Interpreting in review research is defined as the critique and synthesis of independent studies covering a phenomenon of interest by means of reviewers creating and associating their own subjective and intersubjective meanings as they interact with the literature. Interpreting involves attempts to understand phenomena through accessing the meanings that authors of the primary studies and the interpretive reviewer both assign to them. Interpretative approaches such as meta-ethnography aim to identify and translate similar concepts, meanings, metaphors and mechanisms across studies to provide a richer account of a particular phenomenon (Noblit & Hare, 1988).

Interpreting may offer understanding for unexpected or anomalous findings that emerge from research or may help generate more comprehensive and generalizable theory (Gond et al., 2020). Interpreting may also provide insights into the reasons why interventions succeed or fail and, as such, may usefully inform the implementation of interventions and programs (Denyer & Tranfield, 2006). With interpreting, search and selection processes are similar to the principle of saturation in grounded qualitative research (Breckenridge & Jones, 2009), whereby the reviewer determines when there are adequate studies included in the review, such that the inclusion of further studies would not materially alter the view that is developed of the structure of the field, or of the key insights, or the overall conclusions (Denyer & Tranfield, 2006).

Meta-ethnographic studies are still rare in management scholarship. An example outside of management by Toye et al. (2017) explores healthcare professionals' experiences treating chronic non-malignant pain by conducting a qualitative evidence synthesis. In another example, Daker-White et al. (2021) produce a synthesis of qualitative studies concerning Infection Prevention and Control (IPC) in care homes for older people.

Interpreting requires a considerable immersion of the reviewer in the individual studies to achieve a synthesis (Godfrey et al., 2016). This is, as noted by Gond et al. (2020), “a performative endeavor characterized by a dual process of re-presenting the literature—constructing an account that is different from the existing literature—and intervening in the literature—adding to the literature and potentially transforming it” (p. 2). Some interpretative techniques, such as meta-ethnography, enable a body of qualitative research to be drawn together in a systematic way (Noblit & Hare, 1988). The process of reciprocally translating the findings from each study into those from all the other studies in the synthesis, if applied rigorously, ensures that qualitative data can be combined “into higher-order theoretical constructs” (Rynes & Bartunek, 2017, p. 252). Following this essential process, the synthesis can generate new interpretations and theories that go beyond the findings of the individual studies synthesized (Campbell et al., 2015; Popay et al., 2006), whilst preserving the interpretive properties of the original studies.

(8) Explaining

Explaining in review research is defined as the identification and analysis of observations, patterns, uniformities, causes, or trends that have not been previously identified in the individual primary studies. Explaining advances conceptual knowledge and practice by illuminating important “hows,” “whys,” and links between causes and effects or correlations and by piecing them together (Denyer & Tranfield, 2006). Explaining moves beyond description of a phenomenon to explain why it exists or what causes it. For example, it might explain patterns between seemingly unrelated dimensions, or explore the root causes behind real world events, and try to predict what will happen next (Rousseau et al., 2008). Explaining is an “opening up exercise” that enables researchers to reimagine existing literature in ways that generate new and “better” ways of thinking about specific phenomena (Alvesson & Sandberg, 2011). Explaining is a creative activity (Torraco, 2005), involving disciplined imagination (Weick, 1989) and is always based on and bounded by researchers’ assumptions about the subject matter in question.

An example of explaining in review research is found in Dada (2018), who produced a comprehensive model of entrepreneurial autonomy in franchised outlets. The review reveals the core factors and associated secondary factors that are important for understanding franchisee entrepreneurial autonomy. Kunisch et al. (2017) also provide an example. The authors analyze and synthesize the existing knowledge about strategic change through a time lens. Another example is Cundill et al. (2018), who use data from the literature to produce a process model of non-financial shareholder influence. Underpinned by the influencing context, this conceptualization centers on three primary shareholder interventions: divestment, dialogue, and shareholder proposals. In yet another example, Mergen and Ozbilgin (2021) produce a morality-based process model of followers’ continued participation in the toxic game. The review frames the dynamic system that sustains toxic leadership by means of continued support of the followers.

Explaining is often driven by a particular question or set of propositions. The search method is often undefined and subjective, but also systematic. Reviewers selectively draw on established sources that contain linked ideas that, in turn, provide a new explanation of a phenomenon under consideration (Rousseau et al., 2008). The synthesis then draws on existing studies to explain why a pattern or uniformity exists. Reviewers generatively combine existing ideas from multiple studies to provide a new model, framework, or other unique contribution that can generate new interpretations and theories that go beyond the findings of the individual studies synthesized (Campbell et al., 2015). Studies are compared against each other and against the outcomes of interest. Reviewers identify hidden or “exogenous” variables that may have influenced the results and thereby spell out contextual conditions under which a proposed explanation will or will not apply.

Rigor and Impact

The plurality of purposes in review research from various epistemological and ontological traditions means that there is no single set of criteria to assess their quality. However, as with other research (Shrivastava, 1987), and based on contemporary social science scholarship (Aguinis et al., 2014), it is reasonable to assume that all forms of review research aim for both rigor and impact.

While all review research needs to consider the different aspects of rigor and impact, the specific criteria and methodological implications can differ across the different review purposes. The goal for scholars should thus be to achieve purpose-method fit (i.e., methodological fit), which refers to the internal consistency among elements of a research project (Edmondson & McManus, 2007; Gehman et al., 2018; Knight et al., 2021).

As noted by Simsek et al. (2021), “researchers need to be clear about the purpose of their review from the outset” (p. 21); in other words, purposes and hoped-for outcomes should guide the design and implementation of review research. Assessment criteria can be developed based at least in part on how the rigor and impact of the review research will be evaluated by the intended audiences (academic, policy and/or practice), based on the purposes pursued. Once the assessment criteria have been considered, the review methods and approaches should support achieving the review outcomes. Table 2 provides an overview of how the assessment criteria and methods for rigorous and impactful research ought to be aligned with the purposes. In the following section, we introduce the six aspects of rigor and impact and exemplify how these can be aligned with the particular purpose(s) of the review research endeavor.

Alignment of Purposes and Methods to Produce Rigorous and Impactful Review Research.

(1) Design

Design concerns the overall strategy or plan for the review research (Gough et al., 2012). Review research should be seen as “a self-contained research project in itself that explores a clearly specified question” (Briner et al., 2009, p. 671). While review research, like all research (Huff, 2009), follows several general steps (e.g., Tranfield et al., 2003), the approach, specific methods and techniques vary depending on the purpose (and intended outcomes) of the review. For example, Kraus et al. (2020) argue that reviews should follow “a transparent and reproducible methodology in searching, assessing its quality and synthesizing it, with a high level of objectivity” (p. 4). The rigor of the review research demands that the methodology chosen is well explicated and justified. Hiebl (2021) argues that well-conducted review research—including sample selection—can be “summarized into the following three widespread and accepted features: (a) structured, (b) transparent, and (c) comprehensive” (p. 3). However, there are differences between types of review research based on the degree to which the review design needs to be systematic and the search for relevant literature needs to be exhaustive (Denyer & Tranfield, 2006).

A key marker of rigor, particularly in aggregative and explanatory reviews, is the selection criteria used to include or cite information sources in the review. Over time, research review designs have become standardized with processes and criteria to ensure rigor (Tranfield et al., 2003). For example, much of the writing on systematic reviews in evidence-based practice focuses upon the degree to which review research follows an appropriate

Searching for relevant literature usually reaches a point of saturation when the value of what is still to be discovered is likely to be marginal. For example, Greenhalgh and Peacock (2005) showed that in searches of complex and heterogeneous evidence (such as studies of the diffusion of innovation) formal protocol-driven approaches may fail to identify important evidence. They found that snowball methods such as pursuing references of references produced the greatest yield of relevant articles, and informal approaches such as browsing, “asking around,” and being alert to serendipitous discovery can also substantially increase the efficiency of search. Some review research purposes, particularly integration, are inclusive of a broad range of study types and must cope effectively with the sheer variety of information and knowledge embedded in academic fields, the lack of a common shared understanding of disciplinary knowledge, and differences in research questions, methods, data, and analysis procedures. In a fragmented field (Tranfield et al., 2003), increasing the comprehensiveness of a search will reduce its precision and will retrieve more non-relevant articles, increasing the time and resources required. Increasing the size of the pool from which studies are selected does not, in itself, guarantee an increase in the likely validity of a review's findings (Hammersley, 2001).
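The trade-off between comprehensiveness and precision can be made concrete with two standard information-retrieval measures: precision (the share of retrieved records that are relevant) and recall (the share of relevant records that are retrieved). The sketch below uses invented record identifiers and search results; the function name `search_metrics` and all counts are assumptions for illustration only.

```python
def search_metrics(retrieved, relevant):
    """Return (precision, recall) for a set of retrieved record IDs
    against the set of records known to be relevant."""
    hits = retrieved & relevant
    precision = len(hits) / len(retrieved) if retrieved else 0.0
    recall = len(hits) / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical field with 10 relevant studies (IDs 0-9);
# IDs of 100 and above stand for non-relevant records.
relevant = set(range(10))

# A narrow search: 20 records, 6 of them relevant.
narrow = set(range(6)) | set(range(100, 114))
# A broad search: 200 records, 9 of them relevant.
broad = set(range(9)) | set(range(100, 291))

narrow_precision, narrow_recall = search_metrics(narrow, relevant)
broad_precision, broad_recall = search_metrics(broad, relevant)

print(f"narrow: precision={narrow_precision:.3f}, recall={narrow_recall:.1f}")
print(f"broad:  precision={broad_precision:.3f}, recall={broad_recall:.1f}")
```

In this made-up case the broad search finds three more relevant studies but requires screening ten times as many records, which is the kind of cost that the judgement about when to stop searching has to weigh.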

Judgements and trade-off decisions must be made regarding the time and resources allocated, on the one hand, to a comprehensive search and a systematic review process and, on the other, to the task of deeply engaging with and making sense of the literature. Further, review purposes such as problematizing and interpreting require careful reading and assessment, insisting that a review is a hermeneutic rather than mechanical task (Hammersley, 2001). Rigidly following a predetermined plan or protocol can result in overly mechanical research reviews, whereby the reviewer faithfully follows a set of prescribed steps, but the result is a superficial reading of the material found.

Design can also refer to who is involved in the review process. For example, Sharma and Bansal (2020) show the complexity of designing processes that enable researchers and managers to collaborate on creating joint reviews. This requires planning ways to manage tensions between academics and managers throughout the process. There may also be tensions between academics operating out of different paradigms for review research, and some of the design approaches discussed by Sharma and Bansal may apply in these instances as well.

(2) Execution

Execution is about demonstrating operational competence in collecting the studies for and then conducting the review research, especially in terms of appropriate collection of studies and proficient use of appropriate analytic techniques (e.g., meta-analysis). For example, Villiger et al. (2022) contend that coding in a review “is susceptible to high degrees of subjective decision-making, […] the same rigor called for during data collection for primary research is likewise appropriate for the coding process in meta-analyses” (p. 730). The qualities of being thorough, transparent, replicable, and logical contribute directly to this outcome (Tranfield et al., 2003). Aguinis et al. (2023) also argue for “transparency and clarity of communication and reporting” (p. 58). Similarly, Antons et al. (2023) refer to “the need for systematic, replicable, and rigorous literature reviews, but also highlight the natural limits of human researchers’ information processing capabilities” (p. 107). Some approaches, such as aggregation, are performed in ways that minimize “bias” or “subjectivity.” As with other research, processes should be described and documented in enough detail that the same procedural steps can be replicated, in the sense that two researchers asking the same question would employ the same methodology, albeit possibly reaching different conclusions. Disclosing such information in the review is important and increases the transparency and trustworthiness of the research findings. Simsek et al. (2021) summarize this “systematicity” as “an encompassing orientation toward the application of explicit methods in the practice of literature reviews, informed by the principles of transparency, coverage, saturation, connectedness, universalism, and coherence” (p. 2).

Inductive qualitative researchers have rejected the positivist notion of objectivity as inappropriate for their work. Rather, following the lead of Lincoln and Guba (1985), they argue that it is much more appropriate to assess the trustworthiness, reliability, and authenticity of research. To accomplish this, in interpretative review research the aim is to encourage researcher reflexivity, essentially researchers’ insight into their biases and rationale for decision-making as the synthesis progresses. As noted by Gond et al. (2020), rather than seeing “biases” as “problems” to be ruled out, their performative take on reviewing “suggests instead working productively and mindfully with them, as they offer powerful ways to develop a field—rather than trying to avoid or solve them at all costs” (p. 23).

Problematization reviews are necessarily selective because studies included in the review are carefully chosen by the reviewer to support a specific position or argument. In both interpretative and problematization forms of review research, authors combine and bring to bear their knowledge, wisdom, and skill to read, frame, and review the literature. By necessity, they build on the knowledge and experiences authors bring to the task. These approaches to review research require considerable immersion of reviewers in the individual studies in order to achieve a synthesis (Godfrey et al., 2016). From this perspective, bias and subjectivity are highly valued. Reviewing is akin to what artists do when they paint a picture or what composers do when they pen a sonata. Reviewers put their thoughts to paper, pulling together ideas, insights, and information from a variety of sources with the intent of creating a coherent narrative. The skill of producing high-quality review research distinguishes the expert reviewer from the novice.

(3) Analysis

Analysis concerns the depth, richness, and appropriateness of the review, and whether, when analyzed, the studies included in it provide enough evidence to answer the review question(s) (Tranfield et al., 2003). Analysis is the job of breaking down articles included in the review into component parts and describing how they relate to each other—it is not random deconstruction but a disciplined examination (Hart, 1998). The focus of the analysis varies according to the research review project being undertaken. As we have discussed, in review research what constitutes relevant information, data, and evidence to be extracted from published research can take multiple forms (Tranfield et al., 2003). Analysis can be portrayed in tables, charts, and typologies, as well as in the graphical displays, diagrams, and maps often found in representational approaches. Villiger et al. (2022) argue that analysis “should be rigorously planned (i.e., cohere with the research objective), conducted (i.e., make reliable and valid coding decisions), and reported (i.e., in a sufficiently transparent manner for readers to comprehend the authors’ decision-making)” (p. 716). Rigor is demonstrated in clear links between information gleaned from the studies included in the review and the findings of the review research. Researchers can show how their findings arose by detailed descriptions of the process and presentation of the findings (Breslin & Gatrell, 2023), whereby “authors identify gaps, connections, or insights that are molded into a new contribution” (p. 158).

Depending on the review purpose, analysis can be described in different ways. With an aggregative approach it is common practice for several researchers to conduct the analysis independently of each other and then compare their findings, calculating an index of inter-rater reliability (for rating qualitative judgements, see Perreault & Leigh, 1989) as a quality marker. Palich et al. (2000) provide an application of the Perreault and Leigh index of inter-rater reliability in their examination of three competing models of the diversification-performance relationship. In contrast, in an interpretative approach deep and insightful interactions with the data are highly valued (Noblit & Hare, 1988). Researchers must employ imaginative insight as they attempt to make sense of the data and generate understanding and theory (Weick, 1989). Thus, research syntheses often depend upon the researchers’ creative interpretation of the studies included in the review. To support the research process, reviewers need to surround themselves with data, both as a source of empirical information and inspiration to trigger imaginative insights (Denyer & Tranfield, 2009).
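As an illustration of such a quality marker, the Perreault and Leigh (1989) index is commonly stated as I_r = sqrt[(F_o/N − 1/k) · k/(k − 1)], where F_o is the number of judgements on which two coders agree, N is the total number of judgements, and k is the number of coding categories, with I_r set to 0 when observed agreement falls below 1/k. The sketch below assumes this form of the index; the function name and the coded data are hypothetical.

```python
import math


def perreault_leigh_index(coder_a, coder_b, n_categories):
    """Inter-rater reliability index for two coders assigning
    items to one of `n_categories` nominal categories."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must rate the same non-empty set of items")
    if n_categories < 2:
        raise ValueError("at least two categories are required")
    agreement = sum(a == b for a, b in zip(coder_a, coder_b)) / len(coder_a)
    chance = 1.0 / n_categories
    if agreement < chance:
        return 0.0  # agreement no better than chance
    return math.sqrt((agreement - chance) * n_categories / (n_categories - 1))


# Hypothetical coding of 10 studies into 3 categories by two reviewers.
coder_a = ["pos", "pos", "neg", "null", "pos", "neg", "neg", "null", "pos", "pos"]
coder_b = ["pos", "pos", "neg", "null", "pos", "neg", "pos", "null", "pos", "pos"]

index = perreault_leigh_index(coder_a, coder_b, n_categories=3)
print(f"Perreault-Leigh index = {index:.3f}")
```

Here the two coders agree on 9 of 10 studies. Disagreements such as the seventh study would normally be discussed and resolved before synthesis.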

Context is not always considered in review research. Aggregative reviews that combine findings from quantitative experimental studies tend to be devoid of contextual considerations because the original studies themselves lack them. In aggregative synthesis it is also common to use a test for homogeneity to determine if the results of the studies included are sufficiently similar to warrant their combination into an overall result. Differences of concern include those in participants, interventions or outcomes, study designs, or intervention effects or results. If context is discussed, it is usually handled in the narrative discussion of findings rather than in the synthesis itself. This lack of attention to context has led to criticisms (Denyer & Tranfield, 2009; Hammersley, 2001; Pawson, 2002a) leveled against systematic reviews and meta-analyses which restrict the types of research designs considered. Both the Cochrane and Campbell collaborations have encouraged the incorporation of qualitative data into systematic reviews, which allows attention to context.
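
One widely used test for homogeneity is Cochran's Q, which can be sketched as follows. This is an illustrative example only: it assumes each study reports an effect size and its sampling variance, uses inverse-variance weights, and also reports the I² statistic often presented alongside Q. The function name and the input values are hypothetical.

```python
from typing import Sequence, Tuple


def cochran_q(effects: Sequence[float],
              variances: Sequence[float]) -> Tuple[float, float, int, float]:
    """Return (pooled effect, Q, degrees of freedom, I-squared).

    effects:   one effect size per study
    variances: the sampling variance of each effect size (all positive)
    """
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("Need matching effects and variances for two or more studies.")
    if any(v <= 0 for v in variances):
        raise ValueError("Sampling variances must be positive.")

    # Inverse-variance weights: more precise studies count for more.
    weights = [1.0 / v for v in variances]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)

    # Q sums the weighted squared deviations of each study from the pooled effect.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1

    # I-squared: share of total variation attributed to heterogeneity, floored at zero.
    i_squared = max(0.0, (q - df) / q) if q > 0 else 0.0
    return pooled, q, df, i_squared


# Hypothetical input: two studies with clearly different effects.
pooled, q, df, i_squared = cochran_q([0.0, 2.0], [0.5, 0.5])
```

Under the null hypothesis of homogeneity, Q is compared against a chi-square distribution with degrees of freedom equal to the number of studies minus one; a large Q relative to that distribution suggests the studies may be too dissimilar to combine into a single overall result.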

In contrast, in an interpretative project contextual features may form categories by which the data can be compared, contrasted and translated across original studies to facilitate interpretation (Popay et al., 2006). Configurational analysis considers context as integral to the study and maintains that empirical co-occurrence of particular contextual conditions (e.g., aspects of an intervention and the wider context) and the outcome of interest provide the potential for inferring causation (Charles, 1997; Denyer & Tranfield, 2009). The focus is on what works, for whom, under what conditions, why, and how (Pawson, 2002b).

(4) Synthesis

Synthesis is “the composition or combination of parts or elements so as to form a whole” (Merriam-Webster, 2021). It involves recasting evidence and arguments into new, appropriate arrangements. The product of synthesis might be frameworks, typologies or models, but synthesis also pertains to the strength of a line of argument, quality of reasoning, application of logic, critical thinking, interpretation, and theorizing underpinning claimed contributions. Synthesis involves combining the data, insights or arguments from the studies included in the review into a new or different arrangement (Dwertmann & van Knippenberg, 2020). The product of aggregative synthesis can be an understanding of the “true” effect of interventions and might include summary statements of effectiveness, like those mathematically displayed in meta-analyses (as an effect size). Briner et al. (2009) argue that the “most appropriate method of synthesis depends on the types of evidence reviewed, which in turn depend on the review question. It seems likely, given the idiosyncratic features of management and organization studies, that a range of approaches will be required” (p. 26).

In an interpretive project the aim of synthesis may be holistic explanation or understanding of a phenomenon which is deepened by translating findings from multiple studies. In an explanatory synthesis, the aim is to produce a conceptual framework or theory expected to be applicable beyond the original study (Denyer & Tranfield, 2006; Popay et al., 2006). An explanatory approach, termed realist synthesis (Pawson, 2002b), seeks to identify the generative mechanisms whose properties are causal and, depending on the situation, may or may not be activated (Pawson, 2006). Interpretive syntheses involve induction and interpretation, and are primarily conceptual. As such, the synthesis might be a theory or theoretical framework. Interpretative, explanatory and configurational syntheses can produce a theoretically generalizable mid-range theory that explains variation across studies (Merton, 1967).

Synthesis goes beyond a narrative description and summary of the studies included in the review. It also recasts information gleaned from studies to interpret findings in new ways and to reveal previously unnoticed connections or counterintuitive findings (Breslin & Gatrell, 2023). Authors of reviews infer, theorize, and conjecture, and through problematization can challenge taken-for-granted assumptions held in the field in order to shed fresh light on a topic (Alvesson & Sandberg, 2011). Synthesis, particularly in interpretative and problematization approaches, also pertains to the strength of a line of argument, quality of reasoning, application of logic, critical thinking, interpretation, and theorizing underpinning claimed contributions. This may even involve ‘persuading’ readers and reviewers (Wallace & Wray, 2011).

Interesting review research (Davis, 1971) challenges mainstream assumptions (Alvesson & Sandberg, 2011). A key aspect is a detailed explication of how the findings of the review research add to the existing knowledge through new interpretations. In an integrative project, researchers often take a practical and pragmatic approach to their literature reviews, sometimes borrowing or transforming concepts from other practice theories, or importing them from other disciplines (Whetten et al., 2009). Scholars frequently use bricolage (Weick, 1995), assembling various building blocks of literature into new explanations.

(5) Contribution

Central to review research is the idea of contributing above and beyond the individual studies. For example, Elsbach and van Knippenberg (2018) argued that “well-executed integrative reviews probably offer a stronger basis for conclusions that represent the state of the science in a field than any other form of research” (p. 3). Thus, while review research necessarily depends on the contributions of the individual studies that it includes in the analysis, it aims to contribute (to management and organization studies) insights “of a scope and theoretical level that individual empirical reports cannot normally address” (Baumeister & Leary, 1997, p. 311). Short (2009) suggested that “review articles offer perspectives to the management field not commonly presented in other outlets. Consequently, review articles have the potential to provide contributions akin to valuable works of art” (p. 1312). Snyder (2019) contended that “a literature review can address research questions with a power that no single study has” (p. 1).

Review research must make an original contribution in its own right, and that contribution can take multiple forms. That is, just as empirical research may use multiple types of qualitative and quantitative methods, and just as theoretical contributions may take many forms (cf. Cornelissen et al., 2021), the contributions of review research are also diverse. The contributions of review research can be conceptual/theoretical, empirical, or methodological in nature. Conceptual/theoretical contributions in a classification project might be improved conceptual definitions of the original constructs. In an integrative or explanatory review, they might include the development of new theoretical linkages with their accompanying justification or development of improved theoretical rationale for existing linkages. These contributions are realized when review research reveals “what we otherwise had not seen, known, or conceived” (Corley & Gioia, 2011, p. 201). Thus, review research can result in contributions that are new, novel, unique and/or revelatory. These contributions extend well beyond a gap-spotting approach (Alvesson & Sandberg, 2011), which Alvesson and Gabriel (2013) describe as “a missing brick in a wall that the researcher diligently provides” (p. 248).

Empirical contributions in an aggregative review might accrue from testing theoretical relationships between two constructs that have not previously been tested or the examination of the effects of a potential moderator on the relationship between two constructs or between competing theories. This form of contribution in review research may be concerned with the replication, confirmation, or verification of previous work. Many commentators overlook the importance of this contribution. For example, Wright (2015) suggests that “no top-tier journal can afford to waste valuable space on papers that simply reiterate what the field already knows” (p. 766). However, the consolidation and verification of research findings are critical to evidence-based practice and in some fields the findings of systematic reviews are regarded as an important means of overcoming the limitation of single, one-off studies (Briner et al., 2009). As Evanschitzky and Armstrong (2013) suggest: “If medicine used the same practice, researchers might test many treatments and occasionally discover some of them useful by chance. Teachers should be wary of including the findings of one-off studies in their curricula, and researchers need to recognize that such findings rest on a weak foundation” (p. 1407).

Methodological contributions (Bergh et al., 2022) might include increasing the generalizability of the research findings through aggregating data and employing new sampling procedures. Reviews that are inclusive of different types of research have the potential to address problems with shared method variance through the inclusion of studies with multiple methods of measurement.

(6) Utilization

Utilization refers to the impact of the review on academia, such as the setting of new research agendas, and/or the impact on practice, policy and society, such as informing decision making and action. As noted above, knowledge produced in review research is likely to result in a conceptual contribution that is indirect and cognitive (Beyer & Trice, 1982) and occurs when research changes how people think about an issue. Common to all eight of the review research purposes is the desire to ask research questions as well as solve them.

Review research might not only consider prior literature to determine what we know, but also unearth what we do not know (Tranfield et al., 2003). Review research develops future research agendas on which other researchers can build. Linnenluecke et al. (2019) claim that review research helps in “distinguishing what has been done from what needs to be done; identifying the main outcomes of and methodologies used in prior studies; and avoiding fruitless research” (p. 3). The research agenda typically provides meaningful directions for future research with reference to theory, key constructs, propositions, methodology and potentially novel methods, and/or context (Durand et al., 2017). Integrative reviews, in particular, can help promote agendas for multi-disciplinary perspectives and cross-disciplinary investigations (Cronin & George, 2023). Review research can also contribute to teaching, especially teaching doctoral students. Not only do reviews provide a compilation of disciplinary knowledge, they can also encourage students to be critical, creative thinkers who learn how to connect and synthesize knowledge from reading review research.

To this end, Cronin and George (2023) argue that review research generally serves two purposes: “

Review research can also make scientific knowledge accessible and relevant to practitioners, policy makers and other users outside of academia (Tranfield et al., 2003). As such, the “general consensus across all fields interested in evidence-based practice is that a synthesis of evidence from multiple studies is better than evidence from a single study” (Briner et al., 2009, p. 24). This objective may be enhanced by involving managers in the review process (Sharma & Bansal, 2020, p. 2). The aim of review research for evidence-based management is to produce evidence that is generalizable to multiple contexts and can be used to make reasonable predictions of future events (Rousseau et al., 2008). In many social science fields, research utilization has been enhanced by reviewing fields of literature in order to synthesize and convey essential collective wisdom from existing research studies to professional practice (Tranfield et al., 2003). Briner et al. (2009, p. 29) argue that “in terms of practical utility, it is important to note that systematic reviews never provide ‘answers.’ What they do is report as accurately as possible what is known and not known about the questions addressed in the review.” Review research is only one form of evidence that can be used to inform practice (Briner et al., 2009), but as we have seen, it has the significant advantages of rigor and impact.

Conclusion

Producing rigorous and impactful review research is a demanding scientific endeavor that places different and often competing demands on the researcher. Producing such research is consistent both with the “context of discovery” (“the form in which [thinking processes] are subjectively performed”) and the “context of justification” (“the form in which thinking processes are communicated to other persons”) (Reichenbach, 1938, pp. 6-7; see also Popper, 1959, pp. 31-32). As we have seen in all eight purposes, there is always an interplay of discovery and justification in review research.

To use a metaphor from the context of law, a review researcher is a detective who asks probing questions and systematically searches for and collates the best available evidence from a crime scene to try to discover who the murderer is. The detective also needs the skill to painstakingly document, analyze and interpret the evidence in valid ways, describing and justifying what was performed and what was found when reporting the results. The researcher then takes on a second role that is analogous to the barrister who constructs a persuasive account and argues the case based on the available evidence. It is not only the quality of the evidence but the quality of the arguments about it that make the difference in a criminal trial. Figuring out who the murderer is and proving the case in court are both critical processes that must function together, yet they require fundamentally different cognitive processes and practices.

As observed by Weick (1989, p. 516), theory “cannot be improved until we improve the theorizing process, and we cannot improve the theorizing process until we describe it more explicitly, operate it more self-consciously, and decouple it from validation more deliberately.” By providing a more explicit description of the purposes of review research and the criteria to assess its rigor and impact we hope that all reviewers can find paths to a more natural and organic link to the contexts of discovery and justification.