Abstract

We categorized and content-analyzed 168 methodological literature reviews published in 42 management and applied psychology journals. First, our categorization uncovered that the majority of published reviews (i.e., 85.10%) belong in three categories (i.e., critical, narrative, and descriptive reviews), which points to opportunities and promising directions for additional types of methodological literature reviews in the future (e.g., meta-analytic and umbrella reviews). Second, our content analysis uncovered implicit features of published methodological literature reviews. Based on the results of our content analysis, we created a checklist of actionable recommendations regarding 10 components to include to enhance a methodological literature review’s thoroughness, clarity, and ultimately, usefulness. Third, we describe choices and judgment calls in published reviews and provide detailed explications of exemplars that illustrate how those choices and judgment calls can be made explicit. Overall, our article offers recommendations that are useful for three methodological literature review stakeholder groups: producers (i.e., potential authors), evaluators (i.e., journal editors and reviewers), and users (i.e., substantive researchers interested in learning about a particular methodological issue and individuals tasked with training the next generation of scholars).

Keywords

Methodological innovations are accelerating due to new software, the speed of computers, the availability of Big Data, and new sources of qualitative and quantitative data (e.g., Bamberger & Pratt, 2010; Boyd & Solarino, 2016; Cortina, Aguinis, & DeShon, 2017; LeBaron et al., 2018; Meißner & Oll, 2019; Tonidandel et al., 2018). Together, these innovations mean that researchers need to expand their methodological toolkits on an ongoing basis. Accordingly, given the need to learn new methodological approaches and decreased resources invested in doctoral education as well as seasoned researcher retraining and retooling (Aguinis, Cummings, et al., 2020), it is not surprising that many journals publish literature reviews focused on methodological issues on a regular basis.

We define methodological literature reviews as articles that formally or informally review the existing literature regarding practices about methodological issues, summarize the literature, and provide recommendations for improved practice. These reviews offer three main contributions. First, they help substantive researchers, including doctoral students, improve their methodological repertoire (Wright, 2016). Second, by describing “how to do things right,” methodological literature reviews help address the challenge of questionable research practices (QRPs; Butler et al., 2017). That is, methodological literature reviews can be used by substantive researchers to learn how to apply a method and also to check whether specific practices are appropriate or considered a QRP. Similarly, journal editors and reviewers can use methodological literature reviews to identify and attempt to minimize QRPs and the exploitation of methodological gray areas in submitted manuscripts (Aguinis, Banks, et al., 2020; Aguinis, Hill, & Bailey, 2020). Third, methodological literature reviews help identify knowledge gaps and research needs, including not only methodological but also substantive innovations resulting from improved methodology (Kunisch et al., 2018).

Despite the aforementioned contributions, there is room for improvement regarding literature reviews due to the lack of clarity and thoroughness in describing the procedures used to conduct the review and derive the recommendations presented therein (e.g., Adams et al., 2017; Aguinis et al., 2018; Denyer & Tranfield, 2009; Jones & Gatrell, 2014; Kunisch et al., 2018). The pressure to publish in elite journals (Aguinis, Cummings, et al., 2020; Bartunek, 2020) is, to some extent, the culprit for insufficient clarity and thoroughness and the pervasiveness of QRPs in literature reviews given that authors’ motivation to publish may in some cases supersede their motivation to be transparent and clearly communicate judgment calls (Aguinis et al., 2018; Aguinis & Solarino, 2019; Bettis et al., 2016; Murphy & Aguinis, 2019; Schwab & Starbuck, 2017). Given their role as authoritative “how-to” resources, it is particularly important for methodological literature reviews to be clear about all procedures involved in deriving and presenting recommendations. Furthermore, because financial constraints often limit the methodological training offered to doctoral students (Aguinis et al., 2018; Byington & Felps, 2017; Schwab & Starbuck, 2017; Wright, 2016), lack of clarity on how the review was produced makes it harder for these future scholars to acquire the necessary declarative and procedural knowledge¹ to critically use and possibly produce different types of methodological literature reviews. In addition, given rapid advances in methodology, some journal editors and associate editors as well as reviewers may not be fully equipped to evaluate submitted manuscripts describing methodological literature reviews (Cortina, Aguinis, & DeShon, 2017), which is compounded by increased workloads due to the variety and quantity of manuscripts that are submitted (Caligiuri & Thomas, 2013; Corley & Schinoff, 2017; Jones & Gatrell, 2014).

The purpose of our article is to provide recommendations on what components to include in a methodological literature review to enhance its thoroughness, clarity, and ultimately, usefulness. Providing recommendations about what to include in a methodological literature review and how to present such information in a clear manner is of value for producers, evaluators, and users of methodological literature reviews (Aguinis et al., 2018; Jones & Gatrell, 2014). Without this information, potential authors lack sufficient guidance on how to produce such reviews, journal editors and reviewers evaluating such efforts are left questioning the trustworthiness of submitted manuscripts, and users are unable to determine whether they can rely on the accuracy of the recommendations offered.

Purpose and Approach

We categorized and content analyzed 168 methodological literature reviews published in 42 management and applied psychology journals. This process involved categorizing reviews into one or more of seven types: critical review, descriptive review, meta-analytic review, narrative review, qualitative systematic review, scoping review, and umbrella review. The content analysis involved uncovering implicit judgment calls and choices across the reviews. In other words, we uncovered the implicit choices authors of published methodological literature reviews have made—choices that led to a positive outcome, which is the publication of their articles in rigorous peer-reviewed journals. By focusing on reviews that received a “stamp of approval” from editors and reviewers after successfully navigating the peer-review process, we derived 10 latent factors and their 40 observable indicators that are associated with what are considered successful and rigorous (because they were published) reviews.

Our categorization and content analysis of published methodological literature reviews makes the following contributions. First, we uncovered that the majority of published reviews (85.10%) belong to three categories: critical, narrative, and descriptive reviews. This result shows that methodological literature reviews are fulfilling their role in helping develop a collective understanding of knowledge regarding an issue, highlighting inconsistencies, and outlining possible future research directions (Kunisch et al., 2018; Paré et al., 2015). However, we also found that few methodological literature reviews utilized data-integration approaches such as meta-analytic or umbrella reviews. As we describe in detail in the Discussion section, both of these review types provide unique and as yet underutilized opportunities for future methodological and substantive advancements.

Second, as a result of our content analysis and identification of implicit features of published reviews, we provide a checklist of actionable recommendations on what components to include in a methodological literature review to enhance its thoroughness, clarity, and ultimately, usefulness. Our checklist also identifies particular features (e.g., scope of review, source of recommendations, software guidelines) that are unique to methodological literature reviews rather than literature reviews in general, and includes exemplars of published research that illustrate these features.

Third, our checklist can help address challenges regarding QRPs in the preparation of methodological literature reviews. Based on the performance management literature (Aguinis, 2019), research performance problems such as a lack of transparency and QRPs are a result of insufficient (a) knowledge, skills, and abilities (KSAs); or (b) motivation; or (c) both (Aguinis, Hill, & Bailey, 2020; Aguinis et al., 2018; Van Iddekinge et al., 2018). In other words, authors may engage in QRPs or avoid disclosing sufficient information when producing methodological literature reviews either because they lack sufficient KSAs on how to do so or because they do not wish to do so (i.e., lack of motivation). Our checklist addresses a lack of KSAs by providing future producers (i.e., potential authors) with declarative and procedural knowledge about what to consider, include, and disclose when conducting a methodological literature review. Specifically, we provide future producers with declarative knowledge on different types of methodological literature reviews and the goals addressed by each as well as procedural knowledge on how to utilize our checklist to inform the judgment calls and decisions made during the manuscript preparation process. Moreover, evaluators (i.e., journal editors and reviewers) can use the declarative and procedural knowledge in our checklist to evaluate methodological literature review submissions. The use of our checklist is also likely to influence authors’ motivation to avoid QRPs because they know their manuscripts will be more likely to be rejected if they do not transparently and clearly report information regarding judgment calls and decisions made during the production of the review. In addition, evaluators can use our checklist to provide feedback to authors on what components to include to increase transparency and reproducibility, thereby further reducing QRPs (Aguinis et al., 2018). 
Finally, users, including substantive researchers interested in learning about a particular methodological issue and individuals tasked with training the next generation of scholars (e.g., doctoral seminar instructors), can use the declarative and procedural knowledge in our checklist to critically learn from, and also potentially produce, methodological literature reviews.

Fourth, we refer to critical areas where judgment calls must be made explicit. For example, our recommendations describe different approaches that can be used to: communicate the motivation for and importance of a methodological literature review, outline the scope of the review, and suggest best practices, which together will likely improve the chances of receiving a positive response from journal editors and reviewers (Jones & Gatrell, 2014). We describe choices and judgment calls found in published reviews based on our content analysis and provide detailed explications of exemplars that illustrate how those reviews made explicit those choices and judgment calls. Next, we describe our review.

A Literature Review of Methodological Literature Reviews

We followed a systematic and transparent six-step process as described by Aguinis et al. (2018) to identify literature reviews focused on methodological issues. As explained in more detail below, our process began with 100 journals, and the final list includes 168 methodological literature reviews published in 42 management and applied psychology journals. We then used an inductive and iterative process to identify the components included in methodological literature reviews. In the terminology of factor analysis, we derived 40 observable indicators and 10 latent factors that make explicit the implicit features underlying methodological literature reviews.

Step 1: Scope of Review

We conducted a critical review of the literature (Paré et al., 2015). That is, we examined the literature about a general issue (i.e., methodological literature reviews) to uncover challenges and generated knowledge that can aid future research in addressing those challenges (Paré et al., 2015). Accordingly, as is common in conducting a critical review (Paré et al., 2015), our process is designed to include a broad and representative but not necessarily comprehensive set of articles. Also, because methods evolve rapidly, we only considered reviews published more recently (i.e., between January 1, 1997, and July 31, 2018, including in-press articles).

In addition to being a critical review, our study also included policy-capturing methodology to identify and make explicit implicit variability across published reviews (Aiman-Smith et al., 2002; Karren & Barringer, 2002; Nokes & Hodgkinson, 2017). Thus, because our goal was to identify the implicit choices authors of published methodological literature reviews have made (i.e., choices that led to a positive outcome, which is the publication of their articles in rigorous peer-reviewed journals), our research design purposely included published and excluded unpublished manuscripts. In other words, we were specifically interested in making explicit the implicit features of published reviews to make it easier for researchers to conduct and successfully publish methodological literature reviews in the future.

Step 2: Journal Selection Procedures

Guided by

Step 3: Article Selection Procedures

We used a three-step process to identify methodological literature reviews, which are articles that formally or informally review the existing literature regarding practices about methodological issues, summarize the literature, and provide recommendations for improved practice. In the first step, we searched the full text of articles published in each of the 100 journals using the following seven keywords:

In the second step, the coders used a manual search process to examine the 500 most cited articles published between January 1997 and July 2018, as listed in the WoS management and applied psychology categories. We implemented this additional step to ensure that we did not overlook any highly cited methodological literature reviews that were published in journals not included on our initial list or remained undetected based on our keyword search of the full text of the articles. Following the previously described procedure, the coders identified 56 articles that met our inclusion criteria. Of these, 27 were already included on our list. However, we found 29 additional articles. Only one of these 29 articles was published in a journal (i.e.,

In our third and final step, the coders independently classified each of the 284 articles as meeting our definition of methodological literature reviews, and they agreed on 96% of their classifications. Disagreements were resolved through mutual discussion. At the end of this coding process, we found that 116 of the 284 initially identified articles did not pertain to methodological literature reviews. Thus, the final number of articles included in our review is 168, which were published in 42 journals.⁴ In the interest of full disclosure and transparency, the list of journals included in our literature review and the number of articles drawn from each are provided in the Supplemental Material available in the online version of the journal (Appendix A). Also, the Supplemental Material available in the online version of the journal lists the 168 articles included in our review (Appendix B) and the 116 articles that we considered initially but eventually excluded (Appendix C).⁵
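The agreement check and the final sample count described above can be sketched in a few lines. This is only a minimal illustration of simple percent agreement, not the procedure the coders actually ran: the function names and the five example decisions are hypothetical, and only the counts 284 and 116 come from the text.

```python
def percent_agreement(coder_a, coder_b):
    """Share of items on which two coders assigned the same label."""
    if len(coder_a) != len(coder_b):
        raise ValueError("Both coders must rate the same items")
    if not coder_a:
        raise ValueError("At least one item is required")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def disagreements(coder_a, coder_b):
    """Positions of items the coders would need to resolve through discussion."""
    return [i for i, (a, b) in enumerate(zip(coder_a, coder_b)) if a != b]


# Hypothetical decisions for five candidate articles
# (True = article meets the definition of a methodological literature review).
coder_a = [True, True, False, True, False]
coder_b = [True, False, False, True, False]

agreement = percent_agreement(coder_a, coder_b)
to_discuss = disagreements(coder_a, coder_b)

# Counts reported in the text: 284 candidate articles, 116 excluded after coding.
final_sample = 284 - 116
```

Simple agreement of this kind does not correct for chance agreement; it is shown here only because it mirrors the matching approach the text reports.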

Finally, an additional consideration in our article selection procedure is that, as mentioned earlier, our article is a critical review of reviews. In keeping with this approach to literature reviews (Paré et al., 2015), we did not weigh the articles selected by, for example, the number of citations received by each.⁶

Step 4: Categorization of Methodological Literature Reviews

Next, to gain an understanding of the state of methodological literature reviews in management and applied psychology, we categorized the 168 articles by adapting the taxonomy of literature reviews by Paré et al. (2015). This is an inductively derived taxonomy based on the framework by Tranfield et al. (2003) that has been used to categorize reviews in several fields, including “health sciences, nursing, education, library and information sciences, management, software engineering, and information systems” (Paré et al., 2015, p. 184). Because we focus specifically on methodological literature reviews, we omitted two categories (i.e., theoretical and realist reviews) that do not pertain directly to these types of reviews.

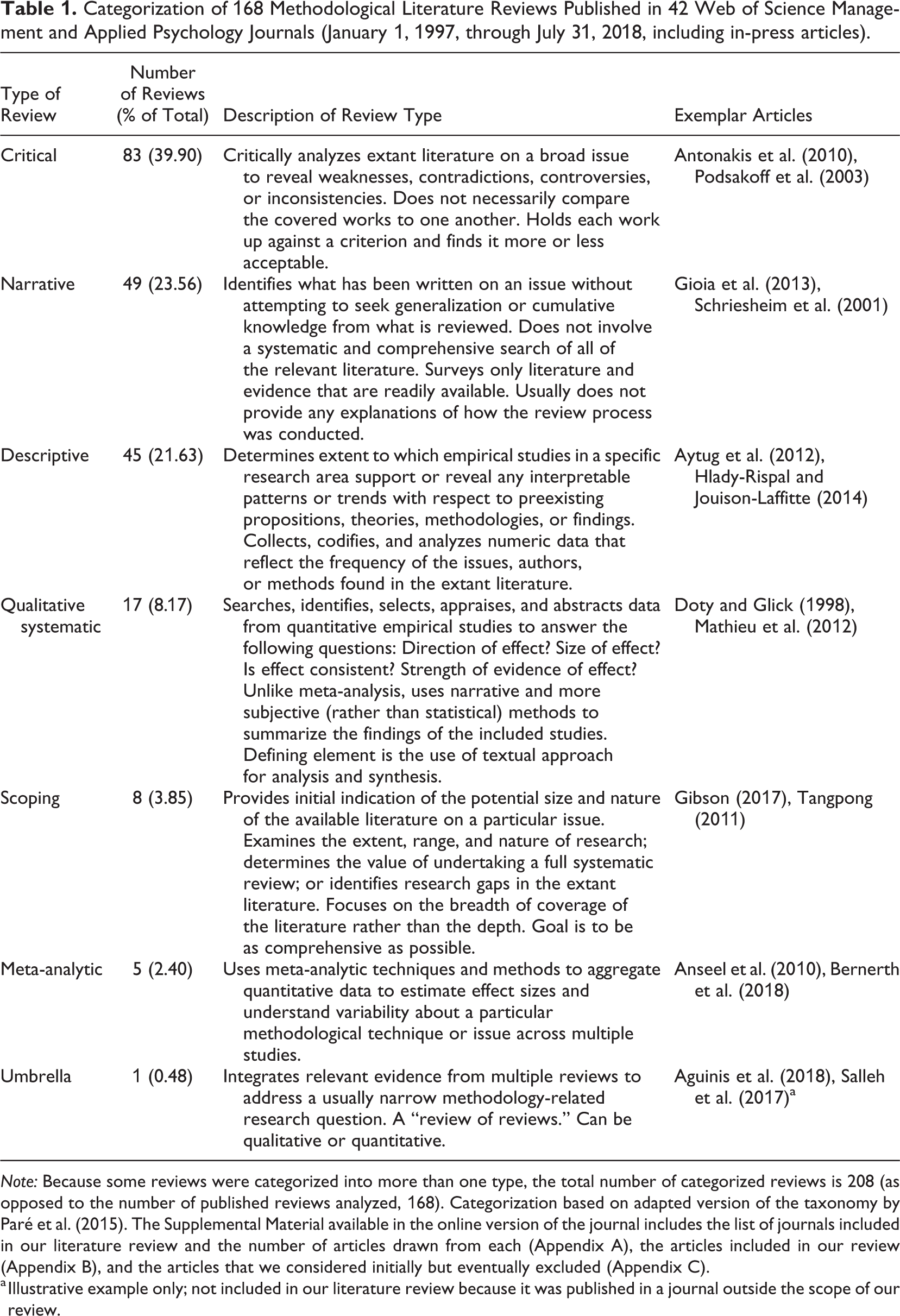

The coders each read the full text and independently categorized the articles as belonging in one or more of seven review types: critical review, descriptive review, meta-analytic review, narrative review, qualitative systematic review, scoping review, and umbrella review (Paré et al., 2015). We compared the coding using a simple matching function in Excel, and there was 97% agreement regarding the categorizations. Results summarized in Table 1 show that the most common types of methodological literature reviews are critical (39.90%, 83) and narrative (23.56%, 49). Other review types include descriptive (21.63%, 45), qualitative systematic (8.17%, 17), scoping (3.85%, 8), meta-analytic (2.40%, 5), and umbrella (0.48%, 1).⁷
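The reported percentages can be reproduced from the category counts with a short calculation. The sketch below assumes that the denominator is the total number of category assignments rather than the 168 reviews, which is consistent with reviews being categorized into one or more types; this denominator is an inference from the reported figures, not something stated in the passage above.

```python
# Category counts as reported in the text (a review may fall in more than one category).
counts = {
    "critical": 83,
    "narrative": 49,
    "descriptive": 45,
    "qualitative systematic": 17,
    "scoping": 8,
    "meta-analytic": 5,
    "umbrella": 1,
}

# Assumption: percentages are shares of all category assignments,
# not shares of the 168 reviews.
total_assignments = sum(counts.values())

# Share of each category, in percent, rounded to two decimals.
shares = {name: round(100 * n / total_assignments, 2) for name, n in counts.items()}

# Combined share of the three most common categories.
top_three = counts["critical"] + counts["narrative"] + counts["descriptive"]
top_three_share = round(100 * top_three / total_assignments, 2)
```

Under this assumption there are 208 assignments, the critical share is 83/208 (about 39.90%), and the three most common categories jointly account for 177/208 (about 85.10%), matching the reported values.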

Table 1. Categorization of 168 Methodological Literature Reviews Published in 42 Web of Science Management and Applied Psychology Journals (January 1, 1997, through July 31, 2018, including in-press articles).

a Illustrative example only; not included in our literature review because it was published in a journal outside the scope of our review.

Step 5: Creation of Content Analysis Taxonomy

To minimize rater bias effects and increase transparency and reliability, we developed our coding scheme following the eight-step procedure described by Weber (1990) and recommended by Duriau et al. (2007).⁸ First, we developed first-cycle codes using a combination of descriptive and magnitude coding following best-practice recommendations provided by Aguinis and Solarino (2019). Specifically, because our data are drawn from a variety of sources and address many distinct research areas and topics, we used descriptive coding in which coders attempt to capture the essence of distinct sections of qualitative data using a few words or a short phrase (Saldana, 2013). Additionally, to provide a richer description and generate data for subsequent quantitative analysis, we used magnitude coding, in which a subcode is added to an already coded item to note its presence or absence (Saldana, 2013). Then, we developed second-cycle codes using pattern coding. We adopted this approach, which involves developing a set of descriptive codes to identify emergent concepts (Saldana, 2013), to provide a parsimonious summary of the key concepts we identified (Aguinis & Solarino, 2019).

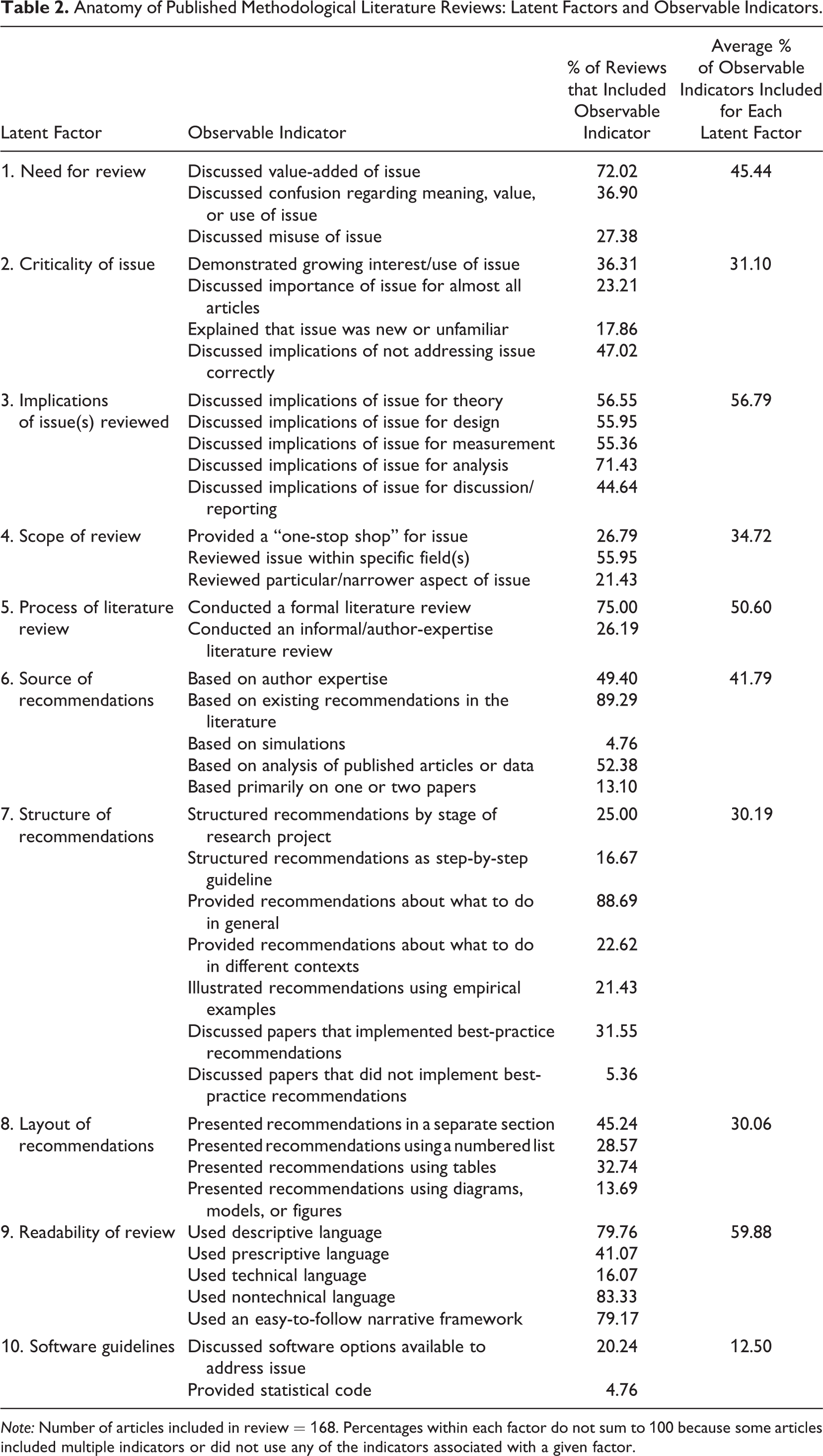

Table 2. Anatomy of Published Methodological Literature Reviews: Latent Factors and Observable Indicators.

Step 6: Coding of Features of Methodological Literature Reviews

To begin, both coders read and independently coded 10 randomly selected articles to note the presence of the 40 indicators using binary coding (i.e., present or absent). We compared the coding of the indicators using a simple matching function in Excel and found 98% agreement. Given the collaborative nature of the development of the coding scheme and high intercoder agreement, the coders then randomly divided the remaining articles. Table 2 includes the percentage of methodological literature reviews that featured each of our 40 inductively derived indicators and the average percentage of observable indicators included for each latent factor.
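The two summary statistics described for Table 2 (the percentage of reviews featuring each indicator, and the average percentage across the indicators of a latent factor) can be illustrated as follows. This is a hedged sketch only: the factor-to-indicator mapping is a small illustrative subset, the four coded reviews are invented, and the function names are hypothetical rather than taken from the authors' materials.

```python
from statistics import mean

# Illustrative subset of latent factors and observable indicators (not the full 10 x 40 scheme).
factors = {
    "need for review": ["prior confusion", "incorrect application"],
    "criticality of issue": ["growing interest", "new or unfamiliar topic"],
}

# Hypothetical binary codes: one dict per review, 1 = indicator present, 0 = absent.
coded_reviews = [
    {"prior confusion": 1, "incorrect application": 0,
     "growing interest": 1, "new or unfamiliar topic": 0},
    {"prior confusion": 0, "incorrect application": 1,
     "growing interest": 1, "new or unfamiliar topic": 0},
    {"prior confusion": 1, "incorrect application": 0,
     "growing interest": 0, "new or unfamiliar topic": 1},
    {"prior confusion": 0, "incorrect application": 0,
     "growing interest": 1, "new or unfamiliar topic": 0},
]


def indicator_prevalence(reviews, indicator):
    """Percentage of reviews in which the indicator was coded as present."""
    return 100 * sum(r[indicator] for r in reviews) / len(reviews)


def factor_average(reviews, indicators):
    """Average prevalence across the indicators that belong to one factor."""
    return mean(indicator_prevalence(reviews, ind) for ind in indicators)


prevalence = {ind: indicator_prevalence(coded_reviews, ind)
              for inds in factors.values() for ind in inds}
factor_summary = {name: factor_average(coded_reviews, inds)
                  for name, inds in factors.items()}
```

Storing each review as a mapping from indicator to a 0/1 code keeps the binary (present or absent) coding explicit and makes both summaries simple column-wise averages.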

Descriptive Insights

Discussion of Categorization of Methodological Literature Reviews

We draw two implications from our results on the categorization of methodological literature reviews summarized in Table 1. First, the majority of reviews (85.10%)⁹ belong in three categories: critical, narrative, and descriptive reviews. Given the nature and goals of these three review types, this shows that methodological literature reviews are fulfilling their role in helping develop a collective understanding of knowledge regarding an issue, highlighting inconsistencies, and outlining possible future research directions (Kunisch et al., 2018; Paré et al., 2015).

Second, we found that relatively few methodological literature reviews utilized data integration approaches such as meta-analytic or umbrella reviews. Each of these less popular types can be particularly useful in addressing QRPs and accordingly offer an opportunity for future methodological literature reviews. Meta-analytic reviews apply data-integration techniques to aggregate quantitative data to estimate effect sizes and understand variability about a particular methodological technique or issue across multiple studies. By quantifying the effect of different methodological choices on results, these reviews help researchers make informed decisions regarding the best approach about the methodological technique or issue within the context of their own studies. For example, choices regarding the inclusion or exclusion of control variables influence the relationship between the predictor and criterion variable and inferences drawn from these results (Bernerth & Aguinis, 2016). Without evidence about how the use of particular control variables affects relationships between constructs of interest, researchers are more likely to make questionable choices, thereby decreasing reproducibility (Aguinis et al., 2018; Banks et al., 2016). To address this issue, Bernerth et al. (2018) conducted a meta-analytic review of the relationship between commonly used control variables and three popular leadership perspectives (i.e., leader-member exchange, transformational leadership, and transactional leadership). Their meta-analytic review showed how the use of those control variables reduced degrees of freedom in statistical analyses and consequently led to inaccurate inferences. Based on these results, the authors provided recommendations on the appropriateness of using particular control variables in leadership research. Similarly, Anseel et al.’s (2010) meta-analytic literature review illustrated the effect of using different response-enhancing techniques for different types of respondents on empirical results and provided recommendations on the response enhancement techniques most suited for specific sample types.

In addition, umbrella reviews address a particular question by integrating relevant evidence from multiple reviews to address a usually narrow methodology-related research question. As such, they constitute “reviews of reviews.” An example of a methodological literature review that adopted an umbrella approach is Aguinis et al.’s (2018) review of methodological transparency in management research. By synthesizing recommendations from 96 methodological literature reviews, the authors were able to provide a “one-stop shop” on how to minimize QRPs and increase methodological transparency.

Together, these results show that although meta-analytic and umbrella approaches to methodological literature reviews are not currently widely utilized in the management and applied psychology literature, they represent a promising future research direction.

Discussion of Features of Methodological Literature Reviews Based on Content Analysis

The summary included in Table 2 reveals the “anatomy” of published methodological literature reviews, that is, the structure and internal workings of methodological literature reviews. We note that although some of the factors and indicators we identified may be known, others may be less familiar and obvious, particularly for researchers who have not previously produced or evaluated a methodological literature review (i.e., junior scholars in particular). Also, we view the use of different factors and their associated indicators across published reviews as a positive sign and indicative of equifinality (Gresov & Drazin, 1997). Stated differently, there are different ways to craft a methodological literature review.

To illustrate our point, consider the indicators used to justify the need for and criticality of the methodological literature review (i.e., latent factors 1 and 2 in Table 2). We found that some articles cited confusion about the methodological issue (about 37%), whereas others referred to misuse to justify the need for the review (about 27%). Others did so by citing widespread interest in or use of the particular methodology addressed in the review (about 36%). Still others used a combination of the seven indicators from the factors “need for review” and “criticality of issue” by, for example, mentioning that there was growing interest in the topic and also providing evidence of incorrect use or confusion. However, each of these reviews was nevertheless successful because it achieved the same positive outcome (i.e., it received a stamp of approval from journal editors and reviewers after successfully navigating the peer-review process).¹⁰

In other words, results summarized in Table 2 show that although certain factors and indicators are more commonly used across methodological literature reviews, contrary to the idea of a single set of best practices, different reviews have used different approaches to achieve the same positive publication outcome. Next, we offer a more detailed discussion of results and implications of the features we identified in the published reviews in the form of best-practice recommendations and a checklist.

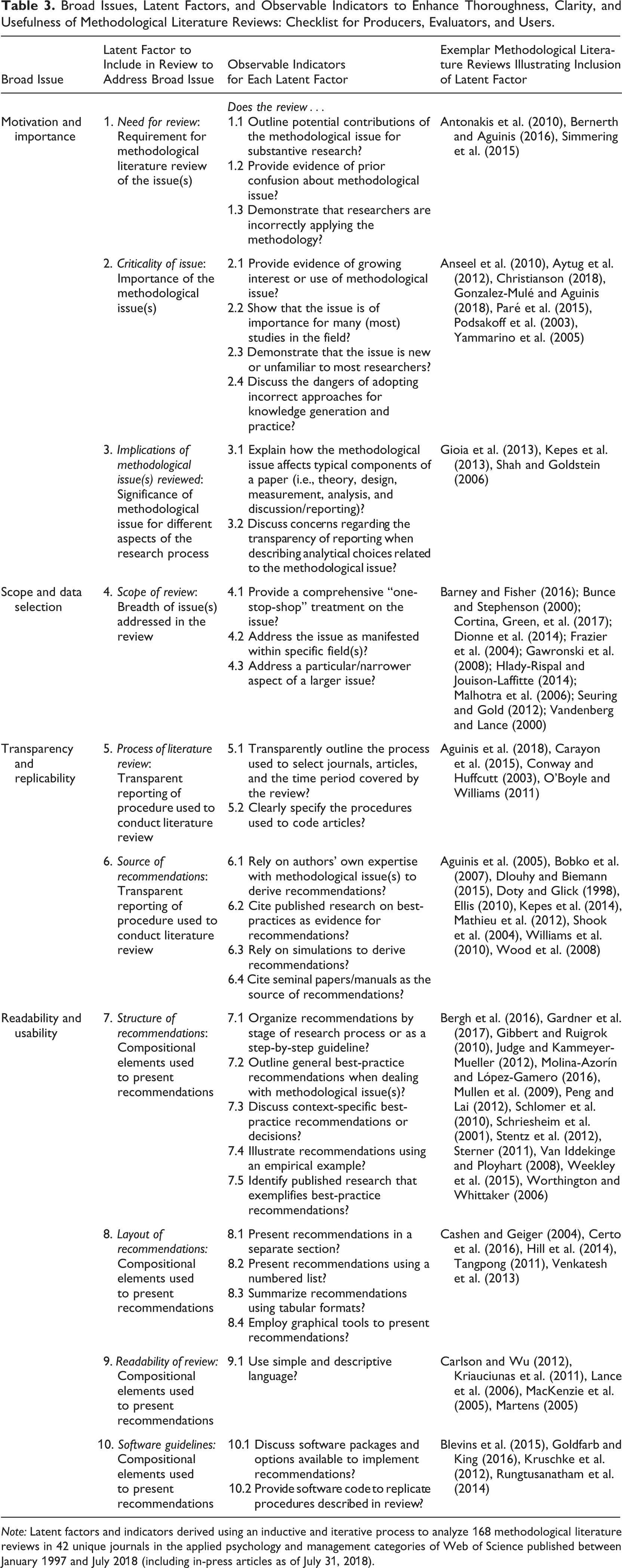

Prescriptive Insights: Best-Practice Recommendations and Checklist

A summary of our recommendations is presented in Table 3 in the form of a checklist. The checklist organizes the latent factors and indicators we identified around the following four broad issues: (1) How can the motivation for and importance of a methodological literature review be justified? (2) What strategies can be used to inform data selection decisions regarding the scope of a review? (3) How can the transparency and replicability of the process used to identify included articles and recommendations be enhanced? and (4) What features can be used to report results and improve the reliability and usability of a review’s recommendations? This checklist provides authors with declarative and procedural knowledge about what to consider, include, and disclose when producing a methodological literature review. Future producers of methodological literature reviews can proceed sequentially through these four broad issues using the associated indicators, as appropriate. Producers of future reviews can also reference the exemplars included in Table 3 for more information on how to implement the features included in our checklist. Evaluators (i.e., journal editors and reviewers) can use the declarative and procedural knowledge in the checklist to evaluate submitted manuscripts and provide developmental feedback to potential authors on what components to include to increase transparency and reproducibility, thereby reducing QRPs (Aguinis, Hill, & Bailey, 2020). Finally, users (including substantive researchers who do not self-identify as methodologists as well as doctoral student educators) can utilize the declarative and procedural knowledge in our checklist to critically learn from and instruct students about methodological literature reviews.

Table 3. Broad Issues, Latent Factors, and Observable Indicators to Enhance Thoroughness, Clarity, and Usefulness of Methodological Literature Reviews: Checklist for Producers, Evaluators, and Users.

We make an important clarification regarding the exemplars in Table 3. One of our goals is to distill the features of published reviews to make them explicit and therefore facilitate the production of reviews in the future. Accordingly, we focused on identifying what components to include in a methodological literature review to improve its thoroughness, clarity, and ultimately, usefulness. Furthermore, in keeping with our critical review approach, we did not assess the quality of the articles selected or the efficacy with which a particular component was utilized. Instead, we applied our inductively developed coding scheme to identify the presence or absence of these components. Therefore, given that reviews have used different approaches to achieve the same positive publication outcome, we used the criteria of transparency and clarity of communication and reporting regarding the use of the indicators when choosing exemplars. That is, the exemplars included in Table 3 are such because they used phrasing that makes it easier for others (e.g., other researchers, journal editors and reviewers, instructors of research methods seminars) to recognize the presence of the indicators or factors identified in our review. However, this does not mean that there is only one way of doing so. Accordingly, we include multiple exemplars for each factor to illustrate different ways to provide compelling and clear communication when explicating a particular factor.

Motivation and Importance

Our first broad issue and set of recommendations address how to establish the motivation for and importance of conducting the review. We identified three factors (Table 3, latent factors 1-3): (1) need for review, (2) criticality of issue, and (3) implications of methodological issue(s) reviewed.

We note that in general, combining multiple indicators and/or factors (e.g., need for review and criticality of issue) constitutes a more effective motivation than using fewer of them because it makes it easier for researchers to gain an understanding of current debates or practices regarding a methodological issue. Furthermore, using phrasing that makes it easier to identify the indicators or factors employed is also more effective in motivating the need for and importance of the review than using vague phrasing that is not transparent about the use of the indicators or factors.

1. Need for Review

The need for a review can be communicated by outlining potential contributions of the methodological issue for substantive research, providing evidence of prior confusion about the methodological issue, and demonstrating that researchers are incorrectly applying the methodology. An exemplar of communicating the motivation for the study is Simmering et al. (2015), which cited articles and reviews published over a number of years to provide evidence of the confusion regarding how to identify and select marker variables. An alternative approach is demonstrated in the review by Antonakis et al. (2010), who justified the need for a review on establishing evidence of causality using the indicators of incorrect application of techniques along with outlining implications for substantive research. Antonakis et al. began by stating that the methodological issue had been incorrectly addressed in the past and highlighted the implications of these incorrect applications for substantive research. They then provided further evidence of the need for the review by citing prior reviews from different fields in which researchers raised concerns about the issue.

2. Criticality of Issue

Another way to justify the motivation for and importance of the review is to provide evidence of growing interest in or use of the methodological issue, show its importance for many (or most) studies in the field, demonstrate that the issue is new or unfamiliar to most researchers, and discuss the dangers of adopting incorrect approaches for knowledge generation and practices. An exemplar of communicating the criticality of the issue is Paré et al.’s (2015) review of different types of reviews. Paré et al. explicated the motivation for their study by demonstrating growing interest in review articles, confusion regarding the types of reviews, and the challenges this posed for knowledge generation. An alternative approach is demonstrated in Aytug et al.’s (2012) review of transparency of reporting in meta-analyses. Aytug et al. identified the potential contributions of the methodological issue for substantive research by noting that “meta-analysis have moved from being somewhat controversial to generally being a preferred way of integrating research findings” (p. 103), and provided evidence of growing interest in and use of the methodological issue reviewed by noting that “Evidence of the growing dependence on meta-analysis…comes from…the increase in the number of meta-analyses published and the increase in the number of citations of meta-analyses over time” (p. 103). Yet another exemplar that used the indicator of the topic being new or unfamiliar is Christianson’s (2018) review of the use of video recordings in organizational research. She noted that although video recordings had been used in other fields, “this conversation has largely been absent from our field” (p. 262). Christianson also stated that “there are likely to be a wide range of approaches that researchers might use to collect and analyze video recordings” and that many “questions remain about how video can help illuminate theoretical questions about organizations” (p. 262).

3. Implications of Methodological Issue(s) Reviewed

The third factor useful for justifying the motivation for and importance of a methodological literature review is to explain how the issue affects typical components of a paper (i.e., theory, design, measurement, analysis, and discussion/reporting; Aguinis et al., 2018) or discuss concerns regarding the transparency of reporting when describing analytical choices related to the methodological issue. Results summarized in Table 2 showed that on average, only about half (53.13%) of the published methodological literature reviews explicated the implications of the methodological issue for aspects of a research study other than data analysis. This finding suggests that to justify its motivation and importance, future reviews should give greater consideration to how the methodological issue affects all aspects of the research process—not just data analysis. An exemplar that considers how the issue being reviewed affects all aspects of the research process is Gioia et al.’s (2013) review of rigor in qualitative studies. These authors specified how a lack of methodological rigor in conducting qualitative studies could impact theory (“the risk of ‘going native’…thus losing the higher-level perspective necessary for informed theorizing,” p. 19) while outlining best practices for research design (“pay extraordinary attention to the initial interview protocol,” p. 19), measurement (“trying to use their terms, not ours, to help us understand their lived experience,” p. 19), analysis (“If agreements about some codings are low, we revisit the data, engage in mutual discussions, and develop understandings for arriving at consensual interpretations,” p. 22), and reporting (“we go to some length to explain exactly what we did in designing and executing the study and the procedures we used to explicate our induction of categories, themes, and dimensions,” p. 23). 
Another exemplar of clearly outlining implications of the methodological issue for different aspects of the research process is Tranfield et al.’s (2003) examination of systematic literature reviews, in which the authors discussed how ontological assumptions, research designs, data extraction, and reporting are all negatively impacted when methodological best practices are not followed.

An exemplar that used the indicator of transparency of reporting when describing analytical choices related to the methodological issue is Kepes et al.’s (2013) review of meta-analytic reviews. The authors noted that “the quality of the systematic review depends upon the data,” and accordingly, “it is the responsibility of the meta-analyst…to be transparent about the process of data extraction and analysis” (p. 124). Kepes et al. then discussed concerns regarding transparency as applicable to choices researchers make regarding different components of a meta-analytic review, including the title, introduction, design, statistical analysis, and reporting of results.

Overall, using the three aforementioned factors and their associated nine observable indicators (Table 3) can help justify the motivation for and importance of the review. Authors can use these factors to justify why their manuscript is worthy of publication while also outlining the potential impact of their review. At the same time, journal editors and reviewers can assess the presence of these factors and indicators to evaluate the manuscript’s potential contribution. Journal editors and reviewers who believe that a manuscript has not been able to provide sufficient information regarding this broad issue may suggest that the authors revisit the factors and indicators listed in Table 3 to provide a stronger justification. For example, editors and reviewers can encourage authors to include more of the indicators associated with a particular factor to increase the breadth and depth of the manuscripts they review.

Scope and Data Selection

Our second broad issue and set of recommendations (Table 3, latent factor 4) address the review’s scope and data selection decisions. Although some methodological literature reviews provide a comprehensive one-stop-shop treatment, others address the issue as manifested within specific field(s) or address a particular and narrower aspect of a larger issue. We note that each choice can lead to meaningful contributions as long as the authors clearly state the boundaries regarding the scope of their review and its implications for the topic reviewed.

4. Scope of Review

This factor defines the breadth of issue(s) addressed in the review. Therefore, it influences and constrains subsequent decisions regarding the studies included in the review.

An exemplar that provides a comprehensive one-stop-shop treatment is Vandenberg and Lance’s (2000) review of measurement invariance. These authors articulated their perspective beginning in the abstract of their article and then outlined the boundaries of their review as follows: “Our review is confined to evaluation of measurement equivalence in a confirmatory-factor analytical (CFA) framework” (p. 5). Vandenberg and Lance also examined past recommendations and substantive applications of the issue, discussed differences between various proposed approaches, provided an illustration using an empirical example, and outlined a stepwise program for other researchers to follow when conducting tests of measurement invariance.

Other reviews provided a more focused examination. But offering a more circumscribed scope is not necessarily a disadvantage or flaw for methodological literature reviews. In fact, as opposed to substantive literature reviews that typically aim to summarize and integrate broad fields and rely on multiple theories (Parmigiani & King, 2019), a more focused approach may help make the methodological literature review more accessible to substantive researchers seeking guidance on a specific aspect of a broader methodological topic. An exemplar review that examined a particular or narrower aspect of a larger methodological issue is Gawronski et al.’s (2008) article about the effects of response interference when using implicit measures. The authors clearly specified their focus by stating that although there were many different mechanisms that mediated the “impact of activated associations on task performance,” their review focused only on the “particular mechanism” of “response interference” (p. 218). Another exemplar that focused on a particular or narrower aspect is Cortina, Green, et al.’s (2017) review of degrees of freedom in structural equation modeling (SEM), in which the authors explicitly noted that they focused on degrees of freedom to demonstrate challenges regarding the transparency and reproducibility of research using SEM.

Another approach to outlining the scope of the review is to specify the particular fields in which the methodological issue is examined. Some (e.g., Barney & Fisher, 2016; Bunce & Stephenson, 2000) focused on relatively broad fields (e.g., organizational research, stress), whereas others (e.g., Dionne et al., 2014; Hlady-Rispal & Jouison-Laffitte, 2014; Malhotra et al., 2006) focused on more specific subfields (e.g., leadership, specific domains within entrepreneurship).

An exemplar of examining an issue within a specific field is Frazier et al.’s (2004) review on approaches to testing moderator and mediator effects. The authors communicated the scope of their review in the title (“Testing Moderator and Mediator Effects in Counseling Psychology Research”), in explaining the need for the review (i.e., “confusion over the meaning of, and differences between, these terms is evident in counseling psychology research,” p. 115), and in their selection of articles to review (i.e., “we review research testing moderation and mediation that was published in the

Overall, clearly communicating the scope can help authors address concerns about the comprehensiveness of their review and highlight the specific aspects of the methodological issue that are relevant to their effort while also clarifying the boundaries of the issues examined in their review. A clear description of a review’s scope is also useful for journal editors and reviewers to understand the breadth of the issues addressed and helps answer questions regarding possible shortcomings or omissions in the scope of the review.

Transparency and Replicability

Our third broad issue addresses matters pertaining to the procedures used to conduct the review. In other words, it is targeted at enhancing the transparency of the process used to conduct the literature review and consequently, the trustworthiness of a review’s recommendations (Aguinis et al., 2018; Aguinis & Solarino, 2019). Based on our content analysis, we identified two latent factors (Table 3, latent factors 5 and 6, respectively) to address this issue: (5) process of literature review, and (6) source of recommendations.

Our results summarized in Table 2 regarding the anatomy of published methodological literature reviews show that some authors described conducting a formal literature review, clearly reported the method used to select journals and article inclusion and exclusion criteria, and offered a thorough explanation of the coding process. In contrast, others provided minimal reporting on how they reviewed papers by, for example, noting they reviewed a certain time period in certain journals but falling short of providing information on inclusion criteria and coding procedures.

5. Process of Literature Review

Echoing concerns regarding rigor in literature reviews, Table 2 shows that 25% of the articles did not conduct a formal literature review. To examine this result more closely, we split our sample into two equivalent time periods by year of publication (i.e., 1997-2007 and 2008-2018). We then analyzed the presence of this indicator in each subsample and found that the percentage of articles that conducted a formal literature review declined during the time period covered in our review (i.e., 82.35% for 1997-2007 vs. 71.97% for 2008-2018). These results show that a lack of systematic approaches and verifiable evidence-based guidance is not just a challenge for literature reviews in general (Callahan, 2014; Denyer & Tranfield, 2009) but also extends specifically to methodological literature reviews. Clearly, the greater the transparency in communicating how the review was conducted, the more replicable the review and the better it is able to answer concerns about potential selection biases (Adams et al., 2017; Briner et al., 2009; Jones & Gatrell, 2014). As Rousseau et al. (2008) noted, “Literature reviews are often position papers, cherry-picking studies to advocate a point of view” (p. 476). Therefore, a detailed specification of the process used to conduct the literature review that includes, for example, the time period covered, the sources (e.g., books, journal articles, edited volumes) examined, databases and keywords used in the search, inclusion and exclusion criteria, and information regarding interrater agreement (as applicable) can help alleviate such concerns.
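The split-sample comparison described above amounts to a grouped percentage. A minimal Python sketch follows, assuming each coded review is stored as a (publication year, formal-review flag) pair. The records, the function names, and the resulting percentages are hypothetical and do not reproduce the data or results reported in Table 2.

```python
from collections import defaultdict

# Hypothetical coded records: (publication_year, conducted_formal_review).
# Illustrative values only; these are NOT the data analyzed in the article.
CODED_REVIEWS = [
    (1999, True), (2003, True), (2006, False), (2007, True),
    (2009, True), (2012, False), (2015, True), (2018, False),
]


def period_of(year):
    """Assign a publication year to one of the two periods named in the text."""
    if 1997 <= year <= 2007:
        return "1997-2007"
    if 2008 <= year <= 2018:
        return "2008-2018"
    raise ValueError(f"year {year} is outside the 1997-2018 window")


def percent_formal_by_period(records):
    """Return the percentage of reviews per period that used a formal review."""
    tallies = defaultdict(lambda: [0, 0])  # period -> [formal count, total count]
    for year, formal in records:
        tally = tallies[period_of(year)]
        tally[0] += int(formal)
        tally[1] += 1
    return {period: round(100 * formal / total, 2)
            for period, (formal, total) in tallies.items()}


if __name__ == "__main__":
    print(percent_formal_by_period(CODED_REVIEWS))
```

Reporting the counts behind each percentage alongside the percentages themselves would let readers verify the comparison.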

Our content analysis also identified the need to be explicit about the type of review because this choice guides decisions about how the literature review is conducted (as summarized in Table 1 describing seven different types of reviews). For example, qualitative systematic reviews use a structured process by including more information on what articles were selected and how data were analyzed to arrive at the synthesis. In contrast, narrative reviews usually do not provide any explanations of how the review process was conducted and are more likely to include only literature and evidence that are readily available to the authors. Because narrative reviews typically do not provide details about how the review was conducted, they are less reproducible.

An exemplar that transparently outlined the process used to select journals, articles, and the time period covered by the review and clearly specified the procedures used to code articles is Carayon et al.’s (2015) article on mixed-methods research. The authors clearly specified how they defined the methodological issue (i.e., “apply the four quality criteria for mixed methods research defined by Creswell and Plano Clark [2011],” p. 293), listed the inclusion criteria (i.e., “study was included if it met all four inclusion criteria,” p. 293), and provided a detailed narrative and graphical explanation of the process used to search the literature, include or exclude studies, data extraction, coding, and interrater agreement (pp. 293-294). Other exemplar articles include Conway and Huffcutt’s (2003) review of exploratory factor analysis, and O’Boyle and Williams’s (2011) review of model fit indices in SEM.
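Two of the reporting elements mentioned above, an all-criteria inclusion rule and interrater agreement, can be sketched in a few lines of Python. This is an illustration under stated assumptions: the source does not say which agreement index the exemplar reviews used, so Cohen's kappa is an assumed choice, and the function names and the include/exclude codes are hypothetical.

```python
from collections import Counter


def meets_all_criteria(criteria_met):
    """Include a study only if every inclusion criterion is satisfied."""
    return all(criteria_met)


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who coded the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions per category.
    categories = set(freq_a) | set(freq_b)
    expected = sum(freq_a[c] * freq_b[c] for c in categories) / n ** 2
    if expected == 1.0:
        return 1.0  # both raters used one identical category for every item
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Hypothetical include (1) / exclude (0) decisions by two coders on 10 articles.
    coder_1 = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
    coder_2 = [1, 1, 1, 1, 0, 0, 0, 0, 0, 1]
    print(round(cohens_kappa(coder_1, coder_2), 2))
    print(meets_all_criteria([True, True, False, True]))
```

Kappa corrects raw percentage agreement for the agreement expected by chance, which is why it is often reported in addition to, or instead of, simple agreement rates.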

6. Source of Recommendations

Another important consideration is to transparently report the process used to produce the recommendations put forth in the review. Doing so is particularly important for methodological literature reviews because it reassures evaluators and users that the authors did not engage in QRPs such as cherry-picking best-practice recommendations that aligned with their preferred viewpoint. Although some reviews rely on the authors’ own expertise to derive recommendations, others cite published research on best practices as evidence. Still others rely on simulations or cite seminal published sources as the rationale for their recommendations.

An exemplar that transparently explicated and reported the source of the recommendations is Wood et al.’s (2008) review of studies that used mediation analysis. The authors provided a narrative (“procedures recommended by statisticians,” p. 270) and detailed tabular (pp. 272-277) explanation of the source of the knowledge used to critique current practices and on which they based their recommendations. As another example, Williams et al. (2010) noted that their recommendations were built on the foundation of Lindell and Whitney’s (2001) marker technique for controlling method variance.

Overall, using the two factors “process of literature review” (factor 5) and “source of recommendations” (factor 6) and their associated observable indicators can help alleviate concerns regarding the trustworthiness and credibility of the review as well as address concerns about the replicability of reviews and recommendations included therein (Adams et al., 2017; Aguinis et al., 2018; Jones & Gatrell, 2014; Kunisch et al., 2018). These two factors are also useful for journal editors and reviewers by helping identify potential biases that may affect recommendations. Editors and reviewers can also use this information to provide constructive feedback to authors to ameliorate potential QRPs. Finally, substantive researchers, including instructors of research methods, can utilize these factors and observable indicators when evaluating which methodological literature reviews to rely on because the use of these factors and indicators suggests that producers of the review likely did not engage in QRPs.

Readability and Usability

Methodological literature reviews synthesize voluminous and sometimes complex and technical material and are usually targeted at audiences who may not be experts on the particular issue. Therefore, our fourth broad issue focuses on features that make it easier for substantive researchers to access the declarative and procedural knowledge included in the review, improve the usability of the review’s recommendations, and identify QRPs to avoid when addressing a particular methodological issue. Our content analysis uncovered the following four latent factors (Table 3, factors 7-10, respectively): (7) structure of recommendations, (8) layout of recommendations, (9) readability of review, and (10) software guidelines.

7. Structure of Recommendations

Some reviews organize recommendations by stage of research process or as a step-by-step guideline. Others outline general best-practice recommendations when dealing with a methodological issue or discuss context-specific best-practice recommendations or decisions. Still others offer illustrations based on an empirical example or identify published research that exemplifies best-practice recommendations. Using the indicators associated with this factor is particularly important because—unlike substantive literature reviews (Parmigiani & King, 2019; Short, 2009)—a critical role of methodological literature reviews is to ameliorate QRPs and improve current practices regarding a specific methodological issue.

Presenting recommendations in a systematic manner that mirrors the sequential stages of a typical research study enhances the usability of the recommendations by allowing researchers to understand the methodological issue in the context of their own research. Also, presenting recommendations as a sequential series of decisions or actions allows researchers to consider them one at a time, thereby decreasing the complexity surrounding the recommendations and facilitating their use.

An exemplar that illustrated the indicator of providing recommendations based on stage of research project is Peng and Lai’s (2012) review of the use of partial least squares (PLS). The authors provided a comprehensive guide on how to use PLS with subsections related to the research objectives and types of questions PLS can answer, issues related to sample size and model complexity, data requirements, analytical considerations, and interpreting and reporting results. The authors also provided context-specific best-practice recommendations regarding decisions involved in each step. Another exemplar is Gardner et al.’s (2017) review of methodological issues in testing interactive and quadratic relationships in which the authors presented their recommendations based on different phases such as when hypothesizing interactions, pretesting, data analysis, and examining results and transparently hypothesizing after results are known (THARKing).

An exemplar that used a step-by-step approach to present recommendations is Schlomer et al.’s (2010) review of missing data approaches. In addition to outlining recommendations in the body of the article, the authors also provided an appendix that included an overview of the steps, and recommendations based on the results of each step. Weekley et al.’s (2015) review of low-fidelity simulations in situational judgment tests and Worthington and Whittaker’s (2006) review of scale development research are additional exemplars of the use of the step-by-step approach to present recommendations.

Two other indicators related to the structure of recommendations include outlining general recommendations when addressing the methodological issue and discussing context-specific decisions or recommendations. Providing general or nonspecific recommendations is useful because it allows researchers to recognize key considerations and understand how they should address them. An exemplar is Mullen et al.’s (2009) review of research methods in small business and entrepreneurship in which the authors provided general recommendations for entrepreneurship research related to sampling issues, construct validity, and internal and external validity.

In contrast, discussing context-specific decisions or recommendations helps researchers understand possible trade-offs, allowing them to make appropriate decisions based on their study’s goals. An exemplar is Van Iddekinge and Ployhart’s (2008) review of criterion-related validation in which the authors provided context-specific recommendations such as comparing procedures for single versus multiple raters, using broad versus narrow criteria, and analyzing maximum versus typical performance. Another exemplar is Judge and Kammeyer-Mueller’s (2012) review of general versus specific measures, in which the authors provided four general questions authors must ask themselves to determine whether general or specific measures should be used and then offered recommendations based on the answers to those questions.

The final two indicators related to the structure of recommendations involve illustrating recommendations using an empirical example and identifying published research that exemplifies the recommendations. An exemplar that used the indicator of an illustrative empirical example is Schriesheim et al.’s (2001) review of levels-of-analysis research in leadership. The authors provided a detailed illustration using leader-member exchange (LMX) theory to demonstrate the importance of aligning levels of theory with levels of analysis and to “illustrate how others who are not interested in the LMX approach may still test their theories, models, and/or hypotheses for effects at different levels of analysis” (p. 527). Another exemplar is Bergh et al.’s (2016) review of meta-analytic structural equation modeling (MASEM), in which the authors illustrated their recommendations by using MASEM to examine the link between strategic leadership and firm performance.

Results of our content analysis summarized in Table 2 showed that only about 32% of reviews identified published research that exemplified best-practice recommendations to provide evidence that the recommendations are realistic and not just wishful thinking. An exemplar of best practices is Molina-Azorín and López-Gamero’s (2016) review of mixed methods in environmental management research, in which the authors included a table listing published research that exemplified best-practice recommendations. A second exemplar is Gibbert and Ruigrok’s (2010) review of rigor in case studies, in which the authors extracted best practices related to ensuring rigor from exemplar articles and provided direct quotes from the articles to illustrate their recommendations.

Finally, Table 2 shows an alternative approach to structuring recommendations: explicitly identifying and critiquing prior research that did not adhere to the review’s recommendations (approximately 5% of published reviews used this approach). Although we do not recommend this approach because we believe it is more productive to highlight good rather than bad practices, implementing this practice is a judgment call that authors of future methodological literature reviews must make for themselves.

8. Layout of Recommendations

Our content analysis showed that reviews use a variety of approaches to present their recommendations, including presenting recommendations in a separate section and using numbered lists, tables, and graphical tools (i.e., diagrams, models, or figures). We found that 45.24% of the reviews presented their recommendations in a separate section and 32.74% used tables to present their recommendations (see Table 2).

Exemplars of reviews that used indicators related to the layout of recommendations include Hill et al.’s (2014) review of unobtrusive measurement, which presented recommendations in a separate section; Tangpong’s (2011) review of content analysis research, which used a numbered list to present recommendations; Cashen and Geiger’s (2004) review of statistical power, which offered recommendations using a tabular format; and Venkatesh et al.’s (2013) review of mixed-methods research, which used figures to illustrate the recommendations. Taken together, these approaches to the review’s layout of recommendations are likely to increase the ease with which substantive researchers, including doctoral students, can access the declarative and procedural knowledge included in the review (Short, 2009).

9. Readability of Review

Readability is obviously important in all reviews but particularly so in methodological literature reviews given their often technical nature. Exemplars of articles that include the indicator of simple and descriptive language include Carlson and Wu’s (2012) review of control variable usage, Kriauciunas et al.’s (2011) review of using surveys in nontraditional contexts, Martens’s (2005) review of SEM, and MacKenzie et al.’s (2005) review of measurement model misspecification.

10. Software Guidelines

Our content analysis uncovered a final factor regarding the usability of a review’s recommendations, one that is unique to methodological reviews and not applicable to other types of reviews: discussing software packages and options available to implement recommendations or providing software to replicate procedures described in the review. However, only about 20% of the methodological literature reviews discussed software packages, and only about 5% included software to replicate the procedures described (see Table 2). An exemplar that discussed software packages and options is Waller and Kaplan’s (2018) review of video-based approaches, which included a description of technological alternatives for coding video-based data. Exemplars of articles that provided software to replicate the review’s procedures or illustrations include Bonett and Wright’s (2014) review of Cronbach’s alpha, which provided code to calculate recommended confidence intervals for Cronbach’s alpha using R, and Rungtusanatham et al.’s (2014) review of mediation, which included syntax to conduct both bootstrap and Bayesian tests of mediation using Mplus.
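To illustrate what a short software supplement of this kind might look like, the following Python sketch computes Cronbach’s alpha and a confidence interval for it. It is not the R code provided by Bonett and Wright (2014) and does not implement their recommended interval; a generic percentile bootstrap is swapped in as an assumption, and the item scores, function names, and default settings are hypothetical.

```python
import random
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two respondents")
    item_variances = [variance([row[j] for row in rows]) for j in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


def bootstrap_alpha_ci(rows, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap interval for alpha, resampling respondents."""
    rng = random.Random(seed)  # fixed seed so the interval is reproducible
    estimates = []
    for _ in range(n_boot):
        resample = [rng.choice(rows) for _ in rows]
        try:
            estimates.append(cronbach_alpha(resample))
        except ZeroDivisionError:
            continue  # skip degenerate resamples with zero total-score variance
    if not estimates:
        raise ValueError("no usable bootstrap resamples")
    estimates.sort()
    tail = (1 - level) / 2
    lower = estimates[int(tail * len(estimates))]
    upper = estimates[min(len(estimates) - 1, int((1 - tail) * len(estimates)))]
    return lower, upper


if __name__ == "__main__":
    # Hypothetical responses: 6 respondents by 3 items on a 1-5 scale.
    scores = [[4, 5, 4], [3, 3, 2], [5, 5, 5], [2, 3, 2], [4, 4, 5], [1, 2, 1]]
    print(cronbach_alpha(scores))
    print(bootstrap_alpha_ci(scores))
```

Fixing the random seed and stating the number of resamples are small choices that make such a supplement replicable by readers.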

Opportunities for Future Methodological Reviews

Our categorization of reviews points to opportunities and promising directions for future methodological literature reviews, especially in addressing the critical challenge posed by QRPs. As our results showed, most methodological literature reviews are aimed at deepening the field’s understanding about a technique or issue and outlining possible future research directions. Although these contributions are useful and indeed necessary, two underutilized types of methodological literature reviews, namely, meta-analytic and umbrella reviews, hold great promise in helping alleviate QRPs. Meta-analytic methodological literature reviews bring clarity to the literature by analyzing and distilling knowledge from individual studies to understand how results obtained using a particular methodological issue or technique may vary. Moreover, by aggregating data to derive standardized effect sizes, meta-analytic reviews can provide evidence on how different methodological practices (e.g., including or excluding control variables, sample selection, HARKing) may influence results and inferences. Therefore, they help substantive researchers choose an approach that is best aligned with their research goals, and they help reviewers and editors evaluate the appropriateness of a researcher’s decisions and judgment calls when utilizing a particular methodological technique. In addition, umbrella reviews bring clarity by highlighting similarities and resolving potential contradictions across multiple reviews of the same methodological issue or technique. Such knowledge is especially relevant given the rapid pace of methodological advancements (Cortina, Aguinis, & DeShon, 2017), which can turn once commonly accepted practices, such as summarily excluding outliers or managing control variables (Aguinis, Hill, & Bailey, 2020; Bernerth & Aguinis, 2016), into potential QRPs.
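The idea of aggregating effect sizes to compare methodological practices can be sketched as follows. This is a minimal Python illustration that assumes a fixed-effect, inverse-variance weighted mean; the article does not prescribe a pooling model, and other weighting schemes (e.g., sample-size weights) are common. The effect sizes, sampling variances, and subgroup labels are hypothetical.

```python
def pooled_effect(effects, variances):
    """Fixed-effect pooled estimate: inverse-variance weighted mean of effects."""
    if len(effects) != len(variances) or not effects:
        raise ValueError("effects and variances must be non-empty and equal length")
    if any(v <= 0 for v in variances):
        raise ValueError("sampling variances must be positive")
    weights = [1.0 / v for v in variances]
    return sum(w * e for w, e in zip(weights, effects)) / sum(weights)


# Hypothetical primary studies grouped by a methodological practice.
# Each entry is (effect size, sampling variance); values are illustrative only.
STUDIES_BY_PRACTICE = {
    "with control variables": [(0.2, 0.01), (0.4, 0.01)],
    "without control variables": [(0.5, 0.02), (0.3, 0.01)],
}

if __name__ == "__main__":
    for practice, studies in STUDIES_BY_PRACTICE.items():
        effects = [effect for effect, _ in studies]
        variances = [var for _, var in studies]
        print(practice, round(pooled_effect(effects, variances), 3))
```

Comparing the pooled estimates across subgroups is one simple way to show whether a methodological choice is associated with systematically different results.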

As an example, consider Vandenberg and Lance’s (2000) review of measurement invariance. This critical and descriptive methodological literature review is the most cited article in our sample, with about 3,400 WoS citations (as of June 2020), and is exemplary in its use of many of the latent factors and indicators identified in our review. As a follow-up to Vandenberg and Lance, a meta-analytic review could examine variability in measurement invariance practices across multiple studies. For example, a meta-analytic review that examines variability in measurement invariance practices in the use of particular measures of LMX or different dimensions of justice (i.e., distributive, procedural, interpersonal, and informational) could help minimize QRPs by informing researchers about the effects of using those measures on parameter estimates. As an additional opportunity, Vandenberg and Lance’s article was published over 20 years ago and before recent methodological advancements, such as the use of Bayesian methods to assess measurement invariance (Kim et al., 2017), or more recent reviews of measurement invariance (e.g., Putnick & Bornstein, 2016; Schmitt & Kuljanin, 2008). Therefore, a follow-up umbrella review could help researchers avoid QRPs by integrating relevant evidence across multiple reviews and providing state-of-the-science recommendations. We hope that our article will spur more researchers to adopt meta-analytic and umbrella review approaches, thereby helping methodological literature reviews better address the challenges posed by QRPs.

Conclusions

Methodological literature reviews summarize complex and technical information usually based on large bodies of existing work. They also provide recommendations that help substantive researchers stay abreast of rapid developments in methodology, help instructors of methods educate doctoral students, and help the entire field identify and minimize QRPs and the exploitation of methodological gray areas. Our content analysis uncovered implicit features of published methodological literature reviews and made them explicit (i.e., the “anatomy” of methodological literature reviews). Based on these features, we created a checklist of actionable recommendations on what components to include in a methodological literature review to improve thoroughness, clarity, and ultimately, usefulness. Furthermore, we identified features (e.g., source of recommendations, software guidelines) that are specific and unique to methodological literature reviews and not necessarily relevant for other types of literature reviews. Our article offers recommendations that address the needs of three methodological literature review stakeholder groups: producers (i.e., potential authors), evaluators (i.e., journal editors and reviewers), and users (i.e., substantive researchers interested in learning about a particular methodological issue and individuals tasked with training the next generation of scholars). Future producers will benefit from declarative knowledge on different types of methodological literature reviews and the goals addressed by each as well as procedural knowledge on how to utilize our checklist to inform the judgment calls and decisions made during the manuscript preparation process. Evaluators can use the declarative and procedural knowledge in our checklist to evaluate methodological literature review submissions and to provide feedback to authors on what components to include to increase transparency and reproducibility, thereby reducing QRPs.
Users can utilize the declarative and procedural knowledge in our checklist to critically learn from—and also potentially produce—methodological literature reviews. As methods evolve, we encourage future research to examine whether our results are generalizable to fields beyond management and applied psychology and to revise and update our checklist to reflect the state of the art in terms of best practices for methodological literature reviews.

Supplemental Material

Supplemental Material, orm-19-0013.r4_online_supplement_June_19_2020 for Best-Practice Recommendations for Producers, Evaluators, and Users of Methodological Literature Reviews by Herman Aguinis, Ravi S. Ramani and Nawaf Alabduljader in Organizational Research Methods

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
