Abstract

Developing trustworthy cyber-physical systems (CPS) demands cross-boundary collaboration among stakeholders with diverse disciplinary backgrounds, often creating challenges in crossing knowledge boundaries. Boundary objects, i.e., artifacts that enable shared understanding across domains, can help mitigate these challenges. This study examines the trustworthiness framework (T-Framework), as a boundary object, to support cross-boundary collaboration in trustworthy CPS development. Specifically, it addresses two research questions: (1) To what extent does the T-Framework, as a boundary object, support the crossing of knowledge boundaries in the development of trustworthy CPS? and (2) How can the T-Framework and its associated method be extended to address any identified gaps? Drawing on theories of knowledge boundaries and boundary objects, the study empirically evaluates the T-Framework through focus groups with CPS practitioners from academia and industry. Insights from these focus group sessions inform refinement of the T-Framework and its method to address identified gaps in its capability to support cross-boundary collaboration. In addition, the findings highlight the importance of incorporating structured mechanisms into both the design and utilization of architectural frameworks to promote shared understanding, address conceptual misalignment, and balance stakeholder influence during cross-boundary collaboration in the development of trustworthy CPS.

Keywords

Introduction

The integration of artificial intelligence (AI) into cyber-physical systems (CPS) has catalyzed significant advancements, enabling CPS to address a broad spectrum of societal challenges in domains such as transportation, healthcare, and manufacturing (Cao et al., 2021). By leveraging AI, CPS can operate more autonomously, intelligently, and efficiently by facilitating improved decision-making and optimized resource allocation. These capabilities have given rise to AI-enhanced CPS, often referred to as complex CPS, which are increasingly embedded in everyday infrastructure (Lee, 2015). Such systems not only elevate service quality but also contribute to tackling global issues like urban congestion, healthcare accessibility, sustainable production, and energy efficiency.

However, the development and deployment of these complex CPS raise substantial concerns regarding their trustworthiness. Trustworthiness in CPS spans traditional dependability attributes (e.g., safety, reliability, cybersecurity) and broader ethical considerations (e.g., fairness, transparency, autonomy, and privacy). While governance initiatives such as the National Institute of Standards and Technology (NIST) AI Risk Management Framework (Tabassi, 2023) and the EU AI Act (High-Level Expert Group on AI of European Commission, 2019) provide essential regulatory guidance, operationalizing these principles within technical CPS development processes remains difficult because there is no architectural framework and method that jointly address both the ethical aspects of AI and the classical dependability aspects of CPS (Schiff et al., 2021).

Moreover, another key challenge in the development of trustworthy CPS is fostering effective cross-boundary collaboration. This remains particularly difficult because crossing knowledge boundaries involves navigating differences in language, meanings, and interests among diverse stakeholders (Carlile, 2002). To address this challenge, stakeholders can draw on the concept of boundary objects, which can be defined as artifacts that are robust enough to maintain a common identity across different social or disciplinary worlds, yet flexible enough to be interpreted locally by each group (Star & Griesemer, 1989). Boundary objects are particularly valuable in complex engineering environments where shared understanding must be negotiated across technical, organizational, and regulatory perspectives.

In this context, the trustworthiness framework (T-Framework) is proposed to facilitate alignment among diverse stakeholders involved in the development of trustworthy CPS. Building on our previous work (Ramli & Törngren, 2022), our goal is to develop a novel architectural framework and method for realizing trustworthy CPS that adhere to the principles of ISO/IEC 42010, which emphasizes the use of architectural descriptions to manage system complexity by addressing multiple stakeholder concerns through defined viewpoints (Martin, 2021). In other words, the T-Framework is intended to function as a boundary object that supports cross-boundary collaboration, enabling stakeholders to cross knowledge boundaries throughout the development of trustworthy CPS.

This paper extends our previous work by empirically evaluating the T-Framework through focus groups composed of CPS practitioners. Drawing on theories of boundary objects (Star & Griesemer, 1989) and knowledge boundaries (Carlile, 2002), we analyze how the proposed framework and method support communication and coordination across diverse domains. Based on the focus group findings, we refine the T-Framework method to enhance its practical utility and alignment with stakeholder needs. Hence, this study focuses on the following two research questions (RQ):

RQ1: To what extent does the T-Framework, as a boundary object, support the crossing of knowledge boundaries in the development of trustworthy CPS?

RQ2: How can the T-Framework and its associated method be extended to address any identified gaps?

The remainder of this paper is structured as follows: Section “Theoretical Background” discusses the theoretical foundation. Section “Research Method” presents the research methodology. Section “Results” explains the findings. Section “Discussion” elaborates on the discussion of the findings. Finally, Section “Conclusions and Future Work” presents conclusions and future work.

Theoretical Background

This section first presents the current landscape of and challenges related to knowledge boundaries in realizing trustworthy CPS. Secondly, the T-Framework, developed to support cross-boundary collaboration to develop trustworthy CPS, is introduced. Finally, this section briefly positions the T-Framework as a boundary object.

Knowledge Boundaries in Realizing Trustworthy CPS

CPS, combining physical and cyber (computing and communication) technologies, can today be found in almost every aspect of daily life, such as transportation, healthcare, energy supply, and manufacturing. CPS offer new capabilities thanks to the advancement of technology, especially within telecommunications and AI (Singh et al., 2024). At a conceptual level, the trends are towards CPS becoming increasingly connected, collaborating and automated. Due to their new capabilities, these CPS are also expected to interact more with other entities such as other systems, environments, and people (Törngren, 2021).

More advanced capabilities and applications reflect the deployment and operation of CPS in more open and less structured environments. An implication of this development is that the CPS will face increasing requirements regarding a number of trustworthiness attributes: CPS will not only need to be safe, secure and reliable, they also need to respect privacy, confidentiality, fairness and transparency (Li et al., 2023). Trustworthiness thus needs to be addressed from both technical and social perspectives. The multitude of trustworthiness attributes that need consideration, and the fact that these are not independent, pose challenges that need to be explicitly supported to enable the realization of trustworthy CPS. Therefore, there is a need for guidelines to help stakeholders in addressing these trustworthiness attributes.

Specifically, in part due to the increasing requirements regarding trustworthiness, the stakeholders realizing trustworthy CPS need to collaborate across boundaries from the development and deployment phases through to system retirement (Weyns et al., 2022). However, a significant “principles-to-practices” gap persists in achieving trustworthy AI-based systems (Shneiderman, 2020). This gap is driven by factors such as the “many-hands” problem, challenges in knowledge governance, the complexity introduced by AI, and disciplinary divides, all of which hinder effective collaboration among stakeholders. These challenges are closely linked to knowledge boundaries, as described by Carlile (2002), who categorizes knowledge boundaries into three levels: syntactic, semantic, and pragmatic.

At the syntactic level, knowledge boundaries arise when stakeholders use different technical languages or models. For instance, from a technical perspective the use of distinct programming languages creates barriers, while socially differing technical terminologies can lead to confusion. Overcoming syntactic boundaries requires the development of a common language or shared lexicon.

At the semantic level, knowledge boundaries occur due to misinterpretation between the knowledge provider and the recipient, often related to how knowledge is translated across disciplines. For example, a cybersecurity engineer may misunderstand the concept of risk as used by a safety engineer because the term carries different connotations in each field. Addressing semantic boundaries involves creating shared meanings and interpretations among stakeholders. Additionally, making implicit knowledge explicit is key to overcoming semantic barriers.

Pragmatic knowledge boundaries arise from conflicting interests and power imbalances between stakeholders. In engineering contexts, these boundaries relate to the challenge of translating shared meaning into actual products or systems. Resolving pragmatic boundaries requires both practical and political efforts.

Since the development and operation of trustworthy CPS involve multiple stakeholders, navigating these knowledge boundaries is a critical part of the process. In addition, knowledge boundaries can vary in complexity, becoming “thick” or “thin” depending on differences in language, interpretation, and interests (Edmondson & Harvey, 2018).

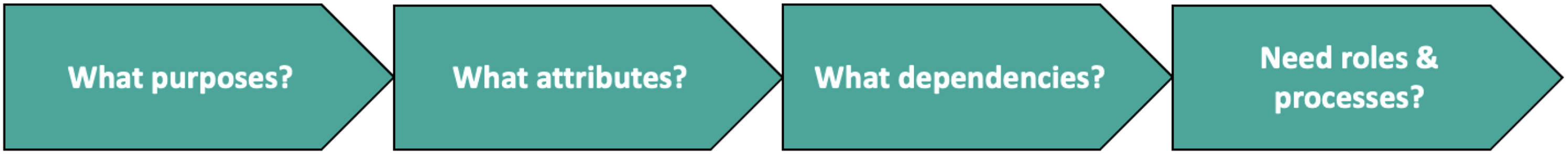

T-Framework: Framework and Method for Trustworthy CPS

The T-Framework is an architectural framework designed to support the development of trustworthy CPS by integrating both ethical aspects of AI and classical dependability aspects of CPS. As an architectural framework, it provides a conceptual structure to represent, analyze, and reason about trustworthiness attributes and their relationships within CPS. Furthermore, to guide stakeholders in the practical application of the T-Framework during system development, deployment, and governance, a workflow has been developed, which is referred to as the T-Framework method. The T-Framework is structured around the following guiding questions:

(1) What attributes of trustworthiness are relevant for this CPS, and how do these attributes relate to trustworthiness?

(2) What are the relationships, dependencies, and trade-offs (i) between trustworthiness attributes and their goals? (ii) How do the attributes of a system relate to its different aspects, such as data, functions, and computations? (iii) What dependencies exist between attributes due to their relationships with aspects?

(3) What roles and processes are needed to ensure the trustworthiness of a CPS during its life-cycle?

These questions enable stakeholders to engage in systematic reflection about the socio-technical aspects of CPS design and operation. The first question helps to identify high-level trustworthiness requirements tailored to the specific CPS context, including attributes such as safety, security, privacy, and fairness (Floridi, 2018; Schiff et al., 2021). The second question highlights the traceability and interdependency of attributes across system aspects, allowing stakeholders to analyze trade-offs and anticipate design conflicts (Dignum, 2019). The third question addresses organizational roles and governance mechanisms (e.g., safety managers, ethics officers, lifecycle processes), ensuring that responsibilities for trustworthiness are embedded within CPS workflows (Freund, 2005).
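As a minimal sketch of the reasoning behind the second guiding question, part (iii), the snippet below derives candidate dependencies between attributes from the aspects they share. The attribute names and the aspects (data, functions, computations) come from the text above, but the mapping between them, the function name `shared_aspect_dependencies`, and the rule "two attributes may depend on each other if they touch the same aspect" are illustrative assumptions and not part of the T-Framework itself.

```python
from itertools import combinations

# Hypothetical mapping from trustworthiness attributes to the system aspects
# they relate to. The assignments are invented for illustration only.
ATTRIBUTE_ASPECTS = {
    "safety": {"functions", "computations"},
    "security": {"data", "computations"},
    "privacy": {"data"},
    "fairness": {"data", "functions"},
}


def shared_aspect_dependencies(attribute_aspects):
    """Return attribute pairs that share at least one aspect.

    Assumption: a shared aspect marks a *candidate* dependency that
    stakeholders should discuss, not a confirmed trade-off.
    """
    dependencies = {}
    for first, second in combinations(sorted(attribute_aspects), 2):
        shared = attribute_aspects[first] & attribute_aspects[second]
        if shared:
            dependencies[(first, second)] = shared
    return dependencies


deps = shared_aspect_dependencies(ATTRIBUTE_ASPECTS)
for (first, second), aspects in deps.items():
    print(f"{first} <-> {second}: shared aspects {sorted(aspects)}")
```

In this toy mapping, privacy and safety share no aspect and therefore produce no candidate dependency, while safety and security meet at the computations aspect. A real application would replace the mapping with the outcome of the stakeholders' own analysis.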

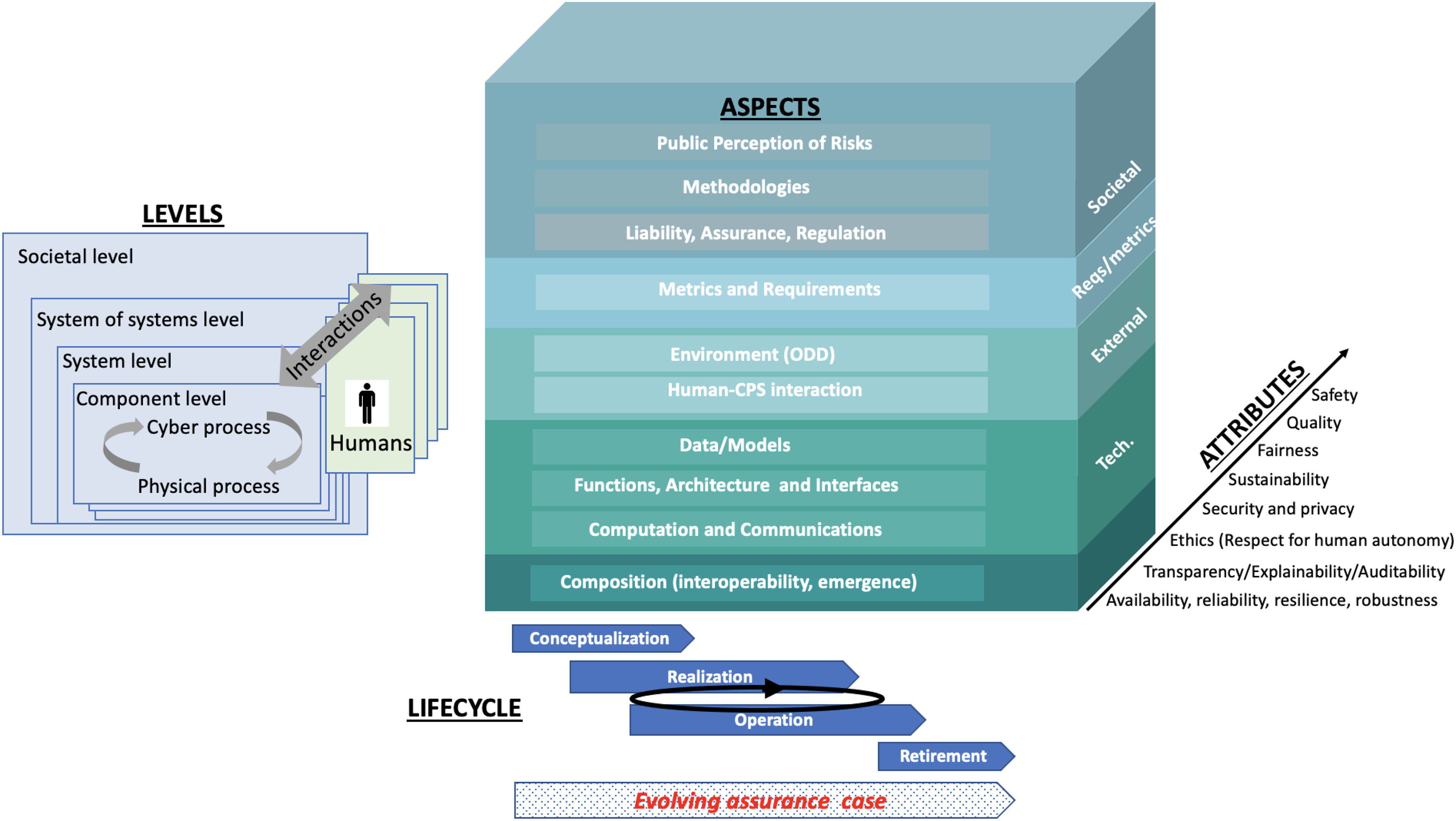

As depicted in Figure 1, the visualization of the T-Framework draws inspiration from established architectural frameworks for CPS development, including the NIST CPS Framework (Griffor et al., 2017), the Reference Architectural Model Industrie 4.0 (RAMI 4.0) (Hankel & Rexroth, 2015), and the Reference Architecture Model for Edge Computing (RAMEC) (Willner & Gowtham, 2020). The viewpoint specification is grounded in the ISO/IEC 42010 standard (Martin, 2021), and the architectural description is informed by the Architectural Tradeoff Analysis Method (ATAM) (Kazman et al., 1998). Unlike existing architectural frameworks, however, the T-Framework puts trustworthiness attributes at the center of lifecycle considerations. It provides a shared vocabulary and conceptual model to foster alignment among diverse stakeholders. To support its application, a structured workflow (Figure 2) that guides stakeholders in applying the framework systematically has been developed. A more detailed account of the T-Framework’s design and foundational principles is available in our prior work (Ramli & Törngren, 2022).

Figure 1. T-Framework, an architectural framework for realizing trustworthy CPS.

Figure 2. Initial method of the T-Framework.

Boundary Objects

Star and Griesemer (1989) introduced the concept of boundary objects to explore how a shared understanding can be established between different communities of practice. A widely accepted definition of a boundary object describes it as “an object that is flexible enough to adapt to the needs and constraints of various parties using it, yet stable enough to maintain a consistent identity across different contexts”. In the realm of systems and software engineering, boundary objects have been extensively studied to understand their role in supporting stakeholders, such as in agile practices (Wohlrab et al., 2019) and cross-boundary collaboration for system-of-systems development (Kohlke et al., 2021). These studies demonstrate that boundary objects can bridge knowledge gaps and help stakeholders achieve mutual goals during system development. Architectural frameworks, such as the T-Framework, can function as boundary objects by facilitating effective communication among engineers. For instance, the T-Framework is designed to provide a shared understanding across organizations and disciplines, as well as to support the future development of systems. It also aims to promote a common understanding of trustworthiness aspects and attributes.

Research Method

As the T-Framework is an artifact, design science (Hevner et al., 2004; Wieringa, 2014) was used in this study. This approach is described in Section “Design Science Approach”. Thereafter, the details of the associated data collection and data analysis are described.

Design Science Approach

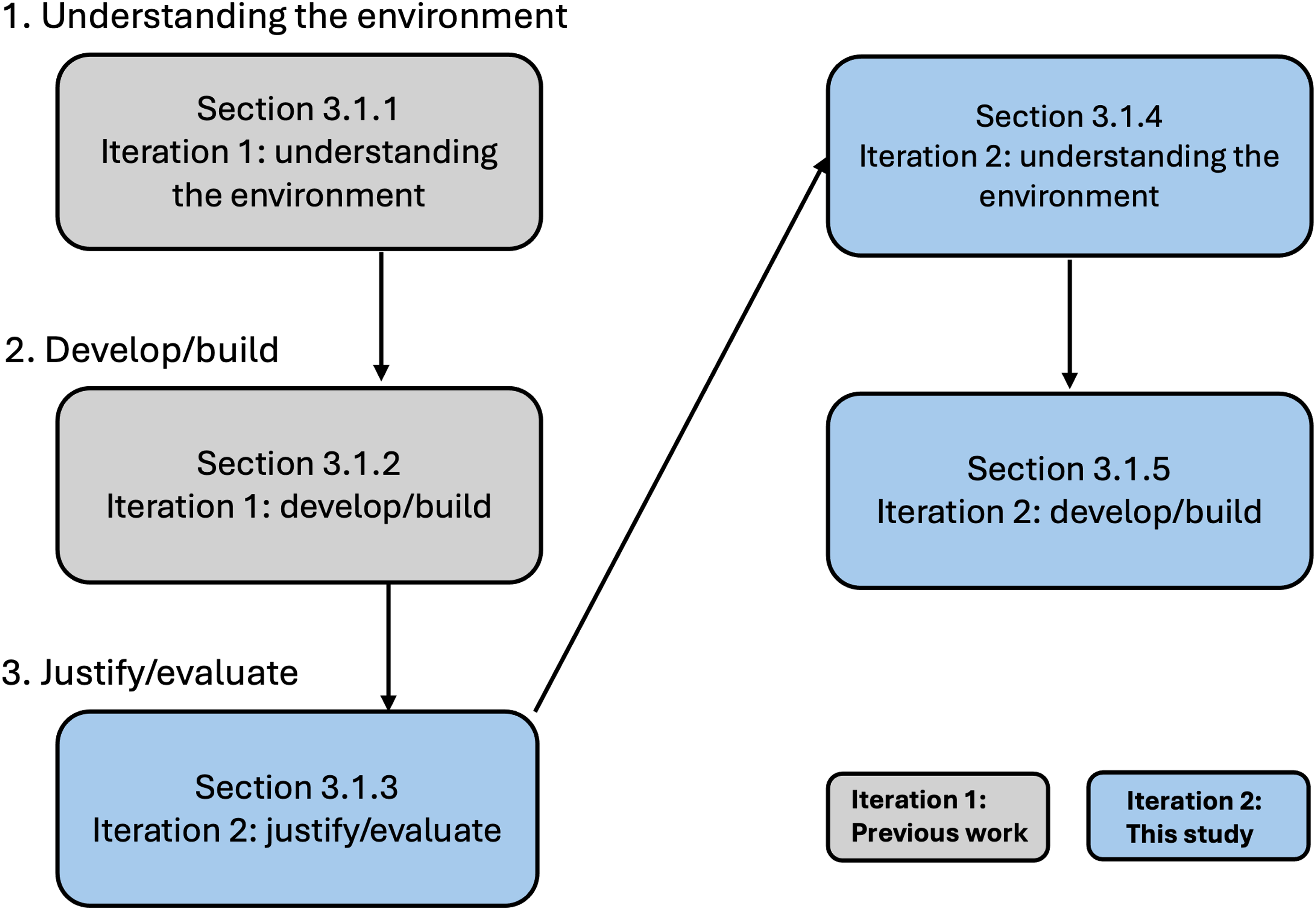

As visualized in Figure 3, the approach used in this work is structured around three iterations: understanding the environment, develop/build, and justify/evaluate. Based on prior work, this study began in the third iteration, then returned to the first iteration, and concluded in the second iteration.

Figure 3. Overview of the design science process adopted for this study.

Preparations for the study began with establishing a contextual understanding of the system development environments in which trustworthy CPS are designed and operated (Ramli & Törngren, 2022). This foundational understanding informed the formulation of our research questions and guided the design of the empirical evaluation.

Following this, the T-Framework and a preliminary version of the T-Framework method were elicited and developed (Ramli & Törngren, 2022).

The study itself started with the third iteration, focusing on evaluating the applicability and utility of the T-Framework method in supporting cross-boundary collaboration among diverse stakeholders involved in the development of trustworthy CPS. Details of this evaluation are provided in Sections “Results” and “Discussion”.

The research then returned to the first iteration, not to re-examine the architectural landscape, but to apply the existing contextual understanding in order to assess how the T-Framework addresses real-world trustworthiness challenges and supports collaborative development in CPS settings.

Finally, the insights gained through the evaluation and the re-application of this understanding were used to develop a refined method for applying the T-Framework. This refinement process is further elaborated in Section “Discussion”.

Data Collection

To gather data, focus groups were conducted during a workshop on “Understanding Trustworthiness in CPS” at the 10th Scandinavian Conference on System and Software Safety (SCSSS 2022).

Focus groups are widely used in design science research to gather structured feedback on artifacts by facilitating group discussions among relevant stakeholders (Hevner et al., 2004; Tremblay et al., 2010). Unlike interviews or surveys, focus groups enable participants to collectively reflect on their experiences and perspectives, and to challenge or expand upon each other’s interpretations. This makes them especially suitable for evaluating boundary objects and socio-technical methods such as the T-Framework (Barbour, 2007).

Although the focus group sessions were conducted within a workshop setting, their purpose, design, and facilitation adhered to the focus group methodology. That is, participants were not merely attending an informational or instructional session, but were actively engaged in guided discussions about their experience applying the T-Framework to realistic CPS development scenarios. The sessions were moderated using semi-structured prompts and collaborative exercises aimed at eliciting feedback on the framework’s comprehensibility, usefulness, and limitations. The group interaction was essential to surface areas of agreement, conflict, and divergence across stakeholder roles, which is an important aspect of evaluating the T-Framework as a boundary object.

The design of the focus groups, including participant recruitment, discussion prompts, and facilitation strategies, is described in detail in the following subsection.

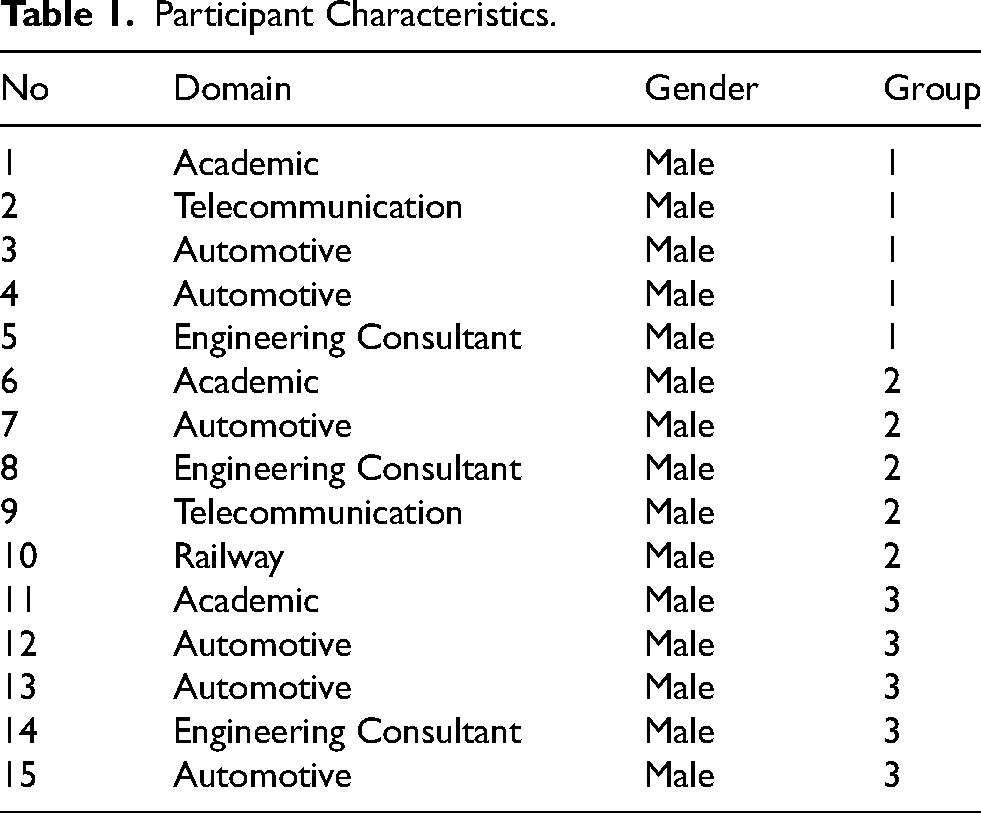

Participant Recruitment and Characteristics

Participants were recruited using a convenience sampling approach, drawn directly from attendees of a workshop focused on systems engineering (Baltes & Ralph, 2022). All participants had relevant backgrounds in either academia or industry, with expertise spanning cybersecurity, safety, and systems engineering. A total of 15 individuals took part in the focus group sessions. They were divided into three groups, each consisting of one academic participant and four industry professionals. All participants were male, domiciled in Sweden, and fluent in English, which was used as the language of communication during the sessions. Table 1 summarizes the characteristics of the participants in this study.

Table 1. Participant Characteristics.

The Moderator and Observers

The primary researcher served as the moderator for the focus group sessions, guiding the discussions and ensuring the agenda was followed. Additional researchers supported the moderator by taking detailed notes, providing a final summary, and managing time. Each observer was also assigned to a specific group to closely monitor the discussions.

Focus Groups Facility

The venue for the focus groups plays an important role in the success of the research (Greenbaum, 1998). The chosen facility was centrally located and easily accessible on foot and by public transportation from the downtown area of the city. The venue was quiet and free from interruptions, with a modular layout that allowed the room to be customized for the sessions. The setup included six tables with seven chairs per table, along with large A1 sheets of paper and pens provided at each table for group discussions. Refreshments and coffee were available throughout the sessions, ensuring a comfortable environment for participants.

Focus Groups Guide

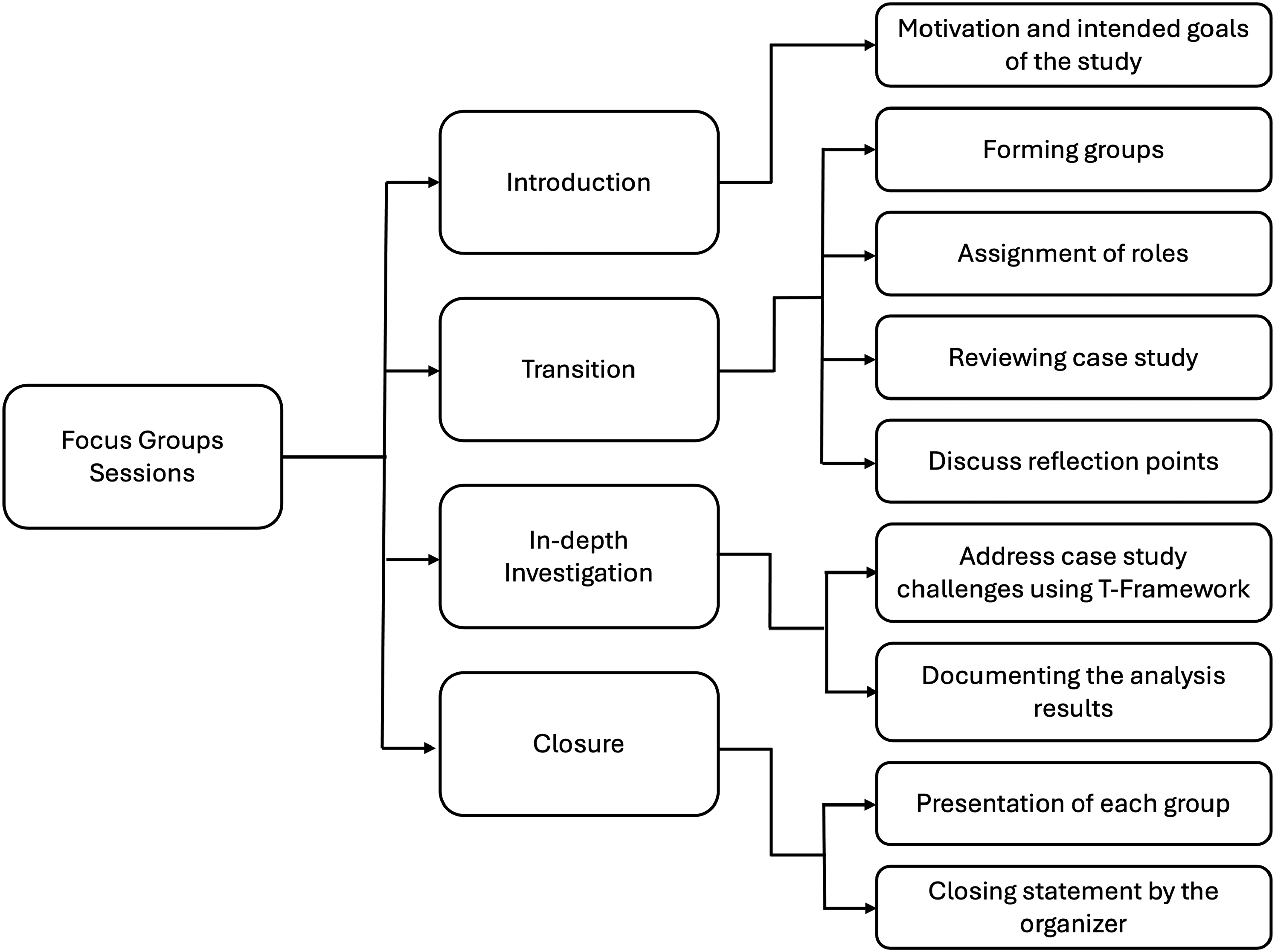

A structured focus group guide was developed, following the guidelines of Krueger and Casey (2000), through several rounds of review and revision. The guide was divided into four stages, i.e., introduction, transition, in-depth investigation, and closure. The stages were as follows:

Introduction: The moderator introduced the topic and purpose of the focus group through a presentation. Participants were briefed on a role-playing exercise and the case that would guide the discussions.

Transition: The moderator posed preliminary questions to transition the participants toward the core topics. These included reflection points on cross-boundary collaboration for trustworthy CPS, focusing on issues like ethical AI, privacy, and potential trade-offs between system aspects and trustworthiness.

In-depth Investigation: The participants engaged in a detailed discussion of key issues, particularly the applicability of the T-Framework method to support cross-boundary collaboration in realizing trustworthy CPS.

Closure: Participants summarized their findings and shared them with the group, allowing for collective discussion.

Focus Groups Sessions

Figure 4 shows the flow chart of focus group sessions. The first session began at 1:30 p.m., focusing mainly on introductions. The moderator introduced himself and the research team, explained the purpose and intended goals of the study, and informed participants that their input would contribute to the research. This introductory session lasted 30 minutes.

Figure 4. Flow chart of focus group sessions.

The second session started at 2:15 p.m., during which participants were divided into groups of five. Each group received a copy of the focus group guide. To support diverse and structured reflection, participants were assigned roles aligned with their domain expertise, such as systems engineer, safety expert, cybersecurity specialist, data protection officer, and environmentalist. These roles were not part of a theatrical role-play, but were instead used as a facilitation device to anchor discussions in realistic stakeholder perspectives and to elicit insights related to the interdisciplinary application of the T-Framework.

After reviewing the case study described in the guide, each group was instructed to reflect on the scenario and discuss how to address its challenges using the T-Framework. As part of the guided activities, all groups were explicitly given the task to use a voting mechanism to prioritize the T-Framework’s trustworthiness attributes from the perspective of their assigned roles. Participants individually assessed whether each attribute was relevant to their role, and their responses were compiled in a matrix format, with attributes as rows and stakeholder roles as columns. Each group then documented their analysis and reflections on A1 paper, and a representative from each group presented the results in a plenary session. The focus group concluded with a thank-you and closing summary from the moderator.
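The voting mechanism described above can be sketched as a small tally over an attribute-by-role matrix. The role names are those listed for the second session, but the vote values, the selection of attributes, and the alphabetical tie-breaking rule are hypothetical and are included only to make the example runnable. They do not reproduce the data recorded by any group.

```python
# Stakeholder roles used as matrix columns (taken from the session description).
ROLES = [
    "systems engineer",
    "safety expert",
    "cybersecurity specialist",
    "data protection officer",
    "environmentalist",
]

# Hypothetical vote matrix: one row per attribute, one entry per role.
# True means the role considered the attribute relevant. Values are invented.
VOTES = {
    "safety": [True, True, True, False, True],
    "security": [True, True, True, True, False],
    "privacy": [False, False, True, True, False],
    "sustainability": [False, False, False, False, True],
    "transparency": [True, False, False, True, False],
}


def rank_attributes(votes):
    """Sort attributes by number of votes, highest first.

    Assumption: ties are broken alphabetically so the output is deterministic.
    The focus group guide does not prescribe a tie-breaking rule.
    """
    totals = {attribute: sum(row) for attribute, row in votes.items()}
    return sorted(totals.items(), key=lambda item: (-item[1], item[0]))


ranking = rank_attributes(VOTES)
for attribute, count in ranking:
    print(f"{attribute}: {count} of {len(ROLES)} roles")
```

A plain count of this kind treats every role as equally weighted and records only relevance, not the reasoning behind each vote.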

Data Analysis

Data analysis was based on field notes taken by the moderator and observers during the sessions. In addition to these notes, the written reflections and A1 paper outputs of each group served as primary data. All notes were carefully reviewed and analyzed by the research team to extract insights and themes from the focus group discussions.

Results

Important observations from each focus group are presented below. These are then related to the frequent challenges of developing trustworthy CPS.

Group 1

In the reflection phase, the members of Group 1 did not adhere to the perspectives of their assigned roles and instead raised generic safety-related concerns. No common grammar was defined, and several questions arose regarding the interpretation of the T-Framework’s visual representation. For instance, the attributes in the framework are placed along a pointed arrow, which prompted the question of whether they have been ordered according to some metric.

In regard to the task, the group was concerned with the attack surface that connectivity exposes a complex CPS to, and discussed related cyber-attack scenarios. Opinions were exchanged about the intended use of the system and possible abuses and misuses, touching upon fairness and ethical properties. Subsequently, the group ranked the T-Framework attributes via a voting mechanism. Each member had to evaluate whether a given attribute was of concern for their role. The answers were noted in a matrix where the rows represented the attributes and the columns the role names. Thereafter, the attributes were sorted according to the number of votes. Availability, safety, and security received the same number of votes. Furthermore, all the attributes except ethics received at least one vote. When voting, the members focused on their specific role’s perspective without considering others’ viewpoints, so that they could optimize the quality requirements concerning their role.

At the end of the task, the group stated that they lacked a structured approach for tackling the assignment. They would have appreciated a framework that enumerates the steps to follow to sequentially produce the system-of-systems analysis in a timely manner. In this way, they proposed, progress could be tracked and brainstorming would not turn into endless repetition.

Group 2

Group 2 focused early on the reflection points, with the group being concerned about the trade-offs between dependability attributes (e.g., safety vs cybersecurity) and where the trade-offs could emerge in realizing trustworthy CPS. They also anticipated the potential trade-offs between ethical and dependability attributes (e.g., privacy vs cybersecurity) when developing complex CPS. They argued that the T-Framework could make stakeholders aware and push them to address these trade-offs, especially those regarding the ethical vs dependability attributes.

Regarding the task, the group suggested that all trustworthiness attributes in the T-Framework are relevant. Taking a different standpoint than Group 3, they ranked safety and cybersecurity as the highest priority. They also regarded sustainability as a high priority. They reasoned that safety and cybersecurity are interdependent: there is no safety without cybersecurity. The same goes for sustainability, which also relates to safety, since sustainability involves caring about lives but over a longer timeframe. Nonetheless, they found a need for clearer definitions of the trustworthiness attributes of the T-Framework. The group concluded that explicit definitions of the trustworthiness attributes are essential to avoid ambiguity.

Group 3

Group 3 focused early on the task and the attributes in the T-Framework. The attributes were all seen as important, relevant, and possible to rank. The opinion of the group was that transparency and ethics should be prioritized, as it is impossible for a neutral party to verify the other attributes if a system is opaque. However, the group stressed that the ranking would depend on the roles of those involved in each individual case, and the relationship between these people. It would be quite normal for persons who command respect through seniority or formal position to influence the ranking of the attributes. This was summarized as an open question for those attempting to use the T-Framework to consider: “Whose voice matters?”

A way to remedy this could be to start in a narrow case with limited risks to consider, and then slowly increase the scope. It would also be helpful if each attribute in the T-Framework had clear metrics and evaluation models, which each role could use to create the common vocabulary that the group was asked to construct. However, the group also thought the guidance that could be created and standardized to support the use of the T-Framework is endless, including common dependencies between attributes, architectural design patterns, etc.

Regarding the reflection points, the group repeatedly came back to the issue of regulation. All of the described problems could easily emerge when a complex CPS arises that spans the levels, aspects, and attributes described by the T-Framework. An engineer can hardly be asked to juggle all of these issues. This suggested to the group participants that the T-Framework could be of most use when trying to identify where, e.g., ethical and legal dilemmas could arise for a particular complex CPS. This could help decision-makers and regulators to act proactively.

The Relationship to Frequent Challenges of Developing Trustworthy CPS

Across all three focus groups, participants encountered distinct challenges during key decision-making activities, such as prioritizing trustworthiness attributes, negotiating trade-offs, and interpreting the T-Framework. These challenges can be interpreted through the lens of knowledge boundaries, as conceptualized by Carlile (2002).

These findings form the basis for the following discussion, where we examine how the T-Framework functioned as a boundary object in relation to these boundaries, and what refinements may be needed to enhance its collaborative utility.

Discussion

The findings presented in Section “Results” demonstrate that while the T-Framework effectively initiated cross-boundary discussion, it also had significant limitations when applied in practice. Each focus group encountered a distinct type of knowledge boundary: syntactic, semantic, or pragmatic. These boundaries shaped the dynamics of collaboration and influenced participants’ ability to prioritize trustworthiness attributes, negotiate trade-offs, and engage in shared decision-making. These observations suggest that although the T-Framework offers foundational support as a boundary object (Star & Griesemer, 1989), its original form does not fully address the complex coordination challenges that emerge in real-world CPS development.

In the remainder of this section, we analyze and discuss the three knowledge boundary types in greater depth and examine how they manifested in the focus groups. We then reflect on their implications for refining the T-Framework as a boundary object capable of supporting more inclusive, effective, and structured collaboration in the realization of trustworthy CPS.

Implications for the T-Framework As a Boundary Object

This section examines how the T-Framework contributed to or hindered collaboration in response to the different types of knowledge boundaries.

Crossing Syntactic Boundaries

The findings from Group 1 illustrate the presence of syntactic boundaries, which arise when stakeholders from different domains use distinct terminologies, representations, or formal languages that hinder effective communication. According to Carlile (2002), these boundaries occur when there is no shared linguistic foundation to support mutual understanding. In the context of collaborative CPS development, this is particularly relevant when experts from technical disciplines interact with stakeholders concerned with ethics, law, or environmental impact.

In Group 1, the participants encountered difficulties in addressing the case study using the T-Framework due to the lack of a clear and structured method. They also encountered difficulties when discussing trustworthiness attributes, especially those related to ethics. Although the group succeeded in identifying relevant concerns, such as availability, safety, and security, they struggled to maintain a structured dialogue due to the lack of a common vocabulary. Ethical attributes, in particular, led to vague or generic commentary, and the group was unable to reach alignment on how to analyze or integrate these concerns systematically. In other words, although the framework provided a shared set of terms, some participants interpreted key concepts like “ethics” or “privacy” differently, depending on their background. Without more precise and universally understood definitions, these terms remained ambiguous and occasionally led to misalignment in discussions. This is particularly relevant in CPS development when technical experts interact with stakeholders concerned with ethics, regulation, or sustainability (Shneiderman, 2020).

Furthermore, participants also questioned the visual arrangement of attributes, such as whether their order suggested a hierarchy or sequence. This confusion indicates a need for greater visual clarity. Similar concerns have been observed in prior studies on design artifacts in systems and software engineering, where ambiguity in visual structure reduces interpretability (Wohlrab et al., 2019).

Improving the clarity of the T-Framework is therefore essential for supporting the crossing of syntactic boundaries. This could involve refining the visual representation so that it guides stakeholders more clearly, as well as adopting a more structured methodological approach to define stakeholder roles, system levels, requirements, assurance cases, and lifecycle documentation (Flammini et al., 2022).

Crossing Semantic Boundaries

For Group 2, the T-Framework demonstrated several useful properties. First, it supported semantic alignment by presenting a defined set of trustworthiness attributes, which gave participants a stable reference point for discussion. This structured scope helped reduce confusion and allowed the group to focus their efforts. Second, it provided contextual structure that highlighted how different attributes contribute to long-term system concerns. For example, the inclusion of sustainability in the framework prompted participants to reflect beyond immediate technical priorities.

The T-Framework also demonstrated a valuable degree of interpretive flexibility. This allowed participants to adapt the meaning of trustworthiness attributes to their respective roles while still engaging in a common discussion. In cross-boundary collaborations, such flexibility is critical. Experts in environmental science, cybersecurity, and systems engineering each bring different priorities and assumptions. The ability to interpret the framework through their own lens while remaining within a shared structure supported meaningful cross-disciplinary exchange.

Despite these strengths, Group 2 identified several important limitations. From our empirical observation, participants noted that the lack of clear and agreed-upon definitions for each trustworthiness attribute made it difficult to determine trade-offs and prioritize concerns in a systematic way. While the framework provided a shared vocabulary, the meanings of key terms such as “sustainability,” “fairness,” or “transparency” were interpreted differently depending on participants’ disciplinary backgrounds and professional roles. As a result, some discussions became circular or stalled, especially when attempting to assess how one attribute should be weighed against another in specific decision-making contexts.

This issue reflects a well-documented challenge in the design of boundary objects and collaborative tools: the need to balance interpretive flexibility, which allows actors from different domains to engage meaningfully, with conceptual clarity, which ensures mutual intelligibility and actionable outcomes. Too much openness can fragment understanding and prevent convergence, while too much rigidity can alienate stakeholders who require contextual adaptation (Caccamo et al., 2023). In the context of trustworthy CPS development, this balance is particularly critical, as discussions often involve complex trade-offs between social, technical, and ethical dimensions (Kohlke et al., 2021; Pareto et al., 2012; Shneiderman, 2020; Wohlrab et al., 2019).

These findings point to a central design implication: for the T-Framework to function more effectively as a boundary object, it should include clearer definitions of each attribute, ideally supported by role-specific prompts, use-case illustrations, and contextual examples that guide interpretation without overly constraining it. Such scaffolding would not only enhance semantic alignment but also support deliberative processes where competing values and priorities must be negotiated transparently. In turn, this could improve the capacity of the T-Framework to foster shared understanding, support informed compromise, and enable more inclusive and trustworthy cross-stakeholder collaboration in CPS development.

Crossing Pragmatic Boundaries

The experience of Group 3 highlights the persistent challenge of pragmatic boundaries, which arise not from issues of language or interpretation, but from structural and institutional conditions such as conflicting interests, uneven access to decision-making, and power asymmetries among stakeholders. These boundaries are particularly difficult to manage because they are embedded in organizational hierarchies, role legitimacy, and established norms of authority. During the focus group, participants explicitly questioned the distribution of influence in collaborative settings. One participant asked, for instance, “Whose voice matters?” This question reflects a broader concern regarding equitable participation, particularly when some actors possess more institutional authority, technical expertise, or communicative confidence than others.

This observation is consistent with prior research on boundary objects, which shows that such artifacts are not inherently neutral. Rather, they can reflect and reproduce existing power dynamics within the institutions in which they are used. Thomas et al. (2007) argue that boundary objects can be appropriated by dominant groups to advance specific agendas while marginalizing alternative perspectives. Similarly, Huvila (2011) shows how the design of boundary objects can be shaped by hegemonic assumptions, which in turn define what counts as legitimate knowledge and whose contributions are recognized. In both cases, boundary objects may inadvertently reinforce asymmetries unless they are explicitly designed to engage with questions of power, legitimacy, and inclusion.

From our empirical observation, although participants in Group 3 appreciated the T-Framework as a tool for organizing and visualizing trustworthiness attributes, they noted that it lacked the procedural mechanisms needed to address these structural imbalances. For example, stakeholders with technical or managerial authority were more confident in steering the discussion, while those representing environmental, ethical, or user-centered concerns were less vocal or struggled to assert influence. While the framework provided a shared structure for discussion, it did not ensure that all participants could contribute meaningfully to decision-making.

To navigate pragmatic boundaries effectively, both methodological and organizational strategies are necessary. Participants suggested that the T-Framework might be more effective if first applied in low-risk or narrowly scoped cases, where participants can develop shared habits of collaboration and build trust through repeated engagement. This stepwise approach may help establish the procedural norms required for more complex and contentious settings. However, such an approach must be complemented by institutional arrangements that promote inclusive facilitation, distributed authority, and mechanisms for addressing disagreement.

Ultimately, pragmatic boundaries require more than conceptual tools or visual artifacts. They demand careful attention to participation dynamics, role legitimacy, and the broader governance structures in which collaboration occurs. The effectiveness of the T-Framework as a boundary object will therefore depend on how it is embedded within social and procedural contexts that enable all stakeholders to engage on equal terms. By combining conceptual clarity with equitable participation structures, the T-Framework can support both shared understanding and shared responsibility, and in doing so contribute to more inclusive and accountable collaboration in the development of trustworthy cyber-physical systems.

Refined Method for Applying T-Framework As Boundary Object

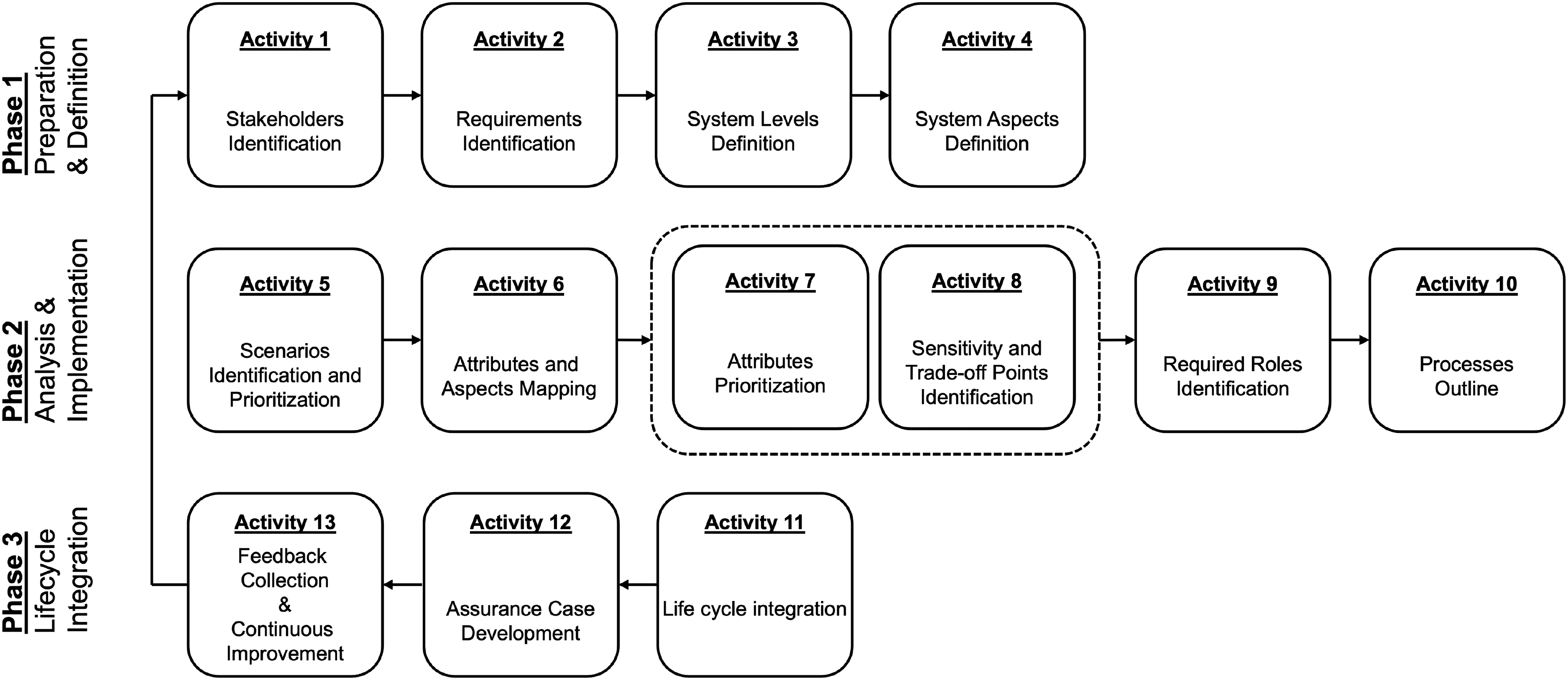

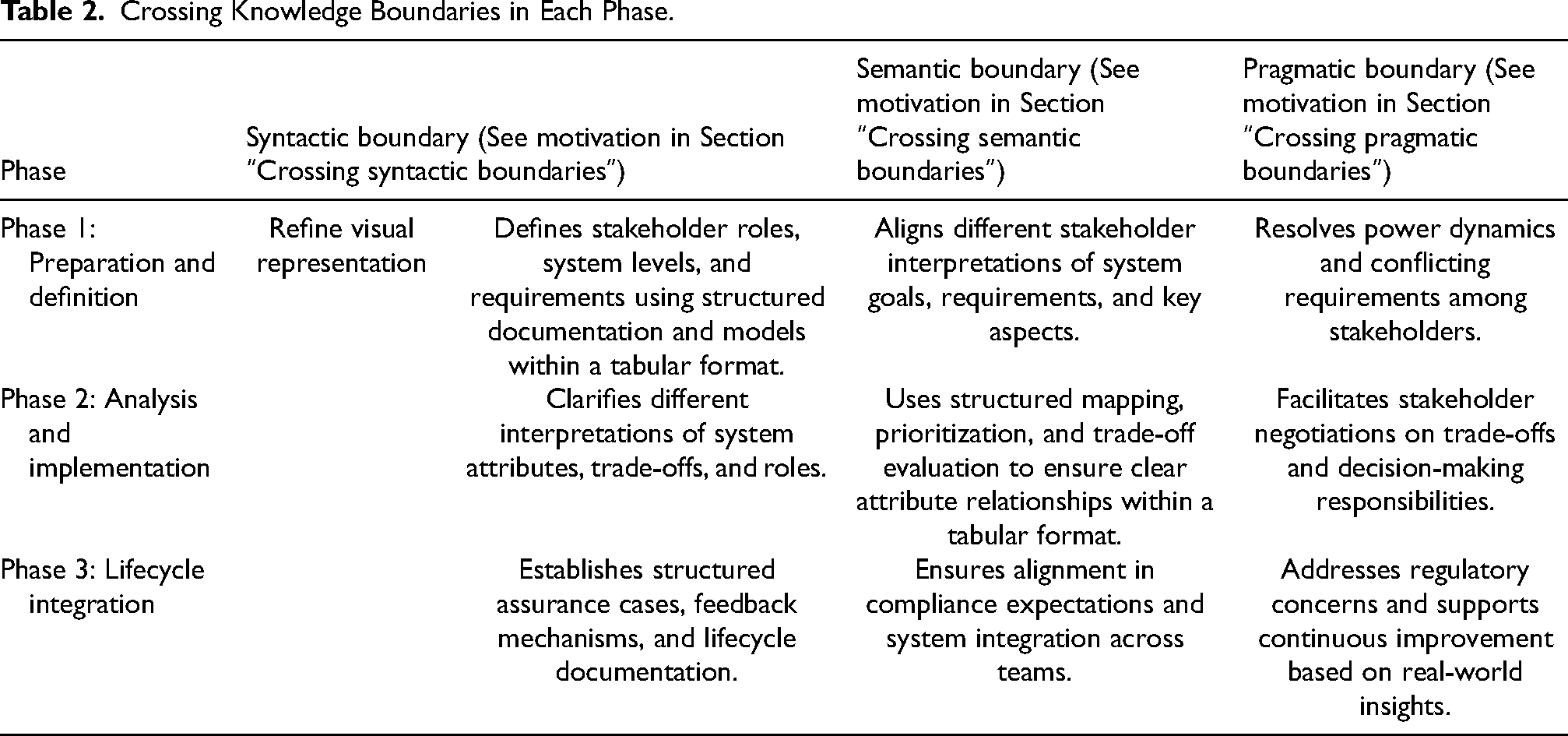

Based on the insights gathered from the focus groups, the T-Framework method can be refined to better serve as a boundary object in realizing trustworthy CPS. As illustrated in Figure 5, the refined method features more structured phases and activities designed to support stakeholders in cross-boundary collaboration, in contrast to the initial version shown in Figure 2. To support its implementation, we created a dedicated website offering detailed guidance on applying the refined T-Framework method (see footnote 2). In the following subsections, we briefly explain how each activity within the phases addresses specific knowledge boundaries.

Figure 5. Refined method for implementing T-Framework as boundary object.

Specifically, Table 2 summarizes how the refined T-Framework method can be used to address the challenges of crossing knowledge boundaries that frequently emerge in the development of trustworthy CPS. In comparison to the initial method (Figure 2), the refined method (Figure 5) offers enhanced support for stakeholders in addressing syntactic boundaries, such as those faced by Group 1. Their problem was rooted in the unclear method of implementing the T-Framework (see Figure 2). To address this issue, the visualization of the method was refined to convey a clearer and more logically coherent sequence of steps and activities, thereby offering stakeholders structured and actionable guidance throughout its implementation. Beyond its visual representation, the method has also been enhanced at the methodological level to facilitate stakeholder engagement through structured activities that prompt the definition and analysis of roles, requirements, system aspects, and trade-offs. In this context, stakeholders are required to document their analysis results in a tabular format at the end of each activity to ensure consistency and traceability. This not only makes the documentation of their discussion more organized but also scaffolds a common vocabulary. It can therefore reduce ambiguity and enable clearer communication across groups.

Table 2. Crossing Knowledge Boundaries in Each Phase.

Furthermore, the refined method addresses semantic boundaries similar to those encountered by Group 2, whose members struggled to align interpretations of trustworthiness attributes across disciplinary perspectives. By capturing essential information in a well-structured and consistent format, the method facilitates the development of shared meanings and interpretations. Through the standardization of inputs and conceptual framing, stakeholders are guided toward a common understanding, which helps mitigate the risk of misinterpretation and semantic misalignment during trustworthy CPS development.

The refined method can also better handle the pragmatic boundary, as illustrated by the experience of Group 3. By explicitly capturing stakeholder roles, prioritization, and trade-off decisions in a structured format, the method links abstract requirements to actionable considerations. This traceability enables stakeholders to better understand the implications of their decisions in context, which in turn fosters alignment between intended goals and practical implementation. In addition, the systematic documentation of feedback and prioritization helps ensure that the resulting solutions are acceptable across stakeholder groups.
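The tabular documentation described above can be sketched in a few lines of Python. This is only an illustration under our own assumptions: the class name `ActivityRecord`, its fields, and the column headers are hypothetical and are not part of the T-Framework method or its website. The sketch shows one way a fixed set of column headers could act as the agreed vocabulary for an activity, with rows that use other headers being rejected.

```python
from dataclasses import dataclass, field


@dataclass
class ActivityRecord:
    """Hypothetical table documenting the outcome of one method activity."""
    activity: str                       # e.g. "Activity 1: Stakeholders Identification"
    columns: list[str]                  # agreed column headers (the shared vocabulary)
    rows: list[dict[str, str]] = field(default_factory=list)

    def add_row(self, **cells: str) -> None:
        # Reject rows that use headers outside the agreed vocabulary.
        unknown = set(cells) - set(self.columns)
        if unknown:
            raise ValueError(f"unknown column(s): {sorted(unknown)}")
        # Missing cells are stored as empty strings so every row has the same shape.
        self.rows.append({col: cells.get(col, "") for col in self.columns})

    def to_markdown(self) -> str:
        # Render the record as a plain markdown table for the shared documentation.
        header = "| " + " | ".join(self.columns) + " |"
        divider = "| " + " | ".join("---" for _ in self.columns) + " |"
        body = ["| " + " | ".join(row[c] for c in self.columns) + " |" for row in self.rows]
        return "\n".join([header, divider, *body])


# Illustrative data only; the column names and the row are assumptions.
record = ActivityRecord(
    "Activity 1: Stakeholders Identification",
    ["stakeholder", "role", "concern"],
)
record.add_row(stakeholder="Safety engineer", role="Assurance", concern="safety")
print(record.to_markdown())
```

Fixing the headers per activity is a deliberate choice in this sketch: it trades some flexibility for the consistency and traceability that the refined method asks for.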

Phase 1: Preparation and Definition

This phase lays the groundwork for system development by identifying key stakeholders, defining system requirements, and organizing the architectural structure. It begins with Activity 1: Stakeholders Identification, which involves identifying all relevant actors such as engineers, policy-makers, and users. This supports the resolution of syntactic boundaries by clarifying communication roles, semantic boundaries by promoting shared interpretations of stakeholder concerns, and pragmatic boundaries by establishing responsibilities and accountability.

Activity 2: Requirements Identification gathers both functional and non-functional requirements. This helps reduce syntactic issues through the use of standardized templates, supports semantic alignment by clarifying system expectations, and manages pragmatic concerns by prioritizing conflicting stakeholder demands.

In Activity 3: System Levels Definition, the system is broken down into hierarchical levels to ensure consistency in how each component is addressed. This structure improves syntactic clarity, supports semantic disambiguation between layers of abstraction, and helps align responsibilities across organizational units.

Activity 4: System Aspects Definition outlines key concerns such as safety, usability, and maintainability across the system. This activity contributes to syntactic coherence by introducing shared terminology, to semantic consistency by clarifying how aspects interact with system goals, and to pragmatic clarity by surfacing potential conflicts among priorities.

Phase 2: Analysis & Implementation

This phase focuses on scenario exploration, attribute evaluation, role definition, and process planning to support system realization. It begins with Activity 5: Scenarios Identification and Prioritization, where operational and failure scenarios are identified based on system goals and risks. This structured definition of scenarios helps stakeholders use a common reference point (syntactic), facilitates a shared understanding of critical events (semantic), and supports alignment on development priorities (pragmatic).

Activity 6: Attributes and Aspects Mapping involves linking trustworthiness attributes such as security, resilience, and ethics to the aspects of the system. This mapping enhances syntactic consistency by using standardized formats, supports semantic coherence by clarifying how attributes contribute to system properties, and addresses pragmatic considerations by identifying interdependencies across stakeholder concerns.
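As a rough illustration of such a mapping, the Python sketch below records which system aspects each trustworthiness attribute is linked to and reports anything left unmapped. The function name `unmapped` and the example mapping are our own assumptions; the method itself does not prescribe a data format or a coverage check.

```python
def unmapped(attributes, aspects, mapping):
    """Return (attributes linked to no aspect, aspects linked to no attribute)."""
    covered_aspects = {aspect for targets in mapping.values() for aspect in targets}
    attributes_without_aspect = [a for a in attributes if not mapping.get(a)]
    aspects_without_attribute = [s for s in aspects if s not in covered_aspects]
    return attributes_without_aspect, aspects_without_attribute


# Attribute and aspect names are taken from the text; the links are illustrative.
attributes = ["security", "resilience", "ethics"]
aspects = ["safety", "usability", "maintainability"]
mapping = {
    "security": ["safety"],
    "resilience": ["safety", "maintainability"],
}

gaps = unmapped(attributes, aspects, mapping)
print(gaps)
```

In this example, ethics has not yet been linked to any aspect and usability is not covered by any attribute, which is the kind of gap a group could then discuss explicitly.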

In Activity 7: Attributes Prioritization, stakeholders collectively rank trustworthiness attributes based on relevance and criticality. This ranking process encourages structured communication formats (syntactic), resolves interpretive differences (semantic), and facilitates negotiation around conflicting values (pragmatic).
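The method does not prescribe how individual rankings are combined. As one possible sketch, assuming each stakeholder submits a complete ordered list of the same attributes, a Borda count gives every stakeholder equal weight: an attribute earns more points the higher a stakeholder places it, and the points are summed. The function name `borda_ranking` and the stakeholder labels are hypothetical.

```python
from collections import defaultdict


def borda_ranking(rankings):
    """Aggregate rankings given as {stakeholder: [attributes, most important first]}."""
    scores = defaultdict(int)
    for ordered in rankings.values():
        n = len(ordered)
        for position, attribute in enumerate(ordered):
            # Top position earns n - 1 points, last position earns 0.
            scores[attribute] += n - 1 - position
    # Highest score first; ties are broken alphabetically for a stable result.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Illustrative rankings only.
rankings = {
    "systems engineer": ["safety", "security", "privacy"],
    "ethicist": ["privacy", "safety", "security"],
    "operator": ["safety", "privacy", "security"],
}

result = borda_ranking(rankings)
print(result)  # [('safety', 5), ('privacy', 3), ('security', 1)]
```

An equal-weight rule is only one option. Whether some roles should carry more weight is itself a pragmatic question that the group would need to negotiate.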

Activity 8: Sensitivity and Trade-off Points Identification identifies where critical trade-offs exist between attributes or system functions. This activity provides a structured approach to evaluating trade-offs (syntactic), clarifies the consequences of competing design choices (semantic), and supports constructive dialogue to resolve competing interests (pragmatic).

Activity 9: Required Roles Identification defines who is responsible for what across the CPS lifecycle. This helps ensure common understanding of responsibilities (syntactic), clarifies expectations between roles (semantic), and addresses authority and decision-making distribution (pragmatic).

Finally, Activity 10: Processes Outline defines the main development, testing, and assurance workflows. This contributes to syntactic alignment through clear documentation, supports semantic alignment by standardizing process interpretation, and facilitates compliance and operational clarity (pragmatic).

Phase 3: Lifecycle Integration

The final phase ensures that the system is monitored, evaluated, and improved over time. Activity 11: Lifecycle Integration focuses on maintaining consistency across the development lifecycle and ensuring that system trustworthiness is preserved through transitions. This activity supports syntactic coordination through structured documentation, semantic continuity across teams, and pragmatic alignment in system evolution.

Activity 12: Assurance Case Development involves building structured arguments and collecting evidence to demonstrate that the system meets its trustworthiness goals. This process supports syntactic precision through formal argument structures, aids semantic clarity by aligning stakeholder interpretations of assurance, and helps address external audit and regulatory concerns (pragmatic).
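The idea of a structured argument backed by evidence can be sketched as a small claim tree. This is a simplified illustration under our own assumptions, not a standard assurance case notation and not part of the T-Framework: the `Claim` class and the `unsupported` helper are hypothetical, and the example claims are invented.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    """A claim supported either by evidence or by more specific subclaims."""
    text: str
    evidence: list[str] = field(default_factory=list)
    subclaims: list["Claim"] = field(default_factory=list)


def unsupported(claim):
    """Return the text of every leaf claim that has no evidence attached."""
    if not claim.subclaims:
        return [] if claim.evidence else [claim.text]
    found = []
    for sub in claim.subclaims:
        found.extend(unsupported(sub))
    return found


# Illustrative assurance case with one claim that still lacks evidence.
top = Claim(
    "The system is trustworthy",
    subclaims=[
        Claim("The system is safe", evidence=["hazard analysis report"]),
        Claim("The system respects user privacy"),
    ],
)

open_claims = unsupported(top)
print(open_claims)  # ['The system respects user privacy']
```

Listing the open claims makes it visible to all stakeholders which parts of the argument still rest on assertion rather than evidence.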

Finally, Activity 13: Feedback Collection and Continuous Improvement facilitates learning and adaptation by integrating lessons from real-world operation. This activity helps create consistent formats for feedback (syntactic), encourages shared reflection on system behavior (semantic), and supports iterative improvement in governance and decision-making processes (pragmatic).

Implications for Trustworthy CPS Development

Given that stakeholders often come from diverse disciplines, cross-boundary collaboration is inherently prone to conceptual misalignment. This challenge is particularly salient in the context of trustworthy CPS development, where ethical aspects are just as important as dependability aspects. Ethical considerations, however, are relatively novel within traditional engineering domains and are therefore less likely to have standardized interpretations among stakeholders. As a result, it is essential that any architectural framework either include explicit definitions for critical terms or incorporate mechanisms to facilitate consensus-building around their meaning. Without such support, stakeholders may rely on their own disciplinary assumptions, potentially leading to divergent interpretations and impaired coordination.

Carlile (2002) highlights that different types of knowledge boundaries, namely syntactic, semantic, and pragmatic, require tailored integration mechanisms. In the development of trustworthy CPS, the challenges frequently extend into the pragmatic domain, where stakeholders not only speak different languages or use different concepts, but also operate under different values, priorities, and incentives. Addressing these boundaries requires the transformation of knowledge in a way that allows for shared understanding and practical collaboration. Architectural frameworks must therefore do more than organize information; they must serve as instruments for dialogic engagement and reflexive learning.

Moreover, power dynamics among stakeholders play a crucial role in shaping how effectively an architectural framework is utilized in practice. In collaborative settings such as trustworthy CPS development, the utility of an architectural framework depends not only on its technical structure but also on its capacity to mediate social interactions and support joint ownership of decisions. Assuming that collaboration will naturally emerge from the use of shared tools risks overlooking the influence of disciplinary hierarchies and institutional asymmetries. Carlile (2002) further emphasizes that in order to transform knowledge across pragmatic boundaries, where stakeholders differ not only in language but in interests and values, frameworks must facilitate mechanisms for negotiation and deliberation. This includes enabling the articulation of contested perspectives and fostering a balance of influence among participants. Without such provisions, even well-designed frameworks risk becoming instruments of dominance rather than platforms for inclusive collaboration.

According to Wohlrab et al. (2019), such deliberative capacity is essential, particularly in agile or adaptive systems contexts where stakeholder goals and interpretations may evolve rapidly. They argue that boundary objects should not be static artifacts but instead dynamic mediators that evolve with stakeholder practices and concerns. This view aligns with a previous empirical study on the utilization of reference architectures as boundary objects (Ramli & Asplund, 2025), in which it was found that differences in the maturity of disciplines, such as between the well-established field of safety engineering and the emerging domain of cybersecurity, can significantly complicate collaboration. In that study, power was identified as a critical contextual factor influencing how reference architectures are interpreted and negotiated in cross-disciplinary settings. Disciplines with more mature practice often have access to established standards, stronger organizational legitimacy, and a greater repertoire of reusable artifacts. This enables them to exert more influence in shaping architectural decisions. Conversely, stakeholders from newer or less mature domains may face challenges in having their expertise recognized and their priorities reflected in design discussions, even when their contributions are essential for achieving system-level trustworthiness.

The implications of these dynamics for trustworthy CPS development are substantial. Achieving trustworthiness requires the integration of technical, organizational, and ethical perspectives in a way that is both rigorous and inclusive. When architectural frameworks do not explicitly acknowledge or respond to asymmetries in stakeholder influence, they risk reinforcing dominant interpretations and marginalizing viewpoints that are equally important for safeguarding ethical and societal concerns. Lakemond et al. (2021) argue that digital transformation in complex systems brings new actors, capabilities, and value structures that challenge traditional power configurations. This evolution calls for governance mechanisms and collaborative structures that can support distributed control, accommodate role innovation, and legitimize diverse perspectives. Without such mechanisms, architectural frameworks may fail to respond effectively to emergent trust-related concerns, particularly those raised by stakeholders outside dominant engineering communities.

Conclusions and Future Work

This study examined the T-Framework as a boundary object to support cross-boundary collaboration in the development of trustworthy CPS. Focus group sessions with CPS practitioners were conducted to evaluate the effectiveness of the T-Framework and its method. The findings revealed challenges related to crossing syntactic, semantic, and pragmatic knowledge boundaries. These challenges affect critical activities during the development of trustworthy CPS, such as the prioritization of trustworthiness attributes, the negotiation of trade-offs, and the establishment of shared understanding among stakeholders with diverse disciplinary backgrounds, priorities, and interests. These findings informed several refinements to the T-Framework method. The visual representation of the method was redesigned to convey a clearer and more logically coherent sequence of steps and activities, thereby providing stakeholders with structured and actionable guidance throughout its implementation. In addition to visual improvements, the method was refined at the methodological level to better support stakeholder engagement. This includes the integration of structured activities that facilitate the definition and analysis of roles, requirements, system aspects, trade-offs, and assurance cases, as well as feedback and lifecycle documentation. The findings thus highlight the importance of incorporating structured mechanisms into both the design and utilization of architectural frameworks to promote shared lexicons and common understanding and to balance stakeholder influence during cross-boundary collaboration in the development of trustworthy CPS.

Future work, as the third iteration of this study, will apply and examine the refined T-Framework in operational contexts, such as industrial CPS projects.

Footnotes

Acknowledgment

This research has been carried out as part of the TECoSA Vinnova Competence Center for Trustworthy Edge Computing Systems and Applications at KTH Royal Institute of Technology.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interest

The author(s) declared no potential conflicts of interest for the research, authorship, and/or publication of this article.