Abstract

The purpose of this study was to determine the topics being studied, theoretical perspectives being used, and methods being implemented in current literacy research. A research team completed a content analysis of nine journals from 2009 to 2014 to gather data. In the 1,238 articles analyzed, the topics, theoretical perspectives, research designs, and data sources were recorded. Frequency counts of these findings are presented for each journal. Chi-square tests of independence revealed statistically significant differences among the topics, theoretical perspectives, designs, and data sources across the nine journals. These results suggest that the field of literacy research may be fragmented, which has been a concern for literacy researchers since the paradigm wars of the 1980s and 1990s. We urge the literacy research community to continue to demand rigorous research, but to do so in a way that appreciates the power in viewing and studying teaching and learning from diverse perspectives, using diverse methods, and with recognition that a foundational aspect of rigorous research is the match between research questions asked and research methods used.

It is the job of literacy scholars to stay current in the field of literacy research. However, staying current is becoming increasingly difficult as the field has experienced rapid and continued growth in scope, volume, perspectives, epistemologies, and methodologies (Beretvas, 2004; Duke & Mallette, 2011; Kamil, Afflerbach, Pearson, & Moje, 2011; Lomax, 2004). This expansion has resulted in heated debate about appropriate perspectives and methods, as evidenced by the “reading and paradigm wars” of the 1980s and 1990s, which pitted whole language against phonics and quantitative research against qualitative research (Kamil, 1995; Kamil, Afflerbach, et al., 2011; Pearson, 2004; Stanovich, 1990). The new millennium brought No Child Left Behind (NCLB; 2002) and the

In 2001, Duke and Mallette hypothesized that the field of literacy research would welcome “ecological balance,” an understanding of and appreciation for different paradigms and methodologies, as opposed to fragmentation. More recently, in the preface to the fourth volume of the

In light of this sometimes-fractious history, we conducted a content analysis of literacy journals to develop a picture of what is currently being published in the field of literacy. In our investigation, we answer the following questions:

Related Research

In conducting this analysis, we follow other researchers who have explored trends and issues in literacy research. Past content analyses have often been organization-specific, such as the retrospective analysis of the National Reading Conference’s publications, conducted by Baldwin and colleagues (1992), which summarized 40 years of trends in the

Several content analyses of single journals over long periods of time focused on approaches and authorship changes. For example, Dunston, Headley, Schenk, Ridgeway, and Gambrell (1998) examined 694 research studies in 20 years of the

Similarly, Guzzetti, Anders, and Neuman’s (1999) analysis of the

Taking a different approach, McKenna and Robinson (1999) analyzed the citation frequency of

More recently, content analyses have focused on the increasing diversity of approaches in literacy research. Still and Gordon (2011) completed a content analysis of Association of Literacy Educators and Researchers’ (ALER’s; formerly the College Reading Association) conference programs from 1960 to 2010 to commemorate the 50th anniversary of the organization, offering insights into the historical context and professional implications for changes in research topics that reflected a greater interest in diversity and a sustained commitment to the meaning-making role of reading. In addition, Morrison and his colleagues (2011) conducted a content analysis covering the authors, methods, participants, and topics in ALER’s journal, now

Two recent content analyses also look at single journals over time. Reutzel and Mohr (2015) looked at the ways

Bauer and Theado (2015) conducted a content analysis of the

The bulk of the literature on content analysis has centered on the topics, research methods, and shifts of one or two publications over time—often decades—and helps develop a picture of each journal’s contributions in an effort to capture how its past might influence its future. Despite this pattern of looking back to look forward, few studies have examined the contents of several journals. One study that looks at literacy scholarship over several journals was conducted by Knudson, Onofrey, Theurer, and Boyd-Batstone (2002), who examined literacy research published in three journals:

The research reported here builds upon this previous work but takes a different approach, analyzing the content of nine publications over a 6-year period, including

Perspective

In analyzing multiple journals, we aimed to capture a broader view of literacy scholarship in terms of audience and purpose than was evident in previous content analyses. Because conceptions of literacy are constantly evolving (Leu, 2000), it is critical to understand how prior definitions of literacy form the basis of new definitions. For this reason, we used a history of science perspective, which maintains that paradigms, as basic theoretical understandings and assumptions held by groups of scholars within the same discipline, inform scientific knowledge at specific times (Fleck, 1979; Kuhn, 1962). Fleck called groups of scholars within the same discipline working in similar time periods “thought collectives.” He described how thought collectives develop “thought styles,” which parallel Kuhn’s paradigms in that both are undergirded by common understandings and assumptions held by a community of scholars. From this perspective, significant scientific progress takes place when there are paradigm shifts. Kuhn explained that when a scientific community faces a series of anomalies that cannot be explained by the current paradigm, the result is a crisis. To resolve the crisis, another paradigm, driven by advances in research, must take its place; thus, a paradigm shift occurs (Kuhn, 1962).

The scholarship published in high-profile literacy journals represents the current conversation in the field—the “paradigm” or set of paradigms of the current literacy research “thought collective” (or thought collectives). Although a history of science perspective, by nature, takes a long view, looking at progress over time and the scientific revolutions that have taken place, it is important to carefully examine the current state of a thought collective to capture the paradigm or set of paradigms that exist and dominate. This allows scholars in the field to see the existing paradigms and thought collectives, which can lead to more collective understanding and ultimately more scientific progress. Such is the goal of the current project. We documented and present the topics, theoretical perspectives, research designs, and data sources in nine literacy research journals over the last 6 years to present a picture of the current paradigms and thought collectives that exist in the field of literacy research.

Method

To investigate the research questions, we conducted a content analysis (Holsti, 1969; Krippendorff, 2004). Hoffman, Wilson, Martínez, and Sailors (2011) explained, “content analysis is a flexible research method for analyzing texts” (p. 29). We used this method to examine the topics, theoretical perspectives, research designs, and data sources found in articles published in nine literacy journals. Key considerations when engaging in content analyses are sampling, objectivity, and systematicity (Holsti, 1969; Krippendorff, 2004). Sampling is important because it determines the validity of the content analysis. Can the documents analyzed answer the research questions presented? Objectivity requires researchers to eliminate, as much as possible, personal predispositions by following rules and procedures (Holsti, 1969). This aspect of content analysis speaks to reliability (Krippendorff, 2004). Would other researchers who analyzed the same texts draw the same conclusions? Systematicity, which is closely related to objectivity, refers to the extent to which the researchers are systematic in all processes of the study, including sampling and coding (Holsti, 1969). In the following, we demonstrate how we strove to be systematic and objective in all aspects of our content analysis, including our sampling of texts.

Our sampling occurred in two stages. Because we wanted to look at the most current literacy research being published, we originally limited our study to the most recent 5 years for which complete volumes were available: 2009-2013. In the first stage, to determine the journals, the lead researcher informally surveyed literacy colleagues. He asked 20 literacy scholars from across the United States to name the five most influential literacy research journals in the field. Although most of the respondents replied to this request accordingly, others indicated that they were unable to limit their response to only five; in these cases, all responses were recorded. We took the results of this informal survey and removed journals that (a) served primarily a practitioner audience (

In the second stage, this list was compared with impact factor databases: Thomson Reuters’ Journal Citation Reports and SCImago Journal Rankings (www.scimagojr.com). We then included literacy journals that were at the top of these lists but did not emerge from the informal survey of colleagues. Therefore, we added

Data Sources

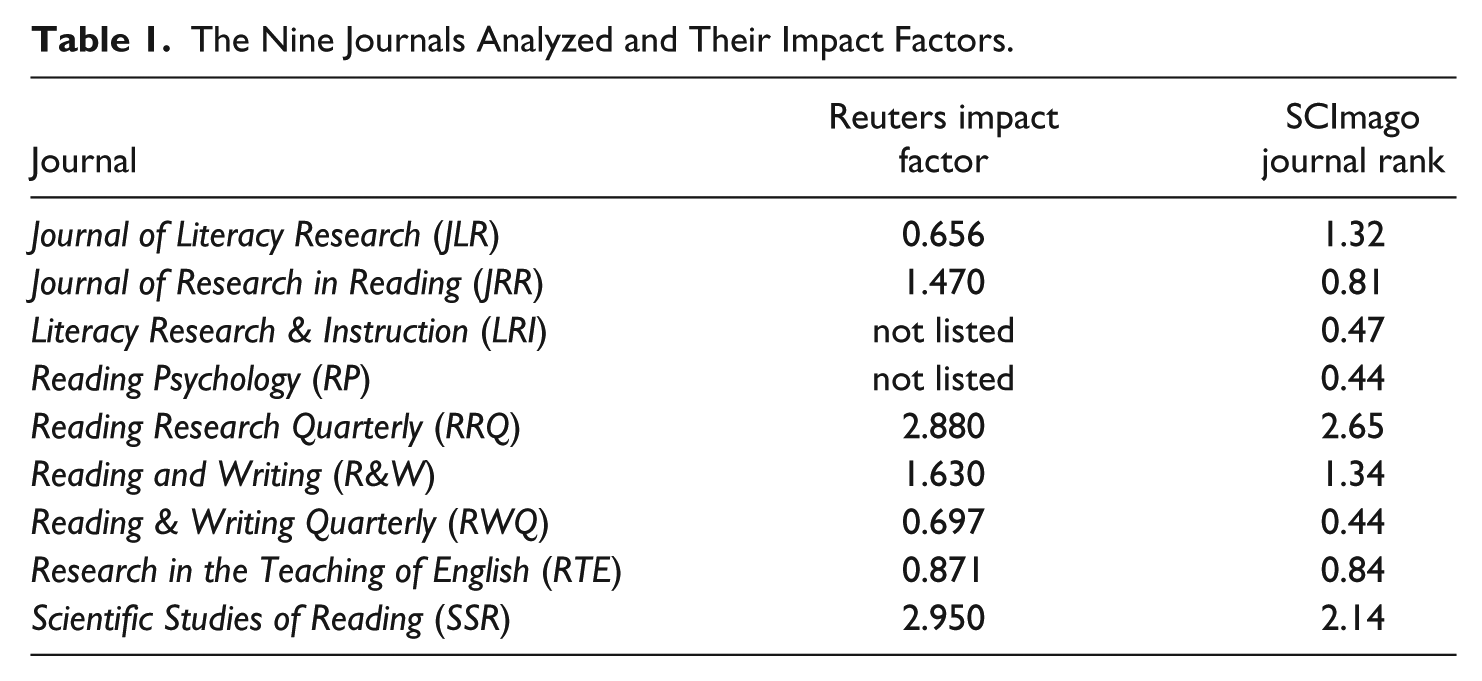

The nine journals included in this content analysis are presented in Table 1, which displays the journals as well as their impact factors and ratings.

Table 1. The Nine Journals Analyzed and Their Impact Factors.

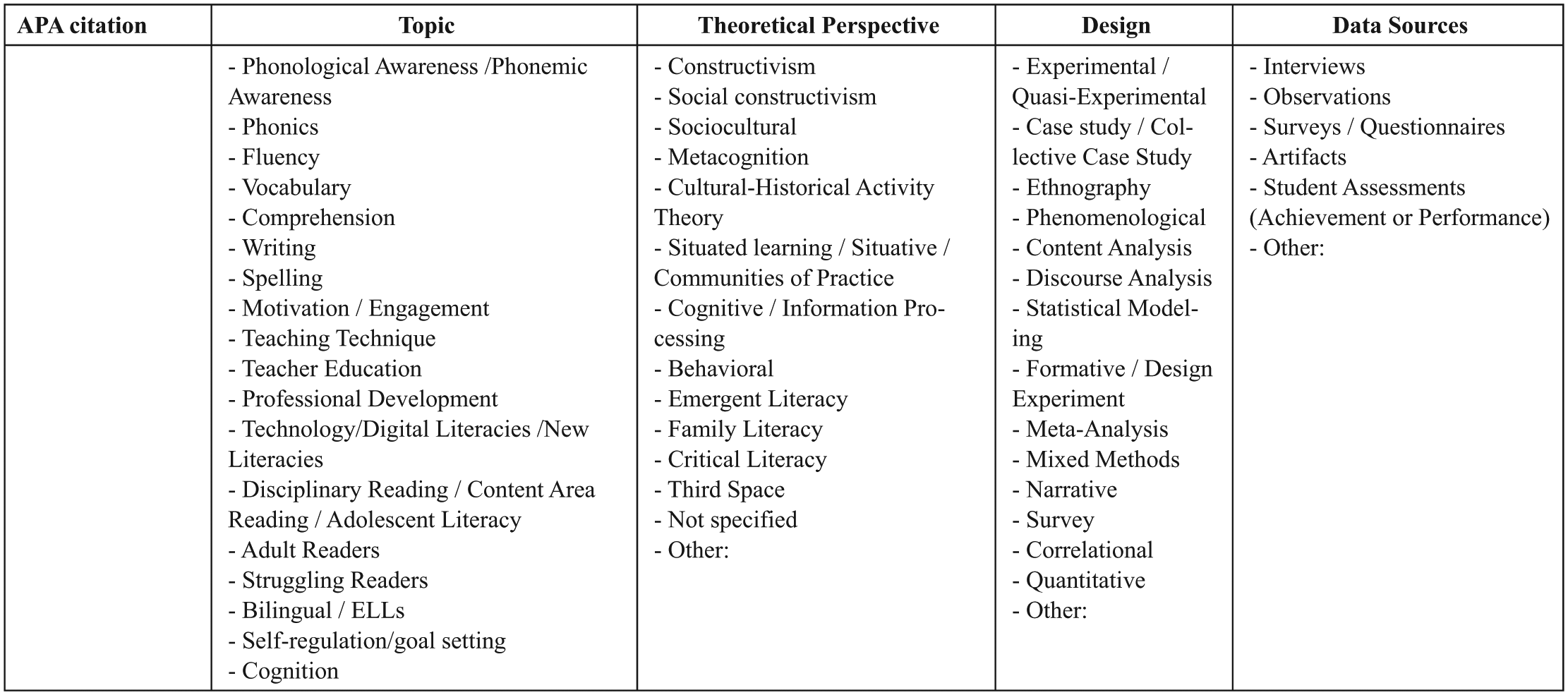

Every article in each of the nine journals in this study from 2009 to 2014 was analyzed. For each article, the researchers documented the (a) topic, (b) theoretical perspective, (c) research design, and (d) data sources. It is important to note that to reduce researcher bias and move toward objectivity, the researchers reported only what the authors of each article stated for each of these areas. For example, if the authors did not explicitly state a theoretical perspective, the researchers did not seek to infer one but rather coded it “Not Specified.” In addition, researchers coded up to three main topics using the keywords, title, and abstract of the article, and up to three data sources as stated in the “Method” section. Therefore, the frequency counts for topics and data sources exceed the number of articles because many articles had more than one topic and/or more than one data source. The study was limited to articles; that is, editorials, book reviews, interviews, policy statements, and the like were not analyzed. We used an Excel spreadsheet to catalog these data (see Figure 1).

Figure 1. Excel headings and the dropdown options in the spreadsheet.
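The cataloging rules described above can be sketched in a few lines of Python. This is only an illustration, not the team's actual spreadsheet: the `ArticleRecord` class, its field names, the journal labels "Journal A" and "Journal B", and the three sample records are all hypothetical. The sketch shows why topic counts can exceed the number of articles when each article may carry up to three topic codes.

```python
from collections import Counter
from dataclasses import dataclass

MAX_CODES = 3  # the study allowed up to three topics and up to three data sources per article


@dataclass
class ArticleRecord:
    """One coded article (hypothetical structure, not the authors' spreadsheet)."""
    journal: str
    topics: list
    data_sources: list
    research_design: str
    # Coded "Not Specified" unless the authors explicitly name a perspective.
    theoretical_perspective: str = "Not Specified"

    def __post_init__(self):
        if not 1 <= len(self.topics) <= MAX_CODES:
            raise ValueError("an article needs between one and three topic codes")
        if len(self.data_sources) > MAX_CODES:
            raise ValueError("an article may have at most three data source codes")


def topic_frequencies(records):
    """Count every topic code, so one article can contribute up to three counts."""
    counts = Counter()
    for record in records:
        counts.update(record.topics)
    return counts


# Invented sample records, for illustration only.
records = [
    ArticleRecord("Journal A", ["Comprehension", "Vocabulary"],
                  ["student assessments"], "General Quantitative"),
    ArticleRecord("Journal A", ["Comprehension"],
                  ["surveys/questionnaires", "student assessments"], "Mixed Methods"),
    ArticleRecord("Journal B", ["Writing", "Instruction", "Comprehension"],
                  ["observations/video transcripts/field notes"], "Case Study",
                  theoretical_perspective="New Literacies"),
]

counts = topic_frequencies(records)
print(counts.most_common())
print(sum(counts.values()), "topic codes across", len(records), "articles")
```

In this toy data, three articles yield six topic codes, which mirrors the situation in the study where the topic total is larger than the article total.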

Data Analysis

Developing codes

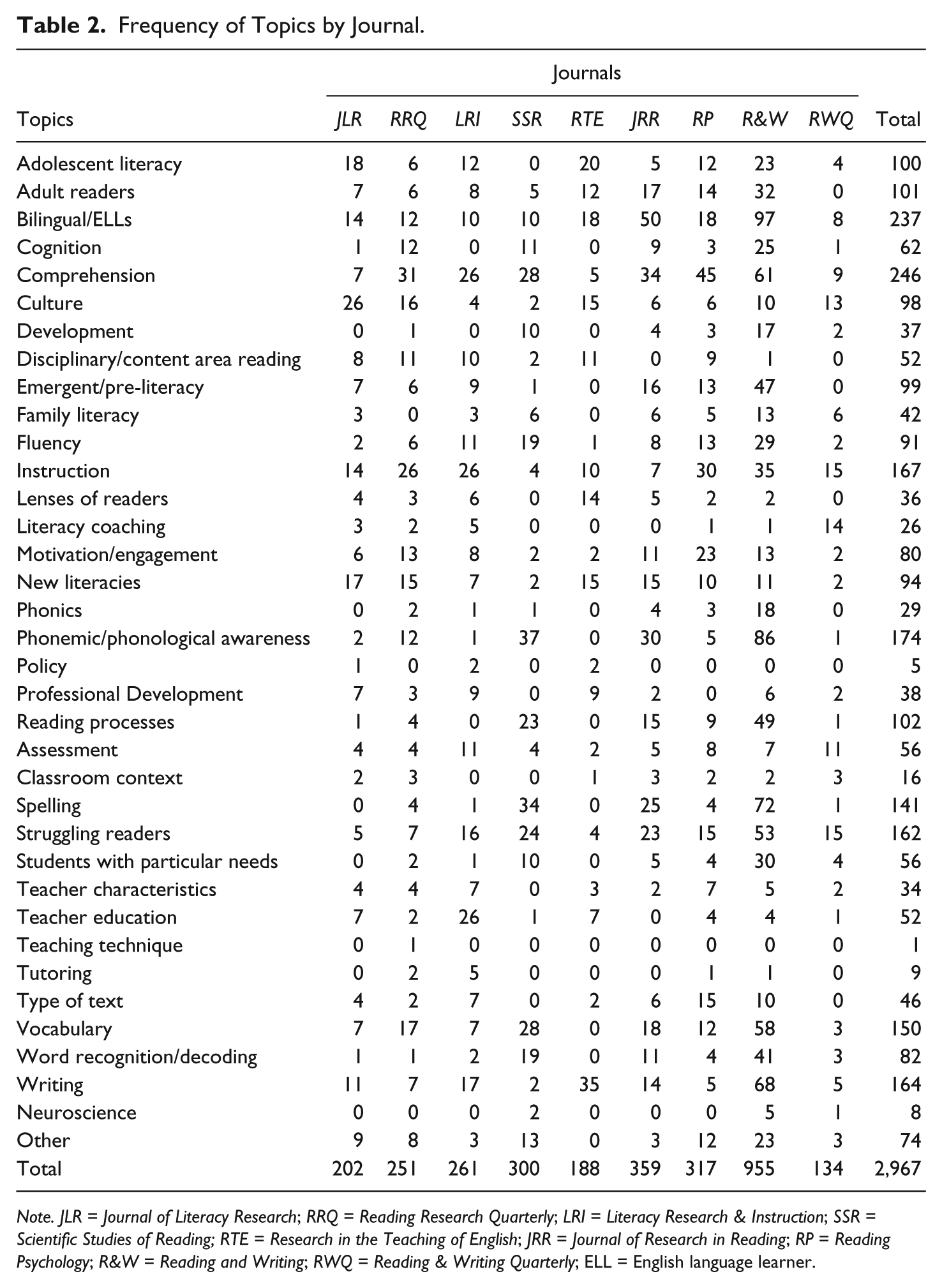

To begin the coding process, the lead researcher created codes using a variety of sources. To create potential topic codes, the lead researcher used the most current “What’s Hot” list (Cassidy & Grote-Garcia, 2013) and the table of contents of current comprehensive literacy research synthesis texts:

During a research team meeting following the first round of analysis, it was evident that the predetermined categories used to code the topics, theoretical perspectives, and research designs were not expansive enough. For example, the code “Other” was applied for nearly half of the topics and a large number of theoretical perspectives. Accordingly, the lead researcher compiled the list of topics and theoretical perspectives that were coded “Other” to pull out additional codes that could more comprehensively capture the topics and theoretical perspectives being studied. After the “Other” topics and theoretical perspectives were recoded with these additional codes, fewer than 7% of the articles remained in the “Other” category. The lead researcher also defined each topic code (initial and revised), so there was a shared understanding among the team (see the revised coding sheet in Figure 2).

Figure 2. Updated codes with definitions for topics and “rules” for applying codes.

During a research team meeting following the first round of analysis, the team decided to code topics based on keywords identified in the article, allowing up to three topics per article. If an article did not have keywords, then the title and abstract were used to determine the topics covered by the article. The team also decided that unless the theoretical perspective was explicitly named in the article, it was to be coded “Not Specified” to ensure that we were not making inferences based on other information in the article. In addition, the research team decided that the “rule” for coding research designs was that the design must be named (e.g.,

After the second round of analysis, we checked inter-coder consistency to establish reliability among coders. We used a random number generator to select 10% of the issues. From these selected issues, each researcher coded articles in a journal previously coded by another team member and then compared the results with the original researcher’s codes. This check revealed 87% agreement between coders; the relatively rare discrepancies were discussed and reconciled.
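A reliability check of this kind can be sketched as follows. The sketch assumes simple percent agreement, since the text reports "agreement" without naming a statistic; the function names, the issue identifiers, the seed, and the toy codes are all hypothetical and do not reproduce the study's data.

```python
import random


def select_issues(issue_ids, fraction=0.10, seed=0):
    """Randomly pick a fraction of issues for double coding (seed is an arbitrary choice)."""
    rng = random.Random(seed)
    k = max(1, round(len(issue_ids) * fraction))
    return rng.sample(issue_ids, k)


def percent_agreement(original_codes, second_codes):
    """Share of items, in percent, on which two coders assigned the same code."""
    if len(original_codes) != len(second_codes):
        raise ValueError("both coders must code the same items")
    if not original_codes:
        raise ValueError("at least one coded item is required")
    matches = sum(a == b for a, b in zip(original_codes, second_codes))
    return 100.0 * matches / len(original_codes)


def disagreements(original_codes, second_codes):
    """Indices of items the coders need to discuss and reconcile."""
    return [i for i, (a, b) in enumerate(zip(original_codes, second_codes)) if a != b]


# Invented example: 40 issue labels and five double-coded research designs.
issues = [f"issue-{i:02d}" for i in range(1, 41)]
sampled = select_issues(issues)

original = ["Case Study", "General Quantitative", "Mixed Methods",
            "Non-Empirical", "General Quantitative"]
second = ["Case Study", "General Quantitative", "Mixed Methods",
          "General Quantitative", "General Quantitative"]

print(len(sampled), "issues selected for double coding")
print(percent_agreement(original, second))  # 80.0 for this toy data
print(disagreements(original, second))      # [3]
```

Percent agreement does not correct for agreement expected by chance; a chance-corrected statistic such as Cohen's kappa is a common alternative when that matters.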

To answer the first research question (What are the topics, theoretical perspectives, research designs, and data sources in the articles published in nine literacy journals over the last 6 years?), we calculated frequency counts for topics, theoretical perspectives, research designs, and data sources in each article (

Results

Frequency counts revealed the topics, theoretical perspectives, research designs, and data sources that were most common in nine journals from 2009 to 2014. In this section, we first present the most frequent codes for each of these components and then present the results of the chi-square tests of independence.
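For readers less familiar with the chi-square test of independence, the following minimal sketch computes the statistic and its degrees of freedom from a journal-by-code contingency table. The function name and the 3 x 2 table of counts are invented for illustration and are not the study's data; the sketch assumes every row and column total is nonzero and does not compute a p value.

```python
def chi_square_independence(table):
    """Return (statistic, degrees of freedom, N) for a table of observed counts.

    Rows are assumed to be journals and columns to be codes (for example,
    research designs). Every row and column total must be greater than zero.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)

    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if journal and code were independent.
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed - expected) ** 2 / expected

    dof = (len(row_totals) - 1) * (len(col_totals) - 1)
    return statistic, dof, n


# Invented counts: three journals (rows) by two designs (columns).
toy_table = [
    [30, 10],
    [20, 20],
    [10, 30],
]

statistic, dof, n = chi_square_independence(toy_table)
print(f"chi-square({dof}, N = {n}) = {statistic:.2f}")  # chi-square(2, N = 120) = 20.00
```

Because the degrees of freedom equal (rows - 1) x (columns - 1), a table with nine journal rows has 8 x (columns - 1) degrees of freedom. Under that reading, the 280 degrees of freedom reported for topics would correspond to 36 topic codes.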

Topics

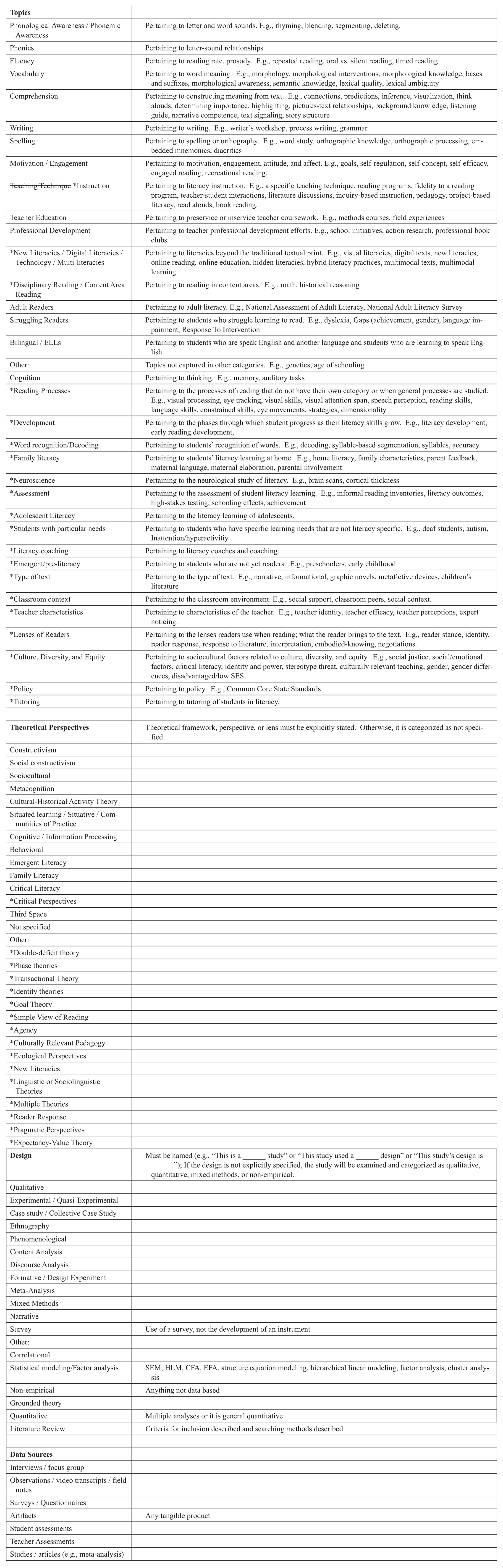

Frequency counts demonstrated that the five topics most written about in these nine journals were (a) Comprehension, (b) Bilingual/ELLs (English language learners), (c) Phonemic/Phonological Awareness, (d) Instruction, and (e) Writing. A chi-square test of independence was conducted to examine the relationship between article topics and the journal in which they were published. The relationship between these variables was significant, χ²(280, N = 2,967) = 1,620.20,

Frequency of Topics by Journal.

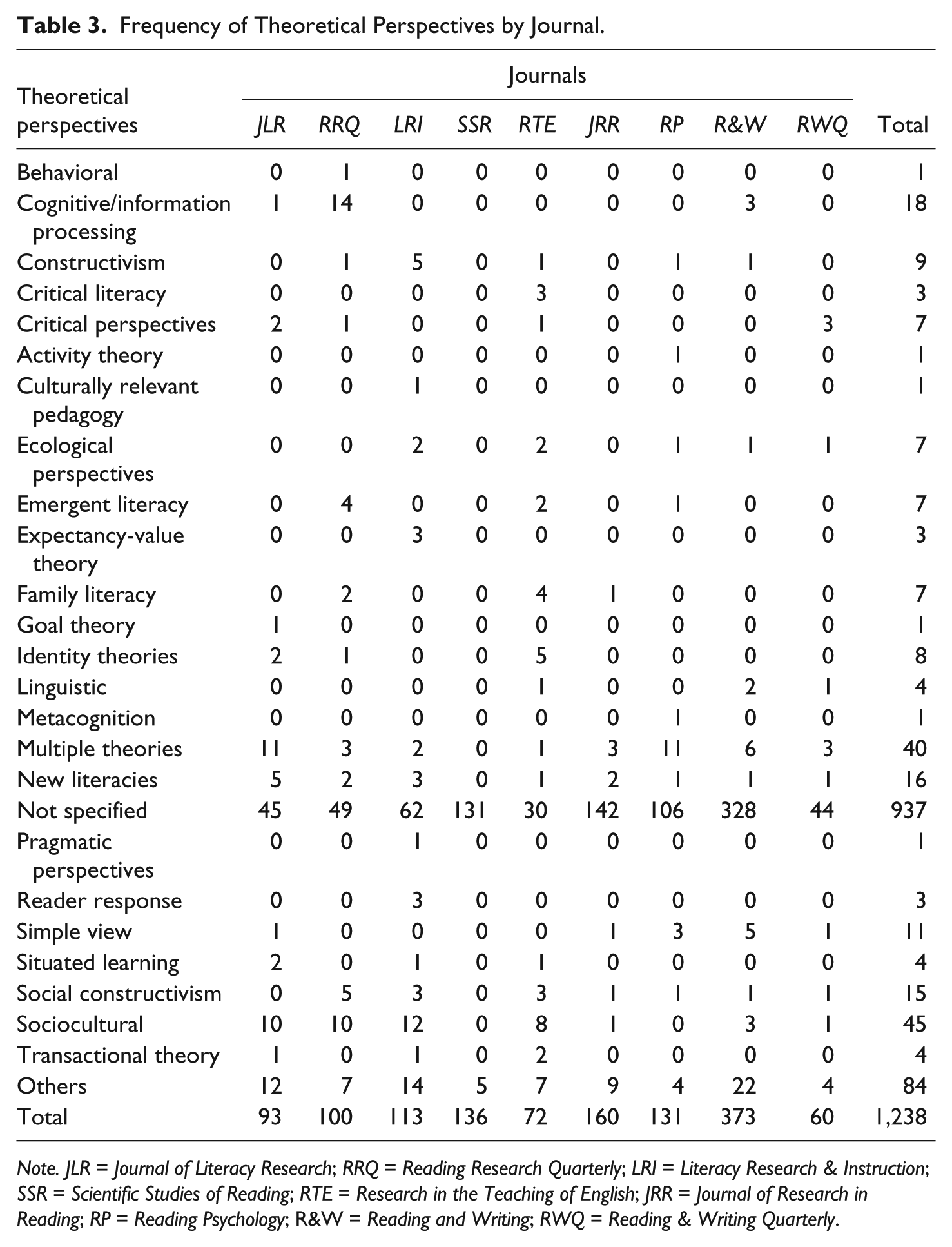

Theoretical Perspectives

Theoretical perspectives were not specified in 75.69% (

Frequency of Theoretical Perspectives by Journal.

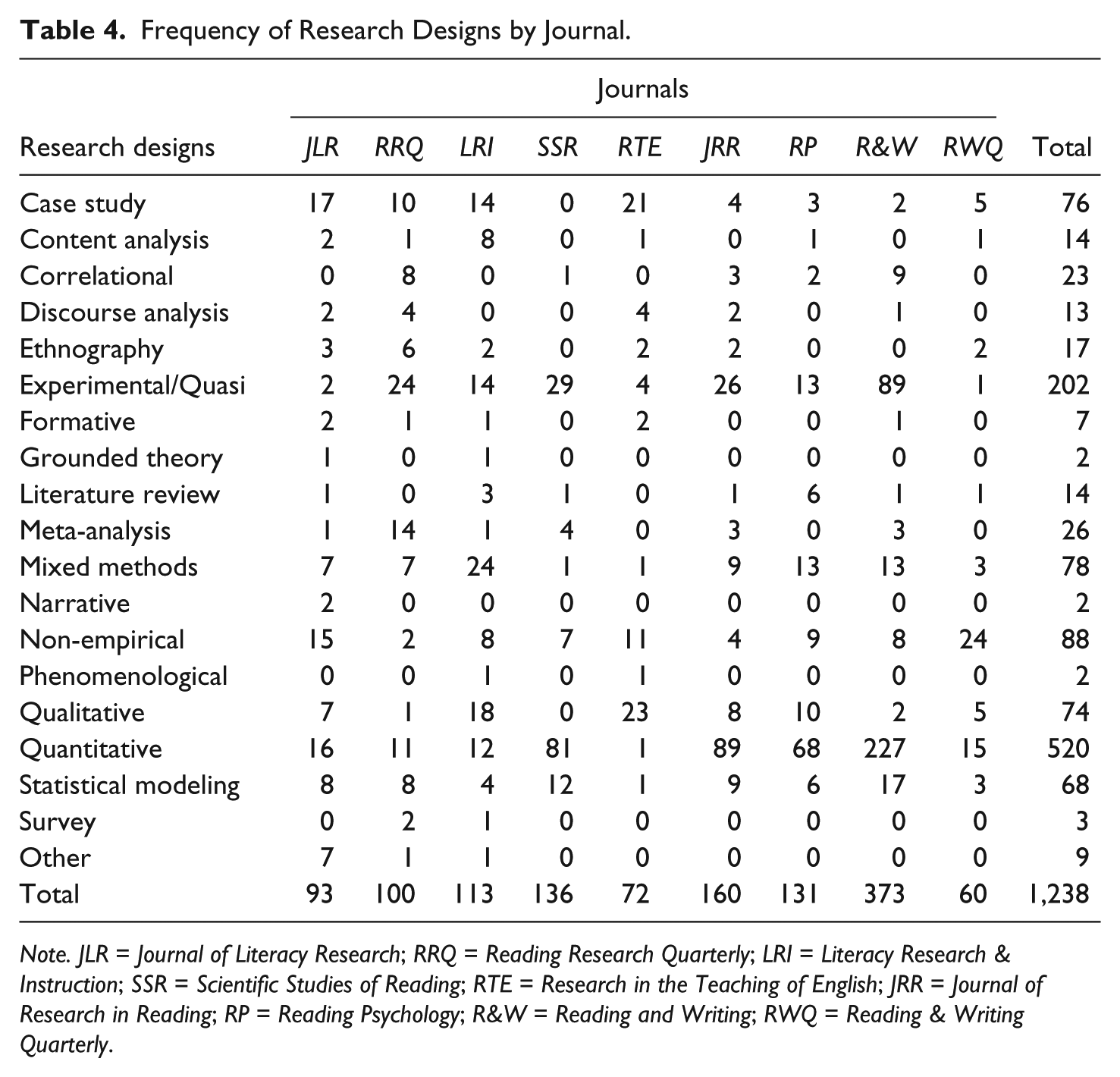

Research Designs

The most frequently reported research designs across all journals were (a) General Quantitative, (b) Experimental/Quasi-Experimental, (c) Non-Empirical, (d) Mixed Methods, and (e) Case Study. Another chi-square test of independence was run to determine whether there was a significant relationship between research design and journals. This relationship was statistically significant, χ²(144, N = 1,238) = 872.39,

Frequency of Research Designs by Journal.

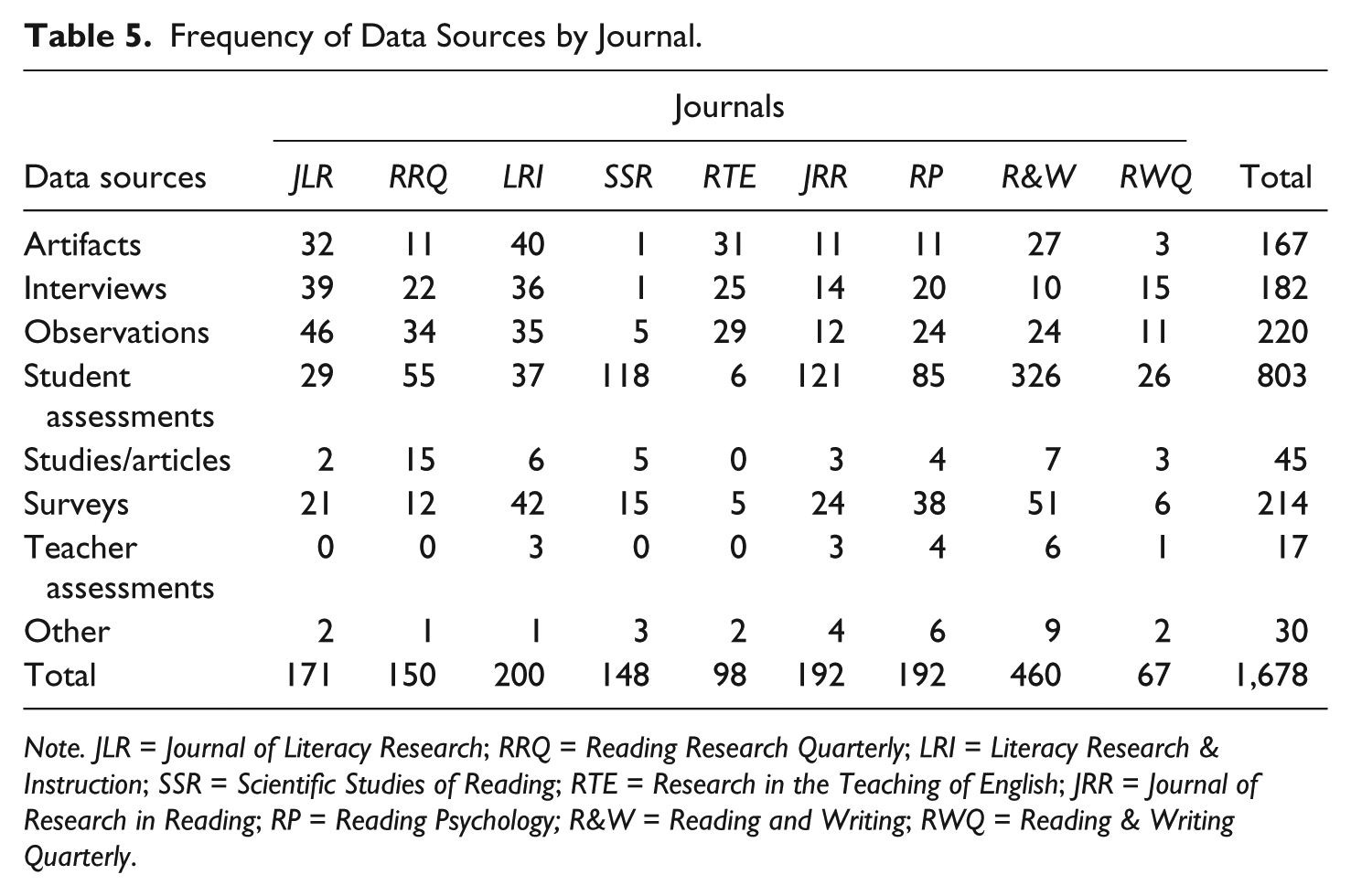

Data Sources

Last, frequency counts indicated that the most commonly observed data sources across all the journals were (a) student assessments, followed by (b) observations/video transcripts/field notes, and (c) surveys/questionnaires. A fourth chi-square test of independence was run to determine whether there was a relationship between data sources and the journal. This was also statistically significant, χ²(56, N = 1,678) = 630.29,

Frequency of Data Sources by Journal.

Thus, the topics, theoretical perspectives, research designs, and data sources in the nine literacy journals all differ significantly, with moderate (

Discussion

This study provides a content analysis of nine peer-reviewed journals, six of them representing literacy organizations: ALER (

Overall, the topics appearing most frequently in these journals include Comprehension (20% of articles), Bilingual/ELLs (19%), Phonemic/Phonological Awareness (14%), Instruction (14%), Writing (13%), Struggling Readers (13%), Vocabulary (12%), and Spelling (11%). Articles could receive up to three topic codes, so these percentages are not mutually exclusive. In addition, the chi-square tests of independence demonstrated significant differences in the topics these journals are publishing. In short, these journals are publishing articles on different topics at varying frequencies.

The frequency of topics published in different journals evidences the finding described above. Although

Because the current content analysis took a different approach from previous content analyses (we examined multiple journals for 6 years; previous analyses typically examined only one or just a few journals for many years), it is difficult to draw comparisons and impossible, with our data, to trace trends over time. However, previous content analyses found, as we did, that

Using this content analysis as a snapshot of the current “paradigm,” it appears that there have been three shifts (i.e., topics of study that are currently receiving much attention that previously were not). First, there appeared to be increased attention paid to special populations:

This content analysis found that 937 of the 1,238 (76%) articles did not explicitly name a theoretical perspective. This high percentage is noteworthy as literacy researchers explain that theoretical perspectives strengthen research because they provide a broader perspective, give an external source for anchoring data analysis, and help build theories of human and social behavior (Dressman & McCarthey, 2011; Unrau & Alvermann, 2013). However, theoretical perspectives are most commonly associated with qualitative research (Dressman & McCarthey, 2011; Unrau & Alvermann, 2013), which was less common than quantitative research in the journals we analyzed. Also, we recognize that theoretical perspectives are often not explicitly stated. That is, theoretical perspectives are frequently implied by the problem addressed, the research questions asked, the literature cited, the data collected, the analysis used, and the conclusions drawn. Nonetheless, our research team applied an explicit operationalization to document this often-implicit aspect of research. It is important, then, to recognize that the theoretical perspective code “Not Specified” in this study does not mean nonexistent. Rather, it means that the operational definition of theoretical perspective we used excluded implied theoretical perspectives.

The implied nature of theoretical perspectives aligns with the history of science perspective used in this study. Kuhn (1962) and Fleck (1979) demonstrated that thought collectives are composed of paradigms; that is, they share common understandings and assumptions. From this perspective, it might be unnecessary for scholars to explicate the theoretical perspective they are working from because it is shared among the thought collective. Fleck also explained that fields of study could have multiple thought collectives. Different thought collectives might align with particular questions. For example, a researcher studying digital literacies may not need to explicitly state she is coming from a New Literacies perspective. A researcher studying the opportunities afforded minority female students compared with majority male students might not need to explicitly state that he is using a critical perspective.

Across the nine journals in this content analysis, the most frequently used research designs included Quantitative (42% of articles), Experimental/Quasi-Experimental (16%), Non-Empirical (7%), Mixed Methods (6%), Qualitative (6%), and Statistical Modeling (6%). In addition, the chi-square tests of independence demonstrated significant differences in the research designs used in the research these nine journals are publishing. As literacy researchers might expect, particular journals tended toward particular research designs (Duke & Mallette, 2001).

According to this analysis, literacy researchers most frequently use student assessments as a data source (

Although there appears to be an “ecological balance” (Duke & Mallette, 2001) of methods used in research published in some journals, the field still might be “fractious” (Stanovich, 1998) in that some journals only publish research that comes from a particular paradigm. Viewed more positively, these patterns in different journals from different organizations publishing research from different epistemological perspectives using different methodologies could represent “thought collectives” (Fleck, 1979). That is, researchers affiliate with, collaborate with, and publish for colleagues who share the same epistemologies and areas of expertise. Different thought collectives could be positive for the field. Fleck explained that thinking is a social activity, so the exchange of ideas across thought collectives can spur progress as paradigms are questioned and anomalies arise. Such intellectual and research activity might compel thought collectives to merge as paradigm shifts take place and knowledge is built. Does the current study expose a fractious field or multiple thought collectives? Are readers of

Previous content analyses found shifts from quantitative methods to qualitative methods (Baldwin et al., 1992; Dunston et al., 1998; Guzzetti et al., 1999; Morrison et al., 2011). Our study sought a more nuanced view of designs than just Quantitative, Qualitative, and Mixed Methods, which was typical in previous content analyses of literacy research. Therefore, when we classify our data as Quantitative, Qualitative, and Mixed, there are some gray areas because certain designs (e.g., Formative and Content Analysis) use all three types at various times. However, a rough breakdown of the empirical articles in the nine journals in our study is 75% quantitative, 18% qualitative, and 7% mixed methods.

Certain journals have shifted toward more qualitative research. For example,

Limitations

One limitation is the selection of journals analyzed in this content analysis. We attempted to be objective and systematic in selecting the journals. However, we recognize that there are other journals that are of high quality and publish influential literacy research and ideas. Regardless, the journals included in this content analysis, although certainly not inclusive of all literacy journals, are undoubtedly well known and impactful. Another limitation concerns the rules used to identify research designs and theoretical perspectives: the authors had to explicitly state the design or theoretical perspective. We recognize that research designs and theoretical perspectives are not always explicitly stated and are often described only implicitly. Nonetheless, we erred on the side of objectivity, setting up rules and procedures that could be systematically followed, in the traditions of content analysis (Holsti, 1969; Krippendorff, 2004).

Conclusion

This content analysis enabled us to see the current paradigms (Kuhn, 1962) and thought collectives (Fleck, 1979) in the field of literacy research. As literacy researchers, we are encouraged by the wide range of topics being studied through a multitude of research designs. We urge the literacy research community to continue to demand rigorous research, but to do so in a way that appreciates the power in viewing and studying teaching and learning from diverse perspectives, using diverse methods, and with recognition that a foundational aspect of rigorous research is the match between research questions asked and research methods used (Duke & Mallette, 2011; Kamil, Afflerbach, et al., 2011). Engaging in scholarly dialog across thought collectives will help us identify anomalies in our current understanding, which will advance us as a field by compelling additional research and subsequent paradigm shifts to help us move toward advanced understandings of literacy processes, literacy teaching, and literacy learning (Fleck, 1979; Kuhn, 1962).

Therefore, we encourage literacy researchers to engage in scholarly work in new thought collectives. Attend conferences that present epistemologies different from your own—and participate in the conversation, asking pressing questions and thoughtfully considering their responses. As a field, we must engage in this sort of cross-thought collective collaboration, or we will not move toward “ecological balance” (Duke & Mallette, 2001). We hope this content analysis will contribute to the field by demonstrating what topics are currently being studied (and which topics are not). We also hope to encourage conversation and action regarding research designs where literacy researchers are posing the most important questions of the day and studying them using rigorous and appropriate research designs that improve our work in helping all people live literate lives.

Footnotes

Authors’ Note

The George Mason University Content Analysis Team includes Jan Ainger, Mary Carmen Bartolini, Alicia K. Bruyning, Ellen Clark, Sarah Crain, Nisreen Daoud, Karen Sutter Doheney, Stacey Duff, Susan V. Groundwater, Jacqueline Heller, Amber Jensen, Lesley A. King, Jennifer Lindenauer, Joanna Newton, Allison Ward Parsons, Erin M. Ramirez, Jayne Sherman, and Peet Smith.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.