Abstract

This manuscript reports obstacles, and instructional responses to them, that emerged in two middle school science classes during a formative experiment investigating Internet Reciprocal Teaching (IRT), an instructional intervention aimed at increasing students' digital literacy on the Internet. Analysis of qualitative data revealed that IRT enabled students to explain and demonstrate appropriate strategies for locating and evaluating information on the Internet when asked to do so. However, students did not use these strategies, or quickly abandoned them, when working independently or in small groups during inquiry projects. Data revealed three obstacles that inhibited efforts to promote consistent, independent use of strategies: the teacher's role in student inquiry, the structure of inquiry projects, and students' previous strategies. Results suggest notable challenges to implementing instruction that inculcates in middle school students the dispositions needed for consistent, independent use of appropriate strategies for locating and evaluating information on the Internet. Implications for practitioners, policy makers, and researchers are discussed.


Leading professional organizations have called for increased integration of digital literacy into the school curriculum, including the ability to find and evaluate information on the Internet (International Reading Association, 2009; National Council of Teachers of English, www.ncte.org; Partnership for 21st Century Skills, 2008). A frequently cited reason for increasing integration is that individuals who are able to efficiently access useful and reliable information and communicate that information effectively will be the most successful in an increasingly global economy that requires high levels of digital literacy (e.g., Leu & Kinzer, 2000). Appropriate skills, strategies, and dispositions associated with digital literacy are also likely to be increasingly important in developing informed citizens who can critically evaluate information and ideas. In addition, there is theoretical and some empirical support for the position that reading on the Internet requires comprehension skills and strategies beyond those necessary to understand conventional printed texts (e.g., Leu et al., 2005; McEneaney, 2006; Reinking, 2010).

Middle school is a logical focal point for increasing integration of digital literacy into the curriculum. Middle school students are expected to read independently and to find relevant textual information in specific subject areas, and middle school is often where students acquire the foundation for future academic success in increasingly specialized subjects. However, teachers are unlikely to completely rework conventional content, activities, and approaches based on printed materials in order to integrate digital literacy into their teaching. Furthermore, there is evidence that teachers struggle to integrate Internet technologies successfully into their classrooms (Groff & Mouza, 2008) and face a wide range of obstacles to integrating digital literacy into their teaching (Hutchison & Reinking, 2010, 2011).

The present study extends previous investigations of how development of digital literacy can be realistically integrated into middle school instruction. Like that work, it focuses on Internet Reciprocal Teaching (IRT), an instructional framework that has shown promise toward achieving that goal (Leu et al., 2005, 2007, 2008). Using formative experiments (Reinking & Bradley, 2008) as our methodological approach, we investigated what factors enhance or inhibit its effectiveness, efficiency, and appeal, and how IRT can be adapted in light of those factors. Although our focus is on IRT, we aim to develop general pedagogical understandings that may inform other efforts to develop digital literacy among middle school students.

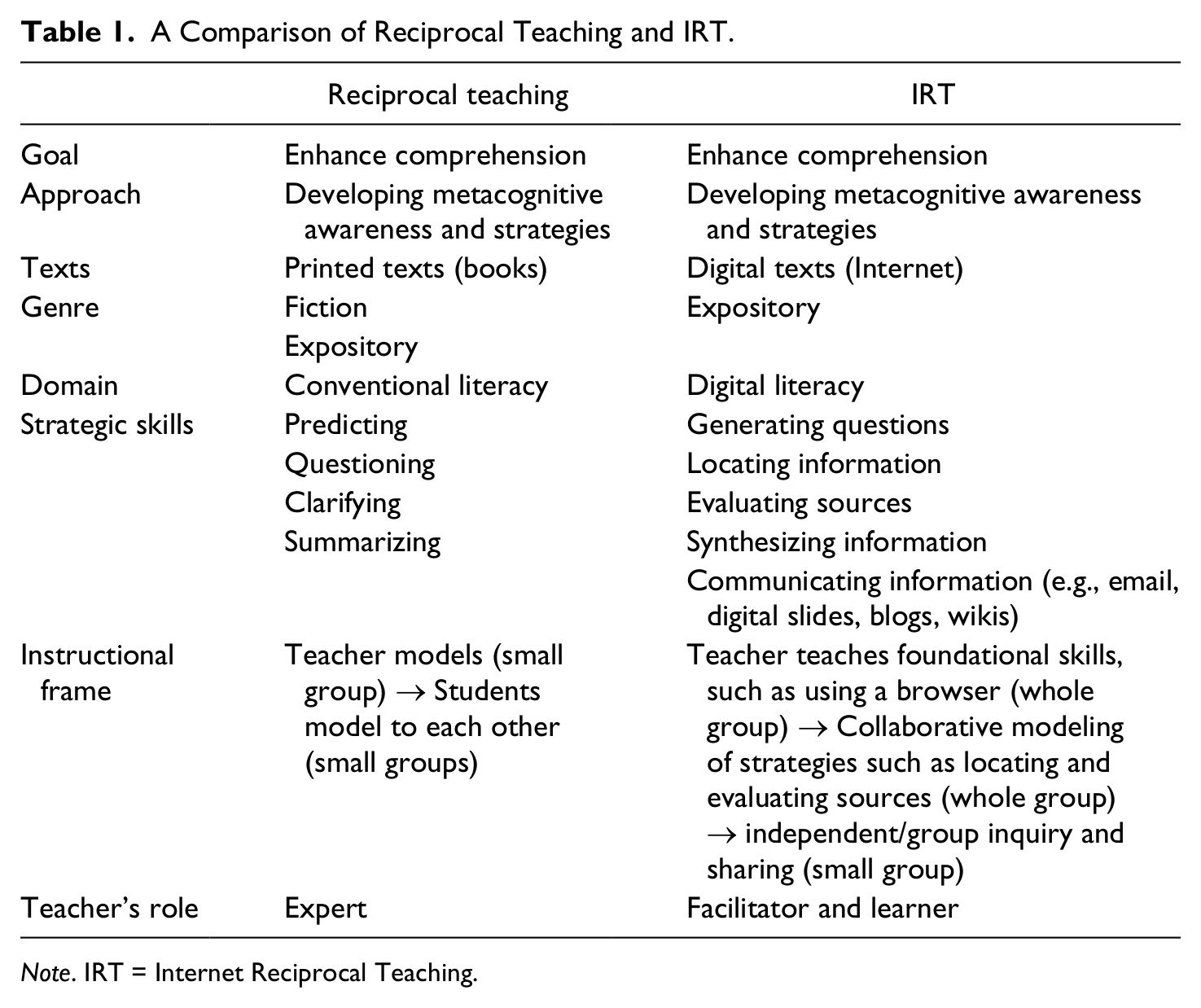

IRT is a variation of Reciprocal Teaching, a longstanding, well-researched, and widely used instructional activity in language arts classrooms. Reciprocal Teaching aims to reveal and to develop orientations and strategies that successful readers use to comprehend conventional printed texts (Palincsar, 1986; Palincsar, Anderson, & David, 1993; Palincsar & Brown, 1985, 1989). Similarly, IRT is an instructional activity that promotes modeling and discussion of effective strategies, although the teacher acts less as an expert than as a facilitator and fellow learner. Table 1 compares IRT and Reciprocal Teaching across several dimensions, with the IRT column comprising the essential elements of the intervention under study. Essential elements of an intervention are those not subject to modification without undermining the integrity of the intervention or creating a new intervention (Colwell & Reinking, in press).

A Comparison of Reciprocal Teaching and IRT.

Note. IRT = Internet Reciprocal Teaching.

Previous work has investigated how IRT might develop digital literacy in middle school language arts classrooms (Leu et al., 2005, 2007, 2008). That research has revealed that conditions inherent to language arts classrooms often limited options for increasing the effectiveness of IRT. For example, language arts instruction in middle schools is often centered on whole-group instruction. Thus, the small-group work that benefits Reciprocal Teaching and IRT may require language arts teachers to adopt instructional approaches that they use less often or that are less consistent with their usual practice.

Middle school language arts instruction is also frequently driven by curricular standards that address digital literacy minimally, if at all (Leu, Kinzer, Coiro, & Cammack, 2004); thus, integrating digital literacy may require additional preparation and instructional time, limiting flexibility in implementing IRT. In addition, previous research on IRT was conducted in challenging contexts, for example, schools that served transient populations with high dropout rates and low reading achievement (Leu et al., 2008). Much of what was learned focused on adapting IRT to contexts that are distinctly unfavorable to its success. Thus, the present study aimed to investigate implementing IRT under more favorable conditions. Taking that approach allowed us to determine more clearly how IRT might be implemented in more typical classrooms and which aspects of implementation might depend more on context or might lead to more generalizable pedagogical understandings.

Accordingly, in this study, we investigated the use of IRT in two science classes in an academic environment with fewer challenges to academic success and in a subject area that may be more conducive to IRT. For example, the inquiry approaches typically used in teaching science often include small-group work (Palincsar et al., 1993). In addition, science teachers are often urged to incorporate inquiry approaches to science learning (see Liftig, 2011). Inquiry approaches in science instruction are often scaffolded, moving students through the following sequence: (a) confirmation inquiry (e.g., defined procedures to confirm a previously introduced idea), (b) structured inquiry (e.g., questions with procedures to explore them and drawing conclusions), (c) guided inquiry (e.g., investigating a teacher-prompted research question using self-selected procedures), and (d) open inquiry (e.g., students design and conduct their own investigations; Banchi & Bell, 2008). Science classrooms that use inquiry approaches typically require students to develop further questions based on knowledge gained from initial questions and evidence (Bohanan, Broderick, Lehrer, & Lucas, 2005), which aligns with the purposes of IRT to generate appropriate questions and to evaluate sources.

The integration of technology into teaching science has also been viewed as ahead of integration in other subject areas (Linn & Davis, 2004; Mahari, 2011). Furthermore, because IRT involves seeking information online to address specific questions, it might fit well with the content, structure, and organization of science classrooms, especially those using inquiry approaches. However, we assumed that integrating digital literacy in general and IRT in particular into existing instruction might still be challenging. To investigate the extent of that challenge and to extend previous work through replication, we conducted a formative experiment in two seventh-grade science classes.

A formative experiment develops an intervention that addresses a specific pedagogical goal in an authentic instructional context. In the present formative experiment, our pedagogical goal was as follows: in two seventh-grade science classes, to increase students' online research skills, strategies, and dispositions, specifically those involved in generating researchable questions, locating relevant information, determining credible sources, synthesizing information, and communicating the results of inquiry.

Data collection and analysis were guided by the following questions proposed by Reinking and Bradley (2008) as a general framework for conducting formative experiments: (a) What factors enhance or inhibit the intervention’s effectiveness in achieving the pedagogical goal? (b) How can the intervention be modified in light of these factors? (c) What noteworthy unanticipated outcomes does the intervention produce? (d) Is there any evidence that the intervention transforms the instructional environment? (e) What pedagogical theories are supported or generated?

In this report, we address Questions a, b, and e, concentrating on obstacles we discovered when implementing IRT in a context that was conducive to success. We focus on obstacles because they were a dominant issue in this investigation and because previous studies suggest that challenges to integrating digital literacy into conventional instruction have inhibited its wider adoption. For example, based on a national survey in the United States, Hutchison and Reinking (2010) concluded that "Researchers need to focus attention on identifying factors that are barriers to integrating ICTs into literacy instruction and to explore possible interventions that take into account those barriers" (p. 240). The present investigation addressed their recommendation.

Pedagogical Theories, Perspectives, and Assumptions

A formative experiment is conceptually grounded in a rationale for the value of its guiding pedagogical goal and for the proposed instructional intervention as a means to address that goal (Reinking & Bradley, 2008). In this section, we augment the rationale offered thus far with specific pedagogical theories, perspectives, and assumptions that justify the goal and support IRT as a potentially useful intervention in middle school science instruction.

First, the rationale for the goal and the intervention investigated in this study is grounded in what is often referred to as a new literacies theory or perspective (Coiro, Knobel, Lankshear, & Leu, 2008; Gee, 2003; Kress, 2000; Leu et al., 2004) and in how the rapid evolution of technology is affecting literacy practices (Lankshear & Knobel, 2003), particularly practices of searching for and evaluating online information (Coiro & Dobler, 2007). That perspective has been instantiated specifically in relation to reading comprehension on the Internet in academic contexts as an exercise in problem-based inquiry involving skills, strategies, and dispositions that fall within the following taxonomy: (a) identifying relevant questions that can be reasonably researched, (b) locating information, (c) critically evaluating information, (d) synthesizing information, and (e) communicating information (Castek et al., 2007; Coiro, 2003; Henry, 2006; Leu et al., 2004). The taxonomy has been supported through empirical research using verbal protocols to reveal students' activities and strategies while searching for academic information on the Internet (Leu et al., 2007; Reinking, 2007; Reinking & McVerry, 2008). Professional organizations promoting literacy have identified skills, strategies, and dispositions associated with this taxonomy as important components of literacy in a global information age (International Reading Association, 2009; Partnership for 21st Century Skills, 2008). The present study's goal and intervention aimed to extend understanding of how these perspectives and goals might be instantiated in an authentic instructional context.

Nonetheless, research suggests that there is a broad array of obstacles that may hinder the integration of digital technologies into instruction (Hutchison & Reinking, 2010, 2011). These include categories such as the availability of technical resources and support, appropriate professional development, time to plan and develop lessons and activities, useful teaching frameworks, and teachers' beliefs and perceptions about, for example, the importance and role of digital technologies in school, their own technological capabilities, and what integration entails in relation to the content they teach and how they approach teaching it. The present study investigated the extent to which such obstacles, including those we have identified in earlier work, might be mitigated in a context and in a subject area more conducive to IRT.

Science instruction entails several characteristics that complement IRT's aims pertaining to digital literacy on the Internet: (a) researching questions, (b) giving priority to evidence when responding to those questions, (c) formulating explanations from evidence, and (d) connecting explanations to scientific knowledge (National Research Council, 1996). Furthermore, since 1993, efforts to reform science education have encouraged teachers to implement inquiry-based learning in science classrooms (American Association for the Advancement of Science, 1993). Research supports using scientific inquiry to increase student achievement (Chang & Mao, 1999; Endler & Bond, 2008; Stohr-Hunt, 1996). In this study, our definition of inquiry instruction in science followed Olson and Loucks-Horsley (2000), who argued that science instruction grounded in inquiry must (a) engage learners in scientifically oriented questions, (b) prioritize evidence, (c) develop explanations, (d) evaluate explanations in light of alternatives, and (e) communicate and justify proposed explanations. Because these characteristics align with reading comprehension on the Internet, integrating IRT with scientific inquiry may simultaneously develop online literacy and further inquiry in science teaching, which may appeal to science teachers.

Method

Formative experiments are less established than other approaches to literacy research that are framed as scientific experiments or qualitative investigations. Nonetheless, this methodological approach has a strong footing in the field. Interventions and goals studied using this approach include using self-selected readings to increase engagement of second-language learners (Ivey & Broaddus, 2007), cognitive strategy lessons to increase literacy among Latina/o middle school students (Jiménez, 1997), flooding child care centers with books to enhance young children’s literacy development (Neuman, 1999), and engaging students in multimedia book reviews to increase upper-elementary students’ independence in reading (Reinking & Watkins, 2000). The methodological frame for the present study aligns closely with two of these studies (Ivey & Broaddus, 2007; Reinking & Watkins, 2000). A main purpose of this approach is to refine and develop pedagogical theories and principles in the crucible of authentic practice, revealing factors that enhance or inhibit a promising intervention’s success in accomplishing a valued pedagogical goal, thus suggesting useful adaptations to increase effectiveness, appeal, efficiency, and workability. Thus, it more directly informs practitioners and others close to instructional practice (Bradley & Reinking, 2011), generating useful pedagogical principles and theories that Cobb, Confrey, diSessa, Lehrer, and Schauble (2003) refer to as humble or local.

In a formative experiment, instructional difficulties, obstacles, and even failures are viewed as useful data that can inform instruction and help build pedagogical understanding. Furthermore, this approach acknowledges that even promising interventions that might prove effective in some contexts may not be as effective in other contexts, and it seeks to examine why. Engineering, ecology, and evolution are metaphors underlying this approach (Reinking, 2011). For example, the engineering metaphor suggests that determining thresholds of failure is a legitimate goal of education research and one that can help close the gap between research and practice (Walker, 2006). Furthermore, the aim is not to conduct research that leads to prescriptions, but to identify relevant factors, including obstacles, that inform how instruction can better achieve valued goals. The overall aim is to reduce ignorance rather than find truth (Wagner, 1993) and to make informed recommendations, not inflexible prescriptions.

Participants and Context

Participants were 48 seventh-grade students in two sections of a required science class at Langley Middle School (all names are pseudonyms), a coeducational charter school with single-gender classrooms located in the Southeastern United States. All participants were female and, with the exception of one African American student, Caucasian. Langley's focus as a charter school was on service learning and family involvement. For example, students were required to participate in community service for at least 12 hr each quarter. Family involvement was evident in parents' frequent presence at the school, for example, picking up and serving hot lunches, serving as volunteers for service learning opportunities, and leading fund-raising. Langley had little teacher turnover, and its student population was stable from year to year. It attracted students who were average to above average academically, with 35% of the students categorized as gifted and talented. The school did not accept students who qualified for special education.

Ms. Rich, the teacher, had taught high school and middle school science for 16 years. She regularly conducted teacher workshops for the local school district on integrating inquiry projects into science instruction. We recruited her to participate in the present study through her involvement with a content-area literacy initiative at a nearby university. In that initiative, Ms. Rich was considered to be a teacher who was open to new instructional approaches and who commonly incorporated inquiry into instruction.

Ms. Rich had not regularly used computers or the Internet in her instruction, but she was receptive to and enthusiastic about integrating laptops and computer-based activities into her teaching as a means of helping her students acquire digital literacy on the Internet. In a semistructured interview with Ms. Rich in an early phase of this investigation, we noted that she was open to and enjoyed experimenting with new teaching methods and activities with her students. During that interview, we also noted that Ms. Rich's stated understanding of inquiry aligned with current views in science education.

Prior to implementing IRT, we collected observational data to better understand the environment of the school and specifically to observe the two sections of the class in which we would be working. The intent of these observations was to create a thick description of the classroom setting (Patton, 2002), which is an important phase in conducting a formative experiment (Reinking & Bradley, 2008). The students' classroom and learning routines were well established when we gathered these data. In her classroom, Ms. Rich displayed completed student projects and experiments along with aquariums, models of insects, scientific charts and tables, and lab safety rules posted on the walls. At the same time, the classroom projected a relaxed and inviting environment that complemented her informal demeanor. For example, one corner of the classroom had a couch and an ottoman where students could lounge while discussing a group project. Students chose their own seats each day after placing their book bags in the center of the room, and they moved freely around the room as they worked on projects.

To further understand students’ experiences and capabilities in relation to the goals of IRT, we asked them to complete an online survey about their knowledge and use of the Internet before the intervention was introduced. That survey, requiring approximately 40 min, was validated and used in prior research with middle-grade students (Carter-Hutchison, 2009). On the survey, respondents indicated how often they used the Internet in various contexts and for what purposes. The full survey can be viewed at http://www.surveymonkey.com/s/7KDRBK7.

The survey indicated that all students used the Internet outside of school, with approximately one third reporting use several times a week, daily, or several times a day. Almost all participants indicated that they used search engines outside of school, but half reported never using a search engine in school. Furthermore, one third of participants indicated that they used the Internet in school less than once a week, and the remainder responded that they never used the Internet at school. Students' responses were consistent with our observations that, although the latest technologies were available at Langley, they were not used frequently or integrated regularly into instruction. Each classroom had an interactive whiteboard, and teachers could reserve a cart of wireless laptops connected to the Internet, although we noted that the cart was rarely reserved except for our project. Taken together, our systematic observations and survey data were consistent with our intent to investigate an environment conducive to IRT's success, but one in which success was by no means assured.

Implementing the Intervention

Prior to the implementation phase, we discussed with Ms. Rich how IRT might be integrated into her teaching. Working from a matrix of state standards Ms. Rich used to plan instruction, we selected three consecutive inquiry-based units that might fit well with IRT: elements from the periodic table, ecosystems, and genetics. For each unit and its associated inquiry project, we studied Ms. Rich’s planned activities to determine how IRT could fit into the unit. We then created a proposal for integrating IRT with her plans. After consulting with her, we made relatively minor revisions.

Because Ms. Rich was unaccustomed to using laptops in her instruction, we agreed to assist her once a week during two of her classes on a day designated for students to use wireless laptops as a source for completing their inquiry projects. Ms. Rich also agreed to assist in collecting data by regularly completing forms designed to record observations. These forms supplemented our data, especially when we were not present in the classroom. They were also useful in the regular weekly debriefings with Ms. Rich about our observations, in sharing our thoughts about what was or was not working and why, and in discussing what adaptations to IRT might be necessary or helpful.

During the intervention phase of this investigation, IRT was implemented in two of Ms. Rich's general science classes, once a week for 16 consecutive weeks beginning in January. During the first 8 weeks, we assumed the role of participant observers (Patton, 2002). During that period, Ms. Rich requested that we lead 20-min, whole-group introductory lessons, because she was interested in observing us and learning more about Internet reading comprehension so that she could incorporate similar lessons into her other classes. This approach created an environment where students treated us as assistant teachers and resources to Ms. Rich. However, Ms. Rich was clearly in charge and, as will be noted in the "Results" section, throughout the investigation, students turned primarily to her for guidance in completing tasks associated with inquiry projects.

Previous research suggested that IRT was more effective when implemented in stages that began with whole-group modeling and discussion of foundational skills and strategies followed by small-group or independent work (Leu et al., 2005, 2007, 2008). Thus, at the outset we conducted several preliminary lessons that introduced foundational skills and strategies to the entire class. For example, in one lesson we introduced the use of Boolean operators to make searches more efficient (i.e., connecting search terms with AND or OR). In another lesson, we called attention to general markers of reliability such as distinctions between URLs ending in .com, .org, and .gov, and in another, we introduced strategies for skimming websites for evidence of bias. These lessons consisted of introducing the topic or skill, modeling it on the interactive whiteboard, and then involving students in practicing the strategy or skill with a partner, followed by having a few partners demonstrate their work to the class.

During these lessons, we collected data as participant observers (Patton, 2002). If our data, which we discussed each day after working in the two classes, indicated that students were having difficulty understanding and applying these strategies, we revised the lessons accordingly after consulting with Ms. Rich. During the final 8 weeks of the intervention phase, these introductory lessons were discontinued, although students were frequently reminded of the strategies that had been introduced and practiced previously as they continued to work on projects using the Internet. We continued to collect data as participant observers throughout this final 8-week period, during which all class instruction focused on science content, with most of the class period devoted to students working on assigned inquiry projects.

Data Collection and Analysis

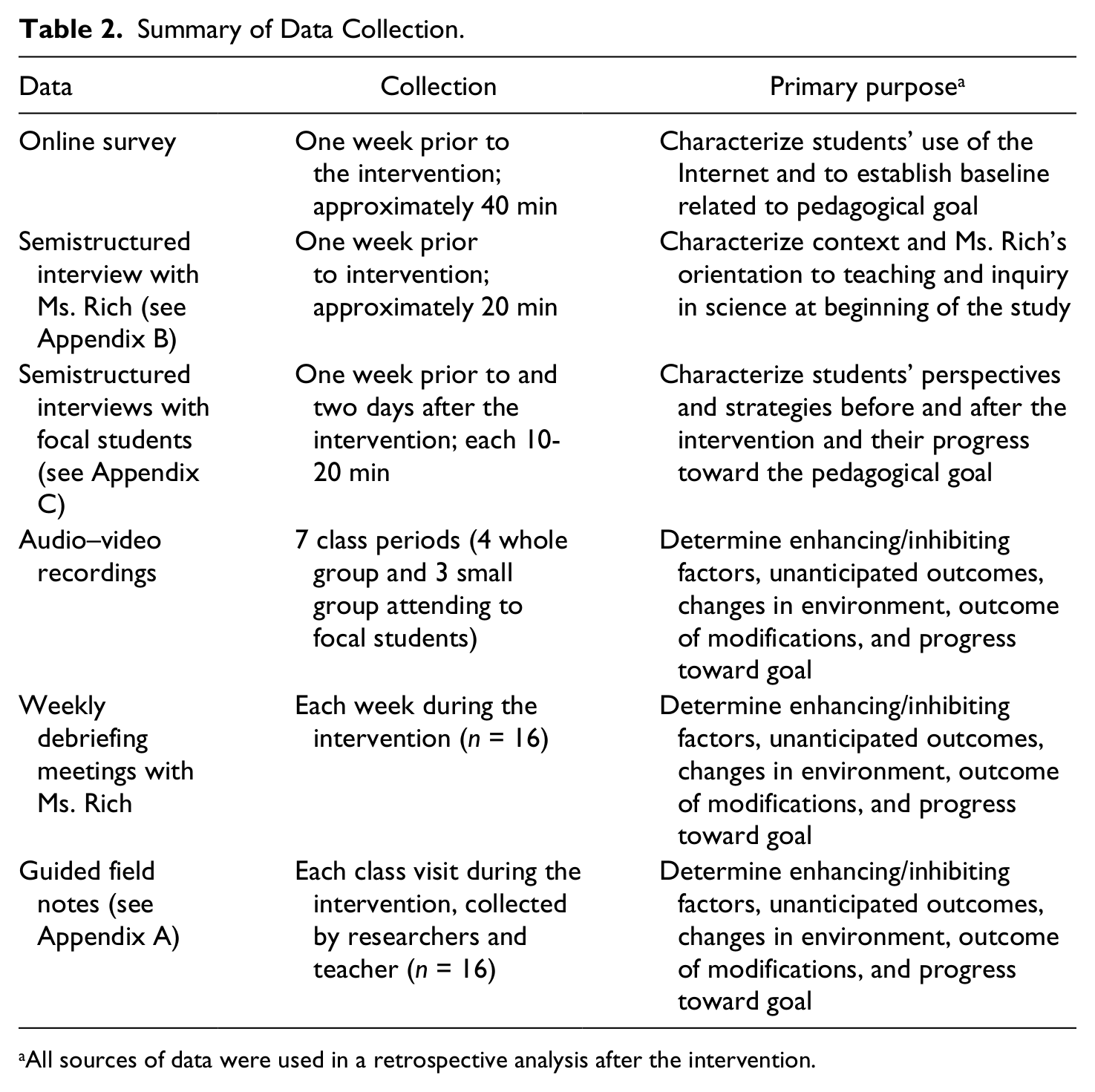

Data included field notes guided by questions and prompts addressing skills, strategies, and dispositions targeted by IRT (see Appendix A); participant observations (Patton, 2002); video and audio recordings of whole-class and small-group activities; and semistructured interviews with Ms. Rich and with focal students (see Appendices B and C). A summary of the data collected and analyzed, and of how those data informed the purposes of this investigation, is provided in Table 2.

Summary of Data Collection.

All sources of data were used in a retrospective analysis after the intervention.

Six focal students were selected in each class based on students' responses to the survey indicating how often they used the Internet. Two focal students represented each of the following levels: high, medium, and low use of the Internet. Although we collected data when observing the entire class, we more frequently observed focal students. We attended specifically to their participation during Ms. Rich's lessons and activities during the intervention, the artifacts they produced during these lessons and activities, and their responses to the intervention during interviews. Selecting focal students is a common methodological approach in formative experiments (Reinking & Bradley, 2008).

Consistent with previous formative experiments, we used the analyses of field notes and videos from classroom observations of students participating in activities during the intervention to inform iterative modifications of the intervention during the intervention phase and to inform a retrospective analysis after the intervention phase (see Gravemeijer & Cobb, 2006; Reinking & Bradley, 2008). The day-to-day observations and analyses of classroom activities often led to relatively minor adjustments to how IRT was implemented. Toward that end, we met after each class period to compare and consolidate field notes and to discuss how they might address the questions guiding this study and how they might inform needed or useful modifications. Furthermore, in the present study, the inclusion of three sequential units provided two intermediary points at which we could analyze data more holistically and use our findings to make more substantive adjustments to IRT. In addition to these ongoing modifications, our observations, field notes, and interview data informed what Gravemeijer and Cobb (2006) called retrospective analysis, which is a holistic analysis conducted after all data have been collected.

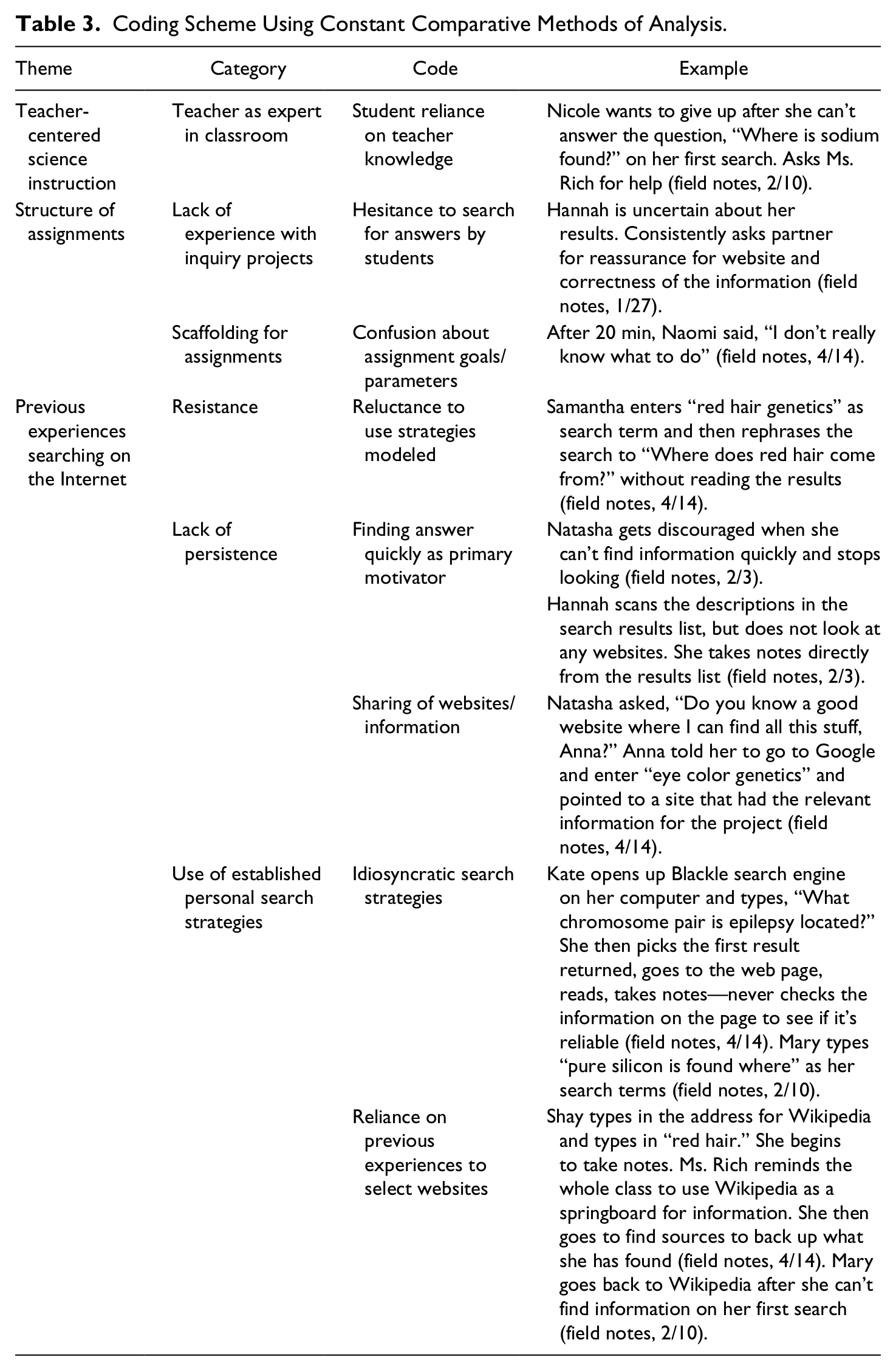

Beginning at the outset of the intervention phase and continuing through retrospective analyses, we used constant comparison (Corbin & Strauss, 2008) to analyze the data. Specifically, we assigned codes to the initial data, with some data being assigned more than one code. After assigning initial codes, we recoded the data and made connections among related concepts and categories (Corbin & Strauss, 2008) to determine themes. Table 3 illustrates themes, categories, codes, and excerpts from our data that are representative of our coding.

Coding Scheme Using Constant Comparative Methods of Analysis.

To achieve triangulation (Creswell, 2002), which is a criterion for rigor in formative experiments (Reinking & Bradley, 2008), we considered and compared data from video recordings of selected lessons and from semistructured interviews and regular weekly debriefings with Ms. Rich to ascertain codes and themes. To increase validity, member checks (Creswell, 2002) were also conducted with Ms. Rich during these debriefings and with focal students after each semistructured interview.

Results

Several key obstacles emerged as we implemented IRT. In this section, we report those obstacles, provide examples from our data, explain the adjustments to instruction in light of those obstacles, and note outcomes.

Teacher-Centered Science Learning

An interview with Ms. Rich prior to implementing IRT indicated her commitment to student-centered learning. She stated that her students were responsible for guiding their own learning through set learning matrices from state standards and that she was invested in providing students with "hands-on activities to provide inquiry-based learning." Indeed, she had taught workshops to other teachers about inquiry methods.

However, our subsequent observations in her classroom provided evidence that contradicted her stated dedication to inquiry methods. Observing in her classes prior to introducing IRT, we noted that completion of the inquiry-based learning projects depended substantially on Ms. Rich's knowledge and guidance. Little student-driven inquiry was evident. Although students completed hands-on projects, they were not necessarily developing their own scientifically oriented questions, prioritizing evidence, or developing their own explanations. Rather, these activities aligned more with definitions of confirmation inquiry (Banchi & Bell, 2008), in which students conduct investigations to reinforce a previously introduced idea. Consequently, before IRT was introduced into these classrooms, students apparently had had little experience searching for information independently. Instead, students typically turned to Ms. Rich when they needed information or guidance in completing their projects or when they were experiencing difficulty completing the matrices. There was no clear imperative for students to locate, evaluate, and synthesize information independent of her assistance, either before or after IRT was introduced. Thus, we realized that the definition of inquiry Ms. Rich stated in her interviews, which aligned closely with Banchi and Bell's (2008) description of guided inquiry, differed from her instructional methods. In interviews, Ms. Rich suggested that her instruction was grounded in guided inquiry, in which students determine their own methods for researching teacher-provided questions, but the instruction we observed in her classroom more closely represented forms of confirmation inquiry.

Both forms of inquiry are legitimate, and confirmation inquiry indeed seemed more suitable for Ms. Rich’s learning objectives in her science classroom, but this circumstance became an obstacle to accomplishing the specific goal of this IRT study when we observed students locating information on the Internet. Throughout the intervention phase, Ms. Rich required students to locate information on the Internet independently, and, to allow flexibility, she did not specify a number or type of online sources for students to locate during any of the inquiry projects; however, students continued to expect Ms. Rich to provide the necessary information and sources to complete projects. Thus, there was a disjuncture between Ms. Rich’s role in the classroom and the aim of IRT to develop students’ independent use of appropriate and effective strategies for finding reliable and useful information on the Internet. Our observational data repeatedly revealed how her stance interfered with accomplishing IRT’s goal. For example, students tended to become frustrated and give up quickly when the first website they visited did not provide needed information, and they turned quickly to Ms. Rich for help. Caroline, a focal student, stated in an interview:

When I can’t find something right away it’s sort of hard because the first websites that pop up sometimes aren’t sites I’d want to go to because the website looks weird, but when I’m in class I can ask [Ms. Rich] for help and it makes it a lot easier . . . Ms. Rich’s pretty cool about [when we can’t find information]. You know, she’s pretty lenient . . . she doesn’t want us to spend our hard earned time doing all this stuff and not having any results.

However, we observed that students were frustrated and often seemed unmotivated to continue searching for information on the Internet because they were unable to locate reliable information quickly, even after we and Ms. Rich offered students suggestions and reminded them of strategies that had been discussed and demonstrated in the introductory lessons. Rather than searching widely for sites that might help answer their research questions, some students would stop looking after finding one or two sites, claiming that there was no further information on their topic. Ms. Rich also often gave students permission to stop looking or simply provided answers to their questions, which was consistent with her role during projects prior to the introduction of IRT. Ms. Rich’s offerings of information or permission to stop searching were well meaning and understandable; for example, she seemed focused on keeping students engaged with content. However, a byproduct of her stance was that students, in effect, had little need or motivation to invest in acquiring skills and strategies associated with locating and evaluating information on the Internet, and little opportunity to apply them authentically in completing their science projects.

Indeed, we observed no genuine commitment to using efficient means to locate information or to exerting the effort needed to ensure its reliability, despite the repeated emphasis on and discussion of appropriate strategies. When we explicitly asked focal students to tell us what they were doing to evaluate a website, they were able to specify the presented strategies, indicating they were aware of them. However, observations showed that students did not consistently use these strategies in their independent searches of the Internet, opting instead to use information that was easily located without concern for its reliability. As Josie told us, “I think it’s [a strategy for evaluating information on a site] helpful if the first websites that pop up are credible.” Completing the assignment was more important than critically evaluating the information or devoting much time and effort to finding useful and valid information. Ms. Rich’s role as expert and her willingness to be an authoritative source of information seemed to sustain that perception.

Students’ reluctance to critically evaluate information, particularly by comparing and contrasting information at multiple sites, was also reinforced by a perception that the Internet’s value lay in quick and immediate access to appropriate information. We also came to realize that our presence as participant observers was a factor that exacerbated students’ dependence on quick sources of information. For example, our data included student comments such as the following: “It’s a lot easier because if you don’t understand, you can ask a teacher or one of you guys to help, so that way you aren’t just sitting there trying to figure it out.” Consequently, we redoubled our resolve to avoid providing answers and instead to scaffold strategies with strategic suggestions.

We also introduced more small-group work, reasoning that students might be more likely to use the strategies discussed if they could help and reinforce each other. In addition, during whole-group discussions, we emphasized the need to be persistent in using strategies for locating and evaluating websites. We moved immediately to working with partners or in small groups after these introductory, whole-group discussions. Consistent with the small-group interactions and modeling that previous work has shown to be a key component of IRT, students were encouraged to share their strategies and approaches for finding and evaluating information with each other. We hoped that work in small groups might reinforce persistence, carry over to individual work, and reduce the inclination to seek Ms. Rich’s, or our, assistance. We also discussed with Ms. Rich what we had observed and, with her agreement, we decided that it might be beneficial for her to shift the emphasis of the inquiry projects away from individual contributions and toward shared responsibilities in a group. We hoped that doing so would create an environment where students would rely on each other with less pressure to find sources quickly.

These modifications seemed somewhat successful for one or two class periods. However, use of practiced strategies diminished rapidly after being initially introduced, and students, even in small groups, quickly reverted to asking Ms. Rich for answers and help after only perfunctory searches for information. Furthermore, Ms. Rich was willing to assist them by providing answers or definitive, reliable sources, which might have been a natural response to knowing that her students would be held accountable for concepts and facts on high-stakes, standardized assessments. In addition, although group projects did increase student interactions about strategies, it was also clear that one student in a group often took responsibility for finding information with the group’s acquiescence, shouldering the pressure to find information quickly with little evaluation.

For example, Mary, Rita, and Shay were working as a group on a project about genetics. We observed Mary reading from her laptop while Shay took notes, and Rita worked on a design for a cover sheet. The following exchange occurred when Mary expressed doubt about a website:

I can’t find the author of this website, so I’m not sure that it’s an okay site. Should we use it?

I don’t know. That’s your job.

Well, it’s the only one with stuff I understand and we need to finish, so I’m using it.

Our field notes contained many such examples, illustrating the subtle factors that may inhibit the development of strategies for locating and evaluating information on the Internet.

Structure of Inquiry Projects

A related obstacle that emerged in our data was the structure of the inquiry projects. The projects did not consistently support our definition of inquiry in science, because they required finding specific information to meet Ms. Rich’s requirements. For example, the first project involved students independently researching an element of the periodic table that they selected based on the first letter of their last names (e.g., Smith/sulfur). Students were asked to use the Internet to complete questions and prompts about the element as the basis for developing a pamphlet featuring their element. However, the questions were framed to accommodate a single correct answer. Thus, the Internet became, in effect, an index for locating correct answers. Even then, students became frustrated because some technical information (e.g., the half-life of a man-made element) was difficult to find.

We also observed that students rarely clicked on a website’s links to other sources. They seemed to be searching for a single website with all of the information needed to answer the questions guiding the activity. For example, students would read the short descriptions of each site’s content found on the page showing the results of their search. Then, they would discuss the list of sites with one another to determine which site most likely had all of the information for the answers they were seeking. In one instance, Mary found a website that contained the answers to many of the project questions for her element and shared the site aloud. Within minutes, almost all students had navigated to that site, rather than engaging in persistent exploration across multiple sites to gather information and to evaluate credibility and utility. Not finding such a site, they would ask Ms. Rich for help, claiming that the sought-after information was not available.

We observed this pattern repeatedly throughout the first inquiry project on elements. Furthermore, students rarely moved beyond the first screen of results displayed after they entered a search term or phrase into a browser, and they were sometimes hesitant even to explore beyond the first site listed. For example, our field notes recorded that Stacy consistently asked a classmate sitting nearby whether she should click on links other than the first one listed. Further observations and interview data revealed that students were often looking for answers to the questions in the search results list itself, and they were assessing the usefulness of sites based on whether an answer to one or more of the project’s questions was addressed in the brief preview under each site listed on the first page. When we inquired about this reluctance to explore multiple sites displayed in the search results, a student, Kate, responded that “none of these sites look useful [because I don’t see an answer to one of the questions].”

Furthermore, students did not attend strategically to the organization of an accessed website, nor did they often follow associated links within a website, even if those links might be promising sources of information. Although the importance of checking the consistency of information across sources had been emphasized in the whole-group sessions, students rarely looked beyond one source to verify information. Also consistent with Ms. Rich’s stance, we observed her telling students that she had already found websites with pertinent, reliable information for their projects. Although her intentions were to be helpful and to reinforce the issue of reliability, her stance reduced opportunities for students to practice and perhaps internalize diligent and strategic searching for information in the less definitive landscape of information on the Internet.

To address these findings, we collaborated more closely with Ms. Rich to modify the inquiry project for the next unit, making suggestions about how it might foster more interdependent group work, more mutual learning, and less specificity in final products. Thus, the second project was more open-ended, allowing students to select and design an ecosystem based on information located on the Internet. Ms. Rich informed students that they could display their results in any manner, ranging from a written report to a diorama, as long as it was based on reliable information from the Internet. The only requirement was to follow the state standards provided to students at the start of the project.

Although this project offered greater freedom for inquiry and more naturally implied the use of multiple Internet sites to find information, students seemed ill prepared to conduct a project with so little structure and explicit direction. As in the first project, students often gave up when it was difficult to locate information. However, in the second project, this response seemed to be a result of students’ uncertainty about the steps or processes they should take to initiate the project. Nonetheless, our observations suggested that students were more enthusiastic working in groups in this project, and they were pleased that they could decide on the final product. For example, Kate remarked that she liked the group work: “It’s easier because you are not by yourself and frustrated. It’s [sic] like supports for you.” Caroline, another focal student, noted that group work sometimes made research easier: “If I found something, my friend and I could go to the same website and find something, and vice versa if she found something I could use it. And we could give each other tips and tricks that we liked.”

Based on these results, an additional modification was made in a third inquiry project. To further scaffold the students’ inquiry process, the third project provided students with guiding questions for researching a specific genetic trait (e.g., hair or eye color) of their choosing. These guiding questions enabled more flexibility than the first project, but provided more structure than the second. Although our observations for this project were limited because the school year was drawing to a close, we did observe a few indications that this adaptation was beneficial.

The more open-ended approach appeared to encourage students to increase their use of appropriate search strategies to find multiple websites with information instead of searching for the answer to a specific question. For example, earlier in the intervention, we observed students typing entire questions into a browser’s search bar to find specific answers. By the final project, however, students had begun to use some of the strategies discussed during the introductory lessons, such as the operators AND and NOT and quotation marks, to find relevant information more efficiently and precisely.

Overall, our data suggested that moderately structured, open-ended inquiry projects were better suited to stimulating students to practice appropriate strategies introduced in the introductory lessons. However, there was no evidence that these projects, regardless of structure, induced students to adopt and apply these strategies independent of the tasks at hand.

Students’ Previous Experiences Using the Internet

Another obstacle was a disjuncture between the research skills students had used before the project and the approaches highlighted during IRT lessons and the inquiry projects. As indicated by their responses to the survey, most students used the Internet regularly, although more outside than inside school. In interviews prior to implementing IRT, almost all students stated that they used one of the popular search engines to find information for schoolwork, mainly at home.

These previous uses of the Internet apparently established personal, idiosyncratic skills, strategies, and dispositions for using the Internet to locate and deal with information. Our data suggested that students’ experiences outside of school became default strategies in school and persisted as preferred approaches, even when IRT introduced more academically valued strategies. During interviews prior to the intervention, students alluded to their personal strategies when asked about how they located information on the Internet, and we observed them revert to those strategies when engaging in the inquiry projects, even shortly after more reliable strategies had been introduced and practiced. For example, a common strategy was entering a question as a search term and considering any links listed in the results of a search as equally credible. When we first observed this strategy, we offered students a short lesson on the way search engines order websites, including pointing out paid advertisements and explaining how programmers can use tags to alter content so that a website appears earlier in a results list, often to generate more traffic for the site. Students seemed to apply this awareness immediately after it was introduced, but they persisted in selecting sites that appeared at the top of the list in subsequent searches.

Although students’ personal search strategies may be legitimate and allow them to locate credible websites, few students reported using the Internet outside of school in ways that required diligent searching for useful and reliable information. Instead, as evidenced by transcripts of interviews, students engaged in a variety of mostly nonacademic activities, such as visiting craft, gaming, wildlife, and social websites or just surfing the Internet serendipitously. For example, one student, Caroline, explained, “I like to go to ft.com, which is a crafting website, and then I like just going to Google and searching stuff.” Thus, students were not well practiced in searching for information on the Internet in ways considered appropriate for academic work, and they tended to retain more superficial strategies even when academic work was framed to emphasize a more diligent and critical stance.

Even after the IRT lessons, which included guided practice, and after the projects had been reframed during the intervention, students frequently used inefficient and sometimes inappropriate strategies and encouraged their use, implicitly and explicitly, among their peers during small-group work. We observed that students often shared personal strategies that undermined or short-circuited the strategies presented within the IRT lessons, thus legitimizing their use among peers. For example, in the unit on genetics, one group of students decided to research whether cystic fibrosis was genetically inherited and, if so, how. When one of the group members, Claire, found a website that described a host of genetic disorders including cystic fibrosis, she immediately shared the site with others in her group, and they then shared the site with the entire class. This strategy for finding information was observed repeatedly throughout the intervention, even after warnings that the credibility of a site needed to be determined in advance. Claire and her groupmates described the site they found as “full of information,” and almost immediately all students navigated there and culled information. However, no student mentioned ascertaining the credibility of the site. When we and Ms. Rich asked students to explain why they thought this site contained credible information, they then reluctantly followed procedures they had learned to gauge credibility.

Furthermore, even after Ms. Rich pointed out that many sites about disorders are written by people who have been diagnosed with that disorder and not by a doctor or medical group, students persisted in using these sites. Although someone diagnosed with a disorder may present valuable and applicable information in an online forum or website, she emphasized that such information should be weighed against professional opinion from more authoritative sites.

To address the realization that students’ idiosyncratic strategies were resistant to change, we increased attention to IRT’s focus on students as informants open to comments and critique from their peers. In other words, we introduced more group discussion critiquing any strategy that might be suggested, hoping to increase students’ personal investment in using appropriate strategies. We reasoned that students who suggested appropriate strategies that were sanctioned by the group might be more likely to use them and that those strategies might be more likely to be adopted among their peers. Thus, we encouraged students to volunteer their own strategies to the group, and we drew attention to students who were using useful strategies spontaneously by asking them to share what they were doing with the class. A student or group that volunteered was encouraged to present a strategy informally to the entire group using the interactive whiteboard. This approach allowed for critique, comment, and comparison with other possible strategies, often leading to minor adaptations of the strategies we had introduced in the introductory lessons and sometimes to new, and often reasonable, alternative strategies.

This modification was somewhat successful, because subsequently we observed students occasionally using simple evaluation criteria to determine reliability. For example, our field notes indicated that shortly after these modifications, three focal students independently searched for information about authors mentioned on one informational site. When asked to explain, their responses indicated that they were trying to determine whether the authors were credible sources. Yet these more encouraging results, too, gradually faded. In the final week of the study, we observed students returning to inefficient and inappropriate evaluative strategies based on their previous experiences or simply avoiding the issue of reliability entirely. For example, in the final project at the end of this study, Latrise, one of the students who had previously searched authors to establish credibility, explained that she looked only for sites with the keyword science in the title, because, as she explained, “They have a lot of science information in one place so you don’t have to read a lot of different pages.”

Yet the postintervention interviews with students clearly revealed that the intervention had prompted more conscious consideration of issues of locating and evaluating information. That is, even though we did not observe students consistently using the search or evaluation strategies introduced in IRT lessons, they were aware that they should be using them, and they were able to identify them when asked. For example, when students were asked what they would tell a friend who was looking for information about global warming on the Internet, students consistently identified the strategies introduced in the IRT lessons, as illustrated in the following transcript of an interview with Kate:

First I’d tell them to scroll down to the bottom of the page and see when it was made and see if it had the author. Then I’d tell them to go to where it says the website address and go all the way to where it has a .com or .org and then go there and see . . . if it’s credible.

Thus, it should be noted that despite the obstacles reported here, the goal of this formative experiment was partially met. Students, when asked, could articulate appropriate strategies highlighted in IRT and could demonstrate them, at least superficially. But they did not internalize them to use spontaneously when engaged in inquiry projects.

Discussion

This investigation examined the implementation of IRT in two science classes deemed conducive to achieving the goal of developing digital literacy on the Internet. However, several prominent obstacles emerged in our data that inhibited students from internalizing strategies and dispositions related to locating and evaluating information on the Internet, at least to the extent that they would use them consistently in independent work. These overlapping obstacles and our attempts to accommodate them in implementing IRT, as reported in previous sections, extend and clarify previous findings, suggest implications for further research and the development of relevant pedagogical understandings, and inform practice. In this section, we discuss findings in relation to these areas as well as the limitations and generalizability of findings and conclusions.

Overall, our findings reinforce and extend the existing literature that identifies a relatively long list of diverse obstacles to integrating digital technologies into instruction aimed at developing digital literacy (Hutchison & Reinking, 2010, 2011). Our findings add another, perhaps subtler and potentially more challenging, category of pedagogical obstacles to effective curricular integration, specifically, integration that develops ingrained, spontaneous use of strategies for locating and evaluating information on the Internet when completing academic tasks.

For example, even when an environment is particularly conducive to developing digital literacy, many pedagogical challenges remain. In the present study, engaging students in inquiry-oriented projects and highlighting useful strategies did not lead to sustained use of those strategies, even in an academic context where they are particularly appropriate and applicable. Students were able to describe appropriate strategies at the conclusion of this investigation, and they could demonstrate them shortly after strategies had been introduced, discussed, and practiced in class. However, soon after, they reverted to the more superficial, less effective strategies they had used previously. For the students in this study, those previous strategies, often developed through activities on the Internet outside of school, appeared to be firmly entrenched and could not easily be altered for more than a few tasks or a relatively brief time.

That finding has implications for assessing students’ strategies and dispositions to find and evaluate information on the Internet. That is, assessments immediately following instruction may suggest that students can identify and use appropriate strategies. However, such results do not necessarily indicate that students will subsequently use those strategies spontaneously in future tasks, nor that they have acquired the dispositions that lead to more strategic and critical stances toward information on the Internet. As in many other efforts to develop strategic skills and dispositions, spontaneous transfer to more authentic tasks is the acid test that should be the measure of an intervention’s success. In that regard, our findings suggest that future investigations of IRT or other related interventions should adopt goals and indicators focused on the transfer of strategies to tasks on the Internet that are some distance in time and context from the explicit introduction of strategies.

Our data and instructional modifications suggest avenues for obtaining more desirable longer-term outcomes. Increasing small-group work and reframing the inquiry projects appeared to promote more use of the strategies highlighted, although these modifications ultimately did not lead to lasting effects. Specifically, instructional activities that were more open-ended, not suggesting a single correct response, may be more conducive to promoting effective search strategies for and critical evaluation of content on the Internet among middle-grade students. Small-group work dedicated to finding and evaluating information on the Internet, which was easily and naturally integrated into the inquiry projects in these science classes, may also help reinforce strategies and dispositions, especially when at least one of the students in a group is inclined to use them and when the group work is framed specifically to highlight strategic and critical stances. However, our data suggest that group work must be structured in such a way that all students become involved in exercising the appropriate strategies and dispositions, as opposed to one student taking on that role consistently. For example, students could rotate roles, including the role of establishing reliability, an approach frequently taken in reciprocal teaching.

However, in the present investigation, Ms. Rich’s strong, and understandable, inclination to be a ready source of information relevant to completing the inquiry projects may have mitigated the influence of such modifications. Despite Ms. Rich’s commitment to inquiry learning, with its emphasis on developing alternative explanations independently (e.g., Olson & Loucks-Horsley, 2000), her need to be helpful, along with external pressures, such as following state standards and preparing students for state tests, worked against framing instructional tasks as open-ended, independent inquiry. We conclude that without such an authentic frame to inquiry, science as a subject area may not be particularly conducive to accomplishing the pedagogical goal of this formative experiment. Thus, part of our emerging pedagogical theory in relation to IRT is that teachers may need to consider broadly how information is made available to students in the context of inquiry-oriented projects and specifically to consider their own role in assisting students’ searches for relevant information.

Our investigation suggests that, at least in the context of inquiry projects involving the use of the Internet, it might be appropriate for teachers to resist the often natural inclination to be a ready source of specific information for students. Instead, when students ask for specific information, a teacher might use such requests as an opportunity to query students about what strategies they have used thus far, to constructively critique those strategies, to suggest modifications to their approach, and to model appropriate strategies (e.g., “Here is what I might do to find relevant information.”). The influence of such a stance and such responses in enabling students to internalize strategies and dispositions awaits further study. Likewise, it may be helpful to have students share their own strategies with the entire class, followed by discussion and critique within the context of searching for information on the Internet.

One useful pedagogical understanding that is reinforced in the present investigation is that locating and evaluating information is perhaps the central, and most challenging, aspect of developing digital literacy on the Internet among middle school students. A similar conclusion was reached in previous investigations of IRT with middle school students in language arts classes (Leu et al., 2005, 2007, 2008). Apparently, the strategies and dispositions associated with finding and evaluating information on the Internet are no less challenging in science classrooms where there is above-average achievement in a socially stable context and an experienced teacher dedicated to using inquiry methods and open to integrating technology into instruction. Consequently, researchers and teachers aiming to develop digital literacy on the Internet might give more, and more specific, attention to locating and evaluating information on the Internet and have realistic expectations about the pedagogical challenges of inculcating an appropriately strategic and critical stance.

Cumulatively, investigations of IRT suggest that other aspects of digital literacy on the Internet, such as asking good questions and appropriately synthesizing and communicating information, are likely to be developed when students have a heightened awareness of and commitment to strategically searching for reliable information on the Internet. Search strategies, too, can be at least reinforced by, if not grounded in, the critical stance associated with evaluating sources. This tentative interpretation emerges more holistically from our retrospective analysis across all of our data (Gravemeijer & Cobb, 2006) and is consistent with data and analyses in previous studies.

A more general pedagogical theory that fits our data and that may be particularly useful for educators and researchers interested in developing appropriate skills, strategies, and dispositions for using the Internet is the principle of least effort. Originally proposed by Zipf (1949) as a general theory of human ecology, the principle of least effort has been applied more specifically to research in information science (e.g., Liu & Yang, 2004). The design of online library databases, for example, is guided by the assumption that users want to find the most information with the least effort. Similarly, in this study and in our previous work, we have found that many middle-grade students conduct Internet searches guided essentially by that principle, although in school the primary motivation may be maximizing academic achievement, or at least demonstrating satisfactory performance on an academic task, rather than maximizing the return of information.

The principle of least effort seems to capture the essence of the obstacles we faced in effectively implementing IRT and may be the key to confronting them instructionally. How can interventions with goals similar to those of IRT be implemented to accommodate or confront the principle of least effort? The principle provides an explanation for students’ continued reliance on their previous, well-practiced strategies for locating information (why learn or practice a different approach?); their focus on getting the correct answer, which is easier than dealing with ambiguity; and the availability of Ms. Rich’s ready assistance, which inhibited independent effort and perseverance.

A central challenge, then, in designing and implementing instruction aimed at developing digital literacy on the Internet may be to suppress or overcome these natural tendencies. Addressing that challenge may take much longer than even the months devoted to IRT in the present investigation. It also suggests that the groundwork for strategic and critical stances toward the Internet may need to be laid in elementary school, a possibility that education leaders and policy makers may need to consider in creating curriculum and assessments.

A related insight from our retrospective analysis is that it may be necessary to create situations and contexts that demonstrate the inappropriateness and inefficiency of students’ preferred strategies, accompanied by opportunities to illustrate the conditions under which those strategies are effective and produce reliable results. Our efforts to encourage individual students and groups to share strategies for critique and discussion may be a starting point for addressing that issue.

Findings and conclusions from a formative experiment conducted in two classrooms, such as the present investigation, may be viewed as having relatively limited generalizability. However, Firestone (1993) argued that there are several types of generalization. One is case-to-case generalization, in which one case informs similar cases. Thus, the present study may be particularly informative to middle-grade science teachers who use or are considering inquiry methods under conditions similar to those in this study. In that regard, formative experiments such as this one contribute to closing the gap between research and practice (Reinking & Bradley, 2008). Another is theoretical generalization, in which specific examples create or substantiate theories across diverse contexts, which implies multiple replications. The present study represents only one replication, although it helps clarify previous work and informs future replications. It has deepened our pedagogical understanding of IRT specifically and of integrating digital literacy into instruction more generally. At the same time, it has led us to think more divergently about pedagogical perspectives and theories with wider application, such as the principle of least effort. It may be particularly useful to know that, broadly speaking, some pedagogically similar challenges and obstacles exist across diverse contexts and populations of students.

Nonetheless, based on our experience in these classrooms, we believe that IRT continues to be a viable approach for integrating digital literacy into middle school science instruction, at least when that instruction is oriented toward independent inquiry. In fact, we could imagine IRT serving as a stimulus for initiating such instruction or for instantiating it more authentically. However, the obstacles we encountered were pedagogical challenges that await further study and modifications aimed at addressing them. They were not due to resistance from Ms. Rich, who was an enthusiastic and cooperative partner, nor did they arise from working against the grain of the activities and pedagogical goals of science instruction. Thus, as we believe was the case in the present investigation, IRT may represent a useful option for integrating digital literacy into the curriculum, and science classrooms may, under appropriate conditions, offer fertile ground for addressing the obstacles we uncovered.

In the end, the present investigation reveals only part of a large and complex puzzle about how digital literacy might be developed among middle school students and how IRT in particular might accomplish that goal. Formative experiments and design-based methods in general acknowledge that the most important goals in education and how to effectively achieve them pedagogically are complex and nuanced, requiring extended research in diverse contexts. Yet, each attempt contributes to reducing our ignorance (Wagner, 1993), providing deeper understandings of pedagogical theory and informed recommendations to practitioners. We believe the present investigation makes such a contribution, and we look forward to further replications in other contexts and to the clarity those replications will add to pedagogical theory and to instructional practice.

Appendix A

Questions Guiding Semistructured Interviews With Focal Students

Searching for Information: uses search engines, search engine terms, skims and scans result lists to find relevant sites, uses relevant sites to find information, navigates hyperlinks

Critical Evaluation: looks for author/copyright date, assesses reliability/validity of source, assesses language for bias or author’s stance, skims and scans for relevance/critical elements before using the page

Synthesis: uses multiple sources to verify information, creates a product demonstrating understanding of information found from multiple sources

Communication: effectively communicates techniques to peers, communicates effectively with others in small groups about relevant topics, cites sources (URLs) appropriately when sharing information

Group Dynamics: stays on task in groups, listens actively, participates appropriately, leads group.

Appendix B

Appendix C

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

References