Abstract

This study investigated the extent to which new reading comprehension proficiencies may be required when adolescents read for information on the Internet. Seventh graders (N = 118) selected from a stratified random sample of diverse middle school students completed a survey of topic-specific prior knowledge and parallel scenario-based measures of online reading comprehension. Standardized reading comprehension scores were also collected. Results indicated performance on one measure of online reading comprehension accounted for a significant amount of unique variance in performance on a second measure of online reading comprehension after controlling for standardized test scores of offline reading comprehension and topic-specific prior knowledge. Furthermore, there was an interaction between prior knowledge and online reading comprehension, such that higher levels of online reading comprehension skills may help compensate for lower levels of topic-specific prior knowledge when adolescents are asked to locate, critically evaluate, synthesize, and communicate information using the Internet. The author discusses a series of alternative interpretations of the data as well as their implications for literacy theory and research.

Keywords

The Internet has prompted new technologies that challenge students’ abilities to comprehend informational text. The RAND Reading Study Group (RRSG, 2002) reported, “[E]lectronic texts that incorporate hyperlinks and hypermedia . . . require skills and abilities beyond those required for the comprehension of conventional, linear print” (p. 14). Others have speculated that previous research on traditional comprehension strategies can inform, but not complete, our understanding of online reading comprehension (see Coiro, 2003; Hartman, Morsink, & Zheng, 2010; Leu, Kinzer, Coiro, & Cammack, 2004; Spires & Estes, 2002). Since 97% of K–12 classrooms in the United States now have at least one computer connected to the Internet (National Center for Education Statistics, 2010) and 94% of online teens use the Internet for school-related research (Lenhart, Simon, & Graziano, 2001), there is a need to better understand what reading on the Internet entails if we wish to prepare all students to succeed in a world where these skills are so important.

Recent qualitative findings suggest the skills and strategies required to comprehend printed text are intertwined with a set of new and more complex skills and strategies to read successfully for understanding on the Internet (see Afflerbach & Cho, 2008, 2009; Coiro & Dobler, 2007; Coiro, Malloy, & Rogers, 2006). Traditional conceptions of reading comprehension may no longer be sufficient in online reading contexts. For example, many adolescents who are skilled at reading offline are ill equipped to deal with the new comprehension demands of querying search engines (Eagleton & Guinee, 2002), understanding search results (Henry, 2006), and critically evaluating information laden with social, commercial, and political motives (Fabos, 2008). Unfortunately, little statistical evidence has been gathered to highlight the reader characteristics that contribute most to successful reading comprehension in open Internet spaces. Even more unfortunate is that despite both informed speculation (Coiro, 2003; Henry, 2006; International Reading Association, 2009; RRSG, 2002) and evidence to the contrary (see Coiro & Dobler, 2007; Henry, 2007; Kingsley, 2011; Leu et al., 2005), our field often assumes online reading skills to be primarily the same as offline reading skills (Leu, O’Byrne, Zawilinski, McVerry, & Everett-Cacopardo, 2009) or sets them aside as technology skills rather than new reading comprehension skills (Karchmer, 2001).

If policy makers and educators continue to ignore the growing evidence that new skills and strategies may be required to read, learn, and solve problems with the Internet, our students will not be prepared for the future (Educational Testing Service, 2003; Leu, 2007; OECD Program for International Student Assessment, 2003). Furthermore, the absence of measures to assess online reading comprehension leaves the reading community with no means to evaluate progress or help diagnose the challenges some students face when reading on the Internet.

The speed with which the Internet has emerged has forced us to confront the issue of how, why, and to what extent reading might be different as the Internet continues to transform and define literacy in the 21st century (Hartman et al., 2010). This study builds on previous work to investigate quantitatively the extent to which additional reading comprehension proficiencies may be required on the Internet beyond those typically measured by standardized tests of offline, printed text comprehension.

Theoretical Framework

There are many different conceptions of literacy around which this study of online reading comprehension could have been framed. For example, it could have been framed in terms of information literacy (Hobbs, 2006) or digital literacy (Martin, 2006) or Internet inquiry (Eagleton & Dobler, 2007). However, this study was informed by three theoretical perspectives that lend themselves to applications in academic classroom contexts—the area in which I was most interested. The first is the well-articulated perspective of reading comprehension outlined in the RRSG’s (2002) report. This perspective summarizes a long line of research demonstrating that reading comprehension is an active, constructive, meaning-making process (e.g., Bransford, Barclay, & Franks, 1972; Kintsch & Kintsch, 2005; Pearson, Roehler, Dole, & Duffy, 1992) in which the reader, the text, and the activity play a central role (Alexander & Jetton, 2002; Pearson, 2001). According to this perspective, text comprehension involves the integration of cognitive and metacognitive reading strategies with a reader’s prior knowledge about text structures and the topic (see Kintsch, 1988). Text comprehension is dependent on reader characteristics such as the abilities to generate timely inferences while processing text information, to draw connections between texts and their prior knowledge, and to self-regulate their use of strategies according to a particular reading purpose (see Dole, Duffy, Roehler, & Pearson, 1991; Pressley & Afflerbach, 1995). The emergence of a greater range of texts, traditional and alternative, introduces additional dimensions to this set of cognitive reading strategies that reshape the cognitive processes of readers as they engage the reading process (Alexander & Fox, 2004). 
The RRSG’s interactive view of reading also suggests social context and cultural variables play a role in meaning construction, but the present study focuses primarily on the role of new cognitive processes the reader must learn to comprehend text successfully on the Internet.

A second perspective that informed this study is that of new literacies (Lankshear & Knobel, 2003; Leu et al., 2004). Broadly conceived, this perspective argues the nature of literacy and learning is rapidly changing and transforming as new technologies emerge (Coiro, Knobel, Lankshear, & Leu, 2008). Within this broader context of new literacies theory (see Leu et al., 2009), this study focuses specifically on the new literacies of online reading comprehension (Coiro & Dobler, 2007) as they are applied in formal classroom settings, which many now argue is a critical context for new literacies research (e.g., Partnership for 21st Century Skills, 2010). This perspective frames online reading comprehension as a web-based inquiry process involving skills and strategies for locating, evaluating, synthesizing, and communicating information with the Internet. Accordingly, many dimensions of online reading may require new comprehension skills and strategies over and above those required when reading printed books (e.g., Afflerbach & Cho, 2009; Hartman et al., 2010; Kingsley, 2011; Kuiper, 2007; Spires & Estes, 2002). This study seeks to more clearly understand the complex interplay between offline and online reading skills as adolescents read for information on the Internet.

A third perspective is that of cognitive flexibility (Spiro, Feltovich, Jacobson, & Coulson, 1991). This theory posits that the Internet is an ill-structured context for reading that requires readers to apply what they know about reading printed text more flexibly while adapting to new and constantly changing online reading situations. As a result, previous frameworks used to define the offline reading process no longer sufficiently explain the knowledge required of readers in web-based contexts (DeSchryver & Spiro, 2009). In fact, Spiro (2004) argues approaches that work in simpler contexts (e.g., recalling from memory a set of prescriptive procedures to learn from static representations of information) may be exactly opposite to those approaches that are best for dealing with complex environments such as the Internet. Spiro suggests that online reading activities incorporate multiple representations of information and rapidly changing contexts, thus demanding a broader knowledge of flexible comprehension procedures and the ability to tease out and strategically respond to diverse situational cues encountered within each new online text. To understand and teach ways of reading online, it is important to identify those approaches to text that contribute most to reading comprehension in complex online informational contexts. This study therefore was intended to draw attention to what readers need to know and know how to do when reading informational text on the Internet.

Previous Work

Comprehending Offline Texts

In the present study, offline text refers to informational text that appears on the printed page and not in a digital format. Examples of offline text include trade books, textbooks, and newspapers. Research indicates expert readers apply a range of skills and strategies to comprehend offline text (Duke & Pearson, 2002; McNamara, Miller, & Bransford, 1991; S. G. Paris, Wasik, & Turner, 1991; Pressley & Afflerbach, 1995). Skilled readers also perceive text structure and reading purpose and then adjust their strategy use accordingly. Narrative texts, for example, are organized around a familiar story grammar (Stein & Glenn, 1979) that guides readers’ ability to connect and comprehend the main components of a story (Graesser, Golding, & Long, 1991; A. H. Paris & Paris, 2003). In contrast, informational texts include complex concepts, specialized vocabulary, and unfamiliar text structures that significantly affect a reader’s ability to locate, understand, and use the information (Weaver & Kintsch, 1991). These challenges require readers to attend to and make inferences about less familiar hierarchical text structures (Kintsch & Kintsch, 2005), consciously monitor their textual inferences in terms of what they already know (Baker, 2002), and flexibly adopt alternative strategies when others do not work (S. G. Paris, Lipson, & Wixson, 1983).

Studies of how textual differences influence offline text comprehension indicate both children and adults have more difficulty reading informational text than reading narrative text (Biancarosa & Snow, 2004). Dreher and Guthrie (1990) reported that reading to locate a specific subset of information relevant to a particular goal, as opposed to reading to recall an entire text’s content, requires different processes that do not appear to correlate with a student’s performance on traditional passage recall comprehension tasks. Fourth grade readers who scored well on standardized tests of narrative text comprehension struggled when they were asked to find answers to straightforward questions in informational text (Neville & Pugh, 1975). Similarly, Dreher and Sammons (1994) found only 30% of fifth graders in their sample who were reading at grade level were able to locate answers to at least two of three simple questions using a familiar-topic textbook.

Studies of how reading purposes influence offline reading comprehension suggest that difficulties posed by offline informational text become more challenging when readers are asked to formulate a task and select the resources themselves (Dreher, 2002). Many students have difficulty with informational reading tasks in elementary school (Brown, 1999), middle school (see Lenski, 1998; Moore, 1995), high school (Kuhlthau, 1989), and college (Nelson & Hayes, 1988), particularly when those tasks involve generating a search plan, narrowing a search process for particular purposes, and recording relevant responses. It would make sense then that the additional complexities of online text structures would further complicate these types of informational reading tasks.

Comprehending Online Texts

For the purposes of this study, online texts are broadly conceived to include information presented via one or more elements such as hyperlinks, images, animation, audio, and/or video within an online networked system (i.e., the Internet) that is continually expanding and, thus, largely unbounded. However, when reviewing previous work, the term hypertext is sometimes used in studies conducted in more restricted digital environments (as compared to unbounded Internet environments). In those cases, the term hypertext is used to refer to digital informational texts that are linked within a closed electronic system such as a CD-ROM encyclopedia or library database, whereas Internet text refers to informational texts found within the open networked system of the Internet. In closed hypertext systems, the linkages typically impose a conceptual or logical frame and organization of information from outside the reader (i.e., by the designer of the hypertext space). In contrast, an unbounded open system such as the Internet directly involves the reader in discovering and also creating new links between the information. This difference introduces one source of complexity for readers as they move from hypertext to Internet text environments.

As readers move from static hypertext systems to dynamic unbounded Internet text environments (see Lawless & Schrader, 2008), the rapidly changing nature of multiple mediums further complicates online reading processes (Hartman et al., 2010). Online Internet texts are part of a dynamic open-ended information system (see Hill & Hannafin, 1997) that changes daily in structure, form, and content (Afflerbach & Cho, 2009; Zakon, 2005). These dynamic mediums foster increasingly complex interactions among elements of reader, text, author, task, context, and technology that “[suggest] a cognitive conception of online comprehension that is more complex, iterative, and protean than Huey (1908) could have ever imagined a century earlier” (Hartman et al., 2010, p. 152). Furthermore, online texts often contain hidden social, economic, and political agendas that require higher degrees of critical evaluation skills than typically found in offline text comprehension (Cope & Kalantzis, 2000; Fabos, 2008). Online information texts also introduce infinite numbers of intertextual connections (Caney, 1999) and intercultural negotiations (Snyder & Bulfin, 2008) that prompt new complexities for readers trying to synthesize and communicate information across globally linked Internet texts. Each of these complexities presents new reading demands for online learners related to processes of online location, critical evaluation, synthesis, and communication (Leu et al., 2004; C. R. Wolfe, 2001). This study examines the extent to which new reading demands associated with Internet texts predict performance in online reading comprehension over and above offline reading skills.

Contributions of Offline and Online Reading Comprehension Skills in Internet Text Environments

To date, studies of students who use the Internet for school-related tasks generally fail to frame the matter as a reading comprehension issue (Lawless & Schrader, 2008). However, a small set of emerging studies has sought to characterize the nature of reading comprehension skills when students read for information in diverse, open-ended Internet contexts.

In an early case study designed to explore the possibility that the Internet places special demands on readers, Schmar-Dobler (2003) found that five skilled fifth grade readers wove new Internet reading strategies for skimming, scanning, searching, and navigating into their application of traditional offline text comprehension strategies, such as determining important ideas, monitoring and repairing comprehension, activating prior knowledge, and making inferences. Coiro and Dobler (2007) employed qualitative methods to more formally explore online reading comprehension with 11 skilled sixth grade readers. Data from think-aloud protocols, field observations, and semistructured interviews suggested successful Internet reading experiences appeared to simultaneously require both similar and more complex applications of (a) prior knowledge sources, (b) inferential reasoning strategies, and (c) self-regulated reading processes. Coiro and Dobler (2004) replicated their study of 11 skilled Internet readers with 10 less-skilled Internet readers and observed that although these strategy applications occurred less often among less skilled online readers, these same three themes were revealed in their reading processes.

Zhang and Duke (2008) examined the different reading strategies used by 12 skilled adult Internet readers while reading on the Internet for three different purposes, including seeking specific information, acquiring general knowledge, and being entertained. They found that readers reportedly adopted different patterns of both familiar (e.g., printed) and new Internet reading strategies for different reading purposes. These new reading strategies included generating digital queries, applying prior knowledge of search engines and websites, and monitoring one’s reading pathways and speed in relation to one’s online reading purposes. Across these four qualitative studies, the authors provided examples of similarities and differences between offline and online reading comprehension skills. Each set of authors also recommends more focused research on these differences to better understand how larger samples of diverse learners interact with texts in open networked information environments.

I am aware of one study to date (Leu et al., 2005) that has applied quantitative methods to explore the nature of online reading comprehension from a new literacies perspective in relation to offline reading skills. In this study, the authors found a very small and nonsignificant correlation (r = .103, p = .352) between a psychometrically valid and reliable measure of online reading comprehension (called ORCA-Blog) and a common standardized state assessment of traditional reading comprehension (Touchstone Associates, 2004). These findings provide preliminary quantitative evidence to suggest reading in online settings may require a different set of skills from those required to read in offline settings. The authors also reported cases of students who were highly proficient offline readers but unskilled online readers. Cases such as these add another layer of complexity to work that suggests online reading comprehension requires similar, but more complex, reading skills compared to comprehension of traditional offline text.

Finally, Afflerbach and Cho’s (2008) examination of 46 think-aloud protocol studies provides important evidence to suggest online reading comprehension involves processes unique to online texts. Analyses revealed traditional and novel variations of reading strategies that clustered around three previously defined categories of offline text comprehension, including (a) identifying and learning text content, (b) evaluating, and (c) monitoring (see Pressley & Afflerbach, 1995). In addition, “the investigation of accomplished Internet readers’ Internet reading revealed strategies that appeared to have no counterpart in traditional reading” (Afflerbach & Cho, 2009, p. 217). “This group of strategies, realizing and constructing potential texts to read, is representative of accomplished readers’ strategic approaches to reducing uncertainty, determining the most appropriate reading path, and managing a shifting problem space” (p. 212). The authors proposed that the catalog of reading comprehension strategies developed by Pressley and Afflerbach be expanded to include this new category of strategies unique to online reading environments. Results of the present study can provide important quantitative evidence to support the inclusion of a new category of online reading comprehension strategies.

The Role of Prior Knowledge in Offline and Online Reading Comprehension

A long line of research supports the notion that prior knowledge is central to offline text comprehension (e.g., Bransford & Johnson, 1972; Means & Voss, 1985). Prior knowledge is defined, generally, as the knowledge a reader brings to any text or learning situation (Alexander, 1992). Studies of offline text comprehension indicate skilled readers call on general world knowledge, prior subject-matter knowledge, and prior knowledge of text structure (e.g., Alexander, Schallert, & Hare, 1991; Meyer, Brandt, & Bluth, 1980) to construct meaning (Anderson & Pearson, 1984), determine importance (Afflerbach, 1986), make inferences (Graesser, Singer, & Trabasso, 1994), and monitor their comprehension (Baker, 2002) of offline informational text.

Similarly, studies of hypertext and Internet text comprehension indicate readers call on their prior knowledge sources to guide their navigation (Barab, Bowdish, & Lawless, 1997; Lawless & Kulikowich, 1996), make inferences (Burbules & Callister, 2000; Foltz, 1996), locate relevant resources (Balcytiene, 1999; Yang, 1997), and construct meaning (Calisir & Gurel, 2003; Rouet & Levonen, 1996). Generally, results indicate readers with high levels of topical prior knowledge (Potelle & Rouet, 2003), broad prior knowledge (Dee-Lucas, 1999; Lawless & Kulikowich, 1996), or prior knowledge of Internet text systems (Hill & Hannafin, 1997) are better at navigating, using, and comprehending information in hypertext environments compared to readers with lower levels of prior knowledge (e.g., Dillon & Gabbard, 1998; MacGregor, 1999).

However, in studies that more closely considered differences in text coherence and prior knowledge, the reported effects of prior knowledge on hypertext comprehension are inconsistent (Salmerón, Cañas, Kintsch, & Fajardo, 2005). Balcytiene (1999), for example, found an interaction between treatment and prior knowledge for different levels of readers. In addition, Calisir and Gurel (2003) found that prior knowledge of text structure and content played a significant role in comprehension of linear and hierarchical hypertext but had little or no effect on the comprehension of mixed hypertext (that contained both linear and hierarchical hypertext structures). That is, prior knowledge differentially influenced hypertext comprehension as a function of text structure. Elsewhere, two studies of offline text comprehension (Rupley & Willson, 1996; Willson & Rupley, 1997) found quantitative evidence that suggested background knowledge of a topic begins to diminish in importance at about fourth grade when strategy knowledge begins to play a more important role in reading informational text.

With respect to the role of prior knowledge in Internet text environments, additional nuances emerge. Bilal (2000, 2001) explored the relationship between seventh graders’ attributes (i.e., prior knowledge and reading ability) and their success using a children’s search engine. Results indicated differences among students in the effectiveness and efficiency of strategies used to navigate online informational text in two different contexts (i.e., search engines and information websites). Surprisingly, in both studies, children’s domain knowledge, topic knowledge, and reading ability did not significantly influence their success (Bilal, 2000, 2001). Rather, Bilal found students’ knowledge of how best to gather information in hypermedia systems to be a more important indicator of their ability to complete the online reading tasks.

More recently, Willoughby, Anderson, Wood, Mueller, and Ross (2009) examined the role of domain knowledge (in this case, knowledge about biology and about urban environments) among 100 undergraduate students when retrieving and using information from the Internet as a resource for two essay writing tasks on these topics. Findings indicated that participants were able to use search engines to access relevant websites fairly accurately, even when prior domain knowledge was low. Willoughby and colleagues suggested that, because Internet search engines have become increasingly more sophisticated, “perhaps knowledge has a more subtle, subjective impact on search behaviors that may require more extensive examination” (p. 647).

Together, findings from the few studies that examined the role of prior knowledge in online reading contexts suggest prior knowledge of the topic may be somehow less important when reading on the Internet. These findings are not in line with previous work that highlights the importance of prior knowledge of key concepts on search success in online library databases (Borgman, Hirsch, Walter, & Gallagher, 1995) and open Internet information environments (Hill & Hannafin, 1997). Consequently, the issue is more complicated than previously thought, and more research is needed to better understand how prior knowledge interacts with offline and online reading skills to facilitate comprehension in Internet text environments.

In summary, five studies that explored Internet use as an inquiry-based process of online reading comprehension were inconclusive. One study reported the strategies required for online text comprehension are complementary but orthogonal to traditional offline text comprehension strategies (Leu et al., 2005); four studies found preliminary evidence to suggest the skills and strategies required to read on the Internet may indeed be similar to, but more complex than, those required to comprehend printed text (Coiro & Dobler, 2004, 2007; Schmar-Dobler, 2003; Zhang & Duke, 2008), and one review (Afflerbach & Cho, 2008) acknowledged many overlaps with offline reading comprehension but also proposed an entirely new and unique category of online reading comprehension. As the nature of reading comprehension continues to evolve, Hartman and his colleagues (2010) argue, “It seems particularly important to articulate a working model of what online reading comprehension looks like. The practical relevance and usefulness of such a model has never been greater” (p. 155).

The present study seeks to meet this call for new research by building directly on emerging sets of qualitative findings with a systematic quantitative investigation of the following question: In a regression analysis, does performance on one measure of online reading comprehension significantly predict performance on a second, parallel, measure of online reading comprehension over and above scores of offline reading comprehension and topic-specific prior knowledge?
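
One way to write out the research question above is as a two-step hierarchical regression. The notation below is an illustrative sketch only: the symbols, the two-step ordering, and the F-change test are assumptions based on standard practice, not a description of the exact model-building steps used in this study.

```latex
% Step 1: control variables only (Offline = offline reading comprehension, PK = prior knowledge)
\mathrm{ORCA2}_i = \beta_0 + \beta_1\,\mathrm{Offline}_i + \beta_2\,\mathrm{PK}_i + \varepsilon_i
\qquad (R^2_{1})

% Step 2: add the first online reading comprehension measure
\mathrm{ORCA2}_i = \beta_0 + \beta_1\,\mathrm{Offline}_i + \beta_2\,\mathrm{PK}_i
                 + \beta_3\,\mathrm{ORCA1}_i + \varepsilon_i
\qquad (R^2_{2})

% Unique variance attributable to ORCA1, and its significance test
\Delta R^2 = R^2_{2} - R^2_{1},
\qquad
F = \frac{\Delta R^2 / q}{\left(1 - R^2_{2}\right) / \left(n - k - 1\right)}
```

Here q is the number of predictors added at Step 2 (q = 1), k is the number of predictors in the full model (k = 3), and n is the sample size. Under this framing, the question asks whether the change in explained variance at Step 2 is significantly greater than zero.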

Method and Procedure

Participants

Participants in this study were 118 seventh grade students selected from a large convenience sample of seventh graders (N = 510) in six middle schools representing five school districts in one northeastern U.S. state. To obtain a more diverse population, stratified random sampling procedures were applied to sort the sample into two groups: (a) students attending schools in economically advantaged school districts (n = 157) and (b) students attending schools in economically challenged school districts (n = 353). Students in each of the two strata were entered into a pool from which 60 students were randomly selected from each stratum using an online random number generator (n = 120). Once the study began, two students (from the economically challenged district) who were absent for much of the school year were dropped from the study after three failed attempts to reschedule their online reading sessions. Thus, the final sample selected to participate in the study included 118 ethnically, economically, and academically diverse seventh grade students (51 males, 67 females) from six middle schools.
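
The selection procedure described above can be sketched in a few lines of Python. This is a minimal illustration, not the tool used in the study (which reports an online random number generator): the function name, the student IDs, and the seed are hypothetical, and only the stratum sizes (157 and 353) and the draw of 60 per stratum come from the text.

```python
import random


def stratified_sample(strata, per_stratum, seed=None):
    """Draw the same number of units, without replacement, from each stratum."""
    rng = random.Random(seed)
    selected = {}
    for name, members in strata.items():
        if per_stratum > len(members):
            raise ValueError(f"stratum {name!r} has only {len(members)} members")
        selected[name] = rng.sample(members, per_stratum)
    return selected


# Hypothetical student IDs; only the stratum sizes are taken from the text.
strata = {
    "advantaged": [f"A{i:03d}" for i in range(157)],
    "challenged": [f"C{i:03d}" for i in range(353)],
}

sample = stratified_sample(strata, per_stratum=60, seed=2011)
total_selected = sum(len(ids) for ids in sample.values())
print(total_selected)  # 120
```

Drawing an equal number from each stratum, rather than a number proportional to stratum size, deliberately overrepresents the smaller stratum relative to the full pool of 510.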

Of the 118 participants, 70% of the students (n = 82) identified themselves as White, 24% as either Hispanic (n = 15) or Black (n = 14), 3.5% as Asian or Pacific Islander (n = 4), and 2.5% as Other (n = 3). With respect to their offline reading performance, 16% of the participants (n = 20) scored at the advanced level on the state standardized reading test, 64% scored at either goal (n = 60) or proficiency (n = 13) reading levels, and 20% scored at the basic (n = 9) or below basic (n = 16) levels.

A 10-item Internet Use Questionnaire was also administered to all participants to provide additional information about the sample in terms of their Internet use in school and outside of school for a range of purposes. Responses to these Likert-type items were scored on a 4-point scale (never, sometimes, often, and daily). A summary of survey responses collected for the 118 participants indicated 98% of the sample had used the Internet at school and 93% of the sample used the Internet from home for school assignments. This suggests that almost all students were familiar with using the Internet for academic reading purposes (the focus of this study) in either school or home settings. Survey responses also suggested the sample represented adolescents with a range of online Internet experiences; a majority of students used the Internet sometimes or more often to search for information, play online games, listen to music, and make things online. About half of the students in the sample used email or instant messaging to communicate with others.

Instrumentation

It is important to preface the following section with an acknowledgment that online reading, like all reading, is situated. That is, in reality, the conceptualization of offline and online reading comprehension varies from situation to situation, and from reader to reader. Thus, in the present study, the researcher’s conception of offline reading comprehension is situated in the context of a rigorous state standardized reading test and online reading comprehension is situated in the context of two parallel series of online information tasks about science content. In addition, the conceptualization of prior knowledge is situated in items related to topic-specific prior knowledge about particular science terms, as opposed to prior knowledge more broadly defined. Consequently, although for purposes of brevity the terms online reading comprehension, offline reading comprehension, and prior knowledge may sometimes be used in isolation, any findings and interpretations from the present study are limited to these specific contexts until further research is conducted across a broader range of assessment situations. Three instruments were used to measure the three variables examined in this study.

Offline reading comprehension

Each student’s level of offline reading comprehension was estimated by his or her Grade 6 standardized reading score on the state’s Reading Mastery Test (Connecticut State Department of Education, 2003). The statewide reading achievement score is based on a combination of scores on three reading comprehension subtests (50%; Forming an Initial Understanding, Developing an Interpretation, and Demonstrating a Critical Stance) and the Degrees of Reading Power subtest (50%). Scores on the four subtests are totaled, scaled, and reported as one composite called Total Reading Comprehension. Reliability coefficients for this test are greater than .85.

Prior knowledge

Each student’s level of prior knowledge was estimated on a six-item questionnaire intended to measure a combination of topic-specific and task-specific knowledge required in the online reading task. Prior knowledge was defined as the total number of accurate idea statements provided about four topic-specific concepts related to the reading tasks (the lungs, the breathing process, oxygen, and carbon monoxide poisoning) and two task-specific concepts included in the directions (animation and reliable information). Using procedures similar to those used by Leslie and Caldwell (1995) and M. B. Wolfe and Goldman (2005), scores for each of the six concepts were summed to provide one final raw score for total prior knowledge. Internal consistency for the prior knowledge measure was estimated at r = .85, demonstrating adequate reliability.

Online reading comprehension

Online reading comprehension was estimated by overall performance on two parallel measures: (a) the Online Reading Comprehension Assessment–Scenario I (ORCA–Scenario I), designated as one of the predictor variables in the regression model, and (b) the Online Reading Comprehension Assessment–Scenario II (ORCA–Scenario II), designated as the dependent variable in the model. The development of ORCA–Scenarios I and II was informed by a new literacies theory of online reading comprehension (Leu et al., 2004), earlier pilot studies (Coiro & Dobler, 2004, 2007), and a previous measure of online reading comprehension that had good psychometric properties (Leu et al., 2005).

ORCA–Scenario I (http://www.quia.com/quiz/700739.html) and ORCA–Scenario II (http://www.quia.com/quiz/744604.html) assessed online reading comprehension through a series of three related information requests. These online information requests were contained within an Internet treasure hunt designed by two fictitious seventh graders, James P. and Natasha R. from Australia, to invite students to use the Internet as part of a cooperative information exchange. Each instrument included 20 open-ended items constructed to measure aspects of reading comprehension while locating, critically evaluating, synthesizing, and communicating online information.

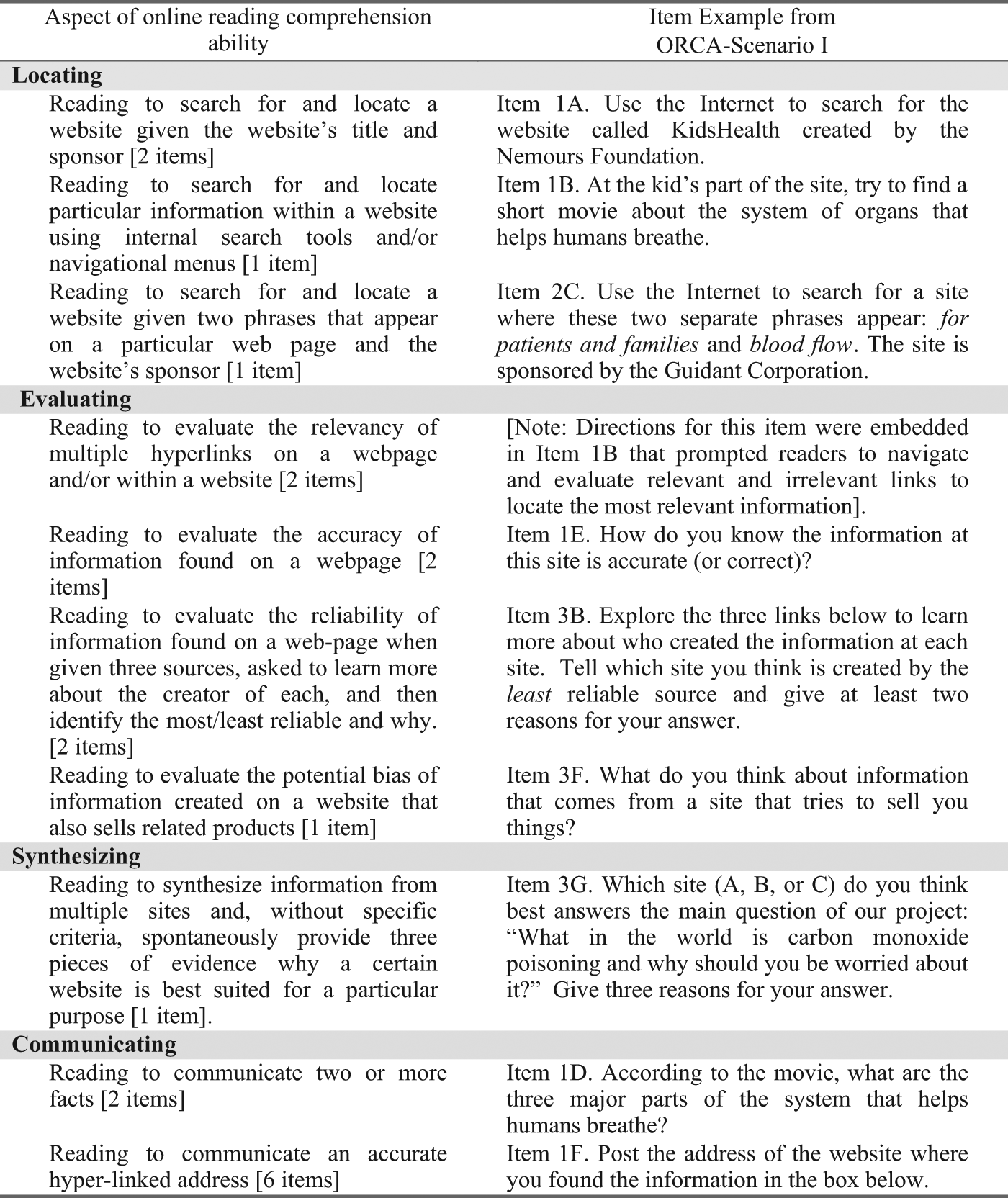

ORCA–Scenarios I and II demonstrated high internal consistency, with a reported Cronbach’s alpha of .918 and .909, respectively. To establish construct validity, task scores from both ORCA instruments were submitted to separate principal axis analyses with direct oblimin rotation that transforms factors to a simple structure where most of the loadings on any specific factor are small and a few loadings are as large as possible. An examination of the pattern matrix showed that task scores for both ORCA–Scenario I and ORCA–Scenario II clustered together at a satisfactory level and were predicted by one general factor that explained, respectively, 51.7% and 44.1% of the variance in scores in online reading comprehension. Figure 1 summarizes the task-specific operational definitions for each specific online reading skill and its associated item examples as measured in ORCA–Scenario I.
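For readers less familiar with the internal consistency statistic reported above, the following is a minimal sketch of how Cronbach's alpha can be computed from item-level rubric scores. The function name `cronbach_alpha` and the small data set are hypothetical and are not taken from the study; the sketch assumes sample variances and complete data.

```python
from statistics import variance

def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of rows (one row per student, one column per item)."""
    n_items = len(item_scores[0])
    # Sample variance of each item across students.
    item_vars = [variance([row[j] for row in item_scores]) for j in range(n_items)]
    # Sample variance of each student's total score.
    total_var = variance([sum(row) for row in item_scores])
    return (n_items / (n_items - 1)) * (1 - sum(item_vars) / total_var)

# Hypothetical rubric scores (0-3) for five students on four items.
scores = [
    [3, 3, 2, 3],
    [2, 2, 2, 1],
    [0, 1, 0, 0],
    [1, 1, 1, 2],
    [3, 2, 3, 3],
]
alpha = cronbach_alpha(scores)
print(f"Cronbach's alpha = {alpha:.3f}")
```

Alpha approaches 1 when the items rise and fall together across students, which is the pattern implied by the values of .918 and .909 reported for the two ORCA instruments.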

Figure 1. Summary of online reading skills and strategies and associated item examples as measured in Online Reading Comprehension Assessment (ORCA)–Scenario I

The guiding question for the first treasure hunt (ORCA–Scenario I) was, “What in the world is carbon monoxide poisoning and why should you be worried about it?” The three related activities required students to use the Internet to learn more about the respiratory system and the dangers of carbon monoxide poisoning. Tasks 1 and 2 involved primarily locating and communicating skills, and Task 3 involved a range of critical evaluation and synthesis skills. More specifically, in Task 2, for example, readers were asked to locate particular animations about the lungs and the heart, communicate the answers to questions about how carbon monoxide affects your body, and explain how they knew the information was accurate. Later, in Task 3, they were asked to explore three links to learn more about who created the information at each site, synthesize the main ideas and author’s claims found within three documents, and then communicate the addresses of the sites they believed to be most reliable and least reliable, along with an integrated explanation of their reasoning.

ORCA–Scenario II was framed as James’s and Natasha’s second online science project and required students to learn a few more important things about the respiratory system and the dangers of carbon monoxide poisoning. Since ORCA–Scenarios I and II were designed as parallel instruments, the guiding question and the item format remained almost identical across both instruments, but participants were asked to visit different websites to locate and evaluate different information each time.

Instrument Administration and Scoring

The prior knowledge instrument and parallel forms of the ORCA–Scenario online reading assessment instrument were administered to participants using a standardized protocol during two 75-min sessions scheduled 16 weeks apart in the spring of 2006. Students first met individually with a researcher for 15 min to complete the prior knowledge instrument and Internet use survey. Then, ORCA–Scenario I was presented to small groups of 6–9 participants within an online quiz interface. Students worked individually at their own computers and were given up to 60 min to complete the three tasks. Each student’s online reading actions were recorded and saved in a real-time video recording using screen capture software called Camtasia (http://www.camtasia.com). Students’ typed responses were automatically collected within the Quia interface (http://www.quia.com) and later exported into an Excel spreadsheet for analysis. Standardized reading scores were also collected from district records. After students completed the tasks and their work was saved, they were told that James and Natasha (the fictitious students) would use their answers to design a second Internet treasure hunt. Students were told they would be invited back to explore the second treasure hunt after it was completed later in the school year.

Approximately 16 weeks later, students returned to complete the same measure of prior knowledge and the parallel ORCA–Scenario II. The duration between the two reading sessions was originally planned to be much shorter, but participating school districts required a longer interlude to allow time for state standardized testing preparation, administration, and makeup sessions to occur. The duration between the ORCA–Scenarios I and II might have presented a threat to the internal validity of the instruments if students had recently studied the topic or used the Internet to research a topic similar to the one integrated into both online reading measures. However, interviews with classroom teachers indicated science instruction related to the human body was covered in the fall semester, prior to the administration of either instrument. In addition, paired t tests showed no significant change in prior knowledge scores from Time 1 to Time 2, t(108) = −0.887, p > .05. Consequently, prior knowledge scores from the second administration were used in the analysis because of their closer proximity to the administration of the dependent variable.
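The paired comparison of prior knowledge scores across the two sessions can be illustrated with a short sketch. The scores below are hypothetical, the helper name `paired_t` is an assumption, and the sign convention (Time 1 minus Time 2) is also assumed, since the source does not state it.

```python
from math import sqrt
from statistics import mean, stdev

def paired_t(time1, time2):
    """Return (t, df) for a paired-samples t test on time1 minus time2."""
    diffs = [a - b for a, b in zip(time1, time2)]
    n = len(diffs)
    # t is the mean difference divided by its standard error.
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1

# Hypothetical prior knowledge scores for six students at two time points.
time1 = [12.0, 20.5, 8.0, 31.0, 15.5, 24.0]
time2 = [13.0, 19.5, 9.5, 30.0, 17.0, 24.5]
t_stat, df = paired_t(time1, time2)
print(f"t({df}) = {t_stat:.3f}")
```

With 109 paired scores, the degrees of freedom are 109 − 1 = 108, which matches the t(108) reported above.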

To score the online reading comprehension items, the researcher and one graduate student examined process data from each student’s online reading video and product data from his or her corresponding typed responses to each task. Scores were independently assigned to each student’s responses using a four-point (0–3) ORCA–Scenario Scoring Rubric (see the appendix) with possible total raw scores ranging from 0 to 60 points. A parallel scoring rubric using the same scoring system with slightly different task-specific criteria was used to score student data associated with ORCA–Scenario II. To establish interrater reliability for items on ORCA–Scenario I and ORCA–Scenario II, two independent raters scored all of the items on 25 assessments for both Scenario I and Scenario II (a 22% subset of the 109 assessments). After a training period with the researcher and one other graduate assistant scoring five practice sets of scores, the two scorers independently rated 20 sets of responses (selected randomly from the whole sample) according to the rubric. Interrater reliability estimates using Cohen’s kappa coefficients were κ = .96 for both ORCA–Scenario measures. After reliability was established on this 22% subset for each instrument, the researcher then scored the remaining assessments independently.
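As an illustration of the interrater statistic, the sketch below computes Cohen's kappa for two raters who assign rubric scores of 0 to 3. The ratings and the function name `cohens_kappa` are hypothetical; the sketch uses the unweighted form of kappa, and the source does not say whether a weighted variant was used.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two equal-length lists of category labels."""
    n = len(rater_a)
    # Proportion of responses on which the two raters agree exactly.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    return (observed - expected) / (1 - expected)

# Hypothetical rubric scores (0-3) from two raters on ten responses.
rater_a = [0, 1, 2, 3, 3, 2, 1, 0, 2, 3]
rater_b = [0, 1, 2, 3, 3, 2, 1, 0, 1, 3]
kappa = cohens_kappa(rater_a, rater_b)
print(f"kappa = {kappa:.3f}")
```

Kappa corrects raw agreement for agreement expected by chance, so a value of .96 indicates the two scorers applied the rubric almost identically.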

Analysis

Hierarchical multiple regression analysis (Tabachnick & Fidell, 2001) was employed to determine whether or not performance on one measure of online reading comprehension significantly predicted performance on a second, parallel measure of online reading comprehension over and above (a) offline reading comprehension and (b) topic-specific prior knowledge. Based on theoretical importance, the three independent variables were entered sequentially in the following order: offline reading comprehension, prior knowledge, and online reading comprehension (ORCA–Scenario I). The composite score generated from the second measure of online reading comprehension (ORCA–Scenario II) was used as the dependent variable. Correlations and beta weights between the relevant coefficients were calculated to determine the extent to which the set of variables and each independent variable significantly contributed to performance on the ORCA–Scenario II. Additional follow-up tests were conducted to test for any interaction effects between the predictor variables.

Results

Scores for the three predictor variables in the study were collected for 118 cases, but 9 students were absent during the administration of ORCA–Scenario II. As a result, 9 cases were removed with listwise deletion, leaving a sample size of 109. Green’s (1991) formula for calculating sample size with a regression analysis that tests the overall correlation and three individual predictors suggests n = 109 is appropriate.
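Green's (1991) guidance is commonly summarized as two rules of thumb: N ≥ 50 + 8m for testing the overall multiple correlation and N ≥ 104 + m for testing individual predictors, where m is the number of predictors. The sketch below applies both rules to the three-predictor model; the function name `green_minimum_n` is hypothetical, and taking the larger of the two thresholds is an assumption about how the rules were combined.

```python
def green_minimum_n(num_predictors):
    """Minimum sample size under Green's (1991) two rules of thumb."""
    overall = 50 + 8 * num_predictors      # test of the overall multiple correlation
    individual = 104 + num_predictors      # tests of individual predictors
    return max(overall, individual)

required = green_minimum_n(3)
sample_size = 109
print(f"required N = {required}, actual N = {sample_size}, adequate = {sample_size >= required}")
```

For three predictors the two rules give 74 and 107, so the final sample of 109 meets both.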

Prior to the statistical analysis, all scores for the three independent variables and one dependent variable were examined for fit between their distributions and the assumptions of regression analysis. Examination of the residual plots determined variable distributions satisfied assumptions of linearity, homoscedasticity, and multivariate normality (see Tabachnick & Fidell, 2001). In addition, collinearity diagnostics of the bivariate correlations between the variables indicated no multicollinearity concerns.

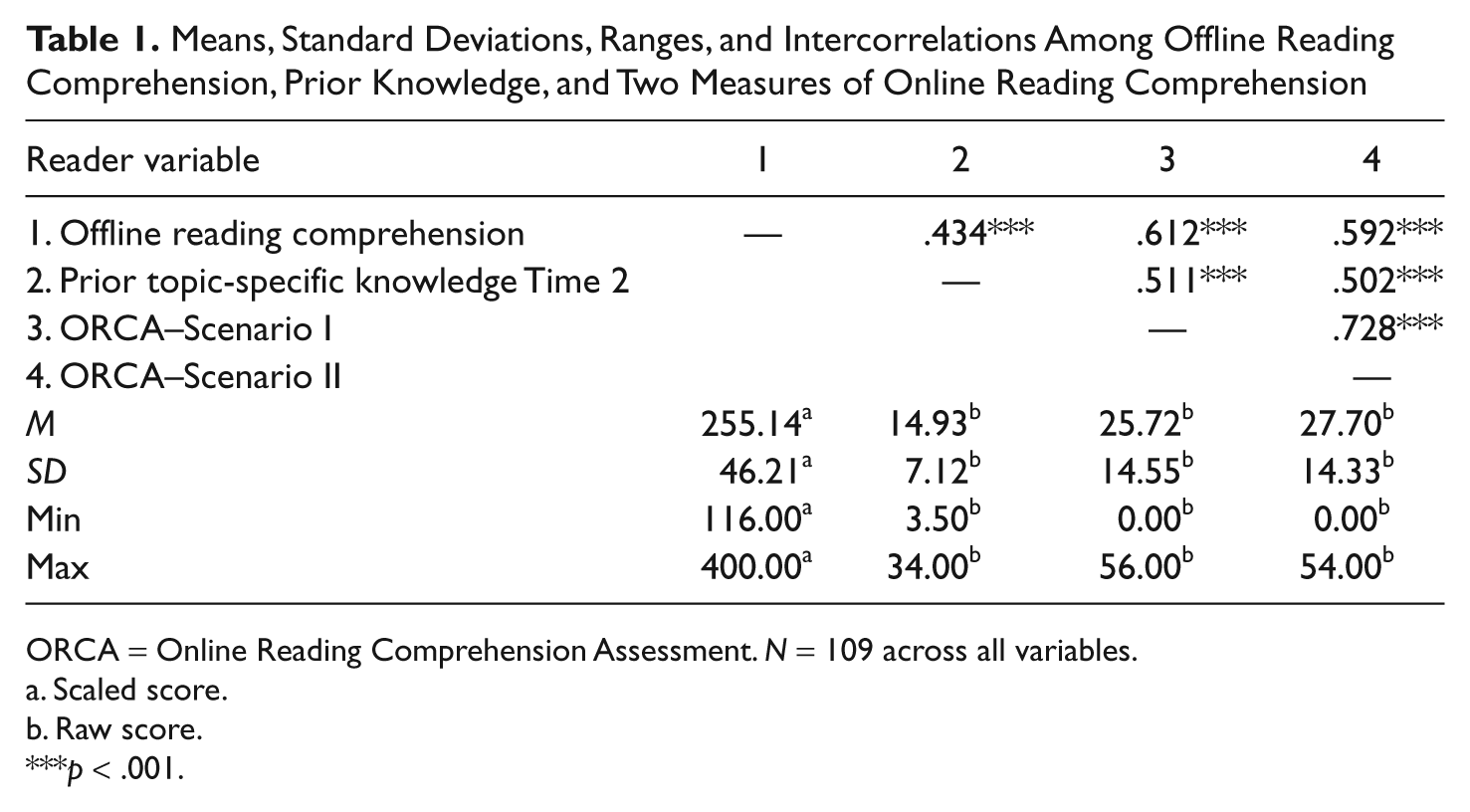

The means, standard deviations, ranges, and intercorrelations for all variables are presented in Table 1. Participants’ scaled scores for the standardized measure of offline reading comprehension ranged from 116 to 400, with possible scores ranging from 100 to 400. Raw scores on the prior knowledge instrument ranged from 3.5 to 40 points, and raw scores on ORCA–Scenarios I and II ranged from 0 to 56 out of possible 60 points. Students who received a score of 0 on one or both of the measures of online reading comprehension were unable to complete any of the tasks successfully. Typically, these students were not able to generate appropriate search terms to locate any of the relevant websites for any of the tasks, even with multiple search attempts. Consequently, they were not able to evaluate, synthesize, or communicate relevant information from the websites to gain points on any of the items.

Table 1. Means, Standard Deviations, Ranges, and Intercorrelations Among Offline Reading Comprehension, Prior Knowledge, and Two Measures of Online Reading Comprehension

ORCA = Online Reading Comprehension Assessment. N = 109 across all variables.

Scaled score.

Raw score.

p < .001.

Bivariate correlation statistics in Table 1 show that offline reading comprehension correlated with both measures of online reading comprehension, r(109) = .612, p < .001 for ORCA–Scenario I and r(109) = .592, p < .001 for ORCA–Scenario II. Offline reading comprehension also correlated with prior topic knowledge, r(109) = .434, p < .001. Prior topic knowledge correlated with online reading comprehension, r(109) = .511, p < .001 for ORCA–Scenario I and r(109) = .502, p < .001 for ORCA–Scenario II. Finally, scores on ORCA–Scenario I correlated with scores on ORCA–Scenario II, r(109) = .728, p < .001.

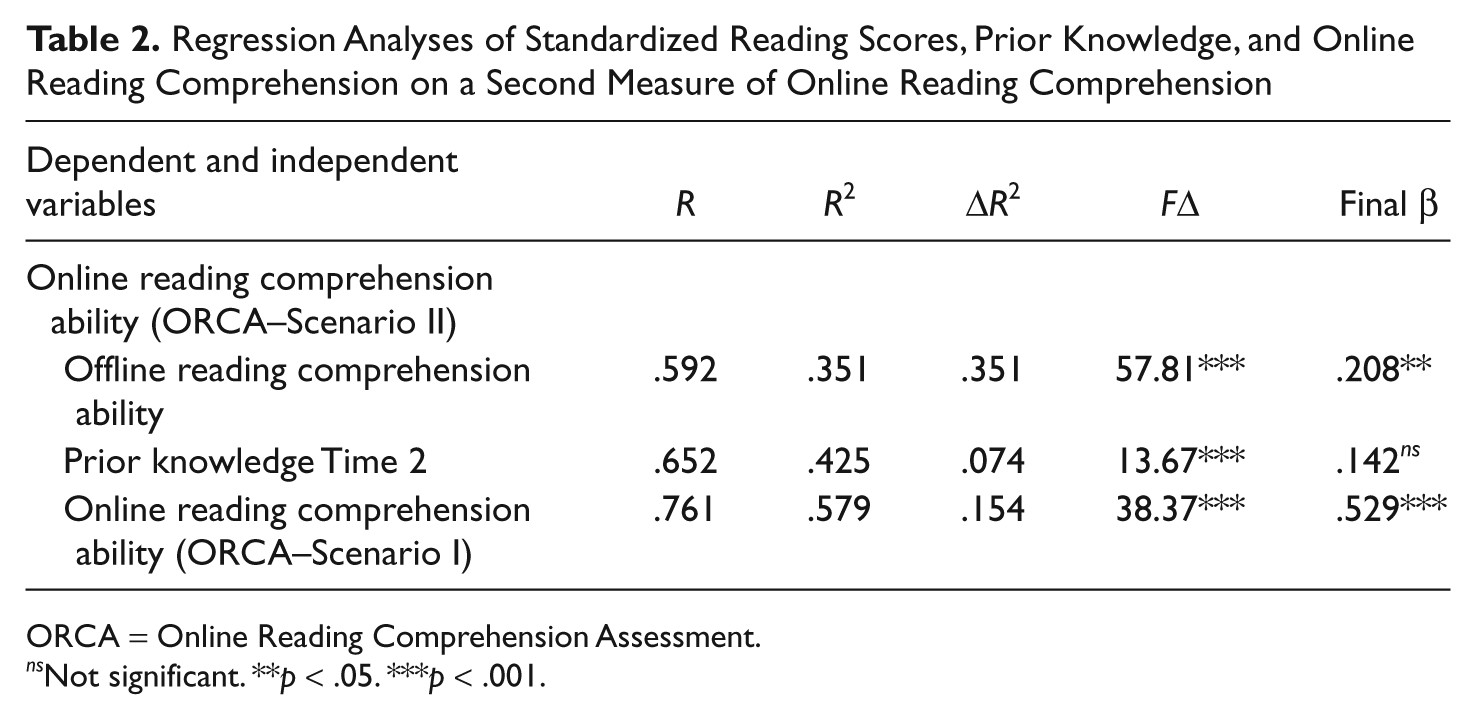

Results of the hierarchical regression analysis (see Table 2) with three predictor variables indicated, first, that offline reading comprehension, as measured by the combined scale score on a statewide sixth grade standardized reading assessment, explained 35.1% of the variance in online reading comprehension, which was significant, F change (1, 107) = 57.812, p < .001. The multiple R was .592, and the final beta for offline reading comprehension in the model was .208, t(107) = 2.549, p < .05.

Table 2. Regression Analyses of Standardized Reading Scores, Prior Knowledge, and Online Reading Comprehension on a Second Measure of Online Reading Comprehension

ORCA = Online Reading Comprehension Assessment.

ns Not significant. **p < .05. ***p < .001.

After offline reading comprehension was accounted for, prior knowledge explained an additional 7.4% of the variance in online reading comprehension, which was significant, F change (1, 106) = 13.67, p < .001. The multiple R was .652. It is interesting that although prior knowledge explained a significant additional amount of the variance in the model, the final beta for prior knowledge in the model was .142, t(106) = 1.907, p = .059, which was not statistically significant. This suggested that topic-specific prior knowledge makes a strong joint contribution to explaining the scores in ORCA–Scenario II but does not make a strong unique contribution relative to offline reading comprehension and performance on ORCA–Scenario I.

After both offline reading comprehension and prior knowledge were accounted for, scores on the first measure of online reading comprehension (ORCA–Scenario I) explained an additional 15.4% of the remaining variance in scores on the second measure of online reading comprehension (ORCA–Scenario II), which was significant, F change (1, 105) = 38.37, p < .001. The multiple R was .76, and the final beta for ORCA Scenario I scores was .529, t(105) = 6.194, p < .001.
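The F change statistics for the three steps can be approximately recovered from the reported increments in explained variance. The sketch below uses the standard formula F = (ΔR² / k) / ((1 − R²) / (N − p − 1)), where k is the number of predictors added at a step and p is the number of predictors in the model after that step. The function name `f_change` is hypothetical, and because the inputs are the rounded percentages given in the text, the results only approximate the reported values.

```python
def f_change(delta_r2, r2_full, n, predictors_full, predictors_added=1):
    """F statistic and residual df for the change in R-squared at one step."""
    df_resid = n - predictors_full - 1
    f = (delta_r2 / predictors_added) / ((1 - r2_full) / df_resid)
    return f, df_resid

N = 109
# Increments in R-squared as reported in the text (rounded values).
steps = [
    ("offline reading comprehension", 0.351),
    ("prior knowledge", 0.074),
    ("ORCA-Scenario I", 0.154),
]

results = []
r2 = 0.0
for k, (name, delta) in enumerate(steps, start=1):
    r2 += delta  # cumulative R-squared after this step
    f, df = f_change(delta, r2, N, predictors_full=k)
    results.append((name, f, df))
    print(f"Step {k} ({name}): F change (1, {df}) = {f:.2f}, cumulative R^2 = {r2:.3f}")
```

The recomputed values fall within a few hundredths of the reported F change statistics, with the small differences attributable to rounding in the published percentages.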

These results indicated that performance on one measure of online reading comprehension accounted for a significant amount of unique variance in the scores on a second measure of online reading comprehension over and above that accounted for by offline reading comprehension and prior knowledge, in that order. Overall, for this sample of 109 seventh grade students, scores on a standardized reading comprehension test, a six-item measure of prior knowledge, and one measure of online reading comprehension explained 57.9% of the variance in scores on a second parallel measure of online reading comprehension. From a power perspective in the field of education (Cohen, 1988), this explanation had a large effect of .33.

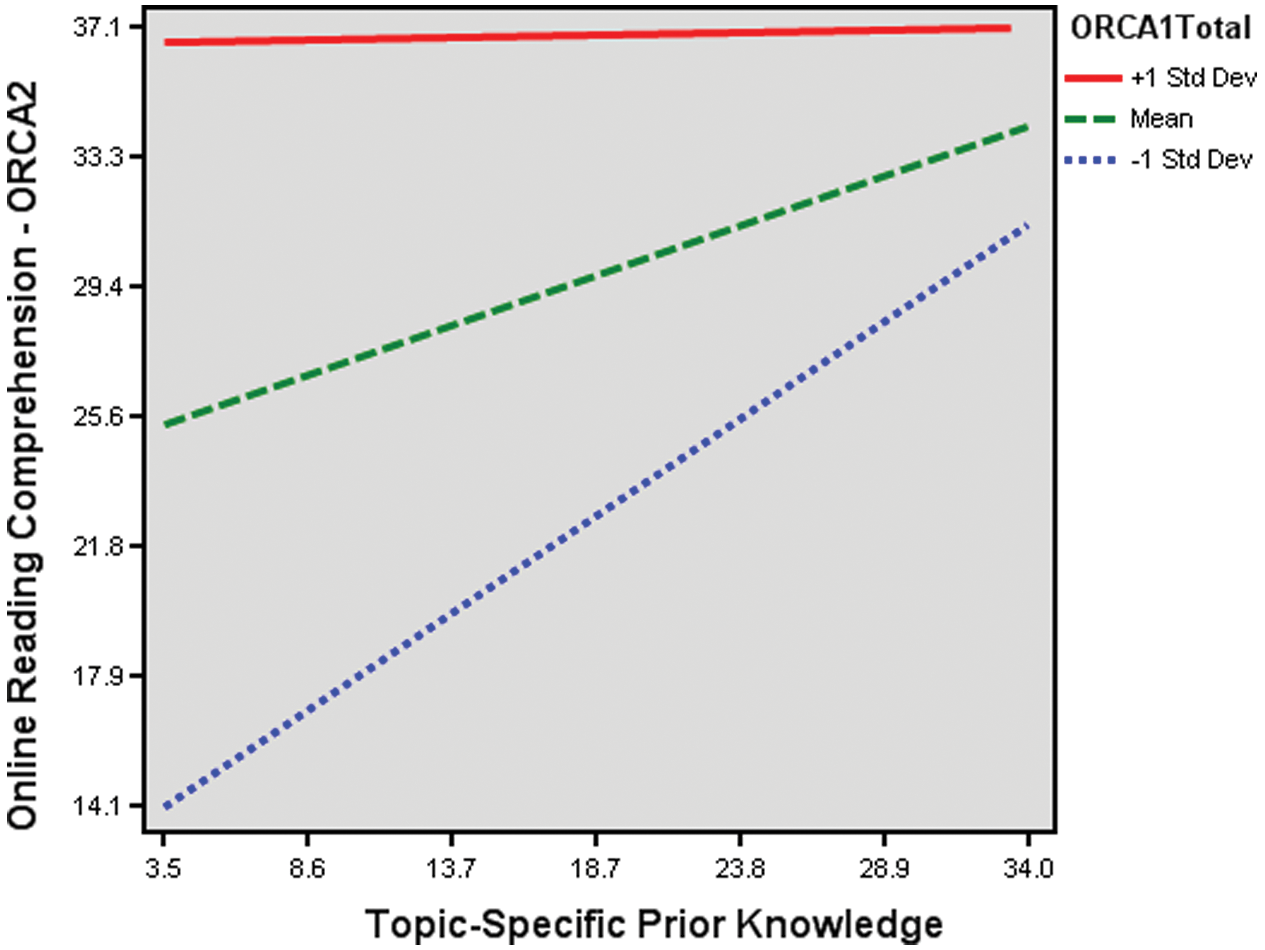

Analyses were also conducted to test for any interaction effects. For these analyses, the three independent variables were first centered to avoid problems of multicollinearity (Tabachnick & Fidell, 2001). Centering was accomplished by subtracting the mean from each variable so that each had a mean of zero (Aiken & West, 1991). Then the researcher computed interaction terms for each of the centered variables and ran a regression analysis that included first and second order terms for each variable. Results of these analyses found no significant interaction between offline reading comprehension and prior knowledge, t(105) = −0.321, p = .749, and no significant interaction between offline reading comprehension and the first measure of online reading comprehension (ORCA–Scenario I), t(105) = −0.467, p = .642. However, there was a significant negative interaction effect between prior knowledge and online reading comprehension (ORCA–Scenario I), t(105) = −2.28, p < .05.

When entered into the whole regression model as a fourth predictor variable, after statistically controlling for the significant main effects of offline reading comprehension, prior knowledge, and online reading comprehension (ORCA–Scenario I), the interaction between prior knowledge and online reading comprehension explained an additional 1.9% of variance in the model, which was significant, F change (1, 104) = 4.782, p < .05, multiple R = .77. The final beta for the interaction effect in the model was –.001, t(104) = −2.187, p < .05.
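The centering step and the construction of an interaction term can be sketched as follows. The scores are hypothetical and the helper names `center` and `pearson` are assumptions; the sketch only illustrates why centering is recommended, namely that it typically lowers the correlation between a predictor and its product term.

```python
from math import sqrt

def center(values):
    """Subtract the mean so the centered variable has a mean of zero."""
    m = sum(values) / len(values)
    return [v - m for v in values]

def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    xc, yc = center(x), center(y)
    num = sum(a * b for a, b in zip(xc, yc))
    den = sqrt(sum(a * a for a in xc) * sum(b * b for b in yc))
    return num / den

# Hypothetical scores for seven students (not data from the study).
prior_knowledge = [3.5, 10, 15, 20, 25, 30, 40]
orca1 = [5, 20, 12, 35, 28, 50, 44]

# Interaction term built from raw scores versus centered scores.
raw_product = [p * o for p, o in zip(prior_knowledge, orca1)]
pk_c, orca1_c = center(prior_knowledge), center(orca1)
centered_product = [p * o for p, o in zip(pk_c, orca1_c)]

r_raw = pearson(prior_knowledge, raw_product)
r_centered = pearson(pk_c, centered_product)
print(f"r(predictor, product): raw = {r_raw:.2f}, centered = {r_centered:.2f}")
```

In this hypothetical example the raw product term is almost collinear with prior knowledge, whereas the centered product term is nearly uncorrelated with it.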

Follow-up analyses were conducted to assess the simple effects of prior knowledge and online reading comprehension skills at high and low levels (using rescaling procedures recommended by Aiken & West, 1991). Results indicated the following:

Among students with high levels of online reading comprehension skills (as measured by performance on ORCA–Scenario I), prior knowledge did not appear to significantly affect performance on ORCA–Scenario II, t(106) = 0.079, p = .937.

Among students with average levels of online reading comprehension skills on ORCA–Scenario I, prior knowledge did not appear to significantly affect performance on ORCA–Scenario II, t(106) = 1.965, p = .052.

Among students with low levels of online reading comprehension skills on ORCA–Scenario I, prior knowledge had a positive and significant effect on performance on ORCA–Scenario II, t(106) = 2.898, p < .01.

Figure 2 shows three separate regression lines representing the regression of online reading comprehension performance on ORCA–Scenario II on prior knowledge for the three levels of online reading comprehension performance on ORCA–Scenario I. The graph shows the nature of the interaction. For students with higher online reading comprehension skills (students with scores equal to 1 standard deviation above the mean score on ORCA–Scenario I), topic-specific prior knowledge had the least effect on ORCA–Scenario II scores. The flat solid line at the top of Figure 2 represents this lack of effect. Conversely, for students with lower online reading comprehension skills (students with scores equal to 1 standard deviation below the mean score on ORCA–Scenario I), prior knowledge had the greatest effect on their performance on ORCA–Scenario II. The dotted line with the greatest slope at the bottom of Figure 2 represents this effect. Finally, for students with average online reading comprehension skills (students with scores close to the mean score on ORCA–Scenario I), prior knowledge had some effect (although not significant) on their performance on ORCA–Scenario II, as illustrated by the dashed line with the medium slope in the middle of the figure.

Figure 2. A visual representation of the interaction effect when online reading comprehension performance on ORCA–Scenario II is regressed on prior knowledge for three levels of online reading comprehension performance on ORCA–Scenario I

In other words, students with higher levels of online reading ability tended to have higher ORCA–Scenario II scores, regardless of their level of topic-specific knowledge, whereas students with lower levels of online reading ability tended to have higher ORCA–Scenario II scores only if they came to the online reading task with higher levels of topic-specific prior knowledge. These findings suggest that for this sample of seventh graders, after statistically controlling for scores on offline reading comprehension, higher levels of online reading comprehension skills may help compensate for lower levels of prior knowledge when adolescents read on the Internet.
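The logic of the simple effects analysis can be illustrated with a brief sketch. In a model with centered predictors and a product term, the slope of prior knowledge at a given level of the moderator equals b_prior + b_interaction × moderator value. All coefficients and the standard deviation below are hypothetical values chosen only to reproduce the direction of the reported pattern; they are not estimates from the study.

```python
def simple_slope(b_prior, b_interaction, moderator_value):
    """Slope of prior knowledge at a given (centered) level of the moderator."""
    return b_prior + b_interaction * moderator_value

# Hypothetical unstandardized coefficients and moderator SD (assumptions).
b_prior = 0.20          # effect of prior knowledge at the mean of ORCA-Scenario I
b_interaction = -0.01   # negative interaction, as in the reported direction
sd_orca1 = 12.0         # standard deviation of centered ORCA-Scenario I scores

levels = {"low": -sd_orca1, "average": 0.0, "high": sd_orca1}
slopes = {label: simple_slope(b_prior, b_interaction, w) for label, w in levels.items()}
for label, slope in slopes.items():
    print(f"{label} online reading skill: slope of prior knowledge = {slope:.2f}")
```

Because the interaction coefficient is negative, the slope of prior knowledge is steepest one standard deviation below the mean of ORCA–Scenario I and flattest one standard deviation above it, which mirrors the three regression lines described for Figure 2.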

Discussion

This study sought to evaluate whether or not online reading comprehension played a significant and unique role in seventh graders’ online reading performance over and above students’ offline reading comprehension and prior knowledge. This section discusses the findings in more detail and their implications for literacy research, theory, and practice.

Theorizing About the Significant Correlation Between Offline Reading Comprehension and Online Reading Comprehension Found in This Study

Results of the regression analyses suggested the skills and strategies required to read on the Internet might be validly predicted by a combination of offline reading comprehension, topic-specific prior knowledge, and online reading comprehension. The finding that both offline and online reading comprehension skills made significant and independent contributions to performance on a series of online reading tasks is consistent with emerging evidence that offline and online reading comprehension may not be isomorphic processes (Leu et al., 2007). More specifically, these findings support other research that suggests the processes skilled readers use to comprehend online text are both similar to and more complex than what previous research suggests is required to comprehend offline informational text (see Afflerbach & Cho, 2008; Coiro & Dobler, 2007; Hartman et al., 2010; Kingsley, 2011).

However, the present study also found positive and significant intercorrelations between offline reading comprehension and online reading comprehension on ORCA–Scenario I (r = .612, p < .001) and on ORCA–Scenario II (r = .592, p < .001). These relatively large positive correlations between online and offline reading comprehension are contrary to findings from a previous study (Leu et al., 2005) that found no correlation between scores on the same measure of offline reading comprehension (e.g., standardized reading achievement scores) and online reading comprehension assessed with a series of online reading tasks situated in a blog interface (e.g., ORCA-Blog).

Understandably, one might wonder how two presumably valid and reliable instruments designed to assess aspects of the same online reading processes (e.g., online locating, evaluating, and communicating) could have such dramatically different relationships with the same standardized measure of offline reading comprehension. One possibility is that conceptions of online reading comprehension may very well be dependent on the complexity of the task. For example, as the structure of information in online reading environments moves from simple paragraphs on a page to complex lists of search engine results, and from multileveled information websites to highly populated blog interactions, it may be that each new text structure increases the likelihood that specialized sets of online reading strategies (i.e., new literacies) might be applied in ways that appear less and less similar to offline reading strategies. If this were the case, it might very well serve to explain how measures of similar online reading processes situated in differentially complex online reading environments (e.g., a blog versus an online quiz interface) could have very different relationships with the same standardized measure of offline reading achievement.

Other literacy researchers (Guthrie, Weber, & Kimmerly, 1993; Kirsch & Mosenthal, 1990) have proposed that different reading-to-do tasks in offline documents (e.g., charts, maps, and schedules) could be conceptualized as a series of ordered tasks that place lower or higher demands on the reader on the basis of document complexity and processing conditions. In the present study, the different correlations between online and offline task performance hinted at the possibility that online reading tasks may similarly fall on some sort of continuum of task difficulty based on new dimensions of text complexity and processing conditions associated with each online context. Thus, it might be possible that the nature of items and associated reading contexts in the ORCA–Scenario tasks were of less complexity (and thus more correlated with offline reading tasks) than the items and contexts encountered in the ORCA-Blog measure (and thus less correlated with offline reading tasks).

The differences in task items and reading contexts between the ORCA-Blog and ORCA–Scenario measures prompt the need for future conversations about how subtle differences in assessment designs might influence the level of ecological validity associated with the range of online reading tasks one might encounter on the Internet. Of course, much more work is necessary to better understand if this may indeed be the case. Nevertheless, conceiving of online reading comprehension as heavily dependent on online task complexity and processing conditions may help us begin to appreciate the infinite and continuously evolving range of tasks and texts that students may encounter on the Internet. Likewise, since it is virtually impossible to develop assessments that tap into all of these different tasks and texts in one instrument while keeping up with the rapid evolution of new texts, it makes us more aware of the challenges we face with respect to developing ecologically valid and reliable measures of new literacies in ways that can inform both research and practice on a regular basis.

Interpreting the Combined and Independent Contributions of Both Offline and Online Reading Comprehension in the Regression Model

Findings from the regression analyses can also inform emerging theories of online reading comprehension that seek to more precisely characterize the skills and strategies that independently contribute to online reading comprehension. At least four different scenarios can be offered as tentative interpretations of what might characterize the combined and independent contributions of both offline and online reading comprehension in the model.

A first interpretation of the regression model could be that some online reading comprehension skills and strategies might be similar to offline reading comprehension but that others are unique to online reading. This possibility might be referred to as the “some are similar and others are unique” interpretation. For example, previous research has suggested that online locating processes such as accessing search engines, generating reasonable search terms, understanding website addresses, navigating multilevel websites, and using new information and communication tools might require new online reading comprehension strategies that are substantially different from offline reading comprehension strategies (Afflerbach & Cho, 2009; Henry, 2006; Jacobson & Ignacio, 1997; Sutherland-Smith, 2002). Consequently, performance on ORCA–Scenario items that tapped into online locating and communicating processes might be best predicted by knowing a reader’s level of online reading comprehension.

Likewise, one could argue that many online critical evaluation processes used to read more deeply within one or more texts might be quite similar to the range of critical reading processes highlighted as central to offline text comprehension (see Fitzgerald, 1997; McLaughlin & DeVoogd, 2003). In these cases, variance in performance on ORCA–Scenario items that measured online critical evaluation and synthesis skills might be best predicted by knowing a reader’s score on a measure of offline comprehension. Consequently, if each online reading skill required either new (online) or conventional (offline) reading processes but not both, offline comprehension ability would account for the variance in scores on some ORCA–Scenario II items and online comprehension ability would account for the variance in scores on other items in ORCA–Scenario II.

A second interpretation of the regression findings might be that many online reading comprehension skills have (a) something in common with offline reading comprehension skills and (b) something that reflects new or more complex dimensions of online reading comprehension skills. This possibility might be referred to as the “many are simultaneously similar and more complex” interpretation. In the present study, for example, many of the websites did not follow conventional offline text formats for citing sources, such as placing author and publisher information in a consistent location. As a result, readers attempting to determine the reliability of an online text in the ORCA–Scenarios needed to apply their new knowledge of how websites are organized to locate details about the information’s author and/or sponsor. Only those readers who could successfully locate relevant information about the author or the publishing body on the website (i.e., online reading skills) had an opportunity to apply more conventional offline practices to evaluate the source’s level of credibility (i.e., offline reading skills). Similarly, most offline information texts used in classrooms are not embedded with commercial interests to the extent that online information texts may be (Fabos, 2004). Consequently, in the ORCA–Scenario tasks, readers needed to first recognize the online techniques website designers used to embed commercial advertising within informational websites they encountered (i.e., online reading skills) before they could consider how that advertising might have influenced the way information was received (i.e., offline reading skills).

This second explanation of the regression model is consistent with earlier qualitative evidence that suggested successful Internet reading experiences appeared to simultaneously require both similar and more complex applications of offline reading processes (see Coiro & Dobler, 2007; Schmar-Dobler, 2003). Consequently, if each online reading skill required some combination of both new (online) and conventional (offline) reading processes, offline comprehension ability would account for some proportion of the variance and online comprehension ability would account for a unique proportion of variance in scores on each item in ORCA–Scenario II.

A third interpretation of the model combines aspects of the first two scenarios to suggest that some online reading skills might be unique and required only to comprehend online texts (e.g., querying electronic search engines) whereas other tasks and complex texts might prompt gradations of online reading skills that are sometimes more similar (e.g., reading within one webpage) and sometimes less similar (e.g., making inferences about hyperlinked addresses within a list of search engine results) to offline reading comprehension skills. This possibility might be referred to as the “some are similar, some are more complex, and some are unique” interpretation. This interpretation again calls on previous work with levels of offline document literacy (Kirsch & Mosenthal, 1990; Mosenthal, 1996) to propose the possibility that the complexity of online reading comprehension might be characterized by the extent to which online text structures and processing conditions are more and less similar to offline reading comprehension skills. In this scenario, (a) different proportions of offline and online reading comprehension ability would account for differences in ORCA–Scenario items that required an overlap of offline and online reading skills and (b) online reading comprehension ability would independently account for differences in ORCA–Scenario items that required primarily new online reading skills.

Finally, a fourth and slightly different interpretation of the regression model might be that the unique contribution of online reading comprehension ability may be explained, at least in part, by the different ways that offline and online reading comprehension were assessed in this study and, as a result, the skills each ORCA–Scenario instrument had the ability to tap. This explanation might be referred to as the “many online reading skills involve high-level strategic processes not fully captured in multiple-choice assessments” interpretation. That is, although standardized multiple-choice measures of reading achievement tend to capture aspects of offline reading comprehension that are reliable and correlated with other tests of offline reading skills (Pearson & Hamm, 2005), they tend to ignore issues related to prior knowledge (Johnston, 1983) and are less apt to include open-ended performance-based items that tap into the strategic processes skilled readers use before, during, and after they respond to a comprehension question (Pearson & Hamm, 2005; Valencia, Hiebert, & Afflerbach, 1994).

Thus, if online reading comprehension ability is characterized by high levels of strategic processing (see Coiro & Dobler, 2007; Hartman et al., 2010), it is possible that any multiple-choice measure of reading comprehension (offline or online) might struggle to capture all of the variance accounted for by performance-based process items that might be more validly matched to constructivist theories of online reading comprehension. This interpretation, of course, contributes to important debates among literacy researchers who have historically critiqued multiple-choice tests as not being able to capture the higher-level literacy abilities needed for participation in real-world literacy applications (see, e.g., Darling-Hammond & Wise, 1985; Johnston, 1983; Pearson & Hamm, 2005).

In summary, these findings raise a number of unanswered questions that suggest we have only scratched the surface with regard to understanding the overlapping and complex relationship between offline and online reading comprehension ability. And it is not surprising that the issue becomes even more complex when we consider the complicated role that prior knowledge appeared to play in online reading comprehension in this study, as discussed next.

Interpreting the Interaction Between Prior Knowledge and Online Reading Comprehension in the Regression Model

Results of the interaction effect suggested that although topic-specific prior knowledge played a significant role in online reading comprehension among readers with low levels of online reading skills, prior knowledge did not appear to influence online reading comprehension performance among readers with average and high levels of online reading skills. This finding is not in line with evidence from a large body of work that documents the important role that prior knowledge plays in offline reading comprehension (e.g., Anderson & Pearson, 1984; Bransford & Johnson, 1972; Kintsch, 1988; Means & Voss, 1985) and hypertext comprehension (e.g., Dillon & Gabbard, 1998; Lawless & Kulikowich, 1996; MacGregor, 1999) for all readers. Consequently, something unique may be taking place as low-knowledge readers interact with and process hyperlinked text in open digital spaces.
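An interaction of this kind is commonly probed by computing simple slopes in a moderated regression. The following is a minimal sketch on synthetic data, not the study's data or coefficients. The variable names (`prior`, `online`, `outcome`), the generating weights, and the choice of one standard deviation below and above the mean as "low" and "high" levels of online reading skill are all assumptions made for illustration.

```python
import numpy as np


def fit_moderated_regression(prior, online, outcome):
    """Ordinary least squares fit of
    outcome = b0 + b1*prior + b2*online + b3*(prior*online).
    Returns the coefficient array [b0, b1, b2, b3]."""
    design = np.column_stack(
        [np.ones_like(prior), prior, online, prior * online]
    )
    coef, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    return coef


def simple_slope(coef, online_level):
    """Slope of the outcome on prior knowledge at a fixed level of
    online reading skill: b1 + b3 * online_level."""
    return coef[1] + coef[3] * online_level


# Synthetic, standardized scores (hypothetical; the sample size simply
# mirrors the N reported in the abstract).
rng = np.random.default_rng(0)
n = 109
prior = rng.normal(size=n)
online = rng.normal(size=n)

# Assumed generating model with a negative interaction weight, so that
# prior knowledge matters less as online reading skill increases.
outcome = (
    0.3 * prior
    + 0.6 * online
    - 0.3 * prior * online
    + rng.normal(scale=0.1, size=n)
)

coef = fit_moderated_regression(prior, online, outcome)

# Probe the prior-knowledge slope at low, average, and high online skill.
levels = {
    "low": online.mean() - online.std(),
    "average": online.mean(),
    "high": online.mean() + online.std(),
}
slopes = {name: simple_slope(coef, level) for name, level in levels.items()}

for name, slope in slopes.items():
    print(f"prior-knowledge slope at {name} online skill: {slope:.3f}")
```

With a negative interaction weight, the prior-knowledge slope is steepest at the low level of online reading skill and shrinks toward zero at the high level. This is the general shape of the pattern reported here, although the magnitudes in this sketch are arbitrary.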

Indeed, in the present study, there was evidence to suggest that some readers with higher levels of online reading skills and lower levels of topic-specific prior knowledge performed as well as or better than some students with higher prior knowledge and lower levels of online reading skills on a series of online reading tasks. Traditionally, when reading offline texts, we would expect higher levels of prior knowledge to automatically facilitate comprehension and lower levels of prior knowledge to impede comprehension (e.g., Kintsch, 1988; Voss, Vesonder, & Spilich, 1980). However, a review of the online reading Camtasia videos suggested that some lower-knowledge students with higher online reading skills were able to use the Internet to quickly locate the background information they needed and then proceed with the online reading task. In these cases, it appeared that the Internet might introduce new possibilities for low-knowledge readers to quickly locate information to which they might not otherwise have access.

These findings are consistent with results from Bilal’s (2001) study that indicated failure in online reading tasks did not appear to be associated with domain knowledge, topic knowledge, or offline reading ability. In fact, Bilal found that four students with higher levels of domain knowledge, topic knowledge, and offline reading ability were unsuccessful in completing the online search requests, whereas nine students with lower levels of prior knowledge completed their searches with at least partial success. Bilal concluded that unsuccessful readers in her study were more accurately described as readers with lower levels of knowledge about how best to gather information within associative, nonlinear hypermedia systems as opposed to readers with lower levels of knowledge about the topics they encountered.

These findings are also consistent with Hill and Hannafin’s (1997) study that found students’ lack of prior knowledge of Internet text systems, more than knowledge of the topic, often impaired their use of metacognitive strategies while reading online. Specifically, students who lacked knowledge of how to orient themselves in three-dimensional spaces had difficulty assessing situational requirements and developing successful plans to complete the tasks. Again, prior knowledge of the topic appeared to take a back seat to knowledge of how to navigate and negotiate multiple online texts when reading for information on the Internet.

Differences in the relative importance of topic-specific knowledge for online reading tasks may also be a consequence of the specificity of the overall tasks required to complete ORCA–Scenarios I and II. That is, in open-ended search tasks, content knowledge appeared to be more useful than the development of advanced search strategies to support the selection of quality keywords to use in a keyword search (Walton & Archer, 2004). However, well-defined online reading tasks that require students to search for precise, concrete information make higher demands on readers’ search strategies and lower demands on readers’ prior knowledge (Schacter, Chung, & Dorr, 1998).

The ORCA–Scenario tasks in the present study asked students to locate a particular fact on a specific website that was sponsored by a certain organization. Informed by Schacter and colleagues’ (1998) findings, the specificity of these tasks may have required more strategic knowledge about how to narrow down a search topic to produce a reasonable set of search results as opposed to any type of topical knowledge about the actual content of the website. Similarly, the critical evaluation tasks required readers to make inferences from biographic information about a website’s creator or detect advertising embedded within an informational website. Again, these tasks might have required more strategic knowledge of the conditions under which online information appeared to be untrustworthy or misleading as opposed to any prior knowledge about the website’s topic. Consequently, the specificity of ORCA–Scenario tasks may have contributed to the decreased role of topic-specific knowledge in relation to online strategic knowledge required to respond to the tasks appropriately.

This explanation is also consistent with findings from studies that examined the role of strategy knowledge and various types of background knowledge (e.g., content, domain, and word knowledge) in offline text comprehension among students in Grades 3 and 6 (Rupley & Willson, 1996; Willson & Rupley, 1997). In these studies, a range of quantitative analyses of the different variables indicated that topic-specific background knowledge begins to diminish in importance at about Grade 4, when strategy knowledge begins to play a more important role. Furthermore, findings suggested that by Grade 6, strategy knowledge of how to read text and what to read in text begins to dominate the prediction of reading comprehension for both narrative and expository text (Willson & Rupley, 1997).

Overall, previous work and evidence from the present study lend preliminary support to the possibility that well-defined online locating tasks may require readers to interact with nonlinear texts in ways that deemphasize the influence of topic-specific prior knowledge on online reading performance. Obviously, these very preliminary findings require much more investigation. In fact, it is important to note that in this study, prior knowledge was narrowly defined as knowledge about six concepts related to the topics and tasks in one set of online reading experiences. There are many other types of prior knowledge that could have been investigated in this study, such as general world knowledge (Anderson & Pearson, 1984), discourse knowledge (Meyer et al., 1980), and domain knowledge (Alexander et al., 1991). More detailed examinations of the role of various prior knowledge types are now needed to better understand the possibilities of such an unusual finding.

Furthermore, if the relative importance of prior knowledge is indeed a function of the specificity of online tasks or the nonlinear nature of online texts, an important direction for future research would be to more systematically identify (a) the online texts and tasks that tend to demand higher levels of domain- and/or topic-specific prior knowledge as well as (b) the online texts and tasks that might require students to call on alternative sources of knowledge unique to the Internet. This information could inform future research seeking to understand which factors to consider when determining the readability and appropriateness of complex online reading tasks for the range of students with whom we work.

Implications and Limitations

Findings from this study can contribute to literacy research in several important ways. First, findings can inform thinking about how to measure online reading comprehension. Historically, literacy scholars have outlined the challenges of designing theoretically motivated assessments that validly capture higher level reading comprehension processes as readers interact with text (Johnston, 1983; Pearson & Hamm, 2005; Valencia et al., 1994). With respect to research in online reading comprehension, this study offers the literacy community two innovative examples of assessment instruments (ORCA–Scenario I and ORCA–Scenario II) that appear to be valid, reliable, and motivated by a combination of established and new theories of reading comprehension. In addition, a regression model that included both of these instruments as separate measures of online reading comprehension can provide important new insights about what combination of variables appears to predict how seventh graders read for information on the Internet.
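The logic of entering one online measure after the control variables can be illustrated as a two-step hierarchical regression in which the change in R² at the second step estimates the unique variance explained. The following is a minimal sketch on synthetic data. The variable names (`offline`, `prior`, `online_a`, `online_b`) and all generating weights are assumptions for illustration and do not reproduce the study's scores or results.

```python
import numpy as np


def r_squared(predictors, outcome):
    """R^2 from an ordinary least squares fit of outcome on the given
    predictors plus an intercept."""
    design = np.column_stack([np.ones(len(outcome))] + list(predictors))
    coef, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    residuals = outcome - design @ coef
    ss_residual = residuals @ residuals
    ss_total = ((outcome - outcome.mean()) ** 2).sum()
    return 1.0 - ss_residual / ss_total


# Synthetic scores (hypothetical): an offline reading score, a prior
# knowledge score, and two parallel online reading measures.
rng = np.random.default_rng(1)
n = 109
offline = rng.normal(size=n)
prior = rng.normal(size=n)
online_a = 0.5 * offline + rng.normal(size=n)
online_b = (
    0.4 * offline
    + 0.2 * prior
    + 0.5 * online_a
    + rng.normal(scale=0.5, size=n)
)

# Step 1: control variables only.
r2_step1 = r_squared([offline, prior], online_b)

# Step 2: add the first online measure as a predictor of the second.
r2_step2 = r_squared([offline, prior, online_a], online_b)

# Variance in online_b explained by online_a beyond the controls.
delta_r2 = r2_step2 - r2_step1

print(f"R^2 step 1: {r2_step1:.3f}")
print(f"R^2 step 2: {r2_step2:.3f}")
print(f"Delta R^2:  {delta_r2:.3f}")
```

Because the step 1 model is nested within the step 2 model, R² cannot decrease at the second step. The question of interest is whether the increase is large enough to be statistically and practically meaningful.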

At the same time, we need to be cautious in how we interpret these findings. Both assessment instruments focused on externally assigned requests to locate and evaluate specific portions of online information rather than allowing students to self-select the topics and tasks themselves. Some research suggests that students read differently when they determine their own tasks (e.g., Bilal, 2001; Eagleton & Dobler, 2007; Leu et al., 2007). This study, then, provides more information about teacher-determined online reading and paves the way for additional research that compares these reading comprehension strategies to those used for student-determined online reading.

In addition, although the items were framed around a model of four new literacy components (i.e., locating, evaluating, synthesizing, and communicating), this study took a rather narrow view of synthesis and communication. For the present study, synthesis was limited to a reader’s ability to compare, contrast, and integrate information across three particular web pages with paragraphs of similar factual information about the same topic. Students were not asked, for instance, to synthesize information across multiple media formats (e.g., animations, video, audio) or across online locations that presented distinctly different perspectives on one or more topics. Similarly, students were asked to asynchronously communicate their answers using an online quiz interface as opposed to using the range of other online communication tools (e.g., email, weblogs, or instant messaging) as part of the online reading process. Requests to employ higher levels of synthesis and other real-time collaborative communication tools would most likely further inform our emerging understanding of online reading comprehension. Thus, the patterns emerging from this study only hint at the complexity likely to be found in future studies that examine how readers negotiate broader conceptions of all the dimensions of a new literacies perspective.

It is also important to keep in mind that the ordinal data associated with scores in online reading comprehension were treated as interval data to permit the use of more sensitive and powerful multivariate techniques (e.g., hierarchical regression). Although the practice of employing parametric statistical tests to analyze responses to open-ended items using an ordinal coding scheme is actually quite common in published scholarly research (see, for just two examples, Klauda & Guthrie, 2008; A. H. Paris & Paris, 2003), some classic measurement scholars (e.g., Stevens, 1946) believe that interval measures are required for customary statistical tests. Yet many have cited the robustness of correlation and other parametric coefficients with respect to any ordinal distortion of the data if the results are interpreted with caution (see, e.g., Jaccard & Wan, 1996; Kim, 1975). Moreover, Labovitz (1967) argued that such “measurement experimentation is not only justifiable, but necessary” (p. 156) to inform developing theory and help identify important next steps for early research in new literacies (also see Burke, 1963). Consequently, we should continue to be cautious in our interpretations of the findings until more research can replicate the regression patterns found in this study with additional online reading tasks and measures.

In addition, instruments that move beyond measuring online reading performance across tasks with relatively similar content (e.g., the respiratory system and carbon monoxide poisoning) to measure online reading skills that cut across a range of domains would be useful for providing a more generalized understanding of the online reading comprehension skills required to locate, evaluate, synthesize, and communicate information on the Internet. And finally, more focused attention on developing valid and reliable assessments of online reading comprehension that are also practical in a range of school contexts will be an important step toward expanding this type of investigation in diverse settings.

Findings from this study can also inform our thinking about a new literacies theory of online reading comprehension. Some have previously argued that most Internet technologies require fundamentally different ways of thinking about reading comprehension. On one hand, findings from this study suggest offline reading comprehension can inform, but not complete, our understanding of online reading comprehension. In essence, then, these findings may serve to cautiously validate the notion that reading skills and strategies beyond those measured by traditional reading assessments are required to read proficiently on the Internet (also see Afflerbach & Cho, 2009; Coiro, 2003; Coiro & Dobler, 2007; Hartman et al., 2010; Kingsley, 2011; Leu et al., 2009).