Abstract

The field of literacy education has long been concerned with the question of how to help classroom teachers improve their practices so that students will improve as readers. Although there is consensus on what characterizes effective professional development, the reading research on which this consensus is based most often is small scale and involves direct support provided by university faculty. The South Carolina Reading Initiative is an exception: It is a statewide, site-based, large-scale staff development effort led by site-selected literacy coaches. Although university faculty provide long-term staff development to the coaches, the faculty are not directly involved with the professional development provided to teachers. In this study we sought to understand whether site-based, site-chosen literacy coaches could help teachers’ beliefs and practices become more consistent with what the field considers to be best practices. To understand teacher change, we used two surveys (Theoretical Orientation to Reading Profile, n = 817; South Carolina Reading Profile, n = 1,005) and case study research (n = 39) to document teachers’ beliefs and practices. We also had access to a state department survey (n = 1,428). Across these data, we found that teachers’ beliefs and practices became increasingly consistent with best practices as defined by standards set by the South Carolina State Department of Education, standards that were consistent with national standards. This suggests that large-scale staff development can affect teachers when the providers are site-based, site-selected literacy coaches.

Stakeholders in literacy education have long agreed that it is the teacher, not the method, that makes a difference (Anderson, Hiebert, Scott, & Wilkinson, 1985; Bond, Dykstra, Clymer, & Summers, 1997; Darling-Hammond, 1997, 2000; Duffy, 1983; Duffy & Hoffman, 1999; Duffy, Roehler, & Putnam, 1987; Ferguson, 1991; Langer, 2001; Hoffman et al., 1998; National Institute of Child Health and Human Development, 2000; Sanders, 1998; Shanahan & Neuman, 1997). Stakeholders also generally agree that some forms of staff development can help teachers increase their effectiveness. Indeed, some argue that professional development is a key component of teacher change (Wei, Darling-Hammond, Andree, Richardson, & Orphanos, 2009). Sparks (2000) suggests that effective professional development should focus on content and methods that teachers need to use with students, whereas Darling-Hammond and McLaughlin (1995) stress that the goal of professional development should be “deepening teachers’ understanding of the process of teaching and learning and of the students they teach” (p. 598). As Guskey (2000) notes, a single workshop or event cannot meet these goals; rather, professional development must be intentional, ongoing, and systemic. Richardson and Placier (2001) summarized the research on teacher change in the Handbook of Research on Teaching and concluded that effective staff development is schoolwide and context specific, supported by principals, long term with adequate support and follow-up, collegial, based on current knowledge obtained through well-defined research, and adequately funded.

Although the field generally accepts all of these characteristics, the research from which they are drawn has two other often-overlooked characteristics: It is often small scale and directly involves the expertise of university faculty. Consider, for example, three recent studies of staff development in reading for in-service teachers that were consistent with the characteristics identified by Richardson and Placier (2001). In all three, university faculty played a significant role in the professional development at the school level. Dutro, Fisk, Koch, Roop, and Wixson (2002) studied 48 teachers and administrators from four school districts who participated for 2 years in study groups led by university faculty. Morrow and Casey (2004) studied and participated in a 2-year-long professional development effort that involved 12 teachers. The study conducted by Taylor, Pearson, Peterson, and Rodriguez (2005) differs from these studies both in scope and in the role of the university faculty. Their study focused on 92 teachers in 13 schools who participated in the Center for the Improvement of Early Reading Achievement school change framework. The study groups did not have a university-based facilitator; however, all materials for the study group, including information gathered via observations on the classroom practices of participating teachers used to determine the content of the study group, were provided by university faculty.

These studies provide the field with useful information about effective staff development led by university faculty. However, they do not provide information about whether or not a large-scale professional development effort using site-based, site-selected literacy coaches could also have a positive effect on changing teacher beliefs and practices. Although the use of literacy coaches is currently in vogue, in part because of the large-scale professional development mandated under the Elementary and Secondary Education Act (2001), there is little research on the impact of literacy coaches. Instead, most of the research details what coaches do, that is, how much time they spend in classrooms versus with other tasks (e.g., Alverman, Commeryas, Cramer, & Harnish, 2005; Coggins, Stoddard, & Culter, 2003; Poglinco et al., 2003; Roller, 2006; Smith, 2006). These studies do not report on the impact of coaches on teachers’ beliefs and practices. One study (Kinnucan-Welsch, Rosemary, & Grogan, 2006) looked at the impact of literacy coaches on teachers’ practices. These researchers studied the Literacy Specialist Project in Ohio schools. University faculty met with coaches once a month to review the material and plan study groups. To assess the impact of this training, the researchers created a list of 24 concepts and asked teachers at the beginning and end of the year to assess their understandings of those concepts using a 5-point scale. Comparing pre and post means using a t test, Kinnucan-Welsch et al. (2006) reported that teachers in all three years (n = 161, 229, and 393, respectively) felt they better understood the taught concepts at the end of the staff development year than at the beginning. The researchers concluded that by using the core curriculum teachers were able to “learn about literacy teaching” (p. 434).

To better understand the impact of literacy coaches on teachers’ knowledge base and practices, we studied South Carolina’s statewide professional development model, the South Carolina Reading Initiative (SCRI). SCRI is grounded in the characteristics of effective professional development. However, in contrast to most studies we reviewed, SCRI is large in scale and literacy coaches, not university faculty, conduct all site-based work.

Background of the Study

The South Carolina Reading Initiative

In 2000, the South Carolina State Department of Education (SDE) developed SCRI, a multiyear, K–5, statewide effort to improve the knowledge base of teachers so they could make more informed curricular decisions about the teaching of reading. In the first iteration (2000–2003) 73 literacy coaches each worked with 8–10 elementary teachers and administrators on site at four schools. They facilitated bimonthly study groups and spent 4 days a week working with teachers in their classrooms. Across the state, 215 schools and 1,500 to 1,700 teachers participated each year; 1,082 teachers participated all 3 years.

The SDE asked districts to apply to participate in SCRI. They had to make a 3-year commitment and provide the coach with adequate materials and support (e.g., an office and a computer). Districts received $50,000 a year for 3 years to offset the costs associated with hiring a literacy coach. The legislative allotment for the initiative was $3 million.

Literacy Coaches and Participants

The SDE required that all coaches have a master’s degree. Beyond that, each district established its own criteria for hiring its literacy coach. In some cases, coaches were hired from within the district; sometimes they were hired from outside the district. Some districts selected strong classroom teachers; some inadvertently hired weak ones; some hired teachers with administrative experience; others did not; some hired relatively new teachers; some hired retirees.

Principals had to agree to participate in study groups to receive funding for SCRI. In addition, at least one teacher at every grade level needed to participate. The number of years of teaching experience varied from first-year teachers to those who retired during the 3 years of SCRI.

Support and Materials for the Literacy Coaches

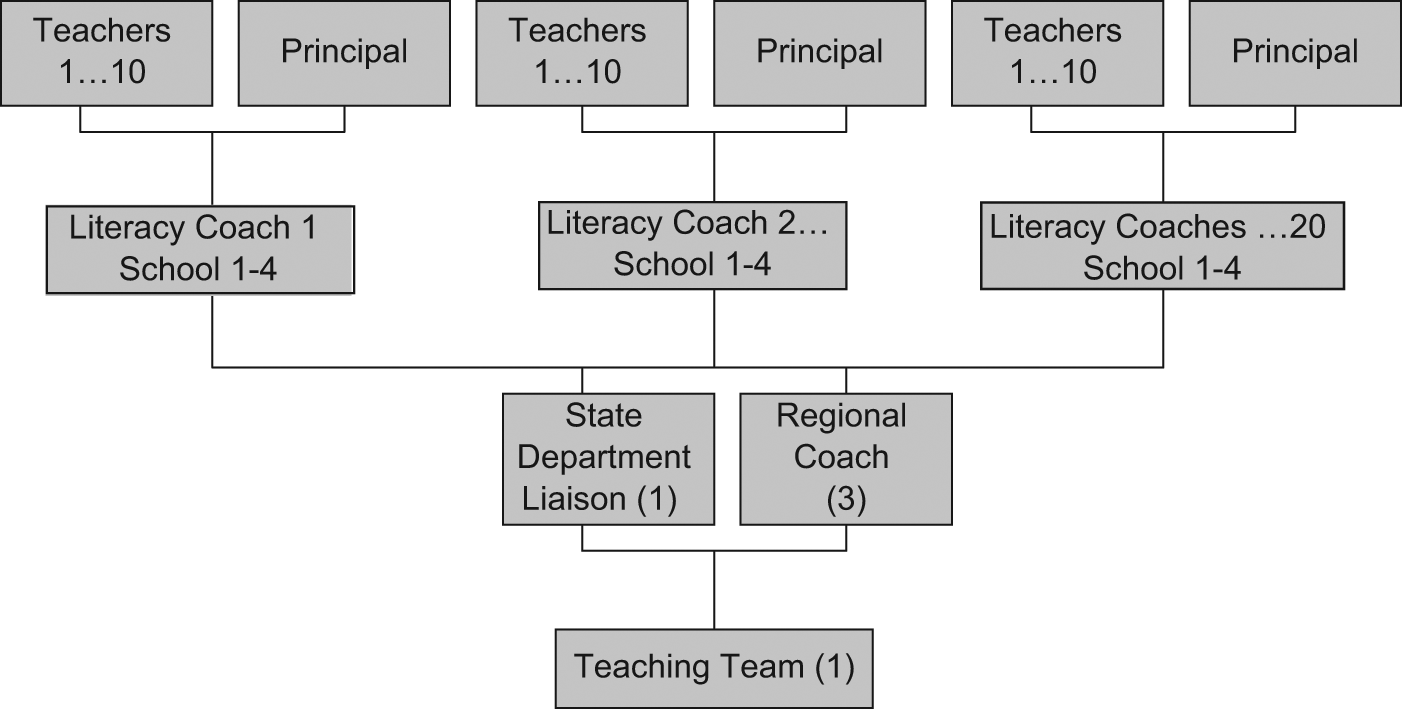

The SDE provided three levels of support to the literacy coaches: university faculty (known as teaching team members), regional literacy coaches, and state department liaisons (see Figure 1). To ensure that all literacy coaches had a broad, deep, and shared understanding about reading, they were grouped into learning cohorts led by a teaching team member. Within those cohorts, they took nine graduate hours each year in language and literacy. This was referred to as State Study. Literacy coaches traveled to a central location from their home districts one day a month and for 8 days in the summer for State Study. The courses were grounded in a sociocultural (e.g., Moll, Amanti, Neff, & Gonzalez, 1992), sociopsycholinguistic perspective (e.g., K. S. Goodman, 1967; Halliday, 1973)—in a belief that language learning is a sociocultural process and that learning written language has many parallels to oral language acquisition (Brown, 1970; Harste, Burke, & Woodward, 1984; Lindfors, 1991). The courses focused on topics such as reading foundations, methods, and assessment. Although no formal instruction was provided on coaching, there was often time within State Study to discuss materials (articles, videos, and books) that might help teachers understand frameworks such as read-aloud, shared reading, and guided reading. In addition, conversations about working with peers were woven into class discussions.

Figure 1. South Carolina Reading Initiative cohort model for professional development

Regional literacy coaches, hired by the state department of education, also enrolled in these courses. Each regional coach supported six to eight of the coaches in their cohort. They met with them one additional day a month to delve deeper into content presented in State Study and to help them with implementation issues. In addition, regional coaches made monthly on-site visits to coaches and provided them with feedback on their study group facilitation and on their in-classroom support of teachers. Literacy coaches also had a state department liaison, a literacy education employee from the department who provided additional support to the literacy coaches in their schools. As necessary, the state department liaisons helped literacy coaches and regional literacy coaches resolve implementation challenges (Morgan et al., 2003).

To help coaches with study groups, the state department purchased an array of articles, books, and videos. These materials, many developed by the National Council of Teachers of English, were used in the coaches’ courses (see Appendix A for a list of books and articles provided to coaches). Coaches could choose to use these materials in their study groups. For example, in State Study, coaches read books by classroom teachers (e.g., Sharon Taberski’s [2000] On Solid Ground), which might then be used in study groups. Coaches also could choose resources not used in State Study. (For further information about courses and supports provided to the literacy coaches, see Donnelly et al., 2005.)

The Role of the Literacy Coach

The coaches had two main roles in the schools: facilitating study groups and providing in-classroom coaching support.

Study groups

Consistent with the characteristics of effective staff development, for 3 years literacy coaches facilitated bimonthly after-school study groups with 8 to 10 elementary school teachers and their principal. Because each coach supported four schools, this meant the coach led eight study group sessions per month. The content of the study groups was grounded both in research and in theory and was consistent with the research on best practices (e.g., Anderson et al., 1985; Darling-Hammond, 1997; Pressley, Rankin, & Yokoi, 1996; Taylor, Pearson, Clark, & Walpole, 2000; Wharton-McDonald, Pressley, & Hampston, 1999). Although teachers learned about best practices in reading instruction, they also learned about the theory and research behind those practices, enabling them to understand why these best practices (e.g., flexible small-group instruction for children based on assessed needs at a particular point in time) were effective. Thus, teachers read about oral and written language acquisition, reading Halliday (1973) and Pinnell (1985) on how children acquire oral language; Lindfors (1991) and Harste et al. (1984) on how oral and written language is learned; and Cambourne (1998) on conditions under which oral and written language is learned. They also learned about cue systems in language (Clay, 1991; Y. Goodman, Watson, & Burke, 1980), how to take a running record (Clay, 1993), and how to complete a miscue analysis (Y. Goodman & Burke, 1972). They explored how to use the information from these assessments to provide effective whole-group, small-group, and one-on-one instruction. In addition, teachers learned about reading workshop (Atwell, 1998) and writing workshop (Graves, 1983) frameworks.

Although there was not a prescribed format for the school-based study groups, coaches sometimes used a modification of the format used in their State Study: read-aloud, sharing, literature discussion, mini or maxi lessons. However, the coach determined the form and content based on the group’s needs. Just as the field wants teachers to assess a child as a reader and design instruction to meet the needs of the child, literacy coaches were charged with understanding each teacher as a teacher of readers and with providing instruction (such as demonstrations, engagements, team teaching, visits to other schools, professional readings) to help the teacher become a more capable teacher of readers. Their job as literacy coach was not to tell teachers what to do but to help them develop a broad and deep understanding of the reading process and of assessment so that teachers could make curricular decisions that best met the needs of each and every child as a reader. Coaches were mentors and facilitators, not enforcers of particular programs or products. This meant that in a given week, a coach in one district might be focusing on classroom management whereas another might be focusing on reading conferences. The study group plans then grew out of what the coach knew about teachers, what teachers wanted to learn, and what information the coach felt would help teachers.

In-classroom support

Coaches spent 4 days a week in classrooms helping teachers experiment with ideas they were learning about in study groups. Some coaches had a rotating schedule; others asked teachers to sign up; still others visited informally and only some sessions were prearranged. At any time, teachers with specific needs could request assistance.

Coaches supported teachers by demonstrating instructional strategies, conferring about how to best match instruction to children’s literacy needs, and sharing instructional resources. In so doing, coaches encouraged teachers to critically examine their current practices and envision new ways of thinking about literacy instruction and learning. While in the classroom, coaches also informally gathered information about children’s reading and writing performance and, through observation and conversation, gained insights into teachers’ beliefs about teaching and learning. With this information, they used side-by-side classroom demonstrations and conversations to help teachers better understand research-based practices. Having a coach in the classroom was designed to help teachers broaden their instructional repertoires, better assess students’ needs, and develop specific instructional strategies aligned with children’s needs.

Studying the Impact of Literacy Coaches on Teachers: Research Focus

SCRI was grounded in the characteristics of effective practice as detailed by Richardson and Placier (2001). In contrast to the studies from which those characteristics were derived, however, SCRI was large scale and literacy coaches handled all site-based work. University faculty did not work directly with the participating schools, nor did they prescribe the content used by coaches; instead, it was the literacy coach who determined the content of and facilitated 3-year-long, bimonthly, after-school, site-based study groups and provided support to teachers in their classrooms during the school day. The goal of SCRI was for literacy coaches to help broaden and deepen teachers’ understanding of the reading process so that children in the classrooms of SCRI teachers would become better readers. The purpose of our research was to understand the degree to which, via literacy coaches, SCRI was able to accomplish this goal. To do so, we conducted two surveys (Theoretical Orientation to Reading Profile, n = 817; South Carolina Reading Profile, n = 1,005) and case study research (n = 39). We then examined the data for patterns across all 39 cases. During the time we were collecting data, the SDE also surveyed participants at the end of the 3rd year (n = 1,428), and we had access to those data.

Study 1: Survey Research

We used data from three survey instruments to determine the impact of literacy coaches on teachers’ beliefs and practices: the Theoretical Orientation to Reading Profile (DeFord, 1985), the South Carolina Reading Profile (Stephens, Donnelly, & Johnson, 2001), and an SDE Survey (SDE, 2003).

Theoretical Orientation to Reading Profile

We administered the Theoretical Orientation to Reading Profile (TORP) in the fall and spring of all 3 years of SCRI. This instrument was not ideal for our purposes as its intent is to identify theoretical orientation. However, it was available at the start of SCRI and was a way for us to gather large-scale baseline data about teachers’ self-reported beliefs.

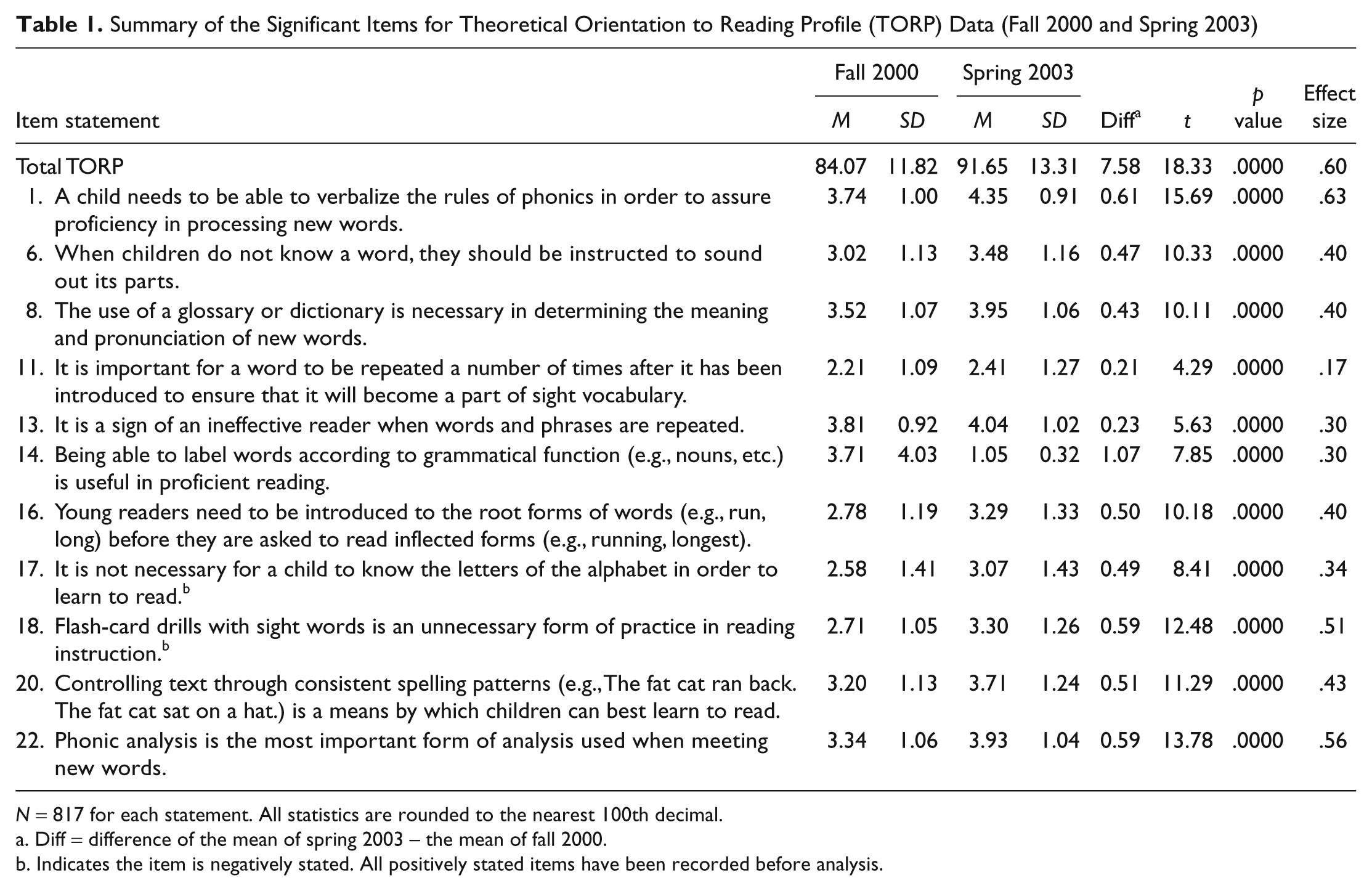

After a careful review of the items on the TORP, the research team selected as its focus 11 items that asked teachers about aspects of teaching that were consistent with what teachers were learning in SCRI. There were 1,082 teachers who were in all 3 years of SCRI, 817 of whom completed the TORP all six times it was administered. By examining the 817 responses to those items across time, we ascertained that the responses of teachers who were participating in SCRI were becoming increasingly consistent with SCRI in those areas (see Table 1). The effect sizes for all items ranged from a low of .17 on Item 11, to modest (.24 on Item 13, .30 on Item 14, .34 on Item 17), to substantial (.40 to .43 for Items 6, 8, 16, and 20; .51 for Item 18; and .63 for Item 1).

Table 1. Summary of the Significant Items for Theoretical Orientation to Reading Profile (TORP) Data (Fall 2000 and Spring 2003)

N = 817 for each statement. All statistics are rounded to the nearest hundredth.

Diff = difference of the mean of spring 2003 – the mean of fall 2000.

Indicates the item is negatively stated. All positively stated items have been recoded before analysis.
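The Diff statistic defined in the table notes, together with an accompanying effect size, can be sketched as follows. This is only an illustration: the article does not state which effect size formula was used, so the standardized mean difference below (Diff divided by a pooled standard deviation) is an assumption, and the ratings are invented rather than drawn from the study.

```python
from math import sqrt
from statistics import mean, variance

def diff_and_effect_size(fall, spring):
    """Return (Diff, d) for one item rated at two administrations.

    Diff is the spring mean minus the fall mean, as in the table note.
    d divides Diff by the pooled standard deviation of the two
    administrations (an assumed formula, not confirmed by the article).
    """
    diff = mean(spring) - mean(fall)
    pooled_sd = sqrt((variance(fall) + variance(spring)) / 2)
    return diff, diff / pooled_sd

# Hypothetical ratings for one item from six teachers (not study data).
fall_2000 = [2, 3, 3, 4, 2, 3]
spring_2003 = [3, 4, 3, 5, 3, 4]

diff, d = diff_and_effect_size(fall_2000, spring_2003)
print(f"Diff = {diff:.2f}, d = {d:.2f}")
```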

South Carolina Reading Profile

To better capture teachers’ perceived consistency with SCRI beliefs and practices, during the first year of SCRI, we created a large-scale survey instrument, the South Carolina Reading Profile (SCRP). The SCRP is a 60-item survey. The items are based on the SCRI Belief Statements (see Appendix B). The statements, in turn, are consistent with state and national (National Council of Teachers of English or International Reading Association) standards for English language arts (ELA) and therefore with research on what the field considers best practice (e.g., Anderson et al., 1985; Darling-Hammond, 1997; Pressley et al., 1996; Taylor et al., 2000; Wharton-McDonald et al., 1999). The purpose of the SCRP is to assess changes in teachers’ beliefs and practices while they were involved in SCRI. Each item uses a Likert-type scale ranging from 1 (strongly disagree) to 4 (strongly agree). A total of 34 items are stated in a positive manner consistent with the SCRI Belief Statements (e.g., “In my classroom, one of the reasons I have students write is because writing helps them as readers”). For these items, a higher number indicates that the response was more consistent with SCRI Belief Statements.

Of the survey items, 26 are stated negatively relative to the SCRI Belief Statements (e.g., “All reading instruction should be whole group; all students should read the same story at the same time”). As part of the analysis of the SCRP, the values for the negatively stated items are recoded so that a teacher who indicated a strong disagreement with a practice inconsistent with the SCRI Belief Statements would receive a high score.
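The recoding described above can be sketched in a few lines. On a 1-to-4 scale, reversing a response amounts to subtracting it from 5, so that strong disagreement (1) with a negatively stated item becomes the highest score (4). The function and constant names are hypothetical and are not taken from the SCRP analysis itself.

```python
SCALE_MIN, SCALE_MAX = 1, 4  # 1 = strongly disagree, 4 = strongly agree

def recode(response, negatively_stated):
    """Return the analysis score for one item response.

    Negatively stated items are reversed (1<->4, 2<->3); positively
    stated items are returned unchanged.
    """
    if not SCALE_MIN <= response <= SCALE_MAX:
        raise ValueError(f"response {response} is outside the 1-4 scale")
    if negatively_stated:
        return SCALE_MIN + SCALE_MAX - response
    return response

# A teacher who strongly disagrees with a negatively stated item scores 4.
print(recode(1, negatively_stated=True))   # 4
print(recode(3, negatively_stated=False))  # 3
```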

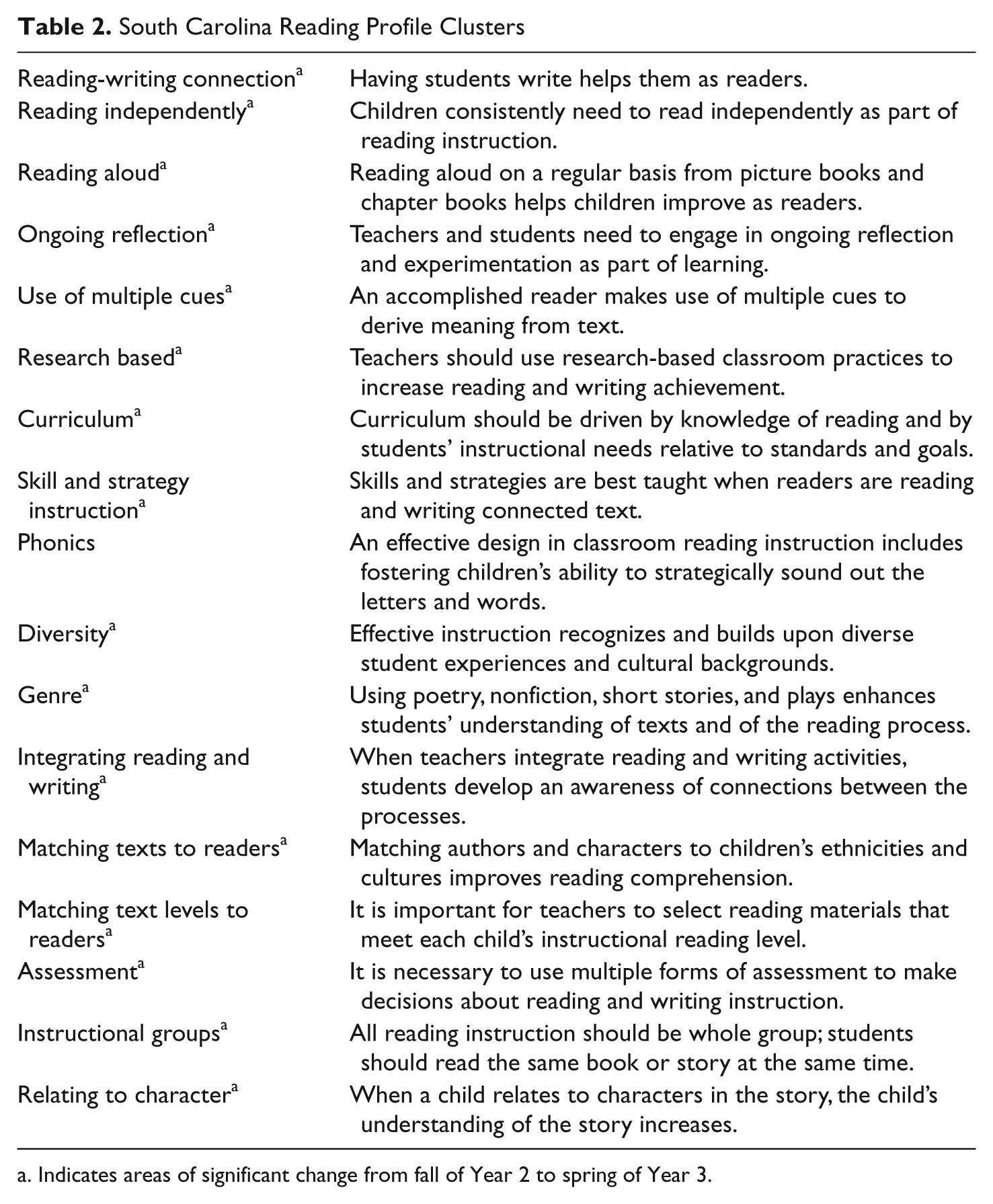

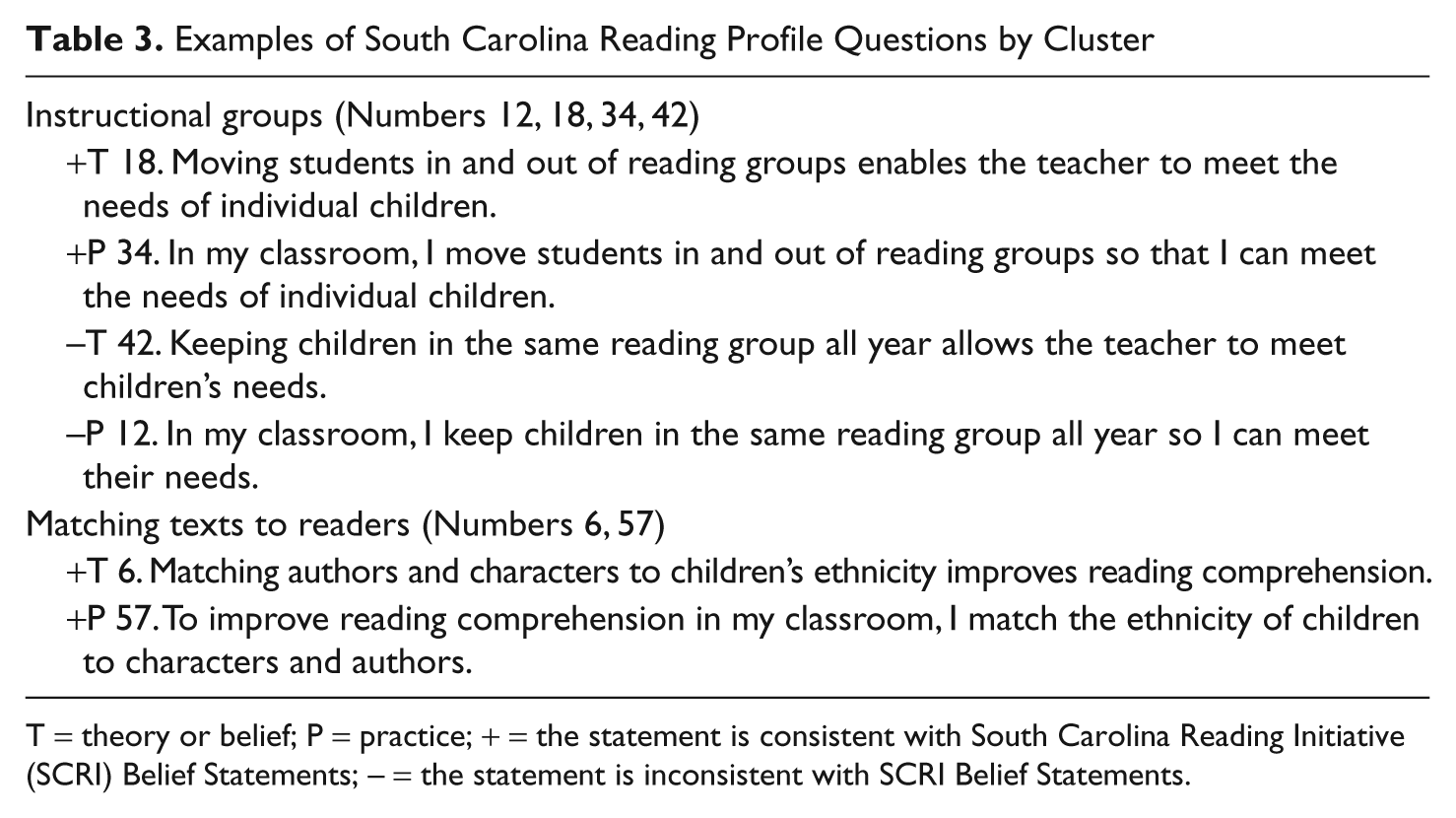

The 60 SCRP items assess 17 clusters of teachers’ beliefs and practices (see Table 2). These clusters measure a teacher’s agreement or disagreement with a core theoretical belief and an associated classroom practice. There are four items for 13 of the clusters and two items for 4 of them. When there are four items, two ask about beliefs or theories and two ask about practice; two are stated positively, two are stated negatively. When there are two items, one addresses beliefs or theories and one addresses practices; both are stated positively. Examples of a four-item and a two-item cluster are shown in Table 3.
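As a quick check, the cluster layout described above reproduces the item counts reported for the instrument (34 positively stated, 26 negatively stated, 60 in total). The variable names are illustrative only.

```python
# Cluster layout as described: 13 four-item clusters and 4 two-item clusters.
FOUR_ITEM_CLUSTERS = 13
TWO_ITEM_CLUSTERS = 4

# Each four-item cluster has 2 positively and 2 negatively stated items;
# each two-item cluster has 2 positively stated items.
positive_items = FOUR_ITEM_CLUSTERS * 2 + TWO_ITEM_CLUSTERS * 2
negative_items = FOUR_ITEM_CLUSTERS * 2
total_items = positive_items + negative_items

print(positive_items, negative_items, total_items)  # 34 26 60
```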

Table 2. South Carolina Reading Profile Clusters

Indicates areas of significant change from fall of Year 2 to spring of Year 3.

Table 3. Examples of South Carolina Reading Profile Questions by Cluster

T = theory or belief; P = practice; + = the statement is consistent with South Carolina Reading Initiative (SCRI) Belief Statements; − = the statement is inconsistent with SCRI Belief Statements.

To evaluate the quality of the items, the SCRP was administered to two groups. The first group was 32 preservice teachers. Giving the SCRP to preservice teachers allowed us to determine if the language used in the items clearly communicated to a group with a beginning understanding of ELA. It also provided information about the internal consistency of the instrument. To identify any items that were not functioning appropriately, we examined the correlation between the items and the total score on the scale. An item is considered to appropriately discriminate as the correlation approaches 1.0. After items that had low item-to-total score correlations were deleted, the alpha ranged from .9183 to .9283. In terms of internal consistency of the items, 56 correlated positively with the total score. However, 4 items did not correlate or correlated negatively. These items, all of which involved the phonics cluster, were reviewed and revised before the second administration. The internal consistency for this sample was .93.
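The item analysis described above can be sketched as follows. Two assumptions are made here: that "alpha" refers to Cronbach's alpha, and that each item is correlated with a total score that includes the item itself (the article does not say whether a corrected total was used). The response matrix is invented for illustration.

```python
from statistics import mean, pvariance

def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)

def item_total_correlations(responses):
    """Correlate each item with the total score across respondents.

    responses: one list of (already recoded) item scores per respondent.
    """
    totals = [sum(r) for r in responses]
    n_items = len(responses[0])
    return [pearson([r[i] for r in responses], totals) for i in range(n_items)]

def cronbach_alpha(responses):
    """Cronbach's alpha: k/(k-1) * (1 - sum of item variances / total variance)."""
    k = len(responses[0])
    item_vars = [pvariance([r[i] for r in responses]) for i in range(k)]
    total_var = pvariance([sum(r) for r in responses])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Hypothetical data: 5 respondents x 4 items; the last item runs against the rest.
data = [[4, 4, 3, 1], [3, 3, 3, 2], [2, 2, 1, 3], [4, 3, 4, 2], [1, 2, 2, 4]]
for i, r in enumerate(item_total_correlations(data), start=1):
    flag = "review" if r <= 0 else "ok"
    print(f"item {i}: r = {r:.2f} ({flag})")
print(f"alpha = {cronbach_alpha(data):.2f}")
```

Items flagged for review in such a listing correspond to those that "did not correlate or correlated negatively" with the total score.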

In the second administration, 88 SCRI coaches completed the SCRP. We wanted to determine if the language used in the items communicated clearly to a group of individuals with a more sophisticated ELA knowledge base than the preservice teachers. In this sample, 52 items correlated positively with the total score and 8 items (half of which involved the phonics cluster) did not correlate or correlated negatively. Again, these items were reviewed by the research team and revised. The internal consistency for this sample was .81.

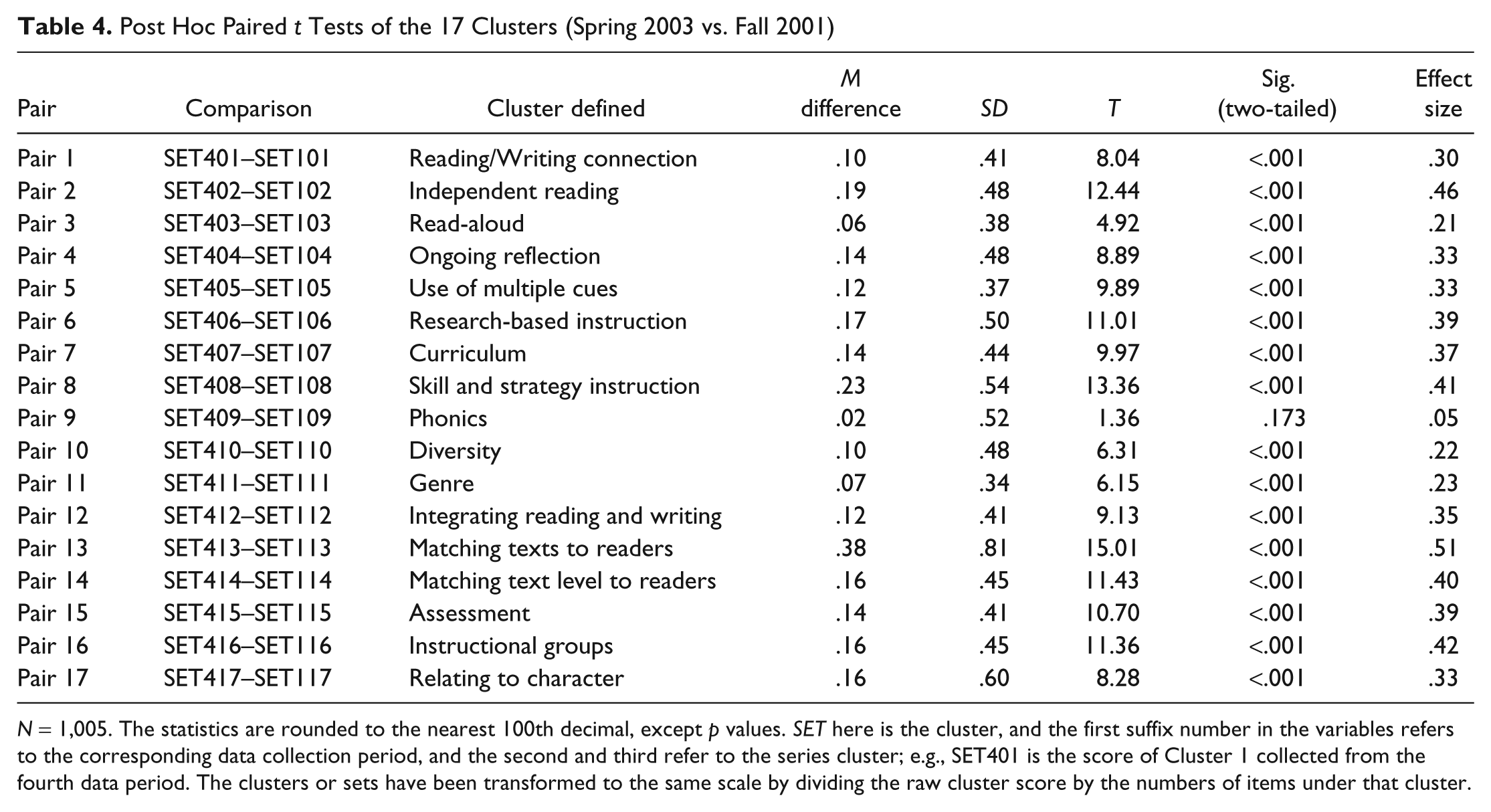

Findings

The SCRP was administered in the fall and spring of the 2nd and 3rd years of SCRI. The internal consistency of the pretest was .89. Of the 1,324 teachers who were participants in both Years 2 and 3, 1,005 took the SCRP all four times it was administered. The overall shift (diff = 8.22) from fall 2001 (M = 212.37) to spring 2003 (M = 220.59) was statistically significant (p < .001). Of the 17 cluster mean differences, 16 were statistically significant (see Table 4). The shift for the phonics cluster was not significant.
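The overall shift is simply the difference between the two reported means, and the significance test is a paired comparison of each teacher's first and last scores. The sketch below shows the paired t statistic under that assumption; the individual scores are hypothetical, and only the two means are taken from the text.

```python
from math import sqrt
from statistics import mean, stdev

def paired_t(before, after):
    """Return (mean difference, t statistic, degrees of freedom)."""
    diffs = [a - b for b, a in zip(before, after)]
    n = len(diffs)
    mean_diff = mean(diffs)
    t = mean_diff / (stdev(diffs) / sqrt(n))
    return mean_diff, t, n - 1

# Reported overall means: spring 2003 minus fall 2001.
print(round(220.59 - 212.37, 2))  # 8.22

# Hypothetical total scores for five teachers (not study data).
fall_2001 = [210, 205, 220, 215, 200]
spring_2003 = [218, 214, 226, 225, 207]
mean_diff, t, df = paired_t(fall_2001, spring_2003)
print(f"mean diff = {mean_diff}, t({df}) = {t:.2f}")
```

With 60 items scored from 1 to 4, total scores can range from 60 to 240, so both reported means sit near the upper end of the scale.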

Table 4. Post Hoc Paired t Tests of the 17 Clusters (Spring 2003 vs. Fall 2001)

N = 1,005. The statistics are rounded to the nearest hundredth, except p values. In the variable names, SET denotes a cluster; the first digit of the suffix refers to the data collection period, and the second and third digits refer to the cluster number (e.g., SET401 is the score of Cluster 1 collected in the fourth data collection period). The cluster scores have been transformed to the same scale by dividing the raw cluster score by the number of items in that cluster.
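The naming scheme and the rescaling described in the table note can be sketched as follows. The helper names are hypothetical; only the SET401 example and the divide-by-item-count rule come from the note.

```python
def parse_set_name(name):
    """Split a variable name such as 'SET401' into (period, cluster)."""
    if not (name.startswith("SET") and len(name) == 6 and name[3:].isdigit()):
        raise ValueError(f"unexpected variable name: {name}")
    return int(name[3]), int(name[4:])

def scaled_cluster_score(raw_score, n_items):
    """Put clusters on a common 1-4 scale by dividing by the item count."""
    return raw_score / n_items

print(parse_set_name("SET401"))     # (4, 1)
print(scaled_cluster_score(14, 4))  # 3.5 for a four-item cluster
print(scaled_cluster_score(7, 2))   # 3.5 for a two-item cluster
```

Dividing by the item count is what makes four-item and two-item clusters directly comparable.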

Findings from the SCRP suggest, then, that over the 2-year period, participating teachers perceived their beliefs and practices as becoming increasingly consistent with SCRI beliefs and practices. Interestingly, we found that, for the first three administrations, teachers’ mean responses to belief items were significantly higher than their responses to practice items (mean differences of 1.14, 0.50, and 0.68, respectively). In the last administration, given after 3 years of SCRI participation, there was no significant difference between their reported beliefs and their reported practices (mean difference of 0.27).

The SCRP is a self-report instrument, and like all self-report instruments, it has limitations. First, the SCRP does not assess what respondents believe but what they report to believe. Second, over time, it is possible that the knowledge base of respondents changes and that they hold different meanings for terms than they did previously. For example, a teacher initially may strongly agree with the statement, “It is important for teachers to select reading materials that meet each child’s instructional level.” A year later, however, the teacher may realize that what he or she meant by “instructional level” is not the same as what SCRI means. The net result is that initial scores may be somewhat inflated because the meanings held by the teacher and SCRI differed. Third, the instrument failed to discriminate among coaches in their 3rd year. Although it discriminated adequately for our piloted groups (preservice teachers, coaches beginning their 2nd year of professional development in the State Study) and for teachers and coaches in Years 1 and 2, it did not discriminate among coaches during their 3rd year. It is also possible this is true for 3rd-year teachers, who were fairly advanced in their understandings of SCRI beliefs and practices.

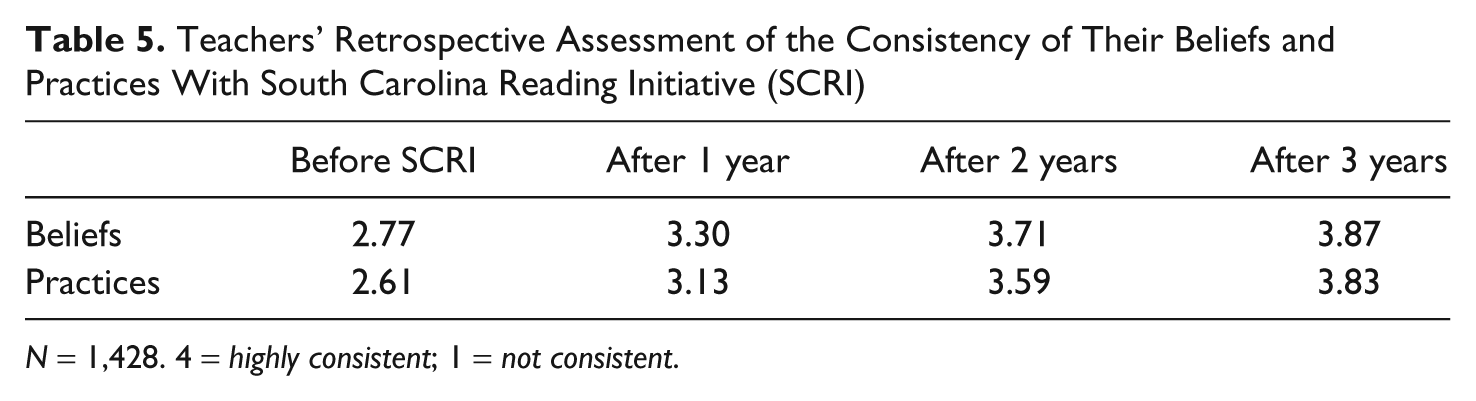

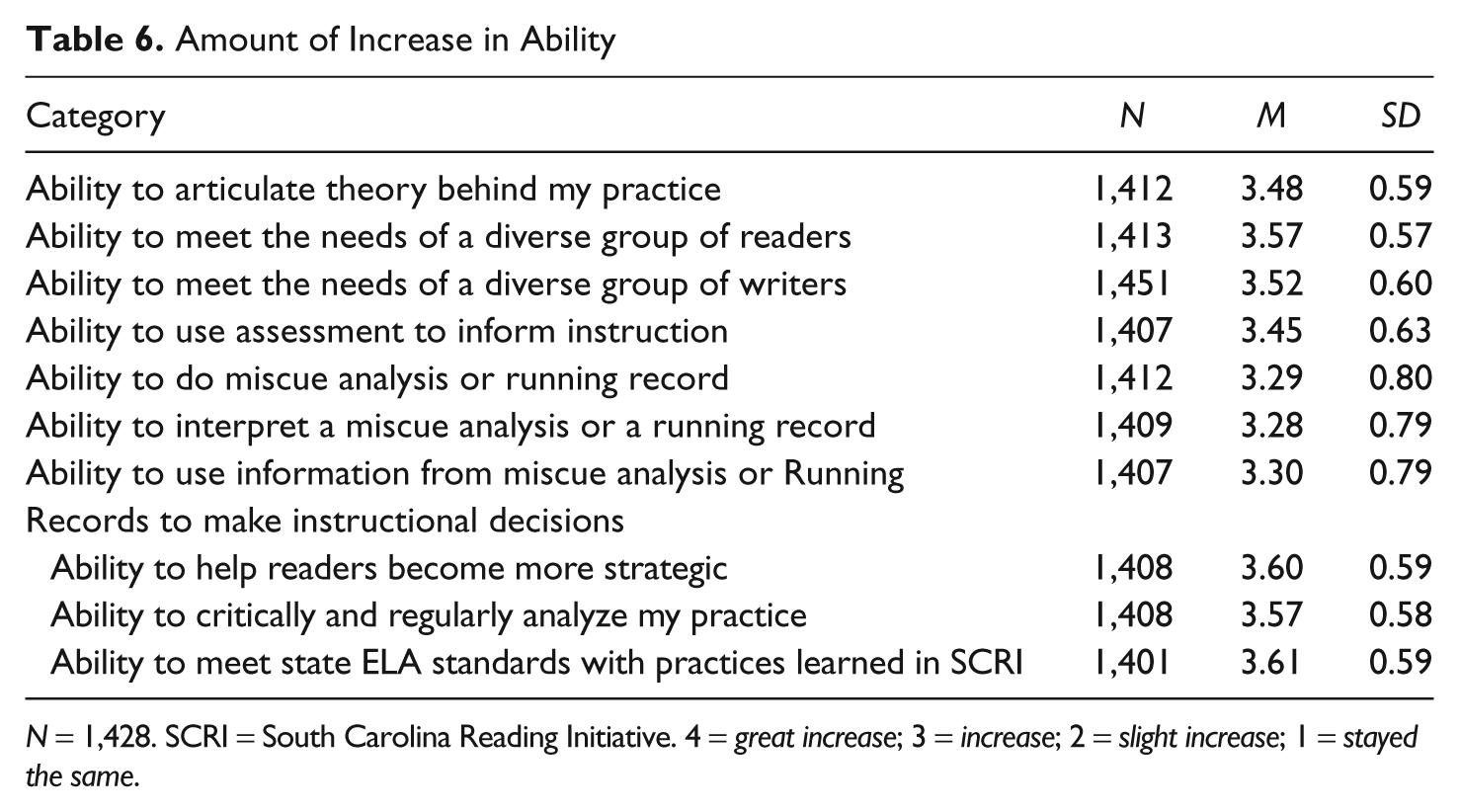

SDE Survey

To address the fact that teachers’ understandings may change over time, during the last month of SCRI the SDE administered a survey to all participants. There were 1,593 teachers participating that year; 1,428 completed the survey. Three of the questions asked participants to reflect on their changes over time. The first question asked teachers to rate the consistency of their beliefs and practices with SCRI in each year of the initiative (4 = highly consistent and 1 = not consistent; see Table 5). Teachers’ responses to this question suggest they believed that both their beliefs and their practices became increasingly consistent with SCRI. The mean of their pre-SCRI beliefs was 2.77, and the mean of their post-SCRI beliefs was 3.87. The mean of their pre-SCRI practices was 2.61, and the mean of their post-SCRI practices was 3.83. On the second question, teachers were asked to rate the increase in their ability in 10 areas (4 = great increase and 1 = stayed the same). All areas were considered to be consistent with SCRI. As shown in Table 6, the mean for every item was greater than 3 (indicating an increase), and the mean for half the items was greater than 3.5, indicating that teachers felt the amount of increase was considerable. On a third question, teachers were asked to rate the increase in their knowledge about instructional practices to support literacy. The mean increase was 3.68. This response then also supported the pattern of increased consistency. Although it is possible to question the trustworthiness of retrospective assessments, the pattern from these data paralleled the pattern from the self-report data on the TORP and the SCRP, and there was considerable overlap among the groups who completed these three measures. Of the teachers who participated in Year 3, 90% completed the SDE survey, 82% of the teachers who were in SCRI in that year and the other 2 years completed the TORP all six times it was administered, and 76% of those teachers who were in SCRI that year and Year 2 completed the SCRP all four times it was administered. It therefore seems reasonable to conclude that teachers’ self-reported beliefs and practices became increasingly consistent with the beliefs and practices advocated by SCRI.
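The completion rates can be recomputed from the counts reported in the text. The sketch below is a simple arithmetic check with a hypothetical helper name; it uses only the participant counts given in this article.

```python
def completion_rate(completed, eligible):
    """Percentage of eligible teachers who completed a measure, rounded."""
    return round(100 * completed / eligible)

# SDE survey: 1,428 of the 1,593 Year 3 participants.
print(completion_rate(1428, 1593))  # 90

# SCRP: 1,005 of the 1,324 teachers in both Years 2 and 3.
print(completion_rate(1005, 1324))  # 76
```

Applying the same calculation to the TORP counts given earlier (817 of the 1,082 teachers in all 3 years) yields about 76% rather than 82%, so the TORP percentage may rest on a denominator that is not stated in the text.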

Table 5. Teachers’ Retrospective Assessment of the Consistency of Their Beliefs and Practices With South Carolina Reading Initiative (SCRI)

N = 1,428. 4 = highly consistent; 1 = not consistent.

Table 6. Amount of Increase in Ability

N = 1,428. SCRI = South Carolina Reading Initiative. 4 = great increase; 3 = increase; 2 = slight increase; 1 = stayed the same.

Study 2: Case Study Research

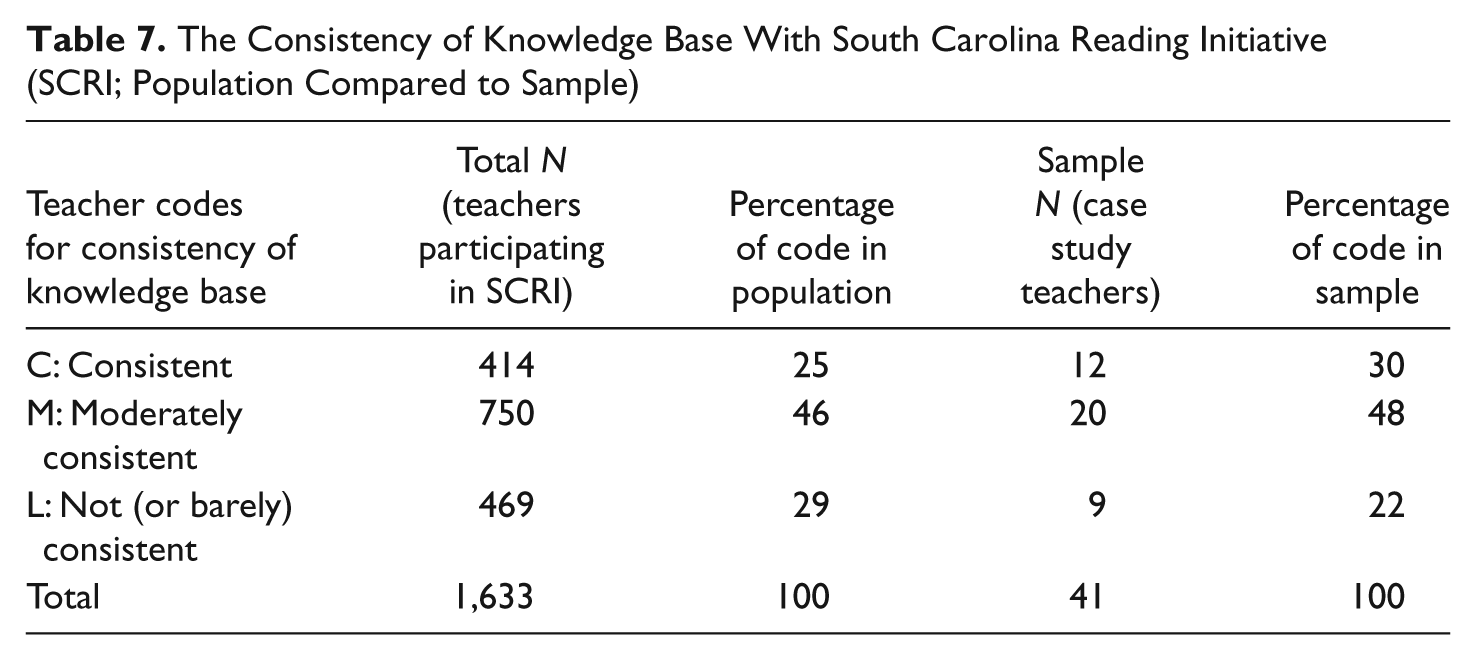

The TORP, SCRP, and the SDE survey all provide data about teachers’ perspectives on their beliefs, practices, and changes. To understand the change process from another perspective, that of a researcher, we conducted case study research on 39 teachers. We then examined patterns across these 39. We began by identifying 96 teachers (two sets of 48, forming 48 pairs) whom we considered representative of the 1,633 teachers participating in SCRI at the beginning of the 2nd year. To determine representativeness, at the end of the 1st year of SCRI, we asked literacy coaches to complete rubrics about each teacher’s initial enthusiasm, voice (confidence in their ability to articulate ideas to support their theories and practices), and knowledge base, and about the supportiveness of school context. We also used publicly available information about the socioeconomic status of the children in the school, grade level of participating teachers, and geographical region of the state. Once we knew these patterns for all SCRI teachers in the state, we identified potential case study teachers in proportion to state patterns. For example, we knew that 25% of the teachers in the state were considered by their coaches to have a knowledge base consistent with SCRI when SCRI began. Our goal then was for 25% of our 48 teachers to represent those rated consistent with SCRI beliefs and practices. We followed the same pattern for the other salient characteristics. We contacted the first person in each pair and asked if that person would be willing to participate. If that person declined, we asked the other teacher in the pair. In this way, we ended up with 48 representative teachers who agreed to participate.
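The selection procedure can be sketched in two steps: setting a quota for each characteristic in proportion to the state pattern, and then recruiting from each pair with the second teacher as a fallback. The function names and the example teachers are hypothetical; only the 25% share and the sample size of 48 come from the text.

```python
def stratum_quota(population_share, sample_size):
    """Number of sample slots for a group, in proportion to its state share."""
    return round(population_share * sample_size)

def recruit(pairs, agrees):
    """For each (first, alternate) pair, take the first teacher who agrees."""
    recruited = []
    for first, alternate in pairs:
        if agrees(first):
            recruited.append(first)
        elif agrees(alternate):
            recruited.append(alternate)
    return recruited

# 25% of teachers statewide were rated consistent with SCRI at the outset.
print(stratum_quota(0.25, 48))  # 12

# Hypothetical pairs: teacher "A1" declines, so the alternate "A2" is asked.
pairs = [("A1", "A2"), ("B1", "B2")]
willing = {"A2", "B1", "B2"}
print(recruit(pairs, lambda teacher: teacher in willing))  # ['A2', 'B1']
```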

Once 48 representative teachers agreed to participate, we scheduled interviews and observations. During the 2nd and 3rd years of SCRI, we conducted beginning and end-of-year interviews, observed each teacher three times yearly, and conducted debriefing conversations after each observation. Field notes were elaborated, and debriefing sessions and interviews were transcribed. The elaborated field notes and interviews were returned to teachers for feedback. Of the 48 teachers who originally agreed to participate, 7 were not followed for both years. They either postponed observations and interviews indefinitely or did not continue in SCRI in Year 3. We also interviewed each teacher’s literacy coach and principal.

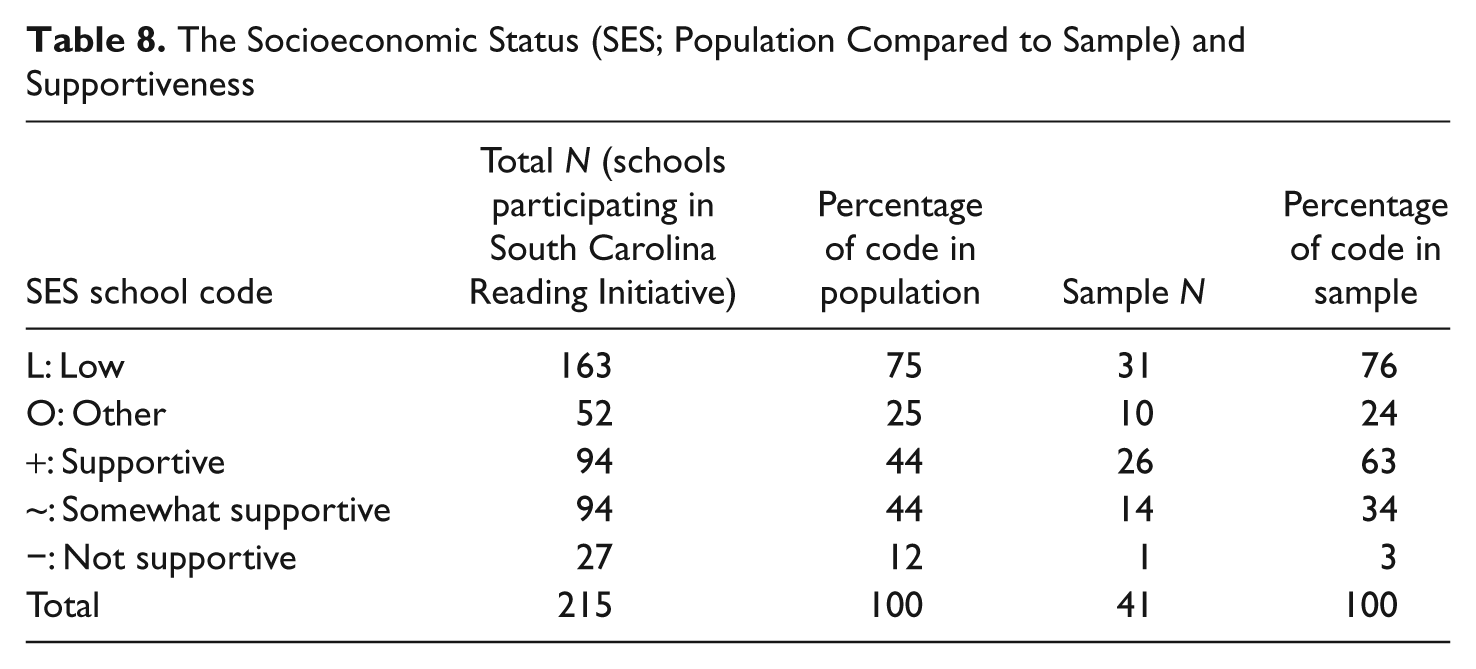

As can be seen in Tables 7 and 8, the percentages in the sample for inconsistent knowledge base and nonsupportive context are slightly lower than the percentages in the population of teachers in SCRI. This occurred because the seven teachers who cancelled observations and interviews, or who did not continue in SCRI, came disproportionately from the two groups that coaches had rated as inconsistent with SCRI and as working in nonsupportive contexts.

The Consistency of Knowledge Base With South Carolina Reading Initiative (SCRI; Population Compared to Sample)

The Socioeconomic Status (SES; Population Compared to Sample) and Supportiveness

The goal of SCRI, via literacy coaches, was to increase the degree to which teachers’ beliefs and practices were consistent with SCRI as defined in the SDE Belief Statements (see Appendix B). We consequently developed a Consistency Rubric as a way for the research team to look similarly at the changes in the beliefs and practices of all 39 teachers over time. To develop the rubric, we elaborated on each of the SCRI Belief Statements. For example, the first belief statement is, “Teachers understand young children and commit to their learning.” The elaborated definition is,

Teachers understand, recognize, and appreciate the intellectual, linguistic, physical, social, oral, emotional, creative, and cognitive needs of young children. They design instruction that facilitates the development of the individual child. They create classrooms that promote positive, productive, language and learning environments. They foster students’ self-esteem and show respect for diversity (i.e., they immerse students in books, language, music, art, and use personal artifacts that represent individual, cultural, religious and racial differences).

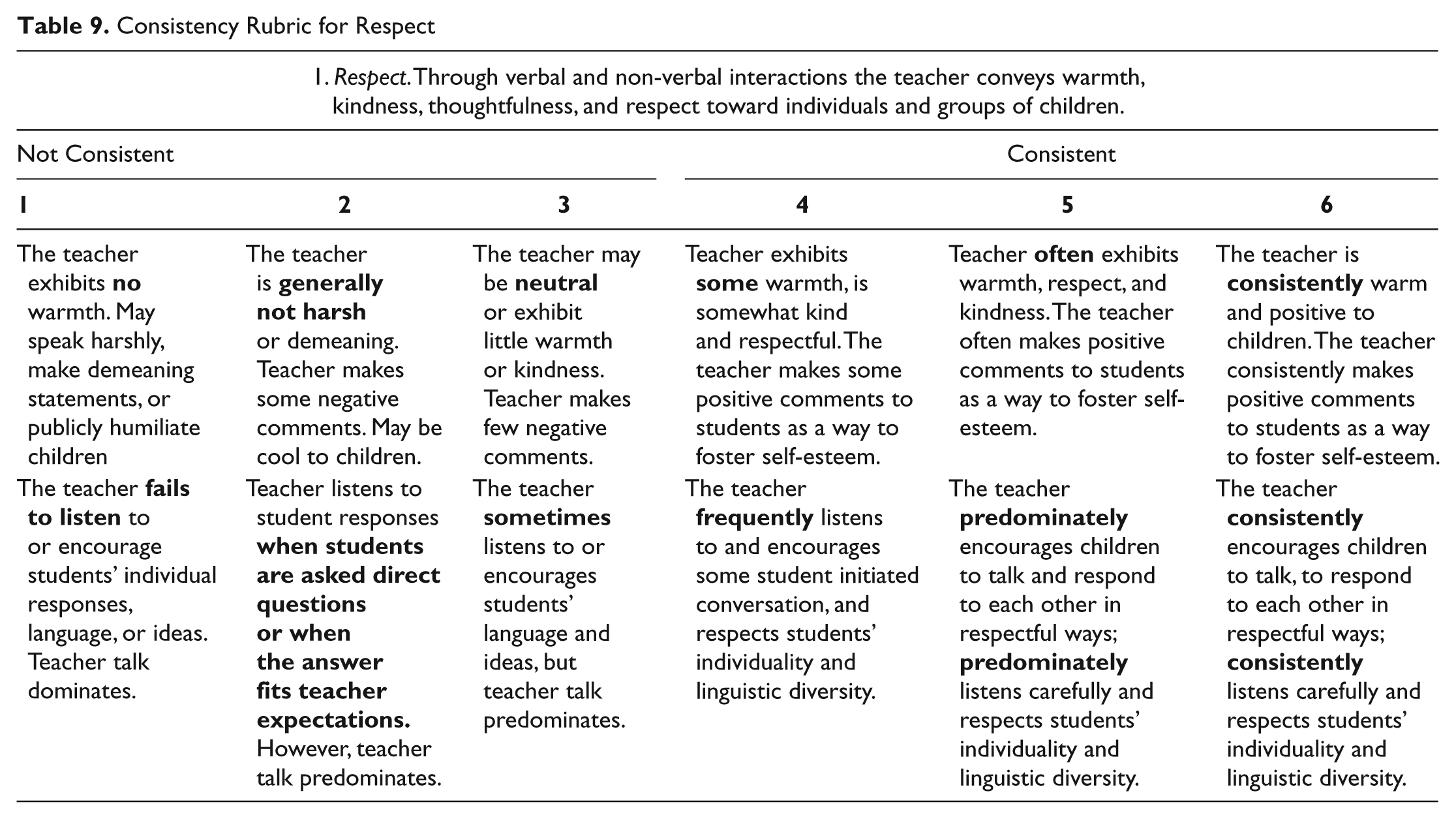

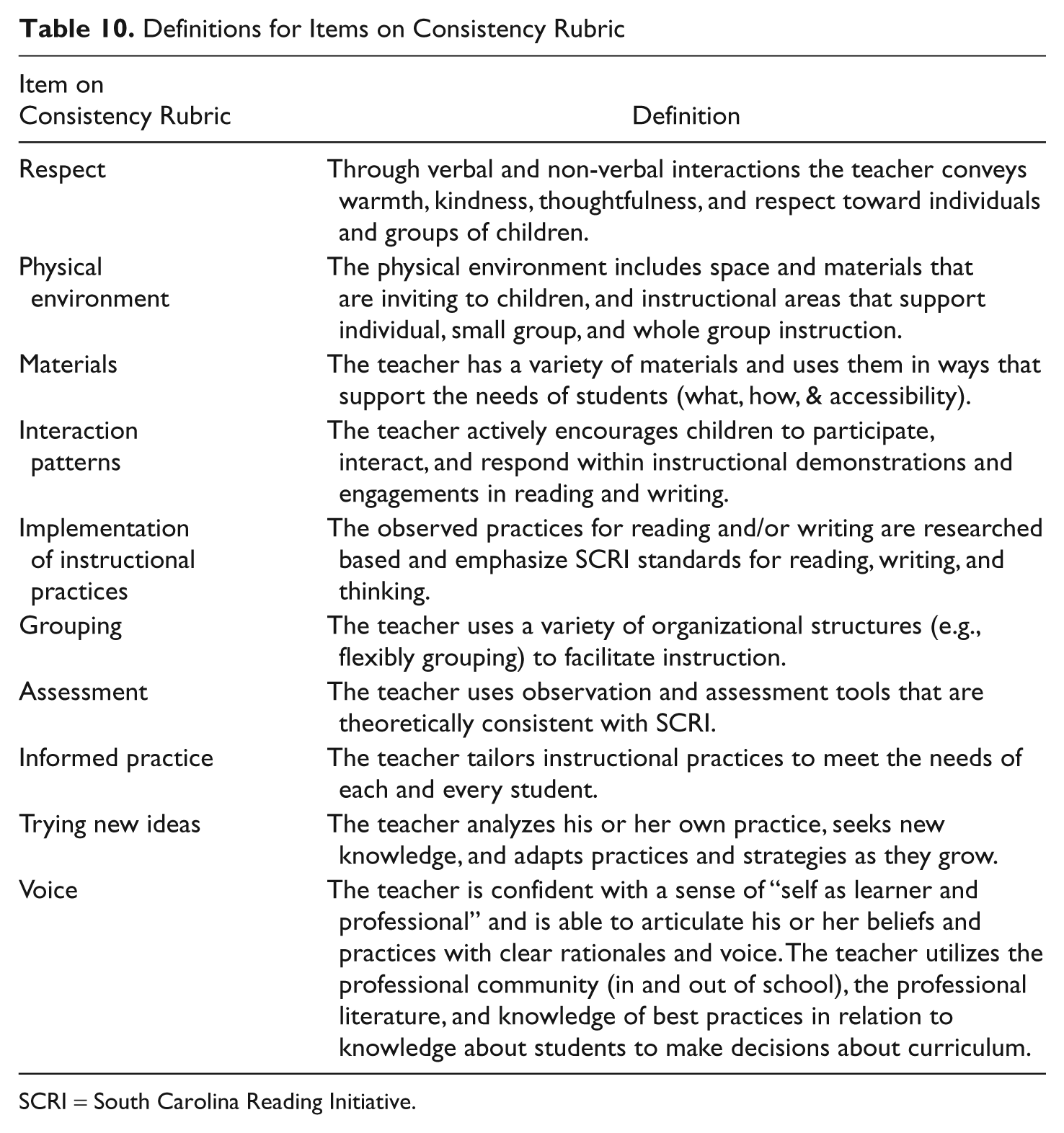

We discussed what this standard would look like and sound like in teachers’ classrooms and in our interviews with them and decided that we could assess consistency with this standard by examining the data for evidence of respect, physical environment, materials, and interaction patterns against these elaborated definitions.

The second and third belief statements call for teachers to “possess specialized knowledge of the reading process” and for them to “integrate reading and writing instruction.” We decided we could assess consistency with these statements by rating teachers on implementation of instructional practices. The fourth belief statement is that teachers should “assess students’ progress and performance,” and we assessed consistency via an assessment rubric. The fifth and sixth belief statements establish that teachers “critically and regularly analyze their practice” and that they “collaborate in a professional community.” We decided we could assess consistency with these statements by developing rubrics for trying new ideas and voice. These 10 aspects of teaching then became the 10 items on the Consistency Rubric:

Respect

Physical environment

Materials

Interaction patterns

Implementation of instructional practices

Grouping

Assessment

Informed practice

Trying new ideas

Voice

We then defined each of these aspects of teaching. Respect, for example, was described as “through verbal and non-verbal interactions the teacher conveys warmth, kindness, thoughtfulness, and respect toward individuals and groups of children.” We next developed rubrics for each of the 10 aspects of teaching on the Consistency Rubric (see Table 9 for an example of the rubric for respect and Table 10 for definitions of all other aspects assessed). To ensure rater agreement, the six members of the research team viewed classroom videos of several non-SCRI teachers and independently assessed the teachers using the Consistency Rubric. We then shared our ratings. Where there were points of disagreement, they were often the result of our rubric not being explicit enough, and so changes were made to the rubric. We also read and rated transcripts of interviews and field notes. Over the course of a year, we used consensus to achieve rater agreement on our scoring. Once we had reached consensus, each of us used our data to rate the teachers on all 10 items on the Consistency Rubric. We did this for the data collected at the beginning and end of each year. To obtain an overall consistency score for a teacher at a given point in time, we averaged the scores from all 10 areas on the rubric. In this way, we were able to look at change patterns across the 39 teachers.
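The averaging step can be sketched as follows. This is a minimal illustration only: the item ratings are hypothetical, the helper names `overall_consistency` and `change_direction` are not from the study, and the article does not specify the rubric's scale beyond the mean scores it reports.

```python
from statistics import mean
from typing import Mapping

# The 10 items on the Consistency Rubric, as listed in the article.
RUBRIC_ITEMS = [
    "respect", "physical environment", "materials", "interaction patterns",
    "implementation of instructional practices", "grouping", "assessment",
    "informed practice", "trying new ideas", "voice",
]


def overall_consistency(item_scores: Mapping[str, float]) -> float:
    """Average the ratings for all 10 rubric items at one point in time."""
    missing = [item for item in RUBRIC_ITEMS if item not in item_scores]
    if missing:
        raise ValueError(f"missing rubric items: {missing}")
    return mean(item_scores[item] for item in RUBRIC_ITEMS)


def change_direction(before: float, after: float) -> str:
    """Classify the shift between two overall scores."""
    if after > before:
        return "more consistent"
    if after < before:
        return "less consistent"
    return "no change"


# Hypothetical ratings for one teacher (not data from the study).
first_rating = dict.fromkeys(RUBRIC_ITEMS, 2)
last_rating = {**first_rating, "materials": 4, "voice": 3}

print(overall_consistency(first_rating))  # 2
print(overall_consistency(last_rating))   # 2.3
print(change_direction(overall_consistency(first_rating),
                       overall_consistency(last_rating)))  # more consistent
```

Comparing the first and last overall scores for each teacher in this way yields the kind of increase, decrease, or no-change classification on which the change patterns are based.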

Consistency Rubric for Respect

Definitions for Items on Consistency Rubric

SCRI = South Carolina Reading Initiative.

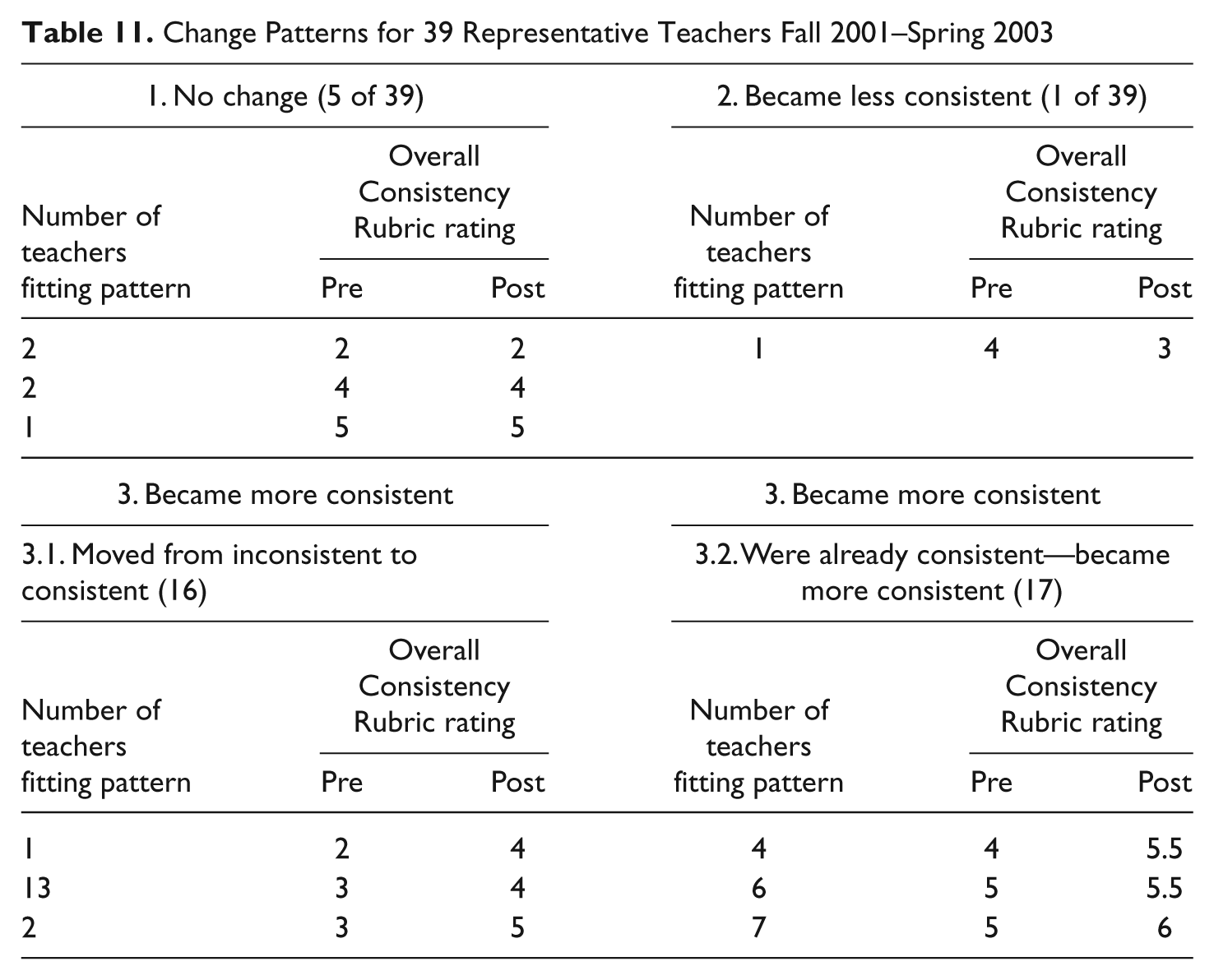

Findings

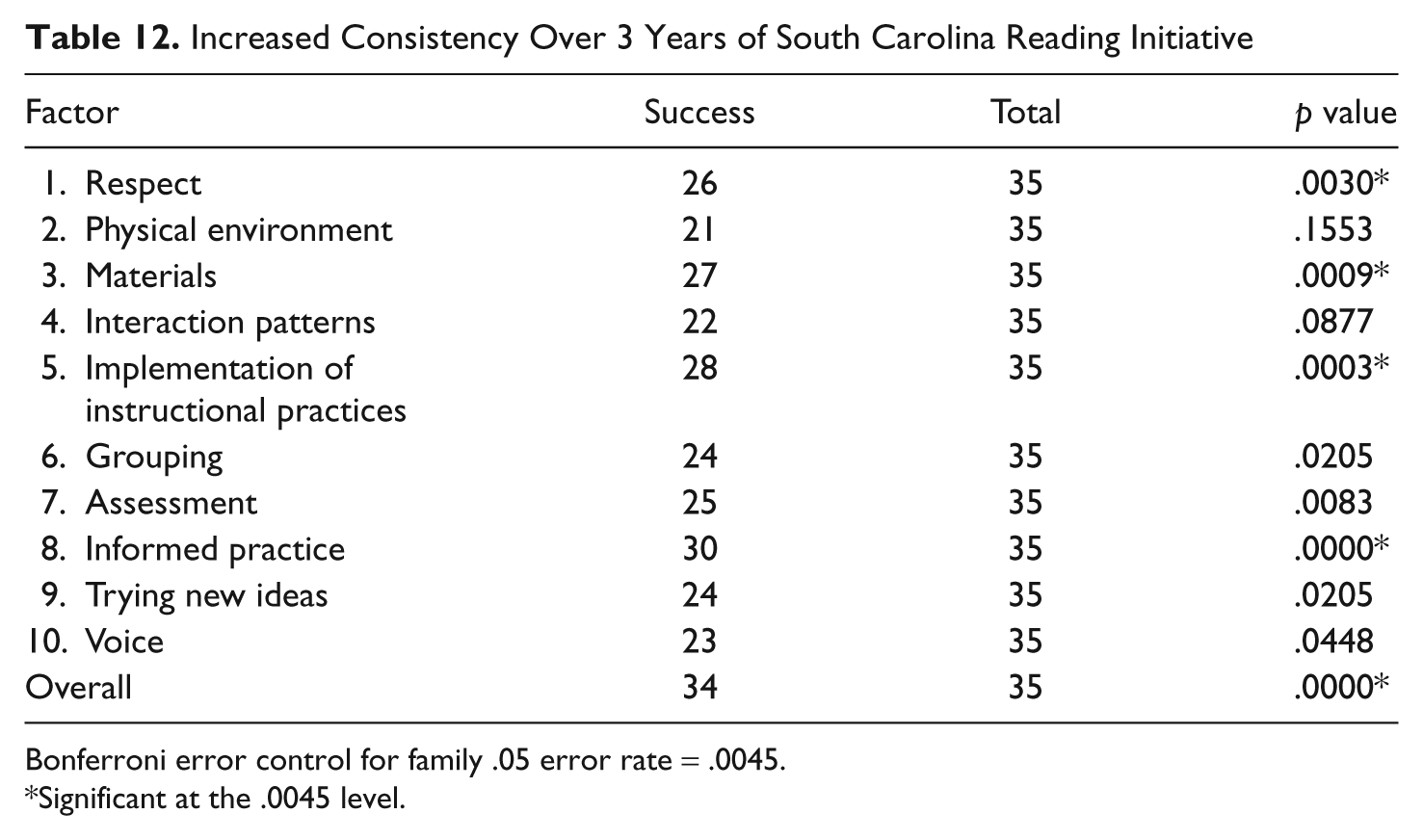

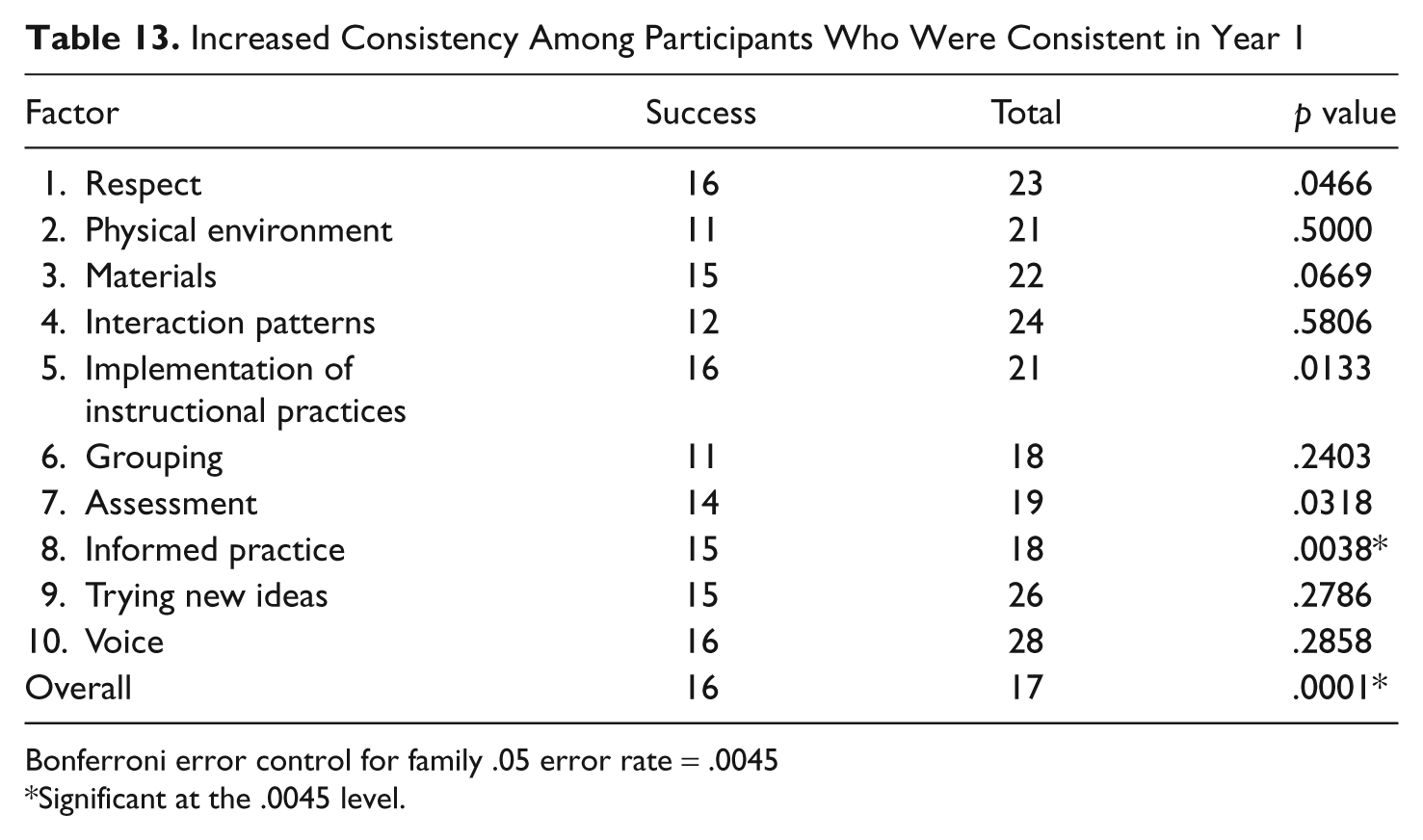

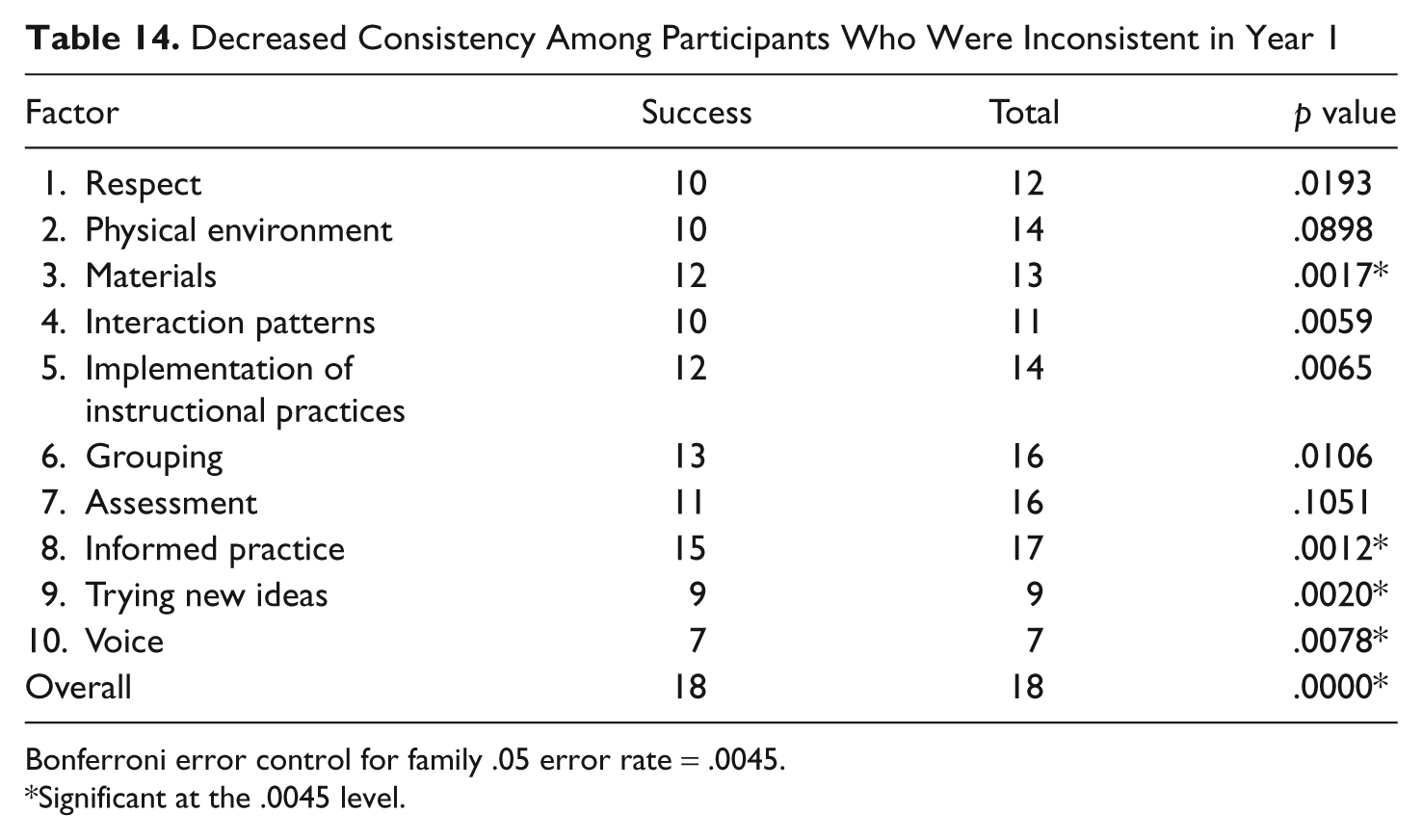

As shown in Table 11, we found that 33 of the 39 teachers had become more consistent with SCRI; for 5 teachers there was no change, and 1 teacher became less consistent. To identify areas of statistically significant change, we conducted a sign test comparing (a) shifts in consistency for all teachers, (b) shifts in consistency for all teachers originally considered to be consistent, and (c) shifts in consistency for all teachers originally considered to be inconsistent. The findings are detailed in Tables 12, 13, and 14. We found that SCRI literacy coaches had a significant impact on all 39 teachers (p = .0000) and that they affected both those teachers considered, at the beginning of SCRI, to be consistent (p = .0001) and those considered to be inconsistent (p = .0000). Looking at all teachers, the significant impact of the literacy coaches was on respect (p = .0030), materials (p = .0009), instructional practices (p = .0003), and informed practices (p = .0000).
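The overall result can be checked against the counts reported above. The following Python sketch assumes a two-sided exact sign test with the five no-change teachers dropped as ties, which is the conventional treatment; the article does not state which variant was used, and the function name `sign_test_p` is hypothetical. The table notes report a Bonferroni threshold of .0045, which matches .05 divided by 11 comparisons (the 10 rubric items plus the overall score); that count is an inference, not something the article states.

```python
from math import comb


def sign_test_p(n_positive: int, n_negative: int) -> float:
    """Two-sided exact sign test p-value; ties must be excluded by the caller.

    Under the null hypothesis, increases and decreases are equally likely,
    so the smaller count follows a Binomial(n, 0.5) distribution.
    """
    n = n_positive + n_negative
    if n == 0:
        raise ValueError("at least one non-tied pair is required")
    k = min(n_positive, n_negative)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Reported counts: 33 teachers more consistent, 1 less consistent,
# 5 unchanged (treated as ties and dropped).
p_overall = sign_test_p(33, 1)
print(f"{p_overall:.4f}")  # 0.0000

# Assumed family of 11 comparisons at a .05 family-wise error rate.
bonferroni_alpha = 0.05 / 11
print(f"{bonferroni_alpha:.4f}")  # 0.0045
print(p_overall < bonferroni_alpha)  # True
```

Under these assumptions the exact p-value is on the order of 4 in a billion, which rounds to the .0000 reported for all 39 teachers.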

Change Patterns for 39 Representative Teachers Fall 2001–Spring 2003

Increased Consistency Over 3 Years of South Carolina Reading Initiative

Bonferroni error control for family .05 error rate = .0045.

Significant at the .0045 level.

Increased Consistency Among Participants Who Were Consistent in Year 1

Bonferroni error control for family .05 error rate = .0045

Significant at the .0045 level.

Increased Consistency Among Participants Who Were Inconsistent in Year 1

Bonferroni error control for family .05 error rate = .0045.

Significant at the .0045 level.

When the impact on teachers was divided into two categories, those originally considered consistent and those originally considered inconsistent, differences in impact became noticeable. Teachers who initially were considered consistent with SCRI significantly increased their ability to use their knowledge base to meet instructional needs (p = .0038). Teachers who were initially considered inconsistent not only significantly increased their understanding in this area (p = .0012) but also became more consistent in their use of instructional materials (p = .0017), tried more new ideas (p = .0020), and were better able to name the theories and research that informed their practices (p = .0078).

The findings from the Consistency Rubric confirmed the self-report data. It was clear, from teachers’ perspectives and ours, that SCRI coaches helped teachers’ beliefs and practices become more consistent with SCRI beliefs and practices.

Two Case Studies

These findings can perhaps best be understood by looking at two different teachers (all names are pseudonyms): Olivia Estes, one of the five we considered not to have changed, and Clara Madison, one of the 16 whom we initially considered inconsistent and who became consistent. Both teachers worked in districts with a high percentage of students on free or reduced-price lunch. On the Consistency Rubric, the mean across all items for both teachers, based on the initial interview, the first observation, and the debriefing conversation, was considered not consistent, both receiving a mean score of 2 across all items on the Consistency Rubric.

Olivia Estes

In the first year of SCRI, Olivia Estes was in her 11th year of teaching and had taught second grade for 10 of those 11 years. Olivia had undergraduate and master’s degrees in elementary education. Olivia decided to join SCRI because she “love[d] reading” and “want[ed] to be a better reading teacher.” She “love[d]” her coach.

When Olivia first started teaching, she used the basal but did not like the way she was teaching reading: “I was just out of college and didn’t know any better. . . . I blame a lot of my professors in college because they didn’t tell you what was out there.” Two years ago, Olivia’s district adopted “Pat Cunningham’s Four Blocks” approach to reading. As part of the implementation, a small number of teachers attended the Four Blocks training. When they came back, they did one all-day workshop for the rest of the staff. Olivia reported that she had a “hard time with it because I’m just the kind of teacher . . . I’m not as organized as some.” When her SCRI coach came into her classroom, the coach explained to Olivia that she was not “doing” guided reading consistent with Four Blocks or with SCRI. Olivia noted, “and even now, after being in the [SCRI] class for a year, I’ve still not got it. The guided reading part is my weakness.” Olivia also felt that she did not have the “right” books in her classroom as most of them were too hard for her students.

When we first visited her, at the beginning of the 2nd year of SCRI, Olivia taught ELA in the morning: She first did what she called guided reading and then writing. Olivia explained that guided reading, usually whole group, was “real basal oriented.” On Fridays, she gave a test on the basal selection of the week. She reported that she felt overwhelmed by Four Blocks, but that was what her grade level decided to do.

There were often contradictions between the various statements Olivia made and what she did in the classroom. She explained that those contradictions existed because, “[s]ee, I know what I am supposed to do. I know I am supposed to have small groups on their levels and the others are doing literacy centers, but, honey, I’m not there yet. I’m not there yet.” Olivia explained that she wanted to be teaching the way she had seen on videotapes and her coach had modeled, but she just could not “let go of the basal.”

Olivia spoke very positively of SCRI. She enjoyed attending study group: “The class is good, I’m learning, but like I said, I like it because I found out I could start getting out of this Four Block thing.” Olivia preferred, however, having her coach demonstrate in the classroom what she was teaching: “See, I have to learn by doing. I’m a learn by doing person, and I can read about it, but until I do it, I don’t know how to do it.” Olivia considered her coach very helpful in this regard: “If you need help, you can sign up for a time and she will come in our class and she will do a lesson with you and do it for your children.”

In her final year, Olivia noted that she had not done small-group instruction until quite recently and that it was because of SCRI that she began this practice. She commented that “my reading used to be very boring. I did not like the way I taught reading. . . . My reading has evolved and it’s changed and I’m glad.” She noted, however, that although SCRI affected her a lot and she now knew what she needed to do, she didn’t have it “all set up.” As Olivia concluded, “I’ve got a long way to go and I know that, a long way to go, but I know where I need to go.” At the same time, Olivia was worried about what would happen to the second graders when they got to third grade if she had not thoroughly done each story and test in the basal. She noted, “I’ve learned from SCRI that’s not what to do [drill and test], [but], being a teacher, it’s the system and it’s the way it is and I have to get these children ready for PACT [the standardized test in third grade].” Looking ahead, Olivia noted that she “really, really, really, really want[ed] to get away from using my basal so much. That’s my security blanket and I’ve got to let go.”

Olivia’s principal had different ideas about the importance of the basal. She noted that it was “used as a supplement and that in some classrooms it was not used at all, because there are just so many other things out there that are just as effective, if not better.” She concluded, “So the basal is there, the basal is used a little bit, but it’s not, I mean they could take it away tomorrow and we’d do just as well without it.”

As a result of her participation in SCRI, Olivia felt she was more able to meet the needs of diverse groups of students, more able to help readers become strategic, and more able to critically and regularly analyze her practice. At the same time, Olivia noted that although she wanted to ground her teaching in her new knowledge base and in ways that were consistent with SCRI, that might not happen, because, as she said, she was a “wimp.” She noted that she worried too much about covering the basal so that the students would be ready for the standardized test in third grade.

Asked to describe Olivia’s classroom, her coach responded,

Well, when I walk in, I never know exactly what I’m going to see, because it is sort of like she has a plan, but she doesn’t stick with it, which is good in a lot of ways, but she can get sidetracked and she never gets back. . . . You know, it’s okay to get sidetracked if you’re following the children, but if you never get back to what it is they need, their instructional needs and that structure to get them back under control so you can do the next thing. . . . That’s what I see happening. . . . I would, if I could say, that I want Olivia to do something different, it would be that she would have a consistency about her teaching and let the day be more routine so the children would know what to expect. And I would want her to work with the children individually in small group. I see too much whole group.

At the end of the 3rd year, the mean of Olivia’s scores on the Consistency Rubric was still a 2. Although Olivia had made some small shifts, she had not become more consistent with SCRI.

Clara Madison

At the time she joined SCRI, Clara had been teaching third grade for 8 years. Clara’s third grade classroom was very structured and teacher centered. During the time of day called Reading, children read and then answered questions about what they had read. During social studies, Clara often read the textbook to the students. She did so because she felt that only two of her students were reading on grade level and could read the book themselves. Clara joined SCRI because she “needed some direction as far as my language arts program was concerned.” She felt that “certain things” she did were “okay” but she wasn’t sure she gave the children “what they needed to be successful.” Clara wasn’t sure she “was giving them enough technique. . . . My instruction I felt was okay, it was good but it could be better, but what could I do? I didn’t know any other strategies to use to make it better.”

When we first visited Clara, we heard her make remarks to the third graders which, in the words of her literacy coach, were not “mean” but also “not kind.” Clara controlled most of the things that went on in the classroom, curricular and otherwise (e.g., where and how a child should sit, when a child could stand up, and when a child could go to the bathroom, which was only when they all went as a group). Clara had been participating in study group for a year. She liked the study group and considered her SCRI colleagues her “family at school.” In study group, Clara had learned about some “best practices” she could use. Outside of study groups, she read “some” of what the coach requested. She noted that she did not “have a whole lot of time to read” and, besides, what helped her most was talking to others. Clara was not confident about trying new things. She noted, for example, that although she had learned it was important to have books in the classroom that children could read, a lot of her books were not leveled. Clara reasoned that if she wanted to be sure the children could read the books, she would “have to just look at them” herself. Clara preferred not to do that. She instead wanted books that were already leveled. Clara felt that would be easier and also it would ensure that she was “giving the child the correct book for their level.” Clara also explained that she found change hard: She was

in a mode of certain things that I do and it’s difficult sometimes to change from what I’ve been doing into something else. Sometimes just hearing about [new things] may be fearful. I know I want my instruction to be better, but I’ve got to step outside my norm and make some changes, so that’s the scary part.

Clara, however, did change. During the summer between the 2nd and 3rd years of SCRI, Clara learned that she would be moving from third grade to first. The coach happened to be at the school the day that Clara found out about the change. The coach had taught first grade for 13 years and offered to help Clara. The coach explained to Clara that she did not need to worry about doing anything “right”—she would just be learning about how things got done in first grade. Clara began collaborating closely with her coach that summer. They had not worked closely together before this. As the coach explained, the school had not only a coach but also a teacher specialist, hired by the SDE to provide instructional support to Clara’s school, which was not making adequate progress on state-mandated tests. Teacher specialists were assigned particular grade levels, and at Clara’s school, the teacher specialist worked at the third grade level. During the first year of SCRI, the teacher specialist came in and out of Clara’s classroom, teaching predetermined lessons according to a schedule set by the teacher specialist. The coach felt that the teacher specialist was a disruptive influence and tried to not come very often to Clara’s classroom because she felt she would be adding another layer of disruption. In addition, the coach stayed away because she and the teacher specialist disagreed about what counted as “best practices” and the coach did not want to put Clara in the middle. That summer, however, when Clara changed to first grade, the SDE moved the teacher specialist to a different school. The coach felt these changes made it possible for her to work with Clara. Indeed, the coach wanted to make up for “lost time” and so spent a considerable amount of time that 3rd year with Clara.

When Clara and the coach met and talked that summer, they not only talked about literacy but also talked about things like classroom management. In separate interviews, for example, both of them told us about their conversation about rules for using the bathroom. As Clara explained, she needed to figure out if she wanted the children “to raise their hands because that’s going to be very distractive” or if she wanted them to “just go ahead and use the bathroom as they need to.” From the coach’s perspective, these kinds of conversations were conversations “about helping Clara let the children take on more responsibility for themselves.”

Their collaboration continued into the next year. We visited on the 8th day of school, and when we entered, the children were gathered on the floor around Clara. She and the children were about to read a story. Before they started, she told them they were going to review their two reading strategies. These were written on chart paper and taped to the white board. The first one was “Look at the picture” and the second was “Skip it and read to the end of the sentence.” The children read this in unison. Clara asked the group, “If you come to a word you don’t know, what are you going to do?” Several of the children spoke at once saying they would look at the list of strategies. Clara then began reading and the children “read” along with her; some picked up on a single word, others were reading whole sentences. During the reading, Clara occasionally covered up a word and asked the children to guess what the word was. She then asked them what that word would start with and uncovered the first letter so they could check their prediction. As the lesson continued, Clara also talked to the children about rhyming words and read the book a second time so they could make a list of them. Afterward, Clara explained about the lesson,

I love shared reading. Especially in the first grade; they are really interested; they get excited. . . . I can barely finish the story because they have so much to say at the moment. I like it and when I see them get excited, it makes me excited.

Clara’s first grade classroom was radically different from her third grade classroom. Clara explained how this happened: She said that “from the very beginning of the year,” her coach “was nice enough to come in and give me some strategies.” Clara said, for example, that she “didn’t know how to start a word wall with them” and the coach “did a couple of demonstrations” and got her “started” and “going” with those things. Clara noted that “any little thing that I had a question about . . . she was very helpful . . . it was very helpful when she came in.” Clara felt that because of her coach, she became “more interested” in what she was teaching and how she was teaching it. She also became more interested in whether or not the students learned what she was teaching. Clara liked seeing the “light bulb go on” for kids and saw herself as being “more into my children now, more seeing what they are doing.”

The coach’s comments were similar:

I think what happened was that Clara tried a lot of stuff out, found that some of it worked, found that most of it worked, found the kids were learning, became confident. . . . She started seeing the difference she could make with kids. She started saying things to me like, “You know, this stuff reading works.” And she started telling other teachers, “You’ve got to try it this way.” Clara would explain in study group, “Shared reading is so good, let me tell you. It is good for even my little ones that can’t read a word, they jump in when they can, when they see some word, you know, words coming up they know. And by the third or fourth reading, everybody in the room is pretty much reading it.”

Compared to the beginning of the 2nd year, by the end of the 3rd year, Clara had become increasingly consistent with SCRI. Her mean score across all items on the Consistency Rubric was a 4.

Looking Back, Looking Forward

Our study demonstrates that SCRI literacy coaches were able to affect the beliefs and practices of large numbers of teachers. This finding has considerable implications for schools, districts, and states that are interested in improving literacy instruction on a large scale. It suggests that effective staff development can be large in scale and does not require on-site support from university faculty.

We cannot, however, claim that all coaches can have a similar impact on teacher change. These SCRI coaches had extensive ongoing professional development and an extensive support system. It is also important to note that our description of the findings masks implementation realities we think other stakeholders would need to consider. It also masks questions we did not ask.

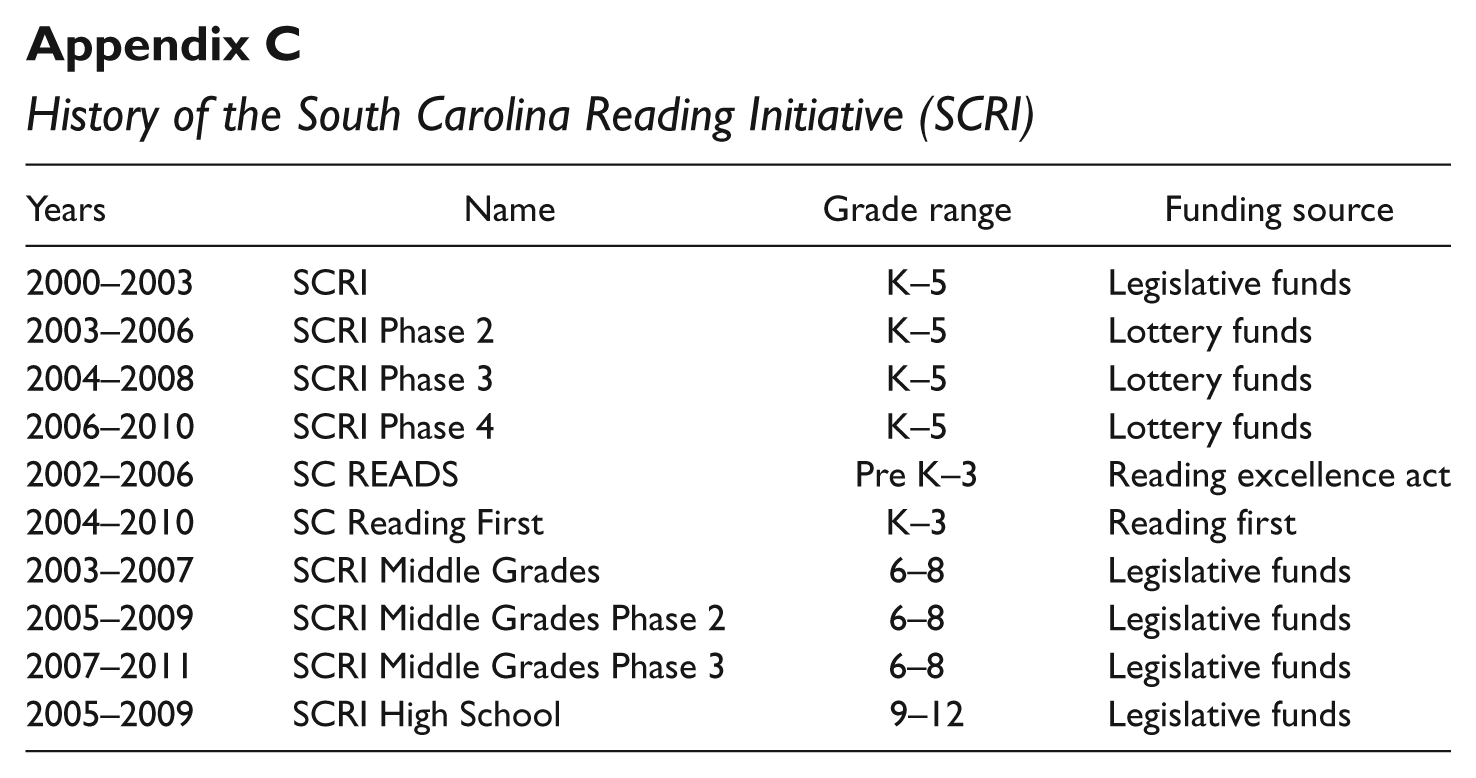

The Realities of Implementation

The first implementation reality is that for coaches to help teachers, the SDE realized the coaches themselves needed to live the best practices they were learning about (e.g., oral reading analysis, focused small-group instruction). In response, subsequent iterations of SCRI (see Appendix C) included a fourth year of State Study. Years 2, 3, and 4 paralleled what the coaches experienced in our study. However, coaches now spend the 1st year of professional study simultaneously teaching in a K–12 classroom. This allows coaches and their partner–teachers to put in place the best practices being taught in State Study. This change expanded the number of graduate courses as coaches begin the university-based course work the summer before they coteach and continue with course work through all 4 years.

The second implementation reality is that the coaches needed more help learning to support their peers than SDE originally anticipated. Course work in this first round of SCRI focused on the content of ELA instruction. Although the teaching team integrated information into those courses about how to facilitate study groups and coach peers, SDE realized that coaches needed to spend more time learning about coaching. As a result, SDE expanded State Study from 1 day a month to 2 and used the additional time to provide professional development on how to coach teachers. In addition, SDE added a coaching course to the summer between Year 1 (when coaches are partner teachers) and Year 2 (when coaches leave the classroom to coach).

The third implementation reality that proved problematic was the original job description of the coach. Coaches were responsible for 8 to 10 teachers each at four schools. This decision originally was made out of concern for funding. Some of the SDE team thought it would be fiscally unacceptable to the legislature for coaches to serve too few schools. The net result though was that coaches had only 1 day a week per building, which coaches and SDE subsequently felt did not provide enough teacher support. In subsequent iterations, coaches worked in only one building with no more than 25 teachers.

The fourth implementation reality had to do with support at the building level. SDE found that principals sometimes scheduled mandatory meetings that conflicted with study group or simultaneously adopted literacy curricula that were not philosophically congruent with SCRI’s sociopsycholinguistic, sociocultural approach (e.g., requiring kindergartners to spend 30 minutes a day doing isolated phonics instruction on computers). To address this need, the SDE created School Leadership Teams made up of administrators, project directors, a representative classroom teacher, the special education teacher, the media specialist, and the coach. These teams meet four times a year for SDE-led professional development, and many teams also meet regularly at their schools to coordinate efforts across the school (for more information about school leadership teams, see Morgan & Clonts, 2008).

Questions We Did Not Ask

Looking back, we realized there were at least four critical questions we did not ask and that need to be asked. First, on the Consistency Rubric we did not assess the degree to which the classroom teacher was strategic in teaching readers. Practice needs to be assessed at this level so that the field can better understand the specific practices that enhance the reading trajectory of a child. Second, although we did study the impact of SCRI on student achievement (Stephens et al., 2007), we reported broad changes. We believe there needs to be research that looks closely at shifts in children’s strategic reading behaviors relative to reading achievement. Third, we did not examine the degree to which coaches themselves were able to provide effective, strategic instruction in small-group, whole-group, and one-on-one settings. We believe that mastery of this art is essential if coaches are to help teachers develop their expertise in these areas. Finally, we did not look closely at the relationship between the level of support provided by the school and the degree of change in teachers’ beliefs and practices. We know now that there were considerable differences in those support structures and hypothesize that those structures have a considerable impact on teacher growth.

Despite this plethora of implementation realities and the questions we did not ask, we believe that our study contributes to the scant literature about the impact of literacy coaches. Wei et al. (2009, p. 61) have noted that the professional development needed for teachers to improve their practices is simply not in place in most contexts. They wrote,

While American teachers participate in workshops and short-term professional development events at similar levels as that of OECD [Organisation for Economic Co-operation and Development] nations, the U.S. is far behind in providing public school teachers with opportunities to participate in extended learning opportunities and productive collaborative communities in which they conduct research on education-related topics, work together on issues of instruction, learn from one another through mentoring or peer coaching, and collectively guide curriculum, assessment, and professional learning decisions. (p. 62)

The SDE did, however, provide and continues to provide these kinds of learning opportunities for teachers in South Carolina. In doing so, it has attempted to address the question raised by Wei et al. (2009): “How can states, districts, and schools build their capacities to provide the kinds of high quality professional development that is effective in building teacher knowledge, improving their instruction, and supporting student learning?” (p. 62).

Our study documents the impact literacy coaches had on the teachers who participated in SCRI. Hopefully, more schools, districts, and states will begin to provide the kind of professional development called for by Wei et al. (2009) and future studies will take closer looks at issues of implementation and teacher change so that the field can begin to fine-tune its knowledge of how best to improve literacy instruction and, consequently, enhance the literacy growth of students.

Footnotes

Appendix A

Appendix B

Appendix C

History of the South Carolina Reading Initiative (SCRI)

| Years | Name | Grade range | Funding source |
|---|---|---|---|
| 2000–2003 | SCRI | K–5 | Legislative funds |
| 2003–2006 | SCRI Phase 2 | K–5 | Lottery funds |
| 2004–2008 | SCRI Phase 3 | K–5 | Lottery funds |
| 2006–2010 | SCRI Phase 4 | K–5 | Lottery funds |
| 2002–2006 | SC READS | Pre K–3 | Reading Excellence Act |
| 2004–2010 | SC Reading First | K–3 | Reading First |
| 2003–2007 | SCRI Middle Grades | 6–8 | Legislative funds |
| 2005–2009 | SCRI Middle Grades Phase 2 | 6–8 | Legislative funds |
| 2007–2011 | SCRI Middle Grades Phase 3 | 6–8 | Legislative funds |
| 2005–2009 | SCRI High School | 9–12 | Legislative funds |

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

The author(s) received no financial support for the research, authorship, and/or publication of this article.

References
