Abstract

Recent advances in research and clinical practice concerning aging and auditory communication have been driven by questions about age-related differences in peripheral hearing, central auditory processing, and cognitive processing. A “site-of-lesion” view based on anatomic levels inspired research to test competing hypotheses about the contributions of changes at these three levels of the nervous system. A “processing” view based on psychologic functions inspired research to test alternative hypotheses about how lower-level sensory processes and higher-level cognitive processes interact. In the present paper, we suggest that these two views can begin to be unified following the example set by the cognitive neuroscience of aging. The early pioneers of audiology anticipated such a unified view, but today, advances in science and technology make it both possible and necessary. Specifically, we argue that a synthesis of new knowledge concerning the functional neuroscience of auditory cognition is necessary to inform the design and fitting of digital signal processing in “intelligent” hearing devices, as well as to inform best practices for resituating hearing aid fitting in a broader context of audiologic rehabilitation. Long-standing approaches to rehabilitative audiology should be revitalized to emphasize the important role that training and therapy play in promoting compensatory brain reorganization as older adults acclimatize to new technologies. The purpose of the present paper is to provide an integrated framework for understanding how auditory and cognitive processing interact when older adults listen, comprehend, and communicate in realistic situations, to review relevant models and findings, and to suggest how new knowledge about age-related changes in audition and cognition may influence future developments in hearing aid fitting and audiologic rehabilitation.

1. Introduction

Over the last decade, there have been landmark advances in the design of hearing aids. Most hearing aids sold in 1999 were analogue (60%), and relatively few were digital (12%); however, by 2003 the pattern was reversed, with sales of digital hearing aids being the most common (58%) and sales of analogue aids being reduced (26%) (Fabry, 2003). Complex digital signal-processing algorithms now enable fittings that are vastly more variable than would have been possible with analogue technology.

Conventional hearing aids had relatively few options (e.g., gain, output, frequency response) that were set by the fitter based on the audiometric profile of the user following “rules” guided by research on the correspondence between the audibility and the intelligibility of speech. In addition to the settings determined by the fitter, conventional hearing aids also had a small number of controls (e.g., on/off, volume, tone) that could be adjusted by the user according to his or her situation-specific listening preferences. In contrast, the fitting of current complex digital signal processing hearing aids can vary according to a wider variety of personal and acoustical factors, and the response of the device can be dynamically altered according to ongoing sampling and analysis of input by the device.

The main personal factor guiding hearing aid fitting continues to be the basic audiometric profile of the individual; however, increasingly, other non-audiometric factors may also influence fitting decisions (Kricos, 2000). Acoustical factors in hearing aid fitting continue to be dominated by the properties of the speech signal that are known to be relevant to intelligibility in quiet; however, digital technology has much greater flexibility to adjust automatically to an incoming acoustical signal based on assumptions regarding the dynamic interaction of a target speech signal with simultaneous competing signals. Such automatic adjustments reduce or eliminate the need for user-operated controls. The new “intelligent” devices respond to ongoing analysis of the presumed signal and the presumed background. In essence, beyond operations such as filtering, amplification, and compression that resemble auditory processing by the cochlea, hearing aids have begun to incorporate more complex operations that emulate aspects of higher-level auditory and cognitive processing such as attention, memory, and language.

The approach to fitting conventional hearing aids was based on knowledge about how young adults hear relatively simple sounds in ideal environments. In contrast, new approaches that will be better suited to the fitting of digital signal-processing hearing aids to the average wearer must be based on knowledge about both auditory and cognitive processing of information by older listeners in real acoustic ecologies.

As our understanding of audiologic functioning grows and develops, it is increasingly evident that the auditory world is more complex than our initial focus on hearing suggests. In a consensus statement guided by the World Health Organization's International Classification of Functioning, Disability and Health (WHO's ICF) (WHO, 2001) and designed to provide a framework for current and future needs of research, Kiessling et al. (2003) outline not one but four processes—hearing, listening, comprehending, and communicating—that more fully describe auditory functioning:

Hearing is essentially a passive function that provides access to the auditory world via the perception of sound; it is primarily useful to describe impairment, typically using audiometry.

Listening is the process of hearing with intention and attention for purposeful activities demanding the expenditure of mental effort.

Comprehending follows and is defined as the unidirectional reception of information, meaning, and intent.

Communicating is the bidirectional transfer of information, meaning, or intent between two or more people.

Comprehending and communicating are critical to functioning at the WHO ICF levels of both activity and participation. Beyond auditory processing, cognitive processing is crucial to the functions of listening, comprehending, and communicating.

New insights into the connection between auditory and cognitive processing suggest how brain plasticity enables a hearing aid user to learn new mappings between sound inputs and stored knowledge. Although the importance of higher-level auditory and cognitive processes was recognized even in the earliest days of audiology, only recently have the opportunities afforded by new technologies resulted in a renewed recognition of the need to apply advances in knowledge of these higher-level psychologic processes. Applying this knowledge will guide new practices in rehabilitative audiology that will facilitate acclimatization to sound inputs processed by devices and enable fuller participation in everyday life. Importantly, individual differences in processing abilities seem to provide a key to understanding why two people with similar audiograms do not derive the same benefit from a particular hearing aid fitting and how rehabilitative training may offset some of these differences.

Current cognitive models will be summarized and relevant research findings on age-related differences in auditory and cognitive processing will be examined. We conclude by suggesting why and how new approaches to audiologic rehabilitation must accompany new technologic developments in hearing aid design that are customized to individuals and their lifestyles.

2. A Cognitive Neuroscience Framework for Rehabilitative Audiology

Two views, the site-of-lesion view and the processing view, have fueled research on hypotheses concerning the relationship between age-related changes in audition and cognition. We suggest that a new hybrid view should develop following the example set by cognitive neuroscience. In the present section, the site-of-lesion, the processing, and the hybrid views are described, and the hypotheses and evidence arising from these views are presented, concluding with reasons for adopting a unified view of auditory cognition. Note that because most of the research to date has focused primarily on auditory aging or on cognitive aging without emphasizing the connection between these factors, Sections 3 and 4 will highlight relevant aspects of these two largely nonoverlapping literatures. In Section 5 we synthesize this knowledge and highlight implications for hearing aid fitting and rehabilitative audiology.

2.1 Description of Views Influencing Rehabilitative Audiology

Diagnostic audiology seeks to identify the site-of-lesion of a hearing loss in terms of abnormality at a specific anatomic level of the auditory system. From a site-of-lesion view, the auditory system consists of functionally autonomous, distinct anatomic sites with connections that are organized in a largely bottom-up, serial fashion dominated by afferent innervation. Accordingly, types of hearing loss are categorized as being conductive, sensorineural, retrocochlear, or central. This simplified view of the auditory system has been clinically useful, especially for diagnostic purposes. Grounded in this view, the first basic audiometric tests, and later numerous advanced behavioral and electrophysiologic tests, were developed to enable the diagnostic categorization and quantification of hearing impairments. Not surprisingly, early diagnostic measures of hearing were also used to guide rehabilitative decisions.

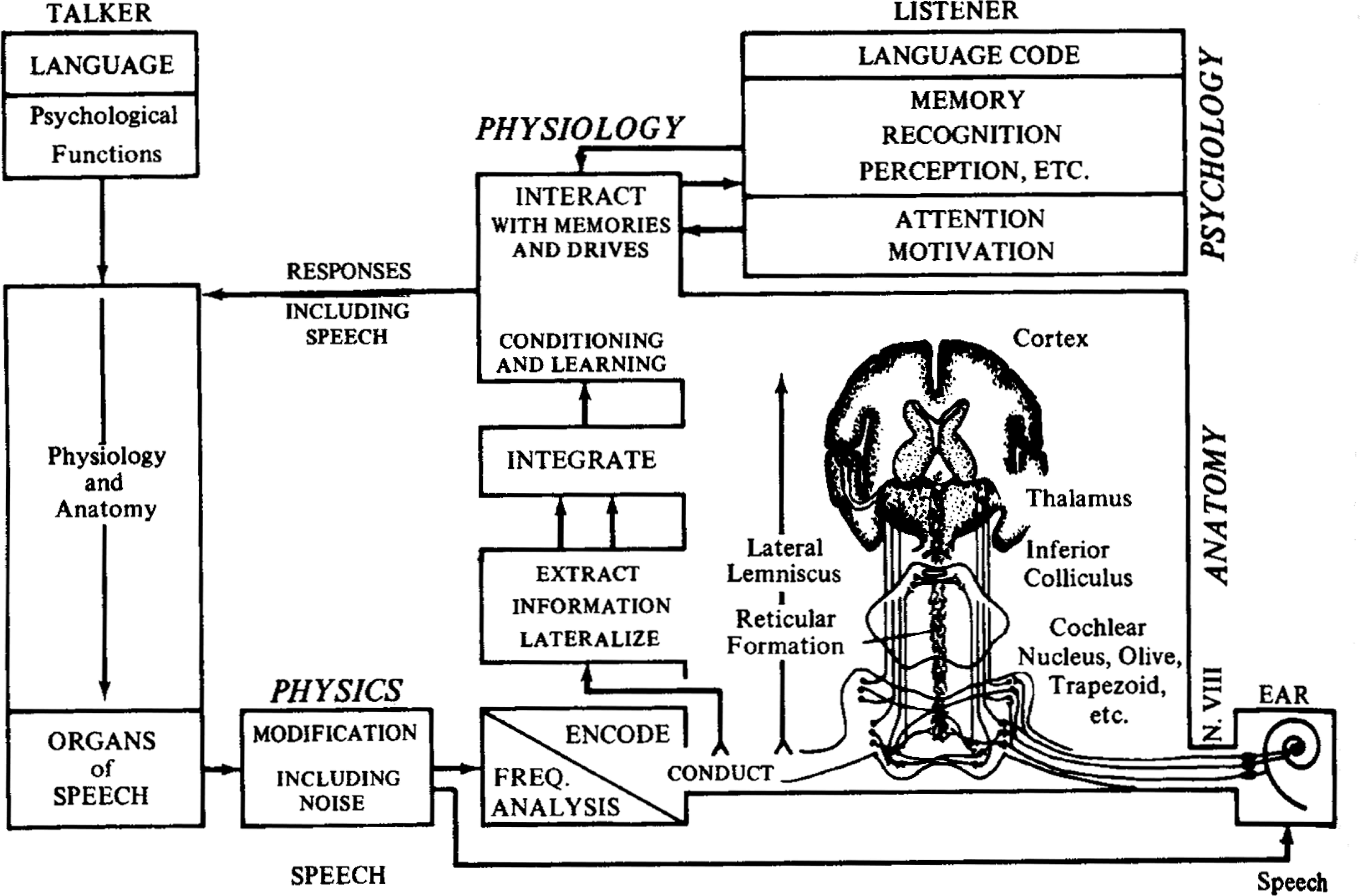

Nevertheless, even early pioneers in audiology recognized that the site-of-lesion view was over-simplified because different levels of the auditory system overlap and efferent feedback accompanies afferent encoding, with top-down control by higher levels of more peripheral levels in the system (e.g., Davis and Silverman, 1970). As shown in Figure 1, one early model anticipated that to fully understand how the entire auditory system works, audiologists would need to take account of higher-level cognitive processes such as attention, memory, and language that were involved in hearing, listening, comprehending, and communicating (Davis, 1964).

Figure 1. From Davis (1964), as printed in Davis and Silverman (1970, p. 76).

From the processing view, auditory processing at various levels from cochlea to cortex and processing by other modalities and higher brain centers interact to accomplish functions under a combination of top-down and bottom-up influences. These functions include hearing, listening, comprehending, and communicating, which in turn involve more general cognitive functions such as attention, memory, and language. The processing view may be more useful than the site-of-lesion view for rehabilitation because it is more closely connected to function in everyday life. It is important to understand the interplay of the perceptual and cognitive factors that contribute to the communication difficulties of older listeners so that they can be remediated. Conversely, recent evidence suggests that rehabilitation has positive consequences for cognitive and social function in older communicators (e.g., Mulrow et al., 1990; Mulrow et al., 1992a, b; Cacciatore et al., 1999; Palmer et al., 1999). There has been increasing interest in understanding how auditory and cognitive processing interrelate, partly because of the need to understand and address the everyday needs of older adults.

During the last 15 years, in addition to research focused on age-related aspects of either auditory or cognitive processing (see Sections 3 and 4 below, respectively), there has been a steady increase in the number of articles that have linked auditory and cognitive processing. Insofar as research linking audition and cognition is consistent with the integrated processing view, it is interesting to examine these publication patterns. As shown in Figure 2, there has been a gradual increase in the average annual number of articles that link audition and cognition, including an increase in the number of these papers specifically concerning older adults and also amplification. The increase in the articles linking auditory and cognitive processing may foreshadow the merging of the two traditional views, the site-of-lesion anatomic view and the processing psychologic view. Such a unified view was anticipated in a recent international consensus paper on audiologic rehabilitation for older adults (Kiessling et al., 2003).

Figure 2. The average annual number of publications in each of four 4-year periods. Squares represent articles on hearing and cognition. Circles represent the subset of the articles on hearing and cognition that were about aging. Triangles represent the subset of the articles on hearing and cognition and aging that were about hearing aids. Search terms for hearing included hearing, auditory, auditive, and audition. Search terms for cognition included cognitive, cognition, memory, attention, inhibition, speed of processing, and top-down. Electronic bibliographic searches based on title, abstract, and keywords were conducted for five journals: (1) Ear and Hearing; (2) the International Journal of Audiology (IJA) (searches for years before 2002 were conducted on the three journals that were amalgamated into IJA in 2002, namely Audiology, the British Journal of Audiology, and Scandinavian Audiology); (3) the Journal of the Acoustical Society of America; (4) the Journal of the American Academy of Audiology (note, inaugural issue printed 1990); and (5) the Journal of Speech, Language, and Hearing Research (searches prior to 1997 were conducted on the Journal of Speech and Hearing Research).

2.2 The Site-of-Lesion View

Much of the research on auditory aging conducted over the last 15 years has been guided by the Report of the Working Group on Speech Understanding and Aging, Committee on Hearing, Bioacoustics, and Biomechanics, US National Research Council (CHABA, 1988). The report outlined three hypotheses concerning age-related declines in spoken language comprehension: (1) the peripheral hypothesis, (2) the central-auditory hypothesis, and (3) the cognitive hypothesis. Consistent with the traditional diagnostic site-of-lesion view, hearing researchers tended to conceptualize the three hypotheses in relation to different levels of the auditory system. At first, it was often assumed that the hypotheses were competing and mutually exclusive. Thus, evidence was sought to support the correct hypothesis and rule out the incorrect hypotheses, pitting explanations based on peripheral hearing loss indexed by the audiogram against explanations based on higher-level losses indexed by non-audiometric measures.

Most people with presbycusis have hearing loss resulting from damage to the cochlea, including loss of both outer and inner hair cells (sensory loss). Many older adults also have hearing impairment resulting from loss of ganglion cells and neural damage (neural loss). Still other older listeners, including some who have no clinically detectable sensorineural loss, have central losses. Evidence from animal studies comparing genetic strains differing in predisposition to presbycusis indicates that age-related changes in the central auditory system may be a sequela of peripheral loss or may be due to biologic aging independent of peripheral loss (for reviews see Willott, 1991; Frisina et al., 2001; Kiessling et al., 2003).

Chmiel and Jerger (1996) emphasized the need to recognize central as well as more peripheral forms of presbycusis, with the prevalence of central auditory processing disorders among the elderly being about 10% to 20% among a stratified random sample of the United States population (Cooper and Gates, 1991), but as high as 80% to 90% in a clinical population with co-occurring sensorineural loss (Stach et al., 1990). Thus, it is common for these various types of loss to exist independently or to coexist in older adults. It has been suggested that the value of the label presbycusis is limited because it fails to differentiate age-related auditory problems in terms of etiology, biologic damage, or functional significance (Kiessling et al., 2003).

Despite the heterogeneity of the older population and their hearing problems, it is common for older adults to experience problems understanding spoken language in everyday life. Based on audiometric profile, some but not all of these older adults would be clear candidates for amplification. It is important to try to sort out how much of the effect of age on speech communication is actually due to simple loss of audibility and the associated effects of cochlear pathology (e.g., loss of frequency selectivity) compared with how much is due to other changes in auditory or cognitive processing, or both, that are not predictable from the audiogram.

If the speech perception performance of younger and older listeners can be explained solely in terms of audiometric threshold elevation and cochlear pathology, then there is no particular need to worry about the age of listeners either when experimental research is conducted or when audiologic interventions are planned or evaluated. To the extent that their problems can be accounted for by cochlear deficits resulting in loss of audibility as indexed by elevations of pure-tone thresholds, it is reasonable to assume that amplification will restore the audibility of speech sounds and enhance speech perception as effectively for older listeners as for their younger counterparts with equivalent audiometric losses. If multiple pathologies are at play, or if the functional significance of these deficits varies owing to nonaudiometric factors, then experimental designs and clinical protocols, including hearing aid fitting procedures, will need to include measures beyond the traditional audiometric measures of hearing impairments. Such measures could inform hearing aid fitting as well as the recommendation of other forms of rehabilitation as described in the companion paper by Kricos (this issue).

Word recognition for speech presented in quiet at low-to-conversational levels shows a linear decline with age of approximately 12% per decade for adults older than 60 years (Gates et al., 1990). Humes (1996) argues convincingly that poorer high-frequency thresholds can account for nearly all of the changes in speech perception with age, at least for speech in quiet, and at least when the sample includes adults with a range of degrees of audiometric loss. For example, older listeners with audiometric threshold elevations perform very similarly to younger listeners with matched audiometric elevations simulated by masking noise (Humes and Christopherson, 1991; Humes et al., 1991). Other studies found equivalent speech perception performance between age groups when younger and older listeners were carefully matched based on hearing sensitivity (Takahashi and Bacon, 1992; Souza and Turner, 1994). Consistent with the claim that threshold elevation, rather than age per se, is the primary determinant of speech perception in quiet, older listeners’ percent correct scores for NU6 words and SPIN sentences in quiet are close to those predicted by the Articulation Index (Dubno et al., 1984; Schum et al., 1991). The same is true for recognition of monosyllabic words in speech-weighted or low-frequency noise (Magnusson et al., 2001). Generally, the studies described above used relatively simple stimuli: words or sentences presented in quiet or in steady-state background noise. These findings suggest that degree of cochlear hearing loss rather than other age-related changes accounts for problems in speech perception.

Nevertheless, a number of studies have shown a difference in speech perception with age, even after accounting for threshold elevation (for a review see Pichora-Fuller and Souza, 2003). Furthermore, the speech performance of older listeners in noise is poorly correlated with performance in quiet (e.g., Plomp, 1986). Nonaudiometric factors must account for the residual age-related differences in auditory processing of speech, although their contribution may be crucial only in complex listening situations.

Nonaudiometric age-related deficits seem to be most apparent in difficult auditory tasks (Gordon-Salant, 1987; Fitzgibbons and Gordon-Salant, 1996; Divenyi and Simon, 1999). For example, studies show an age-related deficit for speech presented in noisy or reverberant listening conditions (e.g., Helfer and Wilber, 1990; Pichora-Fuller et al., 1995), particularly in a background of interrupted or modulated noise (Turner et al., 1995; Stuart and Phillips, 1996), or when there is competing speech, even from a single talker (Wingfield and Tun, 2001; Larsby et al., 2005). Predictions of older listeners’ performance that are based on audibility are generally accurate in quiet but overestimate performance in noise (Schum et al., 1991; Hargus and Gordon-Salant, 1995). In these situations, older listeners are more likely to demonstrate suprathreshold deficits in addition to the effects of reduced audibility. Evidence consistent with the central auditory processing and cognitive hypotheses offers some explanation for the deficits associated with performance in more complex, realistic communication situations.

Most of the studies undertaken by hearing researchers following the publication of the CHABA report in 1988 used standardized speech tests (often word or sometimes sentence tests) as outcome measures, with explanatory measures including tests of the peripheral auditory system (e.g., audiometry), the central auditory system (e.g., dichotic tests), the cognitive system (e.g., verbal IQ or digit span memory tests), or a combination. Although peripheral auditory measures accounted for most of the variance on relatively simple speech tests, it is noteworthy that in some studies significant effects on speech performance were also attributed to nonaudiometric factors such as memory, but researchers were cautious in their interpretation of the significance of these findings (e.g., Humes and Roberts, 1990).

It is interesting to reconsider the earlier findings based on laboratory studies of speech perception in light of recent individual differences research on hearing aid outcomes for older adults. Over more than three decades, hearing aid outcome measures have evolved to include various dimensions such as use, performance, benefit, and satisfaction (Cox et al., 2000).

In a recent large-scale study, 134 older adults were evaluated over a 1-year period after the hearing aid fitting using a battery of outcome measures and tests for variables that might predict the success of older adults with hearing aids (Humes, 2003; Humes and Wilson, 2003). Structural equation modeling was used to determine the relationship between outcome measures and predictor variables. Three outcome measures were modeled: speech recognition, hearing aid usage, and subjective benefit and satisfaction. Not surprisingly, speech recognition performance was predictable from audibility and age. Subjective benefit and satisfaction was negatively related to judgment of sound quality and to loudness discomfort level.

Hearing aid usage was positively related to duration of prior hearing aid experience and to number of years in the workforce, but it was negatively related to adjustment, speech recognition, and subjective benefit and satisfaction (i.e., use was higher for those with more problems). The one predictor variable that was related to all three outcome measures was cognitive ability (verbal IQ), which was positively related to speech recognition and subjective benefit and satisfaction but negatively related to hearing aid usage. Based on these findings, beyond audibility, age and cognitive ability do contribute to speech recognition, and it seems that hearing aid usage is greater by those who may be less able to compensate (lower adjustment and lower cognitive ability).

Although less predictable, even subjective benefit and satisfaction are related to both suprathreshold auditory measures (sound quality and loudness discomfort) and cognitive ability. It seems that even though peripheral auditory factors may dominate performance on speech recognition tests, especially in quiet, higher-level auditory and cognitive processing are relevant to everyday hearing aid success and must guide audiologic rehabilitation. Furthermore, other factors such as social cognition, emotion, and personality may also interact with cognitive or auditory function, or both, in ways that we have only begun to explore; for example, personality variables may account for a small but significant amount (around 10%) of the variance in benefit from hearing aids (Cox et al., 1999).

2.3 The Processing View

In parallel with hearing research conducted after the publication of the CHABA report (1988), important advances were made in cognitive gerontology. Paul Baltes and his colleagues set the stage for new directions in cognitive aging research with a report from the Berlin Aging Study that showed powerful links between sensory and cognitive abilities. Specifically, basic measures of hearing sensitivity and visual acuity were even more strongly correlated with age-related variations in intelligence than were the cognitive measures of speed of processing that had gained widespread acceptance as robust indicators of cognitive aging (Lindenberger and Baltes, 1994; Baltes and Lindenberger, 1997). The Berlin group proposed four hypotheses concerning possible explanations for the powerful inter-system connections between perception and cognition in aging:

declines are symptomatic of widespread neural degeneration (common cause hypothesis);

cognitive decline results in perceptual decline (cognitive load on perception hypothesis);

perceptual decline results in permanent cognitive decline (deprivation hypothesis);

impoverished perceptual input results in compromised cognitive performance (information degradation hypothesis).

Importantly, the information degradation hypothesis implies that age differences in cognitive performance could be alleviated by interventions to improve the quality of perceptual input (e.g., hearing aids). These hypotheses encouraged research into the nature of the relationship between auditory and cognitive declines. In effect, there was a call to move from a modular site-of-lesion view to an integrated information-processing view (Schneider and Pichora-Fuller, 2000).

Any causal relationship between age-related changes in audition and cognition would be inconsistent with the common cause hypothesis. The hypothesis that cognitive load hampers perception is not supported by evidence that older adults benefit more than younger adults from sentence context; this evidence even suggests that expertise enables older adults to use stored knowledge to compensate for auditory deficits (for reviews see Wingfield, 1996; Wingfield and Tun, 2001). The deprivation and information degradation hypotheses, respectively, address the possibilities that there may be long-term or short-term influences of auditory processing on cognitive performance. It is difficult to assess the long-term effects of auditory deprivation on cognitive performance in older adults because there are few longitudinal or experimental studies to provide evidence of causality (Arlinger, 2003). There is some indirect evidence that use of amplification may reduce the effects of cognitive decline. Population studies indicate that, compared with those who do not use hearing aids, older adults who use amplification have better emotional and social well-being and even greater longevity (e.g., Appollonio et al., 1996; Cacciatore et al., 1999; Naramura et al., 1999; Seniors Research Group, 1999). An intervention study indicated that use of amplification significantly reduced problem behaviors in older adults with dementia (Palmer et al., 1999). It has been easier to test the information degradation hypothesis in experimental studies of the short-term effects of systematic manipulation of perception on cognitive processing.

2.3.1 Information Degradation Hypothesis

The information degradation hypothesis has been tested in a series of studies that have attempted to equate the performance of participants on auditory tasks or used simulations of auditory aging, thereby permitting cognitive measures to be obtained in younger and older adults in more comparable perceptual conditions (for reviews see Schneider et al., 2002; Pichora-Fuller, 2003a). The studies exploring the information degradation hypothesis are especially relevant to audiologic rehabilitation, since evidence supporting this hypothesis would justify interventions to overcome declines in hearing.

The goal of research exploring the information degradation hypothesis has been to determine how changes in audition and cognition interrelate and contribute to the performance of older listeners when they are engaged in complex tasks involving the auditory processing of naturalistic signals in demanding, realistic, social and physical environments. Whereas listening in ideal conditions is effortless for normal-hearing young listeners, listening becomes effortful when perception is compromised by signal degradation (such as in competing background noise) or when deficits in auditory processing reduce the clarity of the input signal, or both (Pichora-Fuller, 2003a). If the increased listening effort associated with such environmental or biologic challenges increases cognitive processing demands, then cognitive performance should vary with the effortfulness of listening. Cognitive performance under varying listening conditions has primarily been evaluated in terms of measures of memory, comprehension, attention, or speed of processing (see Section 4 for a review of attention and memory).

2.3.2 Listening Effort and Memory

In conditions of effortful listening in the presence of background babble, words are remembered less well than when they are heard in quiet, whether or not they are correctly perceived (Pichora-Fuller et al., 1995; Pichora-Fuller, 1996; Brown and Pichora-Fuller, 2000). The predicted influence of listening condition on memory has been clearly demonstrated in a study in which background noise rendered the paired-associate memory of younger adults like that of older adults (Murphy et al., 2000). In the paired-associate memory task, listeners learn a list of word pairs; later they are given one word from a pair and asked to recall the word that was paired with it. Over a number of lists, recall is tested for pairs in each position from first to last in the list to determine the number of words from each position that are successfully recalled. In quiet, even though both age groups have excellent recall for recently heard items, they recall significantly fewer paired associates presented early in the list, and the older listeners have even more difficulty recalling these early items than do the younger listeners. Importantly, when younger listeners are tested in noise, their recall of early items is reduced so that their performance becomes similar to the performance of older adults tested in quiet.
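The scoring of the paired-associate task described above can be illustrated with a short sketch. It is not the procedure used by Murphy et al. (2000); the function name `recall_by_position`, the tuple layout, and the word lists are hypothetical and serve only to show how recall might be tallied by list position across several lists.

```python
from collections import defaultdict


def recall_by_position(trials):
    """Return the proportion of correct recalls at each list position.

    `trials` is an iterable of (position, expected, response) tuples, where
    position 1 is the first pair presented in a list and `response` is None
    when the listener gives no answer. (Hypothetical data layout.)
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for position, expected, response in trials:
        total[position] += 1
        if response is not None and response.strip().lower() == expected.lower():
            correct[position] += 1
    return {pos: correct[pos] / total[pos] for pos in sorted(total)}


# Hypothetical responses pooled over two lists of three pairs each.
trials = [
    (1, "river", "river"), (2, "candle", "candle"), (3, "window", "window"),
    (1, "garden", None), (2, "pencil", "paper"), (3, "button", "button"),
]
curve = recall_by_position(trials)
```

In this made-up example, recall is lower for the early positions than for the final position, which is the pattern the text describes for both age groups in quiet.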

It is interesting to note that similar findings of reduced memory have been reported when vision is impaired (Owsley et al., 1998), or when a motor task such as walking along a designated path is made more challenging (Li et al., 2001). Furthermore, while perceptual or motor stress undermines recall, increasing memory load by adding more items to the set to be recalled does not have a deleterious effect on the accuracy of word identification (Pichora-Fuller et al., 1995) or on motor performance (Li et al., 2001). The assumption is that, at least for comprehending spoken language or walking along a designated path, priority is given to perceptual or motor processing; in effortful conditions, processing resources are diverted to these goals and away from memory storage. The goals of the listener may importantly dictate how processing resources are allocated and whether hearing is adequate to satisfy the communication goal (Pichora-Fuller, 2003a).

2.3.3 Listening Effort and Comprehension

Comprehension should be compromised if prior information is not stored long enough to be integrated with incoming information. Numerous studies have claimed that older adults comprehend less than their younger counterparts, but few of these studies have controlled the perceptual conditions. In one study, comprehension of monologues was tested by having listeners answer questions about what they heard (Schneider et al., 2000). Younger and older listeners were tested in easy, moderate, and difficult listening conditions. The signal-to-noise ratio (SNR) conditions were selected for each individual based on his or her performance on the low-context sentences of the Speech Perception in Noise Test (SPIN-R) (Bilger et al., 1984). Importantly, when the SNR is adjusted to equate the difficulty of the listening condition for each individual listener, many of the age-related differences in discourse comprehension observed in fixed SNR conditions cease to be significant.
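The individualized SNR adjustment described above can be illustrated with a minimal sketch of how a noise waveform might be rescaled to reach a chosen SNR. This is not the procedure used by Schneider et al. (2000). The function names (`rms`, `snr_db`, `scale_noise_to_snr`) and the toy waveforms are hypothetical, and SNR is assumed here to be defined on RMS amplitude over the whole signal.

```python
import math


def rms(samples):
    """Root-mean-square amplitude of a sequence of samples."""
    return math.sqrt(sum(v * v for v in samples) / len(samples))


def snr_db(speech, noise):
    """SNR in dB, assuming it is defined as the ratio of RMS amplitudes."""
    return 20.0 * math.log10(rms(speech) / rms(noise))


def scale_noise_to_snr(speech, noise, target_snr_db):
    """Return a rescaled copy of `noise` so that speech/noise hits the target SNR."""
    gain = rms(speech) / (rms(noise) * 10.0 ** (target_snr_db / 20.0))
    return [gain * v for v in noise]


# Toy example: a listener who needs a more favorable SNR gets quieter noise.
speech = [1.0, -1.0, 1.0, -1.0]
noise = [0.5, -0.5, 0.5, -0.5]
easier_noise = scale_noise_to_snr(speech, noise, 6.0)
```

In an individualized design, the target SNR would be chosen separately for each listener from his or her word recognition performance, so that the same nominal condition (easy, moderate, difficult) corresponds to a different physical SNR for each person.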

2.3.4 Listening Effort and Attention

Natural listening situations can be considerably more complex than listening to a single talker in a noisy background. Often the listener must perform another task while listening. Older adults may be more susceptible to distractions than younger adults, and they may find it more difficult to divide their attention between two concurrent tasks (McDowd and Shaw, 2000). To determine whether the addition of a secondary task would produce a negative age effect for monologue comprehension, a sentence verification task was added to the monologue experiment described above (Schneider et al., 2000). While listening to connected discourse, listeners performed a sentence-reading task in which they had to monitor for the appearance of a written sentence on a computer screen, and when it appeared, they had to read the sentence silently to themselves and then verify whether it was true or false. Adding the distractor task had the effect of decreasing performance on the comprehension questions, but it did so equally for both younger and older listeners. Thus, when perceptual stress is equivalent for all participants, older adults are no more susceptible to distraction than are younger adults.

Attention may also play an important role when there are two talkers rather than one because the listener must follow not just what is being said, but who is saying what and when. Moreover, dialogue may impose additional perceptual demands on the listener because the two talkers participating in a conversation are typically in different locations and the listener must keep track of and integrate auditory messages that are coming from two spatially separate locations (Boehnke and Phillips, 1999). Older listeners could be disproportionately affected by the additional cognitive demands of following two-person conversations, the additional perceptual demands of integrating auditory information from spatially separate locations, or both. In a sequel to the monologue study described above, participants listened to dialogues from one-act plays, with the voice of one actor presented over a loudspeaker to the left, the voice of the other actor presented over a loudspeaker to the right, and with babble presented over a central loudspeaker (Murphy et al., in press). Even when the presentation levels were adjusted to make it equally difficult to hear individual words, older listeners were still at a disadvantage relative to younger listeners. Hence, equating for perceptual stress appears to eliminate age differences in comprehending monologues but not dialogues.

The failure to eliminate the negative age difference for comprehending dialogues could be due to the increased cognitive complexity of dialogues, or to difficulty in auditory spatial localization. In a follow-up experiment, the spatial separation of the talkers was eliminated by presenting both talkers and the background babble from the same loudspeaker. Removal of the spatial separation eliminated the negative age difference for dialogue, so that the pattern was the same as that for monologues, suggesting that older adults may have difficulty in integrating information from sources at separate locations. It could be that older adults are less able to use acoustic cues arising from spatial separation to separate the information coming from the two talkers, or the problem could be more central in that older adults are less able to integrate information coming from different spatial channels (Boehnke and Phillips, 1999), or they may have a cognitive loss in inhibitory control (Hasher and Zacks, 1988; Hasher et al., 1999), or a combination of these, that results in comprehension difficulties (Tun et al., 2002).

In conditions of actual spatial separation, when one voice is presented from the left loudspeaker and the other voice is presented from the right loudspeaker, both interaural time and amplitude cues are available. At low frequencies, interaural time differences provide the dominant cues for localization. At high frequencies, cues to localization are mostly provided by interaural differences in the frequency response owing to head shadow.

To minimize the cues owing to head shadow, instead of actual separation of the sources, apparent spatial separation can be achieved by using a paradigm that takes advantage of the precedence effect (Freyman et al., 1999). In the precedence effect paradigm, each voice is presented from both loudspeakers, eliminating interaural cues due to head shadow, but the voices seem to be spatially separated because, for one voice, presentation from one loudspeaker lags presentation from the other by a short interval (e.g., 3 msec). The lag induces the perception that the voice is located at the position of the leading sound.
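The lead/lag arrangement can be sketched as follows. This is a minimal illustration, not the stimulus-generation code of Freyman et al. (1999). The function names (`precedence_pair`, `mix`), the sampling rate, and the toy waveforms are hypothetical, and the way the two voices are given opposite leads is an assumption made for the example.

```python
def precedence_pair(voice, fs, lag_ms, lead="left"):
    """Return (left, right) loudspeaker feeds for one voice.

    The same waveform goes to both loudspeakers; the lagging copy is
    delayed by lag_ms, so the voice tends to be heard at the leading side.
    """
    lag = round(fs * lag_ms / 1000.0)           # lag in samples
    leading = list(voice) + [0.0] * lag         # pad so both feeds match in length
    lagging = [0.0] * lag + list(voice)
    return (leading, lagging) if lead == "left" else (lagging, leading)


def mix(a, b):
    """Sample-wise sum of two feeds, zero-padding the shorter one."""
    n = max(len(a), len(b))
    a = list(a) + [0.0] * (n - len(a))
    b = list(b) + [0.0] * (n - len(b))
    return [x + y for x, y in zip(a, b)]


fs = 16000                                       # hypothetical sampling rate (Hz)
target = [1.0, 0.5, -0.5]                        # stand-ins for real speech samples
masker = [0.2, -0.2, 0.2]

# Perceived separation: target leads on the left, masker leads on the right.
t_left, t_right = precedence_pair(target, fs, 3.0, lead="left")
m_left, m_right = precedence_pair(masker, fs, 3.0, lead="right")
left_feed, right_feed = mix(t_left, m_left), mix(t_right, m_right)
```

Giving both voices the same leading loudspeaker would instead produce the condition in which the voices are perceived as co-located, while the energy delivered by each loudspeaker stays essentially unchanged.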

Li et al. (2004) used the precedence effect paradigm in a study in which younger and older adults were asked to repeat meaningless target sentences presented in either a continuous broadband noise background (energetic masker) or in a background of two people speaking nonsense sentences (informational masker). In one condition, both the target and masker were perceived as coming from the same spatial location; in the other, they were perceived as coming from different spatial locations. Freyman et al. (1999) showed that for younger participants, the intelligibility of the target sentences improved when the target and informational masker were perceived as originating from separate locations, even though no improvement was observed for the energetic masker under the same conditions. They interpreted this as a release from “informational masking,” and showed that it could not be due to energetic masking in the auditory periphery.

Li et al. (2004) used this paradigm to test whether older adults would find it more difficult than younger adults to inhibit the processing of irrelevant speech. Clearly, a speech distractor is more similar to a speech target than is a noise distractor, and spatial separation between target and distractor should aid in the inhibition of the processing of irrelevant information. Thus, if older adults have trouble inhibiting irrelevant information, then they should demonstrate more interference from informational masking than do younger adults, especially when there is no perceived spatial separation between target and masker. Contrary to this prediction, the only difference between younger and older listeners was that the older adults required a higher SNR (by about 3 dB) for 50% detection than the younger adults; otherwise, there were no age-related differences between the psychometric functions of young and old in any condition.

2.3.5 Listening Effort and Slowing

Another prevalent theory in aging research is that a generalized slowing in brain function with age is responsible for most, if not all, of the age-related declines in problem solving, reasoning, memory, and language (Cerella, 1990; Lindenberger and Baltes, 1994; Wingfield and Stine-Morrow, 2001). The contribution of speed of processing in language comprehension has been studied by comparing the performance of younger and older adults when speech is artificially speeded (Wingfield et al., 1985; Fitzgibbons and Gordon-Salant, 1996; Gordon-Salant and Fitzgibbons, 1993, 1999, 2001; Wingfield, 1996), and the typical finding has been that comprehension declines more rapidly with speeding for older adults than for younger adults.

The greater loss of comprehension in older adults is often attributed to a generalized slowing in cognitive functions with age, to age-related losses in cognitive or linguistic abilities, or to both. However, in addition to increasing the rate of flow of information, speeding speech also tends to degrade and distort the speech signal to a greater or lesser degree, depending on how the time compression is implemented (Gordon-Salant and Fitzgibbons, 1999; Wingfield et al., 1999). The effects of three different methods of speeding speech were tested in an effort to determine whether age differences in sensitivity to the acoustical distortions induced by speeding could account for the poorer performance of older adults in speeded speech tasks (Schneider et al., 2005).

The first method was to eliminate every nth amplitude sample in a digitized version of the high- and low-context sentences of the SPIN-R (Bilger et al., 1984). In general, speeding speech by eliminating every nth amplitude sample (1) shifts the energy into a higher frequency range, (2) speeds up all transitions, and (3) shortens all gaps (periods of silence or relative silence in the speech signal). These three consequences might prove to be particularly difficult for older listeners given their loss of high-frequency sensitivity and their declines in temporal processing (Fitzgibbons and Gordon-Salant, 1996; Schneider and Pichora-Fuller, 2001).

The second method was to divide the speech signal into 10-msec segments, and eliminate every third segment (following Wingfield et al., 1985), thus increasing speed without a frequency shift but removing speech segments without regard to their informational content.

The third method was to increase speed by the same amount without distorting the transition cues that are known to be important in phoneme perception. Specifically, the speech signal was examined to locate steady-state portions, such as pauses between words or syllables or portions of a steady-state vowel, and these segments were reduced in duration.
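The first two speeding methods are simple enough to sketch in a few lines. The code below is an illustration only and is not the implementation used by Schneider et al. (2005). The function names and the toy sample values are hypothetical. The third method is not sketched because it depends on locating steady-state portions of the signal, and the text does not specify how that was done.

```python
def drop_every_nth_sample(samples, n):
    """Method 1: delete every nth amplitude sample.

    Played back at the original sampling rate, the result is shorter,
    and all spectral energy is shifted upward in frequency.
    """
    if n < 2:
        raise ValueError("n must be at least 2")
    return [s for i, s in enumerate(samples, start=1) if i % n != 0]


def drop_every_kth_segment(samples, fs, segment_ms=10.0, k=3):
    """Method 2: cut the signal into fixed-length segments and delete every kth.

    No frequency shift occurs, but segments are removed without regard
    to the information they carry.
    """
    seg_len = round(fs * segment_ms / 1000.0)
    out = []
    for index, start in enumerate(range(0, len(samples), seg_len), start=1):
        if index % k != 0:
            out.extend(samples[start:start + seg_len])
    return out


# Toy signal: 60 samples at a hypothetical 1000-Hz rate (six 10-msec segments).
signal = list(range(60))
speeded_1 = drop_every_nth_sample(signal, 3)          # 40 samples remain
speeded_2 = drop_every_kth_segment(signal, fs=1000)   # 40 samples remain
```

With every third sample or every third segment removed, both methods shorten the signal to two thirds of its original duration, which corresponds to a playback rate 1.5 times the original. The two methods differ only in the kind of distortion they introduce.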

Gordon-Salant and Fitzgibbons (1999) have argued that there is convergent evidence that “older listeners have difficulty following the rapidly changing acoustic elements in a speech sequence” (p. 301), and they have shown that older adults find it especially difficult to deal with selective time compression of consonants (Gordon-Salant and Fitzgibbons, 2001). By leaving the transitions relatively intact while speeding the speech, the effects of speeding on the aging auditory system were minimized. Furthermore, the SNR in the baseline unspeeded condition was individualized to make it equally difficult for younger and older adults to identify individual words. Speeding speech by removing every third amplitude value or by removing every third 10-msec segment had a more deleterious effect on older than on younger adults, whereas no age differences or age by speed interactions were observed when speech was speeded by deleting selected steady-state segments. Thus, word recognition by the older adults compared with younger adults was reduced only in the conditions where phonologic information was degraded, supporting the information degradation hypothesis.

2.3.6 Summary of Evidence Supporting the Information Degradation Hypothesis

Consistent with the information degradation hypothesis, the results described above suggest that many of the comprehension difficulties experienced by older adults in everyday listening situations have a peripheral auditory origin. The evidence for this is that when the listening conditions are matched so that it is as difficult for younger adults to identify individual words as it is for older adults, apparent age-related declines in cognitive performance on measures of memory, attention, and discourse comprehension largely disappear. Presumably, sensory declines in older adults result in inadequate or error-prone representations of external events. These inadequacies and errors at the perceptual level then cascade upwards and lead to errors in cognitive processing during challenging conditions.

2.4 Cognitive Neuroscience of Aging

So far we have argued that the site-of-lesion view contrasts with the processing view insofar as the former is a simplified, largely bottom-up modular view based on anatomic levels and measured with artificial stimuli and tasks, whereas the latter is a more complex, interactive view that appreciates the role of both top-down and bottom-up influences on more realistic everyday communication behaviors. The site-of-lesion view tended to set up competing hypotheses, whereas the processing view suggested multiple, not necessarily mutually exclusive hypotheses, each concerning the relationship between sensory and cognitive processing. In many ways, the apparent differences between these views are a byproduct of the state of our knowledge of neuroscience at the time.

As Davis and Silverman (1970) observed long ago, although it was obviously important to understand how brain systems coordinated information when the person engaged in complex behaviors such as communication, the methods for studying the relationship between our brain hardware and our cognitive software were lacking. Fortunately, we are now beginning to be able to study this relationship, thereby bridging the site-of-lesion and processing views. The growing use of functional neuroimaging, especially positron emission tomography (PET) and functional magnetic resonance imaging (fMRI) in cognitive neuroscience, has opened the door to a new understanding of the microarchitecture of the brain in living humans and how it relates to complex high-level behaviors including perception, memory, attention, and language (e.g., Haxby et al., 1991; Goodglass and Wingfield, 1998). Although incredible progress has been made already, much future research will be needed to further develop neuroimaging methods to allow us to understand more fully the functional localization of the human brain, especially for brain areas involved in higher cognitive functions such as language and communication that cannot be studied adequately in animals.

2.4.1 Research Strategies and Approaches

In cognitive neuroscience, two common strategies have been to look for differences by comparing patterns of brain activation on two (or more) tasks or to compare activation in two (or more) brain regions to determine the network of connections mediating a task (e.g., Horwitz et al., 1995). The first strategy investigates the role of an anatomic site in a variety of tasks (akin to the site-of-lesion view); the second strategy investigates how different anatomic sites combine when a task is performed (akin to the processing view). Building on these two strategies, “cross-function” and “within-function” approaches have been described whereby brain organization is studied to understand how the neural correlates of different cognitive functions overlap and interact (Cabeza and Nyberg, 2002). The goal of the cross-function approach is to determine all of the functions (or tasks) that involve a given brain region; the goal of the within-function approach is to determine all of the brain regions that participate in a given function (or task). These two approaches have been applied to recent research on aging, and we can anticipate that eventually research of this sort will illuminate the mysteries of central auditory processing during realistic communication situations by enabling us to relate activity in various networked brain regions to complex auditory behaviors that rely on a combination of sensory, perceptual, and cognitive processes.

2.4.2 The Aging Brain

The cognitive neuroscience of aging is a new hybrid field that has emerged over the last decade to combine the behavioral research approaches of the cognitive psychology of aging and the biologic approaches of the neuroscience of aging (Cabeza, 2001; 2004). Postmortem studies provided early evidence that the brain shrinks with advancing age; in contrast, new imaging techniques have enabled more accurate quantification of the effects of age on brain loss for specific brain regions in large samples of living human brains. Studies of age-related changes in specific brain regions related to cognitive function have repeatedly pointed to reduced asymmetry in the activation of prefrontal cortex in older adults, and it has been suggested that the right hemisphere may age faster than the left (Daselaar and Cabeza, 2005). Importantly, when young and older adults perform a task, including perceptual tasks, at the same level of proficiency, mounting evidence indicates that different areas of the brain are activated depending on the age of the person, with the general pattern being that older adults use more brain regions, including regions of both hemispheres (for reviews see Grady, 1998, 2000).

The influential hemispheric asymmetry reduction in older adults (HAROLD) model is based on the findings from cognitive neuroscience that under similar circumstances, prefrontal activity during cognitive performance (perception, memory, and attention) tends to be less lateralized with age. This functional reorganization, or plasticity, of the brain might result from dedifferentiation of brain function as a consequence of difficulty in activating specialized neural circuits, or it might result from compensatory adaptation to offset age-related neuro-cognitive declines (e.g., Cabeza, 2002). Evidence supporting the compensatory interpretation is that healthy older adults who have low performance on cognitive measures recruit the same prefrontal cortex regions as young adults, whereas older adults who achieve high performance engage bilateral regions of prefrontal cortex (Cabeza et al., 2002). Rehabilitative interventions to increase such compensation may be useful, especially for older adults who do not learn to perform well with a new hearing aid without training.

2.5 New Frontiers for Rehabilitative Audiology

Over the last decade and a half, guided by the three hypotheses arising from a modular site-of-lesion view and the four hypotheses arising from an interactive processing view, research findings have converged in support of the information degradation hypothesis. Not surprisingly, for speech in quiet, most of the difficulties of older adults can be attributed to audiometric hearing loss and associated sensory cochlear deficits. For speech in noise, older adults, even those who are not candidates for amplification based on audiometric criteria, require slower speech or better SNR conditions to equal the performance of younger adults on simple measures such as single word recognition. The reduced word recognition performance of older adults in challenging acoustic conditions points to the importance of more central aspects of auditory processing, including temporal processing (discussed further in Section 3 below). The performance of healthy older listeners is poorer than that of younger counterparts on cognitive measures, such as memory, attention, and comprehension, when both age groups are tested in physically identical perceptually challenging conditions (discussed further in Section 4 below). Importantly, apparent age-related differences in cognitive performance are largely eliminated when the two age groups are tested under conditions that are equated for perceptual difficulty as measured by simple word recognition.

Interventions to ease listening should, in turn, reduce demands on cognitive resources that must be diverted from other purposes. Conversely, those who have fewer cognitive resources should be less able to compensate when listening is effortful. From a cognitive neuroscience of aging view, when perception is easy, there should be more localized brain activation; whereas when perception is difficult, those who are able to engage the frontal lobes in compensatory top-down processing will have more widely distributed brain activation. Thus, greater demand on cognitive resources and, consequently, more distributed brain activation are associated with effortful listening.

A new neuroscience view of auditory cognition promises to provide a way to unify the site-of-lesion view and the processing view. The promise of this view is suggested by a study in which age-related declines on dichotic and temporal central auditory-processing tasks were found to be consistent with a reduction in interhemispheric function (Bellis and Wilber, 2001). A new neuroscience view may help audiologists shift from the traditional audiometric and speech recognition measures and pave the way to the development of more ecologically valid research and clinical tools to measure auditory function (e.g., listening effort) in terms of both brain and behavior.

Applying the WHO ICF model (2001) to audiologic rehabilitation (Kiessling et al., 2003) requires a new bridge between hearing and cognitive aging research (Kricos, this issue). Although it seems that cognitive performance is negatively affected by reduced auditory performance, it may also be the case that individual differences in cognitive ability might influence success with amplification, especially in effortful listening conditions. Indeed, recent work suggests that those with better working-memory capacity may have greater success with complex signal-processing schemes than those with less working-memory capacity (Gatehouse et al., 2003; Lunner, 2003; discussed further in Section 5). Therefore, the rehabilitative audiologist may need to consider a client's cognitive abilities when selecting a hearing aid fitting. More generally, a careful analysis of the older listener's communication goals and how processing resources are allocated to various competing tasks may guide the rehabilitative audiologist's understanding of how and why activities are limited or participation is restricted under some conditions and not others, despite the stability of hearing ability (Pichora-Fuller, 2003a). Indeed, such an understanding of the individual's listening preferences and strategies might even be designed into future signal-processing algorithms.

3. Auditory Factors Contributing to Age-Related Differences in Auditory Communication

In Section 3, we present core findings from hearing research that was not focused on studying the relationship between auditory and cognitive processing. These findings illuminate possible reasons for the age-related differences in peripheral and central auditory processing that were described in Section 2.

3.1 Audiometric Status

It is well known that presbycusis is characterized by symmetrical, high-frequency sensorineural hearing loss. Such age-related audiometric loss can begin as early as the fourth decade of life, with greater decreases in sensitivity with increasing age (Willott, 1991). The pattern is different for men and women, with men showing a greater drop overall and a more pronounced loss in the high-frequency range (Gates et al., 1990). A typical finding is that one third of adults older than 65 years report significant hearing loss. As many as 50% of adults aged 75 to 79 years have some degree of audiometric hearing loss (for reviews see Davis, 1995; Kricos, 1995), as do most of those living in institutional care, with reports of the prevalence of hearing loss being as high as 80% for the frail elderly living in residential care facilities (Schow and Nerbonne, 1980). Curiously, prevalence figures based on audiometry are usually higher than those based on subjective reports (Pichora-Fuller and Carson, 2000).

Given the aging of the population and the increasing prevalence of hearing loss with age, it is not surprising that most of those who are candidates for hearing aids and assistive listening devices are senior citizens (Kricos, this issue). Indeed, the average age of those fit with their first hearing aid is about 70 years (Davis, 2003). Importantly, most actual and potential wearers of hearing aids are older adults, but the older population is extremely heterogeneous and there may be significant individual differences, with some older adults performing as well as their younger counterparts and others performing much more poorly, with clear implications for rehabilitation (Pichora-Fuller and Souza, 2003; Kricos, this issue).

3.2 Auditory Temporal Processing

A consideration of the listening conditions in which age-related differences are most pronounced points to the kinds of changes in auditory processing that seem most likely to be involved. There is extensive evidence that fast speech rate or the presence of background noise or reverberation (room echo) disrupts word identification more for older than younger listeners, even when their audiometric thresholds are considered to be clinically normal (e.g., Dubno et al., 1984; Gordon-Salant and Fitzgibbons, 1993, 1995; Pichora-Fuller et al., 1995; Stuart and Phillips, 1996; Frisina and Frisina, 1997). The effects of speech rate on word identification are also well documented (Wingfield and Tun, 2001). What kinds of changes in auditory processing would give rise to the particular vulnerabilities of older listeners when speech is heard in a noise background or at fast rates?

The specific auditory processing declines that are most likely to be important include declines in monaural and binaural auditory temporal processing. Importantly, the traditional view of hearing loss in terms of reduced audibility for spectrally characterized sounds is inadequate to account for the particular difficulties of older listeners in noise (for reviews see Divenyi and Simon, 1999; Schneider and Pichora-Fuller, 2000). In search of other possible explanations, researchers have devoted increasing effort over the last decade to the investigation of the contribution and nature of behavioral and physiologic declines in auditory temporal processing with age (e.g., Fitzgibbons and Gordon-Salant, 1996; Frisina and Frisina, 1997; Schneider and Pichora-Fuller, 2000; Frisina et al., 2001; Tremblay et al., 2003).

Given the variety and the importance of different temporal cues for particular aspects of speech processing (Rosen, 1992; Greenberg, 1996), diminished abilities in this domain may explain many of the problems that older listeners experience in the acoustically challenging conditions of everyday communication. It is useful to frame recent research on auditory temporal processing in aging by distinguishing temporal cues according to their relevance to speech intelligibility at three main levels: at the suprasegmental or prosodic level, the rate and rhythm of speech influence lexical and syntactic processing; at the segmental level, gap and duration cues influence phoneme identification; and at the subsegmental level, periodicity or synchrony cues influence voice pitch, quality, and clarity (Schneider and Pichora-Fuller, 2001). Suprasegmental and segmental information is conveyed by the temporal envelope of the sound wave, whereas voice properties are conveyed by its temporal fine structure. Although older adults have greater difficulty than younger adults when the rate of speech is more rapid, use of prosody remains largely unaffected by age (Wingfield et al., 2000; Wingfield and Tun, 2001). In contrast, age-related differences at the segmental level may be related to difficulty in word identification, and age-related differences in synchrony coding may be related to difficulty segregating voices. The research of our Toronto group has focussed on age-related differences at these last two levels of auditory temporal processing: gap detection and synchrony coding.

3.2.1 Gap Detection

A number of studies of gap detection using tonal signals have demonstrated that older adults do not detect a gap in the signal until the size of the gap is about twice as large as the smallest gap detectable by younger adults (about 6 vs 3 msec), with gap detection thresholds not being predictable from pure-tone hearing thresholds in listeners with good audiograms (e.g., Schneider et al., 1994; Gordon-Salant and Fitzgibbons, 1993; Snell, 1997; Strouse et al., 1998). In particular, older adults have significantly larger gap detection thresholds when the surrounding marker signal is short (e.g., 5 msec), but not when it is longer (e.g., 500 msec) (e.g., Schneider and Hamstra, 1999).
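A within-channel gap detection stimulus of the kind described above (a leading tonal marker, a silent gap, and a lagging tonal marker) can be sketched as follows. This is an illustration only. The function names, the 1000-Hz marker frequency, and the 20-kHz sampling rate are hypothetical choices, and the sketch omits the onset and offset shaping that laboratory stimuli normally use to limit spectral splatter at the gap edges.

```python
import math


def tone(freq_hz, dur_ms, fs):
    """Sine tone of the given frequency and duration, as a list of samples."""
    n = round(fs * dur_ms / 1000.0)
    return [math.sin(2.0 * math.pi * freq_hz * i / fs) for i in range(n)]


def gap_stimulus(marker_ms, gap_ms, fs=20000, freq_hz=1000.0):
    """Leading marker, silent gap, lagging marker (identical tonal markers)."""
    marker = tone(freq_hz, marker_ms, fs)
    gap = [0.0] * round(fs * gap_ms / 1000.0)
    return marker + gap + marker


# Short markers (5 msec) versus long markers (500 msec), each with a 6-msec gap.
short_marker_trial = gap_stimulus(marker_ms=5, gap_ms=6)
long_marker_trial = gap_stimulus(marker_ms=500, gap_ms=6)
```

In a threshold procedure, the gap duration would be varied from trial to trial and compared against a no-gap standard to find the smallest gap the listener can reliably detect. The marker duration is the parameter that the text identifies as critical for the age difference.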

Gaps in speech provide segmental information, such as marking the presence of stop consonants; for example, spoon differs from soon because of the presence of the stop consonant [p], which corresponds to a gap in the speech time waveform. Some studies have found relationships between gap detection thresholds and speech perception in noise (e.g., Tyler et al., 1982; Snell et al., 2002). Nevertheless, a clear link between nonspeech gap detection ability and specific speech perception abilities in old age has been difficult to establish (e.g., Strouse et al., 1998; Snell and Frisina, 2000). The discrepancies may be due to the particular nonspeech stimuli used and the aspects of temporal processing involved. Some investigators have studied gap detection using nonspeech markers that approximated the spectral properties of consonant-vowel utterances (e.g., Formby et al., 1993; Phillips et al., 1997), and others have used synthetic speech markers (e.g., Lister and Tarver, 2004).

In a recent study, we investigated age-related differences in ability to detect gaps in analogous nonspeech and speech markers (Pichora-Fuller et al., in press). “Within-channel” processing was tested with spectrally symmetrical markers: identical 500-Hz tones were nonspeech markers, and identical tokens of the vowel [u] were speech markers. “Between-channel” processing was tested with spectrally asymmetrical markers: the nonspeech leading marker was a 1- to 6-kHz bandpass noise and the lagging marker was a 500-Hz tone; the speech leading marker was a fricative [s] and the lagging marker was [u]. Gap detection thresholds were larger for older than for younger listeners in all conditions, with the size of the age-related difference increasing with stimulus complexity. For both age groups, and for both speech and nonspeech stimuli, gap detection thresholds were far smaller when the markers were spectrally symmetrical than when they were spectrally asymmetrical. Importantly, age-related differences in the spectrally asymmetrical marker conditions were smaller for gaps in speech markers than in nonspeech markers. We suggest that this pattern of results may reflect the benefit of activating well-learned gap-dependent phonemic contrasts. Although age-related differences in ability to detect gaps may have negative consequences for speech perception, it also seems that older adults may compensate by using phonologic knowledge.

3.2.2 Synchrony Coding

Synchrony coding is another aspect of auditory processing that may alter older listeners’ abilities to perceive speech in challenging everyday listening conditions. It is well known that the response of auditory neurons is synchronized, or phase-locked, to the signal's frequency (Rose et al., 1971). Loss of synchrony has been implicated in age-related changes on a number of nonspeech and speech perception abilities dependent on the extraction of temporal fine-structure cues (Pichora-Fuller and Souza, 2003). Monaurally, loss of synchrony could explain why age-related increases in frequency difference limens (DL) are greater for low frequencies than for high frequencies (e.g., Abel et al., 1990). Because frequency DL is thought to depend on phase locking at low frequencies, a loss of synchrony would differentially affect DLs for low-frequency signals. Binaurally, age-related changes in masking-level differences have also been attributed to an age-related increase in temporal jitter or a loss of temporal synchrony (Pichora-Fuller and Schneider, 1992).

One important contribution of synchrony coding is that it enables a listener to extract voice properties such as the fundamental frequency and harmonic structure. These voice properties are important because they may help listeners to attend to a target speech source when there are competing sources, especially when such competing sources are spectrally similar to the target signal. For example, monaurally presented concurrent vowels with identical formant characteristics, such as might be produced by two talkers speaking at once, are segregated when they differ minimally (less than a semitone) in fundamental frequency and harmonic structure (e.g., Culling and Darwin, 1994). Age-related declines in ability to segregate concurrent vowels suggest that loss of synchrony affects monaural speech identification when there is a competing speech signal (Summers and Leek, 1998; Vongpaisal and Pichora-Fuller, 2004). Furthermore, when we jittered the low-frequency components of speech to simulate age-related loss of synchrony coding, both word identification and recall in younger listeners mimicked the performance of older listeners who heard intact speech (Brown and Pichora-Fuller, 2000).

3.3 New Conceptualization of Diagnostic Categories

Taken together, the results described above provide evidence of subclinical changes in auditory processing that result in greater perceptual stress for older compared with younger adults when listening conditions are challenging. It is interesting that many of the auditory temporal processing declines observed in aging are also found to a more extreme degree in cases of auditory neuropathy (Sininger and Starr, 2001). Following the diagnostic trails blazed by those who have mapped the characteristics of auditory neuropathy, it may be worthwhile to reconceptualize sensorineural hearing loss into two different components:

(1) the sensory component of cochlear pathology could be assessed by tools such as pure-tone audiometry or otoacoustic emissions and would involve predominantly outer hair cell damage; and

(2) the neural component could be measured by behavioral or physiologic auditory temporal processing measures and would involve predominantly inner hair cell damage, or neural damage, or both.

For example, Tremblay et al. (2003) found age-related differences in specific auditory evoked potentials in participants with or without audiometric threshold elevation. Individual differences among people with presbycusis would depend on the relative contributions of the sensory and neural components. A given degree of sensory loss would be expected to have similar effects on all adults regardless of age. Neural loss in presbycusis would be expected to resemble mild auditory neuropathy.

4. Cognitive Factors Contributing to Age-Related Differences in Auditory Communication

In Section 4, we present core findings from cognitive research that was not focused on studying the relationship between auditory and cognitive processing. These findings illuminate possible reasons for the age-related differences in cognitive processing that were described in Section 2.

4.1 Preservation and Declines in Cognitive Aging

For decades, researchers have investigated how age affects cognitive performance. With increasing age, some abilities are preserved or even enhanced, whereas others decline. In general, age-related perceptual declines in hearing and vision begin in the fourth decade, followed by cognitive decline. While many aspects of perception and cognition are declining, some aspects of linguistic and social performance may actually continue to improve (Baltes and Staudinger, 2000). Expertise and wisdom may provide compensation for some declines. Shifts in goals may also alter demands on communicators of different ages (Pichora-Fuller, 2003a). Importantly, “crystallized” world knowledge stored in long-term semantic memory is well preserved in old age, and age-related difficulties are largely confined to “fluid” knowledge such as the fast, moment-to-moment processing of information in working memory during language comprehension and production (Kemper, 1992; Schneider et al., 2002; Kemper et al., 2003). Such age-related differences in cognitive processing are exacerbated in challenging conditions that make listening effortful because rapid rates of input require fast processing, distracting streams of sensory input require selective attention to a target, coordination of multiple tasks requires dividing attention, or complex ideas require deep processing to comprehend meaning.

4.2 Cognitive Processing of Heard Information

Consistent with Figure 1, a veridical understanding of human hearing, listening, comprehending, and communicating must consider the interaction of top-down attentional, memory, and language mechanisms with bottom-up, stimulus-based auditory influences. In the following sections, we highlight research on attention and memory related to language processing, introducing the reader to core theoretical frameworks and exploring how they can be applied and related to auditory functioning.

4.2.1 Attention

Similar to the concerns that motivate today's clients to seek audiologic rehabilitation, early studies of attention sought to understand and improve how people perform complex tasks in challenging environments. The most common complaint of hearing aid wearers is difficulty hearing in noisy environments. Important aspects of this problem may be captured by William James’ (1890) definition of attention as the taking possession by the mind in clear and vivid form of one out of what seem several simultaneous objects or trains of thought. The most common such problem for older listeners is following conversation in groups. Another early attention researcher referred to the phenomenon of tracking one conversation in the face of the distraction of other conversations as the cocktail party effect (Cherry, 1953). Attention research may offer audiologists new insights into these widespread but as yet unsolved rehabilitative problems.

Attention theories differ in how they account for the underlying mechanism by which attention operates. Some theories of attention suggest the mechanism functions like an attentional filter, through which information is selectively attenuated as it passes from one level of processing to the next (e.g., Norman, 1968). Alternative theories propose that people have a fixed amount of attentional resources that are allocated according to perceived task requirements (e.g., Kahneman, 1973). Filter and resource theories may be somewhat complementary insofar as filter theories may more suitably describe selective attention processes, and resource theories may more suitably describe divided attention processes (Pashler, 1994).

Functionally, attention is the means by which we actively limit the amount of information we process from the enormous amount of information available through our senses, memories, and cognitive processes. Attention serves to identify important features of one's environment (i.e., through either vigilance- or search-based processes). Research has further distinguished between tasks requiring selective attention (the process of attending to one stream while simultaneously ignoring others) and tasks requiring divided attention (the process by which an individual allocates available attentional resources to coordinate the performance of more than one task at a time).

In cognitive aging research, the inhibitory deficit hypothesis (Hasher and Zacks, 1988; Hasher et al., 1999) proposes that activation (the ability to engage the search process) is spared, but inhibition (including selective and divided attention) is negatively affected (McDowd and Shaw, 2000). Compared with younger adults, older adults demonstrate reduced ability to inhibit or restrict attention to irrelevant or distracter information. Age-related differences are more obvious in complex than in simple situations because older adults are able to focus more effectively when distractions are kept to a minimum.

Broadly, we suggest that there are three types of attention that modulate listening: (1) vigilance- and search-based attentional processes, (2) selective attention (filtering), and (3) divided attention (resource allocation). The role of vigilance- and search-based attentional processes is illustrated by the example of listening in quiet, where hard-of-hearing older listeners, either aided or unaided, often complain that “I can hear, but it is tiring or stressful or effortful to listen.” This declaration highlights vigilance-based attentional processes involving sustained awareness of a field of stimulation over a prolonged period (e.g., a listener attempting to detect relevant utterances in a group conversation over a length of time), and search-based attentional processes involving sustained scanning of environments for particular features (e.g., a listener attempting to detect his or her name in a group conversation). Within a more integrated information-processing framework, vigilance- and search-based attentional processes can be modulated in three ways: by top-down influences (e.g., motivation, goals) guiding vigilance and search (Rothermund et al., 2001), by reallocation of resources to alleviate attentional strain, and by bottom-up stimulus-driven influences on attention (Arnott and Alain, 2002). Notably, research has found that top-down influences on attention can be as effective for older adults as for younger adults (Madden et al., 2004).

The role of selective attention is illustrated by the example of listening in a group. As suggested above, the “cocktail party effect” has been studied using the classic selective attention research paradigm. When listening, a person expends mental effort to direct attention toward the target sound source(s) and away from others (Arbogast and Kidd, 2000), so that his or her purposes, intentions, and goals can be fulfilled. Recent work investigating auditory selective attention has begun to delineate how normal listeners perceptually separate targets from noise (Woods et al., 2001). Consistent with the more general inhibitory deficit hypothesis (Hasher and Zacks, 1988), older adults’ listening difficulties in noise may reflect an age-related decline in the processing of task-irrelevant stimuli (Alain and Woods, 1999; for reviews see Alain and Arnott, 2000 and Giard et al., 2000).

The role of divided attention is illustrated by the example of having a conversation while engaged in another task (e.g., walking). For older adults, hearing loss is often accompanied by impairments in other sensory-motor abilities such as reductions in vision, balance-gait, and dexterity. To achieve optimal functioning in these systems, a person allocates a fixed amount of attentional resources to coordinate multiple tasks, essentially dividing attention between the concurrent tasks (e.g., listening and walking). More resources are allocated to compensate for increases in task demands that are due to impairments or adverse environmental conditions (or both), such as noise or obstacles in a walking path (e.g., Otten et al., 2000; Schneider and Pichora-Fuller, 2000; Li et al., 2001; Kemper et al., 2003). Using the divided attention paradigm, Rakerd et al. (2000) found that when hearing-impaired listeners performed two tasks simultaneously, speech listening was particularly effortful.

We suggest that most normal everyday listening can be conceptualized as moving from environments relatively free of demands to those that place many demands on people's ability to divide attention. Looking towards the future, Jerger et al. (2000) devised a more ecologically valid measure of speech understanding in noise based on a vigilance task that could be used to compare unaided vs aided performance, or monaural vs binaural conditions, or conventional hearing aids vs alternative listening devices. Research on the role of attention in auditory processing has passed the half-century mark, yet much work remains as audiologists build new approaches on this foundation of basic cognitive research.

4.2.2 Memory

Memory is a core topic of research within cognitive psychology, with well over 100,000 scholarly articles having been written on the topic. This section attempts to provide a synopsis, admittedly brief, of the subject matter most relevant to communication and aging. We begin with an overview of prominent theoretic frameworks, continue with a discussion of basic distinctions and properties that guide research on memory, and end with a more in-depth examination of working memory and its application to auditory processing and language comprehension by older adults.

Most audiologists are familiar with an early conceptualization of memory in terms of three hypothetical memory stores: (1) a sensory store, capable of storing limited amounts of information for brief periods; (2) a capacity-limited short-term store, capable of storing information for longer periods; and (3) a long-term store of very large capacity, capable of storing information for even longer, perhaps indefinite periods (Atkinson and Shiffrin, 1968). Although this conceptualization is overly simplistic, its functional architecture provided a starting point for a more extensive exploration of memory systems and processes over the last four decades.

Tulving (1972) proposed a basic distinction between two kinds of memory systems that are relevant when discussing age-related declines in memory and later went on to develop a more comprehensive typology of distinct memory systems related to research on brain-behavior mappings in cases of normal and impaired memory (Tulving, 2000). Episodic memory and semantic memory are both types of explicit memory. Episodic memory refers to the ability to remember specific events situated in time and place (e.g., the content of a phone conversation one just listened to or the sound of fireworks heard last night). In contrast, semantic memory represents our vast store of knowledge and skills, including the semantic, syntactic, orthographic and phonological structures of our language. Episodic memory is relatively impaired with aging, with declines observed across a wide variety of stimuli (for reviews see Burke and Light, 1981; Smith, 1996). On the other hand, there is little age-related semantic memory impairment (Nyberg et al., 1996), and vocabulary is well preserved and often better in older compared with younger adults (Kemper, 1992).