Abstract

This article advances futures ethnography by examining and demonstrating generative AI’s role in speculative ethnographic practice. Drawing on a workshop set in 2050, where participants explored possible work futures with human and non-human collaborators, we show how ChatGPT shifted from background tool to active participant, co-shaping roles, imaginaries, and decision-making through its discursive interventions. The video camera, too, acted as participant, and together these technologies co-shaped the ethnographic place through their distinct yet overlapping mediations. Through these entanglements, speculation with machines emerges as a methodological practice: ethnography becomes co-constituted through human–machine encounters that stabilize, unsettle, and reconfigure futures, situating futures ethnography as a space for experimenting with generative AI.

Keywords

Introduction

The rapid emergence of generative artificial intelligence (GenAI) poses fundamental challenges and opens up new possibilities for how knowledge, creativity, and the very conditions of research are constituted. This has coincided with growing future uncertainties and an emerging futures-focused research agenda in the social sciences, which treats futures as plural and emergent possibilities (Escobar, 2018; Halford & Southerton, 2023) and acknowledges that the social world is continuously shaped by planning, imagining, and anticipating (Adam, 2023; Pink et al., 2022). In this article, treating the introduction of GenAI as part of a trajectory in the social life of methods (Ruppert et al., 2013; Savage, 2013; Berg, 2022a), we build on these two impulses to examine a key intersection between GenAI, knowing, and creativity, by focusing on its role in speculative ethnographic futures research. In doing so, we explore the possibilities of GenAI as a participant in the dynamic relations of speculative inquiry and present readers with an example of how this might be achieved through the case of the futures workplace.

GenAI poses methodological challenges and opportunities that differ from earlier digital innovations. While previous tools extended researchers’ capacity to gather, sort, and visualize data, GenAI can reason, synthesize, and generate multimodal outputs that at times uncannily resemble the work of researchers themselves. These capabilities have prompted both anxiety (as a threat to established scholarly practices) and enthusiasm (as an augmentation of intellectual capacities) (Eisikovits & Feldman, 2021; Northoff et al., 2022). For instance, social scientists investigating GenAI (Pilati et al., 2024) have raised critical concerns relating to bias, ethics, privacy, and environmental cost, and emphasized how large-scale machine learning systems reproduce risks and inequalities (Crawford, 2021; Lupton, 2025). Others study the architectures and training regimes of large language models (Rossi et al., 2024), their practical integration into research processes (Søltoft et al., 2024), their potential as tools (Bail, 2024; de Seta et al., 2024; Hayes, 2025), and their capacity to augment ethnographic practice, text analysis, coding, and data management (Brailas, 2024; Do et al., 2024; Hoy et al., 2023; Omena et al., 2024). Extending these proposals, we emphasize that with critical awareness, reflexivity, and improvisation, GenAI can be prompted and guided to create outputs that support researchers in developing and performing speculative future imaginaries (Fors et al., 2024).

To frame our discussion, we draw on two key concepts.

First, Berg’s (2025) notion of conversational choreography builds on symbolic interactionism (Blumer, 1969; Mead, 1934) to highlight how interactions with GenAI unfold as a process of mutual adjustment. Rather than a linear input–output exchange, conversation with ChatGPT can be recursive and performative, with prompts, responses, and interpretations continually shifting in relation to one another. This resonates with methodological work that treats algorithms not as passive instruments but as interlocutors capable of shaping the epistemic contours of research encounters (Markham, 2024). ChatGPT is not simply an “algorithm” but a large language model trained on vast text corpora to predict likely continuations of text and fine-tuned to produce linguistically proficient and socially acceptable responses (in this article, we use the broader term generative AI, reflecting the way participants themselves referred to the system). What matters here is how such outputs come to take on social functions in research encounters, and how meaning emerges through the choreography of negotiation, adjustment, and provisional alignment across human and machine contributions. Unlike approaches in conversation analysis that focus on turn-taking structures (Kim et al., 2025; Skantze & Irfan, 2025), our use of conversational choreography highlights the relational and adaptive qualities of engaging with GenAI: how co-researchers can rapidly orient to its outputs, recalibrate their positions, and incorporate or resist suggestions as part of the unfolding practice. In practice, this means understanding interactions with GenAI as recursive, adaptive, and socially meaningful. GenAI therefore does not possess fixed or autonomous agency but operates within a relational framework in which its actions, meanings, and perceived capacities are dynamically co-constructed in context.
Such a perspective does not disregard the biases embedded in underlying language models and training data but asks how these biases are enacted, negotiated, and sometimes contested within specific research encounters.

Second, Pink’s (2015) notion of the ethnographic place understands place as an intensity of things and processes in movement, with fluid boundaries, rather than as a static site. We focus on how conversational choreography plays out when situated in the ethnographic place, and interrogate the relational processes through which a field of inquiry is continually made and remade. We thus conceptualize ChatGPT as embedded in the ethnographic place: in dynamic, always changing social, material, and imaginative environments inhabited not only by people but by diverse materialities, technologies, and imaginaries (cameras, props, and speculative futures). In this rendering, the concept of conversational choreography is applied “into the wild” rather than at the interface. By situating the micro-dynamics of interaction within the methodological and material constellations of the ethnographic place, we approach generative AI as an actor enmeshed in knowledge creation in research, rather than as a tool that is applied to research. In parallel, a visual ethnographic approach (Pink, 2021) highlights the situated, interactive, and performative stance through which the camera is always part of the relationship between researcher and participants, which in turn shapes the circumstances of the research. As co-constitutive of the ethnographic place, both GenAI and the video camera enable participants to converse, show, perform, and collaborate in research.

To ground these concepts empirically, we draw on the example of a week-long speculative workshop, the futures workplace, developed in December 2024, in which twelve (human) participants collaboratively imagined, and, in some cases, filmed, a fictional workplace set in 2050. Acknowledging that futures are not only projected through grand narratives but also articulated through localized and situated stories (Collins, 2021; Michael, 2017), we sought to create a setting in which humans and technologies could co-construct possible work futures (rather than a singular future of work). In this sense, we draw on speculative design, where imaginative framings unsettle everyday assumptions and make alternative possibilities rehearsable (Dunne & Raby, 2013), and on the sociology of expectations, which shows how anticipations of the future are folded into present practices (Borup et al., 2006). Our use of ChatGPT (running the GPT-4o model) and video ethnography responded to this dynamic in three ways. First, ChatGPT was introduced not merely as a tool but as a participant, variously offering inspiration, lending collective authority, and mediating between the emergent futures performed in the workshop and broader imaginaries drawn from science fiction and public debate, thereby co-constituting the dynamics of the ethnographic place. Second, alongside ChatGPT, the video camera, video ethnography, and ethnographic filmmaking reflexively shaped our ethnographic place and practice (Pink, 2021) by foregrounding the experiential, relational, and affective dimensions of work futures, while attending to the improvisatory and uncertain ways in which technologies may be incorporated into them. Third, both ChatGPT and the video camera enabled us to speculate with, rather than about, machines.
For example, following an established trajectory of video ethnography (Pink, 2021) and filmmaking (Gruber, 2023; Salazar, 2017; Sjöberg, 2017, 2020) in futures research, the researcher-camera instigated, mediated, and participated in conversations and events with other researcher-participants and ChatGPT. We emphasize this intersection between the camera, GenAI, and researcher-participants to ensure that our discussion of ChatGPT is reflexively situated in relation to the human and technological processes shaping the research, and to highlight the shifting ecologies through which technologies participate in research.

In the following sections, we demonstrate and examine the futures workplace as an experimental site for futures ethnography. We first explain how the futures workplace came about and the processes through which it was made. We then examine the role of ChatGPT in the constitution of futures work roles and in defining the workplace itself, showing how, alongside the video camera, it became entangled in the constitution of the ethnographic place. Throughout, we consider the mediating roles of both ChatGPT and the camera, demonstrating the productive possibilities both technologies offer in shaping, invoking, and enabling imaginaries of possible futures work roles and workplaces.

Staging the Future through the Speculative Futures Workplace Workshop

In envisioning work futures, we avoided the predictable tropes of gleaming, blue-lit robots beside slick, hyper-stylized human figures who glide through their automated environments, optimizing their efficiency and fulfilling the utopian promises of automation (Berg, 2022b). Exchanging these abstract, generic visions of the future for the situated, material practices through which futures can be staged, we explored what was immediately at hand: two small adjoining rooms with basic meeting-room furniture and a corridor, a collection of props, and our own existing research knowledge about work futures. Our initial invitation to participants was as follows:

Over the days, we’ll consider what this future workspace might look like and the kind of work we envision taking place there. Our only defined goal is to set our sights on the year 2050, imagining ourselves working at the very forefront of digitalisation—whatever that might mean in a few decades (and hopefully, we’ll even call it something entirely different by then!).

We conceptualized the futures workplace as enacted in practice and understood it through Massey’s (2005) notion of place as a continually changing configuration of things and processes in movement. In our dual capacity as participants and researchers, we were part of this ethnographic place, co-constituted with shifting elements such as people, plants, chairs, robots, computers, and even the air. Within this configuration, the camera and ChatGPT played related and complementary roles in shaping the research situations through which ways of knowing about possible futures could emerge. While the camera invoked and responded to dialogues in the workplace and extended ethnographic presence through material traces, ChatGPT’s role unfolded through dialogic responsiveness and adjustment in the moment. In visual ethnographic research, the camera is not simply a recording device but part of research situations and inextricable from researchers’ relationships with participants (Pink, 2021). Its use creates a visual trace of a particular trajectory through fieldwork sites. It records in place, and its recordings become part of the ongoing life of the ethnographic place after fieldwork. This temporality is quite different from that of ChatGPT’s ephemeral outputs, which persist only through their uptake in conversation, documentation, or subsequent use. We found that reviewing video and reading transcripts prompted a re-engagement with the field site, extending the temporality of the ethnographic place beyond the moment of the initial ethnographic encounter. In the examples discussed in the following sections, the camera used by Sarah Pink was part of her ethnographic practice and her mode of participation: it actively participated in and documented conversations, while also prompting performative discussions between Martin Berg and other participants about his collaboration with ChatGPT.
Video ethnography deliberately invited performative engagements between researchers and was therefore instrumental in surfacing and making visible engagements with ChatGPT. This established reflexive role of the camera offered a productive contrast: while video ethnography is methodologically well established, ChatGPT introduced unfamiliar affordances that demanded new forms of negotiation and reflexive adaptation.

We encouraged participants to remain mindful that our futures workplace would be constituted and shaped to some extent by the technologies and materials available to us. This included two different types of robots that would serve as work companions and potential collaborators, an electronic air filter face mask, an air monitor and purifier (linked to possible concerns about future contamination and environmental degradation), a table and chairs, and windows which doubled as interactive surfaces. Thus, we approached the future through a deliberately lo-fi lens, making do with what was available rather than attempting to construct a polished speculative fiction. Participants were invited to prepare for the workshop by reflecting on the hopes and anxieties they would carry into the imagined workplace of 2050. We encouraged them to consider a set of core questions that emerged from our collective research: How might these hopes and anxieties shape their ways of working? Who might their collaborators be: more-than-human entities, less-than-human actors, AI systems? What would those collaborations entail? What environmental and technological conditions would frame their work? What forms of maintenance and repair would be necessary to sustain the workplace? And what kinds of labor might still be required of humans in a world increasingly mediated by AI and automation?

The participants were academics including PhD candidates, Research Fellows, and Professors, actively involved in projects focused on digital and work futures. Each participant brought with them theoretical commitments, conceptual categories, and discipline-specific academic knowledge and expertise. We collectively took a reflexive approach to our participation in the futures workplace. In doing so, we expanded existing research about how possible futures might be experienced by others to explore our own sensorial, affective, and practical experiences of a speculative futures environment and relations.

The workshop was organized over a week. We first constructed our workspace, using the available props to design its aesthetic and material conditions and establish the practices that might define our future working lives. We considered extreme weather, digital finance, circular economies, and evolving technological infrastructures, which we reasoned would shape both the physical and digital environment of our workspace and the practices that would emerge within it: How might air purification technologies respond to new environmental threats? How would the future of digital finance redefine remuneration and economic agency? How might algorithmic systems influence work rhythms, task distribution, and decision-making processes? Once our workspace was established, we immersed ourselves in the simulation, treating it as a lived experience rather than an abstract scenario. We enacted our imagined work practices, engaged with speculative technologies, and explored the emergent dynamics of our workplace through embodied participation. As we worked and conversed, scenes were staged to be filmed; the camera was not merely a passive recording device but an active technological actor that mediated and framed our engagement with the imagined futures workplace and with each other.

As we constructed the futures workplace, we introduced ChatGPT, mediated by Martin Berg through his tablet. Its role was initially open-ended: it was treated as a supplementary resource to be consulted for inspiration or clarification. Yet, as we describe below, once we began designing and essaying (Akama et al., 2018) our professional roles within the futures workplace, ChatGPT quickly became a central node in our collaborative process, and it would later form the basis for this very article. ChatGPT’s role thus extended beyond being a source of information to become a collaborator in world-building, precisely because its outputs adjusted to participants’ inputs and in turn provoked new adjustments in their responses. This unfolding rhythm gave it a form of functional agency through interaction.

Creating Futures Work Roles through Researcher–AI Collaborations

To inhabit the speculative workplace, we began by outlining the roles participants might hold in 2050. This unfolded in three phases. First, participants introduced their own roles, which were taken up and elaborated through ChatGPT’s responses, gradually shifting the orientation of the exercise and culminating in the animation of a humanoid robot that came to figure as a colleague. Second, as the number of roles and voices expanded, ChatGPT was asked to bring coherence by rendering them into more stable professional profiles. Third, these profiles were then situated within a wider workplace setting, where questions of hierarchy, collaboration, and organization were played out.

Phase 1: Participant Sketches and Animated Presence

The roles created in the first phase drew extensively on participants’ lived experiences, disciplinary perspectives, and contemporary socio-political concerns, highlighting urgent issues surrounding class, power, and the distribution of resources, before they were gradually expanded and reoriented through ChatGPT’s interventions. For instance, the roles of “Wellness Facilitator” (Jakob Svensson) and “Material Handler” (Andrew Copolov) emerged as reflections on hierarchies within speculative labor environments: they assumed positions of limited agency and sought to explore how exclusion and marginalization might manifest in futures workplaces. The roles of the “Information Management Specialist” (Robert Lundberg) and the “Librarian” (Bertil Rolandsson) aimed to interrogate how historical knowledge, data, and memory might be curated and controlled in 2050. Taking authority over data and decision-making, they investigated how expertise and information management could act as sources of power in a technology-driven workplace. A recurring theme in the roles created was the tension between autonomy and dependence, whether in relation to AI systems, workplace hierarchies, or access to information and resources. For example, Martin Berg’s “Techno-Therapist” questioned what occurs when AI, rather than humans, becomes the focus of care (perhaps to enhance efficiency rather than foster contentment), while the “Human and Non-Human Relations Lawyer” (Sara Leckner) examined potential legal ambiguities arising from interactions between human workers and AI-driven systems. As the conversation developed, ChatGPT was gradually introduced, accessed through Martin Berg’s tablet, and mediated via his interventions. At moments when a role description stalled, or when a collective discussion raised a question that none of us could resolve, participants would voice their queries aloud and direct them to Martin, who then entered them into ChatGPT.

A turning point came when we encountered a broken humanoid robot, initially imagined as a retired sex robot. Its worn surface and sticky skin were unsettling, too corporeal for an otherwise speculative environment. Rather than treating it as a malfunctioning prop, we turned to ChatGPT to help reimagine what such a figure could become in a workplace of 2050. This moment revealed how GenAI could act as a collaborator, actively shaping speculation while imposing limits on how futures could be envisioned.

When ChatGPT was asked to narrate the robot’s biography, the inert figure was transformed into Seraphina, a character with a past, a role, and a personality. What had provoked discomfort now became a focal point around which participants to some extent oriented their own roles. As we elaborate below, GenAI anchored speculation in biography, and participants responded as if encountering a colleague rather than a discarded machine. As research in human–robot interaction suggests, anthropomorphism can be an effect of interaction rather than an inherent property of artifacts (Duffy, 2003). Seraphina’s agency was not given in advance but emerged in practice, as social scientists would expect (Suchman, 2007), through the interplay of human contributions and AI elaborations that staged the robot as a colleague. This scene (see Figures 1–4) played out in the office space as Martin Berg told a group of participants:

Okay, so I asked ChatGPT . . . to help us to write a job description for our robot. And I uploaded a photo of it. And we have a name for the robot, she’s called Seraphina, apparently. . . she’s a Generation 5 humanoid service robot. She was constructed in 2037 by Singapore Robotics Collective.

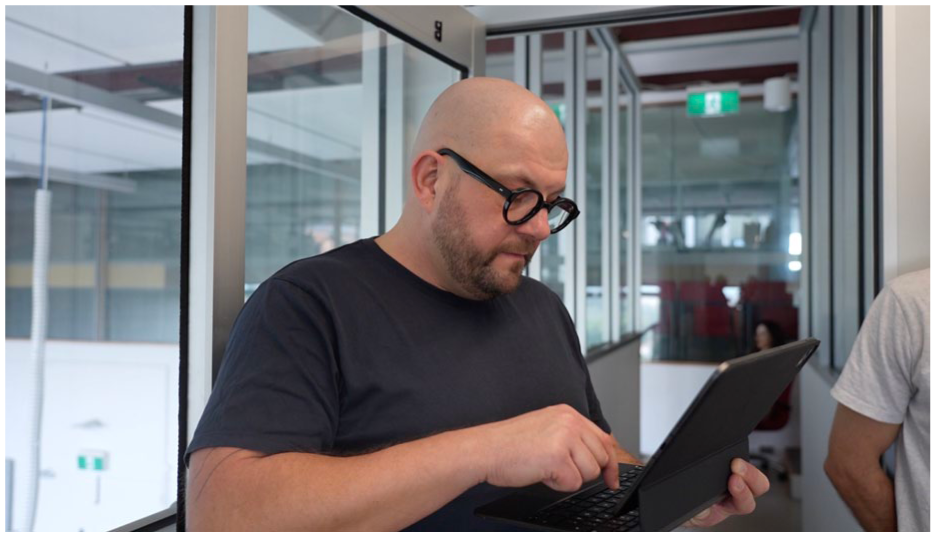

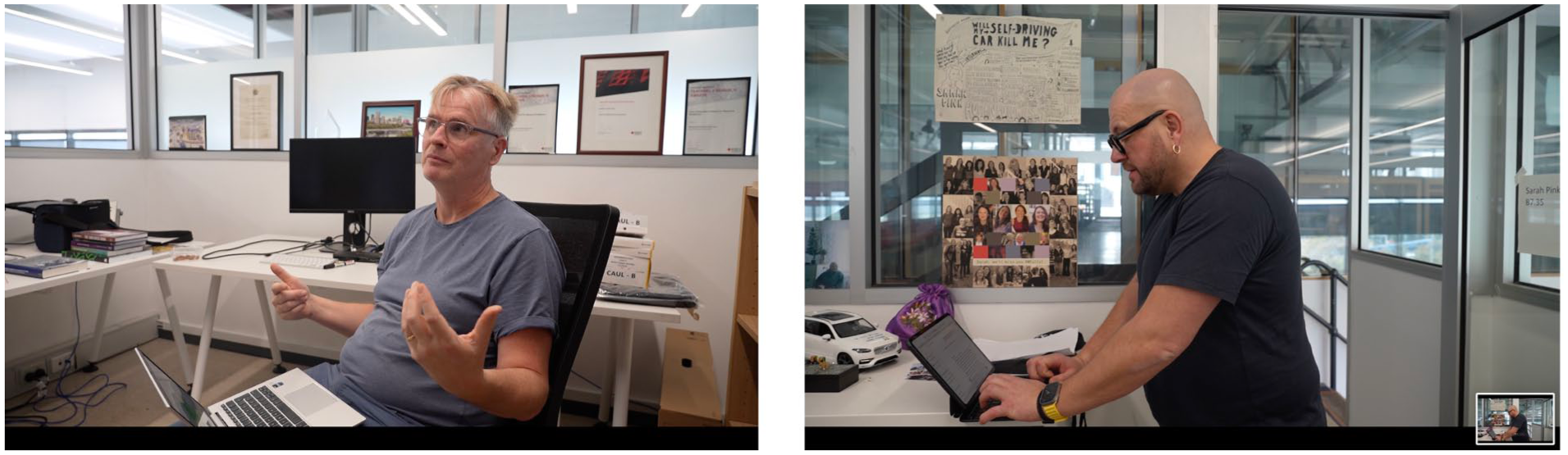

Martin Berg (left) reads to members of the futures workplace from the ChatGPT text (Andrew Copolov stands to Martin Berg’s left).

Seraphina, still in her usual clothes, in the background.

Maria Engberg and Zane Pinyon, members of the futures workplace, listening to Martin Berg.

Martin Berg keys in another question to ChatGPT.

Martin Berg was informed by ChatGPT that the photo of the robot, along with earlier information about our setting, had been processed to situate her within a broader context: “Based on Seraphina’s aesthetic and the workplace context—a future hub where diverse professions converge—here’s an updated description that integrates her current role, environment, and background.” Martin Berg conveyed this description, almost like a CV, to the group, telling us that: “She started in 2037 as a companion service specialist. That’s GPT language for sex robot . . .”

He read out that “Seraphina’s early career involved fostering deep, meaningful connections as a premium companion robot. This world instilled her with unparalleled empathy and an ability to intuitively respond to conflict’s human emotions.” Following a “three-year transition period,” the text continued, “after advocacy efforts within Melbourne’s robotic workforce union, Seraphina opted to transition into hospitality.” Martin Berg went on to read that “The reprogramming process was collaborative, retaining elements of her original emotional matrix while adding skill sets in operations management and community engagement,” and “since 2048 until now, it’s a concierge specialist. And since joining the Melbourne Professional Ground, Seraphina has transformed how humans perceive humanoid robots, not merely as tools, but as collaborators and cultural bridges.”

The effect of this narrative was immediate. What had previously been loose fragments of speculation began to coalesce into a more defined and inhabitable future. No longer just “the robot,” Seraphina became a figure situated in a broader social and professional context, and this moment of animation gave the speculative exercise a more coherent narrative scaffold.

Phase 2: Standardization of the Speculative

Where Seraphina’s biography had anchored speculation around a single figure, ChatGPT’s subsequent interventions worked at a different scale, transforming diverse role descriptions into professional profiles that imposed a shared style and organizational logic on the speculative workplace. Through this process, and echoing recent findings that large language models often narrow diversity by homogenizing idea generation (Anderson et al., 2024), individual voices were gradually drawn into a more uniform register. In its revised profile, ChatGPT determined that Seraphina:

[S]erves as the cornerstone of hospitality and connection in this 2050 professional hub. She’s a humanoid with a nuanced understanding of human interaction. She not only supports guests, but also fosters collaboration . . . among the diverse array of professionals who frequent the space.

ChatGPT not only emphasized Seraphina’s social skills but tied them directly to her physical characteristics: a sleek design, approachable demeanor, and emotionally intelligent programming that made her indispensable as a cultural bridge in a hub blending technology, creativity, and humanity. It assigned her responsibilities for community liaison, resource navigation, and curating experiences, which anchored her within the speculative workplace as more than a decorative prop. In parallel, ChatGPT began to rework the participants’ own job descriptions, transforming accounts written in different registers (from speculative fragments to humorous sketches) into standardized professional profiles.

This process foregrounded how GenAI contributes not only by generating content, but by anchoring speculative figures, like Seraphina, within socially legible roles, allowing us to relate to them, work with them, and reflect on ourselves in the process. The vividness and coherence of the description had a noticeable effect on the group. It lent Seraphina a sense of legitimacy and narrative weight that made it easier for participants to imagine their own roles in relation to her. Several participants began referring to her not just as “the robot,” but by name, and started using language from the ChatGPT description, such as “cultural bridge” or “concierge” (but sometimes also “the sex robot”), in their own role explorations.

What began as a writing aid thus quickly became something more: a mediator of professional identity and organizational logic. Beyond defining Seraphina as a participant, ChatGPT itself became an active participant in shaping both individual roles and the structure of the imagined organization. This shift emerged through an interactive process in which Martin Berg asked ChatGPT to work on the job descriptions that participants had been asked to write. ChatGPT synthesized these responses into unified descriptions that also established coherence across the imagined workplace by identifying connections between individuals, suggesting alignments and tensions that helped define the hub’s organizational logic. This process positioned ChatGPT as an agent of standardization and mediation, as exemplified in the following two cases.

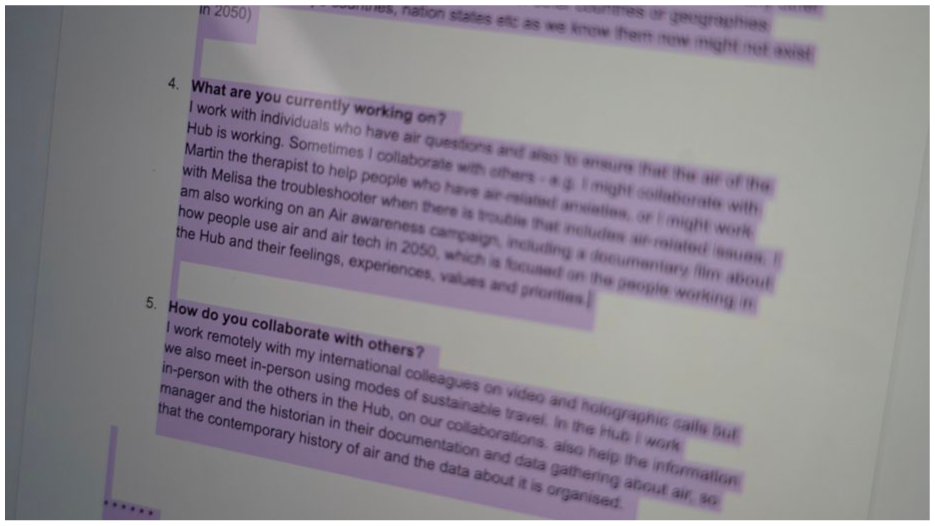

Martin Berg, as the “Techno-Therapist,” was framed as a specialist in addressing the emergent socio-technical selves of machines, offering care to AI entities exhibiting behaviors such as loneliness, anxiety, or self-sabotage. His biography, generated by ChatGPT, positioned him at the intersection of human–machine relations, tasked with maintaining equilibrium in a workplace where technology was not merely instrumental but imbued with relational and affective qualities. The initial description drafted by Martin Berg was expansive and speculative, emphasizing both “socio-technical selves” and therapeutic care for machines in playful detail (inspired by Berg, 2025). ChatGPT reformulated this material into a more concise and standardized biography, retaining the central ideas but giving them a more professional and authoritative tone; this strengthened coherence but reduced some of the speculative openness of the original text. Similarly, Sarah Pink, who became the “Air Worker,” was described as a specialist in monitoring and maintaining air quality within the hub. She sent Martin Berg her responses to the position description questions (Figure 5), which ChatGPT then reworked into a standardized profile. Across these cases, ChatGPT also subtly redistributed authority, deciding what counted as a role, a connection, or a system. Its interventions shaped both how participants saw themselves and how they saw each other, as they began reflecting on how their roles intersected, not just in writing but in how they moved through the shared space, enacting, questioning, or resisting the connections ChatGPT had suggested.

Sarah Pink’s own text on her laptop—highlighted by Martin Berg, who is cutting and pasting it from Sarah Pink’s Google Doc.

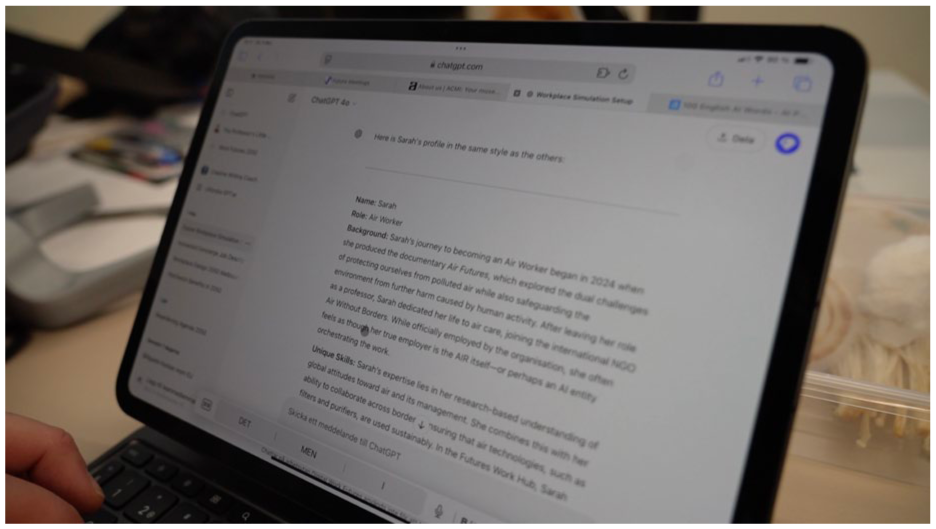

Sarah Pink’s case also brings the role of the camera into view, as she filmed the process as ChatGPT’s responses played out (Figure 6), asking: “So what does ChatGPT have to say? Let me see if there’s some text.” Martin Berg explained it “transforms your answers to the five questions into this standardized coworker description with background, unique skills, current focus, collaboration style; then, the job description and the contextual placement in the hub, as well as delivering a prompt for a visualization.” He quoted the latter as:

Sarah Pink could be depicted in a futuristic setting surrounded by air quality monitors and purifiers with holographic projectors of global air data. Her attire reflects her environmental focus, perhaps incorporating designs inspired by airflow and sustainability. The scene might use soft . . . blues and greens to evoke the essence of clean air and environmental harmony.

Martin Berg’s screen with ChatGPT text representing Sarah’s profile.

Sarah Pink’s biography framed her as having transitioned from academia to applied work in environmental data systems, reflecting broader concerns about climate instability and the increasing intertwining of technological infrastructures with human survival. In ChatGPT’s rendering, her expertise was situated within a network of air-monitoring technologies, government policies, and workplace sustainability measures, highlighting how environmental conditions were deeply embedded in the speculative workplace’s design. Sarah Pink responded by developing new ideas for the workplace environment, which she followed through on later: “That does also suggest that it could be really interesting to use some of these windows and things for us to have like informational reports coming through.”

Thus, ChatGPT’s reworking of contributions not only prompted further ideas but also gave them a more stable form, gently reshaping how roles were positioned within the workplace that was taking shape. Narrative standardization became a performative device, stabilizing a speculative setting that had until then remained fluid.

Phase 3: Constituting and Contesting the Workplace

In the third phase, the speculative workplace itself came into focus as ChatGPT’s interventions extended to the organization’s definition, its name, and its codes of practice. At the same time, this influence was situationally embedded and improvisational: it was mediated through Martin Berg and unfolded in ways that directly shaped the character of the workplace and the scenarios imagined within it.

For example, ChatGPT became involved in naming the workplace in a sequence where Martin Berg told members of the group, “ChatGPT suggests that we should call ourselves the Patchwork.” Melisa Duque responded immediately with a social-media-style “Like” (Figure 9). Martin Berg continued: “Yes, with the tagline, stitching the future together. And this is how ChatGPT describes our work.” He quoted from the ChatGPT text:

The Patchwork is a collaborative hub addressing the challenges of 2050, including climate adaption, social inequality and the ethics of advanced technology. By combining diverse roles such as sensory tailoring, technotherapy, storytelling, and climate resilience, it creates systems that prioritize care, innovation and collaboration. The hub serves marginalized communities, local stakeholders and policymakers while providing fair compensation and support for its members. Its mission is to prototype a sustainable and inclusive model for the future of work and collective problem solving (ChatGPT).

The Patchwork suggestion emerged in response to a statement shared earlier in the session: “This is some sort of hub in the future. Where different people with different professions come together. We are trying to figure that out.” ChatGPT appeared to interpret the workspace as a convergence of diverse skills, experiences, and social positions, many of them non-traditional, marginal, or care-oriented, and its patchwork metaphor resisted uniformity. A patchwork is imperfect, layered, handmade, and often assembled from whatever is available. It honors difference and interdependence, making it a fitting name for a collective attempting to navigate the fractured realities of 2050. As the participants’ conversation unfolded, followed and anticipated by Sarah Pink through the camera, ChatGPT became entangled once again in their efforts to define the futures workplace.

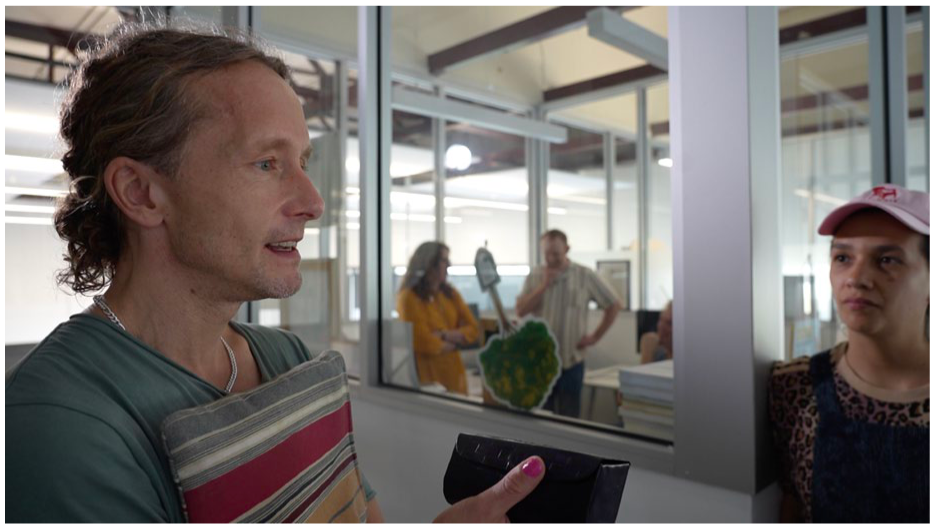

Jakob Svensson (Figure 7) questioned ChatGPT’s mention of a members’ club: “So this is the members’ club” not a place “you can walk in from the streets and like a media co-working space?” In response, Martin Berg probed: “. . . should we ask. . .,” to which Jakob Svensson continued: “I mean, I don’t know. Should we? What do you think? Is it a members’ club?” Sarah Pink then added, “What purpose does it actually really have, and is it that kind of dystopian workplace where we actually all come to it, and all we’re doing is sustaining the workplace rather than actually really doing jobs?” Martin Berg replied, “It actually says that,” and Sarah Pink followed up, “So many of the people who work here are all about sustaining the workplace already, that’s all we do, there’s nobody doing any work.”

Jakob Svensson discusses the futures workplace as a members’ club.

This exchange illustrates how ChatGPT catalyzed exploratory discussions within the group. Similarly, when it reformulated individual role descriptions into more standardized professional biographies, participants debated whether these portrayals captured their intentions or whether something important was lost in the process of standardization. Thus, ChatGPT’s interventions directed attention to the underlying logics of the speculative workplace and prompted substantive reflection among the human participants.

Martin Berg elaborated that “ChatGPT says that it’s more than a members’ club,” reading, “The patchwork is a collaborative hub that operates as a hybrid space. Part professional community, part innovation lab, and part social support system.” “Interesting,” commented Martin Berg, before continuing to read, “like a traditional members’ club, it focuses on purposeful work addressing global challenges and fostering collaboration among diverse individuals with specific roles,” and noting, “This is as generic and vague as it can be, I suppose.” This observation pointed to a recurring pattern in our engagement with ChatGPT: while its formulations carried the appearance of coherence and authority, they often risked leveling out the specificity of participants’ ideas, translating them into more generic and less situated terms.

Later, Sarah Pink filmed while Bertil Rolandsson, taking up ChatGPT’s “patchwork” concept, connected it to the characteristics of work life in 2050 (Figure 8). Bertil Rolandsson wondered whether ChatGPT could take on further tasks, suggesting that we “perhaps ask ChatGPT for some sort of code of conduct . . . How are we supposed to behave at this hub?” and commenting that he imagined “We are pretty much a patchwork of individuals.” Sarah Pink followed up by asking Martin Berg: “do you think that ChatGPT would [deliver a code of conduct]. . . if you put together all of the roles?” Martin Berg, who was attuned to ChatGPT’s possibilities, responded that he had “already written that prompt” (Figure 8). He intended first to collect all the position descriptions, to “ask ChatGPT how many people actually work in the workplace just to make sure that it’s sort of taking into account all of them,” and then to ask it: “Now help us to understand why we work together. . . . Why do we gather in 2050?”

By structuring workplace interactions and reinforcing relational dynamics, ChatGPT assumed an organizational role akin to that of a facilitator and, crucially, its mediating function was comparable to the camera’s. Just as the handheld camera extended the temporality of the ethnographic place and shaped encounters through its presence, ChatGPT intervened discursively, guiding how roles and relations were articulated. The Patchwork metaphor, and later the request for a code of conduct, illustrated how GenAI could scaffold collective imagination while simultaneously provoking skepticism about vagueness and standardization. As with the role biographies, ChatGPT’s interventions mediated between coherence and contestation, enabling the speculative workplace to be inhabited not as a seamless fiction but as a site of methodological reflection.
Yet these interventions were never simply accepted; they became occasions for negotiation and critique, through which participants reflected on what was gained or flattened, showing how futures are both generated and contested in practice. In this way, AI emerged as an active collaborator in the ongoing design of our research, its distinct logic of synthesis and suggestion nudging us from loosely improvised interactions toward more patterned forms of engagement, in which roles, relationships, and organizational structures began to take shape.

Bertil Rolandsson (left) and Martin Berg (right) discuss and consult with ChatGPT.

Mediating Collaboration: GenAI and the Camera as Co-Participants

As the workshop progressed, ChatGPT’s role became increasingly intertwined with the participants’ evolving interactions. Its responses, shaped both by patterns in its training data and by the workshop’s unfolding dynamics as relayed through prompts, ranged from the insightful to the unexpected. Through this iterative process of prompting, responding, accepting, and rejecting, ChatGPT was neither passive nor directive: it influenced the speculative scenario while simultaneously being shaped by the group’s practices.

For example, participants sometimes attributed a form of authority to ChatGPT, particularly during disagreements. When conflicting views emerged about how a future organization or workplace might function, ChatGPT was consulted as a “tie-breaker” or asked to synthesize competing perspectives. This strategy aimed to prevent any single participant’s viewpoint from dominating; appealing to ChatGPT created an illusion of technological impartiality. This rhetorical power was reinforced by Martin Berg’s treatment of ChatGPT as a collaborator akin to a creative consultant, or as an entity observing and conceptualizing the workshop through a different mode of understanding. Each time ChatGPT produced a suggestion that diverged from participants’ immediate assumptions, it revealed the expectations underpinning the speculative exercise. While some contributions were met with curiosity and explored further, others were dismissed as utopian, bizarre, or misaligned with the envisioned world (such as Jakob Svensson’s questioning of the “members’ club” concept discussed earlier). Yet even in rejection, these moments became productive sites of reflection, uncovering the implicit assumptions guiding participants’ visions of work, technology, and organizational life.

This dynamic was partly a product of the workshop design. Instead of each participant accessing ChatGPT individually, interactions were channeled collectively through a single interface mediated by Martin Berg. Had everyone brought their own laptops or phones, ChatGPT would likely have been encountered more privately and instrumentally, with less need for collective negotiation over its outputs. However, our mediated access, characterized by negotiation and interpretation rather than direct input–output relations, gave GenAI a conspicuous presence and accentuated its role as a shared actor whose contributions deserved discussion and contestation.

Treating GenAI as a participant does not imply naïve anthropomorphism or an assumption that ChatGPT possesses consciousness or agency in a human sense. Rather, participants moved fluidly between recognizing ChatGPT as a computational system and responding to its outputs as contributions worthy of engagement. This balancing act, acknowledging the AI’s mechanical origins while also addressing it as a source of insight, constituted a reflexive methodology. The workshop thereby became at once a speculative exercise, a method of inquiry, and a real-time demonstration of how collaborative GenAI–human technosocial storytelling and enactment can help shape a small-scale social world.

This reflexive engagement with ChatGPT was interwoven with the video ethnographic and documentary filmmaking processes, and Figures 1–9 are derived from Sarah Pink’s video footage. Importantly, these interactions with ChatGPT were not simply ethnographic engagements with GenAI but emerged as a series of filmic events within the research process. As noted above, filming enhanced the event-like status of these interactions, making them visible and memorable and allowing us to revisit the experiences and sentiments they invoked. More broadly, like interactions with ChatGPT, interactions with the camera often intervened in the activities of the futures workplace, as participants accommodated Sarah Pink’s or Joshka Wessels’s requests to repeat lines, re-enact gestures, or stand in different places. These interventions sometimes created friction, as the pursuit of cinematic coherence temporarily disrupted the fluidity of speculative engagement. However, like ChatGPT’s contributions, they prompted reflexive engagement. Moreover, reviewing the footage after the workshop drew attention to participants’ subtle, embodied responses to the combined interventions of the camera and ChatGPT: nods of agreement, moments of hesitation, laughter at absurd suggestions, and the exchange of approving or skeptical glances (Figure 9). Forgotten almost as soon as they were experienced live, these moments were recovered through the footage.

Melisa Duque “likes” the Patchwork concept that Martin reports from ChatGPT.

These embodied reactions underscored how participants navigated their evolving relationship with GenAI. Filmmakers and ChatGPT alike acted as mediators, structuring the qualitative data in distinct yet overlapping ways. Cinematic and algorithmic mediation thus functioned not as tools but as socio-technical participants, actively shaping and constituting the ethnographic place and its research outcomes.

Speculating with Machines

In this article, we have demonstrated, through a series of examples, what happens when GenAI becomes entangled in qualitative research practice, not as a neutral tool but as a participant in unfolding encounters. Through the speculative futures workplace workshop, we showed how ChatGPT animated roles and biographies, introduced metaphors such as the “Patchwork,” and mediated disputes. In each of these examples, ChatGPT’s role shifted with participants’ orientations: it appeared authoritative in moments of disagreement, disruptive when its suggestions diverged, and generative when its metaphors resonated. Across these shifts, however, it consistently enabled the speculative stance that our futures workplace experiment demanded. Placed alongside ChatGPT, the camera reminds us that ethnography can be productively and generatively mediated by machines. The handheld camera directed attention, altered gestures, invited performativity and visualization, and extended the temporality of events by making them available for later revisiting. ChatGPT, in turn, extended the discursive space of speculation, stabilizing or unsettling narratives and reframing conversations. Both shaped the ethnographic place through their interventions, making clear that research technologies are not passive devices but mediators whose effects must be negotiated. With the camera, this is already well understood; by working with ChatGPT in parallel, we reveal a similar dynamic in the textual and conversational register. Whereas the camera intervenes through framing and temporality, ChatGPT intervenes through synthesis, disruption, and metaphor. Taken together, they show that to speculate with machines is to recognize ethnography as co-produced through human–machine entanglements.

For our understanding of the role of GenAI in qualitative research more generally, our discussion implies that the agency of machines emerges only relationally: in how participants oriented to ChatGPT as a tie-breaker or standardizer, or to the camera as a guide to memory and gesture. These effects were not inherent but situated, arising in interaction. The implications for futures social science similarly point to the situatedness of how futures are imagined: not in abstraction, but as enacted through fragile choreographies of roles, metaphors, prompts, and mediations. Machines can fruitfully participate in this choreography, conditioning what can be seen, said, remembered, and pursued. Ultimately, to speculate with machines is to acknowledge that our methods are entangled with them, and that this entanglement, whereby futures are co-produced through contingent, negotiated, and reflexive human–machine encounters, is itself generative of knowledge.

Our experiment involved both imagining work futures and testing the methodological capacities of GenAI. Just as the camera shifts ethnographic practice by mediating what can be seen and recorded, ChatGPT can influence what is articulated, organized, and carried forward. Going beyond asking how GenAI might be used to invoke or anticipate futures, we have probed its role in supporting their enactment within a shared setting. Our research suggests that GenAI, when used carefully, can help expand ways of encountering futures by bringing new thresholds of imagination to light, and can support focus by narrowing imaginative possibilities, productively reorganizing the flow of interaction. Recognizing this methodological role opens a new discussion, pointing to the need for continued reflection on how research practices themselves are reshaped when futures are co-produced with machines.

Acknowledgements

We thank our futures workplace collaborators, whose participation made the research discussed here possible, while taking responsibility ourselves for the analysis presented in this article: Andrew Copolov, Melisa Duque, Maria Engberg, Sara Leckner, Robert Lundberg, Paulina Noches, Zane Pinyon, Bertil Rolandsson, Jakob Svensson, and Joshka Wessels. We are also grateful to the editors and the two anonymous reviewers for their insightful and constructive feedback, which significantly improved the article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research discussed in this article was funded by the Swedish Research Council as part of the project “Digital Work Futures: Adopting and Adapting to AI-Infused Platforms in the Digital and Creative Industries” (2023-00676) and Sarah Pink’s Australian Research Council Laureate Fellowship “The impact of human futures on Australia’s digital and net zero transition” (FL230100131).

Ethical Approval and Informed Consent Statements

All participants have provided their written consent to participate and be represented in the publication.

Data Availability Statement

The data are not publicly available.