Abstract

This article explores generative artificial intelligence’s methodological potential in design anthropological futures research, examining how ethnographic imagination extends through speculative artificial intelligence (AI) engagement. Drawing on fieldwork with health care practitioners in regional Australian hospitals, we integrate generative artificial intelligence (GenAI) as both methodological tool and epistemic interlocutor within speculative workshops. Instead of treating AI as a representational device, we approach it as a critical site where imaginaries of work, care, and technology are visualized, contested, and reconfigured. Through participants’ dreams and nightmares, we co-produced AI-generated images unsettling dominant techno-futurist narratives, revealing GenAI’s dual capacity to reproduce cultural bias while opening reflexive spaces for collaborative imagination.

Keywords

Introduction

In this article, we examine the potential of generative artificial intelligence (GenAI) to contribute to the creative process of extending ethnographic research into a speculative exercise within the design anthropological futures workshops (DAFW) method.

In recent years, researchers in the social sciences and human–computer interaction have begun integrating GenAI into qualitative and mixed methods research (e.g., Pattyn, 2025; Sinha et al., 2024; Wheeler, 2025), including co-generating prompts and figures in experimental contexts (Mirowski et al., 2023; Perkins & Roe, 2024). Yet, its epistemological alignment with established ethnographic methodologies remains underexplored. Following Paul Willis (2000), we understand ethnographic imagining as an analytical and creative process emerging through ethnographic research, shaped by the interplay between researcher insights and participants’ lived realities, a process through which ethnography not only analyzes but also reimagines social worlds.

The title Can AI Dream? signals this methodological inquiry: not attributing consciousness to machines but asking how generative systems might meaningfully participate in ethnography’s social and imaginative work. We use dreaming as a heuristic metaphor to explore how GenAI can make visible the entanglement of human imagination and algorithmic patterning (Suchman, 2023b), highlighting the biases and cultural assumptions embedded in artificial intelligence (AI) systems without suggesting sentience or intentionality. Furthermore, in line with Science and Technology Studies (STS) critiques of AI metaphors (e.g., Förster & Skop, 2026), we distinguish our “dreamspace” from AI “hallucinations,” since the latter frames biased outputs as mere flaws in training or prompting, thereby foreclosing analytical attention to the algorithmic and epistemic infrastructures that produce them. The dream metaphor resonates with anthropological debates that consider how metaphors of cognition and embodiment shape our understanding of technology (Pink et al., 2018; Suchman, 2023b). It invites us to approach GenAI as both an artifact and a collaborator through which the boundaries of imagination, interpretation, and agency can be experimentally reconfigured.

By working with health care practitioners, we examine how speculative methods in ethnography can mobilize generative systems to materialize collective imaginaries of work, care, and technology. Our approach emphasizes both the analytical and imaginative power of ethnographic practice and its potential to reconfigure relations with technology, challenging assumptions that treat AI as a closed, opaque, or exclusively technical domain (Benjamin, 2019; Burrell, 2016; Crawford & Paglen, 2021; Elish & boyd, 2018).

To demonstrate this, we draw on research undertaken within the AUTOWORK project in the Australian health care sector, structured in two main phases: ethnographic fieldwork at Bendigo Hospital in regional Victoria, and speculative futures workshops that extend the fieldwork findings. We address two framing questions: how are health care workers being affected by, and responding to, the changes that automation, digitalization, and robotization bring to their workplaces; and how do they envision their sector’s trajectory in relation to meaningful employment and care futures? We show how we engaged GenAI to co-design speculative materials for the DAFW, situating them as part of a broader design ethnographic process (Pink et al., 2022). These workshops were designed to generate dialogue and prompt unorthodox imagination about digitalization, robotization, and AI in work futures.

Health care represents a critical site for examining the social implications of AI and automated systems because it combines high-stakes decision-making, ethical accountability, and the irreducibly human dimensions of care. Bendigo Hospital, as a regional facility, offers a perspective on how technological futures unfold outside metropolitan centers, where community relations and resource limitations shape innovation trajectories.

The question “Can AI dream?” thus operates metaphorically, not to ask whether AI possesses subjectivity, but to examine whether generative systems can participate in the production of alternative imaginaries. We approach GenAI as both a product of past human knowledge and a medium for co-imagination, one that can reflect and unsettle dominant techno-futurist narratives through its interactions with human creativity and critique.

In what follows, we first elaborate the theoretical connections that ground our methodological approach, focusing on the role of speculative fiction, socio-technical imaginaries, and the politics of technology in health care. We then describe our design ethnographic method and the iterative development of the workshops. The subsequent sections detail how we crafted and deployed AI-generated visuals as critical AI artifacts, objects that provoke reflection on the social, ethical, and emotional dimensions of technological change. We conclude by discussing how GenAI can extend the ethnographic imagining and support worker-centered engagements with technological futures.

AI, Automation, and the Politics of Future Visions in the Work Life

The efficiency paradox haunts health care automation discourse. While AI is routinely portrayed as both uncontroversial and inevitable (Suchman, 2023b), it remains deeply contested (Marres et al., 2024), and our fieldwork revealed a profound disconnect between institutional efficiency metrics and care realities. As Munk et al. (2022) observe, the assumption that machines are inherently more efficient than people presumes efficiency itself to be the problem awaiting a technological solution. Yet practitioners’ experiences tell a different story: the emergency physician spending 45 minutes on digital transfer forms while her patient deteriorates; the nurse hand-writing notes to be faxed because “efficient” systems cannot communicate; the rural clinician driving hours between patients while hospitals invest in predictive analytics. This circular logic, in which current systems are deemed to have “solved” yesterday’s inefficiencies while creating new ones that become tomorrow’s AI frontier, recalls Suchman’s (2023b) provocation: “What is the problem for which these technologies are the solution? According to whom?” (p. 4). The answer, our research suggests, depends on whether one measures efficiency in processing metrics or in moments of human connection preserved.

GenAI’s Particular Capabilities for Doing Research

While AI broadly encompasses systems that perform tasks requiring human intelligence, GenAI specifically creates new content (text, images, code) by learning patterns from training data. This creative capacity distinguishes GenAI methodologically: in contrast to analytical AI that categorizes (the traditional domain of algorithmic systems), GenAI marks a shift from analytical pattern recognition to the generation of novel content through creative recombination of learned elements (Bail, 2024). In our workshops, GenAI’s visual generation capabilities (via Midjourney) transformed abstract anxieties into discussable images. They made visible both participants’ imaginaries and the technology’s capacity to generate rather than just analyze, a development that, as Seaver (2022) notes, was never “obvious” or “necessary.” This capacity reveals the technology’s inherent biases through visible production instead of hiding them in black-box calculations. We conceptualize our Midjourney prompting design as an ethnographic “device” (Estalella & Criado, 2018), producing what we call critical AI artifacts: AI-generated objects that serve as provocations for reflection and dialogue about technological futures.

Whose Political Visions of Work Are Embedded in AI?

The political biases embedded within GenAI were analyzed in the workshops and formed an explicit part of the methodological approach. While such biases are often difficult to detect within the broader spectrum of algorithmic partialities (Motoki et al., 2024), they directly shape the outputs produced in response to prompts about the future of work. Our ethnographic fieldwork and the DAFW that followed it seek to unpack what gets framed as a problem, whose perspectives are excluded in these generated visions, and what health care practitioners expect of present and future technology. For the purposes of this article, we establish a somewhat arbitrary distinction between AI and GenAI, though participants in both the ethnographic fieldwork and workshops used these terms interchangeably, a kind of metonymic conflation where their experiences of working with AI, alongside their personal consumption and workshop use of GenAI, occupied the same conceptual and experiential territory.

Understanding how participants perceive and engage with AI proved essential for our methodological approach. This understanding shaped three key aspects of our research: how we crafted prompts for GenAI materials, how we developed more realistic visions, and how we examined algorithmic biases during the workshops. Despite this careful attention to participant perspectives, we discovered a fundamental limitation: GenAI’s depictions of future work consistently failed to capture the complexities of actual health care practice or align with workers’ aspirations for their field. The generated images defaulted to generic technological optimism/pessimism, unable to reflect the nuanced, care-centered futures that practitioners envisioned or even realistic work-life scenarios in health care.

Unlike technological myths that naturalize existing socio-technical orders (Jasanoff, 2015), our critical AI device operates reflexively, surfacing hidden assumptions, provoking debate, and catalyzing alternative imaginaries. GenAI introduces a dynamic that distinguishes it from traditional speculative methods that rely mainly on human imagination: it reflects culturally embedded biases from its training data while simultaneously enabling their critique. When participants see their visions translated through GenAI’s aesthetic and ideological filters, they encounter not just their own imaginaries but also the dominant cultural assumptions embedded in and productive of these systems (Suchman, 2023a). This creates a dialogic space where both human and algorithmic imaginaries become visible and contestable.

Grounding prompts in ethnographic observations ensures the AI outputs retain the complexities of clinical practice. These generated critical AI artifacts then spark further dialogue, producing speculative visions that remain both methodologically rigorous and grounded in stakeholders’ realities.

We connect GenAI with the practice of speculative knowledge, mindful of its technical and cultural entanglements (Seaver, 2022). Rather than dwelling on GenAI’s affordances, cost, or raw capabilities, which others have explored in depth (see Munk et al., 2022), we focus on our evolving, proactive relationship with critical AI artifacts (in this case made with GenAI). We argue that, when deployed as ethnographic devices in a critical artifact form, generative models significantly extend the reach of our imaginative inquiry, enriching both analysis and collaborative knowledge in ethnographic research. Speculation therefore acts as a means of asking more inventive questions (Salazar, 2017) that challenge the current state of imagination in both participants and researchers, rather than as a method to deliver new things into the world.

To investigate how these contested imaginaries of automation and care unfold in practice, we implemented a multi-phased study that combined ethnographic fieldwork and speculative workshops (see Pink et al., 2022). Conducted at Bendigo Hospital, this approach allowed us to trace how everyday experiences of automation informed broader visions of technological futures. The following section details how the study was structured, how GenAI was integrated into this process, and how participant insights informed the creation of speculative materials.

Methodology

Research Design Overview

The research unfolded across 6 months and involved 28 participants in six workshops, complemented by 26 ethnographic interviews and sustained participant observation.

Phase 1: Pre-Fieldwork Workshops

The pre-fieldwork online workshops followed exploratory research and discussions with health care workers about the effects of automation, robotization, and digitalization. Three activities were designed to identify key themes and processes of concern, informing the ethnographic stage. As shown in Figure 1, participants accessed the macro-trends exercise and the AI-generated stories through a shared Miro interface.

Figure 1. Miro Interface Used During the Pre-fieldwork Online Workshops, Showing the Structure of the Macro-trends Exercise and the Integration of the AI-generated Stories.

The first activity asked participants to articulate their greatest hopes and concerns for the future of health care. The second, “Sensing change: macro-trends and future contexts,” invited reflection on how macro-trends such as telehealth, community care, and Net Zero might affect health care by 2050. Participants discussed these prompts and ranked them along a continuum from “No change or similar to today” to “Significant change/unrecognizable.”

The third activity introduced AI-generated stories created with ChatGPT (v3.5) to test how narrative bias structured depictions of technological futures. Despite varied and intentionally provocative prompting, the stories consistently concluded with positive adaptation and the reaffirmation of technology’s benefits: even when resistance or failure was requested, narratives ultimately resolved into harmonious integration. Examples of these narrative patterns are illustrated in Figure 2. This persistent optimism bias revealed a techno-solutionist orientation embedded in GenAI’s training data and narrative templates.

Figure 2. Examples of ChatGPT-generated Stories Used to Test GenAI’s Narrative Biases; Despite Varied Prompts, the Stories Consistently Resolved into Techno-optimistic Futures.

Recognizing these limits, we decided to explore image-based GenAI tools in subsequent phases. We hypothesized that visual generation could better engage health care practitioners, who work in sensorially and materially rich contexts, and that biases in image production might be easier to recognize and critique collectively. The exercise also highlighted the need to ground GenAI outputs in ethnographic insight: stripped of context, AI narratives risk reproducing dominant imaginaries rather than provoking new ones.

Phase 2: Ethnographic Fieldwork

Ethnographic fieldwork at Bendigo Hospital provided the empirical foundation for the project. Through participant observation, field notes, and semi-structured interviews, we examined how health care workers navigated the promises and pressures of automation, robotization, and AI in their everyday work.

Fieldwork involved following clinicians, unit managers, and support staff across wards, digital services, and administration. We documented both practical engagements with technology and the informal conversations where workers expressed feelings about automation, robotization, and digitalization of their practice and the hospital.

Dreams and Nightmares as Ethnographic and Analytical Framework

During 6 months of ethnographic fieldwork at Bendigo Hospital, a compelling pattern emerged in how health care workers articulated their relationships with technological futures. Julia, a Nurse Unit Manager in the surgical ward, captured this duality when she confided: “I have a recurring nightmare: that we go back to using paper.” This clear image simultaneously expressed relief at digitalization and delineated anxieties about technological fragility.

After this, during interviews, we introduced two open questions at the end of each session: “What would your dream hospital look like?” and “What nightmares do you have about hospital work?” These questions, initially intended as creative prompts, soon became an important analytical and methodological device.

Participants responded distinctly. Dreams often envisioned technologies that restored time for care, enhanced collaboration, and strengthened the sense of vocation. Many imagined digital systems that could reduce administrative burdens and enable more meaningful contact with patients. In contrast, nightmares expressed fears of dehumanization, loss of skill, and fragmentation of care, which some associated with sensitive hospital processes such as “triage by machines.” Importantly, these anxieties were less about job loss and more about the erosion of professional autonomy and the loss of relational care.

The framework of dreams and nightmares thus offered a language through which participants articulated ambivalence about technological change, oscillating between hope and anxiety. These metaphors captured both the affective and moral dimensions of work futures, providing a conceptual bridge between ethnographic insight and speculative design.

By translating their accounts into AI-generated imagery, we were able to return these visions to participants as tangible artifacts that could be critiqued and reimagined collectively. In this sense, the dreams and nightmares functioned both as ethnographic findings and as catalysts for speculative intervention.

Phase 3: Post-Fieldwork Workshops and Integration of GenAI

After completing fieldwork, we conducted four workshops that translated ethnographic findings into speculative design activities. Participants revisited their earlier themes, particularly dreams and nightmares, and collaboratively generated visual prompts for Midjourney.

GenAI was introduced for two main purposes: to render participants’ imaginaries in visual form and to support reflexive discussion of algorithmic aesthetics and bias. The workshops, therefore, combined creative co-production with reflexive inquiry.

Prompt creation was a collaborative process. In preparation for the workshop sessions, researchers and key participants who had been interlocutors during the ethnographic fieldwork drafted, tested, and refined textual prompts in real time via shared screens, adjusting style and framing until the images resonated with the meanings participants wished to convey. This iterative process brought together participants and researchers, turning prompt design into an interpretive and relational practice for thoughtfully reflecting on GenAI.

The use of Midjourney also revealed aesthetic and ideological biases. When asked to depict “the future of health care,” the platform frequently generated idealized or dystopian imagery—gleaming hyper-technological hospitals or post-apocalyptic ruins—rooted in Western science fiction conventions. Rather than treating these distortions as mere technical flaws, we used them to provoke dialogue and scrutiny about how AI’s visual training data reproduces dominant cultural assumptions (Crawford & Paglen, 2021; McQuillan, 2022).

Through this process, we developed what we term critical AI artifacts: AI-generated images that materialize both the potential and limitations of generative systems. These artifacts served as shared objects around which participants could articulate, compare, and negotiate their visions of technological futures.

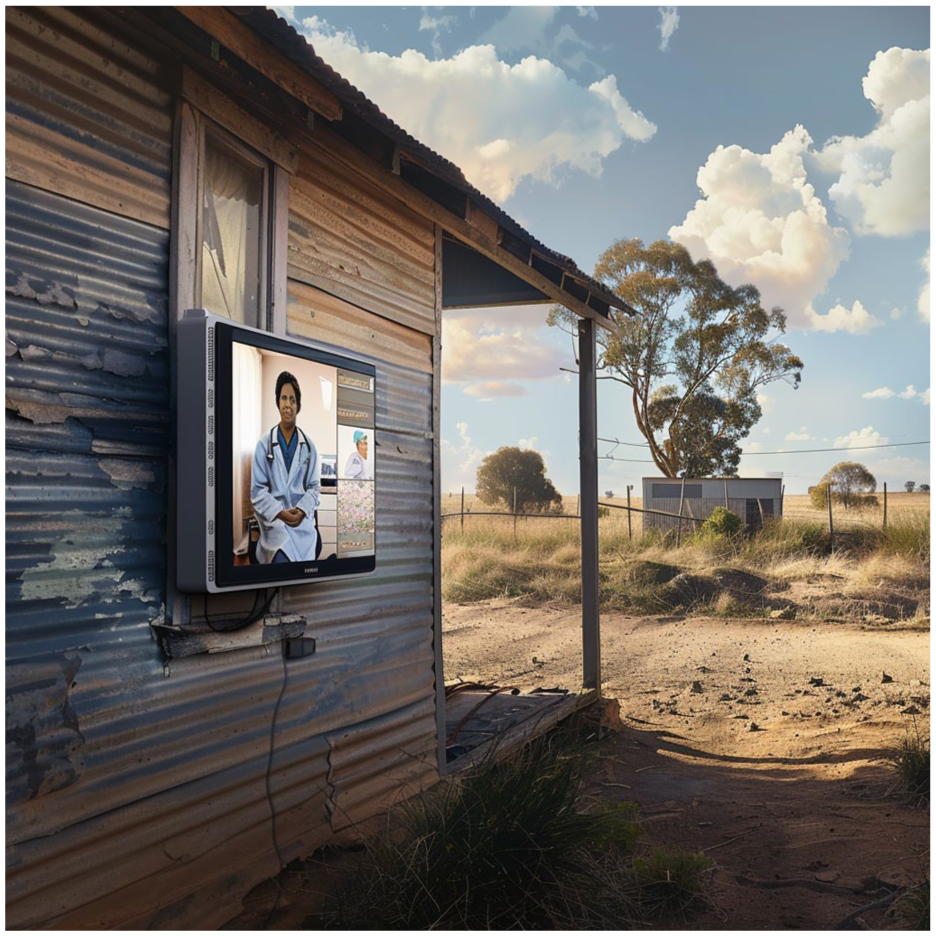

During discussions, participants compared utopian and dystopian image sets along axes of desirability and likelihood. Rural telecare scenarios were generally perceived as desirable and plausible, while depictions of fully automated hospitals were viewed with skepticism. Participants emphasized the importance of technologies that augment rather than replace care: tools that sustain the social and relational aspects of work rather than intensifying efficiency pressures.

A rural community nurse offered a clear image of her ideal technological future during a workshop, one that diverged sharply from centralized, high-tech hospital visions. “Telecare shouldn’t be about a doctor saying hi on a screen,” she explained. “It should be about proper assessment tools I can use in someone’s living room, with results the specialist can see immediately.” She described her current reality: driving hours between patients, unable to afford the fuel costs much longer, and watching rural health care workers leave for city positions. But her comments led into a shared vision for the future that was surprisingly optimistic: “Imagine if technology helped us create care networks where I do the hands-on assessment with portable diagnostic tools, the specialist guides me remotely, and community members are trained to provide follow-up support.” She envisioned technology not replacing human connections but supporting them where today they are difficult to establish: “The expertise stays with clinicians, but care becomes something the whole community participates in. That’s using technology properly, to bring us together, not to put fancy things where [they] aren’t needed.”

This participant’s vision—grounded in the material realities of rural health care and imagining radical reconfigurations of care networks—exemplifies how the workshop’s critical AI artifacts became catalysts for articulating alternative technological futures that deviate from both techno-utopian fantasies and dystopian inevitabilities.

Analytical and Ethical Framework

The iterative and reflexive design of this research demonstrates how ethnographic and speculative methods can be integrated to investigate socio-technical imaginaries. The pre-fieldwork workshops exposed the narrative biases of text-based AI, the ethnographic stage grounded the inquiry in lived experience, and the post-fieldwork workshops reintroduced GenAI as a tool for collective imagination and critique.

Our methodological approach draws on Estalella and Criado (2018), who conceptualize the ethnographic device as a material and relational configuration through which research questions are enacted and reconfigured. In our study, GenAI operated as such a device, an artifact that engendered reflection, exposed hidden assumptions, and enabled participants to engage with emerging technologies from within their own professional and ethical frameworks. Following Suchman (2023b), we also regard this as a practice of reflexive accountability, in which the materiality of AI systems and their situated enactments become integral to the ethnographic encounter.

The approach further aligns with Pink and Lanzeni (2018), who advocate a future-oriented ethics grounded in relational accountability when engaging with digital and automated systems. Participants were actively involved in all stages, ensuring transparency in data generation, storage, and potential reuse. This collective and reflexive stance allowed both human and machine agencies to be examined as co-constitutive in the process of imagining technological futures.

Generating Dream and Nightmare Scenarios: Toward the Critical AI Artifact

This section outlines the conceptual and methodological process through which we transformed ethnographic insights into speculative materials using GenAI to co-create dream and nightmare scenarios of health care futures. We describe how narrative structures from science fiction were employed to shape visual prompts and how these were used to engage participants in critically reflecting on the social, ethical, and emotional dimensions of technological transformation. In doing so, we introduce the concept of the critical AI artifact as a methodological device that both reflects and disrupts dominant imaginaries of automation and care.

AI featured in the workshops in two principal ways: first, as a generative tool for producing prompts and visual materials that opened space for participants to reflect on and re-imagine their health care futures (GenAI); and second, as a means of exposing the opaque side of AI training data, design choices, and institutional contexts (Seaver, 2017) as well as imaginaries of intelligence (Suchman, 2023a) during the workshop discussions. These dynamics offered a medium through which participants could articulate ethical concerns, emotional responses, and, most significantly, their imagined experiences of working within technologically abundant environments. Instead of focusing solely on identifying the socio-technical imaginaries underpinning these visions (Jasanoff & Kim, 2009), we directed attention to how utopian and dystopian futures were narrated and visualized through culturally embedded storyworlds of science fiction (Lindley et al., 2015; Moore, 1990; Roig, 2025).

A key aspect we aimed to address was the AI imaginary at the core of digitalization and robotization policies, which often assume the inevitability of AI’s integration into future work environments (Bareis & Katzenbach, 2022). From an anthropological perspective, this entailed interrogating how participants’ storytelling reflected and resisted dominant techno-futurist narratives, revealing AI not as a technical inevitability but as a socially constructed vision of progress shaped by institutional expectations, professional anxieties, and situated encounters with technology. As Markham (2021) argues, speculative thinking is frequently constrained by the material and symbolic conditions of the present, making it difficult for people to imagine futures beyond dominant logics. By situating participants’ imaginaries within the broader context of their everyday work experience, and by deliberately drawing on familiar aesthetics and tropes from science fiction, we sought to challenge deterministic assumptions. The use of recognizable elements created a sense of familiarity, the comfort of the known, while introducing unsettling future scenarios designed to provoke thoughtful responses.

Co-creating With GenAI and Addressing Bias

Drawing directly on ethnographic findings, we used AI to translate participants’ insights into visual prompts. Their daydreams and nightmares served as conceptual entry points for the speculative exercises and guided prompt development. To carry these ideas into the DAFW, we constructed visual prompts in Midjourney using the science fiction concept of possible worlds (Le Guin, 1979): internally coherent realities different from our own yet comprehensible on their own terms, which invite the question “what if?” and thereby allow the limits of the present world to be rethought.

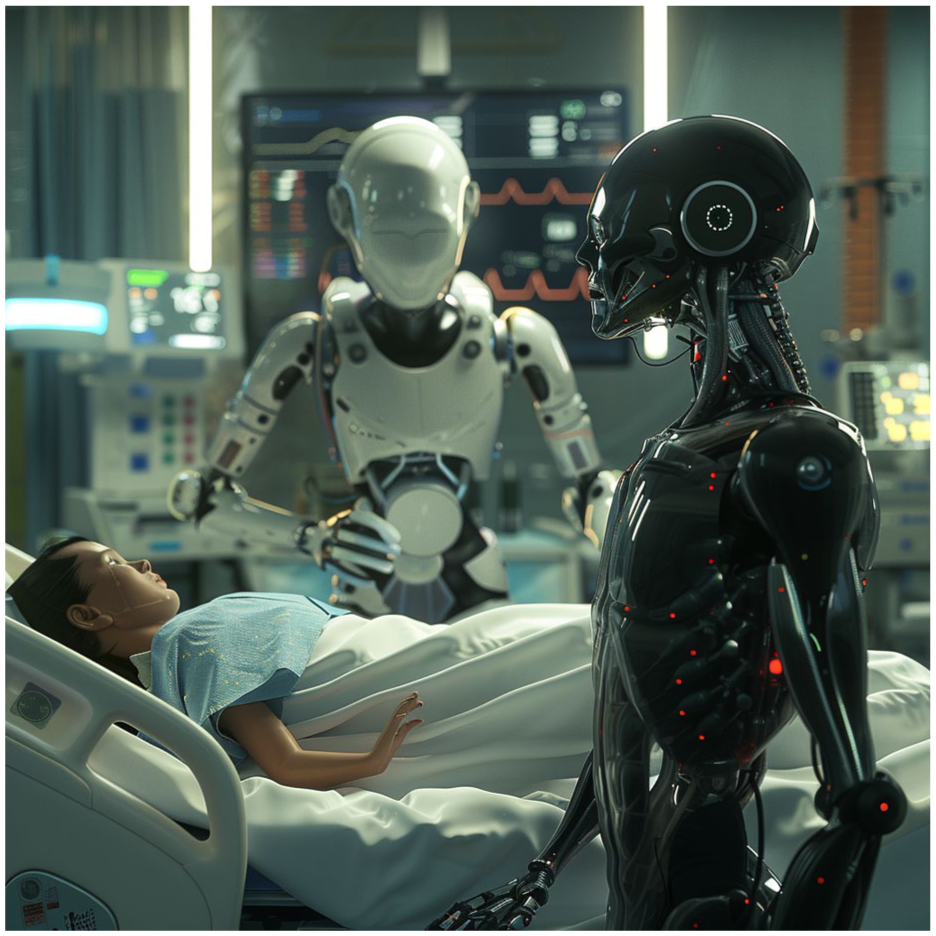

Through iterative prompting and real-time collaboration, researchers generated images in varying tones and levels of abstraction to stimulate discussion. We found solar-punk and post-apocalyptic imagery particularly compelling, weaving mundane hospital experiences with speculative elements. The storytelling deliberately fused utopian and dystopian narrative arcs drawn from participants’ discourse on automation, robotics, and AI. This process also revealed the visual biases characteristic of generative systems: retro-futuristic tropes such as chrome robots or desolate high-tech ruins, rooted in culturally biased datasets that privilege hegemonic imaginaries while excluding care-centered visions (Campolo & Crawford, 2020; McQuillan, 2022). We made these biases explicit, using them as discussion points during the workshops.

Each prompt combined three components: visual description, aesthetic style, and technical parameters. For instance, we generated a series of visuals depicting “a telehealth appointment in rural Australia in 2050,” which participants used to discuss infrastructure constraints, community relationships, and the limits of centralized hospital-centric visions (see Figure 3). The resulting images highlighted both the creative potential and the aesthetic limitations of GenAI. The uncanny distortions of human figures became a productive point of reflection during workshop discussions, particularly where prompts lacked specific genre markers (see Figure 4). By foregrounding these distortions, participants were able to discuss what kinds of bodies, relations, and technologies the AI imagined and what these representations revealed about embedded cultural assumptions. Unlike static science fiction film clips or pre-existing illustrations, the collaborative and iterative nature of GenAI image generation allowed participants to see their own visions transformed in real time, creating a dialogic space where the technology’s biases became visible through the act of creation itself.

Figure 3. Midjourney-generated Image of a Telehealth Appointment in Rural Australia in 2050, Used in the Workshops to Elicit Discussion about Decentralized Care, Infrastructure Gaps, and the Everyday Realities of Remote Health Care.

Figure 4. AI-generated Visual Depicting a Post-apocalyptic Health Care Scenario; Note the Distorted Human Figures, Which Became a Point of Discussion Regarding Algorithmic Aesthetics and Bias.
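The three-part prompt structure described above (visual description, aesthetic style, technical parameters) can be illustrated with a minimal Python sketch. This is not the tooling used in the study: the `PromptSpec` class and its `render` method are hypothetical names introduced here, and the style and parameter values are illustrative assumptions. Only the quoted scene description comes from the workshops. The `--name value` suffix form follows Midjourney's convention for appending parameters to a text prompt.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the three prompt components named in the text."""

    description: str  # what the scene shows
    style: str        # aesthetic register, e.g. a genre marker
    parameters: dict[str, str] = field(default_factory=dict)  # tool-specific flags

    def render(self) -> str:
        """Join the components into a single text prompt.

        Description and style are comma-separated; parameters are appended
        as `--name value` suffixes in insertion order.
        """
        parts = [p.strip() for p in (self.description, self.style) if p.strip()]
        text = ", ".join(parts)
        flags = " ".join(f"--{name} {value}" for name, value in self.parameters.items())
        return f"{text} {flags}".strip()


# The description is the one quoted in the text; the style and the
# parameter values are assumptions chosen for illustration only.
telehealth = PromptSpec(
    description="a telehealth appointment in rural Australia in 2050",
    style="solarpunk illustration",
    parameters={"ar": "16:9", "stylize": "250"},
)

print(telehealth.render())
```

Keeping the three components separate makes it possible to vary one at a time, for example holding the scene constant while swapping the genre marker, which is one way to surface how the aesthetic framing shapes what the model depicts.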

Presentation and Interpretation of Two Scenario Images

To anchor the discussion, we introduced two contrasting image sets:

Figure 5. AI-generated “Dream” Scenario Representing an Imagined Future of Health Care in Which Technologies Support Decentralized, Community-centered Care and Collaboration Rather Than Replacing Human Relationships.

These were positioned along two axes, likely/unlikely and desirable/undesirable, inviting critical interpretation. Participants responded differently to the scenarios: rural telecare was viewed as plausible and positive, while hyper-automated hospitals were met with skepticism.

Deepening Insights Through Dystopian Resonance

Images within Scenario 1 portrayed technology embedded within acts of care: community robotics, telecare in rural settings, and automation supporting clinical work. Reactions were mixed: decentralized and community-oriented visions were generally embraced, while highly robotized hospital scenes provoked ambivalence. This revealed a spatial tension in how future care technologies are imagined.

In contrast, Scenario 2 provoked more immediate and visceral reactions. Although often categorized as unlikely futures, these dystopian visions resonated strongly with participants’ existing anxieties and mirrored concerns expressed during fieldwork. Figure 6 shows one such nightmare scenario, which participants used to articulate concerns about disempowerment and the loss of relational dimensions of care.

Figure 6. AI-generated “Nightmare” Scenario Used in the Workshops to Materialize Fears About Over-automation, Dehumanization, and the Erosion of Relational Care in Hyper-technologized Hospital Environments.

Clinicians frequently emphasized that future technologies should not only deliver efficiency but also sustain the social fabric of care. “Technology,” one participant explained, “should be in the background and make it easier for us to connect on a personal level.” Others envisioned tools that could strengthen community-based care, such as remote diagnostic systems capable of delivering results in real time for patients in isolated areas.

During our workshop, an emergency physician shared a moment that crystallized the gap between technological promise and clinical life. “Last month, we needed to bring a critical patient from Sydney,” she explained, her frustration evident. “I spent forty-five minutes filling out digital transfer forms, ticking boxes, entering the same information into three different systems. The patient was deteriorating while I was typing.” She paused, then added with a bitter laugh: “All those details, all that technology, if we just had a button on the wall that we could press and get them here, that would be a dream.” Her story revealed how current “efficient” digital systems often create barriers to what she saw as a priority: immediate, personal care. “The technology should disappear into the background,” she concluded, “not stand between us and our patient.”

These discussions revealed that participants’ fears of over-automation were rooted not in opposition to technology itself but in concern over losing the social and relational aspects of work. These aspects are tied to current processes that are not necessarily driven by technology and relate more to mundane hospital processes such as budget cuts, triage time allocation, and, particularly, the notion of efficiency sustained and enacted by managerial levels (Chabrol & Kehr, 2020). Participants imagined futures in which technology supported connection and collaboration instead of replacing them.

A senior nurse’s observation during the digital strategies workshop exposed a striking contradiction in health care’s technological transformation. “We talk about AI and predictive analytics,” she noted, “but we’re still transferring critical patient information by fax. Yesterday, I hand-wrote discharge notes that were faxed to a rural clinic.” When asked about her vision for the future, she was clear: “I don’t need robots or AI making decisions. I need basic digital infrastructure that actually works, where a referral doesn’t take three systems and two weeks to process.” Her comment sparked vigorous discussion about how the push toward advanced AI overlooked fundamental digital gaps. “People believe predictive analytics is much more accurate than it actually is,” she warned, “but meanwhile, we can’t even share basic patient records electronically between departments.”

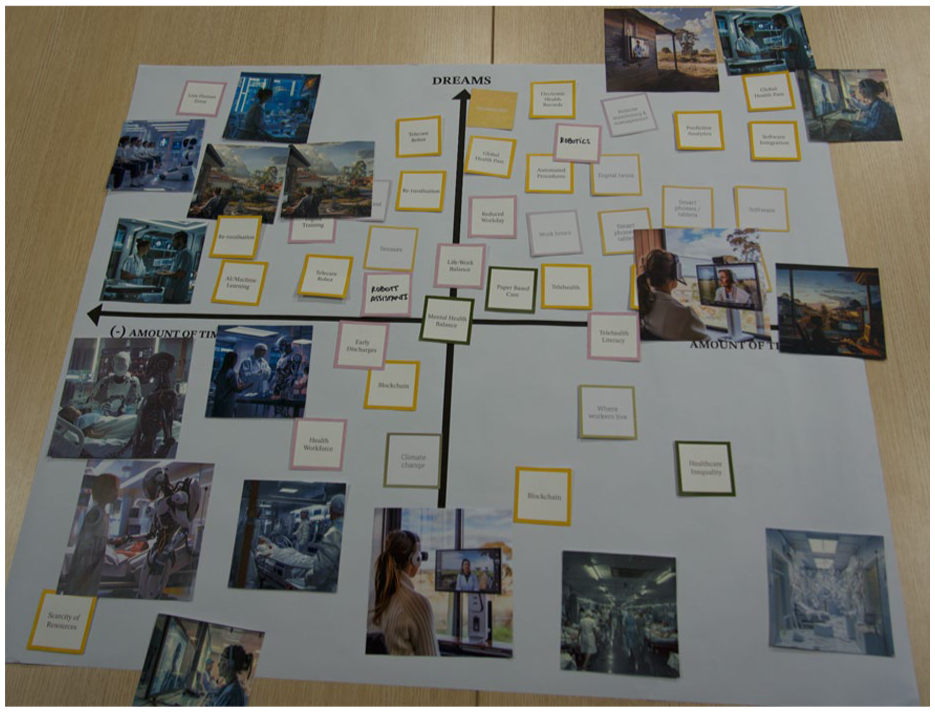

The workshop’s dystopian artifacts, therefore, functioned not as warnings about potential futures but as criteria tools, enabling the articulation of accumulated frustrations and unfulfilled promises that constitute health care workers’ current technological experience and inform their visions of what technology should and should not become. As Figure 7 illustrates, participants inscribed their own interpretations, critiques, and alternative visions directly onto the printed materials, transforming the AI-generated scenarios into situated, collective reflections on care futures.

Photograph of Workshop Materials Produced at Bendigo Hospital, Documenting How Practitioners Annotated, Rearranged, and Re-worked the AI-generated Scenarios to Articulate Situated Visions of Future Care.

Situated Speculation: Ethnographic Imagining and the Politics of Technological Vision

We conclude this section by reflecting on the epistemological value of these speculative interventions. Our approach aligns with long-standing critiques of technological determinism and calls for grounded, relational methods that engage with the tensions technologies produce and the ways people navigate them (Baym & Ellison, 2023). From an anthropological perspective, this involves not only examining people’s discursive accounts and lived experiences but also exploring the very technologies through which people imagine (Sneath et al., 2009) as methodological tools that provoke and make sense of situated imaginaries of the future.

Hospitals, as focal points for both care workers and the health industry, have become central to geopolitical speculation about the future of medicine, markets, and labor. Yet digitalization, automation, and robotization are often championed as solutions by policymakers and strategists, with little input from clinical staff (Chabrol & Kehr, 2020). Ethnographic research reveals that the problems these technologies claim to solve are often defined without the participation of those most affected. Speculation is deeply embedded in how hospital technologies are envisioned but frequently constrained by binary tropes of utopia and dystopia (Jasanoff, 2015). Through AI-generated visuals, we opened space for imagining futures beyond these binaries. The workshops enabled participants to articulate how their anxieties about future technologies are entangled with their current experiences of work and positioned GenAI not as a solution or inevitability but as a tool for collaborative world-making. During the workshops, several clinicians observed that the perspective of AI-generated images often felt detached from the situations they portrayed. They suggested generating images from the clinician’s point of view, allowing participants to relate more closely to the depicted scenarios. This observation triggered a wider conversation about how clinicians are always positioned in relation to the patients being assisted by technology, even in speculative, utopian, and dystopian futures derived from their own experiences and imaginaries.

Discussion: Can AI Dream?

The question “Can AI dream?” is not a provocation about machine consciousness or the advent of general AI. Instead, it asks whether GenAI can meaningfully contribute to human-centered, speculative, and reflexive knowledge-making.

Extending Ethnographic Imagining Through Critical AI Artifacts

We approached AI-generated visuals as provocations, tools through which the complex social, emotional, and institutional textures of health care work could be reimagined. The prompts were carefully crafted in continuous dialogue with fieldwork findings, particularly with themes and questions that had remained difficult to articulate through conventional ethnographic methods. Visual prompts informed by science fiction tropes enabled participants to engage both affectively and analytically with automation, robotization, and AI, becoming tools for reconfiguring familiar narratives and focusing on alternative visions of human–technology relations rooted in health care practitioners’ everyday ethics and aspirations.

A distinctive feature of our approach was the deliberate reflection on the social and cultural assumptions embedded within the training data of GenAI platforms like Midjourney. This reflexive stance was shared with participants during the workshops. Together, we interrogated how algorithmic “common sense” manifests visually and conceptually, and how such biases shape what is imaginable. By prompting messiness, ambiguity, contradiction, and socio-technical tension, we treated the GenAI interface not merely as a means of generating images but as a critical artifact capable of disrupting the techno-optimistic narratives that often dominate GenAI development.

We introduced the idea of critical AI artifacts to capture the layered processes involved in generating, prompting, interpreting, and responding to AI outputs within the workshop setting. Despite their origin in biased training data and prefigured imaginaries, these systems can be redirected toward grounded critique and imaginative resistance, as the reconfiguration of other emerging technologies in the past demonstrates (Amoore, 2020).

Rising Tensions and More Contingent Futures

In this context, the AI-generated visuals became dialogic tools that opened up speculative space for rethinking the future of work. Confronting the idea of AI as inevitable or universally beneficial, participants re-situated it as contingent, capable of both enhancing and undermining care depending on the values embedded in its design and use. The resulting images surfaced tensions between institutional visions of efficiency and the lived realities of care provision.

A recurring ethnographic theme, which we have termed the technology’s unresolved need, was frequently revisited during the workshops. The term refers to the persistent gap between the problems participants thought technological innovation should solve and what the technology was actually doing in everyday hospital life. Participants often expressed skepticism toward the utopian images where technology seamlessly improved clinical work. These doubts were grounded in their day-to-day frustrations with existing systems, which, although functional, often failed to support what mattered most in their practice: time for care, human connection, and clinical autonomy. This misalignment between the technological promise of solving hospital problems and practitioners’ priorities underscored the limitations of prevailing digital health narratives.

This disjuncture was especially apparent in participants’ responses to dystopian scenarios, where fears of disempowerment, alienation, and loss of relationality were sharply expressed. Yet, these speculative visions also became productive: they allowed participants to articulate the type of futures they would reject, thereby clarifying what kinds of technological futures they might aspire to. In this sense, the speculative framework helped prompt not only tech anxieties but also values, preferences, and latent design imaginaries. Daydreams and nightmares played a crucial role, not simply as metaphors or structures, but as conceptual tools that exposed the tensions between technological possibility and lived experience in everyday work lives.

Beyond Binary Narratives

As previous scholarship has observed, the controversies surrounding AI’s use in everyday settings are often marginalized in mainstream representations (Munk et al., 2024). Utopian and dystopian media narratives dominate, leaving little room for grounded, relational accounts of how AI is actually experienced. In our study, participants’ visions were shaped less by direct encounters with AI than by broader structural issues like staffing shortages, bureaucratic overload, and limited professional autonomy, which influence the desirability and plausibility of technological change. While some welcomed automation as a potential aid, others feared it would erode the very conditions that make care possible.

Our findings reinforce the idea that GenAI systems like the Midjourney platform carry not only algorithmic bias but also aesthetic and ideological assumptions; unless deliberately directed, AI-generated images tended to produce distorted human forms or idealized, sterile environments. This recalls Alice Street’s (2016) observation that assumptions about caregivers’ motivations (such as compassion or selflessness) can obscure the systemic constraints on care. When these ideals are reproduced uncritically in AI outputs, they risk reinforcing narrow understandings of health care work, masking issues around labor, professional expectations, value, and hierarchy.

Methodological Implications and Future Directions

We approached GenAI not as a representational tool but as a partial and contested interlocutor, one whose outputs require interpretation, critique, and contextualization. This raises important epistemological questions about whose visions are presented as credible and whose are aestheticized or abstracted, with broader implications beyond the health care sector. The science fiction tropes that infused our visuals were powerful in bringing forth contrast and familiarity, but they also risked reproducing deterministic binaries if not explicitly problematized. One challenge, then, is to balance the affective and imaginative force of speculative imagery with analytical attention to its limits and politics, limits that can only be understood through deep immersion in, and understanding of, everyday life. As the use of GenAI tools becomes increasingly pervasive, so too expands the creative skill set of the future social scientists and design researchers who will work with ethnographic data to carefully craft the dreamspaces afforded by these tools. As we become inundated with AI-generated content, it will be crucial for researchers to develop rigorous visions of futures that are both imaginative and, especially, grounded enough to be effective in expanding the horizons of what might be possible.

Our research demonstrates the potential of GenAI to support speculative, participatory, and visual methods within ethnographic inquiry. Through a reflexive methodological framework grounded in ethnographic imagining, we used AI to visualize participant perspectives and to co-produce artifacts that made their concerns, desires, and dilemmas visible and discussable. The workshops created a shared space in which collective engagement with AI produced a tentative communal language of speculation, one that may support more worker-centered, value-led conversations about future work-life. These discussions need not be confined to academic critique, but can take shape in the everyday practices, concerns, and desires of those most affected by technological transformations.

Ultimately, this research illustrates how ethnographic imagining, when expanded through GenAI, can support new articulations of what matters in technological transformation. The speculative materials developed through our workshops gave form to complex emotions and tacit insights, enabling participants to voice what kind of future care they find possible, troubling, or worth striving for.

Substantively, this research revealed that health care practitioners’ dreams and fears are shaped less by abstract visions of AI and far more by the everyday frictions and material limitations of clinical life. Across fieldwork and workshops, three concerns emerged repeatedly: having time for care, preserving human connection, and safeguarding clinical autonomy. These were articulated not in theoretical terms but through concrete experiences: an emergency physician describing how she spent 45 minutes completing digital transfer forms while a patient deteriorated beside her; a senior nurse still hand-writing notes to be faxed to rural clinics; or a community nurse imagining telecare tools that would allow her to conduct proper assessments in patients’ living rooms rather than spend hours driving between distant towns. Practitioners’ dreams centered on technologies that could restore the relational and ethical foundations of care: systems that reduce administrative load, enable seamless communication, and strengthen rather than fragment teamwork. Their nightmares, by contrast, evoked vivid anxieties (such as Julia’s recurring fear of “going back to paper” or workshop reactions to dystopian images), expressing worries about over-automation, deskilling, and the erosion of care into a task-oriented, efficiency-driven practice. Taken together, these insights show that what is at stake for practitioners is not whether AI becomes more sophisticated, but whether technological futures support and improve the social and moral dimensions of health care work. Bringing these visions to the fore allows us to understand how technological futures are contested, situated, and desired by health care workers as deeply human concerns.

We repositioned AI as a site of coalition, interpretation, and intervention, a means of engaging more critically and collectively with the grounded futures we are already beginning to inhabit.

In this sense, we tested whether GenAI could operate not simply as a representational tool, but as a technology of (alternative) imagination (Sneath et al., 2009), one capable of reconfiguring how technological futures are envisioned, narrated, and contested. Following the approach of design ethnography, which involves continuous methodological innovation and ethnographic-theoretical dialogue (Pink et al., 2022), we have demonstrated how GenAI can be mobilized as part of the “messy” and uneven process of following “ethnographic hunches” (Pink, 2021) to create stories that make sense according to both theoretical and empirical logics. Future work might explore how these artifacts travel beyond the research context, and what happens when speculative outputs are re-integrated into institutional systems, policy conversations, or broader public discourse. We encourage further experimentation and theoretical debate concerning how to account for futures ethnographically through the integration of GenAI into social science research.

Acknowledgements

We gratefully acknowledge Paulina Noches’s collaboration in preparing the physical materials following Dr Korsmeyer’s design and in co-facilitating the last four workshops in person, along with Debora Lanzeni. We would also like to acknowledge our Autowork project colleagues at NTNU, especially A/Prof Håkon Fyhn, Prof Roger A. Søraa, Mark Kharas, and Dr Kristoffer Nergård. At Monash, we thank Chief Investigators Prof Sarah Pink and A/Prof Aneta Podkalicka; PhD student Ms Paulina Noches Pareja; and Research Associates Dr Ari Jerrems, Dr Jigya Khabar (Sales and Services Stream), and Dr Ben Lyall (Construction Stream).

Ethical Approval and Informed Consent Statements

This study was conducted ethically and in compliance with the relevant guidelines of Monash University and Bendigo Health. Ethics approval was granted by the Monash University Human Research Ethics Committee (MUHREC) and the Bendigo Health Human Research Ethics Committee (BH HREC). Participants signed informed consent forms accompanied by a research statement sheet. The data gathered in this research are secured and held in accordance with Monash University and Bendigo Health Data Management guidelines.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: AUTOWORK project funded by the Research Council of Norway: Nr. 301088.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Not available publicly.